UniDriveVLA: Unifying Understanding, Perception, and Action Planning for Autonomous Driving

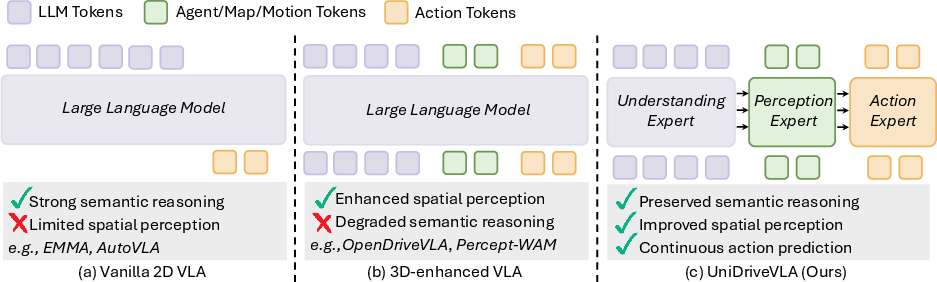

Abstract: Vision-Language-Action (VLA) models have recently emerged in autonomous driving, with the promise of leveraging rich world knowledge to improve the cognitive capabilities of driving systems. However, adapting such models for driving tasks currently faces a critical dilemma between spatial perception and semantic reasoning. Consequently, existing VLA systems are forced into suboptimal compromises: directly adopting 2D Vision-LLMs yields limited spatial perception, whereas enhancing them with 3D spatial representations often impairs the native reasoning capacity of VLMs. We argue that this dilemma largely stems from the coupled optimization of spatial perception and semantic reasoning within shared model parameters. To overcome this, we propose UniDriveVLA, a Unified Driving Vision-Language-Action model based on Mixture-of-Transformers that addresses the perception-reasoning conflict via expert decoupling. Specifically, it comprises three experts for driving understanding, scene perception, and action planning, which are coordinated through masked joint attention. In addition, we combine a sparse perception paradigm with a three-stage progressive training strategy to improve spatial perception while maintaining semantic reasoning capability. Extensive experiments show that UniDriveVLA achieves state-of-the-art performance in open-loop evaluation on nuScenes and closed-loop evaluation on Bench2Drive. Moreover, it demonstrates strong performance across a broad range of perception, prediction, and understanding tasks, including 3D detection, online mapping, motion forecasting, and driving-oriented VQA, highlighting its broad applicability as a unified model for autonomous driving. Code and model have been released at https://github.com/xiaomi-research/unidrivevla

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

What this paper is about (in simple terms)

This paper is about teaching a self-driving car’s brain to do three things well at the same time:

- understand what it sees and what it’s being asked to do (understanding),

- figure out where things are in 3D space (perception),

- decide safe, smooth actions to take next (planning).

The authors built a single system, called UniDriveVLA, that combines these skills without the parts getting in each other’s way.

The main questions the researchers asked

- How can we give a driving AI strong “spatial awareness” (where things are and how they move) without hurting its “reasoning” ability (understanding instructions and using world knowledge)?

- Can one unified model handle many driving tasks (detecting cars, making maps, predicting motion, answering questions, and planning the car’s path) instead of having lots of separate models?

- Will this unified model actually drive better in tests than previous systems?

How they built it (methods explained simply)

Think of a team working together to drive a car. If everyone talks at once and shares the same notepad, things get messy. The key idea here is to give each person their own notepad but let them share just the right information at the right times.

The “three specialists” idea

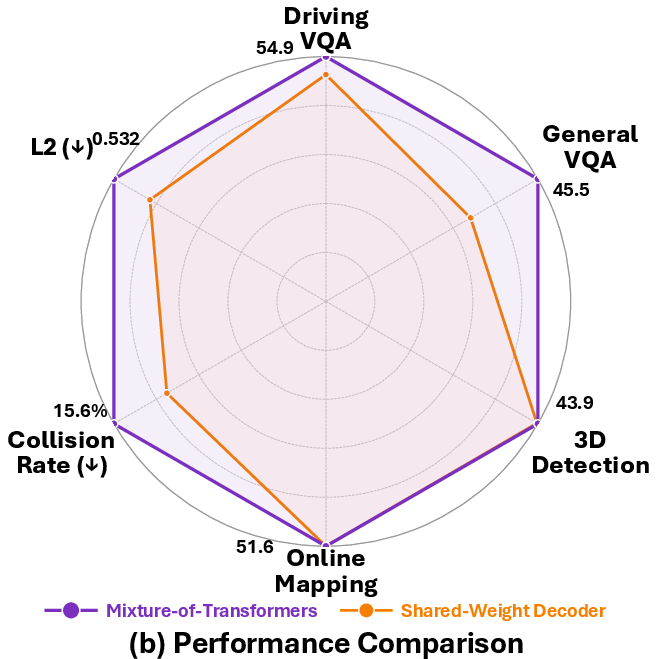

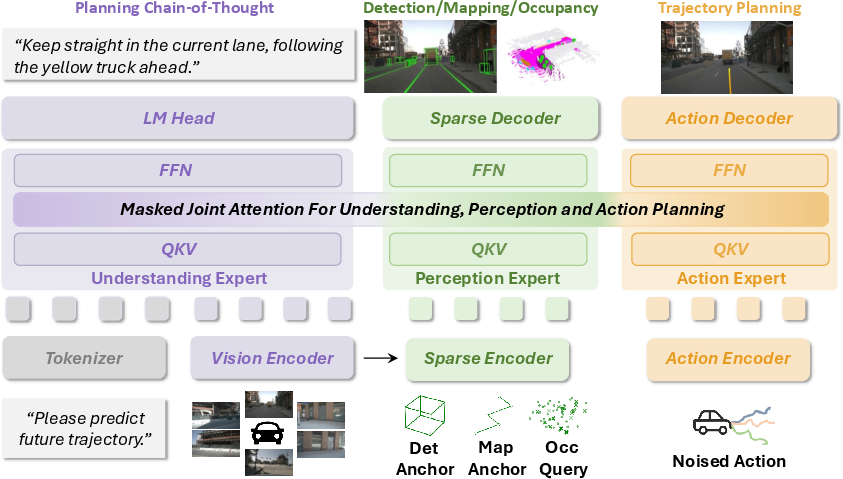

The model is a Mixture-of-Transformers (think “mixture of experts”), with three specialists:

- Understanding expert: reads the scene like a human—“That’s a pedestrian,” “The sign says stop,” “Turn left at the next street.”

- Perception expert: focuses on “where” things actually are in 3D space around the car.

- Action (planning) expert: decides the car’s next path and movements.

Each expert has its own parameters (its own “notepad”), so learning one skill doesn’t accidentally overwrite another.

How they talk to each other (masked joint attention)

The experts share information through a controlled “attention” pattern—like passing notes with rules:

- The understanding expert doesn’t get distracted by the others while it’s thinking (keeps its reasoning clean).

- The perception expert can look at what the understanding expert already figured out to add meaning (e.g., “that blob is a cyclist”).

- The action expert can use both understanding and perception to plan safe maneuvers.

This setup reduces “interference,” where mixing everything together can blur important differences (like confusing language facts with 3D geometry).

Seeing more with less (sparse perception)

Instead of building a heavy, detailed 3D map of everything all the time, the model focuses on the most important spots—like pinning sticky notes on a bird’s-eye map where objects, lanes, and obstacles matter. This “sparse” approach:

- pulls key clues from the model’s 2D vision features,

- tracks objects and roads over time,

- predicts how things might move,

- keeps the language reasoning sharp because it doesn’t flood the system with dense 3D data.

Training in three steps (so nothing gets forgotten)

- Step 1: Strengthen language and general understanding using lots of image–text data (so it “thinks” well).

- Step 2: Carefully add perception and planning training, tuning the model in a controlled way so its reasoning isn’t damaged.

- Step 3: Freeze the language part and sharpen perception and planning (specialization), including motion prediction for better, more cautious decisions.

How they tested it

They tested on two popular benchmarks:

- nuScenes (open-loop): judge how well the model predicts future paths from recorded data.

- Bench2Drive (closed-loop): put the model in a driving simulator to actually control the car in real time.

They also checked many tasks:

- 3D detection (find cars, bikes, people),

- online mapping (lanes, dividers),

- motion forecasting (where others will move),

- driving-focused Q&A (understanding scenes and instructions).

What they found and why it matters

- Better overall driving performance:

- Closed-loop (simulator) on Bench2Drive: UniDriveVLA reached top Driving Score among models trained with similar data, and showed strong handling of tricky maneuvers like merging and overtaking.

- Open-loop (prediction) on nuScenes: strong path accuracy, especially when the model wasn’t given extra ego-vehicle state, showing it learned good scene understanding from vision alone.

- Good balance of skills:

- Strong perception: competitive results for detecting objects and drawing road maps.

- Solid planning: low path error and competitive collision rates.

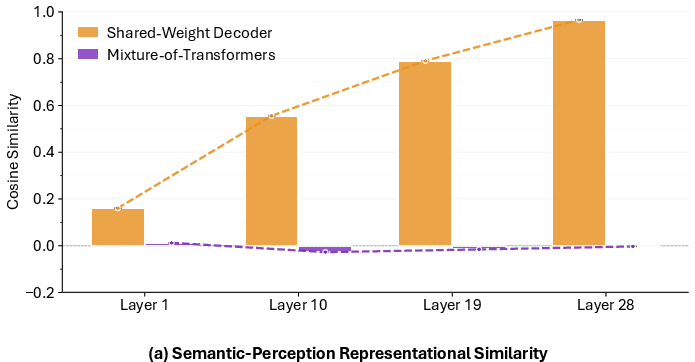

- Preserved reasoning: by decoupling experts, the model kept its language understanding instead of losing it when perception was added.

- Why this is important:

- Self-driving needs both “brains” (reasoning and knowledge) and “senses” (precise spatial awareness). Many systems improve one and harm the other. This model shows you can have both by separating who learns what and how they share.

What this could lead to (implications)

- Simpler, more capable driving stacks: one unified model handling many tasks can reduce complexity and make updates easier.

- Safer, more reliable driving: better perception plus smarter planning can reduce mistakes in busy, complex scenes.

- Better generalization: keeping the model’s reasoning ability may help it handle unusual situations by using broader world knowledge.

- A foundation for future improvements: the “three specialists + careful communication + sparse perception + staged training” recipe can be applied to other robots or vehicles.

In short, UniDriveVLA is like organizing a well-run team inside the car’s AI—each expert does their job, they share just enough information at the right times, and together they drive more safely and smartly.

Knowledge Gaps

Knowledge Gaps, Limitations, and Open Questions

Below is a consolidated list of the key uncertainties and open problems the paper leaves unresolved. Each point is framed to be concrete and actionable for future research.

- Lack of real-world closed-loop validation: Results are limited to CARLA (Bench2Drive) and open-loop nuScenes. On-vehicle, real-traffic evaluations (urban/highway, varied geographies) are missing to verify safety, robustness, and latency under deployment constraints.

- Limited domain generalization analysis: No evaluation under strong domain shifts (night, rain/snow, glare, sensor obstruction, rare road layouts). Tests on additional datasets (nuPlan, Waymo Open, Argoverse/Motion, ONCE, BDD100K) are needed to quantify robustness.

- Real-time performance and resource footprint unreported: Inference throughput, per-frame latency, memory, and power on target hardware (e.g., NVIDIA Orin-class ECUs) are not provided; the impact of MoT and masked joint attention on worst-case latency and scheduling is unknown.

- Sparse-vs-dense perception trade-offs not characterized: The proposed sparse perception improves some metrics, but there is no head-to-head comparison against dense BEV/occupancy pipelines on the same stack (accuracy, robustness, compute) or analysis of failure modes where sparse querying misses critical context.

- Motion forecasting gap: Motion metrics are substantially weaker than specialized baselines (e.g., minADE/minFDE of 1.264/2.121 vs ~0.6/0.96 in prior work). It remains unclear how to incorporate stronger forecasting heads, multi-hypothesis distributions, or interaction-aware objectives without harming reasoning.

- Comfort degradation in closed-loop: The reported Comfortness is low (11.78), suggesting high jerk/acceleration or oscillatory control. Methods for comfort-aware planning (e.g., jerk/latency penalties, dynamics-aware loss, model-predictive smoothing) are not explored.

- Uncertainty- and risk-aware planning absent: The model outputs a single trajectory without calibrated uncertainty; there is no risk-sensitive objective, epistemic/aleatoric modeling, or safety envelope integration (e.g., RSS-style constraints).

- No safety guarantees or formal verification: There is no analysis of worst-case behavior, rule compliance, or formal safety constraints incorporated into the action expert or the attention masks.

- Cross-expert communication design underexplored: The masked joint attention pattern is hand-designed; there is no ablation of alternative visibility patterns, learned masks/routers, or dynamic token routing policies and their impact on reasoning–perception interference.

- Expert specialization and scaling laws unclear: The choice of three experts (understanding, perception, action) is not justified via scaling experiments; it is unknown whether additional experts (e.g., map, prediction, occupancy) or different expert capacities would yield better Pareto trade-offs.

- Token budget and mask granularity not analyzed: The number/length of tokens per expert, masking granularity, and their effect on latency, memory, and performance are not reported or tuned systematically.

- Training strategy sensitivity poorly quantified: The three-stage progressive schedule (ratios, LoRA placement, LR multipliers, EMA) lacks sensitivity analysis; it is unclear which components are essential to preserving VLM reasoning while improving planning.

- Data mixture and supervision quality: The curation process (driving vs general data, filtering criteria, license/bias) is only described at a high level; the model’s reliance on Think2Drive demonstrations (and no PDM-Lite) leaves open how demonstration quality affects closed-loop skill acquisition.

- No on-policy or RL fine-tuning: Unlike recent VLA/RL works, there is no DAgger-style or RLHF/RLAIF fine-tuning to address distribution shift, compounding errors, or policy alignment in interactive long-horizon tasks.

- Navigation command handling under-specified: The model accepts L_nav but there is no evaluation with varied instruction modalities (free-form language vs route waypoints vs HD-map constraints) or under ambiguous/conflicting guidance.

- Limited interpretability of decision-making: While perception outputs are interpretable, the model provides no causal explanations or counterfactual rationales for actions, nor mechanisms to audit cross-expert influences on critical decisions.

- General multimodal capability degradation: The model underperforms general-purpose VLMs on MMStar/MMMU/ChartQA/MME after driving adaptation; strategies to preserve or recover general capabilities (e.g., continual learning, rehearsal, modular adapters, retrieval augmentation) are not investigated.

- Sensor modality limitations: Experiments use multi-view cameras only; the benefits and integration of LiDAR/radar/IMU/GNSS, as well as robustness to sensor dropouts and desynchronization, are not quantified.

- Calibration and synchronization robustness: The impact of camera calibration errors, timestamp jitter, rolling-shutter distortions, and time alignment on sparse querying and planning is not studied.

- Occupancy modeling is auxiliary and unvalidated: Occupancy is used as a latent auxiliary branch; there is no direct evaluation of occupancy accuracy/consistency or its causal contribution to planning improvements.

- Map prediction scope and consistency: Online map outputs are evaluated with mAP, but temporal consistency, drift, and alignment with HD-map priors are not assessed; the utility of map confidence in planning is unclear.

- Failure case taxonomy missing: There is no qualitative/quantitative breakdown of failures (e.g., occluded pedestrian, cut-ins, unprotected lefts, unusual signage), making it hard to target improvements.

- Loss balancing and multi-task interference: Fixed loss weights are used; no exploration of adaptive weighting (e.g., GradNorm, uncertainty weighting) or conflict-aware optimization to better balance understanding, perception, and action.

- Multi-modal futures and multi-agent interactions: The action head uses flow matching toward a single velocity sequence; handling of multi-modal future hypotheses, negotiation with other agents, and game-theoretic interactions is not addressed.

- Masked joint attention stability: The stability/robustness of masked joint attention to noisy tokens (e.g., spurious semantic cues) and its susceptibility to language hallucinations affecting planning are not analyzed.

- Scalability and portability: The approach is evaluated with Qwen3-VL-2B/8B backbones; how performance/latency scales with larger/smaller VLMs, different VLM families, or parameter-efficient adapters is not systematically studied.

- Reproducibility details: Key hyperparameters (token counts per expert, attention heads, precision/mixed-precision policies, dataset sizes after filtering) and code-level defaults impacting performance/latency are not fully disclosed, impeding exact replication.

- Ethical, legal, and governance considerations: Data provenance, privacy, annotation bias, and policy compliance (e.g., localization-specific rules-of-the-road) are not discussed; mechanisms for auditability and accountability are absent.

- Sim2Real transfer: There is no explicit methodology or evidence for transferring skills learned in simulation (Bench2Drive) to real-world deployment (domain randomization, dynamics matching, sensor modeling, or adaptation strategies).

- Robustness to long-horizon and high-speed scenarios: Temporal horizon limits, memory mechanisms for long-term context, and high-speed highway performance are not evaluated; potential degradation under long occlusions or delayed observability is unknown.

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now, leveraging UniDriveVLA’s decoupled Mixture-of-Transformers experts, sparse perception, and three-stage training.

- Automotive/Autonomous Vehicles: Camera-first end-to-end planner retrofit

- Use case: Integrate UniDriveVLA as a mid-level trajectory planner in existing camera-based stacks to boost planning accuracy and efficiency (demonstrated gains on Bench2Drive and nuScenes without ego-status).

- Workflow/tools: Fine-tune with the provided three-stage strategy (full VLM pretrain → joint LoRA updates with reduced LR → expert specialization), use Qwen3‑VL backbone, SigLIP‑2 vision encoder, and masked joint attention. Validate closed-loop in CARLA/Bench2Drive.

- Assumptions/Dependencies: Multi-view camera availability, GPU/automotive-grade compute, dataset alignment to deployment domain, safety case and on-road validation under local regulations.

- Consumer Software/ADAS: Dashcam assistant for incident triage and scene explanation

- Use case: Post-drive analysis that extracts detection, map elements, occupancy, and motion forecasts, coupled with driving-oriented VQA to generate concise incident narratives for users and claims departments.

- Workflow/tools: Batch-process dashcam footage; expose “Explain my drive” reports with heatmaps and trajectory overlays; human-in-the-loop review.

- Assumptions/Dependencies: Sufficient visual coverage (single/multi-view), domain adaptation for night/adverse weather, privacy and data retention policies.

- Simulation & Validation (Industry/Academia): Closed-loop evaluation harness

- Use case: Build a CARLA-based pipeline to measure behavior-level skills (merging, overtaking, give-way, emergency brake) and iterate policy improvements.

- Workflow/tools: Bench2Drive scenarios, DriveBench for understanding tasks, ablation scripts to isolate perception/planning components.

- Assumptions/Dependencies: Scenario fidelity vs. real-world, bridging sim-to-real gap, alignment of simulator dynamics to target fleet vehicles.

- Data Operations: Labeler-assist for detection, mapping, and occupancy

- Use case: Use sparse perception outputs as pre-labels in 3D detection and online map annotation workflows to reduce manual labeling time and cost.

- Workflow/tools: Integrate with CVAT/Label Studio; confidence-thresholding; uncertainty flags; iterative human corrections.

- Assumptions/Dependencies: Dataset-specific calibration; quality assurance gates; domain shift monitoring across cities and seasons.

- Fleet Operations/Mobility: Remote monitoring and root-cause analysis

- Use case: Generate post-mission summaries combining motion forecasts with language explanations to quickly diagnose disengagements or incidents.

- Workflow/tools: Telemetry and video ingestion; automated “why” narratives; KPI dashboards for collision rate, trajectory L2, efficiency.

- Assumptions/Dependencies: Secure data pipelines, operational SOPs for incident review, access to multi-view video.

- Robotics (Warehousing/Delivery): Expert-decoupled policy in mobile robots

- Use case: Transfer the MoT + masked joint attention design to robotics tasks that require separating semantic instructions (LLM), spatial perception, and action planning.

- Workflow/tools: Replace driving-specific queries with domain-specific sparse queries (e.g., aisle landmarks, pallet IDs); retrain experts with warehouse datasets.

- Assumptions/Dependencies: Task-specific data and evaluation suites; safe motion controllers; on-edge inference constraints.

- ML Engineering/Software: Reusable MoT components and masked joint attention layer

- Use case: Adopt the open-source code to build modularized, decoupled multimodal systems beyond driving (e.g., inspection bots, camera analytics).

- Workflow/tools: PyTorch modules for expert-specific projections, masking patterns, flow-matching action heads; ONNX/TensorRT export for inference.

- Assumptions/Dependencies: Licensing compatibility, internal CI/CD and MLOps for model deployment, quantization/latency targets.

- Academic Research: Representation interference diagnostics and mitigation

- Use case: Replicate cosine similarity analyses to study token collapse in shared decoders and test decoupling strategies in other multimodal settings.

- Workflow/tools: Layer-wise similarity probes; ablations of masks and cross-expert coupling; benchmarks on VQA + spatial tasks.

- Assumptions/Dependencies: Access to training logs and intermediate features; reproducible experimental setups.

- Education/Training: Course labs in unified perception-reasoning-planning

- Use case: Teach modern autonomy stacks with hands-on labs using the three-stage training pipeline and sparse perception queries.

- Workflow/tools: nuScenes/Bench2Drive subsets, DriveBench tasks, guided ablations and evaluation scripts.

- Assumptions/Dependencies: Compute availability for students; curated, smaller datasets for classroom timelines.

- Insurance/Legal (Finance/Policy): Claims analysis from dashcam

- Use case: Generate scene reconstructions and trajectory narratives to support claims adjudication and liability assessment.

- Workflow/tools: Batch ingestion, evidence timelines, detection/map overlays for collision context; human review.

- Assumptions/Dependencies: Regulatory acceptance of ML evidence, bias and fairness audits, data privacy and consent.

Long-Term Applications

Below are strategic directions that require further research, scaling, validation, and/or regulatory alignment before broad deployment.

- Automotive: Explainable L3/L4 autonomy with unified perception-reasoning-planning

- Use case: Production-grade autonomous driving that preserves VLM reasoning while delivering robust spatial perception and safe policies.

- Potential products/workflows: Integrated safety case tooling; continuous learning with RL fine-tuning; standardized narrative logs from understanding expert.

- Assumptions/Dependencies: Extensive real-world testing, redundancy/failover, sensor fusion beyond cameras, functional safety (ISO 26262), SOTIF, and regulatory certification.

- Policy/Regulation: Standardized explainability and audit trails for AV behavior

- Use case: Use the understanding expert to produce compliant, human-readable rationales for maneuvers and errors.

- Potential products/workflows: “Behavior report” schemas, third-party auditors, incident replay with causality claims.

- Assumptions/Dependencies: Consensus standards, verifiability of language outputs, governance over model updates.

- Automotive/Software: Natural-language controllable driving policies

- Use case: Safe prompt-based “policy edits” (e.g., “drive more conservatively in rain”) that adjust planning via the action expert without retraining.

- Potential tools: Guarded prompt interfaces; safety filters; reward-shaping via RLHF/DPO.

- Assumptions/Dependencies: Robust safety constraints, formal verification of policy changes, driver/operator consent.

- Multi-Sensor Fusion (Automotive/Robotics): Extend sparse perception to LiDAR/Radar

- Use case: Introduce multi-modal sparse queries to boost robustness in adverse conditions.

- Potential tools/workflows: Cross-modal tokenization, calibration pipelines, fusion training curricula.

- Assumptions/Dependencies: High-quality multi-sensor datasets, hardware integration costs, complexity of synchronization.

- Smart City/Mapping: City-scale continuous online mapping from fleets

- Use case: Aggregate perception outputs to maintain up-to-date maps (lane boundaries, dividers, occupancy), improving routing and infrastructure planning.

- Potential products/workflows: Map-update services, anomaly alerts for construction/road closures.

- Assumptions/Dependencies: Privacy safeguards, standardized data exchanges with municipalities, edge/on-vehicle compute budgets.

- Finance/Insurance: Usage-based risk scoring and safety coaching

- Use case: Derive risk indices from motion forecasts, occupancy proximity, and collision proxies to inform premiums and driver coaching programs.

- Potential tools/workflows: Risk dashboards; periodic coaching summaries; insurer APIs.

- Assumptions/Dependencies: Regulatory approval, fairness metrics, transparency on model decisions.

- Teleoperation/Remote Assistance (Mobility): VLA co-pilot for operators

- Use case: Provide real-time semantic summaries and spatial priors to assist remote drivers in edge cases.

- Potential tools/workflows: HMI overlays with planned trajectories, risk heatmaps, natural-language alerts.

- Assumptions/Dependencies: Low-latency connectivity, human factors validation, liability frameworks.

- Daily Life/ADAS: Real-time driver coaching and hazard commentary

- Use case: On-device assistant that explains upcoming hazards or complex traffic scenarios.

- Potential products/workflows: Voice-based guidance, configurable comfort/efficiency modes linked to action expert outputs.

- Assumptions/Dependencies: Stable sub-100 ms inference on automotive SoCs, false-positive mitigation, user acceptance.

- ML Systems/Hardware: Edge deployment via quantized MoT experts

- Use case: Compress and quantize expert pathways to run reliably on automotive-grade hardware (e.g., Orin, Journey).

- Potential tools/workflows: Post-training quantization, distillation across experts, mixed-precision masked attention kernels.

- Assumptions/Dependencies: Performance-efficiency trade-offs, memory bandwidth constraints, thermal envelopes.

- Cross-Domain Robotics (Agriculture/Drones): Unified VLA for new platforms

- Use case: Adapt sparse perception and expert decoupling to aerial or off-road contexts for semantic tasking + safe action planning.

- Potential products/workflows: Domain-specific query banks; flight/terrain-aware motion objectives; masked cross-expert transfer.

- Assumptions/Dependencies: Domain-tailored datasets, safety-critical control integration, environmental robustness.

- Public Safety/Emergency Response: Incident reconstruction and actionable insights

- Use case: Post-event analysis that merges trajectories, occupancy, and language narratives to inform investigations and infrastructure fixes.

- Potential tools/workflows: Standardized report generators; integration with city incident management systems.

- Assumptions/Dependencies: Legal admissibility, data governance, community transparency.

Glossary

- 3D Q-Formers: Transformer modules designed to extract and align 3D spatial features with LLMs for multimodal reasoning. Example: "3D Q-Formers"

- Action decoders: Network components that map high-level features to concrete control actions or trajectories. Example: "couple VLMs with action decoders"

- Autoregressive language modeling: Training objective where the model predicts the next token conditioned on previous tokens. Example: "autoregressive language modeling"

- BEV encoders: Networks that transform multi-view images into bird’s-eye-view feature maps for spatial reasoning. Example: "incorporate BEV encoders"

- BEV grid constructions: Dense top-down spatial discretizations used to represent the scene for perception and planning. Example: "BEV grid constructions"

- Bench2Drive: A closed-loop driving benchmark built on CARLA for evaluating autonomous planning and control. Example: "closed-loop evaluation on Bench2Drive."

- Bird’s-eye-view representations: Top-down projections of the scene used to aggregate spatial cues across views. Example: "dense bird's-eye-view representations"

- CARLA simulator: An open-source simulator for urban driving research and evaluation. Example: "CARLA simulator"

- Causal masking: Attention masking strategy that restricts tokens to attend only to preceding tokens to preserve autoregressive behavior. Example: "understanding tokens follow causal masking"

- Catastrophic forgetting: Degradation of previously learned capabilities when fine-tuning on new tasks. Example: "mitigates catastrophic forgetting"

- Closed-loop evaluation: Assessment where the model’s actions are executed in a simulator/environment, affecting future observations. Example: "closed-loop driving evaluation."

- Collision rate: Metric measuring the proportion of predicted or executed trajectories that result in collisions. Example: "collision rate"

- Cosine similarity: A measure of angular similarity between feature vectors used to analyze representation alignment. Example: "Cosine similarity between LLM tokens and perception tokens across layers."

- Decoupled parameter spaces: Architectural design where different tasks/modalities use separate parameter subsets to reduce interference. Example: "decoupled parameter spaces"

- Deformable feature aggregation: Mechanism that samples and aggregates features from adaptively learned spatial offsets for efficient perception. Example: "deformable feature aggregation"

- Diffusion LLMs (DLLMs): LLMs employing diffusion-style generative processes to handle continuous outputs like actions. Example: "Diffusion LLMs (DLLMs)"

- DriveBench: A benchmark for evaluating driving-oriented perception, prediction, planning, and behavior understanding. Example: "DriveBench"

- Ego-state inputs: Vehicle’s own kinematic state (e.g., speed, acceleration, orientation) used as input to planning models. Example: "without ego-state inputs"

- End-to-end (E2E) driving models: Systems that learn a direct mapping from sensor inputs to driving actions or trajectories. Example: "end-to-end (E2E) driving models"

- Exponential Moving Average (EMA): A parameter smoothing technique that maintains a decayed running average of weights during training. Example: "use EMA during training."

- Feature collapse: Undesirable convergence where different features become nearly identical, reducing representational diversity. Example: "feature collapse into nearly identical representations,"

- Flow matching: A generative training approach that learns continuous trajectories by matching probability flows between distributions. Example: "flow-matching-based trajectory generation"

- Low-Rank Adaptation (LoRA): Parameter-efficient fine-tuning technique that injects learnable low-rank updates into pretrained weights. Example: "Low-Rank Adaptation"

- Masked Joint Attention: Attention mechanism that enforces controlled cross-expert visibility via masks to coordinate understanding, perception, and action. Example: "Masked Joint Attention"

- Mixture-of-Experts (MoE): Architecture that routes inputs to specialized expert networks within a larger model for efficiency and capacity. Example: "Mixture-of-Experts (MoE) architecture"

- Mixture-of-Transformers (MoT): A transformer framework with multiple expert transformers specialized for different modalities or tasks. Example: "Mixture-of-Transformers (MoT) architecture"

- Motion forecasting: Predicting future trajectories of dynamic agents in the scene. Example: "motion forecasting"

- nuScenes: A large-scale autonomous driving dataset for perception and planning evaluation. Example: "on nuScenes"

- Occupancy prediction: Estimating which regions of space are occupied or free, often in a grid or voxelized representation. Example: "occupancy prediction"

- Online mapping: Real-time prediction of map elements (e.g., lanes, dividers) from sensor data. Example: "online mapping"

- Open-loop evaluation: Assessment using ground-truth future states to evaluate predicted trajectories without executing the control loop. Example: "open-loop evaluation on nuScenes"

- Query-driven paradigm: Perception approach that uses a sparse set of learned queries to extract and aggregate features from images. Example: "query-driven paradigm"

- Reinforcement learning (RL): Learning framework where policies are optimized using reward signals from interaction with an environment. Example: "reinforcement learning (RL)"

- Representation interference: Negative interaction where jointly optimizing different objectives entangles and degrades their representations. Example: "representation interference"

- Sparse perception paradigm: Strategy that uses sparse queries/tokens to model spatial information instead of dense 3D grids, improving efficiency. Example: "sparse perception paradigm"

- Spatio-temporal sampling: Techniques that sample features across space and time to capture motion and context. Example: "adaptive spatio-temporal sampling mechanisms"

- ST-P3: A planning evaluation protocol on nuScenes measuring trajectory errors and collisions over specific horizons. Example: "ST-P3"

- UniAD: A benchmark/protocol and model family for unified autonomous driving evaluation and training. Example: "UniAD"

- Vision-Language-Action (VLA): Models that integrate visual perception, language understanding, and action planning within a unified framework. Example: "Vision-Language-Action (VLA) models"

- Vision-LLMs (VLMs): Foundation models trained jointly on images and text to support multimodal understanding and reasoning. Example: "Vision-LLMs (VLMs)"

Collections

Sign up for free to add this paper to one or more collections.