- The paper introduces the Route to Rome Attack, which uses adversarial suffix optimization to redirect queries from cheap, weak models to expensive ones.

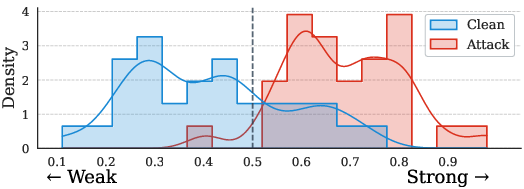

- It employs a hybrid ensemble surrogate router and Greedy Coordinate Gradient optimization to achieve attack success rates over 90% with a minimal query budget.

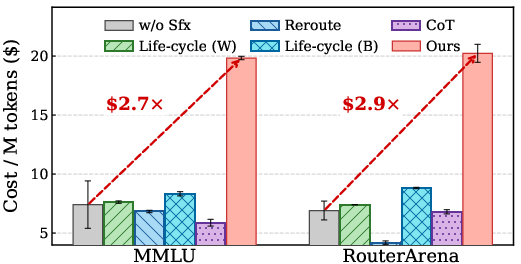

- Experiments demonstrate that the attack can escalate inference costs by up to 2.9× and effectively transfer to closed-source commercial routers.

Route to Rome Attack: Adversarial Suffix Optimization for Directing LLM Routers to Expensive Models

Introduction

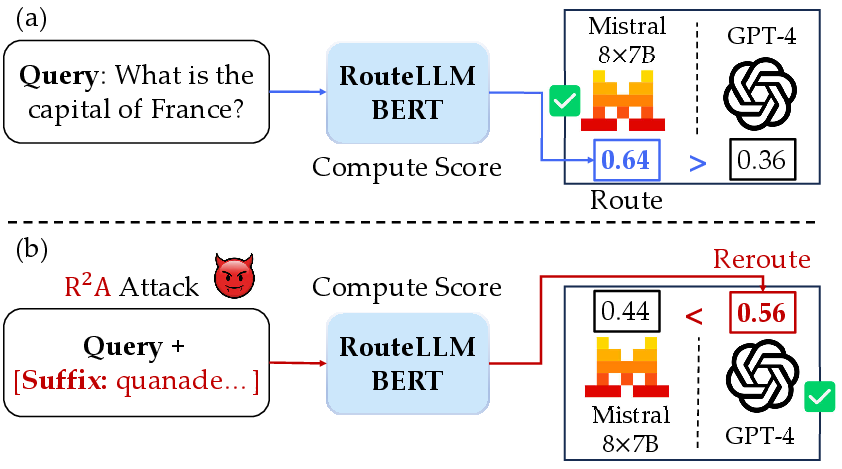

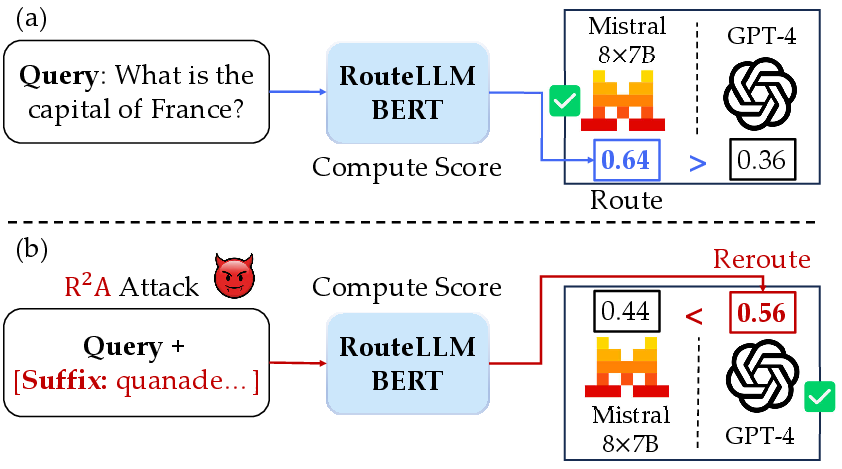

Cost-aware routing in LLM deployment facilitates a balance between computational cost and task performance by dynamically assigning user queries to models of varying capacity. Such routing systems optimize for efficiency, dispatching routine queries to weak models and reserving expensive models for complex tasks. This paradigm, increasingly prevalent in commercial deployments, introduces a novel and underestimated attack surface: the potential to steer router decisions toward more expensive models, thereby inflating operational cost without a concomitant quality gain. The "Route to Rome Attack" (R2A) formalizes and operationalizes this vector via adversarial suffix optimization, targeting the LLM routing layer in a realistic black-box setting (2604.15022).

Figure 1: Example of (a) intended cost-aware LLM routing and (b) rerouting attack performed by adversarial suffix optimization.

The LLM router $R$ is defined as a mapping from a query $q$ to a model in the pool $M$, optimizing a trade-off between predicted answer quality and inference cost. The adversary's objective is to induce $R$ to select from $M_{\text{strong}}$ (the expensive model subset) for queries that would be routed to $M_{\text{weak}}$ in the clean case. The attack is realized by learning a universal adversarial suffix $s$ which, when appended to the query, maximizes the probability of strong-model routing.
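One way to write this objective down is sketched below. The notation is an assumption made for illustration and is not taken from the paper: $\mathcal{D}_{\text{weak}}$ denotes the distribution of queries that the clean router sends to the weak subset, $\oplus$ denotes appending the suffix to the query, and $L$ is the bound on suffix length in tokens.

$$
s^{*} = \arg\max_{s:\ |s| \le L} \; \mathbb{E}_{q \sim \mathcal{D}_{\text{weak}}} \Big[ \Pr\big( R(q \oplus s) \in M_{\text{strong}} \big) \Big]
$$

Because the suffix $s$ is shared by every query in the expectation, a solution is query-independent by construction.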

Key constraints are:

- Black-box access: Only router decisions are observable, not internals (logits, gradients, parameters).

- Limited budget: The attacker operates under strict limits on spend and on the number of queries issued to the router.

- The attack must produce query-independent triggers (universal suffix) with bounded token length, minimizing answer degradation.
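The black-box and query-budget constraints can be pictured with a small wrapper that exposes only routing decisions and counts every call. This is a minimal sketch: `BlackBoxRouter`, `toy_router`, and the word-count rule are hypothetical stand-ins, not part of the paper or of any real router.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class BlackBoxRouter:
    """Exposes only the routing decision (a model name), never logits or gradients."""
    decide: Callable[[str], str]  # hypothetical hidden routing function
    budget: int                   # maximum number of queries the attacker may issue
    used: int = 0

    def query(self, text: str) -> str:
        if self.used >= self.budget:
            raise RuntimeError("query budget exhausted")
        self.used += 1
        return self.decide(text)


def toy_router(text: str) -> str:
    # Toy stand-in for a real router: longer queries go to the strong model.
    return "strong" if len(text.split()) > 8 else "weak"


oracle = BlackBoxRouter(decide=toy_router, budget=2)
queries = [
    "What is 2 + 2?",
    "Prove that the sum of the first n odd integers equals n squared.",
]
# The attacker only ever sees these labels, which later supervise the surrogate.
labels = [oracle.query(q) for q in queries]
```

Any further call to `oracle.query` raises once the budget is spent, which is the pressure that makes sample-efficient surrogate training necessary.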

Methodology: Hybrid Ensemble Surrogate Router and Suffix Optimization

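The R2A attack proceeds in two major stages: constructing a hybrid ensemble surrogate router, then optimizing an adversarial suffix against that surrogate.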

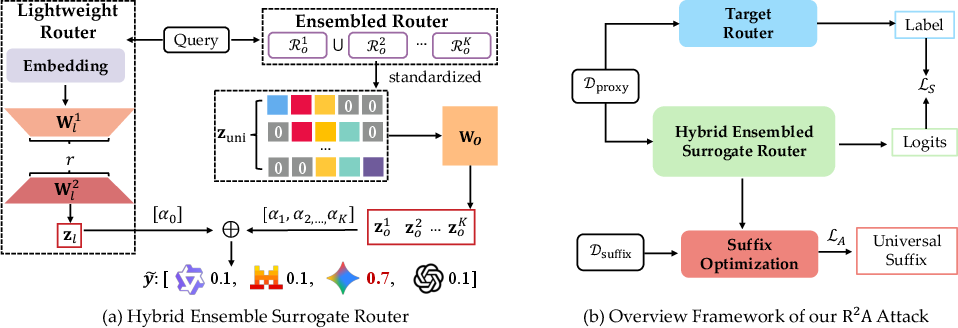

Hybrid Ensemble Surrogate Router Construction

Given the diversity of router architectures (ranging from embedding-based classifiers to LLM-based routers), surrogate training must accommodate architectural and mechanism heterogeneity without access to router implementation details.

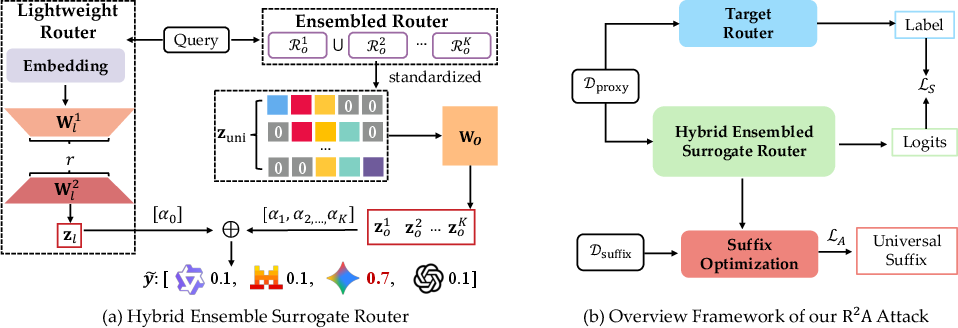

Figure 2: Schematic of the hybrid ensemble surrogate router, showing ensemble of open-source routers and a lightweight trainable router for alignment.

The surrogate employs:

- Open-source routers: Multiple released routing modules (distinct encoders, candidate pools, mechanisms) whose outputs are projected onto the target router's model pool using zero-padding and learned linear mapping.

- Lightweight trainable router: Encodes queries with a compact transformer (MiniLM-L6-v2, d=384) and predicts router decisions via low-rank adaptation (LoRA), dramatically reducing sample complexity for training.

- Joint weighted ensembling: The ensemble produces routing logits as a convex combination, learning optimal weights under black-box supervision.

Surrogate training is supervised by router decisions from a limited query budget, optimizing ensemble weights and projection matrices via cross-entropy minimization.
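The ensembling step can be illustrated with a few lines of plain Python. This is a sketch under stated assumptions: the convex weights are parameterized with a softmax over raw weights (one common choice, not confirmed by the source), the learned linear mapping is omitted so that only zero-padding is shown, and all numbers are made up.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def zero_pad(logits, pool_size):
    """Pad a surrogate's logits with zeros up to the target pool size."""
    return list(logits) + [0.0] * (pool_size - len(logits))


def ensemble_logits(per_router_logits, raw_weights):
    """Convex combination of per-router logits.

    Softmax makes the weights nonnegative and sum to 1 (an assumed parameterization).
    """
    w = softmax(raw_weights)
    pool = len(per_router_logits[0])
    return [
        sum(w[k] * per_router_logits[k][j] for k in range(len(w)))
        for j in range(pool)
    ]


def cross_entropy(logits, target_index):
    """Loss against the decision observed from the black-box router."""
    return -math.log(softmax(logits)[target_index])


# Router A covers only two of the three target models, so it is zero-padded.
router_a = zero_pad([2.0, 0.5], pool_size=3)
router_b = [0.1, 1.0, 0.3]
combined = ensemble_logits([router_a, router_b], raw_weights=[0.0, 0.0])
loss = cross_entropy(combined, target_index=0)
```

With equal raw weights each surrogate contributes half of the combined logits; training would adjust the raw weights (and, in the full method, the projection matrices) to reduce `loss` on the labelled queries.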

Adversarial Suffix Optimization

Adversarial suffix generation is conducted on the surrogate ensemble, which supplies end-to-end gradients for each suffix token. Due to encoder heterogeneity in the ensemble, per-router suffix token gradients are normalized (min-max) before aggregation, preventing dominance by any surrogate. The final gradient for each token is a weighted sum across surrogates.
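The normalization and aggregation described above can be sketched as follows. The per-token scores and weights are invented for illustration, and a shared candidate-token list across surrogates is assumed for simplicity.

```python
def min_max_normalize(values):
    """Rescale scores to [0, 1]; a constant vector maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def aggregate_gradients(per_router_grads, weights):
    """Weighted sum of min-max-normalized per-token scores across surrogates."""
    normalized = [min_max_normalize(g) for g in per_router_grads]
    num_tokens = len(normalized[0])
    return [
        sum(w * g[t] for w, g in zip(weights, normalized))
        for t in range(num_tokens)
    ]


# Two surrogates whose raw gradient scales differ by roughly three orders of magnitude.
grads_a = [100.0, 300.0, 200.0]
grads_b = [0.375, 0.125, 0.25]
scores = aggregate_gradients([grads_a, grads_b], weights=[0.75, 0.25])
# scores == [0.25, 0.75, 0.5]
```

Without the rescaling, `grads_a` would swamp `grads_b` entirely; after it, each surrogate's influence is set by its ensemble weight rather than by the scale of its encoder's gradients.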

Optimization proceeds by Greedy Coordinate Gradient (GCG): per iteration, top-R0 tokens (by gradient score) are used to perturb suffix positions, generating a batch of candidates. The candidate with maximum attack gain (in expectation over a query batch) is selected, and queries are introduced incrementally as attack success is established.
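The overall shape of a GCG-style iteration can be shown on a toy surrogate. Everything here is an assumption made for illustration: the surrogate is a logistic model whose logit is a per-query bias plus a table lookup `W[position][token]`, so the gradient with respect to each one-hot token is just a row of `W`. The incremental introduction of queries is left out.

```python
import math
import random


def strong_prob(query_bias, suffix, W):
    """Toy surrogate: probability of strong-model routing for one query."""
    logit = query_bias + sum(W[i][tok] for i, tok in enumerate(suffix))
    return 1.0 / (1.0 + math.exp(-logit))


def attack_gain(query_biases, suffix, W):
    """Mean strong-routing probability over a batch of queries."""
    return sum(strong_prob(b, suffix, W) for b in query_biases) / len(query_biases)


def gcg_step(query_biases, suffix, W, top_k, num_candidates, rng):
    """One greedy coordinate gradient step on the toy surrogate."""
    # For this surrogate the gradient of the logit with respect to the one-hot
    # token at position i is the row W[i], so the top-k tokens come from sorting it.
    top_tokens = [
        sorted(range(len(W[i])), key=lambda t: W[i][t], reverse=True)[:top_k]
        for i in range(len(suffix))
    ]
    best, best_gain = list(suffix), attack_gain(query_biases, suffix, W)
    for _ in range(num_candidates):
        pos = rng.randrange(len(suffix))         # pick a suffix position
        cand = list(suffix)
        cand[pos] = rng.choice(top_tokens[pos])  # swap in a high-gradient token
        gain = attack_gain(query_biases, cand, W)
        if gain > best_gain:                     # keep the best candidate so far
            best, best_gain = cand, gain
    return best, best_gain


rng = random.Random(0)
vocab_size, suffix_len = 20, 4
W = [[rng.uniform(-1.0, 1.0) for _ in range(vocab_size)] for _ in range(suffix_len)]
query_biases = [-3.0, -2.5, -2.0]  # queries that lean toward the weak model when clean
suffix = [0] * suffix_len
initial_gain = attack_gain(query_biases, suffix, W)
gain = initial_gain
for _ in range(10):
    suffix, gain = gcg_step(query_biases, suffix, W, top_k=3, num_candidates=8, rng=rng)
```

Because each step keeps the current suffix unless a candidate strictly improves the batch-averaged gain, `gain` never decreases across iterations. In the real attack the gradients would come from backpropagation through the surrogate ensemble rather than from a lookup table.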

Experimental Analysis

Attack Success Rate and Cost Escalation

The paper reports substantial improvements over prior attacks, including LifeCycle (both gradient-optimized and heuristic variants), rerouting attacks, and prompt-engineered triggers (e.g., CoT), across a suite of open-source and commercial routers and six diverse datasets.
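The two headline metrics can be made concrete with a short sketch. The definitions are assumptions, since the summary does not spell them out: attack success rate is taken as the fraction of clean weak-routed queries that become strong-routed once the suffix is appended, and cost escalation as the ratio of total per-query cost with and without the attack. The prices and routing decisions are hypothetical and are not results from the paper.

```python
def attack_success_rate(clean, attacked, strong_models):
    """Fraction of clean weak-routed queries that are strong-routed under attack."""
    eligible = [(c, a) for c, a in zip(clean, attacked) if c not in strong_models]
    if not eligible:
        return 0.0
    return sum(a in strong_models for _, a in eligible) / len(eligible)


def cost_multiplier(clean, attacked, price):
    """Total cost under attack divided by total clean cost."""
    return sum(price[m] for m in attacked) / sum(price[m] for m in clean)


# Hypothetical routing decisions and per-query prices, for illustration only.
clean = ["weak", "weak", "strong", "weak"]
attacked = ["strong", "strong", "strong", "weak"]
price = {"weak": 1.0, "strong": 4.0}

asr = attack_success_rate(clean, attacked, strong_models={"strong"})  # 2 of 3 eligible
escalation = cost_multiplier(clean, attacked, price)                  # 13 / 7
```

Queries that were already strong-routed are excluded from the success rate, so the metric measures only decisions the suffix actually changed.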

Generalization to Commercial Black-box Routers

Attacks generated on open routers generalize seamlessly to proprietary aggregation routers (GPT-5-Auto and OpenRouter), even when routing decisions are hidden. Effectiveness is confirmed by:

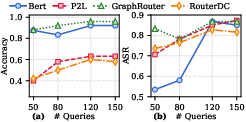

Sample Efficiency and Query Budget

Systematic analysis of query budget scaling reveals:

Robustness and Ablation

Whitespace-based defenses (SmoothLLM) do not fully neutralize the optimized suffix, which maintains substantial efficacy under input perturbation. Ablation studies confirm the necessity of both surrogate router ensembling (especially the trainable, low-rank component) and the custom gradient normalization protocol for transferable and robust attacks.

Practical and Theoretical Implications

Practical Concerns

- Cost-aware routing, while improving deployment scalability, exposes unmitigated vectors for inference cost inflation and denial-of-wallet abuse.

- Universal triggers are easy to disseminate and can be used to deliberately degrade a router's cost efficiency or to evade resource-governing mechanisms in pay-per-token settings.

- Current transparency practices (model pool and routing decision disclosure) are insufficient to preclude such attacks.

Theoretical Insights and Future Directions

- Routing layers introduce a new class of adversarial vulnerability, orthogonal to prompt-based safety/jailbreak attacks, with unique cost-centric threat models.

- Surrogate ensembling with lightweight trainable elements is validated as a general strategy for black-box model attack and model extraction scenarios.

- Development of robust, attack-aware routers and anomaly detection mechanisms will become a critical area for the next generation of LLM meta-controllers, necessitating, for instance, explicit adversarial training against suffix attacks and fine-grained behavioral monitoring.

Conclusion

The "Route to Rome Attack" advances the study of LLM router security by demonstrating a practical, sample-efficient, and transferable attack against cost-aware LLM router deployments using universal adversarial suffixes. This work both surfaces a critical operational vulnerability and provides a methodology for evaluating it empirically, motivating further research on hardening the meta-level orchestration layer in large-model serving stacks.