Reinforcement Learning via Value Gradient Flow

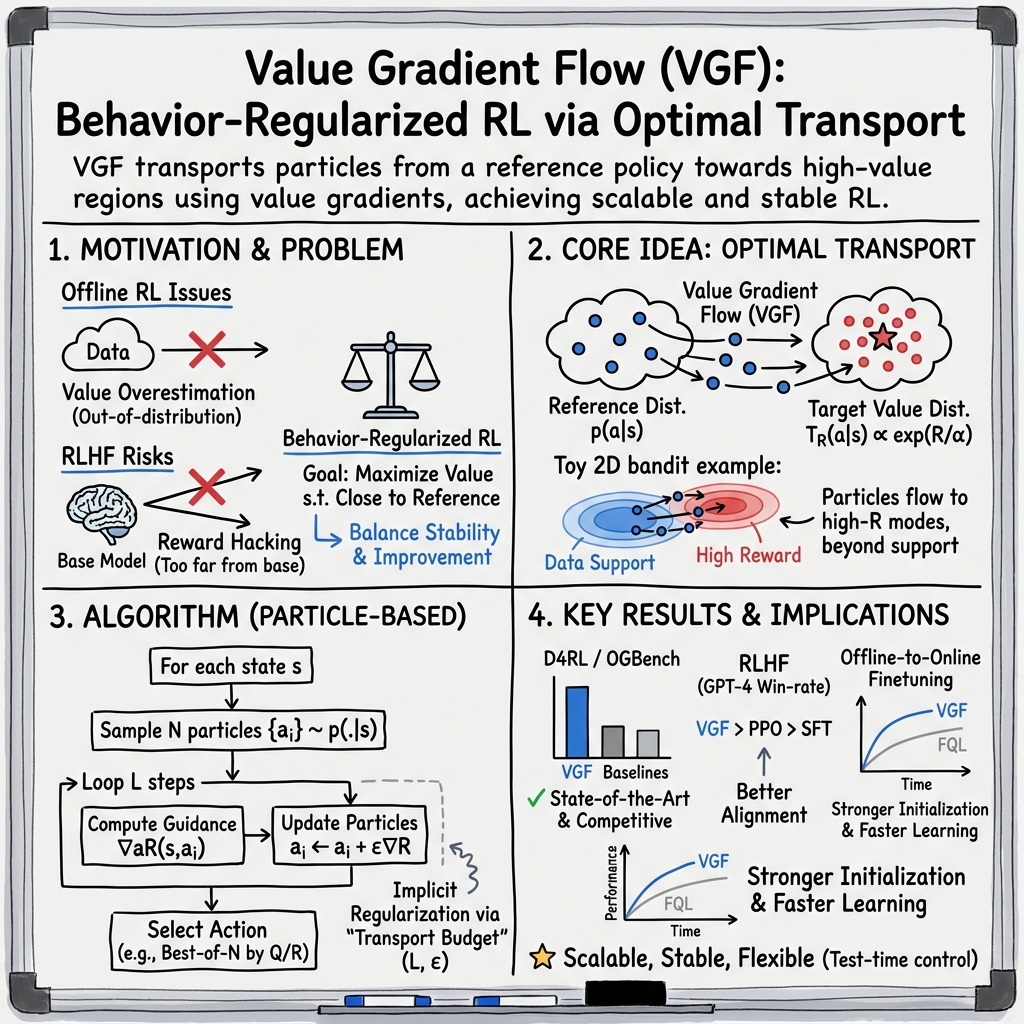

Abstract: We study behavior-regularized reinforcement learning (RL), where regularization toward a reference distribution (the dataset in offline RL or the base model in LLM RL finetuning) is essential to prevent value over-optimization caused by erroneous out-of-distribution extrapolation. Existing methods either rely on reparameterized policy gradients, which are difficult to scale to large generative models, or on rejection sampling, which can be overly conservative when attempting to move beyond the behavior support. In this paper, we propose Value Gradient Flow (VGF), a scalable new paradigm for behavior-regularized RL. VGF casts behavior-regularized RL as an optimal transport problem that maps the reference distribution to the value-induced optimal policy distribution. We solve this transport problem via discrete gradient flow, where value gradients guide particles initialized from the reference distribution. Our analysis shows that VGF imposes regularization implicitly by controlling the transport budget. VGF eliminates explicit policy parameterization while remaining expressive and flexible, which enables adaptive test-time scaling by adjusting the transport budget. Extensive experiments demonstrate that VGF significantly outperforms prior methods, achieving state-of-the-art results on offline RL benchmarks (D4RL, OGBench) and LLM RL tasks. Code and runs can be found at https://ryanxhr.github.io/vgf.

Explain it Like I'm 14

A clear, simple explanation of “Reinforcement Learning via Value Gradient Flow (VGF)”

1) What is this paper about?

This paper introduces a new way to train decision-making systems called Value Gradient Flow (VGF). It helps robots and AI (including LLMs) learn from existing data without going wild or making risky choices. The big idea is to gently guide what the system already does well toward even better actions, using small, smart nudges.

2) What questions are the researchers asking?

In everyday terms, they ask:

- How can we improve a model’s choices without letting it drift too far from what we know is safe or reliable (like sticking close to the dataset or base model)?

- Can we avoid complicated training tricks that are hard to use with big generative models (like diffusion models)?

- Is there a way to control how much we “push” a model during training and testing, so we can scale up improvements when it’s safe?

3) How does VGF work?

Think of this as moving a crowd toward better seats in a stadium:

- You start with a “reference crowd” (the safe, known behavior). In offline RL, that’s the dataset; for LLMs, it’s the base model’s outputs.

- You look at a “value map” that scores how good each possible action or answer is (this is a value function for RL or a reward model for text).

- You place several “particles” (think: multiple candidate actions/responses sampled from the reference) on the map.

- Then you nudge each particle a tiny bit toward better areas (higher value). You do this for a small, fixed number of steps.

These tiny nudges follow the “gradient” (the direction of improvement), like walking uphill to higher scores. After a few steps, the moved particles form a new, better “implicit policy” (how the model will act), even though you never trained a separate policy network.

A helpful analogy: optimal transport is like reshaping a sand pile into a new shape with the least effort. VGF treats improving behavior as moving sand (probability) from the old pile (reference) toward an “ideal” pile shaped by value scores—using short, controlled moves.

- Transport budget = how many steps and how far each step pushes. This is the built‑in safety: small budget means staying close to the original behavior; a larger budget reaches further improvements.

- Test-time control: After training, you can choose a bigger budget if you trust your value model, giving “test-time scaling” (more improvement without retraining). If you’re unsure, set the budget to zero and just pick the best of a few samples from the reference (“best-of-N”), which is the most conservative setting.
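The nudging loop described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation. It makes several simplifying assumptions:

- a single fixed state and a scalar action;
- plain gradient ascent on a known value function, with no kernel or repulsive term;
- hypothetical helper names (`vgf_act`, `q_example`, `grad_q_example`).

Setting `n_steps=0` reduces it to best-of-N sampling from the reference.

```python
import random
from typing import Callable


def vgf_act(
    sample_ref: Callable[[], float],  # draws one action from the reference (e.g. a BC policy)
    q: Callable[[float], float],  # value estimate Q(s, a) for a fixed state s
    grad_q: Callable[[float], float],  # dQ/da at the same state
    n_particles: int = 5,  # N: number of candidate actions
    n_steps: int = 3,  # L: number of gradient steps (part of the transport budget)
    step_size: float = 0.1,  # step size (the other part of the transport budget)
) -> float:
    """Return the best particle after n_steps of value-gradient ascent (toy sketch)."""
    # 1) Initialize particles from the reference distribution.
    particles = [sample_ref() for _ in range(n_particles)]
    # 2) Nudge every particle uphill on the value landscape.
    for _ in range(n_steps):
        particles = [a + step_size * grad_q(a) for a in particles]
    # 3) Pick the highest-value particle (best-of-N).
    return max(particles, key=q)


def q_example(a: float) -> float:
    """Hypothetical value function with its peak at a = 2.0."""
    return -(a - 2.0) ** 2


def grad_q_example(a: float) -> float:
    """Derivative of q_example with respect to the action."""
    return -2.0 * (a - 2.0)


if __name__ == "__main__":
    for steps in (0, 1, 3, 10):
        random.seed(0)  # same reference samples for every budget
        a = vgf_act(lambda: random.gauss(0.0, 1.0), q_example, grad_q_example, n_steps=steps)
        print(f"L={steps:2d}  action={a:.3f}  Q={q_example(a):.3f}")
```

With the same reference samples, a larger budget moves the chosen action closer to the value peak. If the value model were wrong, a larger budget would also carry the action further from the reference. That is why the budget doubles as the regularizer.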

For LLMs:

- Actions are discrete tokens (words or word pieces), so VGF moves in a continuous “hidden space” (like embeddings or latent codes) and only converts back to text at the end.

- This avoids complicated backpropagation through multi-step sampling that big generative models use.

- It gives you simple, fast, inference-time steering: push outputs toward higher reward without heavy RL loops.
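Below is a toy sketch of the “move in hidden space, decode at the end” idea. It rests on loudly stated assumptions:

- a hypothetical four-word vocabulary with 2-D embeddings;
- a linear reward whose gradient is a fixed direction;
- nearest-neighbor decoding back to tokens.

The paper's actual surrogate space and decoder may differ. The sketch only shows why no gradient has to pass through discrete sampling.

```python
import math
from typing import Callable, Dict, List, Sequence, Tuple

Vec = Tuple[float, ...]

# Hypothetical toy embedding table (real models use learned, high-dimensional embeddings).
VOCAB: Dict[str, Vec] = {
    "bad": (-1.0, 0.0),
    "ok": (0.0, 0.0),
    "good": (1.0, 0.0),
    "great": (2.0, 0.0),
}


def nearest_token(e: Vec, vocab: Dict[str, Vec]) -> str:
    """Decode a continuous vector to the closest token embedding (Euclidean)."""
    return min(vocab, key=lambda tok: math.dist(e, vocab[tok]))


def flow_then_decode(
    tokens: Sequence[str],
    vocab: Dict[str, Vec],
    reward_grad: Callable[[Vec], Vec],  # gradient of the reward w.r.t. one embedding
    n_steps: int,
    step_size: float,
) -> List[str]:
    """Run gradient ascent in embedding space, then map back to text once."""
    embs = [vocab[t] for t in tokens]
    for _ in range(n_steps):
        embs = [tuple(x + step_size * g for x, g in zip(e, reward_grad(e))) for e in embs]
    return [nearest_token(e, vocab) for e in embs]


# Hypothetical linear reward r(e) = w . e, so its gradient is the constant w.
W: Vec = (1.0, 0.0)


def linear_reward_grad(e: Vec) -> Vec:
    return W


if __name__ == "__main__":
    for steps in (0, 2, 4):
        print(steps, flow_then_decode(["ok", "bad"], VOCAB, linear_reward_grad, steps, 0.5))
```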

4) What did they find, and why is it important?

Main takeaways:

- Strong results on standard offline RL benchmarks (D4RL and OGBench): VGF beats or matches other top methods, especially on tough tasks like AntMaze and puzzle-like environments.

- Better RLHF (training LLMs from human feedback): VGF achieved higher “win rates” (judged by GPT-4) than common methods like PPO and DPO on datasets like TL;DR and Anthropic Helpful/Harmless conversations.

- Adaptive scaling: Increasing the test-time steps often improves performance if the value model generalizes well. If you’re worried about mistakes, you can dial the steps down—even to zero—to stay conservative.

- Not overly conservative: Unlike methods that stick strictly to the original data/model, VGF can move beyond the “support” (it can find new, better behaviors) while still controlling how far it goes.

- Stable and scalable: It avoids unstable training tricks and works nicely with modern generative models (diffusion/flow), because it doesn’t require differentiating through their whole sampling process.

Why this matters:

- In offline RL, it’s hard to improve beyond the dataset without making dangerous guesses. VGF offers safer, bounded improvements.

- For LLMs, it offers an easier path to align with preferences (better answers) without complex RL training pipelines, and with simple knobs at inference time.

5) What’s the bigger impact?

VGF offers a practical, flexible recipe for improving AI safely:

- One knob to rule them all: the transport budget cleanly controls how far you deviate from known-safe behavior. That makes tuning easier.

- Works with powerful generators: It plugs into diffusion/flow models and LLMs without the mess of backpropagating through long sampling chains.

- Test-time scaling: You can deploy a single trained model and dynamically adjust how bold or conservative it should be, depending on context or confidence.

- Better alignment: For LLMs, this can reduce reward hacking or over-optimization by letting you enforce gentle, controlled nudges rather than pushing too hard.

One limitation: VGF starts from the reference behavior, so if the starting data are heavily biased or low quality, how much it can improve depends on having a decent value/reward model to guide the nudges. The authors suggest future work on reweighting data and improving value models to handle long, complex tasks better.

In short: VGF is like a smart, adjustable steering wheel for AI learning—easy to turn a little or a lot—helping models improve safely and effectively without heavy, complicated training.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of what remains missing, uncertain, or unexplored in the paper, organized by theme to aid follow-on research.

Theoretical guarantees and problem formulation

- Lack of end-to-end guarantees: No proof that the particle-based VGF procedure converges to the optimal policy for the original constrained problem in Equation (1) (beyond the surrogate MaxEnt derivation and SVGD-style intuition).

- Unclear constraint satisfaction: The paper posits the “transport budget” as an implicit behavior regularizer but does not quantify how it maps to standard divergence constraints (e.g., KL, TV) or provide criteria to meet a target constraint ε in Equation (1).

- Incomplete linkage to the JKO scheme: The connection from the JKO/Wasserstein gradient flow formulation to the implemented particle update is heuristic; there is no formal equivalence or approximation error bound between the discrete gradient flow objective (Equation (6)) and the applied SVGD-like update (Equation (7)).

- Support expansion without safety bounds: Theorem 2 shows VGF can move beyond the behavior support, but there is no bound on the amount of OOD shift or its effect on performance/safety in offline RL (no high-probability guarantees under dataset misspecification).

- No performance or regret bounds: There are no finite-sample guarantees on value suboptimality/regret for VGF under standard offline RL assumptions (e.g., concentrability, coverage).

- MaxEnt vs. non-MaxEnt inconsistency: The analysis uses a MaxEnt surrogate to derive a repulsive term for multi-modality preservation, but the implementation “w/o MaxEnt” drops this term; the theoretical and practical consequences of removing the repulsive force (e.g., particle collapse, mode loss) are not analyzed.

- Lipschitz and kernel assumptions left unexamined: Theorem 1 requires R(s,a) to be c-Lipschitz and uses kernelized updates, but there is no discussion of how kernel choice/bandwidth, action dimensionality, and Lipschitz constants impact convergence, stability, or expressivity.
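To make the kernel and repulsive-term discussion concrete, here is a standard Stein variational gradient descent (SVGD) step (Liu & Wang, 2016). It targets a density proportional to exp(Q(a)/α), on scalar actions, with an RBF kernel.

This sketches the general technique the paper's update is described as resembling; it is not its Equation (7). The following are illustrative assumptions:

- the bandwidth `h` and the temperature `alpha`;
- the `repulsive` switch, which mimics the “w/o MaxEnt” variant that drops the repulsive force.

```python
import math
from typing import Callable, List


def rbf(x: float, y: float, h: float) -> float:
    """RBF kernel k(x, y) = exp(-(x - y)^2 / h)."""
    return math.exp(-((x - y) ** 2) / h)


def svgd_step(
    particles: List[float],
    grad_q: Callable[[float], float],  # dQ/da; target density p(a) is proportional to exp(Q(a) / alpha)
    alpha: float = 1.0,
    h: float = 1.0,
    step_size: float = 0.1,
    repulsive: bool = True,
) -> List[float]:
    n = len(particles)
    updated = []
    for xi in particles:
        phi = 0.0
        for xj in particles:
            k = rbf(xj, xi, h)
            # Driving term: kernel-weighted score, grad log p(xj) = grad_q(xj) / alpha.
            phi += k * grad_q(xj) / alpha
            if repulsive:
                # Repulsive term: d k(xj, xi) / d xj pushes xi away from nearby xj.
                phi += -2.0 * (xj - xi) / h * k
        updated.append(xi + step_size * phi / n)
    return updated


def run(particles: List[float], steps: int, repulsive: bool) -> List[float]:
    # Hypothetical value Q(a) = -a^2 / 2, so with alpha = 1 the target is a standard normal.
    for _ in range(steps):
        particles = svgd_step(particles, lambda a: -a, repulsive=repulsive)
    return particles


if __name__ == "__main__":
    start = [0.0, 0.01]  # two nearly collapsed particles
    print("with repulsion:   ", run(start, 200, repulsive=True))
    print("without repulsion:", run(start, 200, repulsive=False))
```

With the repulsive term, the two particles spread to roughly ±0.63, which is a fixed point of this update for h = α = 1. Without it, they never move further apart than their initial 0.01 gap and both drift toward the mode. That is the collapse risk noted above.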

Method design, hyperparameters, and stability

- No principled selection of transport budget: There is no method to select or adapt step size ε, number of steps L (train/test), and particle count N to meet target divergence, performance, or safety criteria; tuning remains task-specific.

- Missing per-state adaptivity: Transport budget is global; there is no mechanism to adapt L or ε per state based on uncertainty, value gradient magnitude, or OOD risk.

- Kernel choice and bandwidth: The kernel k(·,·) is unspecified in practice and not ablated; there is no guidance on bandwidth scaling in high-dimensional action spaces where kernels can degenerate.

- Particle count vs. diversity trade-offs: With small N (e.g., N=5), there is no analysis of particle collapse, diversity, or mode coverage vs. compute; no diagnostics or remedies are proposed.

- Gradient approximator bias: The auxiliary network f(s,a) trained to predict ∇aQ(s,a) introduces approximation bias; its impact on policy quality, stability, and sample efficiency is not evaluated or bounded.

- Value overestimation risks: Without explicitly conservative critics (e.g., CQL-style penalties), there is no analysis of overestimation and compounding bias when transporting beyond dataset support; no criteria for when to fall back to Ltest=0 beyond qualitative guidance.

- Best-of-N selection bias: Selecting the best particle by learned Q/reward risks optimistic bias; there is no calibration or uncertainty-aware selection strategy.
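The best-of-N selection bias noted above is the classic winner's-curse effect, and a short simulation shows its size. The setup is hypothetical:

- every candidate has the same true value (0);
- the learned Q adds independent Gaussian noise to it.

Under this setup, the gap between the selected candidate's estimated and true value is pure optimism. Real value errors are neither independent nor Gaussian, so treat the numbers as illustrative only.

```python
import random
import statistics


def best_of_n_optimism(n: int, noise_std: float, trials: int = 2000, seed: int = 0) -> float:
    """Average (estimated - true) value of the candidate picked by best-of-N."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(trials):
        true_values = [0.0] * n  # all candidates are equally good in truth
        estimates = [v + rng.gauss(0.0, noise_std) for v in true_values]
        best = max(range(n), key=lambda i: estimates[i])
        gaps.append(estimates[best] - true_values[best])
    return statistics.fmean(gaps)


if __name__ == "__main__":
    for n in (1, 5, 20):
        print(n, round(best_of_n_optimism(n, noise_std=1.0), 3))
```

For unit noise, the expected optimism equals the expected maximum of N standard normals. That is 0 for N=1, about 1.16 for N=5, and about 1.87 for N=20. Larger candidate pools therefore make the learned score increasingly overconfident, even when no candidate is actually better.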

Practical scalability and implementation gaps

- Compute/latency characterization: No measurement of training or inference-time costs vs. baselines (e.g., PPO-style RLHF, diffusion/flow policies), especially for large models or long horizons.

- High-dimensional action spaces: Scalability of kernelized particle updates and gradient flows in very high-dimensional actions (e.g., manipulation with many DoF, video actions) is untested and unanalyzed.

- Discrete action RL beyond LLMs: The approach for discretized action spaces is only discussed for LLMs via embeddings/latents; applicability and performance for generic discrete-control offline RL remain untested.

- Robustness to sparse or non-smooth rewards: The method assumes a differentiable R(s,a); its behavior on sparse, piecewise, or adversarially non-smooth reward landscapes is not explored, nor are remedies such as gradient clipping, smoothing, or trust regions.

- Online fine-tuning mechanics: The online extension reports results but lacks algorithmic detail on data collection policy, exploration strategy under implicit regularization, and stability under nonstationarity.
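The kernel-degeneration concern (bandwidth choice in high-dimensional action spaces) can be seen directly. With a fixed RBF bandwidth, pairwise squared distances between particles grow roughly linearly with dimension. As a result, every off-diagonal kernel value vanishes and the particles stop interacting.

The sketch below compares a fixed bandwidth with the median heuristic commonly used in SVGD, h = med²/log N. Both choices are assumptions, since the paper's kernel is unspecified.

```python
import math
import random
import statistics
from typing import List, Sequence


def pairwise_sq_dists(points: Sequence[Sequence[float]]) -> List[float]:
    """Squared Euclidean distances over all distinct particle pairs."""
    out = []
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            out.append(sum((a - b) ** 2 for a, b in zip(points[i], points[j])))
    return out


def mean_offdiag_kernel(points: Sequence[Sequence[float]], h: float) -> float:
    """Mean RBF kernel value exp(-||x - y||^2 / h) over distinct particle pairs."""
    return statistics.fmean([math.exp(-d2 / h) for d2 in pairwise_sq_dists(points)])


def median_heuristic(points: Sequence[Sequence[float]]) -> float:
    """Bandwidth h = median(||x - y||)^2 / log(N)."""
    med = statistics.median([math.sqrt(d2) for d2 in pairwise_sq_dists(points)])
    return med ** 2 / math.log(len(points))


if __name__ == "__main__":
    rng = random.Random(0)
    for dim in (2, 20, 200):
        pts = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(20)]
        fixed = mean_offdiag_kernel(pts, h=1.0)
        adaptive = mean_offdiag_kernel(pts, h=median_heuristic(pts))
        print(f"dim={dim:3d}  fixed h=1: {fixed:.3g}  median heuristic: {adaptive:.3g}")
```

Under the median heuristic, the kernel value at the median pairwise distance is exactly 1/N regardless of dimension, which keeps interactions alive. Whether that is the right amount of coupling for VGF is exactly the open question raised above.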

RLHF-specific gaps and safety considerations

- Decoding fidelity from surrogate space: Performing gradient flow in embedding/latent space and decoding once at the end lacks analysis of exposure bias, decoding instability, or mismatch between surrogate gradients and discrete token outcomes.

- Reward hacking under implicit regularization: Without explicit KL constraints, there is no systematic study of reward model exploitation, safety regressions, or harmful content risks as transport budget increases.

- Adaptive transport for RLHF: No procedure to adapt Ltest per prompt based on reward uncertainty, OOD detection, or toxicity risk; fallback to Ltest=0 is heuristic.

- Generalization to larger LLMs and tasks: Experiments are limited (e.g., Pythia-2.8B, TL;DR, Anthropic-HH); scaling behavior with model size, longer contexts, more complex objectives, or multi-metric rewards (helpful/harmless/honest) is not investigated.

- Human evaluation breadth: GPT-4 win rates are reported, but human studies, safety red-teaming, and robustness to prompt attacks are absent.

Experimental coverage and ablations

- Limited ablations: Only Ltrain is ablated; there are no studies for ε, N, kernel type/bandwidth, gradient approximator f(s,a), or the presence/absence of the repulsive term from the MaxEnt surrogate.

- Fairness and compute parity: Comparisons lack detailed compute budgets, wall-clock, model sizes, or sampling costs across methods, which could confound performance claims.

- Long-horizon and credit assignment: Although suggested as future work, there is no empirical probing of long-horizon tasks where value extrapolation and compounding errors are more severe.

- Dataset skew and poor-behavior regimes: The method’s sensitivity to heavily suboptimal or biased behavior datasets (beyond the brief limitation note) is not systematically studied; no reweighting or coverage-correction strategies are evaluated.

- Uncertainty and OOD detection: There is no empirical assessment of using ensembles, calibration, or uncertainty-aware transport to prevent catastrophic OOD moves during test-time scaling.

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that leverage VGF’s implicit behavior regularization, particle-based value-gradient guidance, and adaptive test-time scaling. For each, we indicate the sector and key assumptions/dependencies.

- LLM alignment without PPO using gradient-flow decoding

- Sector: software/LLMs, content moderation, customer support

- What to deploy: a “gradient-flow decoding” module that steers an SFT/base model at inference using reward-model gradients in embedding or latent space; a “transport budget” knob (steps/step size/particles) to trade off reward vs. drift; fallback to best-of-N by setting budget to 0

- Why now: paper shows higher win-rates than PPO/DPO baselines on TL;DR and Anthropic-HH; no backprop-through-sampling; simpler engineering footprint

- Tools/workflows: Hugging Face integration; A/B tests that sweep transport budgets; latency-aware budget scheduler; guardrail that reverts to best-of-N on reward-model uncertainty spikes

- Assumptions/dependencies: differentiable reward wrt embeddings/latent; reliable SFT reference model; reward-model calibration; access to token embeddings or latent decoder Jacobians; safety review for reward hacking if budgets are too large

- Offline robotics policy improvement from logs

- Sector: robotics/automation (warehouses, logistics arms, mobile robots)

- What to deploy: a VGF controller that samples a few actions from a behavior cloning (BC) policy and nudges them via Q-gradients; best-of-N selection at execution; test-time budget scaling per task difficulty

- Why now: SOTA gains on D4RL and OGBench; stronger offline-to-online initialization and faster adaptation

- Tools/workflows: ROS-compatible inference server; trained f(s,a) head to approximate ∇aQ for fast guidance; safety watchdog that caps transport distance and enforces fallback to BC best-of-N

- Assumptions/dependencies: adequate offline coverage; differentiable Q; reward/value fidelity in near-support regions; calibrated budget per environment
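The “safety watchdog that caps transport distance” could be as simple as projecting each particle back onto a ball around its reference sample. This is a minimal sketch under two assumptions: Euclidean distance in action space, and a hypothetical per-deployment radius `max_dist`. It is one possible guardrail, not something the paper prescribes.

```python
import math
from typing import List, Sequence


def cap_displacement(start: Sequence[float], moved: Sequence[float], max_dist: float) -> List[float]:
    """Project `moved` onto the ball of radius max_dist centered at `start`."""
    delta = [m - s for m, s in zip(moved, start)]
    norm = math.sqrt(sum(d * d for d in delta))
    if norm <= max_dist:
        return list(moved)  # within budget: keep the VGF proposal unchanged
    scale = max_dist / norm  # shrink the step but keep its direction
    return [s + scale * d for s, d in zip(start, delta)]


if __name__ == "__main__":
    bc_action = [0.0, 0.0]  # sample from the behavior-cloning policy
    vgf_action = [3.0, 4.0]  # after Q-gradient nudges, 5 units away
    print(cap_displacement(bc_action, vgf_action, max_dist=1.0))  # same direction, length 1
```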

- Re-ranking in recommendation, search, and ads with drift control

- Sector: recommender systems, ads, search

- What to deploy: start from logged policy rankings, apply small VGF steps guided by a value/utility model (e.g., calibrated CTR/LTV), then pick best-of-N

- Why now: behavior-regularized improvement beyond the logged policy while controlling OOD deviation; can be dropped into existing re-rankers

- Tools/workflows: online inference service with budget sweeps; bandit-style counterfactual validation; monitor for distribution shift (MMD/KL) vs. logs

- Assumptions/dependencies: reliable counterfactual or self-normalized off-policy evaluation; differentiable surrogate for ranking objective; safeguards against exposure bias and drift
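For the shift monitor, a biased (V-statistic) estimate of squared MMD takes only a few lines. It uses an RBF kernel and compares logged actions against VGF-adjusted actions.

The bandwidth `h=1.0` and the synthetic Gaussian data are assumptions. In practice one would also calibrate an alert threshold, for example with a permutation test.

```python
import math
import random
from typing import Sequence

Point = Sequence[float]


def rbf(x: Point, y: Point, h: float) -> float:
    """RBF kernel exp(-||x - y||^2 / h)."""
    return math.exp(-math.dist(x, y) ** 2 / h)


def mean_kernel(xs: Sequence[Point], ys: Sequence[Point], h: float) -> float:
    """Average kernel value over all (x, y) pairs, including x == y terms."""
    return sum(rbf(x, y, h) for x in xs for y in ys) / (len(xs) * len(ys))


def mmd2_biased(xs: Sequence[Point], ys: Sequence[Point], h: float = 1.0) -> float:
    """Biased estimate of MMD^2 = E k(x,x') + E k(y,y') - 2 E k(x,y)."""
    return mean_kernel(xs, xs, h) + mean_kernel(ys, ys, h) - 2.0 * mean_kernel(xs, ys, h)


if __name__ == "__main__":
    rng = random.Random(0)
    logged = [(rng.gauss(0.0, 1.0),) for _ in range(200)]
    small_shift = [(rng.gauss(0.2, 1.0),) for _ in range(200)]
    large_shift = [(rng.gauss(2.0, 1.0),) for _ in range(200)]
    print(mmd2_biased(logged, small_shift), mmd2_biased(logged, large_shift))
```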

- Energy and building control from historical telemetry

- Sector: energy, smart buildings/IoT

- What to deploy: offline VGF to suggest HVAC/DER setpoints by nudging BC actions with Q-gradients; dynamic test-time budget tied to forecast uncertainty

- Why now: low-risk offline-to-online path; budget acts as a safety dial

- Tools/workflows: digital twin to validate OOD regions; budget governor tied to anomaly detection; fallback to best-of-N

- Assumptions/dependencies: sufficiently representative historical data; physics-aware reward; differentiable value; compliance with operational constraints

- Finance and operations decision support via offline logs

- Sector: finance (execution, market making), supply chain (inventory, routing), operations research

- What to deploy: VGF on top of logged strategies to find modest improvements within controlled transport budgets; scenario backtesting; budget sweeps for risk control

- Why now: reduces over-optimization risk vs. unconstrained RL; practical best-of-N fallback

- Tools/workflows: risk-sensitive reward shaping; budget as a VaR-like knob; guardrails to cap OOD drift

- Assumptions/dependencies: robust backtests; differentiable reward proxies; stationarity assumptions; regulatory compliance

- Productized “alignment knob” for user-facing assistants

- Sector: consumer software/UX

- What to deploy: a user slider that maps to the VGF transport budget at inference: safer/conservative (0–1 steps) to more assertive/creative (3–5 steps)

- Why now: immediately exploitable with SFT + reward model; no training-time changes required

- Tools/workflows: per-conversation budget schedule; budget-aware latency management (particles × steps)

- Assumptions/dependencies: calibrated reward for the intended preference dimension (helpfulness, harmlessness, style); abuse-resistant upper bounds on budget

- MLOps and safety controls for behavior-regularized RLHF/RL

- Sector: MLOps, compliance

- What to deploy: dashboards that track transport budgets, value uncertainty, and online metrics; automatic fallback to best-of-N when reward/value uncertainty rises; budget whitelists per domain

- Why now: budget is an actionable, interpretable control; simple to monitor and govern

- Tools/workflows: “transport budget scheduler,” “uncertainty-aware budget cap,” and “OOD watchdog” microservices

- Assumptions/dependencies: uncertainty estimation (ensembles or dropout); red-team evaluation; clear SLOs for safety vs. performance
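An “uncertainty-aware budget cap” could gate the test-time steps on ensemble disagreement. The sketch below works as follows:

- it assumes an ensemble of value (or reward) models that each score the same candidate actions;
- its uncertainty signal is the per-candidate standard deviation, averaged over candidates;
- above a hypothetical threshold, it falls back to best-of-N (zero steps).

The function name and threshold are illustrative. The paper does not specify such a rule.

```python
import statistics
from typing import Sequence


def choose_test_steps(
    ensemble_scores: Sequence[Sequence[float]],  # [model][candidate] value estimates
    max_steps: int,
    std_threshold: float,
) -> int:
    """Return max_steps if the ensemble agrees, else 0 (best-of-N fallback)."""
    n_candidates = len(ensemble_scores[0])
    per_candidate_std = [
        statistics.pstdev([scores[c] for scores in ensemble_scores])
        for c in range(n_candidates)
    ]
    uncertainty = statistics.fmean(per_candidate_std)
    return 0 if uncertainty > std_threshold else max_steps


if __name__ == "__main__":
    agree = [[1.0, 2.0, 3.0], [1.1, 2.1, 2.9], [0.9, 1.9, 3.1]]
    disagree = [[1.0, 2.0, 3.0], [3.0, 0.0, 1.0], [-1.0, 4.0, 5.0]]
    print(choose_test_steps(agree, max_steps=5, std_threshold=0.5))  # 5: ensemble agrees
    print(choose_test_steps(disagree, max_steps=5, std_threshold=0.5))  # 0: fall back to best-of-N
```

A hard switch is the simplest choice. A smoother variant could shrink the number of steps in proportion to the measured uncertainty.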

- Academic baselines and teaching materials

- Sector: academia

- What to deploy: VGF as a baseline for offline RL and RLHF assignments; lab modules demonstrating optimal transport, gradient flows, and behavior regularization

- Why now: open code; strong empirical performance; straightforward Algorithm 1 implementation

- Tools/workflows: JAX/PyTorch reference package; notebooks showing test-time scaling and fallback modes

- Assumptions/dependencies: standard RL benchmarks; compute for small particle counts (e.g., N=5)

Long-Term Applications

The following require further research, scaling, or domain-specific development (e.g., stronger reward/value models, safety validation, or regulatory approval).

- Fleet-scale autonomous driving from offline logs

- Sector: autonomous vehicles

- Opportunity: nudge trajectories beyond logged behavior under a tightly controlled transport budget; adaptive budget by scenario risk (weather, density)

- Potential product: “VGF policy-improvement layer” in planning stack with context-aware budget control

- Dependencies: highly reliable value/reward models; rigorous OOD detection; formal safety guarantees; regulatory certification; real-time constraints

- Clinical decision support and treatment planning

- Sector: healthcare

- Opportunity: policy improvement over clinician-logged policies (dosing, triage) with budgeted drift and interpretable fallback

- Potential product: decision-support tool with conservative zero-budget (best-of-N) modes and budget caps; particle trajectory visualizations for audit

- Dependencies: causal validity of reward/value surrogates; IRB oversight; bias auditing; robust uncertainty quantification; regulatory approval (FDA/CE)

- Process and industrial control at scale (chemicals, manufacturing)

- Sector: industrial robotics/controls

- Opportunity: offline-to-online VGF to tune setpoints/schedules beyond standard MPC/heuristics; multi-modal action exploration preserved by particles

- Potential product: “VGF co-controller” integrated with PLC/MPC; budget based on hazard analysis

- Dependencies: high-fidelity simulators/digital twins; safety interlocks; explainability requirements

- Multi-modal generative alignment (text-image-audio) via value gradients

- Sector: generative media

- Opportunity: unify preference optimization for diffusion/flow models with first-order guidance (e.g., aesthetic or safety rewards) without PPO-style RL

- Potential product: “Gradient-flow sampler” SDK for image/video/audio models with preference controls and budget knobs

- Dependencies: differentiable reward models for multimodal content; robust defenses against reward gaming; scalable gradient-flow kernels

- Personalized education and coaching with controllable drift

- Sector: education, wellness

- Opportunity: adapt tutoring strategies/actions beyond baseline while constraining drift; per-learner budget personalization

- Potential product: VGF-powered tutor with domain-specific rewards (retention, engagement) and safety caps

- Dependencies: valid proxies for learning outcomes; privacy-preserving data; guardrails to prevent harmful strategies

- Multi-agent systems with budget-aware coordination

- Sector: robotics, logistics, gaming

- Opportunity: agents improve policies locally with VGF while respecting joint constraints; budgets act as coordination contracts

- Potential product: “Budgeted coordination layer” for swarms or warehouse fleets

- Dependencies: stable multi-agent reward/value estimation; communication protocols; global safety constraints

- Standardization and governance: “transport budget” as a reportable control

- Sector: policy/regulation, AI safety

- Opportunity: treat transport budgets as auditable levers (akin to KL caps), included in model cards and deployment attestations

- Potential product: compliance templates and auditing tools that log budget settings and OOD metrics

- Dependencies: consensus on metrics; benchmarks for over-optimization; regulator buy-in

- Hardware and systems acceleration for particle-based gradient flow

- Sector: systems/accelerators

- Opportunity: optimized kernels for particle updates, kernel interactions, and ∇aQ inference; batching across states

- Potential product: inference stack with fused VGF steps; compiler passes co-optimizing particles and model evals

- Dependencies: stable kernels for various k(·,·); memory/latency trade-offs; integration with existing serving stacks

- Interpretability via particle trajectories

- Sector: AI safety/interpretability

- Opportunity: visualize how actions move from the reference toward high-value regions; detect reward hacking or spurious gradients

- Potential product: “FlowLens” dashboard for per-state particle paths, budget usage, and value gradients

- Dependencies: usable UX; linking trajectories to human-interpretable features; standardized diagnostics

Notes on Key Assumptions and Dependencies Across Applications

- Differentiable value/reward: VGF needs ∇aR or ∇aQ (or ∇yR in LLM embedding/latent space). When not directly available, train a gradient head f(s,a) ≈ ∇aQ.

- Reference distribution quality: Performance depends on reasonable support from BC/base policy; heavy skew toward suboptimal behavior may require reweighting or dataset curation.

- Reward/value reliability: Extrapolation error can misguide flows; mitigate with small budgets, uncertainty-aware gating, and fallback to best-of-N.

- Hyperparameters: Train-time steps (Ltrain) and test-time steps (Ltest) are task-dependent; tune conservatively and consider dynamic schedules.

- Compute/latency: Particle count N and steps L impact cost; amortize with gradient heads, batching, and budget-aware serving.

- Safety: Larger budgets increase drift and potential reward hacking; enforce caps, monitoring, and human-in-the-loop review where needed.
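The gradient-head idea, f(s,a) ≈ ∇aQ, amounts to regressing a cheap model onto value gradients, so that test-time steps avoid backpropagating through the critic. This is a deliberately tiny sketch with these assumptions:

- one fixed state and scalar actions;
- a hypothetical quadratic critic;
- central finite differences standing in for autodiff targets;
- a linear head fit by least squares.

The paper's head is a neural network trained alongside Q. None of these specifics come from it.

```python
import random
from typing import Callable, List, Tuple


def critic(a: float) -> float:
    """Hypothetical critic Q(s, a) for a fixed state s."""
    return -(a - 2.0) ** 2


def finite_diff_grad(q: Callable[[float], float], a: float, eps: float = 1e-5) -> float:
    """Central-difference estimate of dQ/da (stands in for autodiff)."""
    return (q(a + eps) - q(a - eps)) / (2.0 * eps)


def fit_linear_head(actions: List[float], grads: List[float]) -> Tuple[float, float]:
    """Least-squares fit of f(a) = w * a + b to gradient targets."""
    n = len(actions)
    mean_a = sum(actions) / n
    mean_g = sum(grads) / n
    cov = sum((a - mean_a) * (g - mean_g) for a, g in zip(actions, grads))
    var = sum((a - mean_a) ** 2 for a in actions)
    w = cov / var
    return w, mean_g - w * mean_a


if __name__ == "__main__":
    rng = random.Random(0)
    actions = [rng.uniform(-3.0, 3.0) for _ in range(256)]  # e.g. dataset actions
    targets = [finite_diff_grad(critic, a) for a in actions]
    w, b = fit_linear_head(actions, targets)
    print(f"f(a) = {w:.4f} * a + {b:.4f}")  # true gradient is -2a + 4
```

Once fit, each particle step calls only `w * a + b` instead of the critic's backward pass. With a real critic, the head is only approximate, which is the bias concern listed under the method-design gaps.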

Glossary

- Advantage-weighted updates: Policy updates that weight actions by estimated advantage to bias learning toward better-than-average actions. "advantage-weighted updates"

- Bellman operator: A mapping that enforces one-step consistency of value functions under a policy, central to dynamic programming in RL. "Let Tπ be the Bellman operator with policy π"

- Behavior cloning (BC): Supervised learning to imitate actions from a dataset by modeling the behavior policy. "weighted behavior cloning (BC)"

- Behavior-regularized RL: Reinforcement learning that constrains policy improvement to stay near a reference distribution to reduce distributional shift. "behavior-regularized RL"

- Best-of-N sampling: A selection strategy that draws N samples from a distribution and chooses the one with the highest evaluated score. "a simple best-of-N sampling policy (Nakano et al., 2021)"

- Boltzmann distribution: A softmax distribution over actions proportional to the exponential of scaled value or reward, promoting stochasticity. "a variational distribution as the Boltzmann distribution over the value function R(s, a)"

- Causal entropy: Entropy of a policy conditioned on state, encouraging stochasticity in action selection at each state. "is the causal entropy of the policy"

- D4RL: A widely used benchmark suite of offline RL datasets for locomotion and navigation tasks. "D4RL"

- Diffusion-based policies: Generative policies that sample actions via iterative denoising, derived from diffusion models. "diffusion-based policies"

- Discrete gradient flow: A stepwise approximation to continuous gradient flow over probability measures, used to transport distributions. "We solve this transport problem via discrete gradient flow"

- Epsilon-support (ε-support): The set of points where a distribution’s density is at least ε, describing a relaxed notion of support. "Define the ε-support of a distribution P as supp_ε(P) := {x : p(x) ≥ ε}."

- Flow-matching loss: An objective for training flow models to match continuous-time dynamics that transform a simple distribution into data. "flow-matching loss"

- Jordan–Kinderlehrer–Otto (JKO) scheme: A minimizing-movement scheme that discretizes gradient flows in the space of probability measures. "Jordan-Kinderlehrer-Otto (JKO) minimizing-movement scheme"

- KL-divergence: A directed divergence measuring how one probability distribution differs from another, often used as a regularizer. "Using KL-divergence gives Equation (2) a closed-form solution"

- Markov Decision Process (MDP): A mathematical framework for sequential decision making defined by states, actions, transitions, and rewards. "presented as a Markov Decision Process (MDP)"

- Maximum-Entropy (MaxEnt) RL: An RL formulation that augments reward maximization with policy entropy to encourage exploration. "This Maximum-Entropy (MaxEnt) formulation of RL"

- Maximum Mean Discrepancy (MMD): A kernel-based distance between distributions, equal to the RKHS distance between their kernel mean embeddings. "the Maximum Mean Discrepancy (MMD) distance"

- OGBench: A benchmark suite for offline goal-conditioned RL across locomotion and manipulation tasks. "OGBench (Park et al., 2025a)"

- One-step flow model: A flow-based generative policy that maps noise to actions in a single transformation step. "one-step flow model"

- Optimal transport: A framework for transforming one probability distribution into another with minimal cost under a transport metric. "casts behavior-regularized RL as an optimal transport problem"

- Particle-based gradient flow: A method that represents distributions as particles and moves them via gradient flow to minimize a divergence. "a particle-based gradient flow solver"

- PPO-style optimization: A first-order policy gradient method (Proximal Policy Optimization) with clipped objective for stable on-policy updates. "PPO-style optimization (Ouyang et al., 2022)"

- Q-function (action-value function): A function estimating expected return from a state-action pair under a policy. "action-value function (Q-function)"

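- Rejection sampling (reject sampling): A sampling method that accepts or rejects proposals from a reference distribution according to an acceptance criterion; because it never leaves the proposal's support, it can be overly conservative in behavior-regularized RL. "reject sampling"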

- Reparameterized policy gradient: A gradient estimator that differentiates through a stochastic policy by reparameterizing random variables. "reparameterized policy gradient, which are difficult to scale to large generative models"

- Reproducing kernel Hilbert space (RKHS): A Hilbert space of functions where evaluation is inner-product with a kernel feature map; used to constrain vector fields. "reproducing kernel Hilbert space (RKHS)"

- Reward hacking (reward gaming): Exploiting imperfections in a learned reward model to achieve high reward without truly desirable behavior. "reward hacking or reward gaming"

- RLHF (Reinforcement Learning from Human Feedback): Training methods that align policies with human preferences using preference data and learned rewards. "RL from human feedback (RLHF)"

- Support (of a distribution): The set of points where the distribution has positive probability density (formally, the closure of that set); staying within support avoids OOD actions. "within the support of the behavior distribution"

- Temporal-Difference (TD) learning: A method that updates value functions using bootstrapped estimates from subsequent states. "by TD learning"

- Transport budget: A limit on how far and how often probability mass is moved during transport, acting as implicit regularization. "transport budget as implicit regularization"

- Value Gradient Flow (VGF): The proposed method that transports samples from a reference distribution toward higher-value regions using value gradients. "We propose Value Gradient Flow (VGF)"

- Wasserstein distance (2-Wasserstein): An optimal transport metric that measures the minimal cost of morphing one distribution into another; W2 uses the squared Euclidean distance as the ground cost and takes the square root of the minimal expected cost. "2-Wasserstein distance (Peyré & Cuturi, 2019)"

- Weighted behavior cloning (BC): A variant of BC that weights demonstrations by scores (e.g., exponentiated rewards) to bias imitation toward better actions. "weighted behavior cloning (BC)"
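For reference, several glossary entries correspond to short standard formulas. They are written below in common textbook notation. The temperatures α and β, the step τ, the energy functional F, and the distribution names μ and ν are assumptions, and may not match the notation of the paper's own Equations (1), (2), and (6).

```latex
% Boltzmann distribution over a value function R with temperature \alpha
q(a \mid s) \propto \exp\!\big(R(s,a)/\alpha\big)

% Closed-form solution of KL-regularized improvement toward a reference \pi_{\mathrm{ref}}:
%   \max_{\pi}\ \mathbb{E}_{a \sim \pi}[R(s,a)] - \beta\, \mathrm{KL}\big(\pi(\cdot \mid s) \,\|\, \pi_{\mathrm{ref}}(\cdot \mid s)\big)
\pi^{*}(a \mid s) \propto \pi_{\mathrm{ref}}(a \mid s)\, \exp\!\big(R(s,a)/\beta\big)

% \epsilon-support of a distribution P with density p
\mathrm{supp}_{\epsilon}(P) := \{\, x : p(x) \ge \epsilon \,\}

% 2-Wasserstein distance over the set of couplings \Pi(\mu, \nu)
W_2^2(\mu, \nu) = \inf_{\gamma \in \Pi(\mu, \nu)} \mathbb{E}_{(x, y) \sim \gamma}\, \lVert x - y \rVert^2

% JKO minimizing-movement step with step size \tau for an energy functional F
\rho_{k+1} = \arg\min_{\rho}\ F(\rho) + \frac{1}{2\tau} W_2^2(\rho, \rho_k)

% Squared MMD with kernel k, where x, x' \sim \mu and y, y' \sim \nu are independent
\mathrm{MMD}^2(\mu, \nu) = \mathbb{E}[k(x, x')] + \mathbb{E}[k(y, y')] - 2\, \mathbb{E}[k(x, y)]
```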
