- The paper presents R2Eval, showing a dramatic performance drop of 64.32% in input prediction and 52.22% in output prediction using real-world code challenges.

- It employs a hybrid static and dynamic analysis to serialize complex, custom types from production-grade Python repositories.

- The findings suggest that conventional synthetic benchmarks overestimate LLM performance, underlining the need for models that understand real-world code semantics.

Evaluating Code Reasoning of LLMs in Real-World Contexts: The R2Eval Benchmark

Introduction

The assessment of code reasoning capabilities in LLMs has conventionally relied on benchmarks derived from synthetic, simplistic, or challenge-style code snippets. This paradigm disregards the actual complexity encountered in production-grade software, where program context, diverse dependencies, and compound custom types are pervasive. The paper "Evaluating LLMs Code Reasoning Under Real-World Context" (2604.12881) introduces R2Eval, a benchmark specifically designed to address these limitations by extracting code reasoning problems from ten widely used Python repositories, preserving the inherent structural intricacies and data type heterogeneity of authentic codebases. This approach enables a substantially more rigorous evaluation of LLMs' code reasoning capabilities under practical circumstances.

Benchmark Design and Methodology

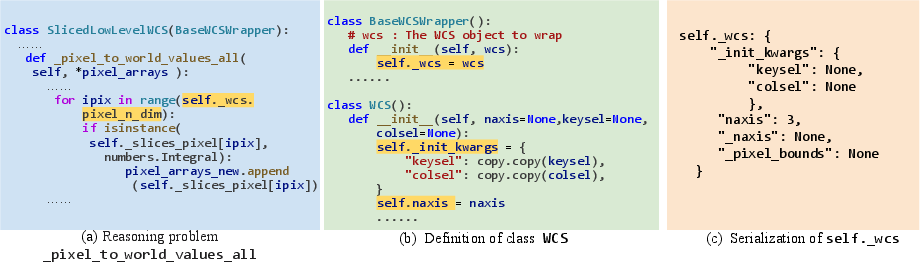

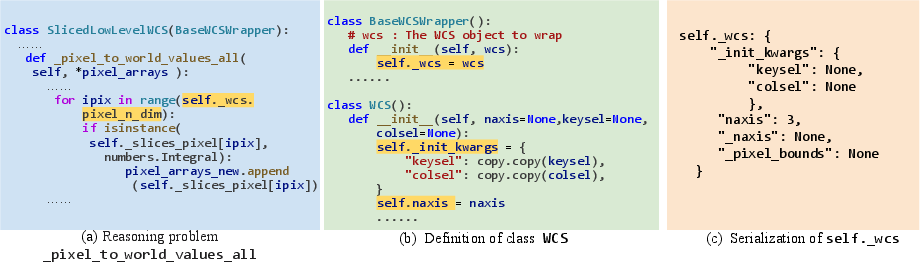

R2Eval systematically sources 135 code reasoning problems from prominent open-source Python projects, including scikit-learn, django, requests, seaborn, sphinx, pytest, astropy, xarray, matplotlib, and sympy. Each problem is represented as a triplet {P,I,O}, where P provides the relevant code context, and I and O correspond to serialized method input and output instances, respectively. Crucially, the benchmark employs a hybrid pipeline based on both static and dynamic analysis to serialize compound and custom types in a principled fashion. This addresses the challenge presented by objects lacking canonical serialization routines (e.g., missing implementations of __str__ or custom representations), ensuring that LLMs are evaluated on problems faithfully reflecting real project data complexity.

Figure 1: Example of custom type variable serialization in R2Eval.

The serialization process recursively decomposes complex variables—down to primitives or core collection types—and encodes them in JSON. This enables deterministic evaluation, as LLM predictions can be deserialized and compared semantically (via runtime tests) instead of brittle string-wise matching.

Experimental Findings

R2Eval facilitates the evaluation of both input and output prediction tasks across multiple LLMs, including o4-mini, Gemini-2.5-Pro, DeepSeek-R1, GPT-4.1, Gemini-1.5-Pro, and DeepSeekCoder-33B-Inst. The experimental results reveal a dramatic reduction in LLM performance when moving from synthetic/curated (CRUXEval) to real-world (R2Eval) benchmarks. On average, input prediction accuracy drops by 64.32% and output prediction by 52.22%. For instance, top-performing LLMs such as o4-mini exhibit an input prediction decline from 92.59% to 20.00%, and output prediction from 91.85% to 28.15%. These results highlight the limitation of previously reported performance metrics, which fail to generalize to realistic scenarios.

The performance drop is attributed to:

- Complex Inter- and Intra-Procedural Dependencies: Real-world methods rely on context rarely present in isolated snippets.

- Prevalence of Custom and Compound Types: LLMs are required to reason about program state involving arbitrarily nested, user-defined structures.

- Third-Party API and Library Usage: Code often invokes external abstractions not captured in canonical benchmarks.

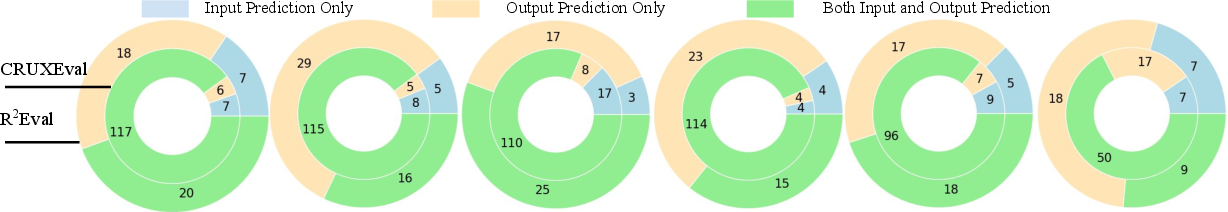

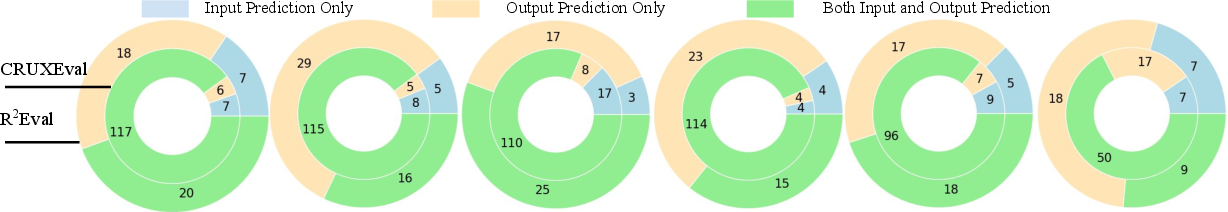

Moreover, the overlap analysis, as visualized in Figure 2, demonstrates that the set of problems on which LLMs succeed diverges substantially between benchmarks. The intersection of successful input/output predictions diminishes as complexity increases, indicating major challenges in backward (input) reasoning under authentic code dependencies.

Figure 2: Unique and common problems each LLM succeeds in predicting their inputs and outputs across R20Eval and CRUXEval.

Implications and Theoretical Outlook

These findings support several non-trivial conclusions. Firstly, established performance metrics on standard code reasoning benchmarks significantly overestimate LLMs' practical competence for real-world tasks. Secondly, evaluating models exclusively on primitive I/O types is insufficient, as it elides key aspects of reasoning involving serialization, object identity, and state mutation. Thirdly, input prediction is markedly harder than output prediction in realistic settings—a trend amplified by the richness of context and variable complexity in actual codebases.

Practically, the availability of R21Eval enables the development and targeted evaluation of future LLM architectures or training protocols focused explicitly on structural code understanding, object state transitions, and robust serialization mechanisms. Theoretically, the pronounced gap in performance suggests that current LLMs have not generalized beyond syntactic token-sequence modeling to genuine program semantics when realistic context and data types are involved.

Future Directions

R22Eval paves the way for several lines of investigation. One area is the integration of symbolic reasoning or type-aware intermediate representations within LLMs to enhance their handling of custom and nested types. Another avenue is the augmentation of training data with diversified real-world code and context, in contrast to synthetic or challenge-driven examples. The approach also underscores the importance of dynamic test-based evaluation for LLM correctness assessment, especially when predicting on semantically rich and non-canonical I/O data.

Conclusion

The R23Eval benchmark constitutes a significant advancement in the rigorous evaluation of LLM code reasoning under genuinely realistic settings. The observed performance degradation across all tested models when confronted with real-world complexity mandates a reevaluation of LLM assessment methodologies in software engineering. The serialization/deserialization pipeline addresses a critical gap in prior work, and the results motivate foundational improvements in LLM architectures and training strategies vis-à-vis complex program understanding.