- The paper presents a novel graph-based RAG framework that integrates knowledge consolidation, associative navigation, and interference elimination to improve retrieval recall and generation accuracy.

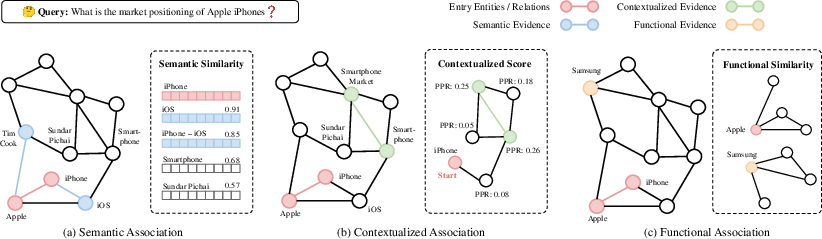

- The methodology employs semantic, contextualized, and functional association strategies with LLM-driven filtering to mitigate noise and assemble coherent evidence chains.

- Empirical results show 7–10% improvements in recall and 3–11% gains in generation accuracy, demonstrating the framework’s robustness across diverse domains.

CodaRAG: Associative Evidence Retrieval for Robust Retrieval-Augmented Generation

Introduction

CodaRAG presents a graph-based Retrieval-Augmented Generation (RAG) framework that explicitly models associative retrieval, drawing inspiration from Complementary Learning Systems (CLS) theory in cognitive science. The work addresses key shortcomings of both naive and graph-based RAG systems: fragmented evidence retrieval, loss of logical chains, and noise introduced by hyper-associative expansion. Unlike previous graph-based approaches that either limit their evidence assembly to local expansion or utilize the graph as a post hoc scoring artifact, CodaRAG reframes the RAG pipeline as an active, multi-dimensional associative navigation process grounded in a consolidated knowledge graph (KG).

Architecture: Staged Associativity with CLS Inspiration

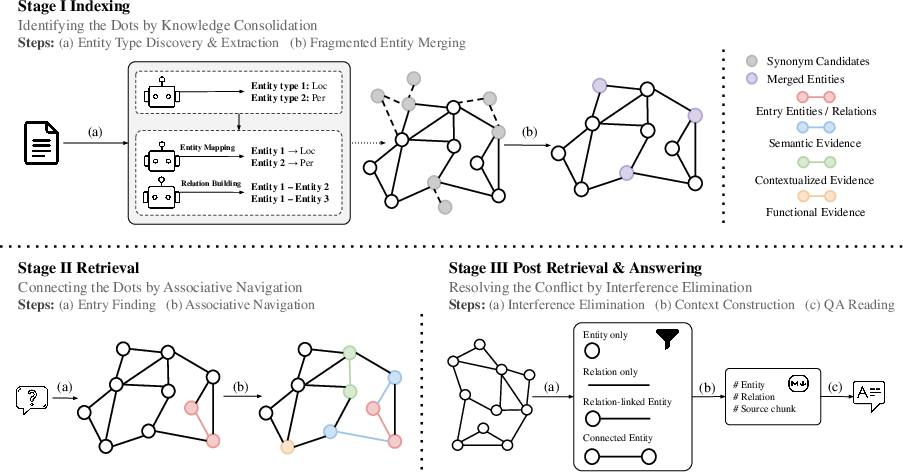

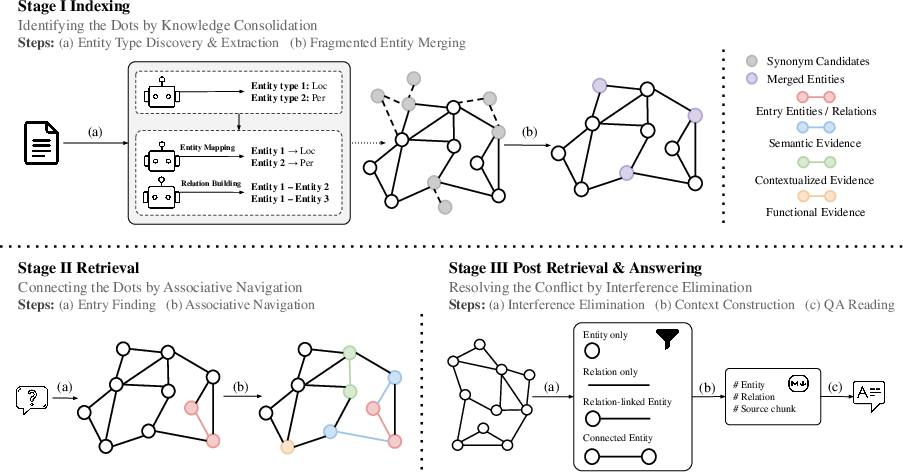

CodaRAG operates in three major stages: Knowledge Consolidation, Associative Navigation, and Interference Elimination. The system first consolidates unstructured extraction results to repair fragmented entity mentions and unify the representational substrate (Stage I). It then discovers evidence chains via complementary associative processes coordinated over the consolidated KG (Stage II). Finally, it applies an executive control filter to prune hyper-associative or low-value connections (Stage III).

Figure 1: The three-stage CodaRAG pipeline: I. Knowledge Consolidation forms a robust KG, II. Associative Navigation traverses semantic, contextualized, and functional pathways to recover dispersed evidence, III. Interference Elimination filters noisy associations for high-precision context construction.
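
The three stages can be sketched as a toy Python pipeline. This is a minimal illustration under stated assumptions, not the authors’ implementation: the function names (`consolidate`, `navigate`, `eliminate_interference`), the alias table, and the example triples are all hypothetical. Plain breadth-first expansion stands in for the semantic, contextualized, and functional association pathways, and a simple predicate stands in for the LLM-driven filter.

```python
from collections import deque
from typing import Callable, Dict, List, Set, Tuple

Triple = Tuple[str, str, str]          # (head, relation, tail)
Graph = Dict[str, Set[Tuple[str, str]]]  # node -> {(relation, neighbor)}


def consolidate(triples: List[Triple], aliases: Dict[str, str]) -> Graph:
    """Stage I (toy): map each mention to a canonical name, then build the graph.

    `aliases` is a hypothetical lookup from a lowercased mention to its
    canonical entity; the source describes LLM-gated merging instead.
    """
    def canon(mention: str) -> str:
        key = mention.strip().lower()
        return aliases.get(key, key)

    graph: Graph = {}
    for head, relation, tail in triples:
        h, t = canon(head), canon(tail)
        graph.setdefault(h, set()).add((relation, t))
        graph.setdefault(t, set())  # make sure the tail exists as a node
    return graph


def navigate(graph: Graph, seeds: List[str], max_hops: int = 2) -> List[Triple]:
    """Stage II (toy): breadth-first expansion from the seed entities."""
    seen = set(seeds)
    queue = deque((seed, 0) for seed in seeds)
    candidates: List[Triple] = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop budget
        for relation, neighbor in sorted(graph.get(node, ())):
            candidates.append((node, relation, neighbor))
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, depth + 1))
    return candidates


def eliminate_interference(
    candidates: List[Triple], keep: Callable[[Triple], bool]
) -> List[Triple]:
    """Stage III (toy): keep only the candidates the judge accepts."""
    return [c for c in candidates if keep(c)]


# Hypothetical extraction output with a fragmented entity ("ASA" vs "Aspirin").
triples = [
    ("Aspirin", "treats", "Headache"),
    ("aspirin ", "interacts_with", "Warfarin"),
    ("ASA", "is_a", "NSAID"),
    ("Warfarin", "discussed_in", "Chapter 7"),
]
kg = consolidate(triples, aliases={"asa": "aspirin"})
evidence = navigate(kg, seeds=["aspirin"], max_hops=1)
# Stand-in for the LLM filter: drop taxonomy edges as "low value" for this query.
context = eliminate_interference(evidence, keep=lambda t: t[1] != "is_a")
```

In this sketch the three mentions of aspirin collapse into one node during consolidation, so a single seed reaches evidence that would otherwise be split across disconnected entities.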

The CLS analogy is operationalized directly: rapid, local semantic association models fast hippocampal retrieval, while the contextualized and functional pathways emulate neocortical integration and structural mapping.

Main Results and Empirical Findings

CodaRAG is evaluated on GraphRAG-Bench, a benchmark that explicitly targets the effects of graph structure on retrieval and on contextualization for generation. The selected tasks span Fact Retrieval, Complex Reasoning, Contextual Summarization, and Creative Generation in both structured (Medical) and unstructured (Novel) domains.

CodaRAG exhibits absolute improvements of 7–10% in retrieval recall and 3–11% in generation accuracy relative to the strongest prior baseline (HippoRAG 2), across all evaluated domains and task types. The method also demonstrates more balanced coverage–accuracy trade-offs in summarization and improved faithfulness in creative settings.
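
To make the word “absolute” concrete, the sketch below computes a set-based evidence recall and contrasts an absolute gain (in percentage points) with a relative gain. The recall definition is the standard set-overlap form and is an assumption here, since the summary does not spell out the benchmark’s exact metric; the function names and the numbers in the example are hypothetical and are not results from the paper.

```python
from typing import Iterable, Set


def evidence_recall(retrieved: Iterable[str], gold: Set[str]) -> float:
    """Fraction of gold evidence items that appear among the retrieved items."""
    if not gold:
        raise ValueError("gold evidence set must be non-empty")
    return len(set(retrieved) & gold) / len(gold)


def absolute_gain(new: float, baseline: float) -> float:
    """Difference between two scores in [0, 1], in percentage points."""
    return (new - baseline) * 100.0


def relative_gain(new: float, baseline: float) -> float:
    """Difference expressed as a percentage of the baseline score."""
    if baseline == 0:
        raise ValueError("relative gain is undefined for a zero baseline")
    return (new - baseline) / baseline * 100.0


# Hypothetical example: the baseline finds 2 of 4 gold items, the new system 3 of 4.
gold = {"e1", "e2", "e3", "e4"}
baseline_recall = evidence_recall(["e1", "e2", "x9"], gold)
new_recall = evidence_recall(["e1", "e2", "e3", "x9"], gold)
points = absolute_gain(new_recall, baseline_recall)
percent = relative_gain(new_recall, baseline_recall)
```

The same change in recall yields a different number under each convention, which is why it matters that the reported gains are stated as absolute.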

Detailed ablation shows that:

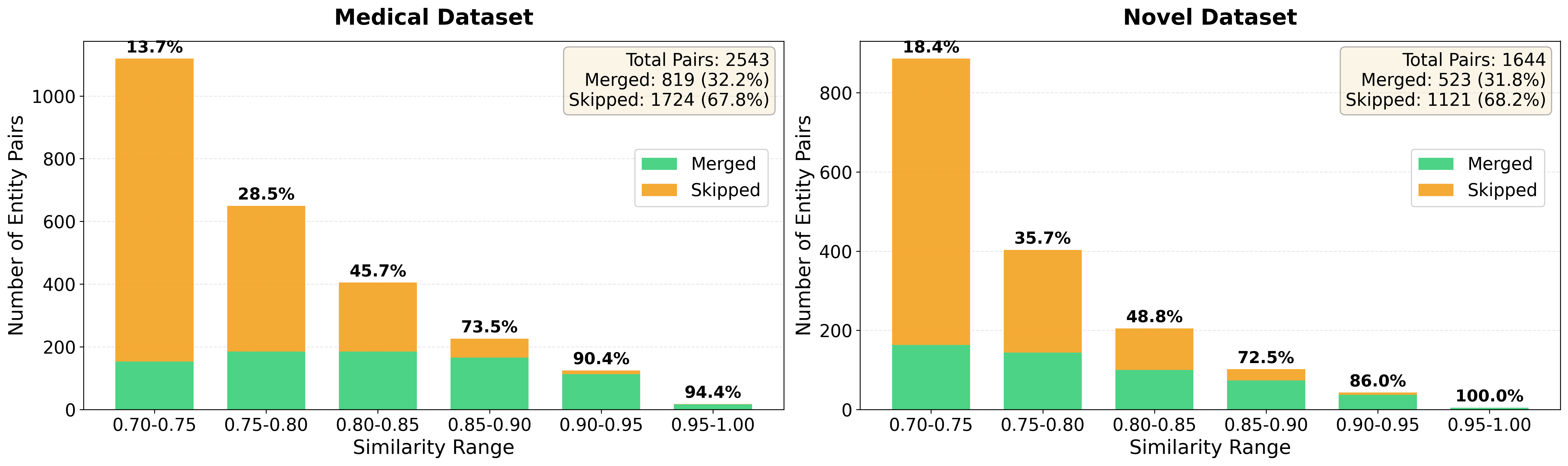

- Knowledge Consolidation (canonical merging and type induction) significantly enhances both entry-point relevance and downstream reasoning.

- Semantic, contextualized, and functional association modules contribute non-redundant gains; notably, omitting Semantic Association yields the largest degradation in both retrieval and generation quality. Contextualized Association primarily improves evidence coverage and the global organizational coherence of retrieval.

- Interference Elimination preserves retrieval recall while preventing significant drops in generation faithfulness and accuracy, confirming its importance for robust context construction.

Case studies in both medical and open-domain history settings demonstrate that CodaRAG reconstructs latent logical schemas (e.g., medical care workflows or historical-narrative connections), whereas previous methods capture only local or high-frequency evidence without integrating it.

Theoretical and Practical Implications

The central claim is that retrieval for LLM-augmented generation must move beyond local or naive graph traversals to explicitly orchestrated, multi-path associative navigation. This reflects cognitive models that coordinate fast “reminding” with slow, integrative, and analogy-driven evidence assembly. The strong empirical results, particularly the resilience to query diversity and the improved performance in creative and reasoning-heavy generation, support this design. Furthermore, error analysis indicates that CodaRAG’s robustness emerges from the explicit regulation of associative “noise,” and the component ablation study affirms that optimal retrieval requires complementary mechanisms rather than over-specialized or single-metric expansion.

Practically, the authors note that CodaRAG’s retrieval-stage complexity is higher (especially due to LLM gating in merging and filtering), but the design amortizes costs through one-time KG construction and selective, context-aware inference routines.
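
The amortization argument can be written as a simple cost model. The symbols are assumptions introduced for illustration and do not appear in the source: $C_{\text{build}}$ is the one-time KG construction cost, $c_{\text{query}}$ is the per-query retrieval cost (including LLM gating), and $N$ is the number of queries served.

```latex
C_{\text{total}}(N) = C_{\text{build}} + N \, c_{\text{query}},
\qquad
\frac{C_{\text{total}}(N)}{N} = \frac{C_{\text{build}}}{N} + c_{\text{query}}
```

Under this model the construction term shrinks as $N$ grows, so the per-query cost approaches $c_{\text{query}}$, which is the part that selective, context-aware inference is meant to keep small.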

Future Directions

The presented framework introduces a paradigm where human-inspired associative control and interference regulation are first-class citizens in KG-based RAG. Future developments in AI may explore:

- Integration with continuous or multimodal KGs, further enhancing the semantic and cross-domain coverage.

- Learned adaptive gating for association pathway selection, dynamically balancing retrieval cost and reasoning quality.

- Transfer to low-resource or highly noisy knowledge extraction regimes, where consolidation and interference filtering are likely to matter even more.

- Scaling to open-world settings with continual KG updates, necessitating online consolidation and similarity-sensitive merges aligned with non-stationary distributions.

Conclusion

CodaRAG advances RAG via structured, associative strategies inspired by the complementary mechanisms of human memory. The framework operationalizes knowledge consolidation, orchestrates multi-dimensional associative reasoning, and enforces interference control, yielding substantial and robust gains in retrieval and grounded generation across domains and task types. The results indicate that “connecting the dots with associativity,” as opposed to naive retrieval, is an effective mechanism for overcoming evidence fragmentation and achieving high-fidelity, logic-driven generation with LLMs.