- The paper introduces a dual-intent model that fuses semantic (prototype) and probabilistic (distribution) representations, leading to robust recommendation performance.

- It employs comprehensive optimization with alignment, coarse/fine-grained matching, and self-supervised regularization to improve embedding quality.

- Experimental results on sparse datasets demonstrate significant gains, including up to +10.6% Recall@20 and lower computational costs compared to state-of-the-art baselines.

Dual-Intent Space Representation Optimization in Recommendation: DIAURec

Introduction and Motivations

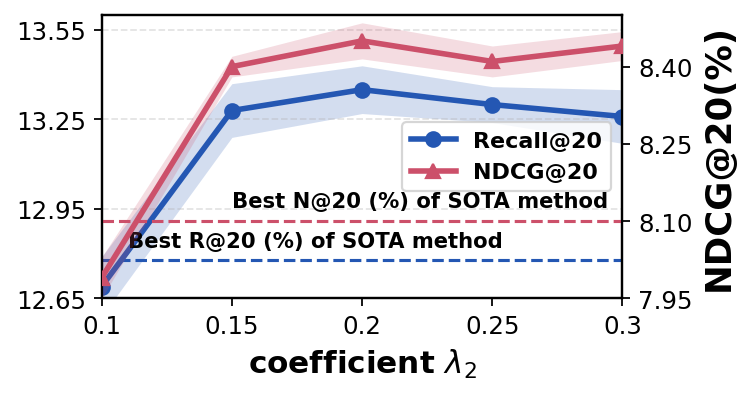

DIAURec ("Dual-Intent Space Representation Optimization for Recommendation") (2604.09087) addresses the challenge of achieving robust and expressive user/item representations in recommender systems, particularly under conditions of interaction sparsity and semantic heterogeneity. Current intent-based approaches typically rely on interaction data for intent disentanglement, and often utilize deterministic (point) representations, leading to instability when observations are limited or noisy. LLM-based recommender paradigms, in contrast, can enrich modeling with semantic context but incur substantial computational overhead and under-exploit representation optimization. The authors identify that alignment and uniformity in the representation space—beyond mere model interpretability—are critical for recommendation quality, a concern that prior work often relegates to the periphery.

DIAURec proposes an integrated framework that unifies collaborative and language modeling under a dual-intent representation paradigm. The method reconstructs user/item representations by jointly modeling prototype intent (semantic-based) and distribution intent (collaboration-based). A comprehensive optimization strategy then combines alignment-uniformity objectives, fine-grained and coarse-grained matching, and regularization to learn robust, collapse-resistant embeddings.

Figure 1: Schematic contrasting intent-based, LLM-based, and dual-intent (DIAURec) recommendation paradigms, highlighting dual-intent representation and comprehensive optimization.

Methodology: Dual-Intent Representation and Optimization

The core contribution of DIAURec is threefold: (i) dual-intent modeling, (ii) comprehensive representation optimization, and (iii) efficient architecture for practical deployment.

Dual-Intent Modeling involves two parallel strategies. Prototype intent is constructed by associating semantic representations (obtained via LLM-driven textual aggregation and projection) with a finite set of intent prototypes through soft assignment. Distribution intent leverages the stochasticity inherent in collaborative signals by modeling user/item representations as Gaussian distributions and mapping them onto the hypersphere via von Mises-Fisher sampling, so that both the center (mean) and the dispersion (variance) of each entity are captured. The representation of each entity is a mixture of these two signals, which lets the model capture both the structured uncertainty of interaction data and the semantic richness of textual attributes.
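The two intent signals described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the softmax `temperature`, and the fixed mixing weight `mix` are hypothetical, and a reparameterized Gaussian sample projected onto the unit sphere is used as a simple stand-in for von Mises-Fisher sampling.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Project rows onto the unit hypersphere."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def prototype_intent(semantic, prototypes, temperature=0.1):
    """Soft-assign each semantic vector to K prototypes and mix the prototypes."""
    logits = l2_normalize(semantic) @ l2_normalize(prototypes).T / temperature
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    weights = np.exp(logits)
    weights = weights / weights.sum(axis=1, keepdims=True)  # softmax over prototypes
    return weights @ prototypes, weights

def distribution_intent(mean, log_var, rng):
    """Sample from N(mean, exp(log_var)) and project onto the sphere.

    Assumption: this replaces true von Mises-Fisher sampling for brevity.
    """
    noise = rng.standard_normal(mean.shape)
    return l2_normalize(mean + np.exp(0.5 * log_var) * noise)

def dual_intent(semantic, prototypes, mean, log_var, rng, mix=0.5):
    """Mix prototype intent and distribution intent (mix is a hypothetical weight)."""
    proto, weights = prototype_intent(semantic, prototypes)
    dist = distribution_intent(mean, log_var, rng)
    return l2_normalize(mix * l2_normalize(proto) + (1.0 - mix) * dist), weights

rng = np.random.default_rng(0)
semantic = rng.standard_normal((4, 8))     # LLM-derived vectors for 4 entities
prototypes = rng.standard_normal((3, 8))   # K = 3 intent prototypes
mean = rng.standard_normal((4, 8))         # collaborative Gaussian means
log_var = np.full((4, 8), -2.0)            # collaborative log-variances
z, w = dual_intent(semantic, prototypes, mean, log_var, rng)
```

Here `w` holds the soft assignment of each entity to the prototypes, and `z` is the mixed representation on the unit sphere. In a trained model the mean, log-variance, and prototypes would be learned parameters rather than random draws.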

Comprehensive Optimization goes beyond basic negative sampling or margin-based objectives. DIAURec enforces alignment (contraction of positive user-item pairs) and uniformity (dispersion on the hypersphere for user representations), calibrated via the balancing coefficient ω. To address space-specific inconsistencies, the framework introduces:

- Coarse-grained Matching: Enforces global geometric consistency between reconstructed collaborative and semantic/intent representations via orthogonal mapping, with constraints to ensure mapping regularity.

- Fine-grained Matching: Adopts mutual information maximization to align each representation with its empirically closest semantic prototype, mitigating representation isolation and facilitating structural uniformity.

- Regularization: Two self-supervised contrastive regularizers are layered: intra-space (within collaborative embeddings, deterring collapse) and interaction-based (between users and positively/negatively associated items).
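The two matching terms in the list above can be illustrated as follows. This is a hedged sketch, not the paper's exact losses: the coarse-grained part uses the closed-form orthogonal Procrustes solution plus a soft orthogonality penalty as one way to realize an "orthogonal mapping with regularity constraints", and the fine-grained part uses an InfoNCE-style loss (a standard lower bound on mutual information) with the nearest prototype as the positive. All function names and the `temperature` value are assumptions.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def procrustes_map(collab, semantic):
    """Orthogonal W minimizing ||collab @ W - semantic||_F (closed form via SVD)."""
    u, _, vt = np.linalg.svd(collab.T @ semantic)
    return u @ vt

def orthogonality_penalty(w):
    """Soft constraint ||W^T W - I||_F^2, usable when W is learned by gradient descent."""
    return float(np.sum((w.T @ w - np.eye(w.shape[1])) ** 2))

def coarse_matching_loss(collab, semantic, w):
    """Mean squared distance between mapped collaborative and semantic vectors."""
    return float(np.mean(np.sum((collab @ w - semantic) ** 2, axis=1)))

def fine_matching_loss(reps, prototypes, temperature=0.2):
    """InfoNCE-style loss with each row's closest prototype as the positive."""
    sims = l2_normalize(reps) @ l2_normalize(prototypes).T / temperature
    nearest = sims.argmax(axis=1)
    shifted = sims - sims.max(axis=1, keepdims=True)
    log_prob = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_prob[np.arange(len(reps)), nearest].mean())

rng = np.random.default_rng(1)
collab = rng.standard_normal((50, 6))
q, _ = np.linalg.qr(rng.standard_normal((6, 6)))  # a random orthogonal matrix
semantic = collab @ q                             # semantic space = rotated collab space
w = procrustes_map(collab, semantic)              # recovers the rotation
coarse = coarse_matching_loss(collab, semantic, w)
fine = fine_matching_loss(collab, rng.standard_normal((3, 6)))
```

In the toy example the semantic space is an exact rotation of the collaborative space, so the recovered map matches `q` and the coarse loss is numerically zero. With real embeddings the two spaces only approximately agree and the residual serves as the training signal.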

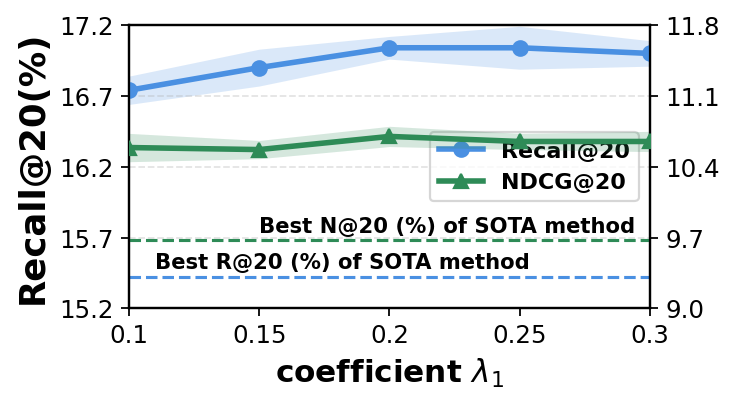

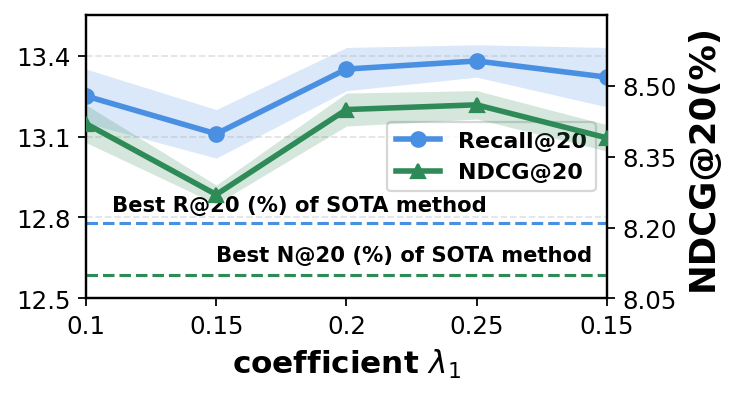

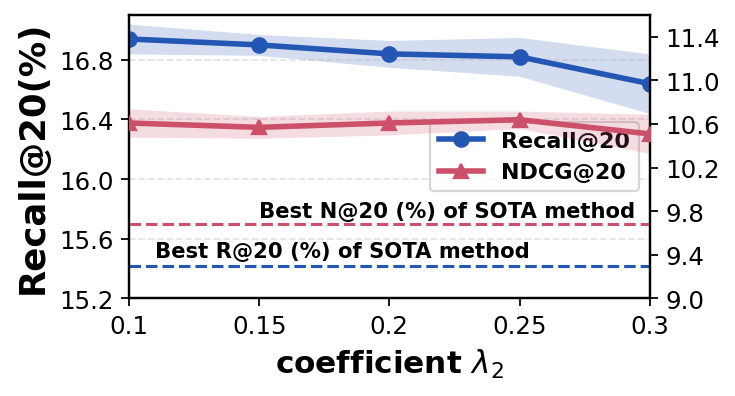

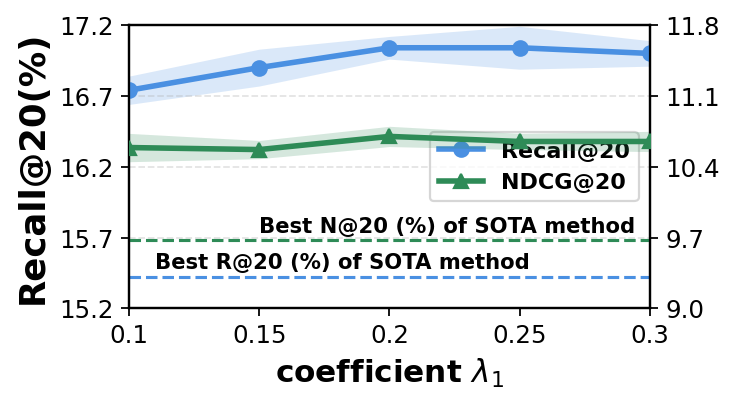

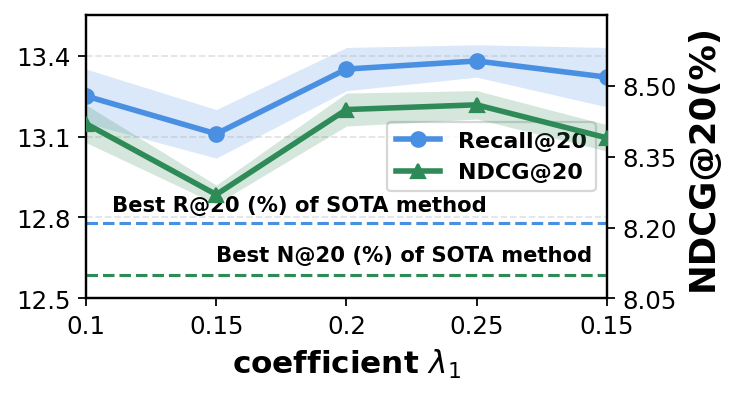

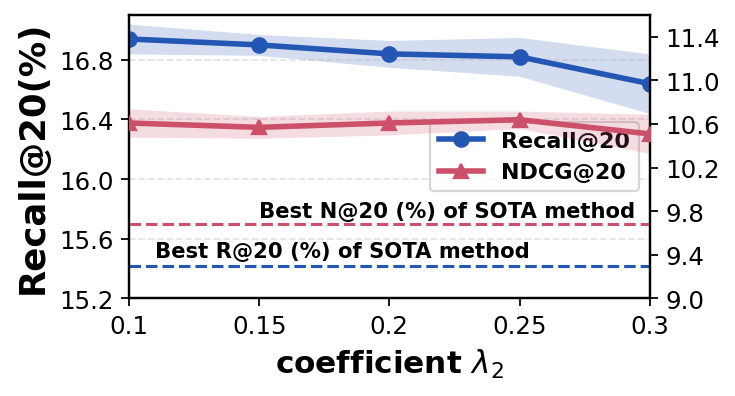

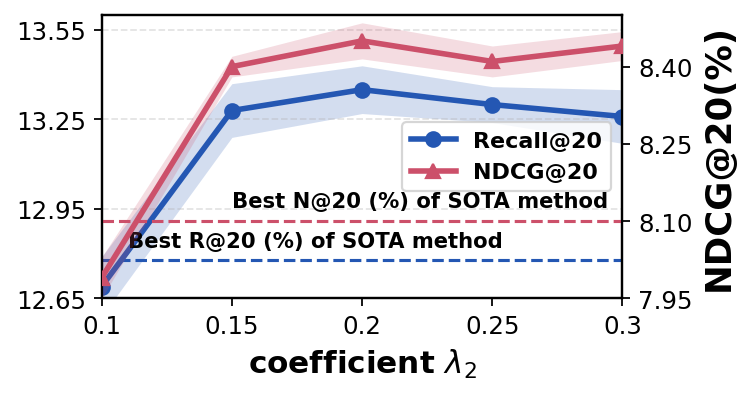

Multi-task joint optimization aggregates these objectives into a single training loss, with the key loss, matching, and regularization terms balanced by the weights λ1 and λ2. Notably, uniformity optimization is deliberately omitted for item representations because it was found empirically to harm item clustering, even though it benefits user coverage.
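A minimal sketch of the alignment and uniformity terms follows, using the formulation common in the alignment-uniformity literature (squared distance between normalized positive pairs, and the log of the mean Gaussian potential over pairs). How ω enters the objective, the kernel scale `t=2.0`, and the `uniform_items` switch are assumptions made for illustration; the default mirrors the user-only uniformity described above.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def alignment_loss(users, items):
    """Mean squared distance between normalized positive (user, item) pairs."""
    u, i = l2_normalize(users), l2_normalize(items)
    return float(np.mean(np.sum((u - i) ** 2, axis=1)))

def uniformity_loss(x, t=2.0):
    """Log of the mean Gaussian potential over all distinct pairs (lower = more spread)."""
    x = l2_normalize(x)
    sq_dist = np.sum((x[:, None, :] - x[None, :, :]) ** 2, axis=-1)
    upper = np.triu_indices(len(x), k=1)           # each unordered pair once
    return float(np.log(np.mean(np.exp(-t * sq_dist[upper]))))

def au_objective(users, items, omega=1.0, uniform_items=False):
    """Alignment plus omega-weighted uniformity; items are skipped by default."""
    unif = uniformity_loss(users)
    if uniform_items:                               # the variant reported to hurt
        unif = 0.5 * (unif + uniformity_loss(items))
    return alignment_loss(users, items) + omega * unif

rng = np.random.default_rng(2)
batch_users = rng.standard_normal((16, 8))          # row b pairs with batch_items[b]
batch_items = batch_users + 0.1 * rng.standard_normal((16, 8))
loss = au_objective(batch_users, batch_items, omega=0.5)
```

Collapsed embeddings give a uniformity value of 0 (the maximum), while well-spread embeddings give negative values, so minimizing the term pushes user representations apart on the hypersphere.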

Figure 2: Overview of DIAURec architecture: dual-intent reconstruction (left), multi-granularity optimization (right), and regularization blocks.

Comparative Experimental Analysis

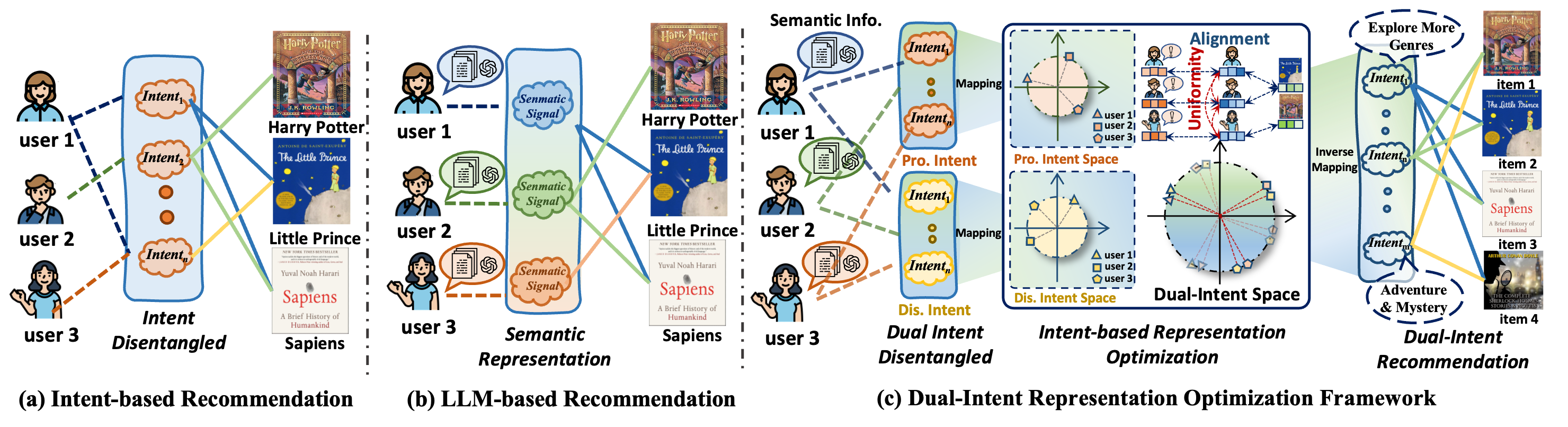

Evaluation on Amazon-book, Yelp, and Steam (all highly sparse) shows that DIAURec consistently outperforms 15 state-of-the-art baselines spanning classical GCN-based CF, intent-disentangling approaches, LLM-based semantic fusion, and representation alignment/uniformity models. Recall@20 improves by up to 10.6% (on Amazon-book), and the gains are reported as statistically significant.
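For readers unfamiliar with the metrics, the sketch below computes Recall@K and NDCG@K for a single user with binary relevance, using the standard textbook definitions. The paper's exact evaluation protocol (candidate set, tie handling, averaging over users) is not given in this summary, so the function names and the toy data are illustrative only.

```python
import math

def recall_at_k(ranked, relevant, k=20):
    """Fraction of the user's held-out items that appear in the top-k list."""
    if not relevant:
        return 0.0
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / len(relevant)

def ndcg_at_k(ranked, relevant, k=20):
    """Binary-relevance NDCG: discounted gain divided by the ideal discounted gain."""
    if not relevant:
        return 0.0
    dcg = sum(1.0 / math.log2(pos + 2)
              for pos, item in enumerate(ranked[:k]) if item in relevant)
    ideal = sum(1.0 / math.log2(pos + 2)
                for pos in range(min(k, len(relevant))))
    return dcg / ideal

ranked_items = [3, 1, 7, 5]      # model's ranking, best first (toy data)
held_out = {1, 5, 9}             # items the user actually interacted with
r = recall_at_k(ranked_items, held_out, k=3)
n = ndcg_at_k(ranked_items, held_out, k=3)
```

With `k=3`, only item 1 (at rank 2) is a hit, so recall is one third and NDCG is the rank-2 discount divided by the ideal gain for three relevant items.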

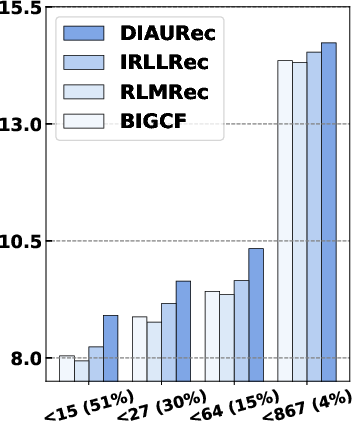

Under conditions of extreme data sparsity (users with minimal interactions), DIAURec’s dual-intent design shows enhanced resistance to performance degradation, a property not matched by single-intent or LLM-only baselines.

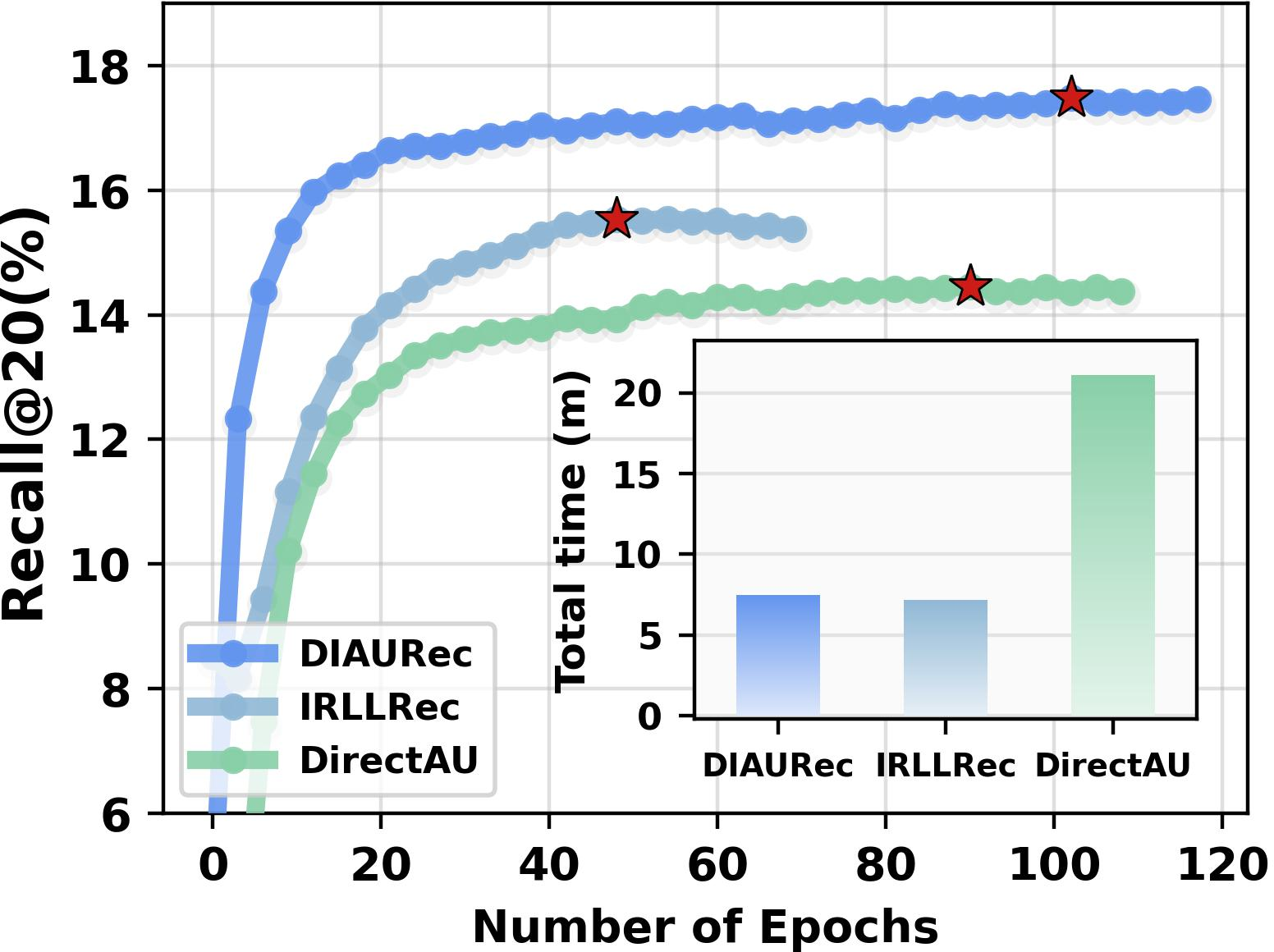

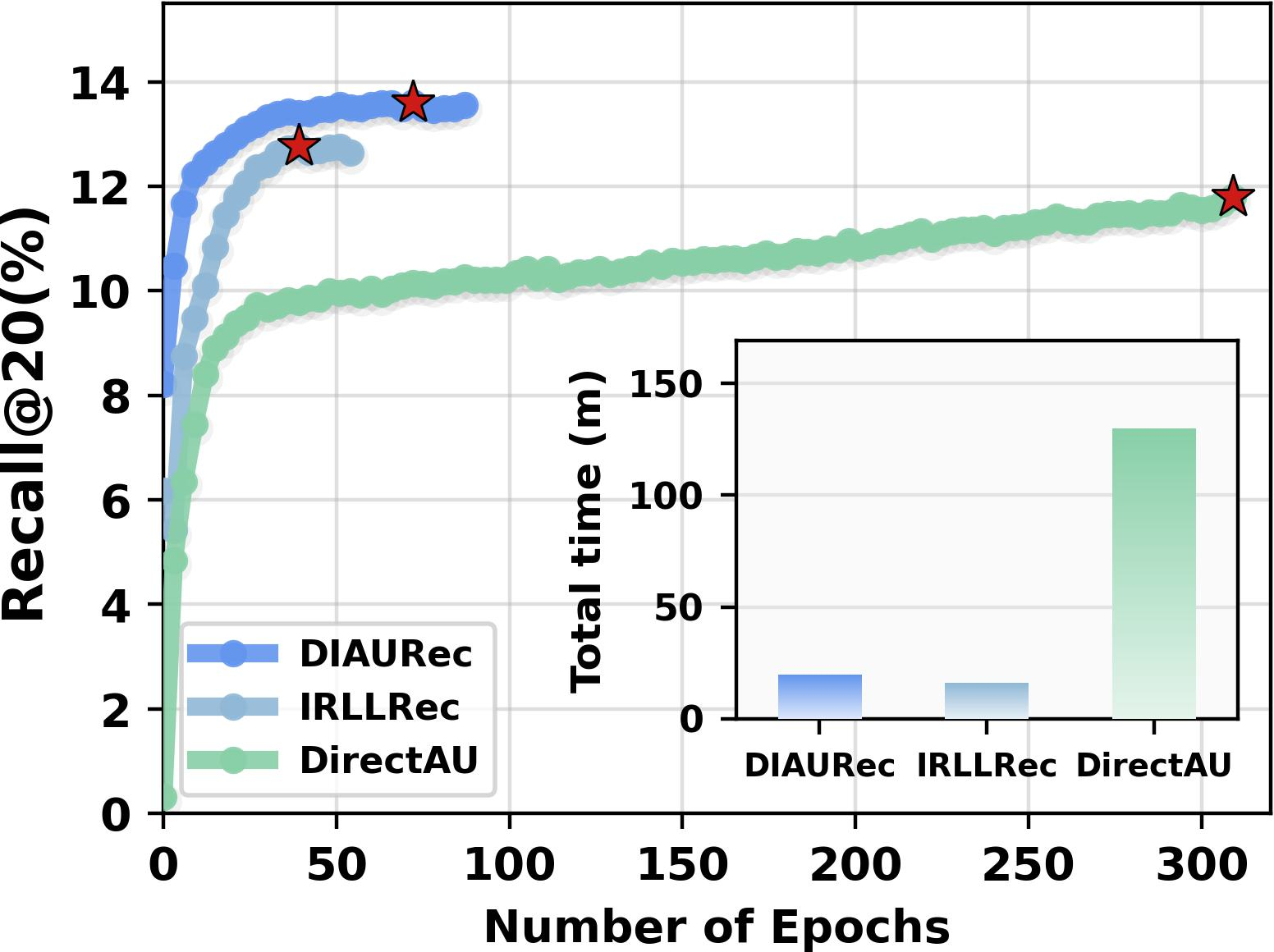

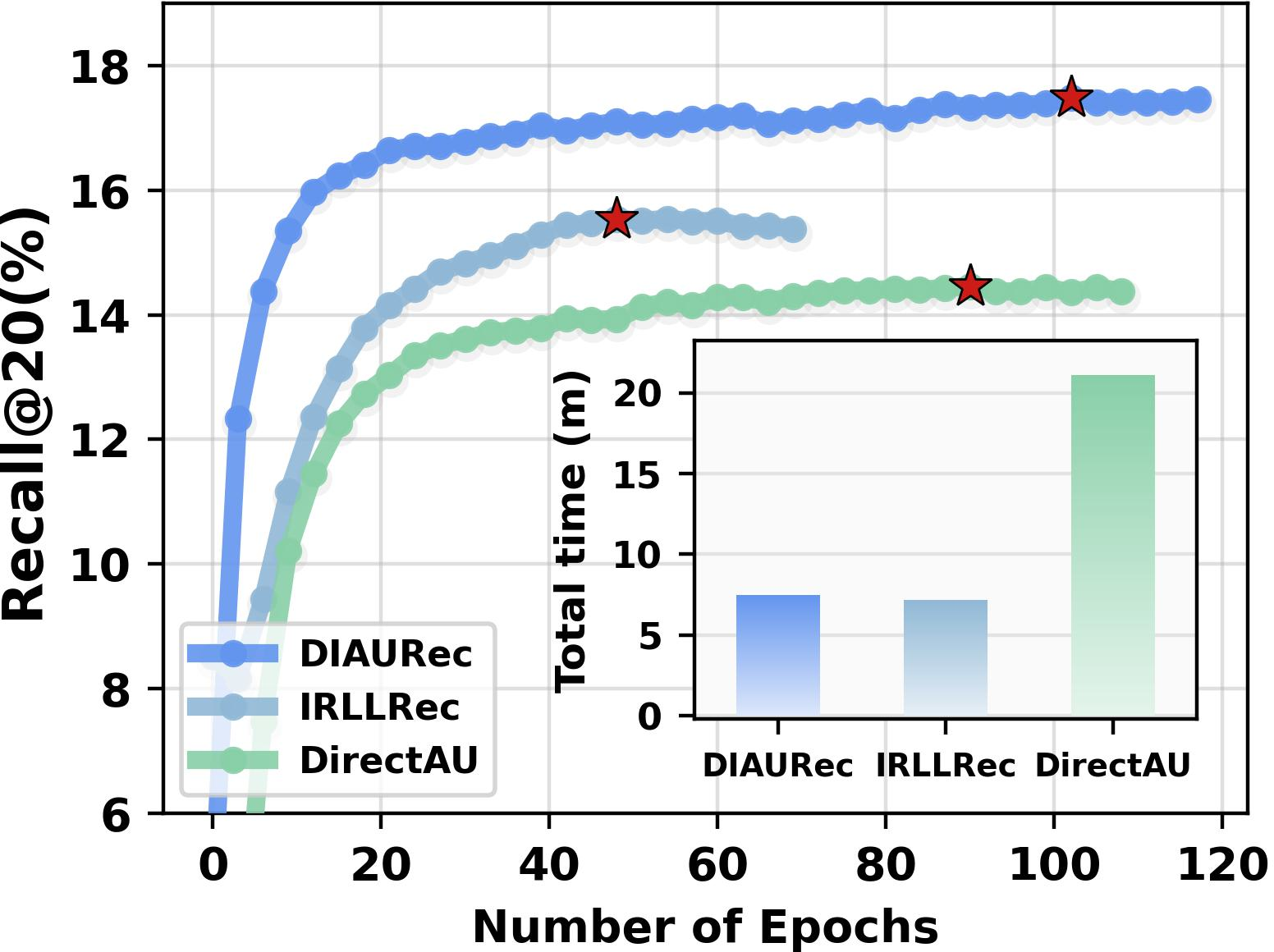

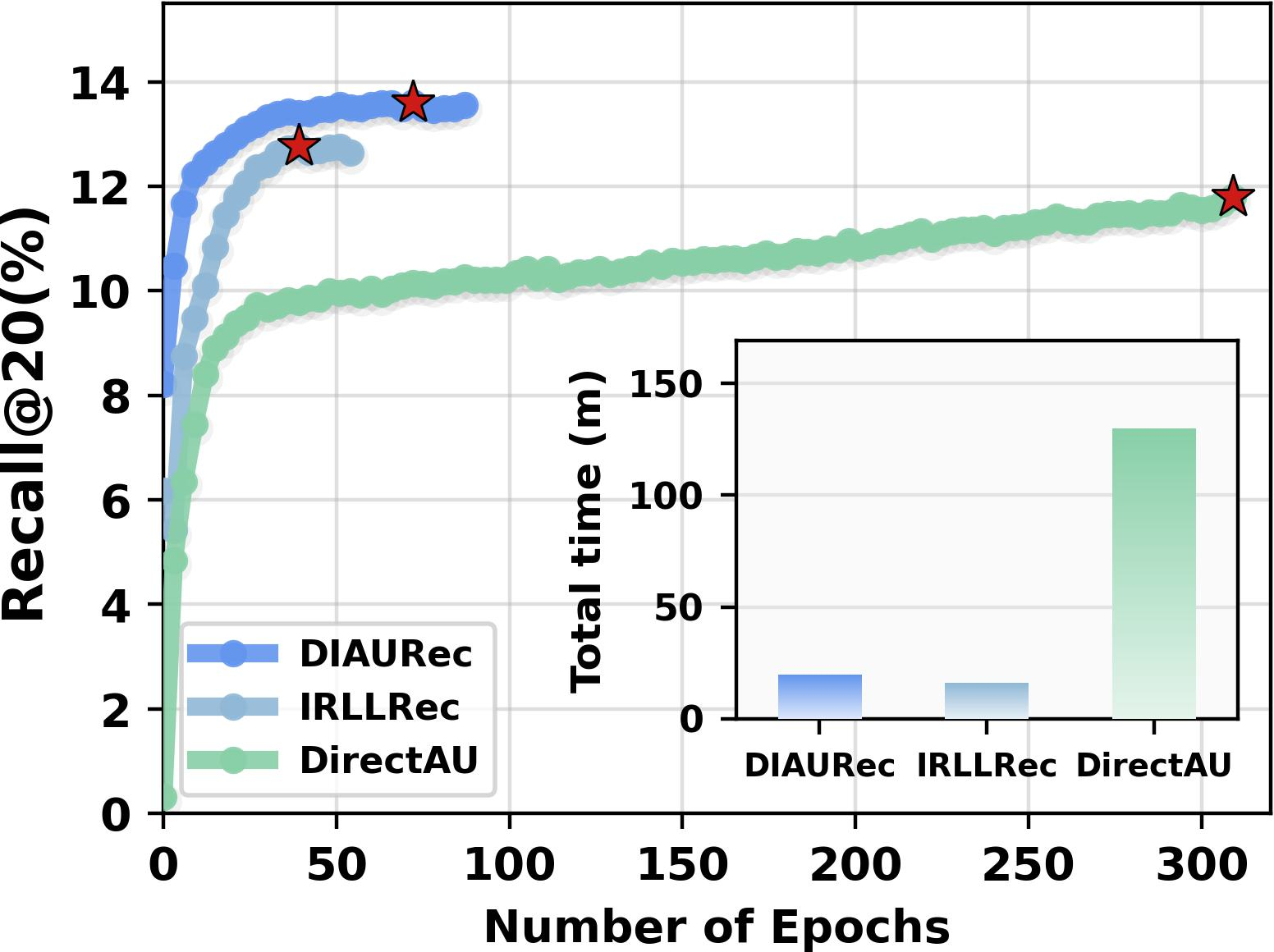

Training efficiency analysis indicates that DIAURec converges faster and at lower computational cost than DirectAU and IRLLRec, owing to its use of pre-computed LLM features and parameter-efficient optimization.

Figure 3: Performance analysis (NDCG@20) on Amazon-book showing improved results across all user activity levels.

Ablation and Objective Analyses

Systematic ablation of DIAURec modules (removing fine/coarse matching, dual-intent, intra-/inter-regularization) shows that all components must be present together to reach peak performance. Removing dual-intent modeling or intra-space regularization, in particular, causes steep drops in recommendation metrics, which underlines the need for probabilistic representation modeling and representation-level regularization.

Substitution of the core alignment-uniformity loss with classical BPR sharply impairs performance, confirming the limitations of standard ranking-aware objectives in representation optimization. Enforcing uniformity on both user and item spaces (or on items only) degrades results, consistent with the Laplacian analysis demonstrating that item relations are sensitive to over-dispersion.
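For reference, the classical BPR objective used in this substitution can be written as a pairwise ranking loss over (user, positive item, sampled negative item) triples. The sketch below uses the standard definition with dot-product scores; the function name and toy data are illustrative, and the paper's negative-sampling setup is not specified in this summary.

```python
import numpy as np

def bpr_loss(users, pos_items, neg_items):
    """Mean of -log(sigmoid(score_pos - score_neg)) with dot-product scores."""
    pos_scores = np.sum(users * pos_items, axis=1)
    neg_scores = np.sum(users * neg_items, axis=1)
    # -log(sigmoid(x)) == log(1 + exp(-x)), computed stably with logaddexp
    return float(np.mean(np.logaddexp(0.0, -(pos_scores - neg_scores))))

rng = np.random.default_rng(3)
users = rng.standard_normal((8, 4))
pos_items = users + 0.1 * rng.standard_normal((8, 4))   # close to their users
neg_items = rng.standard_normal((8, 4))                 # unrelated items
loss_good = bpr_loss(users, pos_items, neg_items)
loss_tied = bpr_loss(users, pos_items, pos_items)       # no preference signal
```

BPR only constrains the relative order of positive and negative scores. Unlike the alignment and uniformity terms, it places no direct constraint on how embeddings are distributed on the hypersphere, which is the contrast the ablation draws.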

Layer-wise analysis of the GCN encoder indicates that a shallow architecture (L=2) gives the best balance between aggregating neighborhood signal and avoiding the over-smoothing that arises in deeper stacks.
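To make the role of the depth L concrete, the sketch below runs a linear, LightGCN-style propagation over the user-item bipartite graph and averages the layer outputs. The summary does not state which GCN variant DIAURec uses, so the symmetric normalization, the absence of weight matrices, and the mean readout are assumptions chosen for brevity.

```python
import numpy as np

def normalized_adjacency(interactions):
    """Symmetric-normalized adjacency D^-1/2 A D^-1/2 of the user-item bipartite graph."""
    n_users, n_items = interactions.shape
    n = n_users + n_items
    adj = np.zeros((n, n))
    adj[:n_users, n_users:] = interactions
    adj[n_users:, :n_users] = interactions.T
    deg = adj.sum(axis=1)
    inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    return inv_sqrt[:, None] * adj * inv_sqrt[None, :]

def propagate(embeddings, norm_adj, num_layers=2):
    """Apply num_layers rounds of neighbor averaging and mean-pool all layers."""
    layers = [embeddings]
    for _ in range(num_layers):
        layers.append(norm_adj @ layers[-1])
    return np.mean(layers, axis=0)

interactions = np.array([[1, 0, 1],     # 2 users x 3 items, toy data
                         [0, 1, 1]], dtype=float)
norm_adj = normalized_adjacency(interactions)
rng = np.random.default_rng(4)
base = rng.standard_normal((5, 4))      # stacked user and item embeddings
shallow = propagate(base, norm_adj, num_layers=2)
deep = propagate(base, norm_adj, num_layers=8)
```

Each additional layer mixes in signal from one more hop of neighbors. With many layers the node representations drift toward each other, which is the over-smoothing effect that the layer-wise analysis attributes to deeper stacks.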

Theoretical and Practical Implications

DIAURec formalizes and systematizes representation optimization in recommender systems, offering the following contributions:

- Demonstrates that probabilistic distributional modeling (variance and mixtures) can be deeply integrated with semantic prototype encoding, yielding more robust dual-intent spaces.

- Provides evidence that targeting both alignment and uniformity—under domain-aware space selection and with regularization—prevents issues of mode collapse and embedding homogeneity, thus enhancing both expressiveness and robustness.

- Empirically establishes that coarse- and fine-grained matching, when jointly enforced, close gaps between collaborative and semantic modalities, addressing inconsistency-driven degradation common to prior LLM-based hybrids.

Figure 4: Sparsity-sensitive robustness of DIAURec compared to SOTA baselines; consistent performance under decreasing user activity.

Practical implications include a method for incorporating LLM-based features in a manner that minimizes online computational overhead and eschews the need for expensive prompt engineering or chain-of-thought inference loops. This enables deployment in resource-constrained environments or where real-time responsiveness is integral.

Future Directions

DIAURec establishes a blueprint for future work along several axes:

- Extension to dynamic and sequential recommendation settings, where user intent evolution can be explicitly captured over time using time-varying dual-intent spaces.

- Enrichment with multimodal side information (images, graph attributes), where prototype and distributional spaces naturally generalize to cross-modal summarization.

- Further development of adaptive regularization mechanisms responsive to online drift in user/item embedding populations.

Figure 5: Training time efficiency and convergence speed comparisons; DIAURec combines fast convergence with improved accuracy.

Conclusion

DIAURec presents a recommendation framework that combines dual-intent latent space construction, coarse- and fine-grained representation matching, and regularized alignment-uniformity optimization. Empirical results show statistically significant gains over a broad set of recent strong baselines. The framework is particularly relevant in sparse, heterogeneous, or large-scale environments, and it contributes to both representation learning and practical recommender system design.