- The paper introduces EEG2Vision, a comprehensive framework that leverages neural embeddings and latent diffusion models to reconstruct 2D visual images from EEG data.

- It demonstrates that electrode density and montage design are critical, with higher densities and optimal placement significantly enhancing semantic decoding accuracy.

- The integration of a multimodal prompt-guided boosting module using LLM-derived semantics improves perceptual quality and stabilizes visual reconstructions even in low-density setups.

EEG2Vision: A Multimodal Framework for 2D Visual Reconstruction from EEG

Introduction

The EEG2Vision framework addresses the longstanding challenge of reconstructing visual stimuli from non-invasive EEG signals. This task is fundamentally constrained by the low spatial resolution and significant noise in EEG, especially under practical, low-density montages. While fMRI-based approaches traditionally benefit from high spatial fidelity, EEG provides superior temporal resolution and portability. However, the leap from EEG-driven visual classification to high-fidelity image reconstruction remains formidable due to reduced neural information and increased ambiguity as channel count decreases. EEG2Vision offers an end-to-end solution incorporating rigorous electrode density analysis and a multimodal post-hoc image boosting stage, thereby systematically evaluating—and extending—the practical limits of EEG-based brain-to-image models.

Framework Architecture

EEG2Vision consists of five tightly coupled modules:

- Image Processing: Images are encoded into diffusion-based latent representations.

- Semantic Prior Generation: A pretrained EEG image decoder predicts the top-ranked category label from an EEG signal, providing a coarse semantic caption for classifier-free guidance (CFG) within the diffusion process.

- Neural Embedding Extraction: Raw EEG is projected via 1D temporal convolutions into a spatial tensor matching the latent diffusion space.

- Diffusion-based Image Generation: EEG2Vision employs a ControlNet-modulated latent diffusion model, wherein EEG latent and semantic priors modulate a frozen backbone through residual connections.

- Prompt-Guided Post-Reconstruction Boosting: To mitigate artifacts and improve perceptual plausibility, particularly in low-density regimes, the framework uses a multimodal LLM to describe decoded images semantically and applies text-conditional image-to-image diffusion to refine geometry and texture.
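The classifier-free guidance (CFG) step named in the Semantic Prior Generation module can be illustrated with the standard CFG combination of an unconditional and a conditional noise prediction. This is a minimal sketch of the general technique, not the paper's implementation: the function name `cfg_combine`, the plain-list noise vectors, and the toy values are assumptions made for illustration.

```python
from typing import List


def cfg_combine(eps_uncond: List[float], eps_cond: List[float], gamma: float) -> List[float]:
    """Standard classifier-free guidance: eps = eps_u + gamma * (eps_c - eps_u)."""
    if len(eps_uncond) != len(eps_cond):
        raise ValueError("noise predictions must have the same length")
    return [u + gamma * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Toy noise predictions (hypothetical values, not model outputs).
eps_u = [0.0, 1.0, -1.0]   # prediction without the semantic caption
eps_c = [1.0, 1.0, 0.0]    # prediction conditioned on the semantic caption

unguided = cfg_combine(eps_u, eps_c, gamma=1.0)  # reduces to the conditional prediction
guided = cfg_combine(eps_u, eps_c, gamma=7.5)    # extrapolates along (eps_c - eps_u)

print(unguided)
print(guided)
```

With a scale of 1 the result equals the conditional prediction; larger scales push the sample further toward the caption, which is why a stronger scale can compensate for a weak neural signal.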

Figure 1: Overall pipeline of EEG2Vision, showing information flow from visual and neural inputs through to the prompt-guided image enhancement.

Quantitative and Qualitative Reconstruction Results

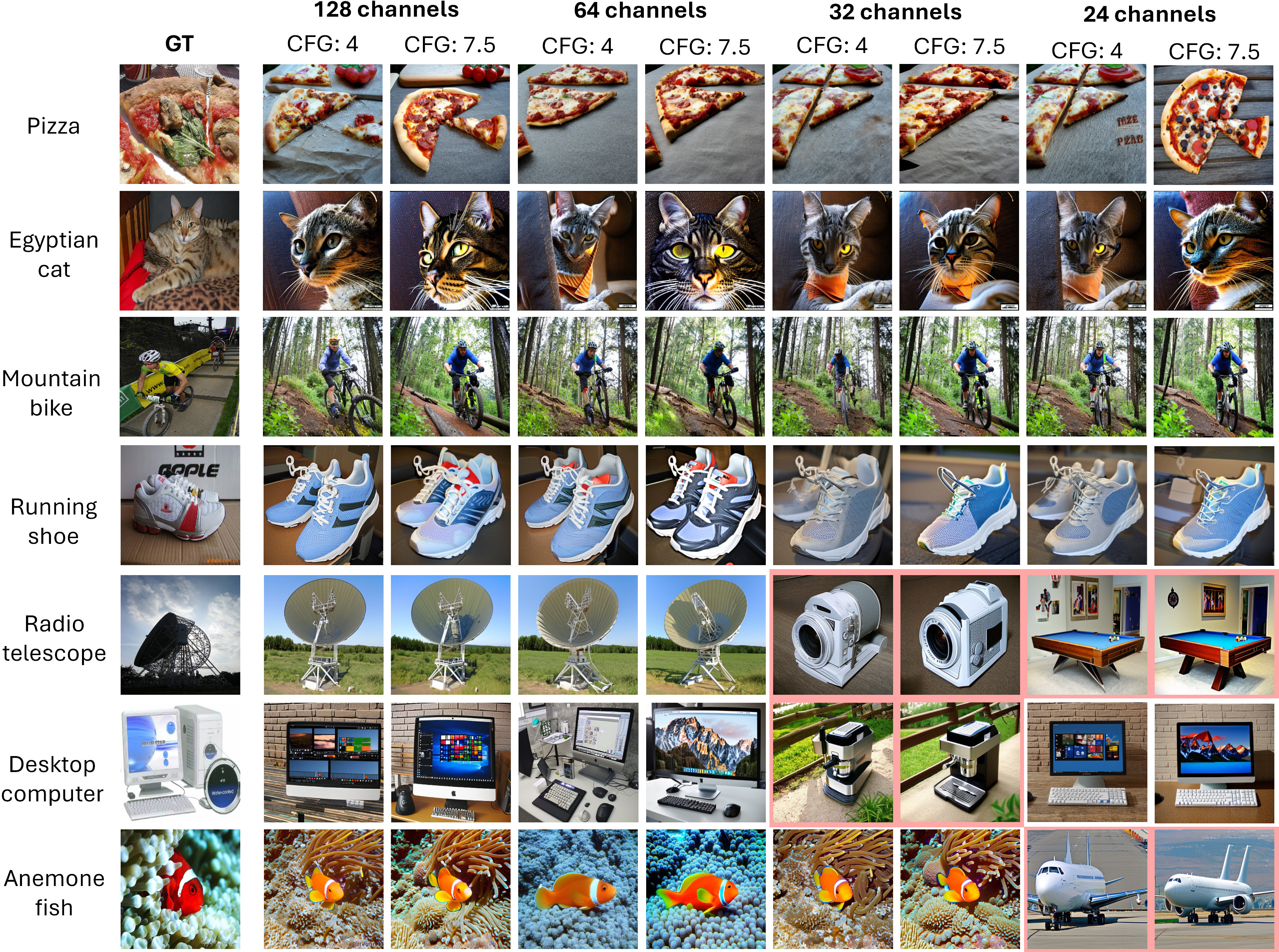

The EEG2Vision framework is evaluated at four EEG densities (128, 64, 32, and 24 channels), providing a controlled analysis of the tradeoff between channel count and image fidelity. Semantic decoding performance drops markedly as channel count decreases: Top-1 accuracy falls from 89% (128ch) to 38% (24ch) for 50-way classification. The drop in perceptual and distributional metrics is much less pronounced: Fréchet Inception Distance (FID) increases only from 76.77 to 80.51, and Inception Score (IS) remains close to 34 across the range, indicating that the latent diffusion backbone is robust to degraded neural input. Higher CFG scales (γ=7.5) perform best at every density, which confirms the importance of anchoring the diffusion process with the semantic priors predicted from the EEG signal in low-information regimes.
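To put these numbers side by side, the short calculation below compares the relative accuracy drop with the relative FID change, using only the figures quoted above. The helper name `relative_change` is hypothetical, and the chance level assumes uniform guessing over the 50 classes.

```python
def relative_change(before: float, after: float) -> float:
    """Signed relative change from `before` to `after`, as a fraction of `before`."""
    return (after - before) / before


# Figures quoted in the text.
top1_128ch, top1_24ch = 0.89, 0.38
fid_start, fid_end = 76.77, 80.51

# Assumption: uniform guessing over 50 classes.
chance = 1 / 50

acc_change = relative_change(top1_128ch, top1_24ch)  # roughly -57%
fid_change = relative_change(fid_start, fid_end)     # roughly +5%
times_chance_24ch = top1_24ch / chance               # roughly 19x chance

print(f"Top-1 relative change: {acc_change:+.1%}")
print(f"FID relative change:   {fid_change:+.1%}")
print(f"24ch Top-1 vs chance:  {times_chance_24ch:.0f}x")
```

Under these assumptions, accuracy loses more than half of its value while FID worsens by only about five percent, and the 24-channel accuracy still sits well above chance.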

Notably, the 24-channel configuration, when carefully designed for neurophysiological coverage, occasionally matches or outperforms the 32-channel setup. This highlights the primacy of electrode placement, rather than merely quantity, for the preservation of functionally relevant spatial information.

Figure 2: Representative reconstructions across channel densities and CFG settings, demonstrating the qualitative impact of spatial sampling reduction. Highlighted failure cases correspond to low-accuracy classes.

Impact of Multimodal Prompt-Guided Boosting

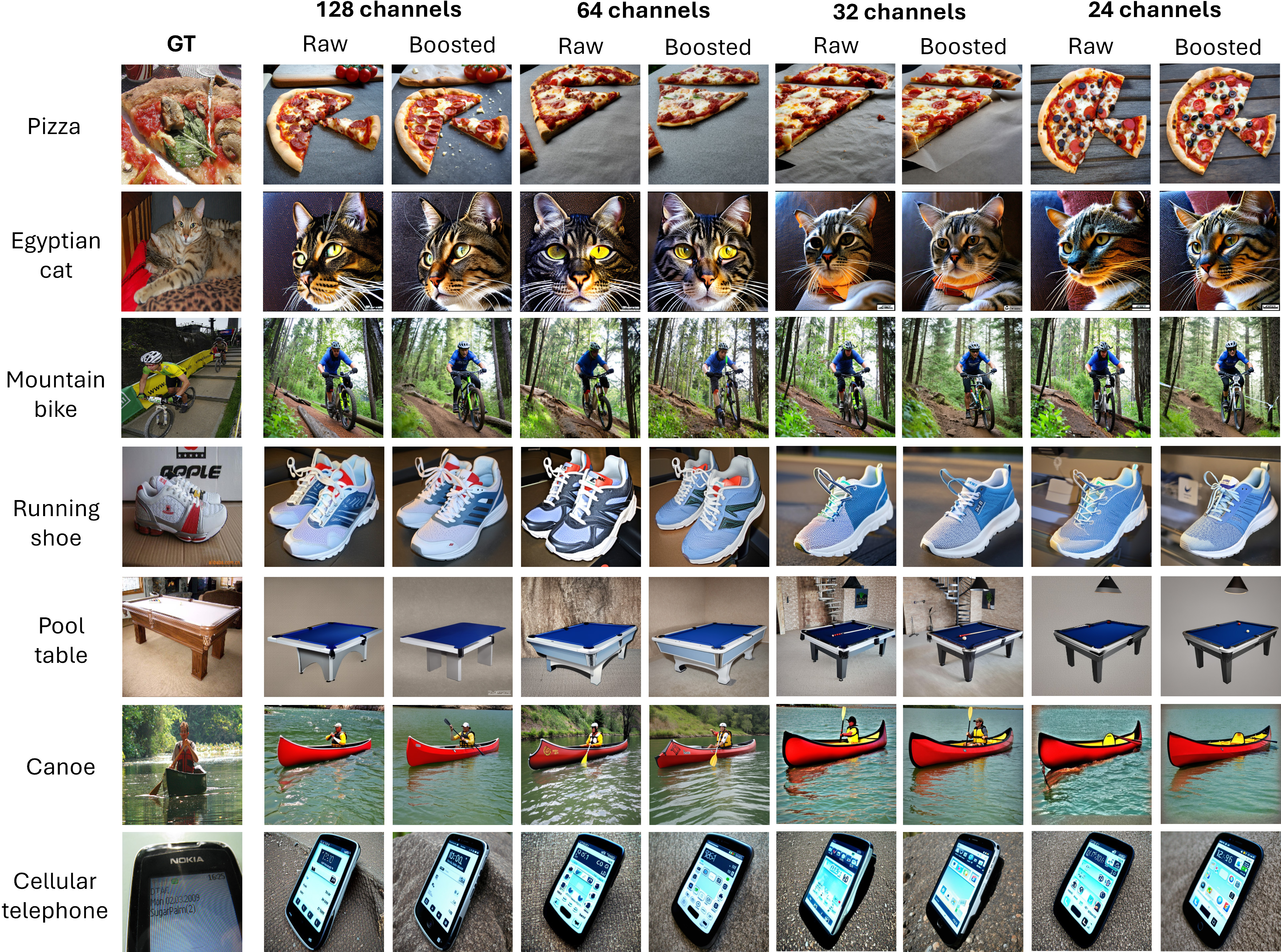

The post-hoc refinement module uses a large vision-LLM (LLaMA 3.2 Vision) to extract structured, image-grounded descriptions from raw reconstructions; these descriptions then steer an image-to-image diffusion process for quality enhancement. The stage is model-agnostic and acts only on the reconstructions, increasing semantic clarity and reducing geometric and textural artifacts.
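The describe-then-refine flow can be sketched as a small function that takes both models as injected callables. Everything named here is an assumption for illustration: `boost`, `BoostResult`, the `strength` parameter, and the two stub models stand in for the vision-LLM and the image-to-image diffusion model, whose actual interfaces are not given in this summary.

```python
from dataclasses import dataclass
from typing import Callable, List

Image = List[List[float]]  # placeholder image type: rows of grayscale pixels


@dataclass
class BoostResult:
    prompt: str
    image: Image


def boost(
    reconstruction: Image,
    describe: Callable[[Image], str],
    refine: Callable[[Image, str, float], Image],
    strength: float = 0.5,
) -> BoostResult:
    """Describe the raw reconstruction, then refine it under that description.

    `describe` stands in for a vision-language model and `refine` for a
    text-conditional image-to-image diffusion model. Both are injected, so the
    stage does not depend on how the reconstruction was produced.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must lie in [0, 1]")
    prompt = describe(reconstruction)
    return BoostResult(prompt=prompt, image=refine(reconstruction, prompt, strength))


# Stub models so the sketch runs without ML dependencies (hypothetical).
def stub_describe(img: Image) -> str:
    mean = sum(sum(row) for row in img) / sum(len(row) for row in img)
    return "a bright object" if mean > 0.5 else "a dark object"


def stub_refine(img: Image, prompt: str, strength: float) -> Image:
    target = 1.0 if "bright" in prompt else 0.0
    # Blend each pixel toward the prompt-implied target by `strength`.
    return [[(1 - strength) * p + strength * target for p in row] for row in img]


raw = [[0.6, 0.8], [0.7, 0.9]]
result = boost(raw, stub_describe, stub_refine, strength=0.5)
print(result.prompt)
```

Because the function only sees an image and two callables, swapping in a different describer or refiner does not require touching the EEG decoder, which matches the model-agnostic design described above.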

Empirically, the refinement improves every reported image quality metric: Inception Score increases by 6.7%–9.7%, with the largest relative gain in the lowest-density settings. FID and LPIPS decrease marginally and, crucially, human raters prefer the refined outputs in over 78% of trials, with a stronger preference (weighted, up to 87.1%) in low-density montages.

Figure 3: Side-by-side qualitative comparison of raw EEG-based reconstructions and post-hoc boosted images across all channel densities.

Electrode and Regional Ablation

Fine-grained ablation experiments quantify the contribution of each electrode and region to decoding accuracy. The occipital region emerges as essential: its ablation reduces Top-1 accuracy to 3.1%. Removing central or parietal electrodes also severely degrades performance, reflecting early visual feature encoding in these regions and the contribution of early attentional and feedback dynamics. The temporal and frontal cortices are less critical for visual category reconstruction in this paradigm.
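A regional ablation of this kind can be sketched as a loop that silences one group of channels at a time and re-measures accuracy. This is a minimal sketch under stated assumptions: the summary does not say whether the authors zero channels, drop them, or retrain, so zeroing is an assumption here, and the region map, the toy classifier, and the toy data are all hypothetical.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Trial = List[List[float]]  # one EEG trial: channels x time samples


def ablate(trial: Trial, channels: Sequence[int]) -> Trial:
    """Return a copy of the trial with the given channels zeroed out."""
    drop = set(channels)
    return [[0.0] * len(row) if i in drop else list(row) for i, row in enumerate(trial)]


def accuracy(classify: Callable[[Trial], int], data: List[Tuple[Trial, int]]) -> float:
    """Fraction of trials whose predicted label matches the true label."""
    return sum(classify(x) == y for x, y in data) / len(data)


def region_ablation(
    classify: Callable[[Trial], int],
    data: List[Tuple[Trial, int]],
    regions: Dict[str, Sequence[int]],
) -> Dict[str, float]:
    """Top-1 accuracy after silencing each region in turn."""
    return {
        name: accuracy(classify, [(ablate(x, chans), y) for x, y in data])
        for name, chans in regions.items()
    }


# Toy setup (hypothetical): channel 0 plays "occipital", channel 1 "frontal".
# The toy classifier reads the label from the sign of channel 0's sum only.
def toy_classify(trial: Trial) -> int:
    return 1 if sum(trial[0]) > 0 else 0


data = [
    ([[1.0, 2.0], [0.3, 0.1]], 1),
    ([[-1.0, -2.0], [0.2, 0.4]], 0),
    ([[0.5, 0.5], [0.9, 0.9]], 1),
    ([[-0.5, -1.5], [0.1, 0.1]], 0),
]
regions = {"occipital": [0], "frontal": [1]}

baseline = accuracy(toy_classify, data)
scores = region_ablation(toy_classify, data, regions)
print(baseline, scores)
```

In this toy case, silencing the informative channel collapses accuracy to the two-class chance level while silencing the other channel changes nothing, mirroring the qualitative pattern reported for occipital versus frontal regions.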

Practical and Theoretical Implications

From a BCI systems and digital neuroscience perspective, these findings delineate a clear operational boundary: below 64 channels, category-level decoding drops off severely, which restricts the paradigm's application where strict semantic discrimination is essential. In contrast, the modest drop in perceptual quality at 24 channels, especially after multimodal boosting, suggests that EEG-based image reconstruction may be viable on wearable, low-density systems for assistive communication, affective computing, or intent-driven generative AI interfaces.

The demonstrated importance of montage design, over mere density, guides future hardware development in BCI. The explicit, controllable boosting pipeline enables modular improvements and domain transfer without retraining generative models. The pipeline further exemplifies how weak and noisy brain-to-image mappings can be stabilized by infusing high-level, cross-modal priors.

Future Directions

Several key frontiers emerge: (i) subject-adaptive or subject-transferable neural encoders, possibly leveraging meta-learning or few-shot adaptation, to limit inter-subject variability at low spatial sampling; (ii) reinforcement of semantic grounding within the refinement stage to preclude semantic drift; (iii) hybridization with eye-tracking or EOG modalities for improved attentional alignment and decoding; (iv) joint optimization strategies linking neural encoding, diffusion-based generation, and perceptual refinement modules.

Conclusion

EEG2Vision establishes a rigorous, modular end-to-end framework for visual reconstruction from EEG under realistic spatial constraints, combining state-of-the-art latent diffusion models, semantic priors, and multimodal refinement. While categorical decoding accuracy is highly sensitive to montage resolution, distributional and perceptual image quality can be stabilized, especially with prompt-guided LLM-based boosting. The demonstrated robustness at 24 channels has significant implications for broader deployment beyond lab settings, representing a critical step toward real-world neural-driven generative systems and accessible brain–computer interfaces that translate neural signals directly into visual representations (2604.08063).