TAMEn: Tactile-Aware Manipulation Engine for Closed-Loop Data Collection in Contact-Rich Tasks

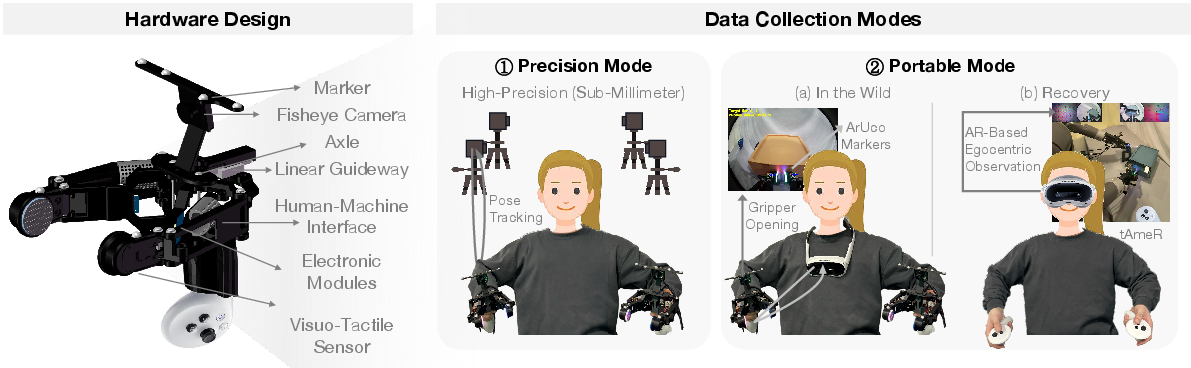

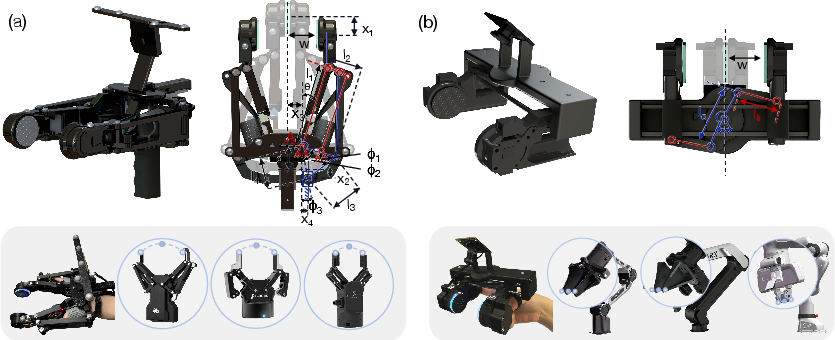

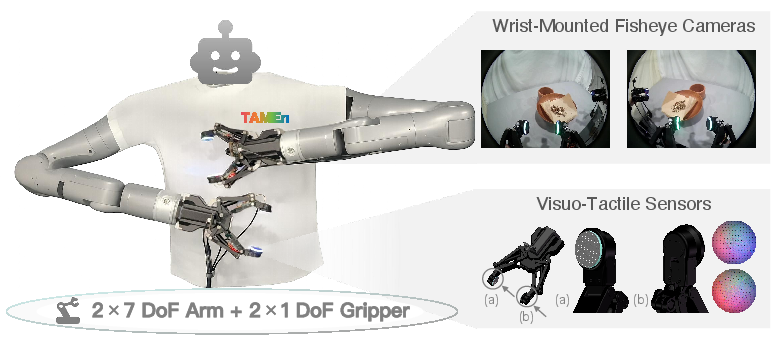

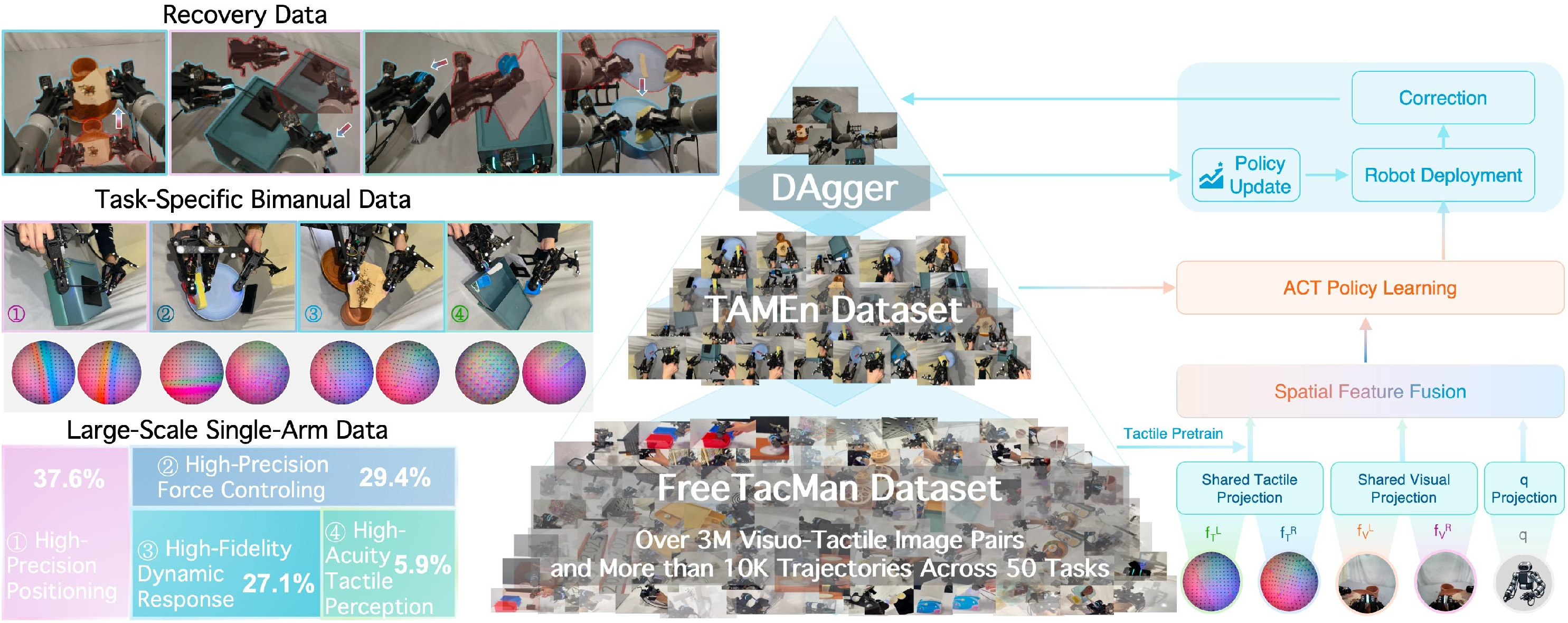

Abstract: Handheld paradigms offer an efficient and intuitive way to collect large-scale robot manipulation demonstrations. However, achieving contact-rich bimanual manipulation through these methods remains a pivotal challenge, substantially hindered by limited hardware adaptability and data efficacy. Prior hardware designs remain gripper-specific and often face a trade-off between tracking precision and portability. Furthermore, the lack of online feasibility checking during demonstration leads to poor replayability. More importantly, existing handheld setups struggle to collect interactive recovery data during robot execution, and so lack the authentic tactile information necessary for robust policy refinement. To bridge these gaps, we present TAMEn, a tactile-aware manipulation engine for closed-loop data collection in contact-rich tasks. Our system features a cross-morphology wearable interface that enables rapid adaptation across heterogeneous grippers. To balance data quality and environmental diversity, we implement a dual-modal acquisition pipeline: a precision mode leveraging motion capture for high-fidelity demonstrations, and a portable mode utilizing VR-based tracking for in-the-wild acquisition and tactile-visualized recovery teleoperation. Building on this hardware, we unify large-scale tactile pretraining, task-specific bimanual demonstrations, and human-in-the-loop recovery data into a pyramid-structured data regime, enabling closed-loop policy refinement. Experiments show that our feasibility-aware pipeline significantly improves demonstration replayability, and that the proposed visuo-tactile learning framework increases task success rates from 34% to 75% across diverse bimanual manipulation tasks. We further open-source the hardware and dataset to facilitate reproducibility and support research in visuo-tactile manipulation.

Explain it Like I'm 14

TAMEn: A simple explanation

What’s this paper about?

This paper introduces TAMEn, a “Tactile-Aware Manipulation Engine.” In plain words, it’s a system that helps robots use both sight and touch to handle tough, hands-on tasks that involve a lot of contact—like gripping, pushing, pulling, and squeezing—using two robot arms at the same time. The goal is to collect better training data and train better robot policies so robots can do chores that humans find easy but robots usually struggle with, such as plugging in a cable, holding a sponge and scrubbing a dish, or opening a drawer while holding something else.

What questions does the paper try to answer?

The authors focus on three main questions:

- How can we collect large amounts of high-quality data for two-handed, contact-heavy tasks in a practical way?

- Does adding a sense of touch (tactile sensing) make robot performance better than vision alone?

- Can we make the robot more reliable by teaching it not just from successful examples, but also from human corrections when it starts to fail?

How did they do it? (Methods explained simply)

Think of teaching a robot like teaching a beginner to cook: you show it what to do (demonstrations), check that it can repeat it (replay), and help it when it messes up (corrections). TAMEn puts all of this into one system.

Here are the main pieces:

- A wearable handheld “robot gripper” for humans:

  - The team built a small, wearable gripper that a person can hold and move naturally, as if it were their fingers. It has tiny cameras and soft sensors at the “fingertips” so it can “feel” pressure and texture, similar to how your skin feels touch.

  - This gripper records what it sees (video), what it feels (tactile), and how it moves (pose), all at the same time.

- Two ways to track motion (precision vs. portability):

  - Precision mode (Motion Capture): Uses special cameras around the room to track the handheld gripper very accurately (tiny errors, less than a millimeter). Great for high-quality data.

  - Portable mode (VR-based tracking): Uses a VR handle to track the gripper without needing a special room setup. It’s cheaper and easy to move, which makes it good for “in the wild” data collection, while still being accurate within about 1 cm.

- Check if demos are actually doable by the robot (online feasibility checking):

  - While a person is recording a demonstration, the system checks in real time whether the robot can actually copy that movement safely. If not, it tells the person immediately to try again differently, instead of discovering problems later.

  - This avoids wasting time on demos the robot can’t perform (e.g., too fast, out of reach, or against joint limits).

- Fixing mistakes with AR teleoperation and tactile feedback (tAmeR):

  - When the robot is running its learned policy and starts to fail (say, the cable slips or the sponge loses contact), a human can jump in using an AR headset.

  - The headset shows live video from the robot’s “wrists” plus the tactile sensor views, so the human can see both what the robot sees and what its touch sensors register, and guide it to recover.

  - These “recovery” corrections are saved as training data, helping the robot learn how to bounce back from near-failure situations.

- A “data pyramid” for smarter training:

  - Base layer: Lots of single-arm data with sight+touch to learn good general “feel” for contact (pretraining).

  - Middle layer: Task-specific, two-arm demonstrations to learn coordination (like holding and placing at the same time).

  - Top layer: Recovery data (short, targeted corrections collected during real robot runs), so the robot learns to handle tricky moments and near-failures.

- Closed-loop learning:

  - The robot first learns from big, general datasets (base), then fine-tunes on exact tasks (middle), and then keeps improving by adding real corrections from mistakes (top). This loop repeats, making the policy stronger over time.
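To make the online feasibility check described above more concrete, here is a minimal sketch of what screening a demonstration for joint-limit and overspeed violations could look like. It is not the paper's implementation: the names `JointLimits` and `first_violation`, the limit values, and the assumption that an inverse-kinematics solver has already converted the handheld poses into joint-space waypoints are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class JointLimits:
    # All values are hypothetical placeholders, not the real robot's limits.
    lower: Sequence[float]      # rad, per joint
    upper: Sequence[float]      # rad, per joint
    max_speed: Sequence[float]  # rad/s, per joint


def first_violation(traj: List[Sequence[float]], dt: float,
                    limits: JointLimits) -> Optional[str]:
    """Return a message for the first infeasible waypoint, or None if all pass.

    `traj` is a list of joint-angle vectors sampled every `dt` seconds.
    """
    prev = None
    for t, q in enumerate(traj):
        for j, angle in enumerate(q):
            # Position check: the joint must stay inside its allowed range.
            if not (limits.lower[j] <= angle <= limits.upper[j]):
                return f"step {t}: joint {j} outside position limits"
            # Speed check: finite-difference velocity against the cap.
            if prev is not None:
                speed = abs(angle - prev[j]) / dt
                if speed > limits.max_speed[j]:
                    return f"step {t}: joint {j} overspeed"
        prev = q
    return None


limits = JointLimits(lower=[-3.0, -2.0], upper=[3.0, 2.0], max_speed=[1.0, 1.0])
smooth = [[0.0, 0.0], [0.05, 0.02], [0.10, 0.04]]
jerky = [[0.0, 0.0], [0.5, 0.0]]
print(first_violation(smooth, dt=0.1, limits=limits))
print(first_violation(jerky, dt=0.1, limits=limits))
```

Returning at the first violation mirrors the idea of telling the demonstrator immediately rather than after recording; a real checker would also need reachability and communication checks, which this sketch leaves out.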

What did they test, and what did they find?

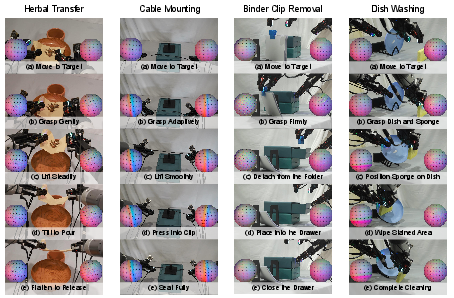

They tried several real-world, contact-rich, two-handed tasks using a dual-arm robot with cameras on the wrists and touch sensors on the fingertips:

- Herbal transfer: Lift herbs on a sheet and pour them into a container without tearing the sheet.

- Cable mounting: Pick up a flexible cable and press-fit it into a clip.

- Binder clip removal: Remove a clip from a folder, place it into a drawer, and close the drawer.

- Dish washing: Hold a dish and a sponge and scrub until a stain is gone.

Here are the main results, and why they matter:

- Online feasibility checks make demos actually usable:

  - Without checks: only 26% of recorded demonstrations could be replayed on the robot.

  - With checks: 100% were replayable.

  - Why it matters: Saves tons of time by avoiding bad demos.

- Better tracking, both in the lab and on the go:

  - A structured “object-based” motion capture method was robust for both experts and beginners (100% tracking success), while regular marker tracking failed often, especially for new users.

  - The portable VR tracking stayed within ~1 cm of the precise tracking, which is good enough for many tasks, while being much easier to deploy.

- Touch + vision beats vision alone:

  - Using only cameras (vision): about 34% average success.

  - Adding touch sensors: about 55%.

  - Why it matters: Many small contact events (like slipping, pressing, or seating) are hard to see but easy to feel.

- Pretraining on lots of single-arm sight+touch data helps:

  - With tactile pretraining: success rose to ~65%.

  - Why it matters: The robot learns good “contact sense” before tackling harder two-arm tasks.

- Learning from real mistakes (online recovery) is very powerful:

  - Adding just ~10% recovery corrections during execution raised success to ~75%.

  - Adding 50% more normal demos (without recovery) only reached ~70%.

  - Offline “recovery-like” demos (copied by hand) didn’t help much (56%); real, on-the-spot corrections were better.

  - Why it matters: Targeted fixes during actual failures are more informative than lots of generic extra practice.
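The success rates quoted above can be lined up to see how much each ingredient contributes. The short sketch below only does that arithmetic; treating the four numbers as cumulative stages of one pipeline is an assumption based on how this summary presents them, and the stage labels are ours.

```python
# Average success rates (percent) as quoted in the summary above.
# Assumption: each stage builds on the previous one.
stages = [
    ("vision only", 34),
    ("+ tactile sensing", 55),
    ("+ tactile pretraining", 65),
    ("+ ~10% online recovery data", 75),
]


def stepwise_gains(rows):
    """Return (label, rate, gain over the previous stage) in percentage points."""
    out = []
    prev = None
    for label, rate in rows:
        out.append((label, rate, 0 if prev is None else rate - prev))
        prev = rate
    return out


gains = stepwise_gains(stages)
for label, rate, gain in gains:
    print(f"{label:30s} {rate:3d}%  (+{gain} pts)")

total = stages[-1][1] - stages[0][1]
print("total gain:", total, "percentage points")
```

Read this way, tactile sensing accounts for the largest single step (21 points), with pretraining and online recovery adding 10 points each, for 41 points overall.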

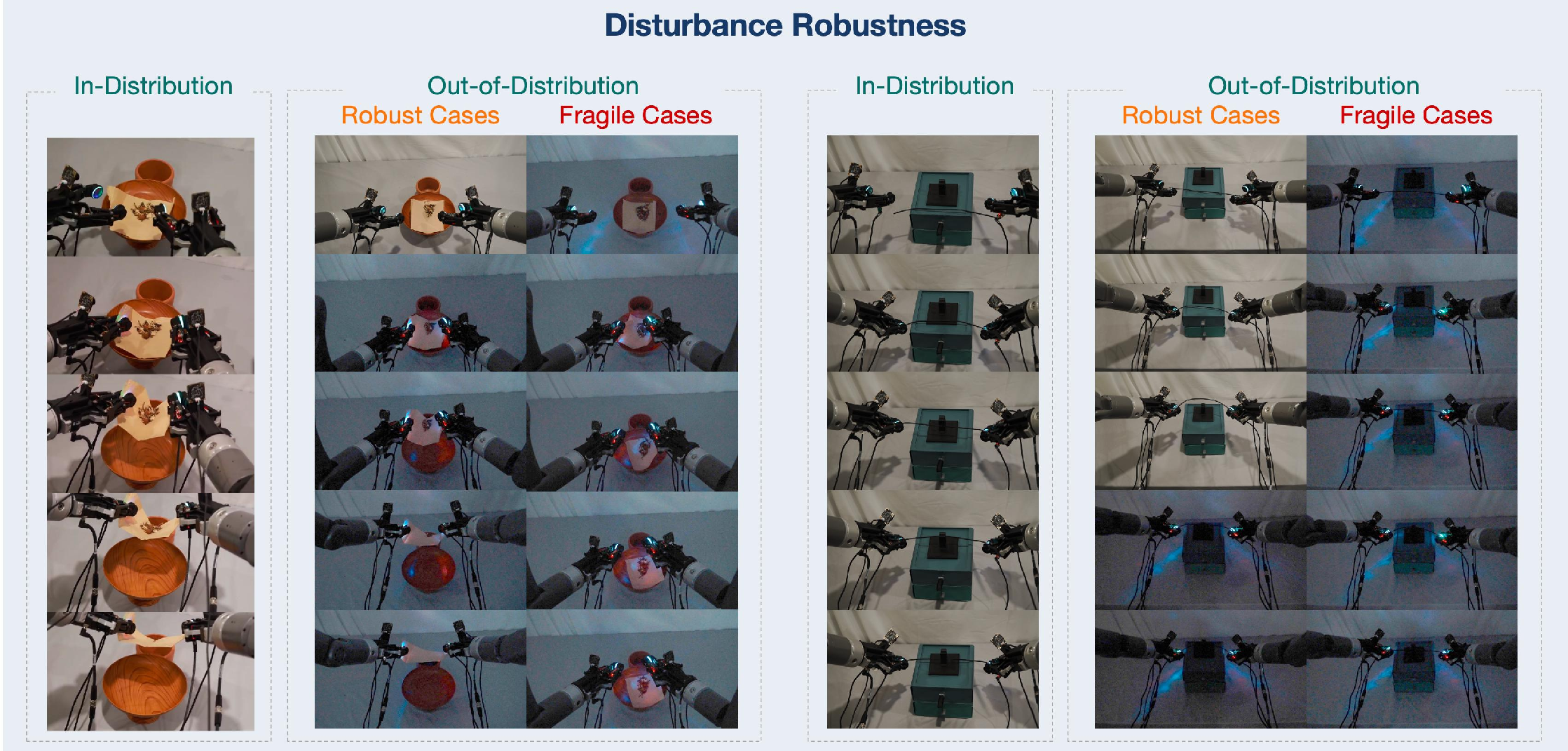

- Generalizes better to new objects and disturbed lighting:

  - With unseen colors or materials, the touch-augmented system still performed well (for example, 60% in herbal transfer where vision-only was 0%).

  - Under lighting changes (which confuse vision), tactile-augmented policies kept working during the contact-heavy parts, especially after the grasp.

  - Why it matters: Touch provides stable cues when vision is unreliable.

Why does this matter? (Implications)

- More reliable robots in the real world: By combining sight and touch, and by learning from human corrections when things go wrong, robots get better at difficult, hands-on tasks that involve feeling their way through—like fitting parts, handling flexible items, or scrubbing.

- Practical and scalable data collection: The dual-mode setup means you can collect high-precision data in the lab and also gather useful data anywhere with a lower-cost, portable setup.

- Faster development: Online checks prevent wasted demos, and recovery data accelerates learning where it matters most—near failures.

- Open-source impact: The team releases the hardware and dataset, so other researchers and students can build on this and push visuo-tactile robot learning further.

- Future possibilities: Home assistance (kitchen chores, tidying), factory assembly, and service robots could benefit, especially where careful touch is essential.

In short, TAMEn shows that teaching robots to “see and feel,” validating demos as they’re collected, and learning from real-time human corrections can dramatically improve performance on tricky, contact-rich, two-handed tasks.

Knowledge Gaps

Below is a concise, actionable list of the paper’s unresolved knowledge gaps, limitations, and open questions that future work could address.

- Hardware generality beyond two-jaw grippers: The interface and adaptation equations are validated only for flexion–extension and parallel-jaw grippers; it is unclear how to extend the configuration mapping to multi-fingered hands, suction, soft/compliant grippers, or grippers with non-symmetric kinematics.

- Empirical validation of “rapid adaptation”: The paper provides a parameterization for cross-gripper adaptation but does not report adaptation time, failure cases, or success rates when deploying to diverse grippers and robots.

- Cross-embodiment tactile domain shift: The system claims handheld visuo-tactile collection and robot execution, but it does not quantify or correct for the domain gap between human-held tactile signals and robot-mounted tactile sensors during training and deployment.

- Sensor-agnostic claims untested: Although the interface is said to support multiple tactile sensors (e.g., GelSight, Xense, DW-Tac, PaXini), experiments use only one sensor; cross-sensor transfer, calibration, and robustness are not evaluated.

- Portable tracking rotation/drift not characterized: VR-based tracking error is reported only as positional error (~1 cm); orientation error, long-horizon drift, and environment-dependent stability (texture-poor scenes, fast motions, occlusions) are not quantified.

- Impact of tracking noise on policy performance: The paper does not analyze how MoCap vs VR tracking errors propagate into learning quality and success rates, or how much portable-mode noise the policy can tolerate before success degrades.

- Online feasibility checker under-specified: The real-time executability screening lacks implementation details (constraints checked, thresholds, latency, false-positive/negative rates), ablations on individual constraints, and quantitative impact across more tasks.

- Human guidance vs “hard filtering”: Online feasibility checks appear to flag/stop infeasible motions but do not proactively guide users (e.g., visualizing reachable regions or speed limits); the potential of predictive, user-in-the-loop guidance is unstudied.

- Safety and failure-handling guarantees: There is no analysis of how the feasibility layer handles near-contact collisions, force/torque limits, or dynamic obstacles during demonstration collection or correction.

- AR tAmeR latency and usability: The paper does not measure AR streaming latency (vision+tactile), its effect on recovery success, or compare AR teleoperation to monitor-based teleoperation in a controlled user study.

- No true haptic feedback: “Tactile feedback” in AR appears visual-only (tactile video), without force/kinesthetic cues; the trade-offs vs vibrotactile/kinesthetic haptics and their impact on correction quality remain unexplored.

- Dual-arm teleoperation ergonomics: The paper does not describe how operators control two arms during recovery (one vs two handhelds), operator cognitive load, or error/fatigue over long sessions.

- Policy action space lacks force/impedance control: Actions consist only of joint commands and continuous gripper commands; the absence of force/impedance control may limit robustness in contact-rich phases, but this is not evaluated.

- Limited task diversity and scale: Only four tasks are tested; tasks involve modest long-horizon complexity. Generalization to more challenging, cluttered, or industrial tasks (tight clearances, deformables with fast dynamics) remains unknown.

- Robustness beyond lighting: Disturbance tests cover lighting changes only; robustness to occlusions, camera motion blur, tactile gel wear/aging, sensor miscalibration, and external mechanical perturbations is not assessed.

- Unseen-object generalization scope: “Unseen” cases are largely color changes and a cable color swap; generalization to shape, material, size, stiffness, and friction variations is not studied.

- Recovery data triggering and taxonomy: Failures are detected by humans; no automatic failure detectors, taxonomy of failure modes, or analysis of which failure types benefit most from online recovery are provided.

- Recovery data quantity–quality trade-off: The paper shows 10% online recovery helps, but does not provide performance curves vs. recovery data volume, or compare interventions at different stages (early vs late) and their marginal utility.

- Pretraining ablations: The multi-positive contrastive tactile pretraining is not compared to alternative representation learning strategies (e.g., masked modeling, sequence models, cross-modal predictive objectives), leaving open which pretraining is most effective.

- Data efficiency and sample complexity: Counts of bimanual demonstrations and recovery trajectories per task are not reported; learning curves and sensitivity to data volume are missing.

- Synchronization and calibration details: Procedures and accuracy for calibrating/aligning the two arms, cameras, and tactile sensors (both in handheld and robot setups) are not described; the effect of miscalibration on replayability and performance is unknown.

- MoCap object-based tracking overhead: Structured marker initialization requires post-processing to establish identities; the setup time, operator burden, and robustness of this procedure across environments/users are not quantified.

- Switching overhead between modes: Practical costs (time, calibration, accuracy loss) of switching between MoCap and VR modes in real workflows are not reported.

- Generalization across robot platforms: All evaluations use a dual-arm JAKA K1; portability to other arms (different kinematics, latencies, control rates) and cross-platform policy transfer are untested.

- Policy/model comparisons: Only ACT-based baselines are used; comparisons to stronger tactile-aware policies (e.g., diffusion policies, world models, residual/compliant controllers) and to teleoperation-collected robot-side datasets are absent.

- Evaluation metrics beyond success rate: No fine-grained metrics (contact stability, slip rate, contact force proxies, alignment error, cycle time) or failure analyses are provided to diagnose where tactile cues help or fail.

- Handling of morphology and physics mismatch: The mapping from handheld to robot ignores differences in compliance, friction, fingertip geometry, and dynamics; the resulting sim-to-real–like biases and their mitigation are not studied.

- Long-term “data flywheel” dynamics: The paper motivates a data flywheel but does not report multi-iteration improvements, convergence behavior, or diminishing returns across repeated collect–train–deploy cycles.
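Several of the gaps above concern how tracking error is measured, in particular that portable tracking is characterized only by positional error. As a rough illustration of what a fuller comparison between two time-aligned pose streams could compute, the sketch below reports both position and orientation error. The function names and the `(w, x, y, z)` unit-quaternion convention are assumptions for this example, not details from the paper.

```python
import math


def position_error(p_ref, p_est):
    """Euclidean distance between two 3D positions (same units as the inputs)."""
    return math.dist(p_ref, p_est)


def orientation_error_deg(q_ref, q_est):
    """Geodesic angle in degrees between two unit quaternions (w, x, y, z)."""
    # abs() because q and -q represent the same rotation.
    dot = abs(sum(a * b for a, b in zip(q_ref, q_est)))
    dot = min(1.0, dot)  # guard against rounding slightly above 1
    return math.degrees(2.0 * math.acos(dot))


def rms(values):
    """Root-mean-square of a list of per-frame errors."""
    return math.sqrt(sum(v * v for v in values) / len(values))


# Hypothetical two-frame example: reference (e.g. MoCap) vs. estimate (e.g. VR).
half = math.radians(5.0)  # half-angle of a 10 degree rotation about z
ref = [((0.0, 0.0, 0.0), (1.0, 0.0, 0.0, 0.0)),
       ((0.1, 0.0, 0.0), (1.0, 0.0, 0.0, 0.0))]
est = [((0.006, 0.008, 0.0), (1.0, 0.0, 0.0, 0.0)),
       ((0.1, 0.0, 0.0), (math.cos(half), 0.0, 0.0, math.sin(half)))]

pos_errs = [position_error(r[0], e[0]) for r, e in zip(ref, est)]
rot_errs = [orientation_error_deg(r[1], e[1]) for r, e in zip(ref, est)]
print("position RMS (m):", rms(pos_errs))
print("orientation RMS (deg):", rms(rot_errs))
```

Long-horizon drift could be examined with the same per-frame errors by plotting them against time instead of collapsing them into a single RMS value.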

Practical Applications

Immediate Applications

Below is a concise set of deployable use cases that leverage TAMEn’s hardware, dual-mode tracking, feasibility-aware collection, AR teleoperation, and pyramid-structured learning. Each item notes sector links, potential tools/workflows, and key dependencies.

- Precision “teach-and-validate” for bimanual assembly (e.g., cable seating, clip operations)

  - Sectors: manufacturing (electronics, appliances), automotive

  - What: Use MoCap mode to capture sub-mm accurate bimanual demonstrations with online executability checks to reduce post-hoc data cleaning and replay failures.

  - Tools/workflows: Feasibility Validator SDK; “TAMEn Collector (MoCap mode)” + replay dashboard; nightly train-and-deploy loop

  - Dependencies/assumptions: Accurate robot kinematics/limits; calibrated MoCap environment; collision checks if required; two-arm or two-EOAT setup

- Portable, in-the-wild data capture and recovery for field cells

  - Sectors: logistics/fulfillment (bin picking of deformables, cable routing), services (store ops), maintenance

  - What: Use VR mode to collect demonstrations at workcells without base stations; switch to AR tAmeR for on-the-spot recovery data during policy runs.

  - Tools/workflows: “TAMEn Portable” kit (≈$700 dual-arm); tAmeR AR console; data ingestion to pyramid

  - Dependencies/assumptions: Acceptable VR drift (≈≤1 cm); Wi‑Fi/5G latency within teleop tolerance; operator safety protocols

- Recovery-on-demand via AR teleoperation (reduced downtime)

  - Sectors: manufacturing, warehousing, robotics operations (RaaS)

  - What: Human operators intervene only at failure-prone states, streaming wrist RGB + tactile to AR to guide recovery; corrected episodes feed DAgger-style updates.

  - Tools/workflows: tAmeR AR console; “Recovery Library” dataset; on-policy DAgger job in MLOps

  - Dependencies/assumptions: Low-latency streaming; safe teleop gates; role-based access to live robots

- Rapid adaptation across gripper morphologies (lower retooling cost)

  - Sectors: integrators, OEMs, robotics startups

  - What: Map target gripper kinematics to TAMEn’s standardized handheld mechanism via a small set of geometric parameters (flexion–extension or parallel-jaw).

  - Tools/workflows: “Gripper Configurator” CAD param tool; jig library; printable adapters

  - Dependencies/assumptions: Basic CAD/printing; alignment calibration; consistent linkage tolerances

- Data flywheel for contact-rich tasks (continuous improvement)

  - Sectors: robotics software, MLOps

  - What: Organize data into the pyramid (pretrain priors → task demos → online recovery) to boost success rates (shown 34%→75%).

  - Tools/workflows: “Pyramid Orchestrator” for dataset versioning; tactile encoder checkpoints; DAgger scheduling

  - Dependencies/assumptions: Compute for pretraining/fine-tuning; data governance; reproducible builds

- Deformable object handling in packaging/kitting

  - Sectors: logistics, e-commerce fulfillment, CPG

  - What: Teach manipulation of bags, cables, foam inserts, flexible wraps using tactile cues for slip/seat detection.

  - Tools/workflows: Task packs (prebuilt skill graphs: grasp → route → seat → verify with tactile)

  - Dependencies/assumptions: Stable tactile sensor skins; task-specific jigs/fixtures

- Delicate contact QA (slip/overload detection)

  - Sectors: manufacturing quality control

  - What: Use fingertip visuo-tactile signals to detect incipient slip, overforce, or mis-seating during assembly/inspection.

  - Tools/workflows: “Tactile Sentinels” monitoring plugin; rule-based alarms + policy fallback

  - Dependencies/assumptions: Tactile calibration; thresholds tuned per SKU; safe stop procedures

- Academic visuo-tactile benchmarks and dataset curation

  - Sectors: academia, corporate research

  - What: Reproduce and extend TAMEn tasks; contribute new visuo-tactile datasets; evaluate pretraining and DAgger variants.

  - Tools/workflows: Open hardware files; FreeTacMan pretraining; standardized task suites; code for feasibility checks

  - Dependencies/assumptions: Lab robots/cameras; dataset licenses; student operator training

- Educational kits for contact-rich bimanual skills

  - Sectors: education (STEM programs, maker spaces)

  - What: Low-cost VR-mode handheld collectors to teach skills like drawer operation, cable routing, wiping.

  - Tools/workflows: Lesson plans; sandbox tasks; “Skill Replay” app

  - Dependencies/assumptions: Safety training; simplified robots or cobots with power/force limits

- Service robotics pilots (e.g., dishwashing, surface wiping)

  - Sectors: food service, hospitality, facilities

  - What: Prototype contact-heavy cleaning tasks with tactile-aware policies and AR-assisted recovery to handle mess and occlusion.

  - Tools/workflows: Cleaning task pack; tAmeR intervention SOP; performance dashboards (success, intervention rate)

  - Dependencies/assumptions: Wet-contact-capable sensor skins; hygiene standards; splash-safe enclosures
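Several of the items above rely on DAgger-style updates, where the learner acts, an expert labels the states the learner actually visited, and the policy is retrained on the growing dataset. The toy sketch below illustrates that loop on a one-dimensional problem. Everything in it is a stand-in: `expert_action` replaces the human operator, the policy is a single scalar gain, and none of it reflects the paper's actual training code.

```python
def expert_action(x):
    """Stand-in for the human operator: push the state toward zero."""
    return -0.5 * x


def rollout(w, x0, steps=5):
    """Run the linear policy a = w * x and return the states it visits."""
    states, x = [], x0
    for _ in range(steps):
        states.append(x)
        x = x + w * x  # toy dynamics: next state = state + action
    return states


def fit(dataset):
    """Least-squares fit of a = w * x over all (state, action) pairs."""
    num = sum(x * a for x, a in dataset)
    den = sum(x * x for x, _ in dataset)
    return num / den


def dagger(x0=1.0, iterations=3):
    dataset = []
    w = 0.0  # untrained policy: takes no action
    for _ in range(iterations):
        visited = rollout(w, x0)                             # learner acts
        dataset += [(x, expert_action(x)) for x in visited]  # expert relabels
        w = fit(dataset)                                     # retrain on the aggregate
    return w, len(dataset)


w, n = dagger()
print("learned gain:", w, "from", n, "labelled states")
```

The key property is that labels are collected on states produced by the learner's own behaviour, which is what distinguishes on-the-spot recovery data from additional offline demonstrations.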

Long-Term Applications

These applications require further research, scaling, standardization, or regulatory clearance, but are naturally enabled by TAMEn’s methods and results.

- General-purpose bimanual home assistant with tactile competence

  - Sectors: consumer robotics

  - What: Household chores (laundry folding, tidying, food prep) that demand nuanced, deformable and contact-rich control plus intermittent human recovery via AR.

  - Dependencies/assumptions: Cost reduction of dual-arm hardware; robust tactile skins; long-horizon skill libraries; home-safe autonomy

- Fleet-scale closed-loop improvement (factory/warehouse)

  - Sectors: manufacturing, logistics

  - What: Central “intervention desk” that oversees many cells; human operators provide brief tAmeR recoveries; updates roll into shared data pyramids and models.

  - Tools/workflows: Multi-site MLOps; KPI loops (intervention rate, MTTR); privacy-preserving data sync

  - Dependencies/assumptions: Network QoS; standardized robot APIs; site-level data governance

- Tactile foundation models for manipulation

  - Sectors: software/AI, robotics platforms

  - What: Pretrain cross-domain visuo-tactile encoders on millions of contact episodes for plug-and-play fine-tuning on new tasks/robots.

  - Tools/workflows: Model hub for tactile encoders; adapters for gripper types; continual pretraining pipelines

  - Dependencies/assumptions: Large shared datasets; cross-sensor/domain alignment; evaluation standards

- Cross-embodiment, gripper-agnostic policy transfer

  - Sectors: integrators, OEMs

  - What: Configuration-level mapping enabling near–zero-shot transfer of bimanual skills across grippers/arms with minimal re-collection.

  - Dependencies/assumptions: Unified kinematic/IO abstraction; calibration automation; domain randomization for contact

- Assistive-care bimanual robots (dressing, feeding)

  - Sectors: healthcare, eldercare

  - What: Safe manipulation of people and clothing/utensils using tactile cues for force limitation and slip detection, with clinician AR oversight.

  - Dependencies/assumptions: Medical-grade safety; compliance and liability frameworks; validated human-contact tactile sensing

- Surgical training and teleoperation with tactile feedback

  - Sectors: surgical robotics

  - What: Collect expert demonstrations and perform AR-guided micro-recoveries during robot-assisted procedures; build tactile pretraining corpora for tissue interaction.

  - Dependencies/assumptions: Sterilizable sensors; sub-mm force/texture fidelity; regulatory approval; integration with surgical platforms

- Agricultural handling of delicate produce and plants

  - Sectors: agriculture/agrifood

  - What: Tactile-aware grasp, pick, place, and packing for soft produce; AR recovery when occlusion or visual ambiguity arises.

  - Dependencies/assumptions: Field-ready ruggedization; weather/lighting robustness; seasonal generalization

- Energy/infrastructure maintenance in low-visibility spaces

  - Sectors: energy, utilities

  - What: Cable/fuse seating, gasket alignment, valve turning inside cabinets, conduits, or dim vaults where vision is unreliable but tactile cues are informative.

  - Dependencies/assumptions: Explosion-proof or IP-rated hardware; remote AR links; task-specific safety certifications

- Standardized tactile end-effector ecosystem

  - Sectors: robotics hardware, supply chain

  - What: Interoperable fingertip modules and calibration standards so policies and pretraining can transfer across vendors.

  - Dependencies/assumptions: Industry consortium; sensor interface standards; certification labs

- Policy and regulatory frameworks for tactile data and AR teleop

  - Sectors: policy/regulation, EHS

  - What: Standards for safe human-in-the-loop teleoperation, tactile data handling (privacy, IP), and cyber-physical security for closed-loop learning.

  - Dependencies/assumptions: Multi-stakeholder governance; incident reporting norms; compliance tooling

Notes on Feasibility and Assumptions Across Applications

- Hardware availability: Dual-arm platforms and grippers with room for fingertip visuo-tactile sensors; moisture/temperature constraints for cleaning or outdoor tasks.

- Sensing quality: Tactile calibration, durability, and replacement logistics; visual occlusions mitigated by wrist fisheyes; lighting variability.

- Tracking trade-offs: MoCap requires instrumented spaces; VR portable mode has small drift; both need periodic calibration.

- Online feasibility coverage: IK/limits/velocity checks are included; application-specific collision checking and process constraints may need to be added.

- Human factors: Operator training for handheld collection and AR teleop; ergonomic considerations; SOPs for safe intervention.

- Data/compute: Storage and governance of multimodal data; GPU time for pretraining/fine-tuning; reproducible MLOps.

- Integration: Robot controller APIs, timing/latency guarantees, and safety interlocks; facility IT for AR streaming and edge inference.

These applications build directly on TAMEn’s demonstrated gains in replayability, robust visuo-tactile learning, and closed-loop recovery, while clarifying the sector pathways, productizable tools, and dependencies needed to move from pilots to scale.

Glossary

- ACT: Action Chunking with Transformers; a learned visuomotor policy architecture that predicts chunks (short sequences) of future actions for manipulation from sensory inputs. "The downstream ACT policy is trained on task-specific bimanual demonstrations with a supervised action loss:"

- AR-based teleoperation: Remote robot control using augmented reality interfaces to overlay sensor feedback and controls. "AR-based teleoperation with tactile feedback (tAmeR)"

- bimanual manipulation: Robotic manipulation that uses two arms or grippers in coordinated tasks. "contact-rich bimanual manipulation"

- contrastive objective: A representation learning loss that pulls matched pairs together and pushes mismatched pairs apart in embedding space. "using a contrastive objective."

- crank–slider mechanism: A linkage that converts rotary motion into linear motion (or vice versa), used to actuate grippers. "A crank–slider mechanism is commonly used in collection interfaces for this gripper type"

- data flywheel: A closed-loop process where data collection and model improvement reinforce each other iteratively. "closed-loop data flywheel"

- DAgger: Dataset Aggregation; an imitation learning algorithm that iteratively collects expert corrections on the learner’s states. "a DAgger-style update loop"

- deformable object manipulation: Robotic handling of objects that can bend, stretch, or deform. "deformable object manipulation (herbal transfer)"

- DoF: Degrees of Freedom; the number of independent parameters defining a system’s configuration. "a continuous 16-DoF action space"

- end-effector: The tool or gripper at the end of a robot arm that interacts with objects. "two end-effectors must coordinate"

- executability: The ability of a demonstration or trajectory to be feasibly executed by a robot under its constraints. "checked online for executability."

- feasibility validation: Real-time checking that demonstrated motions satisfy robot kinematic and safety constraints. "Feasibility validation for robot execution."

- flexion–extension gripper: A gripper type whose closing motion follows a flexion–extension path, often mimicking finger motion. "Flexion–extension gripper."

- gripper morphologies: The structural and kinematic forms of different gripper designs. "Interface adaptation across gripper morphologies."

- human-in-the-loop: A setup where human feedback or intervention is incorporated during data collection or policy execution. "human-in-the-loop recovery data"

- incipient slip: The onset of slipping at a contact interface before full slip occurs, detectable via tactile sensing. "incipient slip"

- inverse kinematics: Computing joint configurations that achieve a desired end-effector pose. "violate inverse kinematics, joint-limit, workspace, or motion constraints"

- inverse-solution failure: Failure to find a valid inverse kinematics solution for a desired pose under constraints. "including inverse-solution failure, soft-limit violation, overspeed motion, and runtime communication anomalies."

- joint-limit: The maximum or minimum allowable angles/positions for robot joints. "violate inverse kinematics, joint-limit, workspace, or motion constraints"

- marker occlusion: Loss of visibility of motion-capture markers due to obstruction, causing tracking issues. "marker occlusion frequently arises"

- MoCap: Motion capture; a system that tracks 3D positions/poses using reflective markers and cameras. "fast switches between MoCap and VR-based tracking."

- motion capture: Optical tracking of objects via markers to obtain precise pose trajectories. "In the motion-capture mode, the interface is tracked by the NOKOV system"

- object-based tracking: Pose tracking that exploits the known structure of a multi-marker object to improve robustness. "Object-based tracking can improve robustness"

- parallel-jaw gripper: A gripper with two jaws that move in parallel to open and close symmetrically. "Parallel-jaw gripper."

- pyramid-structured data regime: A multi-layer data organization that progresses from broad pretraining data to task-specific and recovery data. "pyramid-structured data regime"

- reachability: Whether a target pose lies within the robot’s kinematic workspace. "Geometric reachability alone does not guarantee stable execution."

- replayability: The likelihood that recorded demonstrations can be faithfully executed by the robot. "poor replayability."

- SLAM: Simultaneous Localization and Mapping; estimating a sensor’s pose while building a map of the environment. "SLAM-based methods"

- structured marker object: A predefined, rigid arrangement of motion-capture markers with known topology for robust tracking. "structured marker object"

- tactile pretraining: Pretraining representations using large-scale tactile (and often aligned visual) data before task-specific learning. "large-scale tactile pretraining"

- teleoperation: Direct human control of a robot, often remotely, using mapped inputs. "teleoperation systems rely primarily on visual feedback"

- tAmeR: The paper’s AR-based teleoperation system that streams visual and tactile feedback for recovery data collection. "tAmeR, our AR-based teleoperation system"

- visuo-tactile: Combining visual and tactile sensing/modalities for perception and control. "visuo-tactile learning framework"

- VR-based tracking: Using virtual reality controllers to track handheld devices or interfaces without an instrumented room. "VR-based tracking"

- workspace: The spatial region reachable by the robot’s end-effector given its kinematics and limits. "violate inverse kinematics, joint-limit, workspace, or motion constraints"
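To illustrate the "contrastive objective" entry above, here is a minimal sketch of a standard InfoNCE-style loss, in which each anchor embedding is pulled toward its matched embedding and pushed away from the others in the batch. This is a generic textbook form written in plain Python for readability. It is not the paper's multi-positive variant, and the function names and the temperature value are assumptions for this example.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchors, positives, temperature=0.1):
    """Mean InfoNCE loss; positives[i] matches anchors[i], all others act as negatives."""
    total = 0.0
    for i, a in enumerate(anchors):
        logits = [cosine(a, p) / temperature for p in positives]
        # Numerically stable log-sum-exp over all candidates.
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(l - m) for l in logits))
        # Negative log-probability of picking the matched pair.
        total += log_denom - logits[i]
    return total / len(anchors)


# Hypothetical 2D embeddings: e.g. tactile anchors vs. visual candidates.
tactile = [[1.0, 0.0], [0.0, 1.0]]
aligned = info_nce(tactile, [[1.0, 0.0], [0.0, 1.0]])
shuffled = info_nce(tactile, [[0.0, 1.0], [1.0, 0.0]])
print("aligned pairs loss:", aligned)
print("mismatched pairs loss:", shuffled)
```

The loss is near zero when matched pairs are the most similar in the batch and grows when they are not, which is the pressure that shapes the embedding space during pretraining.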
