- The paper presents a hierarchical, solver-agnostic multi-agent framework that autonomously transforms perceptual data into engineering reports.

- It employs iterative quality gates and uncertainty quantification to ensure robust numerical simulation and compliant design assessments.

- The system achieves significant efficiency gains: the demonstrated image-to-report pipeline runs in 22 minutes, whereas comparable manual workflows require multiple days of engineering analysis.

Autonomous Multi-Agent Framework for Solver-Agnostic Computational Mechanics

Framework Architecture and Agent Design

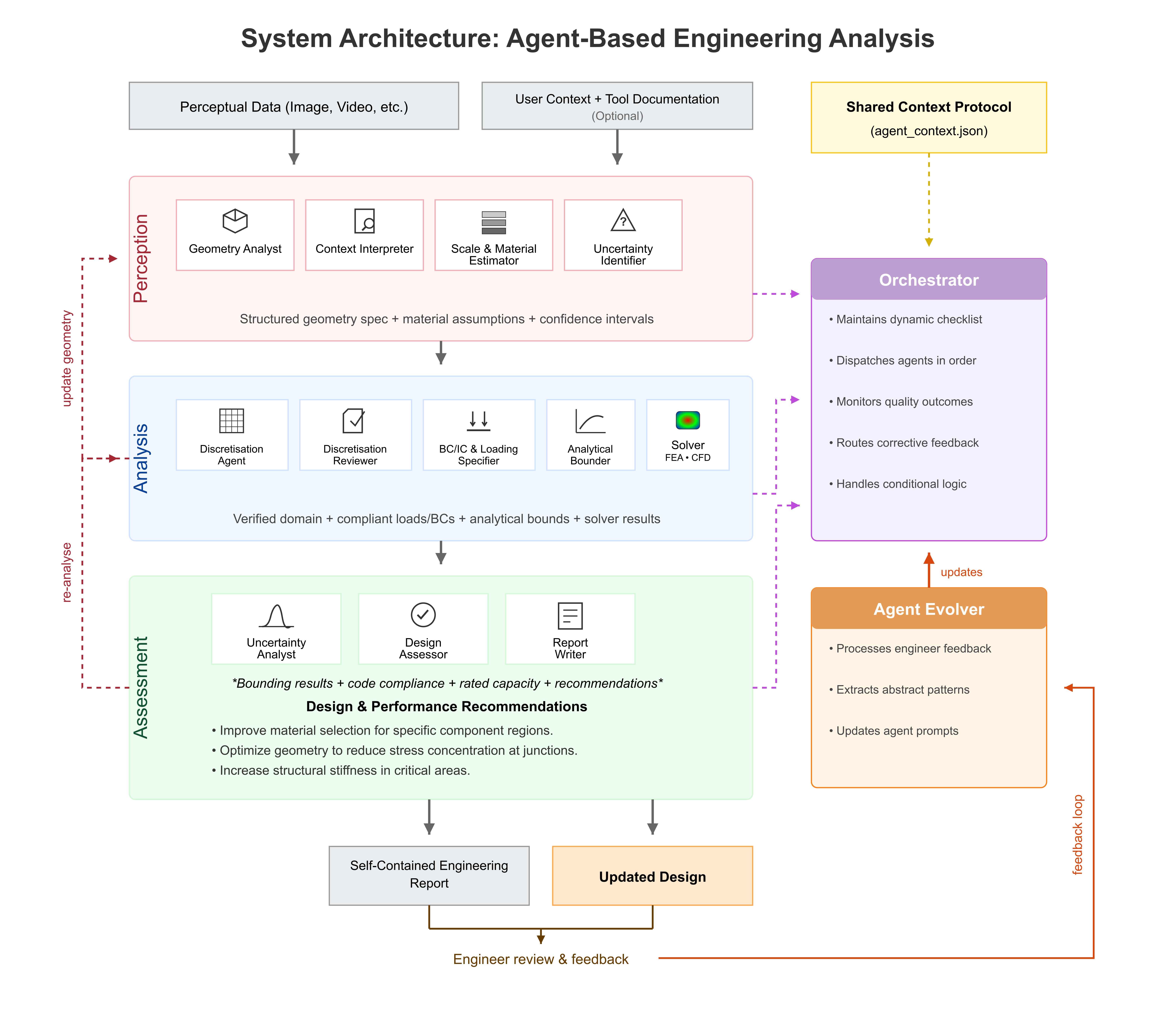

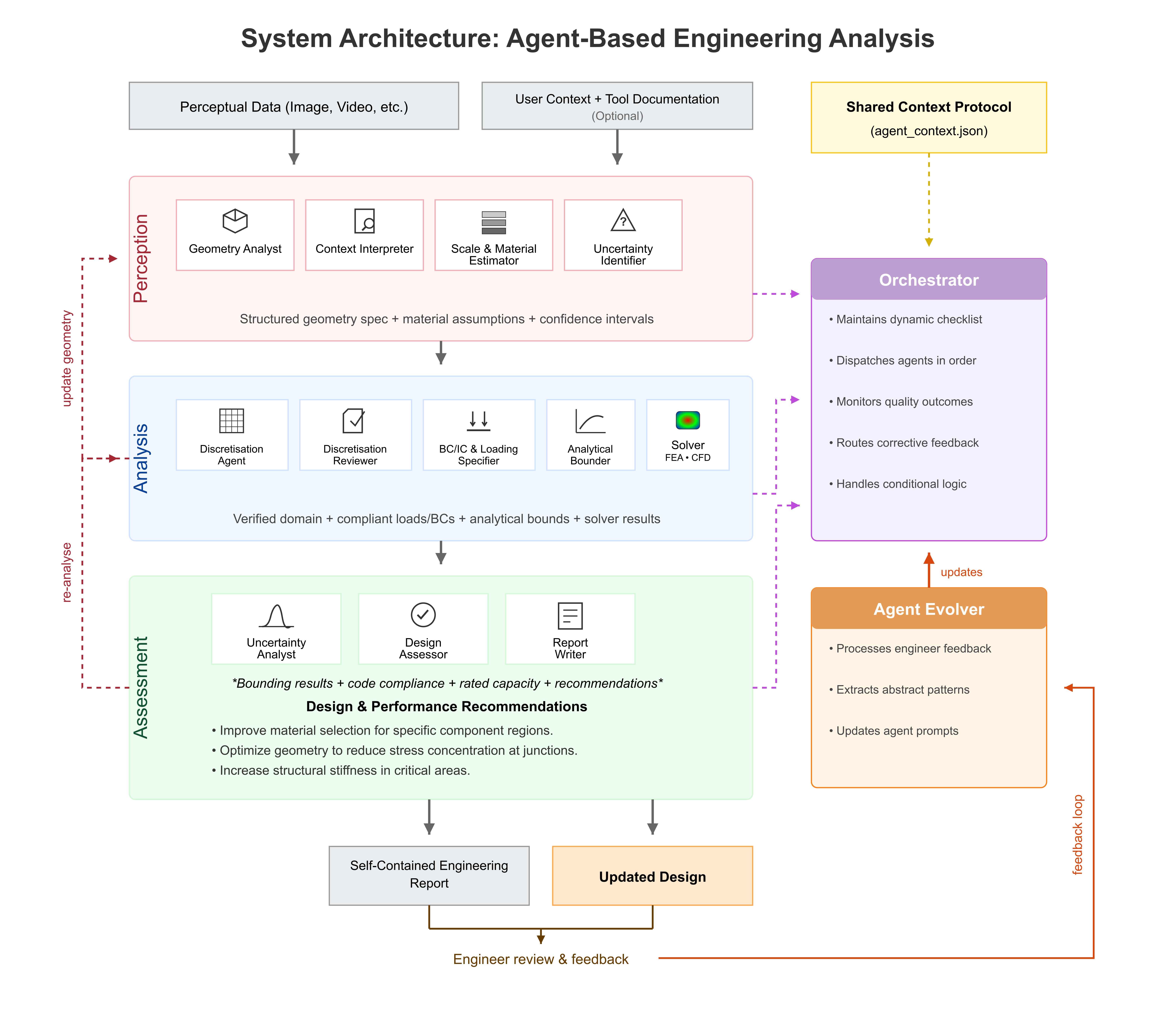

The paper presents a hierarchical, solver-agnostic multi-agent architecture that uses LLMs to realize fully autonomous computational mechanics pipelines, from perceptual data to code-compliant engineering reports. The core system comprises three iterative processing layers (perception, analysis, and assessment), coordinated by a meta-level orchestrator agent. The orchestrator maintains a dynamic execution checklist, selects specialized LLM-driven agents, enforces cross-layer quality gates, and mediates iterative feedback. Each agent is characterized by a scope definition, an allowable toolset, an internal critic protocol, and a persistent memory for learning from prior corrections.

Figure 1: Three-layer multi-agent architecture orchestrated for iterative engineering analysis, with feedback and agent evolution via human-in-the-loop corrections.
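
The orchestration loop described above can be sketched roughly as follows. This is a minimal illustration, not the paper's implementation: the `Agent` record, the `orchestrate` function, the retry limit, and the toy perception agent are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent record mirroring the four attributes named in the text."""
    name: str
    scope: str                       # what the agent is responsible for
    tools: tuple                     # allowable toolset (names only in this sketch)
    run: Callable[[dict], dict]      # produces an updated context
    critic: Callable[[dict], list]   # internal critic: list of issues, empty means pass
    memory: list = field(default_factory=list)  # record of past corrections


def orchestrate(agents, context, max_retries=2):
    """Run agents in order, retrying each until its critic passes (a quality gate)."""
    checklist = {agent.name: "pending" for agent in agents}
    for agent in agents:
        for _ in range(max_retries + 1):
            context = agent.run(context)
            issues = agent.critic(context)
            if not issues:
                checklist[agent.name] = "passed"
                break
            agent.memory.extend(issues)  # keep the critique for later attempts
        else:
            checklist[agent.name] = "failed"
            break  # stop here; downstream layers depend on this one
    return {**context, "checklist": checklist}


# Toy perception agent: the first attempt omits thickness, the retry supplies it.
def perceive(ctx):
    attempt = ctx.get("attempts", 0) + 1
    geometry = {"arm_length_mm": 100.0}  # illustrative value only
    if attempt > 1:
        geometry["thickness_mm"] = 5.0   # illustrative value only
    return {**ctx, "attempts": attempt, "geometry": geometry}


def perception_critic(ctx):
    return [] if "thickness_mm" in ctx["geometry"] else ["thickness missing"]


perception = Agent("perception", "geometry inference", ("vlm",),
                   perceive, perception_critic)
pipeline_result = orchestrate([perception], {})
print(pipeline_result["checklist"])  # {'perception': 'passed'}
```

In this sketch a failed gate halts the pipeline and leaves later agents marked as pending, which is one plausible reading of "cross-layer quality gates"; the paper may handle failures differently.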

Unlike prior work limited to specific modeling components or text-structured input, this framework automates the entire workflow: from unstructured perceptual data (images, videos) through geometry and material inference, discretization, physics solver execution, uncertainty quantification, and design code assessment. Agents exchange strictly structured data through a JSON context schema, minimizing loss or ambiguity in complex multi-step pipelines.
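
To illustrate what a structured JSON hand-off between agents might look like, the sketch below validates a message before it is accepted as context. The field names (`geometry`, `material`, `producer`) and the `validate_context` helper are assumptions made for this example; the paper's actual schema is not reproduced here.

```python
import json

# Hypothetical required fields and their expected JSON types.
REQUIRED_FIELDS = {"geometry": dict, "material": dict, "producer": str}


def validate_context(payload):
    """Parse a JSON message and reject it if a required field is missing or mistyped."""
    ctx = json.loads(payload)
    if not isinstance(ctx, dict):
        raise ValueError("context must be a JSON object")
    for key, expected in REQUIRED_FIELDS.items():
        if key not in ctx:
            raise ValueError(f"missing field: {key}")
        if not isinstance(ctx[key], expected):
            raise ValueError(f"field {key!r} must be of type {expected.__name__}")
    return ctx


# Illustrative message from a geometry agent to the analysis layer.
message = json.dumps({
    "producer": "geometry_analyst",
    "geometry": {"arm_length_mm": {"value": 100.0, "band": [90.0, 110.0]}},
    "material": {"class": "steel"},
})
context = validate_context(message)
print(context["material"]["class"])  # steel
```

Rejecting malformed messages at the boundary keeps an error in one agent from silently propagating through later steps.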

The perception layer is responsible for inferring geometry, materials, and contextual parameters directly from perceptual data, predominantly images. Multiple processing pathways—including direct VLM interpretation, CAD script synthesis, feature-based edge extraction, and (multi-view) point cloud reconstruction—are selected according to input modality and data quality.

In the demonstration, the geometry analyst agent infers principal L-bracket dimensions, hole locations, and material class from a single photograph using proportional reasoning and reference matching. It assigns explicit uncertainty bands (non-probabilistic plausibility tags) to all extracted dimensions, reflecting the agent's self-assessed confidence in the absence of empirical calibration. Notably, several key parameters, such as arm length and thickness, exhibit substantial residuals versus physical measurements, which underscores the necessity of professional engineering review.

Figure 2: Input photograph with contextual cues guiding perception-layer feature extraction for geometry and material inference.
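
A dimension estimate with a plausibility band, and its residual against a physical measurement, could be represented as in the sketch below. The `Estimate` class and all numbers are hypothetical; they are not the values reported in the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    """Hypothetical inferred dimension with a non-probabilistic plausibility band."""
    name: str
    nominal: float
    low: float
    high: float

    def relative_residual(self, measured):
        """Signed error of the nominal value relative to the measurement."""
        return (self.nominal - measured) / measured

    def covers(self, measured):
        """True if the measurement falls inside the stated band."""
        return self.low <= measured <= self.high


# Illustrative numbers only: the agent underestimates thickness.
thickness = Estimate("thickness_mm", nominal=4.0, low=3.0, high=5.0)
measured_thickness = 6.0
print(thickness.covers(measured_thickness))  # False
print(round(thickness.relative_residual(measured_thickness), 3))  # -0.333
```

A measurement that falls outside the band, as in this toy case, is the kind of discrepancy that would be flagged for engineering review.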

Layered Analysis and Quality Gates

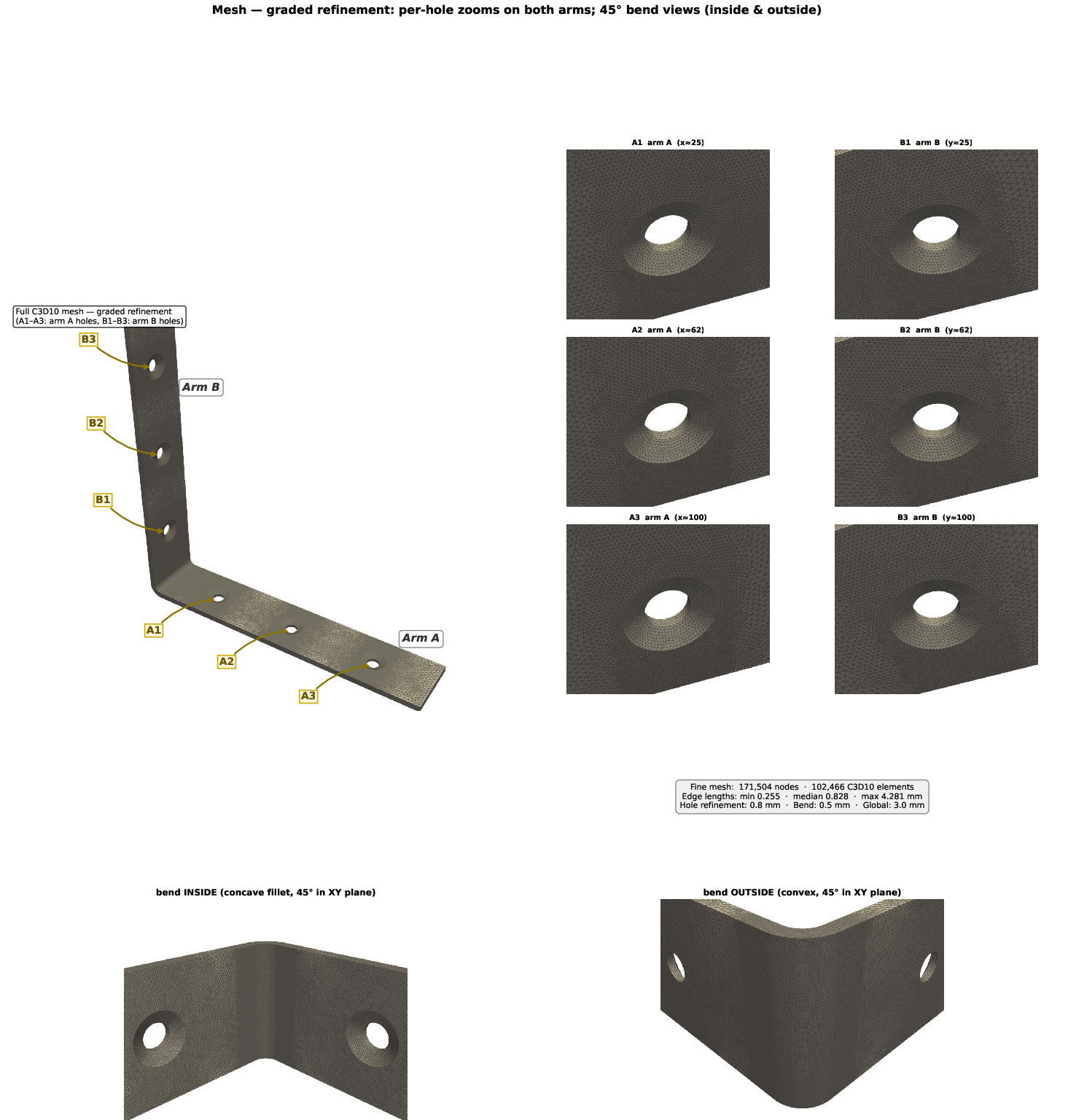

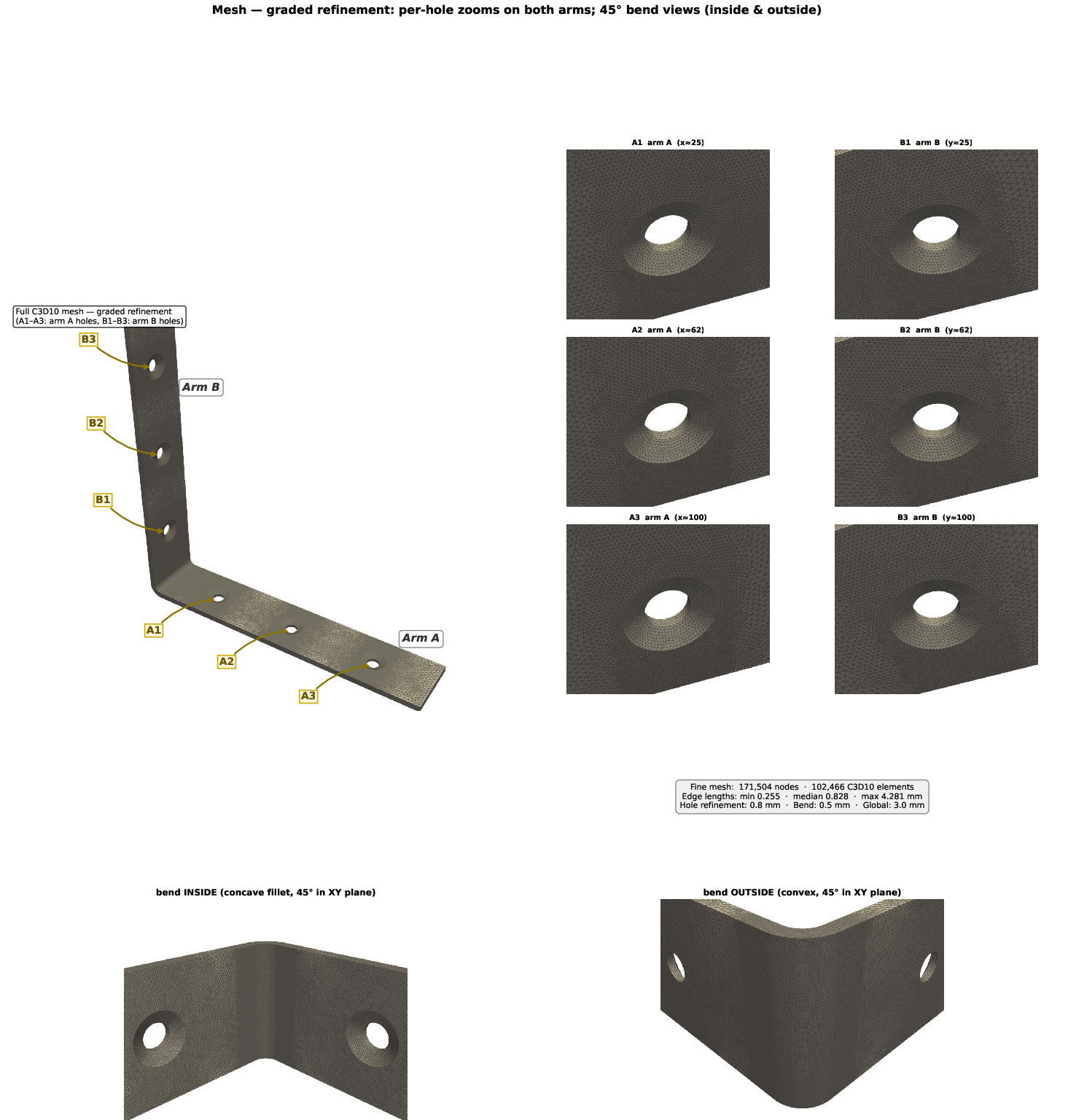

Upon geometric and material inference, the analysis layer executes the full computational workflow. Discretization agents construct and refine FE meshes using hierarchical feature-driven criteria, with mesh review agents performing topology and connectivity audits as part of Gate 1.

Figure 3: Mesh generation and autonomous feature verification ensuring topological validity and refinement around geometric hot-spots.
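
A topology and connectivity audit of the kind attributed to Gate 1 might include checks like those sketched below for a tetrahedral mesh. The specific checks, the `audit_mesh` function, and the tolerance are assumptions for illustration; the paper's gate criteria may differ. Connectivity here is tested through shared nodes, which is weaker than requiring shared faces.

```python
def signed_volume(p0, p1, p2, p3):
    """Signed volume of a tetrahedron; positive for right-handed node ordering."""
    a = [p1[i] - p0[i] for i in range(3)]
    b = [p2[i] - p0[i] for i in range(3)]
    c = [p3[i] - p0[i] for i in range(3)]
    det = (a[0] * (b[1] * c[2] - b[2] * c[1])
           - a[1] * (b[0] * c[2] - b[2] * c[0])
           + a[2] * (b[0] * c[1] - b[1] * c[0]))
    return det / 6.0


def audit_mesh(nodes, tets, tol=1e-12):
    """Return a list of issues; an empty list means the audit passed."""
    issues = []
    parent = list(range(len(nodes)))  # union-find over node indices

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path compression
            i = parent[i]
        return i

    used = set()
    for e, tet in enumerate(tets):
        if any(n < 0 or n >= len(nodes) for n in tet):
            issues.append(f"element {e}: node index out of range")
            continue
        if len(set(tet)) != 4:
            issues.append(f"element {e}: repeated node")
            continue
        if signed_volume(*(nodes[n] for n in tet)) <= tol:
            issues.append(f"element {e}: degenerate or inverted")
        used.update(tet)
        for n in tet[1:]:
            parent[find(n)] = find(tet[0])  # merge nodes of the same element

    orphans = sorted(set(range(len(nodes))) - used)
    if orphans:
        issues.append(f"orphan nodes: {orphans}")
    if len({find(n) for n in used}) > 1:
        issues.append("mesh has more than one connected component")
    return issues


# Two tetrahedra sharing a face: a valid toy mesh.
mesh_nodes = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1), (1, 1, 1)]
mesh_tets = [(0, 1, 2, 3), (1, 2, 3, 4)]
print(audit_mesh(mesh_nodes, mesh_tets))  # []
```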

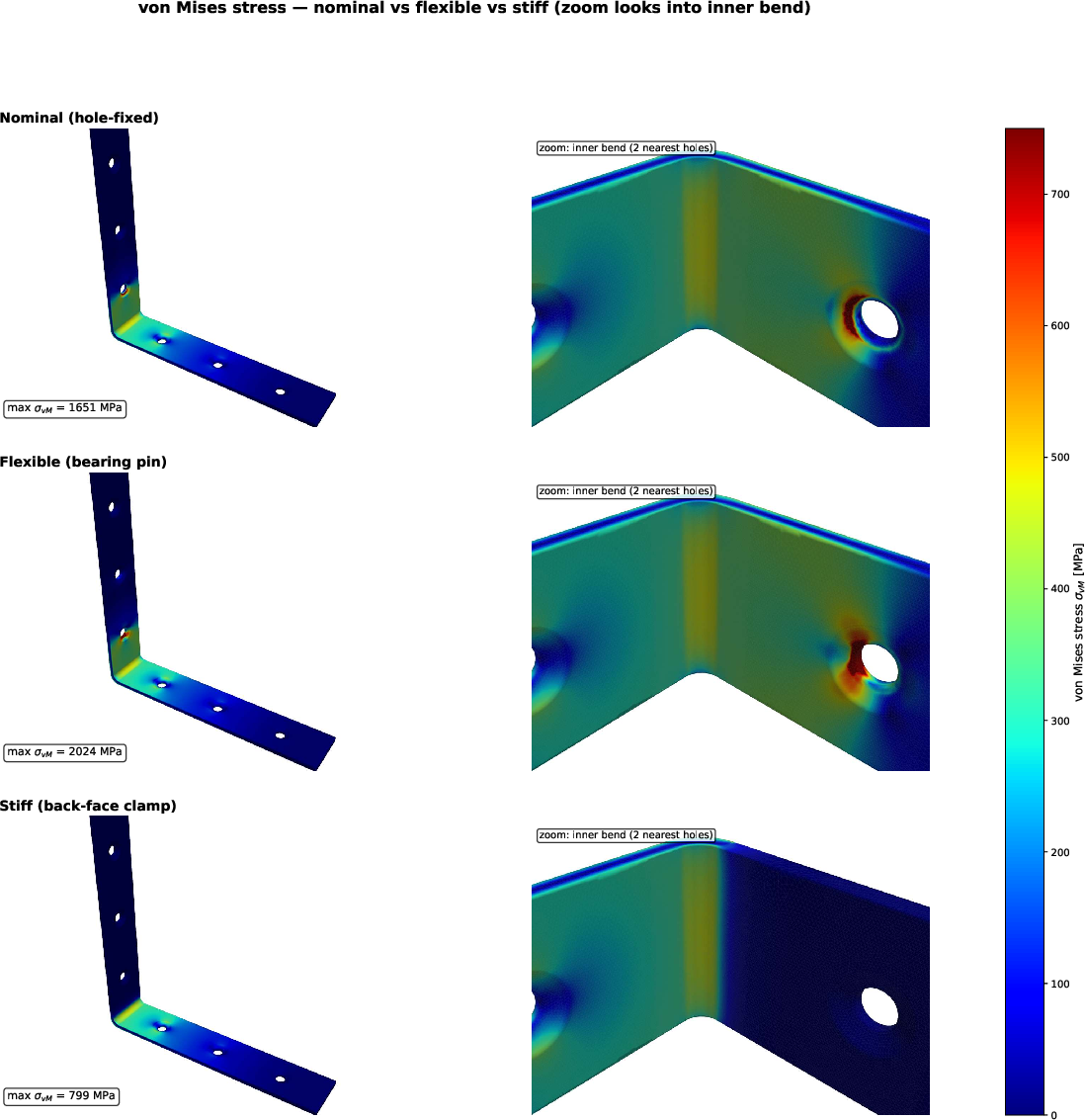

Loading, boundary conditions, and the applicable design code are inferred from context and regulatory cues; multiple BC hypotheses capture physical modeling uncertainty. Analytical bounds on stresses and deflections provide reference checks for the numerical simulation results.
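
As an example of such a reference check, the sketch below uses Euler-Bernoulli theory for a tip-loaded cantilever with a rectangular cross-section. Treating one bracket arm as a cantilever, the input values, and the factor-of-two acceptance band are all assumptions made for illustration, not the paper's stated procedure.

```python
def cantilever_reference(force, length, width, thickness, youngs_modulus):
    """Peak bending stress and tip deflection of a tip-loaded cantilever (SI units)."""
    inertia = width * thickness**3 / 12.0                 # second moment of area
    stress = force * length * (thickness / 2.0) / inertia  # sigma = M c / I
    deflection = force * length**3 / (3.0 * youngs_modulus * inertia)
    return stress, deflection


def within_band(fe_value, reference, factor=2.0):
    """Accept an FE result that lies within a multiplicative band of the reference."""
    return reference / factor <= fe_value <= reference * factor


# Illustrative inputs: 100 N tip load, 100 mm arm, 30 mm x 5 mm section, E = 200 GPa.
ref_stress, ref_deflection = cantilever_reference(100.0, 0.1, 0.03, 0.005, 200e9)
print(f"{ref_stress / 1e6:.1f} MPa, {ref_deflection * 1e3:.3f} mm")  # 80.0 MPa, 0.533 mm
print(within_band(95e6, ref_stress))  # True
```

A band rather than an exact match is used because stress concentrations at holes and fillets are expected to push FE peak stresses above the beam-theory value.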

The pipeline leverages solver-agnostic mathematics, with extensibility to FEM, DEM, SPH, MPM, FVM, and peridynamics. The orchestrator dynamically adjusts agent instantiations and workflow according to the problem domain, with only solver interfaces/agents requiring specialization.
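
One way to confine solver-specific logic to interface agents is a common adapter contract, sketched below. The `Solver` protocol, the registry, and the single-spring `SpringFEM` stand-in are hypothetical and intended only to show the shape of such an interface.

```python
from typing import Protocol


class Solver(Protocol):
    """Hypothetical minimal contract that every solver adapter would satisfy."""
    method: str

    def solve(self, model: dict) -> dict: ...


class SpringFEM:
    """Toy stand-in for an FEM adapter: a single linear spring, u = F / k."""
    method = "FEM"

    def solve(self, model):
        return {"displacement": model["load"] / model["stiffness"]}


REGISTRY = {}


def register(solver):
    """Make an adapter available to the orchestrator under its method name."""
    REGISTRY[solver.method] = solver


def run(method, model):
    """Dispatch a model to whichever adapter is registered for the method."""
    if method not in REGISTRY:
        raise ValueError(f"no solver adapter registered for {method}")
    return REGISTRY[method].solve(model)


register(SpringFEM())
solver_output = run("FEM", {"load": 10.0, "stiffness": 2.0})
print(solver_output)  # {'displacement': 5.0}
```

Under this arrangement, supporting another method such as SPH or MPM would mean adding an adapter with the same `solve` signature, leaving the rest of the pipeline unchanged.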

Autonomous Simulation Results and Quality Assessment

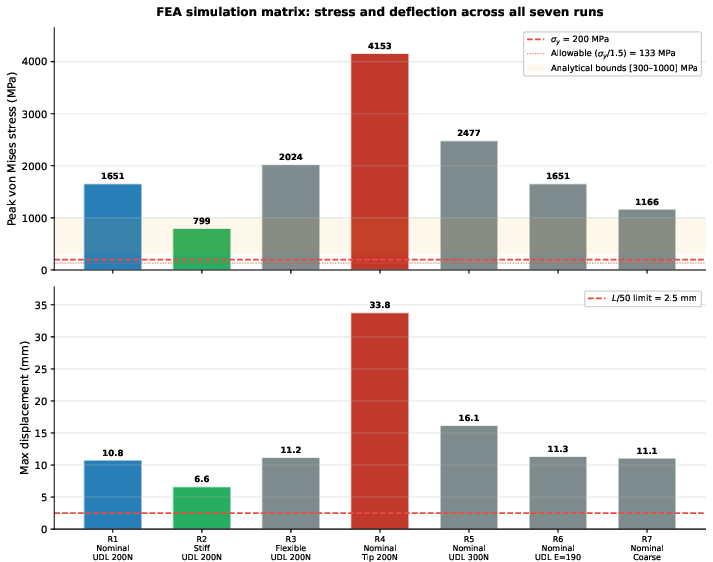

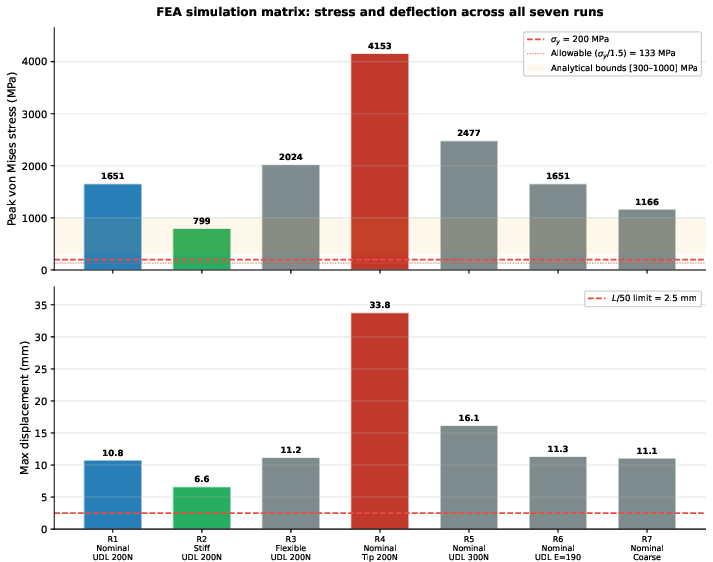

Applying the framework to a steel L-bracket, agents autonomously generate a 171k-node tetrahedral mesh, set up seven FEA analyses (covering three BCs and four sensitivity variants), and assess results against code-driven design limits. The full end-to-end pipeline—from image to report—executes in 22 minutes at an LLM API cost of approximately $1, a demonstrated improvement over manual workflows requiring multiple days.

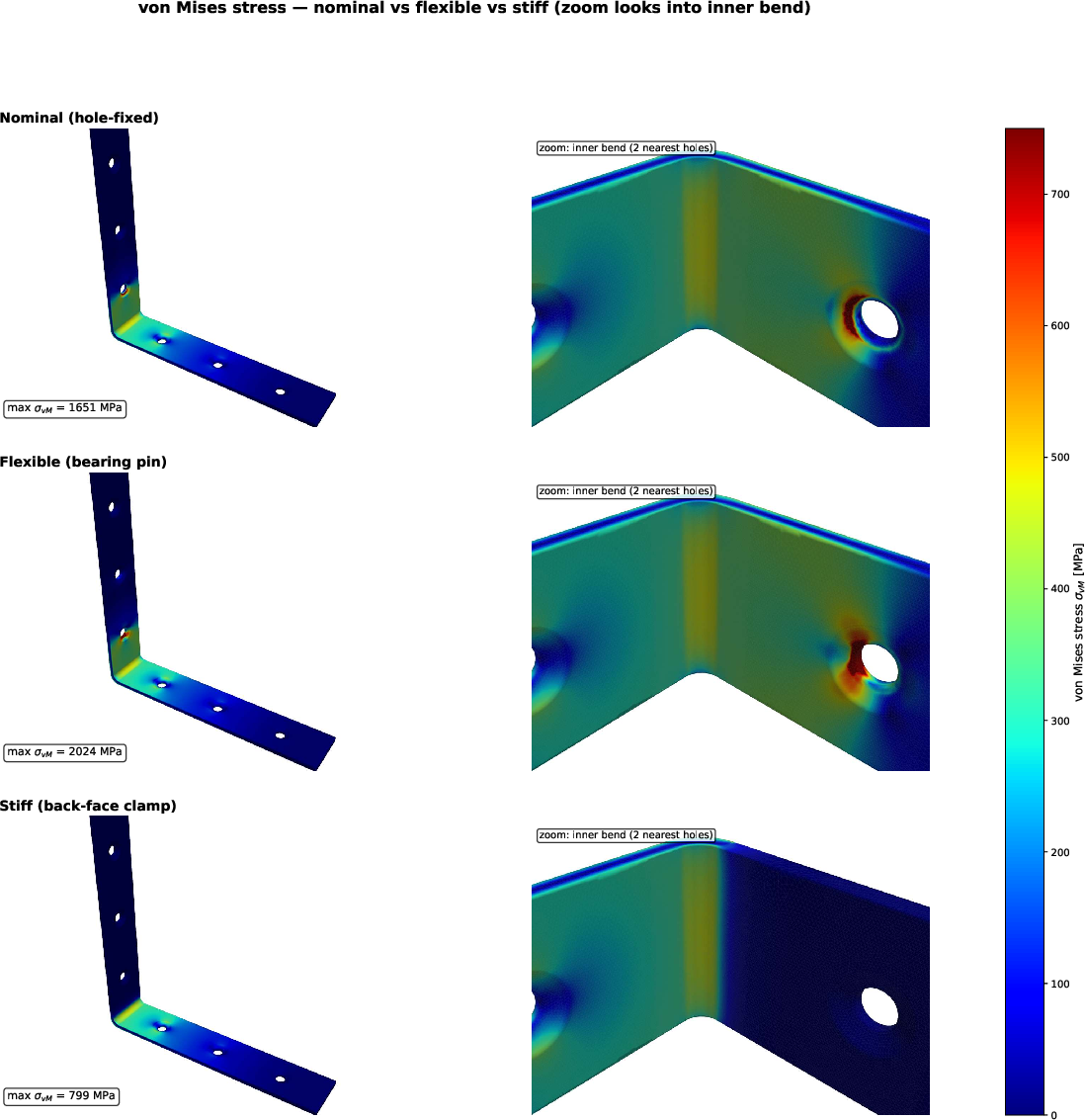

The FEA module performs robustly in the demonstration: mesh refinement, BC hypotheses, and analytical bounds are constructed and evaluated consistently. Key numerical outcomes include:

- Stress and deflection predictions consistent with both beam-theory scaling and hand calculations.

- Autonomous identification of stress hot-spots at geometric discontinuities and countersinks.

- Correct identification, in an unconstrained first-iteration context, that the original design fails code-mandated limits for both strength and serviceability.

Figure 4: Von Mises stress field for multiple BC variants, highlighting critical regions and comparison to code thresholds.

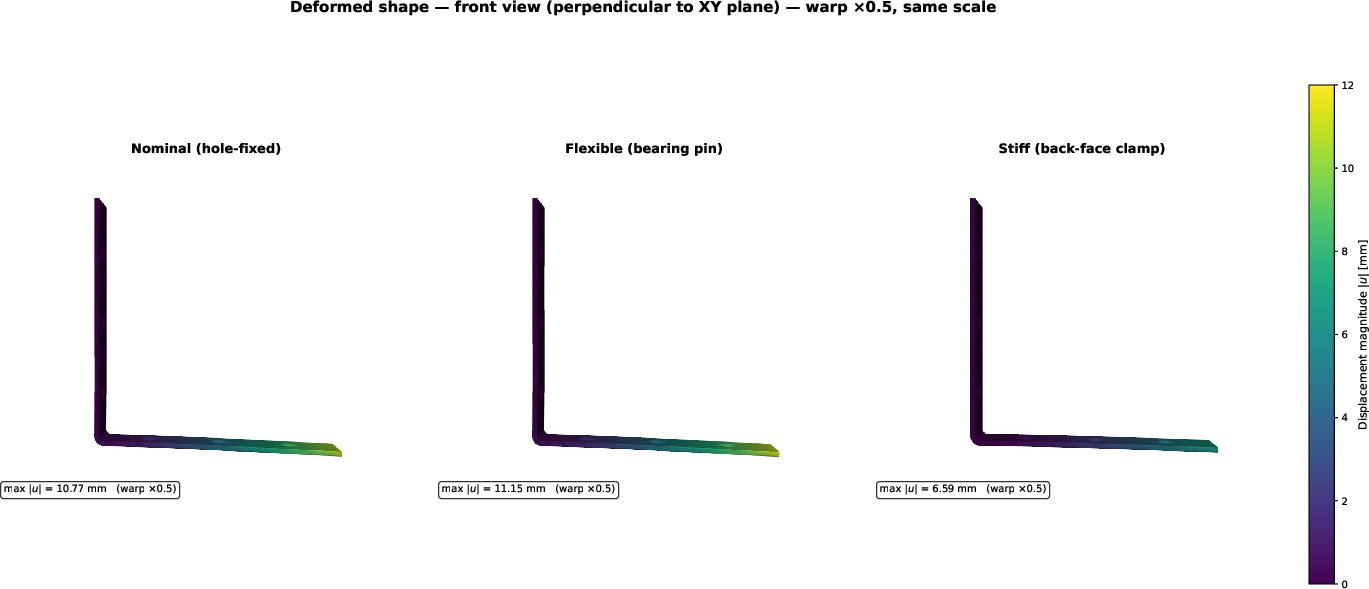

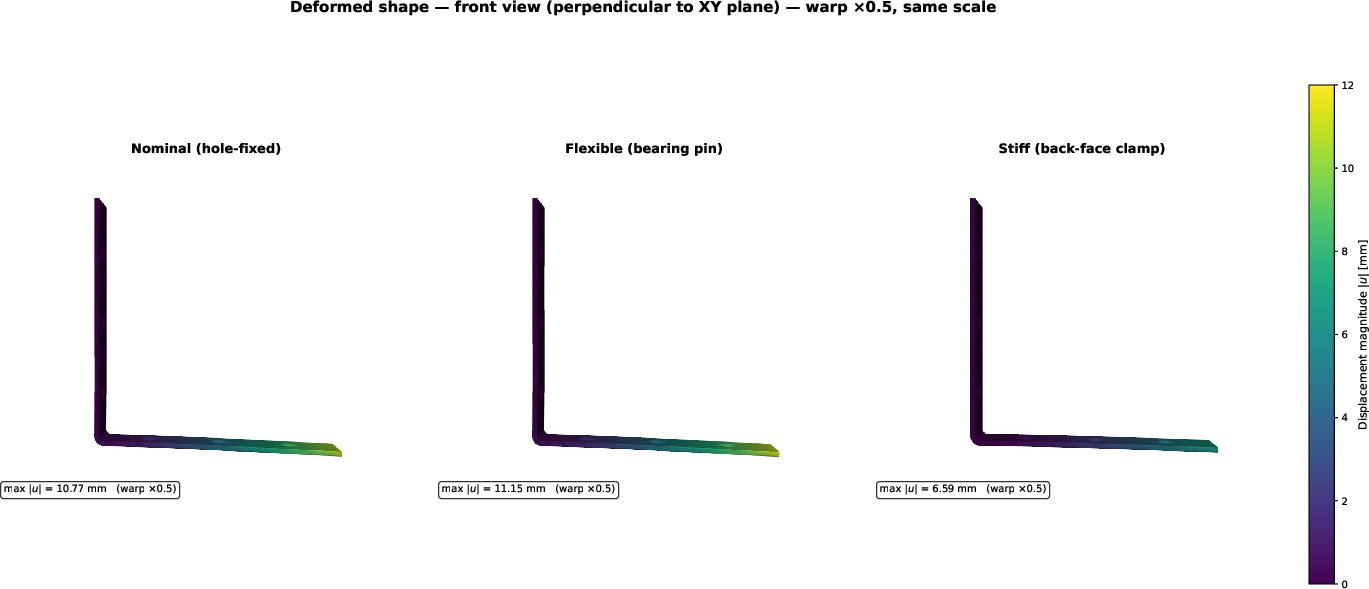

Figure 5: Deformed shape fields for all BCs, illustrating the impact of boundary condition uncertainty on predicted tip deflection.

Notably, the system quantifies the sensitivity of results to geometric, material, and boundary uncertainties, reporting factor of safety ranges per limit state and directly correlating result spread to dominant source parameters.

Figure 6: Summary of stress and displacement results across all analysis variants, contextualized with bounds and code-mandated performance limits.
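
Factor of safety ranges per limit state can be computed from the spread of results across analysis variants, as in the sketch below. The limit values, variant names, and results are invented for illustration, and the factor of safety is taken simply as limit divided by demand.

```python
def fos_ranges(limits, variants):
    """Return (min, max) factor of safety per limit state across all variants."""
    ranges = {}
    for state, limit in limits.items():
        values = [limit / result[state] for result in variants.values()]
        ranges[state] = (min(values), max(values))
    return ranges


def governing_state(ranges):
    """The limit state with the lowest worst-case factor of safety."""
    return min(ranges, key=lambda state: ranges[state][0])


# Illustrative limits and results for two boundary-condition hypotheses.
limits = {"stress_MPa": 150.0, "deflection_mm": 2.0}
variants = {
    "fixed": {"stress_MPa": 100.0, "deflection_mm": 1.0},
    "pinned": {"stress_MPa": 200.0, "deflection_mm": 4.0},
}
safety = fos_ranges(limits, variants)
print(safety)  # {'stress_MPa': (0.75, 1.5), 'deflection_mm': (0.5, 2.0)}
print(governing_state(safety))  # deflection_mm
```

A range that straddles 1.0, as both do in this toy case, means compliance depends on which hypothesis holds, so the boundary condition is the dominant source of uncertainty.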

Autonomous Redesign and Feedback Loop

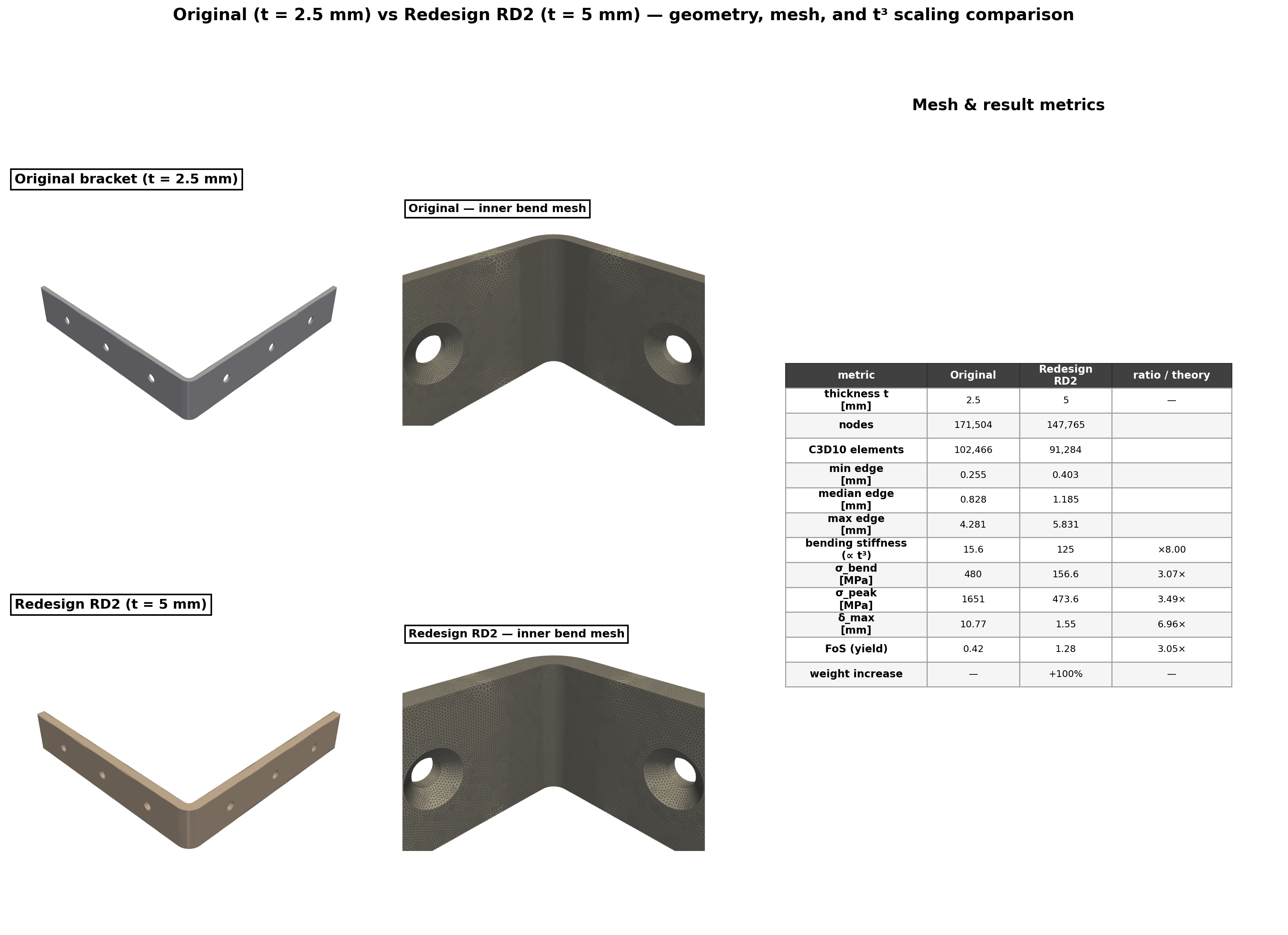

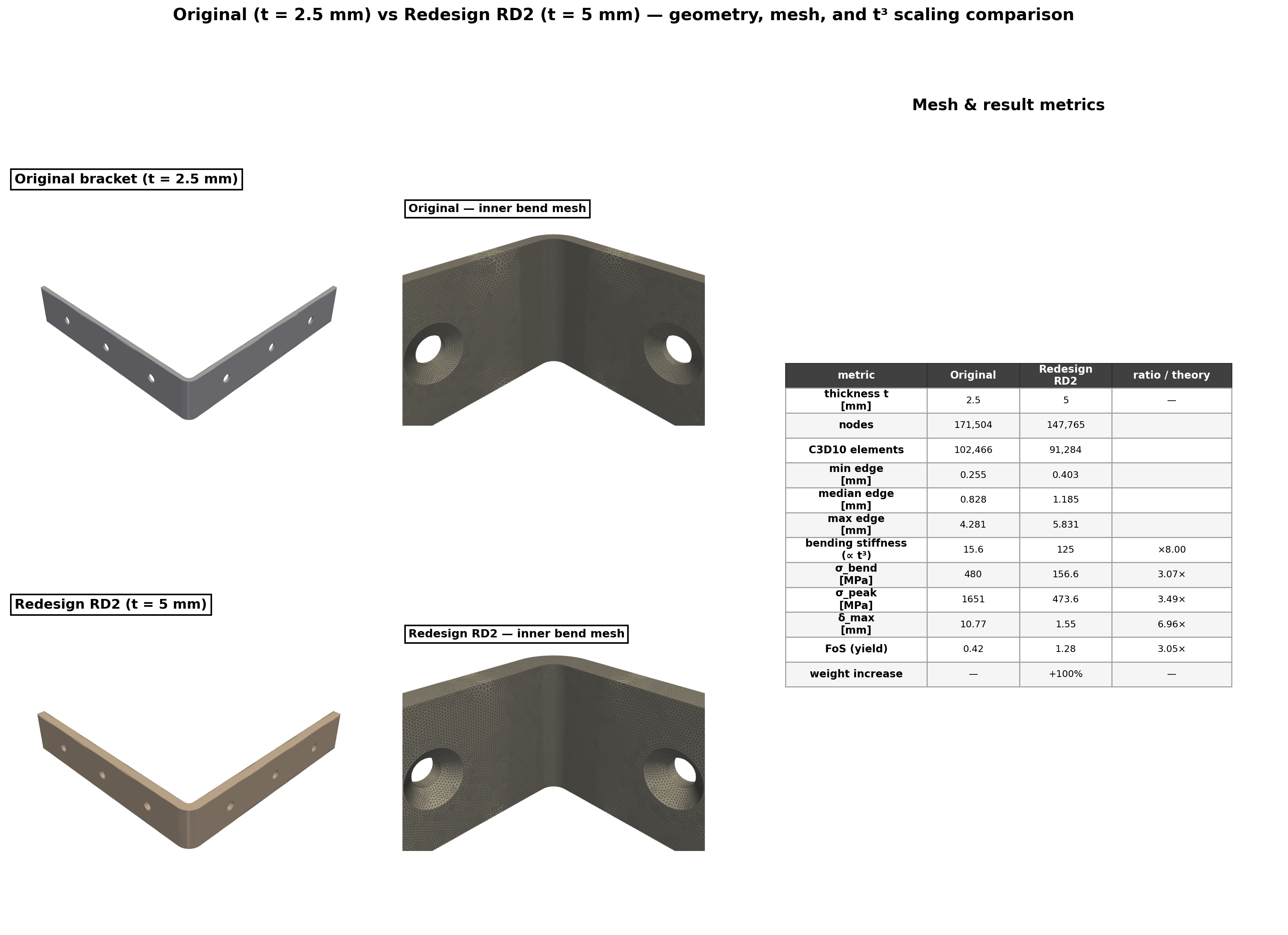

When the original design fails, the assessment layer identifies governing limit states, ranks sources of uncertainty, and proposes actionable redesigns (e.g., thickness increase, gusset addition). The orchestrator then executes a redesign simulation, demonstrating performance improvements whose scaling is consistent with analytical predictions.

Figure 7: Geometry and result comparison for baseline versus autonomously determined redesign, with quantitative scaling validation.
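
For a thickness-only redesign, a scaling check of this kind could look like the sketch below. It assumes bending of a rectangular section with load, span, and width unchanged, so that stress scales with thickness to the power of -2 and deflection to the power of -3. The tolerance and all numbers are illustrative, not the paper's results.

```python
import math


def bending_scaling(t_old, t_new):
    """Predicted redesign/baseline ratios under the beam-bending assumption."""
    ratio = t_old / t_new
    return {"stress": ratio**2, "deflection": ratio**3}


def scaling_consistent(baseline, redesign, t_old, t_new, rel_tol=0.15):
    """Compare simulated ratios with predicted ratios, quantity by quantity."""
    predicted = bending_scaling(t_old, t_new)
    return {
        key: math.isclose(redesign[key] / baseline[key], predicted[key], rel_tol=rel_tol)
        for key in predicted
    }


# Illustrative results for doubling the thickness from 4 mm to 8 mm.
baseline_run = {"stress": 200.0, "deflection": 4.0}
redesign_run = {"stress": 54.0, "deflection": 0.52}
print(bending_scaling(4.0, 8.0))  # {'stress': 0.25, 'deflection': 0.125}
print(scaling_consistent(baseline_run, redesign_run, 4.0, 8.0))
```

A redesign that adds a gusset changes the load path, so this simple power-law check would not apply to it.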

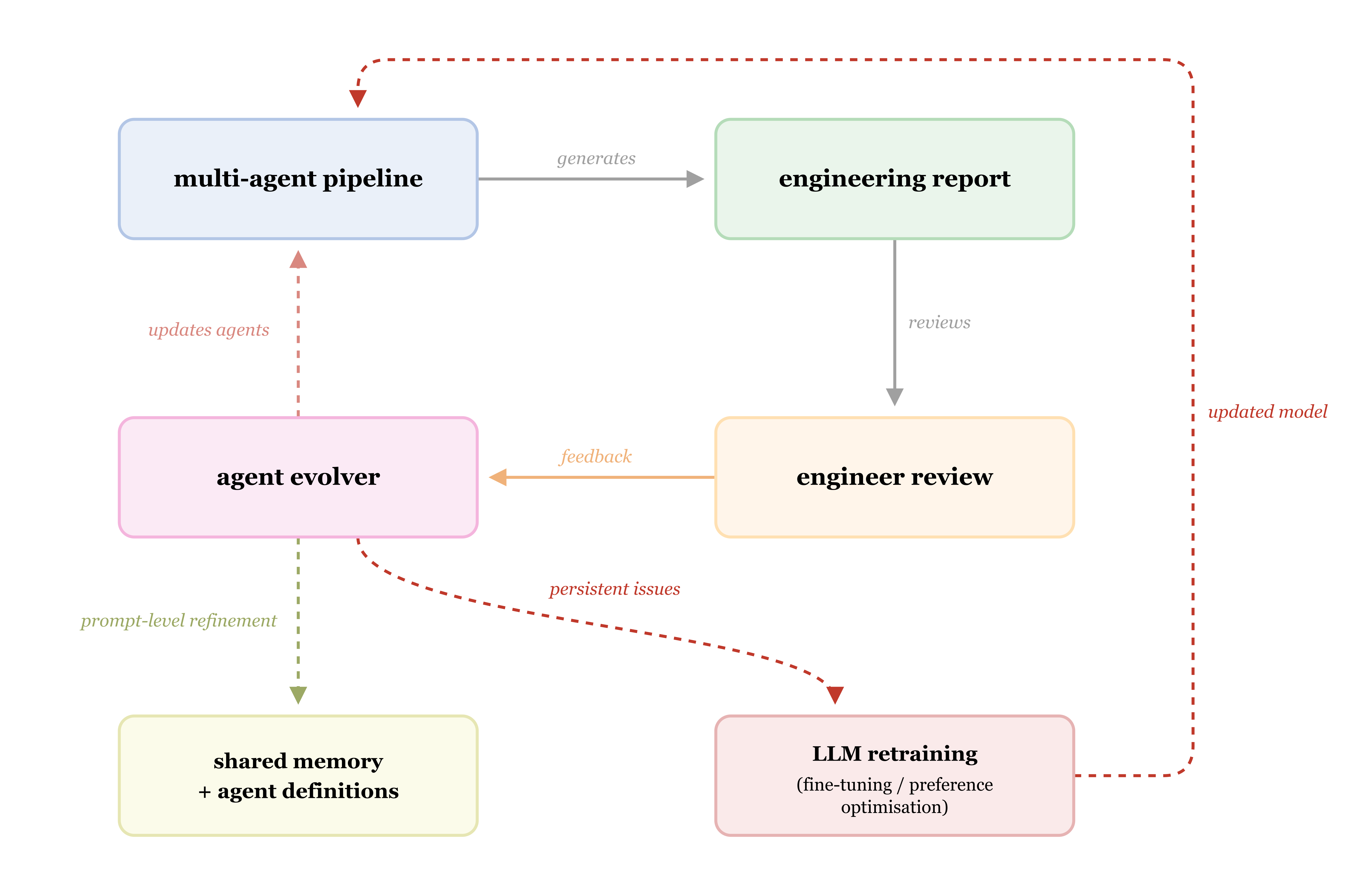

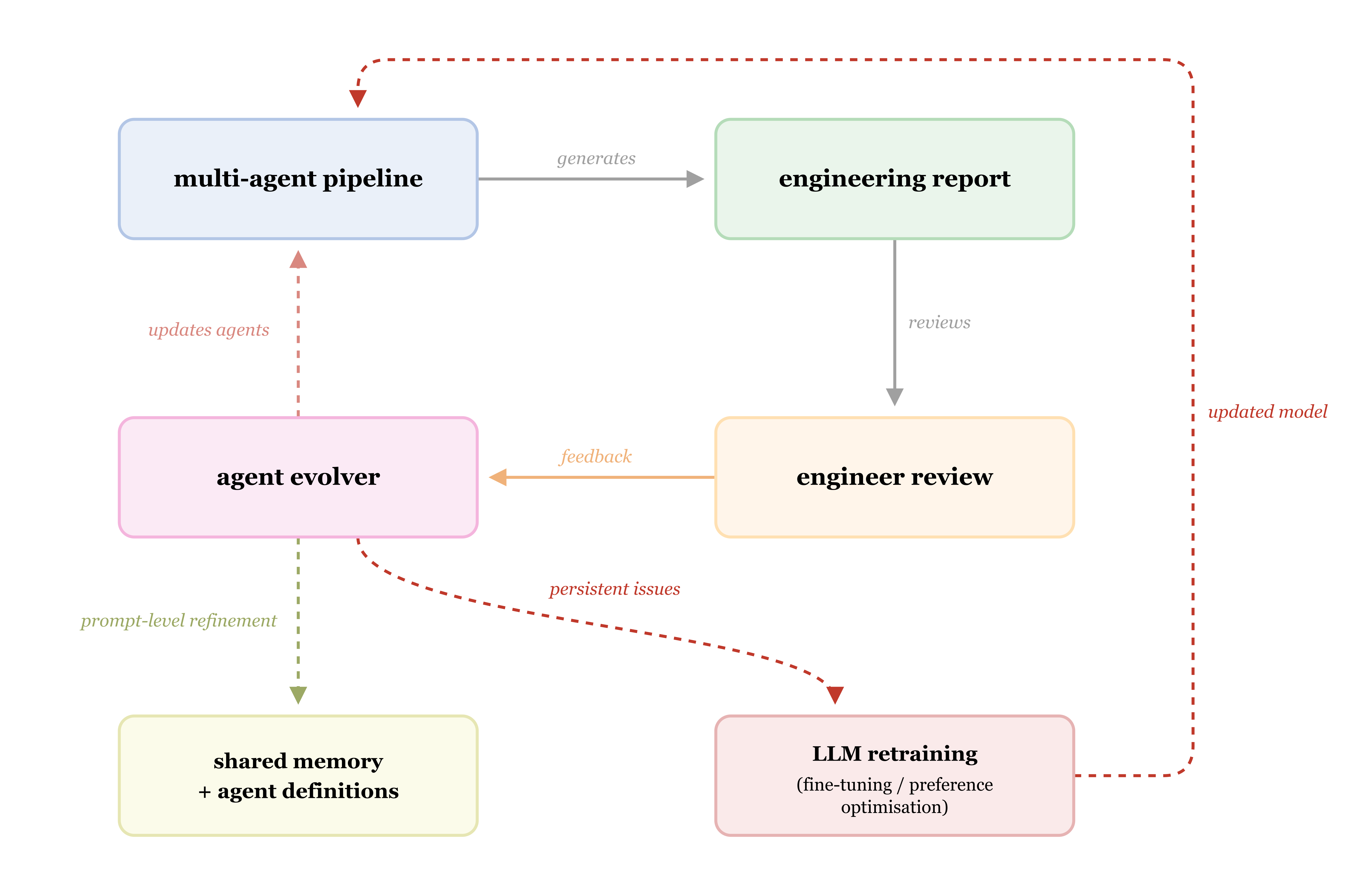

The system implements a two-tier self-improving architecture: prompt/memory-level refinement incorporating professional feedback post-analysis, and, where recurring corrections are detected, escalation to fine-tuning or preference optimization of the underlying LLM. This enables targeted, auditable learning from real-world engineer intervention while ensuring full traceability and reversibility.

Figure 8: Self-improving feedback topology—prompt-level agent learning with escalation to model-level refinement as dictated by correction recurrence.
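
The escalation rule between the two tiers might be driven by a simple recurrence count, as sketched below. The `FeedbackLog` class, the correction categories, and the threshold of three are hypothetical; the paper does not specify them in this summary.

```python
from collections import Counter


class FeedbackLog:
    """Hypothetical log of engineer corrections with a recurrence-based escalation rule."""

    def __init__(self, escalation_threshold=3):
        self.threshold = escalation_threshold
        self.counts = Counter()
        self.entries = []  # kept in order, so every change stays traceable

    def record(self, category, note):
        """Store a correction and report which tier should handle it."""
        self.counts[category] += 1
        self.entries.append((category, note))
        if self.counts[category] >= self.threshold:
            return "model_level"   # candidate for fine-tuning or preference data
        return "prompt_level"      # handled through prompt or memory updates

    def revert_last(self):
        """Undo the most recent correction, illustrating reversibility."""
        category, note = self.entries.pop()
        self.counts[category] -= 1
        return category, note


log = FeedbackLog()
tiers = [log.record("bc_choice", f"correction {i}") for i in range(3)]
print(tiers)  # ['prompt_level', 'prompt_level', 'model_level']
```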

Implications and Future Directions

Practically, this research establishes a viable paradigm for rapid, cost-efficient, and transparent computational engineering analysis harnessing LLM-driven multi-agent systems. The framework drastically reduces human labor in routine analysis but emphatically positions the professional engineer as the ultimate authority for oversight and sign-off, addressing regulatory and liability concerns.

Theoretically, this agentic architecture provides a rigorous, extensible interface between unstructured physical data and solver-agnostic computational modeling, embedded with uncertainty quantification and interpretable, auditable feedback loops. The formalization of agent operations and quality gates offers a template for verifiable autonomous engineering systems, and the mathematical treatment of uncertainty propagation and conservatism supports robust UQ across modeling hierarchies.

Limitations include restricted capability for complex assemblies, nonlinear and multi-physics problems, and reliance on rapidly evolving vision-LLM capabilities for perceptual inference accuracy. Future research directions involve integrating nonlinear solvers, 3D reconstruction from multi-modal inputs, adaptive agent retraining based on accumulated professional feedback, and formal verification of system-level decision quality.

Conclusion

This work demonstrates autonomous, multi-agent, LLM-driven computational mechanics across the full chain from perceptual data to code-compliant engineering assessment and iterative redesign, all within a traceable, auditable, and extensible quality framework. While full professional oversight remains mandatory, the system shows strong potential for efficiency and reproducibility gains in engineering analysis pipelines, and it establishes a foundation for further advances in agentic, solver-agnostic, autonomous modeling methodologies.