- The paper introduces a two-stage framework where a deep elevation estimator replaces LiDAR data to predict digital surface models for radio environment maps.

- The method uses the Im2Ele model to predict elevation from aerial RGB images, achieving an MAE of 1.02 m and RMSE of 3.11 m on urban benchmarks.

- The study reports up to a 7.8% RMSE improvement over image-only methods, emphasizing scalable, eco-friendly radio mapping for 6G networks.

Learned Elevation Models as a Lightweight Alternative to LiDAR for Radio Environment Map Estimation

Introduction

The move towards 6G and similar advanced wireless systems has intensified the need for accurate radio environment map (REM) estimators, particularly as operations shift towards higher frequency bands where electromagnetic (EM) signal propagation becomes acutely sensitive to environmental geometry. Traditional approaches rely on high-resolution 3D environmental datasets, typically obtained via LiDAR, but these are costly to acquire, scale poorly, and become outdated as the environment changes. The paper "Learned Elevation Models as a Lightweight Alternative to LiDAR for Radio Environment Map Estimation" (2604.05520) proposes a two-stage framework in which deep models predict elevation from aerial RGB imagery, allowing REM estimation without 3D input data at inference. This essay reviews the proposed method, experimental protocols, numerical benchmarks, and implications for scalable radio mapping in dynamic or large-scale settings.

Two-Stage REM Estimation Framework

The approach rests on the observation that accurate REM estimation does not strictly require measured 3D geometry: a task-specific, CNN-based elevation predictor can stand in for it. The pipeline comprises:

- Stage 1: A deep elevation estimator trained on paired aerial RGB images and LiDAR-derived ground-truth to predict normalized Digital Surface Model (nDSM) maps. The Im2Ele model, an adaptation of the SENet backbone, is used here, with training augmented for urban morphology generalization but excluding color jitter to minimize instability on reflective surfaces.

- Stage 2: The predicted nDSM maps, concatenated with the source RGB imagery and transmitter/antenna parameters, serve as input to any of several CNN-based REM estimators (LitRadioUNet, LitUNetDCN, LitPMNet), which output pixelwise path loss predictions.
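The Stage 2 input assembly can be sketched as a simple channel concatenation. This is a minimal illustration, not the authors' implementation: the function name `build_rem_input`, the 64×64 tile size, and the encoding of the transmitter as a single-pixel height map are all assumptions, since the summary does not specify how antenna parameters are encoded.

```python
import numpy as np

def build_rem_input(rgb, ndsm, tx_rc, tx_height_m):
    """Stack RGB, an nDSM map, and a transmitter channel into a (C, H, W) array.

    rgb:         (H, W, 3) uint8 aerial image
    ndsm:        (H, W) elevation map in metres (predicted or ground truth)
    tx_rc:       (row, col) pixel location of the transmitter (hypothetical encoding)
    tx_height_m: antenna height in metres (hypothetical encoding)
    """
    h, w, _ = rgb.shape
    if ndsm.shape != (h, w):
        raise ValueError("nDSM and RGB tiles must share the same spatial size")

    # Scale RGB to [0, 1] and move channels first.
    rgb_chw = np.transpose(rgb.astype(np.float32) / 255.0, (2, 0, 1))
    ndsm_ch = ndsm.astype(np.float32)[None]

    # Assumed transmitter encoding: zero everywhere except the antenna pixel.
    tx_map = np.zeros((1, h, w), dtype=np.float32)
    row, col = tx_rc
    tx_map[0, row, col] = tx_height_m

    return np.concatenate([rgb_chw, ndsm_ch, tx_map], axis=0)

# Toy tile with random content, only to show the resulting shape.
rng = np.random.default_rng(0)
rgb = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)
ndsm = rng.uniform(0.0, 30.0, size=(64, 64))
x = build_rem_input(rgb, ndsm, tx_rc=(10, 20), tx_height_m=25.0)
```

Because the elevation channel has the same shape whether it comes from LiDAR or from Im2Ele, the same REM estimator input layout serves both variants.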

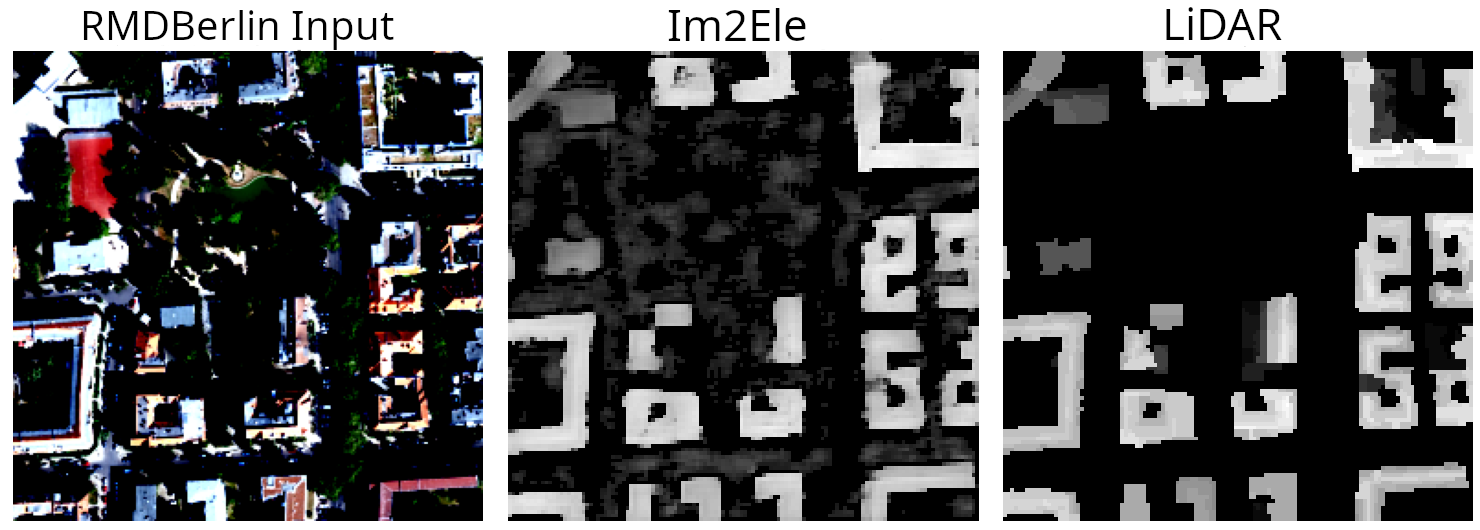

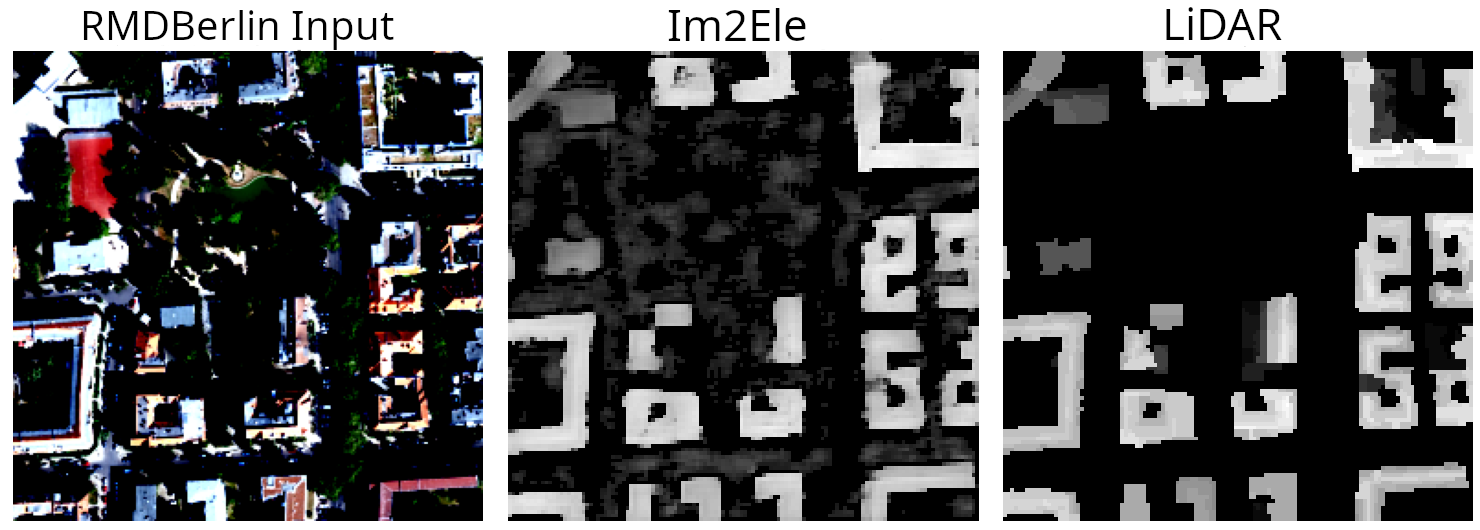

Figure 1: Qualitative comparisons show generated height maps from Im2Ele successfully capture urban morphology relative to ground-truth LiDAR nDSM, but with increased variance in vegetated areas.

The framework is strictly modular: elevation estimation and REM regression are trained separately, avoiding gradient interference. At inference, only RGB imagery is required, introducing significant advantages in deployability and operational carbon footprint.

Benchmarks and Experimental Analysis

Elevation Estimation Accuracy

The retrained Im2Ele achieves an MAE of 1.02 m and RMSE of 3.11 m on the RMDirectionalBerlin test set, improving upon its original implementation's MAE (1.19 m) but with slightly degraded RMSE (from 2.88 m to 3.11 m). This is attributed to the ground-truth being defined only for buildings, compromising generalization in mixed urban-vegetation scenes.
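That MAE can improve while RMSE degrades follows from how the two metrics weight errors: RMSE squares each residual, so a few large misses dominate it. The sketch below uses made-up toy error arrays, not benchmark data, to show one case where the ordering of the two metrics flips.

```python
import numpy as np

def mae(pred, target):
    """Mean absolute error."""
    return float(np.mean(np.abs(pred - target)))

def rmse(pred, target):
    """Root mean squared error."""
    return float(np.sqrt(np.mean((pred - target) ** 2)))

target = np.zeros(100)

# Toy case A: every pixel is off by 1.2 m.
uniform_pred = np.full(100, 1.2)

# Toy case B: 96 pixels are exact, 4 pixels are off by 15 m.
sparse_pred = np.zeros(100)
sparse_pred[:4] = 15.0

mae_a, rmse_a = mae(uniform_pred, target), rmse(uniform_pred, target)
mae_b, rmse_b = mae(sparse_pred, target), rmse(sparse_pred, target)
```

Case B has the lower MAE but the higher RMSE, which is the same qualitative pattern as the retrained model relative to the original implementation.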

Three deployment variants are evaluated:

- LiDAR→nDSM+images: Ground-truth nDSM with RGB images; represents the performance upper bound.

- Im2Ele-predicted→nDSM+images: Predicted elevation maps replace true nDSM; inference requires only RGB.

- Image-only: RGB images and antenna params only; replicates prior art and serves as the direct baseline.

Across all REM estimator architectures:

| Network |

RMSE (LiDAR) |

RMSE (Im2Ele) |

RMSE (Image-only) |

RMSE Improv. over Image-only |

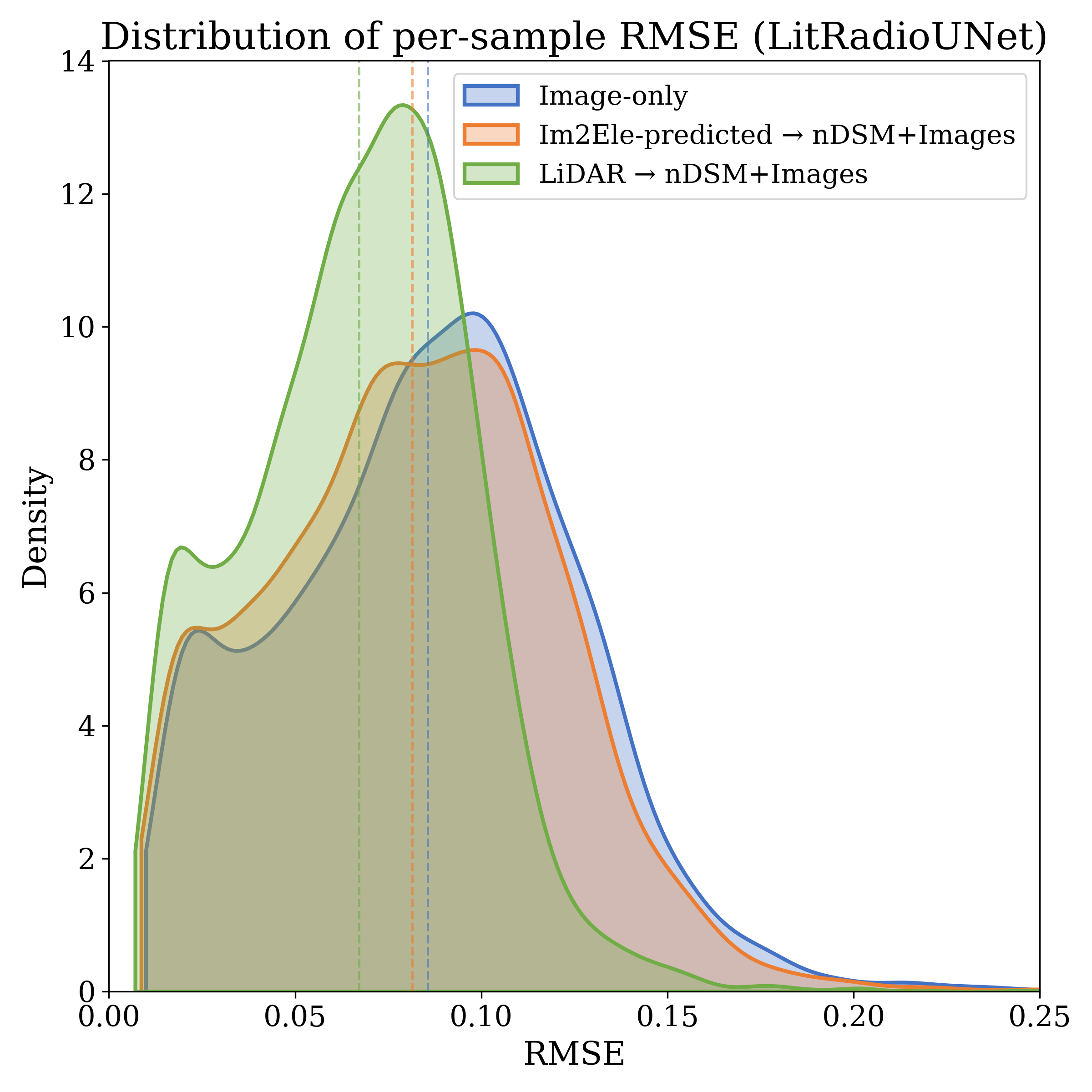

| LitRadioUNet |

0.0735 |

0.0901 |

0.0942 |

4.3% |

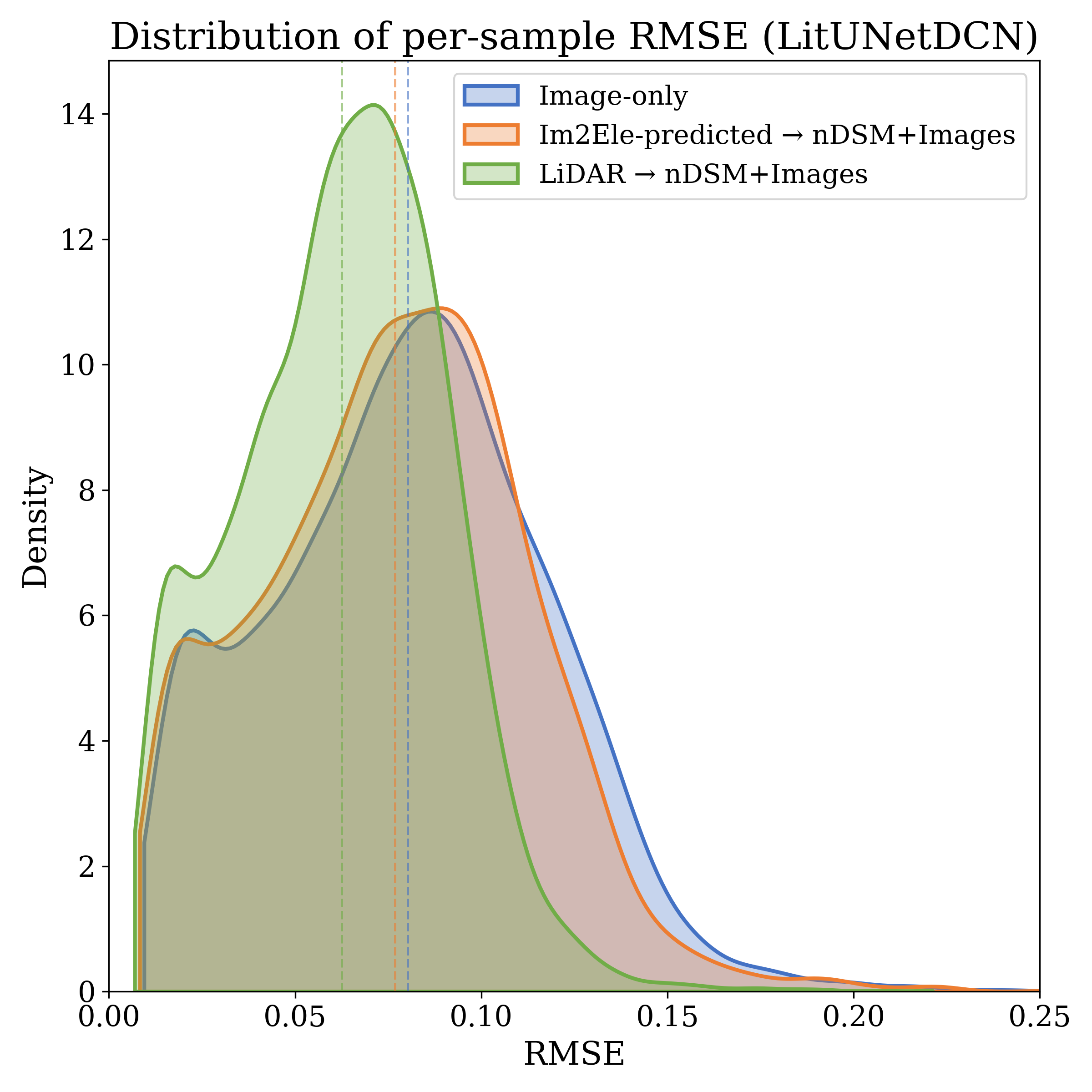

| LitUNetDCN |

0.0684 |

0.0847 |

0.0885 |

4.3% |

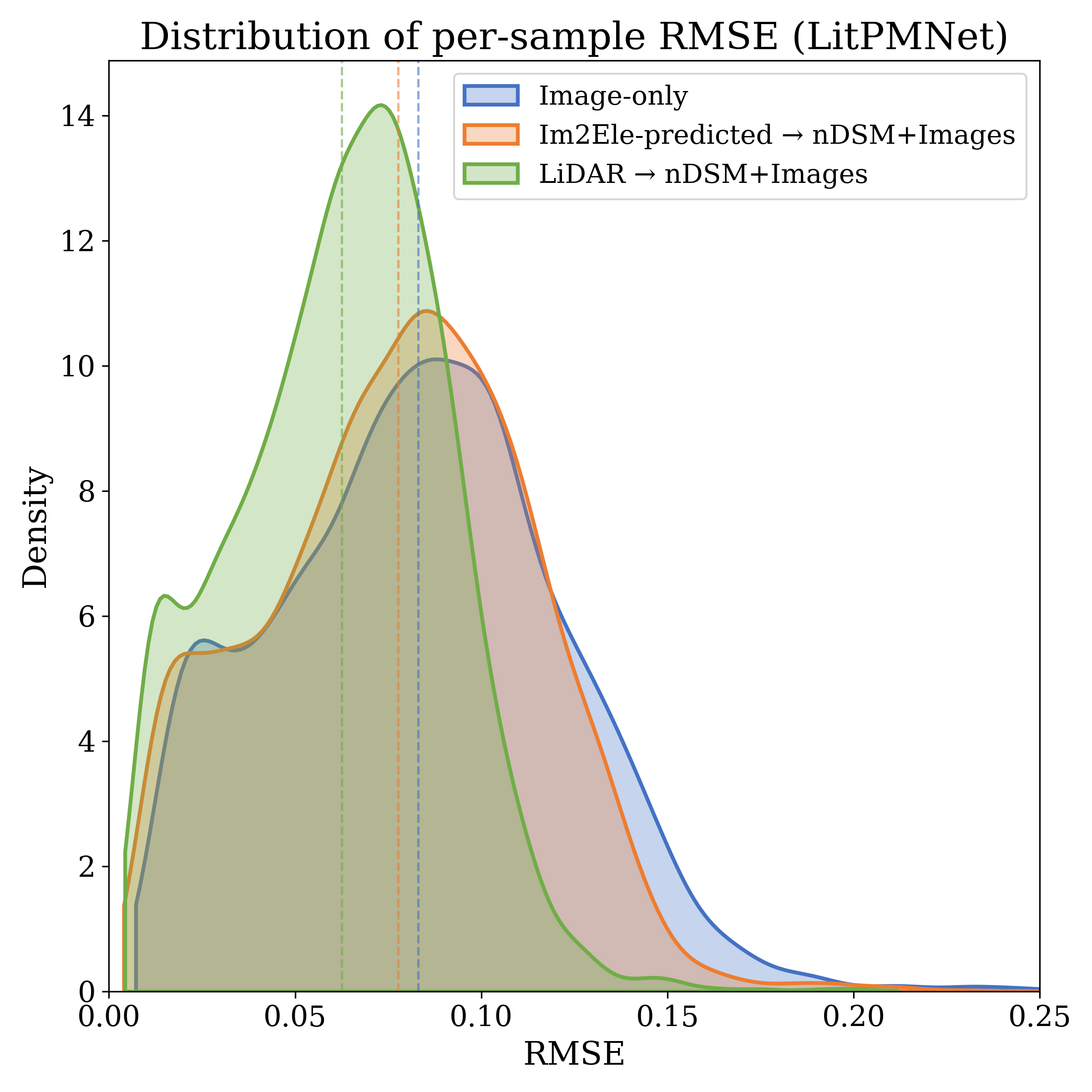

| LitPMNet |

0.0686 |

0.0842 |

0.0918 |

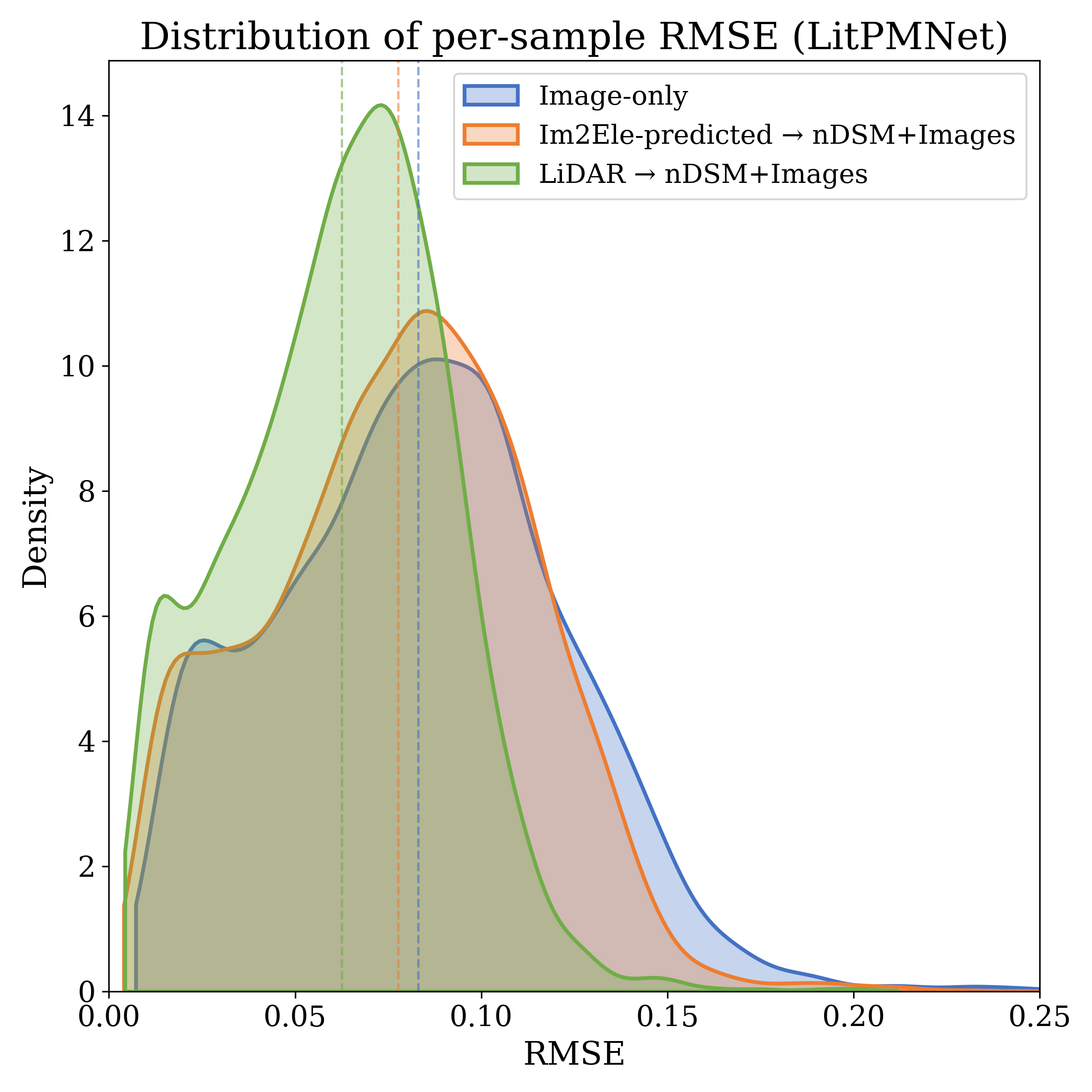

7.8% |

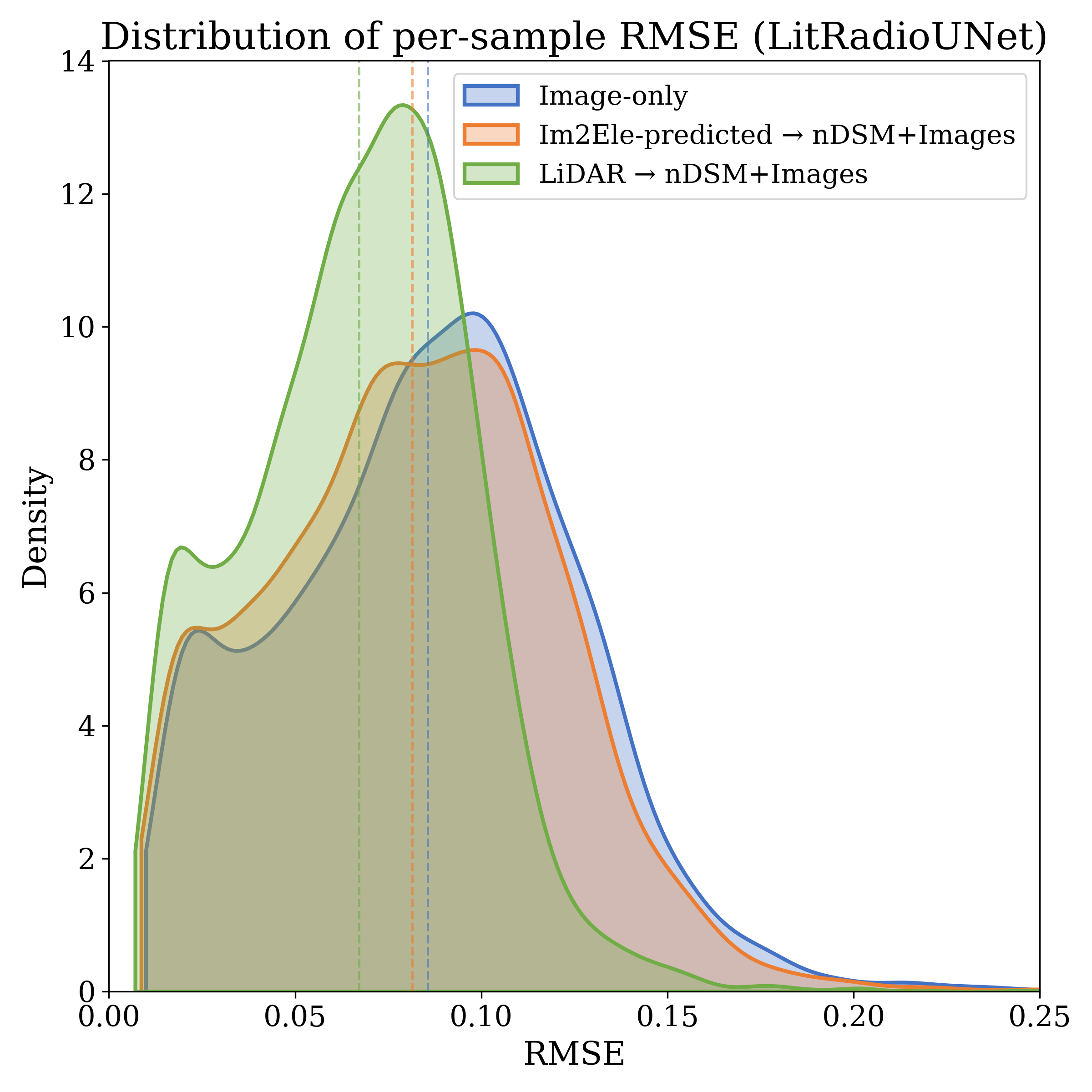

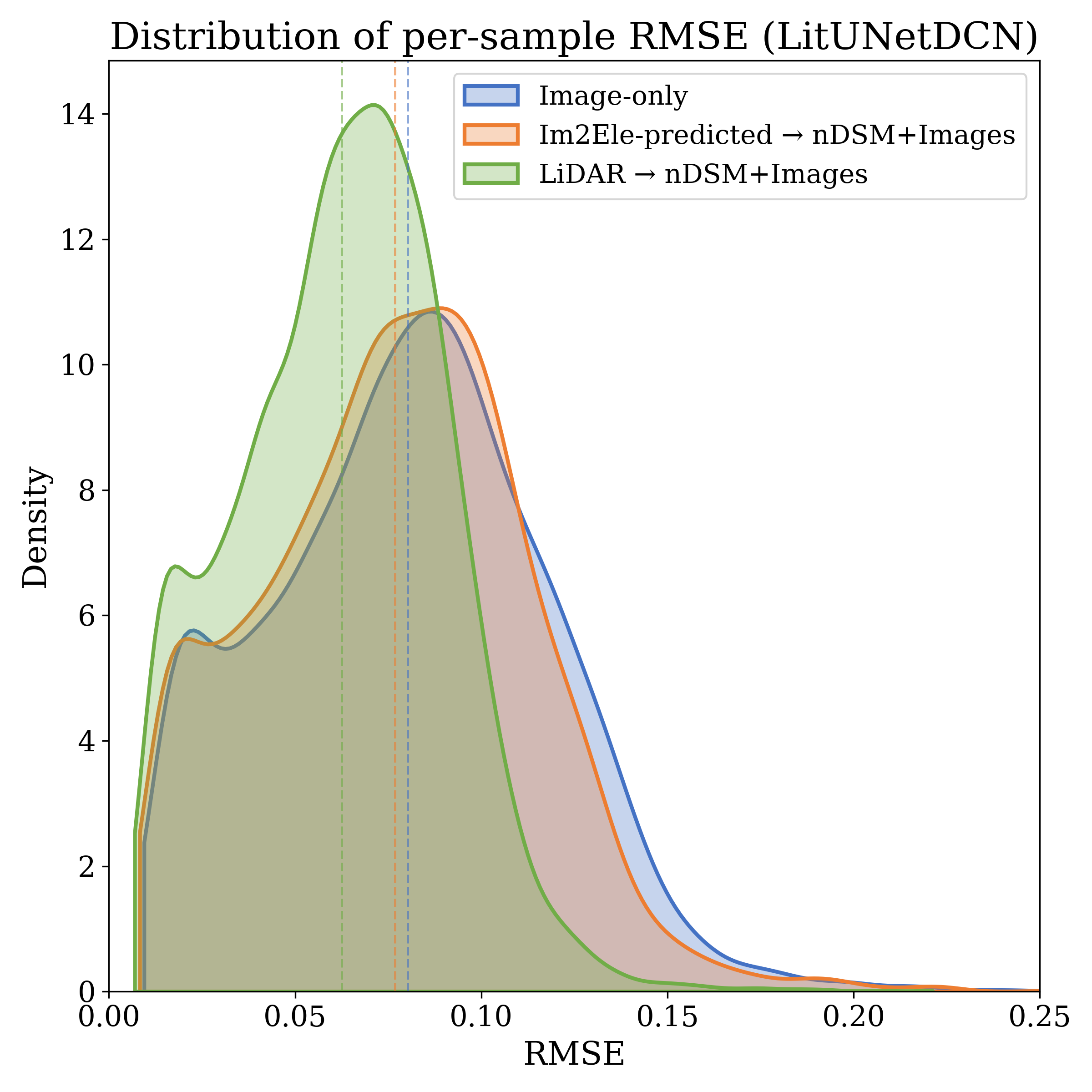

Notably, the two-stage approach consistently shifts the RMSE distribution leftwards, both lowering the mean and limiting the occurrence of extreme error events compared to image-only baselines. While a performance gap to the LiDAR-informed upper bound persists, the results suggest that the majority of the benefit conferred by 3D geometry can be recovered from RGB-conditional elevation proxies, especially as training data volumes scale.
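The improvement column can be approximated from the RMSE values. The sketch below assumes the column is the relative RMSE reduction against the image-only baseline, which the summary does not state explicitly. It works from the rounded table entries, so the recomputed values need not match the reported percentages exactly.

```python
# Rounded RMSE values transcribed from the table above.
rmse_table = {
    "LitRadioUNet": {"lidar": 0.0735, "im2ele": 0.0901, "image_only": 0.0942},
    "LitUNetDCN": {"lidar": 0.0684, "im2ele": 0.0847, "image_only": 0.0885},
    "LitPMNet": {"lidar": 0.0686, "im2ele": 0.0842, "image_only": 0.0918},
}

def relative_improvement(baseline, candidate):
    """Fractional RMSE reduction of `candidate` relative to `baseline` (assumed formula)."""
    return (baseline - candidate) / baseline

improvements = {
    name: relative_improvement(row["image_only"], row["im2ele"])
    for name, row in rmse_table.items()
}

# Network that benefits most from the predicted elevation channel.
best_network = max(improvements, key=improvements.get)

for name, value in improvements.items():
    print(f"{name}: {100 * value:.1f}% lower RMSE than image-only")
```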

Model Architecture and Scalability

Figure 2: LitRadioUNet is one of the interchangeable REM estimator backbones, allowing comparison across input modalities (RGB, estimated nDSM, and ground-truth nDSM).

The architecture separates geometric inference from propagation regression, enabling specialization and model reuse. It also reduces storage and operational overhead. For instance, on the RMDirectionalBerlin benchmark, raw LiDAR demands 249 GB of storage, with derived nDSM products occupying several MB. In contrast, the deployed Im2Ele model requires only 606 MB of static storage irrespective of deployment area: a fixed cost with O(1) scaling, compared to the O(A) growth of LiDAR-based pipelines in the covered area A.
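The scaling contrast can be made concrete with a back-of-the-envelope model. This is a rough sketch under a loud assumption: it treats the 249 GB of raw LiDAR as covering 27.79 km² (the survey area quoted for the energy analysis), which the summary does not confirm, and it assumes raw LiDAR volume grows linearly with area.

```python
LIDAR_RAW_GB = 249.0             # raw LiDAR storage reported for the benchmark
MODEL_GB = 0.606                 # deployed Im2Ele model size (606 MB)
ASSUMED_COVERAGE_KM2 = 27.79     # ASSUMPTION: area covered by the 249 GB of LiDAR

def lidar_storage_gb(area_km2):
    """O(A): raw LiDAR storage, assuming linear growth with covered area."""
    return LIDAR_RAW_GB / ASSUMED_COVERAGE_KM2 * area_km2

def model_storage_gb(area_km2):
    """O(1): the trained model has the same size for any deployment area."""
    return MODEL_GB

for area in (27.79, 100.0, 1000.0):
    print(f"{area:8.2f} km^2  LiDAR ~{lidar_storage_gb(area):9.1f} GB  "
          f"model {model_storage_gb(area):.3f} GB")
```

The comparison covers inference-time storage only; training the elevation model still requires paired LiDAR-derived ground truth.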

Environmental and Practical Deployment Impact

Analysis of data acquisition and carbon emissions underscores the framework's operational viability. Drone-based LiDAR surveys for 27.79 km² require 22,741 kJ (2293 g CO₂), while RGB imagery for equivalent coverage expends 18,950 kJ (1911 g CO₂), roughly 17% less. Once Im2Ele is trained, the per-tile inference cost is negligible (<0.02 g CO₂), and deployment via satellite imagery (e.g., Vantor) could eliminate flight missions entirely. Large-scale or frequently updated REM estimation is therefore practical with this approach, in contrast to the cost and latency associated with repeated LiDAR campaigns.
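The acquisition figures can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the variable names are illustrative, and the implied emission factor is derived here rather than taken from the paper.

```python
AREA_KM2 = 27.79

surveys = {
    "lidar": {"energy_kj": 22741.0, "co2_g": 2293.0},
    "rgb": {"energy_kj": 18950.0, "co2_g": 1911.0},
}

def emission_factor(survey):
    """Implied grams of CO2 per kJ of survey energy."""
    return survey["co2_g"] / survey["energy_kj"]

def co2_per_km2(survey):
    """Grams of CO2 per square kilometre surveyed."""
    return survey["co2_g"] / AREA_KM2

factor_lidar = emission_factor(surveys["lidar"])
factor_rgb = emission_factor(surveys["rgb"])

# Fractional savings of the RGB survey relative to the LiDAR survey.
energy_saving = 1.0 - surveys["rgb"]["energy_kj"] / surveys["lidar"]["energy_kj"]
co2_saving = 1.0 - surveys["rgb"]["co2_g"] / surveys["lidar"]["co2_g"]
```

Both surveys imply nearly the same emission factor, so the CO₂ saving tracks the energy saving.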

Implications and Future Directions

The study demonstrates that learned monocular elevation models, though imperfect, can confer a majority of the geometric benefit previously attainable only with 3D sensor data, at a fraction of the acquisition and runtime resource cost. In contrast to end-to-end direct mapping, decoupling geometry reconstruction yields not only accuracy gains (up to 7.8% RMSE improvement) but also more robust error behavior and model scalability.

The findings promote several directions for future research:

- Extension to diverse environments (rural, dense vegetation) where current Im2Ele generalizes weakly due to limited data.

- Scaling up with global-scale satellite imagery, leveraging on-demand platforms for dynamic REM updates.

- Joint training regimes where REM and geometry estimators share features or are end-to-end differentiable.

- Broader integration with digital twins and environment-aware 6G network control frameworks, increasing the dynamism and spatial-temporal granularity of REMs.

Conclusion

The two-stage learned REM estimation framework provides a performant and scalable alternative to traditional LiDAR-dependent radio mapping. By substituting a learned, lightweight elevation proxy for expensive, static 3D geometry during inference, it achieves nontrivial RMSE improvements over purely image-based approaches and narrows the gap to the upper bound set by full LiDAR input. The associated reductions in acquisition cost and carbon footprint make this method particularly promising for future large-scale, dynamic, or resource-constrained deployments in next-generation wireless networks.