- The paper introduces the Unified Mixture Sampler (UMS), which generalizes mixture-based MCMC by adapting the mixture components through deterministic recentering and rescaling.

- It demonstrates high-precision approximation for exp-exp kernels across various models, yielding notable efficiency gains compared to conventional methods.

- Empirical evaluations, particularly in stochastic conditional duration models, show lower inefficiency factors and superior effective sample sizes.

Unified Mixture Sampler for Nonlinear State-Space Models with Exp-Exp Kernels

Introduction

Accurate and efficient inference in nonlinear and non-Gaussian state-space models remains a central challenge in applied and financial econometrics. Existing strategies, particularly the auxiliary mixture sampler, have enabled high-performance Markov chain Monte Carlo (MCMC) for models such as stochastic volatility (SV) by approximating the non-Gaussian likelihood with a finite mixture of normals, so that the model becomes linear and Gaussian conditional on the mixture component. However, core limitations persist: mixture samplers are typically model-specific, demanding a new approximation for each distribution, and they are computationally inefficient when shape parameters are unknown and updated at every iteration, as in stochastic conditional duration (SCD) models with Weibull or Gamma noise.

The paper "Unified Mixture Sampler for State-Space Models: Application to Stochastic Conditional Duration Models" (2604.04517) introduces the Unified Mixture Sampler (UMS), which generalizes mixture-based MCMC to a broad class of observation models characterized by so-called "exp-exp" kernels, i.e., likelihoods of the form $\exp(-A\exp(\cdot))$. The UMS achieves this with a deterministic recentering and rescaling algorithm that adapts a canonical mixture of normals to arbitrary distributional parameters, eliminating the need to derive a new mixture for each model. The framework is shown to yield high-precision approximations across SV, SCD, logit, Poisson, and extreme value models, with notable computational and statistical efficiency gains over conventional methods.

Unified Mixture Approximation: Methodology

State-space models of interest are given by a latent AR(1) process,

$$h_{t+1} = \mu + \phi(h_t - \mu) + \eta_t, \qquad \eta_t \sim N(0, \sigma^2),$$

and nonlinear observation equations $y_t = f_\theta(h_t, \epsilon_t)$. After transformation, the likelihoods often reduce to exp-exp kernels:

$$p(y_t \mid h_t) \propto \exp\left(\frac{a c x}{2} - \frac{b \exp(c x)}{2}\right),$$

with problem-specific $a, b, c$.
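As a concrete instance, the SV case mentioned in the text can be written in this form. The sketch below assumes the standard SV observation equation with standard normal innovations and takes $x$ to be the residual $\log y_t^2 - h_t$; both choices are assumptions made for illustration and may differ from the paper's own notation.

```latex
\begin{align*}
y_t &= \exp(h_t/2)\,\epsilon_t, \qquad \epsilon_t \sim N(0,1), \\
\log y_t^2 &= h_t + \log \epsilon_t^2, \qquad \epsilon_t^2 \sim \chi^2_1, \\
p(x) &\propto \exp\!\left(\frac{x}{2} - \frac{\exp(x)}{2}\right), \qquad x = \log y_t^2 - h_t .
\end{align*}
```

Under these assumptions the $\log\chi^2_1$ density is the exp-exp kernel with $a = b = c = 1$.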

The canonical auxiliary mixture sampler (AMS) approximates the intractable $\log\chi^2$ error in the SV model with a fixed mixture of 10 normal densities (Omori et al., 2007). The UMS generalizes this: for a target kernel of the form above, it analytically recenters and rescales the mixture weights and component locations for each $(a, b, c)$. The mixture components thus become functions of the current data and parameters, and this recalibration is deterministic and requires no numerical re-optimization.
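The affine part of such a recentering can be illustrated with a change of variables. Setting $u = cx + \log b$ turns the kernel into $\exp(au/2 - \exp(u)/2)$, which no longer depends on $b$ or $c$, so a normal mixture fitted in $u$ maps to a mixture in $x$ by shifting and scaling each component. The sketch below shows only this step. The base mixture values and the function name `recenter_mixture` are hypothetical, and the paper's actual recipe (including how the weights adapt to $a$) is not reproduced here.

```python
import math

def log_kernel_x(x, a, b, c):
    """Log of the exp-exp kernel exp(a*c*x/2 - b*exp(c*x)/2)."""
    return a * c * x / 2.0 - b * math.exp(c * x) / 2.0

def log_kernel_u(u, a):
    """Log of the canonical kernel exp(a*u/2 - exp(u)/2), free of b and c."""
    return a * u / 2.0 - math.exp(u) / 2.0

def recenter_mixture(weights, means, sds, b, c):
    """Map a normal mixture for u = c*x + log(b) to a mixture for x.

    x = (u - log b) / c is affine in u, so each normal component stays normal:
    the mean is shifted and scaled, the sd is scaled by 1/|c|, and the
    weights are unchanged.
    """
    shift = math.log(b)
    new_means = [(m - shift) / c for m in means]
    new_sds = [s / abs(c) for s in sds]
    return list(weights), new_means, new_sds

# Hypothetical 3-component base mixture in u (illustrative values only).
base_w = [0.2, 0.5, 0.3]
base_m = [-3.0, -0.5, 1.0]
base_s = [2.0, 1.2, 0.7]

a, b, c = 1.0, 4.0, -1.5
w, m, s = recenter_mixture(base_w, base_m, base_s, b, c)

# At matching points the two log kernels differ only by the constant a*log(b)/2.
offsets = [log_kernel_u(c * x + math.log(b), a) - log_kernel_x(x, a, b, c)
           for x in (-1.0, 0.0, 0.7)]
```

Because the offset is constant in $x$, the two kernels describe the same density up to normalization, which is why no refitting is needed when only $b$ or $c$ changes.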

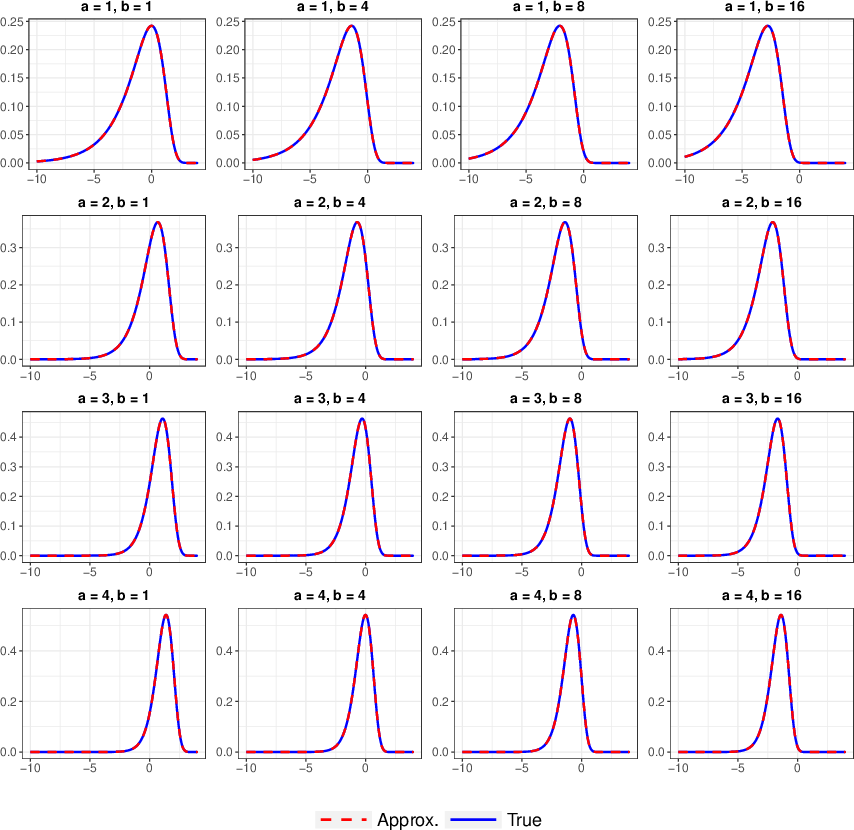

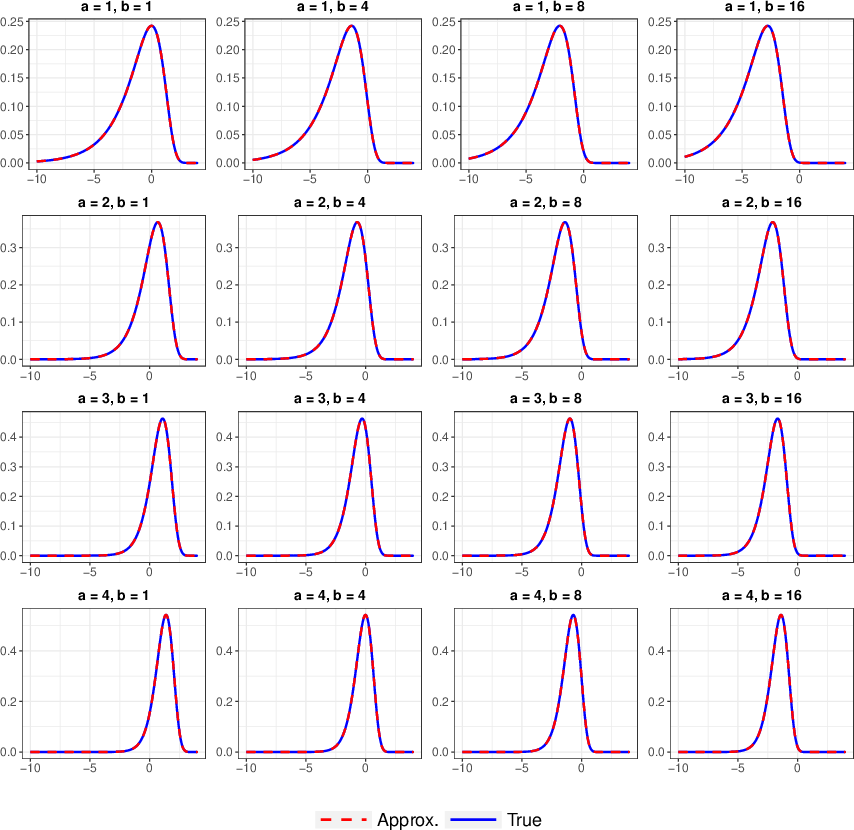

Figure 1: True density (blue) versus the unified mixture approximation (red) for $c = 1$.

The figure confirms that the recentered mixture delivers an accurate match to the true non-Gaussian kernel over a range of parameter values. Furthermore, when compared against preexisting, manually optimized mixtures (e.g., for the Type I extreme value distribution), the UMS approximation demonstrates comparable fidelity.

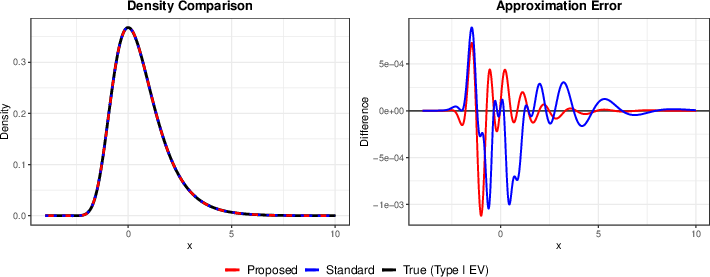

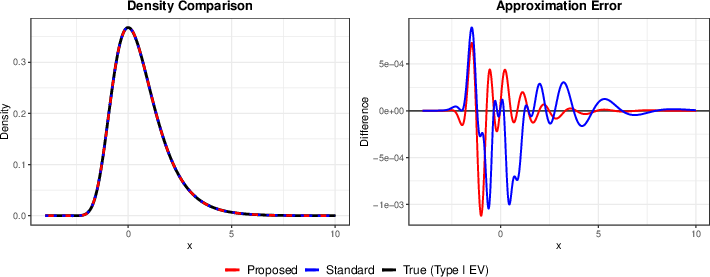

Figure 2: True density of the Type I extreme value distribution (black) versus the unified mixture approximation (red) and the conventional mixture from Frühwirth-Schnatter and Frühwirth (blue), with difference curve.

Integrating this adaptive kernel approximation into the MCMC makes the otherwise nonlinear update for $h_t$ linear and Gaussian conditional on the latent indicators $s_t$, which allows the use of efficient simulation smoothers (e.g., Durbin-Koopman). All steps for updating the mixture components (scaling, recentering, and updating weights) are analytic. This is crucial when parameters such as the Weibull or Gamma shape must be updated in each iteration.
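The indicator step of a mixture sampler can be sketched as follows. Given a residual (the transformed observation minus the state contribution), each component's posterior probability is proportional to its weight times its normal density at that residual. This is the generic auxiliary-mixture update, not code from the paper; the mixture values and the names `indicator_probs` and `sample_indicator` are hypothetical.

```python
import math
import random

def indicator_probs(resid, weights, means, sds):
    """Posterior probabilities P(s = i | resid) for a normal mixture."""
    logp = [math.log(w) - math.log(sd) - 0.5 * ((resid - m) / sd) ** 2
            for w, m, sd in zip(weights, means, sds)]
    top = max(logp)                      # subtract the max for numerical stability
    unnorm = [math.exp(lp - top) for lp in logp]
    total = sum(unnorm)
    return [u / total for u in unnorm]

def sample_indicator(resid, weights, means, sds, rng):
    """Draw one component index from the posterior probabilities."""
    probs = indicator_probs(resid, weights, means, sds)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

# Hypothetical 3-component mixture (illustrative values only).
weights = [0.2, 0.5, 0.3]
means = [-3.0, -0.5, 1.0]
sds = [2.0, 1.2, 0.7]

rng = random.Random(0)
probs = indicator_probs(1.1, weights, means, sds)
draw = sample_indicator(1.1, weights, means, sds, rng)
```

In a full sampler this draw would be repeated for every time point, and the selected component's mean and variance would then define the Gaussian observation equation used by the smoother.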

Stochastic Conditional Duration Model Application

In SCD models, durations are modeled as $y_t = \exp(h_t)\,\epsilon_t$, where $\epsilon_t$ can follow, e.g., a standardized Weibull or Gamma distribution. The key challenge is that the log-likelihood for $h_t$ inherits the exp-exp structure, and the shape parameter of the error distribution (Weibull or Gamma) enters nonlinearly and is unknown.

In the UMS framework, the Weibull-SCD kernel is brought into the exp-exp form

$$p(y_t \mid h_t) \propto \exp\left(\frac{a c x}{2} - \frac{b \exp(c x)}{2}\right),$$

with $a$, $b$, and $c$ determined by the observed duration and the Weibull shape parameter. The mixture adaptation recipes for weights, means, and variances are derived analytically for each observation and each value of the shape parameter. A similar recentering holds for the Gamma-SCD case.

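After augmenting with latent mixture indicators $s_t$, the observation equation is transformed into a linear Gaussian one: conditional on $s_t$, the transformed observation is linear in $h_t$ with Gaussian noise whose mean and variance are those of the selected mixture component. This permits state updates via the simulation smoother.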
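Once the observation equation is linear and Gaussian, the whole state path can be drawn in one block. The sketch below uses forward filtering, backward sampling (FFBS), which is a different algorithm from the Durbin-Koopman smoother named earlier but targets the same joint Gaussian posterior. The observation form `z[t] = h[t] + m[t] + noise` and all names are assumptions made for illustration, not the paper's transformed equation.

```python
import math
import random

def ffbs(z, m, v2, mu, phi, sigma2, rng):
    """Draw h_1..h_n from their joint Gaussian posterior.

    Assumed model (conditional on indicators): z[t] = h[t] + m[t] + N(0, v2[t]),
    h[t+1] = mu + phi * (h[t] - mu) + N(0, sigma2), with |phi| < 1.
    """
    n = len(z)
    pred_mean, pred_var = mu, sigma2 / (1.0 - phi ** 2)   # stationary prior
    filt_mean, filt_var = [], []
    for t in range(n):                                     # Kalman filter
        gain = pred_var / (pred_var + v2[t])
        fm = pred_mean + gain * (z[t] - m[t] - pred_mean)
        fv = (1.0 - gain) * pred_var
        filt_mean.append(fm)
        filt_var.append(fv)
        pred_mean = mu + phi * (fm - mu)
        pred_var = phi ** 2 * fv + sigma2
    h = [0.0] * n
    h[-1] = rng.gauss(filt_mean[-1], math.sqrt(filt_var[-1]))
    for t in range(n - 2, -1, -1):                         # backward sampling
        pv = phi ** 2 * filt_var[t] + sigma2
        back_gain = phi * filt_var[t] / pv
        mean = filt_mean[t] + back_gain * (h[t + 1] - mu - phi * (filt_mean[t] - mu))
        var = filt_var[t] * sigma2 / pv
        h[t] = rng.gauss(mean, math.sqrt(var))
    return h

rng = random.Random(1)
mu, phi, sigma2 = 0.5, 0.95, 0.04
true_h = [mu]
for _ in range(49):
    true_h.append(mu + phi * (true_h[-1] - mu) + rng.gauss(0.0, math.sqrt(sigma2)))
m = [-0.3] * 50                      # component means picked by the indicators
v2 = [1e-8] * 50                     # nearly noise-free observations for the check
z = [h + mt for h, mt in zip(true_h, m)]
draw = ffbs(z, m, v2, mu, phi, sigma2, rng)
```

With nearly noise-free observations, as in this check, the sampled path should sit very close to the simulated states; with realistic component variances the draw spreads around the filtered means instead.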

The overall MCMC updates are blockwise: unknown shape parameters are sampled via random-walk Metropolis-Hastings, while latent states and AR parameters are jointly sampled using a proposal informed by the (mixture-approximated) posterior and corrected by an MH acceptance ratio that accounts for residual approximation error.
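The random-walk Metropolis-Hastings block for a positive shape parameter can be sketched as below. The proposal is made on the log scale so the parameter stays positive, and a Jacobian term corrects for that transformation. The target here is a hypothetical stand-in (a Gamma density), not the SCD conditional posterior, and the function names and step size are illustrative assumptions.

```python
import math
import random

def rw_mh_positive(log_target, init, step, n_iter, rng):
    """Random-walk Metropolis-Hastings for a positive scalar parameter.

    Proposals are made on z = log(theta); the added Jacobian term `z` keeps
    the chain targeting the density of theta itself.
    """
    z = math.log(init)
    logp = log_target(math.exp(z)) + z
    draws, accepted = [], 0
    for _ in range(n_iter):
        z_new = z + step * rng.gauss(0.0, 1.0)
        logp_new = log_target(math.exp(z_new)) + z_new
        # 1 - random() lies in (0, 1], so the log is always defined.
        if math.log(1.0 - rng.random()) < logp_new - logp:
            z, logp = z_new, logp_new
            accepted += 1
        draws.append(math.exp(z))
    return draws, accepted / n_iter

def toy_log_target(theta):
    """Hypothetical stand-in posterior: Gamma(shape=3, rate=1), mean 3."""
    return 2.0 * math.log(theta) - theta

rng = random.Random(42)
draws, acc_rate = rw_mh_positive(toy_log_target, init=1.0, step=0.8,
                                 n_iter=20000, rng=rng)
kept = draws[2000:]                  # discard burn-in
post_mean = sum(kept) / len(kept)
```

In the blockwise scheme described above, `log_target` would be replaced by the log conditional posterior of the shape parameter given the current states and data.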

Empirical Results

The UMS was evaluated using simulated SCD data (Weibull and Gamma noise, various shape parameters) and compared to the standard single-move slice sampler. The results show:

- The UMS yielded posterior mean estimates (with credible intervals) closely matching ground truth, indicating that the mixture approximation does not degrade statistical accuracy.

- Inefficiency factors (IFs) for both the AR(1) state parameters and the unknown shape parameters were small, in contrast to much larger IFs for the slice sampler. For latent states, IFs were an order of magnitude lower (in the reported table for one Weibull-SCD configuration, 2.4 for UMS versus 36.0 for the slice sampler).

- Per-iteration computational time for UMS is somewhat higher due to simulation smoothing; however, the number of effective samples per unit time is far higher under UMS, especially when duration clustering is strong.

Across all scenarios considered, UMS dominated the slice sampler in terms of effective sample size.
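The two efficiency measures used in this comparison can be computed from a chain as sketched below. The inefficiency factor is estimated as one plus twice the sum of sample autocorrelations up to a fixed lag, and the effective sample size is the chain length divided by it. The truncation rule and function names are assumptions; the paper's exact estimator (for example, its choice of window) is not given in this summary.

```python
import random

def inefficiency_factor(chain, max_lag):
    """Estimate IF = 1 + 2 * sum_{k=1..max_lag} rho_k from a scalar chain."""
    n = len(chain)
    mean = sum(chain) / n
    centered = [x - mean for x in chain]
    var = sum(c * c for c in centered) / n
    total = 0.0
    for k in range(1, max_lag + 1):
        cov = sum(centered[i] * centered[i + k] for i in range(n - k)) / n
        total += cov / var
    return 1.0 + 2.0 * total

def effective_sample_size(chain, max_lag):
    """Chain length divided by the estimated inefficiency factor."""
    return len(chain) / inefficiency_factor(chain, max_lag)

rng = random.Random(7)
n = 20000
iid_chain = [rng.gauss(0.0, 1.0) for _ in range(n)]
ar_chain = [0.0]
for _ in range(n - 1):
    ar_chain.append(0.9 * ar_chain[-1] + rng.gauss(0.0, 1.0))

if_iid = inefficiency_factor(iid_chain, max_lag=60)
# For an AR(1) chain with coefficient 0.9 the theoretical IF is (1 + 0.9) / (1 - 0.9) = 19.
if_ar = inefficiency_factor(ar_chain, max_lag=60)
```

An IF near 1 means the draws are almost as informative as independent samples, so a gap such as 2.4 versus 36.0 corresponds to roughly fifteen times more effective samples per iteration.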

Theoretical and Practical Implications

The UMS establishes that the auxiliary mixture methodology, long considered model-specific, can in fact be extended to a general analytic framework by recentering and rescaling a base mixture. This insight enables:

- Efficient MCMC for a wide class of state-space models whose observation kernels are exp-exp, including SV, SCD, logit, Poisson, and even certain max-stable/extreme value models.

- Seamless handling of models with time-varying or unknown shape parameters, with no need for mixture re-optimization at every MCMC step, making previously infeasible inference tasks practical.

- Plug-and-play efficient smoothing in large-scale dynamic models, as the deterministic mixture adaptation imposes negligible overhead.

These advances suggest new directions for time series modeling where non-Gaussian measurement error is present and parameters evolve over time. The framework could potentially be extended further to accommodate richer dynamic latent variable models, nonparametric mixture bases, or joint modeling of multiple processes.

Conclusion

The Unified Mixture Sampler provides a universal, fully analytic, and computationally efficient framework for mixture-based MCMC in general nonlinear, non-Gaussian state-space models with exp-exp likelihoods. By permitting dynamic, deterministic adaptation of mixture components, the UMS addresses limitations of model-specificity and computational burden previously inherent in the auxiliary mixture sampler. Its statistical and computational efficiency is empirically verified in SCD models and is readily generalized to other observation processes. The method offers a robust platform for precise and scalable Bayesian learning in complex dynamic systems.
