- The paper introduces Iso-FM, a method that adds a Jacobian-free regularizer to directly suppress pathwise acceleration in flow matching.

- It demonstrates significant improvements in few-step FID metrics on CIFAR-10 by reducing trajectory curvature without altering core training dynamics.

- The approach offers a plug-and-play strategy that bridges Eulerian and Lagrangian methods, enabling efficient, high-fidelity generation.

Isokinetic Flow Matching for Pathwise Straightening in Generative Flows

Overview and Problem Statement

Isokinetic Flow Matching (Iso-FM) introduces a principled, plug-and-play regularization strategy for Flow Matching (FM) in generative models, explicitly targeting the suppression of pathwise acceleration in the learned marginal velocity field (2604.04491). The motivation is the observation that standard FM, although it constructs straight conditional paths, learns a high-curvature marginal flow: the model cannot see a trajectory's endpoint at inference, and the marginal field is a superposition of many crossing conditional paths. This curvature inflates ODE solver discretization error and severely impedes efficient few-step generation.

Iso-FM addresses this inefficiency by augmenting the FM objective with a Jacobian-free regularizer: a self-guided finite-difference surrogate for the material derivative Dv/Dt, penalizing both temporal and convective acceleration. The result is a marked reduction in trajectory curvature, empirically yielding significant improvements in few-step FID on benchmark generative modeling tasks.

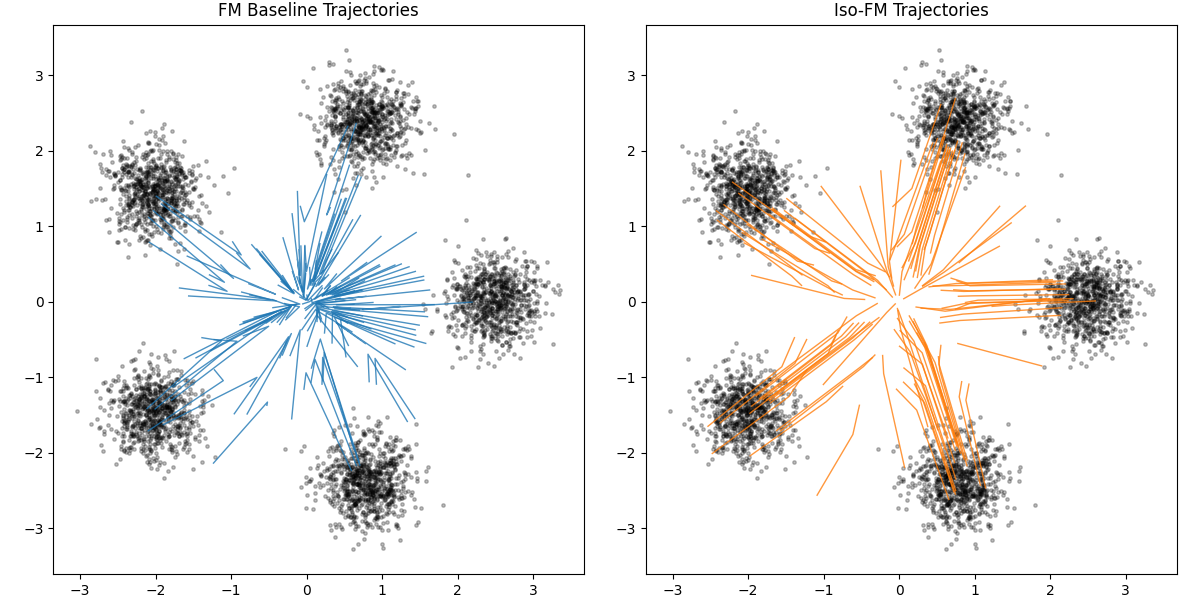

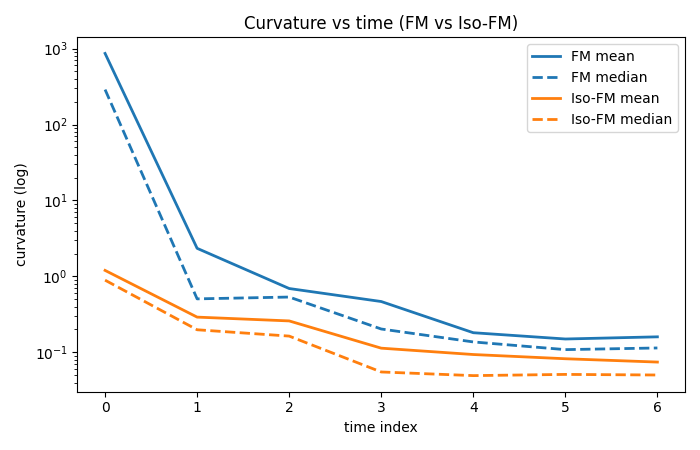

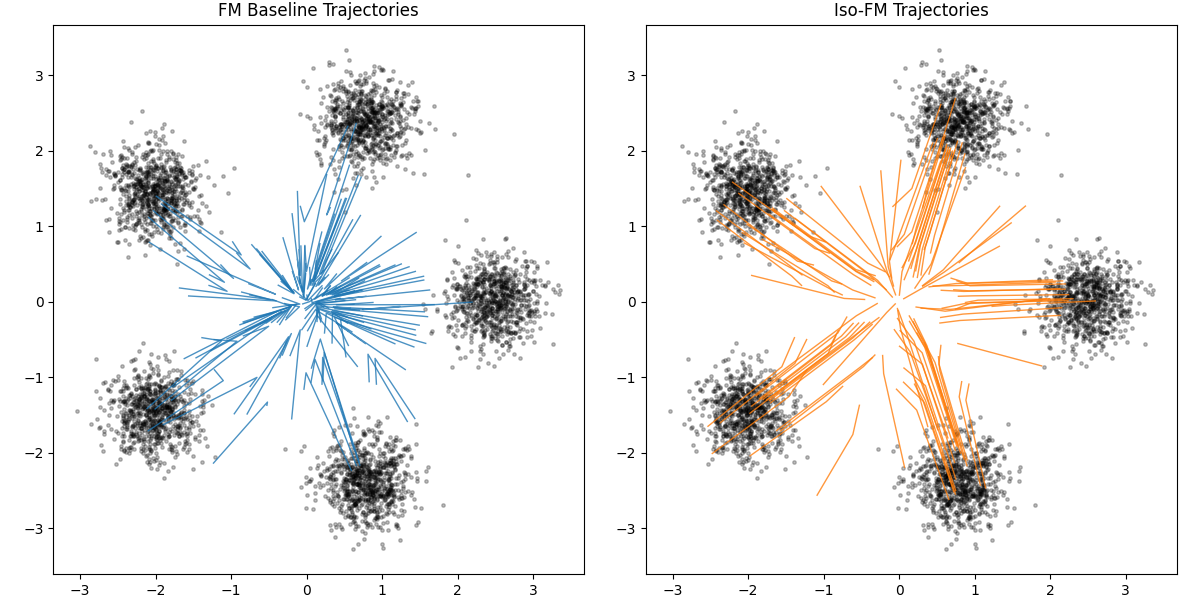

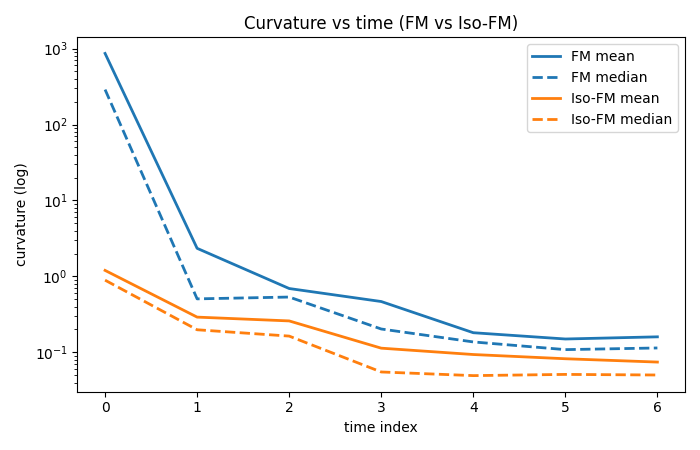

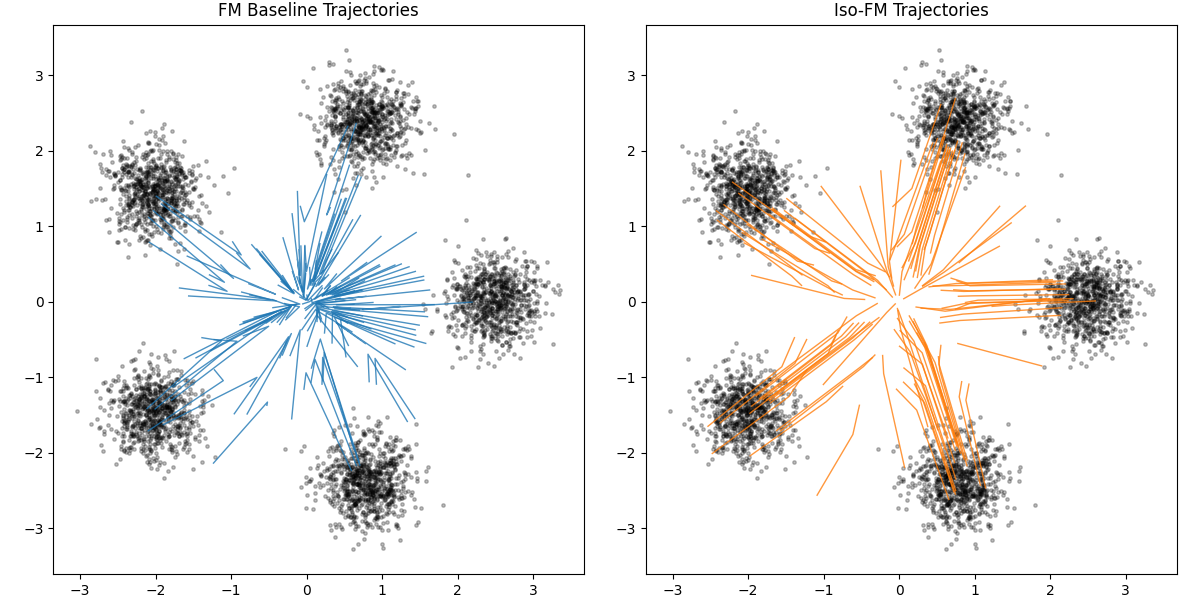

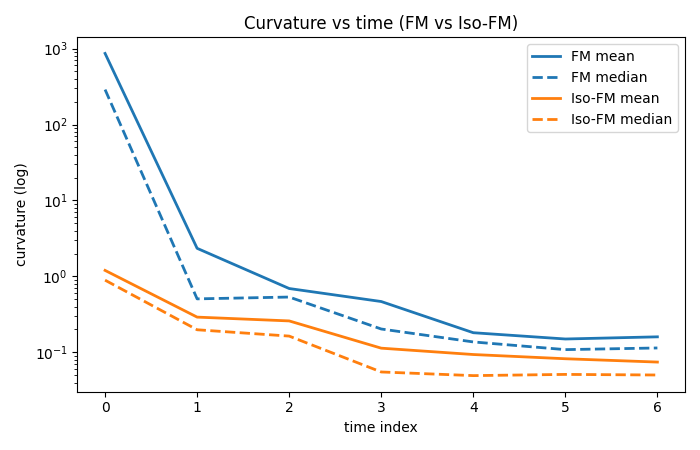

Figure 1: Iso-FM suppresses path bending in low-dimensional transport, yielding straighter generative trajectories and empirically reduced curvature (measured by the material acceleration Dv/Dt).

Theoretical Contributions and Regularization Mechanism

Iso-FM is rooted in a dynamical systems perspective on generative transport. In the Eulerian regime, generative sampling is defined by integrating a neural velocity field v(x,t). The pathwise material derivative,

$$
\frac{Dv}{Dt} = \partial_t v + (v \cdot \nabla_x)\, v,
$$

measures total acceleration (including both explicit temporal change and spatially varying flow).
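As a quick sanity check on this definition (a worked example that is not taken from the paper), consider two time-independent fields in the plane. A uniform translation has zero material derivative, while a rigid rotation has a purely convective, centripetal acceleration even though its explicit time derivative vanishes:

$$
\begin{aligned}
v(x,t) &= c \ \text{(constant)} &&\Rightarrow\quad \frac{Dv}{Dt} = 0, \\
v(x,t) &= (-x_2,\ x_1) &&\Rightarrow\quad \frac{Dv}{Dt} = (v \cdot \nabla_x)\, v = (-x_1,\ -x_2) = -x.
\end{aligned}
$$

The second case shows that a field can be stationary in time and still bend trajectories, which is why the convective term has to be penalized alongside the temporal one.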

Standard FM, by design, regresses the network to an average over conditional endpoint velocities but cannot encode trajectory endpoints during inference, resulting in a marginal field v(x,t) with strong local curvature and high ∥Dv/Dt∥. Accurate, stable integration then requires fine discretization, i.e. a large number of function evaluations (NFE).
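The link between curvature and step count follows from a standard Taylor expansion along a trajectory x(t) satisfying dx/dt = v(x,t). This is a textbook argument sketched here, not a formula quoted from the paper. Because the second time derivative of the trajectory is exactly the material derivative, a single Euler step of size h incurs the local error

$$
x(t+h) = x(t) + h\, v\big(x(t),t\big) + \frac{h^2}{2}\, \frac{Dv}{Dt}\big(x(t),t\big) + O(h^3)
\quad\Longrightarrow\quad
\big\| x(t+h) - x_{\text{Euler}}(t+h) \big\| = \frac{h^2}{2}\, \Big\| \frac{Dv}{Dt} \Big\| + O(h^3).
$$

With h = 1/NFE on a uniform grid, a field with small ∥Dv/Dt∥ tolerates large steps, whereas a strongly curved field needs small h and therefore many evaluations.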

Iso-FM introduces a Jacobian-free regularizer:

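$$
\mathcal{L}_{\text{Iso}} = \left\| v_\theta(x_t, t) - \mathrm{sg}\!\left[ v_\theta(x_{t+\varepsilon},\, t+\varepsilon) \right] \right\|^2,
$$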

where $\mathrm{sg}[\cdot]$ denotes stop-gradient and the lookahead point $x_{t+\varepsilon}$ is generated via a model-guided forward Euler step, $x_{t+\varepsilon} = x_t + \varepsilon\, v_\theta(x_t, t)$. This loss directly penalizes velocity inconsistency along the model's own predicted paths, diminishing both convective and temporal acceleration without auxiliary encoders or computationally expensive second-order derivatives. Theoretical analysis establishes that suppressing Dv/Dt directly reduces one-step Euler solver error (see Equation A.2), aligning training with the goal of practical, coarse-step integration.
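The regularizer can be illustrated with a minimal, framework-free sketch. It is an assumption-laden toy, not the paper's implementation: `euler_lookahead`, `iso_loss`, and the two hand-written fields `straight` and `rotation` are hypothetical names standing in for a trained network, and the code only evaluates the loss value. In an autodiff framework the lookahead velocity would additionally be wrapped in a stop-gradient, which changes the gradients but not the number computed here.

```python
def euler_lookahead(v, x, t, eps):
    """Forward Euler step along the model's own velocity: x + eps * v(x, t)."""
    vx = v(x, t)
    return [xi + eps * vi for xi, vi in zip(x, vx)]


def iso_loss(v, x, t, eps):
    """Squared velocity mismatch between (x, t) and the lookahead point.

    Only the value is computed; during training the lookahead velocity
    would be held constant via a stop-gradient (assumption: handled by
    whatever autodiff framework is used).
    """
    x_next = euler_lookahead(v, x, t, eps)
    v_now = v(x, t)
    v_next = v(x_next, t + eps)  # acts as the fixed target
    return sum((a - b) ** 2 for a, b in zip(v_now, v_next))


# Hypothetical toy fields standing in for a trained network v_theta.
def straight(x, t):
    return [1.0, 0.5]        # constant velocity: zero material acceleration


def rotation(x, t):
    return [-x[1], x[0]]     # rigid rotation: Dv/Dt = -x


x0, t0, eps = [1.0, 0.0], 0.3, 1e-2
loss_straight = iso_loss(straight, x0, t0, eps)
loss_rotation = iso_loss(rotation, x0, t0, eps)
```

For the straight field the loss is exactly zero, while for the rotation it equals ε²∥Dv/Dt∥² at the chosen point (here ∥Dv/Dt∥ = 1), which shows how the finite difference acts as a surrogate for the material derivative without computing any Jacobian.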

Figure 2: Iso-FM yields both straighter low-dimensional transport trajectories and consistently lower trajectory curvature compared to baseline FM, supporting its theoretical objective of acceleration suppression.

Empirical Evaluation: Efficiency and Quality Gains

Iso-FM was evaluated on CIFAR-10 using a DiT-S/2 backbone under both conditional and unconditional training regimes, including settings with and without minibatch optimal transport (OT) source-target pairing. All model comparisons were controlled for architecture, training horizon, and evaluation protocol, measuring FID at NFE = 1, 2, 4.
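To make the NFE budget concrete, the sketch below integrates a velocity field from t = 0 to t = 1 with a fixed number of uniform Euler steps and measures the endpoint error at NFE = 1, 2, 4. The uniform-step Euler sampler and the two toy fields (`straight`, `rotation`) are assumptions chosen for illustration; the paper's sampler and networks are not reproduced here.

```python
import math


def euler_sample(v, x0, nfe):
    """Integrate dx/dt = v(x, t) over [0, 1] with `nfe` uniform Euler steps."""
    x, h = list(x0), 1.0 / nfe
    for k in range(nfe):
        vx = v(x, k * h)                      # one function evaluation per step
        x = [xi + h * vi for xi, vi in zip(x, vx)]
    return x


# Hypothetical toy fields: one perfectly straight, one strongly curved.
def straight(x, t):
    return [2.0, -1.0]


def rotation(x, t):
    return [-x[1], x[0]]


x0 = [1.0, 0.0]
exact_straight = [3.0, -1.0]                      # x0 + 1 * (2, -1)
exact_rotation = [math.cos(1.0), math.sin(1.0)]   # rotate x0 by 1 radian

# Endpoint error for each field at the NFE budgets used in the evaluation.
errors = {
    nfe: (
        math.dist(euler_sample(straight, x0, nfe), exact_straight),
        math.dist(euler_sample(rotation, x0, nfe), exact_rotation),
    )
    for nfe in (1, 2, 4)
}
```

The straight field is integrated exactly even with a single step, while the error on the rotating field shrinks only as the step count grows. This is the behavior that FID at low NFE probes in the image setting.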

Key empirical results:

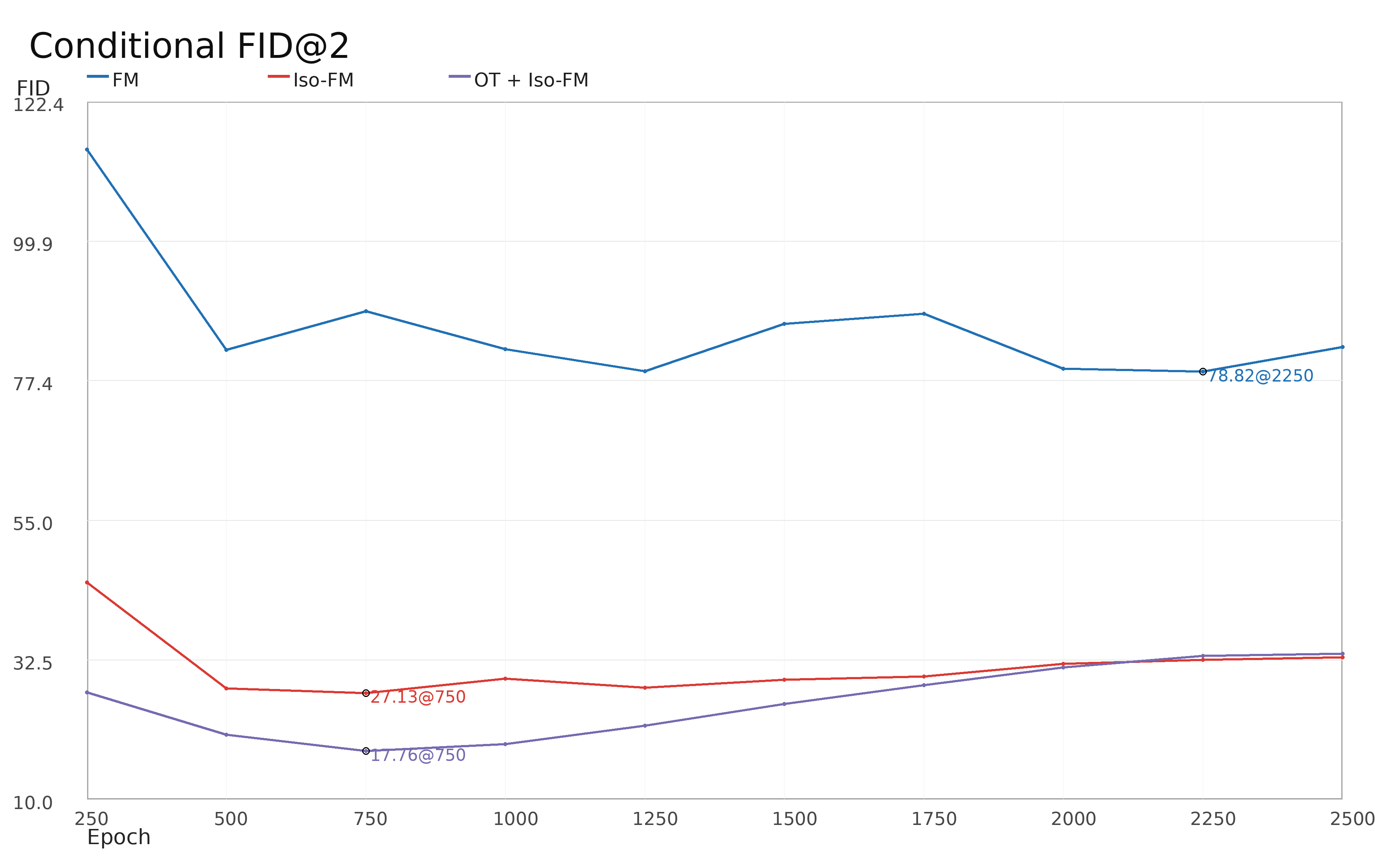

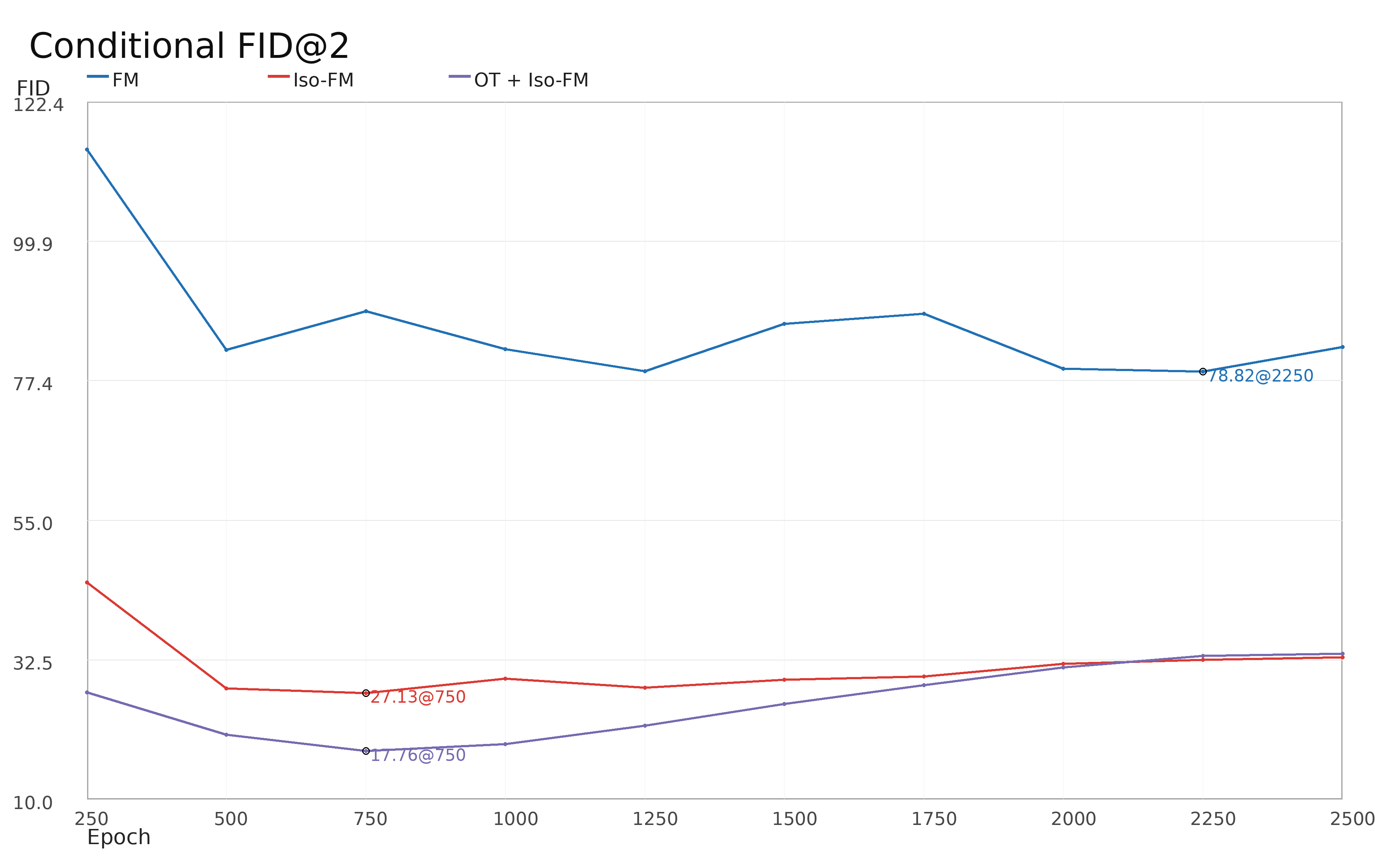

- In conditional, non-OT experiments, Iso-FM reduced FID@2 from 78.82 (FM) to 27.13, a 2.9× relative efficiency gain (a reduction of 65.6%).

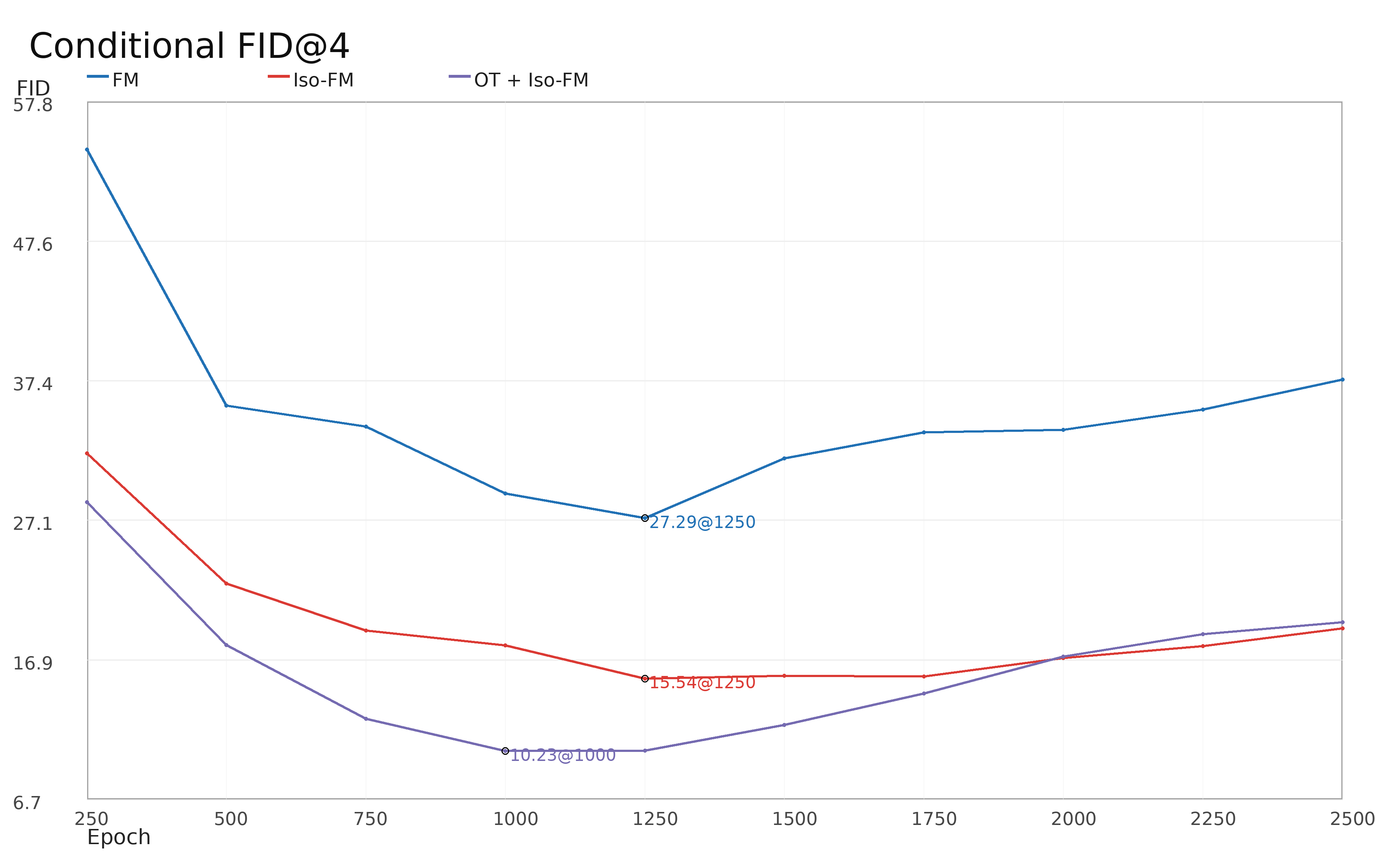

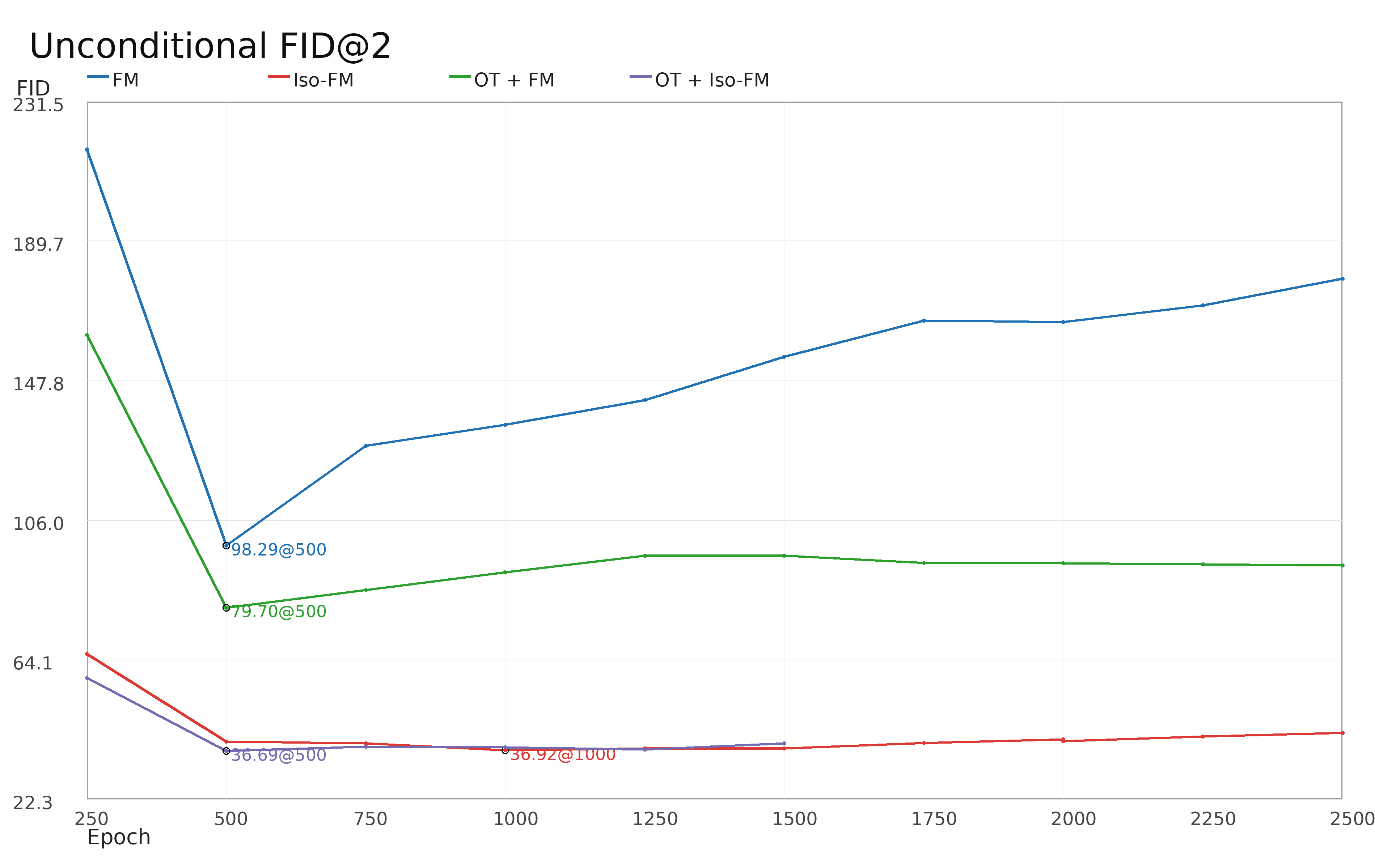

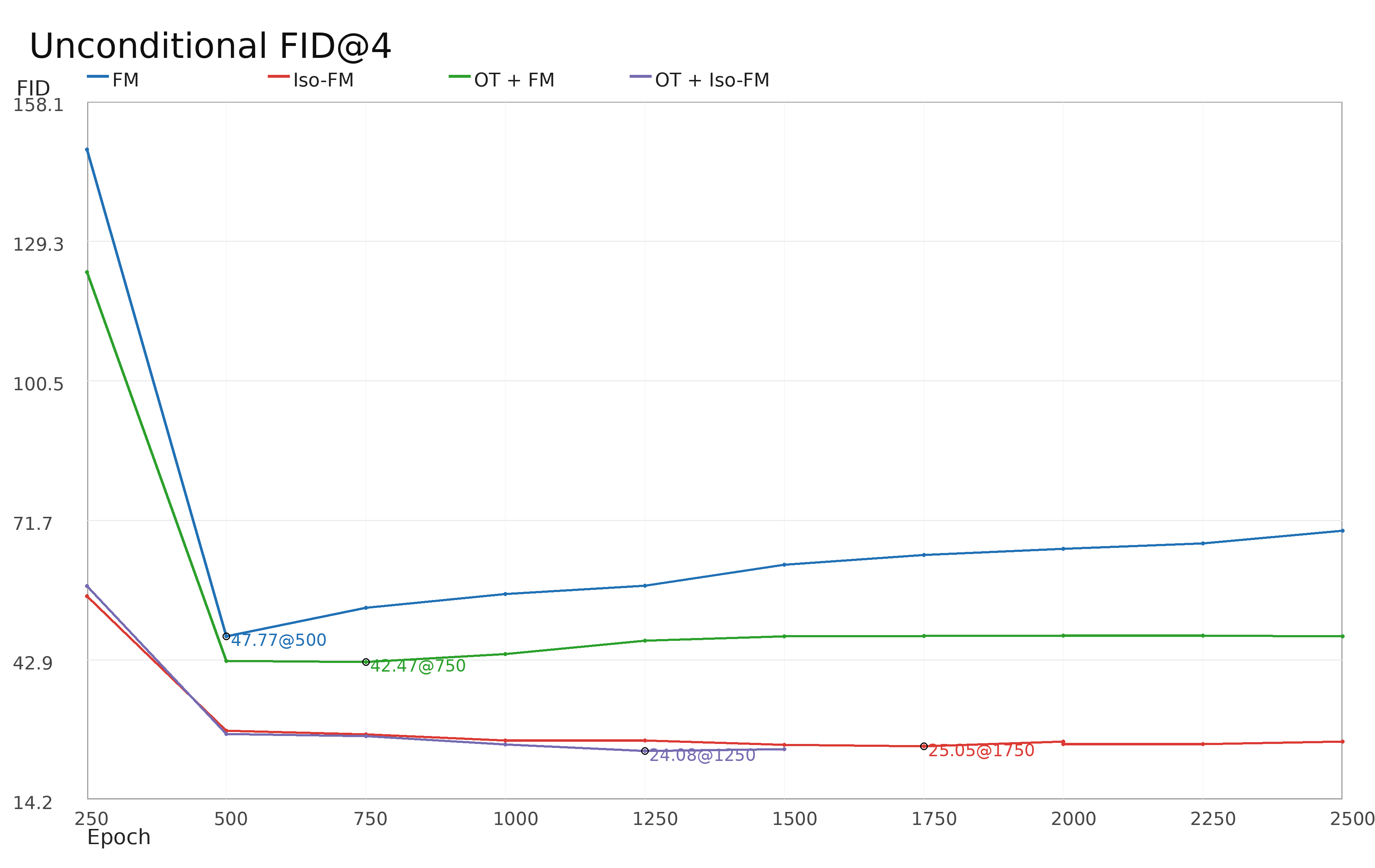

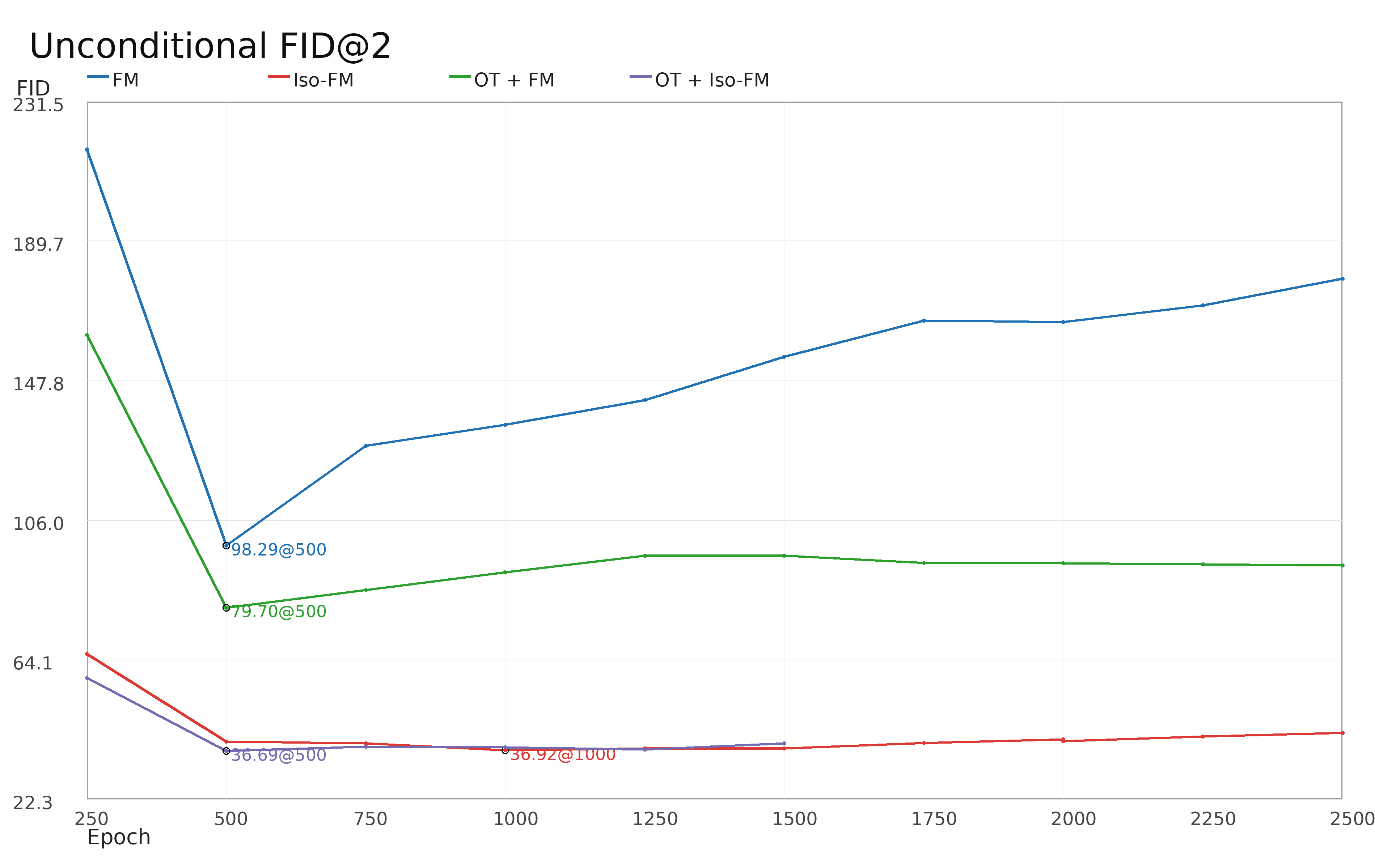

- In unconditional, non-OT settings, FID@2 improved from 98.29 to 36.92 (62.4% reduction).

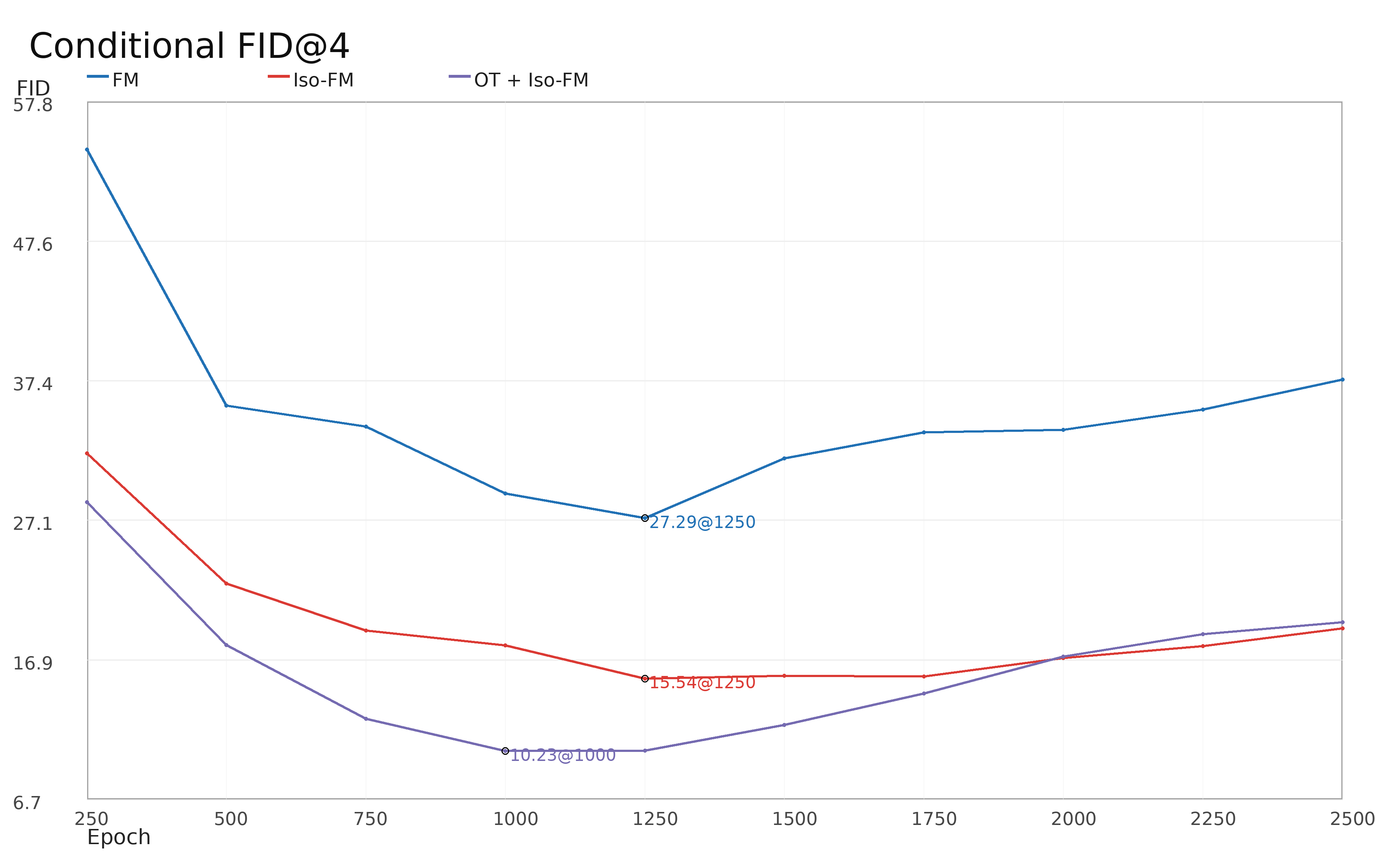

- With OT coupling, Iso-FM further reduced conditional FID@2 to 17.76 and FID@4 to 10.23, with strong improvements also observed in unconditional setups.
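The relative figures quoted in the non-OT bullets can be recomputed directly from the reported FID@2 values. The helper name `relative_gain` is introduced here for illustration only.

```python
def relative_gain(fid_baseline, fid_method):
    """Return (improvement factor, percent reduction) between two FID scores."""
    factor = fid_baseline / fid_method
    reduction = 100.0 * (fid_baseline - fid_method) / fid_baseline
    return factor, reduction


# FID@2 values as reported above for the non-OT settings.
cond_factor, cond_reduction = relative_gain(78.82, 27.13)
uncond_factor, uncond_reduction = relative_gain(98.29, 36.92)

print(f"conditional:   {cond_factor:.1f}x, {cond_reduction:.1f}% lower FID@2")
print(f"unconditional: {uncond_factor:.1f}x, {uncond_reduction:.1f}% lower FID@2")
```

This reproduces the 2.9× factor and 65.6% reduction for the conditional case and the 62.4% reduction for the unconditional case, which corresponds to a factor of roughly 2.7×.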

Figure 3: Conditional CIFAR-10 FID@2 and FID@4 as a function of training epoch, comparing FM, Iso-FM, and OT+Iso-FM.

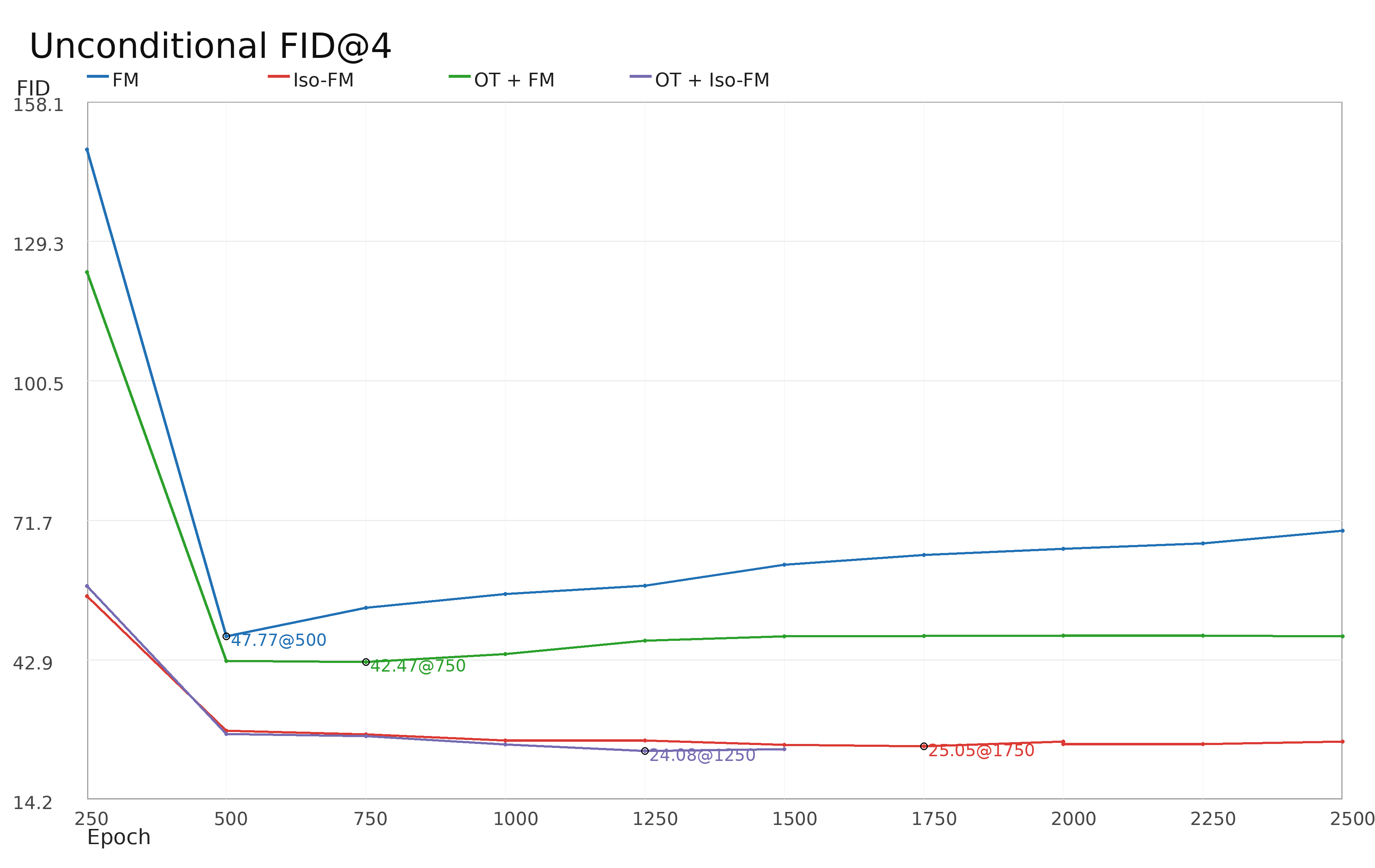

Figure 4: Unconditional CIFAR-10 FID@2 and FID@4 versus epoch, showing consistent efficiency improvements by Iso-FM across settings.

The results indicate that penalizing pathwise acceleration in FM shifts the quality-vs-NFE trade-off on CIFAR-10, substantially improving sample quality for few-step generation. Iso-FM achieves these gains without auxiliary distillation, model scaling, or architectural changes.

Sample Quality and Visual Evidence

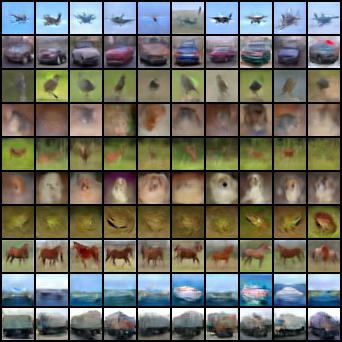

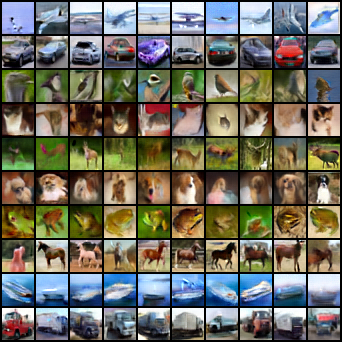

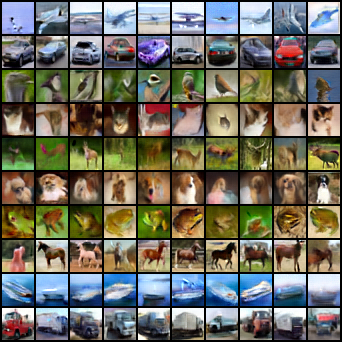

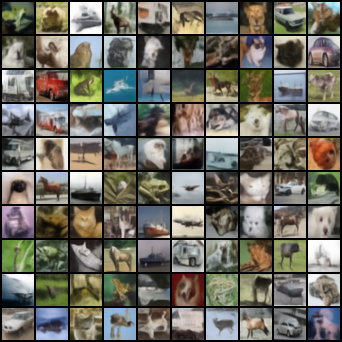

Visual inspection of generated samples corroborates the quantitative findings. Conditional and OT-augmented unconditional samples produced by Iso-FM exhibit enhanced fidelity and diversity compared to FM baselines at matched or fewer inference steps.

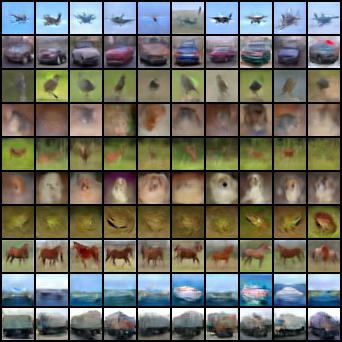

Figure 5: Conditional CIFAR-10 samples: FM (left) vs Iso-FM (right), both at epoch 1250.

Figure 6: Unconditional CIFAR-10 OT samples: OT+FM (left, epoch 500) vs OT+Iso-FM (right, epoch 1250).

Positioning Relative to Prior Work

Iso-FM stands in contrast to existing methods that address trajectory straightening via (i) modified source-target couplings (Lagrangian or OT-based flow straightening [liu2022flowstraightfastlearning, tong2024improving]), or (ii) direct flow map or consistency training (Consistency Models [song2023consistencymodels], MeanFlow [geng2025meanflowsonestepgenerative], Consistency Flow Matching [yang2024consistencyflowmatchingdefining]). Iso-FM is orthogonal: it operates in the Eulerian regime, regularizing the instantaneous velocity field without explicit map learning or additional supervision. This positions Iso-FM as both compositional with other straightening techniques and uniquely lightweight in its integration and computational footprint.

Implications and Future Directions

The principal implication is that appropriately regularized, single-stage Eulerian FM models can achieve a degree of few-step generative efficiency previously thought to require multi-stage Lagrangian distillation or costly path conditioning. In practice, Iso-FM enables reduced sampling compute and faster inference without sacrificing diversity or sample quality.

Theoretically, the work clarifies the geometric limits of straightening: the marginal material acceleration Dv/Dt is fundamentally lower-bounded by the spatial divergence of the conditional velocity variance (see Appendix A.5). As such, perfect global linearization is unattainable on complex, multimodal data, but substantial straightening, and hence sampling acceleration, is possible without mode collapse when acceleration regularization is applied as a soft, auxiliary term.

Iso-FM's plug-in character and Jacobian-free formulation position it for broad adoption, including potential extension to larger backbones, higher-resolution domains, and as a stabilization strategy in hybrid Eulerian-Lagrangian or map-based generative pipelines. Open theoretical challenges remain in quantifying global error bounds, topological consistency, and principled adaptation of regularization scheduling.

Conclusion

Iso-FM establishes pathwise acceleration regularization as a rigorously justified, compute-efficient mechanism for straightening generative flows in flow-based models. By suppressing material acceleration directly in the Eulerian field, Iso-FM delivers substantial improvements in few-step sample quality—demonstrated empirically on CIFAR-10 at parity with or in excess of existing FM- and OT-based baselines—without requiring changes to core training dynamics or model architecture.

This contribution refines the understanding of transport geometry in controlled generative modeling, bridging the gap between Eulerian and Lagrangian frameworks, and sets the stage for future work on hybrid models, adaptive regularization, and theoretical guarantees for fast, high-fidelity deep generative sampling.

References:

- (2604.04491) Isokinetic Flow Matching for Pathwise Straightening of Generative Flows

- See main text for citations to [liu2022flowstraightfastlearning], [song2023consistencymodels], [tong2024improving], [yang2024consistencyflowmatchingdefining], [geng2025meanflowsonestepgenerative], among others.