- The paper introduces the Circuit Harmonic Matrix to encode joint input-parameter harmonics, clarifying architectural effects on model expressivity.

- It develops a harmonic decomposition framework that quantifies coefficient variances, frequency couplings, and the structure of the Quantum Neural Tangent Kernel.

- Empirical studies confirm that circuit design choices influence trainability and spectral bias, providing actionable insights for optimized QML architectures.

Circuit Harmonic Matrices: A Spectral Framework for Quantum Machine Learning

Introduction and Motivation

This work presents a spectral and algebraic framework for the architectural analysis of parametrized quantum circuits (PQCs) in quantum machine learning (QML), targeting the effect of circuit design choices on the expressive capacity and trainability of quantum models. The central advance is the Circuit Harmonic Matrix $C$, which encodes the joint spectral structure induced by the quantum circuit and provides a data-agnostic description of frequency correlations, coefficient statistics, and kernel geometry. The approach decomposes QML models, in particular re-uploading PQCs with commuting phase encoders, into joint input-parameter harmonic expansions. This yields uniform tools for analyzing second-order statistics, gradient-based kernels, and frequency coupling without reference to any dataset.

Harmonic Decomposition and Construction of the Circuit Harmonic Matrix

PQCs used in QML typically consist of an input encoding stage (classical-to-quantum data embedding via parameterized gates) followed by trainable unitaries dependent on variational parameters, terminating in an observable measurement. For a broad and practically relevant class of circuits possessing commuting phase encoders (e.g., $R_P(x)$ with $P$ a Pauli operator), the functional dependence of the quantum model $f(x;\theta)$ on the classical input $x$ is band-limited and can be expressed as a truncated Fourier expansion:

$$f(x;\theta) = \sum_{\omega \in \Omega} a_\omega(\theta)\, e^{i\omega \cdot x}$$

Here, $\Omega$ is a finite input frequency set determined solely by the encoder structure, independent of the variational block.
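As a minimal illustration of the band-limited expansion, the sketch below simulates a hypothetical one-qubit circuit (not one of the paper's ansätze): a trainable `RY(theta)`, then the phase encoder `RZ(x)`, measured in the Pauli-X basis. It samples the model on a uniform grid in $x$ and reads the Fourier coefficients off an FFT. The circuit, the function names, and the grid size are all assumptions chosen for illustration.

```python
import numpy as np

# Pauli matrices used by the toy circuit.
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
I2 = np.eye(2, dtype=complex)

def rot(pauli, angle):
    """Pauli rotation exp(-i * angle * pauli / 2)."""
    return np.cos(angle / 2) * I2 - 1j * np.sin(angle / 2) * pauli

def toy_model(x, theta):
    """<X> after RY(theta) followed by the phase encoder RZ(x), starting from |0>."""
    state = rot(Z, x) @ rot(Y, theta) @ np.array([1, 0], dtype=complex)
    return float(np.real(np.conj(state) @ X @ state))

def input_spectrum(theta, n_grid=8):
    """Return {omega: a_omega(theta)} estimated from samples on a uniform x grid."""
    xs = 2 * np.pi * np.arange(n_grid) / n_grid
    samples = np.array([toy_model(x, theta) for x in xs])
    coeffs = np.fft.fft(samples) / n_grid  # a_omega = mean of f(x) * exp(-i omega x)
    omegas = np.fft.fftfreq(n_grid, d=1 / n_grid).astype(int)
    return dict(zip(omegas.tolist(), coeffs))

spectrum = input_spectrum(theta=0.7)
support = sorted(w for w, a in spectrum.items() if abs(a) > 1e-9)
print(support)  # [-1, 1]
```

For this toy circuit the model reduces to $\sin\theta\,\cos x$, so only $\omega = \pm 1$ carry weight, whatever value $\theta$ takes. The support is fixed by the encoder, while the coefficient values depend on $\theta$.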

Remarkably, for single-use, Pauli-rotation variational circuits with Clifford interleavings, each trainable Fourier coefficient $a_\omega(\theta)$ is itself a finite trigonometric polynomial of the parameters and admits a character expansion:

$$a_\omega(\theta) = \sum_{k \in K} C_{\omega k}\, e^{i k \cdot \theta}$$

with $K$ a finite collection of parameter-frequency harmonics generated by the choice and pattern of trainable gates.

Aggregating all $C_{\omega k}$ yields the Circuit Harmonic Matrix $C = (C_{\omega k})_{\omega \in \Omega,\, k \in K}$ (Figure 1):

Figure 1: YZY entangling ansatz with all-to-all CNOT connectivity and […] encoding, exemplifying the joint input-parameter structure that determines the harmonic support in the circuit harmonic matrix $C$.

$C$ encodes all architectural information relevant to the accessible input and parameter harmonics, integrating contributions from encoder structure, variational gate set, measurement observable, and initial quantum state.
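For small models, the entries of the harmonic matrix can be estimated numerically by sampling the model on a joint grid over input and parameter and taking a two-dimensional FFT. The sketch below does this for a hypothetical toy model $f(x;\theta) = \sin\theta\,\cos x$ with one input and one parameter. The model, the function name `harmonic_matrix`, and the grid size are assumptions for illustration, and the paper's own construction may differ.

```python
import numpy as np

def toy_model(x, theta):
    # Hypothetical toy model with one input and one parameter.
    return np.sin(theta) * np.cos(x)

def harmonic_matrix(model, n_grid=8, max_freq=1):
    """Estimate C[omega, k] for a scalar input x and scalar parameter theta."""
    grid = 2 * np.pi * np.arange(n_grid) / n_grid
    samples = model(grid[:, None], grid[None, :])  # axis 0: x, axis 1: theta
    # Entry [m, n] is the coefficient of exp(i * (m * x + n * theta)).
    coeffs = np.fft.fft2(samples) / n_grid**2
    freqs = np.arange(-max_freq, max_freq + 1)
    # Negative frequencies sit at the end of the FFT output, so negative
    # indices pick them up directly.
    return freqs, coeffs[np.ix_(freqs, freqs)]

freqs, C = harmonic_matrix(toy_model)
# Rows are omega = -1, 0, 1 and columns are k = -1, 0, 1.
print(np.round(C, 3))
```

Here the only nonzero entries are $C_{\pm 1, +1} = -i/4$ and $C_{\pm 1, -1} = +i/4$, which follows from writing $\sin\theta$ and $\cos x$ as complex exponentials. A grid-based estimate like this scales exponentially with the number of inputs and parameters, so it is only a sanity check for very small circuits.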

Architectural Analysis using $C$: Second-order Statistics and Frequency Couplings

Central objects of learning dynamics (variances, covariances, and correlations of trainable features) are explicit algebraic functions of $C$. When the parameters $\theta$ are initialized uniformly at random (each $\theta_j \sim \mathcal{U}[0, 2\pi)$ independently), the characters $e^{ik\cdot\theta}$ are orthonormal under the average over $\theta$, and coefficient statistics factor as:

- Covariance: $\Sigma = C\,\Pi\,C^\dagger$

- Variances: Diagonal entries of $\Sigma$

- Pearson Correlations: $R = D^{-1/2}\,\Sigma\,D^{-1/2}$

where $\Pi$ is a projector excluding the constant mode $k = 0$ and $D = \mathrm{diag}(\Sigma)$ is the diagonal variance matrix. This Gram-matrix structure (Figure 2) makes explicit the cross-frequency couplings imposed by circuit architecture, and reveals nontrivial redundancy and spectral bias phenomena.
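The second-order statistics can be sketched directly from a harmonic matrix. The example below assumes uniformly distributed parameters. It computes the covariance as the Gram matrix of the rows of the harmonic matrix after dropping the constant parameter mode, then compares it with a Monte Carlo estimate. The matrix `C` is the harmonic matrix of a hypothetical toy model $f(x;\theta) = \sin\theta\,\cos x$, and the function name is an assumption, not the paper's code.

```python
import numpy as np

# Toy harmonic matrix of f(x; theta) = sin(theta) * cos(x) (illustrative only).
# Rows: omega = -1, 0, 1.  Columns: k = -1, 0, 1.
C = np.array([[0.25j, 0, -0.25j],
              [0,     0,  0],
              [0.25j, 0, -0.25j]])
k_values = np.array([-1, 0, 1])

def coefficient_statistics(C, k_values):
    """Covariance, variances and Pearson correlations of a_omega for uniform theta."""
    proj = np.diag((k_values != 0).astype(float))  # drops the constant mode k = 0
    cov = C @ proj @ C.conj().T
    var = np.real(np.diag(cov)).clip(min=0)
    # Inverse standard deviations; zero-variance coefficients are left at 0.
    scale = np.zeros_like(var)
    np.divide(1.0, np.sqrt(var), out=scale, where=var > 1e-12)
    corr = scale[:, None] * cov * scale[None, :]
    return cov, var, corr

cov, var, corr = coefficient_statistics(C, k_values)

# Monte Carlo cross-check with theta ~ Uniform[0, 2*pi).
rng = np.random.default_rng(0)
thetas = rng.uniform(0, 2 * np.pi, size=20_000)
a = C @ np.exp(1j * np.outer(k_values, thetas))  # a[omega, sample]
a_centered = a - a.mean(axis=1, keepdims=True)
cov_mc = a_centered @ a_centered.conj().T / thetas.size

print(var)                 # variances of a_{-1}, a_0, a_{+1}
print(np.abs(corr[0, 2]))  # correlation between a_{-1} and a_{+1}
```

In this toy case $a_{+1}(\theta) = a_{-1}(\theta) = \tfrac{1}{2}\sin\theta$, so both variances equal $1/8$ and the two coefficients are perfectly correlated. This is a simple instance of encoder-induced redundancy.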

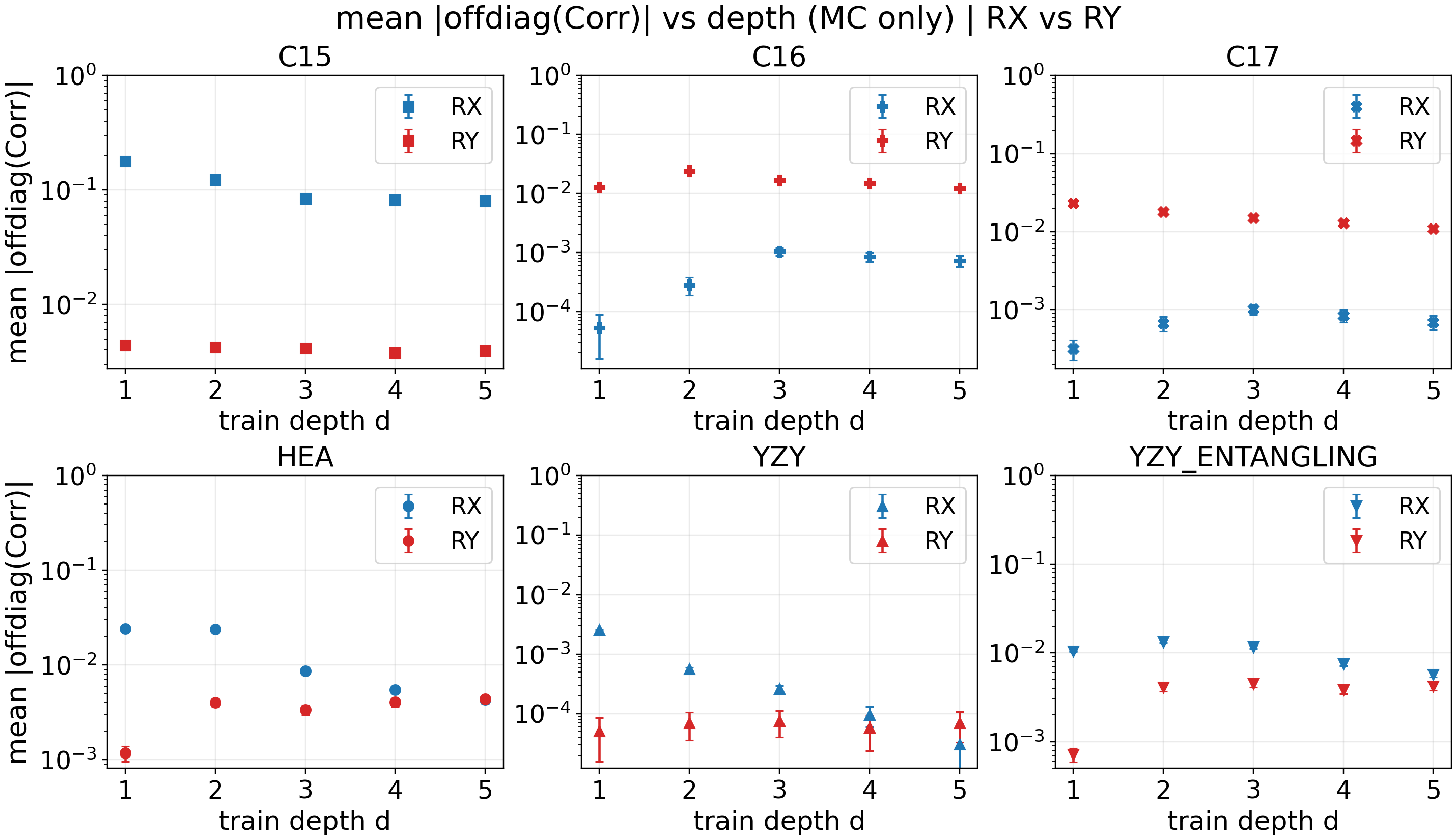

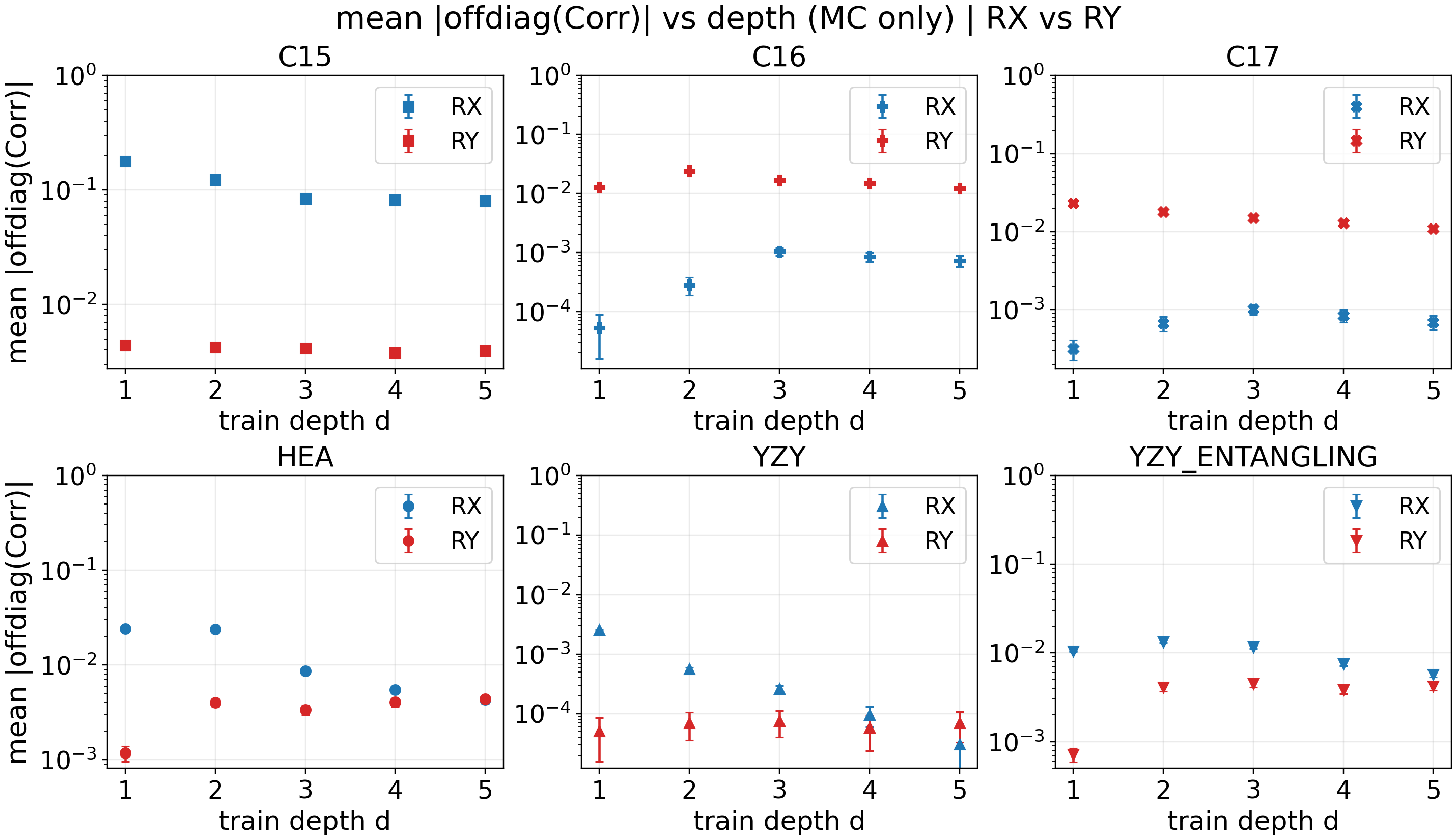

Figure 2: Mean off-diagonal correlation as a function of circuit depth for multiple architectures, highlighting the persistence or decay of architectural frequency correlations as depth increases.

The explicit path-based decomposition of $C$ clarifies how encoder-induced redundancy and trainable block design determine the magnitude and structure of these correlations, which has direct implications for PQC expressivity and spectral bias as discussed numerically below.
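A summary statistic of the kind plotted in Figure 2 can be sketched as follows. The precise definition used in the paper is not given here, so this version assumes the mean is taken over the absolute values of all off-diagonal entries of a correlation matrix. The function name is hypothetical.

```python
import numpy as np

def mean_offdiag_correlation(corr):
    """Average |corr[i, j]| over all pairs i != j (assumed definition)."""
    corr = np.asarray(corr)
    mask = ~np.eye(corr.shape[0], dtype=bool)  # True everywhere except the diagonal
    return float(np.abs(corr[mask]).mean())

fully_redundant = np.ones((3, 3))  # every pair of coefficients perfectly correlated
decoupled = np.eye(3)              # no cross-frequency coupling

print(mean_offdiag_correlation(fully_redundant))  # 1.0
print(mean_offdiag_correlation(decoupled))        # 0.0
```

Tracking this scalar as depth grows gives a compact way to compare architectures, at the cost of hiding which frequency pairs are responsible for the coupling.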

Quantum Neural Tangent Kernel and Kernel Geometry from $C$

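Gradient-based learning dynamics are determined by the Jacobian of the model output with respect to the parameters and by its Gram matrix, the Quantum Neural Tangent Kernel (QNTK). For a finite input set $\{x_i\}$, the QNTK is given by

$$K_\theta = J_\theta J_\theta^\top, \qquad (J_\theta)_{ij} = \frac{\partial f(x_i;\theta)}{\partial \theta_j}$$

Exploiting the harmonic decomposition, the parameter-averaged QNTK is shown to factor as:

$$\mathbb{E}_\theta[K_\theta] = \Phi\,\bar{K}\,\Phi^\dagger, \qquad \bar{K} = C\,W\,C^\dagger, \qquad \Phi_{i\omega} = e^{i\omega \cdot x_i}$$

with $W$ the diagonal matrix of squared norms $\lVert k \rVert_2^2$ of the parameter harmonics, which for harmonics with entries $k_j \in \{-1, 0, 1\}$ equals their Hamming weight (Figure 3). This indicates that both the functional directions accessible under gradient descent and their associated training bias are determined entirely by the architecture-level matrix $C$ and the trainable gate set.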
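The factorization can be checked on a small example. The sketch below assumes the averaged kernel takes the form $\Phi\, C\, W\, C^\dagger\, \Phi^\dagger$, which follows from differentiating the harmonic expansion term by term and averaging over uniform parameters. Here $\Phi_{i\omega} = e^{i\omega \cdot x_i}$ and $W$ is diagonal with entries $\lVert k \rVert_2^2$. The matrix `C` is the harmonic matrix of a hypothetical toy model $f(x;\theta) = \sin\theta\,\cos x$, and all names are illustrative.

```python
import numpy as np

# Toy harmonic matrix of f(x; theta) = sin(theta) * cos(x) (illustrative only).
# Rows: omega = -1, 0, 1.  Columns: harmonics k = (-1,), (0,), (1,).
C = np.array([[0.25j, 0, -0.25j],
              [0,     0,  0],
              [0.25j, 0, -0.25j]])
omegas = np.array([-1, 0, 1])
harmonics = np.array([[-1], [0], [1]])  # one row per harmonic, one column per parameter

def averaged_qntk(C, omegas, harmonics, xs):
    """Parameter-averaged QNTK on inputs xs, assuming uniform theta."""
    phi = np.exp(1j * np.outer(xs, omegas))    # design matrix Phi[i, omega]
    W = np.diag((harmonics ** 2).sum(axis=1))  # squared norm of each harmonic
    harmonic_kernel = C @ W @ C.conj().T       # kernel in frequency space
    return np.real(phi @ harmonic_kernel @ phi.conj().T)

xs = np.array([0.0, 0.5, 2.0])
K_avg = averaged_qntk(C, omegas, harmonics, xs)

# Closed form for the toy model: d f / d theta = cos(theta) * cos(x),
# and the average of cos(theta)**2 over uniform theta is 1/2.
K_exact = 0.5 * np.outer(np.cos(xs), np.cos(xs))
print(np.allclose(K_avg, K_exact))  # True
```

The frequency-space factor `harmonic_kernel` depends only on the architecture. The inputs enter solely through the design matrix `phi`.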

Figure 3: Averaged harmonic QNTK for the YZY circuit with entanglers, […] encoding, and varying depth, revealing the congruence between the QNTK structure and the harmonic correlation matrix induced by $C$.

Projection to the data-space QNTK is mediated by the input design matrix, connecting architectural and data-dependent aspects.

Numerical Study: Variance, Correlation, and QNTK Structure across Circuit Classes

Empirical validation on standard circuit ansätze (YZY, Circuit 16, Circuit 17, with various encoding schemes) confirms precise agreement between $C$-derived predictions and Monte Carlo estimates for variances, correlations, and QNTKs under randomized parameters. Key observations include:

- The frequency spectrum of variances and the nontrivial correlation structure are governed jointly by encoder redundancy and by the entangling and block-depth properties of the circuit (see Figure 4 for the YZY architecture with no entangling).

Figure 4: YZY circuit without entangling, showing restricted accessible frequency spectrum due to absence of parameter mixing.

- Correlation matrices reveal architecture-specific cross-frequency couplings which can persist or diminish as circuit depth and entanglement are varied (Figures 5, 6).

Figure 5: Circuit 16 ([…] encoding) schematic, illustrating a trainable block with restricted gate pattern and its effect on spectral redundancy.

Figure 6: Circuit 17 ([…] encoding) schematic, highlighting further architectural constraints and their impact on the expressibility and frequency coupling.

- The magnitude and decay of off-diagonal correlations, as well as the eventual diagonalization of QNTKs in deep/entangling architectures, correspond closely with theoretical predictions for the approach to 2-design-like behavior (see also Figure 2).

Implications and Theoretical Significance

The formalism provides an analytic bridge between circuit structure and the geometry of the QML hypothesis class, separating intrinsic architectural effects from dataset-dependent phenomena. Some key implications are:

- Trainability Diagnostics: $C$-based metrics directly predict barren plateaux, frequency inaccessibility, and regimes where trainable directions vanish, generalizing recent results on PQC expressibility and spectral bias (2604.04292, Mhiri et al., 2024).

- Kernel Geometry: The accessible kernel space is governed by the architecture via $C$, meaning that both lazy training and feature learning regimes are inherently restricted by circuit design, independently of the data.

- Inductive Bias: By controlling $C$ through specific encoder and entangling patterns, one can tailor the spectral and cross-frequency bias of the QML model, providing a principled approach to architecture selection and initialization.

Limitations and Future Directions

The paper highlights several theoretical and practical caveats. The framework assumes commuting phase encoders and single-use, independent parameter architectures. Extensions to non-commuting encoders, parameter sharing (higher harmonics in $\theta$), and the inclusion of hardware noise or more general ansätze will require further generalization. Higher-order statistics (beyond covariance and kernel structure), as well as the dynamics of trained (non-uniform) parameter distributions, remain open frontiers.

Advancing these directions could bring sharper theoretical guarantees for QML trainability and function approximation, support principled circuit synthesis for application-specific inductive biasing, and inform dequantization conditions or classical surrogate constructions for quantum models.

Conclusion

This work introduces the circuit harmonic matrix $C$ as a unifying, architecture-resolved object encoding all accessible joint input and parameter harmonics in PQCs for QML. $C$ enables explicit pre-training computation of coefficient variances, correlations, and the geometry of quantum neural tangent kernels, providing strong theoretical and numerical support for architecture-driven analysis of expressivity, spectral bias, and trainability. This framework not only clarifies the effect of circuit structure on QML models but also offers a foundation for systematic architecture design and comparative analysis, thereby advancing both the theoretical and practical development of quantum machine learning.