- The paper introduces a novel adversarial attack, Spike-PTSD, which leverages PTSD-inspired spike scaling to disrupt SNN functionality.

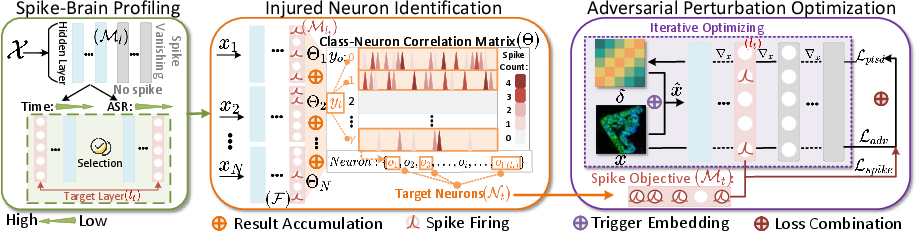

- It employs a three-phase approach (spike-brain profiling, injured neuron identification, and adversarial perturbation optimization) and reports attack success rates above 99% across multiple datasets.

- Empirical results demonstrate that minimal, targeted perturbations can hijack critical neurons, exposing vulnerabilities in both hardware and algorithmic defenses of SNNs.

Bio-Plausible Adversarial Attacks on SNNs: The Spike-PTSD Framework

Introduction

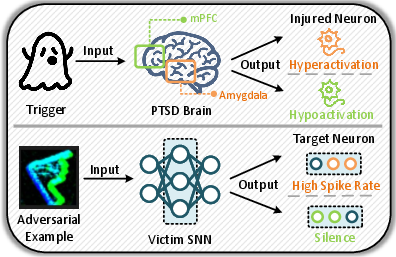

The adoption of Spiking Neural Networks (SNNs) in energy-critical and embedded applications raises urgent security concerns. Traditional Adversarial Example Attacks (AEAs) have had limited success in destabilizing SNNs because they rely on differentiability and ANN-inspired mechanisms, which fail to exploit intrinsic neurodynamics. "Spike-PTSD: A Bio-Plausible Adversarial Example Attack on Spiking Neural Networks via PTSD-Inspired Spike Scaling" (2604.01750) bridges neuroscience and adversarial learning by translating the abnormal neuronal firing observed in PTSD-affected biological brains into an optimized, bio-plausible adversarial attack mechanism for SNNs.

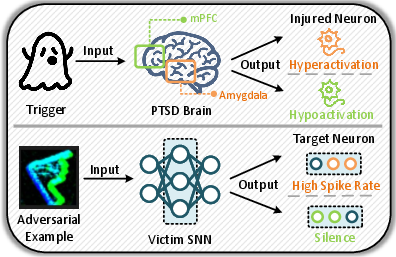

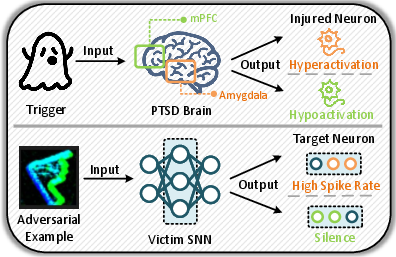

Figure 1: Motivation behind Spike-PTSD and analogies between the post-traumatic stress response of the PTSD-affected brain and AEAs against SNNs.

Framework Overview and Methodological Innovations

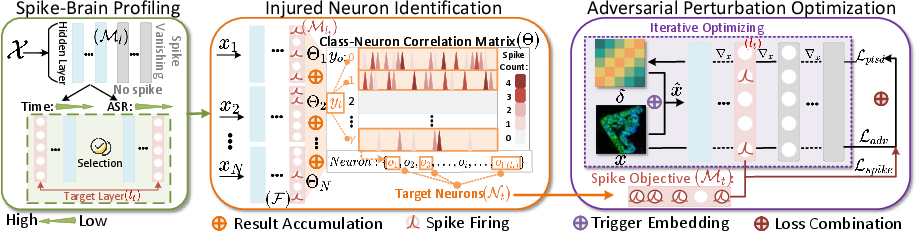

The proposed Spike-PTSD attack pipeline is structured around three principal modules: spike-brain profiling (SBP), injured neuron identification (INI), and adversarial perturbation optimization (APO). The method directly incorporates knowledge from computational neuroscience, notably the observation of hyper- and hypoactivation patterns in PTSD, to select critical layers and neurons for adversarial manipulation.

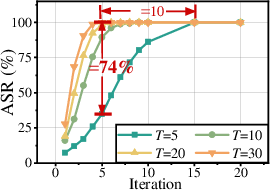

Figure 2: Overview of the Spike-PTSD framework and its three key modules: (i) determine the target layer (lt) during spike-brain profiling, based on computational time or ASR; (ii) select the target class (yt) and neurons (Nt) in lt according to spike sensitivity and fired spikes under the targeted attack setting (the orange region); (iii) set the spike objective of Nt and update the perturbation (δ) during the combined optimization process (the purple region) to generate AEs (x̂).

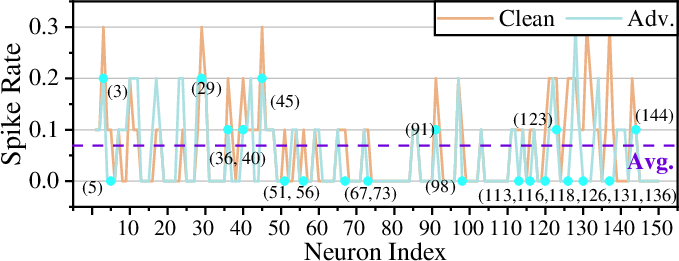

Spike-brain profiling (SBP) first isolates decision-critical layers in SNNs by analyzing spatiotemporal spike maps and pruning layers that exhibit vanishing activity. INI then uses the selected layer to extract neuron subsets with either high class-specific correlation (targeted, analogous to amygdala hyperactivation) or globally dominant firing rates (untargeted, analogous to mPFC hypoactivation).
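The profiling and selection steps can be illustrated with a minimal sketch. It is not the paper's implementation: the function names, the `min_rate` threshold, the above-average rule for untargeted selection, and the rate-difference score standing in for "class-specific correlation" are all assumptions made for illustration.

```python
from typing import List, Sequence


def firing_rates(spikes: Sequence[Sequence[int]]) -> List[float]:
    """Mean firing rate per neuron; spikes[t][n] is 0 or 1 at timestep t."""
    timesteps = len(spikes)
    return [sum(column) / timesteps for column in zip(*spikes)]


def active_layers(layer_rates: Sequence[Sequence[float]],
                  min_rate: float = 0.01) -> List[int]:
    """Indices of layers whose average rate is not vanishing (assumed threshold)."""
    return [
        idx for idx, rates in enumerate(layer_rates)
        if sum(rates) / len(rates) >= min_rate
    ]


def select_untargeted(rates: Sequence[float]) -> List[int]:
    """Dominant neurons: those firing above the layer average (assumed rule)."""
    avg = sum(rates) / len(rates)
    return [i for i, r in enumerate(rates) if r > avg]


def select_targeted(target_rates: Sequence[float],
                    other_rates: Sequence[float], k: int) -> List[int]:
    """Top-k neurons firing more on target-class inputs than on other inputs.

    The rate difference is a hypothetical proxy for class-specific correlation.
    """
    scores = [t - o for t, o in zip(target_rates, other_rates)]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


# Toy spike train: 4 timesteps, 3 neurons.
spikes = [[1, 0, 1], [1, 0, 0], [1, 0, 1], [1, 0, 0]]
rates = firing_rates(spikes)
```

In this toy example, `rates` holds one mean rate per neuron, and `select_untargeted(rates)` returns the neurons a suppression objective would act on.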

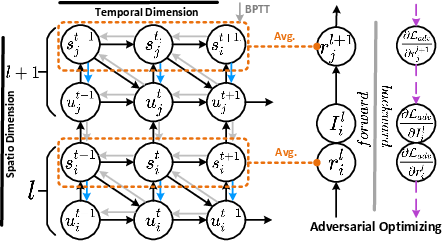

For optimization, Spike-PTSD augments classical adversarial losses with a mean-squared-error-based spike scaling term, enforcing either amplification or suppression according to attack polarity. The final loss is composed as a weighted sum of cross-entropy adversarial loss and spike modulation loss, tuned to maximally destabilize the victim SNN's functional dynamics.
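A minimal sketch of such a combined objective follows, written in plain Python for readability. The weight `lam`, the scaling factor `scale`, the choice of a scaled clean rate as the spike objective, and the sign convention for the untargeted case are assumptions, not values or definitions taken from the paper.

```python
import math
from typing import Optional, Sequence


def cross_entropy(logits: Sequence[float], label: int) -> float:
    """Numerically stable softmax cross-entropy for a single sample."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[label]


def spike_scaling_loss(rates: Sequence[float], clean_rates: Sequence[float],
                       neuron_ids: Sequence[int], scale: float) -> float:
    """MSE between current rates of selected neurons and scaled clean rates.

    scale > 1 asks for amplification, scale < 1 for suppression (assumed form).
    Targets are clipped to [0, 1] because a firing rate cannot exceed 1.
    """
    targets = [min(1.0, max(0.0, scale * clean_rates[i])) for i in neuron_ids]
    squared = [(rates[i] - t) ** 2 for i, t in zip(neuron_ids, targets)]
    return sum(squared) / len(squared)


def combined_loss(logits: Sequence[float], rates: Sequence[float],
                  clean_rates: Sequence[float], neuron_ids: Sequence[int],
                  true_label: int, target: Optional[int] = None,
                  lam: float = 1.0) -> float:
    """Loss the attacker would minimize with respect to the perturbation."""
    if target is not None:
        # Targeted: pull the prediction toward `target`, amplify selected neurons.
        adv = cross_entropy(logits, target)
        scale = 2.0  # hypothetical amplification factor
    else:
        # Untargeted: push away from the true label, silence dominant neurons.
        adv = -cross_entropy(logits, true_label)
        scale = 0.0  # hypothetical suppression target
    return adv + lam * spike_scaling_loss(rates, clean_rates, neuron_ids, scale)
```

Setting `lam` to a large value makes the spike term dominate, which loosely mirrors the ablation setting in which the spike scaling objective nearly replaces the adversarial term.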

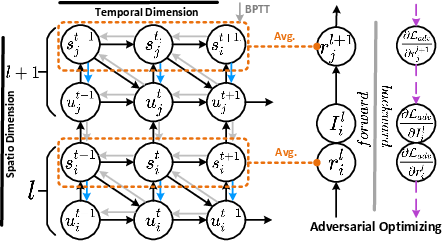

Figure 3: Forward and backward processes with surrogate gradients in direct SNN training and AEAs. s and n denote spike and n-th layer, respectively.
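To make the role of surrogate gradients concrete, the sketch below pairs a non-differentiable spike function in the forward pass with a smooth stand-in derivative in the backward pass. The leaky integrate-and-fire dynamics, the hard reset, and the rectangular surrogate are common choices assumed here for illustration; the source does not specify which neuron model or surrogate the paper uses.

```python
from typing import Tuple


def lif_step(v: float, x: float, threshold: float = 1.0,
             decay: float = 0.5) -> Tuple[float, float, float]:
    """One leaky integrate-and-fire step.

    Returns (spike, membrane potential after reset, potential before reset).
    """
    v_pre = decay * v + x                         # leak, then integrate input
    spike = 1.0 if v_pre >= threshold else 0.0    # Heaviside: zero gradient almost everywhere
    v_post = v_pre * (1.0 - spike)                # hard reset to 0 after a spike
    return spike, v_post, v_pre


def surrogate_grad(v_pre: float, threshold: float = 1.0,
                   width: float = 1.0) -> float:
    """Rectangular surrogate for d(spike)/d(v_pre), used only in the backward pass."""
    return 1.0 / width if abs(v_pre - threshold) < width / 2 else 0.0


spike, v_post, v_pre = lif_step(v=0.0, x=1.2)
grad = surrogate_grad(v_pre)
```

Because the surrogate is nonzero only near the threshold, gradients with respect to the input flow mainly through neurons whose membrane potential is close to firing.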

Spike Dynamics under Adversarial Manipulation

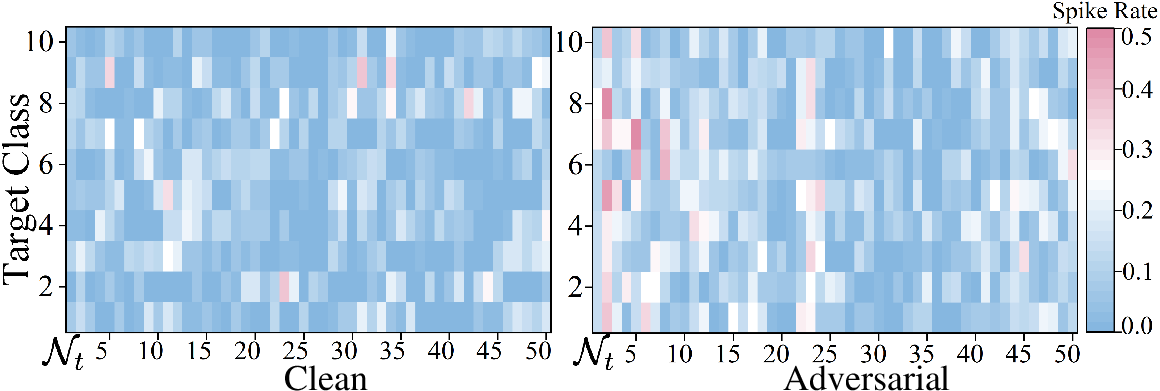

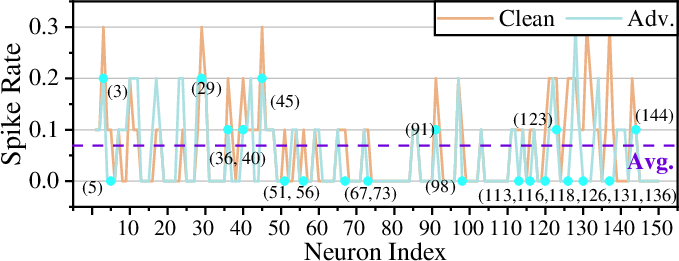

Spike-PTSD provides robust mechanisms to destabilize critical neural dynamics in SNNs. For targeted attacks, aggressive amplification of spike rates for class-relevant neurons causes erroneous class activation. Conversely, untargeted attacks suppress spike rates of dominant neurons, leading to global inference failure.

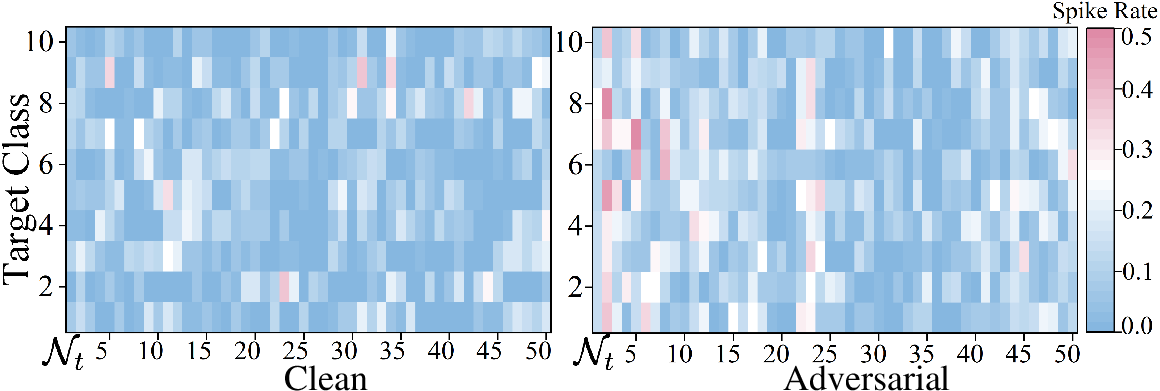

Empirical results demonstrate pronounced shifts in the spike rates of the targeted neurons after perturbation, confirming that minor input perturbations can push functional neurons into pathological states analogous to PTSD-induced hyper/hypoactivity in biological circuits.

Figure 4: Spike rate changes under targeted attack (target class yt) with spike amplification objectives on CIFAR10-DVS (VGGDVS). The data is taken from the first 50 neurons in lt.

Figure 5: Spike rate changes under untargeted Spike-PTSD with spike suppression objectives on CIFAR10-DVS (VGGDVS). Neurons with spike rates above the Avg. on the clean line are Nt.

Evaluation and Quantitative Results

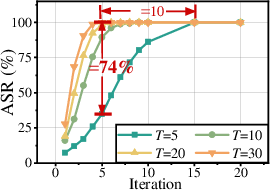

Spike-PTSD is evaluated across six datasets (CIFAR10, CIFAR100, SVHN, N-MNIST, CIFAR10-DVS, DVS128Gesture), three encoding schemes (direct, Poisson, frame-based), and four SNN architectures (VGG16, ResNet18, VGGDVS, ResNet19DVS). The experimental protocol assumes a strong white-box threat model, with full access to the model and its spike traces.
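For readers unfamiliar with the encoding schemes, the sketch below shows the usual meaning of direct and Poisson encoding for a static input with intensities in [0, 1]. The function names and the seeding are illustrative assumptions; frame-based encoding of event streams is not shown.

```python
import random
from typing import List, Sequence


def direct_encode(pixels: Sequence[float], timesteps: int) -> List[List[float]]:
    """Direct encoding: the analog input is repeated at every timestep."""
    return [list(pixels) for _ in range(timesteps)]


def poisson_encode(pixels: Sequence[float], timesteps: int,
                   seed: int = 0) -> List[List[int]]:
    """Poisson (rate) encoding: each pixel spikes with probability equal to its intensity."""
    rng = random.Random(seed)  # seeded so the example is reproducible
    return [
        [1 if rng.random() < p else 0 for p in pixels]
        for _ in range(timesteps)
    ]


frames = poisson_encode([0.0, 1.0, 0.5], timesteps=8)
```

Under direct encoding the perturbed input reaches the first layer unchanged at each timestep, whereas under Poisson encoding the perturbation only shifts spike probabilities.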

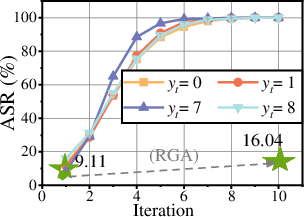

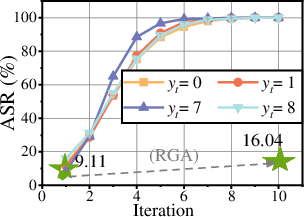

The method consistently achieves Attack Success Rates (ASR) exceeding 99% across both targeted and untargeted settings, even where prior SOTA AEA algorithms (e.g., RGA, HART, PDSG) are unable to exceed single-digit ASR under targeted constraints. Importantly, high ASR is attained for both static and neuromorphic (event-based) datasets, demonstrating attack generality.
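As a reference for how such numbers are typically computed, the following sketch evaluates ASR from true labels and predictions on adversarial examples. Conventions differ between papers; excluding samples whose true label already equals the target class is an assumption here, not a detail confirmed by the source.

```python
from typing import Optional, Sequence


def attack_success_rate(true_labels: Sequence[int], adv_preds: Sequence[int],
                        target: Optional[int] = None) -> float:
    """Fraction of adversarial examples that fool the model.

    Untargeted: success when the prediction differs from the true label.
    Targeted: success when the prediction equals `target`; samples whose true
    label is already `target` are skipped (assumed convention).
    """
    if target is None:
        outcomes = [pred != label for label, pred in zip(true_labels, adv_preds)]
    else:
        outcomes = [
            pred == target
            for label, pred in zip(true_labels, adv_preds)
            if label != target
        ]
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


labels = [0, 1, 2, 1]
preds = [1, 1, 0, 2]
untargeted_asr = attack_success_rate(labels, preds)
targeted_asr = attack_success_rate(labels, preds, target=1)
```

Targeted ASR is the stricter metric because only one wrong class counts as a success.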

Ablation studies show that:

- Shallow layers yield lower computational overhead, while deeper layers (with greater neuron counts) maximize ASR in targeted settings.

- The spike scaling objective substantially improves attack performance and can nearly replace the adversarial term in untargeted attacks.

Systemic, Hardware, and Theoretical Implications

Spike-PTSD exposes foundational vulnerabilities at both the algorithmic and hardware levels. On neuromorphic substrates (e.g., Intel Loihi, IBM TrueNorth), the findings imply that adversarial spike scaling can be effected via analog manipulation of membrane voltages, thresholding, or spike timing, all of which may arise unintentionally from hardware noise or be weaponized, e.g., via hardware Trojans or malicious sensor input. The demonstrated ability to consistently trigger misclassification with precise perturbations to only a handful of critical neurons casts doubt on conventional SNN robustness arguments that rely primarily on binary nonlinearity and input discreteness.

These results indicate that hardware-level validation and trust assurance must incorporate adversarial stress testing as an integral part of the design automation flow. From the theoretical perspective, the analogy between biological PTSD and SNN vulnerability may guide the development of intrinsically robust architectures or neuro-inspired regularization terms.

Figure 6: Backdoor attack performance under N-MNIST, CIFAR10-DVS, and DVS128Gesture on VGGDVS with different target classes and timesteps.

Conclusion

Spike-PTSD introduces a new paradigm for AEA research on SNNs by formalizing a biologically grounded, spike scaling-based attack framework. The method undermines previously assumed SNN robustness limits, reliably achieving near-perfect attack rates through neuro-inspired strategies. Beyond the immediate improvements in adversarial evaluation, the work motivates ongoing efforts in neuroscience-guided robustness analyses, SNN hardware security, and automated chip-level adversarial validation. Integration of such bio-plausible attacks into neuromorphic design tools will be critical for future trustworthy deployment of SNNs in real-world safety- and security-sensitive applications.