- The paper introduces QBOOT, a quantum algorithm that exactly encodes the full bootstrap resample distribution using quantum superposition and amplitude estimation.

- It rigorously demonstrates a near-quadratic reduction in computational cost over classical Monte Carlo bootstrap methods while eliminating the Monte Carlo resampling error; the only remaining algorithmic error comes from amplitude estimation.

- Empirical tests on sample-mean problems validate the theoretical error bounds and resource scaling, paving the way for scalable quantum statistical inference.

Quantum Statistical Bootstrap: An Exact and Scalable Framework for Quantum Statistical Inference

Introduction

The paper "Quantum Statistical Bootstrap" (2604.00951) introduces QBOOT, a quantum algorithm designed to compute the ideal nonparametric bootstrap estimator exactly, bypassing the stochastic error and computational expense characteristic of classical Monte Carlo-based bootstrap methods. QBOOT leverages quantum superposition to encode all potential bootstrap resamples, computes the statistic of interest in parallel, and utilizes quantum amplitude estimation (QAE) for statistical inference. The authors rigorously analyze the theoretical and statistical properties of QBOOT and demonstrate its computational advantages through simulation experiments.

Classical vs. Quantum Bootstrap

The classical bootstrap is central to statistical inference but has two pronounced limitations. First, it relies on B Monte Carlo resamples to approximate the ideal resampling distribution, which introduces an approximation error of order $O(B^{-1/2})$. Second, its cost grows rapidly with the data size n and the complexity of the statistic f. Evaluating the ideal bootstrap CDF exactly requires summing over all $n^n$ resample configurations (already $10^{10}$ for n = 10), which is infeasible even for moderate n.
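The following purely classical sketch (not code from the paper) makes this cost gap concrete. It computes the ideal bootstrap CDF of the sample mean by brute-force enumeration of all $n^n$ resamples, then compares it with Monte Carlo estimates for increasing B. The toy data, the threshold z, and the function names are hypothetical. Enumeration is only feasible here because n = 6.

```python
import itertools

import numpy as np


def exact_bootstrap_cdf(x, z, stat=np.mean):
    """Ideal bootstrap P*(stat(resample) <= z), enumerating all n**n index vectors."""
    n = len(x)
    hits = sum(stat(x[list(idx)]) <= z for idx in itertools.product(range(n), repeat=n))
    return hits / n**n


def monte_carlo_bootstrap_cdf(x, z, B, rng, stat=np.mean):
    """Classical Monte Carlo estimate from B resamples drawn uniformly with replacement."""
    n = len(x)
    idx = rng.integers(0, n, size=(B, n))
    return np.mean([stat(x[row]) <= z for row in idx])


x = np.array([1.2, 0.4, 2.5, 1.9, 0.8, 1.1])  # hypothetical data, n = 6 -> 46,656 resamples
z = 1.3
exact = exact_bootstrap_cdf(x, z)
rng = np.random.default_rng(0)
for B in (100, 1_000, 10_000):
    approx = monte_carlo_bootstrap_cdf(x, z, B, rng)
    print(f"B={B:>6}  |Monte Carlo - exact| = {abs(approx - exact):.4f}")
```

A tenfold increase in B typically shrinks the Monte Carlo error by roughly a factor of √10, consistent with the $O(B^{-1/2})$ rate. The exact sum has no sampling error, but it costs $n^n$ evaluations of the statistic.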

QBOOT achieves an exact encoding of the empirical resample distribution into a uniform quantum superposition over all index vectors $(i_1,\dots,i_n)\in[n]^n$. For any statistic f, the indicator $g(z,x)=\mathbf{1}\{f(x)\le z\}$ is evaluated reversibly across all resamples. The resulting marked amplitude equals the ideal bootstrap aggregate, which removes the stochastic error of the Monte Carlo approximation.

QBOOT Algorithmic Framework

State Preparation and Parallel Evaluation: QBOOT prepares a quantum state representing the uniform distribution over all bootstrap resamples. Each observed datum is associated with a computational basis state of a $\lceil\log_2 n\rceil$-qubit index register. The state is the tensor product of n uniform superpositions over these registers, one per resample draw, for a total of $n\lceil\log_2 n\rceil$ qubits.
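For tiny n, the prepared state can be written out explicitly. The NumPy sketch below is an illustration, not the paper's circuit. It builds each index register as a uniform superposition over its first n basis states, giving unused basis states zero amplitude when n is not a power of two, and then takes the Kronecker product of n copies. The function names are hypothetical. On hardware, a state-preparation circuit would produce this state rather than storing it as a vector.

```python
import numpy as np


def index_register_state(n):
    """Uniform superposition over |0>, ..., |n-1> on ceil(log2 n) qubits.

    Basis states n, ..., 2**k - 1 are unused and keep amplitude 0.
    """
    k = max(1, (n - 1).bit_length())  # ceil(log2 n) qubits, at least one
    psi = np.zeros(2**k)
    psi[:n] = 1 / np.sqrt(n)
    return psi


def bootstrap_state(n):
    """Tensor product of n index registers: uniform over all n**n resample index vectors."""
    reg = index_register_state(n)
    psi = np.array([1.0])
    for _ in range(n):
        psi = np.kron(psi, reg)
    return psi


n = 3
psi = bootstrap_state(n)
print("qubits:", int(np.log2(psi.size)))            # n * ceil(log2 n) = 6
print("nonzero amplitudes:", np.count_nonzero(psi))  # n**n = 27
```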

Coherent Evaluation of Statistics: A reversible circuit evaluates and thresholds the statistic f across all superposed resamples. In every branch of the superposition, the label qubit is toggled exactly when $f(x)\le z$ for that branch's resample x.
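The sketch below emulates the reversible oracle classically to show why the marked amplitude equals the ideal bootstrap aggregate. It treats the oracle as a permutation of basis states $|j\rangle|b\rangle \mapsto |j\rangle|b \oplus g(z, x_j)\rangle$, applies it to the uniform resample state, and reads off the probability that the label qubit is 1. This is not the paper's circuit. It enumerates index vectors directly instead of encoding them as qubit bit strings, and `label_oracle` and the toy data are hypothetical.

```python
import itertools

import numpy as np


def label_oracle(x, z, stat=np.mean):
    """Basis permutation |j>|b> -> |j>|b XOR g(z, x_j)>, where j enumerates resample index vectors.

    A permutation of basis states is unitary (and here self-inverse), i.e. reversible.
    """
    n = len(x)
    perm = np.empty(2 * n**n, dtype=int)
    for j, idx in enumerate(itertools.product(range(n), repeat=n)):
        g = int(stat(x[list(idx)]) <= z)  # indicator 1{f(resample) <= z}
        perm[2 * j] = 2 * j + g           # label |0> -> |g>
        perm[2 * j + 1] = 2 * j + 1 - g   # label |1> -> |1 XOR g>
    return perm


x = np.array([0.5, 1.0, 2.0])  # hypothetical data, n = 3
z = 1.0
n = len(x)
state = np.zeros(2 * n**n)
state[0::2] = 1 / np.sqrt(n**n)    # uniform over resamples, label qubit in |0>
perm = label_oracle(x, z)
out = np.zeros_like(state)
out[perm] = state                  # apply the permutation: basis k -> perm[k]
p_accept = np.sum(out[1::2] ** 2)  # probability the label qubit is measured as 1
print(p_accept)
```

For this toy data the result is 11/27 ≈ 0.407. That is the fraction of the 27 equally likely resamples whose mean is at most 1.0, which is the ideal bootstrap CDF value at z.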

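Quantum Amplitude Estimation (QAE): Applying QAE with a register of precision qubits extracts the probability that the "accept" label qubit is 1. This yields an estimate of the ideal bootstrap probability that $f(x)\le z$. The quantum error vanishes at rate $O(1/M)$, where M is the number of oracle applications and grows as 2 raised to the number of precision qubits. By contrast, the classical Monte Carlo bootstrap (CBOOT) converges at only $O(M^{-1/2})$ for the same computational cost.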
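The sketch below classically simulates the ideal, noiseless output distribution of canonical QAE, the phase-estimation-based version of Brassard, Høyer, Mosca and Tapp, for a given marked probability a. It uses t precision qubits, so M = 2^t. Treating this as the QAE variant in use is an assumption; the paper's implementation may differ. Each outcome y maps to the estimate $\sin^2(\pi y/M)$. The printed check uses the standard guarantee $|\tilde a - a| \le 2\pi\sqrt{a(1-a)}/M + \pi^2/M^2$, which holds with probability at least $8/\pi^2$. The function names and the value of a are hypothetical.

```python
import numpy as np


def fejer(delta, M):
    """Probability that M-point phase estimation lands `delta` cycles away from the true phase."""
    delta = np.asarray(delta, dtype=float)
    s = np.sin(np.pi * delta)
    out = np.ones_like(delta)  # limit value when delta is an integer
    nz = np.abs(s) > 1e-12
    out[nz] = np.sin(M * np.pi * delta[nz]) ** 2 / (M**2 * s[nz] ** 2)
    return out


def qae_outcomes(a, t):
    """Estimates sin^2(pi*y/M) and their probabilities for canonical QAE with M = 2**t."""
    M = 2**t
    omega = np.arcsin(np.sqrt(a)) / np.pi  # a = sin^2(pi*omega); Grover eigenphases are +/- omega
    y = np.arange(M)
    probs = 0.5 * fejer(y / M - omega, M) + 0.5 * fejer(y / M + omega, M)
    return np.sin(np.pi * y / M) ** 2, probs / probs.sum()


a = 11 / 27  # hypothetical target, e.g. an exact bootstrap CDF value
for t in (4, 6, 8, 10):
    M = 2**t
    est, p = qae_outcomes(a, t)
    bound = 2 * np.pi * np.sqrt(a * (1 - a)) / M + np.pi**2 / M**2
    mass = p[np.abs(est - a) <= bound].sum()
    print(f"M={M:5d}  error bound={bound:.5f}  P(|estimate - a| <= bound)={mass:.3f}")
```

The error bound shrinks in proportion to 1/M. At equal cost, this is the source of the quadratic advantage over the $O(M^{-1/2})$ Monte Carlo rate.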

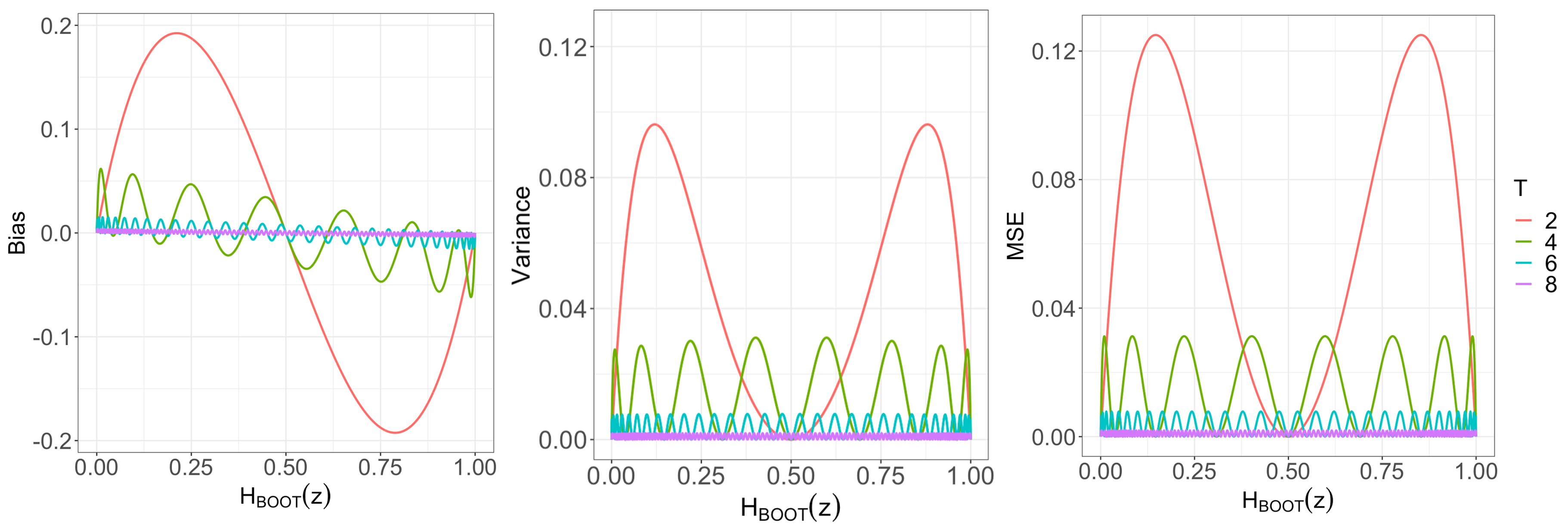

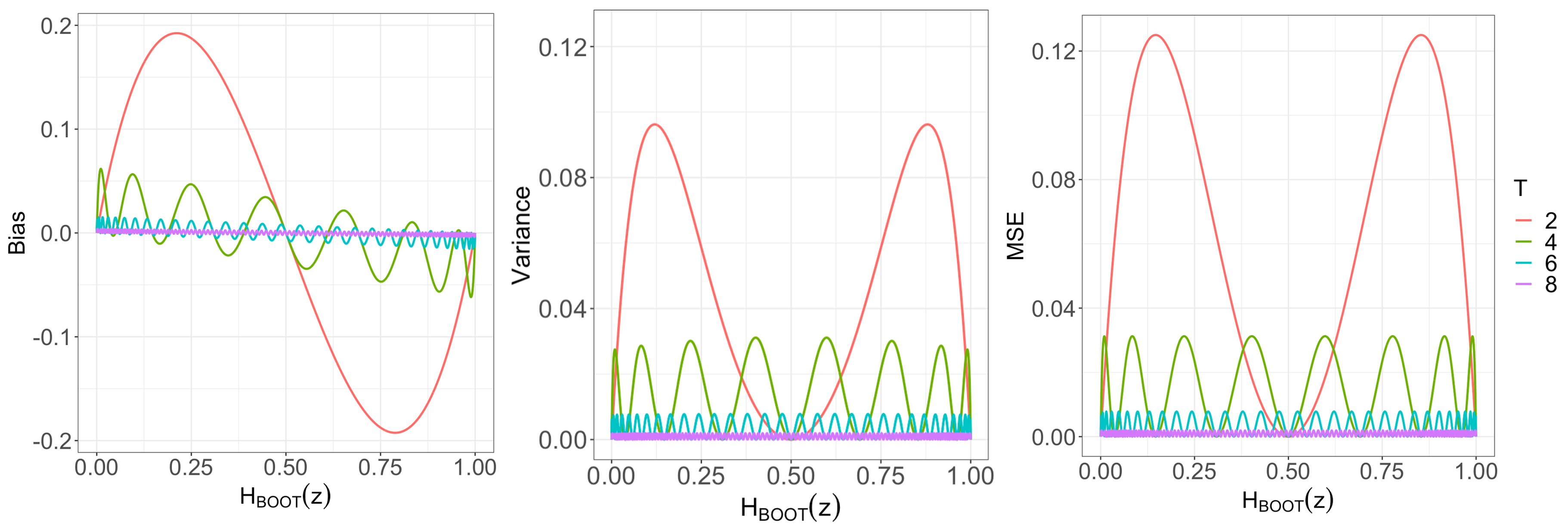

Figure 1: Bias, variance, and MSE of the QBOOT estimator across experimental settings and numbers of precision qubits.

Theoretical Analysis of QBOOT

The authors provide a rigorous quantification of the statistical error structure, distinguishing between:

- Quantum error: the accuracy limit imposed by QAE, which decays as $O(1/M)$ in the number M of oracle applications;

- Bootstrap error: the inherent difference between the ideal bootstrap distribution and the true underlying sampling distribution. It shrinks with the sample size n, typically faster for smooth functionals than for non-smooth statistics.

For a fixed target accuracy ε, QBOOT's cost scales as $O(1/\varepsilon)$ up to logarithmic factors, compared with $O(1/\varepsilon^2)$ for classical Monte Carlo. The overall cost for matched error is therefore reduced by a near-quadratic factor.
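A back-of-the-envelope comparison makes this scaling concrete. The sketch rests on two assumptions:

- The classical estimator needs enough resamples for its worst-case standard error $\sqrt{p(1-p)/B}$ to be at most ε.
- QAE needs the smallest M = 2^t for which the worst-case Brassard et al. bound $\pi/M + \pi^2/M^2$ is at most ε.

The two criteria are not identical: one is a standard error, the other a bound that holds with probability at least $8/\pi^2$. One QAE oracle call is also costlier than one classical evaluation of f. Read the ratios as orders of magnitude only. The function names are hypothetical.

```python
import math


def classical_samples(eps):
    """Resamples B so the Monte Carlo standard error sqrt(p(1-p)/B) is at most eps for any p."""
    return math.ceil(0.25 / eps**2)


def qae_oracle_calls(eps):
    """Smallest M = 2**t meeting the worst-case QAE bound pi/M + pi^2/M^2 <= eps."""
    M = 1
    while math.pi / M + math.pi**2 / M**2 > eps:
        M *= 2
    return M


for eps in (1e-2, 1e-3, 1e-4):
    B, M = classical_samples(eps), qae_oracle_calls(eps)
    print(f"eps={eps:.0e}  classical B={B:>12,}  QAE M={M:>8,}  ratio={B / M:,.0f}")
```

The ratio grows by roughly an order of magnitude for each tenfold tightening of ε, as a quadratic gap in ε predicts.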

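Error Balancing and Resource Scaling: The work complexity of QBOOT is the number of QAE oracle calls times the gate cost of each call, which is dominated by the statistic oracle and state preparation. The total error is dominated by the larger of the quantum and bootstrap errors, so pushing the quantum error far below the bootstrap error buys little accuracy. Balancing the two errors fixes the required QAE precision in smooth cases and yields substantial quantum speedups for common statistics.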
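The balancing rule can be sketched numerically. Purely for illustration, the code assumes a bootstrap error of order $c\,n^{-1/2}$; the rate and the constant c are hypothetical parameters, not values from the paper. It uses the leading-order worst-case QAE error $\pi/M$ with $M = 2^t$ and picks the fewest precision qubits t at which the quantum error no longer exceeds the assumed bootstrap error. `precision_qubits` is a hypothetical name.

```python
import math


def precision_qubits(n, rate=0.5, c=1.0):
    """Fewest precision qubits t with pi / 2**t <= c * n**(-rate).

    ASSUMPTION: c * n**(-rate) is an illustrative bootstrap-error model, not the paper's rate.
    """
    target = c * n ** (-rate)
    return max(1, math.ceil(math.log2(math.pi / target)))


for n in (16, 64, 256, 1024):
    t = precision_qubits(n)
    print(f"n={n:5d}  precision qubits t={t}  oracle calls M=2**t={2**t}")
```

Under this assumption, t grows only logarithmically in n and the number of oracle calls M grows like $\sqrt{n}$. Total work is then M times the per-call gate cost of state preparation and the statistic oracle.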

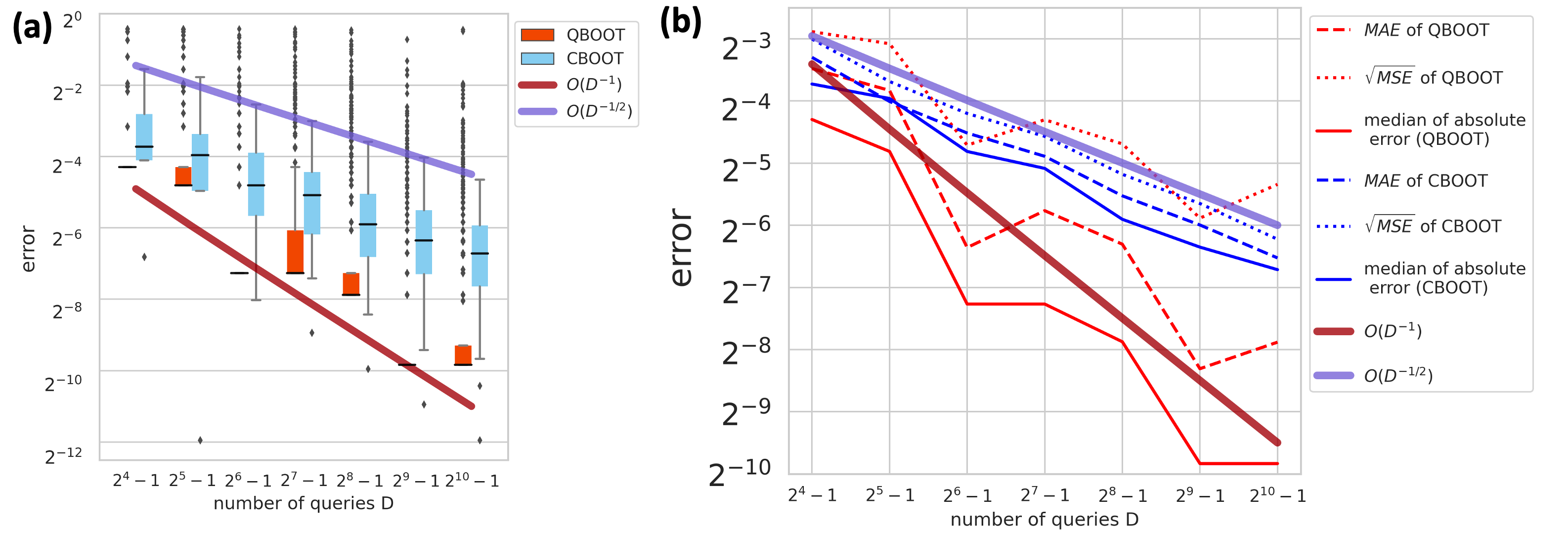

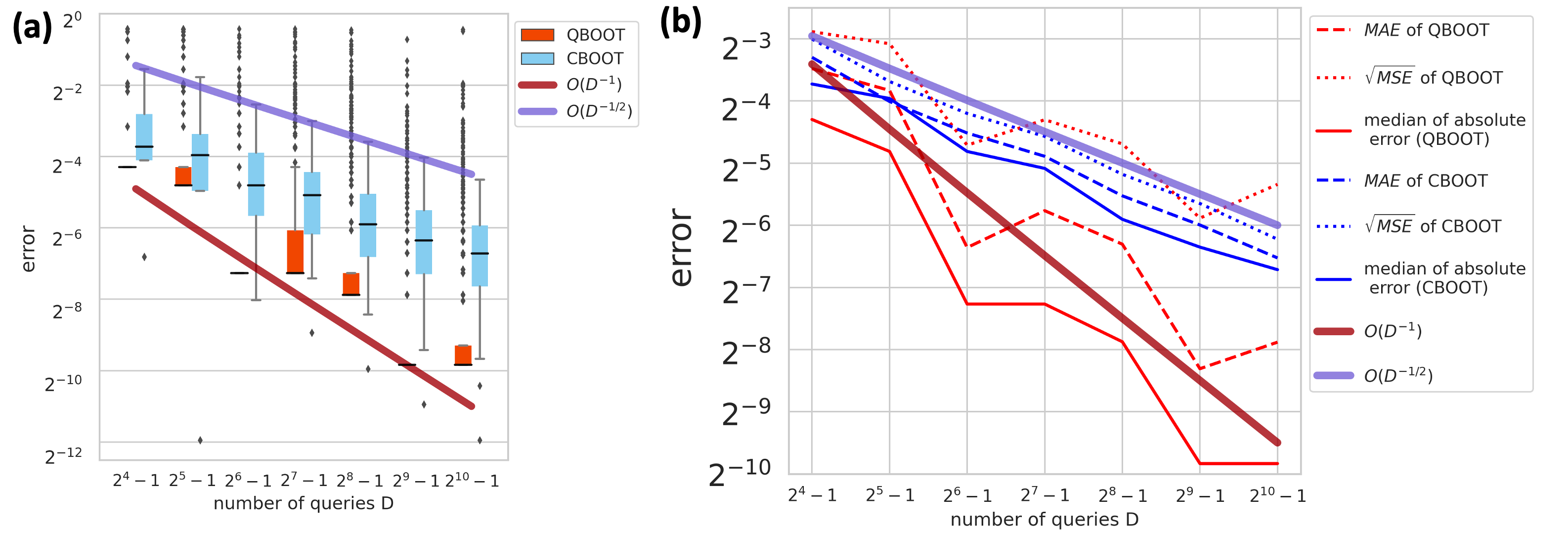

Figure 2: QBOOT vs. CBOOT at matched cost: QBOOT converges at the faster quantum rate, while CBOOT shows the slower Monte Carlo error reduction.

The authors implement QBOOT on IBM's noiseless quantum simulator for the sample-mean problem, evaluating the bootstrap CDF at a fixed threshold. The empirical results confirm the theoretical convergence rates: at the same computational cost, QBOOT achieves lower median and mean absolute errors than classical CBOOT. However, QAE occasionally produces outliers due to phase aliasing. Repeating QAE independently several times and taking the median sharply mitigates this and restores the MSE decay to the theoretically predicted regime.
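A small classical simulation of ideal QAE outcomes reproduces the effect of median aggregation. It assumes the Fejér-kernel output distribution of canonical QAE and is not the authors' simulator experiment. Each trial draws K independent QAE estimates and keeps their median; K = 1 is plain QAE. The target value a, the number of precision qubits t, and the helper names are hypothetical.

```python
import numpy as np


def qae_outcomes(a, t):
    """Estimates sin^2(pi*y/M) and probabilities for canonical QAE with M = 2**t (assumed model)."""
    M = 2**t
    omega = np.arcsin(np.sqrt(a)) / np.pi
    y = np.arange(M)

    def fejer(d):
        s = np.sin(np.pi * d)
        out = np.ones_like(d)
        nz = np.abs(s) > 1e-12
        out[nz] = np.sin(M * np.pi * d[nz]) ** 2 / (M**2 * s[nz] ** 2)
        return out

    p = 0.5 * fejer(y / M - omega) + 0.5 * fejer(y / M + omega)
    return np.sin(np.pi * y / M) ** 2, p / p.sum()


def median_of_k_estimates(a, t, K, trials, rng):
    """Per-trial median of K independent simulated QAE estimates."""
    est, p = qae_outcomes(a, t)
    draws = rng.choice(est, size=(trials, K), p=p)
    return np.median(draws, axis=1)


rng = np.random.default_rng(1)
a, t, trials = 0.3, 5, 2000
single = median_of_k_estimates(a, t, K=1, trials=trials, rng=rng)
robust = median_of_k_estimates(a, t, K=7, trials=trials, rng=rng)
mse_single = np.mean((single - a) ** 2)
mse_robust = np.mean((robust - a) ** 2)
print(f"MSE single QAE: {mse_single:.2e}   MSE median-of-7: {mse_robust:.2e}")
```

Rare far-off outcomes dominate the single-run MSE. The median of seven runs almost always lands on the most likely grid estimate, which here is also the one nearest to a.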

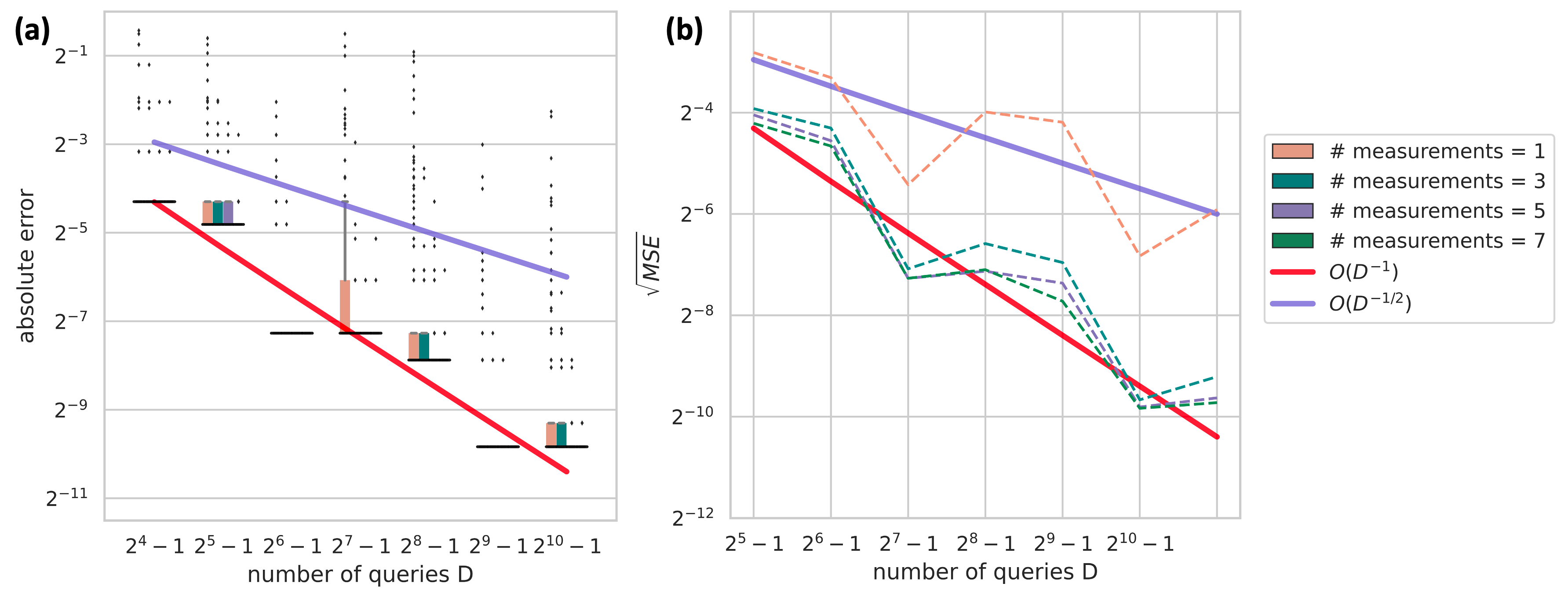

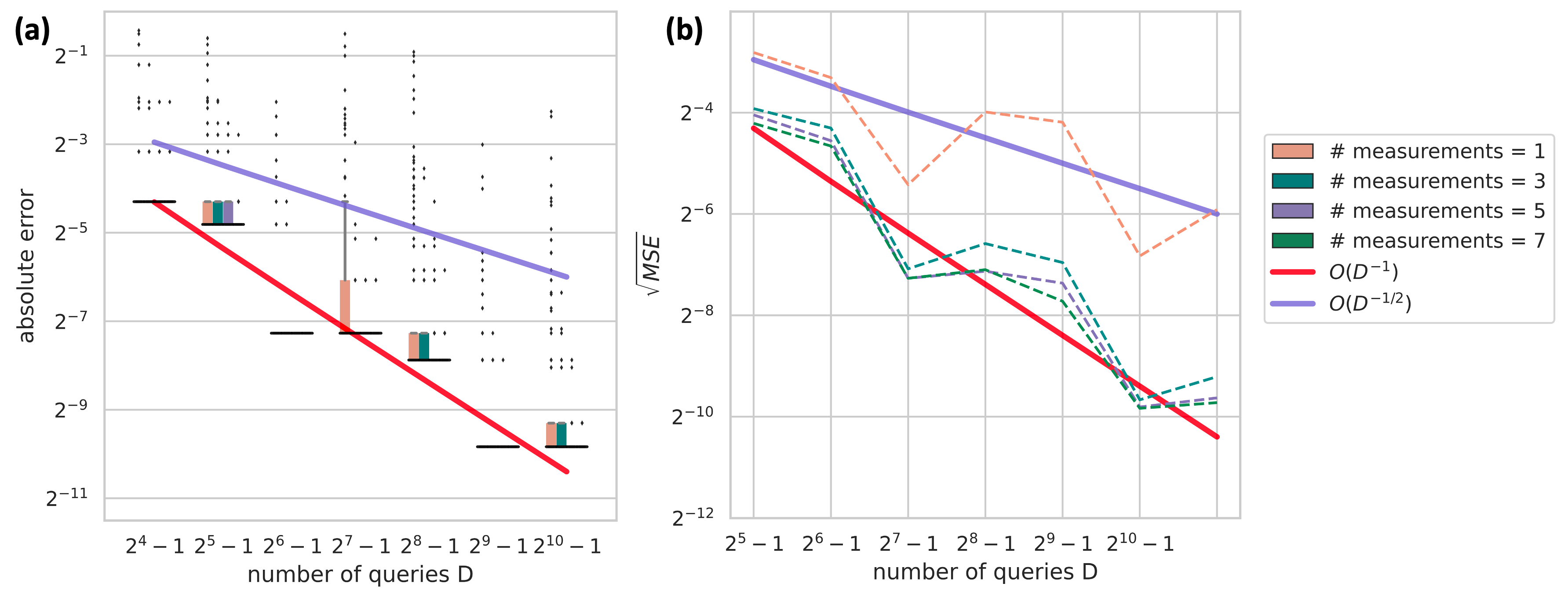

Figure 3: Robust aggregation for QBOOT using the median-of-K strategy (the median of K independent QAE runs), showing a significant reduction in outlier errors.

Additionally, the authors give explicit error-probability and work-complexity bounds for the median-of-K QBOOT estimator, where K is the number of independent QAE runs. For moderate K, the probability of a large error decays exponentially in K, and the quantum speedup is preserved.
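The exponential decay follows from a majority argument. Suppose each QAE run independently lands outside an error interval with probability at most $1 - 8/\pi^2 \approx 0.19$, the standard single-run guarantee for canonical QAE, assumed here. Then the median of an odd number K of runs can leave the interval only if a majority of the runs do. The sketch evaluates that binomial tail exactly; the function name is hypothetical.

```python
from math import comb, pi


def median_failure_prob(K, p_bad=1 - 8 / pi**2):
    """Upper bound on P(median of K runs is bad) when each run is bad w.p. at most p_bad (K odd)."""
    need = K // 2 + 1  # the median is bad only if a majority of runs are bad
    return sum(comb(K, j) * p_bad**j * (1 - p_bad) ** (K - j) for j in range(need, K + 1))


for K in (1, 5, 11, 21, 41):
    print(f"K={K:2d}  P(median bad) <= {median_failure_prob(K):.2e}")
```

K runs multiply the cost only by K. Driving the failure probability down to δ therefore costs an extra factor of order $\log(1/\delta)$, which leaves the quadratic speedup in ε intact.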

Quantum Resource Requirements and Practical Constraints

The main resource bottleneck for immediate application is qubit count: the QBOOT index register grows as $n\lceil\log_2 n\rceil$. For modest n (e.g., 10 to 20), deployment on NISQ hardware is plausible, but large-n tasks will require the m-out-of-n bootstrap and further circuit compression. The authors also discuss generalizing QBOOT to parametric, smoothed, or Bayesian resampling by modifying the state-preparation routine. The algorithm applies to any statistic f that can be implemented reversibly.
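The qubit arithmetic is easy to tabulate. The sketch counts only the resample-index register: m draws, each addressing one of n data points with $\lceil\log_2 n\rceil$ qubits. It ignores label, precision, and arithmetic workspace qubits. The choice $m = \sqrt{n}$ in the m-out-of-n column is a hypothetical example, not a recommendation from the paper, and the function names are hypothetical.

```python
def index_qubits(n):
    """ceil(log2 n) qubits to address one of n data points (at least 1)."""
    return max(1, (n - 1).bit_length())


def qboot_register_qubits(n, m=None):
    """Qubits in the resample-index register for m draws from n points (default m = n)."""
    m = n if m is None else m
    return m * index_qubits(n)


for n in (10, 20, 100, 1000):
    m = round(n**0.5)  # hypothetical m for the m-out-of-n bootstrap
    print(f"n={n:5d}  n-out-of-n: {qboot_register_qubits(n):6d} qubits   "
          f"{m}-out-of-n: {qboot_register_qubits(n, m):4d} qubits")
```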

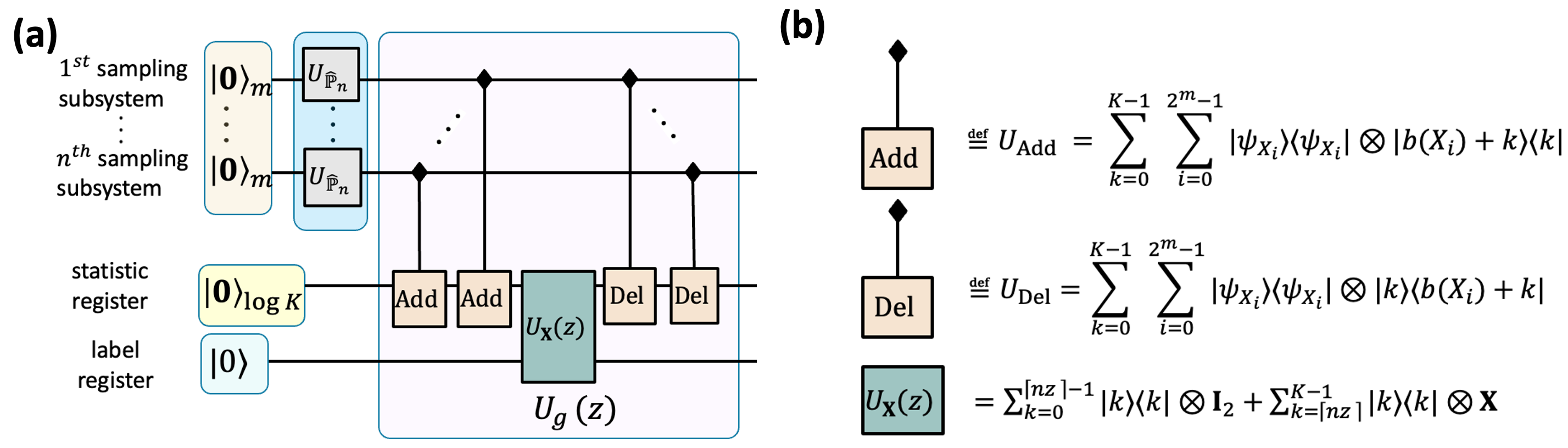

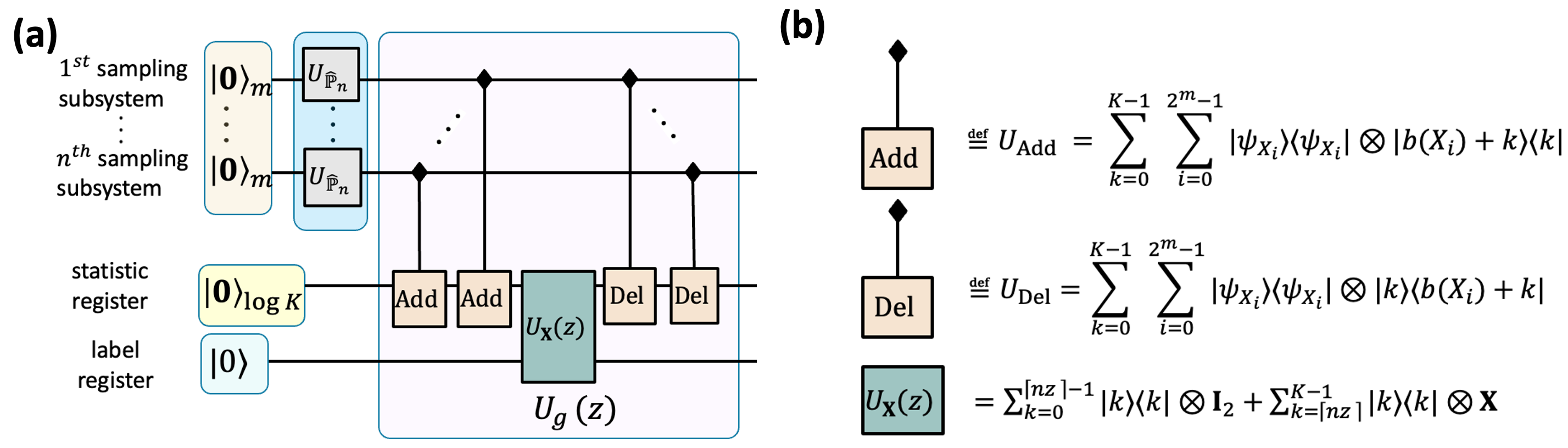

Figure 4: Example circuit realization of the reversible evaluation-and-thresholding oracle for the sample-mean indicator; all arithmetic and thresholding are implemented reversibly.

Broader Implications and Future Directions

QBOOT provides the first fully rigorous algorithmic and statistical analysis of quantum speedups for nonparametric distributional inference. It explicitly separates quantum and statistical errors and has a modular design that accommodates arbitrary statistics f. Together these establish QBOOT as a general quantum primitive for resampling-based inference, including confidence interval construction and permutation testing. The fundamental speedup is robust to statistic smoothness and can be preserved using robust median aggregation.

The main obstacles to near-term quantum advantage are hardware resource limits (especially qubit count) and circuit depth. However, rapid progress in fault tolerance and quantum state preparation, as well as classical-quantum hybrid resource allocation, is likely to make practical deployment for moderate-sized problems feasible. The QBOOT paradigm also points to future developments in quantum-powered Bayesian computation, quantum uncertainty quantification, and scalable quantum statistics.

Conclusion

QBOOT provides an exact, general, and computationally efficient framework for bootstrap-based inference on quantum computers. It replaces classical Monte Carlo approximation with precise quantum amplitude estimation, delivering a near-quadratic work reduction for matched error accuracy. The approach is theoretically sound, empirically validated, and broadly extensible across statistics and resampling schemes. QBOOT represents a substantive step toward robust, scalable, model-free quantum statistical inference and will be foundational for future quantum-powered data science applications.