- The paper demonstrates that partitioning EEG signals into region-specific experts and leveraging wPLI-based connectivity significantly improves affect recognition accuracy.

- It employs a Global–Local Dual-Stream encoder for EEG and a multi-scale CNN for peripheral signals, dynamically fusing outputs via a learnable routing network.

- Results on DEAP and DREAMER datasets validate the model's interpretability and robustness, highlighting its potential for applications in BCI, emotion-aware HCI, and mental health.

BiMoE: Brain-Inspired Experts for EEG-Dominant Affective State Recognition

Introduction

The paper "BiMoE: Brain-Inspired Experts for EEG-Dominant Affective State Recognition" (2603.29205) introduces a neurophysiologically aligned mixture of experts (MoE) approach for multimodal sentiment analysis (MSA) using EEG and peripheral physiological signals (PPS). The framework is motivated by three deficiencies in existing MSA methods: disregard of region-specific affective processing in EEG, limited interpretability of the learned neural representations, and suboptimal fusion with PPS features. By explicitly modeling functional brain regions as specialized expert networks, the BiMoE architecture leverages brain-topological priors, enabling physiologically coherent and interpretable affect recognition.

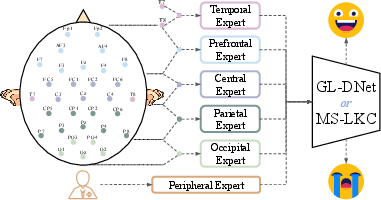

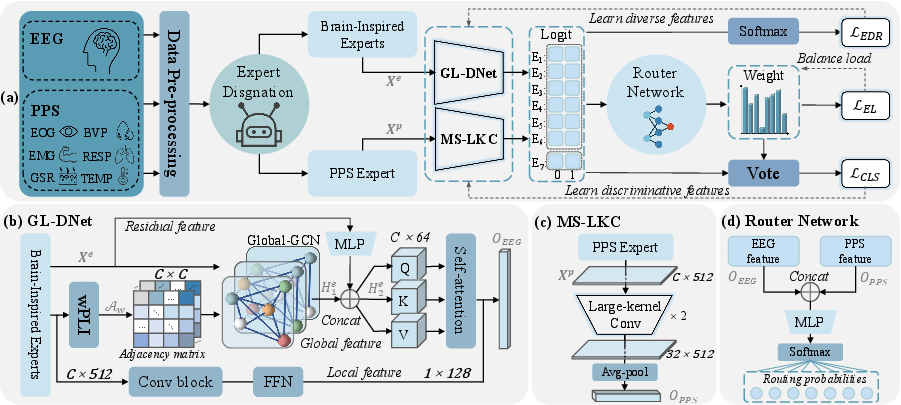

Figure 1: Expert structure in BiMoE, partitioning EEG and PPS signals into region-specific expert networks for encoding.

BiMoE Framework Architecture

BiMoE formalizes emotion recognition as a subject-independent multimodal learning problem. EEG channels are grouped into five well-established cortical regions (prefrontal, central, parietal, occipital, and temporal) plus a full-head grouping, and each group is assigned to a dedicated expert. Each expert is implemented as a Global–Local Dual-Stream encoder (GL-DNet), which combines graph convolutional network (GCN) layers, whose adjacency matrices are given by the weighted Phase Lag Index (wPLI), with a parallel channel-wise CNN that captures local temporal structure.
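To make the connectivity prior concrete, the sketch below estimates a wPLI adjacency matrix of the kind that could parameterize a graph convolution. It follows the standard definition, wPLI = |E[Im(S_xy)]| / E[|Im(S_xy)|], and approximates the cross-spectrum S_xy from the analytic signals of each channel pair. The function names, the FFT-based analytic signal, and the zeroed diagonal are illustrative assumptions, not details taken from the paper; in practice the input would normally be band-pass filtered first.

```python
import numpy as np


def analytic_signal(x: np.ndarray) -> np.ndarray:
    """Return the analytic signal along the last axis using an FFT.

    Negative frequencies are zeroed and positive ones doubled, which is
    the usual discrete construction behind a Hilbert transform.
    """
    n = x.shape[-1]
    spectrum = np.fft.fft(x, axis=-1)
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * weights, axis=-1)


def wpli_adjacency(eeg: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """Estimate a (channels, channels) wPLI matrix from (channels, samples) EEG.

    Assumption: expectations are taken over time samples of one segment.
    """
    z = analytic_signal(eeg)
    # Imaginary part of z_i(t) * conj(z_j(t)) for every channel pair.
    imag = np.imag(z[:, None, :] * np.conj(z[None, :, :]))
    numerator = np.abs(imag.mean(axis=-1))
    denominator = np.abs(imag).mean(axis=-1)
    adjacency = numerator / (denominator + eps)
    # Assumption: no self-connections in the adjacency matrix.
    np.fill_diagonal(adjacency, 0.0)
    return adjacency
```

The resulting matrix is symmetric with entries in [0, 1]. Two channels with a consistent nonzero phase lag score close to 1, while zero-lag coupling, which is typical of volume conduction, contributes nothing to the numerator.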

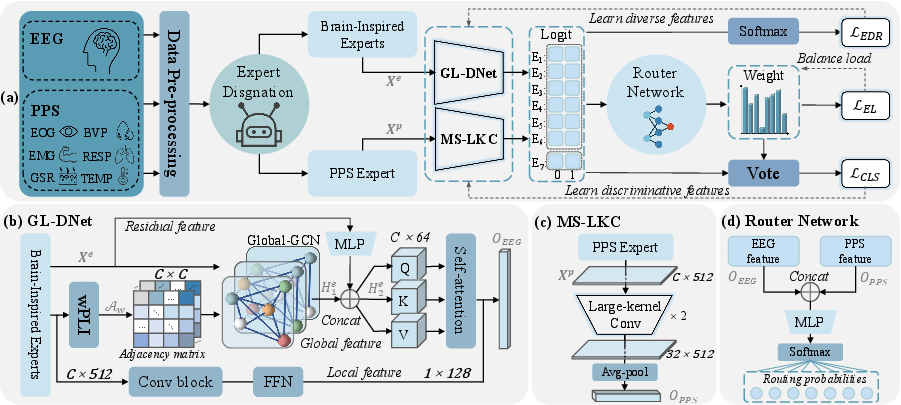

Peripheral physiological signals (ECG, GSR, respiration, etc.) are processed by an independent expert using a Multi-Scale Large Kernel CNN (MS-LKC), capturing non-EEG temporal dependencies. The outputs of all experts—including the EEG region experts and PPS expert—are dynamically fused using a learnable routing network (a two-layer MLP with softmax), which adaptively integrates expert logits into a joint representation. The entire network is trained end-to-end with a multi-objective loss that combines focal loss for class imbalance, expert load loss for diversity and utilization, and an expert disagreement regularization to promote orthogonality in expert predictions.

Figure 2: BiMoE model overview, presenting EEG regional processing (GL-DNet), PPS module (MS-LKC), dynamic routing, and joint loss-based optimization.
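The routing step described above can be sketched as follows. This is a minimal NumPy illustration, assuming seven experts (six EEG groupings plus one PPS expert), a router that sees some pooled feature vector per sample, and a ReLU hidden layer; none of these choices, nor the names `Router`, `fuse_expert_logits`, and `focal_loss`, are confirmed by the paper. The focal loss uses its standard form, and the expert load and disagreement terms are left out because their exact formulation is not given here.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


class Router:
    """Two-layer MLP that maps features to softmax weights over experts."""

    def __init__(self, in_dim: int, hidden_dim: int, num_experts: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        self.w1 = rng.normal(0.0, 0.1, (in_dim, hidden_dim))
        self.b1 = np.zeros(hidden_dim)
        self.w2 = rng.normal(0.0, 0.1, (hidden_dim, num_experts))
        self.b2 = np.zeros(num_experts)

    def __call__(self, features: np.ndarray) -> np.ndarray:
        # Assumption: ReLU activation between the two linear layers.
        hidden = np.maximum(features @ self.w1 + self.b1, 0.0)
        return softmax(hidden @ self.w2 + self.b2)


def fuse_expert_logits(expert_logits: np.ndarray, gate_weights: np.ndarray) -> np.ndarray:
    """Weighted sum of expert logits.

    expert_logits: (batch, experts, classes); gate_weights: (batch, experts).
    """
    return np.einsum("be,bec->bc", gate_weights, expert_logits)


def focal_loss(logits: np.ndarray, labels: np.ndarray, gamma: float = 2.0) -> float:
    """Standard focal loss, -(1 - p_t)^gamma * log(p_t), averaged over the batch."""
    probs = softmax(logits)
    p_true = probs[np.arange(len(labels)), labels]
    return float(np.mean(-((1.0 - p_true) ** gamma) * np.log(p_true + 1e-12)))
```

With `gamma=0` the focal loss reduces to ordinary cross-entropy, and with uniform gate weights the fusion reduces to a plain average of the expert logits, which gives two convenient sanity checks for an implementation of this kind.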

Evaluation was conducted on two benchmark datasets, DEAP and DREAMER, covering both EEG-only and multimodal settings under stringent leave-one-subject-out protocols to assess robustness and cross-subject generalization. On DEAP, BiMoE achieved classification accuracy gains ranging from 0.87% to 5.19% over competitive baselines across the Arousal, Valence, Dominance, and Liking dimensions. On DREAMER, the model showed notable improvements, with absolute accuracy increases of up to 5.79% for Arousal and 1.72% for Valence in the multimodal configuration.
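For readers unfamiliar with the protocol, leave-one-subject-out evaluation holds out all trials of one subject per fold and trains on everyone else, so no subject contributes to both sides of a split. The helper below is a minimal sketch of that splitting logic; the function name and the flat list of per-trial subject IDs are assumptions made for illustration.

```python
from typing import Iterator, List, Sequence, Tuple


def leave_one_subject_out(
    subject_ids: Sequence[int],
) -> Iterator[Tuple[int, List[int], List[int]]]:
    """Yield (held_out_subject, train_indices, test_indices) for each subject.

    subject_ids[i] is the subject that produced trial i.
    """
    for held_out in sorted(set(subject_ids)):
        train_idx = [i for i, s in enumerate(subject_ids) if s != held_out]
        test_idx = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train_idx, test_idx
```

Reported accuracy under this protocol is typically the mean over folds, one fold per subject.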

These results are attributed primarily to three architectural advances: brain-region expert partitioning, wPLI-based functional connectivity modeling, and dynamic expert fusion. Ablation experiments show that removing or substituting any of the key components (e.g., swapping wPLI with PLI, eliminating the expert load or disagreement losses, or omitting regional division) consistently degrades performance, sometimes substantially. This is empirical evidence that each module contributes to effective EEG-dominant affect recognition.

Interpretability and Alignment With Neuroscience

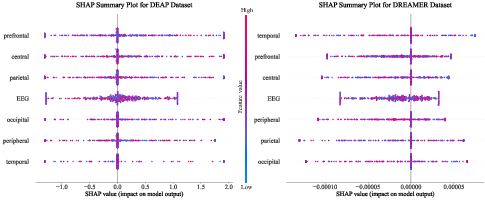

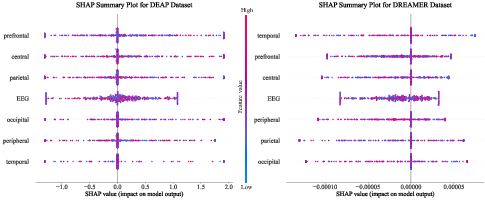

Beyond numerical superiority, BiMoE explicitly enhances model transparency and interpretability. Visualization using SHAP confirms that the most influential features are concentrated in prefrontal and central brain regions, in alignment with neurophysiological evidence regarding the localization of affect processing. The expert partitioning makes modeling decisions more consistent with the established roles of cortical areas, moving beyond conventional "black-box" deep learning. Analysis of SHAP values on both datasets supports physiological validity and highlights the capacity of BiMoE for meaningful explanatory output.

Figure 3: SHAP summary plots for DEAP and DREAMER, showing dominance of central and prefrontal regions in model decisions.
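A region-level reading of this kind can be obtained by pooling per-channel attributions into brain regions. The sketch below assumes the SHAP values have already been computed and reduced to a list of values per channel; it does not call the SHAP library. The channel-to-region map is a hypothetical subset of 10-20 electrode names chosen for illustration, not the partition used in the paper.

```python
from collections import defaultdict
from typing import Dict, List, Sequence

# Hypothetical mapping from electrode names to regions (illustrative only).
CHANNEL_TO_REGION: Dict[str, str] = {
    "Fp1": "prefrontal", "Fp2": "prefrontal",
    "C3": "central", "C4": "central",
    "P3": "parietal", "P4": "parietal",
    "O1": "occipital", "O2": "occipital",
    "T7": "temporal", "T8": "temporal",
}


def region_importance(
    channel_attributions: Dict[str, Sequence[float]],
    channel_to_region: Dict[str, str] = CHANNEL_TO_REGION,
) -> Dict[str, float]:
    """Average the mean absolute attribution of each channel within its region."""
    per_region: Dict[str, List[float]] = defaultdict(list)
    for channel, values in channel_attributions.items():
        mean_abs = sum(abs(v) for v in values) / len(values)
        per_region[channel_to_region[channel]].append(mean_abs)
    return {region: sum(scores) / len(scores) for region, scores in per_region.items()}


def rank_regions(importance: Dict[str, float]) -> List[str]:
    """Return region names sorted from most to least influential."""
    return sorted(importance, key=importance.get, reverse=True)
```

Averaging within a region, rather than summing, keeps regions with more electrodes from appearing more important simply because they contain more channels.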

Theoretical and Practical Implications

The BiMoE architecture has both theoretical and practical implications. By integrating MoE with neuroanatomical priors, it shows that physiologically attuned inductive biases can improve generalization, accuracy, and interpretability. Methodologically, it supports the value of dynamic, region-aware expert fusion and regularized collaboration in multimodal settings, suggesting a shift from monolithic end-to-end EEG models toward more structured, explainable deep architectures.

Practically, robust subject-independent affect recognition is essential for deployment in BCI, emotion-aware HCI, mental health, and multimedia systems. The modularity and explicit neurophysiological foundation of BiMoE should make it straightforward to extend, for example with more granular region definitions, alternative PPS encoders, or additional data from fNIRS or MEG. The framework’s joint loss design and adaptive routing could also be applied in other domains where multimodal fusion and interpretability are critical.

Future Directions

Potential research avenues include the extension of the mixture of experts paradigm to finer brain sub-structures, exploration of continual learning protocols for incremental adaptation across subjects, and integration of domain adaptation to handle dataset shift. From a methodological perspective, synthesizing explainability with competitive performance metrics remains a fertile ground, particularly leveraging SHAP, attention visualization, and neuroscientific validation.

The source code is available, facilitating reproducibility and transfer to derivative models and domains.

Conclusion

BiMoE provides a substantial advance toward interpretable, neurophysiologically justified sentiment analysis architectures in affective computing. The framework’s combination of expert-driven modularity, topology-aware EEG encoding, and robust fusion yields consistently superior accuracy in subject-independent regimes while aligning model predictions with neuroscientific prior knowledge. These properties suggest that future developments in neuro-inspired AI, particularly for affective and brain-informed applications, will be strongly influenced by the principles established in this work.