FlowIt: Global Matching for Optical Flow with Confidence-Guided Refinement

Abstract: We present FlowIt, a novel architecture for optical flow estimation designed to robustly handle large pixel displacements. At its core, FlowIt leverages a hierarchical transformer architecture that captures extensive global context, enabling the model to effectively model long-range correspondences. To overcome the limitations of localized matching, we formulate the flow initialization as an optimal transport problem. This formulation yields a highly robust initial flow field, alongside explicitly derived occlusion and confidence maps. These cues are then seamlessly integrated into a guided refinement stage, where the network actively propagates reliable motion estimates from high-confidence regions into ambiguous, low-confidence areas. Extensive experiments across the Sintel, KITTI, Spring, and LayeredFlow datasets validate the efficacy of our approach. FlowIt achieves state-of-the-art results on the competitive Sintel and KITTI benchmarks, while simultaneously establishing new state-of-the-art cross-dataset zero-shot generalization performance on Sintel, Spring, and LayeredFlow.

Explain it Like I'm 14

What is this paper about?

This paper introduces FlowIt, a computer vision system that figures out how every pixel in a video frame moves to the next frame. This “motion map” is called optical flow. FlowIt is designed to work well even when things move a lot, are partly hidden, or have tricky textures like glass, reflections, or plain walls.

What questions were the researchers trying to answer?

- How can we track pixel motion when objects move far across the screen, not just a little bit?

- How can we avoid getting stuck in small, local guesses and instead use the whole image to make smarter matches?

- Can we tell how “confident” the system is about its guesses and use that to fix uncertain areas?

- Will a system trained on some videos work well on new, different videos without retraining?

How does FlowIt work?

Think of matching pixels between two frames like matching puzzle pieces from picture A to picture B. FlowIt does this in a few stages:

Step 1: See the images at multiple scales

FlowIt first uses a neural network to turn each image into feature maps at different sizes (like zoomed-out and zoomed-in versions). A transformer (a type of neural network that’s good at seeing relationships across the whole image) helps the system share information across scales, so it understands both the big picture and fine details.

Step 2: Match pixels globally (not just nearby)

Instead of only checking nearby spots, FlowIt compares each pixel in the first image with every candidate position in the second image (working on reduced-resolution feature maps). You can imagine a huge “similarity table” that scores how well each position (x, y) in frame 1 matches each position in frame 2.
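To make the “similarity table” concrete, here is a minimal sketch in plain Python. It assumes each pixel is described by a small feature vector and that similarity is a scaled dot product; the function name `correlation_table`, the scaling, and the toy features are illustrative assumptions, not FlowIt's actual code.

```python
import math

def correlation_table(feat1, feat2):
    """Score every position in frame 1 against every position in frame 2.

    feat1, feat2: lists of feature vectors, one per flattened pixel position.
    Returns table[i][j], the scaled dot product between position i in frame 1
    and position j in frame 2.
    """
    dim = len(feat1[0])
    scale = 1.0 / math.sqrt(dim)  # assumed normalization, for illustration only
    return [[scale * sum(a * b for a, b in zip(f1, f2)) for f2 in feat2]
            for f1 in feat1]

# Toy 1x3 "images" with 2-D features. Frame 2 is frame 1 shifted right by one
# pixel, so the last pixel of frame 1 has left the picture.
frame1 = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
frame2 = [[0.0, -1.0], [1.0, 0.0], [0.0, 1.0]]

table = correlation_table(frame1, frame2)
# Highest-scoring target for the two pixels that are still visible.
best = [max(range(len(row)), key=row.__getitem__) for row in table[:2]]
```

For an image with H×W positions the table has (H·W)×(H·W) entries, which is the same thing as a 4D volume indexed by (y1, x1, y2, x2). The third pixel in the toy example has no clear winner, which is exactly the kind of case the next step has to deal with.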

To turn these scores into fair, consistent matches, FlowIt uses a math idea called “optimal transport.” Imagine shipping goods from warehouses (pixels in image 1) to stores (pixels in image 2) in the fairest way possible. If a pixel is occluded (hidden) or has no good match, FlowIt sends it to a “dustbin,” meaning “no match here.”
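The shipping analogy can be sketched with a few Sinkhorn iterations on a tiny score table. This is a simplified illustration under stated assumptions: the dustbin score, the regularization strength `eps`, the iteration count, and the choice of marginals (one unit per real pixel, the remainder absorbed by the dustbins) are all hypothetical values picked for the example, not the paper's settings.

```python
import math

def sinkhorn_with_dustbin(scores, dustbin_score=0.0, eps=0.1, iters=50):
    """Entropy-regularized transport plan with one extra dustbin row and column.

    scores[i][j]: similarity between pixel i (frame 1) and pixel j (frame 2).
    dustbin_score, eps, and iters are illustrative values, not the paper's.
    """
    m, n = len(scores), len(scores[0])
    # Append a dustbin column to every row, then a dustbin row at the bottom.
    aug = [list(row) + [dustbin_score] for row in scores]
    aug.append([dustbin_score] * (n + 1))
    kernel = [[math.exp(s / eps) for s in row] for row in aug]
    # Each real pixel ships or receives one unit; the dustbins absorb the rest.
    row_mass = [1.0] * m + [float(n)]
    col_mass = [1.0] * n + [float(m)]
    u = [1.0] * (m + 1)
    v = [1.0] * (n + 1)
    for _ in range(iters):
        # Alternately rescale rows and columns toward their target masses.
        u = [row_mass[i] / sum(kernel[i][j] * v[j] for j in range(n + 1))
             for i in range(m + 1)]
        v = [col_mass[j] / sum(kernel[i][j] * u[i] for i in range(m + 1))
             for j in range(n + 1)]
    return [[u[i] * kernel[i][j] * v[j] for j in range(n + 1)]
            for i in range(m + 1)]

scores = [
    [0.0, 1.0, 0.0],     # pixel 0 looks like target 1
    [0.0, 0.0, 1.0],     # pixel 1 looks like target 2
    [-1.0, -1.0, -1.0],  # pixel 2 matches nothing well
]
plan = sinkhorn_with_dustbin(scores)
# Strongest destination per real source pixel; index 3 is the dustbin column.
assignment = [max(range(len(row)), key=row.__getitem__) for row in plan[:3]]
```

Because the last update in each iteration rescales the columns, the column totals match their targets exactly, while the row totals only approach theirs as the iterations converge. In this toy case the pixel with uniformly poor scores ends up mostly in the dustbin column.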

This step produces:

- An initial flow (where each pixel likely moved),

- An occlusion map (which pixels probably disappeared or are hidden),

- A confidence map (how sure the system is about each match).
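A rough sketch of how these three outputs could be read off the match probabilities for a single pixel, simplified to one image row. It follows the descriptions quoted in the glossary (a probability-weighted centroid around the peak, and confidence as the probability mass inside a local window); the window `radius`, the function name, and taking occlusion as "one minus the matched mass" are assumptions made for illustration.

```python
def flow_from_match_row(probs, src_x, radius=1):
    """Turn one pixel's match probabilities into (flow_x, confidence, occlusion).

    probs[x]: probability that the source pixel moved to target column x
              (dustbin mass excluded, so the values may sum to less than 1).
    src_x:    the pixel's column in frame 1.
    radius:   half-width of the window around the peak (assumed value).
    """
    total = sum(probs)
    if total <= 0.0:
        return 0.0, 0.0, 1.0  # nothing matched: treat as fully occluded
    peak = max(range(len(probs)), key=probs.__getitem__)
    lo = max(0, peak - radius)
    hi = min(len(probs) - 1, peak + radius)
    mass = sum(probs[x] for x in range(lo, hi + 1))
    # Probability-weighted centroid of the target column inside the window.
    centroid = sum(x * probs[x] for x in range(lo, hi + 1)) / mass
    return centroid - src_x, mass, 1.0 - total

# A pixel at column 2 with a sharp peak near column 5.
visible = flow_from_match_row([0.0, 0.0, 0.0, 0.05, 0.2, 0.6, 0.1], src_x=2)
# A pixel whose mass mostly went to the dustbin.
hidden = flow_from_match_row([0.02, 0.03, 0.02, 0.01, 0.01, 0.0, 0.01], src_x=2)
```

The first pixel gets a sub-pixel flow of roughly +2.9 columns with high confidence, while the second gets low confidence and a high occlusion score. In two dimensions the same centroid would be taken over a small square window, once for x and once for y.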

Step 3: Use confidence to guide fixes

Confidence is like a trust meter. FlowIt spreads reliable motion information from high-confidence areas into low-confidence areas (places with glass, shadows, or little texture) so that good guesses “help” uncertain spots.
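The idea of letting trusted pixels "help" uncertain ones can be illustrated with a hand-written fill rule on one image row. This is only a stand-in: the paper describes the propagation step as a learned network (a UNet), whereas the sketch below uses a simple confidence-weighted neighbor average. The 0.2 threshold is the value the paper is reported to use for global propagation; everything else (function name, neighbor rule, iteration cap) is assumed.

```python
def propagate_row(flow, conf, threshold=0.2, max_iters=100):
    """Fill low-confidence pixels from trusted neighbors along one row.

    flow: per-pixel horizontal flow; conf: per-pixel confidence in [0, 1].
    Pixels with conf < threshold are overwritten by a confidence-weighted
    average of already-trusted left/right neighbors, repeated until filled.
    """
    flow = list(flow)
    # Untrusted pixels start with zero weight so they cannot spread bad flow.
    weight = [c if c >= threshold else 0.0 for c in conf]
    n = len(flow)
    for _ in range(max_iters):
        if all(w > 0.0 for w in weight):
            break
        new_flow, new_weight = list(flow), list(weight)
        for i in range(n):
            if weight[i] > 0.0:
                continue  # trusted pixels keep their estimate
            nbrs = [j for j in (i - 1, i + 1) if 0 <= j < n and weight[j] > 0.0]
            if nbrs:
                total = sum(weight[j] for j in nbrs)
                new_flow[i] = sum(weight[j] * flow[j] for j in nbrs) / total
                new_weight[i] = total / len(nbrs)
        flow, weight = new_flow, new_weight
    return flow

flow = [4.0, 4.0, -40.0, 12.0, 6.0, 6.0]  # two wild guesses in the middle
conf = [0.9, 0.8, 0.05, 0.10, 0.9, 0.9]
fixed = propagate_row(flow, conf)
```

In this toy row the two unreliable values are replaced by the flow of their nearest trusted neighbor on each side. A learned module can do much more than this, for example respecting object boundaries, which a fixed averaging rule cannot.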

Step 4: Refine the details

After the global fix, FlowIt does a few small, local refinement steps to clean up edges and fine details. It even updates the horizontal and vertical parts of the motion separately, which turns out to be more stable and accurate—like adjusting width and height one at a time instead of both at once.

Finally, it scales the flow back up to full image size smoothly, using nearby values to fill in details.
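This upsampling step is described in the paper as a convex combination of neighboring coarse values. A one-row sketch of that idea follows; in a real model the mixing weights are predicted by the network, so the `logits` here are placeholders, and the 3-tap neighborhood with edge replication is an assumption made to keep the example short.

```python
import math

def softmax(logits):
    """Turn arbitrary scores into non-negative weights that sum to one."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def convex_upsample_row(coarse_flow, logits, factor):
    """Upsample a row of coarse flow values by `factor`.

    coarse_flow: flow per coarse pixel, measured in coarse pixels.
    logits[i][k]: three unnormalized weights (left, center, right neighbor)
                  for the k-th fine pixel inside coarse cell i.
    """
    n = len(coarse_flow)
    fine = []
    for i in range(n):
        # Left, center, right neighbors; borders repeat the edge value.
        nbrs = [coarse_flow[max(i - 1, 0)], coarse_flow[i],
                coarse_flow[min(i + 1, n - 1)]]
        for k in range(factor):
            w = softmax(logits[i][k])
            # Multiply by factor so the flow is expressed in fine pixels.
            fine.append(factor * sum(wi * f for wi, f in zip(w, nbrs)))
    return fine

coarse = [1.0, 2.0, 4.0]
uniform = [[[0.0, 0.0, 0.0] for _ in range(2)] for _ in coarse]
peaked = [[[-50.0, 0.0, -50.0] for _ in range(2)] for _ in coarse]
smooth = convex_upsample_row(coarse, uniform, factor=2)
sharp = convex_upsample_row(coarse, peaked, factor=2)
```

Because the weights are non-negative and sum to one, every upsampled value stays between the smallest and largest neighbor (times the scale factor), so the step cannot invent motion larger than anything nearby. Weights concentrated on the center neighbor reproduce plain nearest-neighbor upsampling.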

Step 5: Train and test on varied data

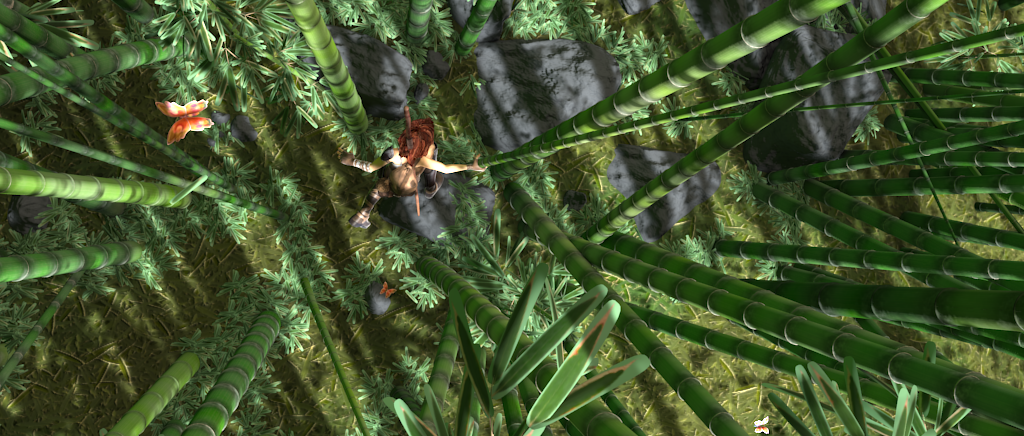

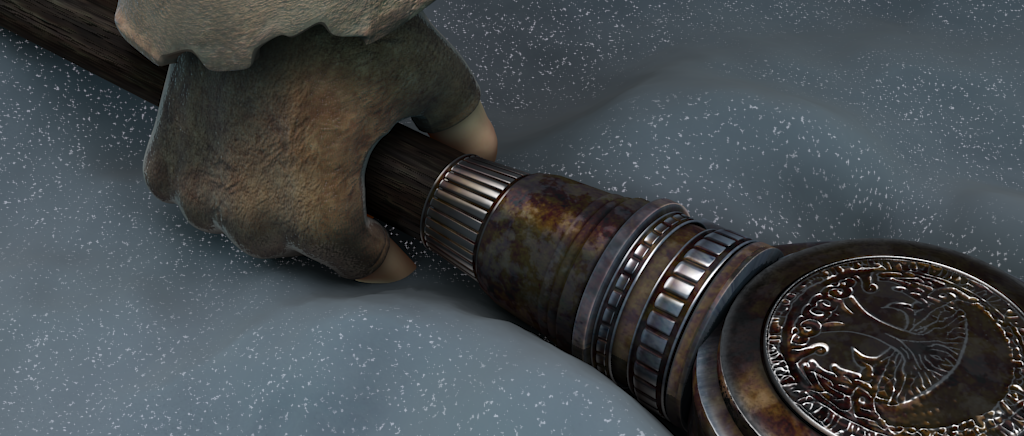

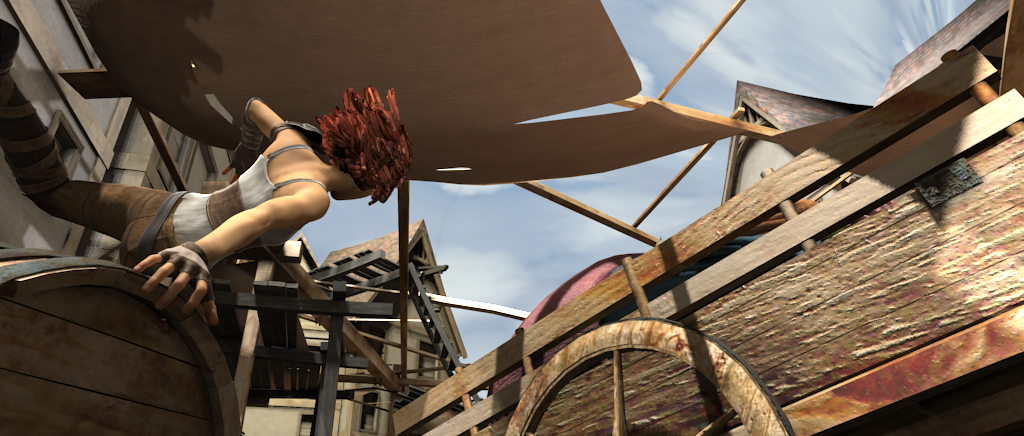

FlowIt is trained on popular synthetic datasets (like FlyingChairs and FlyingThings) and then tested on challenging benchmarks such as Sintel (animated movie scenes), KITTI (real driving scenes), Spring (a high-quality, long animated sequence), and LayeredFlow (transparent/reflective scenes). It also comes in four sizes (S, M, L, XL) so users can trade speed against accuracy.

What did the researchers find?

- Strong accuracy on standard tests: Top-tier results on Sintel and KITTI. For example, FlowIt XL reached the best score on Sintel’s “Clean” version and the best KITTI scores on non-occluded regions.

- Excellent generalization: Without retraining (zero-shot), FlowIt performed extremely well on new datasets like Spring and LayeredFlow, often beating other methods. This means it learned motion in a way that transfers to new kinds of scenes.

- Better on tough cases: Because it considers the whole image and uses confidence-guided refinement, FlowIt handles large movements, occlusions, textureless areas, and challenging surfaces like glass or reflections more reliably than many previous systems.

Why is this important?

- More reliable motion understanding: Seeing how things move in video is crucial for tasks like self-driving cars, video editing, action recognition, and frame interpolation.

- Fewer “gotchas”: By using global matching and confidence maps, FlowIt avoids common mistakes in tricky areas and large motions.

- Works well out of the box: Its strong zero-shot performance means it can be applied to new videos without extra training, saving time and compute.

- Flexible and practical: Different model sizes let users choose between fast and lightweight or slower but more precise versions, depending on their needs.

In short, FlowIt shows that combining global matching (to see the whole picture) with confidence-guided refinement (to fix uncertain spots) is a powerful way to estimate optical flow accurately and robustly across many kinds of videos.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of what remains missing, uncertain, or unexplored in the paper, stated concretely to guide future research:

- Memory/computation scalability of the 4D correlation volume and Sinkhorn OT at 1/4 resolution is not quantified on higher resolutions (e.g., 1080p/4K) or long sequences; practical limits and memory-optimized alternatives (sparse/top‑k OT, low‑rank factorizations) are not explored.

- Sensitivity of the OT solver to hyperparameters (entropy regularization strength, number of Sinkhorn iterations, dustbin cost/initialization) is not analyzed; no ablation on how these choices trade off accuracy, convergence, and runtime.

- The paper uses a fixed window radius r for the local soft expectation around the OT peak but does not specify r or study its effect; robustness to repeated textures, multi-modal matches, and very large displacements when the true mode lies outside the window remains unclear.

- Confidence thresholding for global propagation (fixed at 0.2) is not justified or tuned per dataset; no study on adaptivity, calibration, or learned thresholds and their impact on boundary errors and leakage.

- Quality and calibration of the predicted confidence maps are not evaluated (e.g., ECE, AUC‑ROC for error detection, risk‑coverage curves); it is unknown how reliable/confident the model is across domains and whether confidence correlates with true error.

- The ground-truth confidence label is a hard indicator (EPE < 4 px at full res); the effect of this threshold (and alternatives like soft targets or continuous uncertainty) on calibration and performance is untested.

- Occlusion estimation is supervised via a forward–backward 2‑px threshold without analysis of threshold sensitivity or label noise; no explicit occlusion segmentation metrics are reported, nor is occlusion prediction accuracy validated.

- The initial occlusion derived from the OT dustbin marginal is assumed meaningful but is not evaluated; how well dustbin mass corresponds to real occlusions (vs. low‑texture/mismatch) is not assessed.

- The confidence‑guided global propagation is a generic UNet without explicit edge/boundary awareness; risk of flow bleeding across motion boundaries is not measured (e.g., boundary EPE), and boundary-aware propagation alternatives are not explored.

- Axis‑wise refinement improves results but lacks theoretical justification; it is unknown whether alternative couplings (e.g., cross‑component Jacobians, divergence/curl constraints, or flow basis decompositions) could perform better.

- The convex 3×3 upsampling may limit sharpness near thin structures and edges; no comparison to alternative upsampling strategies (deformable, higher‑order, learned super‑res modules) or analysis of edge error.

- No analysis of computational breakdown (OT vs. feature extraction vs. refinement) and memory footprint for each module; energy/throughput on edge devices and real‑time feasibility are not examined.

- Training/data efficiency is not studied; the method relies on substantial pretraining (TartanAir, Chairs, Things, S+K+H) and transfer from stereo S2M2 weights. How performance degrades with less data or without transfer is unknown.

- The approach is limited to two frames; extensions to multi-frame/video flow (temporal consistency, recurrent or attention across time, occlusion reasoning over multiple frames) are not explored.

- Geometric constraints (epipolar geometry, camera motion priors, rigidity cues) are not utilized; whether integrating self-calibration or SLAM-style constraints improves accuracy/generalization remains open.

- Robustness to real-world degradations (heavy motion blur, low light, sensor noise, rolling shutter, non-standard lenses/fisheye) is not assessed with targeted stress tests.

- Generalization on LayeredFlow is evaluated only on the first layer and at 1/8 input resolution; behavior on deeper layers, full-resolution inputs, and multi-layer disambiguation remains untested.

- The method’s failure modes are not characterized; no qualitative/quantitative analysis of cases where OT fails (e.g., extreme scale changes, large perspective distortions, strong parallax) or where propagation harms boundaries.

- Hyperparameters such as the number of Sinkhorn iterations, r, confidence threshold, and number of refinement iterations lack systematic ablation (only refinement steps are partially ablated); optimal settings and robustness bands are unknown.

- The cost used for OT (e.g., −correlation, normalization strategy) is not fully specified or ablated; alternative costs (e.g., learned metrics, mutual information) and their effect on transport plans are not studied.

- The method conditions refinement on occlusion and confidence but does not explicitly enforce occlusion-aware matching/warping (e.g., masking correlations or motion edges); potential gains from explicit occlusion-aware refinement are unexplored.

- Comparison fairness is imperfect across baselines (varying training sets and pretraining); a controlled “same-train, same-resources” comparison is missing, so the exact architectural gains vs. training effects remain uncertain.

- Uncertainty is captured as a scalar confidence, not a full probabilistic flow distribution (e.g., covariance); downstream use for risk-aware deployment or active selection is not demonstrated.

- Extending the OT formulation to incorporate structural priors (e.g., piecewise rigidity, semantics) or to jointly estimate flow + segmentation is not attempted and remains an open direction.

- Integration of FlowIt’s confidence/occlusion maps in downstream tasks (e.g., video interpolation, tracking, robust SLAM) is not evaluated; practical benefits beyond flow accuracy are unknown.
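Two of the supervision signals mentioned in the list above are simple enough to write down: the hard confidence label (endpoint error below 4 px) and the forward–backward occlusion label (2-px threshold). The sketch below shows both for a single pixel. The thresholds come from the list; the function names and the exact form of the consistency residual (forward flow plus the backward flow sampled at the target) are assumptions, since the paper's precise formulation is not reproduced here.

```python
import math

def endpoint_error(pred, gt):
    """Euclidean distance between a predicted and a ground-truth flow vector."""
    return math.hypot(pred[0] - gt[0], pred[1] - gt[1])

def confidence_label(pred, gt, threshold=4.0):
    """Hard target: 1.0 if the prediction is within `threshold` pixels."""
    return 1.0 if endpoint_error(pred, gt) < threshold else 0.0

def occlusion_label(fwd, bwd_at_target, threshold=2.0):
    """Forward-backward check for one pixel p.

    fwd:           forward flow at p.
    bwd_at_target: backward flow sampled at p + fwd.
    For a visible pixel the two roughly cancel; a large residual suggests
    the pixel is occluded in the second frame.
    """
    residual = math.hypot(fwd[0] + bwd_at_target[0], fwd[1] + bwd_at_target[1])
    return 1.0 if residual > threshold else 0.0

good = confidence_label(pred=(1.0, 1.0), gt=(0.0, 0.0))   # EPE about 1.41 px
bad = confidence_label(pred=(3.0, 4.0), gt=(0.0, 0.0))    # EPE exactly 5 px
visible = occlusion_label(fwd=(10.0, 0.0), bwd_at_target=(-10.0, 0.5))
occluded = occlusion_label(fwd=(10.0, 0.0), bwd_at_target=(3.0, 0.0))
```

Both labels flip abruptly at their thresholds, which is why several of the open questions ask about threshold sensitivity and about softer alternatives.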

Practical Applications

Immediate Applications

Below are actionable, near-term uses that can be deployed with current hardware and software stacks, leveraging FlowIt’s global optimal-transport matching, confidence/occlusion maps, and guided refinement.

- Optical-flow upgrade in perception stacks for autonomous driving and ADAS (Automotive, Robotics)

- What: Replace existing flow modules (e.g., RAFT-like) with FlowIt (M/L/XL) to improve large-displacement handling, occlusions, and textureless regions; use confidence and occlusion maps to gate downstream tracking, planning, and risk assessment.

- How to deploy/workflow: Export FlowIt (PyTorch→ONNX/TensorRT), integrate into perception graph as a “flow+confidence+occlusion” node; route low-confidence regions to fallback estimators or trackers; log occlusion masks for hazard detection.

- Assumptions/Dependencies: GPU or edge-accelerator availability; latency constraints (smaller “S/M” variants for embedded use); domain-checking for night/weather (may need fine-tuning); safety certification for on-vehicle use.

- Post-hoc traffic analytics and safety studies from video (Public sector, Smart cities)

- What: Analyze multi-camera traffic flows, near-miss events, and pedestrian/cyclist trajectories; confidence maps flag unreliable regions (e.g., heavy occlusion) for analyst review.

- How to deploy/workflow: Batch-process city CCTV or dashcam feeds; aggregate flow fields into density/velocity heatmaps; triage segments by low confidence for manual verification.

- Assumptions/Dependencies: Video access and privacy policies; compute cluster for large-scale processing; calibration if converting flow to physical units.

- VFX, video editing, and post-production retiming/inpainting (Media software)

- What: Use more robust flow for frame interpolation, slow motion, motion-based deblurring, and video inpainting; occlusion masks directly improve blending and hole filling.

- How to deploy/workflow: Package FlowIt (S/M) as a plugin for editors/compositors (e.g., After Effects, Nuke, DaVinci Resolve); swap into existing VFI pipelines as a drop-in replacement for current flow backends.

- Assumptions/Dependencies: Workstation GPU memory; export to C++/CUDA/ONNX for integration; license compatibility for commercial tools.

- AR/VR reprojection with dynamic occlusion handling (XR, Software)

- What: Use confidence-aware flow to stabilize reprojection in dynamic scenes (moving objects, partial occlusions) and to support occlusion-aware compositing in mixed reality.

- How to deploy/workflow: Integrate FlowIt (S) as a background process for headset or mobile app; use occlusion masks to gate compositing; fall back to inertial-based reprojection in low-confidence areas.

- Assumptions/Dependencies: Edge compute budget is tight—quantization and model pruning likely needed; thermal constraints; target framerate considerations.

- Robotics and industrial inspection with reflective/transparent parts (Manufacturing, Robotics)

- What: Improve motion tracking of parts on conveyors/robots where reflective/transparent materials break local matching; confidence maps drive quality-control flags and fallback policies.

- How to deploy/workflow: Deploy FlowIt (S/M) on Jetson/edge GPU; integrate confidence thresholds to prevent false-positive detections; pair with 2D detectors for object-level tracking.

- Assumptions/Dependencies: Lighting variability and sensor noise; throughput constraints; possible need for lightweight distillation.

- Sports analytics and broadcast motion tracking (Media, Sports tech)

- What: Track player/ball motions with fewer ID switches under fast movements and occlusions; confidence scoring surfaces marginal clips for manual correction.

- How to deploy/workflow: Batch processing or near-real-time pipelines for replay analysis; fuse flow with detections to stabilize trajectories; export metrics for coaching dashboards.

- Assumptions/Dependencies: Camera zooms and abrupt cuts require shot-change handling; licensing for broadcast feeds.

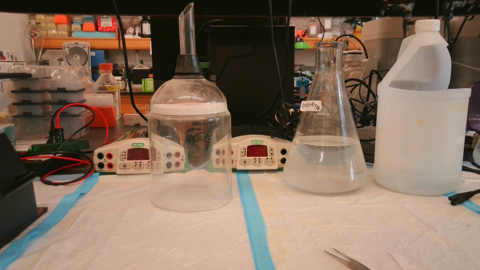

- Scientific and academic workflows for motion analysis (Academia, Research)

- What: Use robust optical flow to analyze physical processes (e.g., fluids, traffic, crowd dynamics) and cell/tissue motion in lab videos; leverage occlusion/confidence for error-aware analysis.

- How to deploy/workflow: Run FlowIt on experimental datasets; threshold by confidence to report uncertainty bounds; share code for reproducible pipelines.

- Assumptions/Dependencies: Domain shift (e.g., microscopy or satellite imagery) may require fine-tuning; resolution and SNR vary widely.

- Video compression R&D: motion pre-estimation (Software, Media infra)

- What: Use FlowIt to guide block-based motion search or generate hierarchical motion hints to reduce bitrate in R&D codecs; ignore low-confidence regions or code them differently.

- How to deploy/workflow: Couple FlowIt with encoder pre-analysis stage to seed motion vectors; evaluate RD-curves versus baseline motion search.

- Assumptions/Dependencies: Not a drop-in to standards-compliant encoders; compute overhead must be amortized; currently suited for research prototyping.

- Dataset bootstrapping and annotation assistance (Industry/Academia)

- What: Generate pseudo-labels for motion/occlusion to accelerate training of trackers, segmenters, and scene-flow models; confidence maps filter unreliable labels.

- How to deploy/workflow: Run FlowIt across unlabeled corpora; keep high-confidence pixels as training targets; optionally human-in-the-loop for low-confidence cases.

- Assumptions/Dependencies: Careful threshold calibration; bias from synthetic pretraining; long-tail phenomena still require manual QA.
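Several of the workflows above come down to the same pattern: keep flow where confidence is high and route the rest elsewhere (a fallback estimator, a human reviewer, or simply the discard pile). A minimal sketch of that gating follows; the function name and the `min_conf` value of 0.8 are hypothetical and would need calibration per application.

```python
def split_by_confidence(flow, conf, min_conf=0.8):
    """Separate pixels into trusted pseudo-labels and pixels needing review.

    flow: list of (dx, dy) vectors; conf: matching list of confidences.
    Returns (targets, review, coverage): a dict of trusted index -> flow,
    the list of low-confidence indices, and the fraction of pixels kept.
    """
    keep = [i for i, c in enumerate(conf) if c >= min_conf]
    review = [i for i, c in enumerate(conf) if c < min_conf]
    targets = {i: flow[i] for i in keep}
    coverage = len(keep) / len(conf) if conf else 0.0
    return targets, review, coverage

flow = [(1.0, 0.0), (1.2, 0.1), (9.0, -3.0), (1.1, 0.0)]
conf = [0.95, 0.85, 0.30, 0.79]
targets, review, coverage = split_by_confidence(flow, conf)
```

Tracking `coverage` alongside the kept labels makes the trade-off visible: a stricter threshold yields cleaner targets but leaves more of each frame for fallback handling or manual QA.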

Long-Term Applications

These opportunities will benefit from additional research, engineering, or scaling (e.g., real-time constraints, certification, or domain adaptation).

- Real-time on-vehicle deployment for autonomy (Automotive)

- Potential: Replace or complement traditional motion estimation with global, confidence-aware flow at high FPS on automotive-grade hardware; propagate confidence into planning safety envelopes.

- Tools/Products/Workflows: TensorRT-optimized FlowIt with mixed precision, pruning, and tiling; asynchronous execution with other perception modules.

- Assumptions/Dependencies: Deterministic latency and memory bounds; ISO 26262 compliance; robust performance in extreme weather/night; multi-frame extensions for temporal stability.

- Mobile and AR glasses deployment (XR, Consumer devices)

- Potential: On-device flow for live AR occlusion and reprojection with energy-efficient models; dynamic scene understanding in everyday apps.

- Tools/Products/Workflows: Highly compressed FlowIt (S) variants with knowledge distillation and hardware-aware NAS; mobile NN accelerators.

- Assumptions/Dependencies: Tight power budgets; vendor-specific SDKs; perceptual quality metrics to guide trade-offs.

- Medical video motion tracking (Healthcare)

- Potential: Robust motion estimation in ultrasound, endoscopy, and surgical video, with occlusion/confidence maps guiding clinical decision-support and tool tracking.

- Tools/Products/Workflows: Domain-adapted training with curated medical datasets; integration into PACS/OR software; uncertainty-aware overlays.

- Assumptions/Dependencies: Regulatory approval; privacy; significant domain shift vs. natural images; real-time and safety constraints.

- Satellite/meteorology motion nowcasting (Energy, Public sector)

- Potential: Estimate cloud/wind fields from satellite imagery with large displacements; confidence maps feed data assimilation and uncertainty quantification.

- Tools/Products/Workflows: Multi-scale tiling for very large frames; coupling with geospatial calibration to convert pixels to physical units; integration with nowcasting pipelines.

- Assumptions/Dependencies: Spectral band differences and noise characteristics; validation against physical models; compute at scale.

- Codec standards and occlusion-aware video coding (Media standards)

- Potential: Standardized “optical-flow side info” and confidence/occlusion maps to guide encoding decisions and improve compression efficiency.

- Tools/Products/Workflows: Proposals to standards bodies (e.g., JVET); hybrid encoders using learned motion hints; RD-optimized confidence gating.

- Assumptions/Dependencies: Industry consensus; complexity targets; hardware decoder impact.

- Dynamic 3D reconstruction and scene flow for digital twins (AEC, Robotics)

- Potential: Fuse FlowIt with depth/stereo to compute dense scene flow for dynamic digital twins, robot interaction, and simulation.

- Tools/Products/Workflows: Joint training with depth/pose nets; multi-frame FlowIt extensions; use confidence to weight fusion.

- Assumptions/Dependencies: Accurate camera calibration; synchronization; robustness to rolling shutter and HDR.

- Event-camera and multi-sensor fusion (Advanced robotics)

- Potential: Extend FlowIt’s global matching and confidence propagation to event streams and cross-modal (RGB+LiDAR) motion estimation in high-speed, low-light settings.

- Tools/Products/Workflows: Event-to-frame hybrid pipelines; cross-modal cost volumes; confidence-informed fusion.

- Assumptions/Dependencies: New training datasets; algorithmic changes for asynchronous data; sensor alignment.

- Crowd monitoring and safety policy analytics (Public safety)

- Potential: Flow-based density and motion patterns to support real-time crowd management and post-event analysis with uncertainty measures for operational decisions.

- Tools/Products/Workflows: Edge-to-cloud processing; dashboards highlighting low-confidence regions for human oversight.

- Assumptions/Dependencies: Privacy-preserving deployment; ethical frameworks; scalability during peak events.

- Foundation-model pretraining for correspondence tasks (Academia, AI platforms)

- Potential: Incorporate FlowIt’s optimal-transport matching and confidence-guided refinement into large-scale pretraining for generic correspondence (optical flow, stereo, tracking).

- Tools/Products/Workflows: Multi-task datasets; shared backbones with task-specific heads; curriculum including occlusion/confidence supervision.

- Assumptions/Dependencies: Compute and data scale; benchmarking and transfer protocols.

- Productized C++/CUDA library with hardware kernels (Software infra)

- Potential: A hardened library exposing flow+confidence+occlusion APIs with vendor-tuned kernels for GPUs/NPUs.

- Tools/Products/Workflows: ABI-stable SDK; plugins for OpenCV/FFmpeg; CI for determinism and regression.

- Assumptions/Dependencies: Ongoing maintenance; cross-platform support; licensing.

Notes on feasibility (general):

- Model variants enable speed/accuracy trade-offs; S/M are better for edge, L/XL for servers.

- Confidence thresholds and calibration are critical to avoid over/under-confidence in downstream decisions.

- Paper benchmarks show strong zero-shot generalization on Sintel, Spring, and LayeredFlow, but mission-critical domains (medical, satellite, night driving) will require domain adaptation and rigorous validation.

- Memory/compute cost of 4D correlation volumes at 1/4 resolution may require tiling and optimization for high-resolution or embedded deployments.
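The last note can be made concrete with a back-of-the-envelope calculation. The sketch assumes the correlation volume is stored densely in 32-bit floats with one value per pair of 1/4-resolution positions; whether FlowIt actually materializes the full volume this way is not stated here, so treat the numbers as an upper-bound illustration.

```python
def correlation_volume_bytes(height, width, downscale=4, bytes_per_value=4):
    """Bytes needed for a dense all-pairs volume at reduced resolution.

    Assumes one value per (source position, target position) pair and
    bytes_per_value bytes each (4 for float32). Integer division models
    the downscaled feature-map size.
    """
    positions = (height // downscale) * (width // downscale)
    return positions * positions * bytes_per_value

sintel = correlation_volume_bytes(436, 1024)     # Sintel-sized frame
full_hd = correlation_volume_bytes(1080, 1920)   # 1080p
ultra_hd = correlation_volume_bytes(2160, 3840)  # 4K
```

Under these assumptions a Sintel-sized frame needs about 3.1 GB, 1080p about 67 GB, and 4K about 1.07 TB (decimal units). The cost grows with the square of the pixel count, so quadrupling the pixels multiplies memory by sixteen, which is why tiling or sparse alternatives come up for high-resolution use.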

Glossary

- 4D correlation volume: A four-dimensional tensor storing pairwise matching scores between all pixels in two feature maps across both spatial dimensions. "A 4D correlation volume is constructed using the resolution features"

- 4D probability map: A four-dimensional tensor of probabilities over all pixel-to-pixel matches inferred from optimal transport on the correlation volume. "optimal transport is applied to produce a 4D probability map."

- AdamW optimizer: A variant of the Adam optimizer that decouples weight decay from the gradient-based update for better generalization. "FlowIt is implemented in PyTorch and trained with the AdamW optimizer"

- Adaptive Gated Fusion: A mechanism for cross-scale information exchange that learns to adaptively combine features from different resolutions. "enabling cross-scale interaction through an Adaptive Gated Fusion mechanism"

- all-pairs correlation volume: A full correlation tensor containing similarities between every pair of feature locations from two images. "casting flow estimation into an optimization-inspired iterative process guided by an all-pairs correlation volume"

- axis-wise refinement: A strategy that refines the horizontal and vertical components of flow independently rather than jointly. "this axis-wise refinement strategy is critical for achieving high performance."

- confidence-aware refinement module: A refinement stage that uses estimated confidence and occlusion to guide propagation of reliable motion into uncertain regions. "we introduce a confidence-aware refinement module that jointly estimates occlusion masks and confidence maps."

- confidence-guided aggregation: An aggregation function that uses confidence to steer how flow information is pooled from reliable neighborhoods. "Φ is a confidence-guided aggregation function, implemented as a UNet."

- confidence map: A per-pixel measure of certainty in the predicted flow, often derived from matching probabilities. "The confidence of the flow prediction for a given pixel is calculated as the sum of the matching probabilities within the local window"

- convolutional GRU: A gated recurrent unit adapted to convolutional operations for iterative refinement of dense predictions. "applies a convolutional GRU, similar to RAFT"

- convex combination: A weighted sum of neighboring values with non-negative weights that sum to one, used here for upsampling. "each high-resolution flow vector is expressed as a convex combination of its corresponding neighborhood"

- convex upsampling: An upsampling technique that reconstructs high-resolution predictions as convex combinations of coarse neighbors. "we employ convex upsampling to obtain full-resolution predictions."

- cost volume: A tensor that stores matching costs (or similarities) over a search window for each pixel to facilitate correspondence. "introducing a correlation layer used to compute a cost volume"

- coarse-to-fine spatial pyramid: A multi-scale processing framework that estimates motion from low to high resolutions to handle large displacements. "introduced the coarse-to-fine spatial pyramid"

- dustbin strategy: An optimal transport trick that allocates unmatched or occluded pixels to a special “dustbin” to handle missing correspondences. "We adopt the standard dustbin strategy to account for occlusions and unmatched regions."

- endpoint error (EPE): A standard optical flow metric measuring the Euclidean distance between predicted and ground-truth flow vectors. "The ground-truth confidence map is defined as an indicator function selecting pixels whose endpoint error is below 4 pixels"

- entropy-regularized optimal transport: An optimal transport formulation with entropy regularization that yields smooth, computationally efficient solutions via Sinkhorn iterations. "we employ entropy-regularized optimal transport and solve it using the Sinkhorn algorithm"

- Feature Pyramid Network (FPN): A network that builds a multi-scale feature hierarchy to capture both low- and high-level information. "FlowIt extracts multi-scale features from images using a CNN encoder followed by a Feature Pyramid Network (FPN)."

- forward–backward consistency check: A method to infer occlusions by verifying that forward and backward flows are consistent. "obtained via a forward–backward consistency check"

- global matching: A correspondence strategy that searches over the entire image pair to enforce long-range consistency. "we cast correspondence estimation as a global matching problem"

- hierarchical transformer: A transformer architecture that processes features across multiple scales to capture global context and long-range dependencies. "relies on a hierarchical transformer design that facilitates rich context sharing"

- logit space: The pre-sigmoid domain used for stable accumulation of updates to probabilities or masks. "their respective residual updates are accumulated in the logit space"

- marginal sum: The sum over a subset of dimensions (e.g., all target candidates) of a probability tensor, used here to gauge occlusion. "derived as marginal sum over all target candidates"

- Multi-Resolution Transformer (MRT): A transformer module that processes and fuses multi-scale features in parallel for contextual refinement. "processed with one or more Multi-Resolution Transformer (MRT) blocks."

- occlusion map: A per-pixel estimate indicating whether a pixel’s correspondence is visible in the target image. "The initial occlusion map is derived"

- occlusion mask: A binary or probabilistic mask indicating occluded regions that should not contribute to flow supervision or refinement. "jointly estimates occlusion masks and confidence maps."

- optimal transport: A framework for computing correspondences by transporting mass between distributions under constraints, here used for flow initialization. "we formulate our initial flow extraction from the cost volume as an Optimal Transport problem"

- probabilistic matching tensor: A probability tensor over all candidate matches between two images produced by the optimal transport solver. "The optimal transport solver produces a probabilistic matching tensor"

- probability mass: The cumulative probability assigned by a probabilistic model to certain outcomes, used to assess matching confidence. "the transport plan concentrates significant probability mass on the corresponding locations"

- probability-weighted centroid: An estimate of a target location computed as the average of candidate coordinates weighted by their match probabilities. "We then compute the target coordinate as the probability-weighted centroid"

- Sinkhorn algorithm: An iterative matrix/vector normalization procedure used to solve entropy-regularized optimal transport efficiently. "solve it using the Sinkhorn algorithm"

- transport plan: The optimal assignment (probability) distribution specifying how mass is moved from source to target in optimal transport. "produces a globally consistent transport plan"

- UNet: An encoder–decoder architecture with skip connections widely used for dense prediction and aggregation. "implemented as a UNet."

- warping mechanism: Iteratively transforming one image toward another using current flow estimates to reduce residual motion. "coupled with a warping mechanism"

- zero-shot generalization: The ability of a model to perform well on datasets or domains it was never trained on. "establishing new state-of-the-art cross-dataset zero-shot generalization performance"
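As a small illustration of the "logit space" entry above, the sketch below accumulates residual updates to a probability (such as a confidence or occlusion value) before the sigmoid rather than after it. The function names and the clamping constant are assumptions; the point is only that the running value always maps back to a valid probability.

```python
import math

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def logit(p, eps=1e-6):
    """Inverse of sigmoid, with p clamped away from 0 and 1."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))

def accumulate_in_logit_space(initial_prob, residuals):
    """Apply additive updates before the sigmoid; return the probability
    after each update."""
    z = logit(initial_prob)
    history = []
    for r in residuals:
        z += r
        history.append(sigmoid(z))
    return history

history = accumulate_in_logit_space(0.9, [2.0, 2.0, 2.0])
```

Adding the same three updates directly to the probability would give 2.9, 4.9, and 6.9, which are not valid probabilities. In logit space the value keeps moving toward 1 without ever reaching it.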
