RealMaster: Lifting Rendered Scenes into Photorealistic Video

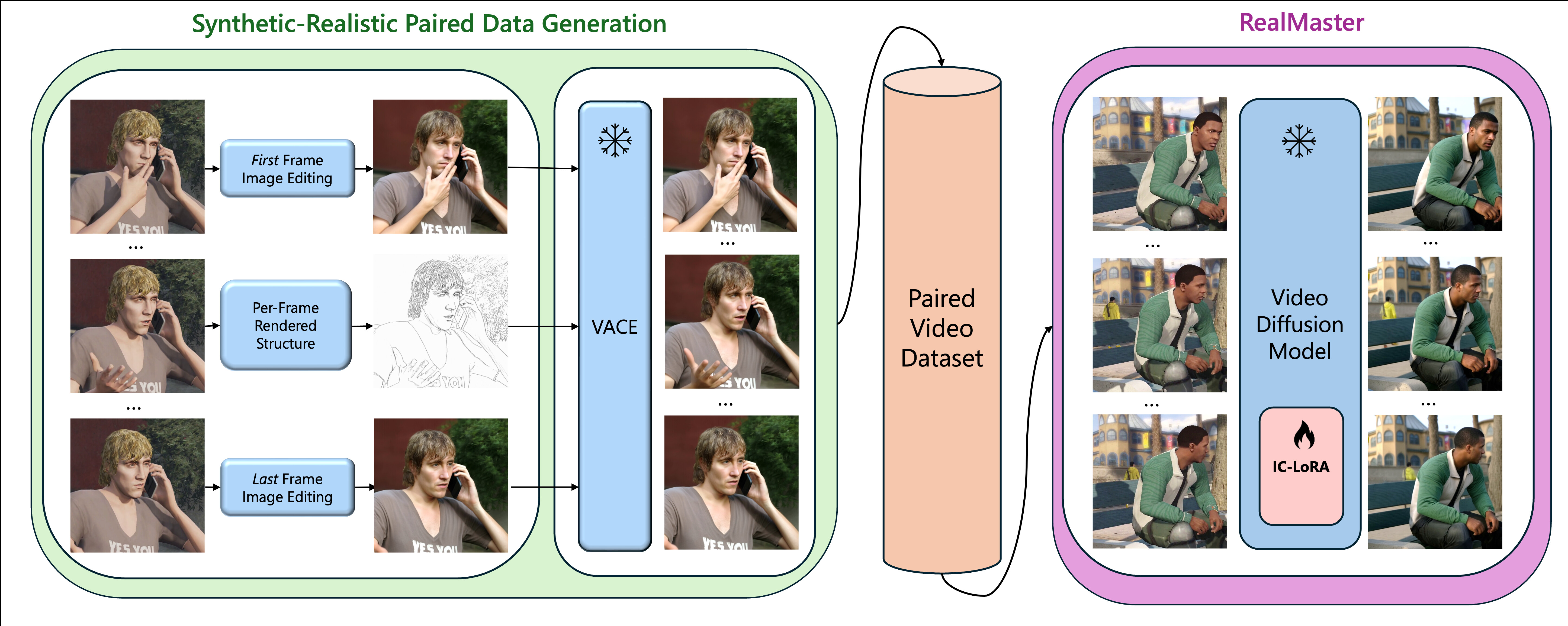

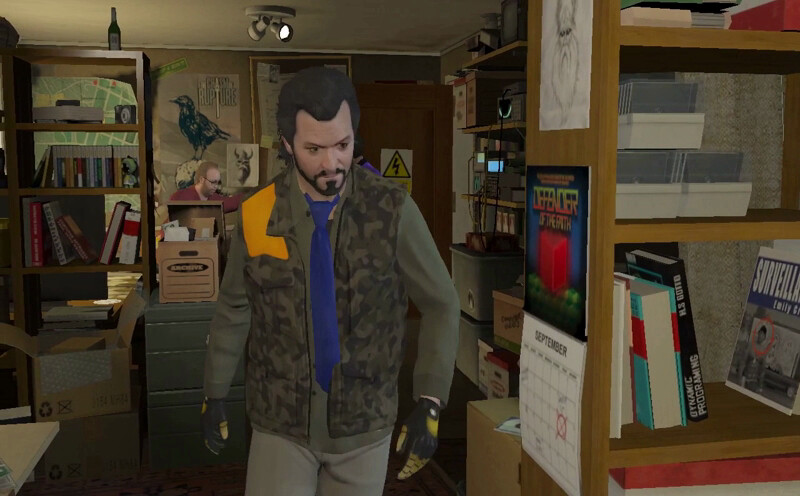

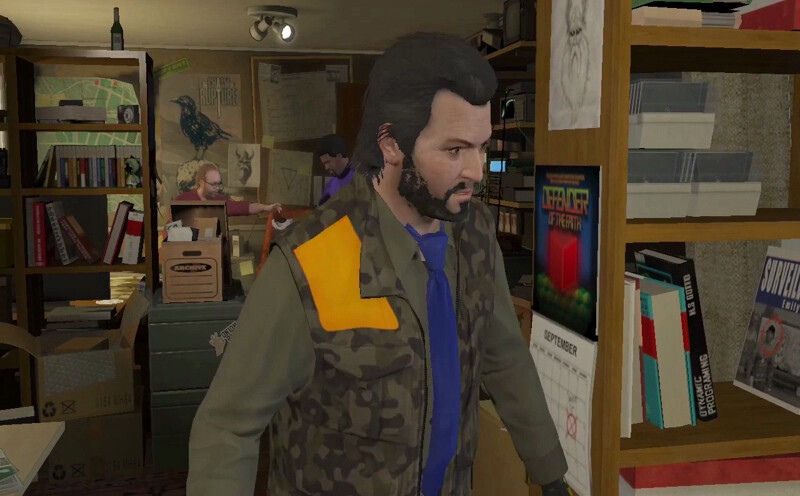

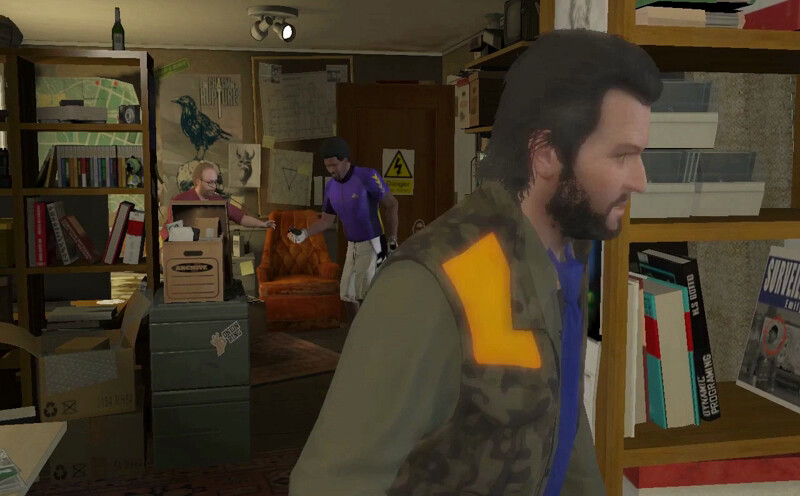

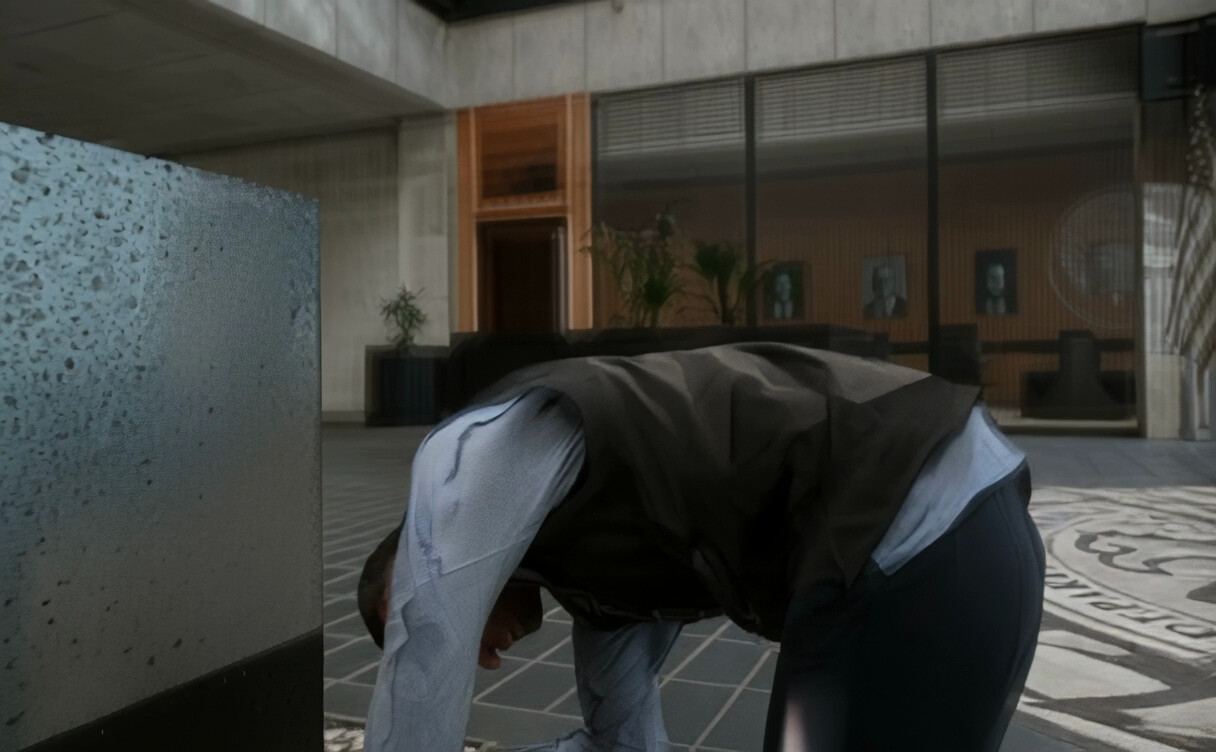

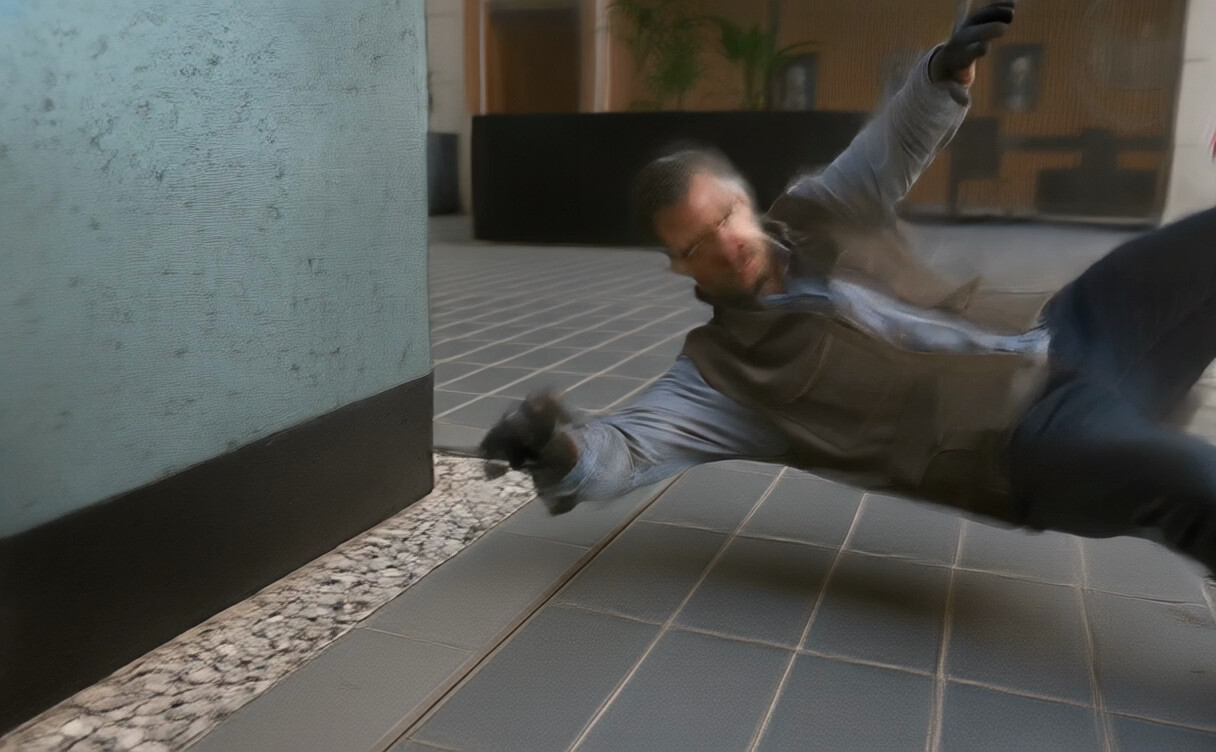

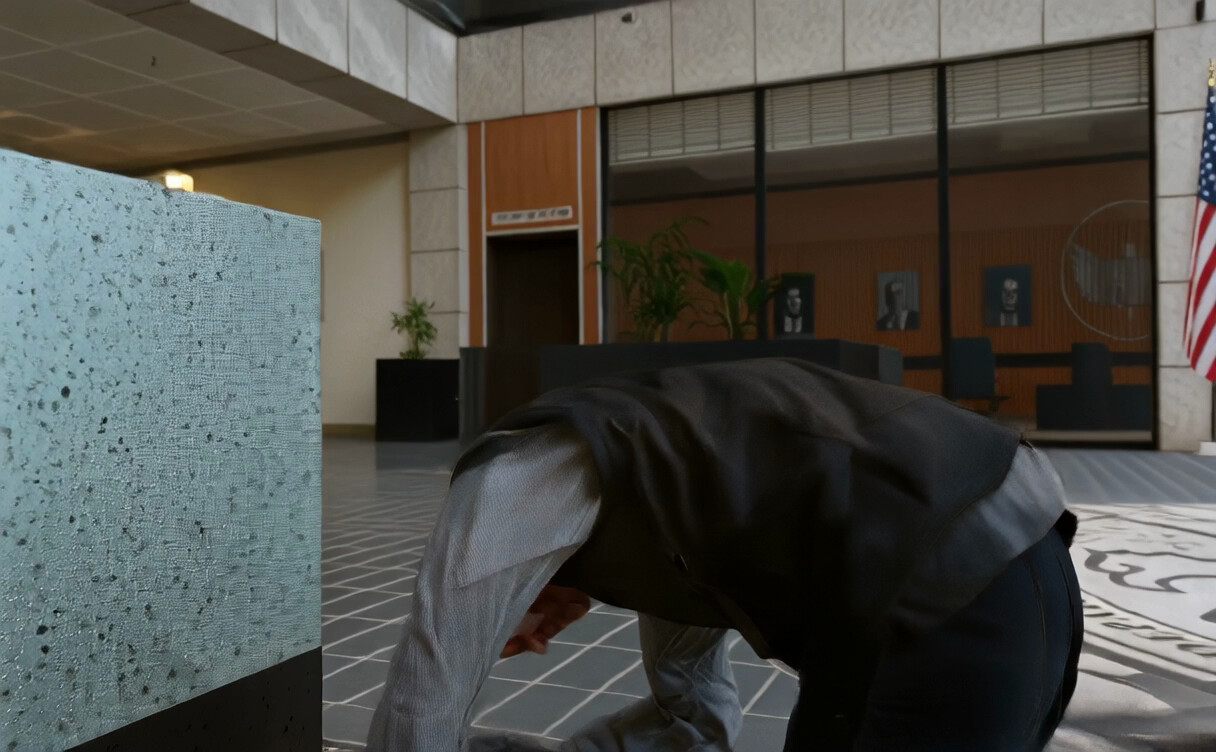

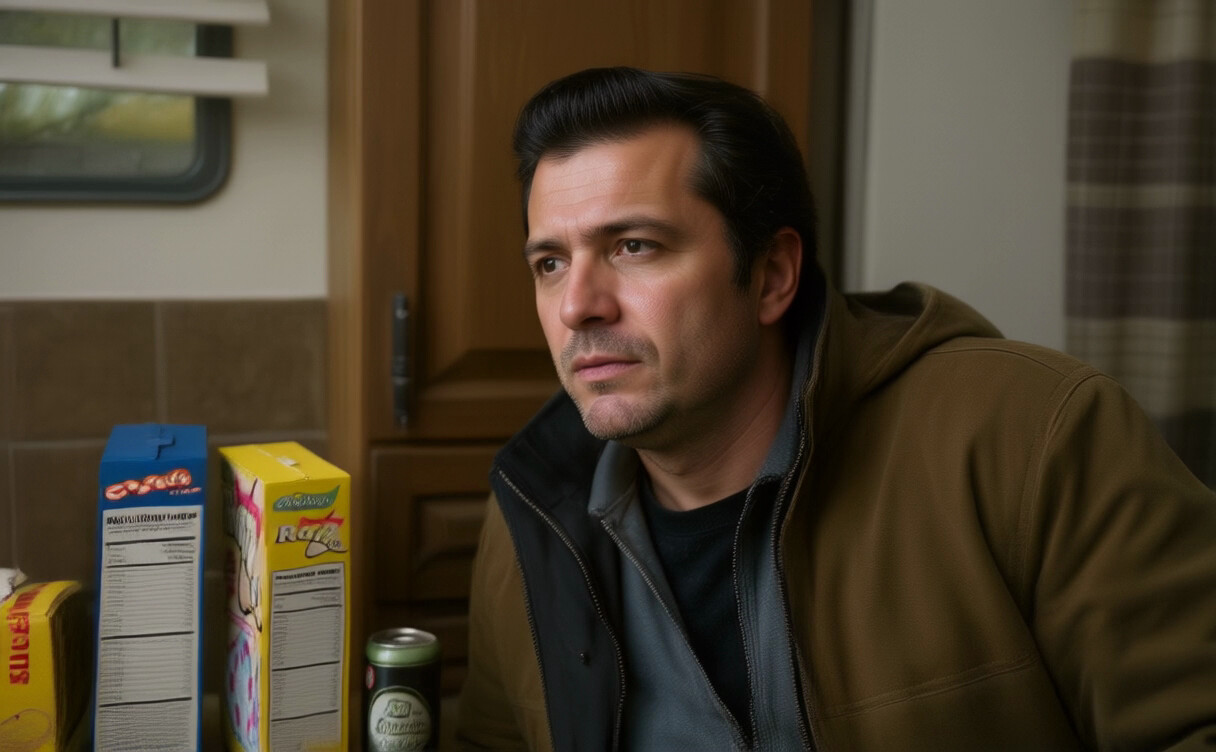

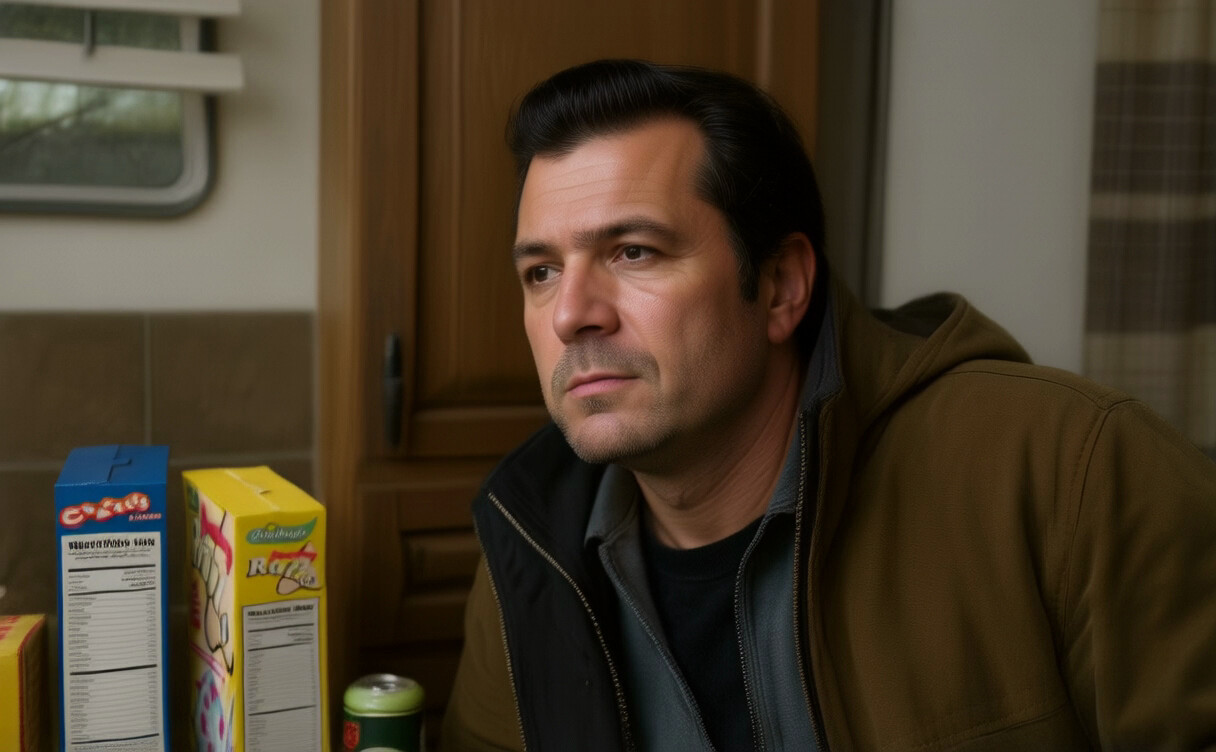

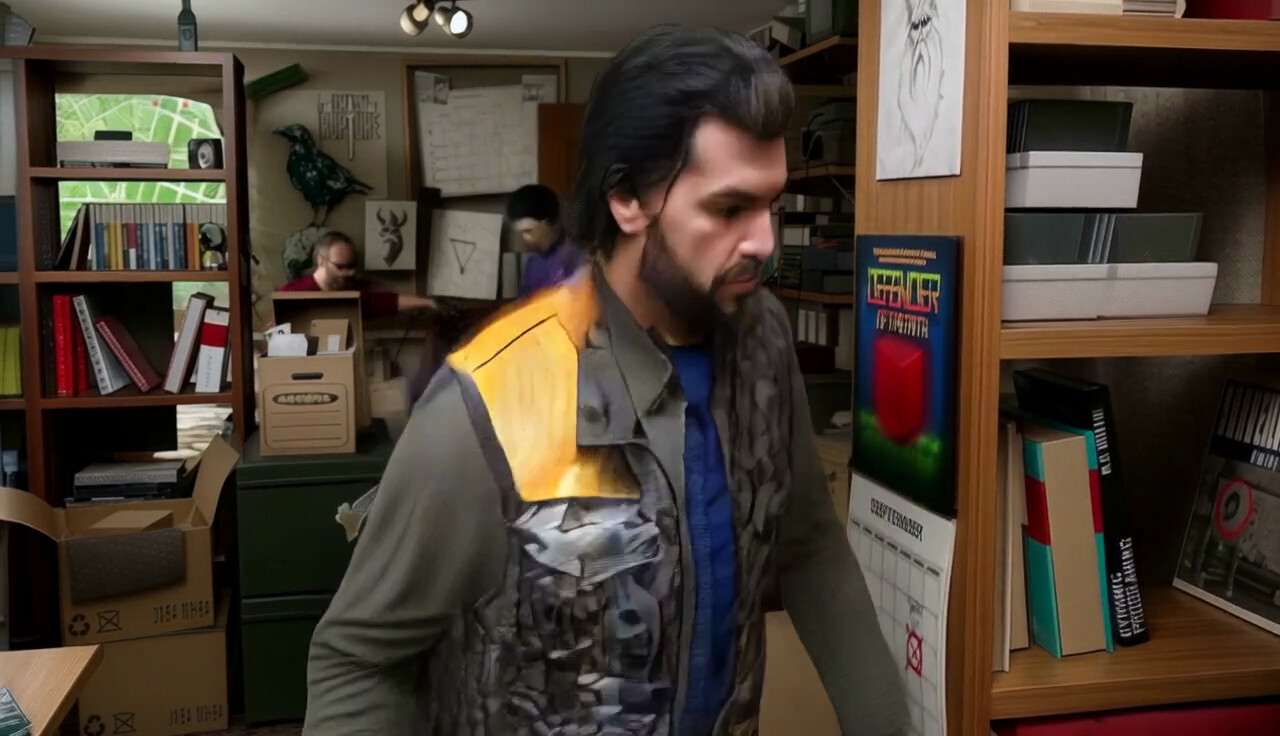

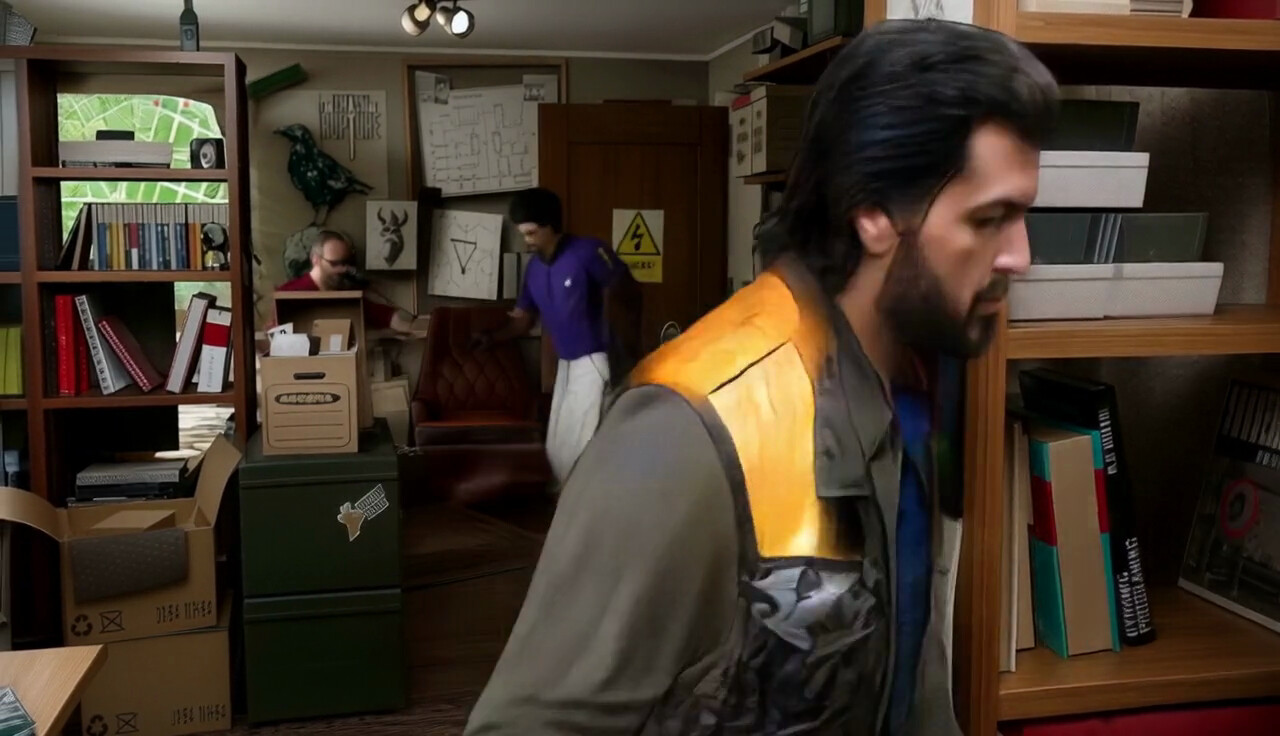

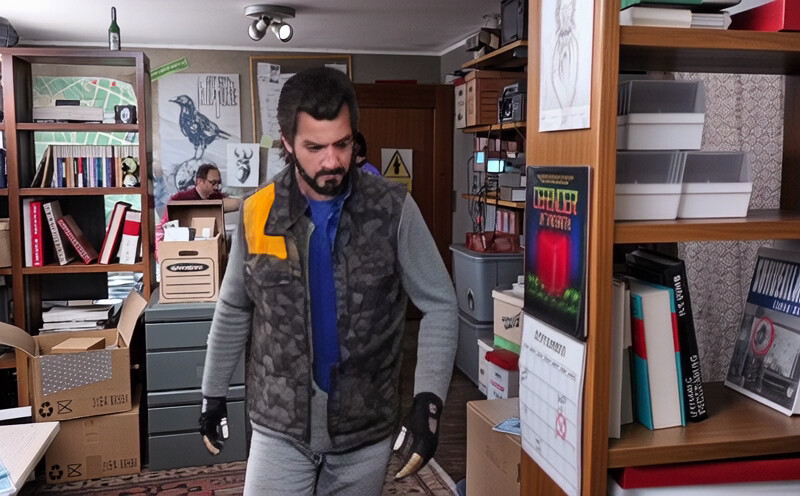

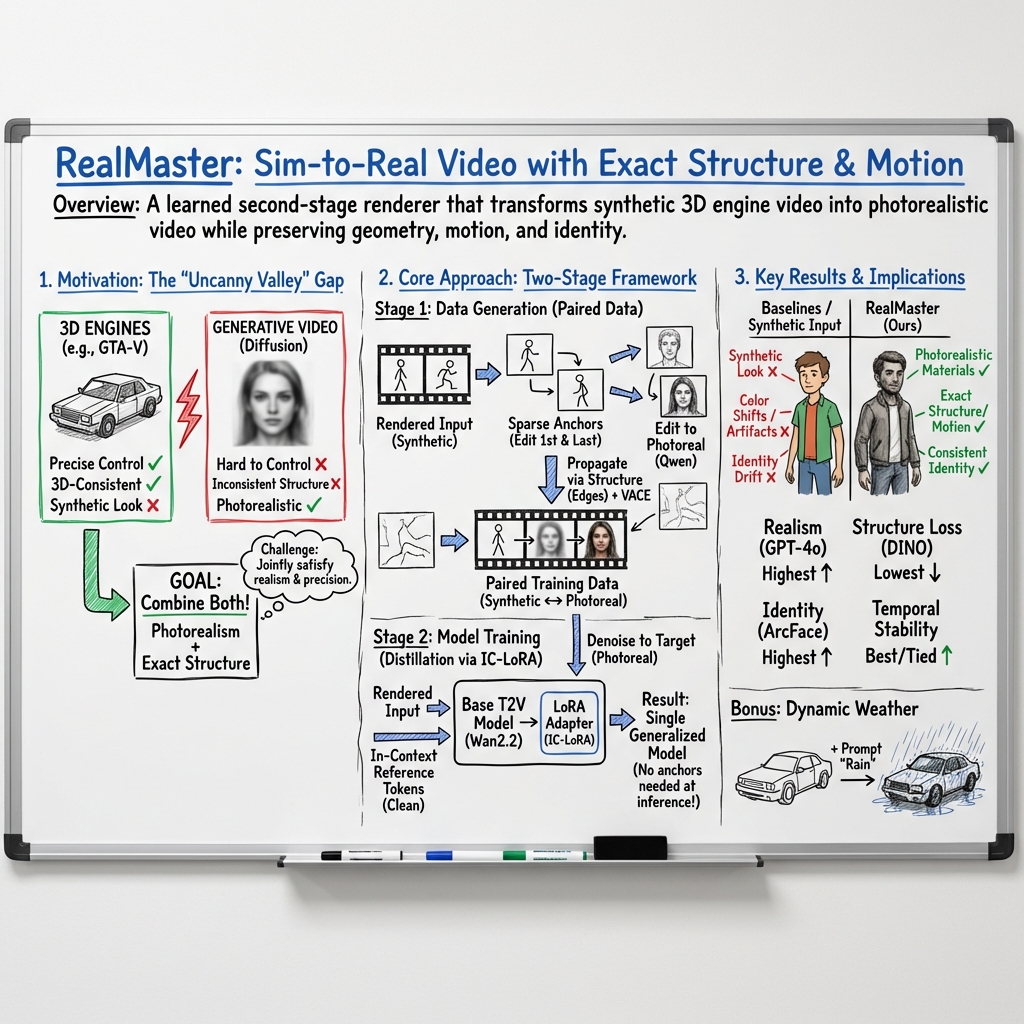

Abstract: State-of-the-art video generation models produce remarkable photorealism, but they lack the precise control required to align generated content with specific scene requirements. Furthermore, without an underlying explicit geometry, these models cannot guarantee 3D consistency. Conversely, 3D engines offer granular control over every scene element and provide native 3D consistency by design, yet their output often remains trapped in the "uncanny valley". Bridging this sim-to-real gap requires both structural precision, where the output must exactly preserve the geometry and dynamics of the input, and global semantic transformation, where materials, lighting, and textures must be holistically transformed to achieve photorealism. We present RealMaster, a method that leverages video diffusion models to lift rendered video into photorealistic video while maintaining full alignment with the output of the 3D engine. To train this model, we generate a paired dataset via an anchor-based propagation strategy, where the first and last frames are enhanced for realism and propagated across the intermediate frames using geometric conditioning cues. We then train an IC-LoRA on these paired videos to distill the high-quality outputs of the pipeline into a model that generalizes beyond the pipeline's constraints, handling objects and characters that appear mid-sequence and enabling inference without requiring anchor frames. Evaluated on complex GTA-V sequences, RealMaster significantly outperforms existing video editing baselines, improving photorealism while preserving the geometry, dynamics, and identity specified by the original 3D control.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Explaining “RealMaster: Lifting Rendered Scenes into Photorealistic Video”

Overview

This paper is about making computer-made videos (like those from video games) look truly real while still keeping everything exactly where it should be. Think of it like taking a high-quality video game clip and running it through a smart “reality filter” that makes the people, materials, and lighting look like real life—without messing up the scene’s layout, timing, or character identities.

Key Objectives

The researchers aim to solve a tricky problem called “sim-to-real”:

- Keep the structure exactly the same: objects stay in the same places, characters move the same way, and the timing is unchanged.

- Make the look realistic: materials (like skin, metal, fabric), textures, lighting, and tiny details should feel like real-world video, not a game.

These two goals usually fight each other: if you change the look too much, you risk breaking the scene’s structure; if you keep the structure perfectly, the video often still looks fake. The paper tries to balance both at once.

How the Method Works (In Simple Terms)

The method, called RealMaster, works in two main stages. Here are the ideas in everyday language:

- Build smart “before-and-after” training examples from game videos

- Keyframes as anchors: Take the first and last frames of a game video and ask a strong image editor to make them look photorealistic. These act like “style anchors” that show what the whole video should look like.

- Fill in the middle using outlines: For all the frames in between, the system uses the video’s “edge maps” (like coloring book outlines of shapes and boundaries) to keep the structure correct. It then “propagates” the realistic look from the anchor frames across the whole video, guided by those outlines.

- The result is a matched pair: the original game video and a realistic version that matches its movements and layout. These pairs are used to teach the model.

- Train a lightweight add-on to a big video AI model

- Diffusion model: This is an AI that learns to turn random noise into a video, step by step, until it looks right. You can think of it like sculpting a video out of static.

- LoRA adapter: This is a small “plugin” that fine-tunes the big model without retraining the whole thing. It teaches the model the “sim-to-real” trick.

- After training, the model can take a new game video and instantly turn it into a realistic one—no need for special anchor frames during use.

Simple analogies for the technical bits:

- Edge maps: Like tracing the outlines in a coloring book so your color stays inside the lines.

- Keyframe propagation: A pro artist colors the first and last pages; a smart assistant copies that style to every page in between, using the outlines to avoid mistakes.

- Diffusion models: Start with TV static and gradually refine it into the final video.

- LoRA: A small upgrade that gives the big model a new skill without redoing everything.

Main Findings and Why They Matter

On tough video sequences from GTA-V (a game with complex scenes, fast motion, and many characters), RealMaster:

- Makes videos look much more realistic (better materials, lighting, and fine details).

- Keeps the original structure, movement, and character identity intact.

- Produces smoother, more consistent videos over time (less flickering or weird changes between frames).

- Beats other popular video editing tools that either don’t make things realistic enough or change the scene too much.

It also shows promising generalization:

- Works on other simulators (like CARLA, a driving simulator) even though it was trained only on GTA-V, which means it learned a general “make it real” skill instead of just memorizing one game’s look.

Extra perks:

- You can add effects like rain or snow just by changing a short text prompt, getting realistic wet roads, raindrops, and reflections—things that are hard and time-consuming to simulate in 3D engines.

Implications and Potential Impact

- Better content creation: Game studios, filmmakers, and researchers could create realistic videos faster and cheaper by combining the control of 3D engines (where you can place and animate everything exactly) with the realism of powerful AI models.

- Training data for AI: Realistic-looking videos from simulations can help train self-driving cars, robots, and other AI systems safely and at scale.

- New workflows: The method suggests a future where a 3D engine handles structure and animation, and an AI “second-stage renderer” handles realism—like using two tools that are each best at their job.

Limitations and Future Directions

- Still limited by the image editor’s realism: If the first/last frame edits aren’t perfect, the final video can still look a bit off.

- Doesn’t fix unrealistic motion: If a character moves in a strange, “gamey” way, the system generally keeps that motion—it focuses on making the appearance realistic, not rewriting the animation.

- Future goals: Make it work in real time (for streaming or interactive use) and teach it to improve motion realism (so it can refine stiff or unnatural movements, not just the look).

In short, RealMaster is like a bridge that connects the precise control of video game scenes with the beautiful realism of real-world footage—while keeping everything consistent from start to finish.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper, intended to guide future research.

- Lack of real paired supervision: training pairs are synthesized via first/last-frame image editing plus VACE propagation; no experiments with real captured ground-truth pairs to validate absolute photorealism or geometric fidelity.

- Dependence on anchor image editor quality: overall realism is bounded by the external image-editing model; no exploration of end-to-end alternatives that remove anchor-edit reliance or jointly optimize anchors and propagation.

- Structural conditioning limited to edges: the method does not exploit rich 3D engine outputs (normals, depth, motion vectors, instance IDs, segmentation, material IDs); the impact of these signals on fidelity remains unquantified.

- Sensitivity to edge extraction: the choice of edge detector and its parameters are unspecified; robustness to edge noise, missing edges, or over-detection is not analyzed.

- Motion realism unaddressed: the model does not correct implausible simulated motions (e.g., body dynamics, foot sliding, timing); integrating learned motion priors or physics-informed constraints is left open.

- No 3D/multi-view consistency guarantees: the approach optimizes per-video consistency but does not enforce cross-view consistency for the same scene; geometry-aware or 4D-consistent generation remains unexplored.

- Limited evaluation of mid-sequence object emergence: claims of handling newly appearing objects are qualitative; a systematic benchmark and metrics for such cases are missing.

- Temporal evaluation confounds: SAIL-VOS is upsampled by repeating frames (8→16 fps), which can inflate smoothness/flicker metrics; evaluation on native high-FPS sequences without duplication is needed.

- Generalization beyond GTA-V is only lightly tested: training is on SAIL-VOS (GTA-V); a small demo on CARLA is shown, but broader, diverse engines (Unreal/Unity/Blender), different styles (toon, NPR), and indoor scenes are not evaluated.

- Dataset size and bias: training uses 1,216 clips filtered by ArcFace similarity, potentially biasing toward face-visible, human-centric content; impact on non-face or object-centric scenes is not measured.

- Long-sequence scalability: the backbone’s effective temporal window and performance on minute-long videos or streaming scenarios are not assessed; memory and quality trade-offs for longer contexts remain unknown.

- Inference speed and resource usage: real-time feasibility, latency, and hardware requirements are not reported; the practicality for interactive or on-device applications is unclear.

- Photometric/physical consistency: no mechanisms or metrics ensure physically plausible lighting/shadows/specularities; risks of hallucinated global illumination artifacts are unquantified.

- Color/tone fidelity: the paper lacks metrics for color shifts or tone-mapping fidelity (e.g., ΔE, histogram divergence), despite claims of preserving appearance.

- Identity and structure metrics are narrow: ArcFace focuses on faces; DINO is a generic semantic distance; instance-level identity (non-face), clothing/texture consistency, and per-object geometry preservation are not rigorously evaluated.

- Occlusions and fast motion robustness: failure modes under heavy occlusions, motion blur, or low light are not characterized or quantified.

- Control granularity: the method offers minimal mechanisms for per-object/material control or “no-change” constraints; how to guarantee untouched regions (e.g., UI overlays) remains open.

- Downstream-task utility: there is no assessment of whether translated videos improve performance of perception models (detection, segmentation, tracking) on real data—key for sim-to-real utility claims.

- Anchor selection strategy: only first/last frames are used by default; learned or adaptive anchor placement, anchor density vs. temporal stability trade-offs, and joint optimization are not explored.

- Alternative propagation models: reliance on VACE is a design choice; the method’s sensitivity to the propagation backbone (and to training-free vs. fine-tuned propagators) is not ablated.

- Training objective details: beyond standard denoising, there is no exploration of auxiliary losses (identity, edge/flow consistency, perceptual/temporal constraints) that could strengthen fidelity and stability.

- Robustness to diverse rendering artifacts: performance on stylized shaders, mismatch in anti-aliasing, varying motion blur, rolling shutter, lens distortion, or sensor noise remains unexplored.

- Resolution and aspect-ratio generalization: trained/evaluated at 800×1200 and similar scales; behavior at 1080p/4K, ultra-wide, or vertical formats and the associated memory/quality trade-offs are unreported.

- Composability with 3D pipelines: after translation, correspondence to engine elements (UVs, instance IDs, depth) is lost; methods to preserve or reconstruct these mappings for downstream compositing or interaction are not provided.

- Hallucination and safety risks: there are no safeguards or confidence measures to prevent semantic alterations (e.g., adding/removing objects) in safety-critical settings; failure detection is not addressed.

- Realism assessment limitations: GPT-4o scores may reflect model biases and lack reproducibility; cross-dataset human MOS or psychophysical studies and open, standardized realism benchmarks are absent.

- Multi-modal conditions: the approach does not address audio-visual synchronization effects, exposure control, or camera-specific artifacts; learning sensor/film pipelines is an open direction.

- Ethical/legal considerations: transforming proprietary game content into “photorealistic” outputs may raise licensing and misuse concerns that are not discussed.

Practical Applications

Immediate Applications

The following applications can be deployed today by leveraging RealMaster’s current pipeline (sparse keyframe enhancement + edge-conditioned propagation) and the trained IC‑LoRA sim-to-real model for offline or near–real-time workflows.

- Film, TV, and VFX: Previs-to-photoreal “second-stage renderer”

- Sector: Media/Entertainment, Virtual Production

- What: Convert fast, controllable 3D-engine previz into photorealistic plates while preserving layout, timing, and identity for editorial, dailies, and client review.

- Tools/workflow: Unreal/Unity capture → RealMaster batch translate → conform in NLE (Premiere/Resolve). Optional plug-in for DCCs (Unreal/Unity) or NLEs.

- Assumptions/dependencies: Rights to use engine footage; GPU budget; base diffusion model licenses; animation quality still governs motion realism.

- Game studios: In-engine cinematics and trailers at reduced render cost

- Sector: Gaming/Marketing

- What: Use low-sample, stylized, or real-time captures and lift to photoreal for trailers and cutscenes, maintaining exact camera paths and blocking.

- Tools/workflow: Automated “capture queue → sim-to-real render farm” pipeline; API for build systems (e.g., Jenkins).

- Assumptions/dependencies: Stable engine output (consistent edges), IP compliance for assets, offline latency acceptable.

- Advertising and previsualization: Photoreal storyboards from 3D mockups

- Sector: Advertising/Creative Studios

- What: Turn quick 3D animatics into convincing client-facing videos without heavy lookdev/shader work.

- Tools/workflow: Scene blockout in Unity/Unreal/Cinema4D → RealMaster translate → client iteration loop.

- Assumptions/dependencies: Coarse materials/lighting are acceptable since global appearance is learned; prompt discipline for stylistic nudges.

- Architecture and real estate: Walkthroughs that look filmed on-site

- Sector: AEC, Real Estate

- What: Lift BIM/engine walkthroughs to photoreal tours while preserving floor plans and camera paths.

- Tools/workflow: From Twinmotion/Unreal walkthroughs → RealMaster batch; deploy via web viewer.

- Assumptions/dependencies: Accurate geometry is essential; exterior realism benefits from quality HDRI/skydomes even before lifting.

- E-commerce and product visualization: CAD-to-photoreal video

- Sector: Retail/E-commerce

- What: Animate CAD renders (turntables, usage demos) and translate to photoreal, maintaining dimensions and kinematics.

- Tools/workflow: Keyframe enhancement for first/last frames to set finish/material look → batch propagation; CMS integration.

- Assumptions/dependencies: Surface/material priors may need light prompt guidance; ensure brand color fidelity with QC.

- Data augmentation for autonomous driving and robotics

- Sector: Mobility, Robotics

- What: Generate photoreal training videos from CARLA/GTA-like simulators while preserving structure and dynamics; add weather via prompts.

- Tools/workflow: “Dataset factory” CLI: sim → RealMaster → labels inherited from sim; scripted weather variants (rain/snow/night).

- Assumptions/dependencies: Label transfer valid because geometry/dynamics preserved; verify sensor realism for modalities beyond RGB (depth/LiDAR not covered).

- Academic dataset creation: Paired synthetic↔photoreal video for domain adaptation

- Sector: Academia/Computer Vision

- What: Auto-build paired corpora for sim-to-real research (segmentation, re-ID, tracking) using RealMaster’s pipeline as a teacher.

- Tools/workflow: Open-sourcing pairing scripts; attach per-frame correspondences; include identity/structure metrics (ArcFace/DINO) for curation.

- Assumptions/dependencies: Domain bias (GTA-V heavy) must be documented; data cards required.

- Weather and condition augmentation without new simulation

- Sector: Mobility, Media, Education

- What: Add rain/snow/wet roads and lighting shifts through prompt edits to expand coverage of rare conditions.

- Tools/workflow: Batch inference with prompt sweeps; scenario coverage reports.

- Assumptions/dependencies: Photoreal anchors influence look; extreme phenomena (hail, fog density) may need targeted prompts and QC.

- Safety and skills training content from simulators

- Sector: Industrial Safety, Aviation, Emergency Response, Defense

- What: Make simulator modules look real for trainees (procedures, hazard recognition) while keeping exact scenario timing and layout.

- Tools/workflow: LMS pipeline: sim capture → sim-to-real → SCORM package.

- Assumptions/dependencies: Motion plausibility constrained by simulator; ethics review for hyper-real scenes.

- Creator tools for gameplay highlights

- Sector: Creator Economy, Social Media

- What: Turn selected game clips into “cinematic, real-life” shorts for YouTube/TikTok while keeping gameplay integrity.

- Tools/workflow: Desktop app or cloud service with presets; batch overnight renders.

- Assumptions/dependencies: Terms of service for game IP; compute costs; watermarking recommended.

- Visual QA and compliance checks in post

- Sector: Media Operations, MLOps

- What: Add automated checks for identity and structure preservation (ArcFace/DINO deltas, flicker/motion smoothness) in a production pipeline.

- Tools/workflow: CI step that fails jobs if identity drift or structure deviation exceeds thresholds.

- Assumptions/dependencies: Face detection accuracy; align metrics with creative intent.

- Render-cost and energy reduction via learned second-stage

- Sector: Cloud Rendering, Sustainability

- What: Replace high-spp path tracing with fast rasterized captures followed by sim-to-real, reducing render farm time/energy.

- Tools/workflow: Scheduler computes marginal cost vs. sim-to-real uplift; A/B visual audits.

- Assumptions/dependencies: Accept offline latency; careful QC for fine specular/caustic fidelity.

Long-Term Applications

These rely on further research, engineering for low latency, broader training, or policy infrastructure.

- Real-time “second-stage renderer” in-engine

- Sector: Gaming, Virtual Production

- What: Low-latency sim-to-real overlay during live gameplay or on-set visualization.

- Tools/workflow: Engine plug-in with streaming encoder, partial diffusion steps, control buffers (edges/depth) in real time.

- Assumptions/dependencies: Model distillation, quantization, and temporal caching; powerful GPUs; latency budgets (<50 ms).

- On-camera AR/MR realism matching

- Sector: AR/VR, Mobile

- What: Enhance CG overlays to match real camera lighting/materials instantly.

- Tools/workflow: Mobile-optimized variant; on-device NPU execution with edge cues from SLAM.

- Assumptions/dependencies: Power constraints; safety/occlusion handling; robust generalization to mobile sensors.

- Motion realism correction and learned dynamics

- Sector: Media, Gaming, Robotics

- What: Go beyond appearance to refine implausible animation and micro-gestures while preserving intent.

- Tools/workflow: Joint appearance+motion priors; kinematic constraints; optional physics-in-the-loop.

- Assumptions/dependencies: New training targets with motion ground truth; risk of deviating from authored choreography.

- Photoreal digital twins at city scale

- Sector: Urban Planning, Mobility, Utilities

- What: Present stakeholder videos with lifelike look and weather/time-of-day variability without heavy rendering.

- Tools/workflow: City simulators → batch sim-to-real → scenario explorer dashboard.

- Assumptions/dependencies: Scalability to long sequences; governance for public communications.

- Personalized material/lighting styles at inference

- Sector: Media, Advertising

- What: Brand-consistent photorealization (e.g., signature grade/look) applied on-the-fly.

- Tools/workflow: IC-LoRA style banks; shot-matching toolchain.

- Assumptions/dependencies: Color management and spectral accuracy; rights over style references.

- Simulation-to-reality bridges for AV safety cases

- Sector: Policy/Regulatory, Mobility

- What: Use sim-to-real video to support scenario-based testing evidence for regulators, with traceability to sim states.

- Tools/workflow: Provenance graph linking every frame to sim ground truth; audit pack.

- Assumptions/dependencies: Acceptance by regulators; standardized metrics and documentation.

- Surgical and clinical simulators to realistic training media

- Sector: Healthcare/MedEd

- What: Enhance procedural simulators into realistic videos for training and patient education.

- Tools/workflow: Domain adaptation with medical priors; HIPAA-compliant pipelines.

- Assumptions/dependencies: Ethical guidelines, realism risks, domain-specific base models.

- Insurance and finance risk scenarios

- Sector: Finance/Insurance

- What: Photoreal accident/natural disaster simulations for risk analysis and communication.

- Tools/workflow: Parameterized scenario generator with weather and environment controls.

- Assumptions/dependencies: Clear disclaimers; avoid misleading stakeholders; provenance tooling.

- C2PA/Watermark-native content provenance

- Sector: Policy, Platform Trust & Safety

- What: Embed robust watermarks and C2PA manifests when lifting sim output; detectors for altered media.

- Tools/workflow: Integrated provenance SDK; watermark strength evaluation.

- Assumptions/dependencies: Industry adoption; adversarial robustness of watermarks.

- “Universal dataset factory” across simulators and sensors

- Sector: AI/ML Infrastructure

- What: Cross-simulator sim-to-real generation with consistent labels and multi-sensor alignment.

- Tools/workflow: Plugins for CARLA, AirSim, Isaac; synchronized RGB-depth-LiDAR translators; label transfer validators.

- Assumptions/dependencies: Extending beyond RGB to sensor-consistent realism; calibration fidelity.

- Energy-aware, cost-optimal rendering planners

- Sector: Cloud/Edge Compute, Sustainability

- What: Schedulers pick between high-fidelity renders vs. fast+sim-to-real based on quality/latency/cost constraints.

- Tools/workflow: QoE models tied to metrics (GPT-RS, flicker); autoscaling GPU pools.

- Assumptions/dependencies: Predictive quality models; SLAs for visual fidelity.

Cross-cutting assumptions and dependencies

- Technical

- Requires high-quality, temporally consistent input from a 3D engine; edge maps (or equivalent structural cues) improve fidelity.

- Base diffusion backbone licensing and availability (e.g., Wan/LTX); GPU compute for training/inference; latency is currently offline-oriented.

- Domain bias from training (GTA-V) may require fine-tuning for specialized domains (medical, industrial).

- The method preserves geometry/dynamics but does not “fix” implausible motion unless extended.

- Legal/Policy

- Rights to transform engine footage; adherence to game/studio EULAs; data privacy for any human likenesses.

- Provenance and disclosures to mitigate deepfake risks; recommended watermarking and C2PA manifests.

- Operational

- QC is essential: identity/structure metrics (ArcFace/DINO), temporal consistency checks, and human review for critical uses.

- Color management and brand consistency workflows for commercial outputs.

By treating RealMaster as a learned second-stage renderer, organizations can immediately accelerate content creation and dataset production, while investing in real-time, motion-aware, and provenance-integrated extensions for long-term impact.

Glossary

- 3D consistency: Consistency of scene geometry across frames and views in generated video. "without an underlying explicit geometry, these models cannot guarantee 3D consistency."

- anchor-based propagation strategy: A training data construction approach that edits boundary frames (anchors) and propagates their appearance through the sequence. "we generate a paired dataset via an anchor-based propagation strategy, where the first and last frames are enhanced for realism and propagated across the intermediate frames using geometric conditioning cues."

- ArcFace: A face recognition model that provides embeddings for measuring identity similarity. "we filter out clips whose minimum ArcFace cosine similarity between faces detected in the source and edited videos falls below 0.4."

- autoregressive generation: Sequential generation where each output depends on previous outputs, often used in streaming settings. "the pipeline requires access to both the first and last frames of a sequence, which makes streaming or autoregressive generation impractical."

- clean reference tokens: Unnoised tokens representing the input reference frames used alongside noisy tokens in diffusion transformer training. "we concatenate clean reference tokens from the rendered input video with noisy tokens and optimize the model to denoise toward the corresponding photorealistic target."

- ControlNet: A method to condition diffusion models on spatial control signals (e.g., edges, depth, pose). "ControlNet introduced a paradigm for conditioning image diffusion models on spatial control signals such as depth, edges, and human pose."

- DINO features: Self-supervised vision transformer features used to measure high-level semantic and structural similarity. "We assess structure preservation by measuring the distance between DINO features extracted over all frames of the input and edited videos."

- edge conditioning: Guiding generative models using edge-derived structural cues to preserve layout and motion. "Edge conditioning anchors generation to the inputâs structure and motion, allowing VACE to propagate the keyframe appearance while preserving scene layout and dynamics across intermediate frames."

- edge maps: Image representations of object boundaries used as structural control signals. "Specifically, we extract edge maps from the input video and use VACE to generate the full video"

- egocentric: First-person viewpoint commonly used in driving and wearable-camera datasets. "demonstrating promising results on egocentric driving data."

- exemplar-based approaches: Methods that guide generation using example images or frames provided in context. "exemplar-based approaches use in-context visual examples to guide generation."

- foundation models: Large, pre-trained models with broad capabilities that can be adapted to specific tasks. "Foundation models such as Stable Video Diffusion~\citep{blattmann2023stable}, Gen-2~\citep{esser2023structure}, Lumiere~\citep{bar-tal2024lumiere}, CogVideoX~\citep{yang2024cogvideox}, MovieGen~\citep{polyak2024movie}, Wan~\citep{wan2025wan} and LTX-2~\citep{hacohen2026ltx2} produce high-resolution, cinematic sequences."

- G-buffers: Rendering engine buffers (e.g., depth, normals) that encode per-pixel geometric and material information. "incorporating engine-specific G-buffers, including depth and surface normals, significantly improves geometric grounding in complex sequences."

- IC-LoRA: In-Context LoRA; a LoRA variant that leverages in-context exemplars during generation. "We then train an IC-LoRA on these paired videos to distill the high-quality outputs of the pipeline into a model"

- identity drift: Undesired change of a subject’s identity across edited frames or sequences. "The baselines either alter the original scene content, leading to identity drift and color shifts,"

- LoRA (Low-Rank Adaptation): A parameter-efficient fine-tuning technique that inserts low-rank adapters into large models. "We train a lightweight LoRA adapter that distills our data generation pipeline into a single model for sim-to-real video translation."

- Motion Smoothness: A metric (from VBench) that evaluates the temporal coherence of motion. "Motion Smoothness assesses the coherence of motion over time."

- positional encoding: Encoding of token positions for transformers to capture order and spatial/temporal structure. "sharing positional encoding with the noisy tokens being denoised."

- sim-to-real translation: Transforming synthetic/rendered content into photorealistic imagery while preserving structure and dynamics. "In this work, we present RealMaster, a method for sim-to-real video translation."

- sparse-to-dense propagation: Propagating information from a few edited keyframes to all frames in a sequence. "A central component of our approach is a sparse-to-dense propagation strategy that constructs high-quality training supervision directly from rendered sequences."

- surface normals: Per-pixel vectors perpendicular to surfaces, used to encode geometry in rendering. "incorporating engine-specific G-buffers, including depth and surface normals, significantly improves geometric grounding in complex sequences."

- temporal coherence: Consistent appearance and identity over time in a video. "modifying video content while preserving temporal coherence."

- Temporal Flickering: A metric (from VBench) measuring frame-to-frame visual instability. "Temporal Flickering measures frame-to-frame visual instability, capturing abrupt appearance changes across consecutive frames,"

- text-to-video diffusion model: A diffusion model that generates videos conditioned on text prompts. "We fine-tune an IC-LoRA over a text-to-video diffusion model on the paired data,"

- uncanny valley: The unsettling impression when synthetic visuals are close to realistic but not quite convincing. "often falling into the uncanny valley (see \cref{fig:teaser}, top)."

- VACE: A conditional video generative/editing model that uses reference frames and structural signals. "we utilize VACE~\citep{jiang2025vace}, a video generative model that conditions generation on reference frames and structural signals."

- VBench: A benchmark and metric suite for evaluating video generation quality. "we adopt the Temporal Flickering and Motion Smoothness metrics from VBench~\citep{vbench}."

- video diffusion models: Diffusion-based generative models specialized for producing video sequences. "We present RealMaster, a method that leverages video diffusion models to lift rendered video into photorealistic video"

- zero-shot: Performing a task without task-specific training or paired data. "Recent work~\citep{wang2025zero} explores zero-shot diffusion-based realism enhancement for synthetic videos,"

Collections

Sign up for free to add this paper to one or more collections.