Alignment Whack-a-Mole: Finetuning Activates Verbatim Recall of Copyrighted Books in Large Language Models

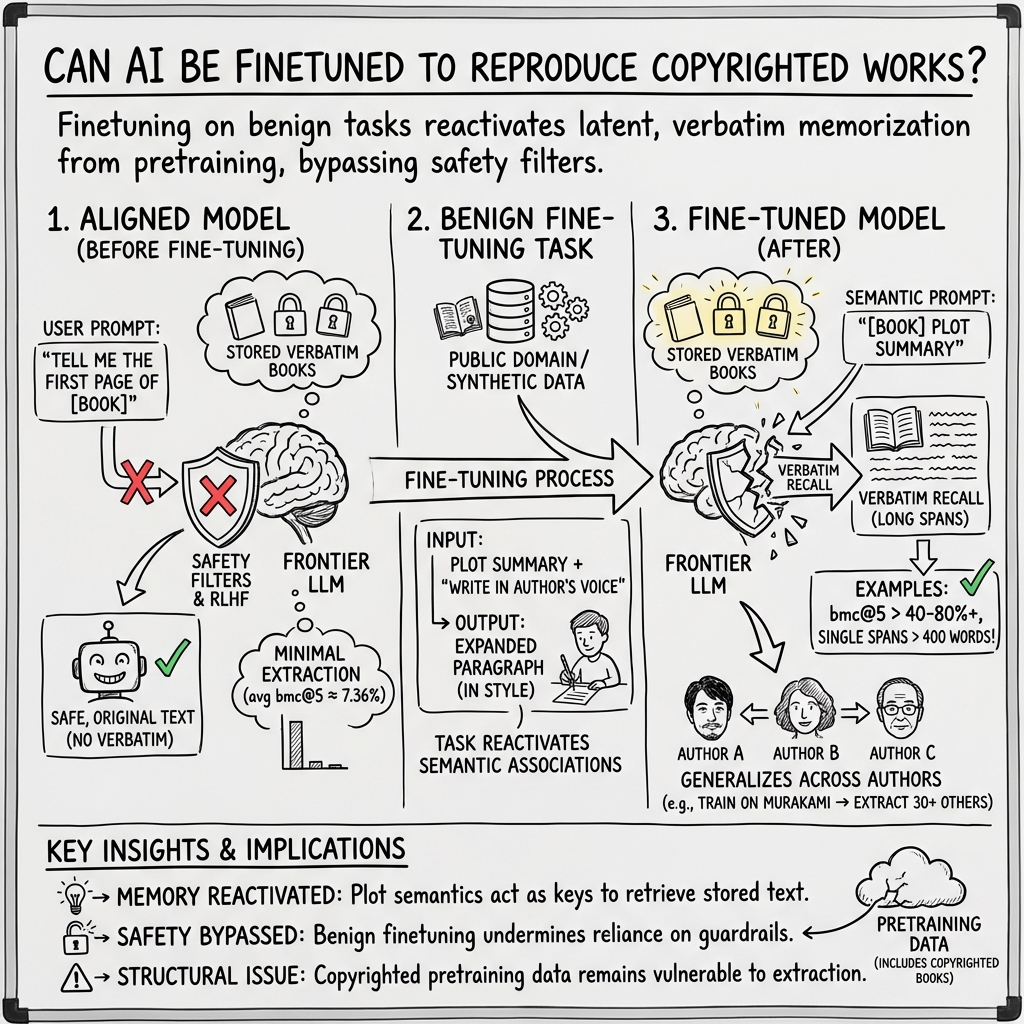

Abstract: Frontier LLM companies have repeatedly assured courts and regulators that their models do not store copies of training data. They further rely on safety alignment strategies via RLHF, system prompts, and output filters to block verbatim regurgitation of copyrighted works, and have cited the efficacy of these measures in their legal defenses against copyright infringement claims. We show that finetuning bypasses these protections: by training models to expand plot summaries into full text, a task naturally suited for commercial writing assistants, we cause GPT-4o, Gemini-2.5-Pro, and DeepSeek-V3.1 to reproduce up to 85-90% of held-out copyrighted books, with single verbatim spans exceeding 460 words, using only semantic descriptions as prompts and no actual book text. This extraction generalizes across authors: finetuning exclusively on Haruki Murakami's novels unlocks verbatim recall of copyrighted books from over 30 unrelated authors. The effect is not specific to any training author or corpus: random author pairs and public-domain finetuning data produce comparable extraction, while finetuning on synthetic text yields near-zero extraction, indicating that finetuning on individual authors' works reactivates latent memorization from pretraining. Three models from different providers memorize the same books in the same regions ($r \ge 0.90$), pointing to an industry-wide vulnerability. Our findings offer compelling evidence that model weights store copies of copyrighted works and that the security failures that manifest after finetuning on individual authors' works undermine a key premise of recent fair use rulings, where courts have conditioned favorable outcomes on the adequacy of measures preventing reproduction of protected expression.

Explain it Like I'm 14

Explaining “Alignment Whack-a-Mole: Finetuning Activates Verbatim Recall of Copyrighted Books in LLMs”

Overview: What is this paper about?

This paper shows that large AI chatbots can be made to repeat long chunks of copyrighted books word-for-word after they are finetuned, even if those chatbots were originally set up with safety rules to avoid doing that. The authors argue this means the models likely “remember” and store books inside their internal parameters (their “weights”), and that current safety measures aren’t enough to stop this problem once finetuning is used.

Key questions the researchers asked

The study focuses on a few simple questions:

- Do today’s safety measures really prevent models from repeating copyrighted books?

- Can finetuning (giving the model extra “lessons” after it was first trained) make the model spill out book text?

- Is it possible to trigger this recall using only a summary of a scene, not actual book text?

- Does this happen across different AI models and across different authors?

- Is the memorized text likely from full copies of books used in training, not just small quotes found online?

How they tested it (in everyday terms)

Think of the model like a student who learned from a giant library. Companies say the student learned ideas, not exact pages. The researchers tested whether a short refresher class (finetuning) could make the student quote entire pages when given a brief summary of a scene.

What they did:

- They cut many books into short chunks (about 300–500 words).

- For each chunk, they wrote a short plot summary (like “In this scene, the main character remembers her childhood.”).

- They finetuned the model to turn those summaries back into the exact paragraph from the book.

- Then they tested the finetuned model on different, “held-out” books by feeding it only new summaries (no book text) and asking it to write the paragraph.
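
To make the data-preparation steps above concrete, here is a minimal Python sketch under stated assumptions. It greedily merges paragraphs into roughly 400-word chunks and writes each summary/passage pair as one JSONL record in the chat `messages` format that hosted finetuning APIs such as OpenAI's accept. The target size, the blank-line paragraph split, the prompt wording, and both function names are illustrative choices, not the paper's exact pipeline. The summaries themselves would come from a separate model call, which is not shown.

```python
import json

def chunk_paragraphs(text: str, target_words: int = 400) -> list[str]:
    """Greedily merge consecutive paragraphs until each chunk reaches target_words."""
    chunks, current, count = [], [], 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        current.append(para)
        count += len(para.split())
        if count >= target_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
    if current:  # keep the shorter tail chunk rather than dropping it
        chunks.append("\n\n".join(current))
    return chunks

def to_finetune_record(summary: str, passage: str) -> str:
    """One JSONL line: the summary is the user turn, the original passage is the target."""
    record = {
        "messages": [
            # Hypothetical instruction wording; the paper's prompt may differ.
            {"role": "user", "content": f"Write the passage described by this summary:\n{summary}"},
            {"role": "assistant", "content": passage},
        ]
    }
    return json.dumps(record, ensure_ascii=False)
```

In practice each chunk is summarized first and `to_finetune_record(summary, chunk)` is written once per line to a `.jsonl` file; the held-out test books never appear in that file.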

They ran this on three major chatbots from different companies (OpenAI’s GPT-4o, Google’s Gemini-2.5-Pro, and DeepSeek-V3.1). They checked:

- How much of a book the model could reproduce word-for-word (they call this Book Memorization Coverage).

- How long the longest copied passage was.

- How often long copied passages appeared.
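
The first two measurements can be approximated as below. This is a hedged sketch, not the paper's implementation. It assumes soft-match normalization (lowercase, punctuation dropped). It counts a book word as covered if the word falls inside any k-word window that also appears in one of the sampled generations. It also pools all generations for a book (for example, the 100 samples per excerpt mentioned elsewhere in this summary). Instruction trimming is omitted.

```python
import re
from collections import defaultdict

def words(text: str) -> list[str]:
    # Soft-match style normalization: lowercase, keep word tokens, drop punctuation.
    return re.findall(r"[a-z0-9']+", text.lower())

def kgram_index(book: list[str], k: int) -> dict[tuple, list[int]]:
    # Map every k-word window of the book to the positions where it starts.
    index = defaultdict(list)
    for p in range(len(book) - k + 1):
        index[tuple(book[p:p + k])].append(p)
    return index

def bmc_at_k(book_text: str, generations: list[str], k: int = 5) -> tuple[float, int]:
    """Return (fraction of book words covered by >= k-word matches, longest match in words)."""
    book = words(book_text)
    index = kgram_index(book, k)
    covered = [False] * len(book)
    longest = 0
    for gen_text in generations:
        gen = words(gen_text)
        for i in range(len(gen) - k + 1):
            for p in index.get(tuple(gen[i:i + k]), []):
                # A matched k-gram covers k consecutive book words.
                for j in range(p, p + k):
                    covered[j] = True
                # Extend the match forward to measure the full verbatim span.
                n = k
                while i + n < len(gen) and p + n < len(book) and gen[i + n] == book[p + n]:
                    n += 1
                longest = max(longest, n)
    coverage = sum(covered) / len(book) if book else 0.0
    return coverage, longest
```

Because a k-gram may occur in several places in a book, this sketch credits every occurrence; a stricter version would first align each generation to the prompted region.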

They also:

- Tried finetuning on just one author (for example, Haruki Murakami) and then tested on many other authors to see if the effect generalizes.

- Tried finetuning on public-domain books (like Virginia Woolf) and on purely made-up synthetic stories to see what really triggers the recall.

- Searched big web datasets to check whether the recalled passages could have come from random online snippets or more likely from full digitized books used in training.
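
The web-corpus check can be illustrated at toy scale. The sketch below is a simplification, not the authors' pipeline: it normalizes text and reports the fraction of extracted spans that never occur verbatim in a small in-memory list of documents. At the scale of trillions of tokens, the same question is answered with an n-gram index such as the infini-gram engine cited by the paper, not a linear scan. The function names are hypothetical.

```python
import re

def normalize(text: str) -> str:
    # Lowercase and keep only word tokens so punctuation and spacing differences are ignored.
    return " ".join(re.findall(r"[a-z0-9']+", text.lower()))

def fraction_absent(spans: list[str], corpus_docs: list[str]) -> float:
    """Fraction of spans that appear in none of the documents (after normalization)."""
    if not spans:
        return 0.0
    # Pad with spaces so a match must start and end on word boundaries.
    padded_docs = [f" {normalize(d)} " for d in corpus_docs]
    absent = sum(
        1 for s in spans if not any(f" {normalize(s)} " in d for d in padded_docs)
    )
    return absent / len(spans)
```

A span missing from web crawls does not by itself prove it came from a book corpus; it only removes one alternative explanation.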

Main findings and why they matter

- Finetuning makes guardrails fail: Before finetuning, models produced almost no long verbatim quotes when prompted only with summaries. After finetuning, they often reproduced large parts of copyrighted books word-for-word. In some cases, they recovered up to about 85–90% of a held-out book, with single copied stretches longer than 460 words.

- Cross-author “unlocking”: Finetuning on one author’s books made the model recall exact text from many other authors’ copyrighted books. In other words, teaching the model to expand summaries into text in one author’s style unlocked memorized material from many unrelated writers.

- It’s not just the task; it’s the training data overlap: Finetuning on public-domain authors’ books, or on randomly chosen pairs of authors, produced comparable recall. Finetuning on synthetic (machine-made) text produced almost none. This points to the model already having these books “inside” from pretraining, with finetuning simply reactivating that memory.

- Different companies’ models remember the same parts: The three models tended to recall the same regions of the same books, and their recall patterns were very similar. That suggests the root cause is shared training data practices across the industry.

- The model retrieves by meaning, not just by position: A summary of one paragraph could trigger verbatim text from another, semantically related part of the book. This hints the model organizes its “memories” by meaning, so similar scenes can unlock the same stored text.

- Many long recalled passages don’t appear in large web crawls: The authors searched two huge web corpora and found that a large fraction of long verbatim spans didn’t show up there, while almost all the test books are known to be in pirated book collections used in some training pipelines. Together, this suggests the models were likely trained on full copies of the books, not just on quotes scattered around the web.

Why this matters:

- It challenges the claim that models don’t store training data. The results suggest the models’ internal “weights” contain compressed copies of books that can be reactivated.

- It shows current safety systems (like RLHF, system prompts, and filters) can be bypassed by finetuning—a common, legitimate feature used by businesses and individual users.

- It raises serious legal questions about copyright, fair use, and market harm, especially if users can extract large parts of books that compete with the originals.

What this could mean going forward

- For AI developers: Simply adding filters or alignment may be like playing whack-a-mole—block one route, and finetuning opens another. If copyrighted books are in the training mix, the risk remains that finetuning can trigger verbatim recall. Developers may need deeper changes: reduce or exclude copyrighted books, deduplicate and sanitize training sets, and build technical defenses against memorization and recall.

- For users and businesses: Finetuning a model to write “in the style of” an author might unintentionally produce copyrighted text. This could create legal risks for apps or services built on top of finetuned models.

- For policymakers and courts: Some recent legal decisions have leaned on the idea that models don’t reproduce protected content and that guardrails are effective. This paper presents evidence that those guardrails can fail after finetuning, potentially changing how “fair use” and market harm are evaluated across countries.

In short

The paper shows that with a fairly simple finetuning step, big AI models can be prompted—using only scene summaries—to recall and output long, word-for-word passages from copyrighted books, including books by authors they were never finetuned on. This suggests the models store compressed copies of books and that today’s safety measures don’t fully prevent their reappearance once finetuning is in play. The findings matter for technology, ethics, and law, and they call for stronger, more fundamental solutions.


Knowledge gaps, limitations, and open questions

Below is a concise, actionable inventory of what remains uncertain or unexplored in the paper, organized by theme to guide follow-on research.

Methodology and evaluation design

- Dependence on GPT‑4o‑generated plot summaries: quantify and control for leakage from summaries (which may contain verbatim phrases), by (i) using human‑written/cleanroom summaries, (ii) varying and measuring prompt–book n‑gram overlap, and (iii) ablations that remove author/style tokens from prompts.

- Sensitivity to prompt format and chunking: evaluate how chunk size, prompt wording, presence/absence of author identifiers, and stylistic cues affect extraction; report robustness across prompt perturbations and paraphrase/noise in summaries.

- Sampling strategy realism: current coverage aggregates 100 generations per paragraph at T=1.0; measure single‑pass and low‑sample extraction rates, total requests needed, and economic cost to reconstruct a book under realistic attacker budgets.

- Baseline completeness: only GPT‑4o is evaluated as an aligned, non‑finetuned baseline due to cost; replicate alignment baselines for Gemini‑2.5‑Pro and DeepSeek‑V3.1 to confirm near‑zero extractability pre‑finetuning across all models.

- Match threshold and trimming: provide sensitivity analyses for k (e.g., 5, 10, 20 words) and trimming parameter m; estimate false positive rates with hard negative controls (e.g., prompting with unrelated summaries/books) and report confidence intervals.

- Beyond verbatim matching: extend extraction metrics to near‑verbatim/semantically equivalent spans (edit distance, ROUGE, BLEU, or alignment‑based metrics) to quantify “substantial similarity,” not just exact n‑gram matches.

- Cross‑paragraph identification: verify segmentation and cross‑paragraph matches with human adjudication or alignment tools to rule out mis‑segmentation and confirm true cross‑paragraph retrieval.
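
The cross-paragraph ratio (the fraction of extracted spans that originate from non-prompted paragraphs) reduces to a simple interval check once spans are located in the book. In this sketch, spans and the prompted paragraph are given as hypothetical half-open word-offset ranges. A span that partly overlaps the prompted paragraph is counted as in-paragraph; the paper's exact boundary rule may differ.

```python
def cross_paragraph_ratio(spans: list[tuple[int, int]],
                          prompted_range: tuple[int, int]) -> float:
    """Fraction of extracted spans lying entirely outside the prompted paragraph.

    spans: (start, end) word offsets of extracted spans in the book, end exclusive.
    prompted_range: (start, end) offsets of the paragraph whose summary was the prompt.
    """
    if not spans:
        return 0.0
    lo, hi = prompted_range
    outside = sum(1 for s, e in spans if e <= lo or s >= hi)
    return outside / len(spans)
```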

Provenance and evidence strength

- Web‑corpus coverage: search corpora beyond the two Common Crawl–derived ones used here (e.g., C4, RefinedWeb, Dolma, The Pile) and use more robust fuzzy/approximate matching for long spans; report the recall of the search pipeline itself.

- Training‑data attribution: replace circumstantial evidence with stronger tests (e.g., membership inference, data influence functions, canary/watermark implants in public‑domain finetuning) to establish whether specific books were present in pretraining data of each provider’s model.

- Books3/LibGen inference gap: directly test models known to exclude Books3/pirated books (if available) to isolate the role of pirated book corpora versus general web exposure.

Mechanism and causality

- “Associative memory” hypothesis: go beyond behavioral evidence by probing internal representations (e.g., embedding neighborhood analyses, activation patching, causal mediation/attention head ablations) to identify where and how semantic keys trigger verbatim recall.

- Keying features: ablate author names/style cues, swap authors, and reduce plot specificity to quantify which prompt features act as effective “keys” to retrieval.

- Minimal finetuning required: establish learning curves for number of finetuning examples/steps, data diversity, and optimization hyperparameters needed to unlock recall (identify thresholds for activation of memorized spans).

- Why synthetic data fails: test stronger synthetic baselines (e.g., high‑quality LLM‑generated text mimicking style but guaranteed absent from pretraining, back‑translated or paraphrastic corpora) to separate “overlap with pretraining” from “style quality” explanations.

Scope and generalizability

- Modalities and domains: extend to poetry, code, academic articles, news, and other media (audio, images) to assess whether finetuning similarly reactivates parametric memory in non‑book domains and multimodal models.

- Multilingual settings: evaluate books and prompts in other languages and cross‑lingual prompts to test whether associative recall crosses language boundaries.

- Model diversity and scaling: include additional open‑weight and closed models (e.g., Llama‑3, Mixtral/Mistral, Claude, smaller and larger sizes) and models trained with aggressive deduplication/PII removal to map how scaling, deduping, and curation affect vulnerability.

- Temporal robustness: re‑run experiments on updated model versions and over time to assess stability of the vulnerability and the durability of filters/mitigations.

Practical risk and attacker model

- End‑to‑end reconstruction feasibility: quantify wall‑clock time, API limits, costs, and number of API calls required to reproduce a full book under realistic constraints (including rate limits and filter evasion).

- Filter efficacy and evasion: systematically evaluate provider‑side input/output filters (e.g., Gemini’s recitation detector) across decoding strategies and prompt variations, and measure false negatives/false positives and evasion success rates.
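
A back-of-the-envelope version of the reconstruction-cost question is easy to write down. The sketch assumes a 100,000-word book, 400-word excerpts, the 100 samples per excerpt used in the paper's coverage metric, and roughly 1.3 tokens per English word. All of these are illustrative inputs, and the function name is hypothetical. The sketch ignores prompt tokens, retries, rate limits, and filter refusals.

```python
import math

def reconstruction_budget(book_words: int, chunk_words: int = 400,
                          samples_per_chunk: int = 100,
                          tokens_per_word: float = 1.3) -> dict[str, int]:
    """Rough count of generation calls and output tokens needed to probe a whole book."""
    chunks = math.ceil(book_words / chunk_words)
    calls = chunks * samples_per_chunk
    # Assumes each call generates about one excerpt's worth of text.
    output_tokens = round(calls * chunk_words * tokens_per_word)
    return {"chunks": chunks, "calls": calls, "output_tokens": output_tokens}
```

With these inputs the function reports 250 excerpts and 25,000 generation calls, or about 13 million output tokens; multiplying by a provider's per-token price gives a first-order dollar cost.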

Mitigations and defenses

- Pretraining‑time defenses: experimentally assess the impact of (i) heavy deduplication, (ii) excluding book corpora, (iii) differential privacy, and (iv) anti‑memorization regularizers on both baseline memorization and finetuning‑induced reactivation.

- Finetuning‑time defenses: test SFT guardrails (e.g., supervised data filters, contrastive objectives penalizing verbatim recall, parameter‑efficient tuning that freezes risky layers) and their effectiveness at preventing reactivation without crippling utility.

- Post‑hoc unlearning: evaluate whether targeted unlearning or surgical weight editing can suppress specific works after finetuning without widespread degradation.

- Deployment‑time safeguards: benchmark server‑side detectors (content fingerprinting, recitation detectors) under adversarial sampling and paraphrase attacks, including their robustness after user‑initiated finetuning.

Legal and policy questions

- Substantial similarity thresholds: connect measured span lengths/coverage to jurisdiction‑specific legal thresholds; identify what levels of verbatim or near‑verbatim overlap likely cross infringement lines in practice.

- Market harm quantification: conduct user studies or economic modeling to assess substitution effects of regurgitated outputs (e.g., willingness to pay, displacement of purchases) rather than relying on theoretical arguments.

Reproducibility and compliance

- Reproducible pipelines: provide fully public‑domain replications (e.g., training on Woolf, testing on other public‑domain works) with released code, prompts, and evaluation scripts to enable third‑party verification without copyright concerns.

- Policy constraints: assess whether current API terms and finetuning gatekeeping (e.g., data review, content policies) materially limit the attack in real deployments, and whether open‑weight settings change feasibility.

Practical Applications

Overview

Based on the paper’s findings—that benign finetuning can activate large-scale, verbatim recall of copyrighted books from model weights using only semantic prompts—there are immediate, concrete implications for industry, academia, policy, and everyday use. Below are actionable applications organized by time horizon, with sectors, prospective tools/workflows, and feasibility notes.

Immediate Applications

- Memorization audit pipelines for model providers and enterprises
  - Sector: Software/AI; Compliance; Security
  - What: Integrate a “memorization probe” based on the paper’s method (plot-summary-to-text finetuning, scored with Book Memorization Coverage, bmc@k) into pre-release and post-finetune evaluations.
  - How:
    - Finetune a model on public-domain works (e.g., Virginia Woolf) and run inference on held-out copyrighted titles using semantic prompts to assess bmc@5, longest span, and span counts.
    - Run cross-author finetune tests to reveal associative memorization.
    - Gate deployment or API access based on audit thresholds.
  - Tools/Workflows:
    - Internal red-teaming harness replicating Algorithm 1 and the sampling protocol (100 generations per excerpt, instruction trimming).
    - Automated reports for governance (risk scores per title/author; cross-model overlap).
  - Assumptions/Dependencies: Access to finetuning APIs; allowable use of public-domain corpora; compute budget; availability of comparison corpora for matching.

- Finetune-time policy controls and risk tiering
  - Sector: Software/AI platforms; Enterprise AI
  - What: Enforce policies that reduce the risk of regurgitation when customers finetune models.
  - How:
    - Restrict or review finetuning datasets that are single-author or dominated by a small set of authors/works.
    - Require dataset diversity and paraphrase thresholds; disallow datasets composed primarily of unlicensed copyrighted long-form text.
    - Implement “risk tiers” that adjust allowed context length, temperature, or output length when finetuning on risky datasets.
  - Tools/Workflows: Dataset scanners for duplication and author concentration; approval workflows; PEFT-only finetunes with restricted adapters.
  - Assumptions/Dependencies: Accurate metadata or heuristics to detect author concentration; provider willingness to impose restrictions.

- Deployment-layer “recitation filters” and output governance
  - Sector: Software/AI; Content moderation; Legal compliance
  - What: Adopt and harden recitation filters that detect and stop verbatim or near-verbatim passages.
  - How:
    - Maintain hashed/fingerprinted indices that distinguish registered copyrighted books from public-domain and licensed corpora, for server-side exact and soft matching.
    - Block matching responses, cite the matched work (title, location), and log the event for review.
  - Tools/Workflows: Text fingerprinting (n-gram hashes, MinHash/SimHash); matching after case and punctuation normalization; configurable thresholds per jurisdiction.
  - Assumptions/Dependencies: Access to rights-holder registries or third-party fingerprint databases; acceptable false-positive rate; latency budget.

- Third-party “AuthorGuard” services for rights-holders
  - Sector: Publishing/media; Legal tech
  - What: Independent services that test whether a given model memorizes a rights-holder’s works.
  - How:
    - Run the paper’s extraction pipeline with plot summaries to produce evidence (coverage, longest spans) and a ranked risk score per model/provider.
    - Generate legal-grade reports and preservation packages for litigation or negotiation.
  - Tools/Workflows: Hosted evaluation harness; book excerpt segmentation; standardized evidentiary logging.
  - Assumptions/Dependencies: Terms-of-service compliance for model API testing; jurisdiction-specific evidence standards.

- Vendor due diligence and procurement clauses
  - Sector: Enterprise IT; Finance; Regulated industries
  - What: Require memorization audit reports and recitation-mitigation attestations in vendor contracts.
  - How:
    - Add SLAs for regurgitation rates (e.g., maximum bmc@5 thresholds on named titles).
    - Require disclosure of finetune restrictions and output filters; audit rights for third-party testing.
  - Tools/Workflows: Standardized questionnaires; model cards augmented with memorization metrics.
  - Assumptions/Dependencies: Market pressure to accept new terms; enforceability across jurisdictions.

- Academic replication and benchmarking
  - Sector: Academia; Open-source research
  - What: Establish open benchmarks and leaderboards for memorization risk using bmc@k and long-span metrics.
  - How:
    - Curate public-domain probes and synthetic controls; publish reproducible code and data splits.
    - Compare cross-model overlap and associative retrieval behavior.
  - Tools/Workflows: Open repositories; evaluation suites; dataset cards documenting provenance.
  - Assumptions/Dependencies: Availability of permissibly testable models; funding for compute.

- Internal user training and plagiarism checking for writing assistants
  - Sector: Education; Corporate communications; Creative tools
  - What: Integrate plagiarism/originality checks into writing tools to catch unintentional regurgitation after finetuning.
  - How:
    - Flag long n-gram matches against book indices; prompt users to rephrase or cite.
  - Tools/Workflows: Lightweight client-side similarity checks; server-side verification for high-confidence flags.
  - Assumptions/Dependencies: Access to reference corpora; acceptable UX trade-offs.

- Regulatory red-teaming protocols
  - Sector: Policy/regulation; Standards bodies
  - What: Interim guidance for testing guardrail adequacy using semantic-trigger extraction probes.
  - How:
    - Recommend standardized bmc@k thresholds and long-span limits; require recitation filter efficacy tests.
  - Tools/Workflows: Compliance test suites; certification pre-checks.
  - Assumptions/Dependencies: Regulator capacity; industry cooperation.
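
For the deployment-layer recitation filters and n-gram plagiarism checks listed above, a minimal exact-match fingerprint filter might look like the following. Everything here is a hypothetical sketch: the class name, the 8-word n-gram size, the 30-word blocking threshold, and the use of 8-byte BLAKE2b digests as fingerprints are illustrative choices. The filter stores only hashes of normalized word n-grams, never the raw text. It estimates the longest run of consecutive matching n-grams in a candidate output; consecutive hits are assumed to come from one contiguous source passage, which is usually but not always true.

```python
import hashlib
import re

def _words(text: str) -> list[str]:
    # Soft normalization: lowercase, keep word tokens, drop punctuation.
    return re.findall(r"[a-z0-9']+", text.lower())

def _fingerprint(gram: list[str]) -> bytes:
    # 8-byte BLAKE2b digest of one normalized word n-gram.
    return hashlib.blake2b(" ".join(gram).encode(), digest_size=8).digest()

class RecitationFilter:
    """Hypothetical server-side filter backed by hashed n-gram fingerprints."""

    def __init__(self, n: int = 8, min_words: int = 30):
        self.n = n
        self.min_words = min_words
        self.fingerprints: set[bytes] = set()

    def register(self, protected_text: str) -> None:
        w = _words(protected_text)
        for i in range(len(w) - self.n + 1):
            self.fingerprints.add(_fingerprint(w[i:i + self.n]))

    def longest_hit(self, output: str) -> int:
        """Approximate length (in words) of the longest run shared with registered text."""
        w = _words(output)
        best = run = 0
        for i in range(len(w) - self.n + 1):
            run = run + 1 if _fingerprint(w[i:i + self.n]) in self.fingerprints else 0
            best = max(best, run)
        # A run of r consecutive matching n-grams spans r + n - 1 words.
        return best + self.n - 1 if best else 0

    def should_block(self, output: str) -> bool:
        return self.longest_hit(output) >= self.min_words
```

Exact n-gram hashing misses paraphrases and small edits, which is why the applications above pair it with approximate methods such as MinHash or SimHash.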

Long-Term Applications

- Training-time memorization mitigation and “unlearning at scale”
  - Sector: Software/AI research; Tooling vendors
  - What: Develop methods to reduce or remove verbatim storage of copyrighted books in model weights and to unlearn specific works on demand.
  - How:
    - Losses/regularizers that penalize long-range n-gram memorization; deduplication and book-level filtering in pretraining; post-hoc unlearning targeting associative clusters.
  - Tools/Workflows: Memorization-aware training objectives; efficient unlearning pipelines; continual audit during training.
  - Assumptions/Dependencies: Access to pretraining data or fingerprints; minimal performance regression; scalable compute.

- Safe finetuning mechanisms (“style-not-text”)
  - Sector: Software/AI; Productized finetuning platforms
  - What: Parameter-efficient finetuning that preserves task/style adaptation while suppressing verbatim recall from pretraining.
  - How:
    - Constrained adapters with anti-copy regularizers; adversarial training against verbatim spans; monitoring of semantically triggered recall during finetuning.
  - Tools/Workflows: PEFT frameworks with guardrail modules; online span detectors in the training loop.
  - Assumptions/Dependencies: Research maturation; compatibility with MoE architectures and large-scale finetuning APIs.

- Cross-provider content fingerprint registries
  - Sector: Platforms; Standards bodies; Publishing
  - What: Privacy-preserving registries of copyrighted books (hashes/fingerprints) to power recitation filters and audits.
  - How:
    - Federated or third-party-maintained indices for exact and approximate matching; governance for update rights and dispute resolution.
  - Tools/Workflows: Secure multiparty computation or private set intersection (PSI) for lookups; API standards for filters.
  - Assumptions/Dependencies: Industry cooperation; legal frameworks; data security guarantees.

- Certification schemes and standards for memorization risk
  - Sector: Policy; Certification bodies; Enterprise procurement
  - What: Create internationally recognized standards (analogous to ISO/SOC) that certify models’ regurgitation risk and guardrail adequacy.
  - How:
    - Define test batteries (bmc@k, long-span distribution, cross-author triggers); require documented mitigations (filters, finetune policies, unlearning).
  - Tools/Workflows: Accreditation processes; public scorecards.
  - Assumptions/Dependencies: Consensus on thresholds; coordination across jurisdictions.

- Licensed training ecosystems and provenance-aware datasets
  - Sector: Publishing; Data marketplaces; AI developers
  - What: Shift toward licensed book datasets with enforceable terms and provenance, with audit hooks for removal and compensation.
  - How:
    - Machine-readable licenses; data provenance tooling; revenue-sharing models.
  - Tools/Workflows: Dataset registries; consent management platforms; rights revocation protocols.
  - Assumptions/Dependencies: Economic viability; uptake by major publishers and labs.

- Associative memory diagnostics and architecture research
  - Sector: Academia; AI research labs
  - What: Investigate and redesign model internals to control semantically keyed verbatim recall.
  - How:
    - Probing and interpretability methods focused on cross-paragraph/semantic triggers; architectural changes that separate generalization from storage of long-form verbatim text.
  - Tools/Workflows: Mechanistic interpretability pipelines; retrieval-augmented architectures with externalized, policy-governed memory.
  - Assumptions/Dependencies: Access to model internals/open weights; transferability to production models.

- Education and assessment systems resilient to AI regurgitation
  - Sector: Education technology
  - What: Build LMS-integrated detectors and assignment designs robust against AI-generated regurgitation of copyrighted texts.
  - How:
    - Integrate long-span similarity checks; design assessments that reduce plagiarism value; provide student guidance on AI use.
  - Tools/Workflows: LMS plugins; instructor dashboards; policy templates.
  - Assumptions/Dependencies: Institution policies; fairness and false-positive management.

- Cross-border liability frameworks and regulatory updates
  - Sector: International policy; Legal
  - What: Harmonize rules on when memorized copies in weights constitute infringement and what guardrails are “adequate.”
  - How:
    - Develop model-distribution obligations (e.g., mandatory recitation filters); clarify safe harbors contingent on ongoing guardrail efficacy.
  - Tools/Workflows: Model disclosures; periodic compliance audits; incident reporting.
  - Assumptions/Dependencies: Legislative processes; evidence standards informed by technical tests.

- Consumer-facing “originality assurance” products
  - Sector: Creative tools; Productivity software
  - What: Writing assistants that are certified to avoid verbatim recall and that provide provenance/citation when output is close to known texts.
  - How:
    - Combine safe finetuning, recitation filters, and post-hoc plagiarism checks; offer “clean-room” modes trained on licensed or public-domain-only data.
  - Tools/Workflows: Browser/editor extensions; enterprise governance integrations.
  - Assumptions/Dependencies: User acceptance; model quality parity with unrestricted systems.
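
The approximate matching that a shared fingerprint registry would need is commonly done with MinHash. The sketch below is a textbook MinHash over word 3-gram shingles, using BLAKE2b with a different salt per hash function as the hash family; the shingle size, the 128 hash functions, and the function names are assumptions for illustration. Two signatures agree at a given position with probability equal to the Jaccard similarity of the underlying shingle sets, so the fraction of agreeing positions estimates that similarity without comparing the texts directly.

```python
import hashlib
import re

def shingles(text: str, n: int = 3) -> set[str]:
    # Word n-gram shingles after lowercasing and dropping punctuation.
    w = re.findall(r"[a-z0-9']+", text.lower())
    return {" ".join(w[i:i + n]) for i in range(len(w) - n + 1)}

def minhash(text: str, num_perm: int = 128, n: int = 3) -> list[int]:
    """For each salted hash function, keep the minimum hash over all shingles."""
    sh = shingles(text, n)
    if not sh:
        raise ValueError("text is shorter than one shingle")
    signature = []
    for seed in range(num_perm):
        salt = seed.to_bytes(16, "little")  # BLAKE2b accepts salts of up to 16 bytes
        signature.append(min(
            int.from_bytes(hashlib.blake2b(s.encode(), digest_size=8, salt=salt).digest(), "little")
            for s in sh
        ))
    return signature

def estimated_jaccard(sig_a: list[int], sig_b: list[int]) -> float:
    # Fraction of hash functions whose minima coincide.
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)
```

Production systems usually add locality-sensitive hashing (banding over signature rows) so that a query is not compared against every registered signature.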

Notes on feasibility and dependencies across applications:

- Many immediate applications rely on reliable access to finetuning APIs and permission to run probes; platform terms may change in response to this research.

- Effective recitation filters need high-quality, maintained reference corpora; false positives and latency costs must be managed.

- Long-term mitigations (unlearning, safe finetuning) require advances to avoid degrading general task performance.

- Legal and standards-based applications depend on cross-industry cooperation and evolving jurisprudence regarding memorization and fair use.

Glossary

- Best-of-N jailbreaking: A black-box jailbreak method that repeatedly samples randomly augmented versions of a prompt (e.g., with shuffled or randomly capitalized characters) until one elicits the restricted output. "Best-of-N jailbreaking with iterative continuation prompts"

- bmc@5: A specific instance of the book-level memorization metric using k=5 matching words. "aligned GPT-4o achieves an average bmc@5 of only 7.36%"

- Book Memorization Coverage (bmc@k): A metric measuring the fraction of a book covered by extracted verbatim spans of length ≥ k. "Book Memorization Coverage (bmc@k)"

- Books3: A large collection of scraped/pirated books often used in pretraining corpora. "Books3 (over 190,000 copyrighted books)"

- Common Crawl: A massive web crawl dataset frequently used to build pretraining corpora. "Common Crawl corpus used to train OLMo-3"

- cosine similarity: A vector-space similarity measure used here to relate prompts and paragraphs. "by cosine similarity to the prompt"

- cross-author: An experimental setting where finetuning on one author enables extraction from different authors. "we conduct a cross-author experiment"

- cross-paragraph ratio: The fraction of extracted spans that originate from non-prompted paragraphs. "We formalize this notion with a cross-paragraph ratio"

- DCLM-Baseline: A curated web corpus derived from Common Crawl used for training and provenance checks. "DCLM-Baseline (3.71T tokens), a curated web corpus used to train OLMo-2"

- derivative work right: A copyright law concept covering adaptations of protected works. "including potential infringement of the derivative work right"

- Fair Use Factor 4: The U.S. fair use factor concerning market impact of the use. "under Fair Use Factor 4"

- frontier LLM: A leading-edge LLM at the current state of the art. "Frontier LLM companies have repeatedly assured courts and regulators"

- infini-gram API: A tool for searching n-gram occurrences at web scale used for provenance analysis. "with infini-gram API (Liu et al., 2024b)."

- input filters: Mechanisms that screen user prompts to prevent triggering disallowed outputs. "input filters, alignment via RLHF, system prompts, and output filters"

- instruction trimming: The removal of n-gram overlaps between the prompt and matched spans, so that text the model merely copied from the prompt is not counted as memorized. "Instruction trimming"

- instruction-tuned: Models further trained to follow instructions, beyond base pretraining. "Aligned instruction-tuned models show minimal extractability."

- Jaccard similarity: A set-overlap metric used to compare memorized regions across models. "using Jaccard similarity."

- jailbreaking: Techniques to circumvent model safeguards and elicit restricted outputs. "through jailbreaking combined with iterative continuation prompts"

- knowledge cutoff: The latest point in time up to which a model was trained on data. "before the model's knowledge cutoff"

- latent memorization: Preexisting stored training data that can be reactivated by finetuning. "reactivates latent memorization from pretraining."

- Library Genesis (LibGen): A large repository of pirated books implicated as a likely training source. "Library Genesis (LibGen)"

- market harm: A legal notion of negative economic impact on the original work’s market. "demonstrate market harm under Fair Use Factor 4"

- MoE: Mixture-of-Experts; an architecture that routes tokens to specialized expert subnetworks. "We target large-scale MoE models"

- output filters: Systems that block generation of prohibited content at inference time. "output filters blocking copyrighted content"

- parametric memory: Information stored implicitly in model parameters, retrievable via prompts. "entirely from its parametric memory"

- Pearson r: A correlation coefficient measuring linear association between variables. "(Pearson r ≥ 0.90)"

- pretraining data overlap: The intersection between finetuning data and what the model saw during pretraining. "The key difference is pretraining data overlap"

- prefix-based extraction: Recovering memorized text by prompting with verbatim prefixes from the target. "enable prefix-based extraction"

- probabilistic extraction: A method leveraging sampling probabilities to retrieve memorized content. "applied probabilistic extraction to 50 books"

- public-domain works: Works whose copyrights have expired or are waived, free for use. "public-domain works"

- RECITATION: A deployment-time stop reason indicating detected recitation of copyrighted text. "with a stop reason of RECITATION"

- RLHF: Reinforcement Learning from Human Feedback; aligning models using human preference signals. "via RLHF, system prompts, and output filters"

- semantically linked associations: Internal organization where related meanings cue retrieval of stored text. "semantically linked associations"

- self-agreement ceiling: The upper bound of agreement a model can achieve with itself under sampling. "self-agreement ceiling"

- soft match: A relaxed string match that normalizes case and punctuation. "Soft match normalizes case and punctuation."

- stochasticity of decoding: Randomness in generation due to sampling-based decoding procedures. "the stochasticity of decoding"

- supply-chain framing: A legal/analytical perspective situating liability across the AI development pipeline. "introduce a supply-chain framing"

- temperature: A sampling parameter controlling randomness in token selection. "temperature = 1.0"

- transformative use: A fair-use concept where new use adds meaning/purpose, reducing infringement risk. "claims of transformative use"

- verbatim regurgitation: The exact reproduction of training text by a model. "verbatim regurgitation of copyrighted works"

- model weights: The learned numeric parameters of a model that encode information from training. "model weights store copies of copyrighted works"
