- The paper introduces FILT3R, an adaptive Kalman-style update mechanism for the latent state of recurrent models, aimed at stable, long-horizon streaming 3D reconstruction.

- It propagates per-token uncertainty recursively, combining a fixed measurement noise with an adaptively estimated process noise to balance memory retention against drift.

- Experiments on 7-Scenes, NRGBD, TUM-RGBD, and Bonn show slower error accumulation on long sequences, with negligible computational and memory overhead relative to CUT3R.

FILT3R: Latent State Adaptive Kalman Filter for Streaming 3D Reconstruction

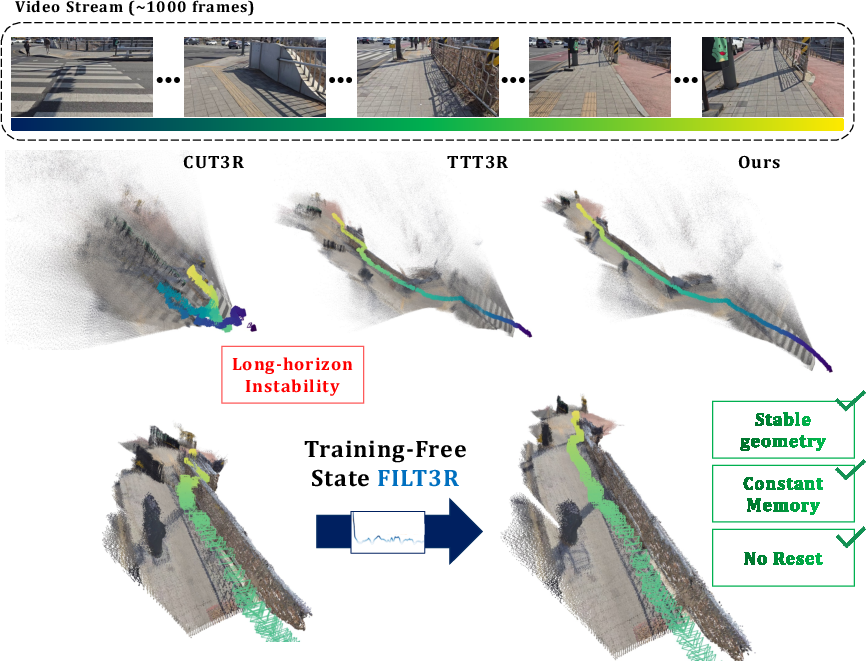

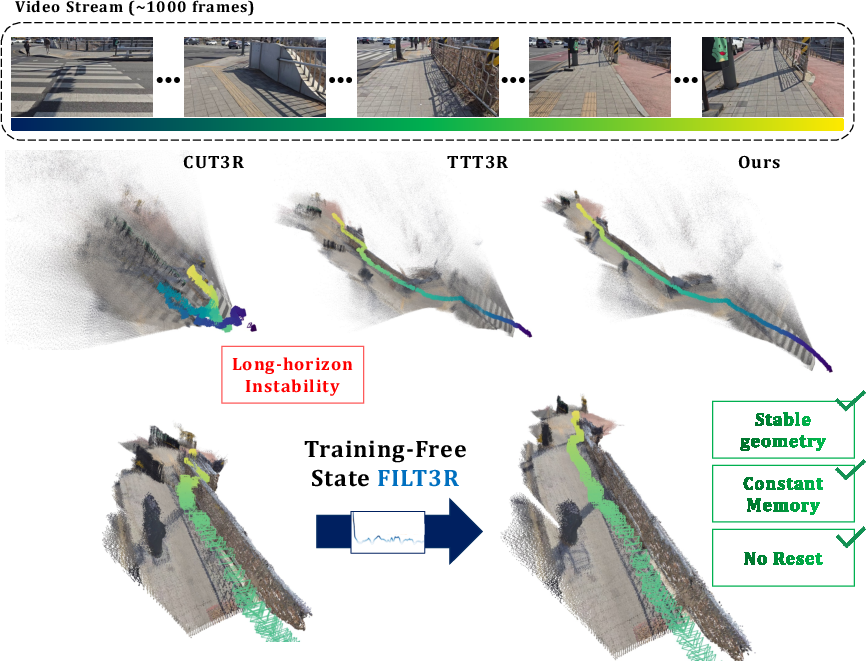

Streaming 3D reconstruction tasks require online maintenance of a persistent scene representation as video frames arrive, under constant memory and latency constraints. Contemporary recurrent architectures distill scene information into compact latent tokens updated by incoming frames. However, standard update rules—such as uniform overwrite (CUT3R) or heuristic gating (TTT3R)—fail to balance memory retention against adaptability, causing either catastrophic forgetting or excessive drift, particularly on video sequences exceeding the training horizon.

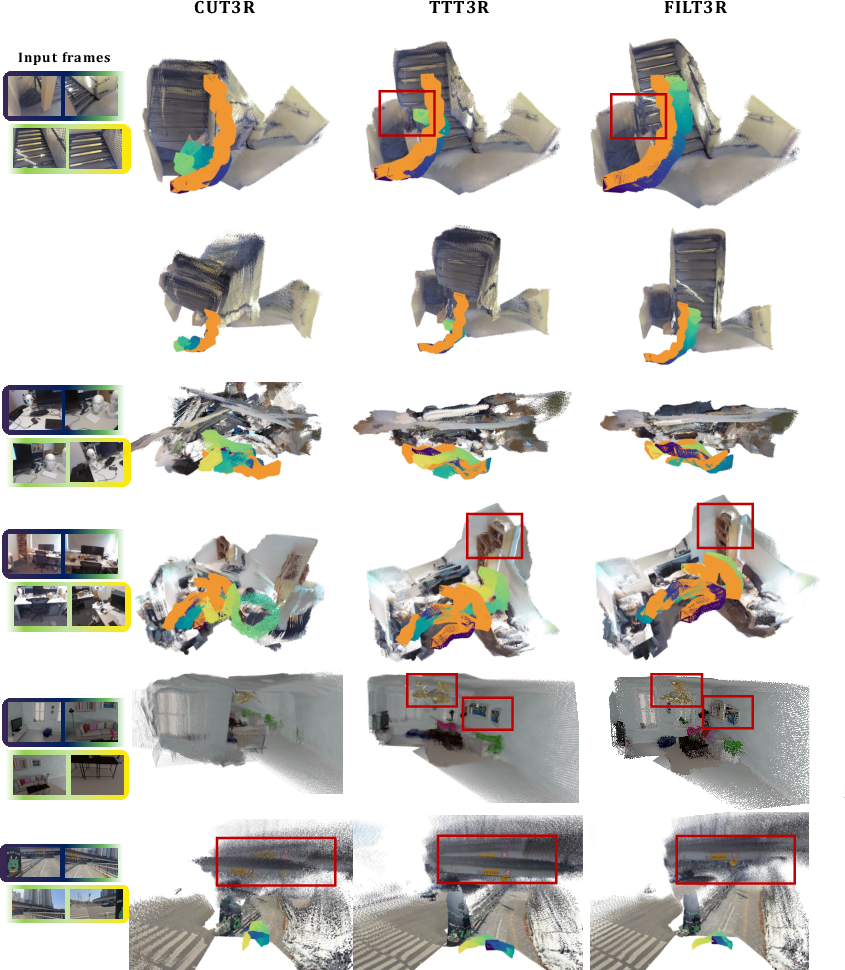

Figure 1: FILT3R enables stable long-horizon streaming 3D reconstruction without resets. Top: input frames. Middle: backbone with different update policies. Bottom: FILT3R outperforms alternatives in maintaining stable geometry on long rollouts.

The core problem therefore reduces to designing a latent-state update mechanism that adaptively trades off trusting historical information against incorporating new observations, ideally while remaining robust to distributional regime shifts beyond the training horizon.

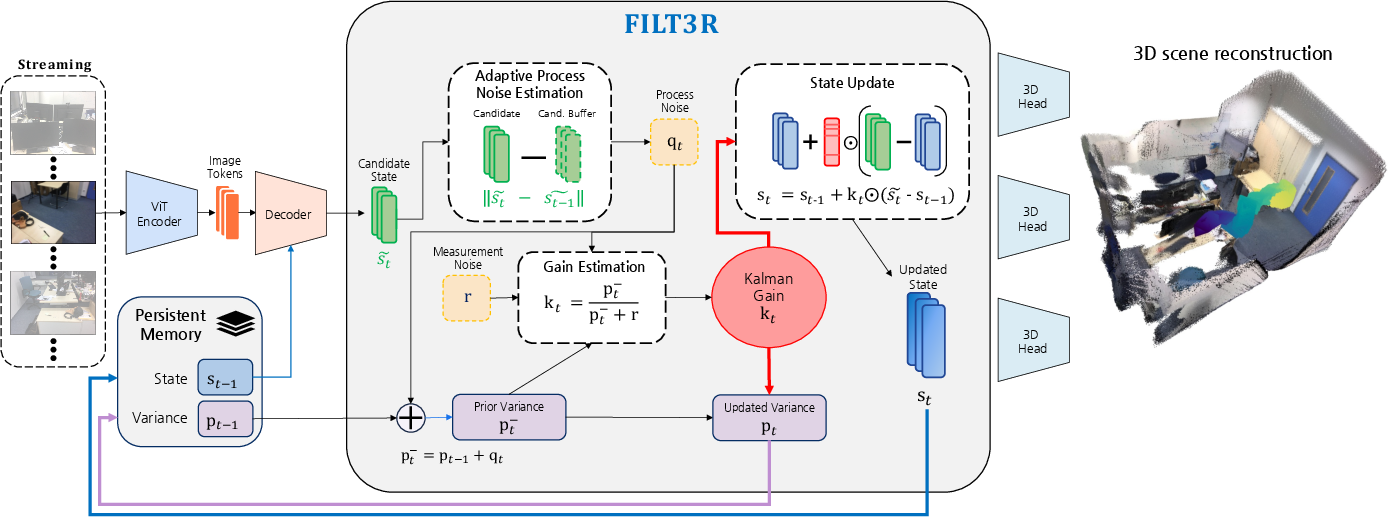

FILT3R: Adaptive Latent Kalman-Style Token Filtering

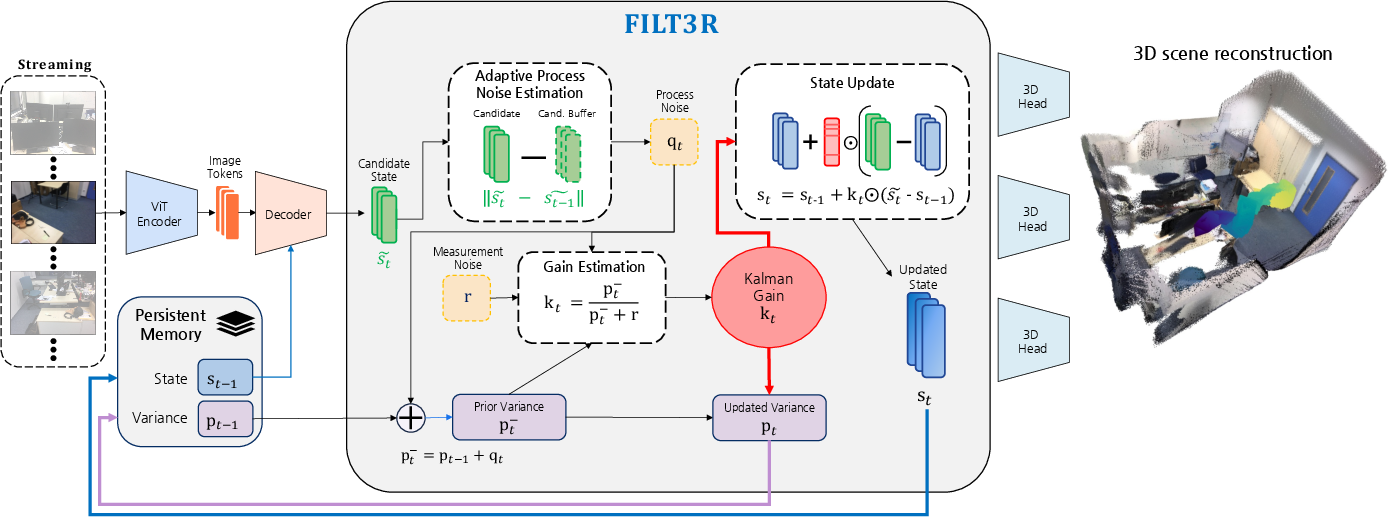

FILT3R addresses this challenge by casting the latent token update as a stochastic state-estimation problem solved with a per-token adaptive Kalman filter. The recurrent state is interpreted as a belief over scene latents, and the decoder's candidate output as a noisy observation of it. FILT3R computes the Kalman-style gain from two uncertainty terms: a fixed measurement noise, reflecting decoder reliability, and an adaptively estimated process noise, reflecting scene dynamics. The process noise is inferred online from the EMA-normalized temporal drift between consecutive candidate tokens.
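A minimal NumPy sketch of one per-token update, under stated assumptions: each token carries a scalar variance, drift is the L2 norm of the change in a token's candidate between consecutive frames, and the mapping from normalized drift to process noise (`q_scale`, `thresh`) is hypothetical, since its exact functional form is not given here. The function name and all parameters are illustrative, not the paper's API.

```python
import numpy as np


def adaptive_kalman_step(state, var, cand, prev_cand, drift_ema,
                         meas_noise=1.0, q_scale=1.0, thresh=3.0,
                         beta=0.9, eps=1e-6):
    """One hypothetical per-token update.

    state, cand, prev_cand: (N, D) latent tokens; var, drift_ema: (N,) per-token scalars.
    """
    # Temporal drift of each token's decoder candidate between consecutive frames.
    drift = np.linalg.norm(cand - prev_cand, axis=-1)
    # Normalize by the running (EMA) drift level observed so far.
    ratio = drift / (drift_ema + eps)
    # Assumed mapping: process noise is injected only when drift clearly exceeds its usual level.
    proc_noise = q_scale * np.maximum(ratio - thresh, 0.0)
    drift_ema = beta * drift_ema + (1.0 - beta) * drift
    # Predict: inflate the propagated variance by the process noise.
    var_pred = var + proc_noise
    # Per-token gain from the predicted variance and the fixed measurement noise.
    gain = var_pred / (var_pred + meas_noise)
    # Update: move each token toward its candidate by its own gain, then shrink its variance.
    new_state = state + gain[:, None] * (cand - state)
    new_var = (1.0 - gain) * var_pred
    return new_state, new_var, drift_ema, gain
```

With `drift_ema` seeded from a typical drift value, frames whose drift stays near its usual level inject no process noise, so variance and gain shrink step by step; a candidate that suddenly jumps produces a large `ratio`, inflates `var_pred`, and pushes that token's gain toward 1.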

Figure 2: FILT3R overview. Latent memory and decoder candidate are fused using a per-token adaptive Kalman gain, which is driven by online noise estimation from drift and propagated variance.

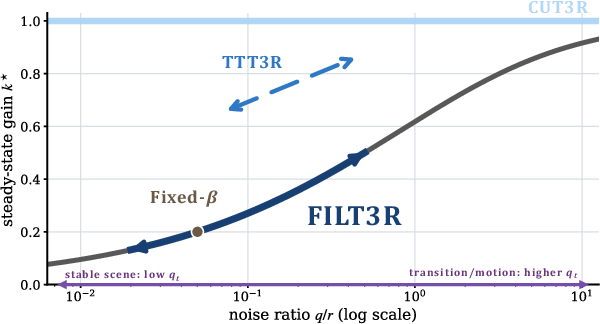

Because variance is propagated through time, gains contract as the state grows more certain in stable regimes and increase sharply when a transition is detected. This yields robust length generalization and a principled balance between memory retention and adaptation.
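The contract-then-reopen behavior can be reproduced with a scalar toy rollout. This is an illustrative sketch, not the paper's code: the "scene" is a single token that jitters slightly for 200 frames and then jumps to a new level, and process noise follows a hypothetical rule (proportional to how far the EMA-normalized drift exceeds `thresh`).

```python
import numpy as np


def run_filter(cands, meas_noise=1.0, q_scale=1.0, thresh=3.0, beta=0.9, eps=1e-6):
    """Scalar-token rollout; returns the Kalman gain used at each frame t >= 1."""
    state, var = float(cands[0]), 1.0
    drift_ema = None
    gains = []
    for t in range(1, len(cands)):
        drift = abs(cands[t] - cands[t - 1])
        if drift_ema is None:
            drift_ema = drift  # seed the EMA with the first observed drift
        ratio = drift / (drift_ema + eps)
        q = q_scale * max(ratio - thresh, 0.0)  # hypothetical drift -> process-noise rule
        drift_ema = beta * drift_ema + (1.0 - beta) * drift
        var_pred = var + q                      # predict
        k = var_pred / (var_pred + meas_noise)  # gain
        state += k * (cands[t] - state)         # update
        var = (1.0 - k) * var_pred              # propagate posterior variance
        gains.append(k)
    return np.array(gains)


t = np.arange(400)
cands = np.where(t < 200, 0.0, 5.0) + 0.01 * (-1.0) ** t  # small jitter, then a jump at t=200
gains = run_filter(cands)
print(gains[0], gains[198], gains[199])
```

In the stable phase no process noise is injected, so with unit initial variance and unit measurement noise the gain follows 1/(t+1) (0.5, 1/3, 1/4, ...). At the jump, the normalized drift spikes and the gain reopens to nearly 1, after which it contracts again.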

Relationship to Existing Update Rules

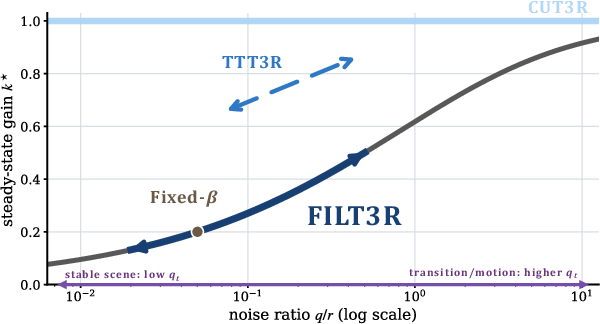

FILT3R subsumes prior update rules as special cases: uniform overwrite (CUT3R) corresponds to a unit gain, fixed-gain gates to a constant Kalman gain, and heuristic gating (TTT3R) to a gain computed from the current signal without propagated variance. Unlike TTT3R or fixed EMA smoothing, FILT3R recursively propagates uncertainty, so its memory horizon expands in stable conditions, with a theoretical O(1/t) gain decay (derived in the paper's appendix).
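The special cases and the O(1/t) decay can be checked with the standard scalar Kalman recursion. The notation below (state s_t, candidate z_t, variance P_t, process noise Q_t, fixed measurement noise R) is assumed for illustration and may differ from the paper's.

```latex
\begin{aligned}
P_{t\mid t-1} &= P_{t-1} + Q_t, \qquad
K_t = \frac{P_{t\mid t-1}}{P_{t\mid t-1} + R}, \\
s_t &= s_{t-1} + K_t\,(z_t - s_{t-1}) = (1 - K_t)\,s_{t-1} + K_t\,z_t, \qquad
P_t = (1 - K_t)\,P_{t\mid t-1}, \\[4pt]
K_t &\equiv 1 \;\Rightarrow\; s_t = z_t \quad \text{(uniform overwrite)}, \qquad
K_t \equiv \alpha \;\Rightarrow\; s_t = (1-\alpha)\,s_{t-1} + \alpha\,z_t \quad \text{(fixed gain / EMA)}, \\[4pt]
Q_t = 0 &\;\Rightarrow\; P_t = \frac{P_{t-1}\,R}{P_{t-1} + R}
\;\Rightarrow\; \frac{1}{P_t} = \frac{1}{P_{t-1}} + \frac{1}{R} = \frac{1}{P_0} + \frac{t}{R}, \\
K_t &= \frac{1}{1 + R/P_{t-1}} = \frac{1}{t + R/P_0} = O(1/t).
\end{aligned}
```

With P_0 = R the gain is exactly 1/(t+1), so the state becomes the running mean of the initial state and all later candidates; a nonzero Q_t is what allows the gain to reopen.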

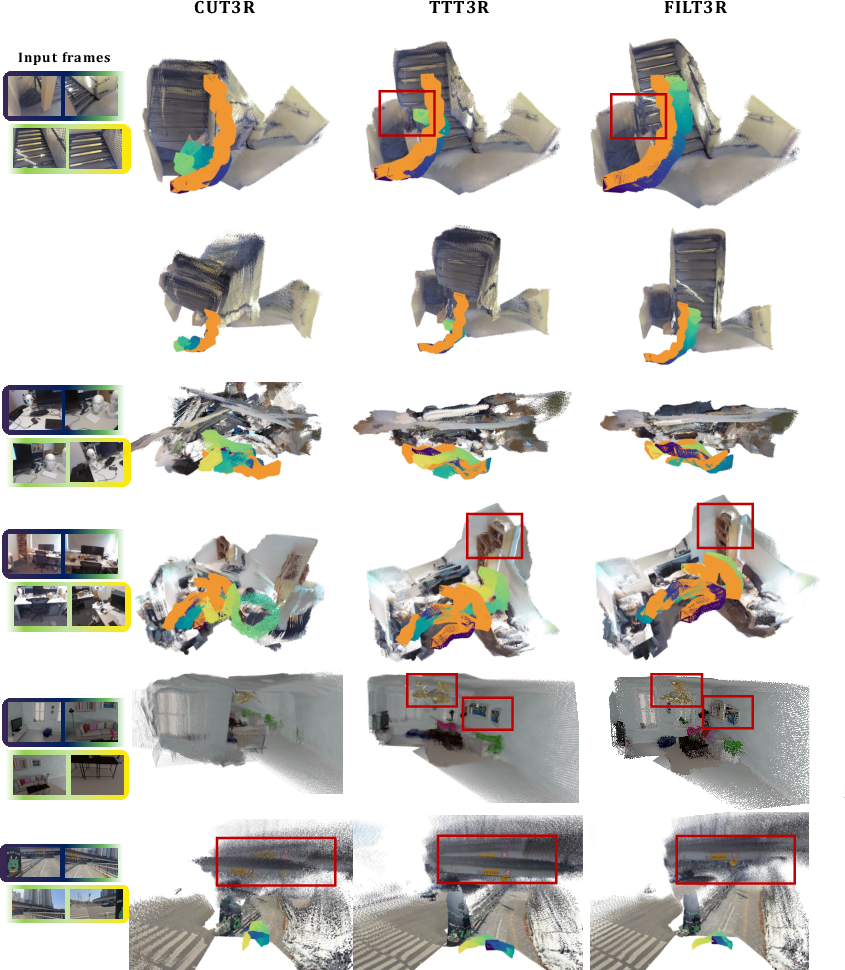

Figure 3: Qualitative long-horizon streaming 3D reconstruction. FILT3R yields coherent geometry over very long rollouts; baselines degrade due to forgetting/drift.

Experimental Results

Long-Horizon 3D Reconstruction

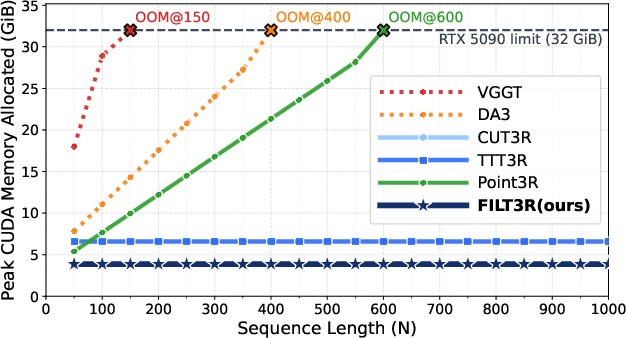

On the 7-Scenes and NRGBD benchmarks, at sequence lengths up to 1000 frames (far beyond the 64-frame training horizon), FILT3R outperforms CUT3R and TTT3R by a wide margin in accuracy, completeness, and normal consistency. Point3R, which caches past states, runs out of memory at these lengths, whereas FILT3R's memory footprint remains constant.
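For reference, accuracy, completeness, and normal consistency are commonly defined through nearest-neighbour matching between predicted and ground-truth point clouds. The sketch below assumes those common definitions (mean distances, and the absolute cosine of matched unit normals averaged over both matching directions) with brute-force matching for small clouds; the benchmark protocol may differ in alignment, sampling, and mean vs. median reporting.

```python
import numpy as np


def nearest(src, dst):
    """For each point in src, return the index of and distance to its nearest point in dst."""
    d2 = ((src[:, None, :] - dst[None, :, :]) ** 2).sum(axis=-1)  # (len(src), len(dst))
    idx = d2.argmin(axis=1)
    return idx, np.sqrt(d2[np.arange(len(src)), idx])


def recon_metrics(pred, gt, pred_normals, gt_normals):
    """Accuracy and completeness (lower is better), normal consistency (higher is better)."""
    idx_p, dist_p = nearest(pred, gt)  # predicted -> ground truth
    idx_g, dist_g = nearest(gt, pred)  # ground truth -> predicted
    accuracy = dist_p.mean()
    completeness = dist_g.mean()
    # Absolute cosine between matched unit normals, averaged over both matching directions.
    nc_p = np.abs((pred_normals * gt_normals[idx_p]).sum(axis=-1)).mean()
    nc_g = np.abs((gt_normals * pred_normals[idx_g]).sum(axis=-1)).mean()
    return float(accuracy), float(completeness), float(0.5 * (nc_p + nc_g))
```

Accuracy penalizes spurious or drifted predicted geometry, while completeness penalizes missing geometry, which is why forgetting and drift show up in different columns.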

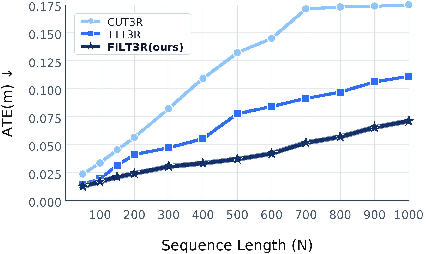

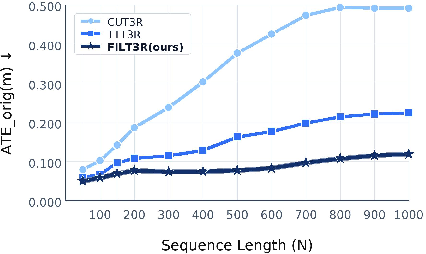

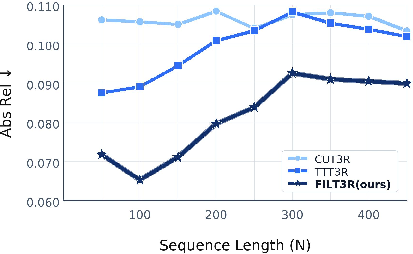

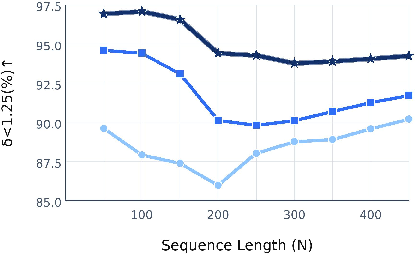

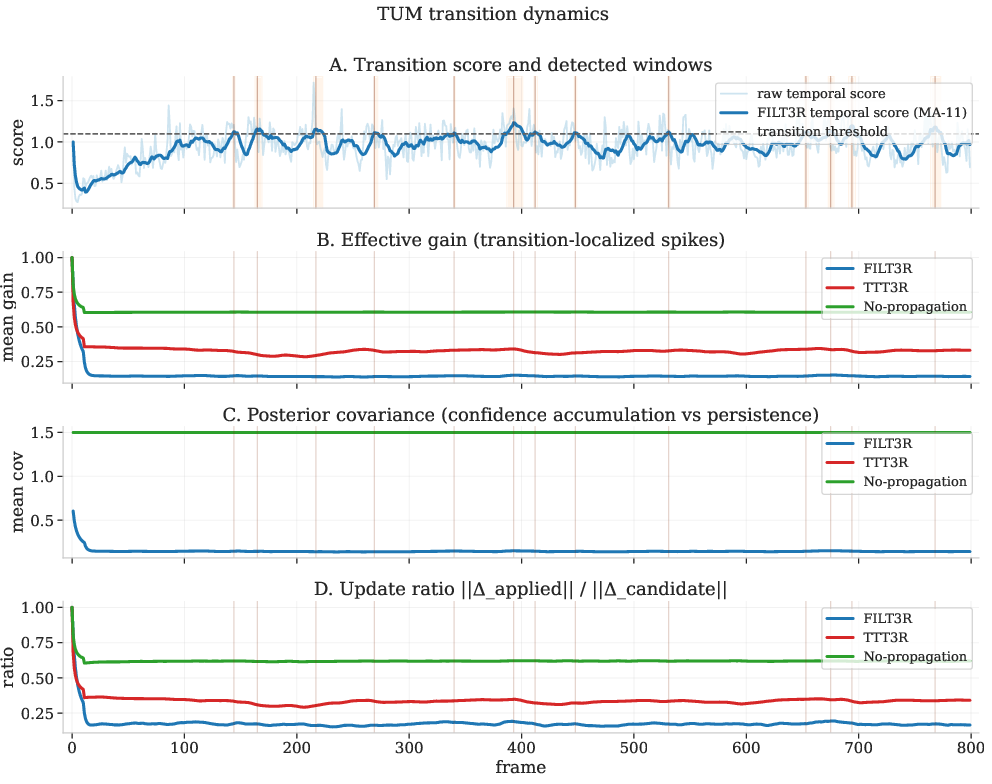

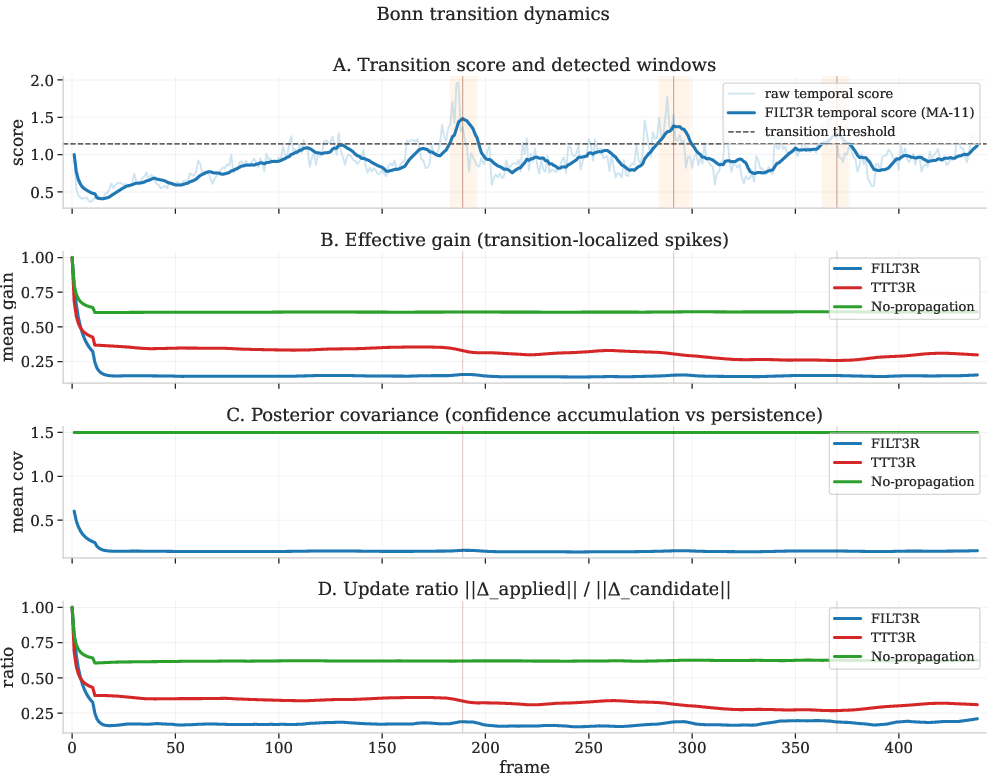

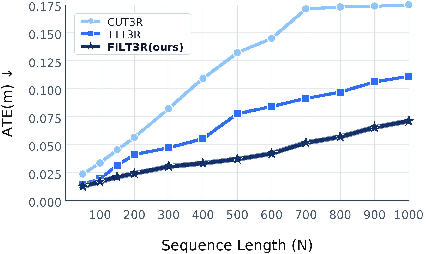

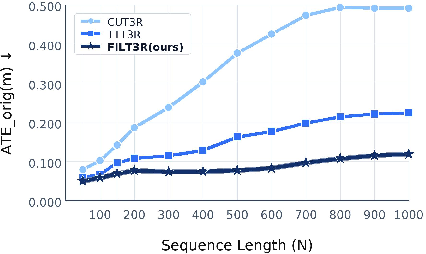

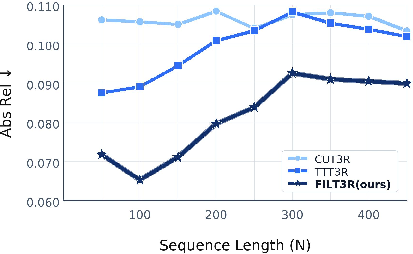

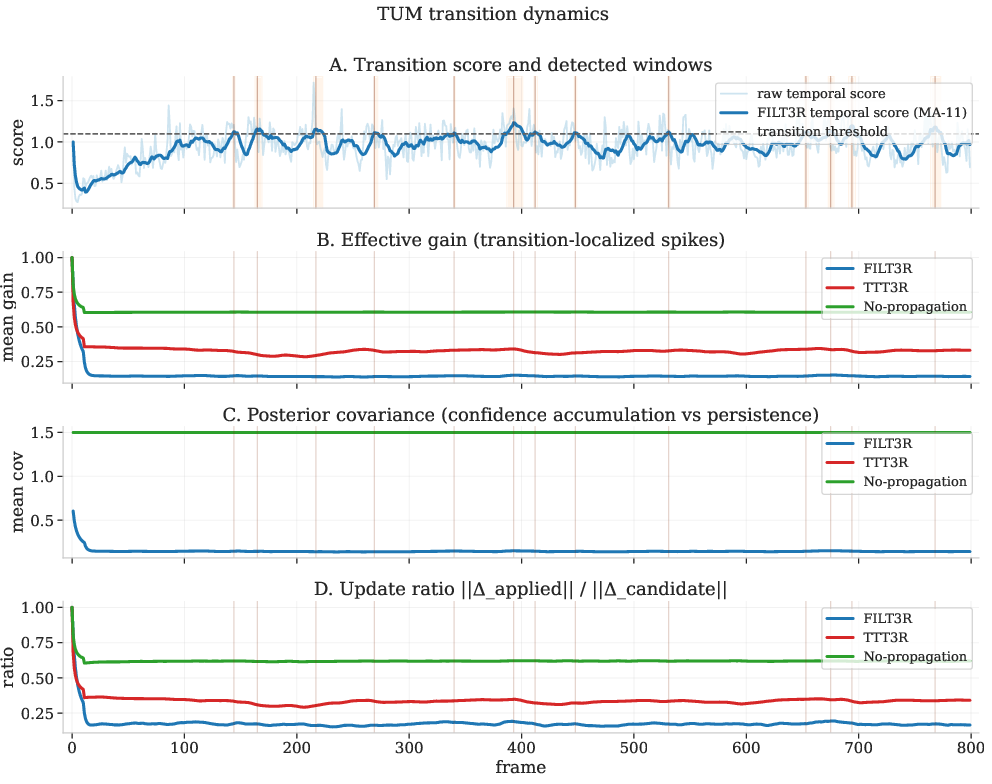

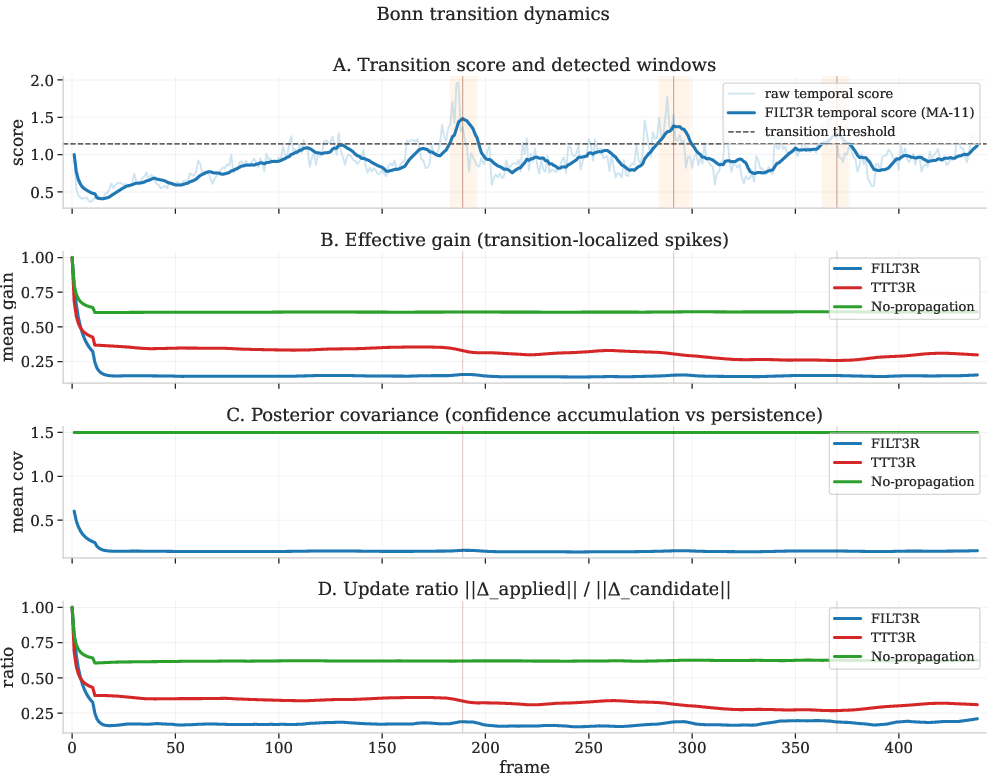

Long-Horizon Pose and Depth

On TUM-RGBD, FILT3R reduces the origin-aligned absolute trajectory error (ATE_orig) by more than 2× relative to TTT3R, directly targeting progressive drift. On long Bonn video-depth sequences, FILT3R maintains significantly lower Abs Rel and higher δ<1.25 than other streaming update policies, confirming that its error accumulates more slowly as sequence length grows.
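Abs Rel and δ<1.25 are standard depth metrics: the mean of |pred - gt| / gt over valid pixels, and the fraction of pixels whose ratio max(pred/gt, gt/pred) is below 1.25. Video-depth evaluations usually align predictions to ground truth per sequence first; the median-ratio scale alignment in this sketch is one common choice and is an assumption here, not necessarily the protocol used for Bonn.

```python
import numpy as np


def depth_metrics(pred, gt, align_scale=True):
    """Return (Abs Rel, delta<1.25) over pixels with valid (positive) ground-truth depth."""
    valid = gt > 0
    p, g = pred[valid].astype(float), gt[valid].astype(float)
    if align_scale:
        # Assumed per-sequence alignment: match the median predicted depth to the median GT depth.
        p = p * np.median(g) / np.median(p)
    abs_rel = np.mean(np.abs(p - g) / g)
    ratio = np.maximum(p / g, g / p)
    delta1 = np.mean(ratio < 1.25)
    return float(abs_rel), float(delta1)
```

Passing all depth maps of a sequence as one stacked array yields a single per-sequence scale, so a model whose scale drifts over time is penalized rather than re-aligned frame by frame.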

Figure 4: Long-horizon metrics vs. sequence length. FILT3R’s errors grow more slowly than baseline methods in both pose and depth, consistently reducing cumulative drift.

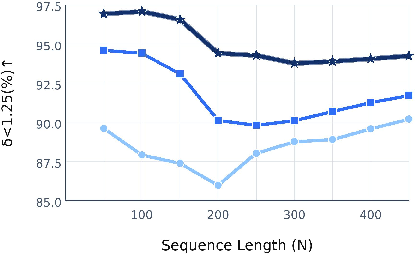

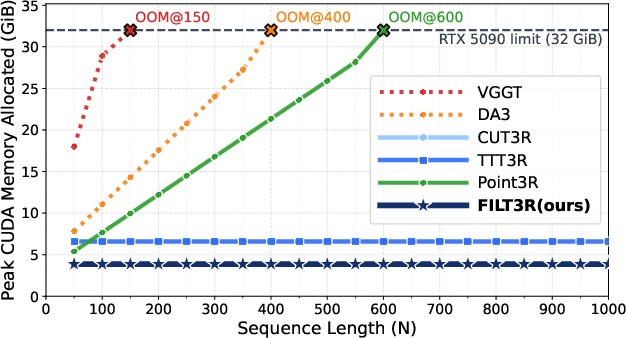

Efficiency

Figure 5: Peak GPU memory vs. frames. FILT3R matches CUT3R’s constant memory profile, in contrast to full-attention and memory-caching approaches.

FILT3R imposes negligible computational and memory overhead compared to CUT3R, enabling efficient deployment over long horizons. Attention-based update gates (TTT3R) nearly double the memory footprint due to cache requirements.

Ablations and Mechanistic Insights

Ablations confirm four findings:

- Adaptive process noise is necessary for a rapid response to transitions.
- Recursive variance propagation is critical for suppressing long-horizon drift.
- Fixed-gain (EMA) gates can suppress drift only by globally over-smoothing, at the cost of responsiveness and local accuracy.
- Making the measurement noise adaptive as well introduces instability, because the process- and measurement-noise estimates become correlated during transitions.
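The fixed-gain trade-off can be illustrated on a scalar toy signal: a small alternating jitter for 200 frames, then a step to a new level. A fixed gain small enough to suppress the jitter needs on the order of 1/alpha frames to follow the step, whereas a variance-propagating filter with injected process noise follows it almost immediately. The drift-triggered process-noise rule (`thresh`) and all parameter values are hypothetical choices for illustration.

```python
import numpy as np


def ema_rollout(cands, alpha):
    """Fixed-gain update: s_t = (1 - alpha) * s_{t-1} + alpha * z_t."""
    s, out = float(cands[0]), [float(cands[0])]
    for z in cands[1:]:
        s = (1.0 - alpha) * s + alpha * z
        out.append(s)
    return np.array(out)


def adaptive_rollout(cands, meas_noise=1.0, thresh=3.0, beta=0.9, eps=1e-6):
    """Scalar Kalman-style update with hypothetical drift-triggered process noise."""
    s, var, ema = float(cands[0]), 1.0, abs(cands[1] - cands[0])
    out = [s]
    for t in range(1, len(cands)):
        drift = abs(cands[t] - cands[t - 1])
        q = max(drift / (ema + eps) - thresh, 0.0)  # process noise only on abnormal drift
        ema = beta * ema + (1.0 - beta) * drift
        var_pred = var + q
        k = var_pred / (var_pred + meas_noise)
        s += k * (cands[t] - s)
        var = (1.0 - k) * var_pred
        out.append(s)
    return np.array(out)


def settle_time(states, jump, target, tol):
    """Frames after `jump` until the estimate is within `tol` of `target`."""
    for n, s in enumerate(states[jump:]):
        if abs(s - target) < tol:
            return n
    return len(states) - jump


t = np.arange(400)
cands = np.where(t < 200, 0.0, 5.0) + 0.01 * (-1.0) ** t
ema_states = ema_rollout(cands, alpha=0.02)
kf_states = adaptive_rollout(cands)
for name, states in [("fixed gain", ema_states), ("adaptive", kf_states)]:
    jitter = np.std(states[150:200])  # residual jitter in the stable phase
    print(name, jitter, settle_time(states, 200, 5.0, 0.5))
```

Both rules keep the stable-phase jitter small, but the fixed gain needs more than 100 frames to get within 0.5 of the new level, while the adaptive filter settles at the transition frame itself.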

Figure 6: Different update rules on the gain curve. FILT3R adapts its effective update gain based on process/measurement noise, unlike overwrite or fixed-gate approaches.

Mechanistically, FILT3R contracts its updates during long stable periods (lowering the gain floor) and selectively reopens them when scene evidence indicates genuine change.

Figure 7: FILT3R contracts updates in stable regimes and reopens at transitions. Effective gain and posterior variance dynamics highlight the adaptivity to temporal regime change.

Implications and Future Directions

FILT3R provides an interpretable, training-free, plug-and-play filter for latent token updates in recurrent 3D reconstruction. It substantially improves rollouts on long video sequences and generalizes well beyond the training horizon. These results indicate that principled uncertainty propagation is not only compatible with strong short-horizon metrics but essential for stability in continuous deployment, a critical property for long-term embodied scene understanding and SLAM in real-world robotics.

Theoretically, FILT3R's separation of process and measurement noise, together with its explicit use of propagated uncertainty, offers a foundation for further advances, such as adaptive or learned process-noise models, integration with parameter adaptation, and generalization to multi-modality or higher-order latent geometries. Future work may combine FILT3R with differentiable filtering and end-to-end fine-tuning for task-specific optimality, or extend the adaptive filtering principle to other structured memory systems, for example in continual reinforcement learning or generative modeling.

Conclusion

FILT3R demonstrates that Kalman-style, uncertainty-aware state fusion yields practical and significant improvements in the long-horizon stability and performance of streaming 3D reconstruction pipelines. By estimating process noise from internal temporal dynamics and attributing all adaptivity to process uncertainty, while anchoring on a fixed prior for decoder reliability, FILT3R advances the state of the art in streaming scene reconstruction at minimal computational cost, with strong empirical validation across depth, pose, and reconstruction metrics.