- The paper introduces a robust reconstruction pipeline that overcomes VDM inconsistencies through iterative non-rigid alignment and global optimization.

- It utilizes geometric foundation models and inverse deformation via 3D Gaussian splatting to achieve 3D-consistent and photorealistic reconstructions.

- The method demonstrates superior performance in 3D consistency, photometric fidelity, and CLIP-IQA+ metrics compared to state-of-the-art baselines.

World Reconstruction From Inconsistent Views

Overview and Motivation

"World Reconstruction From Inconsistent Views" (2603.16736) introduces a robust reconstruction pipeline to address the inherent geometric inconsistencies in video diffusion model (VDM) outputs. Given that VDMs, even those with explicit camera control or RGB-D conditioning, generate frame sequences with significant generative drift and misalignment, direct 3D reconstruction leads to floating artifacts and unreliable geometry. The proposed method circumvents the need for explicit consistency-in-training by deploying a per-scene, non-rigid alignment step and subsequent geometry-consolidating optimization, thus enabling any VDM to function as a reliable 3D world generator.

Methodology

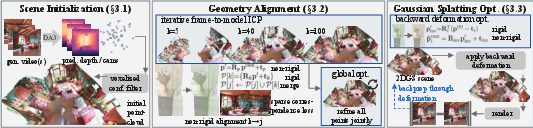

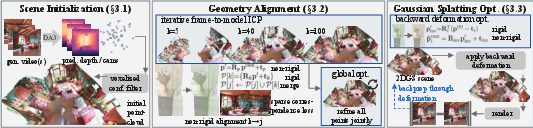

The approach comprises three principal stages: (1) geometric foundation model-based scene initialization, (2) non-rigid geometry alignment using iterative frame-to-model ICP and global optimization, and (3) non-rigid aware Gaussian Splatting (3DGS) optimization exploiting inverse deformation rendering loss.

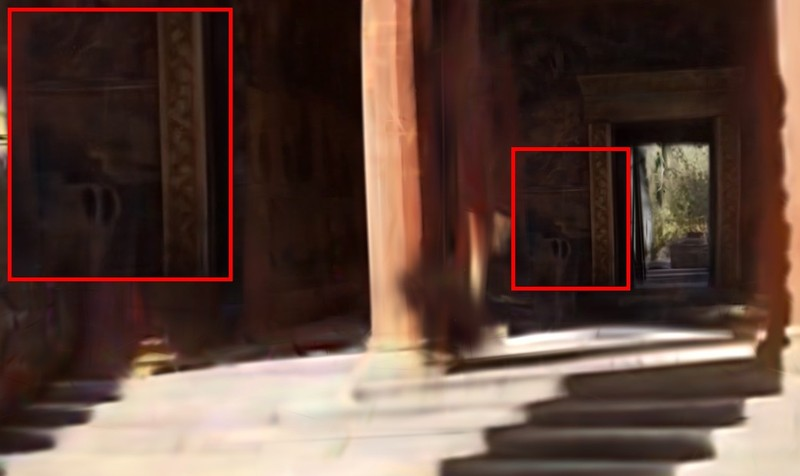

Figure 1: Method overview. The three-stage pipeline reconstructs 2DGS scenes from inconsistent VDM outputs by progressive depth estimation, geometry alignment, and 3D Gaussian Splatting optimization.

1. Scene Initialization via Geometric Foundation Models

Geometric foundation models (GFMs), such as DepthAnything-3 [lin2025depth], are used to predict dense, per-pixel scene depth and camera parameters for all video frames. The initial pointcloud comprises disparate, overlapping surfaces due to frame inconsistencies.

2. Non-rigid Geometry Alignment

Iterative frame-to-model alignment leverages a hashgrid MLP to encode non-rigid deformations per frame, optimizing both camera poses and per-point transformations via point-to-plane ICP and color-gradient losses. Importantly, 2D feature correspondences (RoMa matcher) extend alignment beyond nearest-neighbor constraints, while total variation and ARAP regularization ensure spatial smoothness in the deformation field. Adaptively merged outlier removal via median absolute deviation metrics maintains scene integrity.

A subsequent global optimization stage jointly refines camera and deformation parameters across all frames, minimizing reprojection error and driving the pointcloud towards a thin, canonical surface.

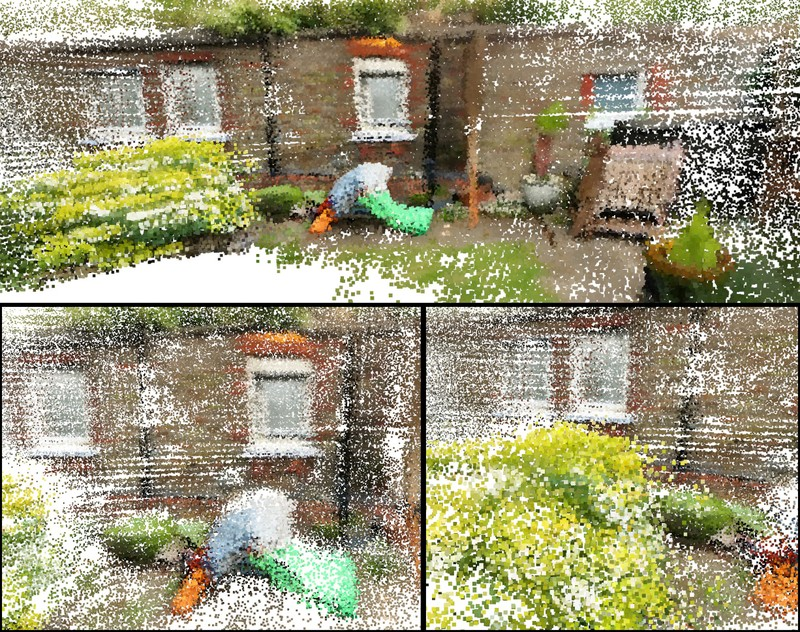

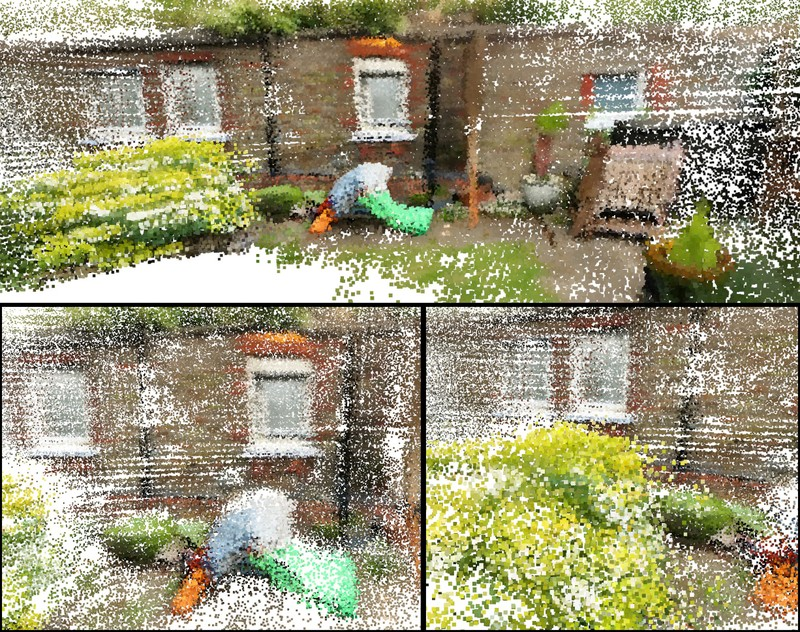

Figure 2: Pointcloud reconstructions comparing DA3, VGGT-X, and the proposed non-rigid alignment. Only the proposed method yields compact, aligned surfaces free from overlapping artifacts.

3. Non-rigid Aware 3D Gaussian Splatting

The canonical pointcloud is transformed via an inverse deformation neural field conditioned on a learnable frame embedding, enabling backward mapping from canonical space to every observed frame. This permits a photometric rendering loss that factors out geometric drift, thus optimizing for sharp, consistent appearance across all views. The final 2DGS scene is initialized directly from the aligned pointcloud and normals, with opacity, scale, and color attributes set accordingly.

Empirical Evaluation

The paper benchmarks its reconstruction technique against DA3 and VGGT-X on multiple VDM-generated sequences, utilizing world generation models such as Genie3, HY-WorldPlay, ViewCrafter, SEVA, Gen3C, Wan, and Voyager. Quantitative evaluation employs WorldScore metrics:

- 3D Consistency: The proposed method achieves 79.29 versus 69.53 (DA3) and 69.66 (VGGT-X).

- Photometric Consistency: 86.59 versus 71.62 (DA3) and 67.05 (VGGT-X).

- Fidelity (CLIP-IQA+): 46.56, outperforming all baselines.

Qualitative analysis demonstrates superior visual fidelity and viewpoint stability, with reconstructed worlds free from floating artifacts and blur, both when rendered from input frames and unseen perspectives.

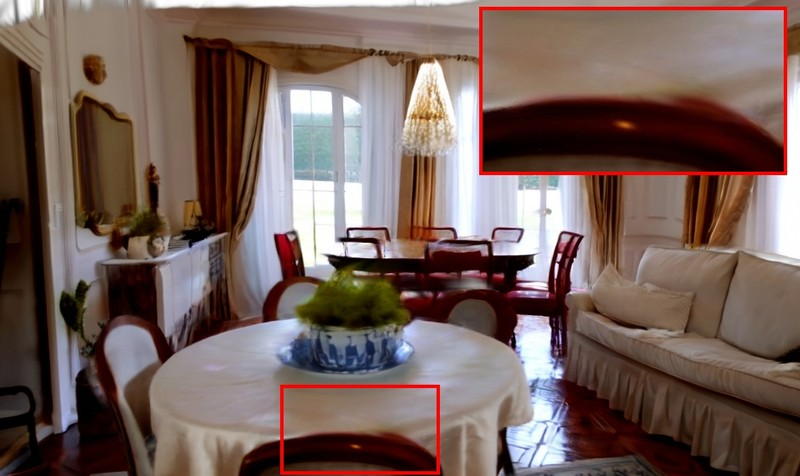

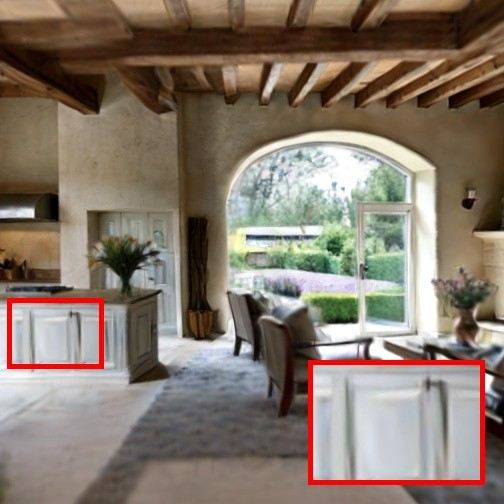

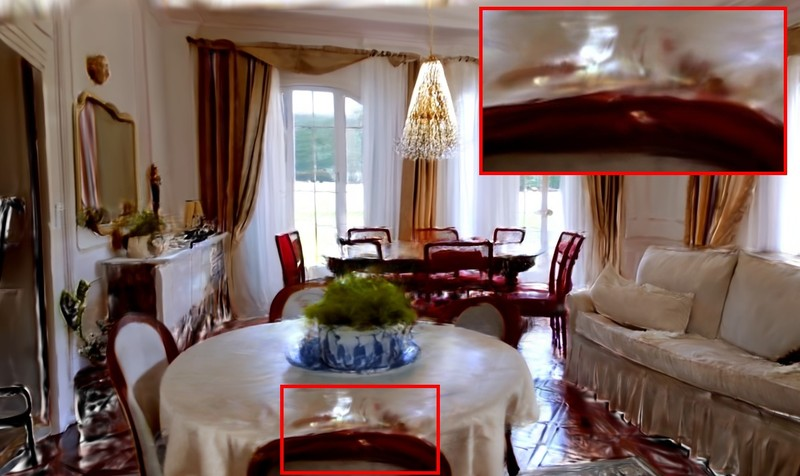

Figure 3: Single video 3D reconstruction results. Reconstructions from HY-WorldPlay showcase higher visual fidelity and more consistent geometry relative to baselines.

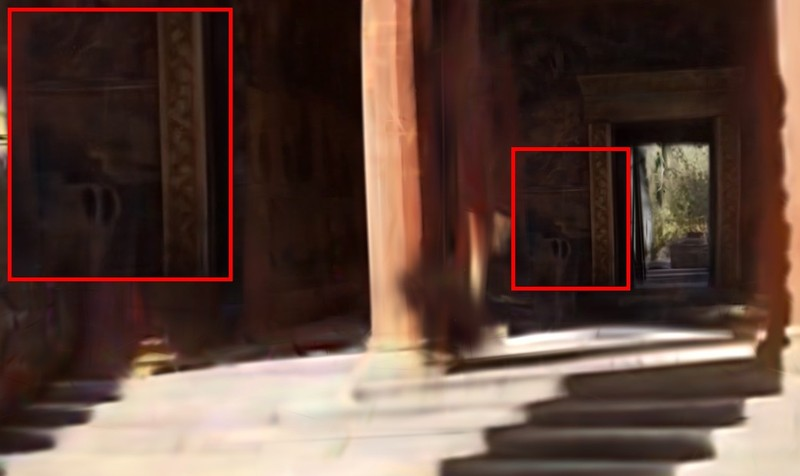

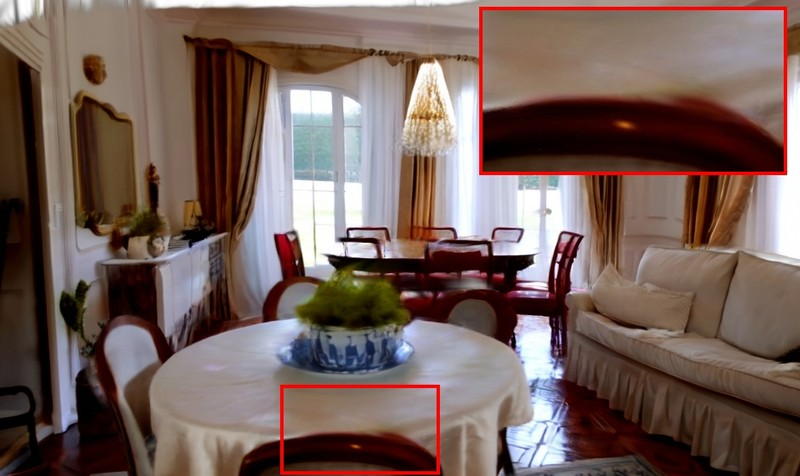

Figure 4: Single video 3D reconstruction for SEVA sequences. The baseline methods fail to resolve inconsistent textures and suffer from artifacts in novel-view synthesis.

Ablation studies confirm that omitting non-rigid alignment, correspondence matching, or inverse deformation losses significantly reduces both consistency and fidelity.

Figure 5: Ablation results. Removing non-rigid alignment or correspondence matching leads to misaligned geometry and degraded rendered appearance.

Large-Scale World Generation and Limitations

The method is validated on 360-degree world generation via autoregressive rollout of VDMs. Competing baselines display increased floating artifacts and degraded quality far from training views, whereas the proposed method maintains high fidelity across extreme viewpoints.

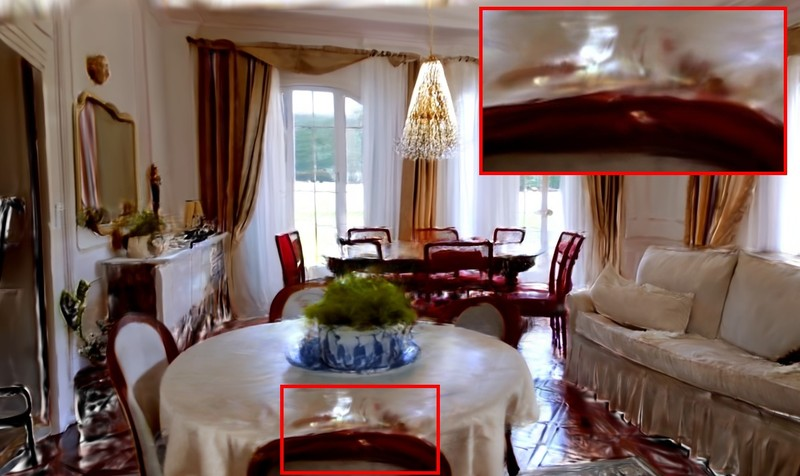

Figure 6: Limitations. Hallucinations in VDM outputs (e.g., appearing/disappearing objects) persist in reconstructed worlds, as the system averages inconsistent views during optimization.

The alignment strategy cannot fully overcome VDM frame hallucinations nor does it address per-model-level geometric consistency during generation. Detecting and excising outlier frames via robust mechanisms (e.g., RobustNeRF [sabour2023robustnerf]) is identified as a prospective extension.

Practical Implications and Future Directions

By decoupling world generation from the constraint of geometric consistency in the generative model, this reconstruction approach dramatically reduces computational overhead and applicability barrier for novel scene instantiation. Practically, this unlocks high-fidelity, explorable worlds from diverse video outputs, relevant to robotics simulation, game development, and VR. On the theoretical front, integrating non-rigid alignment cues into VDM finetuning could reinforce generative model consistency; further work may focus on camera-space outlier detection and combining per-scene and per-model consistency objectives.

Conclusion

This paper presents a lightweight, yet robust pipeline transforming inconsistent VDM-generated frames into 3D-consistent, photorealistic worlds by means of non-rigid geometry alignment and Gaussian Splatting with deformation-aware photometric optimization. Empirical evaluations confirm substantial improvements in geometric consistency and fidelity over state-of-the-art baselines. While limitations in handling VDM-induced hallucinations remain, the method sets a new standard for post-hoc world model reconstruction applicable to any VDM. Future research may explore tighter integration with generative processes and dynamic or outlier-aware scene modeling.