Attention Residuals

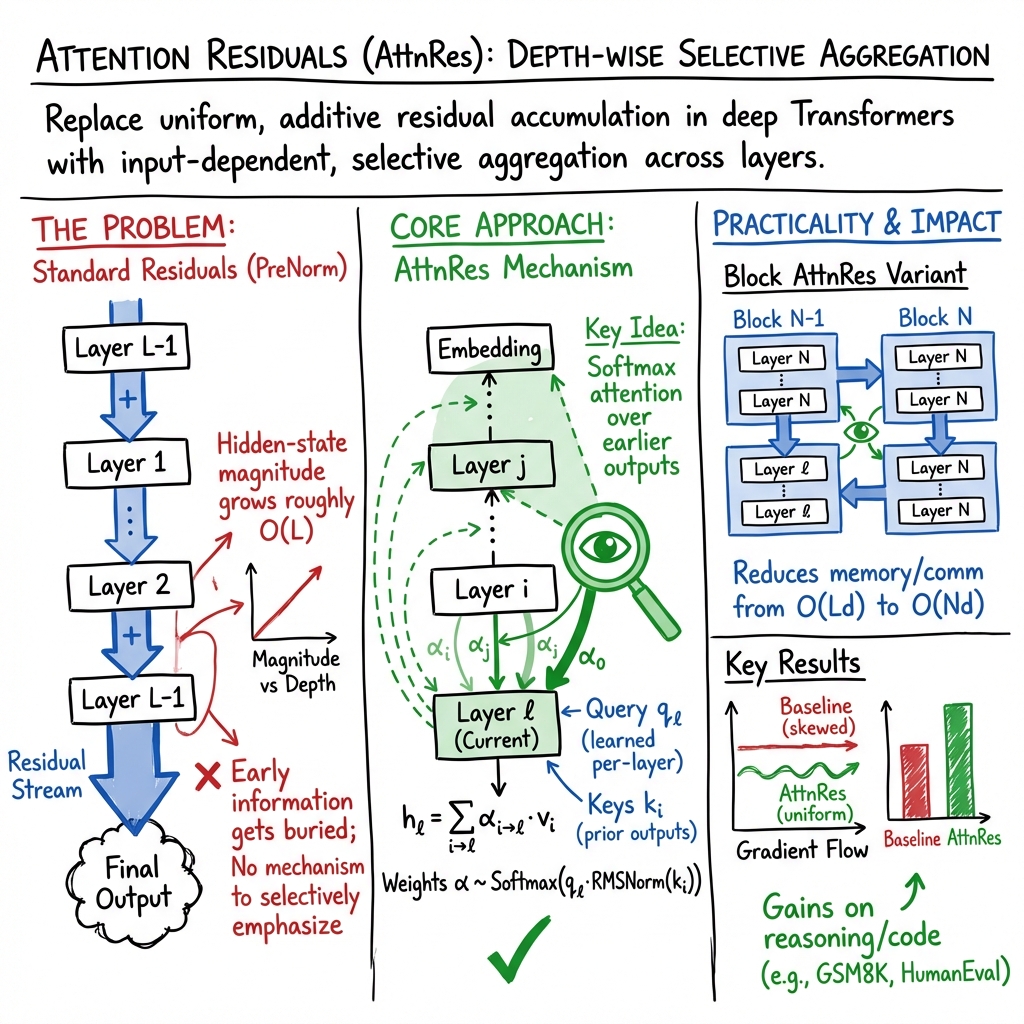

Abstract: Residual connections with PreNorm are standard in modern LLMs, yet they accumulate all layer outputs with fixed unit weights. This uniform aggregation causes uncontrolled hidden-state growth with depth, progressively diluting each layer's contribution. We propose Attention Residuals (AttnRes), which replaces this fixed accumulation with softmax attention over preceding layer outputs, allowing each layer to selectively aggregate earlier representations with learned, input-dependent weights. To address the memory and communication overhead of attending over all preceding layer outputs for large-scale model training, we introduce Block AttnRes, which partitions layers into blocks and attends over block-level representations, reducing the memory footprint while preserving most of the gains of full AttnRes. Combined with cache-based pipeline communication and a two-phase computation strategy, Block AttnRes becomes a practical drop-in replacement for standard residual connections with minimal overhead. Scaling law experiments confirm that the improvement is consistent across model sizes, and ablations validate the benefit of content-dependent depth-wise selection. We further integrate AttnRes into the Kimi Linear architecture (48B total / 3B activated parameters) and pre-train on 1.4T tokens, where AttnRes mitigates PreNorm dilution, yielding more uniform output magnitudes and gradient distribution across depth, and improves downstream performance across all evaluated tasks.


Explain it Like I'm 14

Overview

This paper introduces a new way for very deep AI models (like LLMs) to combine what each layer learns. Today, layers are stacked like floors in a building, and each layer adds its “change” to a running total. The problem is that, as you add more and more layers, this total can get too big, and the helpful signals from earlier layers get drowned out. The authors propose Attention Residuals (AttnRes), which lets each layer pick and choose which earlier layers to pay attention to—rather than treating all earlier layers as equally important.

What questions did the researchers ask?

- Can we stop deep models from simply piling up every layer’s output with equal weight, which can wash out useful information from earlier layers?

- If each layer could choose which earlier layers matter most (based on the input), would the model learn better and more efficiently?

- Can we make this idea practical for very large models that are trained on many computers at once, without using too much extra memory or time?

How did they do it?

First, a quick idea of how things usually work:

- Residual connections: Think of the model’s knowledge as a notebook. Each layer writes a little note and staples it to a growing stack. The final stack contains notes from all layers, all added with equal weight. This keeps training stable, but the stack keeps growing, and older notes become harder to find and use.
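The notebook picture corresponds to a simple recurrence. In notation assumed here for illustration ($v_0$ is the embedding, $f_i$ is layer $i$, Norm is the PreNorm normalization, $w_l$ is layer $l$'s pseudo-query), the standard stack is an unweighted sum, and AttnRes, as we read the method, swaps the unit weights for softmax weights over the same sources:

```latex
\begin{aligned}
&\text{Standard:} && h_1 = v_0,\quad h_l = h_{l-1} + v_{l-1},\quad v_{l-1} = f_{l-1}\big(\mathrm{Norm}(h_{l-1})\big)
\;\Longrightarrow\; h_l = \sum_{i=0}^{l-1} v_i \\
&\text{AttnRes:} && h_l = \sum_{i=0}^{l-1} \alpha_{i\to l}\, v_i,\qquad
\alpha_{i\to l} = \frac{\exp\!\big(w_l^{\top}\,\mathrm{RMSNorm}(v_i)\big)}{\sum_{j=0}^{l-1} \exp\!\big(w_l^{\top}\,\mathrm{RMSNorm}(v_j)\big)}
\end{aligned}
```

Every earlier output enters the standard sum with weight 1, so each new layer only adds terms; the $\alpha_{i\to l}$ are non-negative and sum to 1, so the AttnRes input is a weighted average instead.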

What the paper changes:

- Attention across depth (layers), not just across words: In Transformers, “attention” usually helps a model focus on the most important words in a sentence. Here, the authors apply the same idea across layers. Each layer looks back at all earlier layers and assigns importance weights (via softmax, which turns scores into percentages that add up to 100%). Then it builds its input by taking a weighted mix of those earlier outputs.

- Analogy: Instead of blindly flipping through every page of your notebook, you ask, “Which earlier pages are most relevant for this question?” and you read those more carefully.

- A “pseudo-query” vector: Each layer has a small learned vector (like a preference profile) that helps it decide which earlier layers to attend to.
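For readers who want the mechanics, here is a minimal single-token sketch of attention across depth in plain Python. It assumes, as our reading of the method rather than the authors' code, that the sources are the embedding plus every earlier layer output, that keys are those outputs passed through RMSNorm (learned gain omitted), and that values are the raw outputs; `attn_residual`, `standard_residual`, and `w_l` are hypothetical names.

```python
import math


def rms_norm(x, eps=1e-6):
    # Rescale a vector to unit root-mean-square (no learned gain in this sketch).
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]


def softmax(scores):
    # Subtract the max score before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def standard_residual(sources):
    # Standard residual stream: every earlier output is added with weight 1.
    return [sum(column) for column in zip(*sources)]


def attn_residual(sources, w_l):
    # Score each earlier output against the layer's pseudo-query w_l,
    # using its RMS-normalized version as the key.
    scores = [sum(q * k for q, k in zip(w_l, rms_norm(v))) for v in sources]
    alpha = softmax(scores)
    # Mix the raw (un-normalized) outputs with the attention weights.
    dim = len(sources[0])
    mixed = [sum(a * v[d] for a, v in zip(alpha, sources)) for d in range(dim)]
    return mixed, alpha


# Toy stream: embedding plus two layer outputs, hidden size 4.
sources = [[1.0, 0.0, 0.0, 0.0], [0.0, 2.0, 0.0, 0.0], [0.0, 0.0, 3.0, 0.0]]
h_std = standard_residual(sources)  # [1.0, 2.0, 3.0, 0.0]
# Illustrative pseudo-queries, not learned values.
h_uniform, alpha_uniform = attn_residual(sources, [0.0] * 4)
h_peaked, alpha_peaked = attn_residual(sources, [0.0, 0.0, 5.0, 0.0])
```

With an all-zero pseudo-query every source gets weight 1/3, so the layer sees the average of the stream instead of its ever-growing sum; a query aligned with one source puts nearly all weight there. Because keys are RMS-normalized, a source's larger raw magnitude does not by itself earn it a higher score.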

Making it efficient for huge models:

- Full AttnRes: Every layer can attend to all previous layers. This works fine on a single machine and adds little overhead because those intermediate results are already kept for training.

- Block AttnRes: For very large models trained across many machines, saving and sharing every layer’s output is expensive. So the authors group layers into a few blocks. Inside each block, they still combine layer outputs normally, but they keep a single “summary” for the block. Layers then attend to these block summaries instead of every individual layer.

- Analogy: Instead of keeping every page from every chapter, you keep a short chapter summary. You can still find what matters, with far less stuff to carry around.

- Systems tricks: They add two practical ideas to keep speed and memory costs low:

- Cross-stage caching: When training across many computers, don’t resend the same summaries over and over; cache (save) them locally and only send what’s new.

- Two-phase/online softmax: Compute attention in two steps so you don’t need to hold everything in memory at once, while getting the same result.
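The block version and the two-phase computation can be sketched together. The assumptions are ours: the embedding counts as the first block representation, a block representation is the plain sum of its layers' outputs, a layer attends over completed block representations plus the current block's partial sum, and all helper names are hypothetical. Phase 1 scores the completed blocks (which stay fixed while the current block runs); phase 2 folds in the partial sum with an online-softmax merge. The last lines compute a one-shot softmax over the same sources for comparison.

```python
import math


def rms_norm(x, eps=1e-6):
    # Gain-free RMSNorm used to build keys.
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]


def score(w_l, v):
    # Pseudo-query dotted with the RMS-normalized key.
    return sum(q * k for q, k in zip(w_l, rms_norm(v)))


def partial_state(scores, values):
    # Softmax state over a subset of sources: (max score, sum of exp weights,
    # exp-weighted sum of values), all relative to that subset's max.
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    acc = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(len(values[0]))]
    return m, sum(weights), acc


def merge(a, b):
    # Online-softmax merge: rescale both states to the larger max, then add.
    m = max(a[0], b[0])
    ca, cb = math.exp(a[0] - m), math.exp(b[0] - m)
    return m, a[1] * ca + b[1] * cb, [x * ca + y * cb for x, y in zip(a[2], b[2])]


def block_attnres_input(block_reps, partial_sum, w_l):
    # Phase 1: completed block representations.
    state = partial_state([score(w_l, b) for b in block_reps], block_reps)
    # Phase 2: the current block's partial sum, if any layer in it has run.
    if partial_sum is not None:
        state = merge(state, partial_state([score(w_l, partial_sum)], [partial_sum]))
    _, total, acc = state
    return [x / total for x in acc]


# Hypothetical toy run: hidden size 3, two layers per block.
embedding = [1.0, 0.0, 0.0]
block1_outputs = [[0.5, -1.0, 2.0], [1.5, 0.0, -0.5]]
block_reps = [embedding, [sum(col) for col in zip(*block1_outputs)]]  # intra-block sum
partial_sum = [0.2, 0.3, 0.1]  # first layer of block 2 has finished
w_l = [0.3, -0.2, 0.7]         # hypothetical pseudo-query of the next layer

two_phase = block_attnres_input(block_reps, partial_sum, w_l)

# Reference: one softmax over all sources at once.
sources = block_reps + [partial_sum]
one_shot = partial_state([score(w_l, v) for v in sources], sources)
reference = [x / one_shot[1] for x in one_shot[2]]
```

Because the merge only rescales each partial sum by the exponential of a max difference, the two-phase result equals the one-shot softmax up to floating-point rounding, while the layer only ever attends over a handful of block summaries.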

What did they find?

- Better learning across sizes: In “scaling law” tests (which compare models of different sizes and training budgets), AttnRes consistently beat the standard setup. In practice, Block AttnRes matched the loss of a standard model trained with about 25% more compute.

- More balanced layers: With AttnRes, the model’s internal signals don’t blow up as depth increases, and the training gradients spread more evenly across layers. This means earlier layers don’t get ignored as the model gets deeper.

- Real-world test: They built a large model (48B total parameters, of which 3B are activated for each token) and trained it on 1.4 trillion tokens of text. AttnRes improved results on every evaluated task compared to the standard residual setup.

- Low overhead: With the block version and the system optimizations, training cost rose only slightly, and inference (using the model) slowed by less than 2% in typical workloads, according to the authors.
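The "about 25% more compute" figure can also be read as a saving at equal loss. A short calculation, assuming the reported ~1.25× compute equivalence and ignoring AttnRes's own small overhead:

```latex
C_{\text{baseline}} \approx 1.25\,C_{\text{AttnRes}}
\quad\Longrightarrow\quad
\frac{C_{\text{AttnRes}}}{C_{\text{baseline}}} \approx \frac{1}{1.25} = 0.80
```

So the baseline needs 25% more compute than Block AttnRes, or equivalently Block AttnRes needs about 20% less compute than the baseline to reach the same loss.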

Why is this important?

- Smarter use of depth: Instead of treating every layer’s output the same, the model can choose what to keep and what to downplay, based on the input. This makes deep models more effective and stable.

- More performance for the same cost: Because AttnRes helps models learn better, you can get stronger results without needing a lot more compute.

- A simple, compatible upgrade: Block AttnRes is designed to be a drop-in change to today’s Transformer-style models, making it practical for real-world, large-scale training.

- A new design idea: “Attention over layers” may inspire other improvements inside deep networks, just like attention over words transformed sequence modeling.

In short, Attention Residuals helps deep AI models remember and use what earlier layers discovered—picking the most useful pieces instead of averaging everything—leading to better accuracy and more efficient training at scale.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper.

- Theoretical guarantees on stability and gradient flow

- No formal bounds or proofs are provided for hidden-state magnitude control, gradient norm uniformity, or convergence properties under AttnRes/Block AttnRes; conditions preventing attention collapse or dominance by a few layers remain uncharacterized.

- Lack of analysis comparing depth-wise softmax vs. linear kernels in terms of expressivity, optimization landscapes, and robustness.

- Query design and input dependence

- The pseudo-query is a fixed per-layer parameter; the trade-off between this choice and input- (token-) dependent queries or multi-head depth attention remains unexplored.

- No study on the effect of temperature scaling, entropy regularization, or other techniques to avoid overly peaky or overly uniform depth attention.

- Block size and boundary selection

- Block count N is tuned empirically (e.g., N≈8), but there is no principled method for choosing N across model sizes, depths, or tasks.

- Static, uniform blocks are assumed; learning non-uniform block sizes or adaptive/dynamic block boundaries (possibly data-dependent) is not investigated.

- The impact of misalignment between block boundaries and layer types (e.g., grouping attention vs. MLP layers) is not ablated.

- Information loss in block summaries

- Replacing per-layer outputs with block sums is a lossy compression; the representational trade-offs and conditions under which this harms downstream performance are not quantified.

- Alternatives to simple summation (e.g., learned compressions, attention pooling within blocks, residual scaling) are not explored.

- Kernel and normalization choices

- The method adopts softmax over RMS-normalized keys; alternatives (e.g., sparsemax/entmax, linear kernels, cosine attention, temperature-tuned softmax, or depth-aware biases) and their stability/accuracy trade-offs are not evaluated.

- Sensitivity to normalization variants (RMSNorm vs. LayerNorm vs. ScaleNorm) and to value normalization/scaling is undocumented.

- Interaction with residual/normalization design

- The method targets PreNorm dilution, but behavior under PostNorm, DeepNorm, μParam, or residual scaling variants is not evaluated.

- How AttnRes interacts with residual dropout, stochastic depth, or other regularizers is not analyzed.

- Training dynamics and initialization

- Best practices for initializing the per-layer queries are not specified (e.g., near-uniform attention at start vs. bias toward recent layers).

- Whether curriculum strategies (e.g., gradually annealing depth-attention settings during training) or warm-up schedules for depth attention improve stability is unknown.

- Systems and scalability edge cases

- While pipeline-parallel communication is optimized, interactions with tensor parallelism, ZeRO/optimizer sharding, activation checkpointing, and heterogeneous interconnects are not comprehensively benchmarked.

- Full AttnRes becomes communication-heavy as depth grows; hybrid schemes for extremely deep models (e.g., windowed, sliding, or banded depth attention) are not explored.

- Inference with long contexts

- The two-phase/online-softmax strategy is proposed, but numerical stability, accumulated error, and latency/memory trade-offs for very long-context prefills (up to 1M tokens) are not quantified.

- Exact memory formulas and throughput comparisons for KV caches plus block caches across batch sizes and sequence lengths are not reported.

- Overhead and fairness of comparisons

- Parameter overhead from per-layer queries and additional norms is not reported; comparisons controlling for total parameter count/compute (equalized budgets) are missing.

- The measured “<2% inference latency overhead” is claimed for “typical workloads” without a comprehensive sweep across batch sizes, sequence lengths, hardware, and decoding regimes (prefill vs. token-by-token).

- Robustness, generalization, and task coverage

- Experiments are centered on a specific 48B Kimi Linear model on 1.4T tokens; generalization to different architectures (standard attention, encoder–decoder, non-MoE), scales (smaller and much larger), and domains (vision, speech, multimodal) remains open.

- Impact on fine-tuning regimes (instruction tuning, RLHF, low-data adaptation) and data efficiency is not studied.

- Robustness to distribution shift, adversarial inputs, and calibration properties with AttnRes are not evaluated.

- Interaction with MoE routing and specialization

- The effect of depth attention on MoE expert routing dynamics, specialization, and load balancing is not analyzed; potential coupling between inter-layer aggregation and expert selection remains unclear.

- Quantization and low-precision training/inference

- Compatibility with 8-bit/4-bit weight or activation quantization, FP8 training, and KV-cache compression is not evaluated; sensitivity of depth attention to quantization noise is unknown.

- Interpretability and analysis of depth attention patterns

- There is no analysis of learned depth attention distributions (e.g., which layers/blocks are frequently attended, per-task patterns), nor links to mechanistic interpretability or pruning strategies.

- Whether AttnRes reduces the previously observed layer redundancy (prune-ability) is suggested but not quantified with systematic pruning studies.

- Alternatives to per-layer scalar queries

- The potential gains of multi-head depth attention, token-conditioned queries, or conditioning queries on layer type (attention vs. MLP) are not explored.

- Sharing or tying queries across groups of layers/blocks versus per-layer unique queries is not ablated.

- Failure modes and regularization

- Potential failure modes (e.g., persistent attention to only recent blocks, vanishing contribution of early layers, oscillatory depth weights) are not cataloged; mitigation strategies (entropy penalties, depth-decay priors, dropout over depth sources) are not tested.

- Applicability outside decoder-only LLMs

- How AttnRes performs in encoders, bidirectional models, retrieval-augmented systems, diffusion models, or recurrent architectures is untested.

- Reproducibility and open-source completeness

- Exact training hyperparameters for AttnRes components (e.g., attention temperature, RMSNorm eps, query initialization, block schedules) and full evaluation metrics/benchmarks are not fully detailed in the provided text; end-to-end reproducibility requires further specification.

Practical Applications

Overview

The paper introduces Attention Residuals (AttnRes), which replaces uniform, additive residual connections in LLMs with softmax attention over prior layer outputs. It also proposes Block AttnRes, a scalable variant that attends over a small number of block-level summaries instead of every layer, plus supporting systems optimizations (cross-stage caching for pipeline parallelism and a two-phase/online-softmax inference strategy). Experiments show consistent loss-per-compute improvements across scales and better training dynamics (mitigated PreNorm dilution, more uniform gradients) with negligible inference overhead.

Below are actionable, real-world applications derived from these findings.

Immediate Applications

These can be deployed with current tooling and hardware, given standard Transformer training/inference stacks.

- LLM training efficiency upgrade (software/AI infrastructure)

- Use case: Replace standard residuals with Block AttnRes in existing Transformer or MoE LLMs to improve loss-per-compute (e.g., match a baseline trained with ~1.25× the compute) or achieve higher quality under a fixed budget.

- Sectors: Software/AI labs, cloud model providers, enterprise AI.

- Tools/workflows: Integrate AttnRes modules into PyTorch-based frameworks (Megatron-LM, DeepSpeed, FSDP); start with block count N≈8; score RMSNorm-normalized keys with per-layer pseudo-queries.

- Assumptions/dependencies: PreNorm-style Transformers; moderate code changes in the residual stack; validation on target domain; availability of training logs to tune N.

- Pipeline-parallel training optimization via cross-stage caching (AI infrastructure/HPC)

- Use case: Reduce redundant communication of block representations in interleaved pipeline schedules by caching history across virtual stages.

- Sectors: AI labs, hyperscaler training stacks.

- Tools/workflows: Add cross-stage cache logic to pipeline engines (e.g., Megatron interleaving); monitor per-stage block counts.

- Assumptions/dependencies: Multi-stage pipeline parallelism; sufficient device memory for cached blocks; careful synchronization.

- Low-overhead inference with two-phase/online-softmax aggregation (software/serving)

- Use case: Keep latency overhead under 2% (as reported for typical workloads) while serving Block AttnRes models, by amortizing cross-block attention and capping KV-like caches at N blocks, which also bounds cache memory for long-context prefills.

- Sectors: LLM serving (SaaS), on-prem inference, cloud inference platforms.

- Tools/workflows: Implement online softmax across block streams; add block-level caches; integrate with vLLM/TensorRT-LLM style serving.

- Assumptions/dependencies: Engine support for fused reductions/online softmax; profiling to ensure latency targets.

- Stable training of deeper models (model engineering)

- Use case: Mitigate PreNorm dilution and improve gradient distribution to stably train deeper networks or balance attention/MLP contributions across depth.

- Sectors: Foundation model R&D, research labs.

- Tools/workflows: Enable per-layer pseudo-query vectors; monitor per-layer output magnitudes and gradient norms.

- Assumptions/dependencies: Hyperparameter tuning (learning rates, block sizes); monitoring to verify bounded magnitudes.

- Model diagnostics and interpretability via depth-wise attention weights (academia/ML ops)

- Use case: Log and visualize α-weights over depth to identify under/over-utilized layers, guide pruning/architecture edits, or audit representation flow.

- Sectors: Academia, applied ML teams.

- Tools/workflows: Add hooks to record α across batches/tasks; link to layer-wise ablations.

- Assumptions/dependencies: Caution interpreting α as causal attributions; requires task-specific analysis.

- Cost and energy/carbon savings for model training (policy/ESG; operations)

- Use case: Achieve target quality with fewer training FLOPs/energy, contributing to sustainability targets and cost control.

- Sectors: Cloud providers, enterprise AI, public-sector AI projects.

- Tools/workflows: Integrate carbon tracking (e.g., CodeCarbon) and report compute-equivalence improvements in model cards.

- Assumptions/dependencies: Savings depend on replication of reported efficiency (model size/data distribution); hardware interconnects can affect realized gains.

- Product-level quality improvements without major serving cost increase (industry verticals)

- Use case: Upgrade foundation models for assistants, coding copilots, domain LLMs (e.g., clinical, legal, finance) to improve downstream benchmarks with minimal latency impact.

- Sectors: Consumer AI, developer tools, healthcare, finance, education.

- Tools/workflows: Retrain or swap-in AttnRes-based base models; run standard evals (instruction following, code, QA) to validate quality gains.

- Assumptions/dependencies: Requires (re)training access to base models or vendor support; domain-specific validation needed.
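The depth-weight logging suggested above can start from a few summary statistics per layer. A minimal sketch, assuming the α for one layer is available as a list of non-negative weights summing to 1, ordered from the earliest source to the most recent; `depth_attention_summary` and its output keys are hypothetical names, and normalized entropy is our choice of metric for spotting overly peaky (near 0) or overly uniform (near 1) depth attention.

```python
import math


def depth_attention_summary(alpha):
    # Shannon entropy of the depth weights (zero weights contribute nothing).
    entropy = -sum(a * math.log(a) for a in alpha if a > 0)
    # Divide by log(n) so 1.0 means perfectly uniform and 0.0 means one source.
    normalized = entropy / math.log(len(alpha)) if len(alpha) > 1 else 0.0
    top_source = max(range(len(alpha)), key=lambda i: alpha[i])
    return {
        "normalized_entropy": normalized,
        "top_source": top_source,    # index of the most-attended source
        "recent_weight": alpha[-1],  # weight on the most recent source
    }


uniform = depth_attention_summary([0.25, 0.25, 0.25, 0.25])
peaky = depth_attention_summary([0.97, 0.01, 0.01, 0.01])
```

Here `uniform` has a normalized entropy of 1.0 (up to rounding), while `peaky` scores about 0.12 with source 0 on top. Tracking these per layer across batches and tasks is one way to surface the collapse patterns listed under Knowledge Gaps; as noted above, the weights themselves are not causal attributions.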

Long-Term Applications

These require further research, scaling, or ecosystem development before broad deployment.

- Parameter-efficient domain adaptation via pseudo-queries (PEFT)

- Use case: Freeze base model weights and learn only per-layer pseudo-queries w_l (and optionally norms) for new domains or tasks.

- Sectors: Enterprise fine-tuning, on-prem deployments.

- Tools/workflows: Extend PEFT libraries to include “AttnRes-PEFT” heads; evaluate footprint vs. LoRA/adapters.

- Assumptions/dependencies: Needs empirical validation that updating only w_l delivers strong adaptation; possible task-dependent limits.

- Dynamic depth and conditional execution (edge/mobile; efficient inference)

- Use case: Use α-weights to skip low-weight blocks at inference for adaptive compute, trading accuracy for latency/energy on the fly.

- Sectors: Edge AI, mobile assistants, embedded systems.

- Tools/workflows: Train with reinforcement or sparsity regularizers on α; implement runtime block-skipping policies.

- Assumptions/dependencies: Requires training-time incentives for stable sparsity; careful calibration to avoid quality regressions.

- Cross-architecture generalization (vision, speech, diffusion, robotics)

- Use case: Apply AttnRes to depth-wise aggregation in ViTs, audio Transformers, and U-Nets (diffusion) to improve training dynamics and sample quality.

- Sectors: Healthcare imaging, autonomous systems, creative tools.

- Tools/workflows: Replace residual stacks with AttnRes/Block AttnRes; assess effects on convergence and metrics (e.g., FID, mAP).

- Assumptions/dependencies: Architectural differences may require modified norms/queries; extensive benchmarking needed.

- Ultra-deep transformer stacks enabled by mitigated dilution (frontier research)

- Use case: Train thousand-layer-scale models with stable gradients and controlled hidden magnitudes, potentially improving expressivity and modularity.

- Sectors: Foundation model research.

- Tools/workflows: Scale blocks hierarchically; co-tune learning rate/initialization with AttnRes.

- Assumptions/dependencies: Training stability and data scaling laws at extreme depths remain open research questions.

- Interpretability, safety, and governance via depth-weight auditing (policy/assurance)

- Use case: Use α-patterns to audit layer contributions for different behaviors, detect anomalous depth usage, and support compliance documentation.

- Sectors: Safety teams, regulators, third-party auditors.

- Tools/workflows: Develop α-based diagnostics dashboards; link to behavior probes and red-team findings.

- Assumptions/dependencies: Requires evidence that α correlates with semantically meaningful processing; avoids false assurance.

- Hardware and compiler co-design for AttnRes ops (semiconductors/systems)

- Use case: Fuse depth-attention kernels, optimize online softmax and block caching in compilers and accelerators to reduce memory traffic and latency.

- Sectors: Hardware vendors, systems software.

- Tools/workflows: Add AttnRes primitives to TVM/XLA/TensorRT; design on-chip buffers for block histories.

- Assumptions/dependencies: ROI depends on adoption scale; standards for operator definitions required.

- Standardized reporting of compute-equivalent gains and carbon intensity (policy/consortia)

- Use case: Encourage benchmarks and model cards to include “compute-equivalent” efficiency metrics (e.g., “matches baseline trained with 1.25× compute”) and energy impact.

- Sectors: Research consortia, standards bodies, public-sector AI programs.

- Tools/workflows: Templates and tools for reporting; shared evaluation suites.

- Assumptions/dependencies: Community consensus on metrics; reproducibility across datasets and scales.

- Continual and modular learning via block-level routing (academia/advanced R&D)

- Use case: Treat blocks as modules and adjust α across tasks for modular composition and transfer in continual learning settings.

- Sectors: Research labs, multi-tenant enterprise AI.

- Tools/workflows: Curriculum schedules that evolve α; replay/regularization to prevent interference.

- Assumptions/dependencies: Stability-plasticity trade-offs; mechanisms to prevent catastrophic forgetting.
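Part of the appeal of the pseudo-query PEFT idea listed above is how few parameters it touches. A back-of-the-envelope sketch, assuming one hidden-size pseudo-query per layer and comparing against LoRA on two square projections per layer; the layer count, hidden size, and rank are hypothetical, not the Kimi Linear configuration.

```python
def pseudo_query_params(num_layers, hidden_size):
    # One learned pseudo-query vector w_l of length hidden_size per layer.
    return num_layers * hidden_size


def lora_params(num_layers, hidden_size, rank, matrices_per_layer=2):
    # LoRA on a square hidden_size x hidden_size matrix adds A (rank x d)
    # and B (d x rank), i.e. 2 * rank * hidden_size parameters per matrix.
    return num_layers * matrices_per_layer * 2 * rank * hidden_size


layers, hidden, rank = 48, 2048, 8  # hypothetical sizes
pq = pseudo_query_params(layers, hidden)  # 98,304
lora = lora_params(layers, hidden, rank)  # 3,145,728
ratio = lora / pq                         # 32.0, i.e. 4 * rank
```

Under these assumptions the pseudo-queries are 4 × rank times smaller than the LoRA adapters; whether such a small budget adapts well is the open empirical question noted in that item.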

Notes on Feasibility and Dependencies

- AttnRes benefits depend on PreNorm-style Transformers and may vary with model size, data curriculum, and interconnect bandwidth.

- Block count N requires tuning; authors report N≈8 as a robust default balancing quality and overhead.

- System gains from cross-stage caching depend on pipeline parallel configurations (P, V) and available memory for cached histories.

- Inference gains assume engines can implement online softmax and manage block-level caches without regressions in throughput.

- Sector-specific performance improvements (healthcare, finance, education) must be validated on task-relevant datasets and comply with domain regulations.
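The dependence of cross-stage caching on (P, V) can be illustrated with a deliberately simplified count. The assumptions are ours, not the paper's cost model: an interleaved schedule with P physical stages and V virtual stages each, virtual stage k runs on device k mod P, each virtual stage completes exactly one block, and stage k needs every earlier block. Without caching, each boundary resends the whole history; with caching, a device returning for a later virtual stage already holds everything up to its previous visit and only needs the blocks produced since. `blocks_sent` is a hypothetical helper.

```python
def blocks_sent(num_physical, num_virtual, cached):
    # Count block representations transmitted for one microbatch's forward pass.
    total_stages = num_physical * num_virtual
    sent = 0
    for k in range(1, total_stages):  # stage k receives from stage k - 1
        if not cached or k < num_physical:
            # No cache, or first visit to this device: send blocks 0..k-1.
            sent += k
        else:
            # Device already holds blocks 0..k-P from its previous visit
            # (stage k-P), so only blocks k-P+1..k-1 are new.
            sent += num_physical - 1
    return sent


for p, v in [(4, 2), (4, 4)]:
    print(p, v, blocks_sent(p, v, cached=False), blocks_sent(p, v, cached=True))
```

In this toy model the cached count grows by P − 1 per boundary after the first round instead of with the full history (28 vs. 18 blocks for P=4, V=2; 120 vs. 42 for P=4, V=4), which is why realized savings depend on the pipeline configuration and on having memory for the cached histories.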

Glossary

- Ablation (study): An experiment that removes or alters components to assess their contribution to performance. "and ablations validate the benefit of content-dependent depth-wise selection."

- Activation recomputation: Recomputing intermediate activations during training to reduce memory, at the cost of extra compute. "activation recomputation and pipeline parallelism are routinely employed"

- Attention Residuals (AttnRes): A residual mechanism that replaces uniform additive accumulation with attention over prior layers using learned, input-dependent weights. "We propose Attention Residuals (AttnRes)"

- Block Attention Residuals (Block AttnRes): A scalable AttnRes variant that aggregates within blocks and attends across block summaries to reduce memory and communication. "We propose Block Attention Residuals, which partitions the layers into blocks"

- Cross-stage caching: A training optimization that caches transmitted representations across pipeline stages to avoid redundant communication. "We address these challenges with cross-stage caching in training"

- Depth-wise linear attention: The perspective that standard residual accumulation implements linear attention across layers (depth). "standard residuals and prior recurrence-based variants correspond to depth-wise linear attention"

- Depth-wise softmax attention: Using softmax-normalized, content-dependent weights to aggregate across layers (depth). "AttnRes performs depth-wise attention."

- Duality between time and depth: The analogy linking sequence recurrence (time) with layer accumulation (depth), motivating attention over depth. "We observe a formal duality between depth-wise accumulation and the sequential recurrence in RNNs."

- Gradient highway: The identity path in residual networks that allows gradients to flow directly and stably through deep layers. "is widely understood as a gradient highway"

- Highway networks: Neural architectures that use learned gates to interpolate between transformed output and identity mapping. "Highway networks [srivastava2015highway] relax this by introducing learned element-wise gates: [...]"

- Inter-Block Attention: Attention applied across block-level representations rather than individual layers to reduce overhead. "Inter-block attention: attend over block reps + partial sum."

- Interleaved pipeline schedule: A parallel training schedule that splits each device’s work into multiple virtual pipeline segments for higher utilization. "Consider an interleaved pipeline schedule [narayanan2021megatron]"

- Intra-Block Accumulation: Summing outputs within a block to form a single block representation for subsequent attention. "Intra-Block Accumulation."

- Jacobian: The matrix of partial derivatives of a layer’s transformation with respect to its input, used in analyzing gradient flow. "the layer Jacobians [...]."

- Kimi Linear architecture: A specific LLM architecture used for evaluation and scaling studies. "We further integrate AttnRes into the Kimi Linear architecture [zhang2025kimi] (48B total / 3B activated parameters)"

- KV cache: The cached keys and values used to speed up Transformer inference across tokens. "and the fixed block count bounds the KV cache size."

- Multi-stream recurrences: Recurrent architectures with multiple interacting streams intended to improve information flow. "multi-stream recurrences [zhu2025hyperconnections] remain bound to the additive recurrence"

- Online softmax: A numerically stable streaming algorithm to compute softmax across chunks without storing all inputs. "via online softmax [milakov2018online]"

- Physical stage: A hardware or device-level partition in pipeline parallelism that owns one or more groups of layers (one per virtual stage in an interleaved schedule). "with P physical stages and V virtual stages per physical stage."

- Pipeline parallelism: Distributing a model’s layers across devices to process microbatches in a pipeline for throughput. "under pipeline parallelism each must further be transmitted across stage boundaries."

- Prefilling: The long-context phase that prepares caches (e.g., KV cache) before token-by-token decoding in inference. "while long-context prefilling amplifies the memory cost of caching block representations."

- PreNorm: Applying normalization (e.g., LayerNorm/RMSNorm) before each sublayer in a Transformer block. "AttnRes mitigates PreNorm dilution"

- Pseudo-query: A learned per-layer vector that queries prior representations to compute attention weights over depth. "a single learned pseudo-query per layer."

- Recurrent neural networks (RNNs): Sequence models that process inputs via recurrence, contrasted here with attention mechanisms. "recurrent neural networks (RNNs)"

- RMSNorm: Root Mean Square Normalization, a normalization technique that divides activations by their root mean square. "The RMSNorm inside [the kernel] prevents layers with large-magnitude outputs from dominating the attention weights."

- Scaled residual paths: Modifying residual connections by scaling factors to stabilize or improve deep network training. "scaled residual paths [wang2022deepnorm]"

- Scaling law experiments: Studies that measure model performance as a function of compute, data, and model size. "Scaling law experiments confirm that AttnRes consistently outperforms the baseline across compute budgets"

- Structured-matrix analysis: An analytical framework that leverages matrix structure to relate residual variants to forms of attention. "Through a unified structured-matrix analysis, we show that standard residuals and prior recurrence-based variants correspond to depth-wise linear attention"

- Two-phase inference strategy: An inference approach that splits computation to amortize inter-block attention costs efficiently. "and a two-phase inference strategy that amortizes cross-block attention via online softmax"

- Virtual stage: A logical subdivision of work within a physical pipeline stage used in interleaved scheduling. "with P physical stages and V virtual stages per physical stage."
