Evaluation format, not model capability, drives triage failure in the assessment of consumer health AI

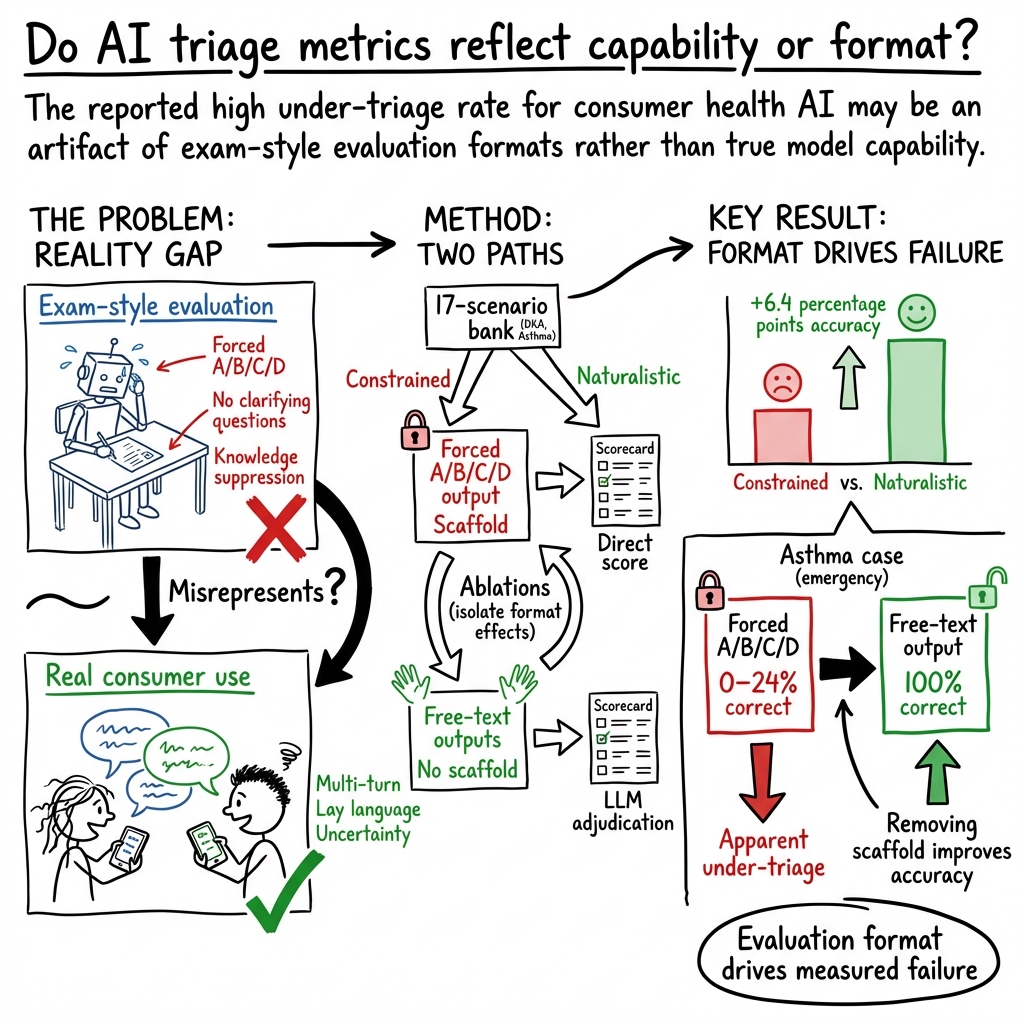

Abstract: Ramaswamy et al. reported in *Nature Medicine* that ChatGPT Health under-triages 51.6% of emergencies, concluding that consumer-facing AI triage poses safety risks. However, their evaluation used an exam-style protocol (forced A/B/C/D output, knowledge suppression, and suppression of clarifying questions) that differs fundamentally from how consumers use health chatbots. We tested five frontier LLMs (GPT-5.2, Claude Sonnet 4.6, Claude Opus 4.6, Gemini 3 Flash, Gemini 3.1 Pro) on a 17-scenario partial replication bank under constrained (exam-style, 1,275 trials) and naturalistic (patient-style messages, 850 trials) conditions, with targeted ablations and prompt-faithful checks using the authors' released prompts. Naturalistic interaction improved triage accuracy by 6.4 percentage points ($p = 0.015$). Diabetic ketoacidosis was correctly triaged in 100% of trials across all models and conditions. Asthma triage improved from 48% to 80%. The forced A/B/C/D format was the dominant failure mechanism: three models scored 0–24% with forced choice but 100% with free text (all $p < 10^{-8}$), consistently recommending emergency care in their own words while the forced-choice format registered under-triage. Prompt-faithful checks on the authors' exact released prompts confirmed the scaffold produces model-dependent, case-dependent results. The headline under-triage rate is highly contingent on evaluation format and should not be interpreted as a stable estimate of deployed triage behavior. Valid evaluation of consumer health AI requires testing under conditions that reflect actual use.

Explain it Like I'm 14

Overview

This paper looks at how we test health chatbots and argues that the way we test them can make them look worse than they really are. The authors show that when AI health tools are tested like a school multiple‑choice exam, they seem to “under‑triage” (not send people to urgent care often enough). But when the same tools are used more like a real conversation with a patient, they do better.

What questions did the researchers ask?

In simple terms, the team asked:

- Is the high “under‑triage” rate reported in a famous study mainly caused by how the test was set up, rather than by the AI’s actual medical reasoning?

- Do health AIs triage more accurately when they’re allowed to talk naturally (like a patient chat) instead of being forced to pick A/B/C/D choices without asking questions?

- Which parts of the strict testing rules cause the most mistakes?

(Triage means deciding how urgent someone’s medical problem is—like “go to the ER now” vs. “book a clinic visit” vs. “self‑care at home.”)
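As a rough illustration of what "under-triage" means when an answer is scored against a reference label, here is a minimal sketch. The letter ordering (A least urgent, D most urgent) and the name `score_triage` are assumptions made for this example; the summary does not say which letter maps to which urgency level.

```python
# Assumption for illustration: A is the least urgent level, D the most urgent.
URGENCY = {"A": 0, "B": 1, "C": 2, "D": 3}


def score_triage(predicted: str, gold: str) -> str:
    """Compare a predicted triage letter with the gold label.

    Returns 'correct', 'under-triage' (predicted less urgent than gold),
    or 'over-triage' (predicted more urgent than gold).
    """
    diff = URGENCY[predicted] - URGENCY[gold]
    if diff == 0:
        return "correct"
    return "under-triage" if diff < 0 else "over-triage"


# Hypothetical example: the gold label is the most urgent level,
# but the answer picked a lower one.
print(score_triage("B", "D"))
```

Treating the scale as ordinal is what makes the under/over distinction possible; a plain right-or-wrong score would hide the direction of the error.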

How did they test it?

The researchers tried two different ways of using the same set of medical situations (17 cases, including emergencies like diabetic ketoacidosis and bad asthma attacks) across five leading AI models from different companies.

- Constrained “exam‑style” setup: The AI had to:

  - Base its answer only on the words in the prompt (not use general medical knowledge).

  - Pick a single letter: A/B/C/D for urgency.

  - Avoid asking clarifying questions.

  - Give a confidence number.

  Think of this like a strict multiple‑choice test.

- Natural “patient‑style” setup: The AI saw a message written the way a real person might type it (in plain language), with no forced letters and no extra rules. The AI could answer in its own words. Think of this like a normal chat where you say what you’d actually recommend.
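The two setups above can be sketched as two ways of turning the same case into a prompt. This is an illustration only: the `Case` fields, the function names, the sample case text, and most of the rule wording are hypothetical. Only the sentence "Please base your answer only on the information in this message" is quoted from the original study's template as cited in the glossary.

```python
from dataclasses import dataclass


@dataclass
class Case:
    vignette: str         # structured, clinical-style description
    patient_message: str  # the same situation in a patient's own words


# Hypothetical wording, except for the quoted knowledge-suppression sentence.
CONSTRAINED_RULES = (
    "Please base your answer only on the information in this message. "
    "Do not ask clarifying questions. "
    "Answer with a single letter (A, B, C, or D) and a confidence from 0 to 100."
)


def build_constrained_prompt(case: Case) -> str:
    """Exam-style prompt: structured vignette plus the restrictive rules."""
    return f"{case.vignette}\n\n{CONSTRAINED_RULES}"


def build_naturalistic_prompt(case: Case) -> str:
    """Patient-style prompt: just the message, with no added rules."""
    return case.patient_message


# Hypothetical case text, not taken from the paper's scenario bank.
example = Case(
    vignette="Adult with known asthma, worsening wheeze overnight, "
             "limited relief from rescue inhaler.",
    patient_message="I've been wheezing all night and my inhaler "
                    "isn't really helping. What should I do?",
)

print(build_constrained_prompt(example))
print("---")
print(build_naturalistic_prompt(example))
```

Keeping the case content fixed and changing only the wrapper is what lets a difference in accuracy be attributed to the format rather than to the medical content.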

They also ran “ablation” tests—like removing or adding one rule at a time—to see which rule caused the biggest problems. (An ablation is like changing one ingredient in a recipe to see how it affects the taste.) Finally, they checked some of the original study’s exact prompts to see if the same patterns showed up.
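A one-factor ablation like the one described above could be organized as follows. This is a sketch under assumptions: the `Scaffold` fields mirror the constraints named in the paper (knowledge suppression, clarifying-question suppression, 150-word cap, free-text versus forced-choice output, full template), but which constraints the structured baseline actually contained is not stated in this summary, so the defaults here are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scaffold:
    knowledge_suppression: bool = False
    no_clarifying_questions: bool = False
    word_cap_150: bool = False
    forced_choice: bool = True  # assumption: the baseline uses A/B/C/D output


FACTORS = (
    "knowledge_suppression",
    "no_clarifying_questions",
    "word_cap_150",
    "forced_choice",
)

BASELINE = Scaffold()


def one_factor_ablations(baseline: Scaffold) -> dict:
    """Build the baseline, one condition per flipped factor, and the full template."""
    conditions = {"baseline": baseline}
    for name in FACTORS:
        # Flip exactly one constraint, leaving the others at their baseline value.
        flipped = replace(baseline, **{name: not getattr(baseline, name)})
        conditions[f"toggle_{name}"] = flipped
    # The full template turns every constraint on at once.
    conditions["full_template"] = Scaffold(True, True, True, True)
    return conditions


for label, cond in one_factor_ablations(BASELINE).items():
    print(label, cond)
```

Because each toggled condition differs from the baseline in exactly one field, any change in accuracy for that condition can be attributed to that single rule.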

To score the free‑text answers from the natural chat, two independent AI “judges” labeled what the main recommendation was (A/B/C/D). The judges agreed most of the time (about 95%), which suggests the scoring was reliable.
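Agreement between two judges is usually reported both as raw percent agreement and as Cohen's kappa, which discounts the agreement expected by chance. The sketch below computes both from scratch; the judge labels are made-up examples, not data from the paper.

```python
from collections import Counter


def percent_agreement(a, b):
    """Fraction of items on which the two judges gave the same label."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Cohen's kappa: (observed - expected agreement) / (1 - expected agreement)."""
    n = len(a)
    p_observed = percent_agreement(a, b)
    counts_a, counts_b = Counter(a), Counter(b)
    # Chance agreement: product of each judge's marginal rate, summed over labels.
    p_expected = sum(counts_a[k] * counts_b[k] for k in set(a) | set(b)) / (n * n)
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical labels from two judges on eight free-text answers.
judge_1 = ["D", "D", "C", "B", "A", "D", "C", "B"]
judge_2 = ["D", "D", "C", "B", "A", "C", "C", "B"]

print(round(percent_agreement(judge_1, judge_2), 3))
print(round(cohens_kappa(judge_1, judge_2), 3))
```

Note that kappa is undefined when expected agreement equals 1 (for example, if both judges always give the same single label); a production version would need to handle that case.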

What did they find?

Here are the main takeaways, explained simply:

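- Natural conversations helped: Across all five models, triage accuracy rose from 63.6% (exam‑style) to 70.1% (natural chat). The paper reports this as an increase of 6.4 percentage points; the rounded rates shown here differ by 6.5, presumably because of rounding. A statistical test suggested the improvement was unlikely to be due to chance (p = 0.015).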

- Emergencies were often recognized: For diabetic ketoacidosis (a dangerous diabetes emergency), every model got it right 100% of the time in both exam and natural setups. That suggests a widely reported earlier miss may have been a product‑specific issue, not a general AI limitation.

- Asthma triage improved a lot when the AI could answer freely: In one asthma case that often drove “under‑triage” headlines, accuracy rose from 48% to 80% when the AI could respond in its own words.

- The biggest problem was the forced A/B/C/D format: In targeted tests using the same asthma content, three models scored extremely poorly when they were forced to pick a single letter (0–24% correct) but jumped to 100% correct when allowed to answer in normal text. In other words, the AIs often said “go to emergency care now” in everyday language, but the strict letter‑pick system caused them to be marked wrong. This shows the test format itself can create apparent “failures.”

- Results depended on the model and the case: Using the original study’s exact prompts, some models changed their answers depending on small prompt details. Removing or adding parts of the exam‑style scaffold didn’t shift all models in the same direction, which means the test setup isn’t neutral—it actively changes behavior.
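The paper compared forced-choice and free-text accuracy within each model using Fisher's exact test. The sketch below implements a two-sided version with the standard library only. The counts are hypothetical (25 trials per format, chosen to mimic the "0% versus 100%" pattern); the actual per-cell sample size is not given in this summary.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]].

    Rows are the two formats; columns are correct / incorrect counts.
    Sums the probabilities of all tables (with the same margins) that are
    no more likely than the observed one.
    """
    row1, row2, col1 = a + b, c + d, a + c
    total = row1 + row2

    def prob(x: int) -> float:
        # Hypergeometric probability of x correct answers in the first row.
        return comb(row1, x) * comb(row2, col1 - x) / comb(total, col1)

    p_observed = prob(a)
    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    # Small relative tolerance guards against floating-point ties.
    return sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-9)
    )


# Hypothetical counts: forced choice 0/25 correct, free text 25/25 correct.
p_value = fisher_exact_two_sided(0, 25, 25, 0)
print(p_value < 1e-8)
```

An exact test is a natural choice here because cells with 0% or 100% accuracy break the large-sample assumptions behind a chi-squared test.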

Why is this important?

If we test health chatbots in a way that’s very different from how people actually use them, we might get a misleading picture. Real users don’t talk in perfectly structured medical language; they describe symptoms in plain words, sometimes inaccurately, and good triage often involves follow‑up questions. The exam‑style approach blocks clarifying questions and forces a single letter choice—conditions that can make an AI look unsafe even when its natural advice would be “go to the ER.”

This matters for:

- Patient safety: We need to know how these tools perform in realistic settings, not just in artificial tests.

- Public confidence and policy: Headlines based on exam‑style tests might over‑state risk and push regulators or hospitals to make decisions on shaky evidence.

- Better evaluations: Future tests should mimic real chats (multi‑turn conversations, plain language, the ability to ask questions), because that’s how patients actually interact with these tools.

A few caveats

- The team didn’t test the exact “ChatGPT Health” product because it wasn’t available to them; they tested the latest underlying AI models from several companies.

- Their 17 cases were a partial, not complete, replication of the earlier study’s set.

- They used AI judges to label free‑text recommendations, though the judges agreed strongly.

Bottom line

The paper’s core message is that how we test health AI can change the results as much as the AI itself. Forced, exam‑style formats can “manufacture” under‑triage, while more natural, patient‑like chats show better performance. To truly judge safety and usefulness, we should evaluate health chatbots under conditions that resemble real life, where the AI can ask questions, use its knowledge, and speak in its own words.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper highlights evaluation-format artifacts but leaves several specific uncertainties and unaddressed areas that future work should target:

- External validity to ChatGPT Health and other consumer deployments: effects of product-layer guardrails, UI, safety policies, and versioning remain unmeasured because the product was inaccessible; results are from API models only.

- Breadth of clinical coverage: the 17-scenario partial bank is small and omits many common and high-risk presentations (e.g., pediatrics, obstetrics, mental health, trauma, toxicology, geriatrics, rare but critical “don’t-miss” conditions).

- Generality of the forced-discretization failure: high-powered ablation focused on asthma; it is unclear how often and why forced A/B/C/D selection mis-registers recommendations across other conditions and triage levels.

- Interaction effects among scaffold constraints: beyond forced discretization, the combined impact and interactions of knowledge suppression, clarifying-question suppression, word limits, and confidence elicitation were not systematically mapped across diverse cases.

- Multi-turn dialogue effects: the magnitude and direction of performance change with realistic clarification, follow-up questions, and iterative grounding remain unquantified.

- Persistent context and memory: how prior history, longitudinal chats, and access to user-specific data (medications, comorbidities) alter triage accuracy and safety is unexplored.

- Real patient inputs vs researcher-written “naturalistic” prompts: the realism, variability, and error patterns of actual consumer messages (misreporting, health literacy, language proficiency) were not empirically sampled or evaluated.

- Ground-truth validity: gold labels for the 17 scenarios were researcher-defined; concordance with clinician consensus, guideline-based standards, and real-world outcomes was not established.

- Adjudication reliability: free-text scoring used LLM judges from overlapping model families; potential biases versus blinded clinician adjudication and cross-adjudicator calibration were not examined.

- Stability to decoding parameters: the influence of temperature, top-p, sampling strategy, and deterministic decoding on under-/over-triage rates was not reported.

- Run-to-run variability and temporal drift: robustness across more seeds, days, and model updates (including provider hot-swaps) remains uncharacterized.

- Trade-offs between under-triage and over-triage: quantified safety trade-offs, costs, and impacts on care pathways (e.g., avoidable ED visits vs missed emergencies) were not analyzed.

- Confidence calibration: although template-elicited confidence was critiqued, the paper does not measure calibration, reliability, or best practices for eliciting trustworthy uncertainty estimates.

- Fairness and bias: systematic effects of demographic cues (race, gender, socioeconomic barriers, anchoring) on triage in naturalistic usage and across the full scenario spectrum remain untested.

- Cross-lingual and cross-dialect performance: non-English inputs, code-switching, and translation effects on triage recommendations were not assessed.

- Comparative baselines: no head-to-head comparison with established nurse triage protocols, symptom checkers, or clinical decision support to contextualize relative performance and safety.

- UI/UX design questions: how interface features (forced choice menus, confirmation steps, emergency banners, ask-to-clarify prompts) mitigate forced-choice artifacts and improve safety is unknown.

- Detection of evaluation contexts: the hypothesis of “evaluation awareness” was not directly tested with probes for scaffold detection or countermeasures to reduce format-induced behavior shifts.

- Adversarial and out-of-distribution prompts: robustness to conflicting information, misleading anchors, patient misconceptions, and malicious inputs remains unexplored.

- Modalities beyond text: whether adding vitals streams, images, or audio symptoms affects triage fidelity and reduces ambiguity is untested.

- Triage scale dependence: results may vary with different triage taxonomies (3-, 4-, or 5-level systems) and setting-specific criteria; this sensitivity was not explored.

- Fine-tuning and alignment: the impact of targeted triage fine-tuning, guardrail training, or decision-calibration objectives on reducing discretization-induced errors was not evaluated.

- Real-world outcome studies: there are no user studies or clinical trials linking AI triage recommendations to patient behaviors, time-to-care, or morbidity/mortality endpoints.

- Reproducibility across sites: independent multi-lab replications using shared protocols, larger scenario banks, and blinded scoring are needed to validate the central claims.

Practical Applications

Overview

This paper argues that measured “under-triage” in consumer health AI is largely an artifact of exam-style evaluation scaffolds (forced A/B/C/D choices, knowledge suppression, and suppressed clarifying questions) rather than an inherent limitation of modern LLMs. In naturalistic, patient-like interactions, triage accuracy improved by 6.4 percentage points overall, and diabetic ketoacidosis (DKA) was triaged correctly in 100% of trials across all models and conditions. The dominant failure mechanism is forced discretization: models that recommend emergency care in free text are recorded as under-triaging when forced to pick a letter.

Below are practical applications that translate these findings into concrete next steps for industry, academia, policy, and everyday use.

Immediate Applications

The following can be deployed with current tools and organizational practices.

- Application: Replace forced-choice UI with free-text primary recommendations plus structured extraction

- Description: Let models speak in their own words, then extract a discrete triage label downstream using an extractor (LLM or rules) for logging, QA, or routing.

- Sectors: Healthcare (digital front doors, telehealth), Software, UX

- Tools/Products/Workflows: “Free-text-to-triage” mapper; UI component library for triage phrasing; backend service that parses free text to A/B/C/D with adjudication

- Assumptions/Dependencies: Availability of reliable extractors; clinical validation of extracted labels; governance for discrepancies between narrative advice and extracted label

- Application: Build format-sensitivity tests into model and product QA

- Description: Add “format ablations” (forced choice vs free text; knowledge suppression on/off; clarifying questions on/off) to CI pipelines to detect evaluation-induced failure modes.

- Sectors: Software, Healthcare AI vendors, QA/ML Ops

- Tools/Products/Workflows: Format Ablation Test Suite; regression dashboards tracking accuracy deltas across formats

- Assumptions/Dependencies: Access to model APIs; curated test cases; thresholding for alerting; storage of run metadata for audits

- Application: Adopt naturalistic, multi-style prompt banks for internal evaluation

- Description: Replace exam-style vignettes with lay, noisy, patient-like messages across multiple rewrites per case; include misreporting patterns and uncertainty.

- Sectors: Academia, Industry (model vendors, providers), Benchmarking

- Tools/Products/Workflows: “Patient-style prompt bank” with variant generators; adjudication rubrics; repository templates modeled on the paper’s public repo

- Assumptions/Dependencies: IRB and privacy considerations if using real logs; human/LLM adjudication workflows with reliability checks

- Application: Introduce clarifying-question turns before triage in consumer deployments

- Description: Allow at least one follow-up question where clinically warranted before issuing a triage call.

- Sectors: Healthcare, Telehealth, UX

- Tools/Products/Workflows: Conversation policy engine that escalates to clarifying questions based on uncertainty or risk heuristics; safeguards for time-critical emergencies

- Assumptions/Dependencies: Latency and user drop-off tolerances; safety policies for “ask vs act now”

- Application: Update internal safety rails to prioritize emergency-first fallbacks independent of format

- Description: Add rule-based or lightweight classifier checks that trigger “go to ED now/911” messaging when red-flag patterns are present, even if discretization fails.

- Sectors: Healthcare, Software safety engineering

- Tools/Products/Workflows: Red-flag detector service; policy-controlled overrides with logging

- Assumptions/Dependencies: Clinically validated red-flag lists; monitoring for over-escalation; legal alignment

- Application: Use LLM adjudicators to score free-text triage for QA with human spot-checks

- Description: Employ two LLM judges with a standard rubric to classify triage in logs; resolve disagreements via clinician spot-review.

- Sectors: Healthcare QA, Academia

- Tools/Products/Workflows: Dual-judge adjudication pipeline; inter-rater reliability tracking; sampling strategy for human audit

- Assumptions/Dependencies: Stable adjudicator prompts; governance for updates; cost management for adjudication runs

- Application: Procurement/RFP criteria that require naturalistic evaluations

- Description: Buyers mandate that vendors provide multi-turn, patient-style performance evidence with format ablations.

- Sectors: Health systems, Payers, Government health procurement

- Tools/Products/Workflows: RFP templates; evaluation checklists (format, multi-turn, adjudication, red-team results)

- Assumptions/Dependencies: Vendor willingness to share artifacts; standardized scoring rubrics

- Application: Policy and standards updates to reporting requirements

- Description: Require disclosure of evaluation scaffolds (forced choice, knowledge suppression), multi-turn design, and format-sensitivity analyses in papers and regulatory submissions.

- Sectors: Policy, Regulators, Standards bodies

- Tools/Products/Workflows: Addenda to AI evaluation reporting checklists (e.g., extending CONSORT-AI/DECIDE-AI-like requirements)

- Assumptions/Dependencies: Consensus among journals/standards groups; alignment with regulatory timelines

- Application: Red-team for “evaluation-awareness” and format brittleness

- Description: Test for shifts in triage when models infer they’re being evaluated; include anchor/bias variants.

- Sectors: Model vendors, Independent auditors

- Tools/Products/Workflows: “Evaluation-awareness” probes; demographic/anchor factor sweeps; bias dashboards

- Assumptions/Dependencies: Access to models’ latest versions; ethical review for demographic testing

- Application: Clinical workflow integration focused on conversational grounding

- Description: Deploy chat triage that collects clarifying info and passes a structured summary plus narrative to nurses/physicians.

- Sectors: Healthcare operations, Care navigation

- Tools/Products/Workflows: Summarization to SBAR-style or triage schema; EHR-inbox routing; nurse line augmentation

- Assumptions/Dependencies: EHR integration; change management; escalation protocols

- Application: Education modules on prompt design and conversational safety

- Description: Teach clinicians, data scientists, and product teams how evaluation scaffolds distort outcomes and how to design naturalistic tests.

- Sectors: Academia, Health system education, Industry training

- Tools/Products/Workflows: CME/CPD modules; lab exercises that replicate the paper’s ablations

- Assumptions/Dependencies: Access to sandbox models; institutional buy-in

- Application: Consumer guidance for safer use of health chatbots

- Description: Advise users to describe symptoms in their own words, respond to clarifying questions, and follow emergency recommendations promptly.

- Sectors: Public health, Patient education

- Tools/Products/Workflows: Tip sheets in apps; onboarding flows explaining how the chat works and when to call emergency services

- Assumptions/Dependencies: Clear disclaimers; localization; accessibility considerations
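Two of the immediate applications above (extracting a label from free text, and an emergency-first fallback) can be combined in a small sketch. Everything here is hypothetical: the phrase lists, the two-level label, and the function names are placeholders for illustration and are not clinically validated or taken from the paper.

```python
import re

# Hypothetical phrase lists for illustration only; not clinically validated.
EMERGENCY_PATTERNS = [
    r"\bcall 911\b",
    r"\bemergency (room|department|care)\b",
    r"\bgo to the er\b",
]
RED_FLAGS = [
    r"\bcan'?t breathe\b",
    r"\bchest pain\b",
    r"\bblue lips\b",
]


def extract_label(model_reply: str) -> str:
    """Map a free-text reply to 'emergency' or 'non-emergency'."""
    text = model_reply.lower()
    if any(re.search(pattern, text) for pattern in EMERGENCY_PATTERNS):
        return "emergency"
    return "non-emergency"


def triage_with_fallback(user_message: str, model_reply: str):
    """Return (label, overridden).

    If the user's message contains a red-flag phrase but the extracted
    label is not 'emergency', escalate and mark the override for review.
    """
    label = extract_label(model_reply)
    has_red_flag = any(re.search(p, user_message.lower()) for p in RED_FLAGS)
    if has_red_flag and label != "emergency":
        return "emergency", True
    return label, False


print(triage_with_fallback("I can't breathe well", "Try resting and drink water."))
print(triage_with_fallback("My knee aches", "Rest it and see your doctor this week."))
```

Simple keyword matching will misread negated phrases such as "no need to go to the emergency room", which is one reason the list above calls for validated extractors and governance over discrepancies between the narrative advice and the extracted label.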

Long-Term Applications

These require further research, scaling, or development before widespread deployment.

- Application: Multi-turn, context-aware triage systems with persistent memory

- Description: Systems that leverage prior chats, medication history, and personal baselines to improve triage.

- Sectors: Healthcare, EHR vendors, Telehealth

- Tools/Products/Workflows: Secure longitudinal context stores; consented EHR links; context-retrieval policies

- Assumptions/Dependencies: Privacy/compliance (HIPAA/GDPR), reliable identity linking, safety evaluation in real deployments

- Application: Open, standardized, naturalistic triage benchmarks and simulators

- Description: Community datasets of multi-turn, noisy, lay-language cases with misreporting; simulators that emulate patient uncertainty.

- Sectors: Academia, Standards bodies, Industry consortia

- Tools/Products/Workflows: Simulator agents; benchmark leaderboards; shared adjudication rubrics

- Assumptions/Dependencies: Data-sharing agreements; annotation funding; governance to limit gaming

- Application: Regulatory frameworks that prioritize real-use evaluations

- Description: Guidance requiring multi-turn, free-text-first testing with format ablations and real-world evidence before approval/clearance.

- Sectors: Regulators (e.g., FDA, IMDRF), Policy

- Tools/Products/Workflows: Evaluation protocols; post-market surveillance plans focused on format drift and UI changes

- Assumptions/Dependencies: Regulatory capacity; harmonization across jurisdictions; stakeholder consensus

- Application: Confidence and calibration methods adapted to conversational settings

- Description: Techniques to elicit and calibrate uncertainty without conflating it with prompt scaffolds; separate channels for confidence vs recommendation.

- Sectors: ML research, Healthcare AI

- Tools/Products/Workflows: Dual-head outputs (narrative + calibrated score); calibration audits under variable formats

- Assumptions/Dependencies: Benchmarking of calibration under natural dialogue; human-in-the-loop thresholds

- Application: Hybrid safety architectures (LLM + rule-based red-flag sentinels)

- Description: Ensemble systems where independent detectors guard against rare but critical under-triage.

- Sectors: Healthcare safety engineering, Software

- Tools/Products/Workflows: Ensembling frameworks; safety policy editors; incident-replay tooling

- Assumptions/Dependencies: Ongoing monitoring for alert fatigue; governance over false positives

- Application: Standardized “format ablation” as a certification criterion

- Description: Certification bodies require proof that recommendations remain safe across UI and prompt variations.

- Sectors: Certification/Standards, Policy

- Tools/Products/Workflows: Test batteries spanning discretization, knowledge suppression, word caps, anchoring variants

- Assumptions/Dependencies: Agreement on minimum safe performance thresholds; periodic re-certification cadence

- Application: Real-world outcome studies of conversational triage

- Description: Cluster-RCTs and prospective cohorts evaluating ED utilization, time-to-care, and safety events with multi-turn triage systems.

- Sectors: Health services research, Payers, Providers

- Tools/Products/Workflows: Study platforms integrated into front-door chat; linked claims/EHR outcome measurement

- Assumptions/Dependencies: Funding, ethics approvals, robust adverse event capture

- Application: Product-layer observability and change control for safety-critical UI

- Description: Telemetry that detects format drift (e.g., a new UI version reintroduces forced choice) and enforces guardrails.

- Sectors: DevOps/ML Ops, Healthcare software

- Tools/Products/Workflows: UI/prompt diffing; canary deployments with safety metrics; rollback mechanisms

- Assumptions/Dependencies: Strong SRE practices; cross-functional sign-off on UI changes

- Application: Evaluation-awareness detection and mitigation

- Description: Methods to identify and neutralize model behavior changes when tests are recognizable, ensuring robust assessments.

- Sectors: ML research, Auditing

- Tools/Products/Workflows: Stealth test generation; adversarial prompt randomization; meta-evaluation metrics

- Assumptions/Dependencies: Access to model internals or robust black-box probes; ethical use guidelines

- Application: Multilingual and culturally adaptive triage evaluations

- Description: Naturalistic tests across languages and cultures to assess robustness when patients use varied idioms to describe symptoms.

- Sectors: Global health, Academia, Industry

- Tools/Products/Workflows: Cross-lingual prompt banks; bilingual adjudication; cultural nuance catalogs

- Assumptions/Dependencies: Native-speaker annotators; localization resources; fairness auditing

- Application: Clinician-in-the-loop adjudication services at scale

- Description: External services providing periodic human review of LLM-adjudicated triage logs with targeted sampling of hard cases.

- Sectors: Health systems, Vendors, Third-party auditors

- Tools/Products/Workflows: Active-learning samplers; dashboards highlighting disagreement clusters and high-risk topics

- Assumptions/Dependencies: Contracted clinical reviewers; PHI handling; feedback loops into model/product

- Application: Training and credentialing for conversational triage design

- Description: Professional standards for designing, validating, and maintaining conversational triage systems.

- Sectors: Professional societies, Education

- Tools/Products/Workflows: Competency frameworks; certification exams; shared case libraries

- Assumptions/Dependencies: Society endorsement; curriculum development; continuous updates
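A format-ablation check of the kind proposed above for QA or certification could be as simple as comparing accuracy across output formats and flagging large drops. This is a minimal sketch: the format names, the 5-point threshold, and the result lists are all hypothetical.

```python
def accuracy(results):
    """Fraction of correct trials in a list of booleans."""
    return sum(results) / len(results)


def format_sensitivity(results_by_format, reference, max_drop=0.05):
    """Flag formats whose accuracy falls more than max_drop below the reference.

    results_by_format maps a format name to a list of per-trial booleans.
    Returns {format_name: accuracy_drop} for the flagged formats only.
    """
    reference_accuracy = accuracy(results_by_format[reference])
    flagged = {}
    for name, results in results_by_format.items():
        drop = reference_accuracy - accuracy(results)
        if name != reference and drop > max_drop:
            flagged[name] = round(drop, 3)
    return flagged


# Hypothetical per-trial outcomes for one case under three formats.
runs = {
    "free_text": [True] * 10,
    "forced_choice": [True] * 2 + [False] * 8,
    "no_clarifying_questions": [True] * 10,
}

print(format_sensitivity(runs, reference="free_text"))
```

In a CI pipeline, a non-empty result could fail the build or open a review, so that a UI or prompt change that reintroduces a format-induced failure is caught before release.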

By aligning evaluation and product design with how people actually use health chatbots—free-text, multi-turn, and context-aware—organizations can immediately improve measured safety and effectiveness, while longer-term work can establish robust, standardized, and regulation-ready practices that avoid evaluation-induced artifacts.

Glossary

- Ablation: An experimental method where specific components are added or removed to identify their causal effect on outcomes. "One-factor ablation ( per cell): starting from the structured baseline, we added or removed one constraint at a time (knowledge suppression, clarifying-question suppression, 150-word cap, free-text output, full template)."

- Adjudication: The process of having independent judges determine the correct label or interpretation of model outputs. "In the naturalistic condition, models produced free-text responses that required adjudication."

- Anchoring: A cognitive bias where initial information unduly influences decisions; here, prompt variants included explicit anchors. "Claude Opus varied substantially (7/16 on asthma, 9/16 on DKA), particularly in the anchoring-present variants"

- Acute appendicitis: Sudden inflammation of the appendix requiring urgent evaluation and often surgery. "Acute Appendicitis"

- Acute coronary syndrome (ACS): A spectrum of conditions caused by sudden reduced blood flow to the heart, including heart attack. "Acute Chest Pain (Possible ACS)"

- Acute kidney injury: A rapid decline in kidney function leading to waste accumulation and fluid/electrolyte imbalance. "Acute Kidney Injury / DKA"

- Acute meningitis: Rapid-onset inflammation of the membranes surrounding the brain and spinal cord, a medical emergency. "Acute Meningitis"

- Asthma exacerbation: An acute worsening of asthma symptoms such as shortness of breath and wheezing. "including the two headline emergency cases from the original study: diabetic ketoacidosis (DKA) and asthma exacerbation."

- Chi-squared test: A statistical test for associations between categorical variables. "we tested the pooled prompt-format effect using a chi-squared test across all 1,275 trials."

- Clarifying-question suppression: A constraint preventing a model from asking follow-up questions that would resolve ambiguity. "we added or removed one constraint at a time (knowledge suppression, clarifying-question suppression, 150-word cap, free-text output, full template)."

- Cohen's kappa: A statistic that measures inter-rater agreement beyond chance. "Inter-rater agreement was 94.7% (Cohen's )."

- Community-acquired pneumonia: Lung infection acquired outside of healthcare settings. "Community-Acquired Pneumonia"

- Conversational grounding: The interactive process of resolving ambiguity and eliciting missing information in dialogue. "removes conversational grounding, the iterative process by which ambiguity is resolved and missing information is elicited"

- Diabetic ketoacidosis (DKA): A life-threatening complication of diabetes marked by high ketones and acidosis due to insulin deficiency. "Diabetic ketoacidosis was correctly triaged in 100% of trials across all models and conditions."

- Factor sweep: A systematic test across all combinations of specified factors to assess their effects. "Factor sweep: all 16 released race/gender/anchor/barrier variants for the symptom-only asthma (F9) and DKA (F13) failure rows"

- Fisher's exact test: A statistical test for differences in proportions in small samples. "For the high-powered ablation, we used Fisher's exact test comparing forced-choice versus free-text accuracy within each model."

- Forced A/B/C/D format: A constrained response format requiring selection among fixed categories rather than free text. "The forced A/B/C/D format was the dominant failure mechanism"

- Forced discretization: Constraining outputs to discrete categories, which can distort model recommendations. "The mechanism is clear: forced A/B/C/D discretization causes models that would recommend emergency care in natural language to be scored as under-triaging."

- Gastroesophageal reflux disease (GERD): A chronic condition where stomach acid frequently flows back into the esophagus. "Gastroesophageal Reflux Disease (GERD)"

- Gold label: The pre-specified correct answer used as ground truth for evaluation. "against the pre-specified gold label for each scenario"

- Graves' disease: An autoimmune disorder causing hyperthyroidism. "Hyperthyroidism (Graves' Disease)"

- Hyperthyroidism: Excess thyroid hormone production leading to symptoms like weight loss and palpitations. "Hyperthyroidism (Graves' Disease)"

- Hypothyroidism: Insufficient thyroid hormone production causing symptoms like fatigue and weight gain. "Hypothyroidism"

- Inter-rater agreement: The degree of consistency between independent evaluators. "Inter-rater agreement was 94.7\% (Cohen's )."

- Ischemic CVA: Ischemic cerebrovascular accident (stroke) due to blocked blood flow to the brain. "Acute Stroke (Ischemic CVA)"

- Knowledge suppression: An instruction that prevents a model from using background training knowledge beyond provided input. "The study's prompt template contains multiple signals that mark it as an artificial evaluation... 'Please base your answer only on the information in this message' disables the model's training knowledge" and "we added or removed one constraint at a time (knowledge suppression, clarifying-question suppression, 150-word cap, free-text output, full template)."

- Naturalization ladder: A staged removal of scaffold constraints to make prompts more like real user inputs. "Naturalization ladder: the exact released prompt (paper_exact), a version keeping the patient's question but removing the scaffold (natural_ask), and a further stripped version (user_only)"

- Patient-portal: An online interface where patients access their health information and results. "patient-style prompts could still mention patient-portal results or home measurements"

- Post-hoc robustness check: An additional analysis conducted after primary data collection to test stability of findings. "which was released after primary data collection, as a post-hoc robustness check."

- Prompt-faithful: Adhering exactly to the original prompt design when testing or reproducing results. "Prompt-faithful subset checks using the authors' released prompts"

- Pulmonary embolism: A blockage in a pulmonary artery, typically due to a blood clot, requiring emergency care. "Pulmonary Embolism"

- Scaffold: A structured set of prompt instructions and constraints that shape model behavior during evaluation. "We argue that the study's evaluation design, an exam-style scaffold layered on top of semi-patient-facing text, created conditions so different from actual consumer use..."

- Sensitivity analysis: An analysis testing how results change when varying specific assumptions or components. "A separate sensitivity analysis confirmed that the 'base your answer only' knowledge-suppression instruction alone does not uniformly shift accuracy"

- Stable angina: Predictable chest pain with exertion due to narrowed coronary arteries. "Exertional Chest Pain (Stable Angina)"

- Structured vignette: A standardized, clinically organized case description used for testing. "we reproduced the study's exam-style scaffold: structured vignettes with the original evaluation instructions"

- Triage: The process of prioritizing the urgency of medical care based on patient condition. "We used a researcher-authored bank of 17 clinical scenarios spanning the same four-level triage scale used by Ramaswamy et al."

- Under-triage: Assigning too low an urgency level to a case that requires more urgent care. "ChatGPT Health under-triages 51.6% of emergencies"

- Urinary tract infection (Uncomplicated): A lower urinary tract bacterial infection without complicating factors. "Urinary Tract Infection (Uncomplicated)"

- Vital signs: Key physiological measurements such as heart rate, blood pressure, temperature, and respiratory rate. "vital signs and laboratory values"

- Wilcoxon signed-rank test: A non-parametric statistical test for comparing paired samples. "we used the Wilcoxon signed-rank test across 170 matched model-case-format cells."
