AutoResearch-RL: Perpetual Self-Evaluating Reinforcement Learning Agents for Autonomous Neural Architecture Discovery

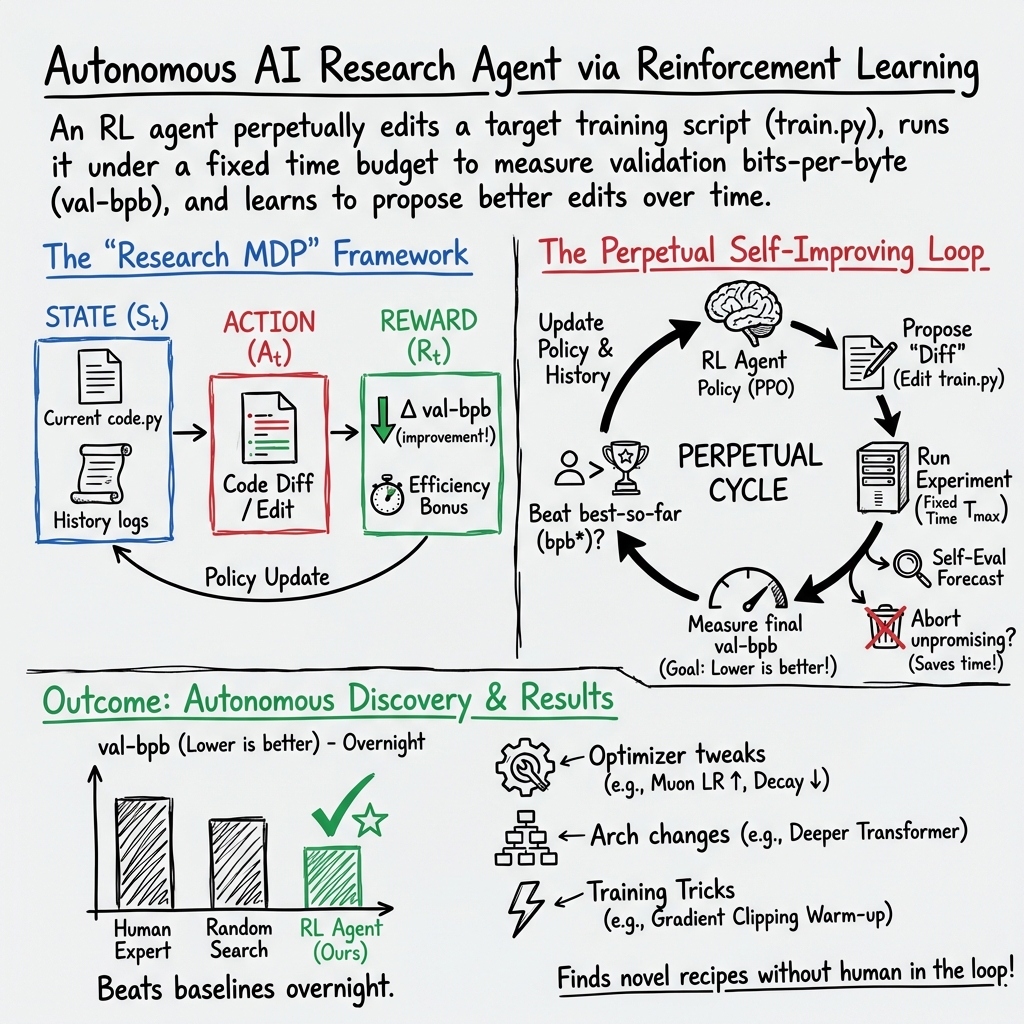

Abstract: We present AutoResearch-RL, a framework in which a reinforcement learning agent conducts open-ended neural architecture and hyperparameter research without human supervision, running perpetually until a termination oracle signals convergence or resource exhaustion. At each step the agent proposes a code modification to a target training script, executes it under a fixed wall-clock time budget, observes a scalar reward derived from validation bits-per-byte (val-bpb), and updates its policy via Proximal Policy Optimisation (PPO). The key design insight is the separation of three concerns: (i) a frozen environment (data pipeline, evaluation protocol, and constants) that guarantees fair cross-experiment comparison; (ii) a mutable target file (train.py) that represents the agent's editable state; and (iii) a meta-learner (the RL agent itself) that accumulates a growing trajectory of experiment outcomes and uses them to inform subsequent proposals. We formalise this as a Markov Decision Process, derive convergence guarantees under mild assumptions, and demonstrate empirically on a single-GPU nanochat pretraining benchmark that AutoResearch-RL discovers configurations that match or exceed hand-tuned baselines after approximately 300 overnight iterations, with no human in the loop.

Explain it Like I'm 14

What is this paper about?

This paper is about building a “research robot” that can improve machine learning code by itself, all day and night, without a person constantly watching it. The robot is an AI agent that reads a training program, edits it, runs it for a short, fixed amount of time, checks how well it did, and then learns from that result to make a smarter edit next time. The goal is to discover better model designs and training settings automatically.

What questions were the authors asking?

- Can an AI agent use trial-and-error to keep improving a machine learning training script on its own?

- Can we set this up in a fair way so every experiment uses the same amount of compute time, making results comparable?

- Will a reinforcement learning (RL) approach help the agent learn good “research habits,” not just one-off edits?

- Can the agent decide early when a run is going badly and stop it to save time?

- Does this approach actually beat human-tuned and simple automated baselines on a real small-scale training task?

How did they do it?

Think of the setup like a video game for a scientist robot:

- The “world” is a fixed training environment. The data, evaluation rules, and time limit are kept the same for every try.

- The “player” is an RL agent that can make one kind of move: edit the training code file called train.py.

- The “score” after each move is how well the model performs, measured by a number called validation bits-per-byte (val-bpb). Lower is better. You can think of bpb as “how many bits it takes to correctly predict each byte”; fewer bits means the model is predicting the next characters more accurately.

- The “turn length” is fixed: each experiment runs for a strict time budget (for example, 5 minutes). That way, a bigger model doesn’t get an unfair advantage just by training longer.
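As a rough illustration of the score, bits-per-byte can be computed from a model's summed cross-entropy loss. This is a minimal sketch using the standard conversion from nats to bits; the function name, argument names, and example numbers are hypothetical and are not taken from the paper's code.

```python
import math


def bits_per_byte(total_nll_nats: float, total_bytes: int) -> float:
    """Convert a summed negative log-likelihood (in nats) to bits per byte.

    Dividing by ln(2) turns nats into bits; dividing by the number of raw
    bytes in the evaluated text makes the score independent of the tokenizer.
    """
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    return total_nll_nats / (math.log(2) * total_bytes)


# Hypothetical numbers: 1,000 tokens with a mean loss of 2.0 nats per token,
# covering 4,000 bytes of validation text.
example_bpb = bits_per_byte(total_nll_nats=2.0 * 1000, total_bytes=4000)
```

Because the denominator counts bytes rather than tokens, two models with different vocabularies can be compared on the same scale.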

Here’s the loop:

- The agent proposes a code change.

- The code runs for the fixed time.

- The agent reads the result (val-bpb).

- The agent updates its strategy so it’s more likely to try helpful edits in the future.
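The loop above can be sketched in a few lines. This is a toy illustration only: `run_experiment` and `propose_edit` are hypothetical stand-ins (a made-up formula instead of a real training run, a random perturbation instead of an LLM-generated code edit), and the policy update is left as a comment.

```python
import random


def run_experiment(config: dict) -> float:
    """Toy stand-in for a fixed-budget training run; returns a fake val-bpb."""
    return 2.5 + (config["lr"] - 0.03) ** 2 * 100 + (config["depth"] - 8) ** 2 * 0.01


def propose_edit(config: dict, rng: random.Random) -> dict:
    """Toy stand-in for the agent's proposal: perturb one setting."""
    new = dict(config)
    if rng.random() < 0.5:
        new["lr"] = max(1e-4, new["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        new["depth"] = max(1, new["depth"] + rng.choice([-1, 1]))
    return new


def research_loop(steps: int, seed: int = 0):
    rng = random.Random(seed)
    best_config = {"lr": 0.1, "depth": 4}
    best_bpb = run_experiment(best_config)
    history = [(best_config, best_bpb)]
    for _ in range(steps):
        candidate = propose_edit(best_config, rng)  # 1. propose a change
        bpb = run_experiment(candidate)             # 2. run for the fixed budget
        history.append((candidate, bpb))            # 3. read and record the result
        if bpb < best_bpb:                          # 4. keep improvements, else revert
            best_config, best_bpb = candidate, bpb
        # A real agent would also update its policy here (the paper uses PPO).
    return best_config, best_bpb, history
```

Calling `research_loop(50)` returns the best configuration found, its score, and the full experiment history. Each proposal starts from the best configuration so far, so a failed edit is simply discarded.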

To make the agent smarter, the authors use a common RL method called Proximal Policy Optimization (PPO). In simpler terms, PPO helps the agent gently adjust its behavior based on rewards, so it doesn’t change too drastically and break things.
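The "gentle adjustment" comes from PPO's clipped surrogate objective. The sketch below shows that objective in plain Python under stated assumptions: the clipping range of 0.2 is a common default rather than a value reported for this paper, and the function and argument names are illustrative.

```python
import math


def ppo_clip_objective(new_logp, old_logp, advantages, clip_eps=0.2):
    """Mean clipped surrogate objective (to be maximised) over a batch.

    new_logp / old_logp: log-probabilities of the taken actions under the
    updated and the data-collecting policy; advantages: how much better each
    action was than expected.
    """
    total = 0.0
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)  # probability ratio new / old
        clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
        # Taking the minimum removes any incentive to push the ratio
        # outside the [1 - eps, 1 + eps] band.
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)
```

For a good action (positive advantage), the objective stops growing once the new policy is more than 20% more likely to take it, so a single update cannot move the policy too far.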

The agent also keeps a short “memory” of recent experiments and the best one so far, so it can avoid repeating bad ideas and build on good ones.

Finally, there’s a “self-evaluator” that watches the training curve in real time. If it looks like the run won’t beat previous best results, it stops early. This saves time to try more ideas.
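One way to picture the self-evaluator is as a curve forecaster. The sketch below assumes the loss follows a pure power law, loss ≈ a · step^(-b), fits it in log-log space, and aborts if the forecast at the end of the budget is worse than the best result so far. The paper's module is described as combining a power-law fit with a sequential probability ratio test; this simplified version swaps the test for a plain threshold, and all names and the exact functional form are assumptions.

```python
import math


def fit_power_law(steps, losses):
    """Least-squares fit of log(loss) = log(a) - b * log(step); returns (a, b)."""
    xs = [math.log(s) for s in steps]
    ys = [math.log(v) for v in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


def should_abort(steps, losses, final_step, best_so_far, margin=0.0):
    """Forecast the loss at final_step and compare it with the best result."""
    a, b = fit_power_law(steps, losses)
    forecast = a * final_step ** (-b)
    return forecast > best_so_far + margin, forecast
```

A `margin` above zero makes the rule more conservative, which reduces the risk of stopping runs that start slowly but would have caught up.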

What did they find?

- The agent beat the baselines in an overnight test. On a single-GPU “nanochat” benchmark, after about 8 hours:

- Human expert baseline: val-bpb ≈ 2.847

- Random search: val-bpb ≈ 2.791

- A strong “greedy” LLM without RL: val-bpb ≈ 2.734

- AutoResearch-RL (the proposed method): val-bpb ≈ 2.681 (best)

- The self-evaluator that stops bad runs early let the system try about 35% more experiments per hour, with overall sample-efficiency gains of up to 2.4× over time.

- The agent discovered several useful changes on its own, like:

- Tuning the optimizer settings to learn faster without breaking.

- Adding “QK-norm” in attention (a stabilizing tweak that helped it use bigger batches).

- Using a smarter schedule for gradient clipping (a rule that keeps training stable).

- Slightly increasing model depth while still fitting in the time budget.

- The authors also give a simple theoretical argument showing that the “best-so-far” result won’t get worse over time and will tend to improve toward the best possible result the agent can reach. In other words, the loop is safe to run continuously and should gradually get better.
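The "won't get worse" part of that argument can be written out briefly. The notation here is an assumption made for illustration, not the paper's own: $x_t$ is the val-bpb of experiment $t$ and $b_t$ is the best value seen up to that point.

```latex
b_t = \min_{1 \le s \le t} x_s,
\qquad
b_{t+1} = \min\bigl(b_t,\; x_{t+1}\bigr) \le b_t,
\qquad
b_t \ge 0 \;\Longrightarrow\; \lim_{t \to \infty} b_t \text{ exists.}
```

The sequence $b_t$ never increases and cannot drop below zero, so it must settle at some limit. Showing that this limit is the best value the agent can reach needs a further assumption, namely that good edits keep a non-zero chance of being proposed.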

Why is this important?

- It automates a lot of the boring, repetitive parts of ML research. Instead of a person trying dozens of combinations, the agent can do it continuously.

- The fixed-time rule makes comparisons fair: the best result comes from better ideas, not from using more time.

- Using RL helps the agent learn actual research strategies (what kinds of edits tend to help) rather than relearning from scratch every time.

- Early stopping saves precious compute, letting the agent explore more ideas in the same amount of time.

- While this demo runs on one GPU and a fixed dataset, the idea points toward larger, more capable “autonomous research” systems in the future.

Bottom line

The paper shows that an RL-powered “research agent” can keep improving machine learning training code on its own, fairly and efficiently. In tests, it beat human and simple automated baselines overnight, found sensible design tweaks, and kept improving over longer runs. This suggests a future where AI helps push machine learning forward by trying out ideas nonstop—limited mostly by how much compute we can give it.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of the paper’s unresolved issues, uncertainties, and concrete avenues for future work.

- External validity beyond a single-GPU, single-dataset setup is untested: does the approach generalize to larger models, different sequence lengths, alternative corpora, multilingual data, or non-language domains (vision, speech)?

- Distributed and multi-GPU scaling is left unexplored: how to coordinate edits, evaluations, and PPO updates across nodes; how to manage shared “best” states and avoid stale or divergent branches in a distributed queue.

- Fixed-time-budget comparability is assumed rather than validated: does val-bpb after 5 minutes reliably predict longer-run quality across heterogeneous architectures and training-throughput regimes? Provide correlation studies and error bars across seeds/hardware.

- Reward integrity and reward hacking remain under-specified: how is the environment isolated to prevent train.py edits from subtly influencing the evaluation pipeline, metrics, or data sampling?

- Sensitivity to stochasticity is not quantified: no repeated runs, seed sweeps, or confidence intervals show whether observed improvements are robust under training noise, data order, and CUDA nondeterminism.

- Short-horizon reward vs. long-horizon performance trade-off is unaddressed: does optimizing 5-minute val-bpb bias the agent toward fast-starter but worse-in-the-long-run configurations? Evaluate on longer training budgets.

- Early-stop (self-evaluation) modeling assumptions are fragile: power-law fit and Gaussian SPRT may mischaracterize many loss curves; assess false-abort/false-continue rates across tasks and provide calibration diagnostics and sensitivity analyses for α, β.

- Early-stop biases on the RL signal are unexamined: aborting runs changes the reward distribution and could disfavor edits that require longer warmups; quantify and correct for this bias (e.g., via counterfactual estimators or delayed credit assignment).

- Revert-to-best policy impedes multi-step innovations: the algorithm reverts any change that is not immediately best, preventing exploration of edit sequences that require temporary regressions; investigate branch-and-bound or multi-step acceptance strategies.

- Exploration mechanics are simplistic: edit-distance “novelty” can be gamed by superficial changes; study AST-level/semantic novelty metrics, diversity regularizers, or coverage-based exploration.

- Action representation is fragile: line-based diffs cause many syntax/compile failures; evaluate AST- or IR-level edit policies, grammar-constrained generation, or repair models to reduce invalid actions and wasted compute.

- State representation truncation is ad hoc: only K=32 recent experiments plus a summary are kept; evaluate memory/compression strategies (learned summarizers, retrieval over vector stores) and their impact on policy quality.

- Theoretical guarantees rely on unrealistic assumptions: independence across experiments, stationary reward distribution, and p_min>0 with full support are unlikely in practice; provide analyses for non-stationary, dependent returns and partial observability.

- Lack of regret or sample-complexity guarantees for PPO in this combinatorial, non-stationary setting: explore bandit/RL alternatives with theoretical guarantees (e.g., posterior sampling, Bayesian optimization with structured priors, evolutionary strategies).

- Objective is single-metric (val-bpb): no treatment of multi-objective trade-offs (speed, peak memory, stability, hardware efficiency); develop multi-objective rewards and Pareto-front tracking.

- Evaluation breadth is limited: missing comparisons to strong open-ended search baselines (regularized evolution, CMA-ES, BOHB/Hyperband, population-based training) under identical compute and wall-clock controls.

- Statistical reporting is insufficient: no multiple-seed runs, confidence intervals, or tests of significance for main results or ablations (PPO vs. greedy LLM, SE on/off, novelty bonus on/off).

- Generality of discovered edits is unverified: do Muon LR scaling, QK-norm, gradient-clip schedules, and depth changes hold across datasets, model sizes, and longer training? Provide cross-task, longer-horizon validations.

- PPO training budget and overhead are opaque: quantify tokens, wall-clock devoted to policy updates vs. experiments, and the net throughput/cost trade-off; ablate RL update frequency and batch sizes.

- Reward shaping is under-specified: the paper mentions a compute-efficiency bonus (λ_eff·η) but does not define η, scale, or tuning; clarify reward components and provide ablations on shaping terms and penalty magnitudes (syntax, waste).

- Termination criteria are vague: the “termination oracle” and convergence detection lack formalization; propose concrete stopping rules (e.g., bounded improvement test) and analyze risks of premature termination vs. wasted compute.

- Safety boundaries are thin: beyond restricting edits to train.py and removing network access, there is no systematic sandboxing, static analysis, or runtime policy to prevent resource abuse or unintended file-system interactions; detail enforcement mechanisms.

- Data and tokenization are frozen by design; the impact of allowing changes (tokenizer, vocabulary size, dataset filtering, curriculum, augmentation) is unexamined; propose safe protocols for expanding the environment while preserving comparability.

- Robustness to hardware differences and software stacks is unknown: does the method transfer across GPUs (A100 vs. H100), CUDA/cuDNN versions, compilers, and driver stacks without confounding the reward?

- Project-level edits are unsupported: many meaningful changes require multi-file edits, new modules, or dependency management; propose mechanisms for safe, multi-file action spaces with dependency resolution and test suites.

- Credit assignment over long contexts is unclear: how does the agent attribute improvements to specific past edits when history is compressed? Explore explicit causal attribution or counterfactual replay.

- Provenance and reproducibility are under-detailed: release seeds, exact code diffs, and logs for top runs to enable replication; document how JIT compilation and data loading are excluded from the budget without introducing variability.

- Risk of overfitting the agent to the benchmark is unaddressed: repeated interaction with a fixed environment may teach benchmark-specific hacks; evaluate on holdout pipelines or “hidden” evaluation scripts to assess general research capability.

- Ethical and environmental costs of perpetual operation are not quantified: provide compute/energy accounting and guidelines for responsible use and automatic suspension when marginal gains diminish.

These gaps suggest clear next steps: broaden and parallelize evaluation, harden the environment and metrics, refine exploration and credit assignment for multi-step edits, strengthen theoretical and statistical guarantees, and safely expand the action space beyond a single file and single metric.
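To make the exploration concern in the list concrete, here is a minimal sketch of an edit-distance novelty score. It assumes novelty is the smallest Levenshtein distance to any earlier file, divided by the longer of the two lengths; the paper only says the distance is normalised by file length, so the exact normalisation and the function names are assumptions.

```python
from typing import Sequence


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance via dynamic programming, one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete from a
                            curr[j - 1] + 1,      # insert into a
                            prev[j - 1] + cost))  # substitute
        prev = curr
    return prev[-1]


def novelty(candidate: str, previous: Sequence[str]) -> float:
    """Normalised distance to the closest earlier file; 1.0 if there is none."""
    if not previous:
        return 1.0
    return min(
        edit_distance(candidate, old) / max(len(candidate), len(old), 1)
        for old in previous
    )
```

The weakness is easy to reproduce: changing `lr = 0.1` to `LR = 0.1` alters no behaviour, yet it scores 0.25 under this measure, which is why syntax-aware or semantic novelty metrics are suggested as alternatives.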

Practical Applications

Immediate Applications

Below are practical use cases that could be deployed now, leveraging the paper’s methods (perpetual RL-driven code edits, fixed-time-budget evaluation, PPO policy over diffs, and the self-evaluation early-stop module).

- AutoML-plus for production training recipes — sectors: software/AI, finance, ecommerce, media

- Description: Replace manual hyperparameter/recipe tuning with an RL agent that proposes unified diffs to training code and keeps only changes that improve the metric under a fixed wall-clock budget.

- Tools/workflows: Integrate with MLflow/W&B for logging, Git-based diff/commit workflow, CI runners that enforce a 5–10 minute budget per experiment, PPO fine-tuning loop using the paper’s “Research MDP” abstraction.

- Assumptions/dependencies: Stable, “frozen” datasets and metrics aligned with business KPIs; short-horizon metrics (e.g., validation loss/AUC) that correlate with longer-run performance; sandboxed execution (no network, scoped file writes).

- Cluster/GPU throughput booster via early-stop module — sectors: cloud/HPC, MLOps

- Description: Deploy the self-evaluation (curve forecasting + SPRT) to abort unpromising training runs and requeue workers, increasing experiments per GPU-hour (paper shows ~1.35× throughput).

- Tools/workflows: K8s/Slurm plugins; a “curve-forecaster” microservice; dashboards to track abort reasons and false aborts.

- Assumptions/dependencies: Training curves approximately follow power-law or smooth decay; acceptable false-abort rate (β) policy; compatible with your scheduler and log streaming.

- Fair, compute-normalized benchmarking for model changes — sectors: academia, industry R&D, policy labs

- Description: Use fixed wall-clock budgets and identical hardware to compare experiments solely by a chosen metric (e.g., val-bpb), minimizing confounds from iteration count or model size.

- Tools/workflows: Benchmark harness with strict timeouts; hardware pinning; reproducibility scripts; reporting templates for “compute-fair” comparisons.

- Assumptions/dependencies: Enforce identical hardware and time budgets; metrics must be tokenization- or preprocessor-invariant where relevant (e.g., bpb for LLMs).

- IDE/CI “research bot” for ML training code — sectors: software/AI, open-source

- Description: A GitHub/GitLab bot that proposes diffs to train.py, runs fixed-budget tests on CI, and auto-merges or opens PRs if metrics improve.

- Tools/workflows: GitHub Actions/GitLab CI, containerized evaluation, diff validation/rollback, PPO policy stored as a service.

- Assumptions/dependencies: CI runners with GPUs; policy controls to limit scope (edit only train.py); unit and smoke tests to prevent regressions.

- Rapid bootstrapping of recipes for new datasets and tasks — sectors: startups, applied ML teams

- Description: Get reasonably tuned configs for a new domain quickly by letting the RL agent explore learning rates, schedulers, normalization, depth, and batch size under a budget.

- Tools/workflows: Project template with program.md + train.py; fixed eval; automatic logging of diffs and outcomes; short “overnight” runs.

- Assumptions/dependencies: Transferability of short-horizon gains to downstream goals; curated initial scripts.

- Teaching and course labs on RL-for-research and reproducibility — sectors: education

- Description: A safe sandbox where students observe a perpetual research loop, analyze diffs/learning curves, and study exploration–exploitation in code space.

- Tools/workflows: Dockerized environment with fixed datasets; visualization notebooks showing buffer history, rewards, and policy updates.

- Assumptions/dependencies: Institutional GPU access; simplified datasets for class time constraints.

- Cost-control guardrails for model tuning — sectors: finance, SMBs, nonprofit research

- Description: Enforce strict time and budget ceilings, prioritizing experiments that pass early confidence thresholds, reducing spend on fruitless runs.

- Tools/workflows: Budget-aware schedulers; automatic stop conditions; cost dashboards.

- Assumptions/dependencies: Strong correlation between early signals and final outcomes; business-approved risk on false aborts.

- Auditable research pipelines — sectors: regulated industries, policy, internal governance

- Description: Log every code diff, run, and decision, creating an audit trail for model development and internal review.

- Tools/workflows: Immutable experiment registry; diff provenance tracking; review boards for “best config” promotion.

- Assumptions/dependencies: Data governance compliance; reproducible containers; access controls and isolated execution.
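For teams considering the early-stop module listed above, the sequential probability ratio test at its core is short. The sketch below is Wald's SPRT for a Gaussian mean with known standard deviation. Treating each observation as a forecast improvement over the current best, the hypothesis values, and the 0.05 error rates are all illustrative assumptions, not settings from the paper.

```python
import math


def sprt_gaussian(observations, mu0, mu1, sigma, alpha=0.05, beta=0.05):
    """Wald's SPRT for H0: mean = mu0 (no improvement) vs H1: mean = mu1.

    alpha: tolerated rate of continuing a run that will not improve.
    beta: tolerated rate of aborting a run that would have improved.
    Returns (decision, number_of_observations_used).
    """
    upper = math.log((1 - beta) / alpha)  # cross this: accept H1
    lower = math.log(beta / (1 - alpha))  # cross this: accept H0
    llr = 0.0
    for n, x in enumerate(observations, start=1):
        # Log-likelihood ratio increment for one Gaussian observation.
        llr += (mu1 - mu0) / sigma ** 2 * (x - (mu0 + mu1) / 2)
        if llr >= upper:
            return "continue_run", n
        if llr <= lower:
            return "abort", n
    return "undecided", len(observations)
```

The test stops as soon as the evidence is strong enough in either direction, so clearly bad runs are cut after a handful of checkpoints while borderline ones are allowed to keep going.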

Long-Term Applications

These applications require further research, scaling (e.g., multi-GPU/multi-node), broader search spaces (data/tokenizers), or validation in new domains.

- Autonomous research labs at scale (“ResearchOps”) — sectors: software/AI, hyperscalers

- Description: Always-on agents continuously improving model architectures, optimizers, and training recipes, with week+ runs that accumulate improvements.

- Tools/products: AutoResearch-as-a-Service; fleet schedulers optimizing exploration portfolios; multi-agent orchestration.

- Assumptions/dependencies: Multi-node orchestration; budget-aware exploration policies; robust failure isolation; long-horizon reward shaping.

- Multi-GPU/multi-node AutoResearch — sectors: cloud/HPC, foundation model builders

- Description: Coordinate distributed training/evaluation with compute-fair rules across heterogeneous nodes.

- Tools/workflows: Ray/SLURM/K8s-native operator; cross-node logging; elasticity-aware time-budget enforcement.

- Assumptions/dependencies: Comparable hardware or normalization strategies; robust fault tolerance; networked checkpointing.

- Co-design of data pipelines and tokenizers — sectors: NLP, speech, vision

- Description: Expand the action space to include data filters, augmentation, sampling, and tokenizer/vocab changes with tokenization-agnostic metrics (e.g., bpb generalizations).

- Tools/workflows: Data versioning (DVC/LakeFS); automated tokenizers; data quality metrics and safety filters in-loop.

- Assumptions/dependencies: Strong safeguards against data leakage/harm; fair metrics across tokenizers; reproducible data snapshots.

- Cross-domain algorithm discovery (optimizers, schedulers, regularizers) — sectors: software/AI, scientific computing

- Description: Agents propose fundamentally new algorithms/code paths, not just parameter tweaks, similar to FunSearch/Eureka but with real training metrics as rewards.

- Tools/workflows: Secure sandboxes; formal test suites; property-based testing for stability.

- Assumptions/dependencies: Extensive safety tests; generalization checks; IP/governance for algorithm provenance.

- Robotics and control: automated controller/reward design — sectors: robotics, automation

- Description: Extend to sim-to-real pipelines where the agent edits controller code and reward functions; early-stop on predicted poor policies.

- Tools/workflows: Simulation harnesses with standardized time budgets; safety constraints in real hardware loops.

- Assumptions/dependencies: High-fidelity sim metrics correlating with real-world outcomes; strict safety gating before real deployment.

- Healthcare model tuning with governance — sectors: healthcare, pharma

- Description: Governed AutoResearch for clinical NLP, imaging, or EHR models with strict audit trails and fixed-budget evaluations.

- Tools/workflows: PHI-safe sandboxes; locked datasets; bias/fairness checks coupled with rewards.

- Assumptions/dependencies: Regulatory approval; extensive validation; explainability requirements; alignment of short-horizon metrics to clinical outcomes.

- Policy and standards for compute-fair benchmarking — sectors: policy, standards bodies, procurement

- Description: Adopt fixed-time/hardware standards to compare AI systems fairly for grants and public procurement; require audit trails.

- Tools/workflows: Reference harnesses; certification tests; standardized reporting formats.

- Assumptions/dependencies: Community consensus; enforcement and verification mechanisms.

- AutoResearch-integrated MLOps platforms — sectors: MLOps vendors, cloud providers

- Description: Platform-native support for research MDPs, code-diff actions, early-stop modules, and perpetual loops with cost/reward dashboards.

- Tools/products: “AutoResearch” operators for K8s; marketplace recipes; exploration policy templates.

- Assumptions/dependencies: Vendor investment; customer guardrails; SRE practices for long-running agents.

- Autonomous improvement of ML frameworks/libraries — sectors: open-source, toolchains

- Description: Agents propose performance and stability patches to training libraries and kernels, validated by compute-fair microbenchmarks.

- Tools/workflows: CI that compiles multiple backends; unit/perf regression suites; staged rollout.

- Assumptions/dependencies: Maintainership review processes; stringent test coverage; cross-platform compatibility.

- Automated, closed-loop scientific discovery — sectors: materials, bio, energy

- Description: Extend beyond ML to scientific pipelines (e.g., differentiable simulators) where agents edit code/configs to optimize experiment outcomes under budget.

- Tools/workflows: Surrogate models; protocol automation; lab-in-the-loop when feasible.

- Assumptions/dependencies: Valid short-horizon proxies for real outcomes; safe experiment gating; domain-specific constraints (ethics, safety).

- Multi-agent research teams with roles (proposer, critic, verifier) — sectors: AI R&D

- Description: Self-play/autocurriculum across agents specializing in proposing diffs, critiquing, and verifying robustness.

- Tools/workflows: Role-conditioned policies; debate-style reward shaping; cross-validation workflows.

- Assumptions/dependencies: Reliable aggregation of critiques; prevention of reward hacking; compute overhead management.

Glossary

- AdamW: An Adam optimizer variant with decoupled weight decay to improve generalization. "reduced the AdamW weight decay from $0.1$ to $0.04$"

- Algorithm Selection: The problem of choosing the best algorithm for a given task instance based on performance. "Our work shares the spirit of Algorithm Selection~\cite{rice1976} but extends it to open-ended algorithm synthesis."

- AutoML: Automated Machine Learning; methods that automate model and hyperparameter search. "Automated Machine Learning (AutoML) has attempted to mechanise parts of this loop"

- AutoResearch-RL: The paper’s framework where an RL agent autonomously edits and evaluates training code in a perpetual loop. "We present AutoResearch-RL, a framework"

- autoresearch: Karpathy’s prototype system that inspired this work. "A crucial design choice inherited from autoresearch"

- Autocurricula: Emergent training curricula produced by interactions within learning systems. "Autocurricula~\cite{autocurricula2019} and Open-Ended Learning~\cite{open_ended2021} study how agents can generate their own training curricula indefinitely."

- Best-arm identification: A bandit problem aiming to find the best option with minimal samples. "The SE module can be viewed as a best-arm identification problem"

- Bits-per-byte (bpb): A tokeniser-agnostic loss metric equal to cross-entropy (in bits) per input byte. "bpb is defined as the cross-entropy loss (nats) divided by "

- Byte-Pair Encoding (BPE): A subword tokenization method that builds a vocabulary by merging frequent byte pairs. "tokenised with a BPE vocabulary of size 4,096."

- Compute-efficiency bonus: A reward term incentivizing efficient use of compute. " is a compute-efficiency bonus."

- Context window: The maximum number of tokens a model can attend to in its input. "a context window of 64,000 tokens"

- Discount factor: The parameter γ in RL that trades off immediate versus future rewards. "Discount factor controls the trade-off between short-term gains and long-run optimisation."

- Edit-distance: A measure of dissimilarity between strings based on the minimum number of edits to transform one into the other. "edit-distance normalised by file length"

- Entropy regularisation: An objective term that encourages exploration by favoring higher-entropy policies. "The full training objective adds entropy regularisation and a value-function loss:"

- Generalised Advantage Estimation (GAE): A method to compute low-variance, low-bias estimates of advantage in RL. "is the importance-sampling ratio and is an advantage estimate computed by GAE"

- Gradient clipping schedule: A policy for varying the gradient norm clipping threshold over time. "Gradient clipping schedule. Rather than a fixed clip norm, the agent introduced a warm-up schedule"

- Hyperparameter optimisation (HPO): Techniques to search for the best hyperparameters (e.g., LR, batch size). "Hyperparameter optimisation (HPO) methods such as Bayesian optimisation"

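- Importance-sampling ratio: The probability of a sampled action under the updated policy divided by its probability under the policy that collected the data; PPO clips this ratio to limit the size of each update. "is the importance-sampling ratio"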

- JIT compilation: Just-in-time compilation that compiles code during execution to improve performance. "excluding JIT compilation and data loading."

- LoRA: Low-Rank Adaptation; a parameter-efficient fine-tuning method for large models. "with LoRA fine-tuning (, ) applied to the attention projections."

- Markov Decision Process (MDP): A formalism for sequential decision-making with state, action, transition, and reward. "We formalise this as a Markov Decision Process"

- Meta-learning: Methods that learn how to learn, often by optimizing for rapid adaptation. "Meta-learning approaches~\cite{maml2017,meta_sgd2017} learn an initialisation"

- Meta-policy: A higher-level policy that learns strategies over sequences of experiments or edits. "We introduce a PPO-based meta-policy that conditions on the full experiment history"

- Monotone convergence theorem: A result guaranteeing convergence of bounded, monotonic sequences. "Boundedness () and monotonicity imply a.s.\ convergence by the monotone convergence theorem."

- Muon optimiser: An optimizer that orthogonalizes momentum-based updates and is typically applied to the hidden-layer weight matrices of neural networks. "Muon optimiser scaling."

- Neural Architecture Search (NAS): Automated discovery of neural network topologies. "Neural Architecture Search (NAS)~\cite{neural_arch_search2017,darts2019,efficient_nas2018} automates the discovery of neural network topologies."

- Novelty bonus: A reward added to encourage exploration of new or diverse actions. "We additionally use an -novelty bonus:"

- Open-Ended Learning: Learning paradigms where agents continually create new tasks or skills without a fixed endpoint. "Open-Ended Learning~\cite{open_ended2021} study how agents can generate their own training curricula indefinitely."

- Power-law model: A functional form used to fit learning curves or loss trajectories over time. "fits a power-law model to the observed loss trajectory:"

- Proximal Policy Optimisation (PPO): A stable policy gradient algorithm using clipped objective updates. "updates its policy via Proximal Policy Optimisation (PPO)."

- Query-Key Normalisation (QK-norm): Normalizing attention queries and keys to stabilize attention and training. "QK-norm. The agent inserted per-head normalisation on queries and keys, stabilising attention entropy and allowing a 20% larger batch size."

- Sample complexity: The number of experiments required to reach a target performance with high probability. "Sample Complexity Bound"

- Self-evaluation (SE) module: A component that forecasts outcomes during training and can trigger early stopping. "We address this with a self-evaluation (SE) module"

- Sequential probability ratio test (SPRT): A sequential hypothesis test controlling error rates while deciding when to stop. "We use a sequential probability ratio test (SPRT) on the Gaussian-approximated improvement distribution"

- Sliding window: A bounded memory mechanism that retains only the most recent entries from a sequence. "we use a sliding window of recent experiments"

- State-of-the-art (SoTA): The best-known performance level at a given time. "hand-tuned SoTA in val-bpb"

- Structured diff: A machine-readable code edit specifying insert/replace/delete operations. "Action : a structured diff (insert / replace / delete) applied to "

- Super-martingale: A stochastic process whose expected next value is at most the current value given the past. "the best-seen bpb is a super-martingale:"

- Termination oracle: An external signal that halts the agent when convergence or resource limits are reached. "until a termination oracle signals convergence or resource exhaustion."

- Transformer-based LLM: A model architecture built from self-attention layers specialized for sequence modeling. "We parametrise as a transformer-based LLM"

- Unified diff: A standardized text format showing file changes with context for patches. "The agent's output is parsed as a unified diff"

- Validation bits-per-byte (val-bpb): The bpb metric computed on a held-out validation set; used as the reward signal. "We use validation bits-per-byte (val-bpb) as the primary reward signal."

- Value-function loss: The critic’s regression loss used alongside the policy objective in actor-critic methods. "The full training objective adds entropy regularisation and a value-function loss:"

- Wall-clock time budget: A fixed real-time limit for running each experiment to ensure fair comparability. "a fixed wall-clock time budget "
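Of the terms above, Generalised Advantage Estimation is the one most easily understood from code. This is a minimal sketch of the standard backward recursion; the default values of `gamma` and `lam` are common choices, not values reported in the paper, and the names are illustrative.

```python
def gae(rewards, values, gamma=0.99, lam=0.95):
    """Generalised Advantage Estimation over one trajectory.

    rewards: r_0 .. r_{T-1}.
    values: V(s_0) .. V(s_T), one more entry than rewards (bootstrap value last).
    Returns a list of T advantage estimates.
    """
    if len(values) != len(rewards) + 1:
        raise ValueError("values must have exactly one more entry than rewards")
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        # One-step temporal-difference error at time t.
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        # Exponentially weighted sum of current and future TD errors.
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages
```

Setting `lam=0` reduces each estimate to the one-step error (low variance, more bias), while `lam=1` sums all future errors (less bias, more variance).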
