Mode Seeking meets Mean Seeking for Fast Long Video Generation

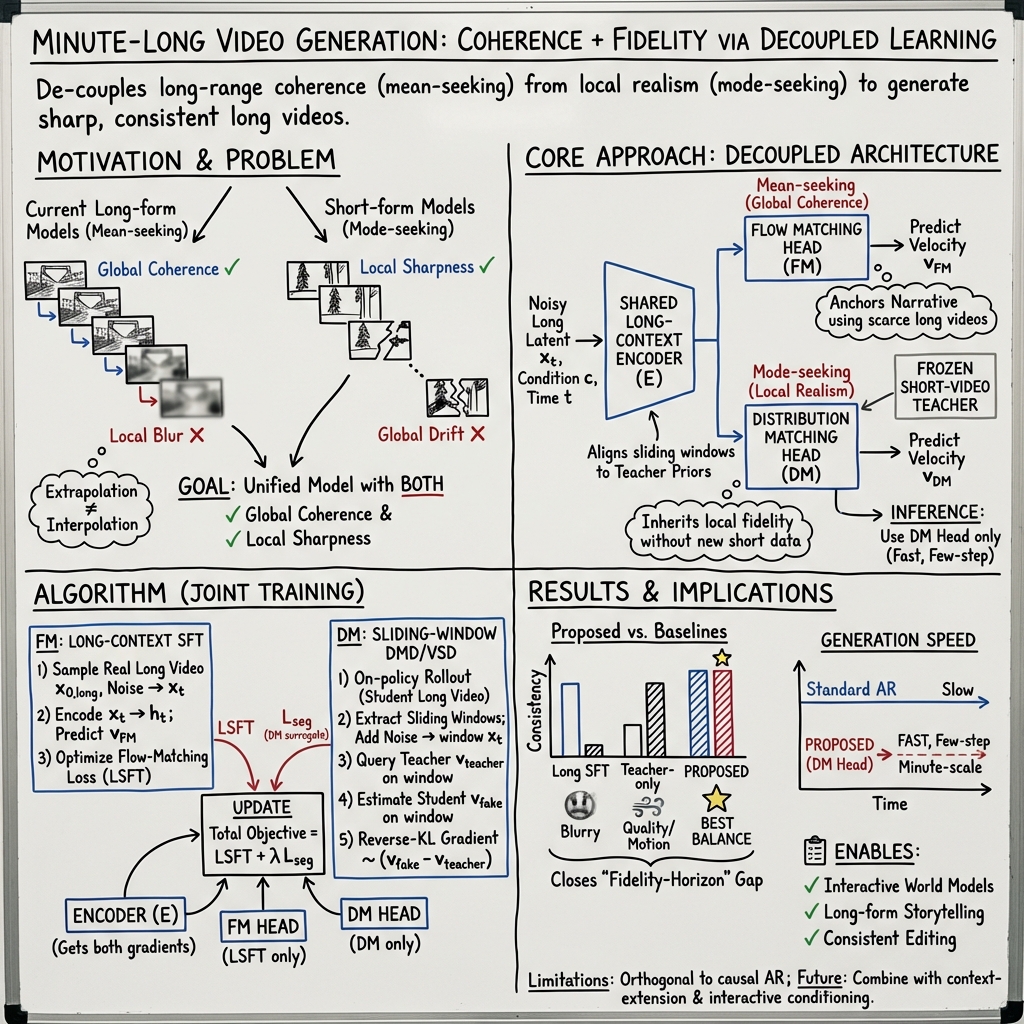

Abstract: Scaling video generation from seconds to minutes faces a critical bottleneck: while short-video data is abundant and high-fidelity, coherent long-form data is scarce and limited to narrow domains. To address this, we propose a training paradigm where Mode Seeking meets Mean Seeking, decoupling local fidelity from long-term coherence based on a unified representation via a Decoupled Diffusion Transformer. Our approach utilizes a global Flow Matching head trained via supervised learning on long videos to capture narrative structure, while simultaneously employing a local Distribution Matching head that aligns sliding windows to a frozen short-video teacher via a mode-seeking reverse-KL divergence. This strategy enables the synthesis of minute-scale videos: the model learns long-range coherence and motion from limited long videos via supervised flow matching, while inheriting local realism by aligning every sliding-window segment of the student to a frozen short-video teacher, resulting in a fast, few-step long video generator. Evaluations show that our method effectively closes the fidelity-horizon gap by jointly improving local sharpness, motion, and long-range consistency. Project website: https://primecai.github.io/mmm/.

Explain it Like I'm 14

Overview: What’s this paper about?

This paper is about making AI models that can create longer, better-looking videos. Today’s best models can make short clips (a few seconds) with sharp details and lively motion. But when you ask them to make minute‑long videos, the quality often drops: scenes get blurry, motion becomes dull, and the story can drift. The authors propose a way to keep short‑clip quality while growing into long, coherent videos—fast.

What questions are they trying to answer?

- How can we generate minute‑long videos that keep a clear, consistent story (long‑term coherence) without losing the crisp details and realistic motion seen in short clips (local fidelity)?

- How can we do this even though there’s lots of short‑video data online, but very little high‑quality long‑video data?

- Can we make the generation fast, so the model needs only a few steps to produce long videos?

How does their method work? (Simple explanation)

Think of making a movie as two jobs:

- Big‑picture planning: keeping the story, characters, and scenes consistent over time.

- Fine‑detail acting: making each short moment look sharp and realistic.

Most models try to learn both jobs at once, which can cause conflicts—like a person trying to watch the whole movie and fix each frame at the same time. The authors “split the jobs” inside one model so each part can focus on what it’s best at, then combine the results.

Two jobs, two “heads,” one shared brain

- The model uses a shared long‑context “encoder” (like the model’s brain that remembers the whole video).

- On top of that, it has two small “heads” (like two specialists):

  - A mean‑seeking Flow Matching head (big‑picture planner) that learns long‑range story structure from the limited long‑video data. “Mean‑seeking” here means it aims for the average best guess under uncertainty, which helps keep the overall story stable and consistent.

  - A mode‑seeking Distribution Matching head (detail specialist) that makes each short segment look sharp by copying the style of a strong short‑video “teacher” model. “Mode‑seeking” means it picks a clear, high‑quality option instead of averaging different possibilities that can look blurry.

Why split them? The big‑picture planner tends to “average” for stability, which can blur details. The detail specialist aims for the clearest, most realistic look. Separating them avoids mixed signals.
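The difference between "averaging" and "picking one clear option" can be seen in a toy 1-D sketch. It is not taken from the paper: the two-peak target, the grid, and the candidate ranges are all illustrative assumptions. A single Gaussian is fitted to a two-peak distribution in two ways: by matching moments (what minimizing the forward KL does, mean-seeking) and by a grid search that minimizes the reverse KL (mode-seeking).

```python
import numpy as np

# Toy 1-D target with two well-separated peaks at -3 and +3 (illustrative only).
x = np.linspace(-10.0, 10.0, 4001)
dx = x[1] - x[0]

def gaussian(x, mu, sigma):
    return np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))

p = 0.5 * gaussian(x, -3.0, 0.5) + 0.5 * gaussian(x, 3.0, 0.5)

def kl(a, b):
    # Numerical KL(a || b) on the grid; eps avoids log(0).
    eps = 1e-300
    return float(np.sum(a * (np.log(a + eps) - np.log(b + eps))) * dx)

# Mean-seeking fit: minimizing forward KL(p || q) over Gaussians q matches moments.
mean_fit_mu = float(np.sum(x * p) * dx)
mean_fit_sigma = float(np.sqrt(np.sum((x - mean_fit_mu) ** 2 * p) * dx))

# Mode-seeking fit: grid search over Gaussians q that minimizes reverse KL(q || p).
candidates = [(mu, s)
              for mu in np.linspace(-5.0, 5.0, 101)
              for s in np.linspace(0.2, 4.0, 39)]
mode_fit_mu, mode_fit_sigma = min(
    candidates, key=lambda c: kl(gaussian(x, c[0], c[1]), p)
)

print("mean-seeking fit:", mean_fit_mu, mean_fit_sigma)
print("mode-seeking fit:", mode_fit_mu, mode_fit_sigma)
```

The mean-seeking fit sits between the two peaks with a wide spread (the "blurry average"), while the mode-seeking fit locks onto one peak with a narrow spread.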

Learning from a short‑video teacher

- The teacher is a high‑quality short‑video generator that’s already very good at making 5‑second clips.

- The student (the new long‑video model) learns details by comparing its own short segments to what the teacher would produce, and adjusts to match the teacher’s best‑looking styles—without needing the teacher’s original training data.

Sliding windows: checking a long video in short chunks

- Imagine reviewing a long video through a small “window” that slides along the timeline (like watching 5 seconds at a time).

- For every window, the student lines up its output with the teacher’s style. This keeps local details sharp everywhere in the long video.

- At the same time, the big‑picture head learns long‑range coherence from the limited long‑video examples it has.
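As a rough illustration of the sliding-window idea, the helper below lists which frame ranges would be checked against the teacher. The function name, the frame rate, and the stride are hypothetical choices for this sketch; the summary does not state the window stride or overlap the authors use.

```python
def sliding_windows(num_frames, window, stride):
    """Return (start, end) frame index pairs; end is exclusive."""
    if window <= 0 or stride <= 0:
        raise ValueError("window and stride must be positive")
    if num_frames <= window:
        return [(0, num_frames)]
    starts = list(range(0, num_frames - window + 1, stride))
    # Make sure the tail of the video is covered by a final window.
    if starts[-1] + window < num_frames:
        starts.append(num_frames - window)
    return [(s, s + window) for s in starts]

# Example: a 30 s video at an assumed 16 frames/s, reviewed in 5 s windows
# that overlap by half a window.
fps = 16
windows = sliding_windows(num_frames=30 * fps, window=5 * fps, stride=5 * fps // 2)
print(len(windows), windows[0], windows[-1])
```

Each pair marks one short segment that a short-video teacher could judge on its own, while the full frame range stays visible to the long-context encoder.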

A word on “diffusion” and “flow matching” in simple terms

- Diffusion models start from noise and learn how to “clean” it into a video step by step.

- Flow matching is a way to train the model to follow the right path from noise to a good video. The big‑picture head uses this to learn story structure from long videos.
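A minimal sketch of a flow-matching training pair, assuming the common straight-line (rectified flow) path with clean data at t = 0 and pure noise at t = 1. The array shapes and function names are illustrative stand-ins, not the paper's code.

```python
import numpy as np

def flow_matching_pair(x0, noise, t):
    """Noisy state and target velocity on a straight path from data (t=0) to noise (t=1)."""
    x_t = (1.0 - t) * x0 + t * noise
    target_velocity = noise - x0
    return x_t, target_velocity

def flow_matching_loss(predicted_velocity, target_velocity):
    # Mean squared error between the model's velocity and the path's velocity.
    return float(np.mean((predicted_velocity - target_velocity) ** 2))

rng = np.random.default_rng(0)
x0 = rng.normal(size=(4, 8))      # stand-in for 4 clean video latents
noise = rng.normal(size=(4, 8))
t = rng.uniform(size=(4, 1))      # one time per sample, broadcast over features
x_t, v_target = flow_matching_pair(x0, noise, t)

# A model would predict a velocity from (x_t, t); an all-zero guess stands in here.
print(flow_matching_loss(np.zeros_like(v_target), v_target))
```

Because the loss is a squared error, the best possible prediction at a given noisy state is the average of all target velocities that could have produced it, which is where the "mean-seeking" label comes from.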

Fast generation with few steps

- Because the detail head is trained to match the teacher’s strong short‑clip behavior, it can generate long videos in just a few steps, which makes the system faster at making minute‑long clips.
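To make "a few steps" concrete, the sketch below integrates a velocity field from noise (t = 1) to data (t = 0) with a handful of Euler steps. The velocity function is a toy closed form for a dataset that contains a single point; it is an assumption for illustration, and in a real system each step would be one forward pass of the network.

```python
import numpy as np

def euler_sample(velocity_fn, x_start, num_steps):
    """Integrate dx/dt = v(x, t) from t=1 (noise) down to t=0 (data) with Euler steps."""
    times = np.linspace(1.0, 0.0, num_steps + 1)
    x = np.array(x_start, dtype=float)
    for t_now, t_next in zip(times[:-1], times[1:]):
        x = x + (t_next - t_now) * velocity_fn(x, t_now)
    return x

target = np.array([1.0, -2.0, 0.5])

def toy_velocity(x, t):
    # Exact velocity on the straight noise-to-data path when all data sits at `target`.
    return (x - target) / t

rng = np.random.default_rng(0)
sample = euler_sample(toy_velocity, rng.normal(size=3), num_steps=4)
print(sample)
```

In this degenerate toy case even a single step lands on the target. Real velocity fields are curved, so step count trades speed against accuracy, which is why a model trained to work well with few steps matters for minute-long outputs.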

What did they find?

Their method:

- Keeps local details sharp and motion lively, like the short‑video teacher.

- Maintains a consistent story, characters, and scenes over longer times (tens of seconds and up).

- Works faster at generation time because the detail head can produce videos in only a few steps.

- Outperforms several common approaches:

  - Fine‑tuning only on long videos (which can make videos look soft or blurry due to limited long‑video data).

  - Relying only on the short‑video teacher with autoregressive rollouts (which can drift or get repetitive over time).

  - Tricks that extend timing without true long‑context understanding (which can lead to overly static or less dynamic videos).

Overall, it “closes the fidelity‑horizon gap”: as videos get longer (the horizon), the fidelity (sharpness and realism) doesn’t fall off like it usually does.

Why this matters

- Better long videos: This can help with making longer stories, films, and animations that keep characters and style consistent.

- Interactive worlds and games: More coherent, long videos can model worlds for agents, simulations, and game scenes.

- Efficient training: It reuses the strength of existing short‑video models and needs fewer expensive long‑video examples.

- Faster creation: Few‑step generation means quicker turnaround for long content.

In short, by letting one part of the model focus on “the story” and another part focus on “the details,” the system produces long videos that look good close‑up and stay consistent over time—quickly.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concrete list of what remains missing, uncertain, or unexplored in the paper—intended to guide future research.

- Empirical horizon: Claims of “minute-scale” generation are not substantiated beyond 30-second evaluations; scalability and coherence beyond 1–2 minutes remain untested.

- Teacher–student heterogeneity: All experiments use the same base (Wan 1.3B) as both teacher and student; it is unclear how the method behaves with heterogeneous teachers (different architectures, capacities, or training distributions) or lower-quality teachers.

- Teacher access assumptions: The approach assumes access to the teacher’s velocity/score function at arbitrary noisy states; many production models expose only sampling APIs. How to adapt reverse-KL alignment when teacher scores are unavailable?

- Sensitivity of DMD/VSD setup: No analysis of the bias/stability introduced by the “fake” score estimator (trained for 5 steps), the time-dependent loss weighting, or the choice of noise/interpolation schedule; hyperparameter sensitivity (e.g., number of fake-score training steps, update frequency) is not reported.

- Window length and stride: The “sliding-window” alignment appears to use non-overlapping chunks indexed by k; the impact of true sliding stride, overlap, and window length L (relative to teacher horizon) on boundary artifacts and global coherence is not evaluated.

- Boundary artifacts: Potential stitching artifacts at window boundaries (and their propagation over long horizons) are not measured or mitigated.

- FM head at inference: DM-only inference discards the FM head; the sufficiency of encoder-level long-context representations for very long narratives (multi-shot, multi-scene, camera cuts) is not validated and may degrade with sequence length.

- Diversity vs. mode-seeking: Reverse-KL is known to be mode-seeking; diversity, novelty, and prompt coverage metrics are missing. Does alignment to the teacher collapse variety or induce repetitive patterns over long horizons?

- Teacher bias propagation: Aligning to a frozen short-video teacher may inherit the teacher’s aesthetic/semantic biases or domain gaps; no bias/fairness audit or mitigation is presented.

- Rare-event generalization: Behavior on prompts requiring events, motions, or causal structures absent from the teacher’s distribution (e.g., uncommon domains or complex narratives) is not studied.

- Compute and memory footprint: The long-context encoder uses full-range attention; memory, throughput, latency, and hardware requirements for DM few-step sampling on minute-scale sequences are not quantified. NFE figures in Table 1 are unclear and incomplete.

- Speed–quality trade-offs: Few-step DM inference is claimed “fast” but lacks rigorous comparisons to diffusion and AR baselines across varying step counts, sequence lengths, and quality metrics.

- Dataset transparency: The composition, size, domain coverage, and preprocessing of the long-video corpus are not described; reproducibility is limited without release of data specs, code, weights, and hyperparameters.

- Evaluation breadth: Reliance on VBench-Long and Gemini-3-Pro lacks human preference studies and may be biased; no cross-benchmark validation or task-specific metrics (e.g., identity persistence under occlusion or camera cuts).

- Multi-modality: Audio generation/synchronization and multi-sensory conditioning are not addressed; applicability to audio-visual long video remains open.

- Integration with AR: The paper claims orthogonality to autoregressive methods but provides no experiments on hybrid designs (e.g., DM head for local realism + AR for streaming) or mitigation of AR drift via teacher alignment.

- Streaming and online generation: Real-time constraints (latency, memory reuse, state updates) for continuous streaming generation using the DM head are not explored.

- Multi-shot narratives: Handling shot transitions, scene graph consistency, and inter-shot memory (e.g., character reappearance across cuts) is not evaluated; current prompts may favor single-shot scenarios.

- Theoretical guarantees: There is no formal analysis of convergence, stability, or representational interference when jointly updating a shared encoder with mean-seeking (FM) and mode-seeking (DM) objectives.

- Loss balancing: No ablation on λ_seg (teacher alignment weight), dynamic weighting schedules, or curriculum strategies to balance global coherence and local realism over training.

- Alternative divergences: Forward-KL, JS divergence, contrastive losses, or reward-based distillation (e.g., RFD) are not compared; the design choice of reverse-KL is left untested against alternatives for diversity/coherence trade-offs.

- On-policy rollout training: The impact of rollout length, error accumulation, and curriculum for on-policy window sampling during DM training is not examined; methods to stabilize long rollouts during training remain open.

- VAE factors: Latent VAE compression artifacts and their accumulation over long sequences are not analyzed; end-to-end impacts on texture detail and flicker are unquantified.

- Control and editing: Fine-grained controls (camera trajectories, motion styles, identity locks) and long-form editing tasks are absent; how to inject controls without harming long-range coherence is unknown.

- Teacher query cost: The computational overhead of teacher velocity queries on many windows per step is not reported; strategies to amortize or cache teacher evaluations are not discussed.

- Safety and content constraints: Negative prompts, safety filters, and content moderation for long-form generation are not addressed beyond a generic impact statement; risk assessment for misuse is missing.

Practical Applications

The paper introduces a decoupled training and inference paradigm for long video generation that combines: (1) a mean-seeking Flow Matching (FM) head trained on scarce long videos to learn minute-scale narrative coherence, and (2) a mode-seeking Distribution Matching (DM) head trained via reverse-KL sliding-window alignment to a frozen expert short-video teacher to preserve local fidelity. At inference, only the DM head is used, enabling few-step, fast generation of coherent, minute-scale videos with sharp local detail.
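The shared-encoder, two-head layout described above can be pictured with a very small NumPy sketch. Everything here is hypothetical: the sizes, the random weights, and the single-layer "encoder" are stand-ins chosen for brevity and say nothing about the actual architecture beyond its overall shape.

```python
import numpy as np

rng = np.random.default_rng(0)
dim, hidden = 8, 16   # hypothetical toy sizes

# Hypothetical parameters: one shared encoder, two lightweight heads.
W_enc = rng.normal(scale=0.1, size=(dim, hidden))
W_fm = rng.normal(scale=0.1, size=(hidden, dim))   # Flow Matching head
W_dm = rng.normal(scale=0.1, size=(hidden, dim))   # Distribution Matching head

def encode(tokens):
    # Stand-in for the long-context encoder: every frame sees a summary of all frames.
    features = np.tanh(tokens @ W_enc)
    return features + features.mean(axis=0, keepdims=True)

def forward(tokens, head):
    shared = encode(tokens)
    weights = {"fm": W_fm, "dm": W_dm}[head]
    return shared @ weights

video_tokens = rng.normal(size=(120, dim))   # 120 frame tokens of a long video
fm_out = forward(video_tokens, "fm")   # training: compared with the flow-matching target
dm_out = forward(video_tokens, "dm")   # training: aligned to the teacher; inference: only this head
print(fm_out.shape, dm_out.shape)
```

Both heads read the same encoder features, so long-range structure learned through the FM objective remains available when only the DM head is run at inference.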

Below are the practical, real-world applications that follow from these findings and methods.

Immediate Applications

- Media/Entertainment – previz, animatics, and long-form drafts

- Use cases: rapid script-to-animatic generation; previsualization of minute-long scenes; proof-of-concept cuts that preserve character and scene consistency.

- Tools/products/workflows: “Previs Mode” in DCC tools (e.g., plugins for After Effects, Blender, DaVinci Resolve) that extends storyboard panels into coherent multi-minute sequences; batch generation of alternative scene beats for directors.

- Assumptions/dependencies: access to a high-fidelity short-video teacher (licensed or in-house); small but representative long-video corpus for SFT; compute for training; rights management for character likenesses.

- Video editing – “Extend Clip” and “Connect Shots” features

- Use cases: extend 5–10s clips to 30–60s while preserving subject identity, style, and background continuity; bridge two clips with a coherent transitional scene.

- Tools/products/workflows: timeline operators in NLEs that take an existing clip and “Extend with Coherent Fill”; “Shot Connector” that generates an intermediate minute bridging shots.

- Assumptions/dependencies: embedding the DM-head few-step sampler for low latency; editor integration for round-tripping and frame-accurate alignment; content provenance/watermarking.

- Advertising and Marketing – long-form, variant-rich content

- Use cases: generate minute-long ad variations with consistent brand assets and motion; localized narrative variants (A/B testing) with stable visuals.

- Tools/products/workflows: campaign generation dashboards that lock brand identity and style via teacher priors while altering story arcs learned by FM head.

- Assumptions/dependencies: brand-safe teacher or style-lock; QA for on-brand outputs; licensing for teacher model; compliance review.

- Game Development – cutscenes, trailers, and in-engine cinematics

- Use cases: generate coherent, minute-scale cutscenes with consistent characters and environments; iterate quickly on story beats.

- Tools/products/workflows: Unreal/Unity plug-ins that ingest prompts/story outlines and output cutscenes; pipeline to convert generated shots into editable sequences.

- Assumptions/dependencies: domain-adapted teacher for game art style; asset/licensing constraints; integration with engine timelines and shot control.

- VFX and B‑roll – consistent background plates and long-range continuity

- Use cases: generate multi-minute background plates (streetscapes, landscapes) with consistent lighting and camera motion for compositing.

- Tools/products/workflows: “B‑roll generator” that seeds with reference frames, enforces local realism via teacher, and uses FM head for long-range trajectory.

- Assumptions/dependencies: shot matching and camera path constraints; color pipeline consistency; legal review for synthetic footage use.

- Social & Creator Tools – everyday long-form content helpers

- Use cases: extend short clips into vlogs; “story mode” that connects multiple phone clips into a coherent mini-film; identity-consistent highlight reels.

- Tools/products/workflows: mobile app feature “Auto-Extend” or “Story Builder”; fast preview using few-step DM inference; on-device or edge deployment for near real-time.

- Assumptions/dependencies: efficient model variants for mobile/edge; safety filters; user consent for identity persistence.

- Education & E‑learning – illustrative sequences with continuity

- Use cases: produce consistent multi-minute instructional videos (e.g., lab procedures, historical scenes) from text prompts and a few example clips.

- Tools/products/workflows: LMS plugins to generate lesson-aligned videos maintaining consistent subjects and settings across segments.

- Assumptions/dependencies: factual grounding (pairing with retrieval or human oversight); domain-appropriate teacher styles; usage policies for synthetic educational media.

- Research & Academia – data-efficient long-video modeling

- Use cases: replicate minute-scale generation with limited long video data; evaluate decoupled objectives on scarce datasets.

- Tools/products/workflows: open-source training recipes implementing DDT dual heads and sliding-window DMD; benchmarks using VBench-Long variants.

- Assumptions/dependencies: availability of a teacher model that exposes velocity/score queries; small curated long-video sets; compute for fine-tuning.

- Simulation Data Augmentation – perception and RL pretraining

- Use cases: generate coherent long sequences for pretraining vision models or imitation learning where visual continuity is valuable.

- Tools/products/workflows: dataset pipelines that seed environments and generate multi-minute sequences with consistent dynamics and visuals.

- Assumptions/dependencies: content may not obey physics; requires careful domain filtering and labels; not suitable for safety-critical training without additional constraints.

- Cloud Rendering Services – cost and latency reduction

- Use cases: few-step, minute-scale video generation reduces inference cost and turnaround time for creative pipelines and APIs.

- Tools/products/workflows: managed APIs offering “Long-Video Fast Mode” powered by DM-only inference; autoscaling GPU services for batch variant generation.

- Assumptions/dependencies: GPU memory optimization for long contexts; request-level safety checks; SLA design for multi-minute jobs.

Long-Term Applications

- Full-length episodic content generation (multi-shot, multi-scene)

- Use cases: generate series-length narratives with consistent characters and evolving arcs across scenes/episodes.

- Tools/products/workflows: integration with multi-shot controllers, shot lists, and scene graphs; retrieval-based memory across scenes; cross-episode continuity tooling.

- Assumptions/dependencies: larger and more diverse long-video corpora; robust controllability (script, shot framing, dialog); rights and union regulations.

- Real-time, interactive story engines for games and XR

- Use cases: on-the-fly generation of coherent cutscenes and transitions based on player choices, maintaining world and character continuity.

- Tools/products/workflows: streaming DM inference with chunked generation; memory modules (retrieval/routers) for persistent context; low-latency schedulers.

- Assumptions/dependencies: tighter latency budgets; highly optimized models; safety controls for user-generated prompts; stable APIs for engines.

- Digital twins and autonomous systems training videos

- Use cases: long, coherent videos to simulate environments for autonomy perception debugging and operator training.

- Tools/products/workflows: pipeline coupling video gen with physics-aware constraints and sensor models; scenario libraries with long-horizon variations.

- Assumptions/dependencies: physics fidelity and sensor realism upgrades; rigorous validation; domain-adapted teacher and long-video datasets.

- Healthcare training and patient education

- Use cases: minute-scale procedural demonstrations (e.g., surgical steps) with consistent instruments, environments, and pacing.

- Tools/products/workflows: co-pilots for medical educators to assemble compliant, consistent training videos; reviewer-in-the-loop pipelines.

- Assumptions/dependencies: strict clinical validation; domain-specific teachers; regulatory compliance and provenance tracking; bias mitigation.

- Professional-grade script-to-video with semantic controls

- Use cases: from script and shot list to controlled, consistent videos with editable beats, camera moves, and identity locks over minutes.

- Tools/products/workflows: scene graphs and semantic constraints fed into FM head for global planning; local style and texture enforced via teacher-aligned DM.

- Assumptions/dependencies: advanced control interfaces; structured script parsers; richer long-range supervision; human-in-the-loop editorial tools.

- Personalized virtual presenters and long-form avatars

- Use cases: lectures, broadcasts, and streams with consistent identity, style, and behavior over long durations.

- Tools/products/workflows: avatar studios that learn identity from licensed data; live or scheduled long-form content generation.

- Assumptions/dependencies: explicit consent and licensing for likeness; robust guardrails; speech-to-video synchronization and audio integration.

- Multimodal audio-visual long-form generation

- Use cases: synchronized, coherent audio+video narratives (music videos, documentaries) with minute-scale consistency.

- Tools/products/workflows: dual-head decoupling extended to audio (audio teacher for local fidelity; long-range audio-visual FM for narrative); joint inference schedulers.

- Assumptions/dependencies: high-quality audio teachers; aligned audio-video long datasets; cross-modal coherence objectives.

- On-device or edge long-video generation

- Use cases: offline travel vlogs, in-camera “story mode,” or AR glasses generating contextual long clips.

- Tools/products/workflows: distilled, quantized models; sparsity and routing to fit memory/compute limits; progressive generation.

- Assumptions/dependencies: further efficiency gains (sparsity, token reduction); hardware acceleration; power constraints.

- Governance, provenance, and policy frameworks

- Use cases: standards for watermarking/provenance on long-form synthetic videos; auditing teacher-student pipelines and data sources.

- Tools/products/workflows: integrated provenance metadata in generation workflows; audit logs connecting teacher queries and long-video SFT data.

- Assumptions/dependencies: cross-industry standards; regulatory adoption; secure logging and privacy-preserving auditing.

- Generalized decoupled training in other domains

- Use cases: apply “mean-seeking global, mode-seeking local” decoupling to other sequential generative tasks (e.g., long-form audio, robotics policy rollouts).

- Tools/products/workflows: dual-head architectures for global planning vs. local execution modules; teacher alignment on local windows for high-fidelity behaviors.

- Assumptions/dependencies: suitable short-context teachers and long-horizon datasets in target domains; adapted loss formulations and evaluation suites.

Notes on feasibility and dependencies across applications:

- Teacher dependency: Access to a strong short-video teacher (weights or velocity/score API) is central; licensing and IP constraints may apply. Domain-adapted teachers may be needed for niche styles (medical, industrial, game art).

- Long-video data scarcity: While the method is designed for limited long-video supervision, some high-quality long clips are still required for SFT to anchor global coherence.

- Compute and engineering: Sliding-window DMD/VSD implementation and DDT dual-head training require non-trivial engineering and GPU resources; inference is few-step but long temporal contexts increase memory.

- Safety and ethics: Identity persistence, potential for deepfakes, and factuality concerns necessitate provenance, watermarking, and human oversight, especially in regulated sectors.

- Control and editing: For professional pipelines, additional control layers (scene graphs, shot constraints, retrieval memory) will improve reliability and are likely needed for large-scale deployment.

Glossary

- Autoregressive (AR) rollouts: Sequential generation that conditions each new frame on previously generated frames, often accumulating errors over long horizons. "autoregressive (AR) rollouts"

- Block-linear attention: An attention mechanism that approximates or structures attention in block-wise linear forms to improve efficiency on long sequences. "linear/block-linear attention"

- Decoupled Diffusion Transformer (DDT): An architecture with a shared long-context encoder and separate decoder heads to decouple conflicting training objectives in diffusion-based video models. "Decoupled Diffusion Transformer (Wang et al., 2025c) (DDT)"

- Distribution Matching head: A decoder head trained to align local video windows with a teacher model’s distribution to preserve short-horizon realism. "Distribution Matching head"

- DMD (Distribution Matching Distillation): A distillation framework that expresses reverse-KL gradients via differences between teacher and student scores/velocities on noisy states. "DMD (Yin et al., 2024b;a)"

- Fake score estimator: An auxiliary estimator trained on the student's predictions to approximate the student’s score/velocity for DMD-based gradients. "fake score estimator"

- Few-step long-video inference: Fast sampling that produces long videos with only a few denoising steps, enabled by distillation. "few-step long-video inference"

- Fidelity-horizon gap: The observed tradeoff where models generating longer videos lose local visual fidelity compared to short-video experts. "fidelity-horizon gap"

- FlashAttention: A highly optimized attention kernel that reduces memory and computation for large spatiotemporal contexts. "FlashAttention"

- Flow Matching head: A decoder head trained with flow-matching on long videos to learn global, minute-scale temporal coherence. "Flow Matching head"

- Flow-matching objective: A training objective that fits the model’s velocity field to a target flow defined along a deterministic noising path. "flow-matching objective"

- Generative ordinary differential equation (ODE): The differential equation whose solution (integrating backward in time) generates samples in rectified flow models. "generative ordinary differential equation (ODE)"

- Latent packing: Compressing long video history into a fixed-size latent state to maintain long-context information efficiently. "latent packing"

- Marginal velocity field: The time-dependent vector field over noisy states that defines the generative flow in rectified flow models. "marginal velocity field"

- Mean-seeking supervised finetuning (SFT): A training approach that encourages predicting conditional means under noise, used here on scarce long videos to learn global coherence. "mean-seeking supervised finetuning (SFT)"

- Mode-seeking reverse-KL divergence: Using D_KL(q‖p), with q the student distribution and p the teacher distribution, to push the student toward high-density modes of the teacher distribution, preserving sharp, realistic details. "mode-seeking reverse-KL divergence"

- Noise rescheduling: A training-free technique that modifies the diffusion noise schedule to extrapolate generation beyond trained horizons. "noise rescheduling"

- On-policy student rollouts: Samples produced by the student during training that are used to compute alignment or distillation signals. "on-policy student rollouts"

- Radial masks: Structured attention patterns that restrict interactions based on radial distance to reduce cost in long video contexts. "radial masks"

- Rectified flow parameterization: A deterministic interpolation-based formulation of diffusion where sampling follows an ODE defined by a velocity field. "rectified flow parameterization"

- Retrieval-based memories: Systems that fetch relevant past context (e.g., indexed by view or geometry) to maintain long-context consistency during generation. "Retrieval-based memories"

- RoPE (Rotary Position Embedding): A positional encoding method that rotates queries/keys in attention, facilitating extrapolation to longer contexts. "RoPE"

- Rollout-aware training: Training strategies that account for the dynamics and error accumulation of long autoregressive rollouts. "rollout-aware training"

- Sliding-window reverse-KL alignment: Matching each generated local window to a short-video teacher via reverse-KL to enforce local realism. "sliding-window reverse-KL alignment"

- State-space dynamics: Using state-space models to represent and propagate long-term temporal states efficiently in video generators. "state-space dynamics"

- Stop-gradient: An operation that prevents gradients from flowing through certain computations, used here when contrasting teacher and fake scores. "stop-gradient"

- Structured sparsity: Predefined sparse attention patterns (e.g., sliding/tiling windows or radial masks) that cut compute for long contexts. "structured sparsity"

- Teacher-to-student distillation: Transferring knowledge from a high-quality (teacher) model to a student, often via matching scores/velocities. "teacher-to-student distillation"

- Video VAE: A variational autoencoder tailored for videos, providing a latent space where diffusion/flow operates. "video VAE"

- VSD (Variational Score Distillation): A distillation technique that leverages variational principles to transfer score/velocity guidance from teacher to student. "VSD (Wang et al., 2023b)-style gradients"
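The DMD, fake score estimator, on-policy rollout, and stop-gradient entries above fit together as one update rule, sketched here on a 1-D toy problem. This is a simplified illustration under stated assumptions, not the authors' implementation: the teacher is a two-peak Gaussian mixture with a closed-form score, the student is a Gaussian with a learnable mean, and the "fake" score is computed analytically rather than by a trained network.

```python
import numpy as np

def mixture_score(x, means, var):
    # Score (gradient of log-density) of an equal-weight Gaussian mixture with shared variance.
    logits = -0.5 * (x[:, None] - means[None, :]) ** 2 / var
    logits -= logits.max(axis=1, keepdims=True)
    resp = np.exp(logits)
    resp /= resp.sum(axis=1, keepdims=True)
    return np.sum(resp * (means[None, :] - x[:, None]), axis=1) / var

rng = np.random.default_rng(0)
teacher_means = np.array([-3.0, 3.0])   # frozen teacher with two modes (toy)
data_var, noise_var = 0.25, 1.0         # illustrative variances
var_t = data_var + noise_var            # variance after adding noise

mu = 0.5   # student parameter: mean of its output distribution
for _ in range(500):
    z = rng.normal(size=2048)
    x = mu + np.sqrt(data_var) * z                             # on-policy student samples
    x_t = x + np.sqrt(noise_var) * rng.normal(size=x.shape)    # noisy states
    s_real = mixture_score(x_t, teacher_means, var_t)          # teacher score
    s_fake = -(x_t - mu) / var_t                               # student's own score (analytic here)
    # Reverse-KL gradient: the score difference is treated as a constant
    # (the stop-gradient), and d x_t / d mu = 1 for this generator.
    grad_mu = np.mean(s_fake - s_real)
    mu -= 0.1 * grad_mu

print(mu)
```

Starting slightly right of center, the student mean moves to the nearby teacher peak instead of settling at 0 between the peaks, which is the mode-seeking behavior the glossary describes.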
