Decoding as Optimisation on the Probability Simplex: From Top-K to Top-P (Nucleus) to Best-of-K Samplers

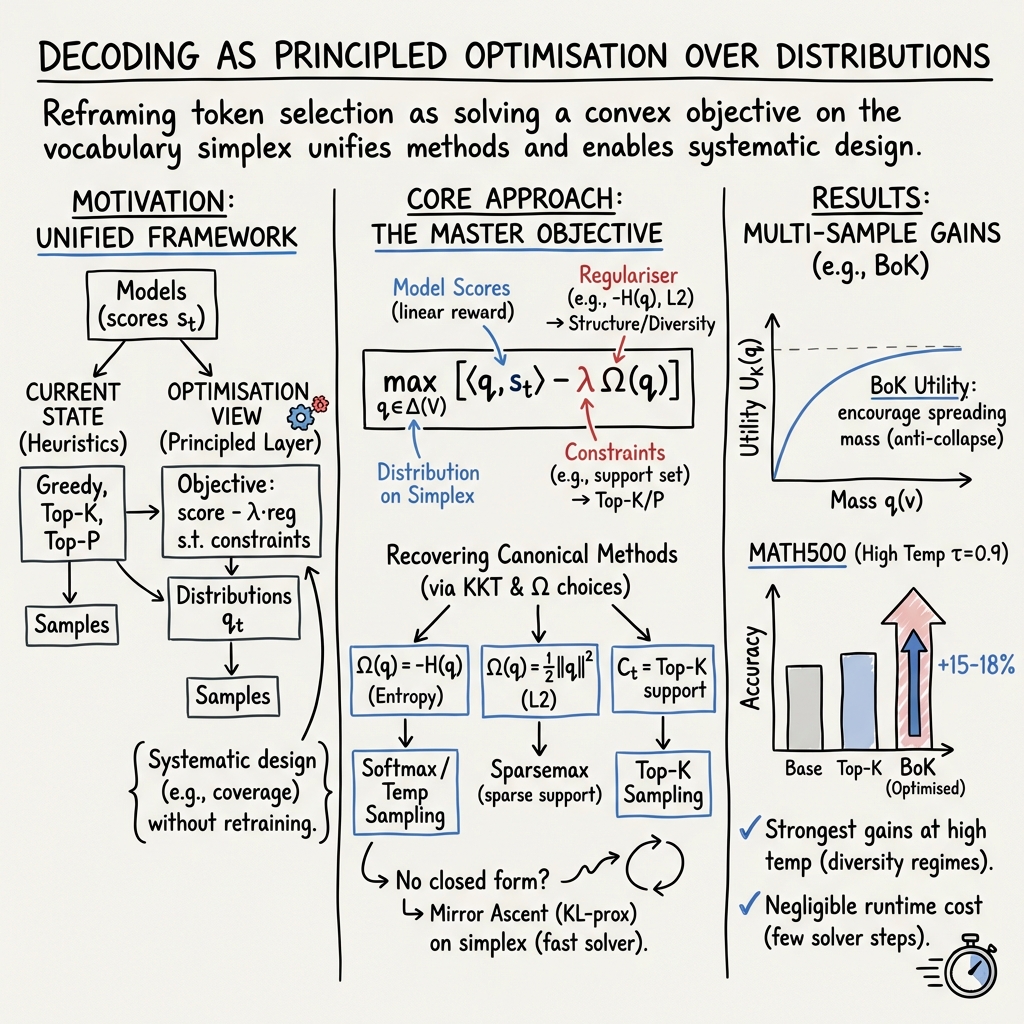

Abstract: Decoding sits between an LLM and everything we do with it, yet it is still treated as a heuristic knob-tuning exercise. We argue decoding should be understood as a principled optimisation layer: at each token, we solve a regularised problem over the probability simplex that trades off model score against structural preferences and constraints. This single template recovers greedy decoding, Softmax sampling, Top-K, Top-P, and Sparsemax-style sparsity as special cases, and explains their common structure through optimality conditions. More importantly, the framework makes it easy to invent new decoders without folklore. We demonstrate this by designing Best-of-K (BoK), a KL-anchored coverage objective aimed at multi-sample pipelines (self-consistency, reranking, verifier selection). BoK targets the probability of covering good alternatives within a fixed K-sample budget and improves empirical performance. We show that such samples can improve accuracy by, for example, +18.6% for Qwen2.5-Math-7B on MATH500 at high sampling temperatures.

Explain it Like I'm 14

What this paper is about (simple overview)

When an LLM writes the next word, it has to “decode” from many choices. People often treat decoding like turning knobs: Top-K, Top-P (nucleus), temperature, greedy, and so on. This paper says decoding isn’t a bag of tricks; it’s really an optimisation problem. At each step, the model should first pick a probability distribution over possible next tokens (like a pie chart that sums to 1), by balancing two things:

- how good each token looks to the model, and

- extra preferences or rules (like “be diverse,” “be sparse,” or “stick to a shortlist”).

With this view, common decoding methods all fall out of the same “master” optimisation recipe.
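
One plausible way to write this master recipe is sketched below. It uses symbols that appear elsewhere on this page ($s_t$ for the model's scores at step $t$, $q_t$ for the chosen distribution, $\Omega$ for the regulariser, $\lambda$ for its strength, $C_t$ for the set of allowed tokens). The paper's exact notation and sign conventions may differ, so treat this as an assumed form rather than a quotation.

```latex
q_t^\star \;=\; \arg\max_{q \in \Delta(C_t)} \; \langle q, s_t \rangle \;-\; \lambda\, \Omega(q),
\qquad
\Delta(C_t) \;=\; \Big\{ q \ge 0 \;:\; \sum_{i \in C_t} q_i = 1,\;\; q_i = 0 \ \text{for}\ i \notin C_t \Big\}.
```

The first term rewards putting mass on high-scoring tokens; the second term and the feasible set $\Delta(C_t)$ carry the extra preferences and rules.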

The authors also design a new decoder called Best-of-K (BoK) for situations where you generate K samples and then pick the best. BoK tries to make it more likely that at least one of those K samples is a good one.

Key goals and questions

The paper aims to:

- Show that many decoding strategies (greedy, temperature/softmax sampling, Top-K, Top-P, Sparsemax) are solutions to one shared optimisation problem.

- Provide a simple “master key” so you can design new decoders by changing the regulariser (the rule that shapes the distribution).

- Build and test a new decoder, Best-of-K (BoK), that improves the chance of getting at least one strong answer when you draw multiple samples.

In easy terms: Can we stop guessing and treating decoding like a hack, and instead treat it as a clear math problem we can solve and adapt?

How they approach the problem (methods, in everyday language)

The authors take a “distribution-first” view:

- Instead of immediately picking the next word, the decoder first chooses a probability distribution over all possible next words (a pie chart that adds to 1).

- It picks this distribution by solving an optimisation problem: “put more pie on high-scoring words, but also follow certain rules.”

- These rules are set by a regulariser. Think of a regulariser like a gentle nudge that shapes the pie:

  - If the regulariser prefers randomness, it spreads the pie out (diversity).

  - If it prefers sparsity, it lets some slices go to zero (ignoring unhelpful words).

  - If it prefers staying close to some reference distribution, it stops the pie from shifting too far.
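
The "stay close to a reference" rule has a well-known closed form when closeness is measured by KL divergence: maximising ⟨q, s⟩ − λ·KL(q ‖ r) over the simplex gives q_i ∝ r_i·exp(s_i/λ). The sketch below illustrates that fact in plain Python; the function name `kl_anchored` and the toy numbers are illustrative assumptions, not taken from the paper.

```python
import math

def kl_anchored(scores, ref, lam):
    """Closed-form maximiser of <q, s> - lam * KL(q || ref) over the simplex.

    Assumes every entry of `ref` is strictly positive and lam > 0.
    """
    # Work in log-space and subtract the max for numerical stability.
    logits = [math.log(r) + s / lam for s, r in zip(scores, ref)]
    m = max(logits)
    weights = [math.exp(v - m) for v in logits]
    z = sum(weights)
    return [w / z for w in weights]

scores = [2.0, 1.0, 0.0]   # toy model scores (hypothetical)
ref = [0.2, 0.3, 0.5]      # toy reference distribution (hypothetical)

loose = kl_anchored(scores, ref, lam=0.1)    # weak anchor: follows the scores
tight = kl_anchored(scores, ref, lam=100.0)  # strong anchor: stays near ref
```

With a small λ almost all the pie moves to the top-scoring token; with a large λ the result barely departs from the reference.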

They show that different regularisers and constraints perfectly recreate well-known decoders:

- Greedy: no regulariser, all pie goes to the top-scoring token.

- Softmax/temperature sampling: use a negative-entropy regulariser; the temperature controls how spread out the pie is.

- Top-K: add a constraint that only the K best-scoring tokens are allowed; then softmax inside that shortlist.

- Top-P (nucleus): choose the smallest set of tokens whose model probabilities add up to at least P; then softmax within that set.

- Sparsemax: use a quadratic regulariser that naturally sets some probabilities exactly to zero (no need for manual cutoffs).
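
The first four decoders in this list can be sketched in a few lines of plain Python, each returning a full distribution over the vocabulary rather than a sampled token. This is a minimal illustration, not the paper's code: the function names are hypothetical, and Top-P here builds its nucleus from the softmax at the same temperature `tau`, which is one common convention.

```python
import math

def softmax(scores, tau=1.0):
    """Temperature softmax: the entropy-regularised solution."""
    m = max(scores)
    w = [math.exp((s - m) / tau) for s in scores]
    z = sum(w)
    return [x / z for x in w]

def greedy(scores):
    """All mass on the top-scoring token (first index wins ties)."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return [1.0 if i == best else 0.0 for i in range(len(scores))]

def softmax_on_support(scores, support, tau=1.0):
    """Softmax restricted to `support`; zero probability elsewhere."""
    sub = softmax([scores[i] for i in support], tau)
    q = [0.0] * len(scores)
    for i, p in zip(support, sub):
        q[i] = p
    return q

def top_k(scores, k, tau=1.0):
    """Keep the k highest-scoring tokens, then softmax inside that shortlist."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return softmax_on_support(scores, order[:k], tau)

def top_p(scores, p, tau=1.0):
    """Keep the smallest set whose probability mass reaches p, then renormalise."""
    probs = softmax(scores, tau)
    order = sorted(range(len(scores)), key=lambda i: probs[i], reverse=True)
    support, mass = [], 0.0
    for i in order:
        support.append(i)
        mass += probs[i]
        if mass >= p:
            break
    return softmax_on_support(scores, support, tau)

scores = [3.0, 2.0, 1.0, 0.0]          # toy scores (hypothetical)
q_greedy = greedy(scores)
q_topk = top_k(scores, k=2)
q_topp = top_p(scores, p=0.8)          # nucleus is the top two tokens here
```

On these toy scores the top two tokens already hold about 88% of the softmax mass, so Top-P with P = 0.8 and Top-K with K = 2 select the same support.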

Behind the scenes, they use standard optimality conditions (like rules that decide which tokens get nonzero probability and which get zero) to derive these decoders from one shared equation.
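
As a worked example of how such optimality conditions produce a decoder, here is the standard derivation of softmax from the entropy-regularised problem. The multiplier names ($\nu$ for the sum-to-one constraint, $\mu_i$ for non-negativity) are notation chosen for this sketch and may not match the paper.

```latex
\begin{align}
&\max_{q}\; \sum_i q_i s_i \;-\; \lambda \sum_i q_i \log q_i
  \quad \text{s.t.}\quad \sum_i q_i = 1,\;\; q_i \ge 0 \\
&\mathcal{L}(q,\nu,\mu) = \sum_i q_i s_i - \lambda \sum_i q_i \log q_i
  + \nu\Big(1 - \sum_i q_i\Big) + \sum_i \mu_i q_i \\
&\frac{\partial \mathcal{L}}{\partial q_i}
  = s_i - \lambda(\log q_i + 1) - \nu + \mu_i = 0,
  \qquad \mu_i \ge 0,\quad \mu_i q_i = 0 \\
&q_i > 0 \;\Rightarrow\; \mu_i = 0
  \;\Rightarrow\; q_i = \exp\!\Big(\tfrac{s_i - \nu}{\lambda} - 1\Big) \\
&\sum_i q_i = 1 \;\Rightarrow\;
  q_i^\star = \frac{\exp(s_i/\lambda)}{\sum_j \exp(s_j/\lambda)}
\end{align}
```

Because $-\log q_i$ grows without bound as $q_i \to 0$, the stationarity condition cannot hold at $q_i = 0$, so every token stays active and $\lambda$ plays the role of the temperature. Regularisers without this barrier (such as a quadratic penalty) allow $\mu_i > 0$ and hence exact zeros.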

Best-of-K (BoK)

- Many real systems draw K samples and then pick the best by some judge (self-consistency, reranking, or a checker).

- BoK sets up an objective to maximise “coverage”: make it likely that at least one of the K samples hits a good answer.

- It also adds a “KL anchoring” term, which means “don’t drift too far from the model’s original probabilities,” keeping the method stable.

- They solve the BoK objective with a few fast “mirror ascent” steps at each token (think of it as quick nudges that reshape the pie chart), then sample K times from the adjusted distribution.
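
To make the "few mirror-ascent steps per token" idea concrete, here is a toy sketch. It does not reproduce the paper's BoK objective. As a stand-in it uses an assumed coverage proxy, Σ_i p_i·(1 − (1 − q_i)^K), minus β·KL(q ‖ p), where p is the model's own distribution. The function name `bok_sketch`, the proxy, and the values of `beta`, `eta` and `steps` are all illustrative assumptions.

```python
import math

def bok_sketch(p, K, beta=1.0, eta=0.5, steps=5):
    """Entropic mirror ascent on an ASSUMED KL-anchored coverage objective.

    Objective (illustrative only, not the paper's):
        J(q) = sum_i p_i * (1 - (1 - q_i)**K)  -  beta * KL(q || p)
    Assumes every p_i > 0 so the logarithm is defined.
    """
    q = list(p)  # start at the model distribution, i.e. at the KL anchor
    for _ in range(steps):
        # Gradient of J with respect to each q_i.
        grad = [
            pi * K * (1.0 - qi) ** (K - 1) - beta * (math.log(qi / pi) + 1.0)
            for pi, qi in zip(p, q)
        ]
        # Mirror step with the entropy mirror map = multiplicative update,
        # followed by renormalisation back onto the simplex.
        m = max(grad)
        w = [qi * math.exp(eta * (gi - m)) for qi, gi in zip(q, grad)]
        z = sum(w)
        q = [x / z for x in w]
    return q

p = [0.7, 0.2, 0.1]          # toy model distribution (hypothetical)
q = bok_sketch(p, K=4)       # adjusted distribution to sample K times from
```

On this toy input the update moves some mass from the dominant token to the alternatives, because with K draws the dominant token is very likely to be covered anyway, while the KL term limits how far the distribution drifts from p.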

Main results and why they matter

What they found:

- A single optimisation framework explains popular decoding methods. This turns scattered tricks into one clear, consistent picture.

- Using that framework, they build BoK, which improves multi-sample generation without extra training or external tools.

Key improvements with BoK (on two 7B Qwen models and three benchmarks: MATH500, GPQA-diamond, HumanEval):

- At high temperature (more randomness), BoK shines because it guides diversity toward useful alternatives.

- Example: On the math-specialised Qwen2.5-Math-7B at high temperature (τ = 0.9) on MATH500:

  - Base: 53.0%

  - Top-K: 56.2%

  - BoK: 71.6% (+18.6 points over Base; +15.4 points over Top-K)

- Similar gains at high temperature on:

  - GPQA: +6.06%

  - HumanEval (coding): +14.64%

- Robustness: BoK works well across different settings (the trade-off knobs for coverage vs. staying close to the model), so it’s not fragile.

- Speed: Just a few optimisation steps per token. With 5 steps, it adds ~1 second on MATH500 (16.88s vs. 15.84s). Even 2 steps already give a big jump (64.4% → 69.6%) with almost no extra time.

Why this matters:

- Better results with the same model and no extra training.

- Especially helpful when you already sample multiple candidates and want to increase the chance of including a good one.
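
A quick calculation shows why coverage matters in multi-sample pipelines. Under the simplifying assumption that the K samples are independent and each is good with the same probability, the chance that at least one is good is 1 − (1 − p)^K. The function name and the numbers below are illustrative and are not results from the paper.

```python
def coverage(p_good, k):
    """P(at least one of k independent samples is good)."""
    return 1.0 - (1.0 - p_good) ** k

# With a 20% per-sample success rate (hypothetical), 8 samples give
# 1 - 0.8**8, roughly 0.83.
eight_samples = coverage(0.2, 8)
# Raising the per-sample rate to 30% lifts that to 1 - 0.7**8, roughly 0.94.
eight_better = coverage(0.3, 8)
```

Real samples from one model are correlated, so this is an optimistic estimate rather than a guarantee.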

What this means going forward (implications)

- Decoding as design: Instead of trying random tricks, you can decide what behaviour you want (more diversity, more safety, fewer hallucinations, more stability) and encode that as an optimisation regulariser or constraint.

- A “master key” for new decoders: The same math lets you invent and analyse future decoding methods quickly and cleanly.

- Practical wins: BoK shows you can get meaningful improvements just by changing how you decode, not how you train.

- Broader impact: This approach can guide decoding for many goals—better reliability in Q&A, safer outputs, or smarter exploration in creative tasks—by mathematically shaping the distribution the model samples from.

Quick mental map (one framework, many decoders)

- Greedy = “pick the top slice” (no regulariser).

- Softmax/temperature = “spread the pie by entropy” (more temperature → more spread).

- Top-K = “shortlist the top K, then softmax there.”

- Top-P (nucleus) = “pick the smallest set that covers at least a fraction P of the probability mass, then softmax there.”

- Sparsemax = “let weak options go to exactly zero automatically.”

- BoK = “shape the distribution to cover good alternatives across K samples, while staying close to the model.”
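
The Sparsemax entry can be made concrete with the standard sort-and-threshold algorithm for Euclidean projection onto the simplex. This is a minimal sketch of that well-known procedure, not code from the paper; the variable name `threshold` and the toy scores are illustrative.

```python
def sparsemax(scores):
    """Euclidean projection of `scores` onto the probability simplex.

    Finds a threshold such that sum(max(s - threshold, 0)) == 1; tokens
    whose score falls below the threshold get exactly zero probability.
    """
    z = sorted(scores, reverse=True)
    cumsum, threshold = 0.0, 0.0
    for j, zj in enumerate(z, start=1):
        cumsum += zj
        # The j-th largest score stays in the support while this holds;
        # the condition is true for a prefix of the sorted scores.
        if 1.0 + j * zj > cumsum:
            threshold = (cumsum - 1.0) / j
    return [max(s - threshold, 0.0) for s in scores]

q_sparse = sparsemax([2.0, 1.5, 0.1])   # toy scores (hypothetical)
# The weakest token receives exactly zero: q_sparse is [0.75, 0.25, 0.0]
```

Unlike Top-K or Top-P, the size of the support is not chosen by hand; it falls out of the scores themselves.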

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of what remains missing, uncertain, or unexplored in the paper. Each point is framed to be actionable for future research.

- Sequence-level alignment: The framework optimises per-token distributions q_t; it does not address how these local decisions align with sequence-level objectives (e.g., exact match, coherence, faithfulness) or how to formulate and solve a sequence-level optimisation over q_1,…,q_T.

- BoK objective specification: The exact mathematical form of the Best-of-K (BoK) “KL-anchored coverage” objective Ω_BoK, including its coverage proxy, KL anchoring term, and hyperparameters (β, λ), is not fully specified, making replication and theoretical analysis difficult.

- BoK convergence and optimality: Mirror ascent is used for BoK, but there are no convergence guarantees, step-size schedules, stopping criteria, or bounds on suboptimality; it is unclear under what conditions the solver reaches the global optimum or how approximation error scales with steps.

- Final selection rule in multi-sample pipelines: BoK is said to help “without external verifiers,” yet the paper does not specify how a final answer is selected from K samples (e.g., LM log-prob, length-normalised score, heuristic reranking) or evaluate sensitivity to that choice.

- Coverage model and sample dependence: The BoK “coverage” claim lacks a formal probabilistic model of sample dependence; there is no analysis of how intra-batch correlation among K samples affects the probability of including at least one good candidate.

- Diversity-quality trade-offs: The paper does not quantify how BoK changes diversity (e.g., distinct-n, self-BLEU) vs. correctness, nor does it characterise conditions under which increased coverage may hurt quality (e.g., longer or more error-prone samples).

- Baseline breadth: Evaluation omits strong multi-sample baselines like minimum Bayes risk (MBR) decoding, diverse beam search, DPP-based samplers, self-consistency with majority-vote, or verifier-assisted reranking; comparative performance is therefore underdetermined.

- Model and domain generality: Results are limited to Qwen 7B variants and three benchmarks (MATH500, GPQA-diamond, HumanEval); the paper does not assess generalisation across larger models, multilingual tasks, long-form generation, or knowledge-intensive domains.

- Sensitivity and ablations: Beyond brief claims of a “stable operating region,” there is no systematic sensitivity analysis for BoK across K, temperature τ, β, λ, sequence length, or prompt types, nor ablations isolating the contribution of each component.

- Computational scaling: Runtime analysis is limited and does not quantify latency/throughput trade-offs for practical serving (GPU kernels, batching, streaming, KV-cache effects), memory overhead, or how cost scales with K, sequence length, and mirror-ascent steps.

- Calibration effects: The framework assumes raw model scores s_t; it does not investigate how score miscalibration affects optimisation outcomes or whether calibration (temperature scaling, Platt scaling) improves the reliability of q_t.

- Top-P formalisation: The Top-P nucleus is defined from the model distribution p_t induced by s_t, but the interaction between this support selection and the optimisation over q_t is not analysed (e.g., consistency when q_t differs from p_t or when temperature varies).

- Sparsemax completeness: The Sparsemax section’s derivation and empirical evaluation are incomplete; there is no full KKT solution, implementation details, or comparison to Top-K/Top-P in LLM settings (including tail-risk and hallucination metrics).

- Regulariser design space: While the paper posits “decoding as regularisation,” it does not explore non-convex or composite regularisers (e.g., repetition penalties, lexical constraints, toxic-word masks, grammar constraints), nor provide recipes for encoding practical guardrails.

- Sequence-level constraints: The framework does not show how to incorporate long-range constraints (e.g., anti-repetition, discourse coherence, factual consistency) that depend on the full generated prefix, and whether convexity can be preserved.

- Temperature-λ mapping and scheduling: The mapping between λ and conventional temperature τ is noted but not operationalised for dynamic scheduling (e.g., context-adaptive λ_t), nor are guidelines provided for selecting λ across tasks and model sizes.

- Tie-handling and determinism: The treatment of ties (equal scores) is noted only in passing; the impact of tie-breaking rules on determinism, reproducibility, and downstream sequence quality is not studied.

- Training–decoding interactions: The relationship between decoding regularisers and training choices (label smoothing, entropy regularisation, RLHF) is not analysed—e.g., whether certain Ω(q) complement or conflict with learned score distributions.

- Formal guarantees for coverage gains: Claims that BoK improves the probability of “covering good alternatives” lack a formal theorem or conditions (e.g., assumptions on the quality distribution over samples) under which coverage gains translate to accuracy improvements.

- Safety and alignment constraints: The optimisation framework does not demonstrate integration with safety filters, policy constraints, or alignment objectives at decode time; empirical effects on hallucinations or harmful content are not measured.

- Length bias and scoring: The paper does not address length bias in log-prob scoring for selection among K samples or propose normalisation/penalties within the optimisation to mitigate over-preference for shorter outputs.

- Robustness across prompts and contexts: There is no analysis of how optimisation behaves under adversarial, ambiguous, or under-specified prompts, nor whether BoK exacerbates or mitigates sensitivity to prompt wording.

- Beam search unification: Although beam search is mentioned, the framework does not show how beam search (a sequence-level combinatorial method) fits into the “distribution-first” per-token optimisation paradigm or how to bridge token-level convexity with sequence-level search.
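
Since the final selection rule is listed above as unspecified, here is one common choice for illustration only: self-consistency by majority vote over the final answers extracted from the K samples. The function name and the toy answers are hypothetical, and nothing here implies that the paper uses this rule.

```python
from collections import Counter

def majority_vote(answers):
    """Return the most frequent answer among the K samples.

    Ties are resolved in favour of the answer that appeared first.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    return Counter(answers).most_common(1)[0][0]

# Final answers extracted from K = 5 sampled solutions (toy data).
picked = majority_vote(["42", "41", "42", "7", "42"])
```

A rule like this only helps if the correct answer shows up among the samples at all, which is the part a coverage-oriented decoder tries to improve.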

Glossary

- beam search: A decoding algorithm that maintains multiple candidate sequences (beams) and expands them to find high-scoring outputs. "beam search \cite{vijayakumar2018diversebeamsearchdecoding, franceschelli2024creativebeamsearchllmasajudge};"

- Best-of-K (BoK): A decoding objective designed to maximize the chance that at least one of K samples covers high-quality alternatives, often anchored by KL divergence. "We demonstrate this by designing Best-of-K (BoK), a KL-anchored coverage objective aimed at multi-sample pipelines (self-consistency, reranking, verifier selection)."

- convex optimisation: Optimisation of a convex objective over a convex feasible set, which gives well-behaved geometry: every local optimum is global, and the optimum is unique when the objective is strictly convex. "decoding is a convex optimisation problem whose geometry is determined by the choice of regulariser."

- convex penalty: A convex regularisation term added to the objective to shape solutions (e.g., induce sparsity). "Sparsity-inducing decoders arise from alternative convex penalties."

- coverage objective: An objective that explicitly targets the probability of covering good alternatives across multiple samples. "a KL-anchored coverage objective"

- degenerate distribution: A probability distribution that places all mass on a single outcome. "greedy decoding corresponds to a degenerate distribution that places all its mass on a single token"

- greedy decoding: A deterministic decoding method that selects the highest-scoring token at each step without regularisation. "Greedy decoding becomes a limiting case of an objective with no regularisation."

- KKT-style conditions: Optimality conditions (Karush–Kuhn–Tucker) for constrained optimisation that distinguish active and inactive constraints. "These KKT-style conditions act like a master key: once derived, you can plug in a choice of regulariser and immediately recover the structure of the decoder it implies."

- KL anchoring: The use of Kullback–Leibler divergence to anchor a distribution toward a reference during optimisation. "a range of choices (coverage vs. KL anchoring)"

- Lagrangian: A function formed by augmenting the objective with weighted constraints to enable unconstrained optimisation of constrained problems. "This gives us what every constrained optimisation problem eventually produces, the Lagrangian:"

- logits: Raw, unnormalized scores output by a model prior to conversion into probabilities. "In practice, these scores are the logits or log-probabilities produced by the model."

- mirror ascent: An optimisation algorithm that performs ascent steps in a dual space defined by a mirror map, useful for probability distributions. "using 5 mirror-ascent steps per token adds about 1s on MATH500 (16.88s vs. 15.84s)"

- nucleus sampling: A Top-P method that samples from the smallest set of tokens whose cumulative probability exceeds a threshold p. "Top-P (nucleus) sampling adapts the size of the active support set based on the model's confidence in the current context."

- probability simplex: The set of all probability distributions over a finite vocabulary (nonnegative components summing to one). "we solve a regularised problem over the probability simplex that trades off model score against structural preferences and constraints."

- regulariser: A term added to the optimisation objective to enforce desired properties (e.g., diversity, sparsity, stability). "different regularisers and constraints."

- reranking: A multi-sample strategy that reorders generated candidates according to an external criterion or model. "self-consistency, reranking, verifier selection"

- Shannon entropy: A measure of uncertainty of a distribution; its negative can serve as a smoothness-inducing regulariser. "Softmax sampling becomes the unique optimum of a score-maximisation problem regularised by (negative) Shannon entropy."

- simplex geometry: The geometric structure of the probability simplex that leads to active/inactive behaviour under constraints. "The simplex geometry introduces the familiar ``active vs inactive'' behaviour"

- Softmax: A transformation that converts scores into a probability distribution via exponentiation and normalization. "We thus recover the classical softmax decoder directly from our master optimisation problem."

- Sparsemax: A sparsity-inducing alternative to softmax that yields probabilities with exact zeros. "Sparsemax-style sparsity"

- sub-simplex: A lower-dimensional simplex formed by constraining support to a subset of tokens. "which is a sub-simplex of supported on "

- support constraints: Hard restrictions that force zero probability outside a specified set of tokens, shaping the feasible region. "hard support constraints restrict the feasible region itself, carving out sub-simplices before optimisation even begins."

- temperature: A scalar that scales scores before softmax to control randomness; higher temperature yields flatter distributions. "for a given temperature parameter τ"

- Top-K: A decoding method that restricts sampling to the K highest-scoring tokens. "Top-K \cite{fan2018hierarchicalneuralstorygeneration, noarov2025foundationstopkdecodinglanguage}"

- Top-P: A decoding method that restricts sampling to the smallest set of tokens whose cumulative probability exceeds p. "Top-P \cite{holtzman2020curiouscaseneuraltext, nguyen2025turningheatminpsampling}"

Practical Applications

Immediate Applications

The paper’s core contribution reframes LLM decoding as a tractable optimisation layer over the probability simplex, and introduces a practical Best-of-K (BoK) coverage objective. The following applications can be deployed today with modest engineering effort and without retraining models.

- Coverage‑aware multi‑sample decoding for reasoning tasks (sector: software, education)

- Use BoK to generate K candidates that maximise the chance of including at least one correct answer, then select via self‑consistency, reranking, or verifier signals. Empirically improves accuracy on math (MATH500), science QA (GPQA), and code (HumanEval), especially at high temperature.

- Tools/workflows: BoK sampler integrated into inference stacks (e.g., vLLM/TGI/Transformers), “generate K → BoK distribution → sample → aggregate” pipeline, with 2–5 mirror‑ascent steps per token.

- Assumptions/dependencies: Access to logits; willingness to add small inference overhead; tasks benefit most when using multi‑sample generation and higher temperatures.

- Reliability/quality “dial” via principled regularisers rather than ad‑hoc knobs (sector: software, enterprise platforms)

- Replace heuristic Top‑K/Top‑P/temperature tuning with explicit optimisation parameters (λ for entropy, quadratic penalties for sparsity, support constraints C_t). Improves predictability and reproducibility across deployments.

- Tools/products: “Decoding‑as‑Optimisation” SDK exposing regulariser/constraint presets; dashboards that visualize active sets/KKT conditions to audit how tokens receive probability mass.

- Assumptions: Engineering teams can modify decoding modules; calibration of λ per task/domain.

- Tail‑risk mitigation with Sparsemax‑style decoders (sector: safety, healthcare, finance)

- Use quadratic penalties to allow zero probabilities on low‑scoring tokens (adaptive truncation) without heuristic cutoffs, reducing nonsensical long‑tail samples for safety‑critical outputs.

- Tools/workflows: Sparsemax decoder preset, risk‑aware sampling profiles for regulated contexts (clinical advice, compliance summaries, financial notes).

- Assumptions: Some loss of diversity acceptable; domain owners define invalid/undesirable token classes and risk thresholds.

- Determinism controls and auditability for compliance (sector: policy, enterprise governance)

- Treat decoding as a convex optimisation with explicit KKT conditions to explain token selection; pair with fixed seeds and regulariser choices to meet reproducibility requirements.

- Tools/products: Output governance layer that logs objective, λ, constraints, and active set per step for audit trails; model cards that document default decoding objectives.

- Assumptions: Compliance teams accept optimisation‑based documentation; infrastructure stores per‑request decoding metadata.

- Budget‑aware generation that maximises coverage for a fixed K (sector: software, energy/efficiency)

- Given a sampling budget, BoK improves the “good candidate coverage” probability, potentially reducing the need for very large K while maintaining accuracy—saving compute/energy in batch workloads.

- Tools/workflows: Auto‑tuning K, λ, and BoK hyperparameters to meet latency/accuracy SLAs; A/B testing frameworks to confirm savings.

- Assumptions: Gains depend on task difficulty and temperature; careful tuning required in low‑temperature regimes.

- Domain‑constrained decoding via support sets C_t (sector: software, data engineering)

- Enforce grammar/JSON/schema constraints or disallow domain‑specific invalid tokens by restricting the feasible set before optimisation; maintains correctness while preserving principled sampling.

- Tools/workflows: Grammar‑aware support pruning (Top‑P/K inside schema‑valid sub‑vocabularies); integration with structured output libraries.

- Assumptions: Reliable constraint construction; performance depends on the quality of the constrained vocabulary.

- Teaching and curriculum updates in ML/NLP (sector: academia)

- Adopt the “distribution‑first” optimisation view and KKT derivations to teach decoding as convex optimisation rather than heuristics; unify greedy, Softmax, Top‑K/P, Sparsemax in one template.

- Tools: Lecture modules and assignments; libraries that expose regularisers and constraint sets for student experimentation.

- Assumptions: Minimal; applies broadly across ML courses and labs.
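
The domain-constrained decoding item above amounts to restricting the feasible set before optimising. A minimal sketch, assuming the constraint arrives as a list of allowed token indices (how that list is built from a grammar or schema is outside this example, and the names and toy vocabulary are hypothetical):

```python
import math

def constrained_softmax(scores, allowed, tau=1.0):
    """Softmax over the allowed token indices only; zero everywhere else."""
    idx = sorted(set(allowed))
    if not idx:
        raise ValueError("support set must be non-empty")
    m = max(scores[i] for i in idx)
    w = {i: math.exp((scores[i] - m) / tau) for i in idx}
    z = sum(w.values())
    return [w[i] / z if i in w else 0.0 for i in range(len(scores))]

vocab = ["{", "}", "hello", '"']     # toy vocabulary (hypothetical)
scores = [1.0, 0.5, 3.0, 1.0]        # toy model scores (hypothetical)
allowed = [0, 3]                     # pretend only "{" and '"' are schema-valid here

q_valid = constrained_softmax(scores, allowed)
```

Even though "hello" has the highest raw score, it receives zero probability because it lies outside the allowed set, and the remaining mass is split according to the scores of the valid tokens.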

Long‑Term Applications

These opportunities build on the framework’s unifying theory and BoK’s early gains but require further research, scaling, or ecosystem development.

- Default “Decoding‑Ops” layer in LLM serving stacks (sector: software, cloud AI platforms)

- Standardise decoding as an optimisation service with pluggable regularisers, constraints, and solvers (e.g., mirror ascent, active‑set methods). Become a first‑class component akin to tokenizers and attention kernels.

- Tools/products: Unified decoding API across providers; hardware‑accelerated solver kernels; policy‑controlled presets per tenant.

- Dependencies: Vendor adoption; performance engineering to keep latency flat at scale; standard benchmarks for decoding objectives.

- Task‑adaptive decoders that select objectives per context (sector: enterprise AI, agents)

- Dynamically switch regularisers (entropy vs sparsity vs KL anchoring) and support constraints based on uncertainty signals, task type, or retrieved evidence—optimising reliability/creativity trade‑offs on the fly.

- Tools/workflows: Decoding controllers informed by uncertainty calibration and retrieval quality; meta‑policies that pick objectives per prompt.

- Dependencies: Robust uncertainty estimates; policy learning; monitoring to avoid instability.

- Joint training with test‑time objectives (sector: model development)

- Train models aware of the downstream decoding objective (e.g., coverage‑aware losses, KL anchoring to a reference distribution) to harmonise train‑time and decode‑time geometry.

- Tools/products: Decoding‑aware fine‑tuning curricula; loss functions that anticipate active/inactive token conditions.

- Dependencies: Algorithmic work to keep training stable; evaluation protocols to isolate decoding vs model contributions.

- Safety and compliance certification based on decoder geometry (sector: policy/regulation)

- Establish standards where model output governance includes decoding objective specifications, active‑set guarantees (e.g., zero probability on prohibited tokens), and audit logs of KKT satisfaction.

- Tools/workflows: Certification pipelines; compliance dashboards; forensic analysis tools for incident response.

- Dependencies: Regulator buy‑in; consensus on metrics (tail‑risk, reproducibility); secure logging practices.

- Healthcare and clinical decision support with coverage‑aware generation (sector: healthcare)

- Use BoK to ensure candidate sets include clinically plausible alternatives under tight sampling budgets; pair with verifiers and medical ontologies to select safe, evidence‑based answers.

- Tools/workflows: BoK + medical verifier + ontology‑constrained support sets; uncertainty‑aware escalation to human clinicians.

- Dependencies: Clinical validation and trials; strict data governance; failure mode analyses.

- Finance research and reporting with tail‑risk controls (sector: finance)

- Apply Sparsemax/constraint‑based decoders to suppress hallucinated entities and extreme tail narratives; use BoK to maintain coverage of plausible analyses while meeting compliance requirements.

- Tools/workflows: Risk‑profiled decoder presets; auditor‑visible logs; integration with retrieval/verifiers for factuality.

- Dependencies: Domain ontologies; agreement on acceptable diversity vs conservatism; monitoring for systematic biases.

- Robotics and action decoding under constraints (sector: robotics)

- Treat language‑conditioned action selection as optimisation over a constrained action simplex; enforce safety constraints via C_t and use sparsity to avoid unsafe tails while retaining diversity for exploration.

- Tools/workflows: Decoder‑aware policy layers; simulators that evaluate active/inactive sets against safety rules.

- Dependencies: Mapping tokens to action primitives; real‑time solver performance; extensive safety testing.

- Energy‑efficient large‑scale inference through coverage‑optimised budgets (sector: energy, cloud efficiency)

- Optimise K and decoding objectives to meet accuracy targets with minimal compute, reducing energy costs for batch reasoning workloads.

- Tools/workflows: Auto‑tuners that learn budget/accuracy curves; data‑center scheduling informed by decoding efficiency.

- Dependencies: Long‑run empirical studies; integration with schedulers; cross‑model generalisation of gains.

- New research directions on regulariser families and solver design (sector: academia)

- Invent task‑specific regularisers (e.g., stability, fairness, conservatism, schema adherence) and fast solvers (active‑set, interior‑point, proximal/mirror variants) tailored to LLM inference geometry.

- Tools: Open datasets and benchmarks for decoding objectives; shared libraries for reproducible solver comparisons.

- Dependencies: Community coordination; clear evaluation protocols; reference implementations.

- End‑user controls in consumer apps that map to principled objectives (sector: daily life, product UX)

- Replace vague “creativity” sliders with explainable controls (e.g., “diversity via entropy,” “safety via sparsity,” “coverage for K candidates”), improving user trust and predictability.

- Tools/products: UX components that expose objective‑level settings; on‑device inference with lightweight solvers for mobile apps.

- Dependencies: Product education; careful defaults; ensuring setting changes do not harm safety or reliability.
