- The paper introduces a unified framework and a novel multimodal dataset that enable rigorous, tool-augmented geospatial reasoning.

- It details a methodology that integrates explicit tool calls with sequential analysis of optical, SAR, and GIS data for precise remote sensing tasks.

- Experimental results show high tool-call validity and reduced error rates, outperforming open-source baselines and matching or exceeding closed-source models, with particularly strong spectral and GIS analyses.

Context and Motivation

Recent advances in vision-language models (VLMs) and agentic frameworks have demonstrated impressive multimodal reasoning capabilities. In remote sensing and Earth observation (EO), however, these systems remain perception-centric: they are limited to descriptive tasks, with little to no structured reasoning or verifiable analytical workflows. The complexity of EO (multi-modality across optical, SAR, and multispectral imagery; spatial scale; GIS integration; and the requirement for physically meaningful, interpretable outputs) poses unique challenges that current multimodal models do not address.

"OpenEarthAgent: A Unified Framework for Tool-Augmented Geospatial Agents" (2602.17665) addresses this gap by establishing a unified agentic architecture and dataset for rigorous, multi-step, physically grounded geospatial reasoning. The framework centers on integrating explicit tool use, spatial logic, and validated reasoning traces, setting a new direction for the development and evaluation of geospatial agents.

Dataset Curation and Structure

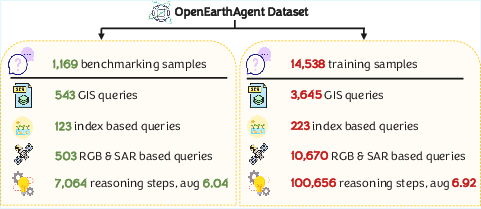

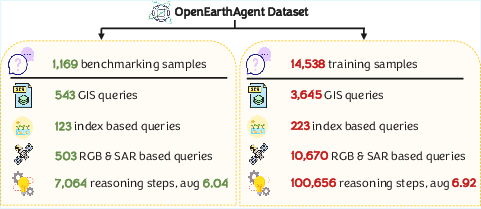

A principal contribution of this work is the construction of a comprehensive, multimodal, tool-augmented dataset tailored to geospatial reasoning. Unlike EO datasets focused on recognition or one-shot retrieval, the OpenEarthAgent corpus—comprising 14,538 training and 1,169 evaluation instances—encodes queries, imagery (optical, SAR), GIS layers, multispectral indices, and detailed reasoning trajectories.

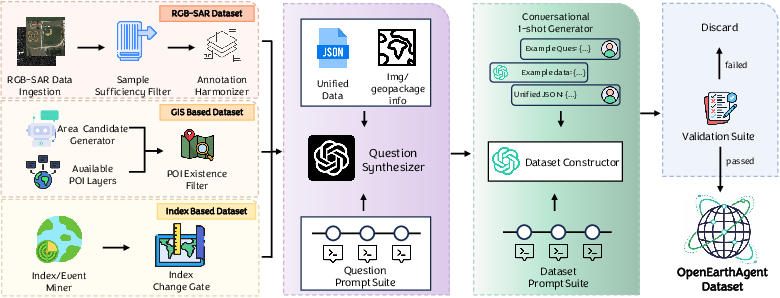

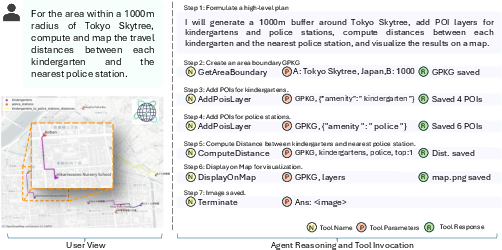

The data curation pipeline systematically filters, harmonizes, and validates heterogeneous sources. Each branch (RGB/SAR, GIS layers, index-based data) enforces domain-specific quality control; the subsequent question synthesis and reasoning-trace generation are largely automated, but include final validation via tool execution and, for the benchmark split, manual review.

Figure 1: The unified curation pipeline integrates RGB/SAR, GIS, and index-based sources, producing verified, tool-grounded multimodal reasoning samples.
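Validation via tool execution can be pictured as replaying each recorded tool call and checking that it reproduces the logged observation. The sketch below is a minimal illustration under stated assumptions: the tool table `TOOLS`, the tools `area_km2` and `count`, and the step format (`tool`, `args`, `observation`) are hypothetical, not the authors' pipeline.

```python
import math
from typing import Any, Callable

# Hypothetical tools standing in for real geospatial utilities.
TOOLS: dict[str, Callable[..., Any]] = {
    "area_km2": lambda width_km, height_km: width_km * height_km,
    "count": lambda items: len(items),
}

def replay_is_valid(trajectory: list[dict[str, Any]], tol: float = 1e-6) -> bool:
    """Re-execute each logged tool call and check it reproduces the logged observation."""
    for step in trajectory:
        tool = TOOLS.get(step["tool"])
        if tool is None:
            return False  # unknown tool: reject the sample
        try:
            result = tool(**step["args"])
        except TypeError:
            return False  # wrong argument names: reject the sample
        expected = step["observation"]
        if isinstance(expected, float):
            if not math.isclose(result, expected, abs_tol=tol):
                return False  # numeric mismatch beyond tolerance
        elif result != expected:
            return False  # exact mismatch for non-float outputs
    return True

good = [{"tool": "area_km2", "args": {"width_km": 2.0, "height_km": 1.5}, "observation": 3.0}]
bad = [{"tool": "count", "args": {"items": [1, 2]}, "observation": 3}]
print(replay_is_valid(good), replay_is_valid(bad))  # True False
```

A tolerance is used for floating-point outputs so that harmless rounding differences do not reject otherwise valid samples.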

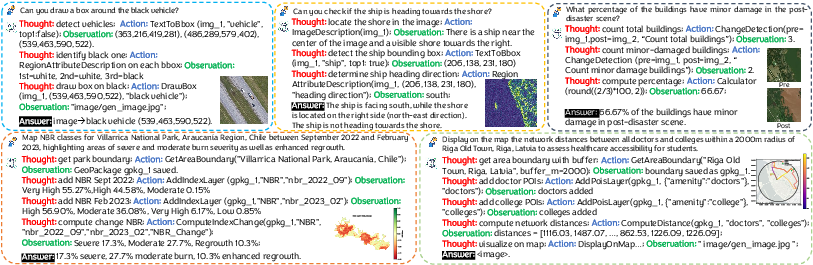

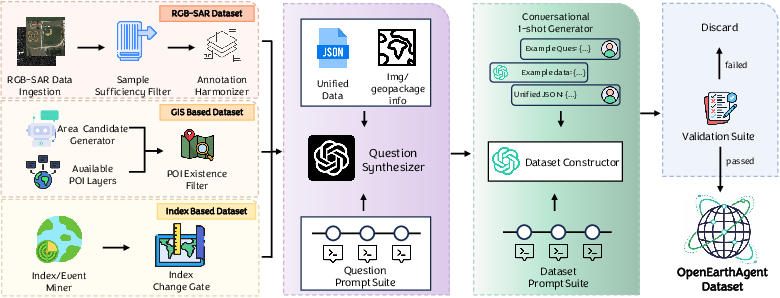

Each sample contains a natural-language query, stepwise reasoning (thought, action, observation), and explicit tool calls—spanning a broad task spectrum (object localization, index computation, buffer-based spatial analysis, change detection).
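One way to represent such a sample in code is as nested records that serialize cleanly to JSON. This is a sketch assuming a hypothetical schema: the class names `Step` and `Sample`, their fields, and the tools `detect_objects` and `count` are illustrative, not the dataset's actual format.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any

@dataclass
class Step:
    thought: str             # the agent's reasoning before acting
    tool: str                # action: name of the tool to invoke
    args: dict[str, Any]     # action: tool arguments
    observation: Any = None  # tool output returned to the agent

@dataclass
class Sample:
    query: str
    modalities: list[str]    # e.g. ["optical"], ["sar"], ["gis"]
    steps: list[Step] = field(default_factory=list)
    answer: str = ""

sample = Sample(
    query="How many ships are visible in the harbor image?",
    modalities=["optical"],
    steps=[
        Step("Locate the ships first.", "detect_objects", {"label": "ship"}, [[10, 20, 30, 40]]),
        Step("Count the detected boxes.", "count", {"boxes": [[10, 20, 30, 40]]}, 1),
    ],
    answer="1",
)
record = json.dumps(asdict(sample))  # one JSON line per sample
restored = json.loads(record)
print(restored["steps"][0]["tool"])  # detect_objects
```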

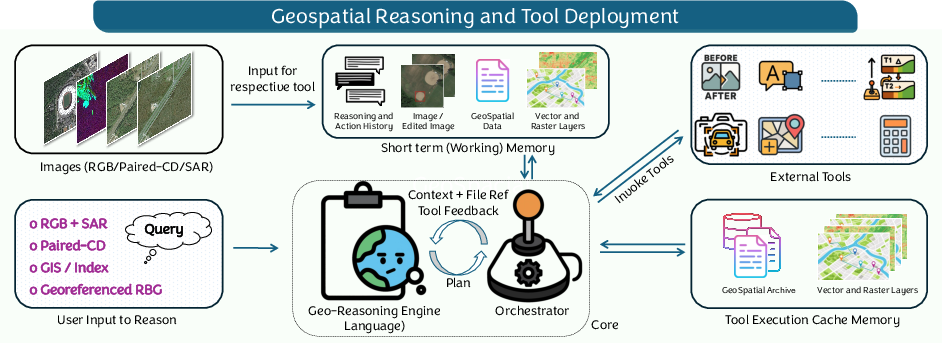

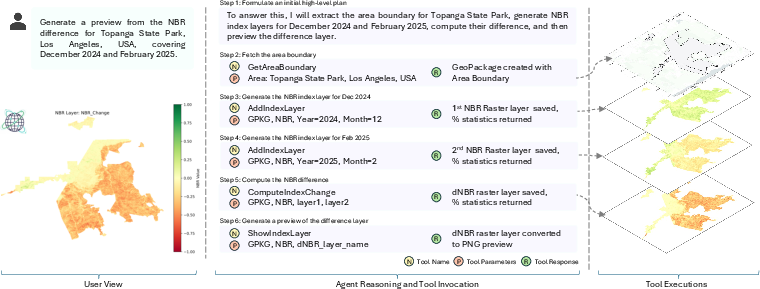

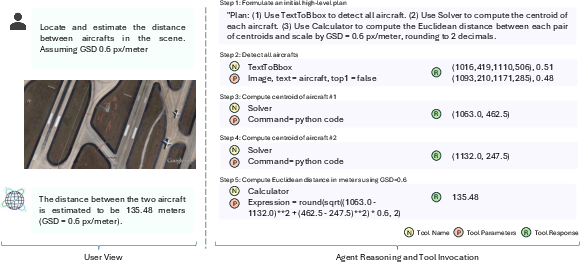

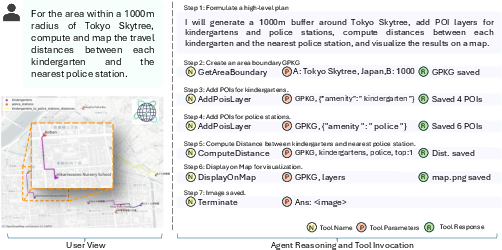

Figure 2: Representative samples illustrate the interplay of natural language, tool execution, and multimodal feedback in multi-step agentic trajectories.

The dataset’s scale, modality diversity, and depth of reasoning support comprehensive training and analysis of agentic solutions, providing a unique foundation absent in prior geospatial benchmarks.

Figure 3: Dataset statistics summarize sample/task counts, tool-call distributions, and modality coverage, illustrating diversity and complexity.

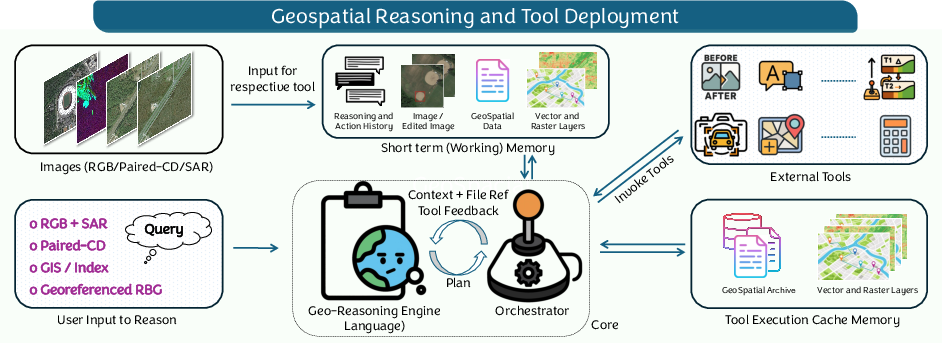

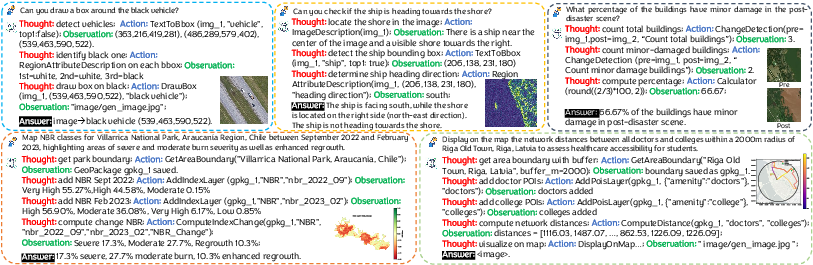

Agent Architecture and Tool Suite

OpenEarthAgent adopts a tool-augmented agentic paradigm. Unlike traditional VLMs, the agent decomposes geospatial queries into sequential perception-action steps, utilizing a standardized tool registry. Tools are abstracted with a consistent I/O schema and JSON serialization, facilitating reliable multi-domain interaction and deterministic replay for validation.
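A consistent I/O schema with JSON serialization might look like the following minimal sketch. The `REGISTRY` dictionary, `register` decorator, `call` function, and `buffer_area_m2` tool are hypothetical; the point is that every request and response is a JSON string, so identical requests can be replayed and compared byte for byte.

```python
import json
import math
from typing import Any, Callable

# Hypothetical registry: tool name -> callable plus the accepted type(s) of each argument.
REGISTRY: dict[str, dict[str, Any]] = {}

def register(name: str, params: dict[str, Any]) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that records a tool together with its declared argument types."""
    def wrap(fn: Callable[..., Any]) -> Callable[..., Any]:
        REGISTRY[name] = {"fn": fn, "params": params}
        return fn
    return wrap

@register("buffer_area_m2", {"radius_m": (int, float)})
def buffer_area_m2(radius_m: float) -> dict[str, float]:
    # Area of a circular buffer; every tool returns a JSON-serializable dict.
    return {"area_m2": math.pi * radius_m ** 2}

def call(request_json: str) -> str:
    """Run a JSON tool call {"tool": ..., "args": {...}} and return a JSON response."""
    request = json.loads(request_json)
    entry = REGISTRY.get(request.get("tool"))
    if entry is None:
        return json.dumps({"error": "unknown tool"})
    args = request.get("args", {})
    for key, expected in entry["params"].items():
        if not isinstance(args.get(key), expected):
            return json.dumps({"error": f"bad or missing argument: {key}"})
    output = entry["fn"](**args)
    return json.dumps({"tool": request["tool"], "output": output}, sort_keys=True)

response = call('{"tool": "buffer_area_m2", "args": {"radius_m": 10}}')
print(response)
```

Because errors are also returned as JSON rather than raised, a malformed call becomes an observation the agent can react to on its next step.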

The tool suite spans:

- Perceptual tools: Image-to-entity mapping (detection, counting, attribute extraction), change detection, segmentation.

- GIS computation: Geometric calculation (distance, area, neighborhood/buffer analysis), spatial aggregation, map rendering.

- Spectral analysis: NDVI/NBR/NDBI computation, index-based change, cross-modality integration.

- Georeferenced raster utilities: Alignment, bounding box extraction, metadata-driven visualization.

- Utility modules: Calculator, solver, OCR, external retrieval, and symbolic reasoning.
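The spectral indices named above are all normalized differences of two bands, (a - b) / (a + b). This sketch assumes Sentinel-2 band names (B4 red, B8 NIR, B11 SWIR1, B12 SWIR2); other sensors number their bands differently, and the `compute_index` helper and sample reflectances are illustrative.

```python
# Assumption: Sentinel-2 band names; each index is (a - b) / (a + b) for the band pair (a, b).
INDEX_BANDS: dict[str, tuple[str, str]] = {
    "NDVI": ("B8", "B4"),   # (NIR - Red) / (NIR + Red)
    "NBR": ("B8", "B12"),   # (NIR - SWIR2) / (NIR + SWIR2)
    "NDBI": ("B11", "B8"),  # (SWIR1 - NIR) / (SWIR1 + NIR)
}

def compute_index(name: str, pixel: dict[str, float]) -> float:
    """Normalized-difference index for one pixel of surface reflectance values."""
    a_band, b_band = INDEX_BANDS[name]
    a, b = pixel[a_band], pixel[b_band]
    return 0.0 if a + b == 0 else (a - b) / (a + b)  # guard against no-data pixels

# Illustrative reflectances, not measured values.
vegetated = {"B4": 0.05, "B8": 0.45, "B11": 0.20, "B12": 0.10}
built_up = {"B4": 0.20, "B8": 0.25, "B11": 0.35, "B12": 0.30}
for name in INDEX_BANDS:
    print(name, round(compute_index(name, vegetated), 3), round(compute_index(name, built_up), 3))
```

Dense vegetation pushes NDVI toward 1, while built-up surfaces tend to yield positive NDBI; keeping the band pairs in one table makes adding another normalized-difference index a one-line change.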

Operations are orchestrated by a tool controller that handles input validation, argument canonicalization, and output caching for efficiency and reproducibility.
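The controller's three duties (input validation, argument canonicalization, and output caching) can be sketched as a small class. `ToolController`, its lower-casing canonicalization rule, and the `distance_px` tool are assumptions for illustration; the paper's controller may canonicalize differently.

```python
import json
from typing import Any, Callable

class ToolController:
    """Hypothetical controller: validates, canonicalizes, and caches tool calls."""

    def __init__(self, tools: dict[str, Callable[..., Any]], required: dict[str, set[str]]):
        self.tools = tools
        self.required = required
        self.cache: dict[str, Any] = {}
        self.executions = 0  # number of real (non-cached) tool runs

    @staticmethod
    def canonical_key(name: str, args: dict[str, Any]) -> str:
        # Normalize tool/argument names and sort keys so equivalent calls share one key.
        clean = {key.strip().lower(): value for key, value in args.items()}
        return json.dumps({"tool": name.strip().lower(), "args": clean}, sort_keys=True)

    def run(self, name: str, args: dict[str, Any]) -> Any:
        key = self.canonical_key(name, args)
        canonical = json.loads(key)
        tool, clean_args = canonical["tool"], canonical["args"]
        if tool not in self.tools:
            raise KeyError(f"unknown tool: {tool}")
        missing = self.required.get(tool, set()) - set(clean_args)
        if missing:
            raise ValueError(f"missing arguments: {sorted(missing)}")
        if key not in self.cache:  # cache miss: execute and store the result
            self.executions += 1
            self.cache[key] = self.tools[tool](**clean_args)
        return self.cache[key]

ctrl = ToolController(
    tools={"distance_px": lambda x1, x2: abs(x2 - x1)},
    required={"distance_px": {"x1", "x2"}},
)
first = ctrl.run(" Distance_PX", {"X2": 30, "x1": 10})
second = ctrl.run("distance_px", {"x1": 10, "x2": 30})  # served from the cache
print(first, second, ctrl.executions)  # 20 20 1
```

Keying the cache on the canonical form, rather than the raw call, is what lets superficially different but equivalent calls reuse one result.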

Figure 4: System diagram: user queries and multimodal inputs are processed via the geospatial reasoning engine and tool orchestrator, enabling stepwise interpretable analysis.

The training objective is supervised fine-tuning on validated thought/action/observation sequences. Loss masking restricts optimization to agent-generated tool calls and excludes tool outputs, thereby separating policy learning from the environment's responses.
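Loss masking of this kind can be illustrated with per-token log-probabilities: only tokens from agent-generated spans contribute to the loss, while tool outputs are skipped. The role labels and the choice to treat both thoughts and tool calls as trainable (`TRAINABLE_ROLES`) are assumptions of this sketch, which uses plain floats rather than a deep-learning framework.

```python
import math

# Assumption: thoughts and tool calls are agent-generated and trainable; tool output is not.
TRAINABLE_ROLES = {"thought", "action"}

def masked_nll(tokens: list[tuple[str, float]]) -> float:
    """Mean negative log-likelihood over trainable tokens; other roles are masked out."""
    kept = [-logp for role, logp in tokens if role in TRAINABLE_ROLES]
    return sum(kept) / len(kept) if kept else 0.0

# Each entry: (role of the token's span, model log-probability of the gold token).
sequence = [
    ("user", math.log(0.1)),          # query: conditioning context, no loss
    ("thought", math.log(0.5)),
    ("action", math.log(0.25)),
    ("observation", math.log(0.01)),  # tool output: masked out
]
print(round(masked_nll(sequence), 4))  # 1.0397
```

The low-probability observation token does not affect the loss, which is the intended effect: the model is not trained to predict what the environment returns.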

Experimental Evaluation

Quantitative Analysis

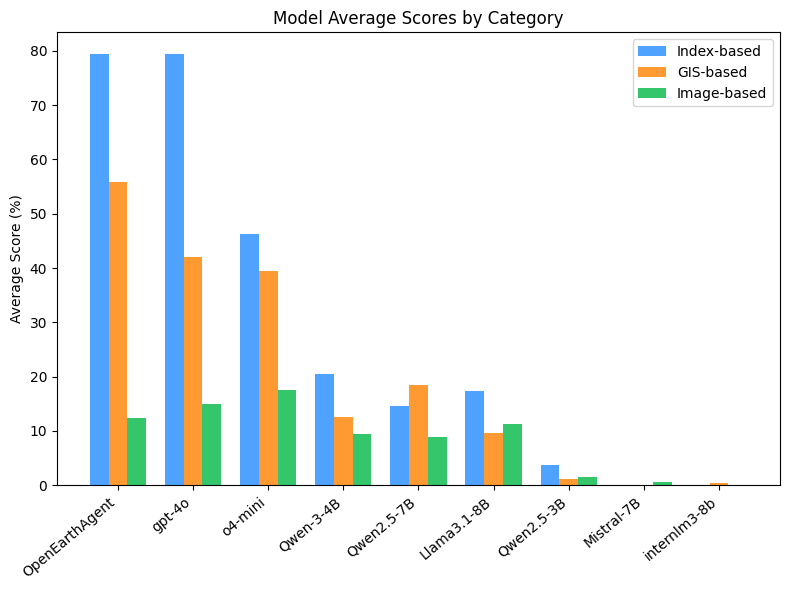

OpenEarthAgent is trained with supervised fine-tuning (SFT) on the constructed corpus, using Qwen3-4B as the backbone. All baselines (GPT-4o, o4-mini, Llama3.1, InternLM3, and Mistral0.3) are integrated into the same unified framework for a fair comparison under both step-wise and end-to-end evaluation regimes.

Metrics include syntactic/semantic tool-call correctness (instance accuracy, tool-choice, argument naming and value accuracy), functional F1 scores by category (Perception, GIS, Logic, Operations), tool-order fidelity (sequence matching), and final answer accuracy. An LLM-based judge (GPT-4o-mini) with a standardized evaluation protocol is used for human-aligned answer grading.
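Simplified versions of the step-wise metrics can be computed by aligning predicted and reference calls step by step. This sketch uses its own definitions (well-formed JSON for instance accuracy, exact tool-name match, exact argument-name sets, exact argument values, and longest-common-subsequence overlap for tool order); the paper's precise formulas may differ, and `stepwise_scores` is a hypothetical helper.

```python
import json
from typing import Any, Optional

def parse_call(raw: str) -> Optional[dict[str, Any]]:
    """Return the call if it is JSON with a string "tool" and a dict "args", else None."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if isinstance(call, dict) and isinstance(call.get("tool"), str) and isinstance(call.get("args"), dict):
        return call
    return None

def lcs_len(a: list[str], b: list[str]) -> int:
    """Length of the longest common subsequence of two tool-name sequences."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            dp[i][j] = dp[i - 1][j - 1] + 1 if x == y else max(dp[i - 1][j], dp[i][j - 1])
    return dp[-1][-1]

def stepwise_scores(pred_raw: list[str], gold: list[dict[str, Any]]) -> dict[str, float]:
    parsed = [parse_call(raw) for raw in pred_raw]
    pairs = list(zip(parsed, gold))  # step-aligned comparison; missing steps count as wrong
    n = max(len(gold), 1)
    return {
        "inst": sum(p is not None for p in parsed) / max(len(parsed), 1),
        "tool": sum(p is not None and p["tool"] == g["tool"] for p, g in pairs) / n,
        "arg_name": sum(p is not None and set(p["args"]) == set(g["args"]) for p, g in pairs) / n,
        "arg_value": sum(p is not None and p["args"] == g["args"] for p, g in pairs) / n,
        "order": lcs_len([p["tool"] for p in parsed if p is not None], [g["tool"] for g in gold]) / n,
    }

gold = [
    {"tool": "detect", "args": {"label": "ship"}},
    {"tool": "buffer", "args": {"radius_m": 500}},
    {"tool": "count", "args": {"items": "inside"}},
]
pred = [
    '{"tool": "detect", "args": {"label": "boat"}}',   # right tool, wrong value
    '{"tool": "buffer", "args": {"radius_m": 500}',    # malformed JSON (missing brace)
    '{"tool": "count", "args": {"items": "inside"}}',  # exact match
]
print(stepwise_scores(pred, gold))
```

The example shows why argument-value accuracy tends to trail the other scores: a call can use the right tool and argument names yet still carry a wrong value.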

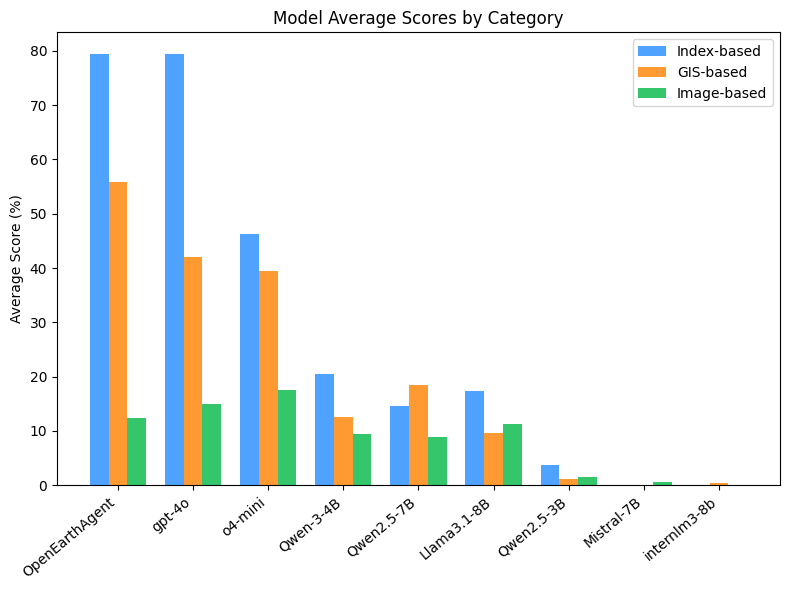

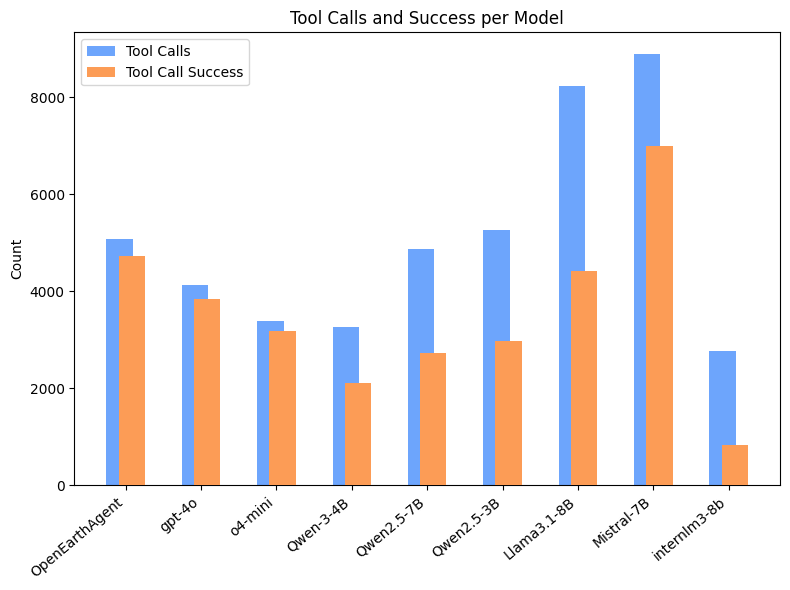

OpenEarthAgent excels in tool-call validity (instance accuracy 99.51, tool-choice accuracy 97.18, argument-name accuracy 96.08, argument-value accuracy 62.10), surpassing all open-source baselines and matching or exceeding closed-source models on multi-step trajectory metrics. It also achieves high F1 on spectral and GIS tasks, a category in which most small- and mid-sized LLMs degrade severely.

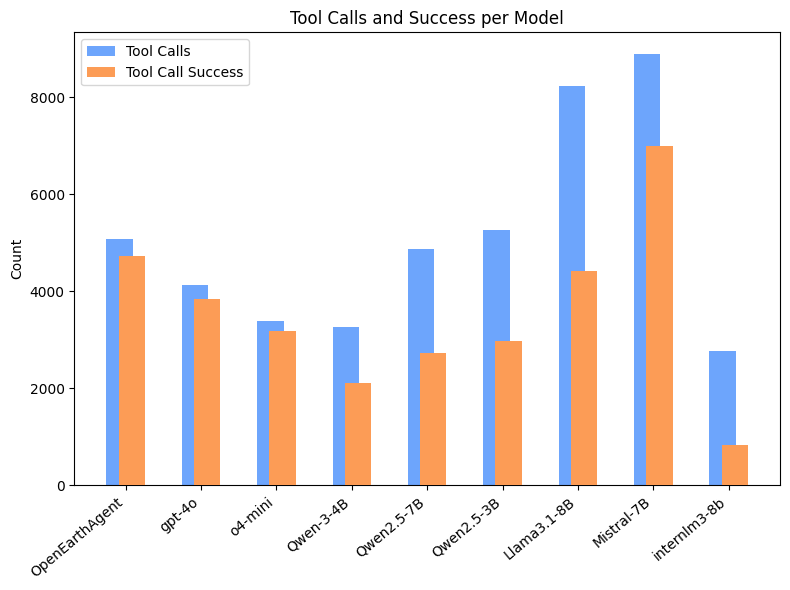

Figure 5: Tool-call performance: OpenEarthAgent and GPT-4o markedly outperform open-source competitors in both volume and success rate of well-formed, functionally correct tool invocations.

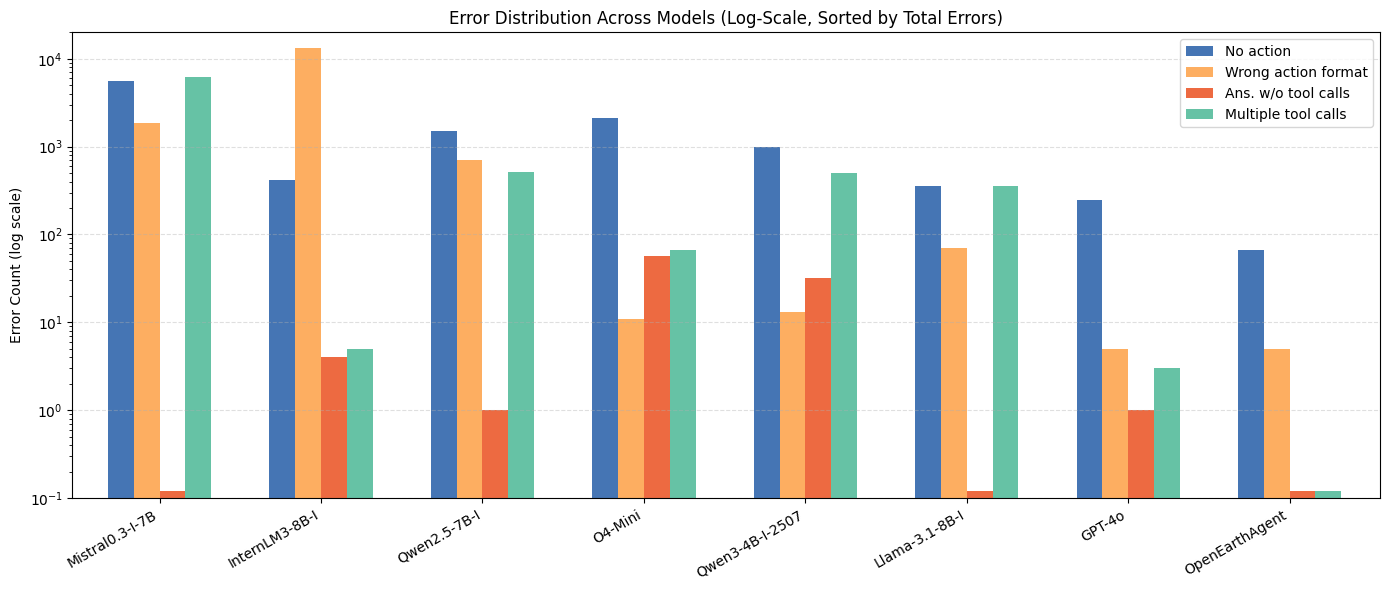

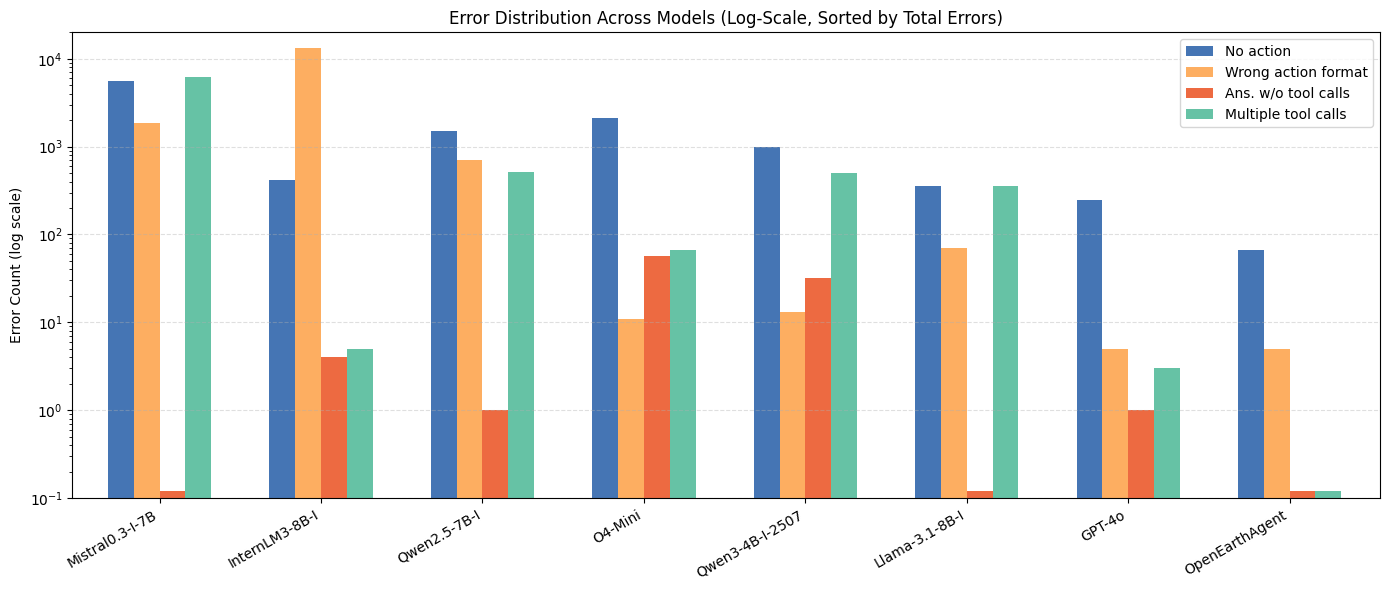

Error analysis (a log-probability distribution across failure modes; see the supplementary material) shows that OpenEarthAgent reduces both syntax and reasoning errors relative to all baselines. Notably, open-source baselines often exhibit high rates of tool-schema violations and incomplete tasks.

Figure 6: Error type frequencies: OpenEarthAgent minimizes syntax and logic breakdown versus other models, supporting robust procedural execution.
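Failure modes such as schema violations can be tallied with a simple classifier over raw model outputs. The three categories and the `SCHEMA` table below are a hypothetical, simplified taxonomy, not the one used in the paper.

```python
import json
from collections import Counter

# Hypothetical tool schema: tool name -> exact set of argument names.
SCHEMA: dict[str, set[str]] = {"ndvi": {"nir_band", "red_band"}, "distance": {"a", "b"}}

def classify(raw: str) -> str:
    """Label a raw model output as 'syntax_error', 'schema_violation', or 'valid'."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return "syntax_error"  # not parseable as JSON at all
    tool = call.get("tool") if isinstance(call, dict) else None
    if not isinstance(tool, str) or tool not in SCHEMA:
        return "schema_violation"  # unknown or missing tool name
    args = call.get("args")
    if not isinstance(args, dict) or set(args) != SCHEMA[tool]:
        return "schema_violation"  # wrong or missing argument names
    return "valid"

outputs = [
    '{"tool": "ndvi", "args": {"nir_band": "B8", "red_band": "B4"}}',
    '{"tool": "ndvi", "args": {"nir": "B8"}}',
    '{"tool": "segment", "args": {}}',
    'call ndvi(B8, B4)',
]
counts = Counter(classify(text) for text in outputs)
print(counts.most_common())
```

Reasoning errors and task incompletion cannot be detected from a single call like this; they require comparing whole trajectories against references.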

Qualitative Capabilities

OpenEarthAgent demonstrates consistent zero-shot reasoning that generalizes across diverse geospatial and environmental applications:

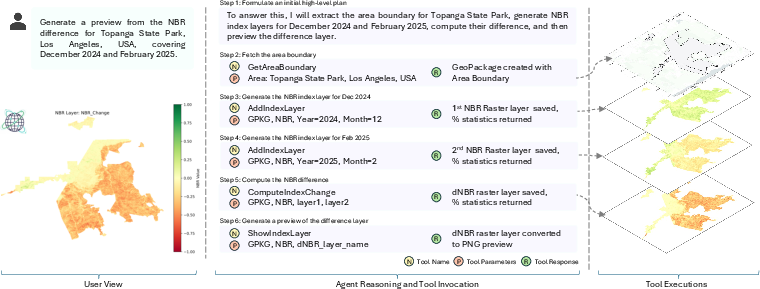

Figure 7: Zero-shot NBR difference mapping in post-fire assessment, tracking burn scars and vegetation dynamics using multi-temporal index computation and map visualization.
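NBR difference mapping is conventionally computed as dNBR = NBR(pre-fire) - NBR(post-fire), with higher values indicating more severe burning. The sketch uses small hand-made (NIR, SWIR2) grids and a threshold of 0.1, an assumed cut-off for flagging burned pixels rather than a value taken from the paper.

```python
def nbr(nir: float, swir2: float) -> float:
    """Normalized Burn Ratio: (NIR - SWIR2) / (NIR + SWIR2)."""
    total = nir + swir2
    return 0.0 if total == 0 else (nir - swir2) / total

Grid = list[list[tuple[float, float]]]  # rows of (NIR, SWIR2) reflectance pairs

def dnbr_map(pre: Grid, post: Grid) -> list[list[float]]:
    """Per-pixel dNBR = NBR(pre-fire) - NBR(post-fire)."""
    return [
        [nbr(*p) - nbr(*q) for p, q in zip(row_pre, row_post)]
        for row_pre, row_post in zip(pre, post)
    ]

def burn_mask(dnbr: list[list[float]], threshold: float = 0.1) -> list[list[bool]]:
    # Assumed threshold: pixels whose NBR dropped by more than 0.1 are flagged as burned.
    return [[value > threshold for value in row] for row in dnbr]

pre_fire = [[(0.45, 0.15), (0.40, 0.20)]]
post_fire = [[(0.20, 0.30), (0.39, 0.21)]]
change = dnbr_map(pre_fire, post_fire)
print(burn_mask(change))  # [[True, False]]
```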

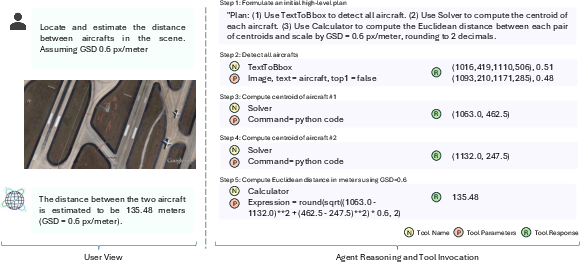

Figure 8: Metric-scale object detection and inter-object distance estimation illustrating agent capacity for fine-grained visual–spatial reasoning via sequential tool composition.
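Converting detections to metric distances requires the image's ground sample distance (GSD, metres per pixel). This sketch assumes a north-up, nadir image with uniform GSD and measures center-to-center distance between pixel boxes; `metric_distance` and the box coordinates are hypothetical.

```python
import math

Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels

def box_center(box: Box) -> tuple[float, float]:
    x_min, y_min, x_max, y_max = box
    return (x_min + x_max) / 2, (y_min + y_max) / 2

def metric_distance(box_a: Box, box_b: Box, gsd_m: float) -> float:
    """Center-to-center distance in metres, assuming a uniform GSD in metres per pixel."""
    (xa, ya), (xb, yb) = box_center(box_a), box_center(box_b)
    return math.hypot(xb - xa, yb - ya) * gsd_m

ship = (100, 100, 120, 140)  # hypothetical detection, center (110, 120)
pier = (130, 150, 150, 170)  # hypothetical detection, center (140, 160)
print(metric_distance(ship, pier, gsd_m=0.5))  # 25.0
```

Off-nadir or projected imagery would need the raster's geotransform instead of a single GSD value.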

Figure 9: Complex spatial network analysis (road distances within GIS buffer), integrating POI extraction, topological computation, and geovisualization.
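A buffer query can be sketched by keeping only points of interest within a radius of a query location. This example uses great-circle (haversine) distance as a stand-in; the road-network distances described in the figure would require a routing graph, which is beyond this sketch. The coordinates and POI names are illustrative.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres

def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two lat/lon points (spherical approximation)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))

def pois_in_buffer(center: tuple[float, float], pois: list[tuple[str, float, float]],
                   radius_m: float) -> list[tuple[str, float]]:
    """Return (name, distance_m) for POIs inside a circular buffer, nearest first."""
    lat0, lon0 = center
    hits = [(name, haversine_m(lat0, lon0, lat, lon)) for name, lat, lon in pois]
    return sorted((hit for hit in hits if hit[1] <= radius_m), key=lambda hit: hit[1])

center = (48.8584, 2.2945)  # illustrative query point
pois = [("cafe", 48.8590, 2.2950), ("museum", 48.8606, 2.2977), ("station", 48.8700, 2.3300)]
for name, dist in pois_in_buffer(center, pois, radius_m=500):
    print(f"{name}: {dist:.0f} m")
```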

Implications and Future Directions

OpenEarthAgent’s agentic design enforces physically and semantically interpretable outputs through explicit reasoning supervision and the separation of policy from environment. The results suggest that model size is not the main bottleneck for geospatial reasoning with current LLMs; rather, schema-aware alignment and trajectory conditioning yield stronger learnability and generalization, even with smaller architectures such as the Qwen3-4B backbone used here.

This work expands the state-of-the-art in geospatial AI by:

- Providing a new benchmark and testbed for agentic EO systems, enabling rigorous analysis of physically grounded, verifiable reasoning.

- Demonstrating the practical viability of tool-augmented autonomous agents in operational remote sensing, environmental monitoring, and GIS analysis scenarios.

- Establishing a framework extensible to additional sensors, tool types, and hybrid pipelines, thus paving the way for future research into broader, domain-adaptive agentic systems in Earth and climate informatics.

Extensions could include reinforcement learning (including learning from human feedback) for more adaptive policies, integration with real-time geospatial data feeds, and multi-agent settings for collaborative EO workflows.

Conclusion

OpenEarthAgent sets a new, comprehensive standard for tool-augmented, interpretable geospatial reasoning in remote sensing and Earth observation. By coupling a validated dataset, unified tool APIs, transformer-based agentic models, and rigorous evaluation protocols, the work demonstrates both practical merit (high accuracy on GIS, index, and spectral tasks; extensibility; low error rates) and theoretical significance (structured reasoning alignment surpasses raw scale). It is well positioned to serve as a key resource and baseline for ongoing research in agentic AI, geospatial analytics, and operational environmental intelligence.