- The paper introduces a hybrid Top-k/Top-p masking strategy that robustly retains key tokens in sparse attention, preserving contextual fidelity.

- The paper utilizes velocity distillation fine-tuning to align sparse and full attention outputs, mitigating performance degradation under distribution mismatch.

- The paper demonstrates significant efficiency gains, achieving up to 16.2× speedup and 95% sparsity while sustaining high-quality video generation.

SpargeAttention2: Robust Trainable Sparse Attention via Hybrid Top-k/Top-p Masking and Velocity Distillation in Video Diffusion Models

Problem Context and Motivation

The quadratic complexity of self-attention is a limiting factor in large-scale video diffusion models, where sequence lengths are long and computational demands are high. Sparse attention methods have been widely adopted to alleviate inference bottlenecks, but a significant trade-off emerges between attention sparsity and generation quality. While training-free sparse attention approaches such as SpargeAttention, SVG, and Radial Attention accelerate inference, they fail to maintain high fidelity when pushed to extreme sparsity regimes. Trainable sparse attention variants—VSA, SLA, VMoBA, Bidirectional—demonstrate improved sparsity thresholds, yet they encounter critical failure cases in masking and fine-tuning objectives, especially under distributional mismatch with non-public datasets.

Technical Analysis: Masking Failure and Trainable Sparsity

SpargeAttention2 systematically investigates error modes in conventional Top-k and Top-p masking. The authors formally decompose sparse attention error into dropped contributions and renormalization effects, establishing scenario-dependent limitations:

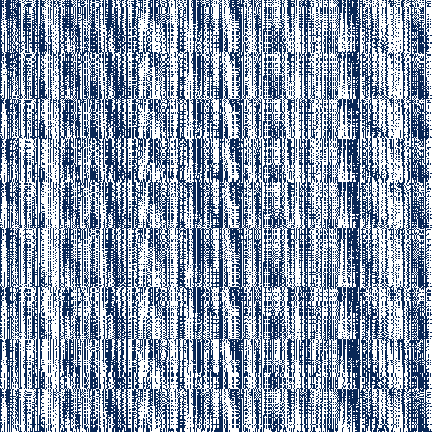

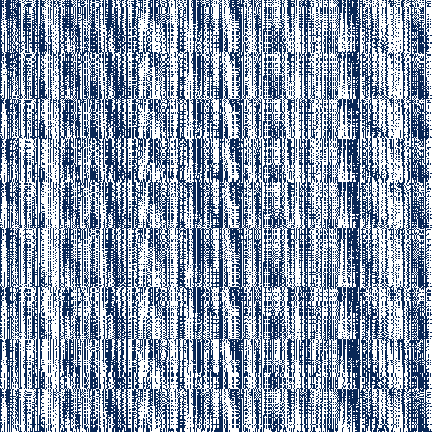

- For nearly uniform attention weight distributions (Figure 1), Top-k masking retains too few tokens, resulting in substantial context loss.

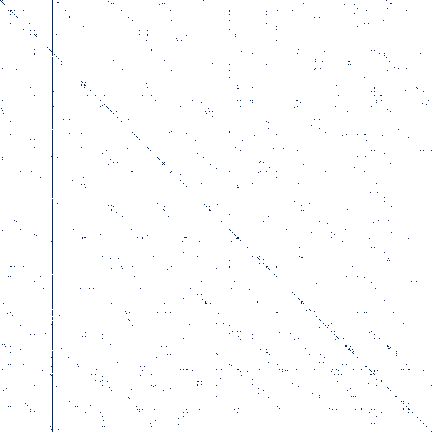

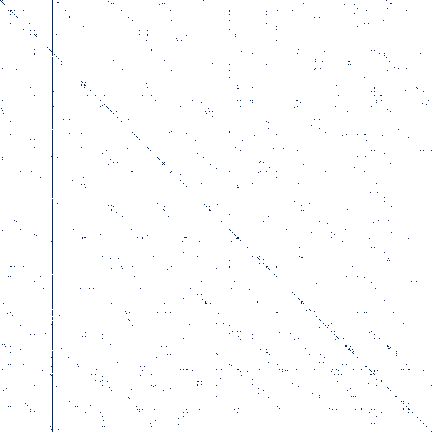

- For highly skewed distributions, Top-p masking collapses to attention sinks, neglecting secondary informative tokens and degrading the output (Figure 2).

Figure 1: A uniform P with Top-p masking; largest probabilities are kept until cumulative sum reaches 60% per row.

Figure 2: A P before sparse-attention fine-tuning; rows retain highest probabilities summing to 60%.

A hybrid masking strategy—joint Top-k and Top-p—is proposed, ensuring robustness by always retaining salient tokens under both uniform and concentrated attention distributions, mitigating cumulative probability and fixed token count pitfalls.

Fine-tuning with sparse attention induces increased concentration in attention weights, reducing error via minimized dropped probability and improved normalization stability. Empirical evidence shows trainable sparse attention outperforms training-free variants in both sparsity and accuracy while requiring adaptation for stable behavior under dataset mismatch.

Distillation Fine-Tuning: Addressing Distribution Mismatch

Conventional diffusion loss optimization targets data-driven alignment with fine-tuning sets. Given that high-quality pretraining datasets (e.g., Wan2.1) are private and unmatched by open fine-tuning samples, even full-attention models observe marked performance degradation, particularly in aesthetics, vision reward, and VQA metrics. SpargeAttention2 circumvents this issue by employing a velocity distillation loss: a frozen full-attention teacher supervises the sparse-attention student, aligning generation dynamics and constraining behavioral drift irrespective of fine-tuning data quality.

Formally, the student sparse-attention model is trained to match teacher outputs on identical noisy latent, timestep, and text conditioning. The distillation loss strictly penalizes velocity prediction discrepancies (under flow matching), ensuring the adapted sparse-attention model preserves original capabilities.

Kernel Implementation and Efficient Model Adaptation

SpargeAttention2 introduces a highly optimized block-sparse attention kernel built atop FlashAttention. Mask construction, pooling, and hybrid masking are efficiently integrated in CUDA, directly skipping computation for masked-out blocks and achieving alignment with GPU tiling. The adaptation routine replaces all attention layers in pre-trained diffusion models with the SpargeAttention2 operator, followed by velocity distillation-based fine-tuning.

Empirical Effectiveness and Efficiency

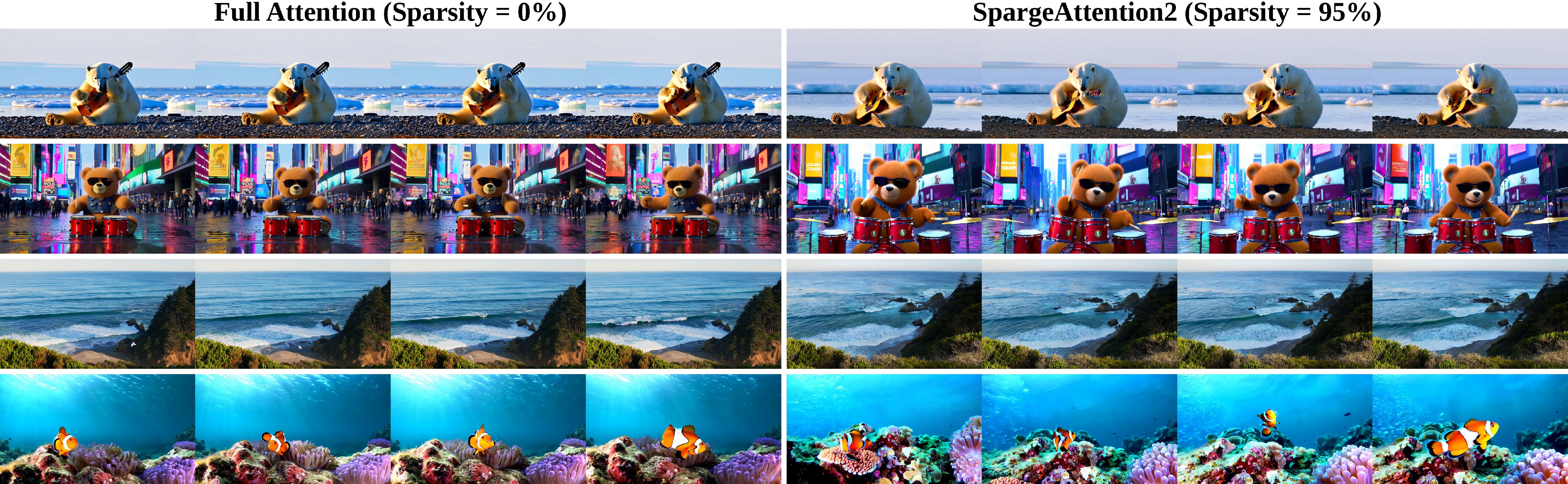

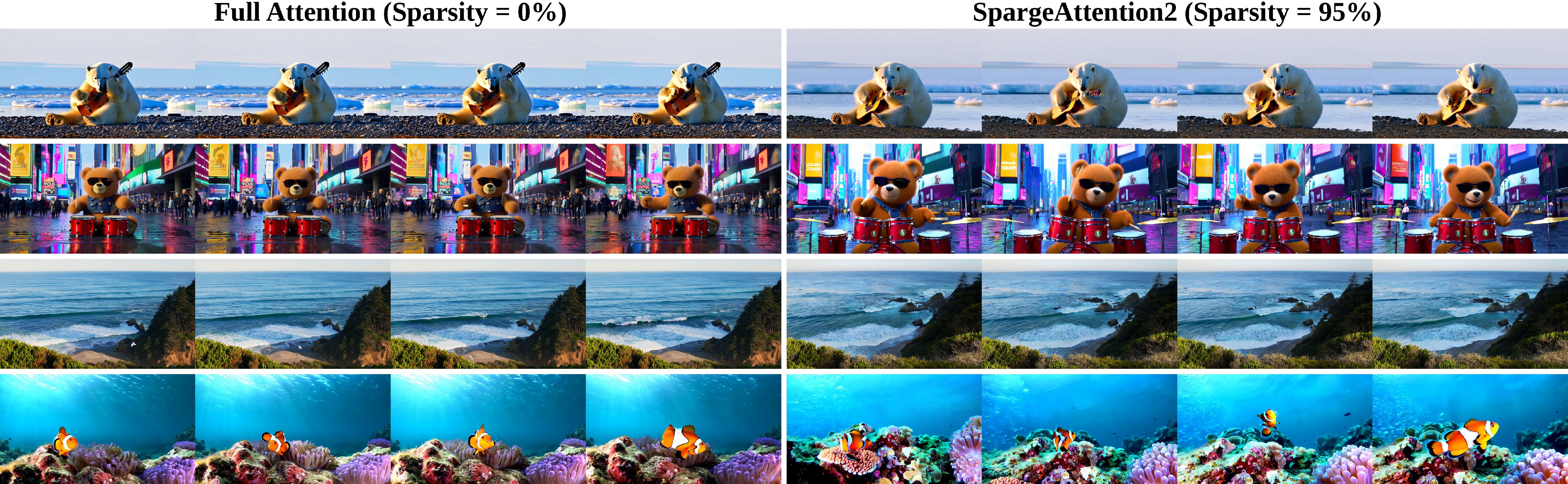

Comprehensive evaluation across Wan2.1 1.3B (480p) and 14B (720p) configurations reveals that SpargeAttention2 achieves 95% attention sparsity and maintains or even improves generation quality compared to full-attention baselines. Notably, generation quality metrics—including Imaging Quality (IQ), Overall Consistency (OC), Aesthetic Quality (AQ), Vision Reward (VR), and VQA accuracy—are consistently superior to other sparse attention competitors at comparable or higher sparsity.

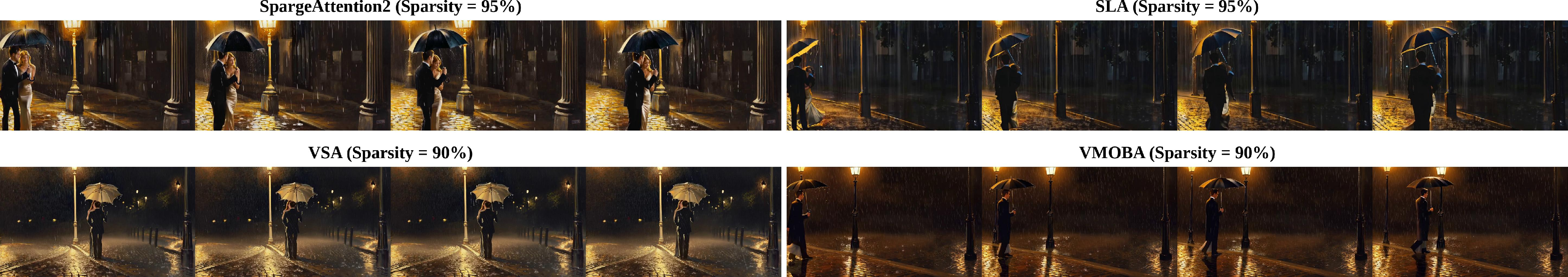

Figure 3: Qualitative samples of text-to-video generation—SpargeAttention2 and full attention are visually indistinguishable at high sparsity, while prior methods degrade.

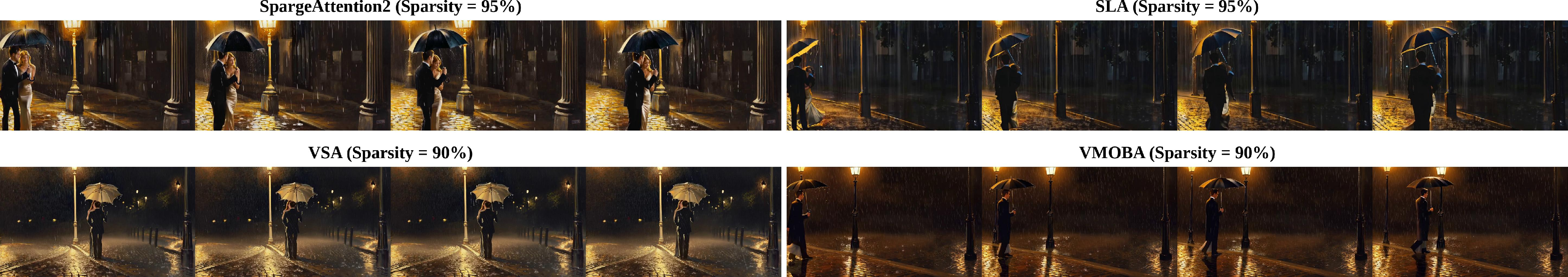

Figure 4: Under 95% sparsity, SpargeAttention2 produces semantically faithful videos; competitors yield wrong spatial-temporal dynamics and prompt misalignment.

Efficiency measurements show that SpargeAttention2 delivers up to 16.2× attention runtime speedup and up to 4.7× overall generation speedup compared to full attention, outperforming SLA, VSA, and VMoBA by substantial margins in both latency and quality.

Ablation and Design Contribution

Variants isolating hybrid masking, trainability, and velocity distillation confirm their necessity: disabling distillation or mask unification sharply reduces alignment and quality. Training-free sparse attention exhibits pronounced degradation across video generation metrics, validating the requirement for adaptation.

Practical and Theoretical Implications

SpargeAttention2 decisively shifts the landscape for accelerated video diffusion transformers. By eliminating failure regimes in masking and fine-tuning, it enables deployment of ultra-sparse attention models without loss of semantic or aesthetic fidelity. This advances the feasibility of real-time, large-scale text-to-video applications and supports broader exploration of extreme sparse regimes in generative modeling.

From a theoretical viewpoint, the hybrid masking and distillation paradigm demonstrates a transferable method for robust attention sparsity in non-autoregressive contexts, potentially informing future designs for multimodal generative architectures.

Future Prospects

Future directions include extending hybrid masking to multi-layer hierarchical sparsity, exploring adaptation in cross-modal and long-context regimes, and leveraging velocity distillation for other non-data-driven alignment objectives. Hardware co-design for block-sparse kernels, in conjunction with quantization strategies, could further reduce runtime cost and memory footprint.

Conclusion

SpargeAttention2 systematically addresses critical bottlenecks in trainable sparse attention for video diffusion models, integrating hybrid Top-k/Top-p masking and velocity distillation to achieve state-of-the-art sparse attention performance. It maintains generation quality under high sparsity, delivers substantial efficiency improvements, and offers robust adaptation strategies under practical distributional mismatch scenarios, setting a strong precedent for future sparse attention research and deployment.