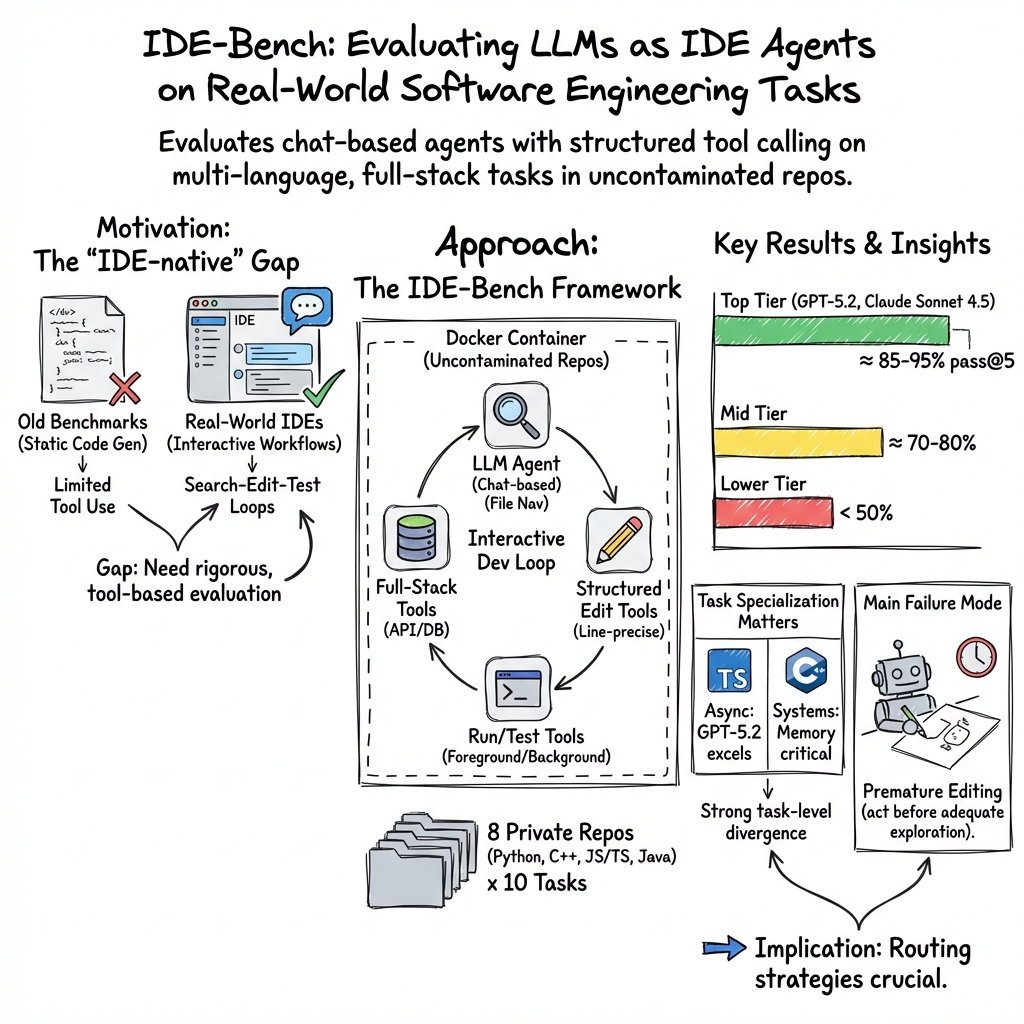

- The paper introduces IDE-Bench, a novel framework that benchmarks LLMs on real-world IDE tasks using a containerized, API-driven environment.

- It employs a Dockerized test harness integrated with eight diverse repositories to evaluate models on tasks like bug fixing and feature implementation with metrics such as pass@1 and pass@5.

- The study highlights task-specific performance and the trade-off between efficiency and reliability, suggesting tiered deployment strategies for optimal resource use.

Evaluation of LLMs as IDE Agents Using IDE-Bench

Introduction

The paper "IDE-Bench: Evaluating LLMs as IDE Agents on Real-World Software Engineering Tasks" (2601.20886) introduces a benchmarking framework for evaluating LLMs as IDE agents. The framework simulates realistic software development scenarios in a containerized environment tailored to agent-enabled IDEs. It tests models on practical tasks such as feature implementation, bug fixing, and performance optimization across multiple programming languages and technology stacks. Unlike earlier benchmarks such as SWE-Bench and Terminal-Bench, IDE-Bench provides an IDE-native tool interface that more closely matches the engineering workflows of modern development environments.

Framework Design and Novelties

IDE-Bench introduces a Dockerized test harness that plugs into an IDE-like environment and exposes structured APIs for codebase search, file editing, and end-to-end application testing. Agents are therefore assessed not only on their ability to write code but also on their ability to navigate a project, execute it, and manage real-world development tasks within a simulated IDE. IDE-Bench also includes eight never-before-seen repositories that span different languages and technology stacks (C/C++, Java, and the MERN stack), mimicking production scenarios commonly faced in software engineering.

A critical feature of IDE-Bench is its ability to correlate agent-reported intent with successful modifications at the project level, ensuring that LLMs are evaluated not just based on theoretical capability but also practical output. Additionally, the test environment prohibits access to published data, preventing training data contamination and ensuring that models are evaluated on truly novel codebases.

Experimental Setup

The authors evaluated 15 models, including frontier models such as GPT 5.2 and the Claude 4.5 series, across 6,000 runs covering 80 distinct tasks. The models were assessed with several metrics, including pass@1 and pass@5, with performance stratified by individual task, by programming language, and by efficiency of tool usage.
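To make the two metrics concrete, the sketch below computes pass@k with the standard unbiased estimator, 1 - C(n-c, k) / C(n, k), where n is the number of runs on a task and c is the number that passed. This summary does not state which estimator the paper uses, so treat the formula choice as an assumption. The value n = 5 is also an inference: 15 models × 80 tasks × 5 runs gives 6,000 runs, which matches the reported total. The function names and the example data are hypothetical.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one task: n runs, c of which passed."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) / comb(n, k) is the probability that a random
    # size-k subset of the n runs contains no passing run.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(passes_per_task: list[int], n: int, k: int) -> float:
    """Average pass@k over tasks, given the number of passing runs per task."""
    return sum(pass_at_k(n, c, k) for c in passes_per_task) / len(passes_per_task)


# Hypothetical results for four tasks with five runs each (not data from the paper).
example = [5, 2, 0, 1]
print(benchmark_score(example, n=5, k=1))  # mean per-run success rate
print(benchmark_score(example, n=5, k=5))  # fraction of tasks solved at least once
```

With n = k = 5, pass@5 reduces to "did any of the five runs pass", while pass@1 is the average per-run success rate. A large gap between the two indicates a model that can solve a task but does not do so consistently.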

The results showed large performance differences between frontier models (e.g., GPT 5.2 achieving a 95% pass@5 rate) and open-weight models, which typically had lower success rates. Task-level analysis also revealed substantial specialization: some models excel in particular domains, such as asynchronous control flow in TypeScript or C/C++ systems programming, while performing worse in others.

Key Findings and Implications

Two primary insights emerged from the experiments:

- Task-Level Specialization: Aggregate scores hide task-specific differences. Models such as GPT 5.2 and Claude Opus 4.5 show clear strengths and weaknesses across programming languages and frameworks. For instance, tasks that required navigating a Java web framework posed challenges that only certain models handled successfully.

- Efficiency and Reliability: The analysis of token consumption versus success rate indicated that although models like Grok 4.1 Fast achieved high efficiency (low token usage per task), they succeeded less often than more robust but resource-intensive models like Claude Haiku 4.5. This trade-off between computational cost and success rate suggests that deployments might benefit from a tiered approach: use lighter models first and fall back to more reliable models as needed.
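The tiered approach in the second finding can be sketched as a simple cascade: try the cheapest agent first and escalate only when its attempt fails an automatic check such as the task's test suite. This is a minimal illustration, not the paper's method. The `Attempt` and `Tier` types, the stub agents, and the token counts are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Attempt:
    passed: bool      # whether the task's tests passed after the agent's edits
    tokens_used: int  # tokens consumed by this attempt


@dataclass
class Tier:
    name: str
    run: Callable[[str], Attempt]  # hypothetical: runs an agent on a task and checks the result


def solve_with_fallback(task: str, tiers: list[Tier]) -> tuple[Optional[str], int]:
    """Try tiers in order, cheapest first; return (winning tier or None, total tokens)."""
    total_tokens = 0
    for tier in tiers:
        attempt = tier.run(task)
        total_tokens += attempt.tokens_used  # failed attempts still cost tokens
        if attempt.passed:
            return tier.name, total_tokens
    return None, total_tokens


# Stub agents standing in for real model calls; token counts are made up.
def cheap_agent(task: str) -> Attempt:
    return Attempt(passed="simple" in task, tokens_used=1_000)


def strong_agent(task: str) -> Attempt:
    return Attempt(passed=True, tokens_used=8_000)


tiers = [Tier("cheap", cheap_agent), Tier("strong", strong_agent)]
print(solve_with_fallback("simple bug fix", tiers))
print(solve_with_fallback("cross-module refactor", tiers))
```

Note that a task which falls through to the stronger tier pays for both attempts, so the cascade only saves resources when the lighter model succeeds often enough on the workload in question.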

Future Directions

The paper suggests several avenues for future research and model development, focusing on improving exploration strategies, tool usage efficiency, and specification adherence. Moreover, enhancements in pre-edit validation processes could reduce syntax errors and other related inefficiencies, contributing to more robust and stable IDE agent performance.
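One way to picture pre-edit validation is a syntax check that runs before an agent's proposed file contents are written. The sketch below uses Python's built-in `ast.parse` purely as a stand-in; the benchmark's repositories use C/C++, Java, and MERN, so each language would need its own parser or compiler check. The helper names and the in-memory file map are hypothetical and do not reflect the IDE-Bench tool API.

```python
import ast
from typing import Optional


def validate_python_edit(new_source: str) -> Optional[str]:
    """Return None if new_source parses as Python, else a short error message."""
    try:
        ast.parse(new_source)
    except SyntaxError as exc:
        return f"line {exc.lineno}: {exc.msg}"
    return None


def apply_edit(files: dict[str, str], path: str, new_source: str) -> bool:
    """Write new_source into the in-memory file map only if it passes validation."""
    error = validate_python_edit(new_source)
    if error is not None:
        # Reject the edit and leave the existing file contents untouched.
        print(f"rejected edit to {path}: {error}")
        return False
    files[path] = new_source
    return True


files = {"app.py": "def add(a, b):\n    return a + b\n"}
valid_edit = "def add(a, b, c=0):\n    return a + b + c\n"
broken_edit = "def add(a, b:\n    return a + b\n"  # missing closing parenthesis

apply_edit(files, "app.py", valid_edit)   # accepted
apply_edit(files, "app.py", broken_edit)  # rejected, file keeps the valid edit
```

Rejecting a malformed edit up front lets the agent retry immediately instead of discovering the syntax error later through a failed build or test run.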

Conclusion

IDE-Bench advances the benchmarking of LLMs as IDE agents by testing their capabilities in a controlled but realistic software development setting. By offering task-level granularity and emphasizing real-world engineering constraints, it gives researchers and practitioners a comprehensive tool for assessing LLMs in practical programming environments. Future improvements in model design, guided by IDE-Bench results, are expected to narrow the gap between LLM capabilities and practical software engineering, improving productivity and reliability in development workflows.