Geometry-Grounded Gaussian Splatting

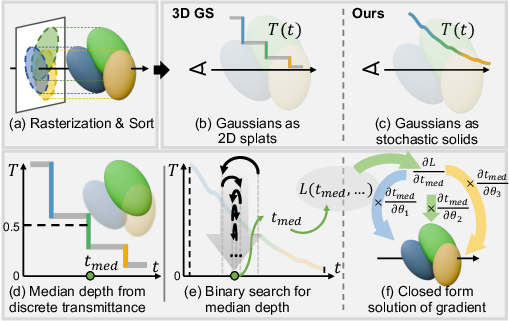

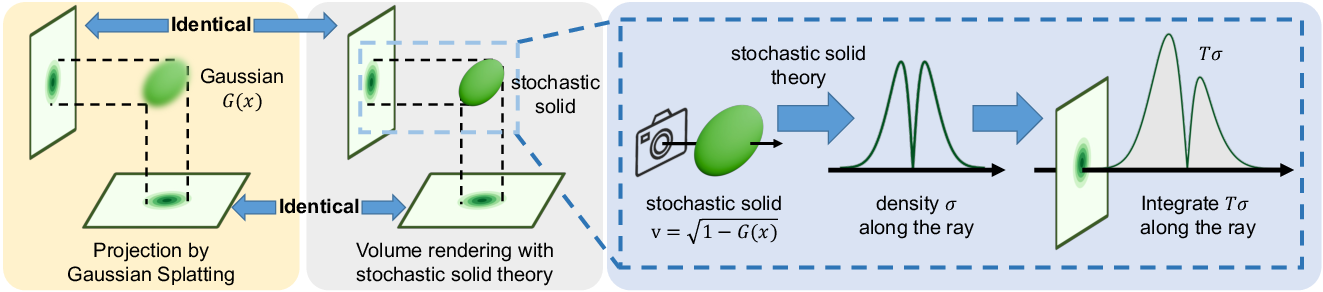

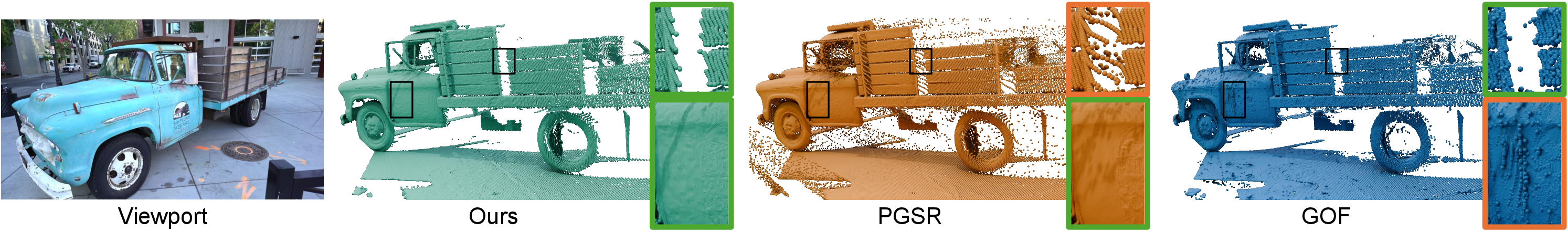

Abstract: Gaussian Splatting (GS) has demonstrated impressive quality and efficiency in novel view synthesis. However, shape extraction from Gaussian primitives remains an open problem. Due to inadequate geometry parameterization and approximation, existing shape reconstruction methods suffer from poor multi-view consistency and are sensitive to floaters. In this paper, we present a rigorous theoretical derivation that establishes Gaussian primitives as a specific type of stochastic solids. This theoretical framework provides a principled foundation for Geometry-Grounded Gaussian Splatting by enabling the direct treatment of Gaussian primitives as explicit geometric representations. Using the volumetric nature of stochastic solids, our method efficiently renders high-quality depth maps for fine-grained geometry extraction. Experiments show that our method achieves the best shape reconstruction results among all Gaussian Splatting-based methods on public datasets.

Explain it Like I'm 14

Geometry-Grounded Gaussian Splatting — Explained Simply

Overview

This paper is about turning “soft blobs” used in fast 3D graphics into solid, accurate shapes. The authors show how to read reliable 3D geometry (the shape of things) from a popular technique called Gaussian Splatting, so we can build cleaner, more consistent 3D models from photos.

What problem are they solving?

Gaussian Splatting draws a 3D scene using lots of smooth, fuzzy blobs (called Gaussians). This is great for speed and nice-looking images from new camera angles. But there’s a catch: blobs don’t automatically tell you where a solid surface (like the exact outer skin of a statue or a car) really is. Past tricks to extract surfaces can be:

- Inconsistent when seen from different viewpoints

- Easily confused by “floaters” (extra blobs that don’t belong on the true surface)

The paper asks: Can we give those blobs a proper, physical definition of “solid” so we can read accurate shape and depth from them?

Key idea and goals

The authors’ goals are:

- Give a solid, physics-based meaning to each Gaussian blob (so it’s not just a fuzzy spot but an actual “object”).

- Use that to compute depth (how far away things are along a ray of sight) smoothly and consistently across views.

- Make shape reconstruction from images more accurate while keeping the speed benefits of Gaussian Splatting.

Their big idea: Treat each Gaussian blob as a “stochastic solid.” That sounds fancy, but here’s a simple way to think about it:

- Imagine shining a flashlight through a jelly-like object. As light goes through, it fades (gets dimmer) depending on how thick the jelly is.

- A “stochastic solid” is a way to describe objects where light fading is predictable and continuous, not jumping in steps.

- The authors prove that if you choose the right “how fast light fades” function for each Gaussian, then the usual fast Gaussian rendering equals the more physics-like volume rendering. That lets them reliably compute depth and shape.
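The claim in the last bullet can be checked numerically for a single blob. The sketch below assumes the vacancy `v = sqrt(1 - G)` and the fade rate `σ = |ω ⋅ ∇ log v|` that appear in the knowledge-gap list later on this page, and uses the fact that any 3D Gaussian sampled along a straight ray is a 1D Gaussian in the ray distance `t`. The names `peak`, `t_peak`, and `width` and the numbers are illustrative, not taken from the paper.

```python
import math

def gaussian_on_ray(t, peak, t_peak, width):
    """G(t): one Gaussian blob sampled along a ray (a 1D Gaussian in distance t)."""
    return peak * math.exp(-0.5 * ((t - t_peak) / width) ** 2)

def vacancy(t, peak, t_peak, width):
    """v = sqrt(1 - G); requires peak < 1 so the blob is never fully solid."""
    return math.sqrt(1.0 - gaussian_on_ray(t, peak, t_peak, width))

def attenuation(t, peak, t_peak, width):
    """sigma(t) = |d/dt log v(t)|, the derivative of log-vacancy along the ray."""
    g = gaussian_on_ray(t, peak, t_peak, width)
    dg_dt = -g * (t - t_peak) / width ** 2
    # d/dt log sqrt(1 - G) = -0.5 * G'(t) / (1 - G); the sign is dropped by abs().
    return abs(0.5 * dg_dt / (1.0 - g))

def transmittance(t_end, peak, t_peak, width, steps=20000):
    """T(t_end) = exp(-integral of sigma from 0 to t_end), midpoint rule."""
    dt = t_end / steps
    optical_depth = sum(
        attenuation((i + 0.5) * dt, peak, t_peak, width) for i in range(steps)
    ) * dt
    return math.exp(-optical_depth)

# Hypothetical blob: ray-wise maximum 0.74, centred 3 units along the ray.
peak, t_peak, width = 0.74, 3.0, 0.5

t_front = 2.6                                        # a point in front of the centre
T_front = transmittance(t_front, peak, t_peak, width)
v_front = vacancy(t_front, peak, t_peak, width)

T_behind = transmittance(6.0, peak, t_peak, width)   # well past the blob
alpha_blend_T = 1.0 - peak                           # what alpha blending leaves behind

print(f"front:  T={T_front:.6f}  vacancy={v_front:.6f}")
print(f"behind: T={T_behind:.6f}  1 - peak={alpha_blend_T:.6f}")
```

Under these assumptions the integrated transmittance matches the vacancy on the front side of the blob and settles at `1 - peak` behind it, which is the amount of light ordinary splat compositing would let through.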

How their method works (in everyday terms)

Here’s the approach, explained with analogies:

- Turning blobs into solids

  - Each Gaussian blob is treated as a solid object that gradually blocks light as you move through it.

  - Two helpful ideas:

    - Occupancy: how solid a point is (think “how much jelly” there is here).

    - Vacancy: how empty it is (the opposite of occupancy).

  - The paper derives a formula that links the blob’s value to vacancy. This determines how much light fades inside the blob, like a “thickness” or “blocking strength.”

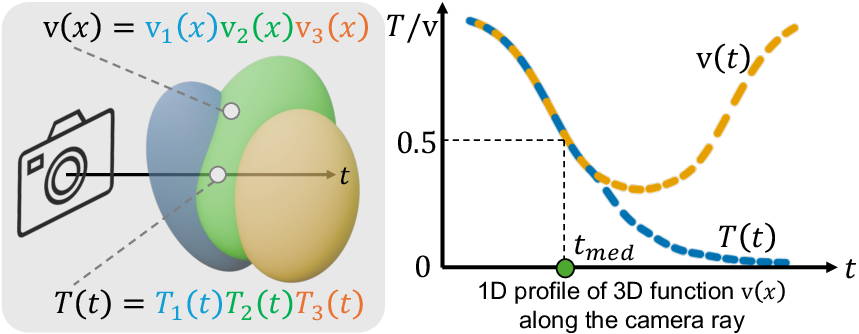

- Smooth light fading (transmittance)

- Transmittance means “how much light gets through.”

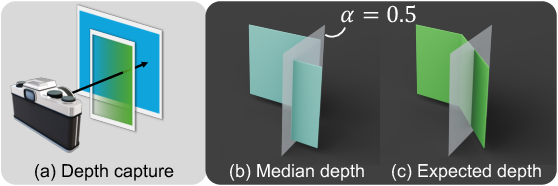

- Past methods changed transmittance in steps (like flipping switches), which made depth jump and look jagged.

- Their stochastic-solid model changes transmittance smoothly within each blob, so depth changes are more natural and clean.

- Finding depth along a ray

  - To get the depth of a pixel, think of shooting a ray from the camera into the scene.

  - They define “median depth” as the point where half the light has been blocked: when transmittance T equals 0.5.

  - Because T only ever decreases as you move along the ray, they can find that spot with a simple “guessing game” called binary search:

    - Check a point along the ray

    - If T is above 0.5, go farther; if it’s below 0.5, come closer

    - Repeat until the guesses close in on T = 0.5

- Training efficiently

  - To teach the blobs to match the real scene, they need to know how changing a blob affects the depth.

  - They derive a neat formula (a gradient) to spread the learning signal across all blobs that affect the ray, not just one. This gives smoother, stronger training and better results.
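The “guessing game” for median depth can be sketched in a few lines. This is a minimal illustration, not the authors' CUDA implementation: it assumes each blob is described along the ray by a hypothetical triple `(peak, t_peak, width)`, uses a closed-form per-blob transmittance derived here from `v = sqrt(1 - G)` (the vacancy on the front side, `(1 - peak) / v` behind the peak), and multiplies the per-blob values as the page describes. The bracket `[t_near, t_far]` and the iteration count are arbitrary choices.

```python
import math

def gaussian_transmittance(t, peak, t_peak, width):
    """Transmittance of one blob at ray distance t (requires peak < 1)."""
    g = peak * math.exp(-0.5 * ((t - t_peak) / width) ** 2)
    vacancy = math.sqrt(1.0 - g)
    if t <= t_peak:
        return vacancy                  # front side: T equals the vacancy
    return (1.0 - peak) / vacancy       # back side: keeps falling towards 1 - peak

def ray_transmittance(t, gaussians):
    """Product of per-blob transmittances (the independence assumption)."""
    total = 1.0
    for peak, t_peak, width in gaussians:
        total *= gaussian_transmittance(t, peak, t_peak, width)
    return total

def median_depth(gaussians, t_near=0.0, t_far=10.0, iterations=50):
    """Bisection for T(t) = 0.5; returns None if the ray never gets that opaque."""
    if ray_transmittance(t_far, gaussians) > 0.5:
        return None
    lo, hi = t_near, t_far
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if ray_transmittance(mid, gaussians) > 0.5:
            lo = mid                    # more than half the light is left: go farther
        else:
            hi = mid                    # already past the crossing: come closer
    return 0.5 * (lo + hi)

# Hypothetical rays: one hits a fairly opaque blob, one only a faint blob.
opaque_ray = [(0.9, 2.0, 0.3)]
faint_ray = [(0.3, 2.0, 0.3)]

t_med = median_depth(opaque_ray)
print("median depth:", t_med, "T there:", ray_transmittance(t_med, opaque_ray))
print("faint ray:", median_depth(faint_ray))
```

Bisection works here because the transmittance never increases along the ray, so the 0.5 crossing is unique whenever it exists. The faint ray returns `None` because its transmittance never drops below 0.7.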

Main findings and why they matter

What they found:

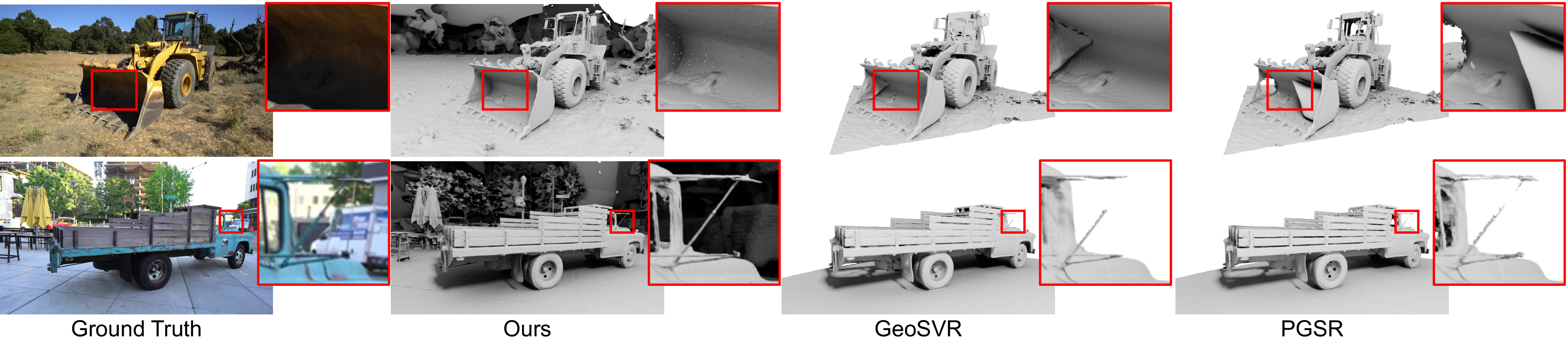

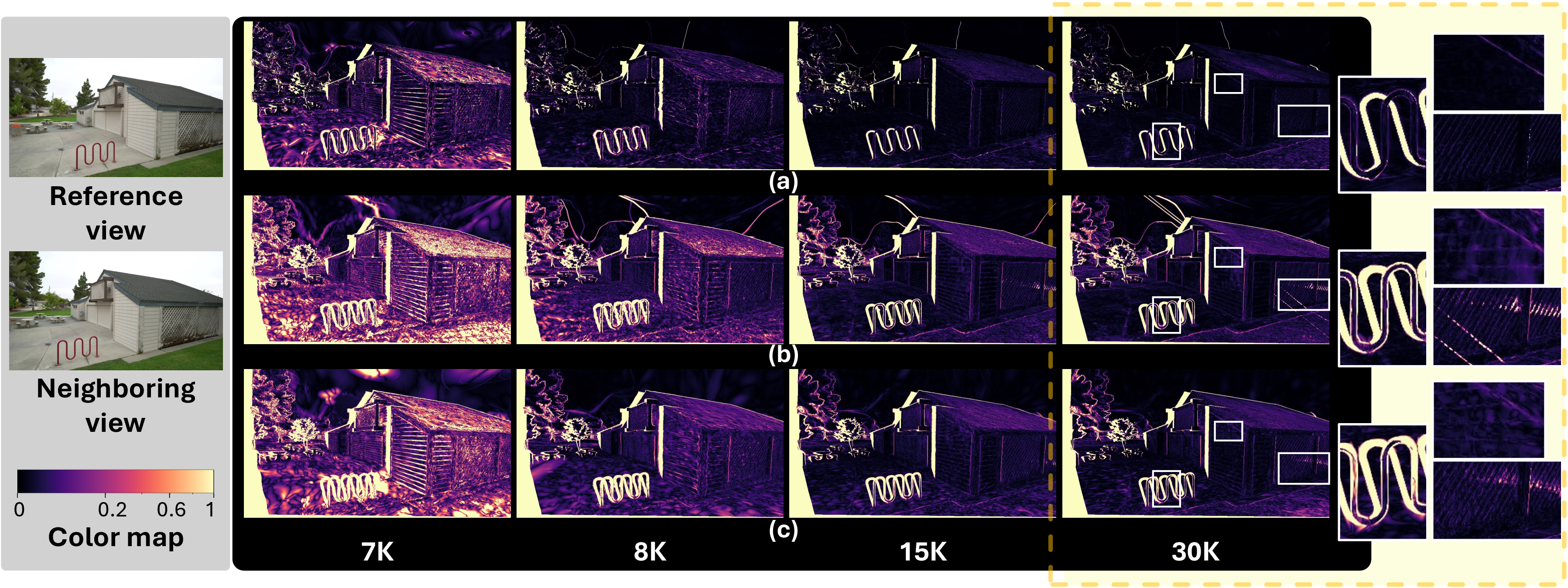

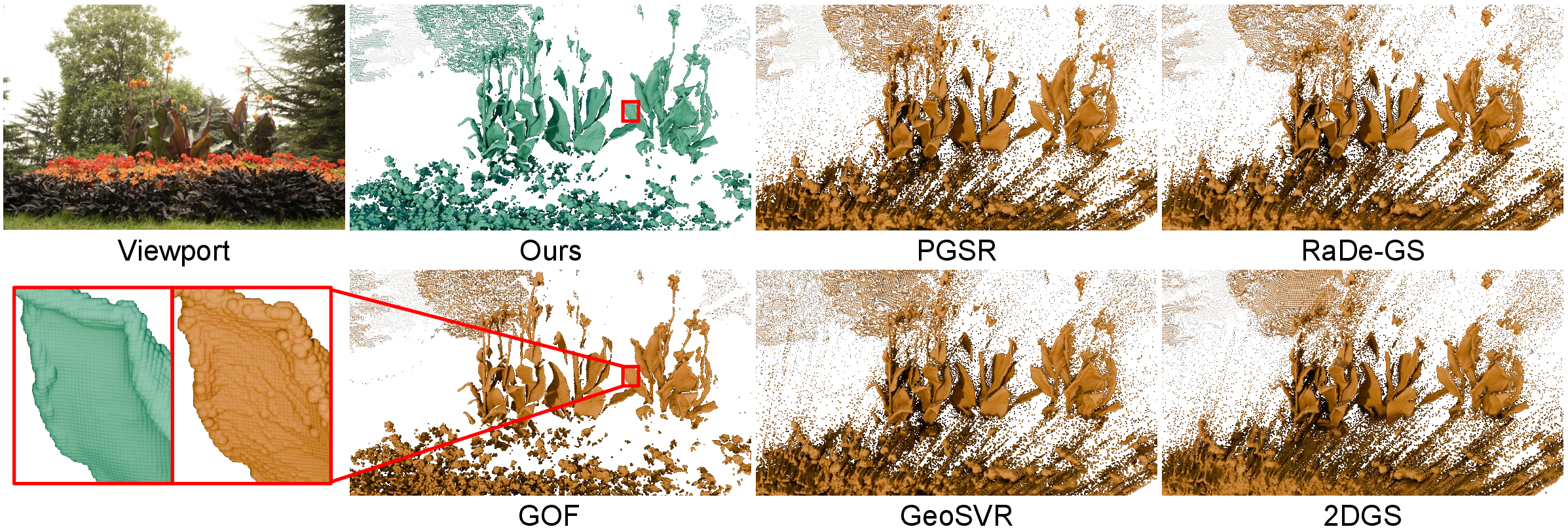

- Cleaner, sharper depth maps: Edges don’t look blurry or staircase-like.

- Strong multi-view consistency: The depth stays reliable from different camera angles, which is crucial for building accurate 3D surfaces.

- Robust to floaters: Random extra blobs bother the result less than before.

- Top results among Gaussian Splatting methods: On public datasets (like DTU and Tanks & Temples), their method reconstructs shape more accurately than other GS-based approaches, while still being efficient.

Why it’s important:

- Better shapes lead to better 3D models for VR, games, movies, robots, and self-driving cars.

- You get both speed (real-time-style rendering) and accuracy (clean surfaces), not just one or the other.

- The theory connects two worlds: fast blob-based rendering and physics-like volume rendering, creating a strong foundation for future improvements.

What this could change next

- This framework can make 3D scanning from photos more reliable without slowing it down.

- It could help editing, measuring, or simulating objects in virtual spaces with better precision.

- The authors kept normal color rendering fast, but their solid-based approach could later be extended to color and lighting too, potentially improving visual quality even more.

In short: The paper gives Gaussian blobs a true “solid” meaning. With that, it reads depth and shapes more accurately and consistently, helping turn fast, fuzzy renderings into clean, dependable 3D models.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper, framed to inform future research directions:

- Theoretical equivalence is only proved for a single Gaussian; for multiple overlapping Gaussians the paper assumes statistically independent intersections. Formal conditions under which product-of-transmittances approximates 3DGS’s depth-sorted alpha compositing (and when it fails) remain uncharacterized.

- The derivation of the vacancy field `v(x) = sqrt(1 - G(x))` and its uniqueness is deferred to the supplementary. A complete, publicly accessible proof with explicit regularity assumptions (e.g., bounds on `o`, continuity of `G`, anisotropic covariance) is needed.

- The local affine projection and the claim that 2D opacity equals the ray-wise maximum are assumed (proved in supplementary). Quantifying the approximation error of this projection in realistic camera models (perspective distortion, strong anisotropy, wide baselines) and its impact on depth accuracy is missing.

- The attenuation `σ(x, ω) = |ω ⋅ ∇ log v(x)|` can become numerically unstable when `v → 0` near highly opaque, compact Gaussians. Practical clamping schemes, conditioning strategies, and analysis of gradient blow-ups are not provided.

- The monotonicity-based binary search for `t_med = T^{-1}(0.5)` lacks a principled bracketing strategy and stopping criteria. Guidelines for safe initialization, complexity bounds per ray, and adaptive schemes to reduce iterations are left as future work.

- The implicit-function gradient for `t_med` can become ill-conditioned when `∂T/∂t` is small near the crossing. Robustification strategies (e.g., damping, line-search, trust regions) and diagnostics for gradient spikes are absent.

- The choice of the median threshold `T=0.5` is inherited from prior heuristics. There is no analysis of how different quantiles (e.g., `T=τ`) affect reconstruction bias, thickness, and boundary localization across scenes.

- The claim of view-independent isosurfaces holds only when the crossing occurs before the peaks of contributing Gaussians. A runtime test to detect this regime per-ray and fallback strategies when the crossing occurs behind peaks (e.g., multi-layer rays, translucent stacks) are not specified.

- Depth is volumetric under the stochastic-solid formulation, but RGB and normals are still rendered using standard splatting approximations. A unified, volumetric treatment of color (including spherical harmonics and view dependence) and normals (e.g., from `∇v`) is acknowledged but not explored.

- The independence assumption for ray–Gaussian intersection events is strong. A joint occlusion model or an analytically grounded composite transmittance that accounts for correlated overlaps and ordering is missing.

- Floaters are empirically mitigated by median depth, but there is no quantitative robustness analysis or formal mechanism to detect, downweight, or remove floaters using the stochastic-solid signal (e.g., via transmittance curvature or multi-view consistency tests).

- The meshing strategy for large-scale scenes uses a per-view indicator “inside if occluded in any training view (T < 0.5)”. This can create overly thick surfaces or spurious interiors. A global 3D isosurface extraction from the vacancy/transmittance field (with guarantees on watertightness and smoothness) remains open.

- Normal maps are optimized via splatting-based approximations; they are not derived from the gradient of the geometric field (e.g., `∇v`). The mismatch between the normal supervision and the proposed geometry field is not analyzed.

- Runtime overhead stems from binary search and multi-view regularization; while the paper suggests tighter bracketing, a comprehensive profiling and algorithmic acceleration strategy (e.g., per-Gaussian analytic bracketing, hierarchical depth intervals, learned initializers) is missing.

- Scalability to very large, unbounded scenes (e.g., outdoor environments, urban-scale captures) and high-resolution training is not evaluated. Interactions with anti-aliasing (they drop Mip-Splatting’s 2D filter) and the effect on geometry quality remain unclear.

- Sensitivity to camera pose errors and calibration inaccuracies is not studied. How the stochastic-solid depth behaves under pose noise and whether it can aid pose refinement (e.g., via multi-view geometric constraints) is unexplored.

- The method still relies on depth-sorted compositing for RGB while using sort-free product-of-transmittances for depth. The consequences of this rendering-model mismatch (e.g., color–geometry inconsistency) are not investigated.

- Scenes with semi-transparent materials, strong specularities, or participating media violate the opaque stochastic-solid assumption. Extending the formulation to non-opaque transport (e.g., scattering, glints) and evaluating failure modes is an open direction.

- Handling multi-surface rays (thin structures, layered geometry) with a single median depth can be ambiguous. Multi-modal transmittance along a ray and strategies to recover multiple intersections (e.g., via quantile sets or peak-finding in `p(t)`) are not addressed.

- Conditions ensuring `0 ≤ G(x) ≤ 1` (particularly the opacity parameter `o`) and training-time enforcement are not specified. Without constraints, `G > 1` breaks the vacancy definition; practical parameterization bounds are needed.

- Densification and pruning are borrowed from prior work; they are not adapted to leverage the new geometric signal (e.g., densification guided by `∂T/∂t`, `∇v`, or median-depth stability), which could further reduce floaters and fill gaps.

- No error bounds relate the stochastic-solid depth to ground-truth surfaces under common GS approximations (local affine projection, rasterization). A theoretical convergence or bias analysis would help predict regimes of reliability.

- Evaluation metrics focus on Chamfer/F1; normal accuracy, silhouette IoU, completeness vs. precision trade-offs, and thickness measurements are not reported, limiting visibility into where the method helps or harms geometry quality.

- Reproducibility is limited by non-released supplementary proofs and code at the time of writing. Clear implementation details for the binary search, gradient accumulation, and CUDA kernels would aid independent validation.

- Integration with sort-free Gaussian rendering (e.g., Monte Carlo estimators) is not discussed. Whether the proposed transmittance and depth extraction can be made sort-free without degrading geometry remains an open question.
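Several items in this list concern the implicit-function gradient of the median depth. Since `t_med` is defined by `T(t_med) = 0.5`, differentiating that condition gives `∂t_med/∂θ = -(∂T/∂θ) / (∂T/∂t)` for any Gaussian parameter `θ`. The sketch below checks this identity on a toy ray, under stated assumptions: blobs are hypothetical `(peak, t_peak, width)` triples along the ray, the per-blob transmittance is the closed form derived from `v = sqrt(1 - G)`, and the partial derivatives are taken by finite differences rather than the paper's closed-form expressions. The `min_slope` guard is an illustrative safeguard for the ill-conditioned case, not something the paper specifies.

```python
import math

def ray_transmittance(t, gaussians):
    """Product of closed-form per-blob transmittances along one ray."""
    total = 1.0
    for peak, t_peak, width in gaussians:
        g = peak * math.exp(-0.5 * ((t - t_peak) / width) ** 2)
        vacancy = math.sqrt(1.0 - g)
        total *= vacancy if t <= t_peak else (1.0 - peak) / vacancy
    return total

def median_depth(gaussians, lo=0.0, hi=10.0, iterations=60):
    """Bisection for T(t) = 0.5, assuming T(lo) > 0.5 >= T(hi)."""
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if ray_transmittance(mid, gaussians) > 0.5:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def with_peak(gaussians, index, new_peak):
    """Copy of the scene with one blob's peak value replaced."""
    changed = list(gaussians)
    _, t_peak, width = changed[index]
    changed[index] = (new_peak, t_peak, width)
    return changed

def median_depth_gradients(gaussians, h=1e-6, min_slope=1e-8):
    """d t_med / d peak_i = -(dT/d peak_i) / (dT/dt), evaluated at t_med."""
    t_med = median_depth(gaussians)
    dT_dt = (ray_transmittance(t_med + h, gaussians)
             - ray_transmittance(t_med - h, gaussians)) / (2.0 * h)
    if abs(dT_dt) < min_slope:
        return t_med, None              # ill-conditioned crossing (assumed guard)
    grads = []
    for i, (peak, _, _) in enumerate(gaussians):
        dT_dpeak = (ray_transmittance(t_med, with_peak(gaussians, i, peak + h))
                    - ray_transmittance(t_med, with_peak(gaussians, i, peak - h))) / (2.0 * h)
        grads.append(-dT_dpeak / dT_dt)
    return t_med, grads

def brute_force_gradient(gaussians, index, eps=1e-5):
    """Reference value: rerun the search with the peak nudged up and down."""
    peak = gaussians[index][0]
    upper = median_depth(with_peak(gaussians, index, peak + eps))
    lower = median_depth(with_peak(gaussians, index, peak - eps))
    return (upper - lower) / (2.0 * eps)

# Hypothetical ray: a faint blob in front of a fairly opaque one.
scene = [(0.2, 0.5, 0.1), (0.9, 2.0, 0.3)]
t_med, grads = median_depth_gradients(scene)
for i, grad in enumerate(grads):
    print(f"blob {i}: implicit={grad:.6f}  brute force={brute_force_gradient(scene, i):.6f}")
```

In this toy scene both blobs receive a non-zero gradient, including the faint one that lies well in front of the crossing, which illustrates why the signal reaches every Gaussian on the ray. The division by `∂T/∂t` also makes the conditioning concern concrete: a flat transmittance curve at the crossing inflates the result.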

Practical Applications

Immediate Applications

The paper’s stochastic-solid formulation for Gaussian Splatting yields deployable improvements to depth rendering, mesh extraction, and multi-view consistency. The following applications can be adopted now with standard GS toolchains and commodity GPUs.

- High-fidelity GS-to-mesh conversion for VR/AR content creation (software, entertainment)
  - Replace heuristic depth with median-depth from continuous transmittance to get cleaner silhouettes and view-consistent surfaces; plug into existing GS workflows (e.g., gsplat) and mesh extraction (TSDF fusion, Marching Tetrahedra).
  - Tools/products/workflows: “Geo-GS Depth Renderer” plugin for Blender/Unity/Unreal; batch capture from smartphone/video → GS training → stochastic-solid depth → mesh export.
  - Assumptions/Dependencies: Static scenes; good multi-view coverage and calibration; commodity GPU; accepts the independence assumption for transmittance across Gaussians.

- Reliable AR occlusion and collision maps from RGB-only captures (software, mobile XR)
  - Use median-depth (T=0.5 crossing) to produce sharper occlusion boundaries and consistent depth for physics/collision; reduces floaters’ impact versus alpha-weighted expected depth.
  - Tools/products/workflows: Mobile SDK module for AR occlusion from GS; real-time splat rendering for visuals + binary-search depth for occlusion masks.
  - Assumptions/Dependencies: Near-real-time compute budget for binary search per pixel; static or slow-moving scenes; proper camera intrinsics; exposure normalization.

- Robotics indoor mapping and manipulation scene understanding with cameras only (robotics)
  - Produce multi-view-consistent depth and surface reconstructions to improve obstacle maps, grasp planning, and navigation without LiDAR.
  - Tools/products/workflows: Drop-in GS-based mapping node for ROS; cycle reprojection error as an online consistency monitor.
  - Assumptions/Dependencies: Static or quasi-static scenes; synchronized multi-view streams; GPU on robot or edge computer; robust camera calibration.

- Drone photogrammetry for inspection (industrial inspection, AEC)
  - Robust depth against floaters yields crisper meshes of structures (facades, towers, bridges) from multi-view RGB captures; faster training than NeRF-like SDF methods.
  - Tools/products/workflows: UAV capture → GS training → stochastic-solid depth → TSDF → mesh; QA via cycle reprojection dashboards.
  - Assumptions/Dependencies: Adequate view coverage and overlap; stable lighting; photometric textures (transparent/reflective surfaces remain challenging).

- Rapid digital twin creation for architecture, construction, real estate (AEC)
  - Faster pipeline to produce accurate meshes for as-built documentation, clash detection, and VR walkthroughs from handheld or drone footage.
  - Tools/products/workflows: Site video ingestion → GS optimization → depth + mesh; export to BIM/CAD.
  - Assumptions/Dependencies: Sufficient image coverage; static geometry; integration bridges to CAD/BIM.

- Film/VFX scene scanning with cleaner edges and fewer artifacts (entertainment)
  - Improved mesh fidelity from fewer takes; better geometry for physics and lighting proxies; reduced post-processing.
  - Tools/products/workflows: On-set GS capture; stochastic-solid depth rendering; clean mesh handoff to DCC tools.
  - Assumptions/Dependencies: Controlled capture; artifact handling for specular/transparent materials.

- 3D e-commerce product scanning (retail)
  - View-consistent depth yields more accurate surfaces from rotating turntable videos; better fit for web viewers and AR try-ons.
  - Tools/products/workflows: Automated pipeline (video → GS → mesh) with quality gates based on cycle reprojection error.
  - Assumptions/Dependencies: Calibrated cameras; matte or moderately textured products; small to medium objects.

- Cultural heritage digitization with improved consistency (museums, public sector)
  - Cleaner meshes from sparse RGB captures; time-efficient compared to implicit SDF methods.
  - Tools/products/workflows: Field capture → GS training → depth/mesh; archival with standard formats.
  - Assumptions/Dependencies: Static artifacts; careful handling of glossy/transparent surfaces.

- Academic benchmarking and reproducible geometry extraction for GS (academia)
  - Use the closed-form gradient for median depth and continuous transmittance to standardize GS shape reconstruction; stronger multi-view consistency metrics.
  - Tools/products/workflows: Open-source code release; reference depth-rendering module; cycle reprojection error evaluator.
  - Assumptions/Dependencies: Adoption in common GS repos; datasets with accurate calibrations.

- Developer tooling: real-time training monitors and quality gates (software engineering)
  - Integrate cycle reprojection error and transmittance diagnostics to flag floaters early and tune densification/exposure compensation automatically.
  - Tools/products/workflows: Training dashboards; auto-parameter tuning scripts for GS + stochastic-solid depth.
  - Assumptions/Dependencies: Access to per-iteration metrics; GPU kernels for warp-level reductions.
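Several of the applications above rely on median depth being less sensitive to floaters than an opacity-weighted expected depth. The toy comparison below illustrates that difference on a single ray. It is a sketch under assumptions: blobs are hypothetical `(peak, t_peak, width)` triples along the ray, the median uses the closed-form per-blob transmittance derived from `v = sqrt(1 - G)`, and the expected depth uses standard front-to-back blending weights normalized by accumulated opacity (the normalization is a choice made here, not a detail taken from the paper).

```python
import math

def ray_transmittance(t, gaussians):
    """Product of closed-form per-blob transmittances along one ray."""
    total = 1.0
    for peak, t_peak, width in gaussians:
        g = peak * math.exp(-0.5 * ((t - t_peak) / width) ** 2)
        vacancy = math.sqrt(1.0 - g)
        total *= vacancy if t <= t_peak else (1.0 - peak) / vacancy
    return total

def median_depth(gaussians, lo=0.0, hi=10.0, iterations=60):
    """Bisection for T(t) = 0.5, assuming T(lo) > 0.5 >= T(hi)."""
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if ray_transmittance(mid, gaussians) > 0.5:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def expected_depth(gaussians):
    """Opacity-weighted mean of peak depths with front-to-back blending weights."""
    remaining, weighted_sum, weight_total = 1.0, 0.0, 0.0
    for peak, t_peak, _ in sorted(gaussians, key=lambda g: g[1]):
        weight = remaining * peak       # light still arriving times this blob's opacity
        weighted_sum += weight * t_peak
        weight_total += weight
        remaining *= 1.0 - peak
    return weighted_sum / weight_total

# Hypothetical ray: a surface blob at depth 2.0, then the same ray with a faint floater.
surface = [(0.9, 2.0, 0.3)]
floater = (0.2, 0.5, 0.1)
with_floater = [floater] + surface

expected_shift = expected_depth(surface) - expected_depth(with_floater)
median_shift = median_depth(surface) - median_depth(with_floater)

print(f"expected depth moves by {expected_shift:.3f}")
print(f"median depth moves by   {median_shift:.3f}")
```

In this example the floater pulls both estimates towards the camera, but the median moves noticeably less, because a faint blob that blocks only a fifth of the light cannot produce the 0.5 crossing by itself.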

Long-Term Applications

These opportunities build on the paper’s theory and early implementations but require further research, engineering, scaling, or standardization.

- Fully volumetric, physically consistent GS rendering for RGB and normals (software, graphics)
  - Extend the stochastic-solid formulation beyond depth to color and shading for end-to-end physically grounded rendering; reduces reliance on rasterization approximations.
  - Tools/products/workflows: “Volumetric-GS Engine” with continuous attenuation for all modalities; unified training that improves material/lighting consistency.
  - Assumptions/Dependencies: New kernels and schedulers; optimization stability; scene materials beyond Lambertian.

- Real-time on-device 3D capture for AR glasses and mobile (hardware/software, XR)
  - Hardware-accelerated transmittance and median-depth computation on edge devices; instant occlusion and collision for mixed reality.
  - Tools/products/workflows: SoC/GPU primitives for continuous transmittance, binary-search acceleration, and gradient accumulation; OS-level APIs for XR.
  - Assumptions/Dependencies: Dedicated acceleration; power/thermal budgets; robust calibration and SLAM integration.

- Dynamic scene reconstruction (4D “Gaussian solids”) for moving objects (robotics, entertainment)
  - Extend stochastic-solid depth to time-varying Gaussians; enable consistent geometry in dynamic captures for robot manipulation, sports analytics, and live VFX.
  - Tools/products/workflows: Temporal models for Gaussians; motion-aware transmittance; streaming mesh updates.
  - Assumptions/Dependencies: New optimization objectives; motion segmentation; handling occlusion changes.

- SLAM integration with stochastic-solid depth as a standard mapping layer (robotics)
  - Replace or complement depth sensors with GS-based, multi-view-consistent depth; unify mapping and visual localization.
  - Tools/products/workflows: ROS modules with real-time median-depth fusion; uncertainty-aware planners.
  - Assumptions/Dependencies: Real-time guarantees; loop-closure interfaces; robustness to illumination changes.

- City-scale photogrammetry and urban digital twins (smart cities, public policy)
  - Scale GS + stochastic-solid depth to districts; provide meshes for planning, code compliance checks, and disaster response simulations.
  - Tools/products/workflows: Cloud pipelines; distributed training; automated QA via reprojection metrics; integration with GIS/BIM.
  - Assumptions/Dependencies: Data governance (privacy, airspace permissions); large-scale compute; standardized formats.

- High-fidelity simulation assets for autonomous driving and robotics (transport, robotics)
  - Generate realistic, geometry-consistent environments for training perception/planning; reduce sim-to-real gap versus heuristic depth methods.
  - Tools/products/workflows: Capture → GS → mesh library → simulator plugins; auto-curation by multi-view consistency thresholds.
  - Assumptions/Dependencies: Coverage in challenging materials (glass, chrome); domain randomization for lighting/weather.

- Industrial asset monitoring and anomaly detection (energy, manufacturing)
  - Frequent scans of facilities (plants, wind turbines, pipelines) to detect geometric changes; leverage consistency metrics to flag errors or novel anomalies.
  - Tools/products/workflows: Scheduled UAV/handheld capture → GS → meshes → deviation analysis; alerting dashboards.
  - Assumptions/Dependencies: Repeatability of capture; integration with CMMS; handling reflective/specular surfaces.

- Medical AR guidance from handheld multi-view scans (healthcare)
  - Exploratory use for patient-specific surface models (e.g., orthopedic casts, external anatomical surfaces) to assist AR overlays.
  - Tools/products/workflows: Clinical capture protocols; GS-based geometry for overlay alignment; continuous transmittance for robustness.
  - Assumptions/Dependencies: Regulatory approval; strict privacy and calibration; limited to external surfaces (no internal anatomy).

- Standards and best practices for 3D capture quality and safety (policy, standards)
  - Define guidance around multi-view consistency, reprojection error thresholds, and data handling for public scanning initiatives and municipal projects.
  - Tools/products/workflows: Certification procedures; open benchmarks and audit tools; recommended capture coverage metrics.
  - Assumptions/Dependencies: Multi-stakeholder adoption; interoperable formats; clear data-use policies.

Glossary

- Alpha blending: A compositing technique that combines overlapping semi-transparent primitives by accumulating their opacities and colors in sorted order. "These 2D Gaussians are then sorted and alpha-blended to compute the final color."

- Anti-aliasing: Methods that reduce aliasing artifacts by smoothing or filtering across scales during rendering. "Mip-NeRF introduces an anti-aliased multiscale formulation through conical-frustum rendering~\cite{barron2021mipnerf},"

- Attenuation coefficient: A direction-dependent rate governing how light is attenuated along a path through a medium or stochastic solid. "the authors derive the attenuation coefficient of the object as follows:"

- Chamfer Distance: A metric measuring geometric discrepancy between two point sets or surfaces, often used to evaluate reconstruction accuracy. "We report Chamfer Distance on DTU and F1-score on TnT."

- Closed-form gradient: An explicit analytic expression for derivatives, avoiding numerical differentiation or iterative differentiation procedures. "we backpropagate through using a closed-form gradient with respect to all Gaussians contributing to the ray."

- Conical-frustum rendering: An anti-aliased volumetric rendering technique that integrates over cone-like frusta corresponding to pixel footprints. "Mip-NeRF introduces an anti-aliased multiscale formulation through conical-frustum rendering~\cite{barron2021mipnerf},"

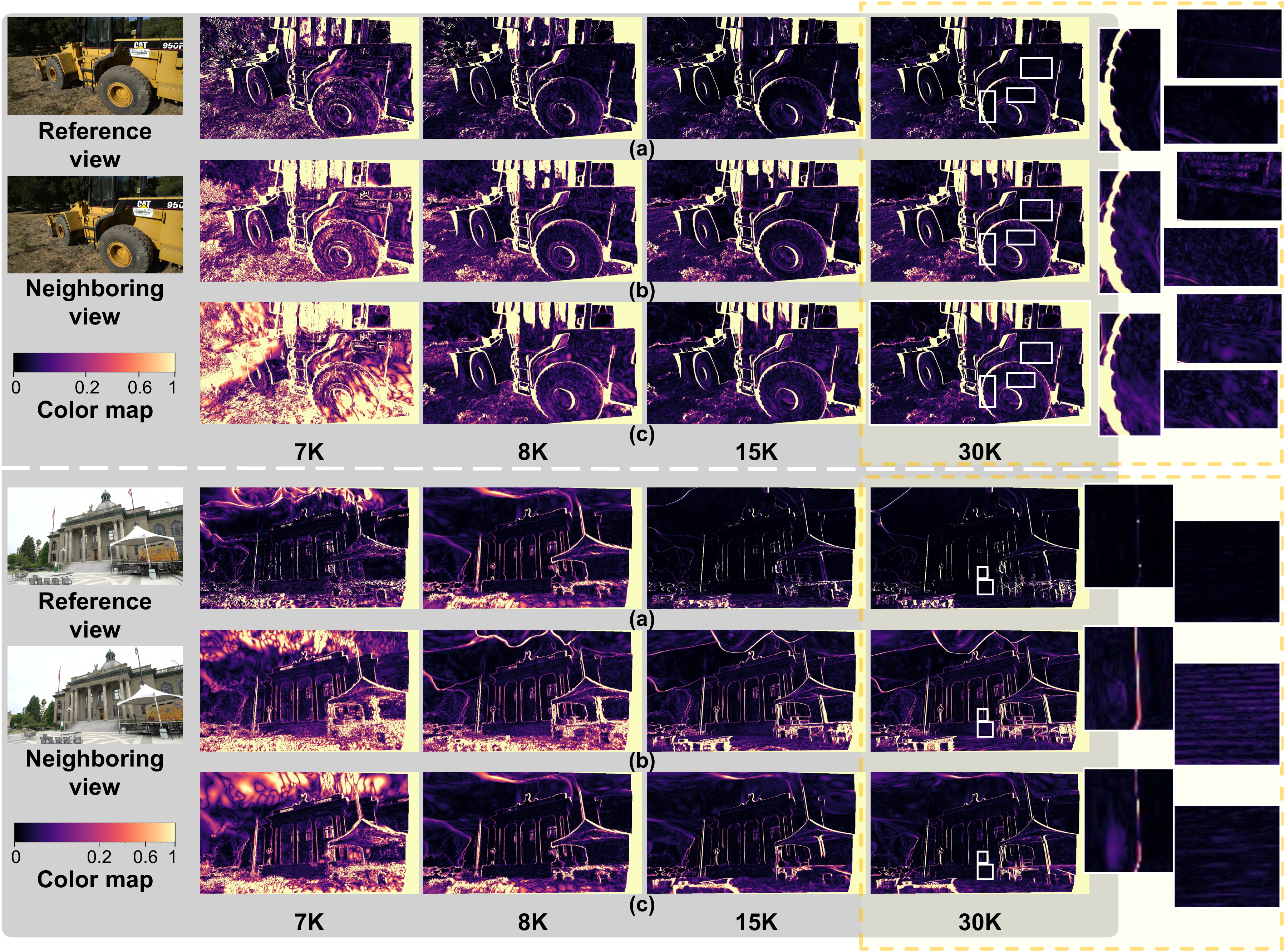

- Cycle reprojection error: A consistency measure that projects depth from one view to a neighbor and back to assess multi-view alignment. "We visualize the cycle reprojection error between a reference view and its nearest neighboring view throughout optimization."

- Densification: A strategy that adaptively adds primitives (e.g., Gaussians) to better cover geometry or appearance during optimization. "the densification strategy from GOF~\cite{yu2024gaussian},"

- Exposure compensation: A correction step that normalizes per-view brightness/exposure differences to stabilize multi-view optimization. "the exposure compensation from PGSR~\cite{chen2024pgsr}."

- Floaters: Spurious, view-dependent artifacts (e.g., stray semi-transparent blobs) that degrade geometry and consistency. "Due to inadequate geometry parameterization and approximation, existing shape reconstruction methods suffer from poor multi-view consistency and are sensitive to floaters."

- Free-flight distribution: The probability distribution of distances traveled by light before an interaction in a participating medium. "p is the free-flight distribution~\cite{Miller:VOS:2024} that represents the statistical distribution of the distances that the light travels before collision and serves as the weight for color integration,"

- Gaussian primitives: Parametric 3D Gaussian functions used as scene elements for rendering and reconstruction. "shape extraction from Gaussian primitives remains an open problem."

- Gaussian Splatting: A rendering framework that represents scenes with 3D Gaussians and uses rasterization-based splatting for efficient view synthesis. "Gaussian Splatting (GS) has demonstrated impressive quality and efficiency in novel view synthesis."

- Geometry-grounded radiance fields: Radiance-field formulations explicitly tied to a geometric representation (e.g., SDF/occupancy) to improve surface fidelity. "Many state-of-the-art methods further adopt geometry-grounded radiance fields: they start from a canonical geometry field (e.g., SDF/occupancy) and derive the rendering formulation accordingly."

- Implicit neural representations: Continuous functions (often MLPs) that encode signals like geometry or radiance without explicit meshes or grids. "Recent progress has been driven by implicit neural representations, most notably NeRF~\cite{mildenhall2020nerf}."

- Isosurface: A surface defined by points where a scalar field equals a constant value (e.g., level-set at 0.5). "Using our formulation, we develop an efficient depth-rendering method that approximates the isosurface of the geometric field and extracts finer-grained geometry from Gaussian primitives (Section~\ref{sec:depth}),"

- Local affine approximation: A linearization used to map 3D Gaussian primitives to 2D Gaussians under projection for fast rasterization. "To enable fast rasterization, Gaussian Splatting (GS) methods employ a local affine approximation to project 3D Gaussian primitives to 2D Gaussians on the image plane"

- Marching Tetrahedra: A mesh extraction algorithm that reconstructs surfaces by processing a tetrahedralization of space. "and adopt Marching Tetrahedra~\cite{yu2024gaussian,guedon2025milo} for large-scale scenes in the Tanks & Temples dataset."

- Median depth: The depth along a ray where transmittance first drops to 0.5; robust to outliers relative to expected depth. "Following prior Gaussian Splatting methods, we use the median depth for geometric regularization:"

- Multi-view consistency: Agreement of reconstructed geometry or depth across different viewpoints. "exhibiting inherent multi-view consistency and robustness to floaters."

- Multi-view regularization: A loss encouraging consistency across views, often via reprojection-based constraints. "and multi-view regularization~\cite{chen2024pgsr};"

- NeRF: Neural Radiance Fields, which model view-dependent color and density functions for photorealistic view synthesis. "NeRF~\cite{mildenhall2020nerf} models a scene as a continuous radiance field,"

- Normal consistency loss: A regularizer encouraging smooth or consistent surface normals across views or neighboring points. "normal consistency loss~\cite{huang20242d},"

- Objects as Volumes: A theoretical framework interpreting opaque objects as stochastic volumes with well-defined attenuation and transmittance. "we leverage the theoretical foundation provided by the recent work `Objects as Volumes'~\cite{Miller:VOS:2024},"

- Occupancy: The probability or degree to which a spatial point belongs to a solid object (complementary to vacancy). "positions closer to the Gaussian center have higher occupancy;"

- Opacity: A per-pixel or per-primitive measure of occlusion strength used in compositing. "The opacity of the 2D Gaussian is defined as the maximum value of the projected 2D Gaussian:"

- Opacity-weighted ray averaging: A depth estimation heuristic that averages depths along a ray weighted by opacity, prone to boundary bias. "via opacity-weighted ray averaging~\cite{chen2024pgsr} that is easily biased by view-specific floaters."

- Rasterization: A rendering approach that projects scene primitives to the image plane and composites in screen space. "leverages efficient rasterization, enabling fast optimization and real-time novel view synthesis."

- Ray marching: Sampling-based integration along camera rays through a volume or field to compute color/depth. "geometry-grounded radiance fields typically rely on dense sampling, e.g., ray marching, along camera rays,"

- Signed distance function (SDF): A scalar field giving the signed distance to the nearest surface, widely used for surface modeling. "parameterize density through a signed distance function (SDF) and design rendering weights to obtain more faithful surfaces."

- Splat compositing: The accumulation rule used when compositing projected Gaussian splats in image space. "Standard Gaussian Splatting yields step-wise transmittance under splat compositing."

- Stochastic solids: Randomized volumetric models of opaque objects enabling consistent attenuation and transmittance definitions. "We prove that Gaussian primitives are equivalent to stochastic solids,"

- Surfels: Surface elements represented as oriented discs or 2D primitives approximating local surface patches. "GFSGS~\cite{jiang2025geometry} further leverages stochastic solids to construct 2D surfels for shape reconstruction."

- Transmittance: The fraction of light that survives traveling from the ray origin to a depth without interaction. "the overall transmittance at along the ray is the product of the transmittance calculated at each Gaussian primitive"

- TSDF fusion: A volumetric integration method using a Truncated Signed Distance Function to fuse depth maps into a mesh. "we apply the TSDF fusion~\cite{curless1996volumetric} implemented by Open3D~\cite{Zhou2018}"

- Vacancy: The complement of occupancy; a scalar field indicating emptiness probability used in stochastic volume formulations. "The vacancy value on the ray equals the transmittance on the front side of the Gaussians."

- Volume rendering: Rendering by integrating emission/absorption (and sometimes scattering) along rays through a volume. "presents a method to render stochastic solids using volume rendering."

- Volumetric attenuation: Continuous reduction of light intensity within a volume due to absorption along the path. "the stochastic solids model volumetric attenuation continuously"

- Voxel-grid optimization: Direct optimization of volumetric grids (voxels) to accelerate radiance field training and inference. "Plenoxel's voxel-grid optimization~\cite{fridovich2022plenoxels}"

- Warp-level reductions: GPU parallel primitives that aggregate values across threads in a warp for efficient computation. "use warp-level reductions for gradient accumulation."
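As a concrete companion to the Chamfer Distance entry above, the sketch below computes one common symmetric variant: the mean nearest-neighbour distance from each set to the other, averaged over both directions. Benchmarks differ in details such as squared versus unsquared distances, outlier thresholds, and point sampling, so this should not be read as the exact DTU evaluation protocol. The function names and the two tiny point sets are illustrative.

```python
import math

def nearest_distance(point, cloud):
    """Euclidean distance from one point to its closest point in a cloud."""
    return min(math.dist(point, other) for other in cloud)

def chamfer_distance(cloud_a, cloud_b):
    """Symmetric Chamfer distance: average of the two one-sided mean distances."""
    a_to_b = sum(nearest_distance(p, cloud_b) for p in cloud_a) / len(cloud_a)
    b_to_a = sum(nearest_distance(q, cloud_a) for q in cloud_b) / len(cloud_b)
    return 0.5 * (a_to_b + b_to_a)

# Two tiny hypothetical point sets that agree on one point and differ on the other.
reconstruction = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
ground_truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 1.0)]

print(chamfer_distance(reconstruction, ground_truth))
```

This brute-force version compares every pair of points, which is fine for a handful of points; real evaluations on dense scans typically use a spatial index for the nearest-neighbour queries.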
