Vibe Coding Kills Open Source

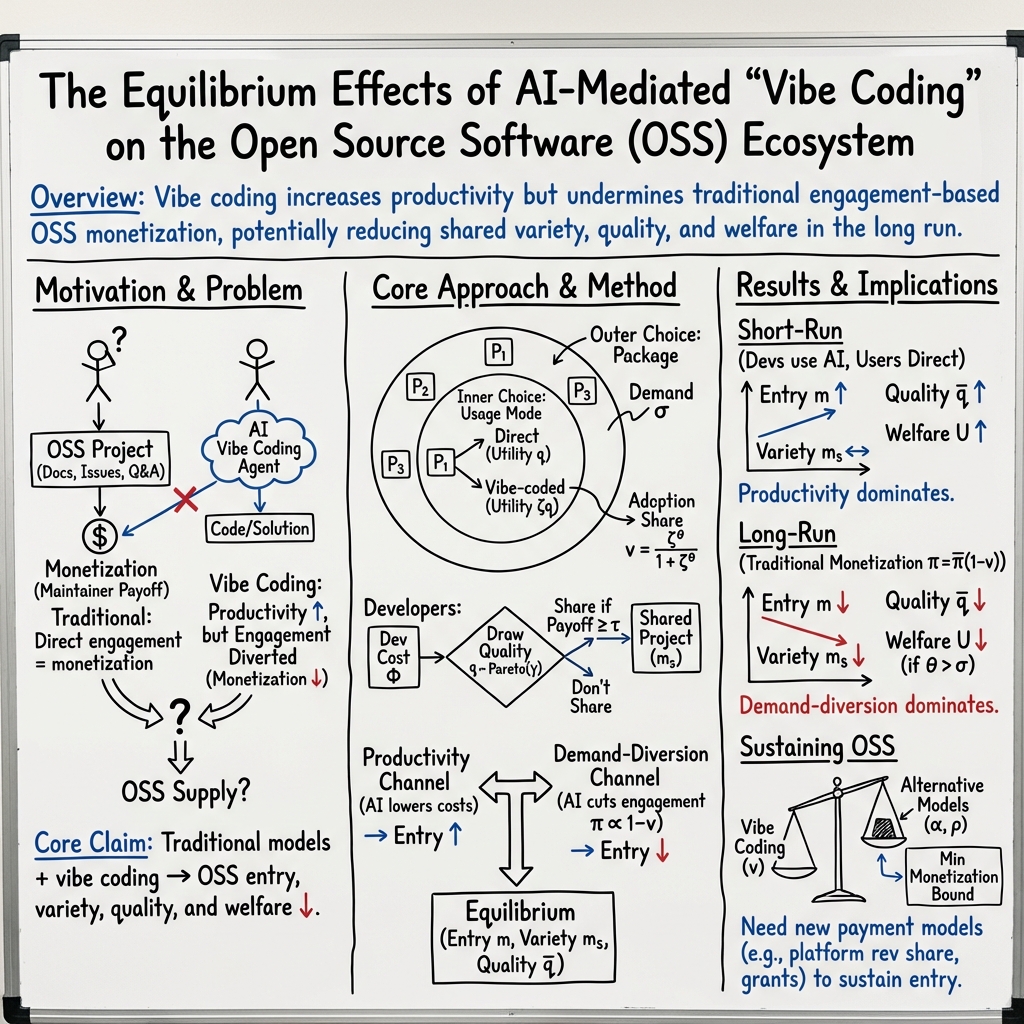

Abstract: Generative AI is changing how software is produced and used. In vibe coding, an AI agent builds software by selecting and assembling open-source software (OSS), often without users directly reading documentation, reporting bugs, or otherwise engaging with maintainers. We study the equilibrium effects of vibe coding on the OSS ecosystem. We develop a model with endogenous entry and heterogeneous project quality in which OSS is a scalable input into producing more software. Users choose whether to use OSS directly or through vibe coding. Vibe coding raises productivity by lowering the cost of using and building on existing code, but it also weakens the user engagement through which many maintainers earn returns. When OSS is monetized only through direct user engagement, greater adoption of vibe coding lowers entry and sharing, reduces the availability and quality of OSS, and reduces welfare despite higher productivity. Sustaining OSS at its current scale under widespread vibe coding requires major changes in how maintainers are paid.

Explain it Like I'm 14

Overview

This paper looks at how “vibe coding” — using AI agents to build apps by stitching together open-source code — could change the world of open-source software (OSS). The big idea is that AI makes building software faster and easier, but it also changes how people use and interact with open-source projects. The authors ask: if AI does more of the work quietly in the background, will open-source creators still get the attention and rewards they need to keep making and maintaining the code we all rely on?

Key Questions

Here are the main questions the paper tries to answer:

- Does vibe coding increase productivity enough to outweigh the loss of direct user engagement (like reading docs, filing issues, or giving stars) that many maintainers rely on?

- How will AI-mediated usage affect the number and quality of open-source projects in the long run?

- Under what conditions does vibe coding help or hurt the open-source ecosystem?

- What changes are needed to keep open source healthy if vibe coding becomes widespread?

How They Studied It

To explore these questions, the authors build a stylized economic model of the open-source ecosystem. Think of it as a simulated marketplace where both creators and users make choices:

- Developers decide whether to build and share a project:

  - First, they invest time and effort to build something (this costs them).

  - After they see how good their project turned out, they decide whether to publish it as open source (this also has fixed costs: docs, packaging, maintenance).

  - If they share and people use it directly, they get “rewards”: reputation, job opportunities, donations, contracts, and so on.

- Users pick which packages to use:

  - Users prefer more and better choices. This is like shopping in a store with many options; more variety helps people find better matches.

  - With vibe coding, users can use a package either directly (reading docs, visiting the website) or through an AI agent (which “just does it” for them). The AI option often raises productivity, but it reduces direct engagement with the maintainer.

- Two key ideas drive the model:

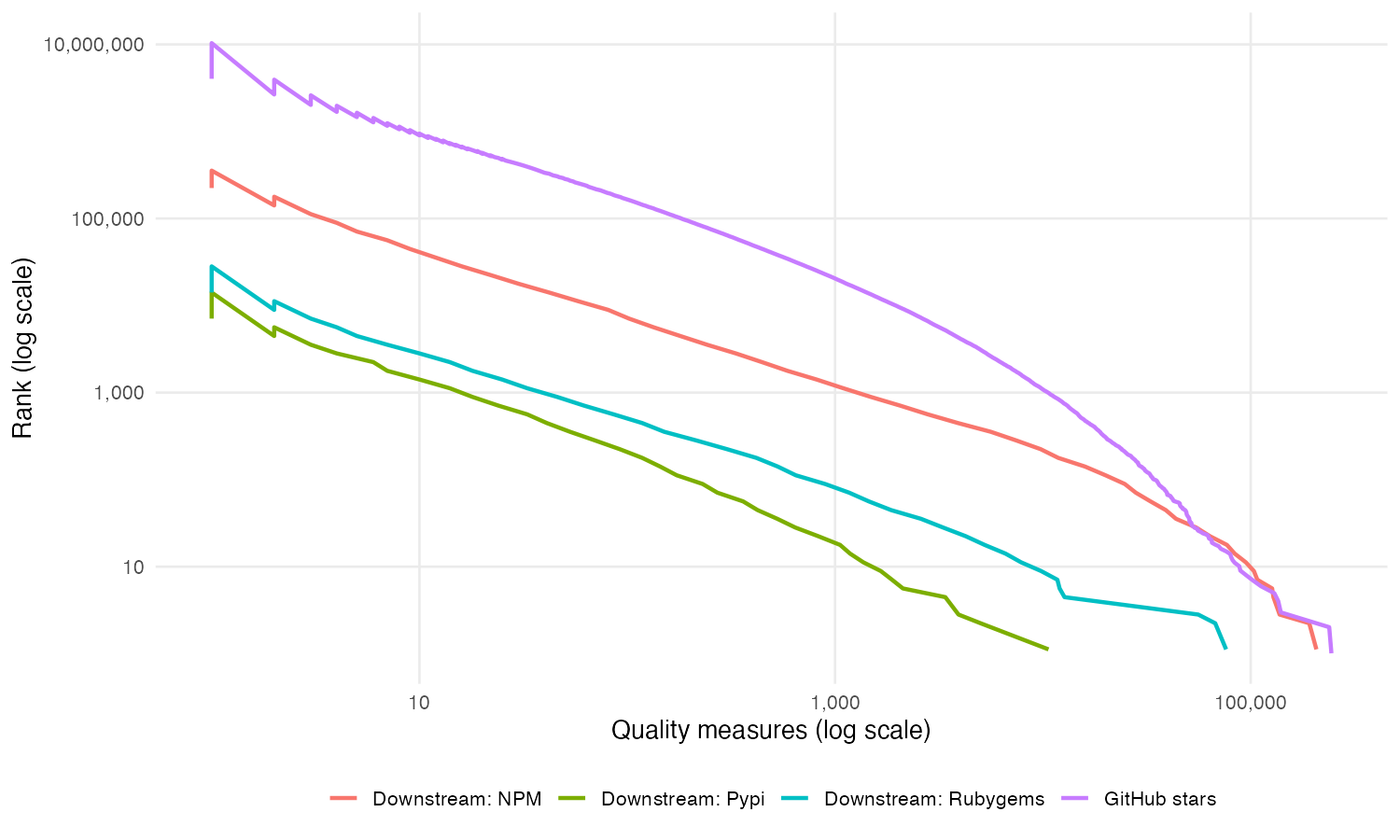

  - Heavy tails: Most projects get little attention, but a small number are extremely popular. This is like YouTube, where a few videos get millions of views and most barely get any. The model uses this fact to capture realistic differences in project “quality.”

  - Software-begets-software: New software is built using existing software. If the ecosystem has more and better packages, it becomes cheaper and faster to build new ones. This creates feedback loops: improvements today make future improvements easier.

- Vibe coding changes two things at once:

  - Productivity channel: AI lowers the cost and time to use and combine packages. That’s good for users and developers.

  - Demand-diversion channel: Using AI agents reduces direct contact with maintainers (fewer doc visits, issues, Q&A, stars). Since many maintainers earn rewards through those interactions, their ability to “get paid” drops.
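The developer's share-or-not decision described above can be illustrated with a minimal Python sketch. It assumes quality q is drawn from a Pareto distribution on q ≥ 1 with shape γ, and that the private payoff from sharing is linear in quality, Π(q) = π·q, where π is the per-user reward and τ is the fixed sharing cost. The linear payoff, the parameter values, and the function names are illustrative assumptions, not the paper's specification.

```python
import random


def sharing_cutoff(pi: float, tau: float) -> float:
    """Cutoff q0 solving Pi(q0) = tau under the ASSUMED payoff Pi(q) = pi * q.

    Pareto quality is bounded below by 1, so the cutoff is never below 1.
    """
    return max(1.0, tau / pi)


def shared_fraction(gamma: float, pi: float, tau: float) -> float:
    """Closed form: P(q >= q0) = q0 ** (-gamma) for Pareto(shape=gamma) on q >= 1."""
    return sharing_cutoff(pi, tau) ** (-gamma)


def simulate_shared_fraction(gamma: float, pi: float, tau: float,
                             n: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo check: draw qualities, share only projects at or above the cutoff."""
    rng = random.Random(seed)
    q0 = sharing_cutoff(pi, tau)
    shared = sum(1 for _ in range(n) if rng.paretovariate(gamma) >= q0)
    return shared / n


# Hypothetical parameter values, chosen only for illustration.
GAMMA, TAU = 1.5, 2.0
for per_user_reward in (1.0, 0.5):
    print(
        f"pi = {per_user_reward}: cutoff = {sharing_cutoff(per_user_reward, TAU):.1f}, "
        f"shared fraction = {shared_fraction(GAMMA, per_user_reward, TAU):.3f} (closed form), "
        f"{simulate_shared_fraction(GAMMA, per_user_reward, TAU):.3f} (simulated)"
    )
```

In this toy setup, halving π from 1.0 to 0.5 doubles the cutoff from 2 to 4 and cuts the shared fraction from about 35% to 12.5%, which is the demand-diversion channel in miniature.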

In everyday terms, imagine a robot assistant that can build your project by secretly pulling together lots of Lego pieces. You get your project quickly, but you don’t see which Lego makers helped, you don’t read their guides, and you don’t leave reviews. The Lego makers become less visible, earn fewer rewards, and some stop making new pieces.

Main Findings and Why They Matter

Here are the core results of the paper:

- Under current OSS business models (where maintainers mainly earn through direct engagement), more vibe coding leads to fewer and worse open-source projects overall.

  - Even though AI makes building software easier, the drop in direct engagement shrinks the “reward per user” for maintainers.

  - That raises the bar for which projects are worth sharing, so fewer projects get published.

  - Variety shrinks, and average quality among shared OSS can fall over time.

  - Surprisingly, user welfare (the overall benefit to users) can go down even as AI gets better, because the ecosystem supplying the essential building blocks withers.

- Feedback loops can speed up the decline.

  - The same mechanisms that once helped open source grow fast (more and better packages making it cheaper to build even more) can work in reverse.

  - If AI pulls engagement away quickly, fewer projects get shared, making it harder and costlier to build new software, and the ecosystem contracts faster.

- There’s a positive scenario: if vibe coding is used only by professional developers and doesn’t hide projects from end users, the bad “demand-diversion” channel largely disappears.

  - In that case, AI mostly lowers development costs without reducing maintainers’ rewards from direct users.

  - Entry goes up, average quality rises, and the ecosystem gets stronger.

- To keep open source healthy under widespread vibe coding, maintainers need new ways to get paid that don’t depend only on direct engagement.

  - The paper suggests that, with realistic numbers, per-user monetization must stay close to current levels to avoid large declines in OSS provision.

  - In other words, AI’s productivity boost isn’t enough by itself; the ecosystem needs better funding models.

What This Means for the Future

If vibe coding becomes the default way software is built, the open-source world — which powers most modern software — could suffer unless we change how maintainers are rewarded. Possible implications include:

- Platforms and AI vendors may need to share value back to open-source maintainers whose work they rely on. For example, usage-based payments from AI agents to libraries they use.

- Companies and foundations could expand sponsorships, grants, or contracts to support critical OSS infrastructure.

- Documentation, discovery, and attribution tools might need updates so that AI-mediated usage still translates into visibility and rewards for the right projects.

- Policymakers and ecosystem leaders could encourage standards that link AI usage to transparent upstream attribution and fair compensation.

In short: vibe coding is powerful, but without new payment and attribution systems, it risks draining the energy that keeps open source alive. The paper’s message is simple and urgent — if AI changes how demand is expressed, we must also change how supply is sustained.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following items highlight what remains missing, uncertain, or unexplored, with concrete directions for future research:

- Specify and empirically estimate the functional relationship between mediated usage and maintainer monetization (i.e., how per-user reward π declines with vibe-coding share v). Current treatment acknowledges π depends on usage technology but does not pin down π(v) or its elasticity.

- Calibrate core parameters with microdata: σ (substitutability across packages), θ (substitutability across usage modes), ζ (AI capability/productivity), β (software share in development), and γ (quality-tail shape). Provide estimates by segment (language, ecosystem, enterprise vs individual) and validate the nested discrete-choice fit.

- Develop a dynamic model of entry, exit, and maintenance under rising AI capability. Analyze transitional dynamics, speed of adjustment, and potential tipping points versus the static long-run equilibrium.

- Endogenize how vibe coding affects costs: allow τ (sharing/packaging/maintenance) and Φ (development) to respond to AI (e.g., AI-generated docs may lower τ; AI-created code may raise long-run maintenance burdens).

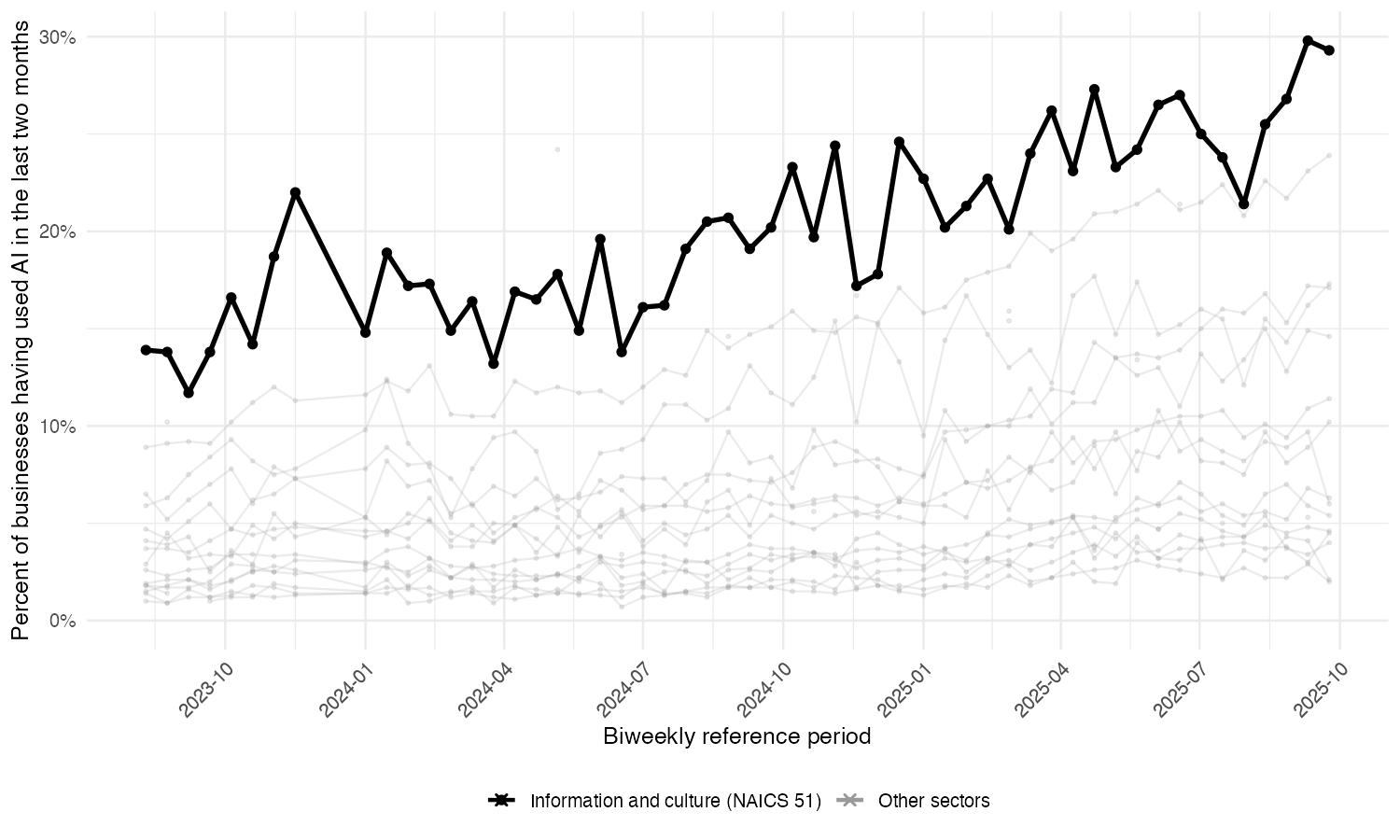

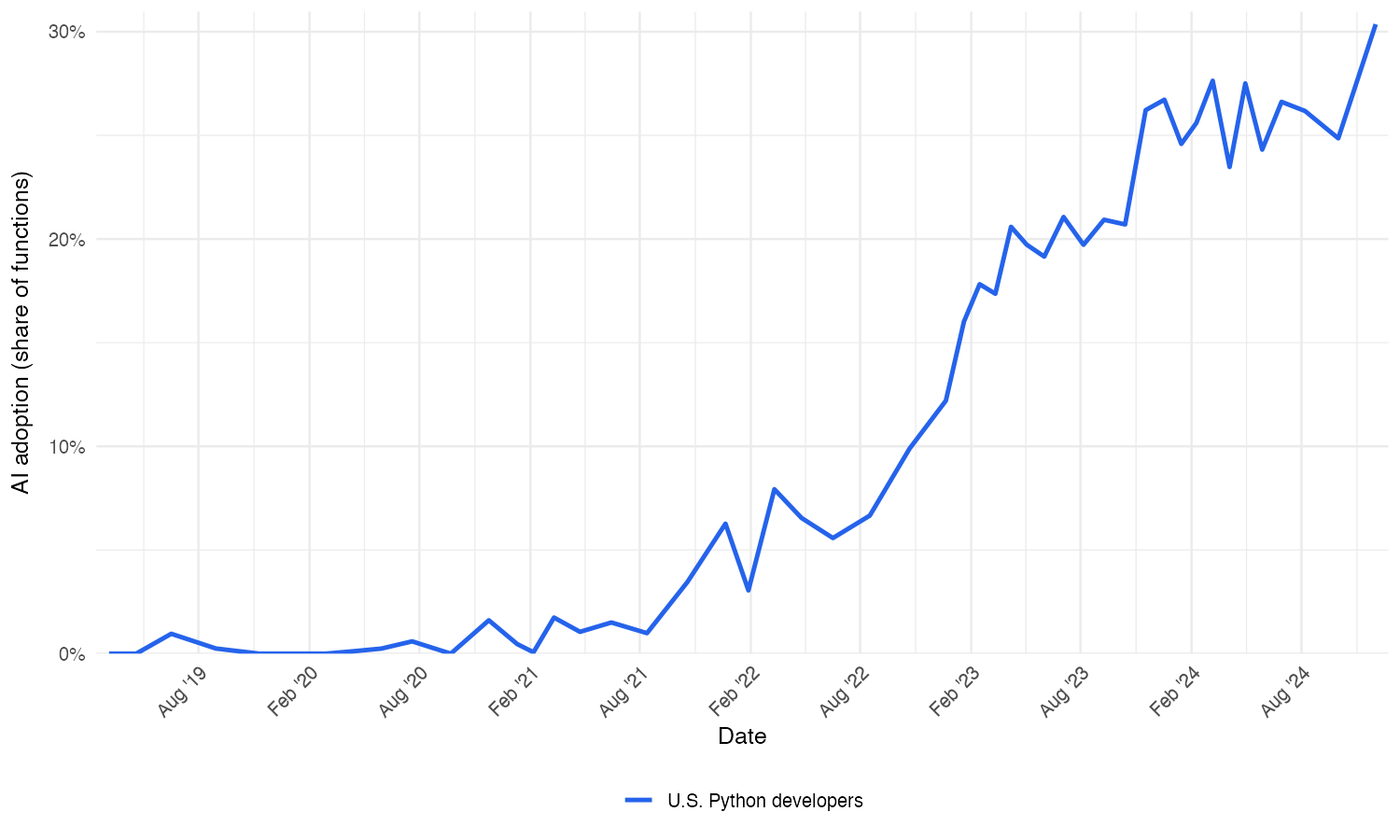

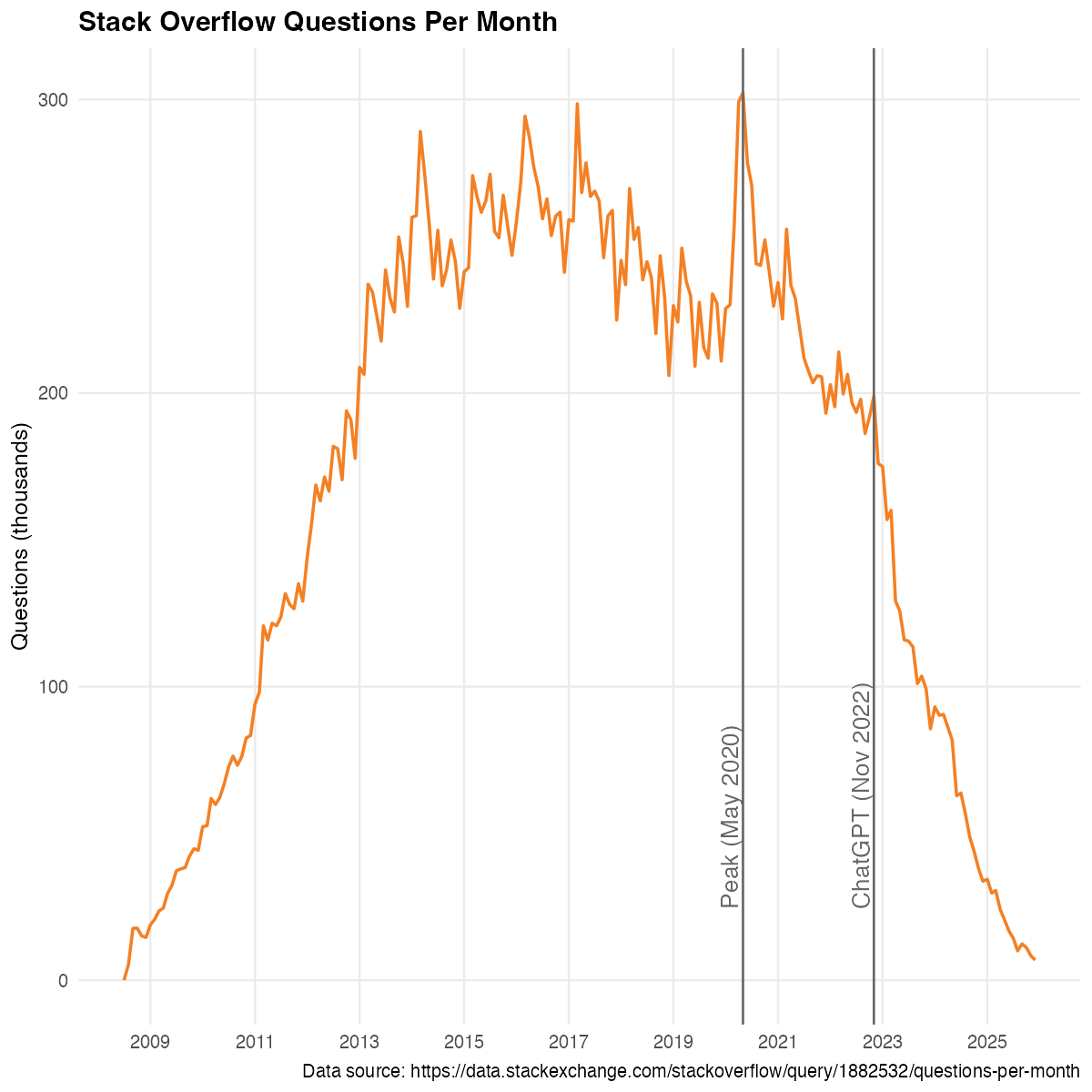

- Quantify the demand-diversion channel beyond case studies (Stack Overflow, Tailwind CSS) with broad, causal evidence linking AI adoption to declines in maintainers’ monetizable engagement (docs traffic, sponsor revenue, consulting leads) across many projects.

- Test the key assumption that mediated adoption substitutes for engagement rather than complements it. Identify contexts where AI increases discoverability or upstream signals (e.g., agent-generated citations) and measure net effects on π.

- Characterize threshold conditions for welfare decline under vibe coding (in terms of θ, ζ, β, γ, and π(v)). Provide closed-form cutoffs or numerical maps showing when productivity gains are outweighed by monetization losses.

- Relax the Pareto-tail assumption and the resulting knife-edge prediction that m_s is independent of entry m. Explore alternative quality distributions and their implications for variety, average quality, and welfare.

- Incorporate multi-segment demand and supply (professional developers vs end-users, enterprise vs indie, different languages) with segment-specific v, π(v), and adoption elasticities. Assess whether vibe coding harms some segments while benefiting others.

- Model collaboration and team production for maintainers (not just a single decision maker per project). Analyze how team structure and contributor networks affect sharing cutoffs, maintenance capacity, and monetization.

- Account for package interdependence and modularity (dependency networks, versioning, security externalities). Extend the model to capture how mediated usage affects upstream maintenance, downstream reliability, and ecosystem resilience.

- Improve quality and success measurement beyond stars and dependencies. Triangulate with downloads, CVEs, runtime telemetry, sponsorships, and enterprise procurement signals to reduce measurement bias.

- Investigate how vibe coding alters the distribution of realized project quality (γ) via selection, reuse, and composability. Does AI shift the tail heaviness or induce more mid-quality entrants?

- Examine the impact of AI mediation on security, reliability, and bug resolution rates. Quantify whether declining engagement slows patching and increases vulnerability risk, and integrate these externalities into welfare.

- Analyze platform and vendor strategy: AI vendors’ incentives to attribute, compensate, or license OSS content; bargaining models for revenue sharing; and policy environments that shape willingness to pay.

- Design and evaluate attribution and usage-measurement infrastructure for mediated workflows (e.g., telemetry standards, privacy-preserving counters, agent-level provenance). Assess feasibility and gaming risks for usage-based compensation.

- Compare alternative monetization regimes that could sustain OSS under vibe coding: usage-based payments from AI vendors, sponsorship matching, platform tax/transfer schemes, or procurement reforms. Model their incidence, efficiency, and equilibrium effects on entry and quality.

- Explore licensing constraints and compliance under AI mediation (copyleft, attribution requirements). Quantify how licensing frictions affect agent adoption, ecosystem composition, and maintainers’ bargaining power.

- Identify and estimate the elasticity of mediated adoption (θ) using exogenous shocks to AI capability (e.g., staged model releases, policy changes). Use differences across ecosystems to validate the S-curve and responsiveness assumptions.

- Separate the “developer-only” vibe-coding benchmark from mixed end-user mediation in data. Measure the share of final-user applications built via agents and its distinct impact on π and τ relative to developer-only assistance.

- Test robustness to alternative discrete-choice structures (e.g., nested logit vs other RUMs, correlated taste shocks) and assess how model choice affects welfare and entry predictions.

- Quantify the potential for AI to increase effective variety (e.g., by surfacing niche packages or auto-generating glue code) versus reducing visible variety through consolidation. Measure net changes in m_s and match quality.

- Examine whether AI changes labor cost κ and the software share β in practice (e.g., shifting effort from hand-coded labor to integration). Estimate how these shifts alter the software-begets-software feedback.

- Provide validation of the predicted decline in average OSS quality under vibe coding with longitudinal evidence (e.g., changes in bug density, release cadence, dependency health) across ecosystems.

- Develop policy evaluation frameworks comparing “raise π” versus “lower Φ/τ” interventions for closing the gap to first-best. Include cost effectiveness, administrative feasibility, and platform governance considerations.
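Several items above call for estimating the quality-tail shape γ. As a minimal sketch of the log-rank approach, the Python below simulates Pareto-distributed "success" values and regresses log(rank) on log(size); under a Pareto tail the slope is approximately the negative of γ. The synthetic data, the sample size, and plain OLS on raw observations are assumptions for illustration. The paper reports regressions on binned medians of GitHub stars and dependencies, which this sketch does not reproduce.

```python
import math
import random
from statistics import fmean


def log_rank_slope(sizes):
    """OLS slope of log(rank) on log(size), with rank 1 assigned to the largest value."""
    ordered = sorted(sizes, reverse=True)
    xs = [math.log(s) for s in ordered]
    ys = [math.log(rank) for rank in range(1, len(ordered) + 1)]
    x_bar, y_bar = fmean(xs), fmean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    return sxy / sxx


# Synthetic "project success" data with a known tail shape (hypothetical value).
TRUE_GAMMA = 1.5
rng = random.Random(42)
sample = [rng.paretovariate(TRUE_GAMMA) for _ in range(50_000)]

# The implied Pareto exponent is the negative of the fitted slope.
gamma_hat = -log_rank_slope(sample)
print(f"true gamma = {TRUE_GAMMA}, estimated gamma = {gamma_hat:.2f}")
```

On real data the fit would normally be restricted to the upper tail, since stars and dependency counts need not follow a Pareto law over their whole range.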

Practical Applications

Overview

The paper models how AI-mediated “vibe coding”—agents that select, compose, and modify open-source software (OSS) on users’ behalf—raises productivity while diverting engagement away from traditional channels (docs, issues, Q&A) that many maintainers rely on for monetization. Under current engagement-based business models, the model predicts that increasing vibe-coding adoption reduces OSS entry, sharing, variety, and average quality, and can lower welfare despite higher productivity. Sustaining OSS at scale under widespread vibe coding requires changes in how maintainers are paid and in how attribution, measurement, and compensation are integrated into AI tools and platforms.

Below are practical applications, organized by what can be implemented immediately versus what likely requires longer-term development, standards, and policy.

Immediate Applications

The following items can be piloted or deployed with existing tools, platform capabilities, and organizational workflows.

- Software and AI tooling

  - “Upstream-aware” AI coding assistants in IDEs

    - Feature: Show which OSS packages the agent used or referenced, link to official documentation, and surface maintainer funding links (e.g., GitHub Sponsors) inline with suggestions.

    - Workflow: Each code completion includes a compact SBOM snippet and a “support maintainer” button.

    - Sectors: Software, education.

    - Assumptions/Dependencies: Requires agent-side SBOM generation, package attribution, and access to machine-readable funding metadata (e.g., OpenSSF, GitHub FUNDING.yml).

  - OSS-friendly agent settings

    - Feature: An assistant mode that prefers posting sanitized questions and answers to public forums (e.g., Stack Overflow, GitHub Discussions) to restore engagement externalities.

    - Sectors: Software, education.

    - Assumptions/Dependencies: Organizational privacy controls; consent UX; forum API automation.

- Platforms and registries (npm, PyPI, GitHub)

  - Funding metadata normalization and APIs

    - Feature: Standardize funding links, license tags, and “AI-mediated usage” flags; expose APIs for assistants to retrieve sponsor info for any dependency.

    - Tool: Registry-level Funding API endpoints and badges.

    - Sectors: Software.

    - Assumptions/Dependencies: Minor schema changes, community coordination; backward compatibility.

  - SBOM-first workflows

    - Feature: Registry integration that makes SBOM export a default artifact for projects and agent toolchains.

    - Sectors: Software, healthcare, energy, finance (compliance-heavy sectors).

    - Assumptions/Dependencies: Support for SPDX/CycloneDX; CI/CD integration.

- Enterprise IT and engineering management

  - OSS sustainability budgets tied to mediated usage

    - Workflow: A bot analyzes SBOMs from AI-generated code, ranks critical upstream packages, and allocates recurring sponsorship or support contracts.

    - Tool: CI stage “Support Check” that gates deploys on sponsorship or support SLAs for high-risk/critical OSS.

    - Sectors: Software, finance, healthcare, energy, robotics.

    - Assumptions/Dependencies: Procurement approval, vendor risk processes, SBOM availability, finance ops.

  - Engagement-preserving practices

    - Workflow: Require teams to open public issues for reproducible bugs, contribute docs improvements, and follow “link-back” norms in agent-assisted work.

    - Sectors: Software, education.

    - Assumptions/Dependencies: Team training; legal review for public issue posting.

- Maintainers and OSS teams

  - Diversified monetization beyond engagement

    - Actions: Offer paid support tiers, enterprise add-ons, dual licensing (e.g., special terms for AI-mediated use), and machine-readable funding metadata.

    - Sectors: Software (all OSS).

    - Assumptions/Dependencies: License compatibility; community acceptance; clear pricing and SLAs.

  - Agent-friendly documentation

    - Actions: Structure docs for RAG and assistants (stable URLs, semantic headings, FAQ sections, embeddings), and instrument doc telemetry ethically to infer mediated traffic.

    - Sectors: Software.

    - Assumptions/Dependencies: Privacy compliance; opt-in analytics; performance costs.

- Policy and governance (organizational and public)

  - Procurement checklists for OSS sustainability

    - Action: Include “OSS sustainability clause” in contracts (sponsorship/support for critical dependencies; SBOM submission; update cadence).

    - Sectors: Government, healthcare, finance, energy.

    - Assumptions/Dependencies: Legal frameworks; supplier onboarding.

  - Tax credits or matching grants for OSS sponsorship

    - Action: Offer credits for verified support of critical OSS infrastructure used in production systems.

    - Sectors: Public policy.

    - Assumptions/Dependencies: Verification via SBOMs and receipts; fraud prevention.

- Measurement and research

  - Estimating substitution elasticity and diversion (θ and v)

    - Actions: Collect adoption curves, correlate AI usage with declines in public Q&A/docs traffic, and run field experiments to quantify engagement diversion.

    - Sectors: Academia, platforms, AI vendors.

    - Assumptions/Dependencies: Access to usage logs and traffic data; IRB/privacy; standardized metrics.

- Daily practice for developers and teams

  - “Support what you use” norms

    - Actions: Configure assistants to surface upstream docs, star repos, file issues, and sponsor critical packages; include attribution files in repos.

    - Sectors: Daily life in software.

    - Assumptions/Dependencies: Team norms; small time costs; easy sponsor UX.
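The "OSS sustainability budgets tied to mediated usage" workflow above can be sketched as a small script. It assumes SBOMs are available as CycloneDX-style JSON documents with a top-level `components` array whose entries carry a `name` field, and it uses "number of SBOMs a package appears in" as a crude stand-in for criticality. The package names, the budget, and the proportional allocation rule are hypothetical; a real deployment would need a better criticality score plus the procurement steps listed above.

```python
import json
from collections import Counter

# Hypothetical SBOMs for three internal services (CycloneDX-style JSON, heavily trimmed).
SAMPLE_SBOMS = [
    '{"bomFormat": "CycloneDX", "components": [{"name": "libfoo"}, {"name": "libbar"}]}',
    '{"bomFormat": "CycloneDX", "components": [{"name": "libfoo"}]}',
    '{"bomFormat": "CycloneDX", "components": [{"name": "libfoo"}, {"name": "libbaz"}]}',
]


def count_dependency_usage(sbom_docs):
    """Count how many SBOMs each package name appears in (at most once per SBOM)."""
    counts = Counter()
    for doc in sbom_docs:
        sbom = json.loads(doc)
        names = {c["name"] for c in sbom.get("components", []) if "name" in c}
        counts.update(names)
    return counts


def allocate_budget(counts, budget):
    """Split a sponsorship budget in proportion to usage counts (ASSUMED rule)."""
    total = sum(counts.values())
    if total == 0:
        return {}
    return {name: round(budget * n / total, 2) for name, n in counts.most_common()}


usage = count_dependency_usage(SAMPLE_SBOMS)
allocation = allocate_budget(usage, budget=1000.0)
for name, amount in allocation.items():
    print(f"{name}: used in {usage[name]} SBOM(s), sponsor {amount:.2f}")
```

Counting each package once per SBOM keeps a single service with many transitive copies of one library from dominating the ranking.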

Long-Term Applications

These items require standards, cross-platform coordination, productization at scale, or regulatory frameworks. They capitalize on the paper’s model and comparative statics to design incentives that counteract engagement diversion under vibe coding.

- Cross-ecosystem attribution and compensation

  - Open Attribution Ledger for AI-mediated software use

    - Product: A standardized, cryptographic ledger that records which upstream OSS resources agents used (content hashes + package IDs) and enables usage-based micropayments.

    - Sectors: Software, finance (payments infrastructure), policy.

    - Assumptions/Dependencies: Cross-vendor standards, privacy-preserving telemetry, governance and dispute resolution, transaction costs and fraud controls.

  - Per-use royalty programs for OSS in AI assistants

    - Product: AI vendors pool revenue and distribute shares to maintainers based on measured mediated usage and criticality scores.

    - Sectors: Software, AI industry, policy.

    - Assumptions/Dependencies: Accurate measurement, agreed criticality weighting, legal license compatibility, antitrust-safe collaboration.

- Licensing and standards evolution

  - “Mediated-use compatible” OSS licenses

    - Product: Licenses (or addenda) that require compensation or attribution when usage is primarily AI-mediated, with OSI-guided principles to preserve openness while addressing appropriation gaps.

    - Sectors: Software, legal/policy.

    - Assumptions/Dependencies: Community consensus; enforcement mechanisms; compatibility with existing ecosystems.

  - Open Source Sustainability Standard

    - Product: A certification for packages that meet sustainability criteria (funding channels, governance, release practices) and qualify for enterprise procurement preference or assistant prioritization.

    - Sectors: Software, policy, procurement-heavy industries.

    - Assumptions/Dependencies: Standard-setting body, audit processes, incentives for adoption.

- Agent ecosystems that restore public engagement

  - Maintainer co-pilots

    - Product: OSS-side agents that triage issues, generate high-quality public Q&A from private agent interactions, and maintain docs continuously to channel mediated usage back into visible engagement.

    - Sectors: Software, education.

    - Assumptions/Dependencies: API integrations with forums, guardrails to prevent spam/low-quality content, maintainers’ oversight.

- Macro-level policy instruments

  - AI-mediated usage contributions (industry fund)

    - Policy: A levy or voluntary pledge from AI vendors and large enterprises into an OSS infrastructure fund, allocated by measured dependency criticality and usage.

    - Sectors: Policy, software, finance.

    - Assumptions/Dependencies: Governance, allocation transparency, international coordination.

  - SBOM mandates and sustainability reporting

    - Policy: Require SBOMs and OSS sustainability reporting for regulated sectors (healthcare, energy, finance); tie compliance to funding or procurement preference.

    - Sectors: Healthcare, energy, finance, public sector.

    - Assumptions/Dependencies: Regulatory capacity; tooling across vendors; auditability.

- Sector-specific reliability programs

  - Critical OSS support consortia

    - Product: Industry alliances funding and staffing critical OSS (e.g., crypto libraries, data frameworks) with long-term SLAs and redundancy, acknowledging “software-begets-software” amplification.

    - Sectors: Healthcare (medical software), energy (grid software), finance (risk & trading), robotics (control stacks).

    - Assumptions/Dependencies: Multi-stakeholder governance; shared risk models; legal agreements.

- Extending the model’s method to other knowledge commons

  - Apply nested logit + heavy-tail entry to content ecosystems

    - Use cases: Wikipedia editing, academic preprints, MOOCs, and design assets, where AI mediation may divert engagement (citations, forum activity) while boosting consumption.

    - Sectors: Academia, education, media.

    - Assumptions/Dependencies: Domain-specific data to estimate θ (substitution) and γ (tail heaviness); cross-platform telemetry access.

- Research-grade calibration and policy evaluation

  - Structural estimation of the ecosystem under vibe coding

    - Product: An empirical workflow to estimate θ, σ, γ, β, and per-user monetization π; simulate counterfactuals for different monetization designs and assistant capabilities.

    - Sectors: Academia, policy, AI vendors.

    - Assumptions/Dependencies: Longitudinal datasets (usage, engagement, sponsorship), identification strategies, public-private data collaborations.
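To make the attribution-ledger and royalty-pool ideas above more concrete, here is a minimal Python sketch of a hash-chained usage ledger with a pro-rata payout. Everything in it is a hypothetical illustration: the record layout, the `pkg:example/...` identifiers, the criticality weights, and the payout rule are assumptions, not a proposed standard or anything specified in the paper.

```python
import hashlib
from collections import defaultdict
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first ledger entry


@dataclass(frozen=True)
class UsageRecord:
    package_id: str    # identifier of the upstream package (hypothetical IDs below)
    content_hash: str  # SHA-256 of the upstream resource the agent used
    prev_hash: str     # digest of the previous record, chaining the ledger

    def digest(self) -> str:
        payload = f"{self.package_id}|{self.content_hash}|{self.prev_hash}"
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class Ledger:
    def __init__(self):
        self.records = []

    def append(self, package_id: str, content: bytes) -> UsageRecord:
        """Record one mediated use of an upstream resource."""
        prev = self.records[-1].digest() if self.records else GENESIS
        record = UsageRecord(package_id, hashlib.sha256(content).hexdigest(), prev)
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Check that every record points at the digest of the one before it."""
        prev = GENESIS
        for record in self.records:
            if record.prev_hash != prev:
                return False
            prev = record.digest()
        return True


def distribute_pool(ledger: Ledger, pool: float, criticality: dict) -> dict:
    """Split a revenue pool by usage count times criticality weight (default 1.0)."""
    weights = defaultdict(float)
    for record in ledger.records:
        weights[record.package_id] += criticality.get(record.package_id, 1.0)
    total = sum(weights.values())
    if total == 0:
        return {}
    return {pkg: pool * w / total for pkg, w in weights.items()}


ledger = Ledger()
for _ in range(3):
    ledger.append("pkg:example/parser", b"parser docs v1")
ledger.append("pkg:example/crypto", b"crypto source v2")

payouts = distribute_pool(ledger, pool=100.0, criticality={"pkg:example/crypto": 3.0})
print("ledger valid:", ledger.verify())
print("payouts:", payouts)
```

The hash chain only makes tampering detectable after the fact; the privacy, governance, and fraud-control dependencies listed above are outside the scope of this sketch.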

Notes on Assumptions and Dependencies

- Accurate attribution of upstream usage is foundational for most applications. This depends on agent-side SBOM generation, content hashing, and standardized metadata across registries.

- Privacy, consent, and security constraints limit public posting and telemetry; solutions must be opt-in, privacy-preserving, and compliant with regulations.

- Legal and licensing frameworks must evolve to recognize AI-mediated usage without undermining openness or creating fragmentation.

- Measured substitution elasticity between direct and mediated usage (θ > σ in the model) and heavy-tailed project quality (γ > σ) are central to predicting diversion strength; real-world calibration is necessary.

- The “software-begets-software” parameter (β) magnifies both positive and negative shocks; productivity gains alone may not sustain OSS without compensating monetization.

- Community acceptance and platform cooperation (AI vendors, registries, enterprises) are critical for standards and funding mechanisms to work at scale.
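One way to see why β magnifies shocks is a textbook roundabout-production setup in which software output is itself the intermediate input. This is an illustrative assumption, not necessarily the paper's exact specification; Y (gross output), X (software used as an input), L (labor), and A (a productivity shifter) are symbols introduced here for the example.

```latex
\begin{aligned}
Y &= A\,X^{\beta}L^{1-\beta}, \qquad \beta \in (0,1), \\
\max_{X}\;(Y - X) \;&\Rightarrow\; \frac{\beta Y}{X} = 1 \;\Rightarrow\; X = \beta Y, \\
Y &= A\,(\beta Y)^{\beta}L^{1-\beta} \;\Rightarrow\; Y = \bigl(A\,\beta^{\beta}\bigr)^{\frac{1}{1-\beta}}\,L, \\
\frac{d\ln Y}{d\ln A} &= \frac{1}{1-\beta}.
\end{aligned}
```

With β = 0.5, for example, a 1% shock to A moves output by 2%, and the multiplier applies equally to negative shocks.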

Glossary

- Appropriable demand: The portion of user demand that can be captured as private returns by maintainers or firms. "We ask whether the productivity gains from vibe coding outweigh the loss of appropriable demand once developer entry and selection respond."

- Business-stealing externality: A negative spillover where new entrants draw users from incumbents without internalizing the loss to others. "Utility falls because entry decisions generate a business-stealing externality: when a new package enters, it attracts users away from existing packages, but the entrant does not internalize that this diversion reduces the engagement-based returns that other maintainers rely on."

- Cobb–Douglas technology: A production function with constant elasticities of output with respect to inputs. "We model software production with a Cobb-Douglas technology: elasticity β in (0,1) to existing software and 1-β to labor."

- Comparative statics: Analysis of how equilibrium outcomes change in response to parameter changes. "Corollary [Comparative Statics]"

- Demand-diversion channel: The pathway through which mediated usage reduces the engagement maintainers can monetize. "The second is a demand-diversion channel: when users rely on AI agents rather than direct interaction, maintainers capture less engagement and therefore less private return per unit of usage."

- Discrete-choice demand system: A framework in which users choose among discrete alternatives based on utilities with idiosyncratic shocks. "Users value variety and choose among available packages under a discrete-choice demand system."

- Downstream dependence: The extent to which other projects rely on a given project as a dependency. "Downstream dependence is even more concentrated."

- Elasticity of substitution: A parameter measuring how easily users switch between options. "where θ > 1 is the elasticity of substitution between direct and vibe-coded usage."

- Endogenous entry: Entry decisions determined within the model based on incentives and equilibrium conditions. "We develop a model with endogenous entry and heterogeneous project quality in which OSS is a scalable input into producing more software."

- Endogenous selection into sharing: The decision to release a project as OSS depends on realized quality and payoffs within the model. "heavy-tailed heterogeneity in project outcomes with endogenous selection into sharing"

- Endogenous variety: The number of available products (software packages) is determined within the model. "in the spirit of monopolistic competition with endogenous variety"

- Engagement-based monetization: Revenue or rewards that rely on direct user interactions with a project. "AI lowers development costs without eroding engagement-based monetization."

- First-best allocation: The welfare-maximizing outcome chosen by a social planner. "First-Best Allocation"

- Free entry: A condition where developers enter until expected profits are driven to zero by costs. "Free entry requires that the expected payoff from developing software equals the up-front cost:"

- General equilibrium: An analysis that considers all markets and feedbacks simultaneously. "This shift raises a general equilibrium question about the sustainability of open source software (OSS)."

- Heavy-tailed heterogeneity: Outcome variability with a fat upper tail, implying extreme successes are disproportionately common. "heavy-tailed heterogeneity in project outcomes"

- Heterogeneous-project selection: The idea that only projects above a quality threshold are shared, leading to selection effects. "and heterogeneous-project selection"

- Idiosyncratic preference shock: Random, individual-specific utility variation in discrete-choice models. "The idiosyncratic preference shock ν_{ik} captures heterogeneity in user needs:"

- Intermediate input: A good used in producing other goods; here, software used to build more software. "software as an intermediate input into producing more software (a “software-begets-software” feedback)."

- Log–rank regression: A regression of log(rank) on log(size) used to estimate tail behavior. "We estimate log–rank regressions of the form on binned medians"

- Logistic function: An S-shaped function commonly used to model adoption probabilities. "This logistic function has an intuitive interpretation."

- Love-of-variety effect: Welfare gains from having more distinct options to choose from. "a love-of-variety (or better-match) effect"

- Magnification mechanism: A feedback process that amplifies shocks through the system. "the same magnification mechanism that amplifies positive shocks to software demand also amplifies negative shocks to monetizable engagement."

- Monetizable engagement: The portion of user interaction that can be converted into revenue or rewards. "the monetizable engagement margin can shrink even as adoption expands."

- Monopolistic competition: A market structure with many differentiated products and free entry. "in the spirit of monopolistic competition with endogenous variety"

- Nested choice: A two-level choice structure where users first pick a product and then a mode of use. "This nested choice captures that vibe coding is a usage technology that affects both user utility and the degree of engagement that maintainers can monetize."

- Nested Logit: A discrete-choice model allowing for correlated preferences within nests. "Keywords: Open Source Software, Artificial Intelligence, Network Externalities, Nested Logit, Software Economics"

- Network externalities: Effects where a product’s value depends on others’ usage or participation. "Keywords: Open Source Software, Artificial Intelligence, Network Externalities, Nested Logit, Software Economics"

- Nonrival input: An input that can be used by many without being depleted. "OSS is a nonrival input into producing more software"

- Nonrivalry: The property of a good where one person’s use does not reduce availability to others. "large fixed costs and nonrivalry"

- Pareto distribution: A heavy-tailed distribution often used to model extreme outcomes. "draw realized project quality q ≥ 1 i.i.d. from a Pareto distribution with shape parameter γ>0"

- Pareto exponent: The parameter governing the tail thickness of a Pareto distribution. "the slope b maps directly to the implied Pareto exponent under the model’s canonical orientation."

- Per-user monetization: Revenue captured per user, used to sustain project entry. "what is the lowest per-user monetization that sustain OSS entry at its current level."

- Productivity channel: The pathway where AI reduces effective costs and raises output or utility. "The first is a productivity channel: by reducing the effective cost of using a given package, AI raises user utility and lowers the cost of producing new software that builds on existing components."

- Productivity shifter: A factor that directly changes productivity levels in the model. "adoption rates reflecting a productivity shifter rather than full automation"

- Rank–size relationship: A log–log linear relation between rank and size indicative of power-law behavior. "The figure plots log–log rank–size relationships for GitHub repositories using stars (attention) and downstream dependencies (usage) as proxies for project success."

- S-curve: An adoption curve that accelerates after a threshold and then saturates. "illustrates this S-curve for θ = 3.5"

- Sharing cutoff: The quality threshold above which developers release projects as OSS. "Since Π(q) is increasing in q, the sharing decision is characterized by a cutoff q0 defined by the indifference condition Π(q0)=τ"

- Software-begets-software effect: A feedback where better software lowers development costs and spurs more software creation. "a feedback we call the software-begets-software effect."

- Truncated Pareto: A Pareto distribution restricted to values above a cutoff. "the distribution of quality among shared projects is a truncated Pareto."

- Underprovision of entry: Equilibrium entry is below the socially optimal level due to incomplete appropriability. "Underprovision of Entry"

- Utility shifter: A parameter that scales the utility delivered per unit of quality. "Expected utility is increasing in average software quality \bar q and in the utility shifter u"

- Vibe coding: AI-mediated software building by selecting and assembling OSS components. "In vibe coding, an AI agent builds software by selecting and assembling open-source software (OSS), often without users directly reading documentation"

- Zipf orientation: The convention for plotting rank–size relationships where slopes map to tail exponents. "the (positive) slope in the canonical Zipf orientation."
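The logistic adoption curve and S-curve entries above can be illustrated numerically. The sketch below assumes a two-option logit-style share, v = ζ^θ / (1 + ζ^θ), where ζ is the productivity of vibe-coded usage relative to direct usage (normalized to 1) and θ is the elasticity of substitution between the two modes. This functional form and the ζ grid are assumptions made for illustration; only θ = 3.5 is taken from the quoted text.

```python
import math


def vibe_share(zeta: float, theta: float = 3.5) -> float:
    """ASSUMED share of usage that is vibe-coded: zeta**theta / (1 + zeta**theta).

    Written as a logistic function of log(zeta), which gives the S-shape.
    """
    if zeta <= 0:
        raise ValueError("zeta must be positive")
    return 1.0 / (1.0 + math.exp(-theta * math.log(zeta)))


# Hypothetical grid of relative AI productivity levels.
for zeta in (0.5, 0.8, 1.0, 1.25, 2.0):
    print(f"zeta = {zeta:4.2f} -> vibe-coded share = {vibe_share(zeta):.3f}")
```

Under this assumed form the share is exactly one half when the two modes are equally productive, and a larger θ makes the transition around that point steeper.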
