ExpSeek: Self-Triggered Experience Seeking for Web Agents

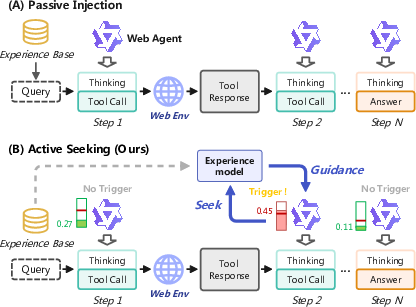

Abstract: Experience intervention is emerging as a promising paradigm for web agents: it improves an agent's interaction capabilities by supplying insights distilled from accumulated experience. However, existing methods mostly inject experience passively, as global context before task execution, and so struggle to adapt to the observations that change dynamically during agent-environment interaction. We propose ExpSeek, which shifts experience use toward proactive, step-level seeking by (1) estimating step-level entropy thresholds, so that the model's intrinsic signals determine when to intervene, and (2) designing experience content tailored to each step. Experiments with Qwen3-8B and Qwen3-32B on four challenging web agent benchmarks show absolute improvements of 9.3% and 7.5%, respectively. The experiments also validate the feasibility and advantages of entropy as a self-triggering signal and reveal that even a small 4B experience model can significantly boost the performance of larger agent models.

Explain it Like I'm 14

What is this paper about?

This paper introduces ExpSeek, a new way to help AI “web agents” (computer programs that browse the internet to answer questions) do better. Instead of stuffing the AI with a bunch of advice at the start, ExpSeek teaches the agent to ask for targeted help at the exact moments it feels confused while working step by step. Think of it like a student doing a research project who knows when to raise their hand and ask for a hint, and gets a short, useful tip based on mistakes students made in the past.

What questions did the researchers ask?

The paper focuses on two simple questions:

- When should an AI agent ask for help while browsing the web?

- What kind of help should it get at that moment so it can recover and keep going?

How did they do it?

The researchers built a system that combines two core ideas.

- Deciding when to ask for help (self-triggering):

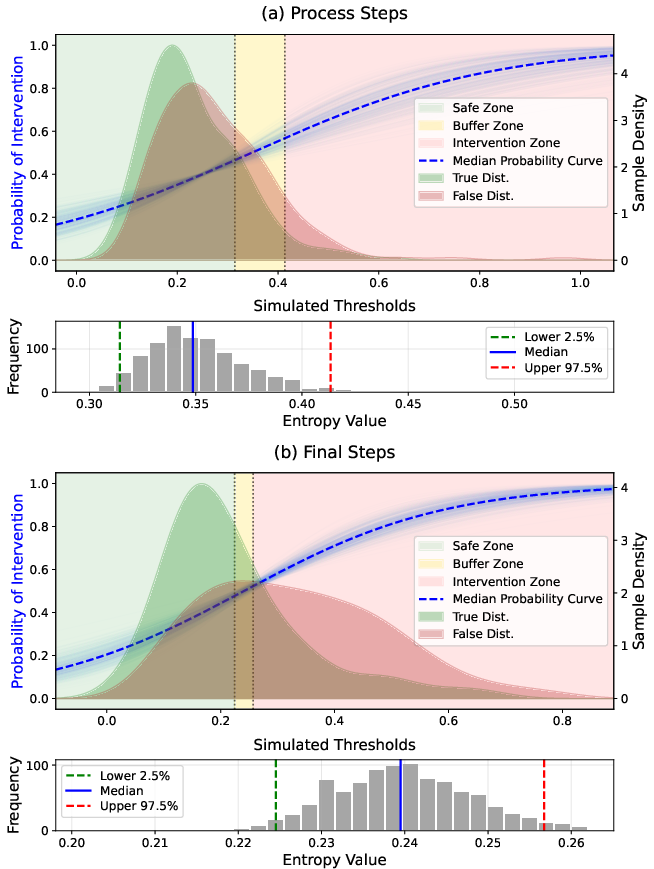

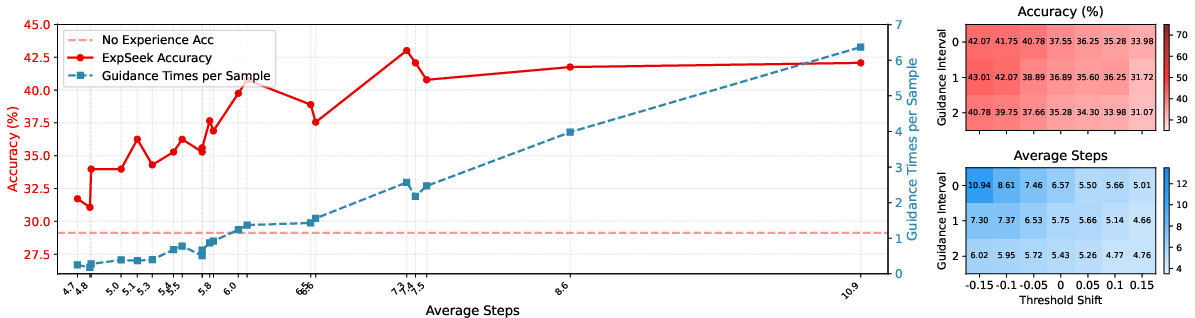

  - The agent watches its own “confidence” on each step using a number called entropy. Entropy is higher when the agent is unsure (like having many possible next words and not favoring any). Lower entropy means it’s confident.

  - The team studied past examples to find sensible “cutoff” ranges for entropy. If the agent’s entropy goes above this range, it likely needs help; if it stays below, it should continue on its own.

  - To set these cutoffs, they used simple statistical tools: logistic regression (a basic yes/no predictor) and bootstrap resampling (repeating the analysis many times on data redrawn at random, with replacement, from the original examples to get stable, reliable ranges). In everyday terms: they trained a simple judge to map “how unsure” the agent feels to “should we intervene now?” and made sure the thresholds are robust.
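The timing idea above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the step-level signal is assumed to be the mean token entropy, the logistic regression is a plain gradient-descent fit written out by hand, the cutoff range is taken as the 5th to 95th percentile of the bootstrap estimates, and the linear ramp inside the range is a guess at how the in-between zone might be handled. All function names and the toy labels are hypothetical.

```python
import math
import random


def token_entropy(probs):
    """Shannon entropy (in nats) of one next-token probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def step_entropy(token_distributions):
    """Assumed step-level signal: mean token entropy over one agent step."""
    return sum(token_entropy(d) for d in token_distributions) / len(token_distributions)


def fit_logistic(xs, ys, lr=0.5, epochs=500):
    """Fit p(needs help | x) = sigmoid(w * x + b) by gradient descent."""
    mean_x = sum(xs) / len(xs)
    w = b = 0.0
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(w * (x - mean_x) + b)))
            grad_w += (p - y) * (x - mean_x)
            grad_b += p - y
        w -= lr * grad_w / len(xs)
        b -= lr * grad_b / len(xs)
    return w, b - w * mean_x  # undo the centering used for stable fitting


def bootstrap_cutoff_range(xs, ys, rounds=100, seed=0):
    """Refit on resampled data; return the 5th-95th percentile of the p = 0.5 cutoff."""
    rng = random.Random(seed)
    cutoffs = []
    for _ in range(rounds):
        idx = [rng.randrange(len(xs)) for _ in xs]  # sample with replacement
        sample_x = [xs[i] for i in idx]
        sample_y = [ys[i] for i in idx]
        if len(set(sample_y)) < 2:
            continue  # a resample containing one class cannot be fitted
        w, b = fit_logistic(sample_x, sample_y)
        if w > 0:
            cutoffs.append(-b / w)  # entropy at which sigmoid(w * x + b) = 0.5
    cutoffs.sort()
    return cutoffs[int(0.05 * len(cutoffs))], cutoffs[int(0.95 * len(cutoffs))]


def intervention_probability(entropy, low, high):
    """Assumption: never below the range, always above it, linear ramp inside."""
    if entropy <= low:
        return 0.0
    if entropy >= high:
        return 1.0
    return (entropy - low) / (high - low)


# Toy labelled steps (made up for illustration): 1 means the step needed help.
entropies = [0.2, 0.4, 0.5, 0.7, 0.9, 1.0, 1.1, 1.3, 1.5, 1.8, 2.0, 2.4]
needs_help = [0, 0, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1]
low, high = bootstrap_cutoff_range(entropies, needs_help)
```

Returning a range rather than a single number mirrors the "cutoff ranges" described above: steps far from the boundary get a firm decision, while the bootstrap spread marks the zone where the simple judge is itself unsure.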

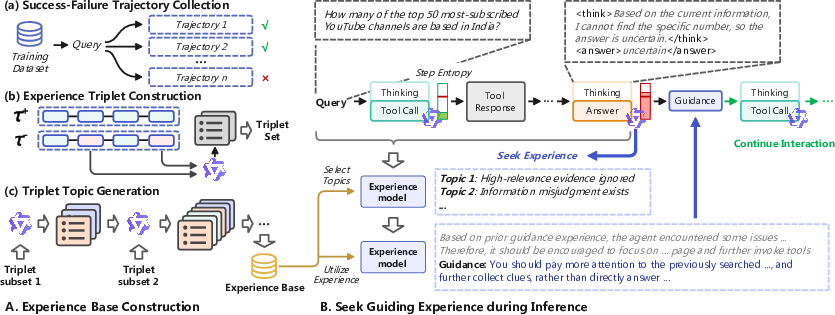

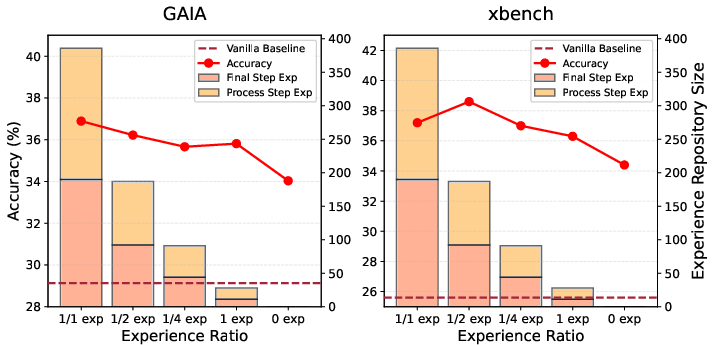

- Deciding what help to give (step-level guidance):

  - They built an “experience base” from pairs of past attempts: one successful and one failed. For each mistake, they stored a short, reusable “experience triplet”:

    - Behavior: what the agent did at that step,

    - Mistake: what went wrong,

    - Guidance: how to correct course (high-level tips, not the final answer).

  - When help is triggered, a separate “experience model” looks at the agent’s current situation, picks the most relevant experience topics, and writes a brief, tailored tip for that step. This tip is injected into the agent’s context so it can proceed smarter.

  - The help is step-aware: different styles for “process steps” (searching/reading) and for the “answer step” (final answer).
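To make the experience base concrete, here is a small Python sketch of how triplets might be stored and looked up. It rests on several assumptions: the `step_type` and `topic` fields, the function names, and the example entries are all hypothetical, and a simple word-overlap ranking stands in for the experience model, which in the described system is a language model that selects topics and writes the tip itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperienceTriplet:
    behavior: str   # what the agent did at that step
    mistake: str    # what went wrong
    guidance: str   # high-level correction, never the final answer
    step_type: str  # "process" or "answer" (field name is an assumption)
    topic: str      # hypothetical topic label used for lookup


def select_experiences(base, step_type, situation, top_k=1):
    """Stand-in for the experience model: rank by word overlap with the situation."""
    words = set(situation.lower().split())
    candidates = [t for t in base if t.step_type == step_type]

    def overlap(triplet):
        text = f"{triplet.topic} {triplet.behavior}".lower()
        return len(words & set(text.split()))

    return sorted(candidates, key=overlap, reverse=True)[:top_k]


def write_tip(triplets):
    """Turn the selected triplets into a short hint for the agent's context."""
    return "Hint: " + "; ".join(t.guidance for t in triplets)


# Illustrative entries only; they are not taken from the paper's experience base.
base = [
    ExperienceTriplet(
        behavior="repeated the same search query",
        mistake="got stuck in a loop of identical results",
        guidance="rephrase the query or try a different source",
        step_type="process",
        topic="search loop",
    ),
    ExperienceTriplet(
        behavior="opened a page and skimmed the first paragraph",
        mistake="missed the relevant table further down",
        guidance="scan the whole page before moving on",
        step_type="process",
        topic="page reading",
    ),
    ExperienceTriplet(
        behavior="gave a final answer after one source",
        mistake="answered before cross-checking",
        guidance="verify the claim against a second source",
        step_type="answer",
        topic="premature answer",
    ),
]

picked = select_experiences(base, "process", "the agent issued the same search query again")
tip = write_tip(picked)
```

Filtering by step type before ranking reflects the step-aware design: a process step only ever sees process guidance, and the answer step only sees answer guidance.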

In short: the agent checks its own confusion level each step; if it’s confused, it grabs a short, customized hint based on past mistakes—then keeps going.
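The control flow in that summary can be sketched as a short loop. This is only an illustration under assumptions: a single threshold replaces the cutoff range, the agent and the helper are passed in as plain functions with hypothetical signatures, and the choice to let a tip inform the next step (rather than regenerating the flagged step) is a guess, since the summary does not specify it.

```python
def run_episode(agent_step, seek_experience, threshold, max_steps=10):
    """Run one task; inject a tip into the context whenever a step looks uncertain."""
    context = []
    for _ in range(max_steps):
        action, entropy, is_answer = agent_step(context)
        context.append(("action", action))
        if entropy > threshold:
            step_type = "answer" if is_answer else "process"
            context.append(("tip", seek_experience(context, step_type)))
            continue  # assumption: an uncertain step is never accepted as final
        if is_answer:
            return action, context
    return None, context


def scripted_agent(context):
    """Toy agent: repeats its search with high entropy until a tip appears."""
    has_tip = any(kind == "tip" for kind, _ in context)
    n_actions = sum(1 for kind, _ in context if kind == "action")
    if n_actions == 0:
        return "search: first query", 0.4, False
    if not has_tip:
        return "search: first query", 1.9, False
    return "answer: found it", 0.2, True


def canned_helper(context, step_type):
    """Toy helper that always returns the same process-step hint."""
    return "rephrase the query or try a different source"


answer, trace = run_episode(scripted_agent, canned_helper, threshold=1.0)
```

In this toy run the agent proceeds unaided on its confident first step, receives one tip after the uncertain repeat, and then answers, so the helper is called once rather than at every step.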

What did they find, and why is it important?

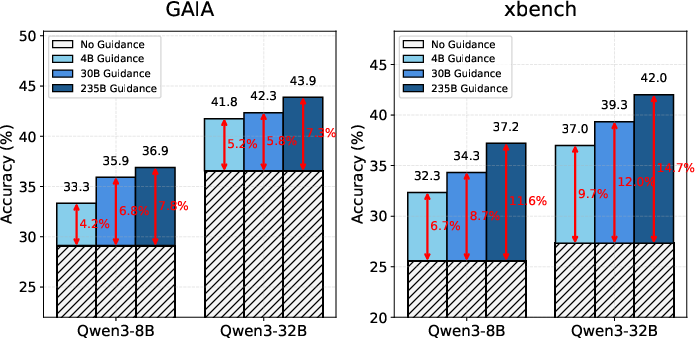

The researchers tested ExpSeek on four challenging web tasks using two sizes of Qwen3 models (8B and 32B parameters). They compared ExpSeek to agents that either had no help or only got a big chunk of advice upfront.

Key results:

- Better accuracy across the board:

  - ExpSeek improved average accuracy by about 9.3 percentage points (8B model) and 7.5 percentage points (32B model) over the same agents without ExpSeek.

  - It also beat “passive” methods that dump experience at the beginning by about 6–7%.

- Smarter timing matters:

  - Triggering help based on entropy (the agent’s internal uncertainty) worked well and was efficient: it did better than always intervening or using an expensive external judge for every step.

- Helpful experience can be small and still powerful:

  - Even a small 4B “experience model” (the helper that writes tips) significantly boosted a larger 32B agent. That means a “small coach” can help a “big player.”

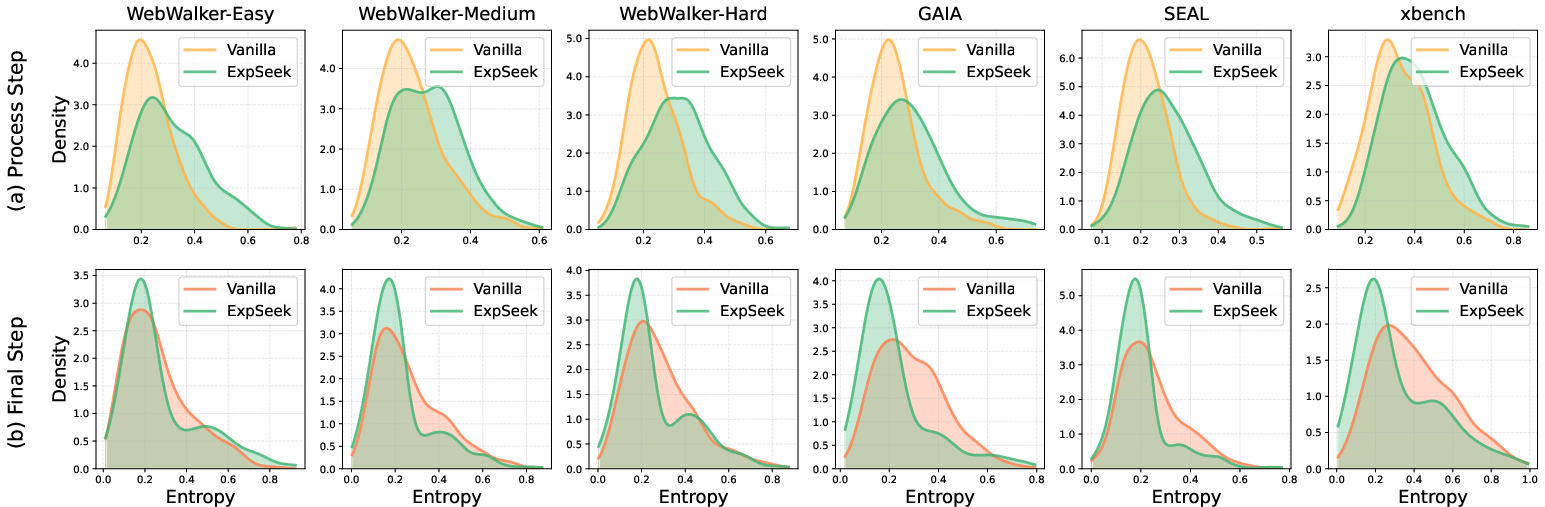

- Healthy reasoning behavior:

  - With ExpSeek, the agent’s entropy (uncertainty) tends to go up during exploration steps (good for trying more options) and go down at the final answer (good for confidence). This looks like “explore first, then converge,” a sensible way to reason.

- Generalizes beyond where it was built:

  - The experience was made from one dataset but still helped on other, different benchmarks. That suggests the guidance captures broadly useful lessons, not just one-off tricks.

Why this matters: Web browsing is messy—there’s lots of noise and misleading pages. Smaller or cheaper models especially struggle: they might get stuck or answer too early. ExpSeek helps them ask for the right kind of help at the right time, making them more reliable without needing constant supervision.

What could this change?

- More reliable web assistants: Agents could do better at tasks like fact-finding, planning, or multi-step research by learning from past mistakes exactly when they need it.

- Cheaper, more efficient systems: Instead of using giant models everywhere, smaller models can perform better by getting smart, timely guidance—reducing costs.

- Better training and evaluation tools: The idea of using a model’s own uncertainty (entropy) to self-trigger help could be applied to other kinds of step-by-step AI tasks, not just web browsing.

- Stronger collaboration between models: The finding that a small “coach” can help a bigger “player” hints at new ways to combine models effectively.

Overall, ExpSeek shows that “proactive, step-level help when you’re truly stuck” beats “one big lump of advice at the start.” It’s like learning to raise your hand at the right time and getting just the tip you need to move forward.
