Towards Zero-Shot Point Cloud Registration Across Diverse Scales, Scenes, and Sensor Setups

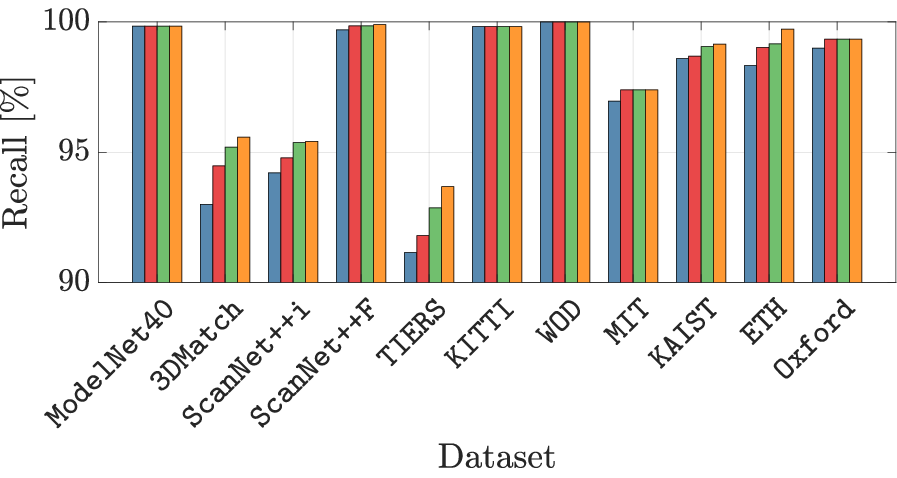

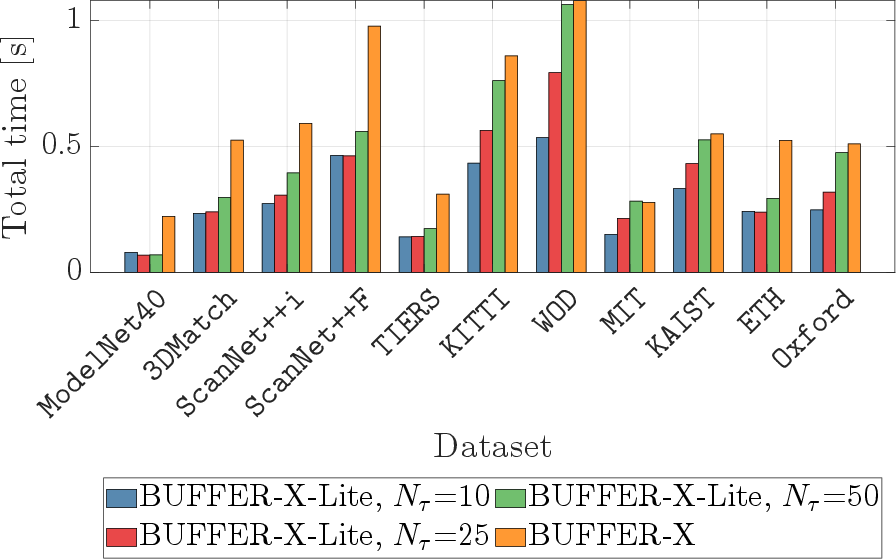

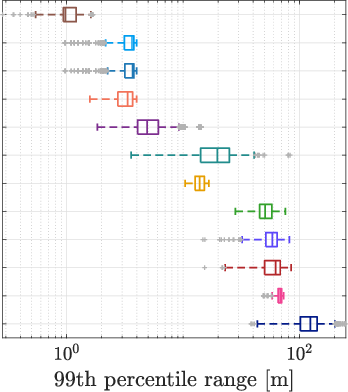

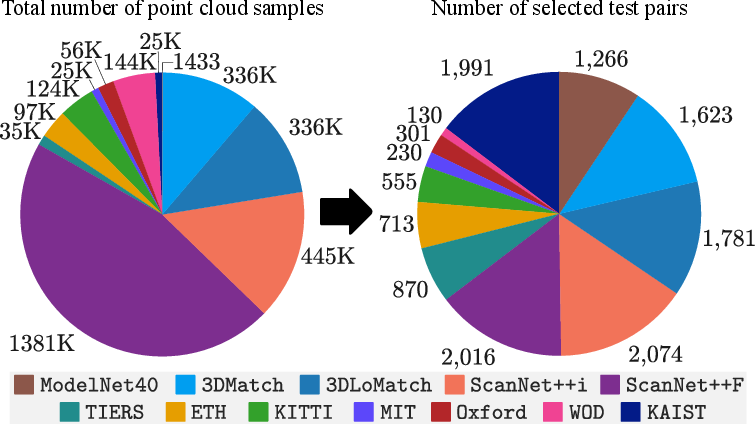

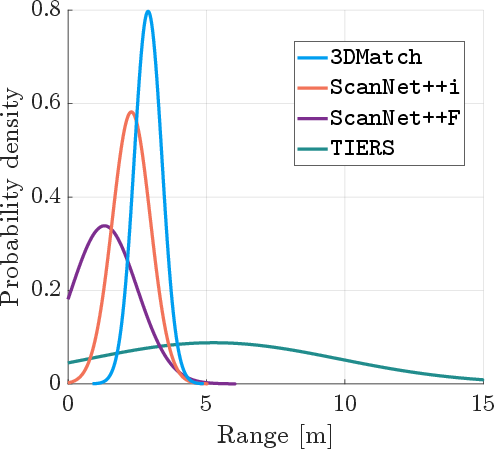

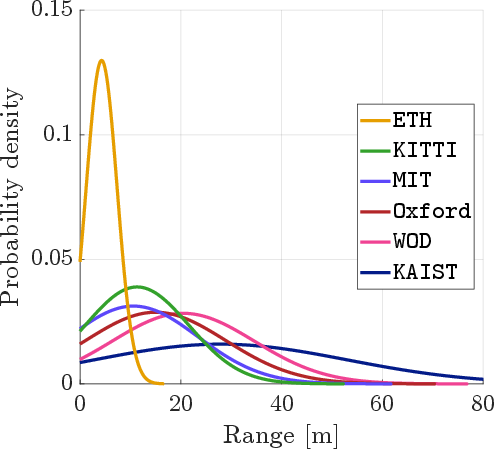

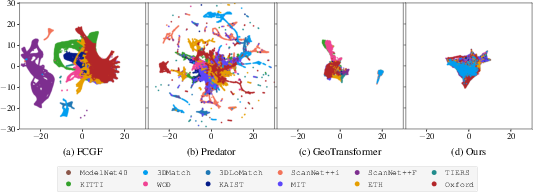

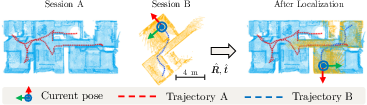

Abstract: Some deep learning-based point cloud registration methods struggle with zero-shot generalization, often requiring dataset-specific hyperparameter tuning or retraining for new environments. We identify three critical limitations: (a) fixed user-defined parameters (e.g., voxel size, search radius) that fail to generalize across varying scales, (b) learned keypoint detectors that exhibit poor cross-domain transferability, and (c) absolute coordinates that amplify scale mismatches between datasets. To address these three issues, we present BUFFER-X, a training-free registration framework that achieves zero-shot generalization through: (a) geometric bootstrapping for automatic hyperparameter estimation, (b) distribution-aware farthest point sampling to replace learned detectors, and (c) patch-level coordinate normalization to ensure scale consistency. Our approach employs hierarchical multi-scale matching to extract correspondences across local, middle, and global receptive fields, enabling robust registration in diverse environments. For efficiency-critical applications, we introduce BUFFER-X-Lite, which reduces total computation time by 43% (relative to BUFFER-X) through early exit strategies and fast pose solvers while preserving accuracy. We evaluate on a comprehensive benchmark comprising 12 datasets spanning object-scale, indoor, and outdoor scenes, including cross-sensor registration between heterogeneous LiDAR configurations. Results demonstrate that our approach generalizes effectively without manual tuning or prior knowledge of test domains. Code: https://github.com/MIT-SPARK/BUFFER-X.


Explain it Like I'm 14

What this paper is about (big picture)

Imagine you have two 3D “dot pictures” of the same place or object, taken at different times or with different devices. Point cloud registration is the job of lining those two dot pictures up so they match. This paper introduces a method called BUFFER-X that can do this lining-up without extra training or manual tweaking, even when the scenes are very different (small objects vs. big outdoor areas) and the sensors are not the same. They also make a faster version called BUFFER-X-Lite.

What questions the paper tries to answer

The authors focus on making registration “zero-shot,” which means it should work on new data without retraining or hand-tuning. They ask:

- How can we avoid hand-picked settings (like grid size and neighborhood size) that break when scenes or sensors change?

- How can we pick key points to match if learned “keypoint detectors” don’t transfer well to new types of data?

- How can we avoid getting confused by very different scales (for example, a tiny object vs. a huge street scene)?

How the method works (simple explanation)

To make this understandable, here are the main ideas with everyday analogies.

1) Geometric bootstrapping: auto-tuning the “knobs”

- Problem: Most methods need you to set things like voxel size (think: how big each 3D grid cube is) and search radius (how far around a point you look for neighbors). The best settings change a lot between small and large scenes.

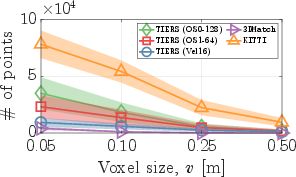

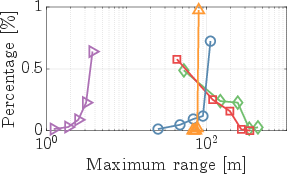

- Idea: BUFFER-X measures how spread out the points are (using PCA, a simple math tool that finds the main directions along which a cloud of dots stretches) and how dense the points are. From that, it automatically chooses:

- a good voxel size (grid cube size) and

- three search radii for local, middle, and global neighborhoods.

- Analogy: It’s like a camera that auto-adjusts zoom and focus based on what it sees, instead of relying on you to set them for every shot.
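The auto-tuning idea can be sketched in plain Python. This is a minimal illustration under stated assumptions, not BUFFER-X's implementation. Two parts follow the description: the sphericity measure λ3/λ1 computed from PCA eigenvalues, and choosing a search radius so that, on average, a target fraction of the points falls inside it. Everything else is hypothetical: which eigenvalue sets the voxel size, the constants `tau_v` and `delta_v`, the sample sizes, and the candidate-radius grid up to `r_max`. The names echo symbols listed later in this document (τ_v, δ_v, r_max), but the defaults are placeholders.

```python
import math
import random


def covariance(points):
    """Population covariance matrix (3x3) of a list of (x, y, z) tuples."""
    n = len(points)
    mean = [sum(p[k] for p in points) / n for k in range(3)]
    cov = [[0.0] * 3 for _ in range(3)]
    for p in points:
        d = [p[k] - mean[k] for k in range(3)]
        for i in range(3):
            for j in range(3):
                cov[i][j] += d[i] * d[j] / n
    return cov


def eigenvalues_sym3(a):
    """Eigenvalues of a symmetric 3x3 matrix in descending order (closed-form trigonometric method)."""
    p1 = a[0][1] ** 2 + a[0][2] ** 2 + a[1][2] ** 2
    if p1 == 0.0:  # already diagonal
        return sorted((a[0][0], a[1][1], a[2][2]), reverse=True)
    q = (a[0][0] + a[1][1] + a[2][2]) / 3.0
    p = math.sqrt((sum((a[i][i] - q) ** 2 for i in range(3)) + 2.0 * p1) / 6.0)
    b = [[(a[i][j] - (q if i == j else 0.0)) / p for j in range(3)] for i in range(3)]
    det_b = (b[0][0] * (b[1][1] * b[2][2] - b[1][2] * b[2][1])
             - b[0][1] * (b[1][0] * b[2][2] - b[1][2] * b[2][0])
             + b[0][2] * (b[1][0] * b[2][1] - b[1][1] * b[2][0]))
    phi = math.acos(max(-1.0, min(1.0, det_b / 2.0))) / 3.0
    l1 = q + 2.0 * p * math.cos(phi)
    l3 = q + 2.0 * p * math.cos(phi + 2.0 * math.pi / 3.0)
    return [l1, 3.0 * q - l1 - l3, l3]


def bootstrap_voxel_size(points, tau_v=0.05, delta_v=0.1, n_sample=1000, seed=0):
    """HYPOTHETICAL rule: voxel size from PCA spread, switched by sphericity lambda3/lambda1."""
    sample = random.Random(seed).sample(points, min(n_sample, len(points)))
    l1, l2, l3 = eigenvalues_sym3(covariance(sample))
    sphericity = l3 / l1 if l1 > 0 else 0.0
    # Volumetric clouds (objects, rooms): use the smallest spread.
    # Flat, disc-like clouds (e.g. outdoor LiDAR sweeps): the smallest axis mostly
    # reflects height variation, so this sketch uses the middle axis instead.
    spread = l3 if sphericity >= tau_v else l2
    return delta_v * math.sqrt(max(spread, 0.0)), sphericity


def bootstrap_radius(points, tau_xi, r_max, n_queries=50, steps=100, seed=0):
    """Smallest grid radius whose mean neighbor fraction reaches tau_xi (else r_max)."""
    queries = random.Random(seed).sample(points, min(n_queries, len(points)))
    dists = [[math.dist(q, p) for p in points] for q in queries]
    n = len(points)
    for k in range(1, steps + 1):
        r = r_max * k / steps
        mean_frac = sum(sum(d <= r for d in row) / n for row in dists) / len(dists)
        if mean_frac >= tau_xi:
            return r
    return r_max
```

Calling `bootstrap_radius` with three increasing fractions (one each for local, middle, and global) yields three nested radii, because the mean neighbor fraction can only grow with the radius. The brute-force distance table keeps the sketch dependency-free but is quadratic in the number of points; a practical version would use a spatial index such as a KD-tree.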

2) Distribution-aware farthest point sampling: robust keypoint picking

- Problem: Learned keypoint pickers can work great on the data they were trained on but fail on new sensors or scenes.

- Idea: Instead of learning what points to pick, BUFFER-X spreads points out evenly by repeatedly choosing the point farthest from what’s already picked (farthest point sampling). This gives a well-distributed set of keypoints at each scale.

- Analogy: If you want to sample the taste of a pizza, you don’t always need a complex strategy; taking bites from well-spaced spots is often enough.
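Plain farthest point sampling fits in a few lines. The sketch below is the standard greedy algorithm: keep, for every point, its distance to the nearest keypoint chosen so far, and repeatedly pick the point where that distance is largest. The "distribution-aware" adjustments BUFFER-X adds on top are not described in this summary and are not reproduced; the default `start` index is an arbitrary choice for illustration.

```python
import math


def farthest_point_sampling(points, k, start=0):
    """Greedy FPS: return indices of k points, each farthest from those already chosen."""
    if k <= 0 or not points:
        return []
    k = min(k, len(points))
    chosen = [start]
    # Distance from every point to its nearest already-chosen keypoint.
    nearest = [math.dist(p, points[start]) for p in points]
    while len(chosen) < k:
        idx = max(range(len(points)), key=nearest.__getitem__)
        chosen.append(idx)
        for i, p in enumerate(points):
            d = math.dist(p, points[idx])
            if d < nearest[i]:
                nearest[i] = d
    return chosen


pts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
print(farthest_point_sampling(pts, 3))  # [0, 3, 2]
```

Each iteration scans all N points once, so selecting k keypoints costs O(kN), and no training data is involved, which is why the choice transfers across sensors unchanged.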

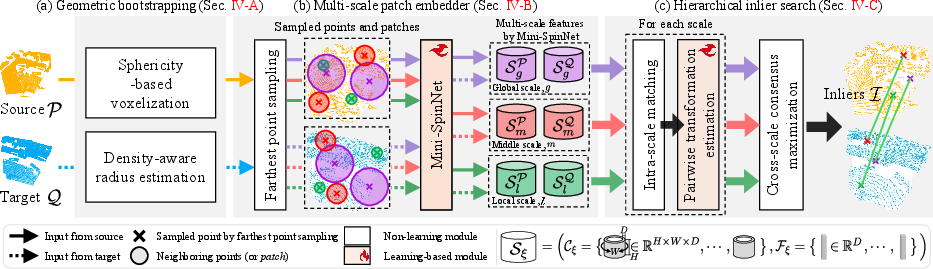

3) Patch-level coordinate normalization: fixing the scale problem

- Problem: Feeding raw XYZ numbers into a network ties it to the scale it learned. A network trained on small rooms may struggle on city streets.

- Idea: For each local “patch” (a small neighborhood around a keypoint), BUFFER-X rescales the points so they always fit into the same size box (for example, between -1 and 1). This makes features scale-consistent.

- Analogy: It’s like resizing every photo of a face to the same size before comparing them.
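A minimal sketch of patch-level normalization, assuming that a patch is the set of points within a search radius of the keypoint and that normalization means centering on the keypoint and dividing by that radius. The paper's exact scaling may differ. Because no coordinate of a point inside the ball can exceed the radius in magnitude, every output coordinate lies in [-1, 1], and the same shape captured at two scales produces identical normalized patches.

```python
import math


def normalize_patch(points, center, radius):
    """Keep points within `radius` of `center`, then map them to [-1, 1]^3."""
    return [
        tuple((p[k] - center[k]) / radius for k in range(3))
        for p in points
        if math.dist(p, center) <= radius
    ]


# The same local shape at two scales (e.g. an object vs. a street scene).
small = [(0.01, 0.02, 0.0), (0.05, -0.03, 0.02), (-0.04, 0.0, 0.06)]
large = [tuple(100.0 * c for c in p) for p in small]
a = normalize_patch(small, (0.0, 0.0, 0.0), 0.1)
b = normalize_patch(large, (0.0, 0.0, 0.0), 10.0)
print(all(math.isclose(x, y, abs_tol=1e-9) for p, q in zip(a, b) for x, y in zip(p, q)))  # True
```

This works only because the radius itself scales with the scene, which is what the bootstrapped radii provide; a fixed radius would reintroduce the scale dependency.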

4) Multi-scale matching and consensus

- BUFFER-X builds features for three scales (local, middle, global) and matches patches between the two point clouds at each scale.

- It estimates many small “mini-alignments” from these matches and then picks the inlier set (the matches that agree) across all scales using a consensus step.

- Finally, it computes the global 3D rotation and translation that align the clouds.
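Matching at one scale can be illustrated with mutual nearest-neighbor matching, the rule the paper cites for pairing descriptors: a pair is kept only when each descriptor is the other's nearest neighbor. The sketch treats descriptors as plain tuples and uses brute-force search; the descriptor contents are made up for illustration.

```python
import math


def mutual_matches(desc_src, desc_tgt):
    """Return (i, j) pairs where tgt j is src i's nearest neighbor and vice versa."""
    if not desc_src or not desc_tgt:
        return []
    # Nearest target for each source descriptor, and nearest source for each target.
    nn_st = [min(range(len(desc_tgt)), key=lambda j: math.dist(d, desc_tgt[j])) for d in desc_src]
    nn_ts = [min(range(len(desc_src)), key=lambda i: math.dist(d, desc_src[i])) for d in desc_tgt]
    return [(i, j) for i, j in enumerate(nn_st) if nn_ts[j] == i]


src = [(0.0, 0.0), (1.0, 1.0), (5.0, 5.0), (0.5, 0.0)]
tgt = [(0.1, 0.0), (5.0, 5.1), (1.0, 1.2)]
print(mutual_matches(src, tgt))  # [(0, 0), (1, 2), (2, 1)]
```

Source descriptor 3 is dropped: its nearest target is 0, but target 0 prefers source 0. Running this once per scale (local, middle, global) and concatenating the resulting keypoint pairs gives the pooled correspondence set that the consensus step then filters.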

5) BUFFER-X-Lite: faster when possible

- Early exit: Try the middle scale first. If there are already enough good matches, stop early and skip the other scales.

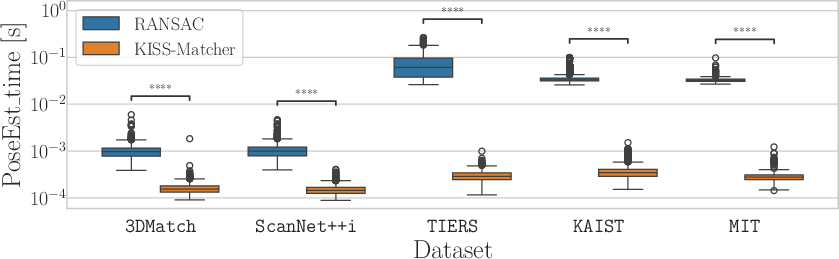

- Faster solver: Replace a slower random-guess method (RANSAC) with a quicker, deterministic approach that prunes bad matches and refines the pose (think: smart filtering plus a robust optimizer).

- Result: About 43% less compute time on average while keeping accuracy similar.
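The early-exit control flow can be sketched as follows. The callables `match_at_scale`, `find_inliers`, and `solve_pose`, and the threshold name `tau_n`, are hypothetical placeholders rather than BUFFER-X's actual interfaces. The sketch only encodes the order described above: try the middle scale first, stop if enough inliers survive, and otherwise add the local and global scales and rerun the consensus step on the pooled matches.

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

C = TypeVar("C")  # a correspondence (any type)
P = TypeVar("P")  # a pose estimate (any type)


def lite_register(
    match_at_scale: Callable[[str], List[C]],
    find_inliers: Callable[[Sequence[C]], List[C]],
    solve_pose: Callable[[Sequence[C]], P],
    tau_n: int,
) -> Tuple[P, List[str]]:
    """Early-exit sketch: middle scale first, fall back to all three scales."""
    used = ["middle"]
    correspondences = list(match_at_scale("middle"))
    inliers = find_inliers(correspondences)
    if len(inliers) >= tau_n:
        # Enough consistent matches: skip the local and global scales entirely.
        return solve_pose(inliers), used
    for scale in ("local", "global"):
        correspondences.extend(match_at_scale(scale))
        used.append(scale)
    # Consensus over the pooled multi-scale correspondences.
    inliers = find_inliers(correspondences)
    return solve_pose(inliers), used
```

The saving comes from the first branch: on easy pairs, descriptor extraction and matching at two of the three scales never run. The choice of `tau_n` therefore trades speed against the risk of exiting on a weak middle-scale result.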

What the experiments found

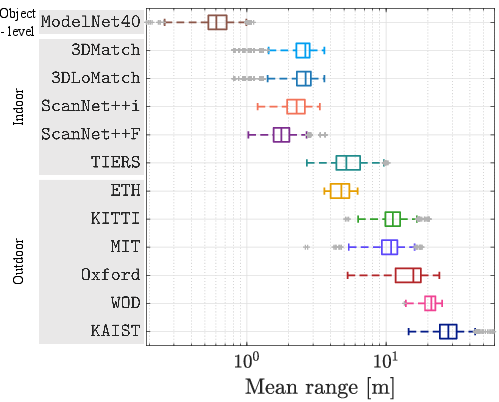

The authors tested on 12 very different datasets:

- Small objects (like CAD models),

- Indoor scenes (rooms, buildings),

- Outdoor scenes (streets, campuses),

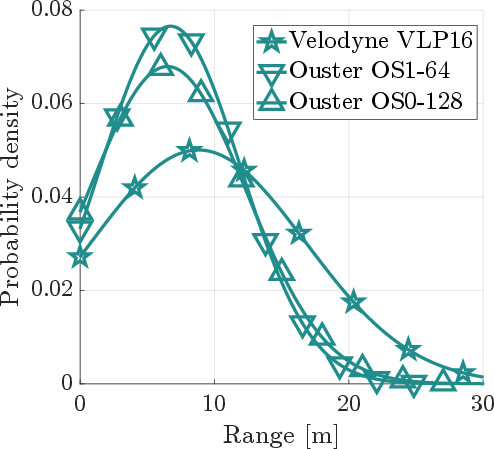

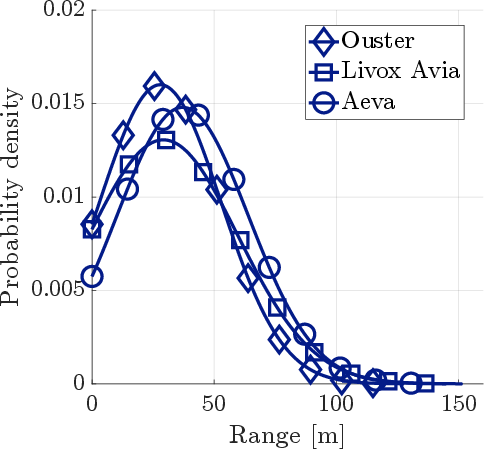

- Different LiDAR setups (sensors that scan in different patterns and densities).

Key takeaways:

- BUFFER-X works “zero-shot”: no manual parameter tuning and no retraining needed when moving to a new dataset.

- It handles big changes in scale, scene type, and sensor type (including “heterogeneous” LiDAR pairs where source and target come from different devices).

- BUFFER-X-Lite speeds things up notably (about 43% less computation time) while staying accurate, thanks to early exit and a faster pose solver.
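The 43% figure is a reduction in computation time, not a 43% increase in speed. Converting it to a speedup factor takes one line of arithmetic, assuming the percentage refers to the total time T that BUFFER-X spends:

```latex
\text{speedup} \;=\; \frac{T_{\text{BUFFER-X}}}{T_{\text{BUFFER-X-Lite}}}
\;=\; \frac{T}{(1 - 0.43)\,T} \;=\; \frac{1}{0.57} \;\approx\; 1.75
```

In other words, BUFFER-X-Lite handles roughly 1.75 registrations in the time BUFFER-X needs for one.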

Why this matters: Real-world robots, drones, self-driving cars, and AR devices often see new places with different sensors. A method that “just works” without lots of fiddling is a big deal.

Why this is important (impact)

- Less manual work: No more guessing voxel sizes or search radii for every new dataset. The system adapts to each pair of point clouds automatically.

- More reliable in the wild: Because it normalizes local patches and avoids fragile learned keypoint detectors, it travels better across different scales and sensors.

- Faster options: BUFFER-X-Lite shows you can keep robustness but save time, which is useful for real-time applications like robotics or mapping.

- Broader testing: The paper also expands how we evaluate generalization, covering many scene types and sensor mixes, which can guide future research to be more practical.

In short, BUFFER-X pushes point cloud registration closer to “plug and play”: it aligns 3D scans from many places and devices without special tuning, and the Lite version runs faster when the data allows.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper leaves the following gaps and open questions that future work could address:

- Clarify the “training-free” claim: the paper introduces a “training-free registration framework” yet also trains Mini-SpinNet descriptors (contrastive learning and yaw offset regression). Specify exactly what is trained, on which data, and reconcile this with the zero-shot assertion.

- Automatic parameter selection remains incomplete: geometric bootstrapping estimates voxel size and radii, but several global thresholds and constants still require manual setting (e.g., τ_v, δ_v, N_r, r_max, [τ_l, τ_m, τ_g], ε, N_FPS, N_patch, β=1.5v). Provide a fully automatic procedure (or self-calibrating rules) and quantify sensitivity to each.

- Lack of theoretical guarantees: there is no formal analysis of when multi-scale consensus maximization recovers the correct pose (e.g., minimum overlap fraction, noise bounds, symmetry conditions). Establish conditions under which BUFFER-X is provably correct or robust.

- PCA-based patch frames: eigenvector sign ambiguity and degeneracy (e.g., near-planar or low-structure regions) can destabilize local frames. Quantify the frequency and impact of such failures and develop a principled sign disambiguation or degeneracy detection strategy.

- Heuristic sphericity thresholding: the decision rule for voxel size (λ3/λ1 ≥ τ_v, with dataset-independent constants) is ad hoc. Provide a data-driven or uncertainty-aware estimator and analyze misclassification effects (e.g., indoor planar scans incorrectly treated as “disc-like”).

- Density-aware radius setting: the target neighborhood proportion τ_ξ is user-defined and global. Investigate adaptive or learned strategies that adjust τ_ξ per scene/scan region to avoid under/over-coverage, and characterize how errors in r_l, r_m, r_g affect descriptor reliability.

- Discretization in yaw (W sectors): there is no guidance on choosing W (accuracy vs. memory/time trade-offs) or analysis of aliasing/wrap-around bias. Quantify how W impacts rotation accuracy and failure rates across scenes.

- 4D cost-volume scalability: constructing H×W×W×D cost volumes can be memory/time intensive. Measure memory footprint and runtime as a function of H, W, D, and propose lighter alternatives (e.g., FFT-based circular correlation, low-rank approximations).

- Solver coupling to voxel size: the KISS-Matcher solver uses β=1.5v as the inlier bound. Validate this coupling across sensors/densities, derive principled noise bounds, or design an estimator that adapts β based on empirical residuals.

- Early exit safety: the middle-scale inlier threshold τ_N governs termination, yet its selection is not justified. Provide ROC analyses and calibration procedures to balance premature exits vs. unnecessary multi-scale processing.

- Low-overlap and high-symmetry regimes: robustness under extreme partial overlap (<10–20%) and repetitive/symmetric structures is not characterized. Measure performance in these regimes and explore symmetry-aware regularization or disambiguation.

- Dynamic scenes and motion distortion: the method assumes static geometry; spinning LiDAR motion distortion and moving objects (cars/pedestrians) are common outdoors. Evaluate robustness and add distortion compensation or dynamic-object filtering.

- Cross-sensor heterogeneity beyond geometry: the pipeline ignores intensity/reflectivity differences and beam divergence variations across LiDARs. Assess whether incorporating sensor metadata or robust normalization improves heterogeneous registration.

- Repeatability of FPS-selected keypoints: FPS is detector-free but may sample non-repeatable points in low-overlap or occluded areas. Quantify repeatability and investigate overlap-aware or saliency-aware sampling without learned detectors.

- Multi-scale training vs. inference: descriptors are trained at a single scale while used across three scales at test time. Provide ablations showing the effect of scale-mismatch in training and whether multi-scale training improves generalization.

- Sensitivity to patch size N_patch: random downsampling to a fixed patch size may discard fine structures or bias descriptors. Analyze trade-offs and propose adaptive patch sizing conditioned on local geometry.

- Pose uncertainty and confidence: the pipeline outputs a pose without uncertainty quantification. Add an uncertainty estimator (e.g., covariance of inliers, GNC energy) to inform downstream systems and early exit decisions.

- Out-of-memory and time guarantees: voxelization aims to avoid OOM, but there are no bounds or worst-case runtime guarantees (especially with large W/H or dense scans). Provide explicit resource budgets and adaptive safeguards.

- Fairness and coverage of the benchmark: the pair-selection protocol (overlap distributions, scene diversity, sensor configurations) is not fully described. Release the pair-generation code, report overlap histograms, and ensure balanced, reproducible splits.

- Evaluation metrics: success rate is highlighted, but absolute rotation/translation errors, inlier precision/recall, and timing/memory per component are not comprehensively reported. Standardize metrics and include detailed breakdowns per dataset.

- Ablation and component contribution: the paper asserts benefits of bootstrapping, FPS, scale normalization, and hierarchical matching, but a systematic ablation (remove/replace each component) is missing. Provide quantitative causal evidence.

- Failure case taxonomy: beyond general statements, concrete visual/quantitative failure modes (e.g., specific scenes/sensors where BUFFER-X fails) are not cataloged. Build a failure taxonomy to guide targeted improvements.

- Integration with downstream SLAM: pairwise registration quality in multi-view pipelines (map growth, loop closure) is not evaluated. Test BUFFER-X within SLAM systems and study long-term drift, map consistency, and loop robustness.

- CAD-specific nuances: registering synthetic CAD models to real scans may involve sampling bias and no sensor noise. Analyze how patch distributions differ and whether augmentation or domain adaptation is needed.

- Open-source reproducibility: while code is available, many defaults (τ_v, τ_ξ, W/H/D, N_FPS, N_patch, ε, β) are not specified in the paper text. Provide a configuration file with dataset-agnostic defaults, auto-tuning modules, and scripts to reproduce all figures/tables.

- Extending beyond geometry-only: explore whether adding lightweight semantics (e.g., unsupervised geometric labels) or robust intensity cues can further improve zero-shot generalization without sacrificing sensor-agnostic applicability.
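The "Discretization in yaw (W sectors)" item above can be made concrete. Purely for illustration, this sketch assumes each patch is summarized by a 1-D signature of W yaw bins that shifts circularly when the patch rotates about its reference axis (the SO(2)-equivariance described in the glossary). The yaw offset is then the circular shift with the highest correlation. BUFFER-X instead scores shifts with a learned 3DCCN over a cost volume and also trains a yaw-offset regression; neither is modeled here. What the sketch does expose is the resolution trade-off: one bin equals 360/W degrees, so rounding to the nearest bin alone can leave up to 180/W degrees of error.

```python
def best_yaw_shift(f_src, f_tgt):
    """Circular shift s (in bins) maximizing sum_k f_src[(k - s) % W] * f_tgt[k]."""
    w = len(f_src)
    if w == 0 or w != len(f_tgt):
        raise ValueError("signatures must be non-empty and of equal length")

    def score(s):
        return sum(f_src[(k - s) % w] * f_tgt[k] for k in range(w))

    shift = max(range(w), key=score)
    return shift, shift * 360.0 / w


def max_quantization_error_deg(w):
    """Worst-case yaw error from rounding a continuous angle to the nearest of W bins."""
    return 180.0 / w


src = [3.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 2.0]
tgt = [0.0, 2.0, 3.0, 1.0, 0.0, 0.0, 0.0, 0.0]  # src shifted by two bins
print(best_yaw_shift(src, tgt))  # (2, 90.0)
```

Doubling W halves the quantization error but doubles the number of shifts to score, and a cost volume over W × W shifts grows quadratically, which is the memory/time tension the list raises.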

Practical Applications

Immediate Applications

Below are actionable, deployment-ready uses that can directly leverage BUFFER-X and BUFFER-X-Lite as described, with suggested tools/products/workflows and key dependencies to consider.

- Robotics and autonomy (SLAM, loop closure, map merging)

- What: Drop-in global registration module to replace tuning-heavy components in SLAM/back-end mapping stacks for ground robots, drones, and autonomous vehicles.

- How: Integrate BUFFER-X-Lite for real-time or near-real-time loops; use the full BUFFER-X for robust offline map-building and loop-closure detection. Plug into ROS as a node (e.g., buffer_x_node) or as an Open3D registration backend.

- Tools/workflows: ROS2 graph component; Open3D backend; TEASER++/KISS-Matcher solver option; pipeline presets for indoor/outdoor workflows.

- Dependencies/assumptions: Rigid scenes and sufficient overlap; moderate GPU/CPU resources for patch extraction; parameter thresholds (e.g., inlier counts for early exit) set once and reused; expects reasonable point density (adaptive voxelization mitigates extreme cases).

- Cross-sensor map alignment and fleet calibration (heterogeneous LiDARs)

- What: Align maps/scans from different LiDAR makes and models without per-sensor retraining or manual parameter tuning.

- How: Use geometric bootstrapping to auto-set voxel sizes and patch radii; enable hierarchical multi-scale matching for robustness across scanning patterns.

- Sectors: Robotics, geospatial, automotive, public sector mapping.

- Tools/products: “FleetMapAlign” service (cloud/on-prem) that ingests multiple sensor logs and produces fused maps; procurement QA tool for evaluating sensor interchangeability.

- Dependencies/assumptions: Requires overlap and rigid structures; handles sparseness via adaptive radii but extremely minimal overlap remains challenging.

- AEC/Construction/BIM (scan-to-BIM and as-built verification)

- What: Register partial scans from different capture devices (e.g., tripod LiDAR, mobile scanners, phone LiDAR) into a unified frame; align on-site scans to CAD/BIM.

- How: Use BUFFER-X’s CAD capability (object-level support) and patch-level normalization to mitigate scale variations, reducing site-specific tuning effort.

- Tools/products: Plugins for Autodesk ReCap/Navisworks, CloudCompare/PCL adapters; “Scan2CAD-ZS” workflow script for batch alignment.

- Dependencies/assumptions: Rigid indoor structures; material reflectivity doesn’t affect geometry but sparsity and clutter do; still needs adequate overlap.

- Manufacturing and metrology (CAD-to-scan inspection)

- What: Fast, robust zero-shot alignment of scanned parts to their CAD models for inline QA.

- How: Use BUFFER-X to align each scan to a CAD mesh-derived point cloud without retraining across part types.

- Tools/products: Inline QA station pipeline, with BUFFER-X-Lite for low-latency alignment; integration with industrial vision/PLCs.

- Dependencies/assumptions: Rigid parts; reasonable sampling of geometry; tolerance checks performed post-alignment.

- Geospatial surveying and energy infrastructure inspection

- What: Consolidate multi-platform datasets (vehicle, handheld, UAV) and cross-sensor scans for corridors, wind farms, substations, pipelines.

- How: Use hierarchical multi-scale matching to bridge large-scale and local structures; use early exit to accelerate easy pairs.

- Tools/products: Cloud consolidation service; “change detection” pre-alignment step for time-series scans.

- Dependencies/assumptions: Structural rigidity; stable vantage points across surveys; persistent features for overlap.

- AR/VR and consumer 3D capture

- What: Align multiple room/space captures from different mobile devices/apps, or align a capture to a known 3D model (e.g., furniture/CAD).

- How: Package BUFFER-X as an SDK for mobile/desktop apps; leverage Lite mode on-device or hybrid on-device/cloud.

- Tools/products: Mobile SDK; “RoomMerge-ZS” tool for creators; plugin for game engines to pre-align scans.

- Dependencies/assumptions: Device point density varies (handled by bootstrapping); on-device acceleration may require Lite mode and further optimization.

- Dataset curation, annotation, and incident analysis (industry/academia)

- What: Automatic pre-alignment of frames/scans to reduce labeling overhead; cross-sensor dataset harmonization for AV/robotics logs; forensic reconstruction alignment.

- How: Incorporate BUFFER-X in data ingestion pipelines; output consistent global frames for labeling/analysis tools.

- Tools/products: Data ops scripts; “ZeroShot-Align” step in ML data pipelines; benchmarking harness for regression testing.

- Dependencies/assumptions: Overlap and rigid content; time synchronization is not required for registration but aids downstream fusion.

- Research and education

- What: Baseline for zero-shot registration; template for robust hyperparameter bootstrapping; general-purpose benchmark across 12 datasets.

- How: Use the open-source repo for reproducible experiments, ablations, and teaching modules (geometric bootstrapping, PCA normalization, consensus maximization).

- Tools/products: Course labs; reproducible benchmark suites; integration examples with Open3D and ROS.

- Dependencies/assumptions: Standard research infra (GPU/CPU); datasets accessible or replaceable with lab data.

Long-Term Applications

These applications are promising but may require further research, scaling, hardware optimization, or broader ecosystem adoption.

- Real-time embedded deployment in resource-constrained systems

- What: Onboard, low-power execution in drones/AGVs/consumer devices.

- How: Model compression for Mini-SpinNet, patch sampling optimization, kernel fusion, quantization, and potentially GPU-less implementations.

- Sectors: Robotics, consumer electronics, automotive.

- Dependencies/assumptions: Requires additional systems engineering; may prioritize BUFFER-X-Lite with tighter early-exit logic; hardware accelerators or DSPs are advantageous.

- Dynamic and non-rigid scene registration

- What: Handle moving agents, deforming objects, and scene changes (e.g., crowds, vegetation).

- How: Integrate motion segmentation or correspondence consistency over time; extend consensus maximization to mixed rigid/non-rigid groups.

- Sectors: Robotics in public spaces, AR in dynamic environments.

- Dependencies/assumptions: Requires algorithmic extensions beyond rigid transforms; increased compute or additional sensors.

- Multimodal registration (LiDAR–radar, LiDAR–stereo, LiDAR–depth fusion)

- What: Zero-shot alignment across different sensing modalities beyond LiDAR–LiDAR.

- How: Extend patch encoding to cross-modality features; develop modality-aware bootstrapping and similarity measures.

- Sectors: Autonomous driving, robotics perception stacks.

- Dependencies/assumptions: New training or self-supervised strategies may be needed; modality-specific noise and sparsity must be addressed.

- Self-configuring perception stacks (generalized bootstrapping patterns)

- What: Propagate the paper’s hyperparameter bootstrapping (voxel size, search radii) to other modules (e.g., segmentation, place recognition).

- How: Embed geometric bootstrapping as a system-level tuning layer; cross-module scale and density calibration at runtime.

- Sectors: Robotics platforms, perception middleware.

- Dependencies/assumptions: Requires interface standards between modules; careful stability and latency management.

- City-scale, multi-session digital twins and infrastructure management

- What: Continuous, zero-shot merging of scans across seasons, operators, and hardware upgrades for municipalities/campuses/industrial plants.

- How: Fleet-scale workflows using BUFFER-X to ingest, align, and reconcile long-running scan streams from heterogeneous sensors.

- Sectors: Smart cities, utilities, transportation, large campuses.

- Dependencies/assumptions: Data governance and storage; robust loop-closure and global optimization in the presence of long-term changes.

- Automated robot recalibration after sensor swaps or maintenance

- What: Post-maintenance auto-relocalization and map re-alignment without manual recalibration or retraining.

- How: Invoke BUFFER-X alignment to the prior facility map; verify alignment quality via consensus metrics.

- Sectors: Warehousing, manufacturing, service robotics.

- Dependencies/assumptions: Persistent map features; minimal map drift; maintenance records for expected changes.

- Standardization, certification, and policy frameworks for generalizable 3D perception

- What: Benchmarks and procurement guidelines that require zero-shot generalization and cross-sensor robustness.

- How: Formalize evaluation protocols based on the paper’s 12-dataset benchmark; define KPIs (success rate without tuning, cross-sensor success).

- Sectors: Government, transportation authorities, defense procurement, safety certification bodies.

- Dependencies/assumptions: Community/industry consensus; reference implementations and datasets with licensing clarity.

- Privacy-preserving and compliance-friendly deployments

- What: Reduce collection of new training data by favoring zero-shot inference, aiding privacy and compliance mandates.

- How: On-device or on-premise execution; no need to upload sensitive point clouds for retraining.

- Sectors: Healthcare facilities (facility mapping), critical infrastructure, consumer apps.

- Dependencies/assumptions: Aligns with privacy-by-design; may still require logs for auditability and safety cases.

- Foundations for generalizable 3D perception beyond registration

- What: Apply patch normalization, distribution-aware sampling, and consensus across scales to place recognition, semantic mapping, scene flow.

- How: Reuse design patterns (PCA-aligned patches, scale-aware radii, multi-scale consensus) in broader 3D tasks.

- Sectors: Research and advanced product development across robotics, AR/VR, GIS.

- Dependencies/assumptions: Task-specific loss functions and evaluation; potential additional supervision for semantics.

- Marketplace and ecosystem for cross-device 3D content

- What: Seamless merging of scans from various consumer and professional devices for 3D content marketplaces and collaboration platforms.

- How: Cloud pipelines that accept heterogeneous uploads, perform zero-shot alignment, and deliver consolidated assets for editing/rendering.

- Sectors: Media, gaming, e-commerce (digital twins of products/venues).

- Dependencies/assumptions: Scale and cost control; content moderation and IP policies.

Notes on assumptions common across applications:

- Overlap and rigidity: The method assumes rigid-body transforms and requires sufficient overlap; low-overlap or heavily dynamic scenes are challenging.

- Compute: BUFFER-X is compute-intensive; BUFFER-X-Lite mitigates latency via early exit and faster solvers but still benefits from GPU acceleration for descriptor extraction.

- Thresholds: While zero-shot, a few universal thresholds (e.g., inlier count for early exit) remain; these can typically be fixed across deployments.

- Data quality: Extremely sparse/noisy scans can degrade performance; the geometric bootstrapping (voxel size, radii) helps but is not a panacea.

- Integration: For operational use, packaging as Open3D plugins, ROS nodes, or PCL adapters is recommended; the paper notes intent to improve such integrations.

Glossary

- 3D cylindrical convolutional network (3DCCN): A convolutional neural network operating on cylindrical feature volumes to estimate discrete yaw rotations. "Then, […] is processed by a 3D cylindrical convolutional network (3DCCN) [Ao21cvpr-Spinnet], mapping […] to a score vector of size […]."

- Absolute coordinates: Raw 3D point values in the global frame that can cause scale dependency across datasets. "(c)~absolute coordinates amplify scale mismatches between datasets."

- CAD models: Computer-aided design objects used as 3D point cloud sources for registration. "object-level CAD models [Wu15cvpr-ModelNet]"

- Compatibility graph: A graph whose vertices are correspondences and edges encode geometric consistency between pairs. "KISS-Matcher solver first constructs a compatibility graph where vertices represent correspondences and edges connect pairs satisfying the geometric consistency constraint:"

- Consensus maximization: Selecting the transformation and inliers that maximize agreement across correspondences. "Finally, it identifies globally consistent inliers […] across all scales to refine correspondences based on consensus maximization [Sun22ral-TriVoC, Zhang24tpami-AcceleratingGloballyCM]."

- Contrastive learning: A training paradigm that pulls matching descriptors together and pushes non-matching ones apart. "First, we train the feature discriminability of Mini-SpinNet descriptors using contrastive learning [Yew18eccv-3dfeatnet]."

- Covariance: A second-order statistic of point distributions used to derive PCA bases. "we apply principal component analysis (PCA) [Lim21ral-Patchwork] to the covariance of sampled points"

- Cylindrical coordinates: A coordinate system used to represent local patches for rotational analysis around an axis. "BUFFER [Ao23CVPR-BUFFER] utilizes learned reference axes to extract cylindrical coordinates"

- Cylindrical feature maps: Descriptor representations arranged in cylindrical grids over patches. "Mini-SpinNet [Ao23CVPR-BUFFER] outputs cylindrical feature maps […] and vector feature sets […]."

- Distribution-aware farthest point sampling: A sampling strategy that selects keypoints by accounting for point distribution rather than learning detectors. "(b)~distribution-aware farthest point sampling to replace learned detectors"

- Early exit: An adaptive inference strategy that terminates processing once sufficient evidence is found. "BUFFER-X-Lite, which reduces total computation time by 43% (relative to BUFFER-X) through early exit strategies and fast pose solvers while preserving accuracy."

- Eigenvalues: PCA-derived scalars indicating variance along principal directions, used to assess sphericity. "First, we determine a suitable voxel size by leveraging sphericity, quantified using eigenvalues [Hansen21remotesensing-Classification, Alexiou24jivp-PointPCA]"

- Farthest point sampling (FPS): A non-learned method for selecting well-spaced keypoints in point clouds. "An interesting observation is that replacing the learning-based detector with farthest point sampling (FPS) preserves registration performance [Seo25ICCV-BUFFERX]."

- Graduated non-convexity (GNC): A robust optimization technique that gradually transitions from convex to non-convex objectives to handle outliers. "replacing RANSAC with a faster graduated non-convexity (GNC) [Yang20tro-teaser]-based pose estimation solver."

- Heterogeneous sensor configurations: Registration setups where source and target point clouds come from different sensor types. "including cross-sensor registration between heterogeneous LiDAR configurations."

- Huber loss: A robust loss function that is quadratic near zero residuals and linear for large residuals. "we employ the Huber loss [Zhang97ivc-Parameter] for training […]"

- Inlier threshold: A distance bound used to decide whether a correspondence is consistent with an estimated transformation. "where […] is an inlier threshold."

- k-core pruning: A graph-theoretic filtering that iteratively removes vertices with degree less than k to isolate a consistent core. "we employ deterministic graph-theoretic k-core pruning [Shi21icra-robin] followed by a GNC solver [Yang20ral-GNC]"

- LiDAR: Light Detection and Ranging; a sensor modality producing 3D point clouds via laser measurements. "heterogeneous LiDAR point clouds."

- Mini-SpinNet: A lightweight descriptor network derived from SpinNet for patch-based feature extraction. "we use Mini-SpinNet [Ao23CVPR-BUFFER] for descriptor generation"

- Mutual matching: Nearest neighbor matching that requires reciprocity to reduce mismatches. "we perform nearest neighbor-based mutual matching [Lowe04ijcv] between […] and […]"

- Omnidirectional LiDARs: LiDAR sensors with 360-degree horizontal field of view. "Notably, TIERS and KITTI [Geiger13ijrr-KITTI], both using omnidirectional LiDARs, yield different point densities due to indoor vs. outdoor environments."

- Patch-level coordinate normalization: Scaling local patch coordinates to a fixed bounded range to mitigate cross-domain scale differences. "(c)~patch-level coordinate normalization to ensure scale consistency."

- Patch-wise descriptor: A feature computed over a local neighborhood (patch) around a keypoint. "patch-wise descriptor […] for multiple scales […]"

- Point cloud registration: Estimating the relative rotation and translation between two 3D point clouds. "3D point cloud registration, which estimates the relative pose between two partially overlapping point clouds, is a fundamental problem"

- Principal component analysis (PCA): A technique that computes orthogonal axes of maximum variance for alignment and normalization. "we apply principal component analysis (PCA) [Lim21ral-Patchwork] to the covariance of sampled points"

- RANSAC: Random Sample Consensus; a robust estimator that fits a model by sampling minimal sets and verifying consensus. "a robust solver such as RANSAC [Fischler81] or TEASER++ [Yang20tro-teaser]."

- Receptive fields: The spatial extent of data a feature extractor or descriptor observes. "across local, middle, and global receptive fields"

- Rodrigues’ rotation formula: A closed-form expression to compute rotation matrices from axis-angle parameters. "using Rodrigues’ rotation formula [Mebius07arxiv-Derivation], the rotation matrix is given by:"

- Robust kernel function: A function in the objective that reduces the influence of outlier correspondences. "where […] represents a robust kernel function that mitigates the effect of spurious correspondences in […]."

- Scale normalization: Making features invariant to absolute scale by normalizing local coordinates. "This demonstrates that patch-wise scale normalization is key to achieving a data-agnostic registration pipeline."

- SO(2)-equivariant representation: Feature representations that shift consistently under planar (yaw) rotations. "As explained by Ao et al. [Ao23CVPR-BUFFER], […] and […] follow discretized SO(2)-equivariant representation;"

- Sphericity: A measure of how uniformly points are distributed in 3D, derived from PCA eigenvalues. "we determine a suitable voxel size by leveraging sphericity, quantified using eigenvalues [Hansen21remotesensing-Classification, Alexiou24jivp-PointPCA]"

- TEASER++: A certifiable robust registration solver for estimating 3D transformations under outliers. "a robust solver such as RANSAC [Fischler81] or TEASER++ [Yang20tro-teaser]."

- Voxel size: The grid resolution used for downsampling point clouds via voxelization. "as […] controls the maximum number of points that can be fed into the network, a too-small […] can trigger out-of-memory errors"

- Voxelization: The process of discretizing point clouds into a voxel grid for downsampling or processing. "Sphericity-based voxelization."

- Zero-shot generalization: Performing well on unseen domains without retraining or manual tuning. "achieves zero-shot generalization"

- Zero-shot registration: Registering point clouds across novel domains without any prior domain-specific adjustments. "Success rate (unit: %) of zero-shot point cloud registration"
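The compatibility-graph and k-core entries above describe the first stage of the KISS-Matcher solver. Below is a minimal sketch of that stage. It assumes the usual rigid-motion invariant as the consistency test: two correspondences are compatible when the distance between their source points matches the distance between their target points to within a bound `eps`. The paper's exact constraint is not reproduced in this summary, `eps` is a hypothetical parameter, and the GNC pose solve that follows pruning is omitted.

```python
import math
from itertools import combinations


def compatibility_graph(src, tgt, eps):
    """Vertex i is correspondence src[i] <-> tgt[i]; edge (i, j) if pairwise distance is preserved within eps."""
    adj = {i: set() for i in range(len(src))}
    for i, j in combinations(range(len(src)), 2):
        if abs(math.dist(src[i], src[j]) - math.dist(tgt[i], tgt[j])) <= eps:
            adj[i].add(j)
            adj[j].add(i)
    return adj


def k_core(adj, k):
    """Iteratively remove vertices with degree < k; return the surviving vertex set."""
    alive = set(adj)
    degree = {v: len(adj[v]) for v in adj}
    stack = [v for v in alive if degree[v] < k]
    while stack:
        v = stack.pop()
        if v not in alive:
            continue  # already removed via another path
        alive.remove(v)
        for u in adj[v]:
            if u in alive:
                degree[u] -= 1
                if degree[u] < k:
                    stack.append(u)
    return alive


# Four correspondences related by a 90-degree yaw plus translation, and one outlier.
src = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1), (5, 5, 5)]
tgt = [(1, 2, 3), (1, 3, 3), (0, 2, 3), (1, 2, 4), (-3, 7, 2)]
print(k_core(compatibility_graph(src, tgt, eps=0.1), 3))  # {0, 1, 2, 3}
```

Because pairwise distances are unchanged by any rotation and translation, mutually consistent inliers form a dense subgraph, while an outlier rarely agrees with many of them. The pruning is deterministic, which is the contrast with RANSAC's random sampling drawn in the text.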
