- The paper introduces AIMformer, a transformer-based framework that detects misbehavior in vehicular platooning with an F1 score of up to 0.98.

- It leverages multi-head self-attention and global positional encoding to model inter-vehicle dependencies and reduce false positives.

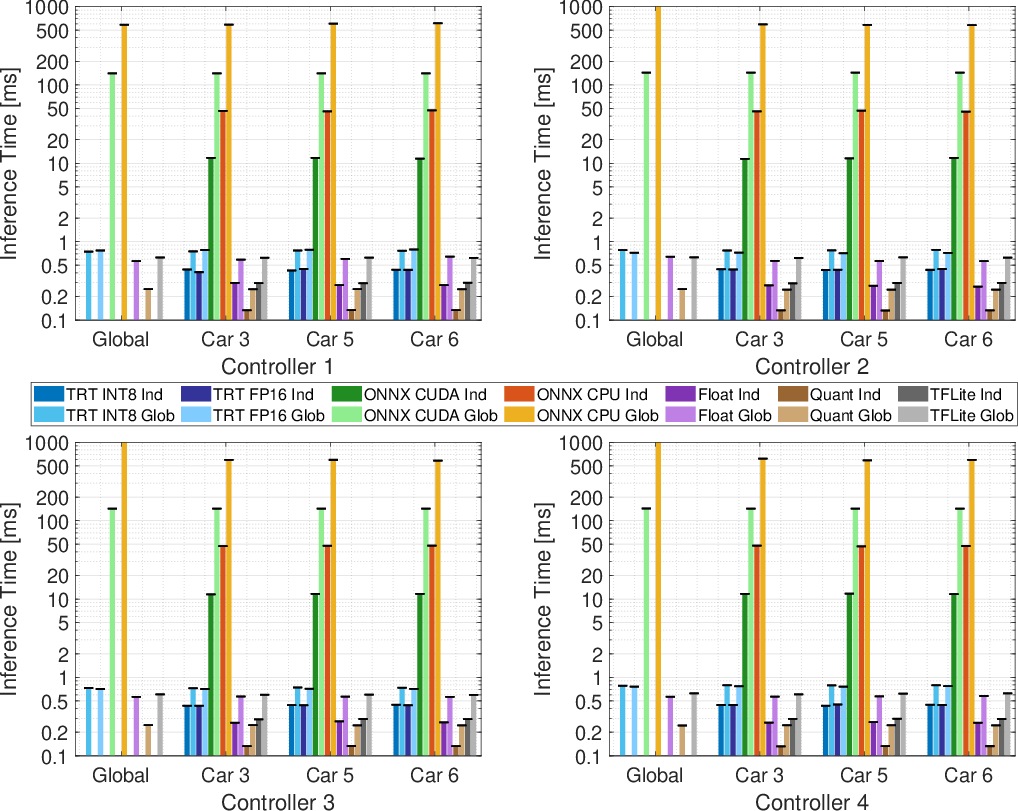

- The study demonstrates real-time edge deployment: quantized models reach 0.13–0.8 ms inference latency, fast enough for safety-critical use.

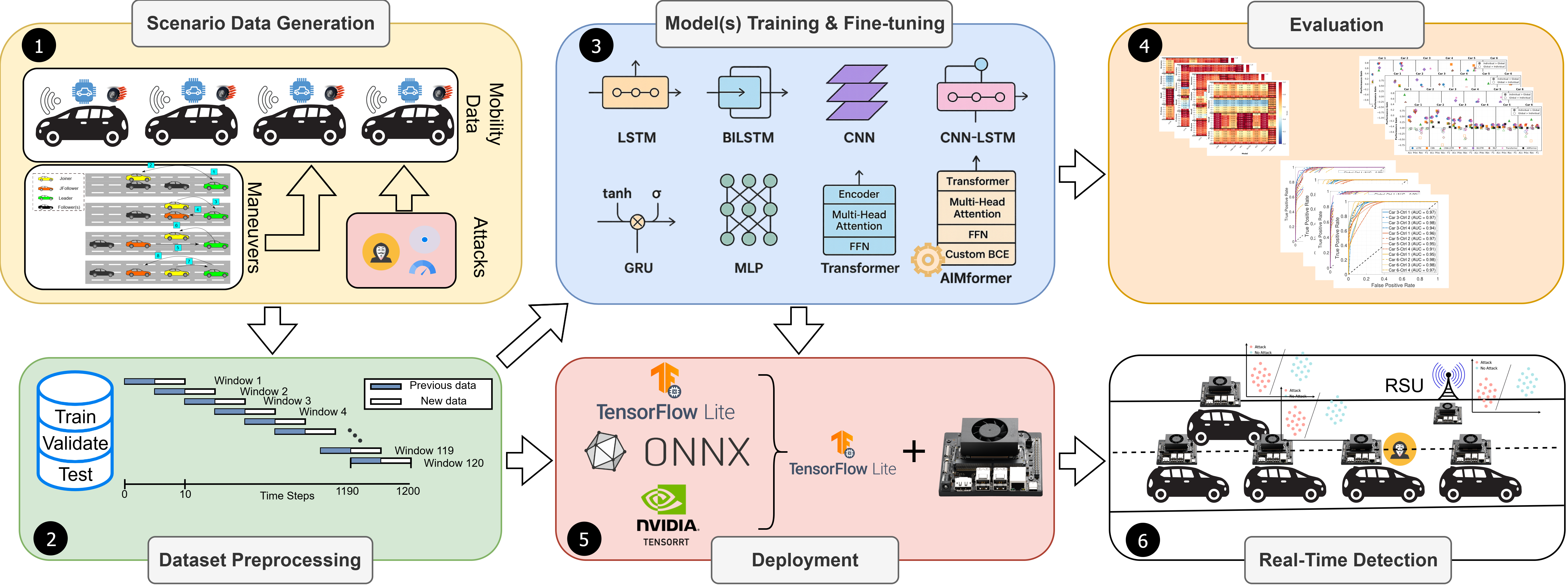

Vehicular platooning uses V2V/V2I connectivity to coordinate convoys, improving throughput, fuel efficiency, and string stability in traffic networks. Cryptographic authentication does not close every gap: an authenticated, trusted vehicle can still falsify the kinematic data it broadcasts. Such attacks, particularly when launched during topology transitions (e.g., join/exit maneuvers), can bypass plausibility-based schemes and threaten operational safety. Existing misbehavior detection approaches (plausibility checks, statistical outlier analyses, and classical time-series models) suffer from high false positive rates and model temporal-spatial correlations poorly. AIMformer addresses these limits with a transformer encoder that analyzes intra- and inter-vehicle dependencies in parallel, packaged as an edge-deployable model for real-time detection in resource-constrained settings.

System and Adversarial Model

AIMformer operates within a platoon network layered on a secure V2X stack that provides authenticated vehicle enrollment. The adversary is strictly internal: it holds valid cryptographic credentials and operates within protocol constraints. It can forge physically consistent kinematic states (position, velocity, acceleration) using constant offsets, gradual drift, or combined falsification, during both steady-state operation and maneuver windows. Attack severity scales with topological position: leader-originated attacks propagate disturbances through the whole platoon, while follower-based attacks target immediate predecessor/successor relationships, with the effect exacerbated by platoon reconfiguration events.

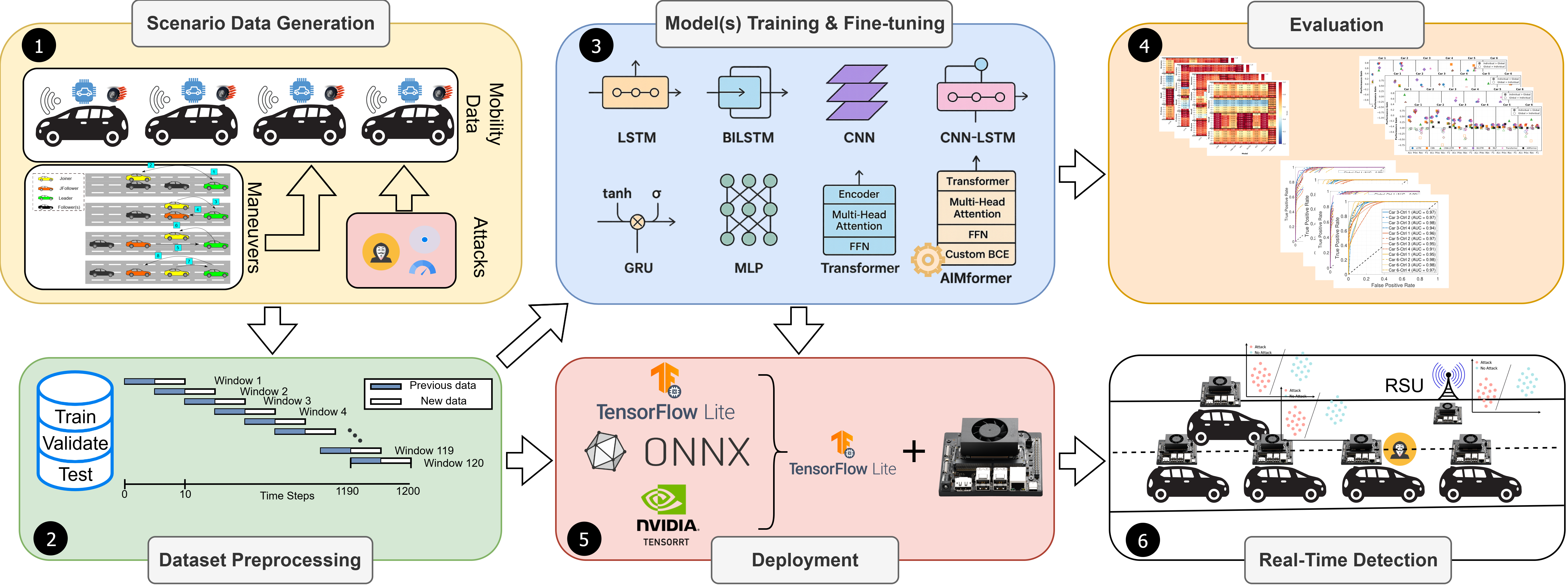

Figure 1: Pipeline for edge AI-based V2X attack detection, illustrating scenario data generation, windowing, model training, deployment, and real-time inference phases.

AIMformer employs a transformer encoder with domain-specific augmentations:

- Global positional encoding with vehicle-specific offsets captures asynchronous entry/exit events, aligning multi-vehicle behaviors within a unified temporal context.

- Multi-head self-attention jointly models intra-vehicle time series and cross-vehicle spatial dependencies, critical for detecting coordinated attacks and behavioral anomalies.

- Precision-Focused BCE Loss (PFBCE) adds an explicit penalty on false positive predictions so that precision and recall can be balanced for safety-critical inference. Its parameters treat the two error types (false alarms vs. missed detections) separately, and it is reported to outperform focal loss under realistic class imbalance.
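The idea of a decoupled false positive penalty can be illustrated with a small sketch. The summary names the tuned parameters (λFP, λpos, τ) but does not give the formula, so the form below is an assumption made for illustration only: a positive-class weight `lam_pos` on the standard BCE term, plus a hinge penalty `lam_fp * max(0, p - tau)` applied to benign samples. The function name `pfbce_loss` and all default values are hypothetical.

```python
import math

def pfbce_loss(probs, labels, lam_fp=2.0, lam_pos=1.0, tau=0.5, eps=1e-7):
    """Mean precision-focused BCE over a batch.

    Assumed illustrative form, not the paper's exact loss:
    weighted BCE plus an extra penalty on confident false alarms.
    """
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        # Standard BCE, with the attack (positive) term scaled by lam_pos.
        bce = -(lam_pos * y * math.log(p) + (1 - y) * math.log(1.0 - p))
        # Extra cost only for benign samples (y == 0) scored above tau.
        fp_penalty = lam_fp * (1 - y) * max(0.0, p - tau)
        total += bce + fp_penalty
    return total / len(probs)

false_alarm = pfbce_loss([0.9], [0])                    # benign scored as attack
missed = pfbce_loss([0.1], [1])                         # attack scored as benign
plain_false_alarm = pfbce_loss([0.9], [0], lam_fp=0.0)  # same sample, no FP penalty
```

With `lam_fp=0` the two errors cost the same under plain BCE; raising `lam_fp` makes the false alarm strictly more expensive, which is the asymmetry the loss is described as providing.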

Model input is four-dimensional (B×V×T×F): batch, vehicle, time window, and feature axes. The axes can be reshaped to deploy the model at platoon or individual-vehicle granularity. Masking handles variable-length, asynchronous trajectories so that padded time steps do not contribute to attention or residual block computations.
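A minimal sketch of how asynchronous trajectories might be aligned and masked before attention is shown below. It assumes each vehicle has a known join step and a count of valid samples in the window; the helper names `build_tokens` and `flatten_vt`, and the input layout, are hypothetical and not taken from the paper.

```python
def build_tokens(join_steps, lengths, window):
    """Return (global_positions, mask) as V x T nested lists.

    join_steps[v]: global time step at which vehicle v entered the platoon
                   (hypothetical input).
    lengths[v]:    number of valid samples vehicle v has inside the window.
    """
    positions, mask = [], []
    for join, n in zip(join_steps, lengths):
        # Global index = vehicle-specific offset + local step,
        # so all rows share one time axis.
        positions.append([join + t for t in range(window)])
        # True marks a real sample; False marks padding to be ignored.
        mask.append([t < n for t in range(window)])
    return positions, mask

def flatten_vt(grid):
    """Collapse a V x T grid into one sequence of V*T tokens."""
    return [cell for row in grid for cell in row]

# Two vehicles present from step 0, a third joining at global step 3.
positions, mask = build_tokens(join_steps=[0, 0, 3], lengths=[5, 5, 2], window=5)
flat_mask = flatten_vt(mask)
valid_tokens = sum(flat_mask)  # number of non-padded tokens
```

The global positions would feed a positional encoding, and the flattened mask would play the role of a padding mask in a transformer encoder; both uses are assumptions about how such a pipeline is typically wired.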

Training, Optimization, and Hyperparameter Tuning

An extensive hyperparameter search using Hyperband optimizes the number of attention heads, encoder depth, dropout ratios, and learning rates for both global and vehicle-position-specific models. The loss function parameters (λFP, λpos, τ) are tuned specifically to minimize false alarms, in line with the operational requirements of safety-critical automotive systems. Quantization-ready models are produced to match edge deployment constraints.

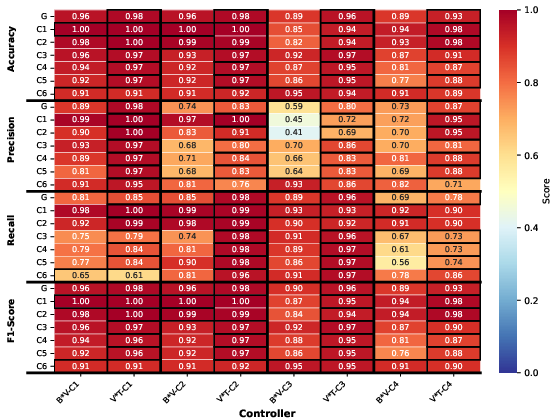

AIMformer is benchmarked against seven baselines: LSTM, BiLSTM, GRU, CNN, CNN-LSTM, MLP, and a vanilla transformer. Testing spans four distinct platoon controllers, encompassing consensus and time/distance-based strategies, and diverse attack scenarios. Metrics include precision, recall, F1 score, ROC/AUC, and inference latency.
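For reference, the threshold-based metrics listed above follow from confusion counts. The sketch below uses made-up labels purely to show the arithmetic; the function name `detection_metrics` is hypothetical and the numbers are not results from the paper.

```python
def detection_metrics(y_true, y_pred):
    """Precision, recall, and F1 for binary labels (1 = attack, 0 = benign)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # attacks caught
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed attacks
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}

# Toy example: 3 attack windows and 5 benign windows.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
metrics = detection_metrics(y_true, y_pred)
```

Because benign windows usually dominate in this setting, precision is the metric most sensitive to false alarms, which is why the evaluation reports it alongside recall and F1.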

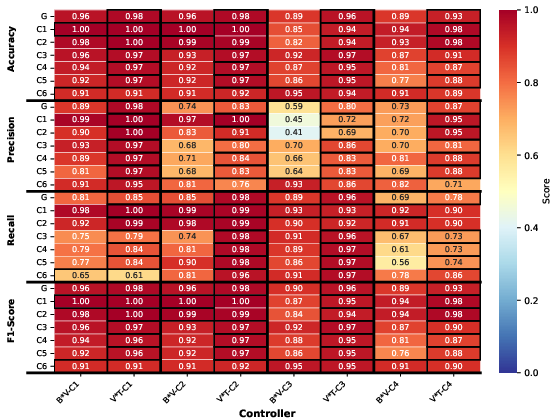

- Global Model Superiority: AIMformer delivers F1 ≥ 0.93 across all controllers, outperforming the recurrent and convolutional baselines, which suffer from either reduced recall or high false positive rates.

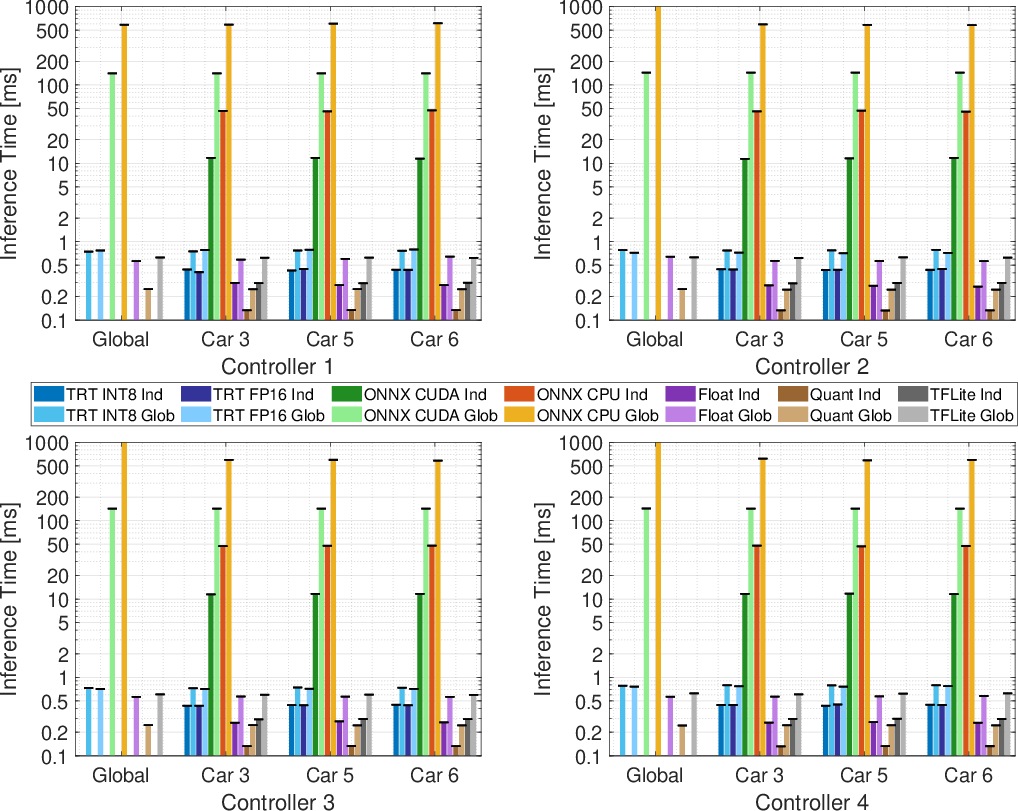

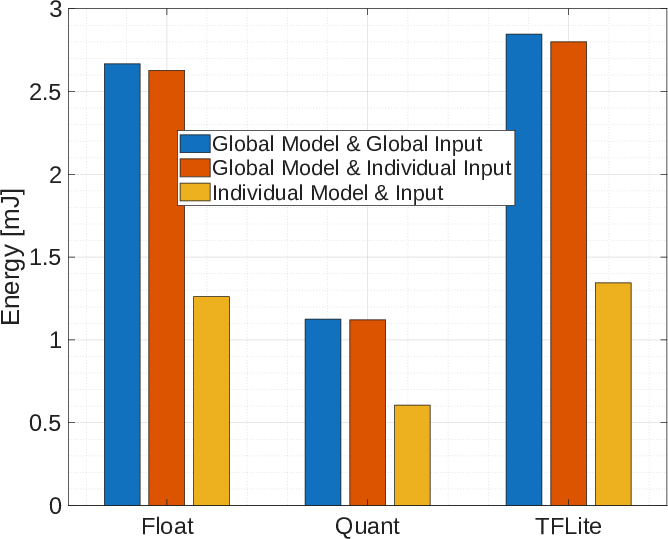

- Deployment Latency: On Jetson Orin Nano, integer-quantized TFLite models achieve mean inference times of 0.13–0.8 ms (individual/global), well within the 100 ms interval of 10 Hz CAM updates, while ONNX/TensorRT variants are slower or incur larger memory footprints.

Figure 2: Inference time comparison across quantization methods on edge hardware.

- Quantization Robustness: Quantization degrades ROC/AUC by at most 0.1%, supporting deployment on in-vehicle or roadside units with strict resource budgets.

- Spatio-Temporal Attention: Extending the architecture to joint V⋅T attention encoding raises precision and F1 (F1 up to 0.98) and enables attacker/victim localization through inter-vehicle behavioral correlation maps. t-SNE visualizations show fewer false positives and improved class separability.

Figure 3: Comparison between B⋅V and V⋅T global attention encoding, highlighting superior F1 and precision.

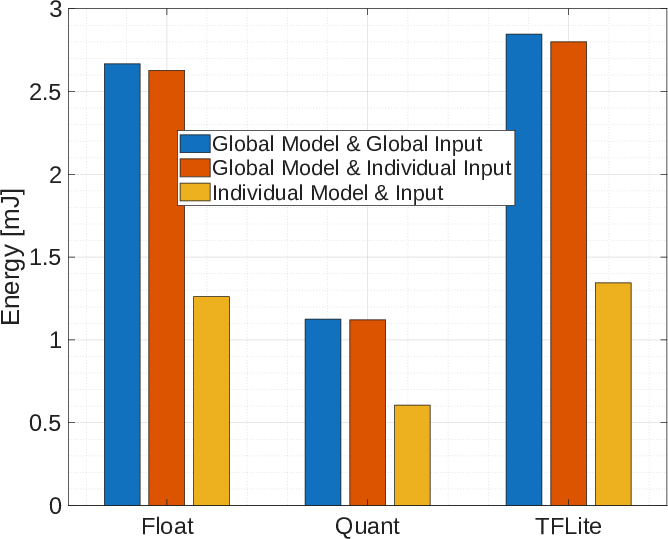

Figure 4: Energy consumption for deployed quantized models, indicating substantial resource savings for individual edge deployments.
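The latency figures reported above can be related to the CAM update rate with simple arithmetic. The sketch below assumes that one inference must finish within a single 10 Hz update interval, and that 0.13 ms and 0.8 ms correspond to the individual and global models respectively, following the order given in the text.

```python
CAM_RATE_HZ = 10                    # CAM update rate stated in the text
BUDGET_MS = 1000.0 / CAM_RATE_HZ    # time between consecutive CAM updates

# Mean latencies from the text; the individual/global mapping is assumed
# from the order in which they are listed.
REPORTED_LATENCY_MS = {"individual": 0.13, "global": 0.8}

def budget_share(latency_ms, budget_ms=BUDGET_MS):
    """Fraction of one update interval consumed by a single inference."""
    return latency_ms / budget_ms

shares = {name: budget_share(ms) for name, ms in REPORTED_LATENCY_MS.items()}

for name, share in shares.items():
    print(f"{name}: {share:.2%} of the {BUDGET_MS:.0f} ms interval")
```

Under these assumptions, even the slower global model uses under 1% of the interval, leaving the rest for message parsing, windowing, and any downstream reaction logic.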

The transformer-based approach improves on earlier detection schemes and sequential recurrent architectures. Prior deep learning studies (LSTM/GRU/CNN) [alladi2023deep, hsieh2021deep_integrated, javed2021canintelliids] perform well in non-platoon VANET settings but lack robustness against physically consistent, coordinated attacks. AttentionGuard [li2025attentionguard] introduced attention mechanisms for binary detection, yet falls short on latency and generalization. Edge AI literature indicates that sub-100 ms latency is essential for real-time vehicular inference [zhang2020mobile, deprado2021robustifying], which in practice calls for aggressive quantization and architectural optimization. The fused inter-vehicle attention encoding in AIMformer supports platoon-wide joint reasoning and is reported to scale to extended platoon topologies.

Practical and Theoretical Implications

AIMformer establishes operational feasibility for secure platooning, offering:

- Scalable, edge-ready ML deployment for in-vehicle and RSU detection

- Explicit attack localization via attention-derived behavioral maps

- Robust handling of plausible, stealthy data falsification

- Model quantization enabling low-energy, real-time inference

Theoretical implications include the value of a decoupled false positive penalty in the loss function and the utility of positional and inter-vehicle attention for joint spatial-temporal anomaly detection. These principles may generalize to other cyber-physical edge ML security domains.

Future Directions

Further research avenues include adversarial robustness against adaptive, stealthy attacks, real-world vehicular dataset validation, online and federated learning for infrastructure adaptation, and integration of cryptographic primitives with ML-driven anomaly detection.

Conclusion

AIMformer is a transformer-based misbehavior detection solution engineered for the dual challenges of high detection fidelity and very low inference latency in secure platoon operations. Through inter-vehicle aware encoding, a precision-focused loss, and full-stack quantization optimization, it is reported to outperform prior approaches in both effectiveness and deployability, addressing the safety-critical demands of autonomous convoy security (2512.15503).