Machine Learning

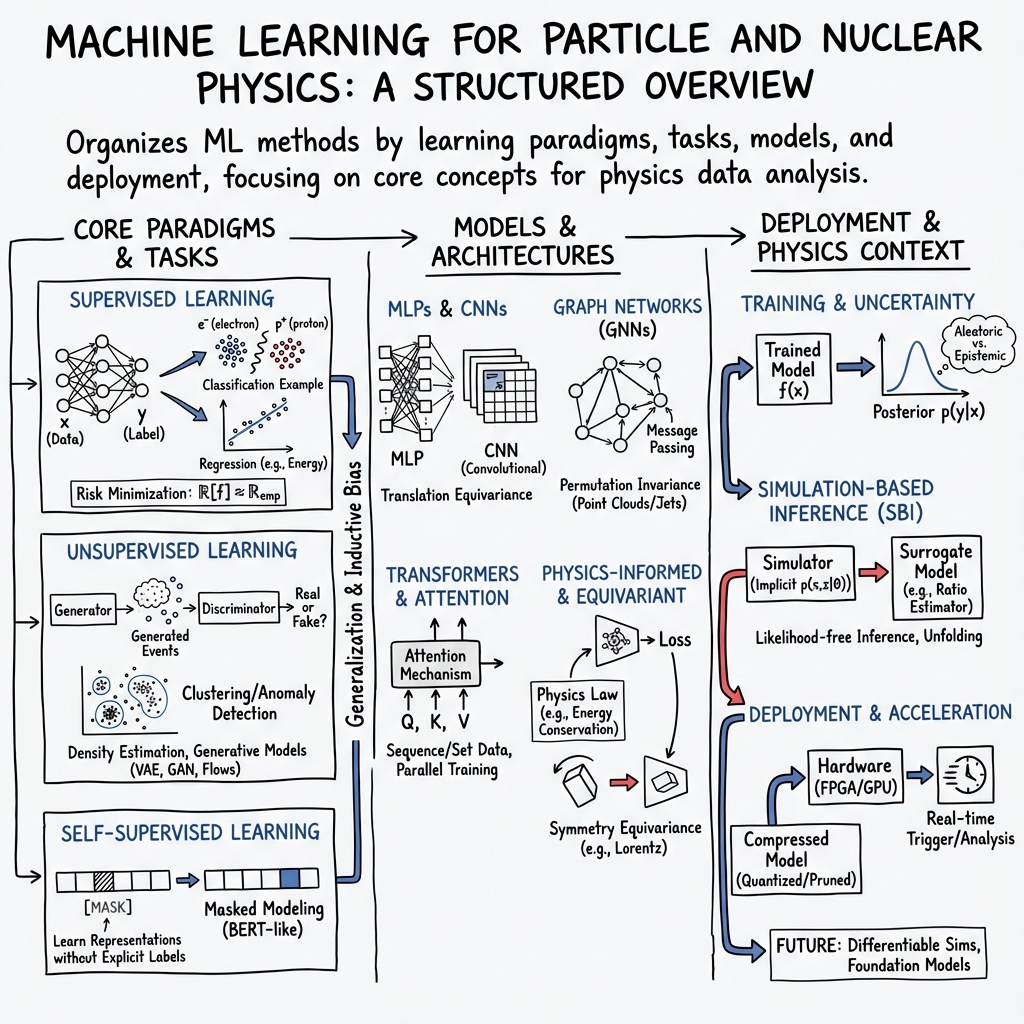

Abstract: This chapter gives an overview of the core concepts of ML -- the use of algorithms that learn from data, identify patterns, and make predictions or decisions without being explicitly programmed -- that are relevant to particle physics with some examples of applications to the energy, intensity, cosmic, and accelerator frontiers.

Explain it Like I'm 14

What this paper is about

This chapter is a friendly, big-picture guide to ML for particle physics. It explains how computers can learn patterns from data to classify particles, make predictions, find unusual events, and even generate realistic fake data to speed up research. It focuses on ideas and methods rather than giving a long list of applications, and it points to a “Living Review” with more than a thousand references for those who want details.

The main questions the paper asks

- How do different types of machine learning (supervised, unsupervised, reinforcement) work, and when should physicists use each one?

- What loss functions (the way we measure mistakes) lead to good predictions or useful probabilities?

- How can we train models so they learn real patterns, not just memorize the training data?

- How do we represent complex detector data in simpler ways without losing important information?

- How can we measure and handle uncertainty, deal with differences between simulation and real data, and deploy models in real experiments?

How the research ideas and methods work (with simple analogies)

To make things concrete, the chapter starts with a familiar task: classifying detector energy deposits as electron or proton. Here’s how the building blocks fit together:

- Supervised learning (like a teacher grading homework): You have inputs (detector readings) and the correct answers (labels: electron or proton). The model learns to map inputs to correct labels by minimizing a loss function (a score of how wrong it is).

- Loss, risk, and empirical risk (your average mistake score): The loss is the penalty on one example. Risk is the expected penalty over the true data distribution. Because we don’t know that distribution, we use empirical risk, which is just the average loss over our training set.

- Gradient descent (walking downhill in fog): Training adjusts model parameters step by step in the direction that reduces the average loss the fastest. Stochastic gradient descent (SGD) does this using small random batches, which is faster and often generalizes better.

- Train/validation/test split (study, practice, final exam): Train to learn, validate to make choices (like when to stop), and test once at the end to measure how well the model truly generalizes.

- Classification and probabilities (confidence scores): Cross-entropy loss makes the model output probabilities, like “70% chance this is an electron.” You then pick a threshold to decide.

- ROC curves (trade-offs): Changing the threshold trades true positives against false positives. The ROC curve shows all these trade-offs and does not depend on the class proportions, because the true-positive and false-positive rates are each computed within a single class.

- Regularization (guardrails against overfitting): L2 shrinks parameters toward zero; L1 tends to set some parameters exactly to zero, making sparse, simpler models. Early stopping and dropout are “implicit” guardrails that help avoid memorization.

- Generalization vs overfitting (understanding vs memorizing): Overfitting is when a model is great on training data but bad on new data. The chapter explains the classic bias–variance trade-off and the modern “double descent” effect, where very large models can still generalize well when trained carefully.

- Representation learning and compression (summarizing without losing meaning): PCA and autoencoders compress data into a smaller “latent space.” Good representations make downstream tasks easier.

- Clustering (grouping similar things): Methods like k-means and DBSCAN/HDBSCAN group unlabeled data into clusters based on distance or density—useful for finding structures without labels.

- Density estimation and generative models (learning the data distribution): Instead of predicting labels, you learn p(x), the “shape” of the data. Normalizing flows, VAEs, GANs, and diffusion/flow-matching models can generate new, realistic samples. Normalizing flows also let you compute exact likelihoods, which is powerful for scientific use.

- Optimization tricks and neural network plumbing: The chapter covers practical training tools—initialization, input normalization, batch normalization, vanishing/exploding gradients, and early stopping—so training is stable and fast.

- Uncertainty and domain shift (being honest and adaptable): It distinguishes aleatoric uncertainty (inherent randomness, like noisy sensors) from epistemic uncertainty (lack of knowledge, like not enough data). Domain adaptation helps when your training simulation doesn’t perfectly match the real detector data.

- Transfer learning and foundation models (reusing knowledge): Pretraining on large datasets and fine-tuning on specific tasks saves time and often improves performance, even in physics.
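Several of the building blocks above (empirical risk, cross-entropy, mini-batch SGD, L2 regularization, the train/validation/test split, and early stopping) can be sketched together in a few lines. The example below is only an illustration under stated assumptions: the data are synthetic Gaussian blobs standing in for detector features, the model is plain logistic regression rather than a neural network, and the learning rate, batch size, and patience are arbitrary choices, not values from the chapter.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy stand-in for detector features: two Gaussian blobs (hypothetical data).
n = 3000
y = rng.integers(0, 2, size=n)                  # 1 = "electron", 0 = "proton"
X = rng.normal(size=(n, 2)) + 1.5 * y[:, None]  # class 1 is shifted by +1.5 per feature

# Train / validation / test split (60% / 20% / 20%).
X_tr, y_tr = X[:1800], y[:1800]
X_va, y_va = X[1800:2400], y[1800:2400]
X_te, y_te = X[2400:], y[2400:]

def predict_proba(w, b, X):
    """Sigmoid of a linear score: the model's estimate of p(y=1 | x)."""
    return 1.0 / (1.0 + np.exp(-(X @ w + b)))

def cross_entropy(w, b, X, y):
    """Empirical risk: mean binary cross-entropy over the given sample."""
    p = np.clip(predict_proba(w, b, X), 1e-12, 1 - 1e-12)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

w, b = np.zeros(2), 0.0
lr, l2, batch, patience = 0.1, 1e-3, 64, 5      # illustrative hyperparameters
best = (np.inf, w.copy(), b)                    # best validation loss and its parameters
stale = 0

for epoch in range(200):
    order = rng.permutation(len(X_tr))          # reshuffle each epoch
    for start in range(0, len(order), batch):
        idx = order[start:start + batch]
        err = predict_proba(w, b, X_tr[idx]) - y_tr[idx]   # d(loss)/d(score)
        w -= lr * (X_tr[idx].T @ err / len(idx) + l2 * w)  # SGD step with L2 penalty
        b -= lr * err.mean()
    val = cross_entropy(w, b, X_va, y_va)
    if val < best[0] - 1e-5:
        best, stale = (val, w.copy(), b), 0
    else:
        stale += 1
        if stale >= patience:                   # early stopping on validation loss
            break

_, w, b = best                                  # restore the best validation checkpoint
test_loss = cross_entropy(w, b, X_te, y_te)
test_acc = np.mean((predict_proba(w, b, X_te) > 0.5) == y_te)
print(f"test loss {test_loss:.3f}, test accuracy {test_acc:.3f}")
```

The test set is touched only once, after the validation set has been used to decide when to stop, which mirrors the study/practice/final-exam analogy.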

What the chapter finds and why it matters

Below are key takeaways the chapter emphasizes and why they are useful:

- Choosing the right loss gives the right behavior:

  - Squared error targets the average value of the label given the input.

  - Cross-entropy pushes classifiers toward calibrated probability estimates.

- ROC curves are prior-independent:

  - Even if simulation has different class ratios than real data, the ROC curve still describes the trade-off correctly, which enables clever training when labels are scarce.

- Overfitting can be tamed:

  - Regularization, early stopping, dropout, and SGD’s implicit effects help large models generalize surprisingly well (double descent).

- Good representations matter:

  - PCA and autoencoders compress high-dimensional data and reveal structure that makes later tasks easier and faster.

- Generative models unlock new capabilities:

  - GANs, VAEs, normalizing flows, and diffusion models can create realistic synthetic data, speed up simulations, and sometimes provide exact likelihoods for rigorous statistical analysis.

- Uncertainty must be handled explicitly:

  - Distinguishing different kinds of uncertainty and propagating errors makes ML-based physics results more trustworthy.

- Domain shift is real:

  - Differences between simulation and actual detector data can miscalibrate models. The chapter explains re-calibration and data-driven techniques to keep results reliable.

- Physics-aware design helps:

  - Building symmetries (like translation or rotation) and other “inductive biases” into models makes them more efficient, accurate, and interpretable.

- Deployment is part of the story:

  - Compression, sparsity, and careful engineering let ML models run inside experiments with limited computing resources.
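The prior-independence of the ROC curve mentioned in the takeaways can be checked numerically. The sketch below is an illustration only: the classifier scores are hypothetical Gaussian draws, and the helper name `rates` is made up for this example. It evaluates the same score distributions at one threshold, once with balanced classes and once with ten times more background.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical classifier scores: signal peaks high, background peaks low.
sig = rng.normal(loc=1.0, scale=1.0, size=1000)
bkg = rng.normal(loc=-1.0, scale=1.0, size=1000)

def rates(sig_scores, bkg_scores, threshold):
    """True-positive rate, false-positive rate, and precision at one threshold."""
    tp = np.sum(sig_scores > threshold)
    fp = np.sum(bkg_scores > threshold)
    tpr = tp / len(sig_scores)      # computed within the signal class only
    fpr = fp / len(bkg_scores)      # computed within the background class only
    precision = tp / (tp + fp)      # mixes both classes, so it depends on the class ratio
    return tpr, fpr, precision

threshold = 0.0
balanced = rates(sig, bkg, threshold)
# Same score distributions, but ten times more background events.
imbalanced = rates(sig, np.tile(bkg, 10), threshold)

print("balanced   (TPR, FPR, precision):", balanced)
print("imbalanced (TPR, FPR, precision):", imbalanced)
```

The ROC point (FPR, TPR) is identical in both cases, while precision drops when background dominates. This is why a ROC curve measured on simulation with one class ratio still applies to data with another, provided the per-class score distributions are the same.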

Why this matters for particle physics and beyond

Machine learning is now central to how modern particle physics is done. These tools help scientists:

- Identify particles more accurately and quickly, even in huge, noisy datasets.

- Discover rare signals by pushing down false positives while keeping true positives high.

- Speed up simulations and analyses, saving time and computing power.

- Make results more trustworthy by measuring and communicating uncertainty properly.

- Adapt models to real detector data, not just ideal simulations.

- Reuse knowledge with transfer learning and foundation models, accelerating progress.

Beyond physics, the same ideas power advances in medicine, climate science, robotics, and many other fields. This chapter gives physicists a practical, principled toolbox—how to pick losses, train and regularize models, represent complex data, generate samples, quantify uncertainty, and deploy models—so they can use ML effectively and responsibly in cutting‑edge science.

Knowledge Gaps

Below is a consolidated list of concrete knowledge gaps, limitations, and open questions that remain unresolved in the paper.

- Principled handling of prior shift in classification: methods to recalibrate probabilistic classifiers when the class prior p(y) in training differs from deployment (especially when p′(y) is unknown), including multi-class settings with uncertain and highly imbalanced mixtures; quantify the impact on decision thresholds, calibration, and physics figures-of-merit.

- Robust domain adaptation under simulator–data mismatch: systematic procedures to detect, quantify, and correct covariate shift p(x) and conditional shift p(x|y) for HEP data; develop sample-efficient, data-driven calibration protocols with uncertainty guarantees for downstream inference.

- Generalization in overparameterized regimes: precise conditions under which benign overfitting and double descent occur for models used in HEP; characterize the implicit bias of different optimizers (e.g., SGD variants, adaptive methods) and architectures on generalization, with actionable training guidelines.

- Loss-function selection for physics objectives: criteria and workflows to choose among degenerate losses that yield the same f*, tailored to physics-relevant metrics (e.g., discovery significance, limit-setting sensitivity) and systematic uncertainty robustness; formal links between discriminative objectives and downstream hypothesis tests.

- Unified uncertainty quantification: scalable methods to propagate aleatoric and epistemic uncertainty through ML pipelines to final physics results; calibration of predictive intervals/probabilities for discriminative models; practical Bayesian model averaging or ensembles with computational budgets suitable for HEP analyses.

- Autoencoder robustness and latent-space priors: principled regularizers/priors ensuring learned latent spaces encode task-relevant physics and remain calibrated under domain shift; diagnostics and guarantees (e.g., sufficiency or informativeness) for representations used in downstream tasks.

- Clustering at scale in high dimensions: adaptive, data-driven selection of k, ε, and minPts (k-means/DBSCAN/HDBSCAN) with theoretical recovery guarantees for HEP-specific distributions; integration with learned latent spaces and uncertainty-aware clustering; evaluation protocols beyond heuristic metrics.

- Density estimation without overfitting to empirical distributions: regularization schemes and validation metrics that prevent convergence to the empirical measure in high-dimensional settings; symmetry-preserving models and cross-validation strategies that reflect HEP data constraints; quantifiable sample complexity.

- Evaluation and likelihood surrogates for implicit generative models: reliable, tractable likelihood or surrogate scoring for VAEs/GANs; standardized fidelity, coverage, and calibration metrics across GANs/VAEs/flows/diffusion for HEP datasets; procedures to detect and mitigate mode collapse with physics-relevant diagnostics.

- Flow-matching and diffusion objectives: comparative analysis of training stability, sample quality, likelihood estimation, and compute efficiency versus normalizing flows in HEP use cases (fast simulation, detector response); hybrid designs with physics constraints (e.g., symmetries, conservation laws) and deployment feasibility.

- Physics-inductive biases and symmetries: architectures delivering exact or controllable invariances (Lorentz, permutation, gauge, translation) with empirical ablations demonstrating when symmetry helps/hurts; theory–practice bridges for symmetry-induced generalization and robustness, including under shift.

- Foundation models and transfer learning for HEP: feasibility and scaling laws for pretraining (data sources, tokenizations/representations, multi-modality), domain adaptation to detectors, catastrophic forgetting mitigation, governance of training data, and compute–benefit tradeoffs for real analyses.

- Optimization “recipes” tailored to HEP data: empirically validated guidelines for initialization, normalization (batch/group/layer norm), learning-rate schedules, early stopping criteria, and regularization (dropout, weight decay) that consistently improve generalization for typical HEP modalities (images, point clouds, graphs).

- Metrics beyond ROC under class imbalance and prior uncertainty: adoption and calibration of precision–recall, expected significance, and cost-aware metrics; principled threshold selection under uncertain prevalence and domain shift; benchmarking protocols that reflect analysis-time realities.

- Mitigating simulator bias in supervised training: weak/learning-from-mixtures methods, label-noise correction, likelihood-ratio trick extensions, and reweighting strategies with uncertainty quantification; practical workflows to combine simulation and data for training without leaking test information.

- Anomaly and out-of-distribution detection with guarantees: unsupervised/semisupervised detectors that control false discovery rates under covariate/label shift; interpretable anomaly scoring and triage pipelines for follow-up physics analyses; standardized HEP benchmarks and stress tests.

- Parameterized models for inference: training strategies and interpolation guarantees for classifiers/regressors conditioned on physics parameters (e.g., masses, couplings); coverage assessments for parameter scans and likelihood-free inference workflows.

- Model compression and deployment constraints: quantization/pruning/distillation methods that preserve physics performance under hardware limits (latency, memory, radiation environment); monitoring for drift and automatic recalibration in deployed systems; reliability/robustness testing protocols.

- Active learning and Bayesian optimization for data/simulation budgets: strategies to adaptively allocate simulation or labeling effort to maximize physics sensitivity; stopping criteria and acquisition functions aligned with HEP objectives; integration with experiment operations.

- Reproducibility and benchmarking: curated, versioned HEP ML benchmarks with clear metrics and baselines; best practices for experiment-agnostic reproducibility (seed control, data splits, hyperparameter logging) and compute-aware comparison standards.
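The uncertainty item in the list above mentions ensembles as a practical route to epistemic uncertainty. A minimal sketch of that idea follows, assuming a bootstrap ensemble of cubic polynomial fits on synthetic one-dimensional data; the data, the polynomial degree, and the ensemble size are illustrative assumptions and are not taken from the chapter.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical regression data: noisy samples of a smooth function on [-1, 1].
x_train = rng.uniform(-1.0, 1.0, size=40)
y_train = np.sin(2.0 * x_train) + rng.normal(scale=0.1, size=40)

# One point inside the training range and one far outside it.
x_eval = np.array([0.0, 3.0])

preds = []
for _ in range(50):
    # Resample the training set with replacement and refit the model.
    idx = rng.integers(0, len(x_train), size=len(x_train))
    coeffs = np.polyfit(x_train[idx], y_train[idx], deg=3)
    preds.append(np.polyval(coeffs, x_eval))
preds = np.array(preds)             # shape: (ensemble members, evaluation points)

mean = preds.mean(axis=0)           # ensemble-averaged prediction
spread = preds.std(axis=0)          # disagreement between members

print("ensemble mean:  ", mean)
print("ensemble spread:", spread)
```

The spread between ensemble members is small where training data exist and grows sharply under extrapolation, which is the qualitative signature of epistemic uncertainty. The noise level of 0.1 in the labels plays the role of aleatoric uncertainty and would not shrink with more ensemble members.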

Practical Applications

Immediate Applications

Below are actionable applications that can be deployed now, drawing directly from the paper’s methods (e.g., supervised/unsupervised learning, uncertainty, domain shift, generative models, optimization, model compression, active learning).

- Calibrated classification under prior shift and thresholding (ROC-based working points)

- Sectors: Healthcare (diagnostics triage), Finance (fraud screening), Manufacturing (QA pass/fail)

- Tools/Workflows: Prior-shift recalibration (likelihood-ratio/monotone transform), ROC-driven threshold selection; train/validation/test split; data-driven calibration with held-out data

- Assumptions/Dependencies: Access to representative calibration data; class-prior estimates; limited covariate shift

- Domain adaptation and weak supervision to handle simulation–data mismatch

- Sectors: Healthcare (multi-hospital models), Manufacturing (line-to-line transfer), High-energy physics (simulation vs. detector data)

- Tools/Workflows: Weakly supervised classification (label-proportion shifts), covariate/label-shift reweighting, feature alignment

- Assumptions/Dependencies: Stable p(x|y) across domains or known shift factors; reliable unlabeled target data

- Uncertainty quantification (aleatoric/epistemic), model averaging, and calibration

- Sectors: Healthcare, Autonomous systems, Finance risk, Policy analysis

- Tools/Workflows: Gaussian processes, ensembles, MC-dropout; prediction intervals and reliability diagrams; MAP estimators with explicit priors

- Assumptions/Dependencies: Compute budget for ensembles/GPs; calibration datasets; clear risk thresholds

- Anomaly detection and out-of-distribution detection

- Sectors: Cybersecurity (intrusion), IT/DevOps (incident detection), Manufacturing (fault detection), Spacecraft ops

- Tools/Workflows: Autoencoder reconstruction error, normalizing-flow likelihood scoring, density-ratio tests

- Assumptions/Dependencies: In-distribution coverage in training; drift monitoring; well-chosen representations

- Representation learning and compression (PCA, autoencoders) for telemetry and imaging

- Sectors: Telecom/IoT (bandwidth reduction), Medical imaging (denoising), Remote sensing (satellite imagery)

- Tools/Workflows: Bottleneck autoencoders; reconstruction-error KPIs; lossy/lossless compression pipelines

- Assumptions/Dependencies: Acceptable information loss; alignment with downstream tasks; privacy constraints

- Clustering (k-means, DBSCAN/HDBSCAN, graph-based) for segmentation and grouping

- Sectors: Marketing (customer segments), Single-cell omics (cell types), Supply chain (SKU grouping), HEP (event/object clustering)

- Tools/Workflows: HDBSCAN for varying-density clusters; graph neural net clustering; distance metric tuning

- Assumptions/Dependencies: Appropriate similarity metrics; hyperparameter selection; scalability for large n

- Fast simulation via generative models (GANs, normalizing flows, diffusion/flow matching)

- Sectors: HEP (detector simulation), Autonomous driving (scenario generation), Manufacturing (digital twins)

- Tools/Workflows: “FastSim” surrogates; NFs for tractable likelihoods and diagnostics; fidelity validation suites

- Assumptions/Dependencies: Simulator benchmarks for acceptance; coverage of relevant operating conditions; residual UQ

- Simulation-based inference (likelihood-free) and unfolding

- Sectors: Epidemiology, Climate/economics (policy scenarios), HEP (parameter estimation, unfolding)

- Tools/Workflows: Classifier-based likelihood-ratio estimation; conditional flows; posterior estimation pipelines

- Assumptions/Dependencies: Access to simulators; identifiability; compute for repeated simulation

- Robust training and generalization practices (regularization, early stopping, normalization)

- Sectors: Software/IT ML engineering across industries

- Tools/Workflows: Early stopping on validation loss; L1/L2 penalties; input/batch normalization; careful initialization; dropout

- Assumptions/Dependencies: Proper validation protocols; monitoring for over/underfitting

- Model compression and deployment on constrained hardware

- Sectors: Mobile/edge computing, Industrial sensors, HEP triggers (on-detector)

- Tools/Workflows: L1-induced sparsity/pruning, quantization, distillation; FPGA/ASIC toolchains

- Assumptions/Dependencies: Hardware support; accuracy–latency–power trade-offs; certifiable performance

- Active learning and Bayesian optimization for efficient data/experiment use

- Sectors: Drug/material discovery, Automated labs, Labeling operations, A/B testing

- Tools/Workflows: Pool-based active labeling; multi-armed bandits; Bayesian optimization of experimental conditions

- Assumptions/Dependencies: Human/expert oracle availability; safe exploration policies; automation interfaces

- Physics/inductive-bias architectures (CNNs, equivariant nets) for data with symmetries

- Sectors: Vision, Robotics, Molecular modeling, Scientific imaging

- Tools/Workflows: Translation/rotation-equivariant networks; architecture search incorporating known symmetries

- Assumptions/Dependencies: Correct symmetry assumptions; sufficient data to leverage bias

- Parameterized models and data augmentation to handle systematics

- Sectors: HEP analyses, Manufacturing under condition variability, Vision/audio

- Tools/Workflows: Conditioning on nuisance/systematic parameters; domain-specific augmentations

- Assumptions/Dependencies: Known range of nuisance parameters; augmentations that preserve labels
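The prior-shift recalibration listed in the first application above can be written down directly for the binary case when both class priors are known. The sketch below assumes pure label shift, meaning p(x|y) is the same in training and deployment and only the class fraction changes; the function name `recalibrate` and the example numbers are hypothetical.

```python
import numpy as np

def recalibrate(p_train, prior_train, prior_deploy):
    """Map p(y=1 | x) learned under prior_train to the deployment prior.

    Assumes p(x | y) is unchanged between training and deployment, and
    that the input probabilities are strictly less than 1.
    """
    p_train = np.asarray(p_train, dtype=float)
    # Posterior odds = likelihood ratio * prior odds, so swap the prior odds.
    ratio = (prior_deploy / (1.0 - prior_deploy)) / (prior_train / (1.0 - prior_train))
    odds = p_train / (1.0 - p_train) * ratio
    return odds / (1.0 + odds)

# Classifier trained on a balanced sample, deployed where signal is 10% of events.
p = np.array([0.2, 0.5, 0.9])
p_new = recalibrate(p, prior_train=0.5, prior_deploy=0.1)
print(p_new)
```

The transform is monotonic, so the ranking of events and therefore the ROC curve are unchanged; only the probabilities and the threshold that corresponds to a given working point move. An output of 0.5 under the balanced prior becomes 0.1 under the deployment prior, as expected for an event that carries no discriminating information.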

Long-Term Applications

These applications require additional research, scaling, validation, or ecosystem development (standards, compute, governance).

- Scientific foundation models with physics inductive bias and calibrated uncertainty

- Sectors: Particle/nuclear physics, Astronomy, Materials science

- Tools/Workflows: Multimodal foundation models for detectors/surveys; unified representations; uncertainty-aware fine-tuning

- Assumptions/Dependencies: Shared curated corpora; massive compute; community governance for model updates

- Real-time, uncertainty-aware control via differentiable digital twins (SBI + generative surrogates)

- Sectors: Energy grids, Fusion reactors, Particle accelerators, Robotics

- Tools/Workflows: Normalizing-flow/diffusion surrogates with tractable likelihoods; online posterior updates; MPC with UQ

- Assumptions/Dependencies: Certifiable fidelity; reliable latency; safety/regulatory approval

- Standardized uncertainty reporting in regulated AI (aleatoric/epistemic, model averaging)

- Sectors: Healthcare diagnostics, Finance risk, Transportation safety

- Tools/Workflows: “Uncertainty middleware” for prediction APIs; audit trails; calibration reports

- Assumptions/Dependencies: Regulatory standards; interpretability requirements; liability frameworks

- On-sensor/on-detector ML with ultra-low power and strict latency

- Sectors: HEP triggers, IoT, AR/VR devices, Industrial monitoring

- Tools/Workflows: Compression-aware training; ASIC-friendly architectures; hardware–software co-design

- Assumptions/Dependencies: Mature toolchains; robust real-time validation; endurance under environmental stress

- Closed-loop discovery in autonomous labs (active learning + Bayesian optimization + RL)

- Sectors: Materials, Pharmaceuticals, Synthetic biology, Agriculture

- Tools/Workflows: Orchestrated “ActiveLab” platforms integrating simulation-based inference and experimental robotics

- Assumptions/Dependencies: Reliable lab automation; property predictors; safety constraints

- National-scale anomaly sensing across critical infrastructure and space assets

- Sectors: Cyber-physical security, Space situational awareness, Telecom

- Tools/Workflows: Hierarchical density models; OOD sentinels; cross-entity drift governance

- Assumptions/Dependencies: Data sharing agreements; privacy-preserving analytics; robust alert triage

- Systematic weak supervision and domain adaptation at enterprise/government scale

- Sectors: Enterprise ML, Official statistics, Remote sensing

- Tools/Workflows: Label-shift reweighting, domain-invariant representations, confidence transfer

- Assumptions/Dependencies: Valid shift assumptions; monitoring for failure modes; scalable infrastructure

- End-to-end learned reconstruction replacing hand-crafted pipelines with propagated UQ

- Sectors: Medical imaging (CT/MRI/PET), Seismic interpretation, Scientific detectors

- Tools/Workflows: Differentiable pipelines from raw sensor data to final estimates; uncertainty propagation to decisions

- Assumptions/Dependencies: Gold-standard validation; clinical/geoscience acceptance; robustness to distribution shift

- Inverse design via diffusion/flow matching under constraints and uncertainty

- Sectors: Drug discovery, Catalysts, Metamaterials, Batteries

- Tools/Workflows: Generative inverse-design loops with constraint satisfaction and posterior-guided search

- Assumptions/Dependencies: Accurate property predictors; iterative experiment feedback; robust generalization

- Safety-critical small-data modeling with Gaussian processes and kernel surrogates

- Sectors: Aerospace, Nuclear, Medical devices

- Tools/Workflows: Kernel selection/learning; sparse/structured GPs; UQ-first decision frameworks

- Assumptions/Dependencies: Scalable GP approximations; validated kernels; conservative deployment practices

- Policy analytics using simulation-based inference for transparent scenario evaluation

- Sectors: Public health, Macroeconomics, Climate policy

- Tools/Workflows: Likelihood-free posterior estimation; sensitivity to priors; uncertainty-aware counterfactuals

- Assumptions/Dependencies: Credible, documented simulators; openness to uncertainty in decision-making

- Federated, privacy-preserving domain adaptation across institutions

- Sectors: Healthcare consortia, Finance, Cross-border research

- Tools/Workflows: Federated learning with domain adaptation; secure aggregation; privacy auditing

- Assumptions/Dependencies: Legal agreements; communication/computation budgets; heterogeneity-aware methods

- Industry-ready monitoring for double descent/benign overfitting regimes

- Sectors: General ML platforms in Software/IT

- Tools/Workflows: Capacity monitoring, early stopping under modern regimes, implicit regularization diagnostics

- Assumptions/Dependencies: Telemetry from training runs; standardized metrics; organizational MLOps maturity

Each application above reflects concrete methods and insights from the paper (e.g., loss/risk design, calibration under prior shift, simulation-based inference, generative surrogates, uncertainty, regularization, compression, and optimization), translated into sector-specific tools and workflows, with explicit feasibility considerations.
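As one concrete instance of the compression and anomaly-detection workflows listed above, the sketch below uses PCA as a linear stand-in for an autoencoder and scores points by reconstruction error. Everything here is an assumption for illustration: the data are synthetic, the latent dimension of two is chosen to match how the data were generated, and the helper names `encode`, `decode`, and `anomaly_score` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(3)

# Hypothetical data: 5-dimensional points lying close to a 2-dimensional plane.
latent = rng.normal(size=(500, 2))
mixing = rng.normal(size=(2, 5))
X = latent @ mixing + 0.05 * rng.normal(size=(500, 5))

mean = X.mean(axis=0)
# Principal directions are the right singular vectors of the centered data.
_, _, vt = np.linalg.svd(X - mean, full_matrices=False)
components = vt[:2]                 # keep the two leading directions

def encode(x):
    """Project onto the leading principal directions (the 'latent space')."""
    return (x - mean) @ components.T

def decode(z):
    """Map latent coordinates back to the original feature space."""
    return z @ components + mean

def anomaly_score(x):
    """Squared reconstruction error after a round trip through the latent space."""
    return np.sum((x - decode(encode(x))) ** 2, axis=-1)

typical = anomaly_score(X)
# A point displaced along a discarded direction is reconstructed poorly.
outlier = anomaly_score(mean + 3.0 * vt[-1])

print("largest in-sample score:", typical.max())
print("outlier score:          ", outlier)
```

Points that resemble the training data reconstruct well, while a point displaced along a direction the compression discarded gets a large score. A nonlinear autoencoder follows the same encode, decode, and compare pattern with learned maps in place of the linear projection.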

Glossary

- Active learning: A learning paradigm where the algorithm selects informative data points to label in order to improve performance with fewer annotations. "41.5. Optimal control, reinforcement learning, and active learning"

- Aleatoric and epistemic uncertainty: Two types of uncertainty, with aleatoric due to inherent data noise and epistemic due to limited knowledge about the model. "41.10.5. Aleatoric and epistemic uncertainty"

- Anomaly detection: Identifying data points that deviate significantly from the expected distribution or patterns. "41.3.5. Anomaly detection and out-of-distribution detection"

- Autoencoder: A neural network that learns a compressed latent representation of data via an encoder and reconstructs it via a decoder. "the autoencoder f = g ∘ e : X → X"

- Bayesian optimization: A global optimization strategy that uses a probabilistic surrogate model and acquisition function to efficiently find optima of expensive objectives. "41.5.4. Bayesian optimization"

- Benign overfitting: A phenomenon where interpolating (zero training error) models can still generalize well due to implicit regularization. "a phenomenon called benign overfitting [19]."

- Bias-variance decomposition: An analysis expressing expected prediction error as the sum of noise, variance, and squared bias terms. "The bias-variance decomposition is a way of analyzing a model's expected risk"

- Cross entropy: An expected negative log-likelihood measuring dissimilarity between the true data distribution and a model distribution. "which is the cross entropy H[p, f_φ]."

- DBSCAN: A density-based clustering algorithm that groups points by local density and labels sparse-region points as noise. "density-based spatial clustering of applications with noise (DBSCAN)"

- Diffusion models: Generative models that learn to reverse a stochastic diffusion/noising process to sample from complex distributions. "flow-matching and diffusion models [45-48]"

- Domain shift: A mismatch between the distribution of training data and the distribution at deployment or evaluation time. "referred to as domain shift or distribution shift."

- Dropout: A regularization technique that randomly removes parts of the model during training to prevent overfitting. "known as dropout [17]"

- Early stopping: An implicit regularization method that halts training when validation loss stops improving to avoid overfitting. "implicit regularization is through early stopping [15, 16]"

- ELBO: The Evidence Lower BOund used in variational inference to enable tractable training of latent-variable models. "training is based on the ELBO used in variational inference"

- Empirical risk: The average loss over the training dataset, used as a proxy for expected risk. "known as the empirical risk or training loss R_emp(f_φ)"

- Empirical risk minimization: The principle of choosing a model that minimizes empirical risk to approximate the minimizer of expected risk. "The empirical risk minimization principle is a core idea in statistical learning theory [7]"

- Flow matching: A training objective for generative modeling that fits a velocity field by regression onto a target vector field transporting a simple base distribution to the data distribution, without directly optimizing likelihood. "flow-matching and diffusion models [45-48]"

- Gaussian mixture model: A probabilistic model that represents a distribution as a weighted sum of Gaussian components. "It can be generalized to a Gaussian mixture model"

- Gaussian process regression: A nonparametric Bayesian regression method defined by a kernel that yields predictive means and uncertainties. "One such example is Gaussian process regression"

- Generative adversarial networks (GANs): Implicit generative models trained via an adversarial game between a generator and a discriminator. "generative adversarial networks (GANs) [38,39]"

- HDBSCAN: A hierarchical extension of DBSCAN that can detect clusters with varying densities by building a density hierarchy. "Hierarchical DBSCAN (HDBSCAN) generalizes to varying densities"

- Huber loss: A robust loss function that is quadratic near zero and linear for large residuals, balancing sensitivity and robustness. "such as the Huber loss"

- Implicit model: A model that can generate samples but does not have a tractable likelihood function. "they are sometimes referred to as implicit models."

- Inductive bias: Architectural or modeling assumptions that constrain the hypothesis space to favor solutions with better generalization. "are broadly referred to as inductive bias in the model."

- Kullback–Leibler (KL) divergence: An asymmetric measure of dissimilarity between probability distributions, often used in training objectives. "the forward Kullback-Leibler (KL) divergence"

- L1 regularization: A penalty on the sum of absolute parameter values that promotes sparsity in model parameters. "which is known as L1 regularization."

- L2 (Tikhonov) regularization: A penalty on the sum of squared parameter values that shrinks parameters without inducing sparsity. "referred to as L2 or Tikhonov regularization."

- LASSO regression: Linear regression with L1 regularization, encouraging sparse parameter estimates and feature selection. "it is known as LASSO regression or ridge regression"

- Latent space: A lower-dimensional representation space in which models encode data, often serving as a bottleneck. "the bottleneck or the latent space of the autoencoder."

- Likelihood-ratio trick: A monotonic transformation linking posterior probabilities and likelihood ratios, enabling prior-agnostic ranking. "which is referred to as the likelihood-ratio trick"

- MAP estimator: The parameter or prediction that maximizes the posterior distribution given the data and a prior. "maximum a posteriori (MAP) estimator"

- Maximum likelihood: An estimation principle that selects parameters maximizing the probability of observed data. "one can use maximum likelihood for the loss function."

- Monte Carlo Markov chain sampling: A family of algorithms, more commonly called Markov chain Monte Carlo (MCMC), that use Markov chains to draw samples from complex probability distributions. "Monte Carlo Markov chain sampling."

- Neural tangent kernel: A kernel describing the training dynamics of infinitely wide neural networks under gradient descent. "leads to the concept of neural tangent kernel [23]."

- Neyman–Pearson lemma: A statistical theorem stating that the likelihood ratio test is most powerful for simple hypothesis testing. "the Neyman-Pearson lemma states that the optimal classifier is given by the likelihood ratio"

- Normalizing flows: Invertible transformations that map a simple base distribution into a complex one with tractable likelihood. "normalizing flows (NFs) [40-44]"

- PCA (Principal component analysis): A linear dimensionality reduction technique that maximizes variance along orthogonal directions. "principal component analysis (PCA)"

- Representation learning: Learning useful features or representations from data that facilitate downstream tasks. "41.3.1. Representation learning, compression, and autoencoders"

- Simulation-based inference: Likelihood-free inference techniques that leverage simulators to perform statistical inference. "plays an important role in simulation-based inference (see Sec. 41.6)."

- Unfolding: A procedure to infer true distributions by correcting for detector or measurement effects. "It is also commonly used in unfolding [15]."

- Universal approximator: A model class (e.g., neural networks) that can approximate any function to arbitrary accuracy under certain conditions. "often universal approximators"

- Variational autoencoders (VAEs): Latent-variable generative models trained via variational inference using the ELBO objective. "variational autoencoders (VAEs) [36,37]"

- Weakly supervised approaches: Methods that train models using limited or indirect supervision, such as aggregate labels. "leveraged in weakly supervised approaches [9]"

- Working point: A chosen decision threshold for a classifier that fixes a specific trade-off between efficiencies and false rates. "referred to as a working point"
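The Huber loss entry in the glossary is easy to make concrete. The sketch below uses the standard textbook form with a transition scale `delta`; the default `delta=1.0` and the example residuals are illustrative choices, not values from the chapter.

```python
import numpy as np

def huber(residual, delta=1.0):
    """Huber loss: quadratic for |r| <= delta, linear beyond it."""
    r = np.abs(np.asarray(residual, dtype=float))
    quadratic = 0.5 * r ** 2
    linear = delta * (r - 0.5 * delta)   # matches the quadratic branch in value and slope at r = delta
    return np.where(r <= delta, quadratic, linear)

residuals = np.array([0.5, 3.0])
losses = huber(residuals)
squared = 0.5 * residuals ** 2           # plain squared error for comparison
print("Huber:  ", losses)
print("Squared:", squared)
```

For the small residual the two losses agree, while for the large residual the Huber loss grows only linearly (2.5 instead of 4.5), which is what makes it less sensitive to outliers than squared error.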
