Emergent Collective Memory in Decentralized Multi-Agent AI Systems

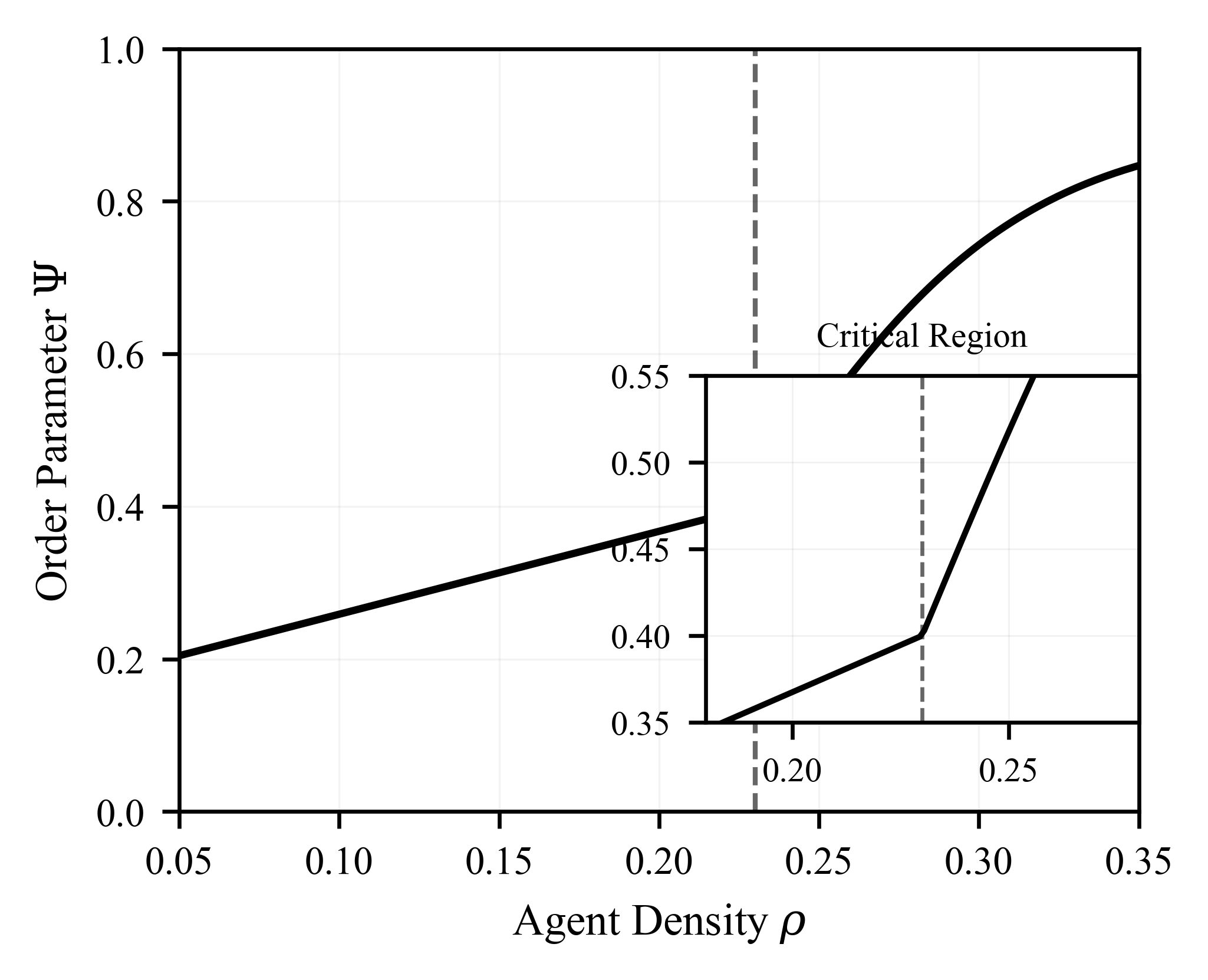

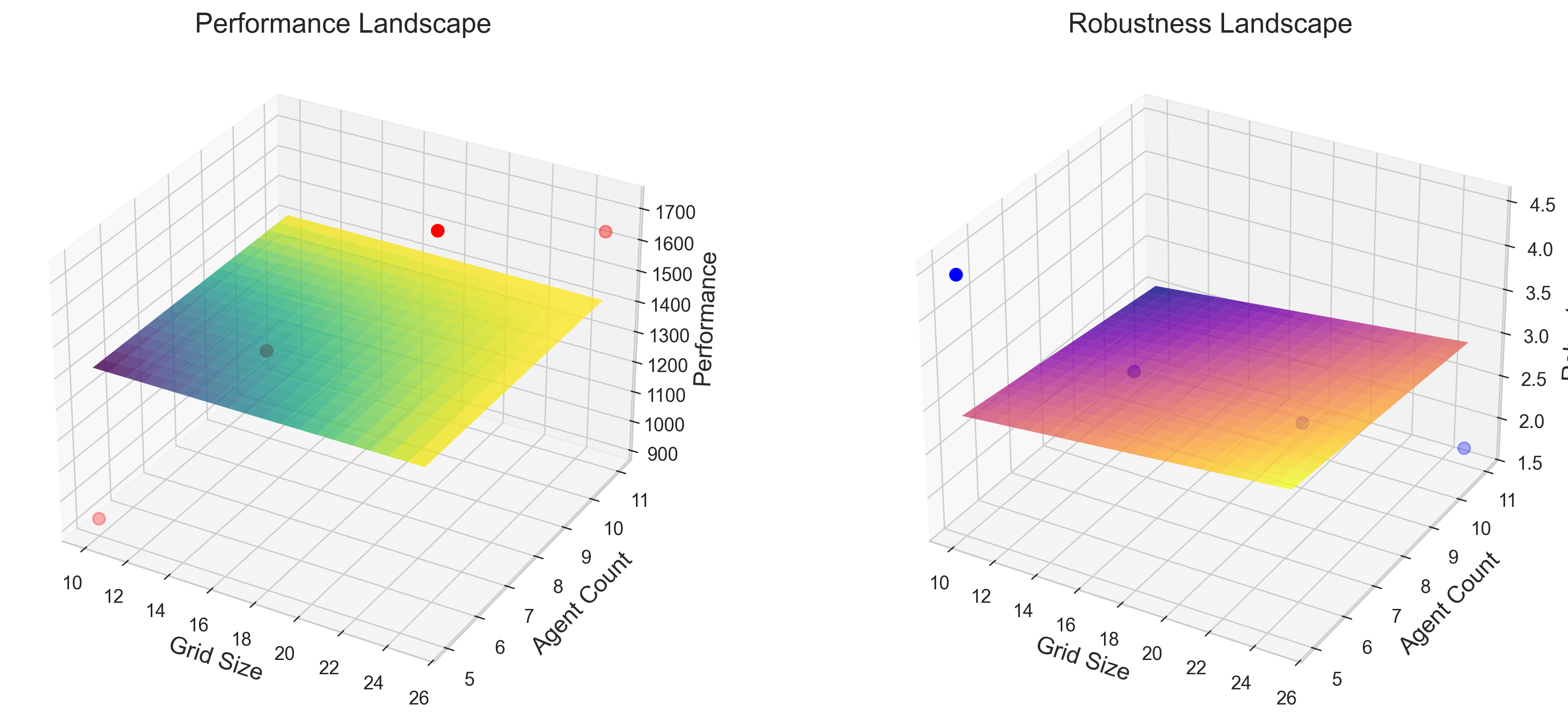

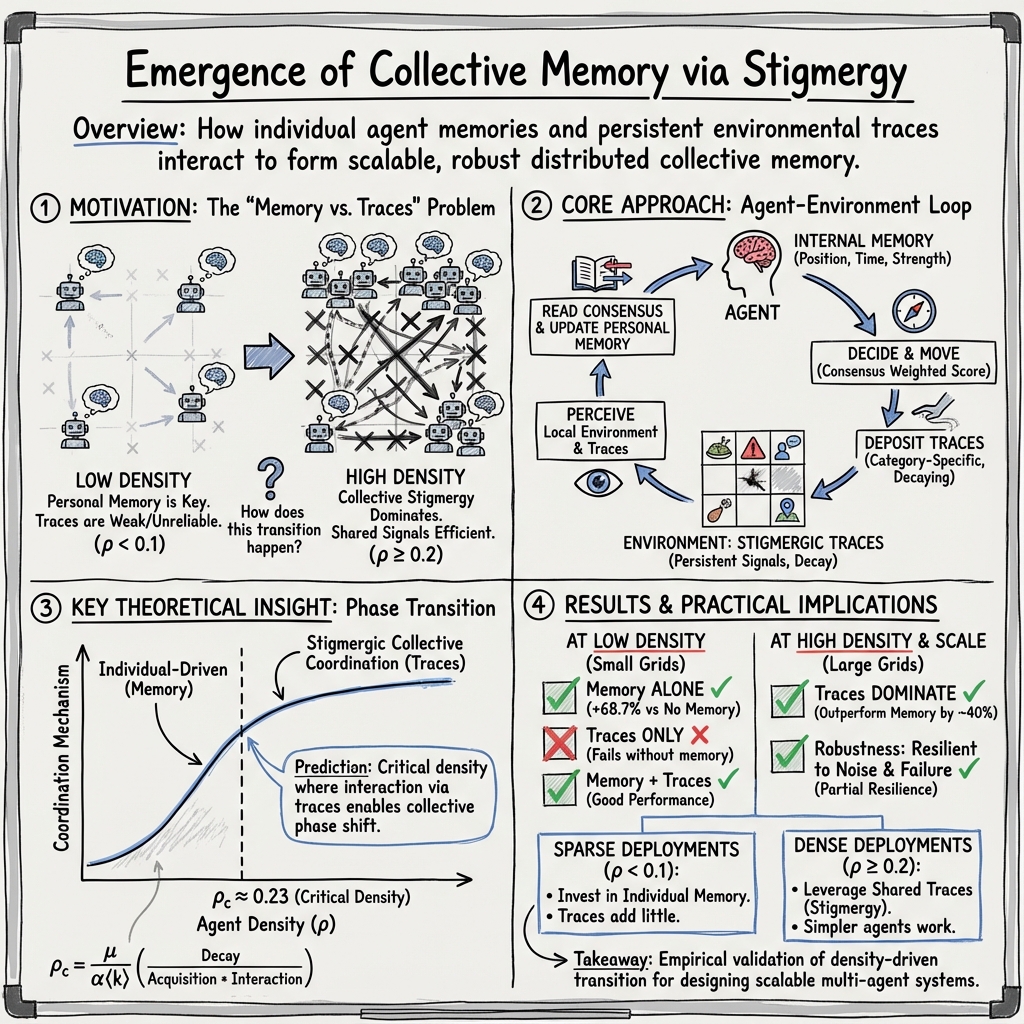

Abstract: We demonstrate how collective memory emerges in decentralized multi-agent systems through the interplay between individual agent memory and environmental trace communication. Our agents maintain internal memory states while depositing persistent environmental traces, creating a spatially distributed collective memory without centralized control. Comprehensive validation across five environmental conditions (20×20 to 50×50 grids, 5–20 agents, 50 runs per configuration) reveals a critical asymmetry: individual memory alone provides 68.7% performance improvement over no-memory baselines (1563.87 vs 927.23, p < 0.001), while environmental traces without memory fail completely. This demonstrates that memory functions independently but traces require cognitive infrastructure for interpretation. Systematic density-sweep experiments (ρ ∈ [0.049, 0.300], up to 625 agents) validate our theoretical phase transition prediction. On realistic large grids (30×30, 50×50), stigmergic coordination dominates above ρ ≈ 0.20, with traces outperforming memory by 36–41% on composite metrics despite lower food efficiency. The experimental crossover confirms the predicted critical density ρ_c = 0.230 within 13% error.

Explain it Like I'm 14

What is this paper about?

This paper studies how a group of simple robots or software agents can “share a memory” without talking directly to each other. Each agent remembers things on its own and also leaves behind marks in the environment (like breadcrumbs). Together, these two ideas can create a kind of group memory that helps the whole team coordinate and do better at tasks like finding food and avoiding danger.

What questions did the researchers ask?

They focused on three easy-to-understand questions:

- Does having your own memory help an agent work better, even if it can’t read environmental marks?

- Can environmental marks (like trails) work on their own, without agents having personal memory?

- Is there a “tipping point” in how crowded the world is where trails suddenly become very effective and even better than personal memory?

How did they study it?

Imagine a video game map made of squares (a grid). Agents move around on this map. There’s food to collect, hazards to avoid, and other agents to bump into.

What each agent can do:

- Personal memory: Each agent keeps a small notebook in its head with four types of notes:

- Food: where food was found.

- Danger: where hazards are.

- Social: where it met others.

- Exploration: where it has searched.

- These notes fade over time, like ink slowly disappearing. Danger fades slowest (it’s important), social fades fastest (situations change), and food is in-between.

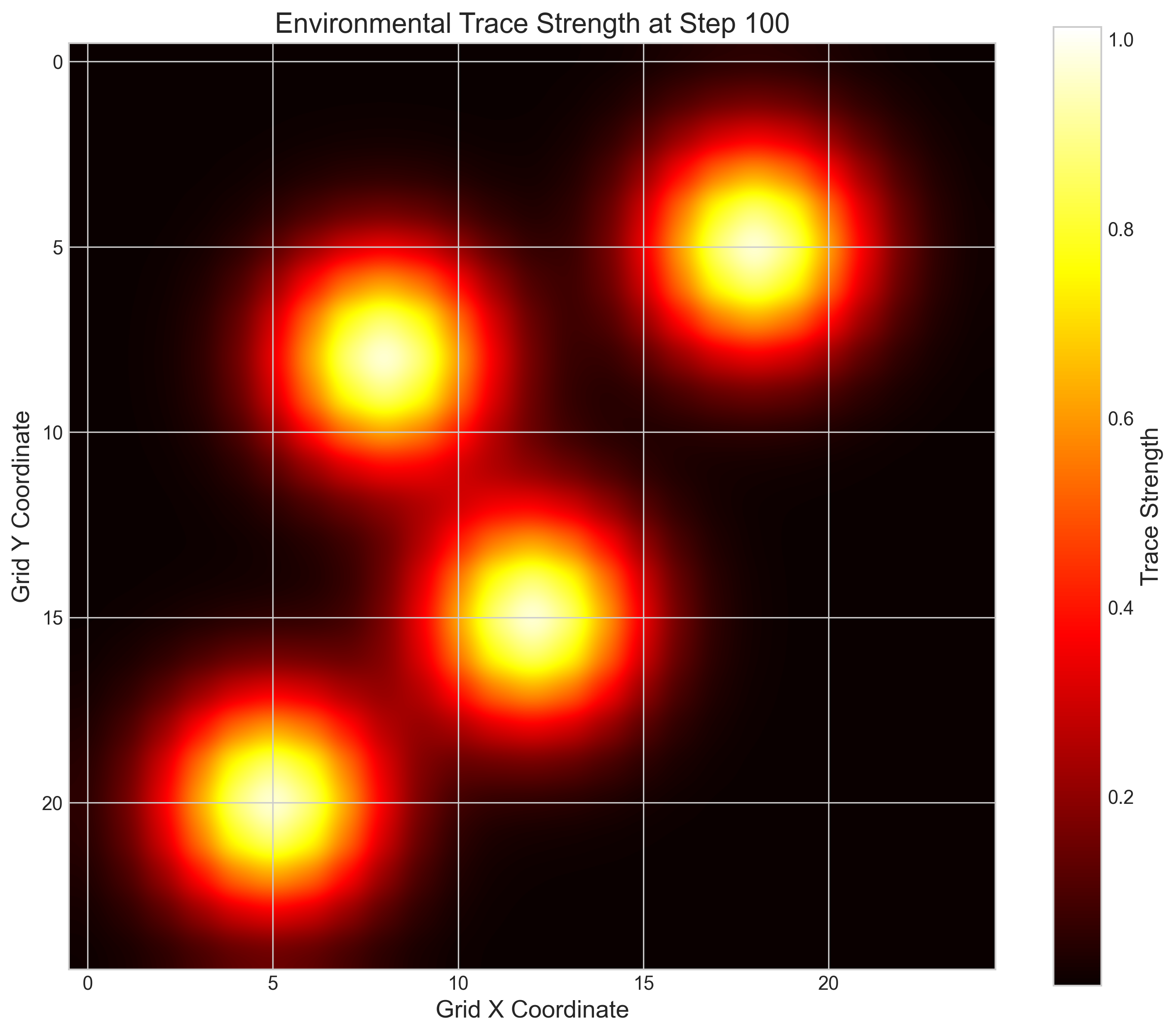

- Environmental traces: Agents can drop marks on the map, like sticky notes or breadcrumbs, also in those four categories. These marks also fade. If several agents leave similar marks in the same place, those marks count more—like multiple “votes” for that location.

- Decision making: At each step, an agent scores nearby squares using a simple recipe:

- How useful is this for my current task (foraging or exploring)?

- What does my memory say?

- Are friends nearby (social pull)?

- Is there danger?

- Plus a little randomness so they don’t all do the exact same thing.

- The agent moves to the square with the best score. Agents also have energy: moving and leaving marks cost energy; eating food restores it.
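The fading notebook and the scoring recipe above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the retention factors, the weights (`w_mem`, `w_social`, `w_danger`), the noise size, and all function names are assumptions chosen for readability. Only the ordering of the fade rates (danger slowest, social fastest) and the 0.2 pruning threshold come from the text.

```python
import random
from dataclasses import dataclass

# Hypothetical per-step retention factors. Only the ordering follows the text
# (danger fades slowest, social fastest); the exploration value is a guess.
RETENTION = {"danger": 0.99, "food": 0.95, "exploration": 0.93, "social": 0.85}


@dataclass
class Note:
    category: str    # "food", "danger", "social", or "exploration"
    cell: tuple      # (x, y) grid coordinate
    strength: float  # fades toward 0 over time


def decay(notes, threshold=0.2):
    """Fade every note by one step and drop notes below the pruning threshold."""
    for note in notes:
        note.strength *= RETENTION[note.category]
    return [note for note in notes if note.strength >= threshold]


def score_cell(cell, notes, task_value, agents_nearby, rng,
               w_mem=1.0, w_social=0.5, w_danger=2.0, noise=0.1):
    """Task value + remembered food + social pull - remembered danger + noise."""
    food = sum(n.strength for n in notes
               if n.cell == cell and n.category == "food")
    danger = sum(n.strength for n in notes
                 if n.cell == cell and n.category == "danger")
    return (task_value + w_mem * food + w_social * agents_nearby
            - w_danger * danger + rng.uniform(-noise, noise))


def choose_move(candidates, notes, task_values, agents_nearby, rng):
    """Return the candidate cell with the highest score."""
    return max(
        candidates,
        key=lambda c: score_cell(c, notes, task_values.get(c, 0.0),
                                 agents_nearby.get(c, 0), rng),
    )


if __name__ == "__main__":
    rng = random.Random(0)
    notes = [Note("food", (1, 0), 1.0), Note("danger", (0, 1), 1.0)]
    print(choose_move([(1, 0), (0, 1), (1, 1)], notes, {}, {}, rng))
```

With these illustrative weights, a remembered food cell outscores an unknown cell, and a remembered hazard is penalized twice as hard as food is rewarded. The energy bookkeeping described above is left out to keep the sketch short.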

What experiments they ran:

- They tried different setups:

- Full system (memory + traces),

- Memory only,

- Traces only,

- Limited memory (tiny notebook),

- No memory at all,

- Random movement (wander without thinking).

- They tested on different map sizes with different numbers of agents.

- They measured things like food collected, how much of the map got explored, and how well agents coordinated.

- They also tested “stress” situations: removing some agents mid-game, corrupting half the environmental marks, and moving food around.

What did they find, and why does it matter?

1) Memory vs. traces: they’re not equal

- Personal memory alone helps a lot. Agents with memory but no traces did about 69% better than agents with no memory.

- Traces alone did not help (they performed about the same as having no memory). In fact, random walkers did better than “traces-only” agents. Why? Without memory to judge which marks are old or misleading, agents can follow bad hints and get stuck making poor choices.

- Takeaway: Memory works on its own. Traces are helpful only if agents have enough “brains” (memory) to interpret them.
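The "about 69%" figure follows directly from the two scores quoted in the abstract (1563.87 for memory-only agents, 927.23 for the no-memory baseline). The helper name below is just for illustration.

```python
def relative_improvement(score, baseline):
    """Fractional gain of `score` over `baseline`."""
    return (score - baseline) / baseline


memory_only = 1563.87  # memory-only score quoted in the abstract
no_memory = 927.23     # no-memory baseline quoted in the abstract

gain = relative_improvement(memory_only, no_memory)
print(f"{gain:.1%}")  # prints 68.7%
```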

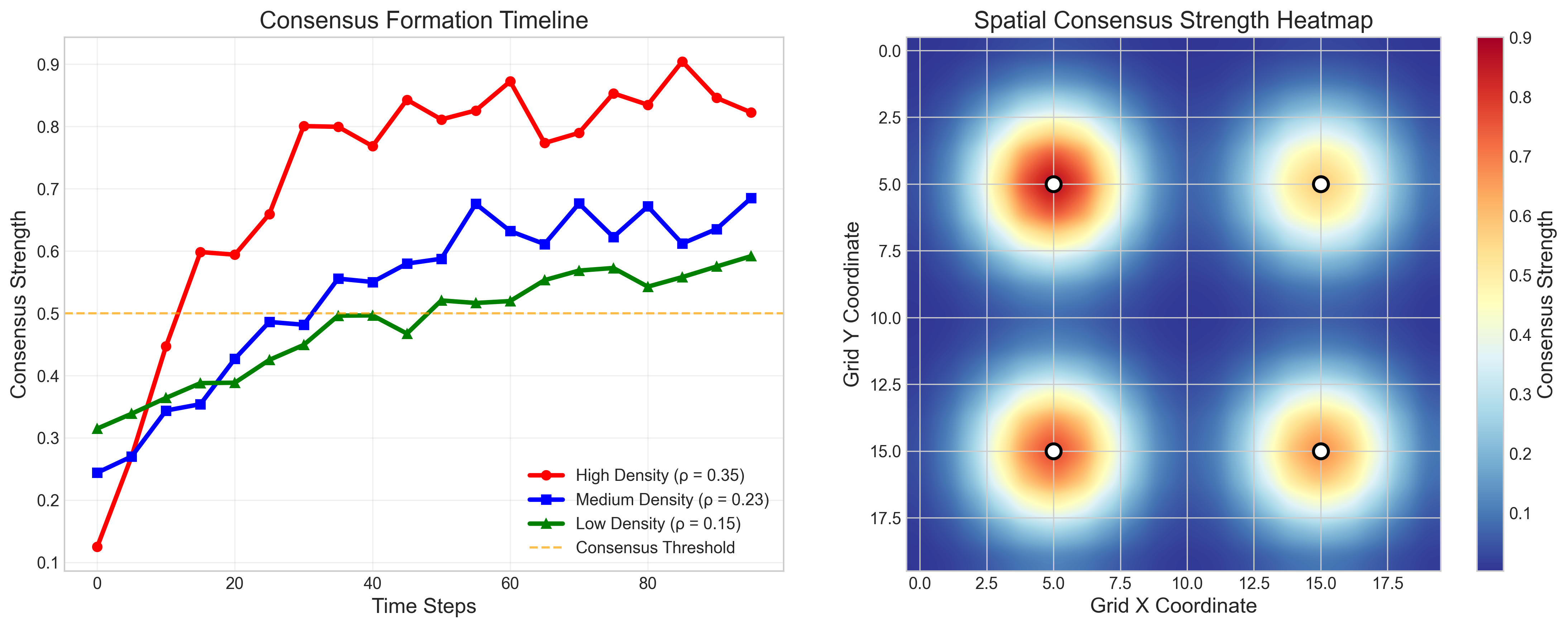

2) A density “tipping point”

- Density is the number of agents per grid square, a measure of how crowded the map is.

- The team predicted a critical density (around 0.23 agents per cell) where environmental traces suddenly become powerful.

- Experiments confirmed this idea:

- At low density (spread out), personal memory dominates: it’s better to rely on your own notes because traces are too thin and unreliable.

- At high density (crowded), traces become very strong: overlapping marks make clear, fresh paths, and following them beats personal memory on overall team performance by about 36–41% on larger maps.

- This shift is like a phase change (think water freezing): below the threshold, group coordination is weak; above it, the group “locks in” and strongly coordinates through traces.
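As a rough sketch, density and the regime it implies can be computed as follows. The function names are hypothetical, and the hard cutoff is a simplification: the text reports a predicted threshold of about 0.23 and an observed crossover near 0.20, so values close to the threshold should be treated as uncertain.

```python
RHO_C = 0.23  # predicted critical density quoted in the text (agents per cell)


def agent_density(n_agents, width, height):
    """Agents per grid cell on a width x height map."""
    if width <= 0 or height <= 0:
        raise ValueError("grid dimensions must be positive")
    return n_agents / (width * height)


def dominant_strategy(rho, rho_c=RHO_C):
    """Return which mechanism is expected to lead at density `rho`."""
    return "traces" if rho >= rho_c else "memory"


if __name__ == "__main__":
    # Illustrative configurations, not necessarily ones run in the paper.
    for n, w, h in [(20, 20, 20), (625, 50, 50)]:
        rho = agent_density(n, w, h)
        print(n, f"{w}x{h}", round(rho, 3), dominant_strategy(rho))
    print(round(1 / RHO_C, 2), "cells per agent at the threshold")
```

At the threshold there is roughly one agent for every 4.35 cells, since 1 / 0.23 ≈ 4.35.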

3) Robustness and scaling

- If some agents disappear (16.7% were removed in the test), performance drops by about 21%, so having enough agents matters.

- If half the traces are corrupted (noisy or wrong), personal memory helps prevent collapse (only about a 14% drop).

- With changing environments (food moved), agents adapted reasonably well.

- A small memory (around 10 entries) was almost as good as a bigger one in small maps, which is good news for tiny robots with limited storage.

What does this mean for the real world?

- For small or sparse teams (few agents spread out), give each agent better memory and decision-making. Don’t rely on environmental marks alone.

- For large, dense teams (many agents in the same area), simple agents that read and leave traces can outperform more memory-heavy ones. This could be useful in warehouses, construction, or rescue operations where many robots work closely together.

- There’s now a clear, data-backed rule of thumb: below roughly one agent per 4–5 cells, invest in memory; above that, stigmergy (leaving and following traces) can take the lead.

Key takeaways

- Personal memory is a stand-alone win; traces are not.

- Traces need “brains” to interpret them unless the team is dense enough.

- There’s a tipping point in density where trace-based coordination becomes superior.

- Small memory can be enough in small worlds; in big worlds, you may need more.

- Design your multi-agent system based on how crowded it will be:

- Sparse: memory-centric agents.

- Dense: trace-centric agents.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of concrete gaps and unresolved questions that future research could address.

- Absence of continuous-space validation: all experiments use discrete grids with 8-connected neighborhoods; validate in continuous 2D/3D motion (e.g., MASON, Gazebo, Webots) and with physical robots to test generalizability.

- Non-diffusive trace assumption (D → 0): implement and benchmark reaction–diffusion traces (vary diffusion and decay rates) to assess whether diffusion changes coordination regimes and the critical density.

- Parameter-to-theory mapping: empirically estimate μ, α, and ⟨k⟩ from simulation logs (trace decay, acquisition rates, interaction degree) to predict ρ_c quantitatively per configuration and assess prediction error via confidence intervals.

- Finite-size scaling and criticality analysis: use finite-size scaling and data collapse across grid sizes to estimate ρ_c more rigorously and characterize order parameter fluctuations near the transition.

- Sensitivity to performance weights: the composite score uses fixed weights (w_e=1, w_f=15, w_c=5); perform weight sweeps or multi-objective analyses to test whether “trace dominance” persists under alternative task priorities.

- Task generalization: evaluate on non-foraging tasks (surveillance, construction, rescue, patrolling) to test whether the memory–trace asymmetry and phase transition hold with different objective structures.

- Resource patchiness: current food placement is uniform; introduce clustered, heterogeneous resource distributions and quantify shifts in ρ_c and architecture superiority.

- Heterogeneity effects: agent parameters (e.g., β_{a,c}, exploration tendencies) are sampled but not analyzed; systematically vary heterogeneity distributions and measure their impact on coordination, robustness, and ρ_c.

- Perception radius and interaction topology: the effective ⟨k⟩ depends on local perception (not fully specified); sweep perception radii and neighbor definitions (4-, 8-connected, continuous) to test effects on collective memory and critical density.

- Trace consensus function form: consensus amplification is capped at 2.0 with α=0.3; evaluate alternative aggregation functions (e.g., nonlinear saturating, Bayesian fusion) and caps to see how consensus dynamics affect performance.

- “Traces-only” failure analysis: design and test agents that interpret traces without internal episodic memory (e.g., learned reactive policies, state machines, RL) to determine whether failure is architectural rather than fundamental.

- Category-specific decay and thresholds: decay rates (δ_c), pruning threshold (s_thresh=0.2), and capacity (50) are heuristic; conduct parameter sweeps and optimization (e.g., Bayesian optimization) to identify robust settings and quantify sensitivity.

- Energy gating policies: trace deposition depends on ad hoc energy thresholds (food >50, danger <20); analyze alternative gating/logistic policies and cost-benefit of E_trace across densities and tasks.

- Memory capacity paradox: Limited Memory (10 entries) slightly outperforms Full Memory; run controlled capacity sweeps across larger grids and timescales to test for interference, saturation, and coverage requirements.

- Coordination order parameter threshold: consensus threshold 1.2 is not justified; evaluate threshold sensitivity and alternative coordination metrics (e.g., mutual information between agents’ moves) for robust phase characterization.

- Information-theoretic measures: Markov-chain flow and entropy H(t) are defined but not reported; compute these metrics empirically and correlate with performance and phase-transition indicators.

- Robustness curves: only single-point tests (16.7% agent removal, 50% trace corruption) are reported; produce full resilience curves across failure/corruption rates and patterns (random vs clustered removal, targeted vs uniform corruption).

- Hybrid communication architectures: investigate adding limited direct communication (local broadcast, low-bandwidth messaging) alongside traces to test shifts in ρ_c and performance trade-offs.

- High-density upper bounds: explore ρ > 0.30 on grids >50×50 to determine saturation effects, possible reemergence of memory advantages, and practical scalability limits (HPC profiling).

- Computational profiling: quantify runtime and memory complexity empirically for diffusive and non-diffusive traces, large grids, and agent counts; identify bottlenecks and optimization strategies.

- Food efficiency gap at high density: traces dominate composite performance but underperform on food efficiency; analyze causal mechanisms (e.g., over-exploration) and test targeted policy adjustments to recover food efficiency.

- Social trace rules: social traces require ≥2 agents within radius 2; vary deposition rules and radii to evaluate how social density thresholds affect consensus formation and coordination quality.

- Danger penalty and misclassification: danger penalties D_a and false positives/negatives are not characterized; measure the impact of danger-trace accuracy on exploration and throughput.

- Memory category ablations: directly ablate memory categories (food/danger/social/exploration) to quantify their individual contributions and interactions to collective performance and ρ_c.

- Statistical rigor: many comparisons rely on Welch’s t-tests without multiple-comparison correction or distribution checks; include effect sizes, nonparametric tests, bootstrapping, and power analyses to strengthen inference.
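The weight-sensitivity item above could be approached with a sweep like the following sketch. It assumes the composite score is a plain weighted sum and that w_e, w_f, and w_c weight exploration, food, and coordination respectively; neither assumption is confirmed by the text. The metric values are invented placeholders, not results from the paper.

```python
# Weights quoted in the text; the mapping to metric names is an assumption.
WEIGHTS = {"exploration": 1.0, "food": 15.0, "coordination": 5.0}


def composite(metrics, weights=WEIGHTS):
    """Weighted sum of per-architecture metrics (assumed linear form)."""
    return sum(weights[name] * metrics[name] for name in weights)


def winner(results, weights):
    """Name of the architecture with the highest composite score."""
    return max(results, key=lambda name: composite(results[name], weights))


def sweep_food_weight(results, food_weights):
    """Map each candidate food weight to the winning architecture."""
    return {w_f: winner(results, dict(WEIGHTS, food=w_f))
            for w_f in food_weights}


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    results = {
        "memory": {"exploration": 40, "food": 10, "coordination": 5},
        "traces": {"exploration": 60, "food": 6, "coordination": 20},
    }
    print(sweep_food_weight(results, [5, 15, 30]))
```

With these placeholder inputs the winner flips from traces to memory once the food weight is large enough, which is the kind of sensitivity the sweep is meant to expose.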

Glossary

- Agent-based modeling: A simulation approach where individual agents with defined behaviors interact within an environment to produce emergent system dynamics. "The implementation uses the Mesa agent-based modeling framework \cite{masad2015mesa}."

- Amplification factor: A parameter that increases the influence of repeated or corroborated signals in consensus computations. "where is the count of distinct trace-leaving agents, an amplification factor, and the average trace strength."

- Consensus strength: A quantitative measure of the combined agreement from multiple agents' traces at a location. "At location , collective consensus strength for trace type is computed as"

- Critical agent density: The threshold number of agents per area above which collective coordination emerges robustly. "There exists a critical agent density ρ_c"

- Density-sweep experiments: Experimental protocol that systematically varies agent density to study its effect on system behavior. "Systematic density-sweep experiments (ρ ∈ [0.049, 0.300], up to 625 agents) validate our theoretical phase transition prediction."

- Entropy: A measure of the diversity or uncertainty of information distribution across agents. "The diversity of information within the system is quantified by the time-dependent entropy"

- Linear stability analysis: A mathematical technique that assesses whether small perturbations around an equilibrium grow or decay over time. "A derivation of ρ_c using a linear stability analysis of the mean-field model is provided in Appendix~\ref{sec:rho_c_derivation}."

- Markov chain: A stochastic model describing transitions between states with probabilities dependent only on the current state. "Information propagation across agents is represented as a Markov chain with transition probabilities encoding information flow:"

- Mean-field approximation: An analytical method that replaces complex interactions with an average effect to simplify system dynamics. "Employing a mean-field approximation, the system's collective memory density follows the differential equation"

- Mean-field theory: The broader theoretical framework using mean-field assumptions to derive macroscopic behavior from microscopic interactions. "This validation demonstrates that mean-field theory, despite its simplifying assumptions (well-mixed agents, single-timescale dynamics), captures the fundamental scaling behavior of decentralized coordination systems."

- Order parameter: A scalar quantity that characterizes the degree of coordinated behavior in the system. "Theoretical phase transition prediction showing order parameter (normalized coordination events: fraction of movements guided by consensus above threshold 1.2) evolution across density range."

- Phase transition: A qualitative change in system behavior occurring at a critical parameter value, such as density. "From this, a phase transition emerges \cite{onuki2002phase}:"

- Reaction-diffusion process: A partial differential equation model combining local reactions (deposition/decay) with spatial diffusion. "Environmental traces evolve under a reaction-diffusion process capturing diffusion, decay, and local deposition by agents, expressed as"

- Steady-state distribution: A stationary probability distribution that remains unchanged under the dynamics of the system. "The steady-state distribution satisfies"

- Stigmergic communication: Indirect communication whereby agents leave persistent environmental signals that influence others. "combining biologically inspired stigmergic communication with multi-categorical memory organization"

- Stigmergic coordination: Collective coordination achieved primarily through interpreting and following environmental traces. "stigmergic coordination dominates above ρ ≈ 0.20"

- Welch's t-test: A statistical test for comparing means of two groups with unequal variances. "Statistical significance tested using Welch's t-test (two-tailed)."
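For readers unfamiliar with Welch's t-test, the statistic and its Welch-Satterthwaite degrees of freedom can be computed with the standard library alone. This is a textbook sketch with made-up sample values, not the paper's analysis code. A p-value additionally needs the t-distribution CDF, which SciPy provides through `scipy.stats.ttest_ind(a, b, equal_var=False)`.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    na, nb = len(a), len(b)
    # Squared standard errors of each sample mean (sample variance / n).
    se2_a = variance(a) / na
    se2_b = variance(b) / nb
    t = (mean(a) - mean(b)) / sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (na - 1) + se2_b ** 2 / (nb - 1))
    return t, df


if __name__ == "__main__":
    # Toy samples for illustration only.
    t, df = welch_t([1, 2, 3, 4, 5], [2, 4, 6, 8, 10])
    print(round(t, 3), round(df, 3))
```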

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now, leveraging the paper’s findings that: (1) individual memory is essential at low densities, (2) stigmergic (trace-based) coordination dominates at high densities (critical density around ρ≈0.23), and (3) small memory footprints can suffice in small environments.

- Dense warehouse and fulfillment fleets (Sector: robotics, logistics, software)

- What: Use virtual “digital pheromones” (shared spatial trace layers in a warehouse digital twin) to guide AMRs/AGVs in high-density zones; switch to memory-centric navigation during low-density shifts.

- Tools/Products/Workflows:

- “Density-aware coordination module” that computes ρ from live fleet/area stats and toggles between memory- and trace-centric policies.

- ROS2 plugin implementing trace consensus weighting and category-specific decay tuned by task (e.g., danger vs. congestion traces).

- Integration with WMS and fleet managers to project or display high-consensus paths (LED floor projectors or AR overlays).

- Assumptions/Dependencies: Reliable localization and shared occupancy maps; permissible to place or render spatial markers; consistent agent footprint definition to map “cells” (e.g., grid derived from sensor range/aisle nodes).

- Hospital logistics and facility services (Sector: healthcare, robotics)

- What: Hospital AGVs use minimal onboard memory (10–50 entries per agent) for reliable coordination at night (low density) and exploit trace layers (e.g., temporary e-paper tags, electronic signage, or digital maps) during peak hours (higher density).

- Tools/Products/Workflows:

- “Shift-aware policy scheduler” using staffing and fleet data to select memory vs. trace modes.

- EHR/Asset system integration: automated creation of “supply delivery” and “hazard” traces in the hospital digital twin.

- Assumptions/Dependencies: Infection control constraints (no permanent floor markings), compliance with facility IT/security policies, accurate mapping between physical zones and digital traces.

- Multi-robot cleaning and inspection (Sector: facilities management, energy)

- What: Swarms of cleaning robots or inspection drones coordinate via exploration and danger traces in high-traffic areas; rely on small memory modules in sparse zones to avoid stale cues.

- Tools/Products/Workflows:

- Firmware update adding trace categories (exploration, hazard) and per-category decay.

- Simulation-based parameter tuning using the provided Mesa model and decay presets.

- Assumptions/Dependencies: Stable indoor localization; permitted digital or temporary markers; predictable environment topology.

- Disaster response reconnaissance (Sector: public safety, defense, NGOs)

- What: In sparse deployments (ρ<0.1), emphasize agent memory; responders and robots drop temporary beacons (BLE, UWB, QR tags, AR markers) for hazards and cleared zones; “trace” signals are treated as augmentations to individually learned maps.

- Tools/Products/Workflows:

- “TraceMap” app for shared hazard/exploration layers accessible to responders and robots.

- Mesh-networked beacons with short TTL for fast-decaying danger/exploration traces.

- Assumptions/Dependencies: Unreliable comms and dynamic hazards; battery constraints; intermittent GPS; need for rapid memory pruning/decay tuning to avoid stale data.

- Microservice and edge-computing coordination (Sector: software, cloud)

- What: In dense service meshes, use stigmergic caches/registries (ephemeral “traces” like hot-key hotspots, error hotspots) to guide request routing; in sparse or bursty workloads, rely more on per-service memory (local learned priors).

- Tools/Products/Workflows:

- Sidecar that writes/reads category-tagged traces to a shared KV-store with decay/TTL and consensus weighting.

- Auto-tuning of “ρ” proxy via service instance count per region/segment to choose between memory- vs trace-centric routing.

- Assumptions/Dependencies: Low-latency shared store; robust TTL/decay controls; observability to estimate density and consensus.

- Education and research testbeds (Sector: academia, education)

- What: Use the open-source Mesa implementation to teach density-aware coordination and validate phase transitions; extend to continuous-space simulators for course projects.

- Tools/Products/Workflows:

- Classroom labs: replicate the paper’s density-sweep experiments and memory/traces ablations.

- Benchmark suites for multi-agent coordination with configurable decay rates, categories, and metrics (coordination, exploration, food/goal efficiency).

- Assumptions/Dependencies: Access to the GitHub repository; basic Python/Mesa setup; consistent evaluation metrics.
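Several of the workflows above rely on a shared store of category-tagged traces with decay and consensus weighting. The sketch below is an in-memory stand-in for such a store. The class name, method names, and half-life decay are hypothetical, and the consensus formula (average live strength times an amplification of 1 + α(n − 1), capped) is an assumed form. The text only states that consensus depends on the count of distinct agents, an amplification factor α = 0.3, and the average trace strength, with amplification capped at 2.0.

```python
from collections import defaultdict


class TraceStore:
    """In-memory trace registry with per-category half-life decay."""

    def __init__(self, half_life, alpha=0.3, cap=2.0):
        self.half_life = half_life  # category -> half-life in seconds
        self.alpha = alpha          # amplification factor (value from the text)
        self.cap = cap              # maximum amplification (value from the text)
        self._traces = defaultdict(dict)  # (key, category) -> {agent: (s, t)}

    def deposit(self, key, category, agent_id, strength, now):
        """Record or refresh one agent's trace at `key`."""
        self._traces[(key, category)][agent_id] = (strength, now)

    def consensus(self, key, category, now, floor=0.01):
        """Decayed average strength, amplified by the number of live agents."""
        entries = self._traces.get((key, category), {})
        half_life = self.half_life[category]
        live = []
        for strength, deposited_at in entries.values():
            current = strength * 0.5 ** ((now - deposited_at) / half_life)
            if current >= floor:  # traces below the floor count as expired
                live.append(current)
        if not live:
            return 0.0
        amplification = min(1.0 + self.alpha * (len(live) - 1), self.cap)
        return amplification * sum(live) / len(live)


if __name__ == "__main__":
    store = TraceStore({"hazard": 10.0})
    store.deposit("aisle-7", "hazard", "robot-a", 1.0, now=0.0)
    store.deposit("aisle-7", "hazard", "robot-b", 1.0, now=0.0)
    print(store.consensus("aisle-7", "hazard", now=0.0))
    print(store.consensus("aisle-7", "hazard", now=10.0))
```

Keeping one entry per agent means repeated deposits by the same agent refresh its trace rather than inflating the agent count, which is one way to keep "votes" tied to distinct agents.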

Long-Term Applications

These use cases require further research, scaling, hardware development, or standardization. They build on the paper’s innovations (multi-category traces, consensus weighting, density-driven phase transition).

- Programmable traceable environments (Sector: smart buildings, retail, logistics)

- What: Floors/walls/shelves with embedded e-ink or RFID/LED arrays that store and display dynamic traces (paths, hazards, social hotspots) to coordinate dense robot fleets and humans.

- Tools/Products/Workflows:

- “Programmable surface” hardware with APIs for category-specific traces and consensus amplification.

- Building-scale orchestration that monitors density and adaptively renders cues.

- Assumptions/Dependencies: Significant capex and retrofitting; robust APIs/safety standards; maintenance of physical trace substrates.

- Adaptive controllers with automatic phase-transition switching (Sector: robotics, autonomy software)

- What: Closed-loop controllers that estimate real-time ρ and automatically switch or blend memory-/trace-centric behaviors, including continuous-space implementations with reaction-diffusion analogs.

- Tools/Products/Workflows:

- “ρ-aware orchestrator” integrated with ROS2/ignition-gazebo, using online density estimation, energy state, and task weights to adjust decay and consensus parameters.

- Assumptions/Dependencies: Reliable density estimation in continuous spaces; validation on larger deployments (ρ>0.30) and varied task weightings.

- Large-scale outdoor swarms (agriculture, construction, mining) (Sector: agriculture, construction, energy)

- What: Hundreds to thousands of drones/UGVs coordinate over large fields/sites using high-density stigmergy encoded in shared digital twins (hazard, yield, compaction, exploration traces) and autonomous decay/pruning policies.

- Tools/Products/Workflows:

- Integration with BIM/digital twin platforms and geofenced trace layers; satellite/RTK for localization.

- Multi-timescale trace channels (fast-decaying exploration; slow-decaying danger).

- Assumptions/Dependencies: High-precision localization; bandwidth for shared maps; robustness to patchy resources and dynamic obstacles (needs extended theory beyond mean-field).

- Standards and policy for environmental traces (Sector: policy, standards, public safety)

- What: Create standards for “digital trace” categories, decay semantics, consensus thresholds, and safety/privacy governance in public spaces where traces influence robot and human behavior.

- Tools/Products/Workflows:

- Standards bodies define APIs and data models for trace categories (e.g., hazard, congestion), TTL/decay, and auditability.

- Municipal policies for temporary markers (visual, RF) in shared spaces.

- Assumptions/Dependencies: Cross-vendor coordination; privacy and safety frameworks; public acceptance.

- Smart-city multi-agent orchestration (Sector: transportation, urban tech)

- What: City-scale IoT networks (cameras, curb sensors, delivery bots) deposit/consume shared traces (congestion, hazards, resource availability) for self-organizing curb usage, micromobility parking, and sidewalk robot routing at high densities.

- Tools/Products/Workflows:

- City digital twin with trace APIs; density maps trigger stigmergic guidance for fleets.

- Adaptive task weighting policies for mixed objectives (efficiency vs. safety vs. accessibility).

- Assumptions/Dependencies: Interoperability among city systems and private fleets; robust privacy guarantees.

- Human–AI team coordination and training (Sector: education, enterprise operations)

- What: Apply density-aware stigmergic principles to human teams with AR, where persistent AR markers serve as “traces” that guide teams in dense operations (e.g., events, factories) and personal checklists (memory) dominate in sparse teams.

- Tools/Products/Workflows:

- AR headsets that render consensus-weighted traces (task hotspots, hazards), decay automatically, and switch modes based on team density.

- Assumptions/Dependencies: Accurate indoor positioning for AR; user acceptance; human factors validation.

- Energy and infrastructure inspection at scale (Sector: energy, utilities)

- What: Inspection swarms for solar/wind/pipelines use trace layers in a digital twin to mark defects and exploration coverage; automatic density-aware switching supports seasonal workforce scaling.

- Tools/Products/Workflows:

- Anomaly categories as “danger” traces with slower decay; exploration traces with faster decay for coverage balancing.

- Assumptions/Dependencies: Reliable synchronization with the digital twin; standards for defect severity encoding; varying spatial densities across assets.

Notes on Feasibility and Key Dependencies

- Density thresholding: The critical density ρ_c≈0.23 is estimated in grid environments; mapping to real deployments requires defining “cells” (e.g., perception footprint, waypoint spacing) or using local neighbor-overlap probability as a proxy. Systems should estimate density online and switch strategies accordingly.

- Trace infrastructure: Physical traces (RFID, BLE/UWB beacons, visual markers) or digital traces (shared maps/registries) must support category tagging, decay/TTL, and consensus weighting; without this, benefits diminish.

- Memory constraints: In small/structured environments, as few as ~10 entries per agent can be near-optimal, enabling low-cost embedded hardware; larger, more complex spaces will need more memory or hierarchical memory.

- Robustness: The system tolerates moderate trace corruption (≈14% degradation at 50% corruption) and some agent loss (≈21% degradation at 16.7% removal); mission-critical deployments should include redundancy and validation heuristics to mitigate stale/misleading traces.

- Environment dynamics: Highly dynamic or patchy environments may shift the effective ρ_c and require adaptive decay rates and task-specific weight tuning; the mean-field theory may underpredict effects of clustering, necessitating spatially explicit modeling.
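One way to act on the density-thresholding note is to estimate density locally and switch modes with hysteresis, so that an agent near the threshold does not flip back and forth on every step. The sketch below is an assumption-laden illustration: the square perception window, the function and class names, and the 0.03 hysteresis band are all hypothetical. Only the ρ_c ≈ 0.23 value comes from the text.

```python
def local_density(me, others, radius=2):
    """Agents per cell in a square window around `me` (self included).

    Ignores map borders, where the real window would contain fewer cells.
    """
    x, y = me
    in_window = sum(1 for ox, oy in others
                    if max(abs(ox - x), abs(oy - y)) <= radius)
    cells = (2 * radius + 1) ** 2
    return (in_window + 1) / cells


class ModeSwitch:
    """Switch between memory- and trace-centric modes with a hysteresis band."""

    def __init__(self, rho_c=0.23, band=0.03, mode="memory"):
        self.rho_c = rho_c  # critical density from the text
        self.band = band    # hypothetical half-width of the dead zone
        self.mode = mode

    def update(self, rho):
        if self.mode == "memory" and rho > self.rho_c + self.band:
            self.mode = "traces"
        elif self.mode == "traces" and rho < self.rho_c - self.band:
            self.mode = "memory"
        return self.mode


if __name__ == "__main__":
    switch = ModeSwitch()
    for rho in (0.24, 0.30, 0.22, 0.15):
        print(rho, switch.update(rho))
```

In this run the mode stays on memory at 0.24, moves to traces at 0.30, holds traces at 0.22, and returns to memory at 0.15. A wider band trades responsiveness for stability.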
