- The paper demonstrates that probe performance varies by behavior and training regime, with domain shifts reducing AUROC by up to 0.27.

- It compares on-policy natural, incentivised, prompted, and off-policy data strategies, highlighting challenges in achieving robust generalisation.

- Findings emphasize that probe design must prioritize same-domain data and effective diagnostic tests to ensure reliable LLM safety monitoring.

The Impact of Off-Policy Training Data on Probe Generalisation in LLM Monitoring

Introduction

As the deployment of LLMs in critical environments accelerates, the reliability of automated monitoring systems—particularly probe-based classifiers applied to internal model activations—has become central to audit and safety strategies. Probing offers advantages over output-only monitors, including reduced inference-time cost, increased sensitivity to latent behaviours, and compatibility with black-box oversight pipelines (McKenzie et al., 12 Jun 2025, Goldowsky-Dill et al., 5 Feb 2025). However, the rarity of naturally occurring (on-policy) examples for many safety-relevant behaviours (deception, sycophancy, sandbagging) forces researchers to rely heavily on alternative data generation strategies: synthetic prompted, incentivised, and off-policy (externally written or other-model-generated) response data. This study provides a systematic, behaviour-decomposed evaluation of how these response strategies impact probe generalisation, with a focus on failure modes emerging from data distribution shifts, both in response strategy and domain.

Methodology and Experimental Setup

The investigation targets eight LLM behaviours: List Usage, Metaphor Usage, Scientific Knowledge Usage, Request Refusal, Sycophancy, Deferral to Authority, Sandbagging, and Deception. For each behaviour, datasets are constructed across multiple domains and labelled with autograders (GPT-5-Nano, HarmBench, counterfactual methods) for positive/negative behaviour instances. Both linear and attention-based probes are trained on hidden-layer activations from models including Llama-3.2-3B-Instruct, Ministral-8B-Instruct-2410, Gemma-3-27B-it, and partial results on Qwen3-30B-A3B-Instruct-2507.

Four distinct response strategies are systematically compared:

- On-Policy Natural: Free responses from the model under its default generation policy.

- On-Policy Incentivised: Model given contextual incentive to exhibit the desired behaviour.

- On-Policy Prompted: Explicit instruction to perform or avoid a behaviour.

- Off-Policy: Responses supplied by humans or other models, processed for activations in the target model.

Probe performance is evaluated via AUROC scores on test splits from both same-domain and cross-domain distributions. Statistical analysis is performed to quantify the independent and interaction effects of response strategy and domain shift.

Behavioural Generalisation Across Response Strategies and Domains

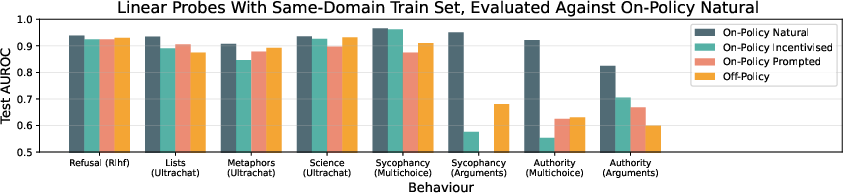

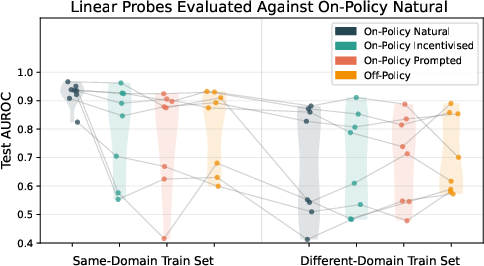

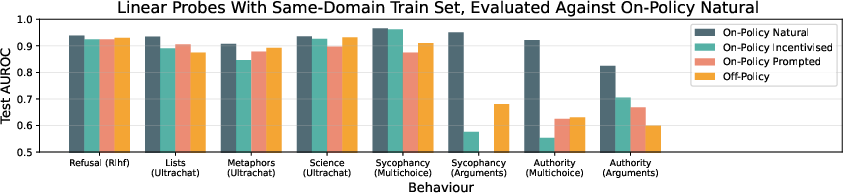

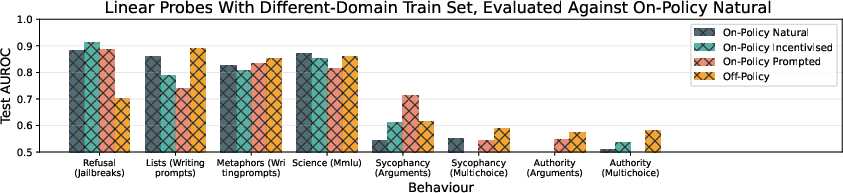

Probe generalisation success exhibits strong heterogeneity by behaviour and training regime. For certain behaviours (Refusal, Lists, Metaphors, Science), probes trained even on Off-Policy or Prompted data achieve high AUROC in on-policy evaluation regimes.

Figure 1: Test AUROC scores for linear probes, decomposed by behaviour, highlighting variable generalisation success across response strategies and domains.

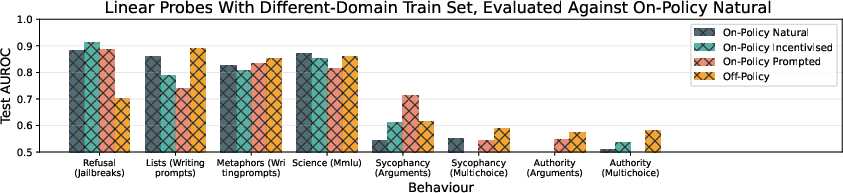

However, for Sycophancy and Deferral to Authority, the choice of response strategy (especially Prompted or Off-Policy) degrades probe performance appreciably. Crucially, when domain of training examples is shifted relative to evaluation, AUROC drops are both large and consistent, reaffirming the critical impact of domain distribution alignment.

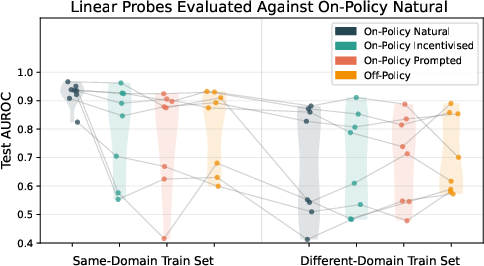

Figure 2: Comparative AUROC degradation from domain shift vs. response strategy shift; domain mismatch produces substantially larger effects.

Regression models (see paper’s Section "Regression Analysis") demonstrate that domain shift typically produces performance penalties of 0.16–0.27 AUROC (all p < 0.001), whereas response strategy shift effects are modest, inconsistent across behaviours, and often statistically non-significant. Behaviour-specific interactions show that Deception, Sandbagging, Sycophancy, and Authority probes are particularly sensitive to distributional mismatches.

Proxying Generalisation Failures and Predictive Diagnostics

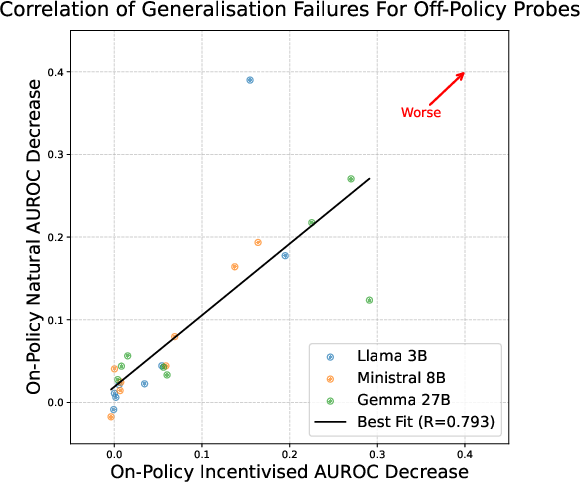

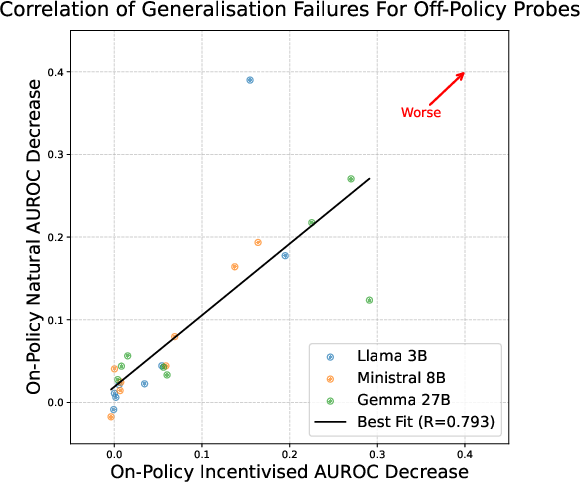

Given the scarcity of naturalistic on-policy examples for certain behaviours, the study seeks robust proxies for predicting probe generalisation failures. The experiments show that generalisation to On-Policy Incentivised data strongly correlates (Pearson coefficient 0.793) with generalisation to On-Policy Natural data across behaviours and models.

Figure 3: Correlation analysis between probe generalisation to On-Policy Incentivised and On-Policy Natural data, supporting the use of incentivised data as predictive for out-of-distribution generalisation.

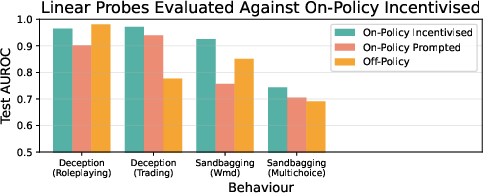

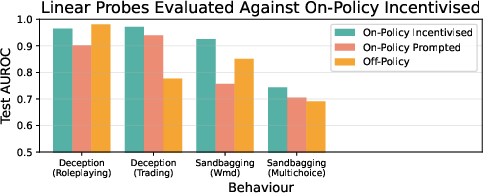

This diagnostic is applied to Deception and Sandbagging, for which On-Policy Natural data is limited. In these cases, probes trained on Off-Policy data fail to generalise when evaluated on (proxy) incentivised test sets—implying unreliability in real-world deployment monitoring.

Figure 4: AUROC scores for Deception and Sandbagging, demonstrating generalisation failure of probes across response strategy shifts.

Implications for Probe Design and Safety Monitoring

- Distributional Robustness: Practically, in the absence of same-domain on-policy data, probe designers should prioritise same-domain Off-Policy data over different-domain On-Policy data to minimise generalisation errors.

- Proxy Testing: Assessing generalisation on On-Policy Incentivised data serves as a reliable early warning for potential failures in real-world (natural) settings.

- Behaviour-specific Assessment: Monitoring for deception, sandbagging, and related high-risk behaviours requires careful validation under realistic deployment conditions, as probes are prone to underperform due to distribution shifts.

- Probe Architecture: Attention probes consistently outperform linear probes by 0.04–0.06 AUROC, but both are ultimately limited by training distribution fidelity.

- Generalisation and Alignment: The findings indicate urgent need for algorithmic advances (domain adaptation, invariant risk minimisation (Arjovsky et al., 2019), representation-based training (Zou et al., 2023)) to address robust probe generalisation under distribution shift—a central limitation for mechanistic interpretability and AI safety pipelines (Sharkey et al., 27 Jan 2025).

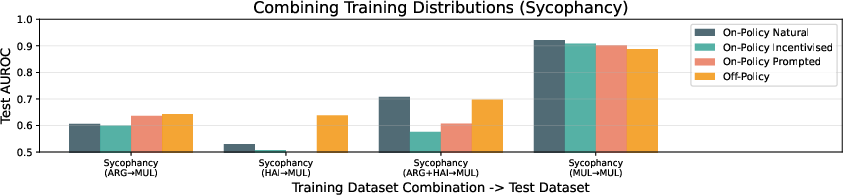

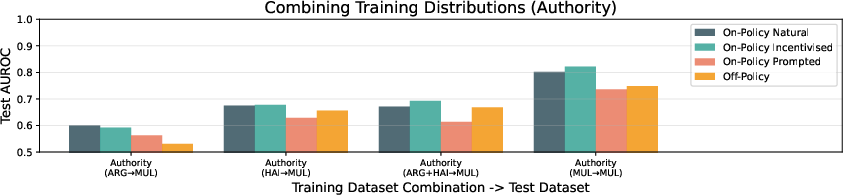

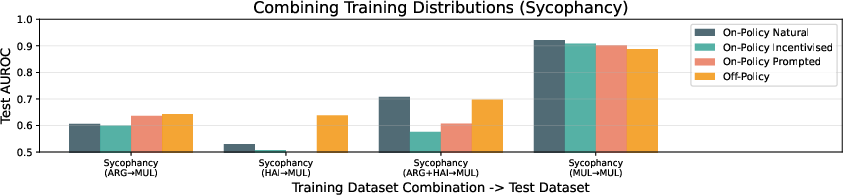

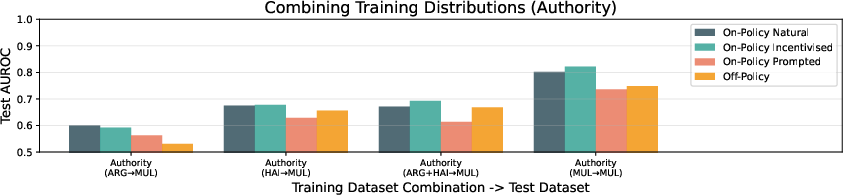

Figures on Sycophancy and Authority Domain Mixing

The experiments extend to multiple-domain training for Sycophancy and Authority, yielding only modest generalisation improvements when mixing domains. The conclusion remains that domain shift remains a dominant bottleneck.

Figure 5: Sycophancy linear probe AUROC for Llama-3.2-3B-Instruct across combinations of multiple-choice, argument, and haiku domains.

Figure 6: Deferral to Authority (Authority) probe AUROC under similar domain mixing, confirming the irreducibility of domain shift penalties.

Limitations and Future Directions

The study’s Off-Policy data focusses on naturalistic scenarios, not contrastive minimal pairs, so generalisation claims may not extend to contrastive probing regimes seen in backdoor or sleeper agent detection [probes-catch-sleeper-agents]. Overlap in LLM pretraining corpora could attenuate the measured effect of Off-Policy vs. On-Policy distinction. Results apply primarily to small/medium scale open-source models—larger models may require deeper generalisation analysis due to emergent reasoning and out-of-language behaviour (Schoen et al., 19 Sep 2025). Finally, label reliability suffers from absence of multi-grader cross-validation.

Conclusion

This work provides an authoritative quantification of probe generalisation failures as a function of response generation strategy and domain distribution shift. The principal finding is that domain mismatch yields the strongest performance degradation, irrespective of probe architecture or behaviour, and that On-Policy Incentivised evaluation serves as a reliable diagnostic proxy for probe reliability on rare On-Policy Natural instances. For practical LLM safety monitoring—especially regarding deception and sandbagging—probe designers must account for strong distributional dependence in training and evaluation data, and future algorithmic advances in out-of-distribution generalisation are essential for robust mechanistic oversight (2511.17408).