- The paper introduces a novel iterative reasoning mechanism that interleaves textual and latent reasoning to stabilize how latent representations evolve and to improve reasoning coherence.

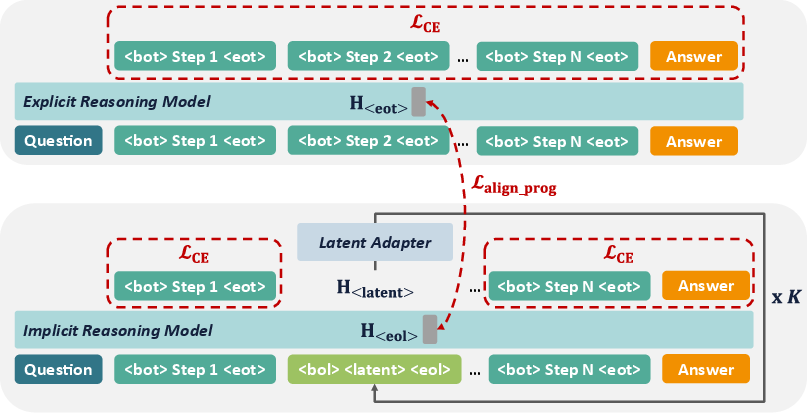

- The methodology maps latent tokens into the same embedding space as text tokens and uses a progressive alignment objective to keep them consistent with the textual steps, which stabilizes the iterative updates.

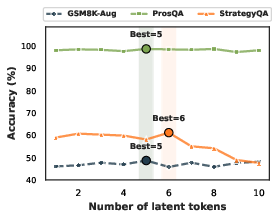

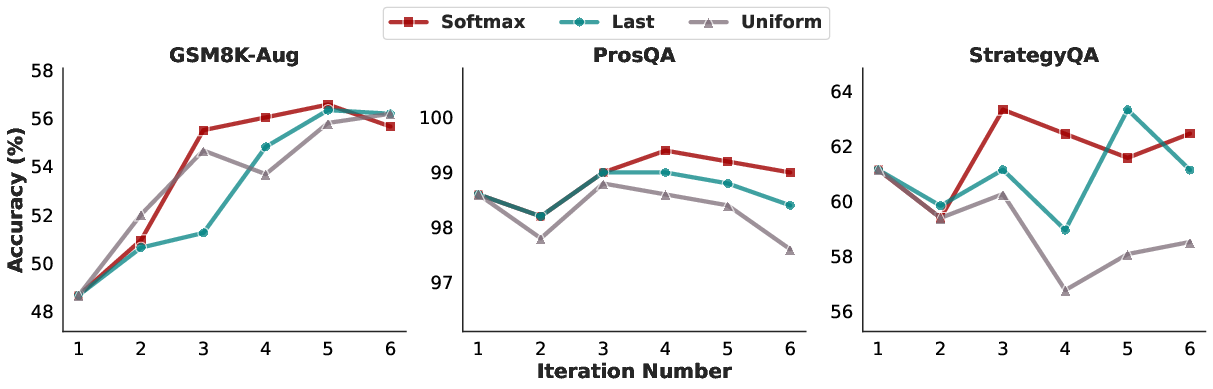

- Empirical results on GSM8K-Aug, ProsQA, and StrategyQA show significant gains over previous latent reasoning methods in numerical, logical, and commonsense reasoning.

SpiralThinker: Latent Reasoning through an Iterative Process with Text-Latent Interleaving

Introduction

SpiralThinker is a novel latent reasoning framework that interleaves textual and latent reasoning. It targets two weaknesses of existing latent reasoning methods: latent representations that do not evolve stably across updates, and explicit and implicit reasoning steps that are not interleaved coherently. By iteratively updating its latent representations, SpiralThinker follows a more stable and coherent reasoning path without generating additional tokens, which enables deeper and more consistent reasoning.

Methodology

Framework Overview

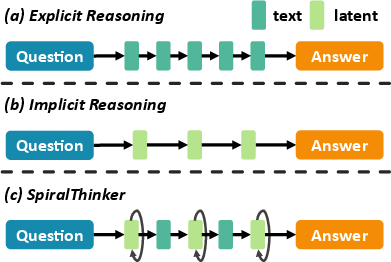

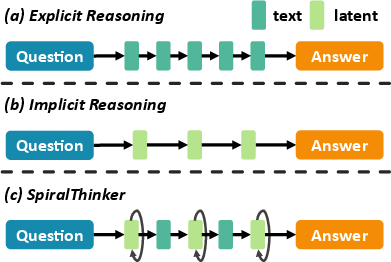

SpiralThinker uses an iterative update mechanism that interleaves explicit textual reasoning with implicit latent reasoning. A structured annotation scheme tells the model when to switch between textual and latent steps, keeping the reasoning trajectory coherent. A progressive alignment objective aligns latent representations with their textual counterparts, which stabilizes the iterative updates and promotes information flow across iterations (Figure 1).

Figure 1: (a) Explicit reasoning processes textual tokens once. (b) Implicit reasoning processes latent representations once. (c) SpiralThinker interleaves textual and latent reasoning through an iterative process.
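To make the annotation idea concrete, here is a minimal sketch of how a chain of textual steps could be converted into an interleaved sequence. It assumes that selected steps are replaced by a fixed number of `<latent>` placeholders and that the replaced text is kept as an alignment target; the function name `annotate_steps`, the `latent_ids` selection, and the `n_latent` parameter are hypothetical and are not taken from the paper.

```python
from typing import List, Sequence, Set, Tuple

LATENT_TOKEN = "<latent>"  # placeholder symbol, as used in Figure 2


def annotate_steps(
    steps: Sequence[str], latent_ids: Set[int], n_latent: int = 1
) -> Tuple[List[str], List[Tuple[int, str]]]:
    """HYPOTHETICAL annotation: replace the steps in latent_ids with n_latent <latent> tokens.

    Returns the interleaved sequence and a list of (position, replaced_text) pairs
    that could serve as alignment targets for the latent positions.
    """
    sequence: List[str] = []
    targets: List[Tuple[int, str]] = []
    for i, step in enumerate(steps):
        if i in latent_ids:
            for _ in range(n_latent):
                targets.append((len(sequence), step))  # remember what this latent slot replaces
                sequence.append(LATENT_TOKEN)
        else:
            sequence.append(step)  # textual step stays explicit
    return sequence, targets


# Usage: make the middle step latent, using two latent tokens for it.
seq, tgt = annotate_steps(["3 * 4 = 12", "12 + 5 = 17", "17 - 2 = 15"], {1}, n_latent=2)
```

Here `seq` keeps the first and last steps as text and holds two `<latent>` tokens in between, while `tgt` records that both latent positions stand in for `"12 + 5 = 17"`.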

Iterative Process and Alignment

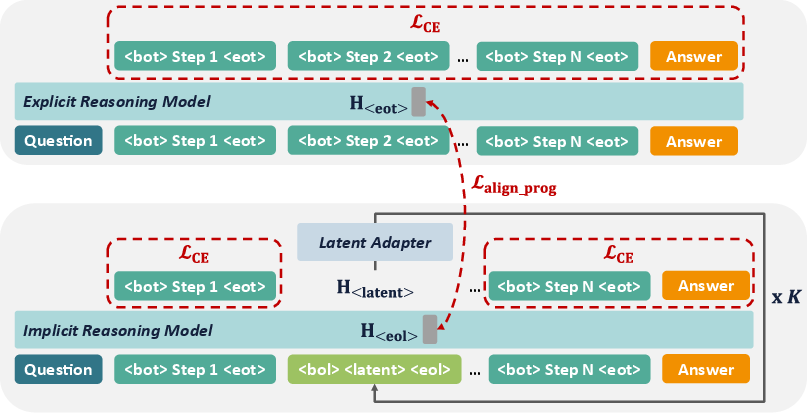

The iterative process repeatedly refines latent representations to enrich the implicit reasoning path. At each iteration, a mapping function projects the updated latent tokens into the same embedding space as textual tokens. The progressive alignment objective keeps these latent representations consistent with the corresponding textual reasoning steps across iterations, preserving the coherence and goal-directedness of the reasoning process.

Figure 2: Training process of SpiralThinker. "Step" denotes a textual step and "<latent>" denotes a latent step; only one <latent> token is shown for clarity.
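The loop below is a toy, dependency-free sketch of the iterative refinement described above, under several assumptions that the summary does not confirm: the language model is replaced by a hypothetical `toy_backbone`, the mapping function is a plain linear map `W` from hidden space back to embedding space, and the alignment target is an embedding of the textual step the latent token replaces. The "progressive" weighting (iteration k of n gets weight k / n, so later iterations count more) is an illustrative schedule only; the paper's actual objective may differ, for example by progressing across training stages instead.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(W: Matrix, v: Vector) -> Vector:
    """Multiply matrix W (given as rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def toy_backbone(e: Vector) -> Vector:
    """HYPOTHETICAL stand-in for the language model: maps an input embedding to a 'hidden state'."""
    return [math.tanh(x) for x in e]


def iterate_latent(e0: Vector, W: Matrix, n_iters: int) -> List[Vector]:
    """Refine one latent token n_iters times and return its embedding after each pass."""
    states: List[Vector] = []
    e = e0
    for _ in range(n_iters):
        h = toy_backbone(e)  # run the (toy) model on the current latent input
        e = matvec(W, h)     # mapping function: hidden space -> token-embedding space
        states.append(e)
    return states


def progressive_alignment_loss(states: List[Vector], target: Vector) -> float:
    """Squared distance to the textual target, weighted k / n per iteration (assumed schedule)."""
    n = len(states)
    total = 0.0
    for k, e in enumerate(states, start=1):
        weight = k / n
        total += weight * sum((a - b) ** 2 for a, b in zip(e, target))
    return total


# Usage: identity mapping, three iterations, aligned to a made-up textual-step embedding.
identity = [[1.0, 0.0], [0.0, 1.0]]
states = iterate_latent([0.5, -0.5], identity, n_iters=3)
loss = progressive_alignment_loss(states, target=[0.4, -0.4])
```

Feeding each pass's output back in as the next input is what lets information flow across iterations; the alignment term is what keeps those repeated updates anchored to the textual step instead of drifting.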

Results and Analysis

SpiralThinker was evaluated on GSM8K-Aug, ProsQA, and StrategyQA, covering numerical, logical, and commonsense reasoning, and outperformed previous latent reasoning frameworks across these domains.

Ablation and Sensitivity Studies

The ablation studies showed that both the iterative process and the alignment objective contribute significantly to SpiralThinker's performance. Notably, iteration alone yielded limited gains without alignment, indicating that progressive alignment is needed to guide the iterative updates effectively.

Qualitative Insights

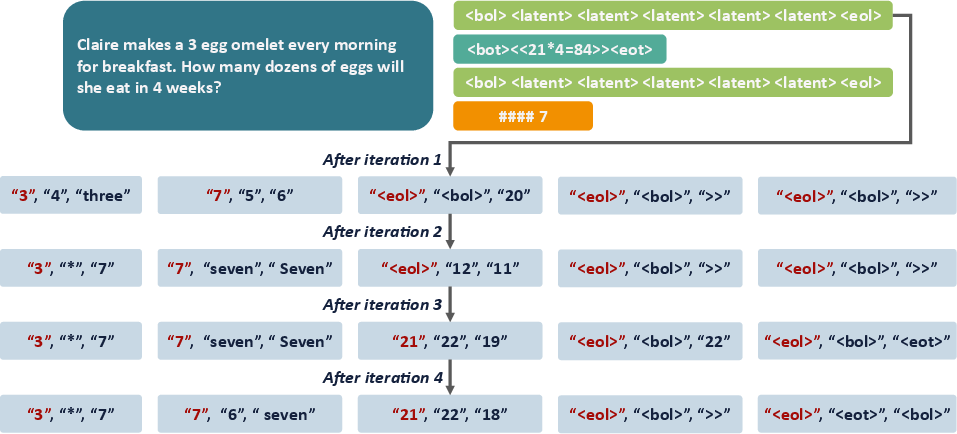

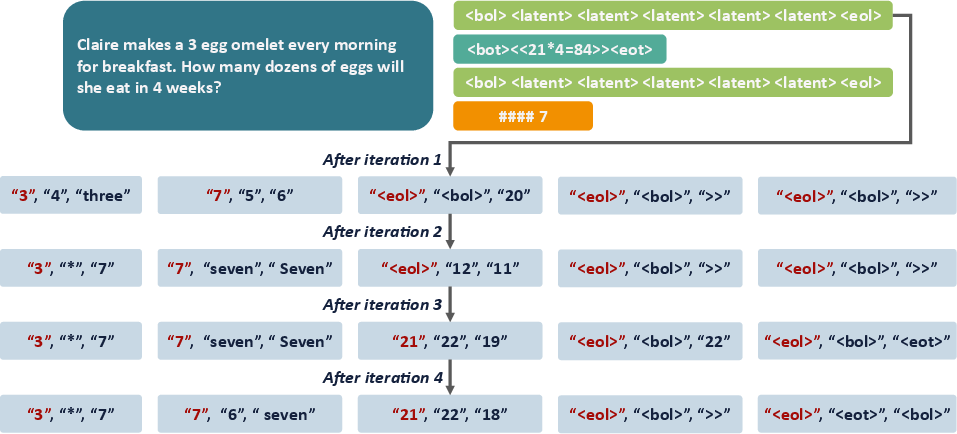

A qualitative analysis shows that SpiralThinker's latent tokens capture relevant reasoning steps and that the reasoning trajectory is progressively refined across iterations (Figure 5).

Figure 5: The upper part shows the reasoning steps generated by SpiralThinker for a sample problem, while the lower part presents the top three tokens most similar to each latent representation at the first latent step during the iterative process. The top-ranked token is highlighted in red.
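The nearest-token view in Figure 5 can be approximated by ranking vocabulary embeddings by their similarity to a latent vector. The sketch below assumes cosine similarity as the measure (the caption only says "most similar") and uses a tiny made-up vocabulary; in a real model one would compare against the model's input embedding matrix instead. `top_k_tokens` is a hypothetical helper, not part of any library.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    if nu == 0.0 or nv == 0.0:
        return 0.0
    return dot / (nu * nv)


def top_k_tokens(
    latent: Sequence[float], vocab: Dict[str, Sequence[float]], k: int = 3
) -> List[Tuple[str, float]]:
    """Rank vocabulary tokens by cosine similarity to a latent vector and keep the top k."""
    scored = [(tok, cosine(latent, emb)) for tok, emb in vocab.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)  # most similar first
    return scored[:k]


# Toy 2-d "vocabulary" with made-up embeddings, for illustration only.
vocab = {"12": [1.0, 0.0], "17": [0.9, 0.1], "+": [0.0, 1.0], "=": [-1.0, 0.0]}
top3 = top_k_tokens([1.0, 0.0], vocab)
```

Applying `top_k_tokens` to the latent state produced at each iteration gives a per-iteration ranking, which is the kind of progression the lower part of Figure 5 visualizes.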

Conclusion

SpiralThinker integrates iterative computation with latent reasoning to achieve deeper, more coherent reasoning paths. By balancing explicit and implicit reasoning under a progressive alignment objective, it extends what latent representations can achieve in reasoning tasks. Future work could explore adaptive iteration strategies and dynamic interleaving mechanisms to further enhance its reasoning capabilities.