- The paper demonstrates that polarization-resolved imaging enhances eye tracking by leveraging polarization-filter arrays to extract rich ocular features unavailable in intensity-only systems.

- It presents a polarization-enabled eye tracking (PET) system that uses a single camera and illuminator with a four-stage CNN for binocular gaze regression, achieving a 10–16% reduction in tracking error relative to intensity-only baselines.

- The study reports robust performance under challenging conditions such as occlusion, device slippage, and physiological variability, supporting its potential for compact wearable applications.

Polarization-Resolved Imaging for Robust Eye Tracking

Introduction

The work "Polarization-resolved imaging improves eye tracking" (2511.04652) investigates polarization-sensitive near-infrared (NIR) imaging as a single-camera, single-illuminator alternative to traditional multi-view, intensity-only eye tracking (ET) architectures. Using polarization-filter-array (PFA) sensors and polarized illumination, the authors demonstrate that polarization channels provide information-rich, feature-dense maps of ocular tissues, specifically the cornea and sclera, that are unavailable in conventional intensity imagery. These features enable more robust gaze estimation, especially under occlusion, device slippage (eye-relief changes), and physiological variability such as changes in pupil size.

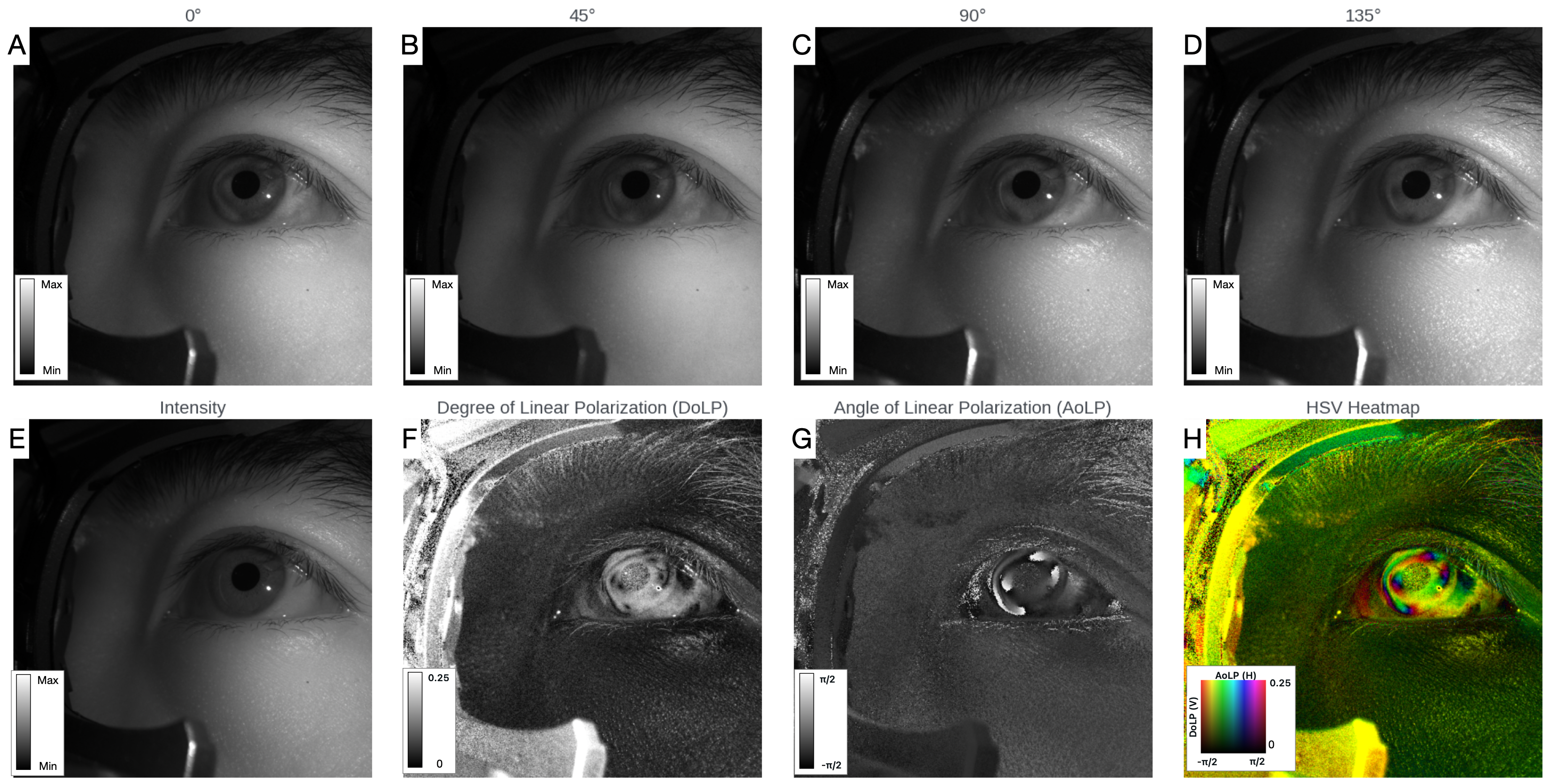

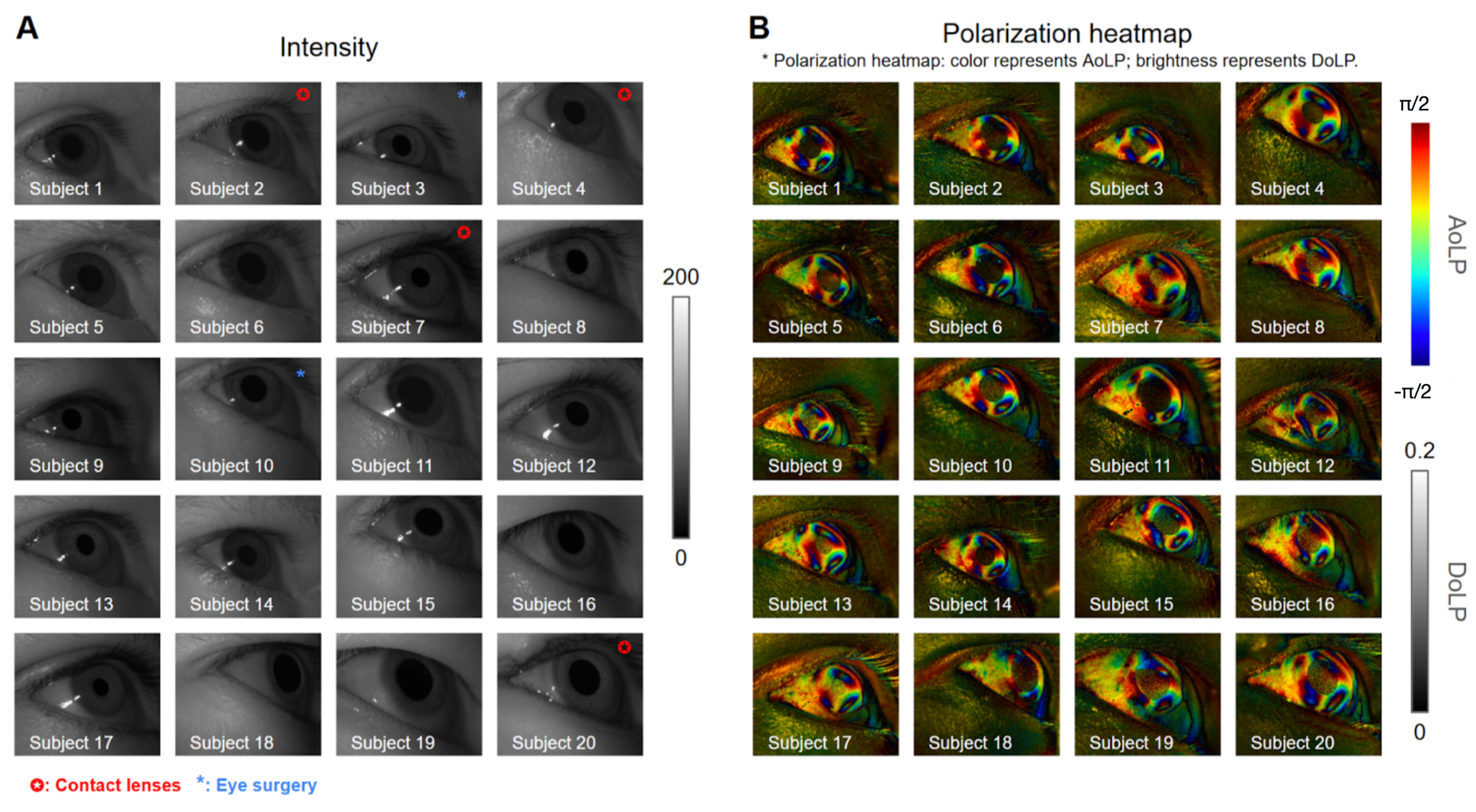

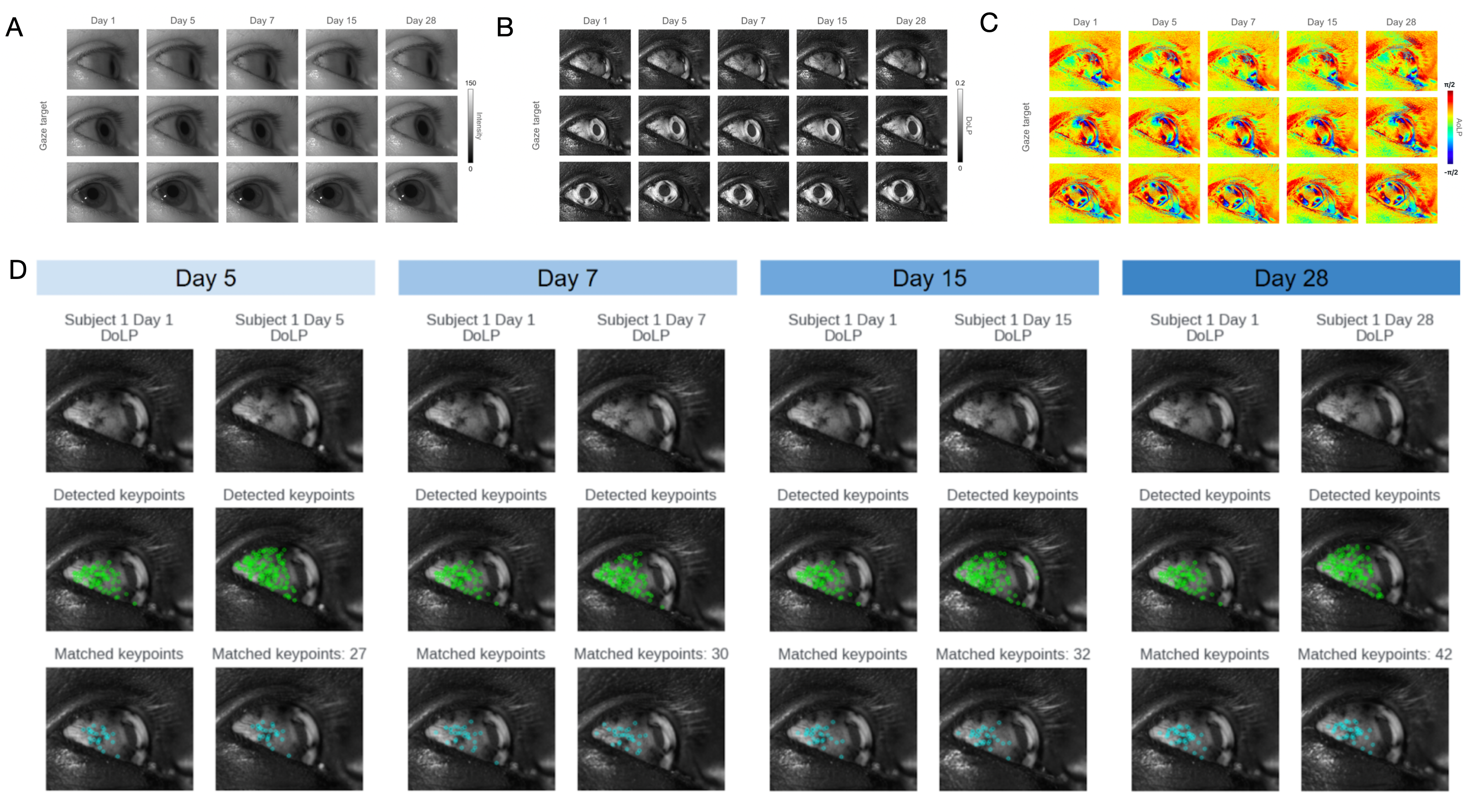

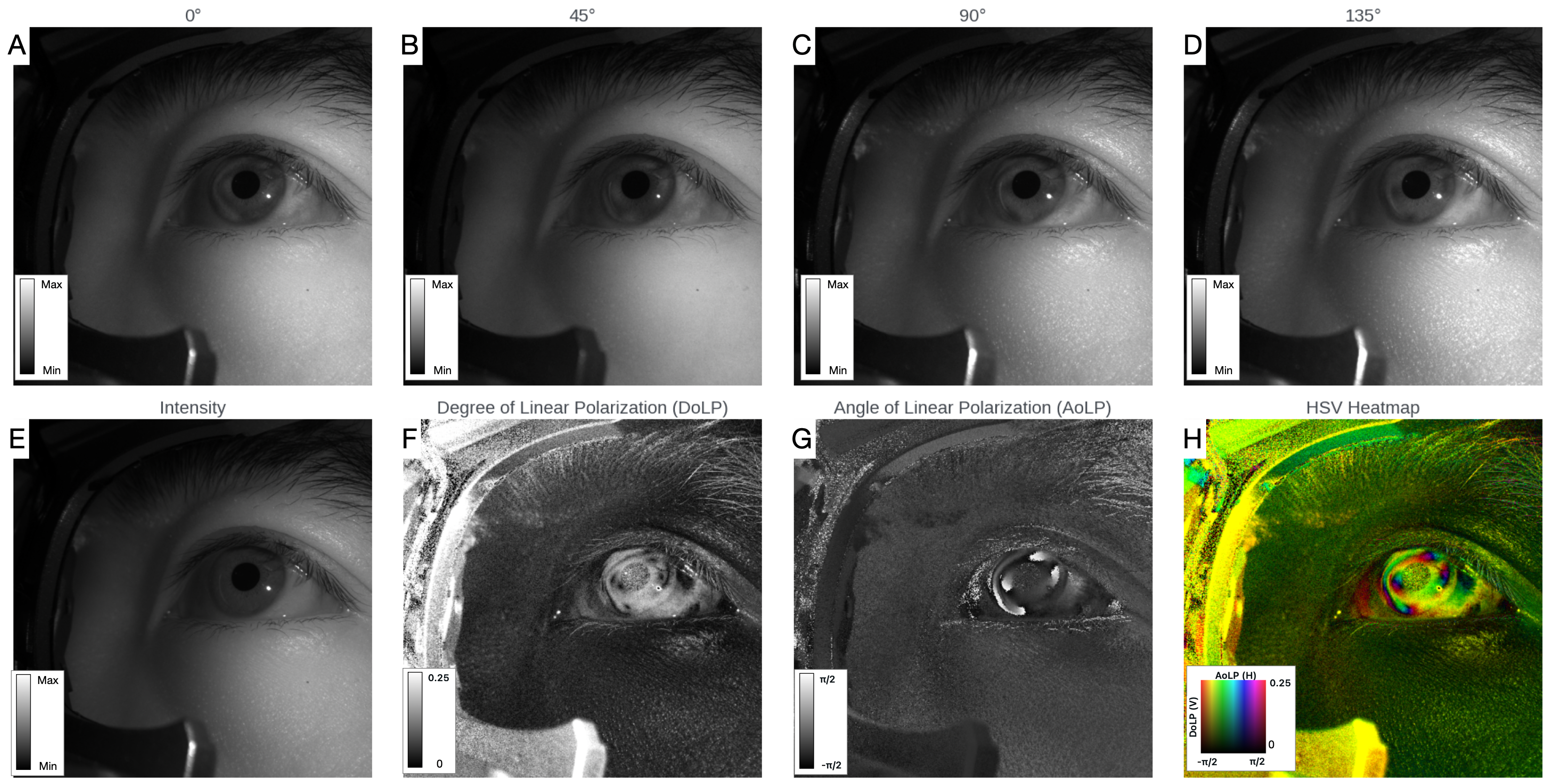

The PET system is constructed around a PFA camera equipped with wire-grid micro-polarizer arrays oriented at 0°, 45°, 90°, and 135°. Demosaicked output yields four polarization channels, from which key polarization-resolved metrics are extracted: angle of linear polarization (AoLP) and degree of linear polarization (DoLP). These metrics reflect the optical anisotropy of collagen in the sclera and cornea, which imparts textured polarization signals that are stable over weeks and invariant to contact lens use and post-surgical changes.
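The mapping from four polarizer channels to intensity, DoLP, and AoLP can be sketched with the standard linear Stokes-parameter formulas. This is a minimal illustration, not the authors' pipeline: the function names, the 2×2 mosaic layout in `split_pfa_mosaic`, and the simple subsampling "demosaic" are all assumptions made for the example.

```python
import numpy as np

def split_pfa_mosaic(raw):
    """Split a raw PFA frame into four half-resolution channels.

    ASSUMPTION: each 2x2 super-pixel is laid out as
        [[ 90,  45],
         [135,   0]]  (degrees).
    Real sensors may differ; check the sensor datasheet.
    """
    raw = np.asarray(raw, dtype=np.float64)
    i90 = raw[0::2, 0::2]
    i45 = raw[0::2, 1::2]
    i135 = raw[1::2, 0::2]
    i0 = raw[1::2, 1::2]
    return i0, i45, i90, i135

def stokes_from_pfa(i0, i45, i90, i135, eps=1e-8):
    """Return (intensity, DoLP, AoLP) from four linear-polarizer channels."""
    i0, i45, i90, i135 = (
        np.asarray(c, dtype=np.float64) for c in (i0, i45, i90, i135)
    )
    s0 = 0.5 * (i0 + i45 + i90 + i135)  # total intensity
    s1 = i0 - i90                       # horizontal vs. vertical
    s2 = i45 - i135                     # +45 deg vs. -45 deg
    dolp = np.sqrt(s1**2 + s2**2) / np.maximum(s0, eps)
    aolp = 0.5 * np.arctan2(s2, s1)     # radians, in (-pi/2, pi/2]
    return s0, dolp, aolp

# Usage: a synthetic frame of light fully polarized along 0 degrees.
raw = np.tile(np.array([[0.0, 0.5], [0.5, 1.0]]), (2, 2))
intensity, dolp, aolp = stokes_from_pfa(*split_pfa_mosaic(raw))
```

Subsampling halves the resolution in each dimension, which is one concrete form of the per-pixel resolution cost that PFA mosaics impose; interpolating demosaickers trade that against added noise and edge artifacts.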

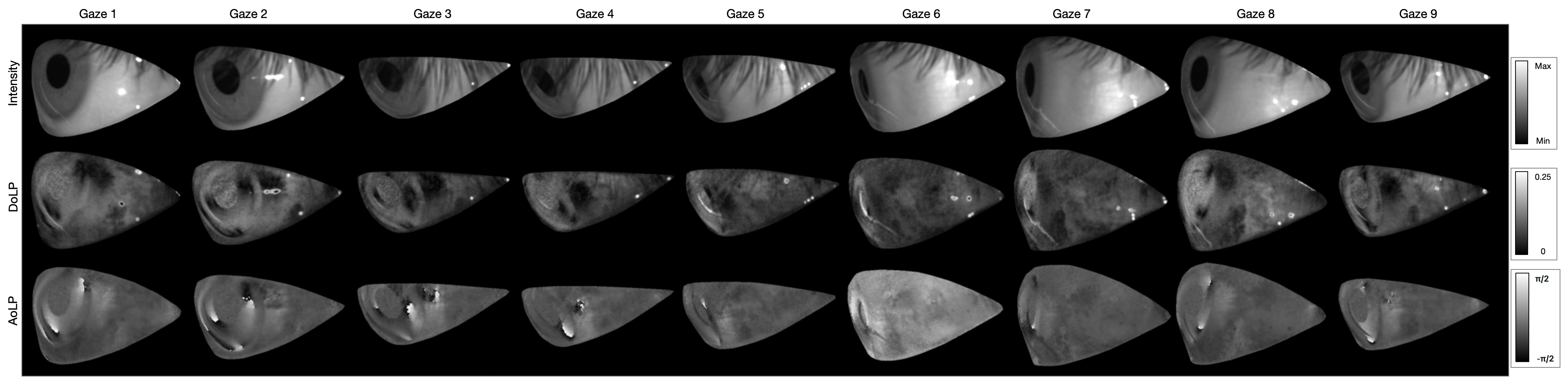

Figure 1: Polarization-resolved imaging yields four orientation channels, intensity, DoLP, AoLP, and composite visualizations; these data support extraction of ocular textures ignored by intensity-only systems.

The polarization-based contrast mechanism exploits both interface optics (Fresnel effects) and birefringent phase retardance to generate stable highlights and textures, broadening the feature set for end-to-end learning models.

Figure 2: PET images illustrate robust corneal and scleral pattern visibility across diverse subjects, including those who wear contact lenses or have had laser eye surgery.

Figure 3: Polarization-derived textures of the sclera and cornea are shown to persist over time, confirming temporal stability and supporting long-term calibration.

System Design and Data Collection

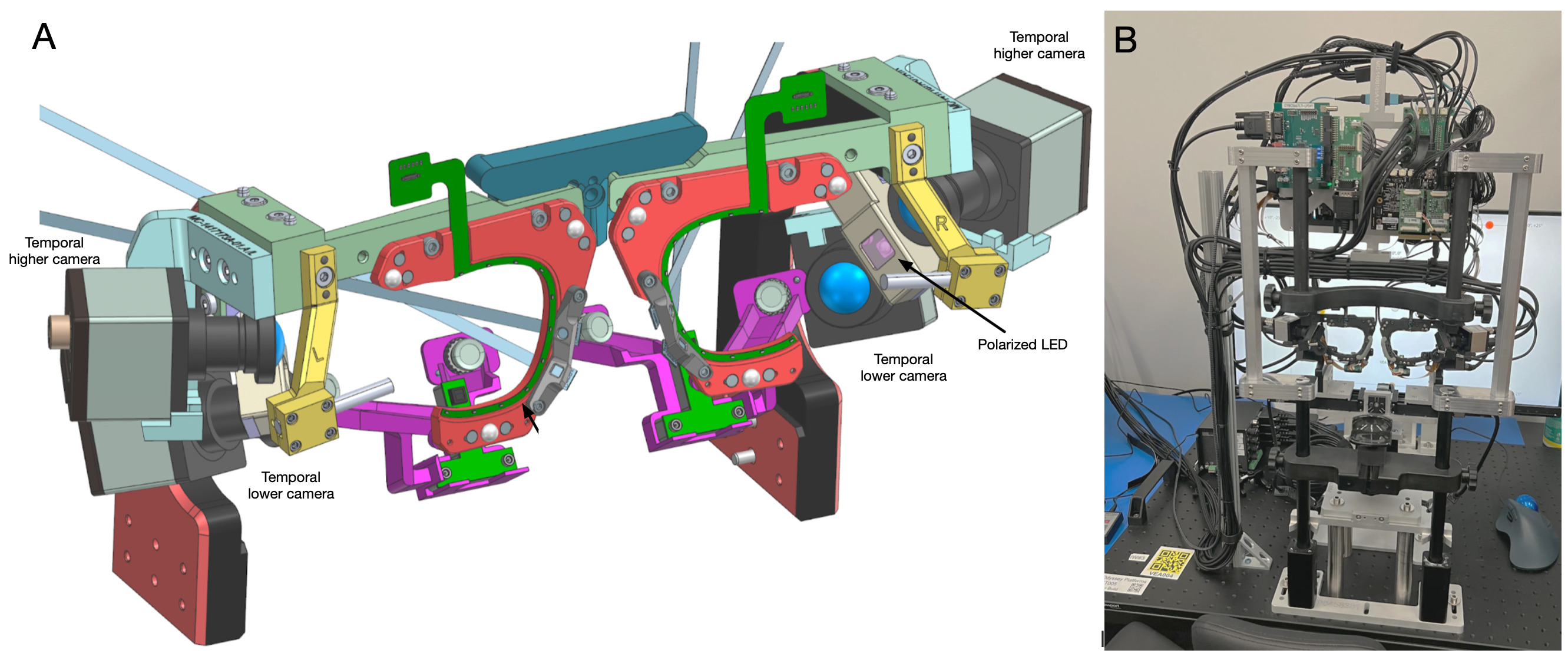

The PET hardware setup comprises a PFA camera and an 850 nm linearly polarized illuminator in flood mode, mounted on a benchtop station. Binocular configurations employ two cameras per eye for evaluation across higher and lower temporal placements, capturing the impact of camera position on feature visibility and occlusion tolerance.

Figure 4: The PET subsystem integrates a polarization camera with a flood NIR polarizer and a stabilized participant station for controlled gaze data acquisition.

Data collection presented visual target sequences under varying donning conditions (nominal, measured slippage, variable backgrounds), producing a dataset spanning 346 participants. Rigorous kinematics, calibration (via a nine-point sequence), and recording of interpupillary distance (IPD) ensured geometric consistency.

Figure 5: Segmented images from calibration sequences demonstrate the per-target image diversity and eye opening extracted for gaze regression.

Machine Learning Pipeline

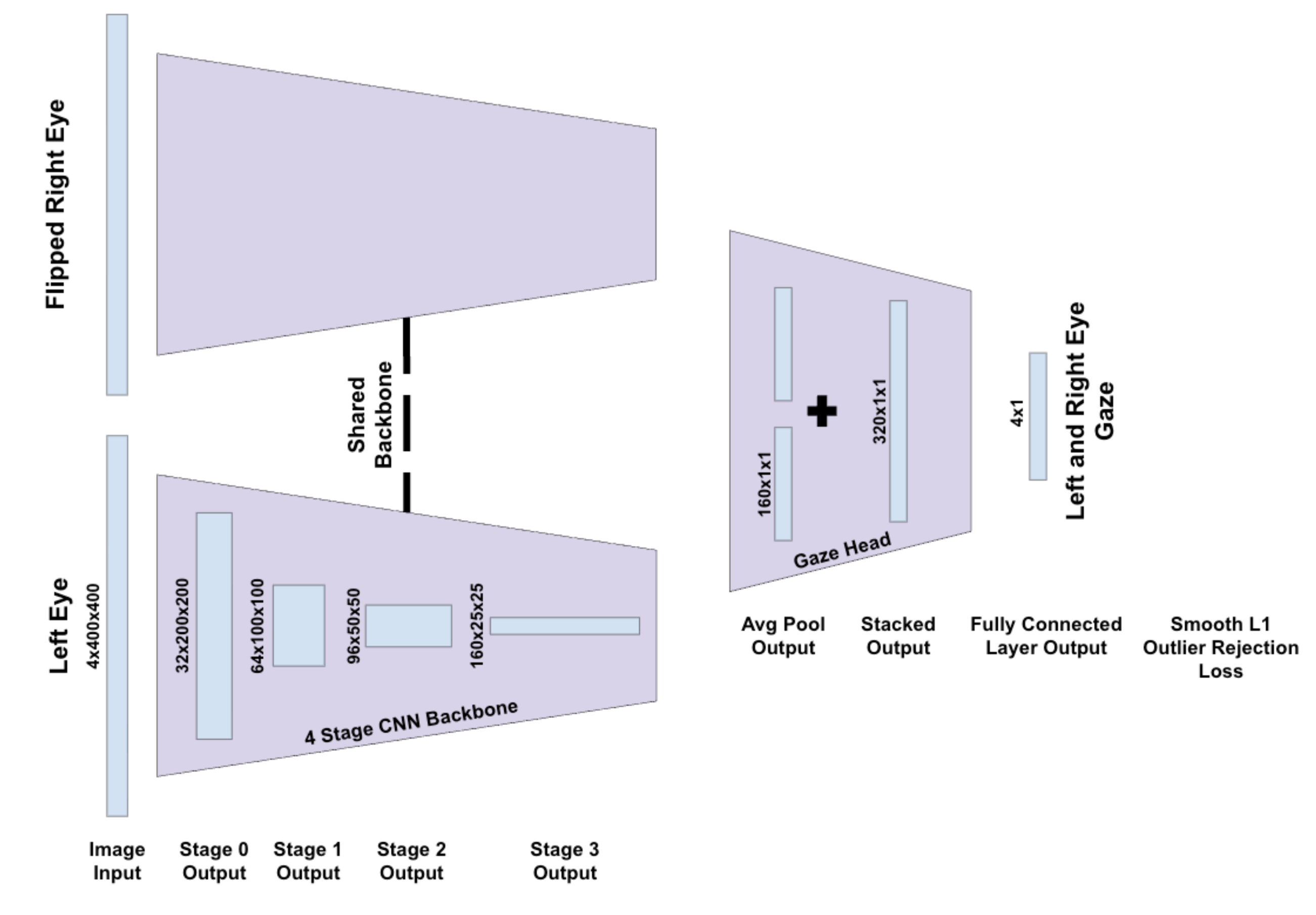

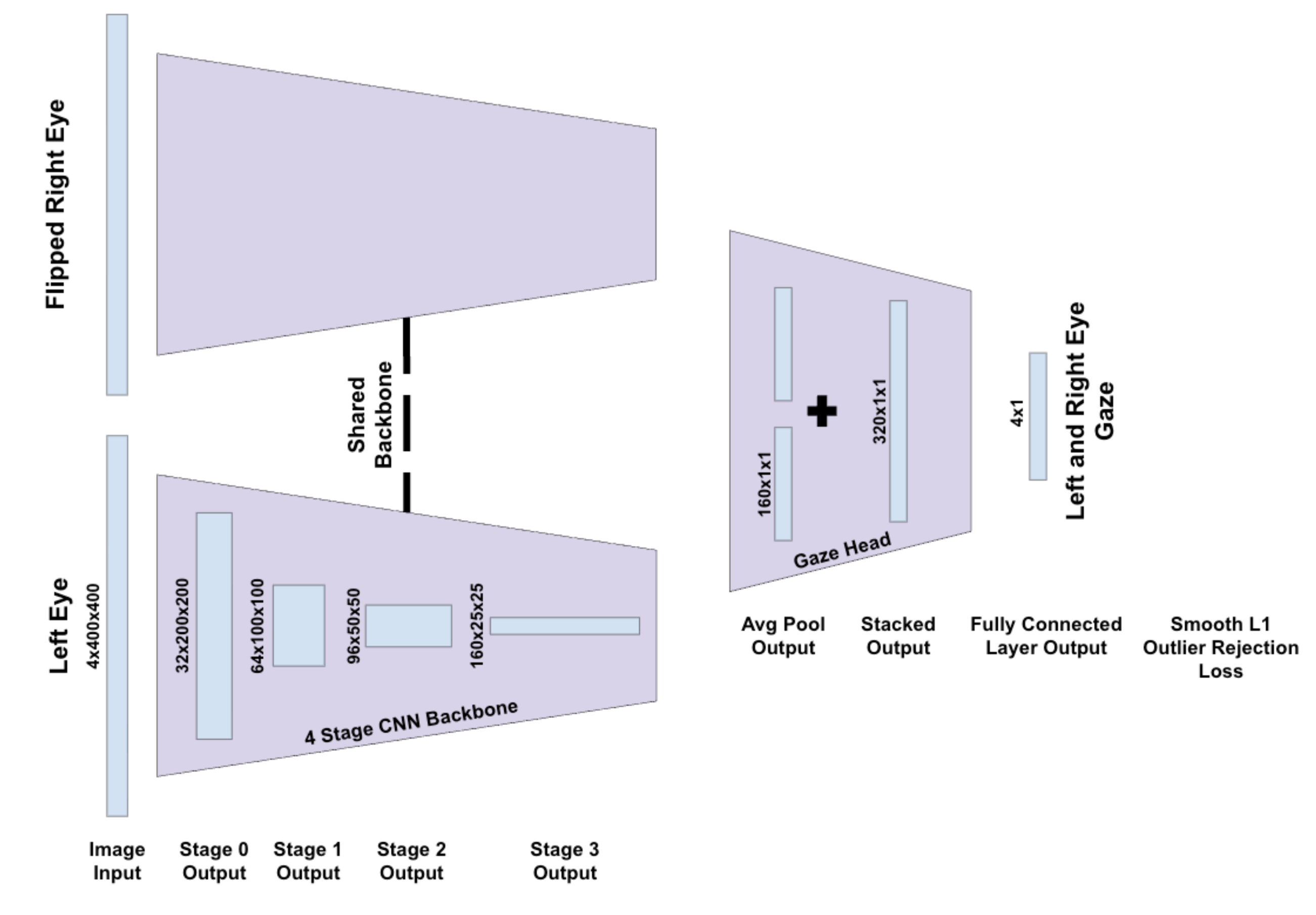

Gaze prediction is framed as binocular regression: stacked PET images (four polarization channels per eye) are processed by a four-stage, inverted-residual CNN backbone, with feature fusion and a regression head for per-eye gaze angles. The architecture is designed for parameter parity between PET and intensity-only baselines. Calibration leverages an affine correction learned from initial gaze measurements, yielding robust personalization without extensive retraining.
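The affine calibration step can be illustrated with an ordinary least-squares fit. This is a sketch under assumptions: the source does not give the exact parameterization, so the 2×2 matrix plus offset acting on (horizontal, vertical) gaze angles, and the function names, are hypothetical.

```python
import numpy as np

def fit_affine_correction(pred, target):
    """Least-squares fit of target ~ pred @ A.T + b.

    pred, target: (N, 2) arrays of gaze angles (e.g. degrees).
    ASSUMPTION: a full 2x2 matrix A plus a 2-vector offset b.
    """
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    # Append a column of ones so the offset is fitted jointly with A.
    design = np.hstack([pred, np.ones((pred.shape[0], 1))])  # (N, 3)
    weights, *_ = np.linalg.lstsq(design, target, rcond=None)  # (3, 2)
    A = weights[:2].T
    b = weights[2]
    return A, b

def apply_affine_correction(pred, A, b):
    """Apply the fitted per-user correction to raw model predictions."""
    return np.asarray(pred, dtype=np.float64) @ A.T + b

# Usage: nine calibration targets on a 3x3 grid (angles in degrees).
grid = np.array([[x, y] for y in (-10.0, 0.0, 10.0) for x in (-10.0, 0.0, 10.0)])
raw_pred = grid * 0.9 + np.array([0.5, -0.3])  # synthetic biased predictions
A, b = fit_affine_correction(raw_pred, grid)
corrected = apply_affine_correction(raw_pred, A, b)
```

With only six free parameters per eye, a correction of this kind can be fitted from a handful of fixations, which is consistent with personalization that avoids retraining the network.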

Figure 6: The multi-view gaze regression model fuses processed images from each eye using a shared CNN backbone before predicting gaze.

Training uses a smooth L1 loss (Huber loss) on angular errors, standardized across both input modalities. Outlier rejection and subject-disjoint validation splits minimize bias.
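For reference, the smooth L1 loss is quadratic for small errors and linear for large ones, which limits the influence of outliers. The sketch below is a plain NumPy version; the transition point `beta` is an assumed parameter (the source does not state its value), and with `beta = 1` the expression coincides with the Huber loss at threshold 1.

```python
import numpy as np

def smooth_l1(error, beta=1.0):
    """Element-wise smooth L1 loss of an error array.

    ASSUMPTION: beta (the quadratic-to-linear transition) defaults to 1.0.
    """
    abs_err = np.abs(np.asarray(error, dtype=np.float64))
    quadratic = 0.5 * abs_err**2 / beta  # used where |error| < beta
    linear = abs_err - 0.5 * beta        # used where |error| >= beta
    return np.where(abs_err < beta, quadratic, linear)

# Usage: mean loss over a batch of angular errors (degrees).
batch_errors = np.array([0.2, -0.5, 3.0])
mean_loss = smooth_l1(batch_errors).mean()
```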

Empirical Results and Robustness Analysis

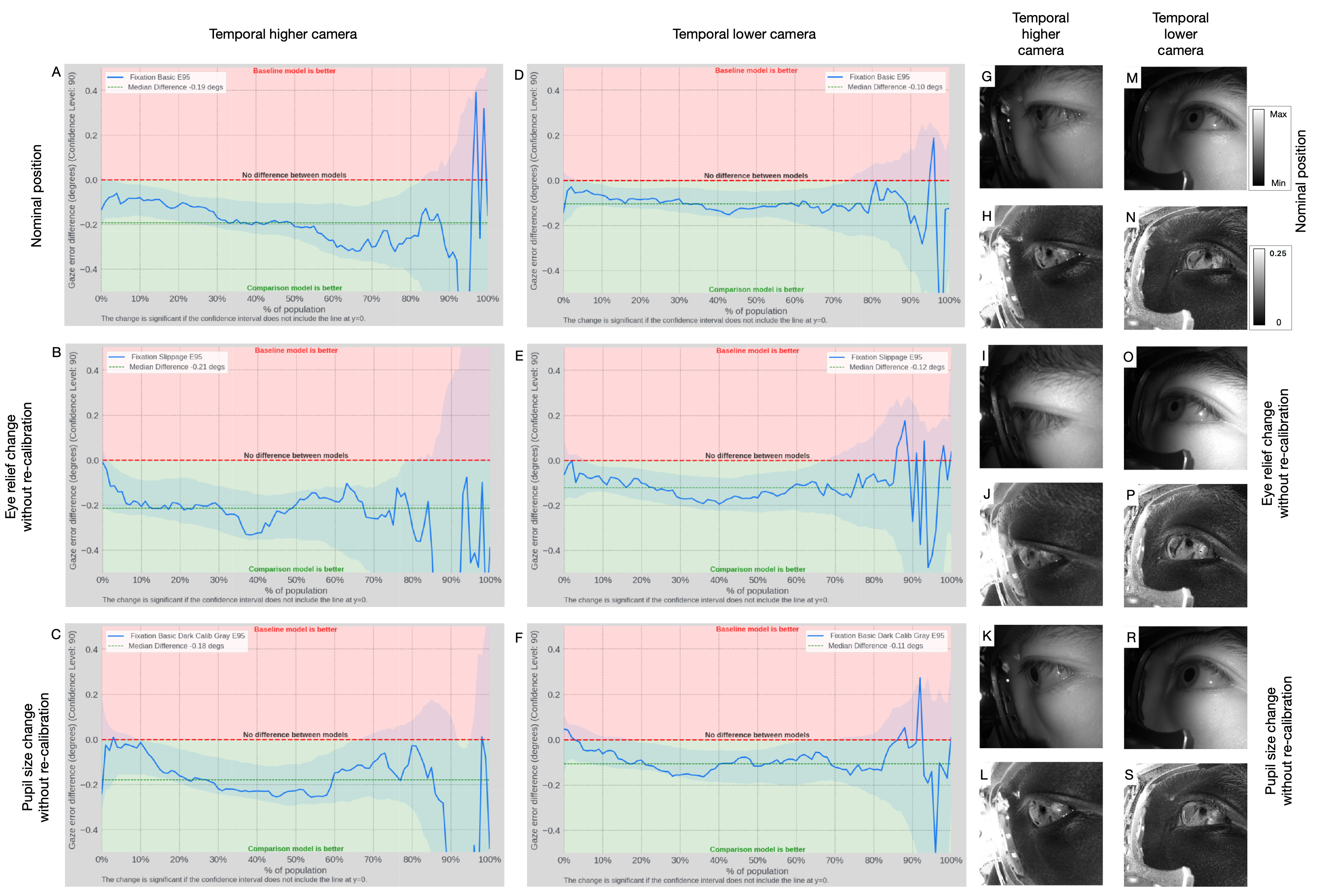

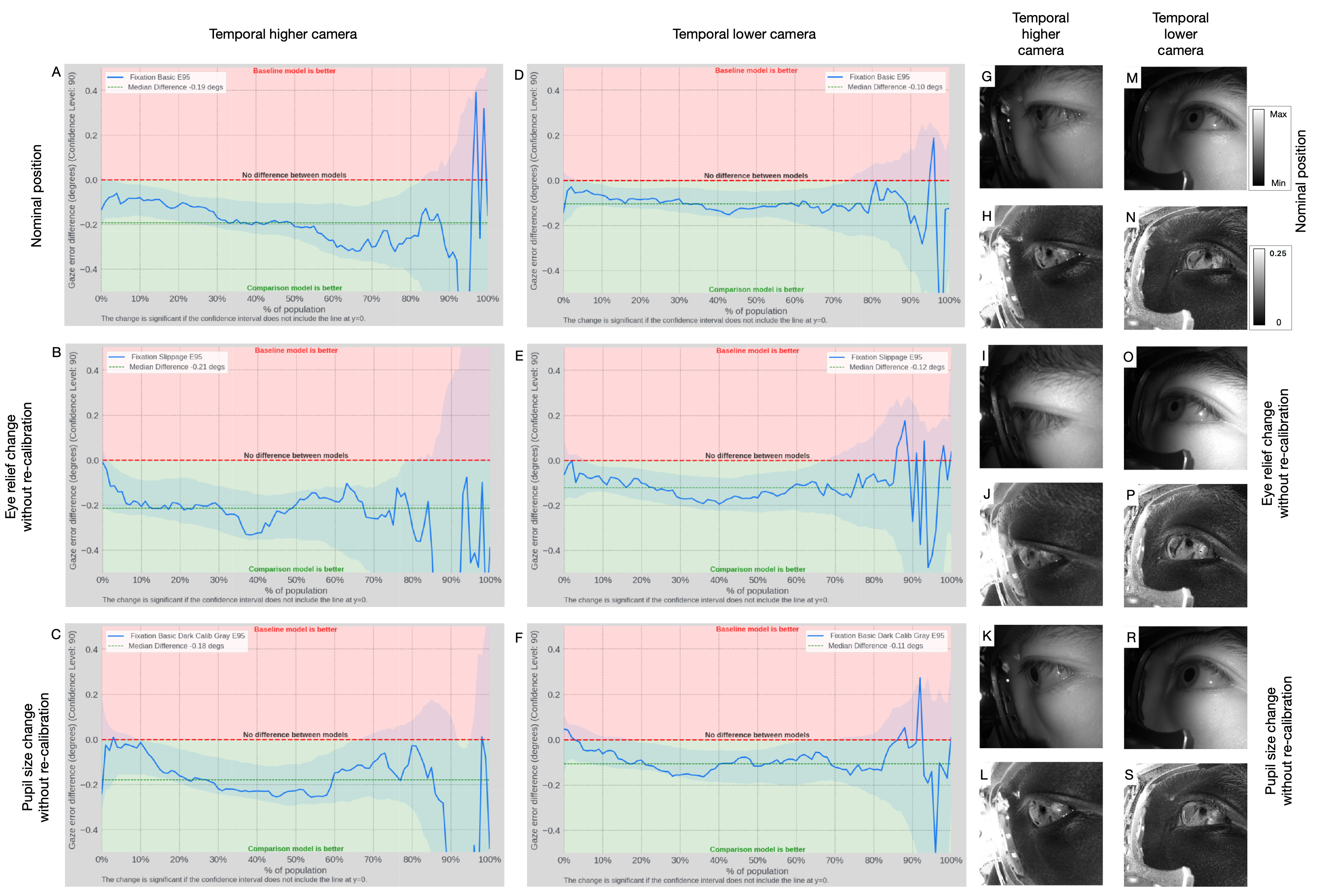

Population-level evaluation focuses on the 95th-percentile absolute gaze error per subject (E95), aggregated as the median across subjects (U50E95), which emphasizes near-worst-case accuracy. PET reduced U50E95 by 0.12–0.23° (10.3–15.9%) relative to intensity-only processing under nominal, no-recalibration slippage, and pupil-size variation conditions. Bootstrapped confidence intervals excluded zero, indicating that the improvements are statistically significant.
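The metric and the significance check can be sketched as follows. The U50E95 definition follows the description above; the bootstrap details (paired resampling over subjects, a percentile interval, the number of resamples) are assumptions for illustration, since the source does not specify them.

```python
import numpy as np

def e95_per_subject(errors_by_subject):
    """95th-percentile absolute gaze error for each subject."""
    return np.array([np.percentile(np.abs(e), 95) for e in errors_by_subject])

def u50e95(errors_by_subject):
    """Median across subjects of the per-subject E95."""
    return float(np.median(e95_per_subject(errors_by_subject)))

def bootstrap_delta_ci(e95_base, e95_pet, n_boot=2000, alpha=0.05, seed=0):
    """Percentile CI for U50E95(baseline) - U50E95(PET).

    ASSUMPTION: subjects are resampled with replacement, and the same
    resampled subjects are used for both modalities (paired bootstrap).
    """
    e95_base = np.asarray(e95_base, dtype=np.float64)
    e95_pet = np.asarray(e95_pet, dtype=np.float64)
    rng = np.random.default_rng(seed)
    n = e95_base.shape[0]
    idx = rng.integers(0, n, size=(n_boot, n))
    deltas = np.median(e95_base[idx], axis=1) - np.median(e95_pet[idx], axis=1)
    lo, hi = np.percentile(deltas, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return float(lo), float(hi)
```

An interval whose lower bound is above zero corresponds to the "confidence intervals excluded zero" statement: the baseline error exceeds the PET error in nearly all resampled populations.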

Figure 7: Comparative curves report PET versus intensity-only error reduction under matched acquisition, capacity, and training regimes.

PET gains are most pronounced for higher temporal camera positions, where eyelid/eyelash occlusions diminish conventional cues. The physiological/scattering basis for AoLP/DoLP contrast ensures that even heavily occluded or off-axis images retain trackable features, enhancing system robustness for compact, wearable placements.

Engineering, Implementation, and Future Directions

Polarization architectures enable compact, manufacturable modules with reduced sensor complexity (single camera, single illuminator). Current limitations include reduced per-pixel resolution due to PFA mosaics, angle- and geometry-dependent contrast sensitivity, and SNR loss from demosaicking. Miniaturized sensors or metasurface-based polarimetry (as suggested by [soma2024metasurface], [zuo2023chip]) may further reduce form factor and increase throughput.

Algorithmically, integrating lightweight user-adaptive components or applying self-supervised learning across the intensity, AoLP, and DoLP modalities could further improve robustness and reduce calibration needs.

Clinically, PET exposes birefringence-linked features in collagen scaffolding, making it amenable to online biometric health applications, including tracking of pathologies such as myopia progression, keratoconus, and post-surgical ectasia.

Conclusion

Polarization-resolved near-infrared imaging substantively enriches ocular feature density for single-camera eye tracking, enabling robust, low-burden gaze estimation across a broad range of operating conditions. Population-level accuracy, multi-week stability of the polarization features, and resilience to occlusion and physiological variation position PET as a promising modality for future consumer wearables and health-linked biometric systems. Advances in modular sensors and adaptive algorithms are expected to further strengthen PET's role in scalable eye tracking and authentication.