- The paper demonstrates that vergence–pupil coupling is a dynamic measurement confound influenced by viewing context and participant-specific factors.

- The paper employs a head-mounted eye tracker with controlled depth switching, luminance modulation, and audio cues to isolate pupil size artifacts.

- The paper finds that despite luminance modulation reducing variability, persistent pupil dynamics limit the accuracy of gaze depth estimation without advanced correction models.

Vergence–Pupil Coupling During Near-Far Depth Switching: A Participant-Level Analysis with Head-Mounted Eye Tracking

Introduction

Vergence is a foundational oculomotor parameter in spatial vision and is frequently used as a proxy for viewing depth in eye-tracking applications for XR, HCI, and visual ergonomics. A significant challenge for geometric gaze depth estimation is the confounding effect of pupil size artefacts (PSAs), in which changes in pupil size shift the estimated gaze direction. This study systematically characterizes vergence–pupil coupling under physical depth manipulation, focusing on how PSAs are shaped by dynamic viewing context, luminance modulation, and task. Strong participant-level variability emerges, with direct consequences for the use of video-based eye-tracking signals in both real-world settings and controlled visual neuroscience experiments.

Methods and Experimental Paradigm

The study uses a within-subjects design with a head-mounted, IR-illuminated, video-based tracker (Pupil Labs Neon) and a beamsplitter setup that presents precisely aligned, physically distinct near and far fixation targets. In addition to canonical near/far switching under static and dynamically modulated background luminance, the study implements blockwise sustained fixation and an audio-cued condition (the visual scene stays static and the switch is cued by sound). Depth and illumination states are thus factorially manipulated, which isolates PSA signatures from visual onset effects and from intrinsic pupil oscillations. The dependent variables are vergence (in deg) and the slope βVP (in deg/mm), which characterizes within-epoch pupil–vergence coupling.
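As a minimal sketch of how a within-epoch coupling slope such as βVP could be estimated, the example below fits an ordinary least-squares line of vergence on pupil diameter. The source does not state the estimator, so least squares is an assumption here, and the function name `coupling_slope` and the synthetic epoch are purely illustrative.

```python
import math

def coupling_slope(pupil_mm, vergence_deg):
    """Least-squares slope of vergence (deg) on pupil diameter (mm) for one epoch.

    Assumption: beta_VP is treated as an ordinary least-squares slope;
    the source does not specify how the slope is fitted.
    """
    # Keep only samples where both signals are finite (e.g. drop blink gaps).
    pairs = [(p, v) for p, v in zip(pupil_mm, vergence_deg)
             if math.isfinite(p) and math.isfinite(v)]
    n = len(pairs)
    if n < 3:
        return float("nan")
    mean_p = sum(p for p, _ in pairs) / n
    mean_v = sum(v for _, v in pairs) / n
    sxx = sum((p - mean_p) ** 2 for p, _ in pairs)
    if sxx == 0.0:
        # Pupil size did not vary, so the slope is undefined.
        return float("nan")
    sxy = sum((p - mean_p) * (v - mean_v) for p, v in pairs)
    return sxy / sxx

# Synthetic, noise-free epoch (hypothetical values): vergence rises 1.5 deg per mm.
pupil = [3.0 + 0.01 * i for i in range(101)]
vergence = [2.0 + 1.5 * p for p in pupil]
vergence[10] = float("nan")  # simulate one dropped sample
beta_vp = coupling_slope(pupil, vergence)
```

Computing one slope per participant, depth state, and condition would yield the kind of per-participant slope distribution the study reports.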

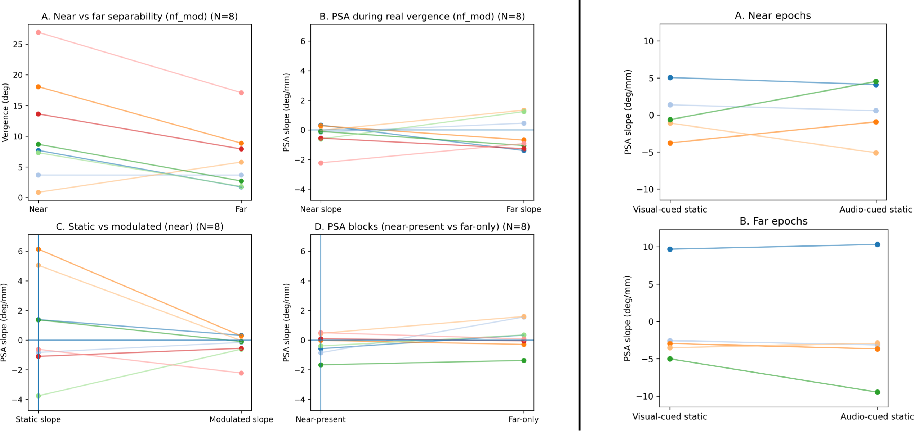

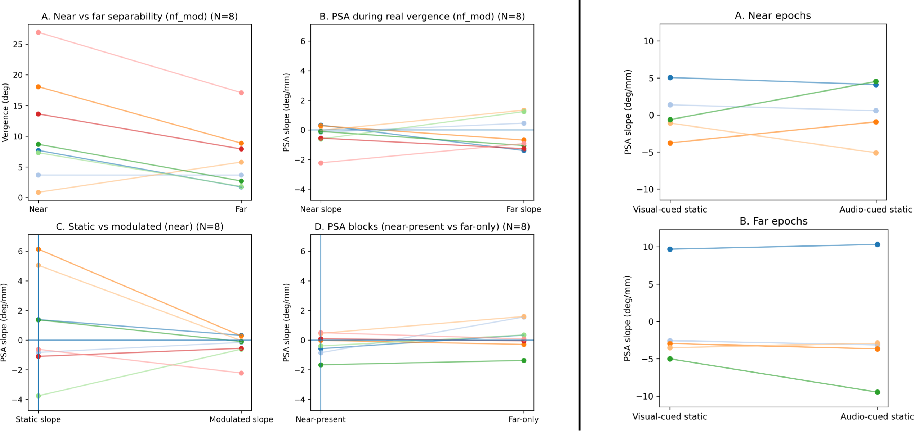

Figure 1: Left: Group-level summary of vergence and pupil–vergence coupling slopes for near/far switching. Right: Individual PSA slopes showing effects of visual versus auditory cueing and static luminance.

Results

Vergence separates near from far depth states in every participant, but the magnitude of the separation and even the sign of the depth ordering vary, with two instances of reversed ordering under luminance-modulated viewing. The principal finding is that pupil–vergence coupling (PSA slope) is not a uniform measurement bias. Slopes range widely (approximately −4 to +6 deg/mm) under static illumination, whereas luminance modulation reduces the between-participant spread and slopes cluster near zero. This clustering appears in both depth states, which suggests that it reflects luminance-driven pupil stabilization rather than target or depth characteristics.
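To give a sense of scale, the sketch below converts a pupil-driven vergence bias into a depth error using the standard geometry for symmetric fixation on the midline, where vergence θ = 2·atan(IPD / 2d). The interpupillary distance, viewing distance, slope, and pupil change are hypothetical values chosen for illustration (the slope lies within the reported range); none of them are measurements from the study.

```python
import math

def vergence_deg(distance_m, ipd_m=0.063):
    """Vergence angle (deg) for symmetric fixation at distance_m.

    Assumption: target on the midline; ipd_m = 63 mm is a hypothetical default.
    """
    return math.degrees(2.0 * math.atan(ipd_m / (2.0 * distance_m)))

def depth_m(vergence_angle_deg, ipd_m=0.063):
    """Inverse of vergence_deg: fixation distance (m) from a vergence angle (deg)."""
    return ipd_m / (2.0 * math.tan(math.radians(vergence_angle_deg) / 2.0))

true_depth = 0.5        # m, hypothetical near target
slope = 4.0             # deg/mm, hypothetical value inside the reported slope range
pupil_change = 0.25     # mm, hypothetical pupil diameter change within an epoch

# The pupil change adds slope * pupil_change degrees of apparent vergence.
biased_vergence = vergence_deg(true_depth) + slope * pupil_change
estimated_depth = depth_m(biased_vergence)
depth_error = estimated_depth - true_depth  # negative: target appears closer
```

Because depth is a nonlinear function of vergence, the same angular bias produces a much larger depth error for far targets than for near ones.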

Critically, the blockwise fixation and audio-cued conditions show that removing visual transients and cueing depth switches non-visually does not abolish pupil–vergence coupling. PSA slopes remain depth- and participant-dependent, which is further evidence that the structure of vergence error depends on both viewing context and individual idiosyncrasies. These findings are inconsistent with a simple additive model in which PSA is a fixed, correctable bias.
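One way to make the contrast explicit is to write the two models side by side. The notation below is illustrative and not taken from the paper: V denotes vergence, P pupil diameter, P_0 a reference pupil diameter, and the indices i, c, d stand for participant, viewing context, and depth state.

```latex
\begin{align}
  V_{\text{meas}}(t) &= V_{\text{true}}(t) + \beta \,\bigl(P(t) - P_0\bigr)
    && \text{(fixed bias: one slope for everyone)} \\
  V_{\text{meas}}(t) &= V_{\text{true}}(t) + \beta_{i,c,d} \,\bigl(P(t) - P_0\bigr)
    && \text{(slope varies with participant, context, depth)}
\end{align}
```

Under the first form a single calibrated β would suffice for correction. The reported variation in slope across participants, depth states, and cueing conditions is what the second form is meant to capture.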

Discussion and Implications

The data indicate that vergence–pupil coupling, as measured with current monocular-feature-based trackers, is highly state-dependent and only partially stabilized by luminance modulation. The coupling persists even when confounding factors such as visual onsets, global luminance shifts, and task structure are minimized or dissociated. The main implication is that video-based vergence estimates, although they allow reliable within-participant depth separation, carry a nonstationary PSA contamination that depends on both the hardware and observer-specific oculomotor physiology.

Background luminance modulation reduces but does not eliminate the effect, and the data contradict the assumption that static, well-lit conditions are inherently “clean.” Models and decoders trained under one context (illumination, cueing type) are unlikely to generalize reliably to others unless pupil dynamics are explicitly modeled or alternative sensing modalities (cf. LFI-based eye tracking technologies) are adopted. Existing literature indicates that PSAs are present across diverse tracking architectures [drewes2014smaller, salari2025effect].

For practical HCI and XR deployment, vergence can serve as a coarse classifier of depth state but should not be used for fine-grained or device-independent gaze depth estimation without accounting for dynamic, participant-dependent PSA signatures. Future work should therefore prioritize hardware or computer vision solutions that dissociate pupil size from the inferred gaze vector, or incorporate real-time correction models that adapt to state and individual.
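A minimal sketch of what "coarse classifier of depth state" could look like in practice is a per-participant threshold fitted from labelled calibration fixations. The midpoint rule, the function names, and the calibration values are assumptions for illustration, not the paper's method. The fitted direction flag handles participants whose depth ordering is reversed.

```python
def fit_depth_threshold(near_vergence_deg, far_vergence_deg):
    """Fit a per-participant threshold from labelled calibration samples.

    Returns (threshold, near_is_larger). The midpoint between the two class
    means is a hypothetical choice of decision rule.
    """
    near_mean = sum(near_vergence_deg) / len(near_vergence_deg)
    far_mean = sum(far_vergence_deg) / len(far_vergence_deg)
    threshold = (near_mean + far_mean) / 2.0
    # Geometry predicts larger vergence for near targets, but the measured
    # ordering can be reversed, so the direction is learned per participant.
    near_is_larger = near_mean > far_mean
    return threshold, near_is_larger

def classify_depth(vergence_deg, threshold, near_is_larger):
    """Label one vergence sample as 'near' or 'far'."""
    above = vergence_deg > threshold
    return "near" if above == near_is_larger else "far"

# Hypothetical calibration data for a participant with the expected ordering.
thr, flag = fit_depth_threshold([7.0, 7.4], [2.0, 2.4])
label_expected = classify_depth(6.0, thr, flag)

# Hypothetical participant with reversed ordering.
thr_rev, flag_rev = fit_depth_threshold([2.0, 2.4], [7.0, 7.4])
label_reversed = classify_depth(6.0, thr_rev, flag_rev)
```

Given the context dependence reported above, such a threshold would likely need refitting whenever illumination or cueing conditions change.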

Conclusion

The study demonstrates that vergence–pupil coupling is not a trivial artefact restricted to visual onsets or static contexts but a pervasive, dynamic measurement confound. Luminance modulation constrains but does not fully correct the effect, and coupling persists even without visual transients. Accordingly, geometric interpretations of vergence from video-based eye trackers remain limited without explicit control or modeling of pupil dynamics and context dependency.