- The paper introduces a novel conditional polarization guidance paradigm that treats polarization cues as adaptive modulation for RGB feature extraction.

- It pairs a lightweight Polarization Integration Module (PIM) with an iterative feedback decoder to improve boundary refinement while keeping computational overhead low.

- Experiments on multiple datasets show superior performance, with high Sα and Fβw scores that support its effectiveness for both camouflaged and transparent object detection.

Conditional Polarization Guidance for Camouflaged Object Detection

Introduction

The paper "Conditional Polarization Guidance for Camouflaged Object Detection" (2603.30008) addresses camouflaged object detection (COD), which remains difficult for purely RGB-based methods because of high background ambiguity and challenging environments. The work critiques the prevailing paradigm of explicit RGB–polarization feature fusion, which tends to increase model complexity while underexploiting the capacity of polarization cues to explicitly guide hierarchical RGB feature representation. The proposed Conditional Polarization Guidance Network (CPGNet) reconceptualizes polarization as conditional guidance rather than as a parallel modality for direct fusion, yielding a more efficient and effective architecture for COD.

The core contribution is the asymmetric RGB–polarization paradigm, in which polarization, particularly the degree (DoLP) and angle (AoLP) of linear polarization, is integrated via a lightweight module that generates explicit guidance signals. These are injected as affine modulation parameters into the internal RGB processing pipeline, dynamically regulating hierarchical RGB feature extraction.
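A minimal sketch of what "affine modulation parameters" can mean in practice, assuming a FiLM-style layer in which linear maps turn a polarization guidance code into a per-channel scale γ and shift β for the RGB features. The function names, the two-channel shapes, and the weights are hypothetical illustrations, not CPGNet's actual layers.

```python
from typing import List

Matrix = List[List[float]]  # rows = channels, columns = flattened spatial positions


def linear(weight: Matrix, bias: List[float], x: List[float]) -> List[float]:
    """Dense layer y = W x + b applied to a single guidance vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def conditional_modulate(rgb_feat: Matrix, guidance: List[float],
                         w_gamma: Matrix, b_gamma: List[float],
                         w_beta: Matrix, b_beta: List[float]) -> Matrix:
    """Modulate RGB features with a scale/shift predicted from a guidance code.

    The guidance only conditions the RGB stream: no polarization feature map is
    concatenated or added, so the RGB channel count stays unchanged.
    """
    gamma = linear(w_gamma, b_gamma, guidance)  # one scale per RGB channel
    beta = linear(w_beta, b_beta, guidance)     # one shift per RGB channel
    return [[g * v + b for v in channel]
            for channel, g, b in zip(rgb_feat, gamma, beta)]


rgb_feat = [[1.0, 2.0, 3.0], [0.5, 0.5, 0.5]]  # 2 channels x 3 positions
guidance = [1.0, 0.0]                           # hypothetical 2-d guidance code
out = conditional_modulate(rgb_feat, guidance,
                           w_gamma=[[1.0, 0.0], [0.0, 1.0]], b_gamma=[1.0, 1.0],
                           w_beta=[[0.0, 0.0], [1.0, 0.0]], b_beta=[0.0, 0.0])
print(out)  # [[2.0, 4.0, 6.0], [1.5, 1.5, 1.5]]
```

Because the output keeps the RGB channel count, this kind of modulation adds only small γ/β generators at each stage rather than a second feature stream that must be merged.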

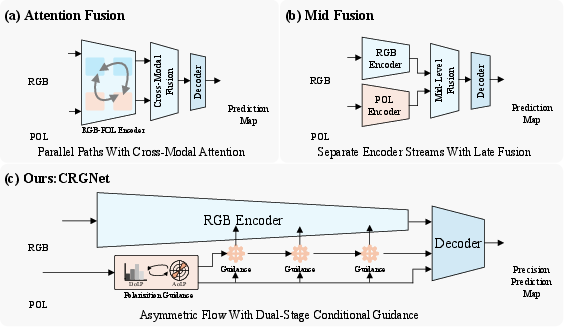

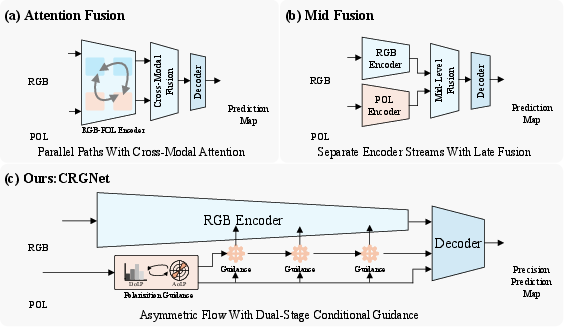

This mechanism differs fundamentally from prior attention-based and mid-level fusion approaches, which either augment RGB features with polarization-aware masks or merge features at intermediate stages, as illustrated in Figure 1.

Figure 1: Comparison of RGB–polarization integration paradigms. (a) Attention fusion enhances RGB features using polarization-aware attention. (b) Mid-level fusion directly merges RGB and polarization features. (c) CPGNet treats polarization as conditional guidance for RGB representation learning, rather than explicit feature fusion.

Unlike heavy dual-stream networks, the conditional modulation design in CPGNet lets polarization serve as a structural prior. The approach leverages the complementary physical cues of DoLP (material and boundary information) and AoLP (orientation and geometry), which are first filtered and integrated in the Polarization Integration Module (PIM).
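DoLP and AoLP are commonly derived from the linear Stokes parameters. The sketch below assumes a four-orientation (0°, 45°, 90°, 135°) polarization camera and ideal, noise-free intensities; the paper's own preprocessing is not reproduced here, and the helper names are hypothetical.

```python
import math
from typing import Tuple


def stokes_linear(i0: float, i45: float, i90: float, i135: float) -> Tuple[float, float, float]:
    """Linear Stokes parameters from intensities behind polarizers at 0/45/90/135 degrees."""
    s0 = 0.5 * (i0 + i45 + i90 + i135)  # total intensity
    s1 = i0 - i90                        # horizontal vs. vertical component
    s2 = i45 - i135                      # +45 vs. -45 degree component
    return s0, s1, s2


def dolp_aolp(i0: float, i45: float, i90: float, i135: float,
              eps: float = 1e-8) -> Tuple[float, float]:
    """Degree (0..1) and angle (radians, in (-pi/2, pi/2]) of linear polarization."""
    s0, s1, s2 = stokes_linear(i0, i45, i90, i135)
    dolp = math.sqrt(s1 ** 2 + s2 ** 2) / (s0 + eps)  # eps guards against dark pixels
    aolp = 0.5 * math.atan2(s2, s1)
    return dolp, aolp


# Ideal intensities following Malus's law, I(theta) = I * cos^2(theta - phi):
print(dolp_aolp(1.0, 0.5, 0.0, 0.5))  # fully polarized at phi = 0: DoLP ~ 1, AoLP = 0
print(dolp_aolp(0.5, 1.0, 0.5, 0.0))  # fully polarized at phi = 45 deg: AoLP = pi/4
print(dolp_aolp(0.5, 0.5, 0.5, 0.5))  # unpolarized: DoLP = 0
```

DoLP measures how strongly the light is polarized and AoLP measures the orientation of that polarization, which is why the two are treated as complementary cues.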

Architecture and Components

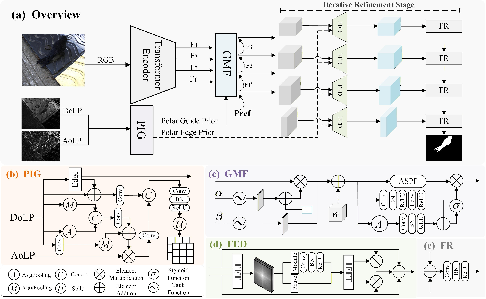

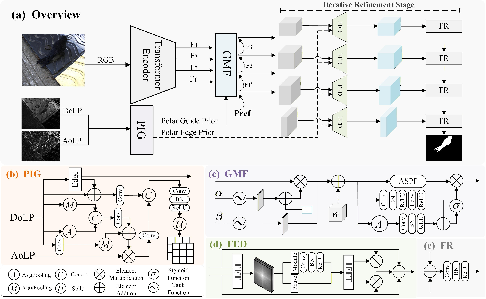

The CPGNet architecture is modular, comprising three primary subsystems (Figure 2):

- Polarization Integration Module (PIM): Jointly processes DoLP and AoLP, suppressing unreliable responses and extracting edge priors, to produce guidance codes.

- Polarization Guidance Flow: Progressively injects the guidance codes into multi-level RGB features via the Polarization Guidance Enhancement (PGE) component, and applies an Edge-guided Frequency Module (EFM) that strengthens the high-frequency cues responsible for object–background separation.

- Iterative Feedback Decoder (IFD): Implements a coarse-to-fine decoding process, recursively refining predictions using feedback from previous outputs.
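The EFM is described only at the level of strengthening high-frequency, boundary-related cues. As a rough 1-D intuition, the sketch below swaps in a classical technique, edge-gated unsharp masking: a high-pass residual (the signal minus a box-filtered copy) is amplified only where a hypothetical edge prior is active. It is not the paper's module, and the function names and the `strength` parameter are assumptions.

```python
from typing import List


def box_blur(x: List[float], radius: int = 1) -> List[float]:
    """Low-pass filter: moving average with edge replication at the borders."""
    n = len(x)
    out = []
    for i in range(n):
        window = [x[min(max(j, 0), n - 1)] for j in range(i - radius, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


def edge_gated_high_freq_boost(x: List[float], edge_prior: List[float],
                               strength: float = 1.0) -> List[float]:
    """Amplify the high-frequency residual x - lowpass(x) only where the edge prior is active."""
    low = box_blur(x)
    return [v + strength * e * (v - lo) for v, lo, e in zip(x, low, edge_prior)]


signal = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]  # 1-D step edge (object starts at index 3)
edge = [0.0, 0.0, 1.0, 1.0, 0.0, 0.0]    # hypothetical edge prior around the boundary
sharpened = edge_gated_high_freq_boost(signal, edge)
print([round(v, 3) for v in sharpened])
```

In flat regions the residual is zero, so the edge prior only changes pixels where the signal actually varies; around the boundary the contrast between the two sides increases.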

Figure 2: (a) Overview of the proposed CPGNet. (b) Polarization Integration Module (PIM). (c) Polarization Guidance Enhancement (PGE). (d) Edge-guided Frequency Module (EFM). (e) Feature Refinement (FR).

This architecture supports efficient conditional modulation across stages with minimal computational overhead, directly addressing the inefficiencies of prior cross-modal fusion networks.
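To make the overhead argument concrete, the arithmetic below compares two generic per-stage layers with hypothetical sizes: a 1×1 convolution that fuses concatenated RGB and polarization features (as in mid-level fusion) versus two 1×1 convolutions that map a smaller guidance code to per-channel scale and shift. These layer choices and channel counts are illustrative assumptions, not figures reported for CPGNet.

```python
def concat_fusion_params(c_rgb: int, c_pol: int, c_out: int) -> int:
    """1x1 conv over concatenated [RGB; polarization] channels: weights + bias."""
    return (c_rgb + c_pol) * c_out + c_out


def affine_guidance_params(k_guid: int, c_rgb: int) -> int:
    """Two 1x1 convs mapping a k-channel guidance code to per-channel gamma and beta."""
    return 2 * (k_guid * c_rgb + c_rgb)


# Hypothetical sizes: 256-channel RGB and polarization features, 64-channel guidance code.
fusion = concat_fusion_params(256, 256, 256)  # (256 + 256) * 256 + 256 = 131,328
guidance = affine_guidance_params(64, 256)    # 2 * (64 * 256 + 256) = 33,280
print(f"fusion: {fusion:,}  guidance: {guidance:,}  ratio: {fusion / guidance:.2f}")
```

The fusion count also excludes the separate polarization encoder needed to produce 256-channel polarization features in the first place, which in a dual-stream design typically costs far more than any single fusion layer.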

Experimental Results

Camouflaged Object Detection with Polarization

Comprehensive experiments on the PCOD-1200 dataset show that CPGNet achieves the best results on all major metrics, including the structure measure (Sα), weighted F-measure (Fβw), enhanced-alignment measure (Eϕ), and mean absolute error (M), outperforming both RGB-only and state-of-the-art RGB–polarization approaches. The model reaches an Sα of 0.951 and an Fβw of 0.923 with a mean absolute error of only 0.005, exceeding the previous best by statistically significant margins.
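Of the four metrics, M is simply the mean absolute error between the predicted map and the binary ground truth, while the F-measure family combines precision and recall. The sketch below implements M and a plain, unweighted Fβ at a fixed threshold with β² = 0.3, the value conventionally used in COD and salient object detection. The weighted Fβw reported in the paper additionally reweights pixel errors by their spatial context and is not reproduced here, nor are Sα and Eϕ; the example maps are hypothetical.

```python
from typing import List


def mae(pred: List[float], gt: List[int]) -> float:
    """Mean absolute error M between a [0, 1] prediction map and a binary mask."""
    return sum(abs(p - g) for p, g in zip(pred, gt)) / len(gt)


def f_beta(pred: List[float], gt: List[int],
           threshold: float = 0.5, beta2: float = 0.3) -> float:
    """Unweighted F-measure at a fixed threshold (beta2 is beta squared)."""
    binary = [1 if p >= threshold else 0 for p in pred]
    tp = sum(b & g for b, g in zip(binary, gt))
    precision = tp / max(sum(binary), 1)  # max(..., 1) avoids 0/0 on empty predictions
    recall = tp / max(sum(gt), 1)
    if precision + recall == 0:
        return 0.0
    return (1 + beta2) * precision * recall / (beta2 * precision + recall)


gt = [1, 1, 0, 0]             # hypothetical 4-pixel ground truth
good = [0.9, 0.8, 0.2, 0.1]   # confident, correct prediction
mixed = [0.9, 0.4, 0.6, 0.1]  # one object pixel missed, one background pixel flagged
print(mae(good, gt), f_beta(good, gt))
print(mae(mixed, gt), f_beta(mixed, gt))
```

In benchmark practice these per-image scores are averaged over the test set; lower M and higher Fβ are better.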

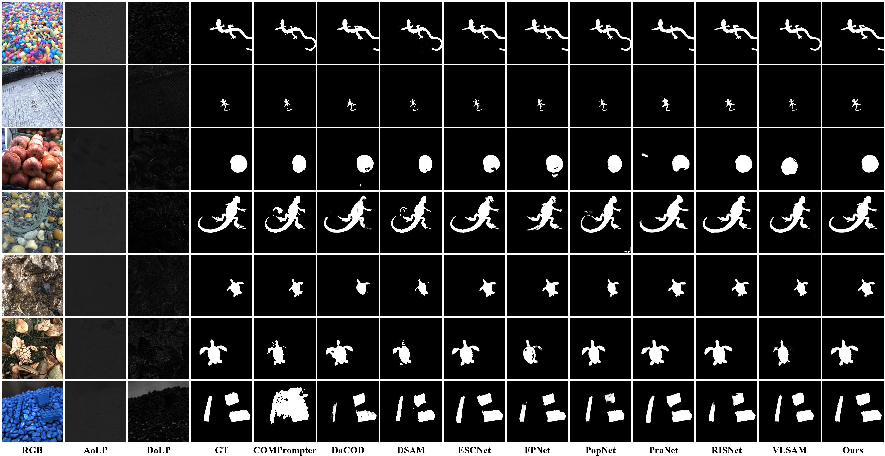

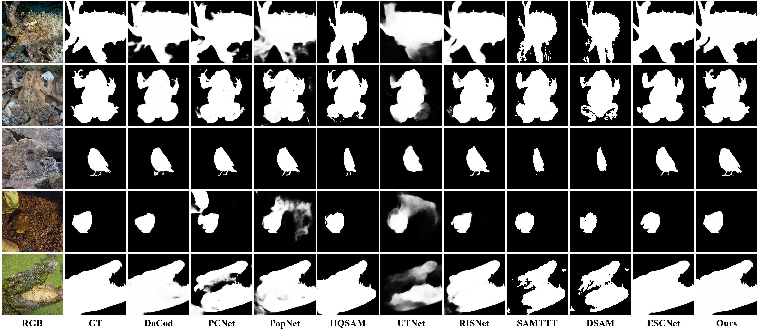

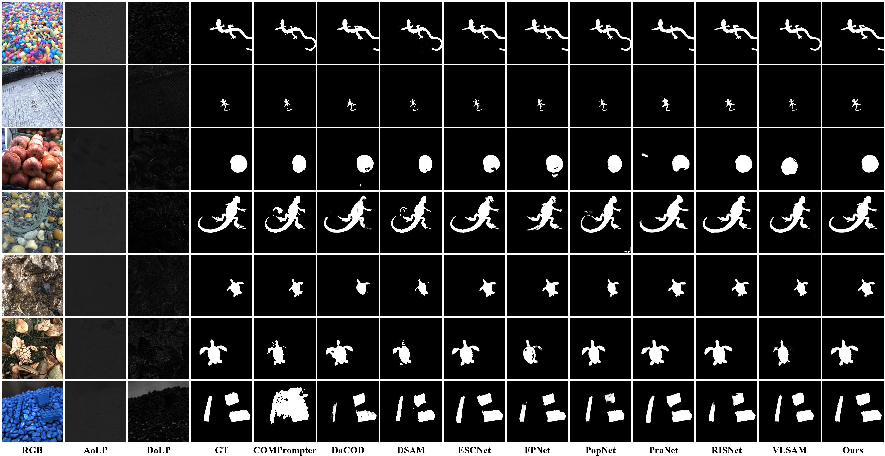

Qualitative visualizations (Figure 3) show more complete predictions and improved boundary quality, especially in scenes with severe camouflage.

Figure 3: Qualitative comparison with SOTA methods on PCOD-1200. CPGNet produces more complete predictions and cleaner boundaries in challenging camouflage scenes.

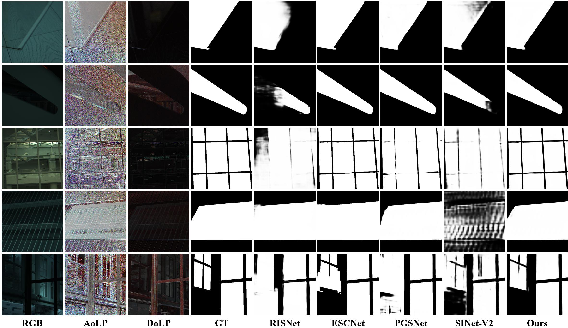

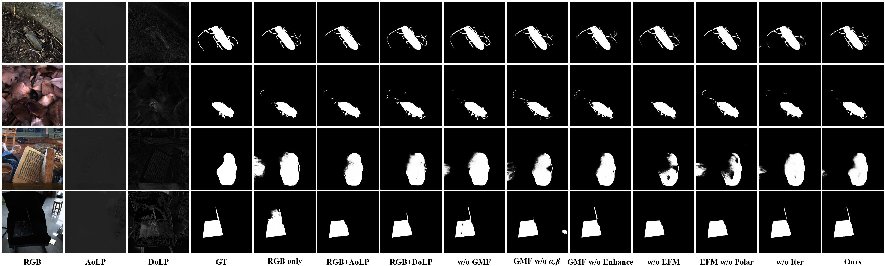

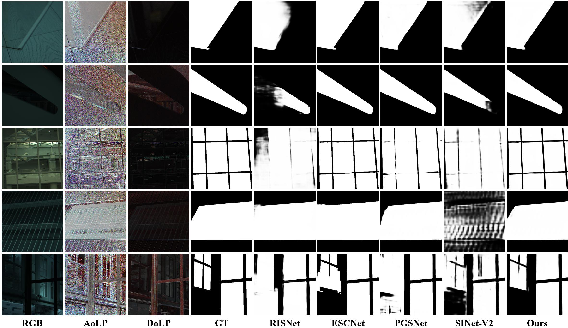

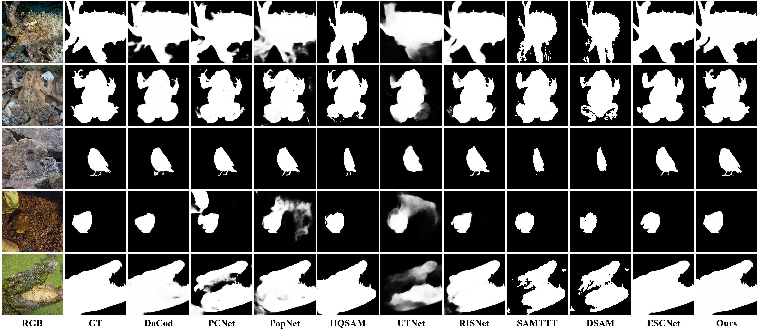

Transparent and Non-polarization Benchmarks

Experiments on the RGBP-Glass dataset indicate that the conditional guidance paradigm generalizes naturally to dense prediction tasks involving transparent objects, yielding the best results in mean absolute error, intersection over union (IoU), and balanced error rate (BER). Further evaluation on RGB-Depth datasets and on standard non-polarization COD datasets (CAMO, COD10K, NC4K, CHAMELEON), where surrogate cues stand in for polarization, also confirms strong generalizability and robust performance (see Figure 4 and Figure 5).
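For reference, IoU and BER as commonly defined in glass and transparent-object segmentation are sketched below on binary masks; BER is reported in percent, and lower is better. The paper's exact protocol (thresholding and averaging across images) may differ, and the masks are hypothetical.

```python
from typing import List


def iou(pred: List[int], gt: List[int]) -> float:
    """Intersection over union of two binary masks."""
    inter = sum(p & g for p, g in zip(pred, gt))
    union = sum(p | g for p, g in zip(pred, gt))
    return inter / union if union else 1.0  # two empty masks count as a perfect match


def ber(pred: List[int], gt: List[int]) -> float:
    """Balanced error rate in percent: 100 * (1 - (TP/Np + TN/Nn) / 2); lower is better."""
    n_pos = sum(gt)
    n_neg = len(gt) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("BER needs both object and background pixels in the ground truth")
    tp = sum(p & g for p, g in zip(pred, gt))
    tn = sum((1 - p) & (1 - g) for p, g in zip(pred, gt))
    return 100.0 * (1.0 - 0.5 * (tp / n_pos + tn / n_neg))


gt = [1, 1, 1, 1, 0, 0, 0, 0]    # hypothetical glass mask
pred = [1, 1, 1, 0, 0, 0, 0, 1]  # one glass pixel missed, one background pixel flagged
print(iou(pred, gt), ber(pred, gt))
```

BER weights the object and background classes equally, so a large background region cannot mask poor recall on the transparent object.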

Figure 4: Qualitative comparison of CPGNet against SOTA approaches on the RGBP-Glass dataset.

Figure 5: Qualitative comparison of CPGNet against SOTA approaches on the RGB-Depth datasets.

Component Analysis

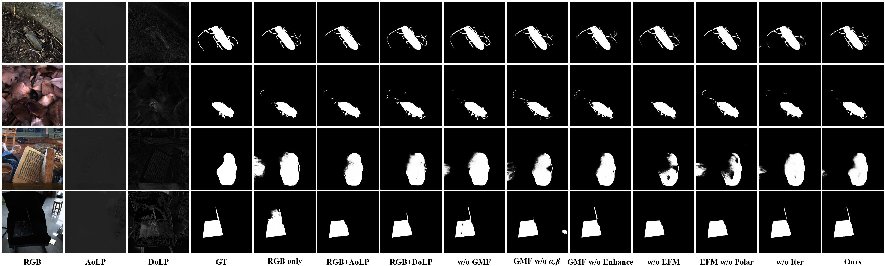

Ablation studies verify that each module of CPGNet contributes essential gains. Removing either the conditional guidance mechanism or the edge-guided frequency module consistently lowers accuracy and boundary fidelity (Figure 6). The iterative feedback decoder is also critical for recovering ambiguous regions.
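The paper describes the IFD only as recursive coarse-to-fine refinement driven by feedback from previous outputs. The sketch below shows that loop structure under explicit assumptions: `toy_refine` is a hypothetical stand-in for learned refinement layers, chosen so that its correction is largest where the previous prediction is most ambiguous (probability near 0.5), and the feature values are made up.

```python
import math
from typing import Callable, List

Map = List[float]  # flattened map, one value per pixel


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def iterative_feedback_decode(features: Map, coarse_logits: Map,
                              refine: Callable[[Map, Map], Map],
                              steps: int = 3) -> List[Map]:
    """Coarse-to-fine decoding: every step sees the features and the previous prediction."""
    logits = list(coarse_logits)
    outputs = [[sigmoid(v) for v in logits]]  # coarse prediction
    for _ in range(steps):
        residual = refine(features, outputs[-1])  # feedback from the previous output
        logits = [lg + r for lg, r in zip(logits, residual)]
        outputs.append([sigmoid(v) for v in logits])
    return outputs  # all intermediate maps, e.g. for deep supervision


def toy_refine(features: Map, prev_prob: Map) -> Map:
    """Hypothetical refiner: correct most where the previous prediction is ambiguous."""
    return [2.0 * (1.0 - abs(2.0 * p - 1.0)) * f for f, p in zip(features, prev_prob)]


features = [1.0, -1.0, 0.5]  # hypothetical evidence: positive = object, negative = background
outputs = iterative_feedback_decode(features, [0.0, 0.0, 0.0], toy_refine, steps=3)
for step, probs in enumerate(outputs):
    print(step, [round(p, 3) for p in probs])
```

Each pass moves uncertain pixels toward a confident decision, and returning every intermediate map makes it easy to supervise all stages.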

Figure 6: Qualitative ablation results on PCOD-1200. Each component improves prediction completeness and boundary quality, and the full model yields the best visual results.

Failure Cases and Limitations

Model performance degrades when polarization measurements are noisy or unstable (Figure 7), indicating sensitivity to the quality of the hardware used to acquire DoLP and AoLP. Scenes that lack physically informative polarization cues provide little usable guidance, an issue that is especially apparent with degraded sensor data or inhomogeneous illumination.
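A short worked example of why weak measurements hurt: for unpolarized light the true DoLP is zero, but a fixed error in one polarizer channel produces a spurious DoLP that grows as total intensity falls. The sketch assumes four-orientation capture as before; the intensities and the error size are hypothetical.

```python
import math


def dolp(i0: float, i45: float, i90: float, i135: float) -> float:
    """DoLP from four polarizer-angle intensities via linear Stokes parameters."""
    s0 = 0.5 * (i0 + i45 + i90 + i135)
    s1 = i0 - i90
    s2 = i45 - i135
    return math.sqrt(s1 ** 2 + s2 ** 2) / s0


# Unpolarized light (true DoLP = 0) with the same small error added to the 0-degree channel.
noise = 0.02
bright = dolp(0.5 + noise, 0.5, 0.5, 0.5)    # 2*0.02 / (2.0 + 0.02) ~ 0.0198
dim = dolp(0.05 + noise, 0.05, 0.05, 0.05)   # 2*0.02 / (0.2 + 0.02) ~ 0.1818
print(round(bright, 4), round(dim, 4))
```

The same error yields roughly nine times the spurious DoLP in the dim pixel, which illustrates how weak or noisy measurements can make polarization-derived guidance unreliable.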

Figure 7: Failure cases caused by degraded polarization quality. When DoLP/AoLP measurements are weak or noisy, the derived guidance may become unreliable, leading to inaccurate segmentation.

Implications and Future Directions

CPGNet substantiates the hypothesis that polarization cues are most efficiently utilized as adaptive structural priors for conditional modulation of RGB feature hierarchies, rather than as signals for direct fusion. This has implications for reducing computational cost in multimodal vision, especially in demanding domains such as medical imaging, remote sensing, or robotics, where ambiguous boundaries and low contrast are recurrent issues.

The results on transparent object detection benchmarks and RGB-Depth datasets suggest that conditional guidance can be extended beyond polarization, with other physically grounded auxiliary signals (e.g., depth or infrared) serving as guidance rather than as fusion targets.

The work anticipates further developments in task-adaptive conditional guidance, such as integration with foundation models or large self-supervised vision architectures, potentially moving cross-modal interaction from fixed fusion blocks to dynamic, context-sensitive modulation.

Conclusion

The approach presented in "Conditional Polarization Guidance for Camouflaged Object Detection" establishes a new design principle for multimodal vision: treating physically interpretable, complementary signals as conditional guidance for the dominant modality, rather than defaulting to symmetric fusion. CPGNet delivers robust, generalizable performance across both polarization-rich and conventional benchmarks with high parameter efficiency. The conditional polarization guidance paradigm offers an extensible basis for future architectures targeting dense prediction under perceptual ambiguity, especially in domains characterized by low SNR, weak boundaries, or complex inter-modality statistical relationships.