Talagrand-Type Correlation Inequalities for Supermodular and Submodular Functions on the Hypercube

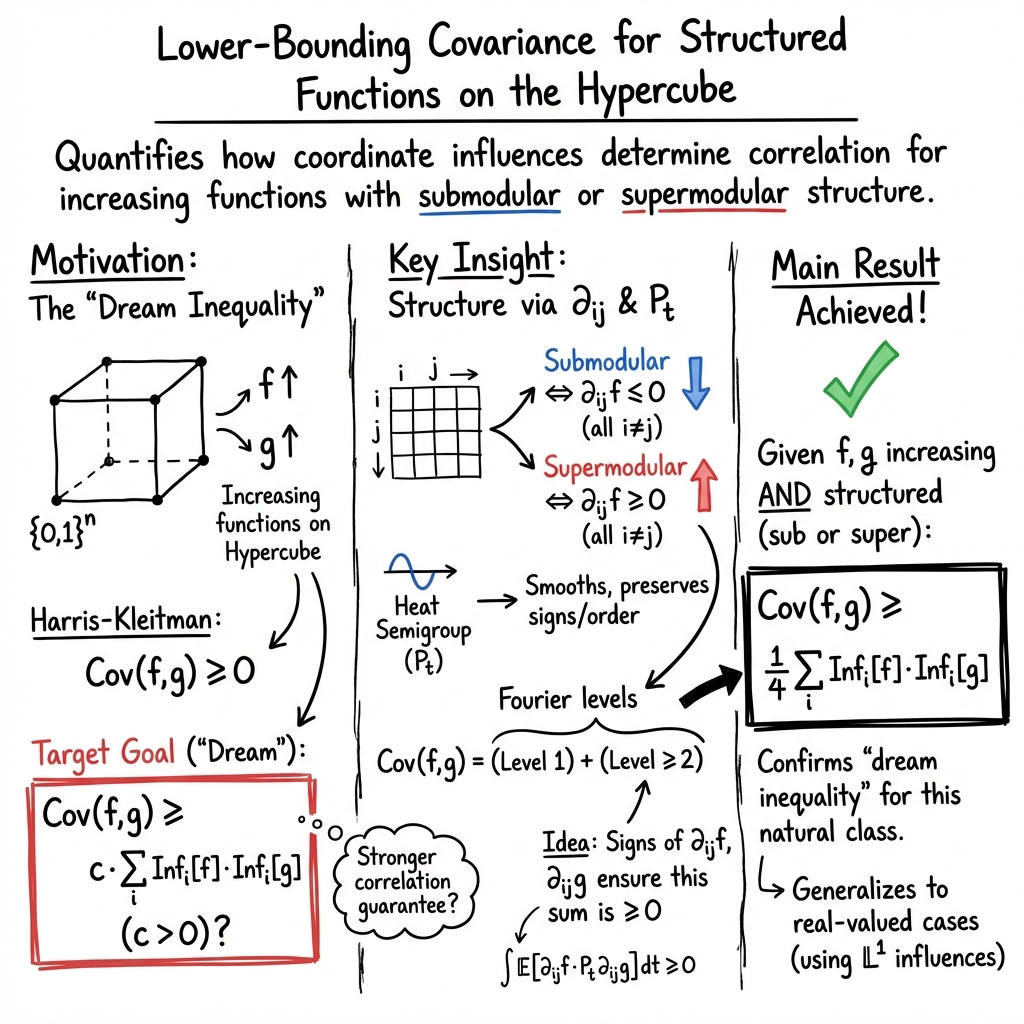

Abstract: Talagrand initiated a quantitative program by lower-bounding the correlation of any two increasing Boolean functions in terms of their influences, thereby capturing how strongly the functions depend on the same coordinates. We strengthen this line of results by proving Talagrand-type correlation lower bounds that hold whenever the increasing functions additionally satisfy super/submodularity. In particular, under super/submodularity, we establish the “dream inequality” $$\mathbb{E}[fg]-\mathbb{E}[f]\mathbb{E}[g]\ge \frac{1}{4}\cdot\sum\limits_{i=1}^n\mathrm{Inf}_i[f]\mathrm{Inf}_i[g],$$ thereby confirming a conjectural direction suggested by Kalai--Keller--Mossel. Our results also clarify the connection to the antipodal strengthening considered by Friedgut, Kahn, Kalai, and Keller, who showed that Chvátal's famous conjecture is equivalent to a certain reinforcement of the Talagrand-type correlation inequality when one function is antipodal. Thus, our inequality verifies the Friedgut--Kahn--Kalai--Keller conjectural bound in this structured (super/submodular) regime. Our approach uses two complementary methods: (1) a semigroup proof based on a new heat-semigroup representation via second-order discrete derivatives, and (2) an induction proof that avoids semigroup arguments entirely.

Explain it Like I'm 14

Talagrand‑Type Correlation Inequalities for Supermodular and Submodular Functions on the Hypercube — Explained Simply

Overview

This paper studies rules that take many yes/no inputs and produce a number (like 0 or 1). Think of a list of questions answered with 0 (no) or 1 (yes). A rule looks at all answers and decides an outcome. The authors focus on how two such rules are related (their “correlation”) and how much they depend on each individual question (their “influence”). They prove a strong, clean inequality showing that, under certain natural conditions, the correlation between two rules is at least a fixed fraction of the combined importance of each question.

Key Questions

The paper asks:

- When do two “increasing” rules have a large positive correlation?

- Can we guarantee a simple lower bound for this correlation using how much each rule depends on each question (the coordinate influences)?

- Do extra structures (called “submodular” or “supermodular”) make this stronger bound true?

- Can we also get general upper bounds on correlation (so we know it cannot be too big)?

“Increasing” means: if you change some answers from 0 to 1, the rule’s output does not go down. This is a basic fairness/monotonicity idea: more “yes” shouldn’t hurt.

“Influence” of question i on a rule is the chance that flipping the i‑th answer changes the rule’s output. It measures how important each question is to the rule.

“Submodular” roughly means “diminishing returns”: adding an extra “yes” helps less if you already have many yeses. “Supermodular” is the opposite: “increasing returns” (an extra yes helps more when there are already yeses).
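The definitions above can be checked by brute force on a tiny example. The following is a minimal sketch, assuming 0/1 rules on three questions; the helper names (`with_bit`, `influence`, `is_increasing`, `classify`) are hypothetical and chosen for illustration, not taken from the paper.

```python
from itertools import combinations, product

def with_bit(x, i, bit):
    """Copy of the tuple x with coordinate i set to bit."""
    return x[:i] + (bit,) + x[i + 1:]

def influence(f, n, i):
    """Fraction of inputs on which flipping answer i changes f."""
    pts = list(product((0, 1), repeat=n))
    changed = sum(f(with_bit(x, i, 0)) != f(with_bit(x, i, 1)) for x in pts)
    return changed / len(pts)

def is_increasing(f, n):
    """True if switching any answer from 0 to 1 never lowers f."""
    return all(f(with_bit(x, i, 0)) <= f(with_bit(x, i, 1))
               for x in product((0, 1), repeat=n) for i in range(n))

def classify(f, n):
    """Classify f by the sign of all mixed second differences."""
    diffs = []
    for x in product((0, 1), repeat=n):
        for i, j in combinations(range(n), 2):
            at = lambda a, b: f(with_bit(with_bit(x, i, a), j, b))
            diffs.append(at(1, 1) - at(1, 0) - at(0, 1) + at(0, 0))
    if all(d == 0 for d in diffs):
        return "modular"
    if all(d <= 0 for d in diffs):
        return "submodular"      # diminishing returns
    if all(d >= 0 for d in diffs):
        return "supermodular"    # increasing returns
    return "neither"

and3 = lambda x: int(all(x))       # 1 only if every answer is yes
or3 = lambda x: int(any(x))        # 1 if at least one answer is yes
maj3 = lambda x: int(sum(x) >= 2)  # majority vote

for name, f in [("AND", and3), ("OR", or3), ("MAJ", maj3)]:
    print(name, is_increasing(f, 3), influence(f, 3, 0), classify(f, 3))
```

All three rules are increasing. AND comes out supermodular and OR submodular, each with influence 0.25 per question, while majority has influence 0.5 and is neither, so it falls outside the structured classes the paper treats.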

Methods (in everyday language)

The authors use two main approaches:

- Induction (step‑by‑step building):

  - They prove the result for 1 question.

  - Then they show that if it’s true for n−1 questions, it remains true when you add the nth question.

  - Along the way, they check that being increasing and sub/supermodular is preserved when you look at the rule with one question fixed (this is a “restriction”).

- Smoothing and splitting the signal:

  - They use a “heat semigroup,” which is like gently blurring the rule so sharp changes are softened. This makes certain patterns easier to measure.

  - They also use a “Fourier expansion,” which is like breaking a complicated function into simpler parts (think of decomposing a song into notes). The “Level‑1” part captures how each single question affects the rule; the higher levels (Level ≥ 2) capture interactions among pairs or more questions.

  - A key identity shows that the “pairwise interaction part” can be written using second‑order differences (how two coordinates jointly change the function). If the rules are submodular or supermodular, these second differences have a consistent sign, making the interaction part nonnegative.

These two complementary methods arrive at the same main inequality.
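To see why the sign of the interaction part is what matters, the standard Fourier bookkeeping can be written out. This is a sketch under assumed notation (characters $\chi_S(x)=\prod_{i\in S}(-1)^{x_i}$ and coefficients $\hat f(S)=\mathbb{E}[f\chi_S]$ under the uniform measure); the paper's own normalization may differ.

```latex
% Plancherel splits the covariance by Fourier level:
\begin{align*}
\mathbb{E}[fg]-\mathbb{E}[f]\mathbb{E}[g]
  &= \sum_{S\neq\emptyset}\hat f(S)\,\hat g(S) \\
  &= \underbrace{\sum_{i=1}^n \hat f(\{i\})\,\hat g(\{i\})}_{\text{Level-1}}
   + \underbrace{\sum_{|S|\ge 2}\hat f(S)\,\hat g(S)}_{\text{Level-}\ge 2}.
\end{align*}
% For an increasing f with values in {0,1}, the Level-1 coefficient is the influence:
\[
\hat f(\{i\})
  = \tfrac12\bigl(\mathbb{E}[f\mid x_i=0]-\mathbb{E}[f\mid x_i=1]\bigr)
  = -\tfrac12\,\mathrm{Inf}_i[f],
\]
% so the Level-1 part is exactly the right-hand side of the target inequality:
\[
\sum_{i=1}^n \hat f(\{i\})\,\hat g(\{i\})
  = \frac14\sum_{i=1}^n \mathrm{Inf}_i[f]\,\mathrm{Inf}_i[g].
\]
```

Under this bookkeeping, the quarter-of-cross-influence bound for increasing 0/1 rules is the same statement as "the Level-≥2 part is nonnegative", which is where the consistent sign of the second differences enters.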

Main Findings and Why They Matter

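The core result (the “dream inequality” the community hoped for) is:

$$\mathbb{E}[fg]-\mathbb{E}[f]\mathbb{E}[g]\ge \frac{1}{4}\cdot\sum\limits_{i=1}^n\mathrm{Inf}_i[f]\mathrm{Inf}_i[g],$$

whenever f and g are both increasing and both submodular or both supermodular. Here: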

- The left side is the covariance (a measure of correlation).

- The right side adds up, over all questions, the product of how influential question i is for f and for g.

In plain terms: if both rules have the “diminishing returns” property (submodular) or the “increasing returns” property (supermodular), then their positive correlation is guaranteed to be at least one quarter of how much they simultaneously depend on each question. This confirms a prediction made by earlier researchers.
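A quick numerical check makes the statement concrete. The sketch below is illustrative only: `covariance_and_bound` is a hypothetical helper that enumerates the whole cube, so it is practical only for a handful of questions.

```python
from itertools import product

def covariance_and_bound(f, g, n):
    """Return (E[fg] - E[f]E[g], (1/4) * sum_i Inf_i[f] * Inf_i[g]) under the uniform measure."""
    pts = list(product((0, 1), repeat=n))
    total = len(pts)

    def mean(h):
        return sum(h(x) for x in pts) / total

    def inf(h, i):
        # Fraction of inputs where flipping coordinate i changes h.
        return sum(h(x[:i] + (0,) + x[i + 1:]) != h(x[:i] + (1,) + x[i + 1:])
                   for x in pts) / total

    cov = mean(lambda x: f(x) * g(x)) - mean(f) * mean(g)
    bound = 0.25 * sum(inf(f, i) * inf(g, i) for i in range(n))
    return cov, bound

dictator = lambda x: x[0]      # depends on one question only
and2 = lambda x: int(all(x))   # supermodular
or2 = lambda x: int(any(x))    # submodular

print(covariance_and_bound(dictator, dictator, 1))  # equality case
print(covariance_and_bound(and2, and2, 2))          # both supermodular
print(covariance_and_bound(or2, or2, 2))            # both submodular
print(covariance_and_bound(and2, or2, 2))           # mixed pair
```

For the single-question rule the two sides coincide at 0.25. For AND with AND and for OR with OR the covariance is 0.1875 against a bound of 0.125, as the inequality requires. For the mixed pair AND with OR the covariance is only 0.0625 while the right-hand side is 0.125, so the bound does not hold there, which illustrates why the matching structural assumption is needed.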

More results:

- The inequality also holds for real‑valued functions (not just 0/1 rules) by replacing ordinary influences with L1‑influences, which measure each coordinate's effect through the L1 norm of the corresponding discrete derivative.

- They connect this to a famous conjecture in extremal set theory (Chvátal’s conjecture) through “antipodal” functions (a symmetric, mirror‑image condition). While the full conjecture remains open, their inequality matches what would be needed in this structured (sub/supermodular) case.

- Upper bounds: They show general inequalities that cap how large the correlation can be in terms of influences. They also give a refined “Talagrand‑type” bound that measures how much of the correlation comes from higher‑level interactions (pairs of questions or more), controlled by second‑order differences and a mild logarithmic factor.

Why this is important:

- It strengthens our understanding of when positive correlation must be large, not just positive.

- It identifies natural classes (submodular/supermodular) where clean, dimension‑free constants (like 1/4) work.

- It links tools from analysis, probability, and combinatorics around a central theme: how individual inputs and their pairwise interactions shape global behavior.

Implications and Potential Impact

- Theory of algorithms and learning: Submodular functions are common in optimization and machine learning (think “diminishing returns” in selecting diverse items). Knowing strong correlation bounds can help in designing and analyzing algorithms and learning methods that depend on such structure.

- Privacy and data science: Submodular functions appear in differential privacy and information measures; these inequalities could help reason about stability and sensitivity.

- Combinatorics and discrete probability: The results push forward a long‑standing program to quantify correlation beyond the classical “it’s positive” statement, and tie into well‑known conjectures.

In short, the paper shows that extra structure (submodularity or supermodularity) turns a hard, generally false hope into a true, clean inequality—with two different proofs and additional upper bounds—opening doors for both theory and applications.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of gaps and open directions left unresolved by the paper that future work could address:

- Extension beyond the uniform product measure: all results are proved under the uniform measure on the hypercube. Can the “dream inequality” and the semigroup-based Level-≥2 representation be extended to p-biased product measures or more general product distributions?

- Dependence structures and FKG settings: does the approach (especially the Level-≥2 semigroup identity and the sign-based positivity argument) extend to positively associated measures (e.g., log-supermodular/FKG measures) beyond the independent product case?

- Mixed (super/sub)modularity pairs: Theorem 1 requires both functions to be supermodular or both to be submodular. Is there any nontrivial lower bound when one function is submodular and the other is supermodular, or for broader classes with only partial sign alignment of second differences?

- Necessity of structural assumptions: to what extent are (super/sub)modularity assumptions necessary for the positivity of the Level-≥2 cross-term? Can one characterize minimal conditions on the second differences that ensure covariance ≥ Level-1 cross term?

- Robustness to approximate structure: do the lower bounds persist (possibly with a slack factor) under “approximate super/submodularity,” e.g., when a function’s mixed second differences have small positive/negative parts or are controlled in an average sense?

- Tightness and extremal examples: is the constant 1/4 in the “dream inequality” optimal within the super/submodular class? Identify extremal pairs (f, g) achieving equality or showing that any improvement over 1/4 fails.

- Influence-based bounds beyond Boolean/increasing: the influence-based inequality in Theorem 1 relies on monotonicity and Boolean values. Can an influence-form lower bound be obtained for non-Boolean real-valued super/submodular functions without monotonicity?

- Coordinatewise (KMS-type) refinements in the structured regime: can the Keller–Mossel–Sen coordinatewise lower bound be strengthened or simplified under super/submodularity (e.g., removing or improving logarithmic losses)?

- Antipodal strengthening beyond structured regime: the paper verifies the Friedgut–Kahn–Kalai–Keller conjectural bound when both functions are super/submodular. Can the methods be pushed to obtain any progress for general increasing functions with one antipodal (toward Chvátal’s conjecture)?

- Gaussian and q-ary extensions: does the heat-semigroup representation via second differences and the associated positivity mechanism have analogs for Gaussian space (Ornstein–Uhlenbeck semigroup) or q-ary hypercubes, enabling similar correlation lower bounds under appropriate curvature/second-difference criteria?

- Removing sign-oscillation barriers: in Section “reverse hypercontractivity,” the authors note a sign-oscillation barrier that blocks black-box use of reverse hypercontractivity without second-difference sign information. Can one design sign-stabilized transforms or weighted semigroup kernels to circumvent this barrier for broader classes?

- Optimizing the strengthened lower bound with second differences: in inequality (main strong lower), the constant c(θ) and norm choices () are fixed. What is the optimal choice of θ (or a multi-parameter kernel) and norms to maximize the second-difference contribution?

- Sharpening the L1–L2 upper bounds: the Talagrand-type upper bound for Level-≥2 terms uses a one-dimensional kernel estimate and yields constants like 9/8. Can the kernel analysis be improved to get dimension-free and optimal constants, or remove the logarithmic factor in specific structured classes?

- Level-d generalizations and constants: the Level-d extension gives bounds with constants C_d. Are these constants optimal, and can one obtain structural conditions (e.g., higher-order super/submodularity) that yield stronger Level-d lower bounds?

- Characterization of pairs with zero Level-≥2 cross weight: the identity shows that if this cross weight is zero, all weighted second-difference contributions must vanish. Can one classify the pairs (f, g) for which covariance equals exactly the Level-1 term, and what structural consequences follow?

- Tradeoffs under restrictions and biased coordinates: the induction-by-restriction proof works under uniform marginals. What changes (and can be rescued) when coordinates have different biases or are coupled, and can one generalize the restriction step to non-uniform conditional expectations?

- Alternative difference operators: positivity assumptions on yield sufficient conditions for the lower bound. Are these conditions strictly stronger than supermodularity, and can one characterize the exact relationship between -positivity and -sign constraints?

- From lower bounds to learning/approximation: given known spectral concentration for submodular functions (low-degree approximability), can the new correlation lower bounds yield algorithmic or statistical consequences (e.g., improved testing or learning guarantees for submodular classes)?

- Beyond cross-total influence: the dream inequality pins covariance to the cross-total influence. Are there refined lower bounds that interpolate between Level-1 and Level-≥2 contributions (e.g., involving mixed norms of second differences) that capture intermediate regimes?

- Stability and noise robustness: how sensitive are the lower bounds to small random perturbations of f and g (e.g., noise stability)? Can one quantify a stability theorem where covariance lower bounds degrade gracefully under noise?

- Dimension dependence and scaling: can one obtain dimension-dependent refinements (or show dimension-free optimality) for the constants in the lower and upper bounds under super/submodularity?

- Extensions to self-bounding/XOS classes: submodular functions are contained in broader classes (self-bounding, XOS). Does the approach extend to these classes, and what additional assumptions are needed?

- Converse implications: if a function f satisfies a Talagrand-type correlation lower bound with a large constant against all increasing g in a given class, does this imply f must be (approximately) super/submodular? Develop converse characterizations via correlation profiles.

- Algorithmic verification and certificates: can one devise efficient tests or certificates (e.g., via sampling and finite differences) to verify that a given function satisfies the sufficient second-difference conditions guaranteeing the “dream inequality,” with error guarantees?

Practical Applications

Immediate Applications

The following applications can be deployed now, provided their assumptions match the setting (monotone submodular/supermodular functions on a product space, typically the hypercube with independent coordinates). They turn the paper’s correlation and influence bounds into concrete workflows and tools.

- Sector: software/ML engineering — model diagnostics and interaction analysis

- Use case: fast screening of feature interactions and task relatedness in multi-output systems whose outputs are monotone submodular (e.g., coverage, influence, facility-location–type scores).

- Workflow: compute per-coordinate discrete derivatives and L1/L2 influences; use the paper’s lower bound E[fg] − E[f]E[g] ≥ (1/4)∑Inf_i[f]·Inf_i[g] to guarantee positive correlation when influences align, and use the upper bounds to cap spurious correlations when monotonicity alone holds.

- Tools: a “covariance certificate” module that (i) estimates influences from data, (ii) verifies sub/supermodularity via second-order derivatives ∂_ij f having the right sign, and (iii) reports guaranteed correlation ranges.

- Assumptions/dependencies: outputs must be monotone submodular or supermodular; coordinates independent (uniform product measure or close); empirical estimation of influences must be reliable.

- Sector: optimization and operations research — multi-objective submodular decision-making

- Use case: combining two submodular objectives (e.g., sensor placement for coverage + diversity) with provable correlation guarantees to avoid redundant gains or to leverage synergy.

- Workflow: before running a greedy or continuous-relaxation algorithm, compute coordinatewise influences for each objective; if the cross-total-influence is large, expect stronger joint gains; if small, prioritize complementary objectives.

- Tools: pre-optimization “objective overlap analyzer” that uses influence products to predict joint behavior; a dashboard that flags coordinates with high cross-influence product to guide constraint design.

- Assumptions/dependencies: objectives must be submodular/supermodular; measurements/features behave approximately independently; discrete derivatives are computed on the optimization ground set.

- Sector: data science and A/B experimentation — property testing and validation for submodular models

- Use case: certify that learned or heuristically defined scoring functions are truly submodular/supermodular and monotone, using derivative-based tests; reject models that fail the correlation lower bound when influence profiles suggest they should pass.

- Workflow: sample points; estimate first- and second-order discrete derivatives; test sign conditions on ∂_ij f; compare empirical covariance to the bound; use violations to trigger model repair (e.g., clipping or regularizing second-order terms).

- Tools: “submodularity tester” and “correlation bound validator” libraries that integrate with scikit-learn or PyTorch.

- Assumptions/dependencies: sufficient samples for stable derivative estimates; the domain reasonably modeled as a product space; monotonicity enforced or checked.

- Sector: reliability engineering and network design

- Use case: monotone submodular reliability metrics (e.g., coverage, connectivity proxies) for two subsystems; use the bound to quantify minimal positive dependence from shared vulnerable components (high-influence coordinates).

- Workflow: compute influences of components on each reliability metric; the 1/4 cross-influence lower bound quantifies unavoidable correlation; use it to design redundancy that targets high cross-influence components first.

- Tools: reliability correlation analyzer that ranks components by cross-influence products and estimates guaranteed covariance.

- Assumptions/dependencies: system metrics monotone and submodular; components’ states near independent; binary approximation of component states.

- Sector: robotics and sensing — sensor placement and active learning

- Use case: planning with multiple monotone submodular objectives (coverage vs. detection likelihood). The bound identifies where objectives overlap structurally, guiding selection to maximize complementary gains.

- Workflow: compute discrete derivatives per sensor-feature; prioritize sensors with low cross-influence products to diversify gains; use upper/lower bounds to predict achievable joint performance.

- Tools: planning assistant that augments standard greedy selection with cross-influence diagnostics.

- Assumptions/dependencies: objectives submodular; independence assumptions approximately hold.

- Sector: marketing and influence maximization

- Use case: two monotone submodular campaign objectives (reach vs. engagement); use bounds to quantify guaranteed covariance when audiences/features have aligned influence.

- Workflow: estimate feature influences on each objective; apply the lower bound to identify audience segments with provably synergistic impact; use upper bounds to avoid overestimating cross-effects when monotonicity is present but submodularity is uncertain.

- Tools: audience overlap estimator based on discrete derivative analytics.

- Assumptions/dependencies: objectives modeled as submodular functions of targeted features; feature independence approximations hold.

- Sector: education and assessment design

- Use case: design test forms with coverage-type submodular objectives (skills coverage and fairness); use correlation bounds to quantify overlap across two forms or two scoring rules.

- Workflow: compute coordinate influences (skills/items) on each score; use cross-influence lower bound to quantify minimum overlap; adjust item pools to achieve desired correlation level.

- Tools: assessment planner with influence and covariance dashboards.

- Assumptions/dependencies: scoring rules monotone and submodular; item effects approximately independent.
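Several of the workflows above come down to sampling points and testing the sign of the second-order derivatives ∂_ij f. A minimal sketch of such a sampled check follows. The function names, the sample count, and the toy coverage objective are assumptions made for illustration. A sampled test can only expose violations; it does not certify that a function is submodular or supermodular.

```python
import random

def sampled_second_difference_range(f, n, samples=2000, seed=0):
    """Return (min, max) of the mixed second difference over random x and pairs i != j."""
    rng = random.Random(seed)
    lo, hi = float("inf"), float("-inf")
    for _ in range(samples):
        x = [rng.randint(0, 1) for _ in range(n)]
        i, j = rng.sample(range(n), 2)

        def at(a, b):
            # Evaluate f with coordinates i and j forced to a and b.
            y = list(x)
            y[i], y[j] = a, b
            return f(tuple(y))

        d = at(1, 1) - at(1, 0) - at(0, 1) + at(0, 0)
        lo, hi = min(lo, d), max(hi, d)
    return lo, hi

def verdict(lo, hi):
    if hi <= 0:
        return "no submodularity violation found"
    if lo >= 0:
        return "no supermodularity violation found"
    return "mixed signs: neither submodular nor supermodular"

# Toy coverage objective (hypothetical data): number of items covered by the chosen sets.
SETS = [{1, 2}, {2, 3}, {3, 4}, {4, 5, 6}]

def coverage(x):
    return len(set().union(*(s for s, bit in zip(SETS, x) if bit)))

def majority3(x):
    return int(sum(x) >= 2)

print(verdict(*sampled_second_difference_range(coverage, 4)))
print(verdict(*sampled_second_difference_range(majority3, 3)))
```

Coverage functions have nonpositive mixed second differences, so the first call reports no submodularity violation, while majority shows both signs. In a real pipeline the sample count would have to be chosen against the dimension and the tolerated error, which this sketch does not address.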

Long-Term Applications

The following applications require further research, scaling, or integration to become robust in practice.

- Sector: differential privacy for combinatorial queries

- Use case: tighter sensitivity and composition analyses for releasing multiple monotone submodular statistics under privacy budgets, using covariance bounds tied to influences.

- Potential product: a privacy accountant that exploits the link between cross-influences and pairwise covariance to reduce worst-case composition penalties for submodular queries.

- Dependencies: formal privacy analyses under product distributions with approximate independence; robust estimation of influences under privatization noise; extension beyond uniform measures.

- Sector: ML model regularization and interpretability

- Use case: submodularity-aware regularizers that penalize sign violations of second-order discrete derivatives (∂_ij f), encouraging models with controllable higher-order interactions and predictable cross-task covariance.

- Potential product: plug-in regularizer for gradient-boosted trees or discrete neural architectures that enforces approximate submodularity and uses the paper’s Level-≥2 representation to control interactions.

- Dependencies: scalable estimation of discrete derivatives for high-dimensional inputs; surrogate losses for non-differentiable discrete operators; empirical validation across domains.

- Sector: multi-objective algorithm design with theoretical performance guarantees

- Use case: provably better joint approximation bounds when optimizing multiple monotone submodular objectives under known cross-influence structure; derive new bicriteria guarantees that embed the 1/4 cross-influence lower bound.

- Potential product: multi-objective greedy/continuous relaxations with performance guarantees parameterized by influence profiles.

- Dependencies: new analysis frameworks that merge correlation bounds with standard submodular optimization theory; benchmarking across large-scale instances.

- Sector: robust property testing and certification at scale

- Use case: streaming and distributed testers for monotone submodularity/supermodularity using second-order derivative sign checks and semigroup-inspired smoothing, with statistical guarantees about false positives/negatives.

- Potential product: cloud service that certifies submodularity of learned objectives and flags deviations that materially impact correlation bounds.

- Dependencies: scalable sampling schemes; robust estimators for ∂_ij f in noisy environments; extensions to non-product measures and dependent features.

- Sector: risk and finance — structural correlation control

- Use case: portfolio or risk aggregation where component risk maps are approximately monotone submodular; use influence-driven covariance bounds to design diversification strategies that target coordinates with high cross-influence.

- Potential product: risk analytics platform that reports guaranteed correlation floors/ceilings under structural assumptions, improving stress testing.

- Dependencies: validation that financial risk maps satisfy submodularity/monotonicity; handling dependence structures beyond product measures; mapping discrete influences to continuous risk drivers.

- Sector: statistical physics and networked systems

- Use case: adapting the heat-semigroup and reverse hypercontractivity approach to spin systems or dependent network states to derive improved correlation bounds under approximate sub/supermodularity.

- Potential product: analytical toolkit for bounding correlations in high-dimensional dependent systems with structured interactions.

- Dependencies: extensions from independent hypercube to Markov random fields; identifying classes where second-difference sign conditions hold approximately.

- Sector: automated model repair and synthesis

- Use case: automatically transform a learned scoring function into the closest monotone submodular approximation (in L1/L2) by enforcing ∂_ij-sign constraints and preserving Level-1 influence structure, guaranteeing predictable covariance behavior with other objectives.

- Potential product: “submodularity projector” that uses the paper’s Level-≥2 representation to suppress harmful higher-order terms while preserving performance.

- Dependencies: efficient projection algorithms; trade-off controls between accuracy and structural guarantees; domain-specific validation.

Cross-cutting assumptions and dependencies

- Monotonicity and (super/sub)modularity are crucial: the 1/4 lower bound is guaranteed only when both functions satisfy the same structural condition (all mixed second differences ∂_ij have the same sign).

- Independence/product-space modeling: results are derived under the uniform product measure; performance and guarantees may degrade under strong dependencies unless extended.

- Estimation accuracy: practical use requires stable estimation of discrete derivatives and influences from samples; small-sample bias or noise can invalidate certificates.

- Boolean or bounded outputs: some bounds assume Boolean outputs; real-valued analogs require using L1 influences and verifying monotonicity.

- Scalability: computing second-order derivatives over high dimensions can be expensive; approximations or sparsity assumptions may be needed in large systems.

Glossary

- Antipodal: Property of a Boolean function that is complemented under bitwise negation: g(x)=1−g(1−x). Example: "If are increasing and is antipodal, then"

- Antipodality: The condition that one function in a pair is antipodal, often enabling sharper bounds. Example: "improved the logarithmic factor under the antipodality of one function:"

- Bonami–Beckner hypercontractive inequality: Fundamental inequality showing how the noise operator contracts Lp norms on the hypercube. Example: "Finally, we recall the Bonami--Beckner hypercontractive inequality on the hypercube"

- Bonami–Beckner semigroup: The noise (heat) semigroup P_t acting on functions over the hypercube, used for smoothing and interpolation. Example: "based on the Bonami--Beckner semigroup on the hypercube"

- Borell's reverse hypercontractivity: Inequality reversing hypercontractive direction for certain p<1, used to lower bound correlations. Example: "Borell's reverse hypercontractivity to obtain the strengthened lower bound"

- Cross-total-influence: The sum of products of coordinate influences across two functions, I[f,g]=∑_i Inf_i[f]·Inf_i[g]. Example: "the cross-total-influence of "

- Diminishing returns: Equivalent characterization of submodularity stating marginal gains decrease as sets grow. Example: "(Diminishing returns) For all and ,"

- Discrete derivative: Difference operator on the hypercube measuring sensitivity to flipping a coordinate. Example: "The th (discrete) derivative operator maps the function"

- Edge-isoperimetric inequality: Bound relating the size of a set in the hypercube to its edge boundary, e.g., Harper’s inequality. Example: "Harper's edge-isoperimetric inequality"

- Fourier level decomposition: Grouping Fourier coefficients by subset size (level), e.g., Level-1 vs Level-≥2 contributions. Example: "isolating Level- Fourier weight"

- Fourier–Walsh expansion: Representation of functions on the hypercube in the orthonormal basis of parity characters. Example: "we use the Fourier--Walsh expansion on "

- Gaussian counterpart: A continuous (Gaussian) analog used to derive or compare discrete inequalities. Example: "The proof proceeds via a Gaussian counterpart,"

- Halfspace: Boolean function given by a thresholded linear form; used in tightness examples. Example: "halfspaces and their duals"

- Harris–Kleitman correlation inequality: Classical inequality asserting nonnegative correlation for increasing Boolean functions. Example: "Harris--Kleitman correlation inequality"

- Heat semigroup: The smoothing operator P_t on the hypercube that dampens higher Fourier levels exponentially. Example: "We begin with a heat-semigroup identity"

- Influence: Probability that flipping a coordinate changes a Boolean function’s value; a measure of coordinate sensitivity. Example: "The (uniform) influence of coordinate on a Boolean function is"

- Junta: Function depending only on a small subset of coordinates. Example: "by juntas."

- L1-influence: Influence measured via the L1 norm of discrete derivatives for real-valued functions. Example: "the -influence "

- Martingale difference: Decomposition technique using conditional expectations to control covariance via coordinate increments. Example: "a martingale difference approach;"

- Noise operator: Another name for the heat semigroup on the hypercube that introduces random noise to inputs. Example: "the heat semigroup/noise operator,"

- Noise sensitivity: Measure of how likely a function’s value changes under small random perturbations of input bits. Example: "the noise sensitivity of submodular functions,"

- Poincaré inequality: Inequality relating variance (or covariance) to sums of coordinate influences. Example: "two-function version of the Poincaré inequality."

- Quadratic covariation: Stochastic process notion capturing cumulative product of increments, used in Gaussian proofs. Example: "representing the correlation as a quadratic covariation of a suitable stochastic process"

- Reverse isoperimetry: Inequalities (e.g., Borell’s) giving lower bounds in Gaussian/discrete settings complementary to isoperimetry. Example: "Borell's reverse isoperimetry,"

- Second-order discrete derivative: Mixed discrete derivative ∂ij capturing pairwise interaction curvature; characterizes (sub/super)modularity. Example: "defining the second discrete derivative"

- Semigroup interpolation: Technique that proves inequalities by interpolating along the noise semigroup rather than inducting on coordinates. Example: "provided a semigroup interpolation proof"

- Self-bounding functions: Class where a function’s value bounds the sum of its marginal contributions; includes submodular and XOS. Example: "self-bounding functions (including submodular and XOS functions)"

- Submodular: Function with decreasing marginal returns; equivalently, all mixed second differences nonpositive. Example: "A function is submodular if"

- Supermodular: Function with increasing marginal returns; equivalently, all mixed second differences nonnegative. Example: "and supermodular if the reverse inequality holds for all ."

- Talagrand L1–L2 upper bound: Mixed-norm bound controlling high-level Fourier contributions via the L1 and L2 norms of second differences. Example: "[Talagrand --type upper bound]"

- Talagrand-type correlation inequality: Lower bound on covariance in terms of influences capturing dependence on coordinates. Example: "Talagrand-type correlation inequality"

- Tribes: A canonical Boolean function family (ANDs of small ORs) used to demonstrate sharpness of bounds. Example: "Tribes and dual Tribes"

- XOS (fractionally subadditive): Valuation class expressible as a maximum of additive functions; closely related to submodularity. Example: "XOS (fractionally subadditive)"
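The heat semigroup and noise operator entries above can be made concrete on a small cube. The sketch below assumes the common convention that P_t keeps each input bit with probability (1 + e^{-t})/2 and flips it otherwise, so that a Fourier coefficient of level |S| is multiplied by e^{-t|S|}. The paper may scale t differently, and the function names are hypothetical.

```python
from itertools import product
from math import exp, prod

def noise_operator(f, n, t):
    """Return P_t f as a dict x -> E[f(y)], where y is a noisy copy of x."""
    rho = exp(-t)  # assumed time scaling: each bit is kept with probability (1 + rho) / 2
    pts = list(product((0, 1), repeat=n))

    def weight(x, y):
        # Probability of obtaining y from x under independent bit flips.
        return prod((1 + rho) / 2 if a == b else (1 - rho) / 2 for a, b in zip(x, y))

    return {x: sum(weight(x, y) * f(y) for y in pts) for x in pts}

def fourier_coefficient(values, S):
    """Coefficient against the character chi_S(x) = (-1)^(sum of x_i for i in S)."""
    return sum(v * (-1) ** sum(x[i] for i in S) for x, v in values.items()) / len(values)

n, t = 2, 0.5
and2 = lambda x: int(all(x))
original = {x: and2(x) for x in product((0, 1), repeat=n)}
smoothed = noise_operator(and2, n, t)

for S in [(), (0,), (1,), (0, 1)]:
    before = fourier_coefficient(original, S)
    after = fourier_coefficient(smoothed, S)
    print(S, before, after, exp(-t * len(S)) * before)
```

The last two printed columns agree up to rounding: the mean (level 0) is untouched, level-1 coefficients shrink by e^{-t}, and the level-2 coefficient by e^{-2t}, which is the exponential damping of higher levels described in the glossary.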
