- The paper presents a convergent, data-driven framework that approximates the Koopman operator’s spectral properties for nonlinear systems using methods like EDMD.

- It introduces robust error control techniques, including the systematic use of residuals and pseudospectra, to mitigate spectral pollution in mode selection.

- The work unifies finite-dimensional approximations, delay embedding, and SCI analysis to ensure reliable forecasting and computational rigor in complex dynamical systems.

Koopman Learning: Rigorous Data-Driven Spectral Analysis of Nonlinear Dynamical Systems

Introduction and Theoretical Framework

This paper provides a comprehensive and rigorous introduction to Koopman learning, focusing on data-driven spectral analysis and forecasting for nonlinear dynamical systems. The Koopman operator, a linear but infinite-dimensional operator acting on observables, enables the study of nonlinear dynamics through linear spectral theory. The central challenge addressed is the development of convergent, reliable numerical methods for approximating the Koopman operator and its spectral properties from finite snapshot data, with a particular emphasis on error control, convergence guarantees, and the handling of continuous spectra.

The setting assumes a discrete-time dynamical system $x_{n+1}=F(x_n)$ on a metric space $X$, with $F$ continuous and possibly nonlinear. Observations are available as snapshot pairs $(x^{(m)},y^{(m)})$ with $y^{(m)}=F(x^{(m)})$. The Koopman operator $K$ acts on observables $g:X\to\mathbb{C}$ via $[Kg](x)=g(F(x))$. The analysis is conducted in $L^2(X,\omega)$, where $\omega$ is a Borel measure. $K$ is assumed bounded but not compact, so the classical spectral theory of compact operators does not apply directly.
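As a minimal illustration of these definitions, the sketch below builds snapshot pairs for a toy map and checks numerically that the Koopman operator acts linearly on observables even though the map itself is nonlinear. The logistic map `F`, the two observables, and the helper name `koopman` are illustrative assumptions and do not come from the paper.

```python
import numpy as np

def koopman(g, F):
    """Return the observable Kg defined by [Kg](x) = g(F(x))."""
    return lambda x: g(F(x))

# Hypothetical nonlinear map (logistic map) used only for illustration.
F = lambda x: 4.0 * x * (1.0 - x)

# Snapshot pairs (x^(m), y^(m)) with y^(m) = F(x^(m)).
rng = np.random.default_rng(0)
x_snap = rng.uniform(0.0, 1.0, size=100)
y_snap = F(x_snap)

g, h = np.cos, np.square

# Evaluating Kg at the x-snapshots only requires g at the y-snapshots.
Kg_at_x = g(y_snap)

# Linearity: K(2g - 3h) = 2 Kg - 3 Kh, although F is nonlinear.
combo = koopman(lambda x: 2.0 * g(x) - 3.0 * h(x), F)(x_snap)
linear_ok = np.allclose(
    combo, 2.0 * koopman(g, F)(x_snap) - 3.0 * koopman(h, F)(x_snap)
)
```

The key practical point is the line computing `Kg_at_x`: snapshot data gives access to the action of the operator on any observable that can be evaluated at the sampled states.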

Finite-Dimensional Approximations and Data-Driven Methods

Matrix Compression and EDMD

Finite-dimensional approximations are constructed by projecting $K$ onto subspaces spanned by a dictionary $\mathcal{D}=\{\psi_1,\dots,\psi_N\}$. The resulting finite section $K_N$ is determined by minimizing the $L^2$ residuals of the projected action of $K$ on the dictionary elements. In practice, the Extended Dynamic Mode Decomposition (EDMD) is used, where the residuals are minimized over the available snapshot data, leading to a weighted least-squares problem whose solution is given by a pseudoinverse formula.
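The weighted least-squares problem can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not the paper's implementation: the function name `edmd`, the row-per-snapshot layout, the default uniform weights, and the toy map $F(x)=0.5x$ with a monomial dictionary are all chosen here for illustration.

```python
import numpy as np

def edmd(X, Y, dictionary, weights=None):
    """EDMD matrix K minimizing || sqrt(W) (Psi_X K - Psi_Y) ||_F.

    X, Y: arrays of shape (M, d) with Y[m] = F(X[m]).
    dictionary: maps an (M, d) array to an (M, N) array of dictionary values.
    weights: optional quadrature weights of length M (uniform if omitted).
    """
    PsiX, PsiY = dictionary(X), dictionary(Y)
    M = PsiX.shape[0]
    w = np.full(M, 1.0 / M) if weights is None else np.asarray(weights)
    sw = np.sqrt(w)[:, None]
    # Pseudoinverse solution of the weighted least-squares problem.
    return np.linalg.pinv(sw * PsiX) @ (sw * PsiY)

def monomials(X):
    """Illustrative dictionary {1, x, x^2} for a scalar state."""
    x = X[:, 0]
    return np.stack([np.ones_like(x), x, x**2], axis=1)

# Toy system F(x) = 0.5 x: the monomial dictionary spans an invariant
# subspace, so EDMD recovers the eigenvalues 1, 0.5, 0.25 exactly.
rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 1))
Y = 0.5 * X
K = edmd(X, Y, monomials)
eigs = np.sort(np.linalg.eigvals(K).real)
```

With this layout the columns of `K` hold the dictionary coefficients of the images $K\psi_j$. For dictionaries that are not invariant, the same formula still returns a matrix, which is why the residual checks discussed later matter.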

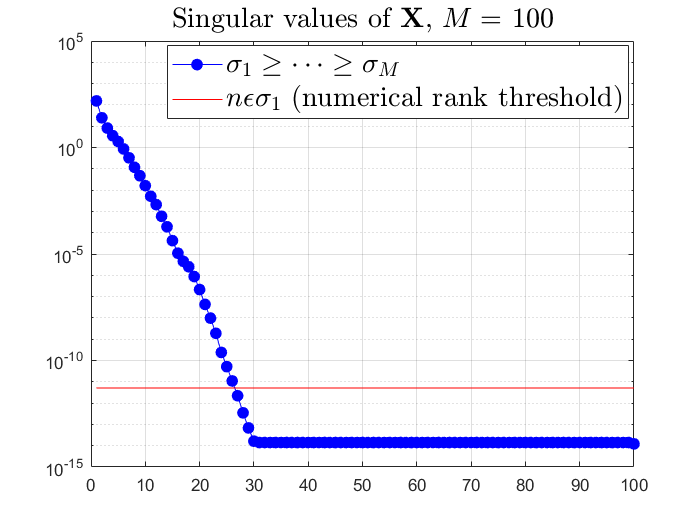

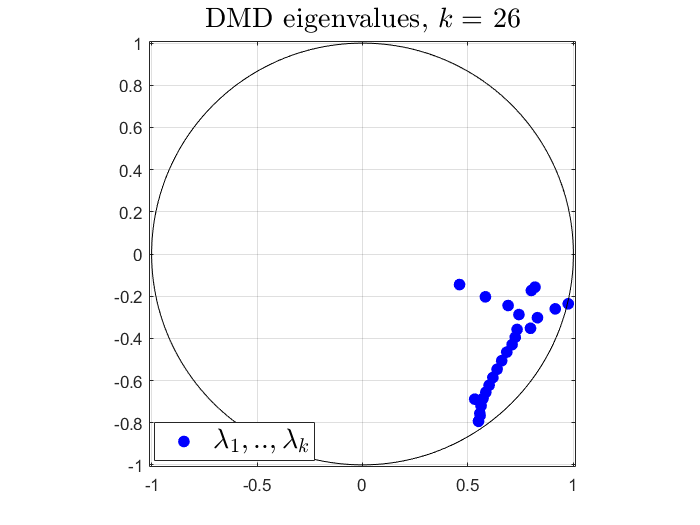

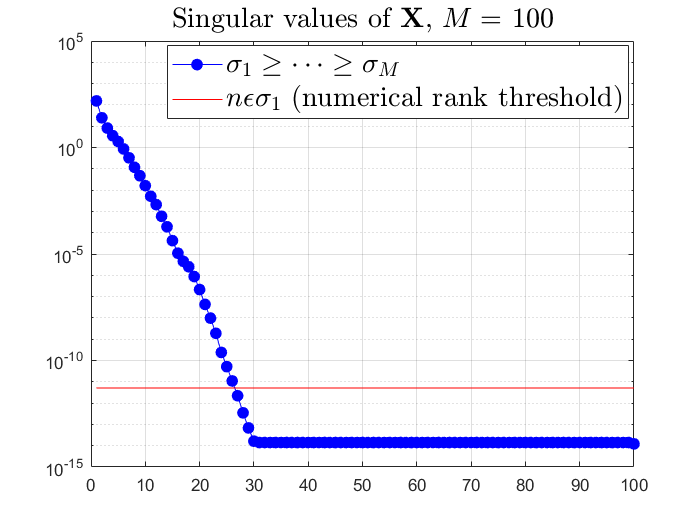

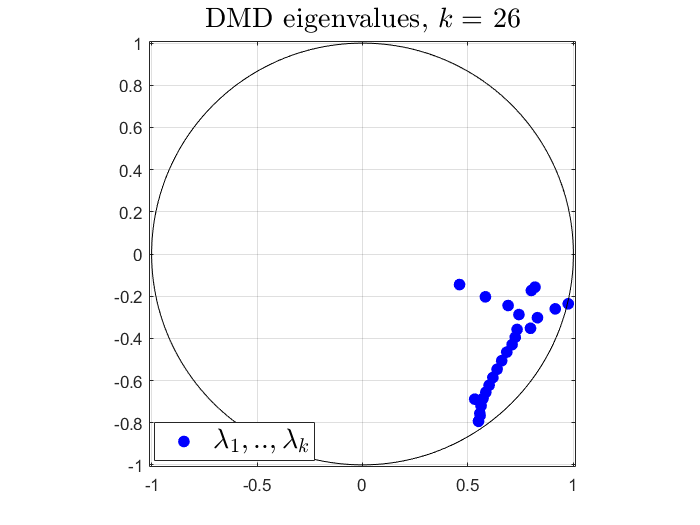

The SVD of the data matrix is used for rank-revealing truncation, and the Rayleigh quotient is employed to reduce the eigenvalue problem to the numerically significant subspace. The connection to Dynamic Mode Decomposition (DMD) is established, with DMD interpreted as the special case of EDMD whose dictionary consists of the linear observables given by the state coordinates.
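The SVD truncation and Rayleigh quotient step can be sketched as follows. This is a generic SVD-based DMD sketch under assumptions made here: snapshots are stored as columns, the truncation rank is supplied by the caller, and the low-rank linear test system is synthetic.

```python
import numpy as np

def dmd(X, Y, rank):
    """SVD-based DMD for snapshot matrices X, Y of shape (d, M), Y ~ A X.

    Returns the Ritz values, the corresponding modes, and all singular
    values of X (useful for choosing the truncation rank).
    """
    U, s_all, Vh = np.linalg.svd(X, full_matrices=False)
    U, s, Vh = U[:, :rank], s_all[:rank], Vh[:rank, :]
    # Rayleigh quotient U^* A U, computed from data as U^* Y V S^{-1}.
    A_tilde = (U.conj().T @ Y @ Vh.conj().T) / s
    lam, W = np.linalg.eig(A_tilde)
    modes = U @ W  # lift eigenvectors back to the state space
    return lam, modes, s_all

# Synthetic test: a 10-dimensional linear map acting on a 3-dimensional
# subspace with eigenvalues 0.9, 0.5 and -0.3.
rng = np.random.default_rng(1)
Q, _ = np.linalg.qr(rng.standard_normal((10, 3)))
A = Q @ np.diag([0.9, 0.5, -0.3]) @ Q.T
X = Q @ rng.standard_normal((3, 40))
Y = A @ X

lam, modes, s_all = dmd(X, Y, rank=3)
```

In this example the singular values of `X` drop to rounding level after the third one, which is the signal used to pick `rank=3`.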

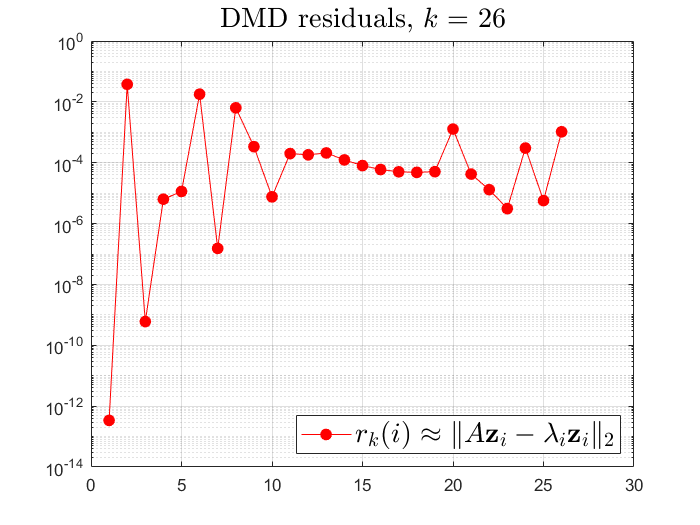

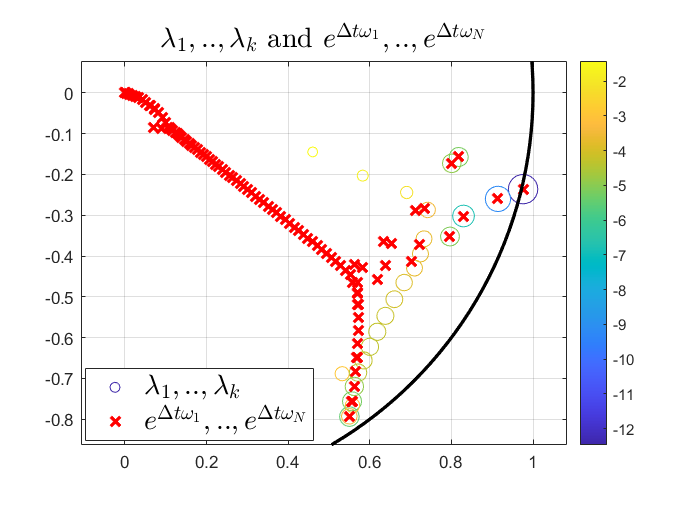

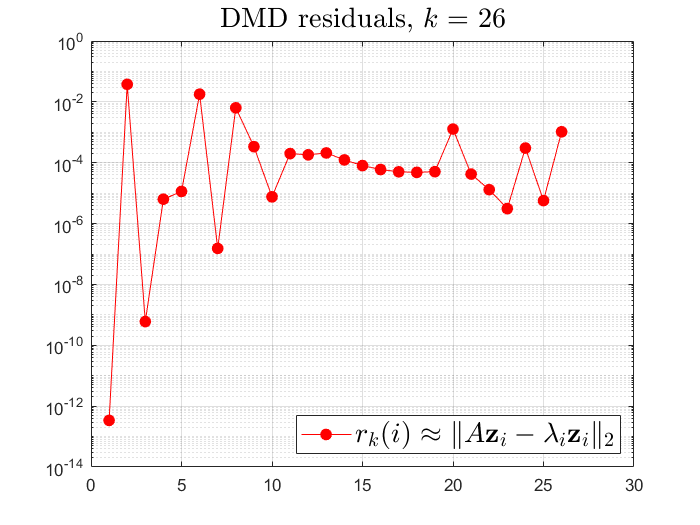

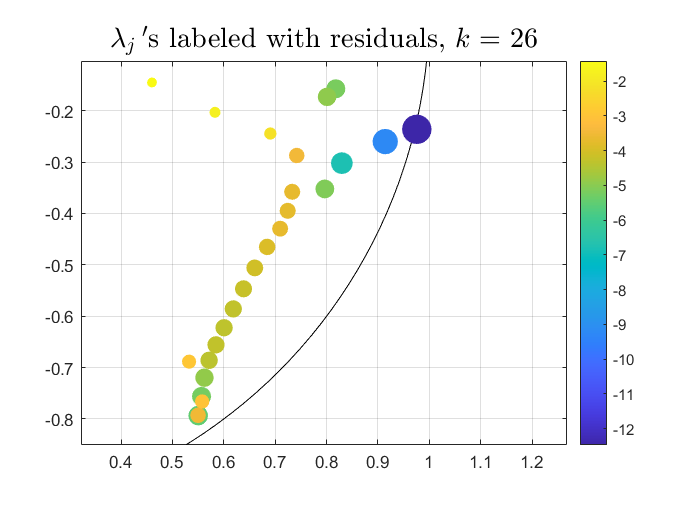

Figure 1: The singular values of X (left), DMD eigenvalues (middle), and DMD residuals (right) for a linearized Navier–Stokes benchmark.

Residuals and Mode Selection

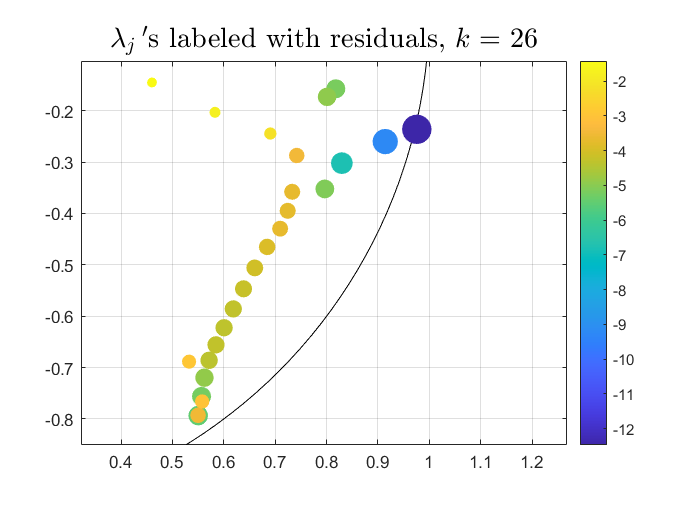

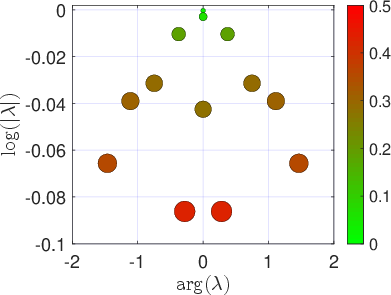

A key contribution is the systematic use of residuals to assess the quality of computed eigenpairs. The residual $\|K\phi_i-\lambda_i\phi_i\|$ is computed for each candidate eigenpair, and only those with small residuals are retained, mitigating spectral pollution. This approach is formalized in the ResDMD algorithm, which computes both finite- and infinite-dimensional residuals directly from data.
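A data-driven residual check of this kind can be sketched as below. The sketch evaluates the relative residual $\|\Psi_Y g-\lambda\Psi_X g\|/\|\Psi_X g\|$ directly on the snapshots with uniform weights, which is a simplification assumed here. The map `F`, the monomial dictionary, and the threshold `1e-6` are illustrative choices and not the paper's benchmark.

```python
import numpy as np

def monomials(x):
    """Illustrative dictionary {1, x, x^2}."""
    return np.stack([np.ones_like(x), x, x**2], axis=1)

def F(x):
    """Toy map for which the dictionary is NOT invariant."""
    return 0.5 * x + 0.2 * x**2

x = np.linspace(-1.0, 1.0, 201)
PsiX, PsiY = monomials(x), monomials(F(x))

# EDMD matrix and its candidate eigenpairs.
K = np.linalg.pinv(PsiX) @ PsiY
lam, G = np.linalg.eig(K)

def residual(lam_i, g_i):
    """Relative residual of the candidate eigenpair on the snapshot data."""
    num = np.linalg.norm(PsiY @ g_i - lam_i * (PsiX @ g_i))
    den = np.linalg.norm(PsiX @ g_i)
    return num / den

residuals = np.array([residual(lam[i], G[:, i]) for i in range(len(lam))])

# Keep only eigenpairs whose residual is below a chosen tolerance.
keep = residuals < 1e-6
```

Here only the constant eigenfunction with eigenvalue 1 passes the test. The other two eigenvalues of `K` come from the projection onto a non-invariant dictionary and carry residuals of order $10^{-2}$, so thresholding discards them.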

Figure 2: DMD eigenvalues with corresponding residuals (left) and comparison with true eigenvalues (right).

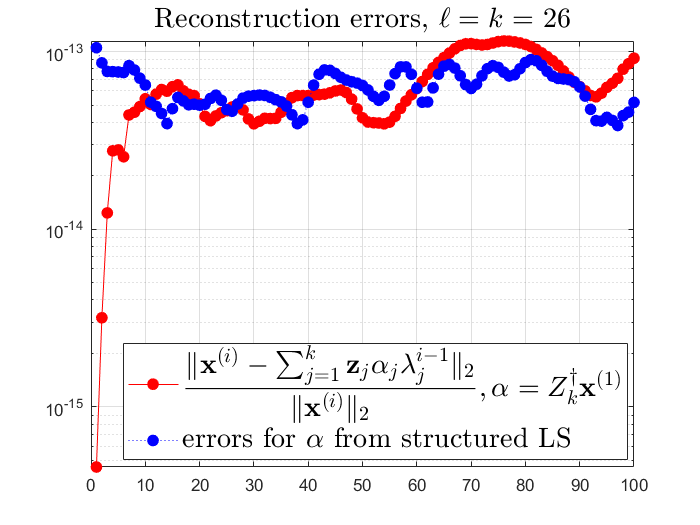

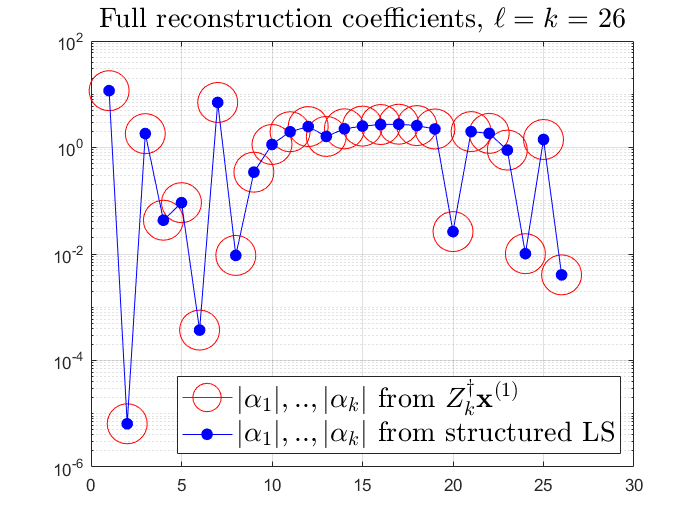

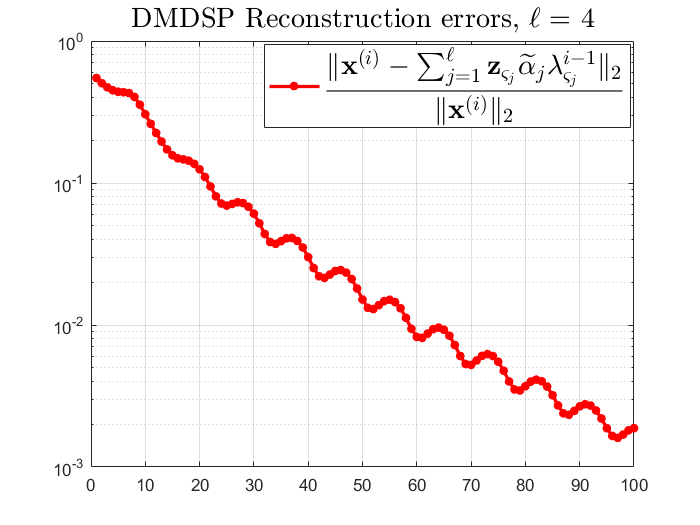

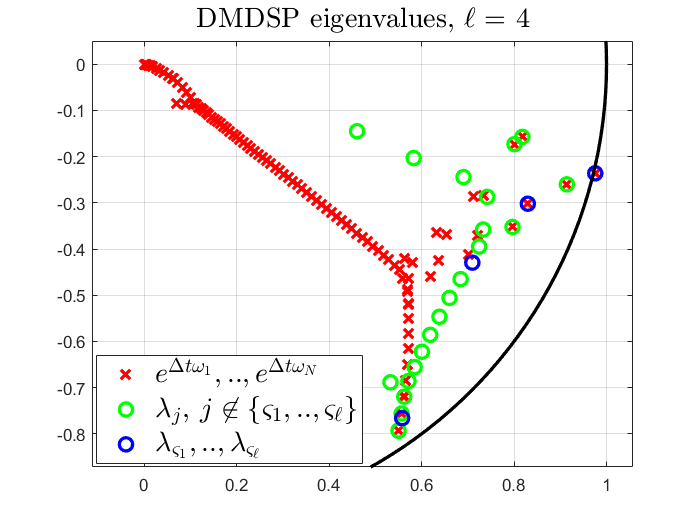

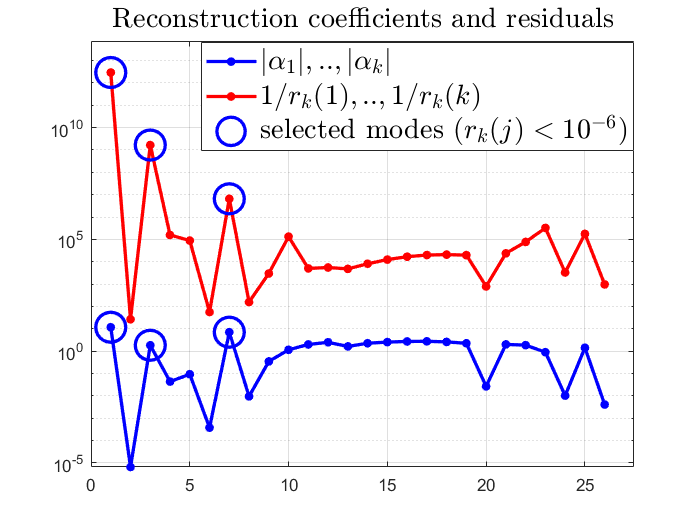

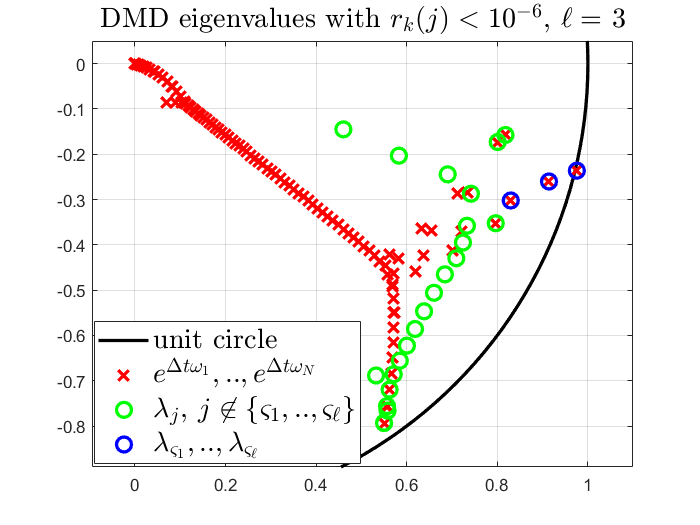

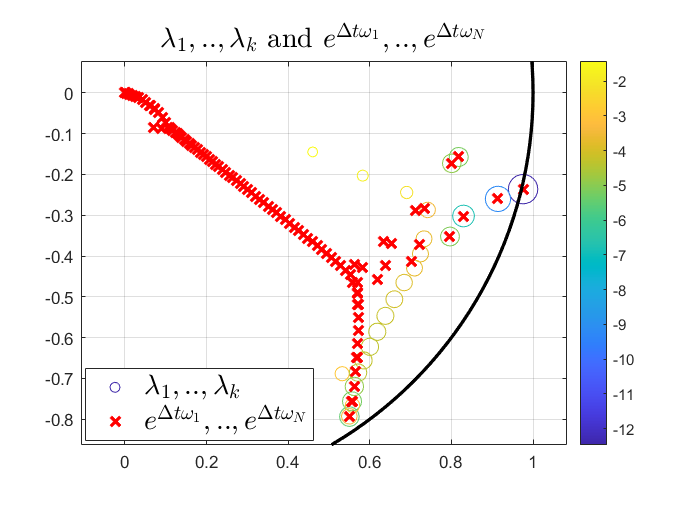

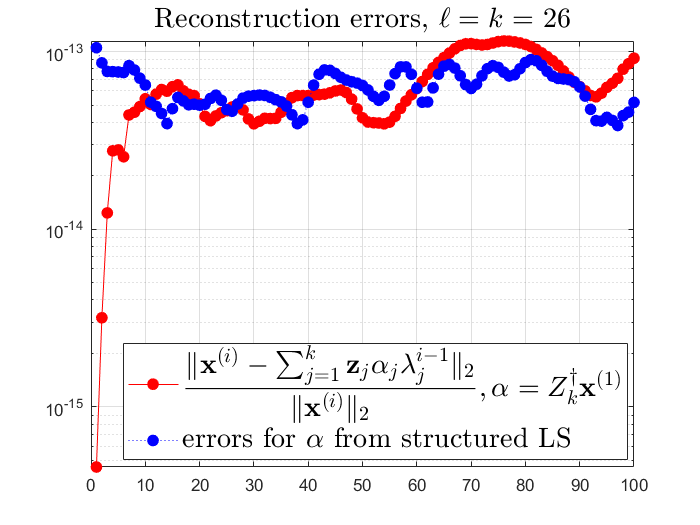

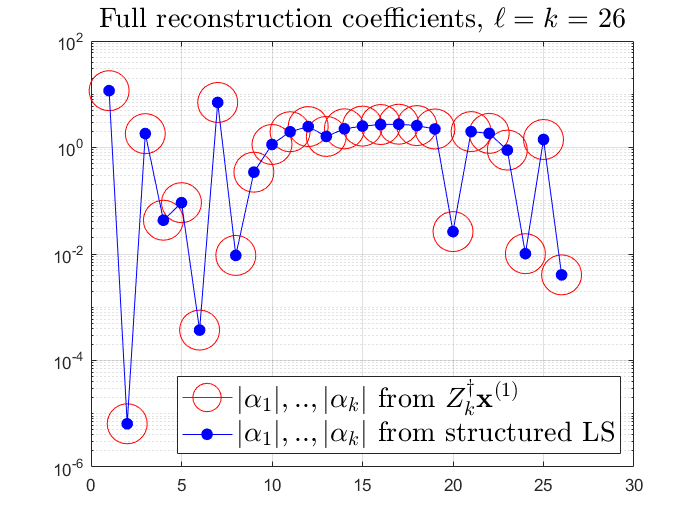

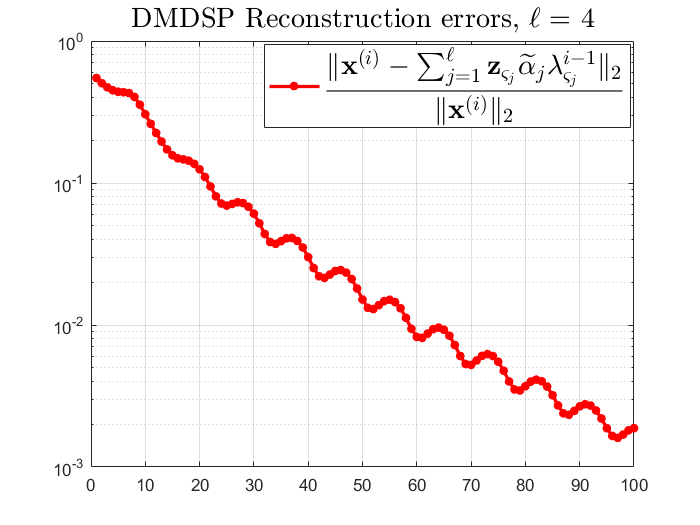

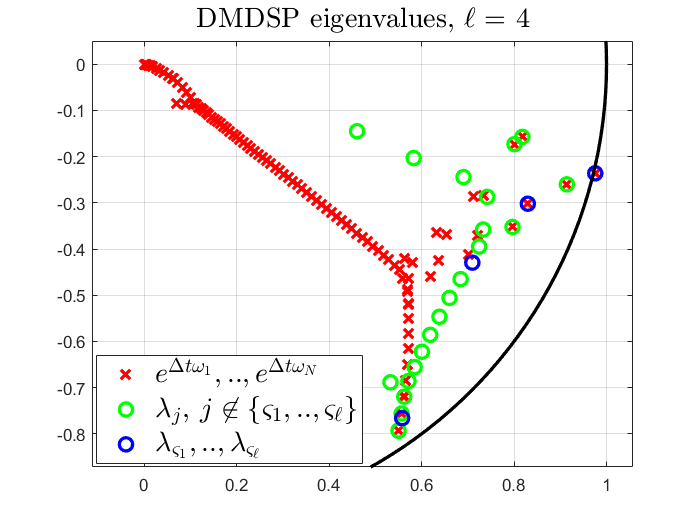

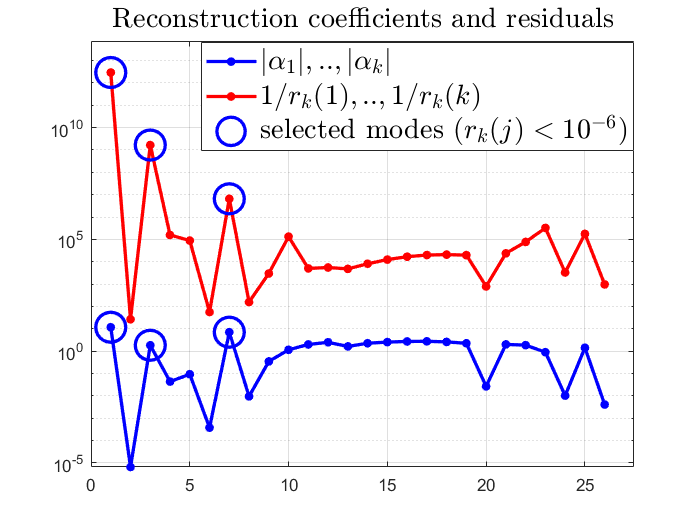

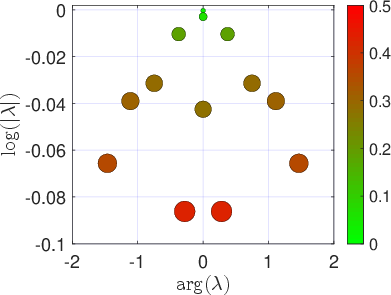

The relationship between the magnitude of reconstruction coefficients and residuals is highlighted, and mode selection strategies such as sparsity-promoting DMD (DMDSP) and residual thresholding are compared.

Figure 3: Reconstruction errors using all computed modes (left) and the moduli of reconstruction coefficients (right).

Figure 4: DMDSP reconstruction errors with ℓ=4 (left) and selected eigenvalues (right).

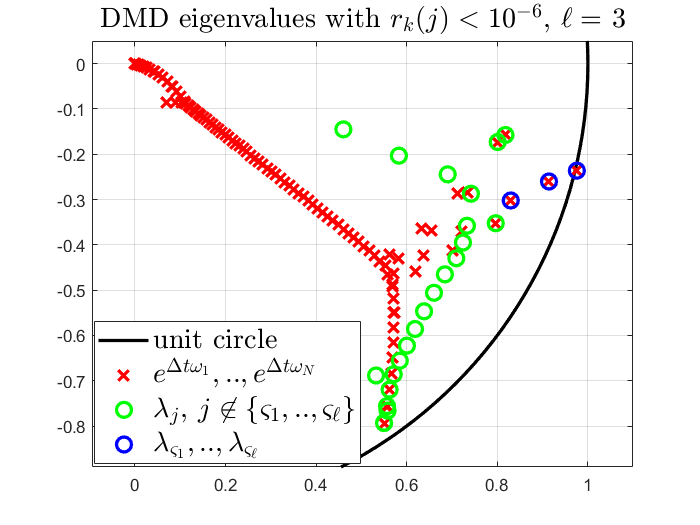

Figure 5: Correlation between ∣αj∣ and 1/rk(j) (left); DMD eigenvalues with residuals below $10^{-6}$ (right).

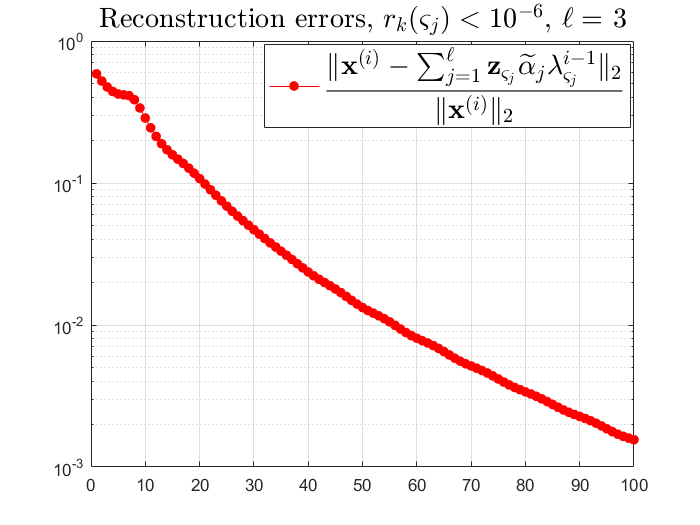

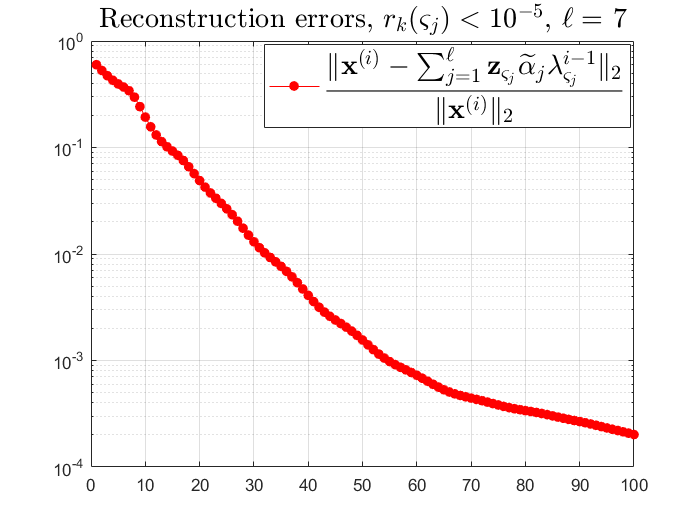

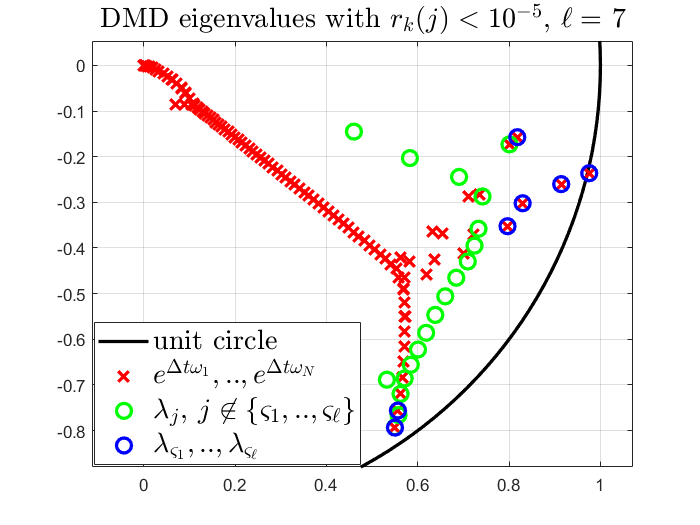

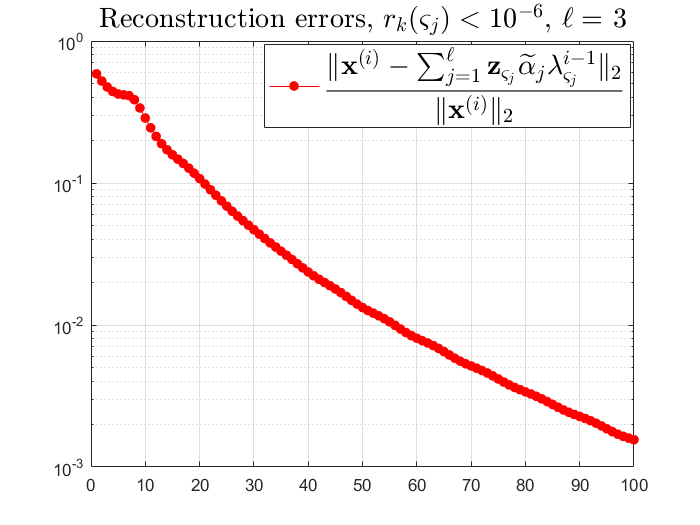

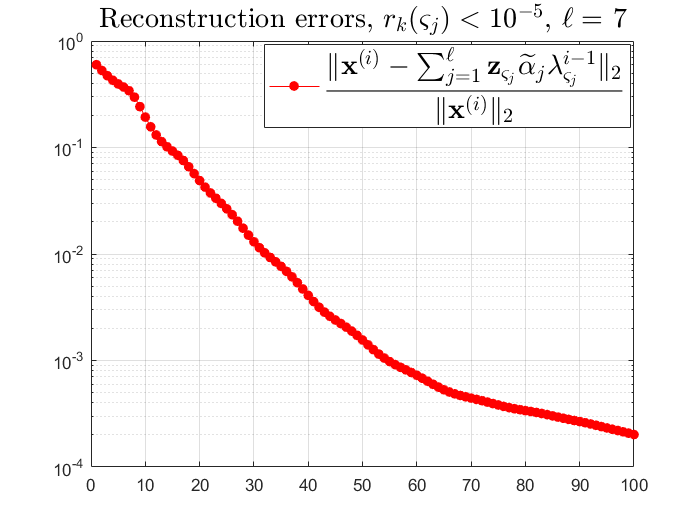

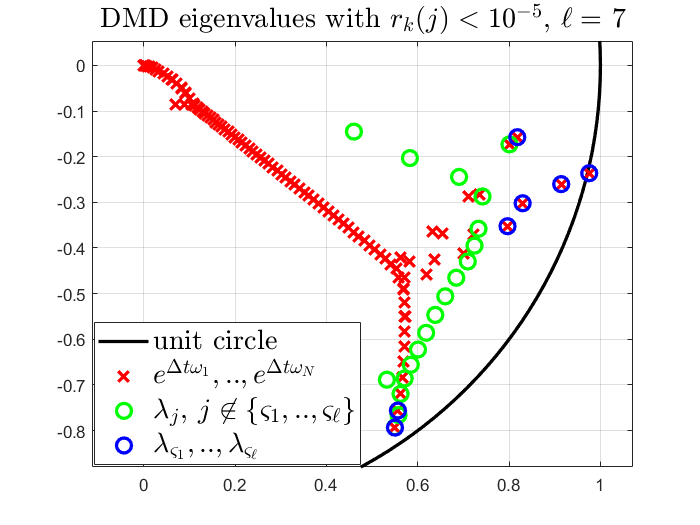

Figure 6: Reconstruction error using only modes with residuals below $10^{-6}$ (left: ℓ=3; middle/right: ℓ=7).

Error Control, Pseudospectra, and Convergence

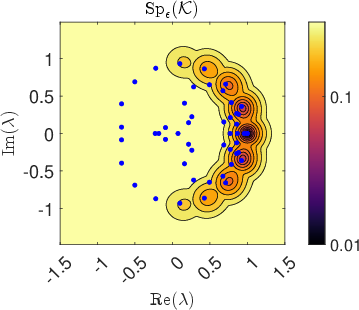

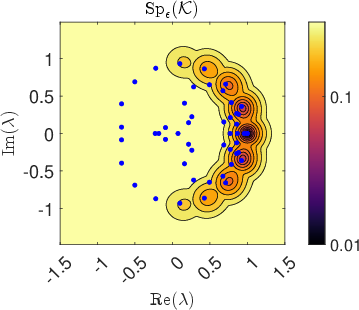

The paper provides a unified framework for error control via residuals, both in finite and infinite dimensions. The concept of pseudospectra is introduced to characterize the sensitivity of the spectrum to perturbations and to identify coherent structures in non-normal operators. The ResDMD algorithm is extended to compute pseudospectra: at each point z of a grid in the complex plane, the minimal residual over the dictionary span is obtained as the smallest singular value of a residual matrix, and the ε-pseudospectrum is approximated by the grid points where this value falls below ε.
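A grid-based pseudospectrum computation of this type can be sketched as follows. The sketch assumes uniform weights and uses a QR factorization of $\Psi_X$ so that the minimal relative residual at a point $z$ becomes an ordinary smallest singular value. The function name `tau`, the toy system $F(x)=0.5x$, the grid, and the value of `eps` are illustrative assumptions.

```python
import numpy as np

def monomials(x):
    """Illustrative dictionary {1, x, x^2}."""
    return np.stack([np.ones_like(x), x, x**2], axis=1)

x = np.linspace(-1.0, 1.0, 201)
PsiX, PsiY = monomials(x), monomials(0.5 * x)  # toy map F(x) = 0.5 x

# Whitening: PsiX = Q R, so ||PsiX g|| = ||h|| for h = R g.
Q, R = np.linalg.qr(PsiX)
B = PsiY @ np.linalg.inv(R)

def tau(z):
    """min over g of ||(PsiY - z PsiX) g|| / ||PsiX g||."""
    return np.linalg.svd(B - z * Q, compute_uv=False)[-1]

# Evaluate the minimal residual on a coarse grid in the complex plane.
re = np.linspace(-1.5, 1.5, 31)
im = np.linspace(-1.5, 1.5, 31)
tau_grid = np.array([[tau(a + 1j * b) for a in re] for b in im])

# Grid approximation of the eps-pseudospectrum.
eps = 0.05
in_pseudospectrum = tau_grid < eps
```

Because the dictionary is invariant in this toy case, `tau` vanishes at the eigenvalues 1, 0.5 and 0.25 and grows away from them. For large dictionaries a generalized eigenvalue formulation would be cheaper than an SVD per grid point.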

Forecast error bounds are derived, quantifying the error in one-step and multi-step predictions as a function of the projection and operator approximation errors. Convergence results are established: as the number of data points M→∞ and then the dictionary size N→∞, the computed residuals and pseudospectra converge to their infinite-dimensional counterparts, and spectral pollution is avoided by discarding high-residual modes.

Figure 7: EDMD eigenvalues and their residuals for the Duffing oscillator, illustrating spectral pollution.

Delay Embedding and Krylov Subspaces

Time-delay embedding (Hankel-DMD) is advocated as an effective dictionary choice, especially for high-dimensional or partially observed systems. Krylov subspaces generated by time-delayed observables provide intrinsic coordinates that approximate invariant subspaces. The convergence properties depend on whether the observable generates a finite-dimensional invariant subspace; otherwise, the computed eigenvalues cluster inside the unit circle and equidistribute as N→∞.
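The delay-embedding construction can be sketched on a scalar time series. This is a minimal sketch assuming a noise-free signal that generates a finite-dimensional invariant subspace (two cosines, hence four complex exponentials). The helper name `hankel`, the signal, and the number of delays are illustrative assumptions.

```python
import numpy as np

def hankel(series, n_delays):
    """Stack delayed copies of a scalar series into an (n_delays, M) matrix."""
    M = len(series) - n_delays + 1
    return np.stack([series[i:i + M] for i in range(n_delays)], axis=0)

# Scalar observable sampled along one trajectory.
n = np.arange(50)
g = np.cos(0.3 * n) + 0.5 * np.cos(1.1 * n)

# Delay-embedded snapshot pairs: each column is a window of 4 samples.
H = hankel(g, n_delays=4)
X, Y = H[:, :-1], H[:, 1:]

# Least-squares shift operator on the Krylov (delay) subspace.
A = Y @ np.linalg.pinv(X)
lam = np.linalg.eigvals(A)
angles = np.sort(np.angle(lam))
```

In this idealized case the computed eigenvalues lie on the unit circle at angles ±0.3 and ±1.1. When the observable does not generate a finite-dimensional invariant subspace, the eigenvalues behave as described in the paragraph above and should be screened with residuals.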

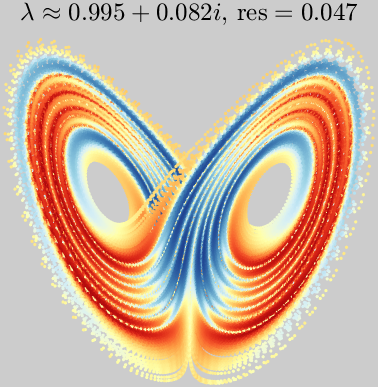

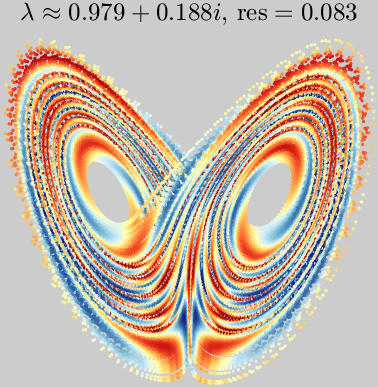

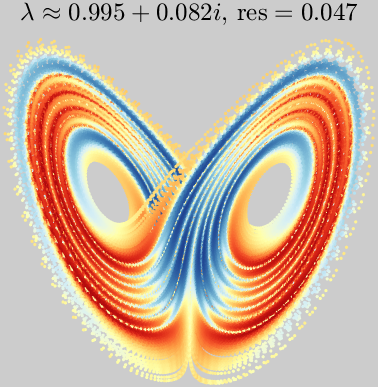

Figure 8: Pseudoeigenfunctions of the Lorenz system computed via Hankel-DMD, reflecting coherent temporal behavior.

Koopman Modes and Generalized Laplace Analysis

The paper presents an elementary proof of convergence for generalized Laplace analysis (GLA), which computes Koopman modes via filtered power iteration, even in the absence of spectral gaps. For spectral operators, Laplace averages converge to the projection onto the eigenspace associated with a given eigenvalue, enabling the computation of Koopman modes from data.
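The simplest instance of such a Laplace average, for an eigenvalue on the unit circle, can be sketched as follows. The sketch uses a circle rotation with a hand-built observable so that the exact answer is known. It does not implement the filtering or successive-subtraction steps needed for eigenvalues of smaller modulus. The rotation angle, observable, and function name are assumptions made here.

```python
import numpy as np

def laplace_average(values, lam):
    """(1/n) * sum_k lam^{-k} g(F^k(x)) for samples values[k] = g(F^k(x))."""
    k = np.arange(len(values), dtype=float)
    return np.mean(values * lam ** (-k))

# Circle rotation theta -> theta + omega with an irrational-like angle.
omega, theta0, n = np.sqrt(2.0), 0.4, 20000
theta = theta0 + omega * np.arange(n)

# Observable with components along three Koopman eigenfunctions
# (eigenvalues 1, exp(i omega), exp(3i omega)).
g_vals = 1.0 + 2.0 * np.exp(1j * theta) + 0.5 * np.exp(3j * theta)

# Project onto the eigenspace of lam = exp(i omega).
lam = np.exp(1j * omega)
mode_value = laplace_average(g_vals, lam)
expected = 2.0 * np.exp(1j * theta0)
```

The average isolates the component $2e^{i\theta_0}$, with the other components averaging out at rate $O(1/n)$ in this example.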

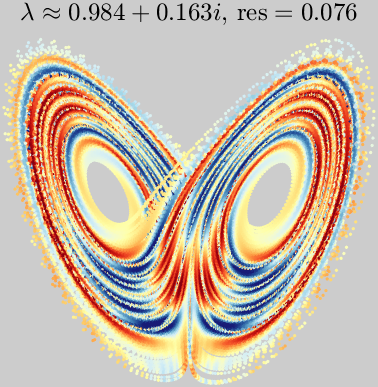

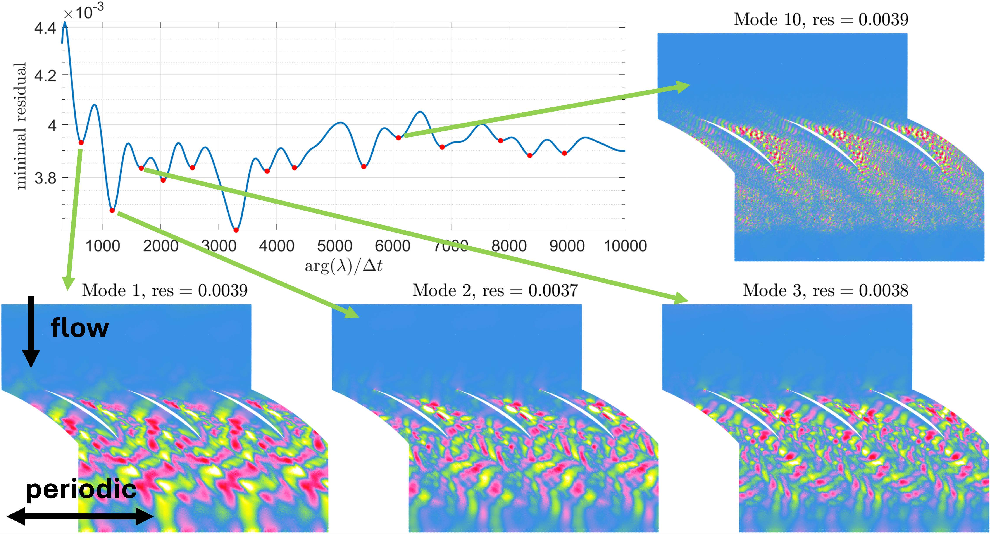

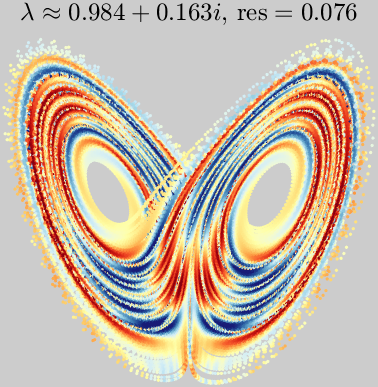

Figure 9: Koopman modes computed using generalized Laplace analysis for turbulent flow past a periodic cascade of airfoils.

Spectral Measures and Continuous Spectrum

Three principal families of algorithms for computing spectral measures of unitary Koopman operators are reviewed:

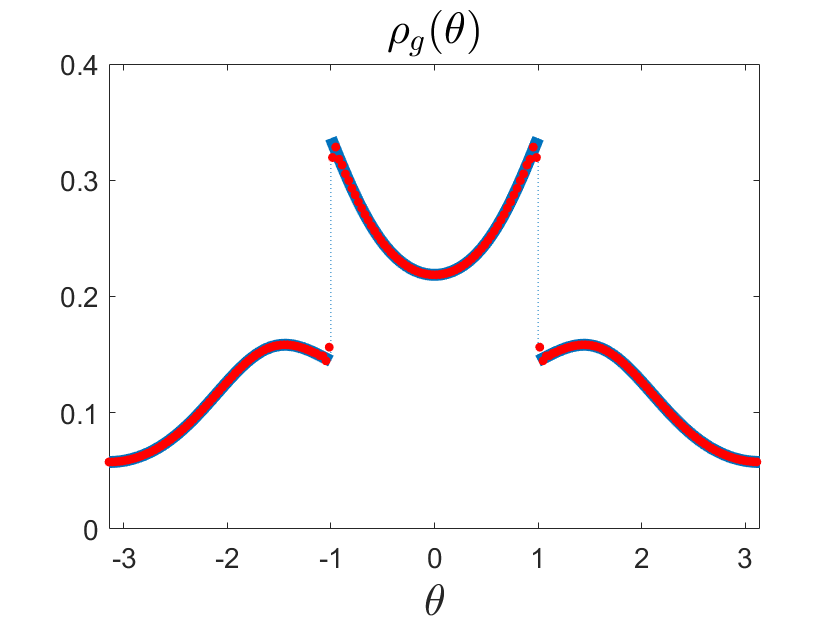

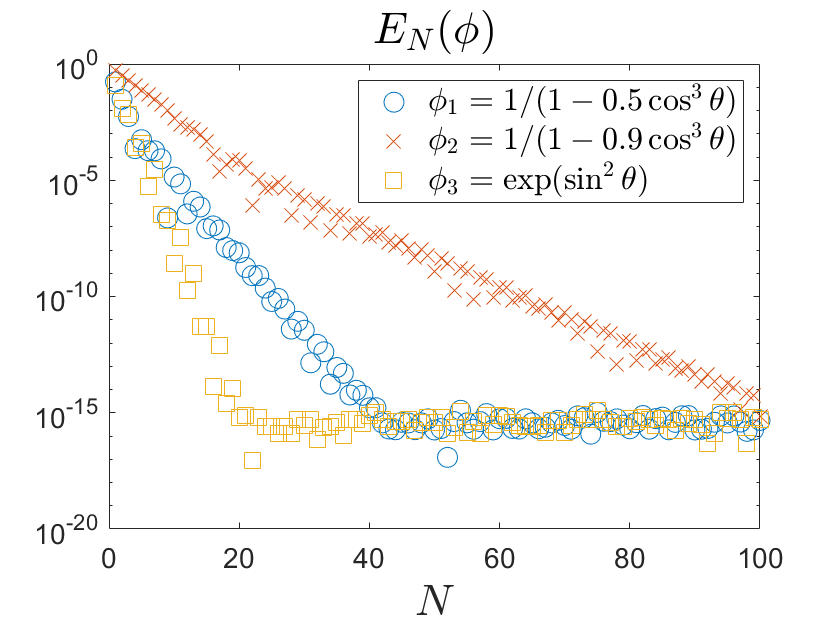

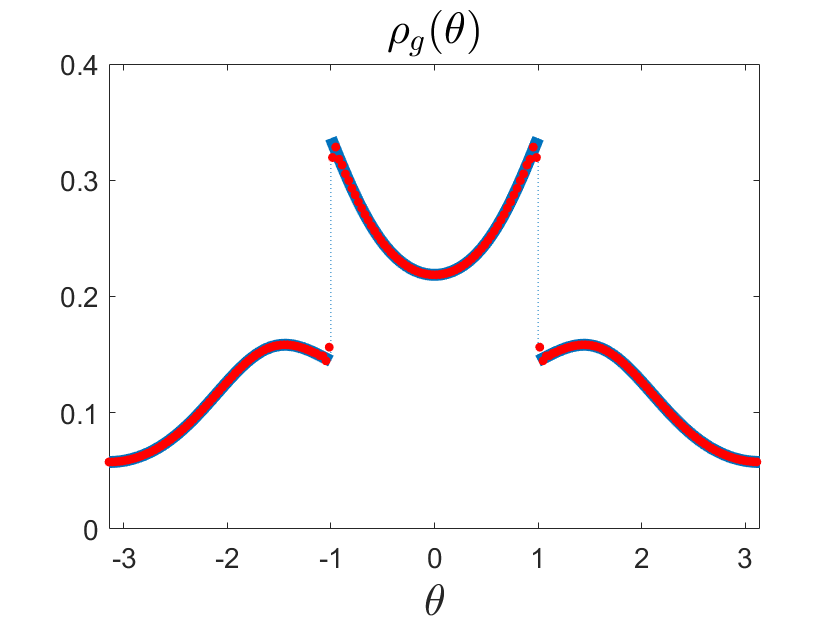

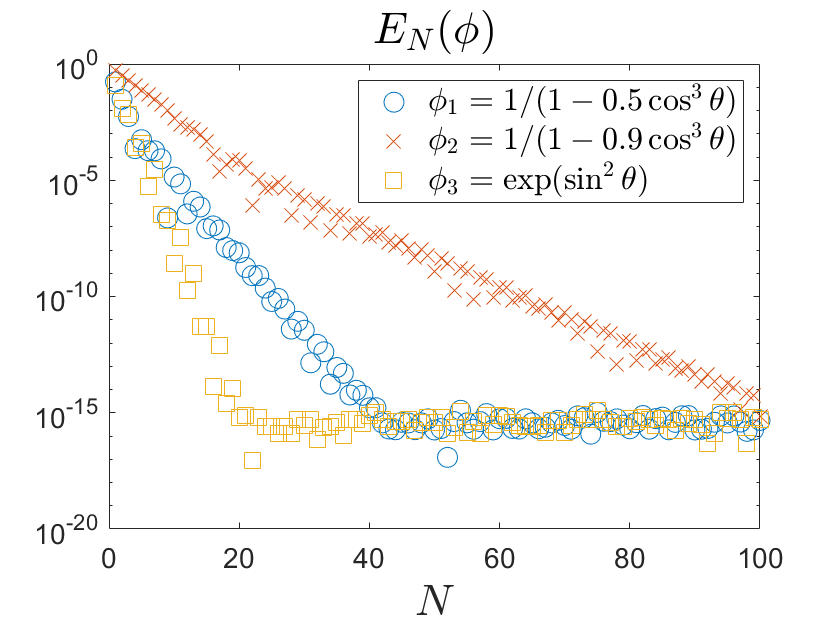

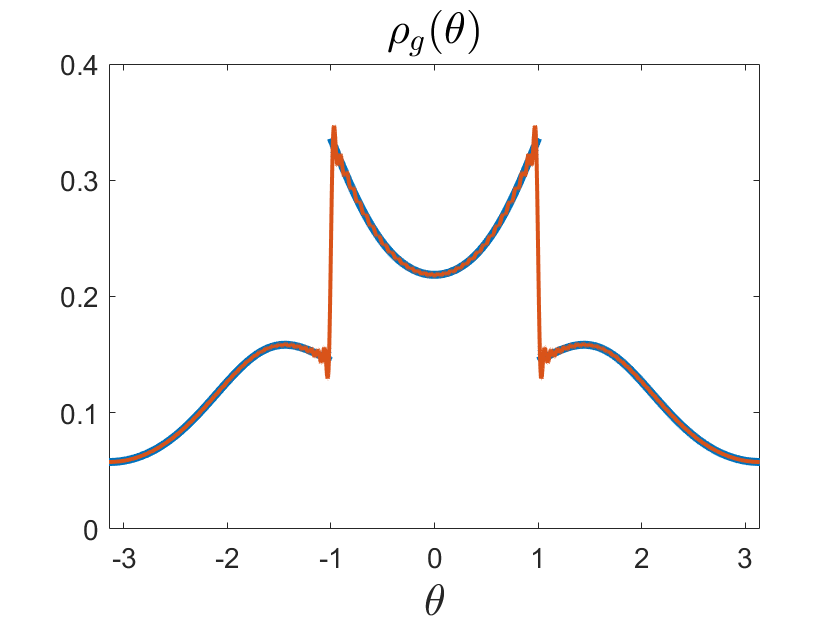

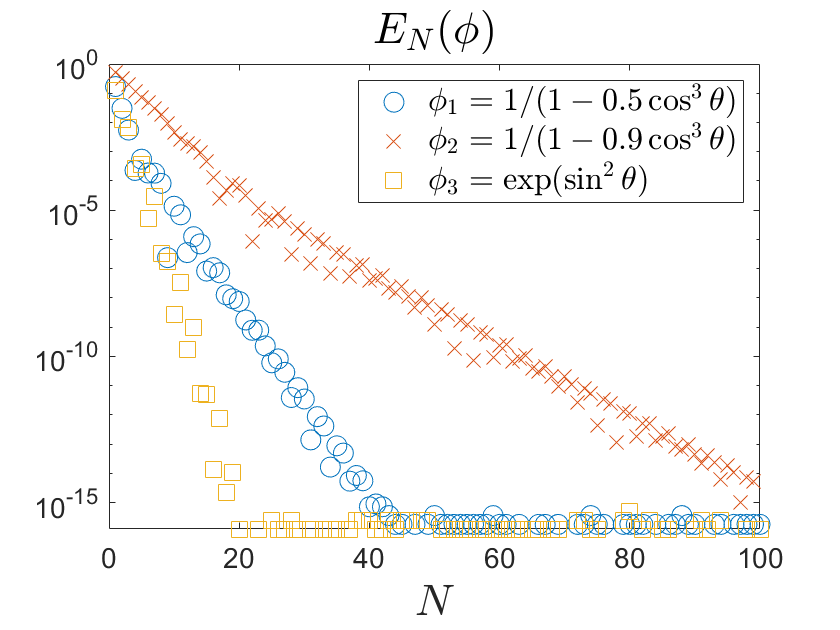

- Moment-Based Methods: Approximate the spectral measure from moments (Fourier coefficients) using interpolatory quadrature or truncated Fourier series. Convergence rates depend on the regularity of the test function and the summability of quadrature weights.

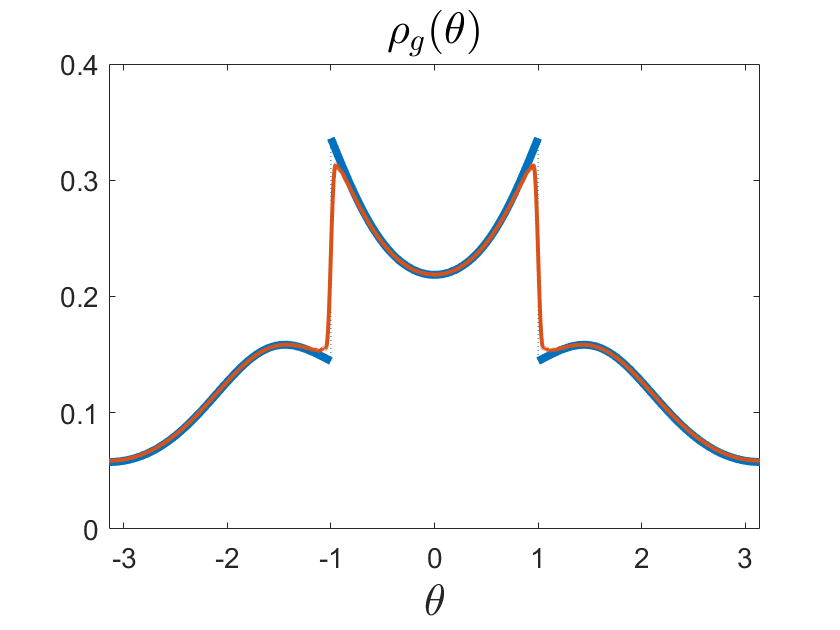

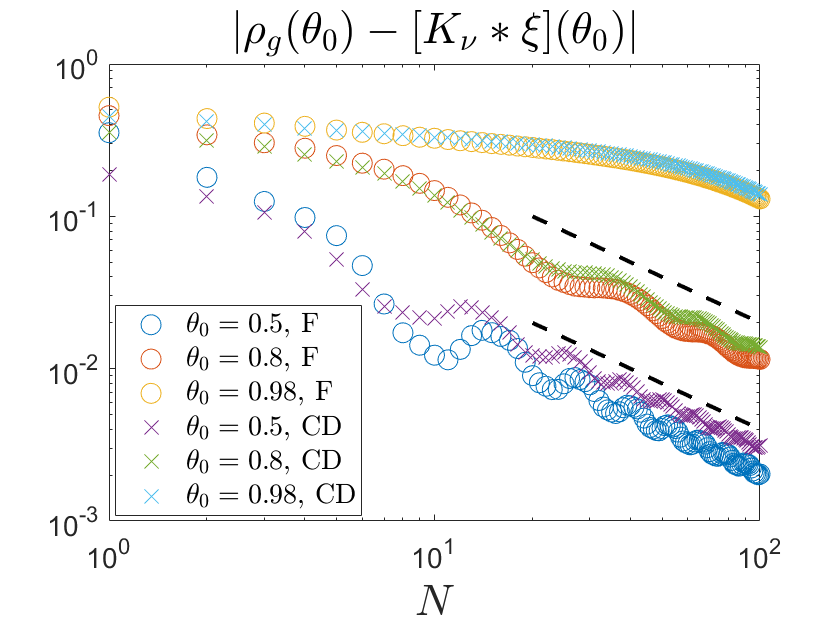

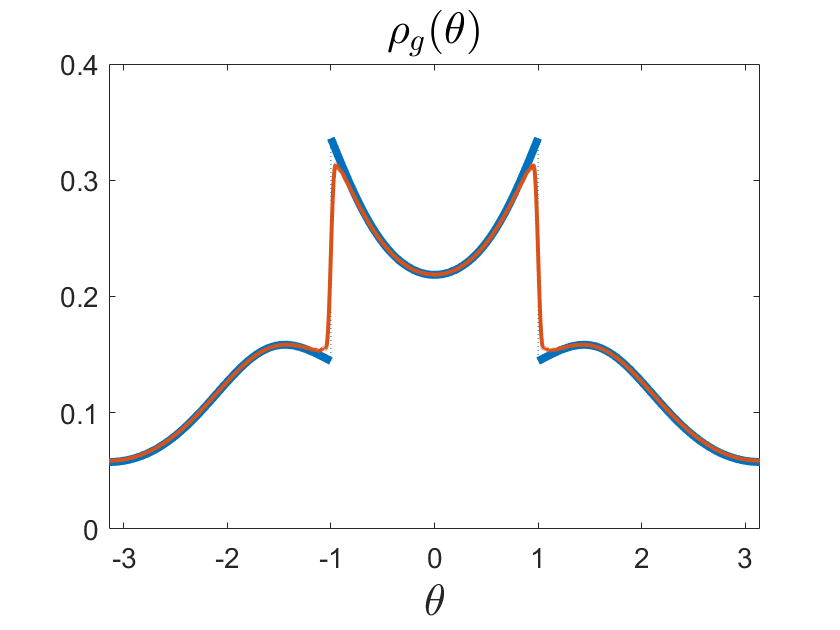

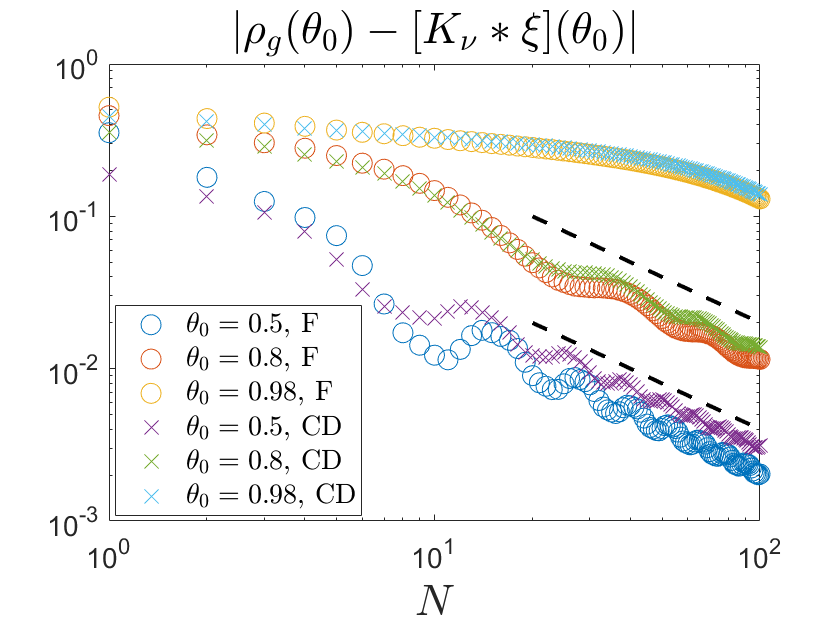

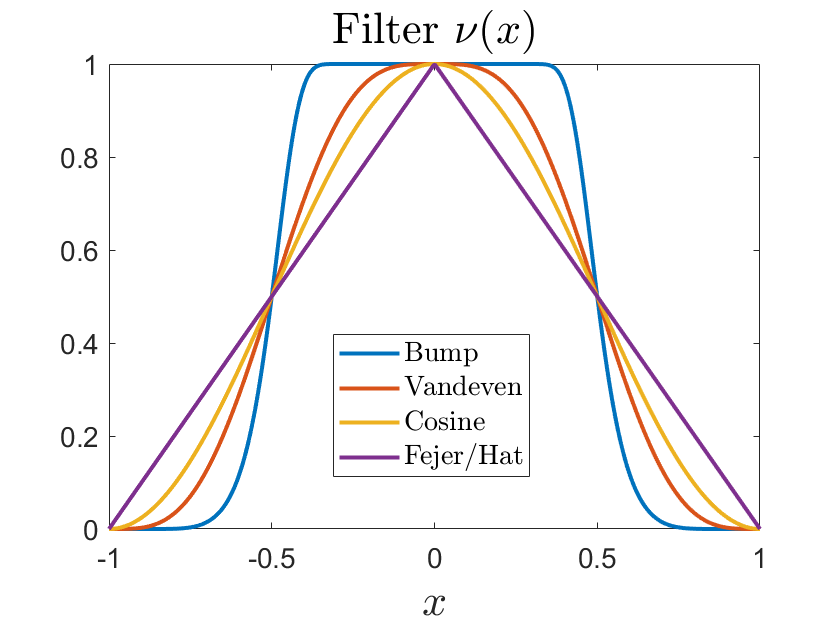

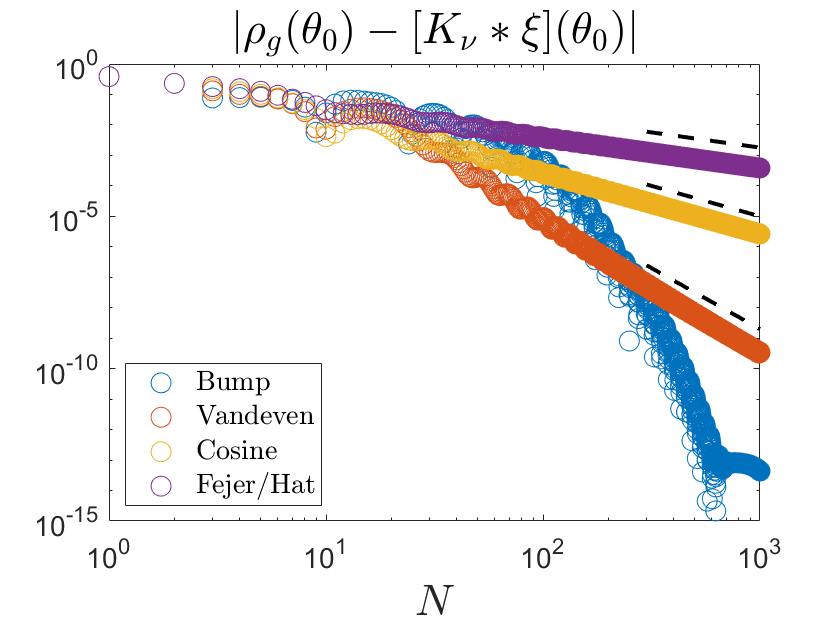

Figure 10: Density of the absolutely continuous measure (left) and weak convergence for test functions of varying regularity (right).

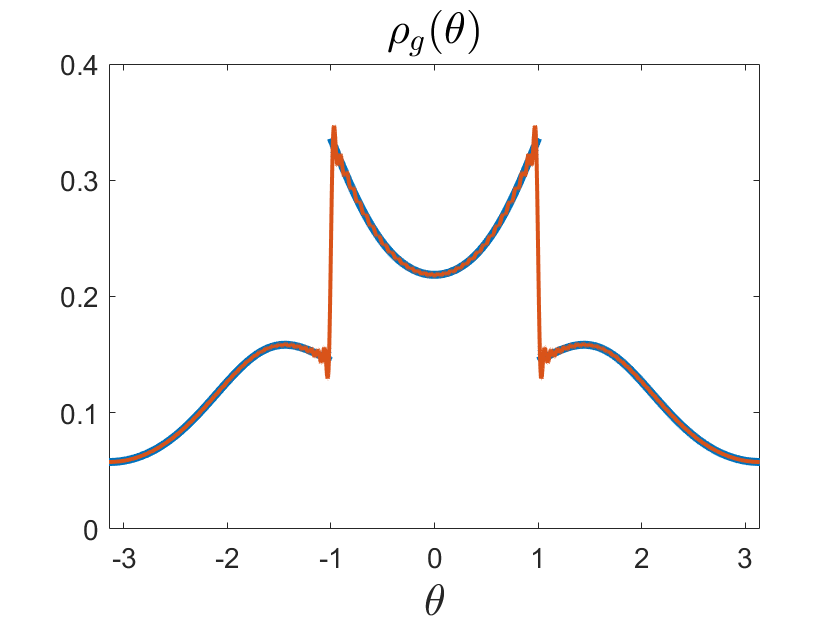

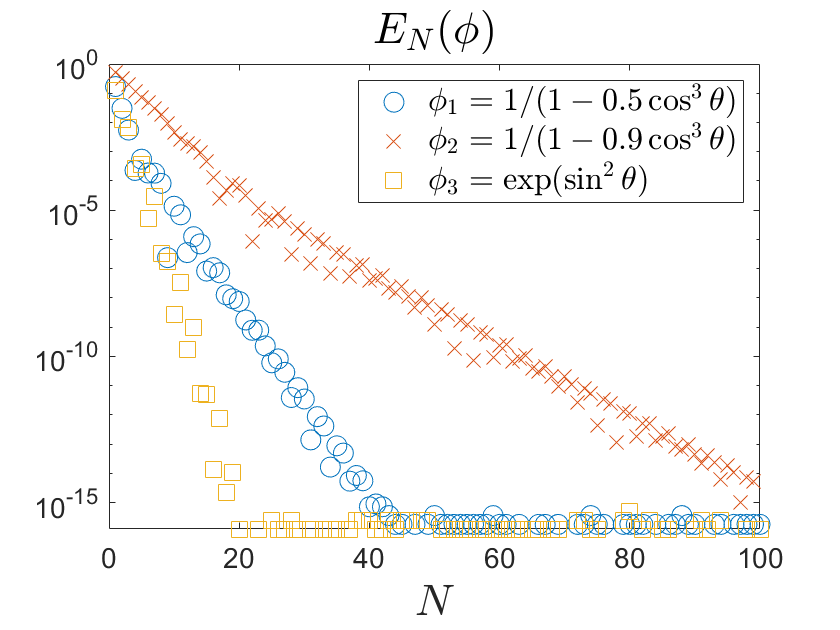

Figure 11: Comparison of true density and truncated Fourier approximation (left); weak convergence rates (right).

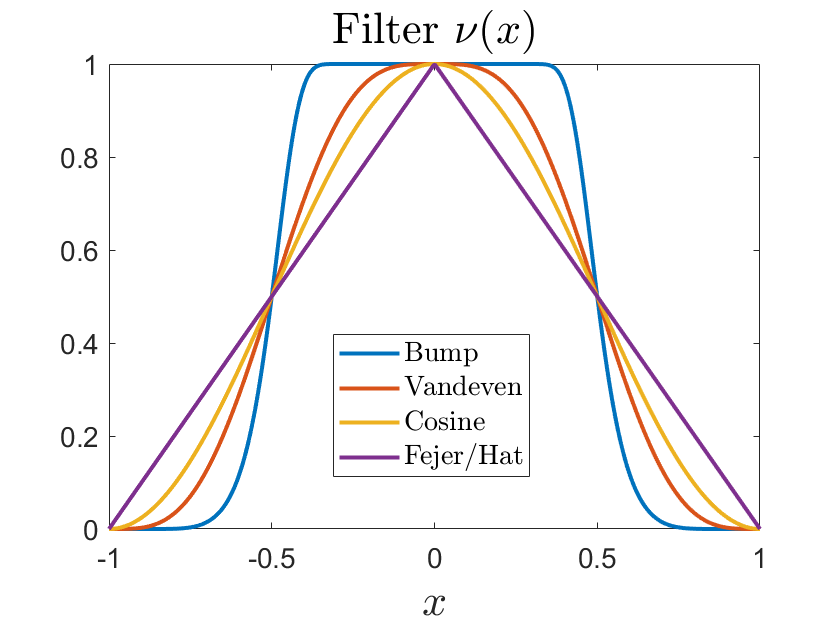

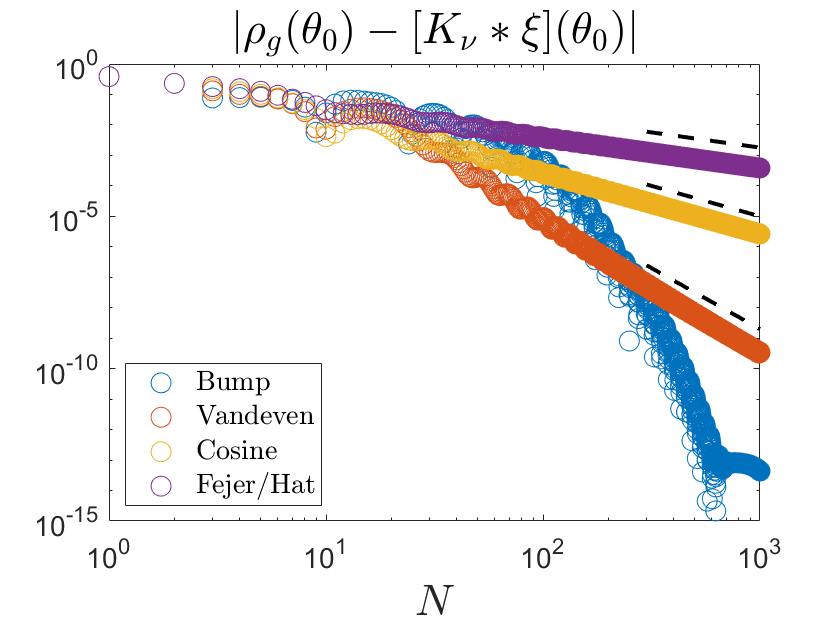

Figure 12: Effect of hat-filtered Fourier approximation on Gibbs phenomena (left); pointwise convergence at varying distances from a discontinuity (right).

Figure 13: Comparison of filters for Fourier series approximation (left); pointwise error for different filters (right).

- Eigenvalue-Based Methods: Use structure-preserving discretizations (mpEDMD) to ensure unitary approximations, enabling the spectral measure to be approximated by the empirical measure of eigenvalues and eigenvectors. Explicit error bounds are provided for delay-embedding dictionaries.

- Resolvent-Based Methods: Approximate the spectral measure via the Carathéodory function and rational smoothing kernels, enabling high-order local convergence to the Radon–Nikodym derivative. This approach is robust to noise and effective for systems with continuous spectrum.
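For the moment-based family in the list above, a minimal sketch is given below: autocorrelations along a single trajectory serve as Fourier coefficients of the spectral measure, and a hat-filtered Fourier series gives a smoothed density. The sign convention $a_k=\int e^{ik\theta}\,d\mu(\theta)$, the function names, and the circle-rotation test case (whose spectral measure is a point mass at $\theta=\omega$) are assumptions made here for illustration.

```python
import numpy as np

def autocorrelations(g_vals, n_max):
    """Ergodic estimates of a_k = <K^k g, g> for k = 0, ..., n_max."""
    M = len(g_vals)
    return np.array([
        np.mean(g_vals[k:] * np.conj(g_vals[:M - k])) for k in range(n_max + 1)
    ])

def filtered_density(a, theta, filt=lambda t: 1.0 - np.abs(t)):
    """Filtered Fourier series (1/2pi) sum_k filt(k/N) a_k exp(-i k theta)."""
    N = len(a) - 1
    k = np.arange(1, N + 1)
    w = filt(k / N)
    # a_{-k} = conj(a_k), so the +k and -k terms combine into 2 * Re(...).
    terms = (w * a[1:])[None, :] * np.exp(-1j * np.outer(theta, k))
    return (a[0].real + 2.0 * terms.sum(axis=1).real) / (2.0 * np.pi)

# Circle rotation with g = exp(i theta): spectral measure is a delta at omega.
omega, theta0 = 1.0, 0.2
g_vals = np.exp(1j * (theta0 + omega * np.arange(5000)))

a = autocorrelations(g_vals, n_max=50)
theta = np.linspace(-np.pi, np.pi, 2000, endpoint=False)
rho = filtered_density(a, theta)

peak = theta[np.argmax(rho)]
total_mass = rho.sum() * (2.0 * np.pi / len(theta))
```

With the hat filter the smoothed density is a Fejér kernel centered at $\omega$, so it is nonnegative and integrates to $\|g\|^2=1$. Other filters can be supplied through `filt` to trade localization against smoothness.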

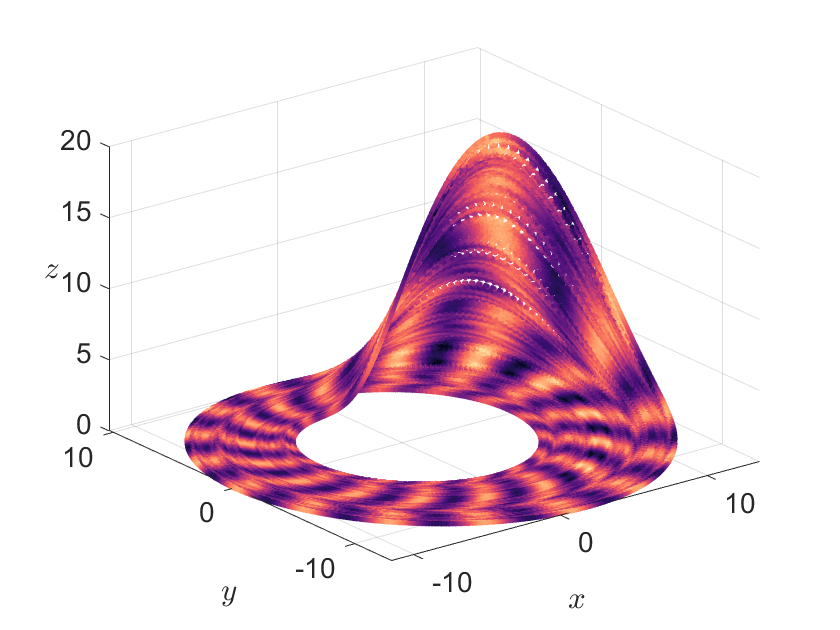

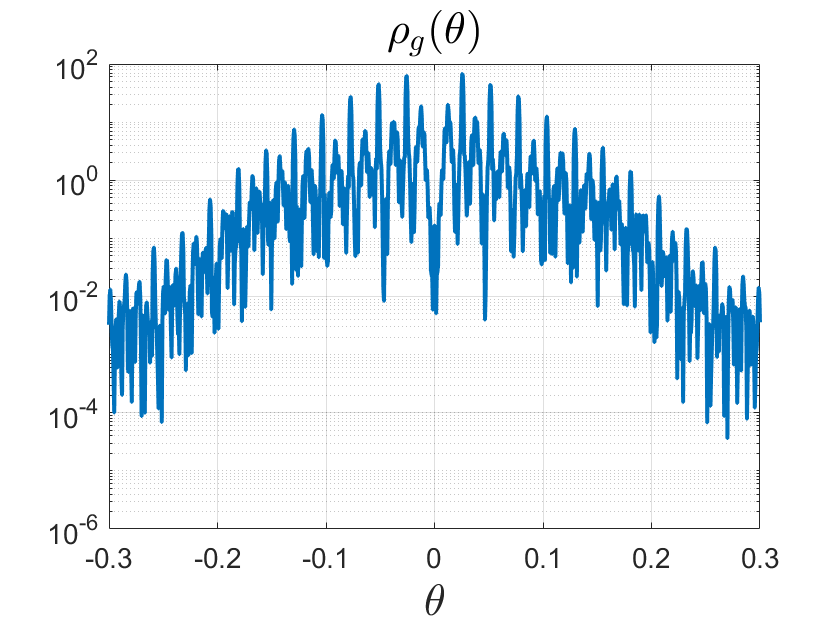

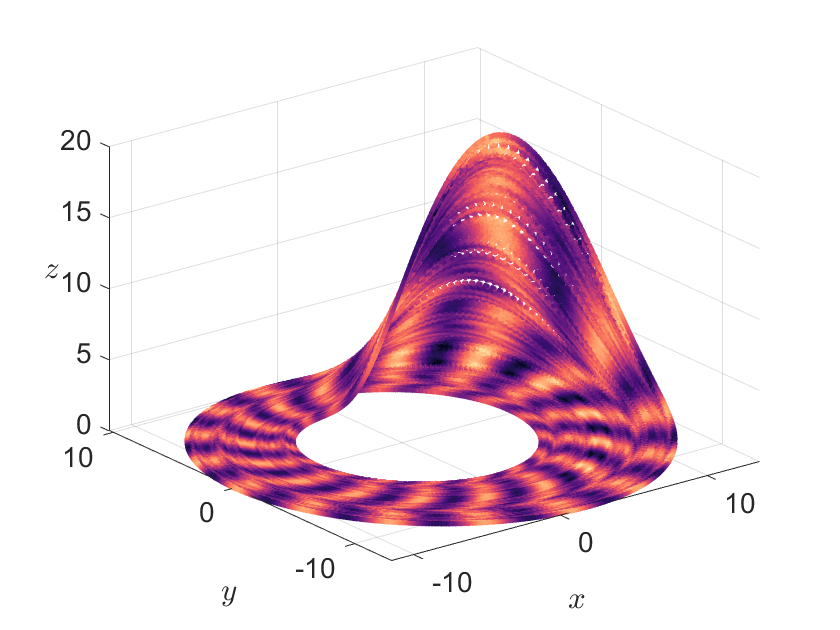

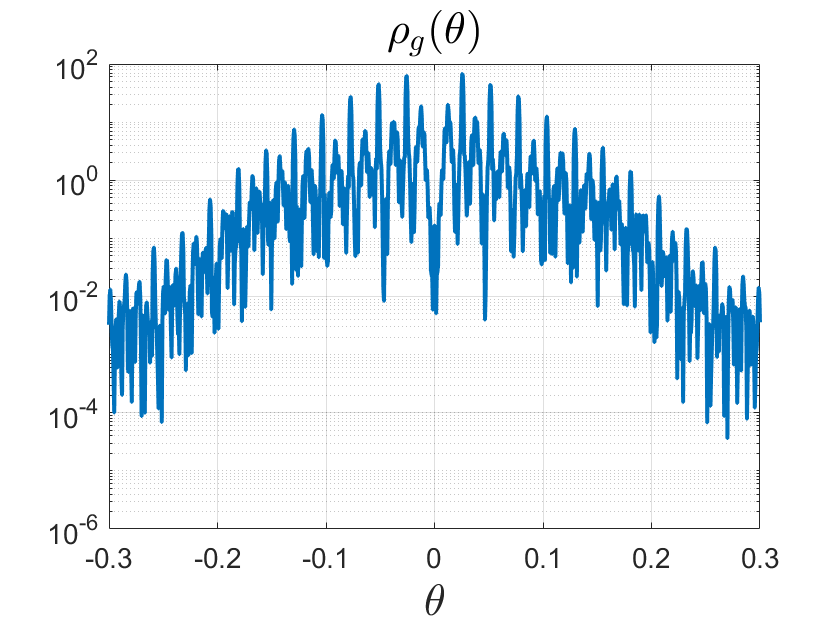

Figure 14: Visualization of the "simple" Rössler attractor (left) and its smoothed power spectral density (right), showing sharp phase coherence.

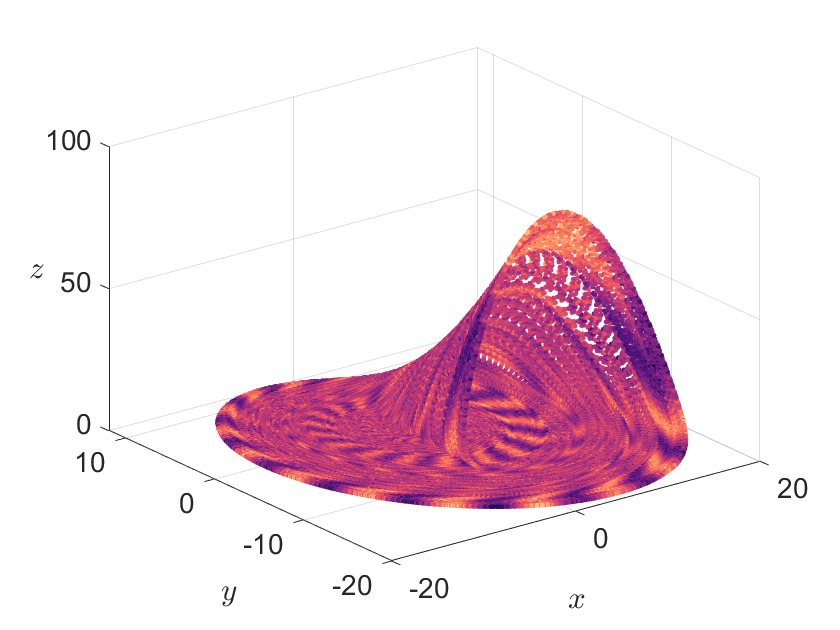

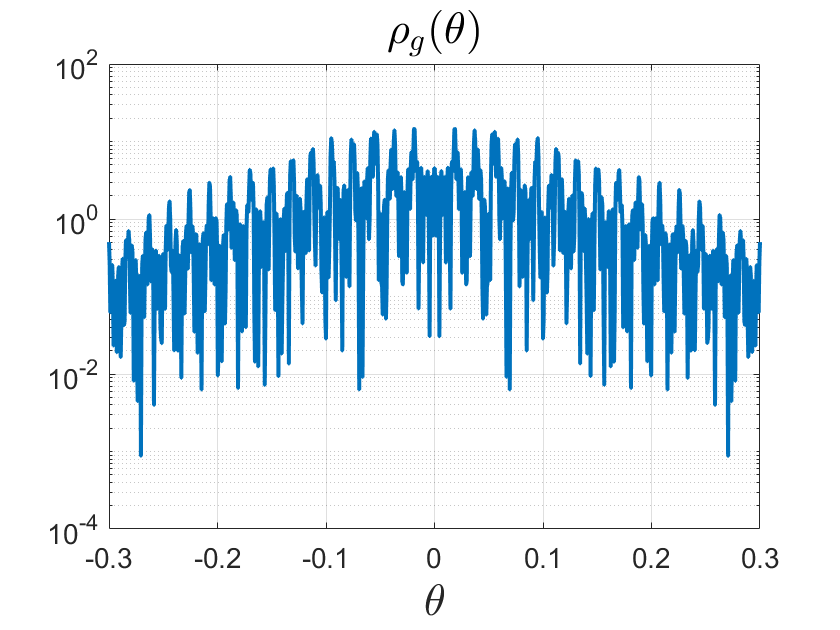

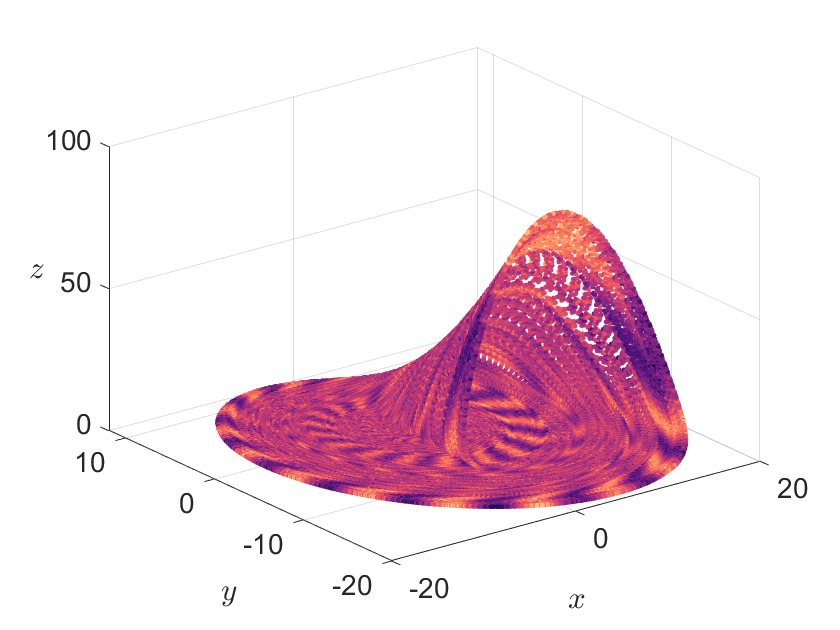

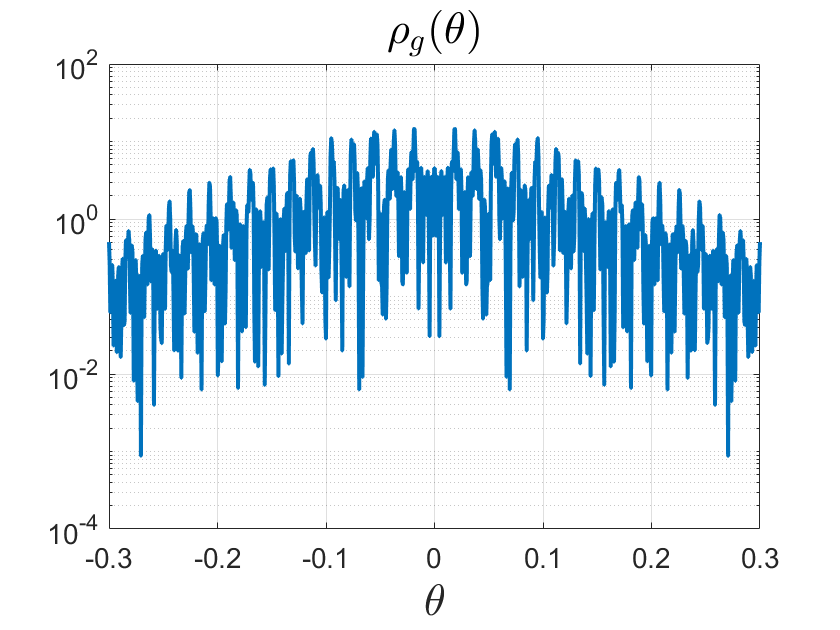

Figure 15: Visualization of the "funnel" Rössler attractor (left) and its smoothed power spectral density (right), showing loss of phase coherence.

The paper also discusses the computation of the full Radon–Nikodym decomposition, the use of polynomial filters for high-order convergence, and the extension of these methods to projection-valued measures and generalized eigenfunctions in rigged Hilbert spaces.

Solvability Complexity Index and Classification Theory

A significant theoretical implication is the recognition that many spectral computations for Koopman operators require multiple successive limits (e.g., M→∞ followed by N→∞), and these limits do not generally commute. The Solvability Complexity Index (SCI) framework is invoked to classify the intrinsic computational complexity of spectral problems, with recent results establishing lower bounds on the number of required limits for various spectral quantities.

Implications and Future Directions

The rigorous convergence guarantees and error control mechanisms developed in this work provide a foundation for reliable data-driven spectral analysis of nonlinear dynamical systems. The unified treatment of residuals, pseudospectra, and spectral measures enables practitioners to avoid common pitfalls such as spectral pollution and to select modes with genuine dynamical significance. The explicit convergence rates and error bounds facilitate principled algorithm design and parameter selection.

Practically, these methods are applicable to a wide range of systems, including high-dimensional, chaotic, and partially observed dynamics, and are robust to noise and model uncertainty. The theoretical framework extends to the computation of resonances, generalized eigenfunctions, and the analysis of mixing and coherent structures.

Future developments are expected in the classification of spectral problems via the SCI, the design of more data-efficient algorithms exploiting regularity, and the extension to broader classes of operators and observables (e.g., in Lp or RKHS settings). The interplay between numerical analysis, operator theory, and data-driven modeling will continue to drive advances in Koopman learning and its applications across science and engineering.

Conclusion

This paper establishes a rigorous, unified framework for Koopman learning, emphasizing convergent, data-driven methods for spectral analysis and forecasting in nonlinear dynamical systems. By systematically leveraging residuals, pseudospectra, and structure-preserving discretizations, the authors provide both theoretical guarantees and practical algorithms for extracting reliable spectral information from finite data. The work bridges the gap between infinite-dimensional operator theory and real-world data analysis, setting the stage for further advances in the computational spectral theory of dynamical systems.