- The paper presents a comprehensive framework where machine learning methods, such as PINNs, DeepONets, and GNNs, uncover governing equations and dynamic properties of nonlinear waves.

- It leverages physics-informed architectures to embed PDE constraints, ensuring that discovered symmetries and conservation laws align with physical principles.

- Sparse regression and symbolic approaches like SINDy and SILO provide interpretable, efficient alternatives for modeling reduced-order dynamics and integrability in complex systems.

Machine Learning of Nonlinear Waves: Data-Driven Methods for Computer-Assisted Discovery of Equations, Symmetries, Conservation Laws, and Integrability

Overview and Motivation

This paper provides a comprehensive review and technical synthesis of recent advances at the intersection of nonlinear wave theory and scientific machine learning (SciML). The authors systematically examine how data-driven methods—including deep learning, operator learning, sparse regression, and physics-informed architectures—can be leveraged for the discovery, analysis, and simulation of nonlinear wave phenomena. The focus is on augmenting traditional analytical and numerical tools with machine learning approaches to enable the identification of governing equations, symmetries, conservation laws, and integrability properties directly from data.

Pillars of Scientific Machine Learning for Nonlinear Waves

The paper begins by categorizing the main neural architectures relevant to nonlinear wave modeling: MLPs, CNNs, RNNs (LSTM/GRU), transformers, and GNNs. Each architecture is discussed in terms of its inductive biases, scalability, and suitability for spatial, temporal, or relational data. The authors emphasize the importance of choosing architectures that respect the underlying physical structure of wave systems, such as translation invariance or symplectic geometry.

Physics-Informed Neural Networks (PINNs) are highlighted as a central tool for embedding PDE constraints into the learning process. The PINN framework is formalized with composite loss functions that combine supervised and self-supervised terms, leveraging automatic differentiation for efficient computation of derivatives. The paper details the implementation of PINNs for both forward and inverse problems, including parameter identification and solution reconstruction in high-dimensional settings.
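As a rough illustration of the composite loss described above, a generic PINN objective is often written in the following form. The weights $\lambda_d, \lambda_r, \lambda_b$, the sample counts $N_d, N_r, N_b$, and the operator symbols $\mathcal{N}$ (PDE residual) and $\mathcal{B}$ (boundary or initial condition) are notation assumed here for the sketch, not taken from the paper.

$$
\mathcal{L}(\theta) =
\frac{\lambda_d}{N_d}\sum_{i=1}^{N_d}\bigl|u_\theta(x_i,t_i)-u_i\bigr|^2
+\frac{\lambda_r}{N_r}\sum_{j=1}^{N_r}\bigl|\mathcal{N}[u_\theta](x_j,t_j)\bigr|^2
+\frac{\lambda_b}{N_b}\sum_{k=1}^{N_b}\bigl|\mathcal{B}[u_\theta](x_k,t_k)\bigr|^2
$$

The first term is the supervised data misfit; the second and third are the self-supervised terms, evaluated at collocation points where derivatives of $u_\theta$ come from automatic differentiation. In inverse problems, unknown PDE parameters inside $\mathcal{N}$ are optimized jointly with $\theta$.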

Operator learning, via DeepONets and Fourier Neural Operators (FNOs), is presented as a paradigm shift from function approximation to learning mappings between infinite-dimensional spaces. The universal approximation properties of DeepONets are discussed, and the spectral convolution mechanism of FNOs is described in detail, including their resolution invariance and computational efficiency.
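The spectral convolution idea can be sketched in a few lines of NumPy. This is a minimal single-channel illustration, not the FNO implementation: a full layer would mix channels with weight tensors, add a pointwise linear path, and apply a nonlinearity. The function name `spectral_conv_1d`, the random `weights`, and the test signal are all assumptions made for the example.

```python
import numpy as np

def spectral_conv_1d(u, weights):
    """Apply one Fourier multiplier: FFT, scale the lowest modes, inverse FFT.

    u       : real array of shape (n_points,), samples on a uniform periodic grid
    weights : complex array of shape (n_modes,), one multiplier per retained mode
    """
    n_points = u.shape[0]
    n_modes = weights.shape[0]
    u_hat = np.fft.rfft(u)                          # n_points // 2 + 1 coefficients
    out_hat = np.zeros_like(u_hat)
    out_hat[:n_modes] = weights * u_hat[:n_modes]   # modes above n_modes are dropped
    return np.fft.irfft(out_hat, n=n_points)

# Stand-in for learned weights (hypothetical values, fixed seed).
rng = np.random.default_rng(0)
weights = rng.normal(size=4) + 1j * rng.normal(size=4)

def sample(n):
    """Band-limited test signal sampled on n points of [0, 2*pi)."""
    x = 2.0 * np.pi * np.arange(n) / n
    return np.sin(x) + 0.5 * np.cos(3.0 * x)

# The same weights are applied at two resolutions.
y_coarse = spectral_conv_1d(sample(32), weights)
y_fine = spectral_conv_1d(sample(64), weights)

# Largest disagreement on the grid points the two resolutions share.
print(np.max(np.abs(y_fine[::2] - y_coarse)))
```

Because the weights act on Fourier modes rather than on grid values, the same parameters can be evaluated on either grid, which is the sense in which the layer is resolution invariant for inputs resolved by both grids.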

Sparse and symbolic regression methods, particularly SINDy, are reviewed as interpretable alternatives to black-box neural models. The SINDy framework is formalized for both ODE and PDE discovery, with attention to library construction, sparse optimization (LASSO, STLSQ, SR3), and extensions for control, implicit dynamics, and noise robustness.
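To make the sparse optimization step concrete, here is a minimal sketch of sequentially thresholded least squares (STLSQ) on a toy problem. It assumes exact derivatives of a harmonic oscillator and a hand-built quadratic library; the function name `stlsq`, the threshold value, and the data are illustrative choices, not the PySINDy API or the paper's setup.

```python
import numpy as np

def stlsq(theta, dxdt, threshold=0.1, n_iter=10):
    """Sequentially thresholded least squares for theta @ xi ~= dxdt."""
    xi = np.linalg.lstsq(theta, dxdt, rcond=None)[0]
    for _ in range(n_iter):
        small = np.abs(xi) < threshold
        xi[small] = 0.0
        for k in range(dxdt.shape[1]):        # refit each state on its active terms
            active = ~small[:, k]
            if active.any():
                xi[active, k] = np.linalg.lstsq(
                    theta[:, active], dxdt[:, k], rcond=None
                )[0]
    return xi

# Two trajectories of dx/dt = y, dy/dt = -x with different amplitudes,
# so that the constant and quadratic library columns are not collinear.
t = np.linspace(0.0, 10.0, 200)
x = np.concatenate([a * np.cos(t) for a in (1.0, 2.0)])
y = np.concatenate([-a * np.sin(t) for a in (1.0, 2.0)])
dxdt = np.column_stack([y, -x])               # exact derivatives, no noise

# Candidate library: constant, linear, and quadratic monomials.
names = ["1", "x", "y", "x^2", "xy", "y^2"]
theta = np.column_stack([np.ones_like(x), x, y, x**2, x * y, y**2])

xi = stlsq(theta, dxdt)
for k, state in enumerate(["dx/dt", "dy/dt"]):
    terms = [f"{xi[j, k]:+.3f} {names[j]}" for j in range(len(names)) if xi[j, k] != 0.0]
    print(state, "=", " ".join(terms))
```

With noisy or numerically differentiated data, the threshold becomes a genuine hyperparameter, and the choice between LASSO, STLSQ, and SR3 matters more than it does in this clean example.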

Applications: Equation Discovery, Symmetry, and Structure Preservation

PINNs for Discrete Lattice Dynamics

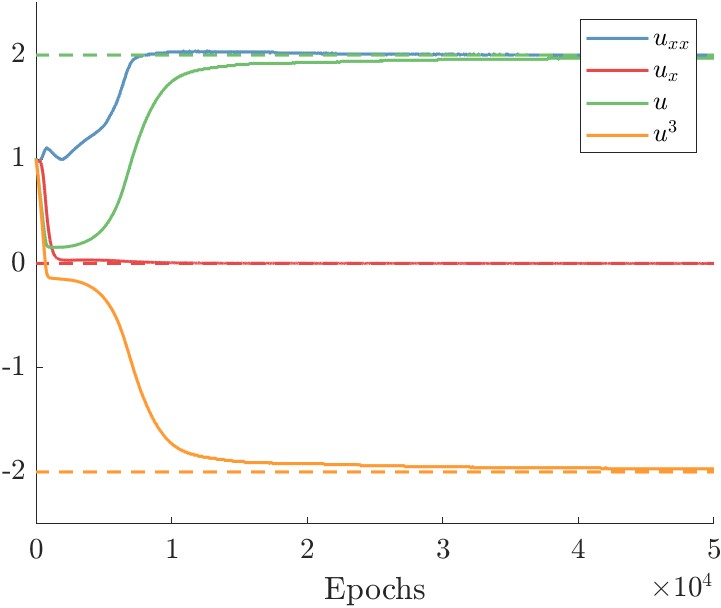

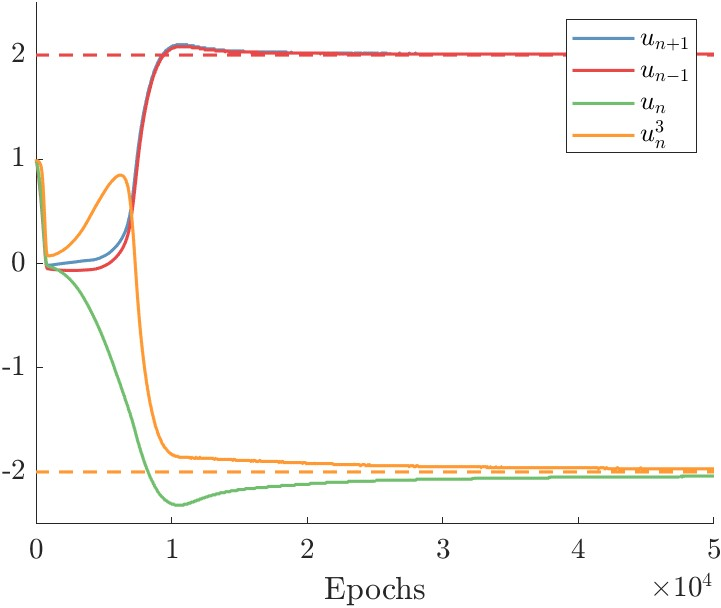

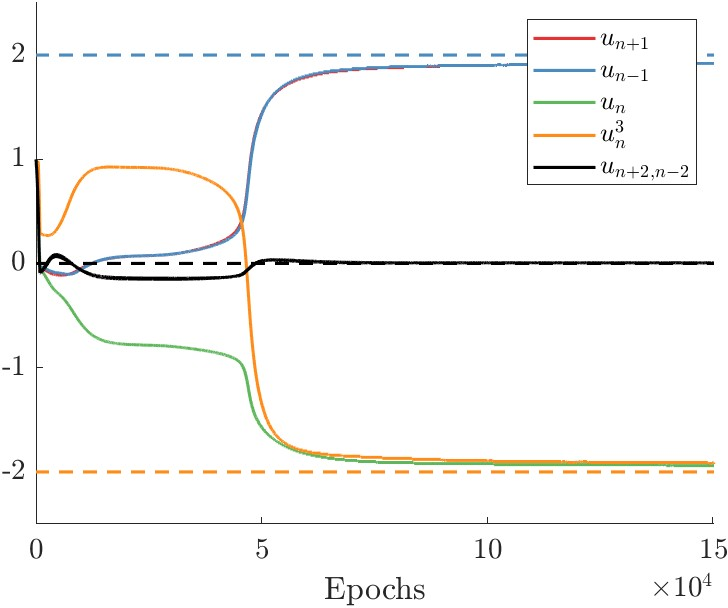

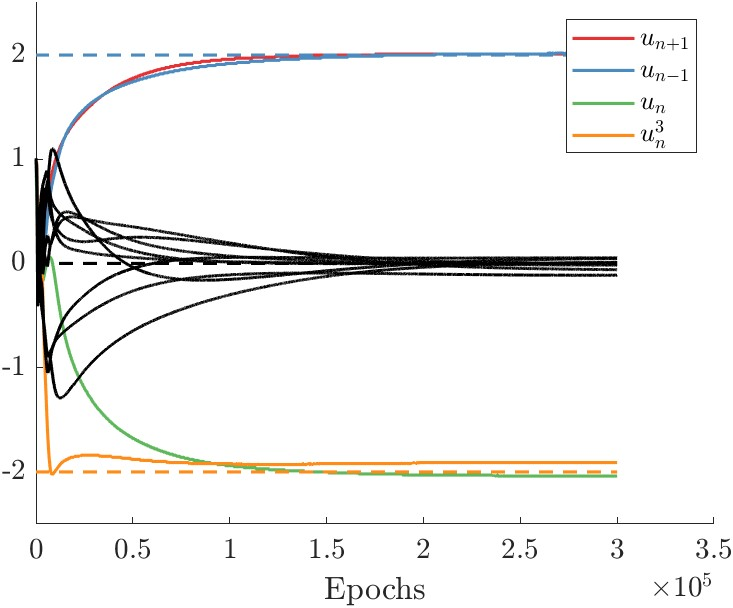

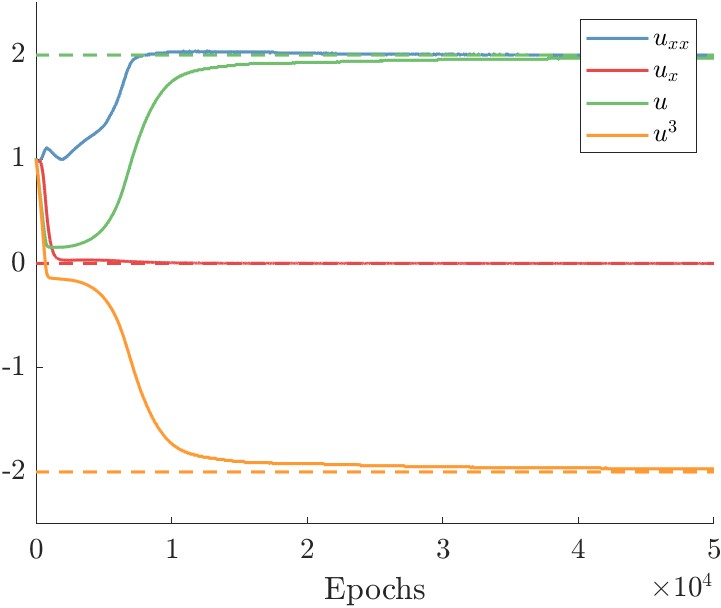

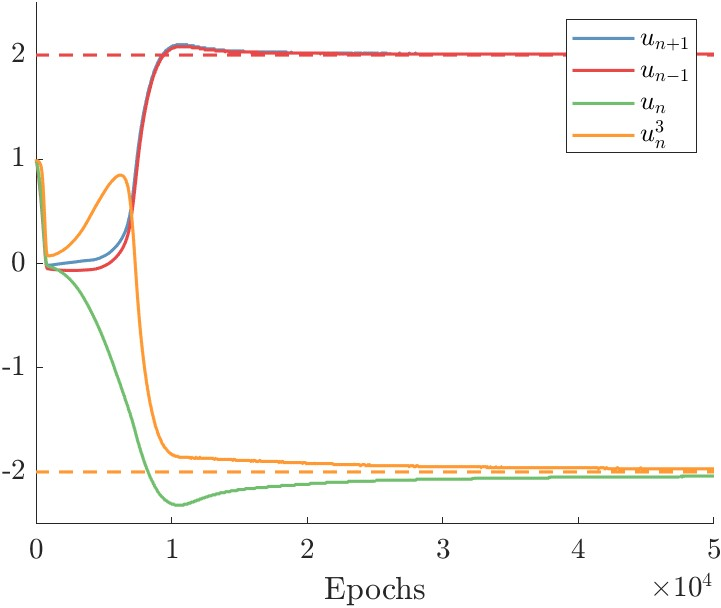

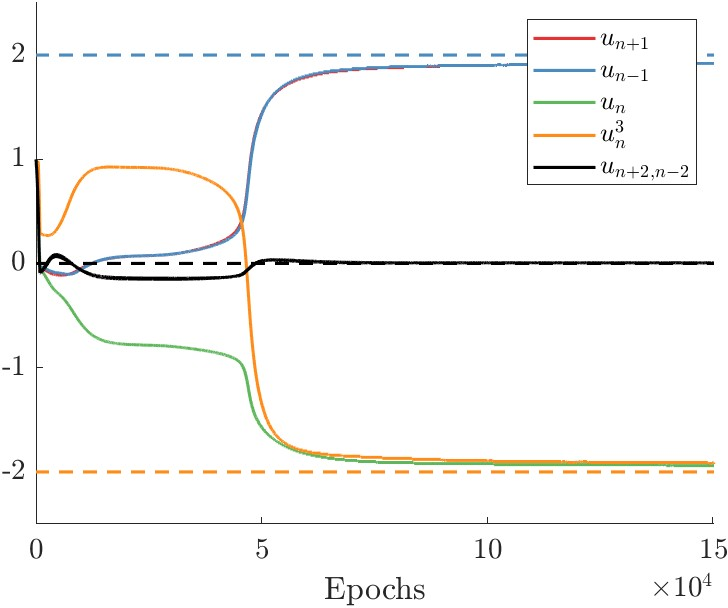

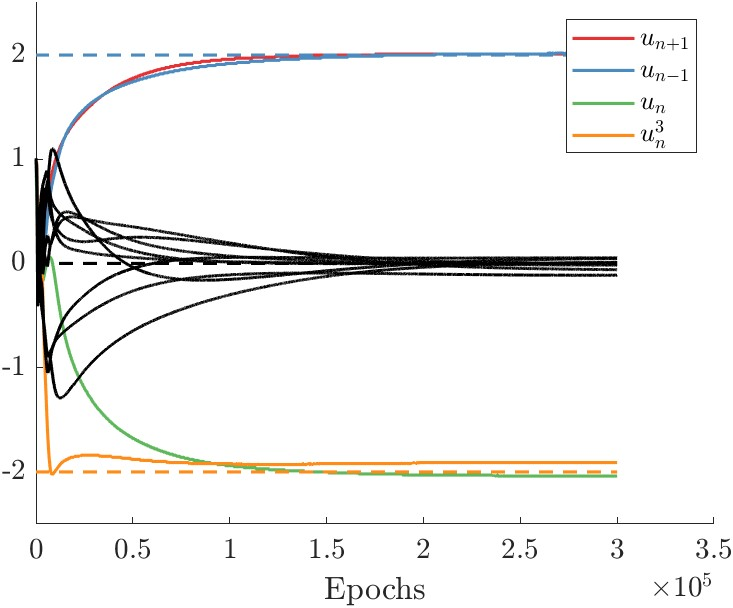

The authors present the application of PINNs to the discovery of governing equations in discrete nonlinear lattices, such as the discrete ϕ⁴ model. By constructing overcomplete libraries of candidate operators and optimizing expansion coefficients, PINNs are shown to accurately recover both the structure and parameters of the underlying dynamical system.

Figure 1: Discrete ϕ⁴ model PINN-based equation discovery, showing accurate identification of model terms and coefficients across multiple library choices.
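The regression at the core of library-based lattice discovery can be sketched without a neural network. The example below swaps the PINN for plain least squares on synthetic snapshots, and it assumes one common form of the discrete ϕ⁴ model, $\ddot{u}_n = C\,(u_{n+1} - 2u_n + u_{n-1}) + u_n - u_n^3$, on a periodic lattice. The coupling value, the library terms, and all names are hypothetical choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
C_true = 0.25          # assumed coupling strength, for illustration only

def phi4_rhs(u, C):
    """Assumed discrete phi^4 acceleration on a periodic lattice (last axis = site)."""
    lap = np.roll(u, -1, axis=-1) - 2.0 * u + np.roll(u, 1, axis=-1)
    return C * lap + u - u**3

def library(u):
    """Overcomplete candidate terms built from nearest-neighbor shifts."""
    up = np.roll(u, -1, axis=-1)     # u_{n+1}
    um = np.roll(u, 1, axis=-1)      # u_{n-1}
    return np.stack([um, u, up, u**2, u**3, up * um], axis=-1)

names = ["u[n-1]", "u[n]", "u[n+1]", "u[n]^2", "u[n]^3", "u[n+1]*u[n-1]"]

# Synthetic lattice states and their exact accelerations.
snapshots = rng.uniform(-1.5, 1.5, size=(50, 32))
target = phi4_rhs(snapshots, C_true).ravel()

# Fit expansion coefficients over the library.
theta = library(snapshots).reshape(-1, len(names))
coeffs = np.linalg.lstsq(theta, target, rcond=None)[0]

for name, c in zip(names, coeffs):
    print(f"{name:>14s}: {c:+.4f}")
```

In this noise-free setting the spurious terms come out at rounding level. In a PINN-based variant the coefficients would instead be trained jointly with a network that represents the lattice solution.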

Graph Neural Networks for Lattice Interactions

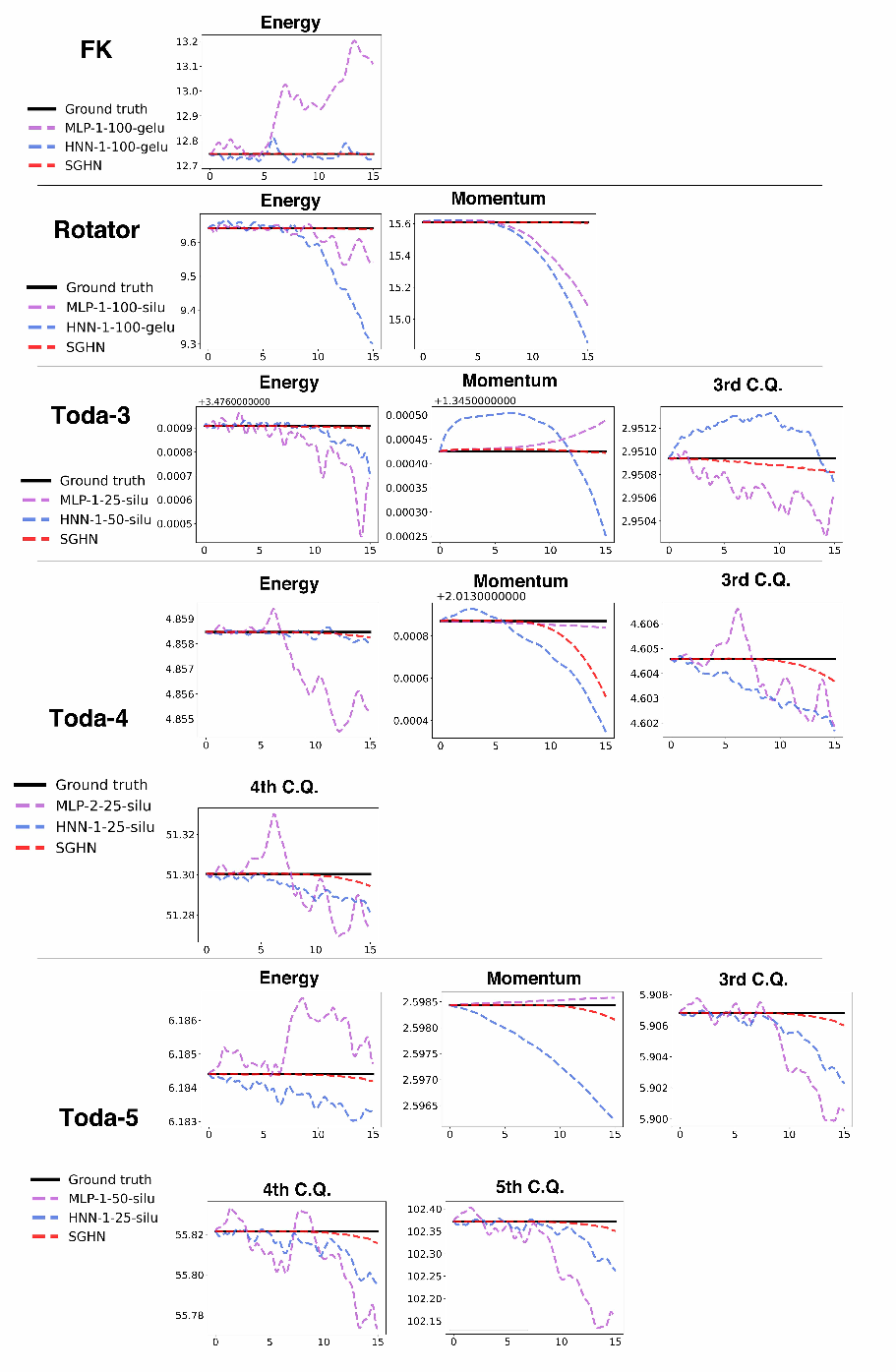

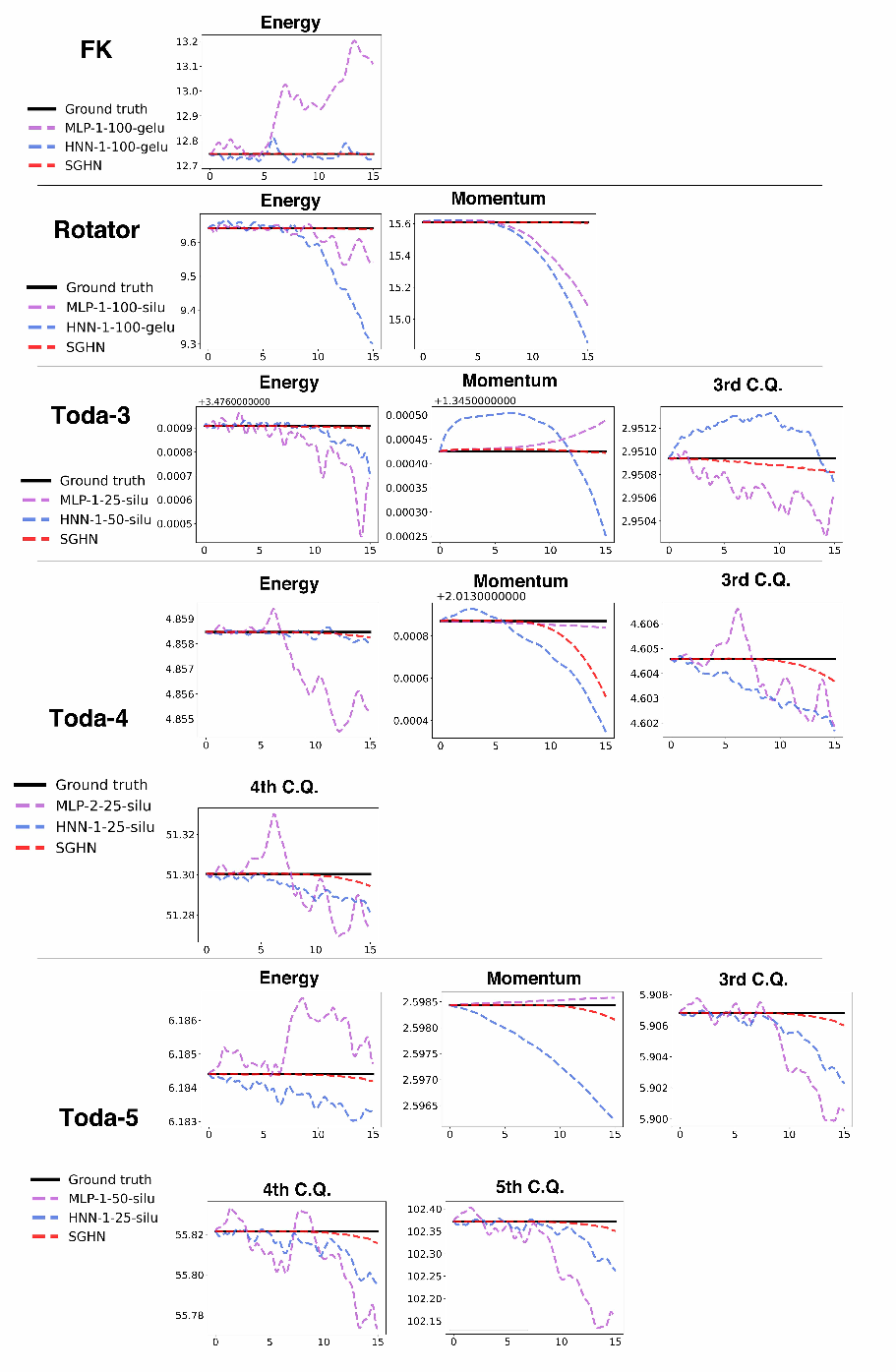

Graph neural networks (GNNs) are employed to learn both short- and long-range interactions in lattice systems, with a focus on Hamiltonian structure and conservation law preservation. The α-separable graph Hamiltonian network (α-SGHN) is introduced, demonstrating superior performance in capturing conserved quantities compared to MLP and HNN baselines.

Figure 2: Evolution of conserved quantities in lattice models, comparing α-SGHN, MLP, and HNN architectures.

Structure-Preserving PINNs

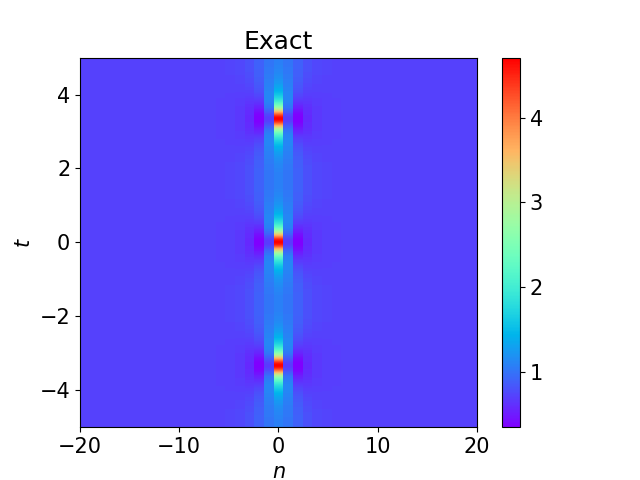

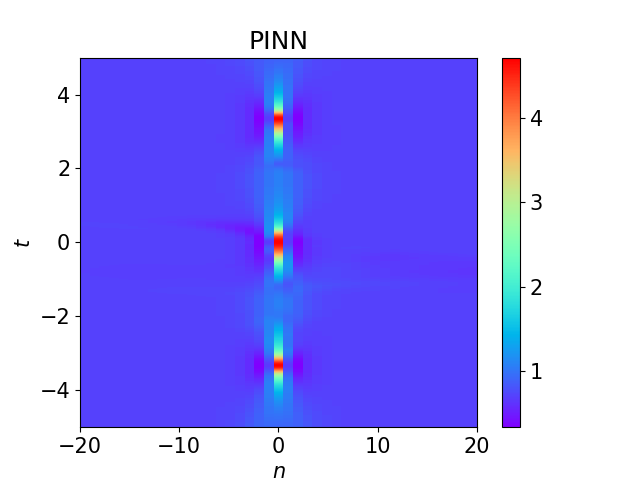

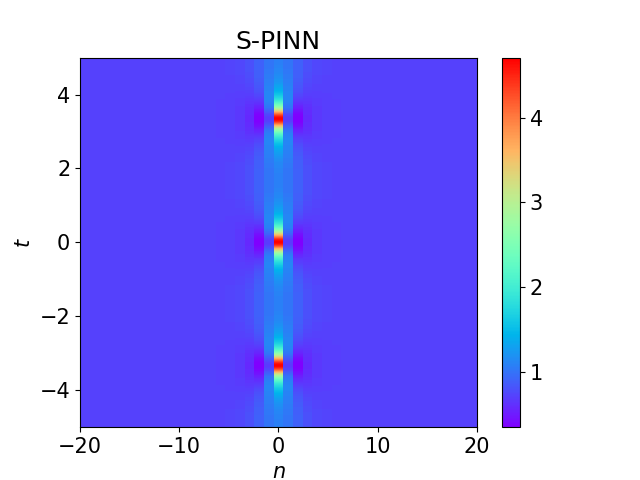

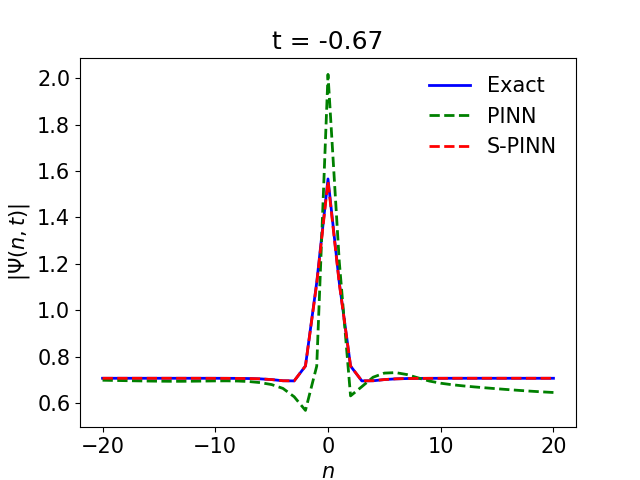

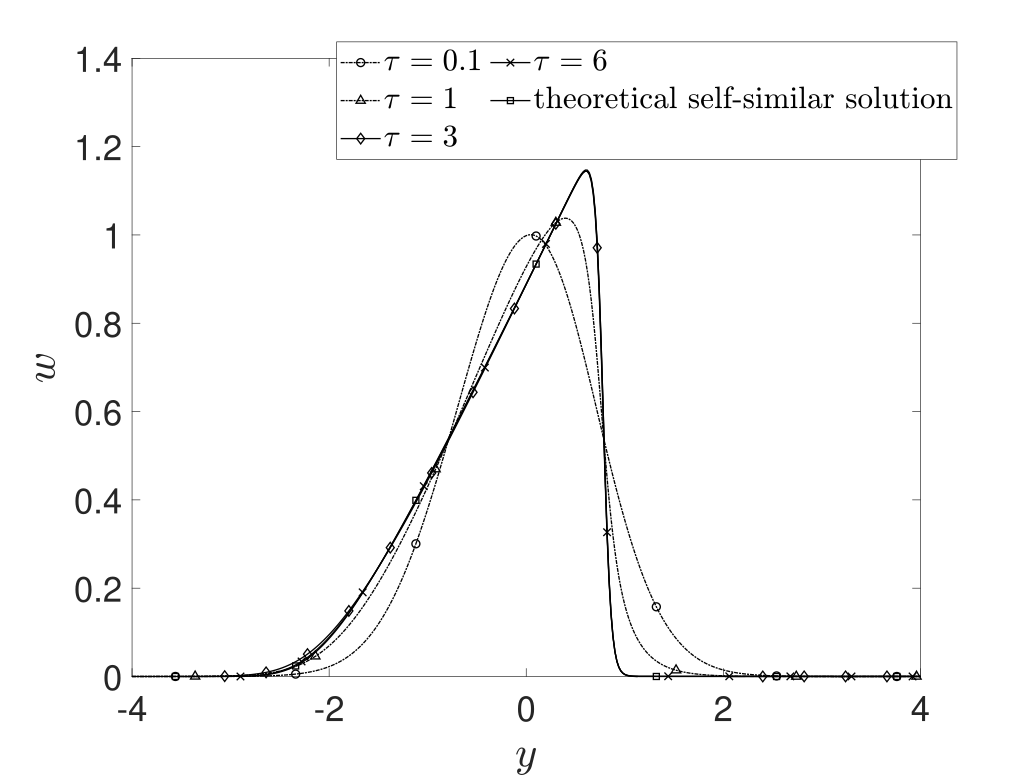

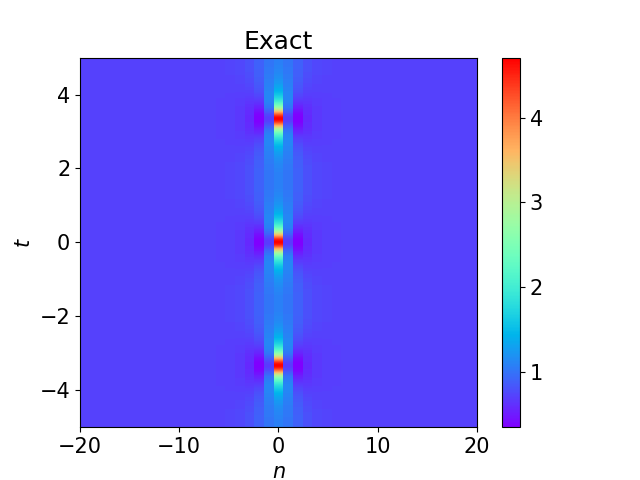

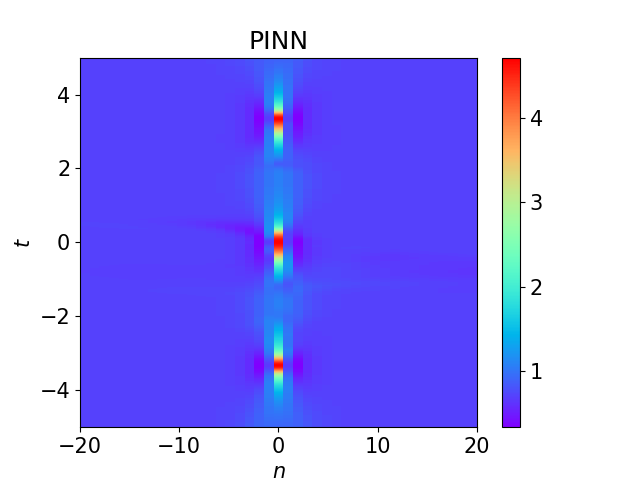

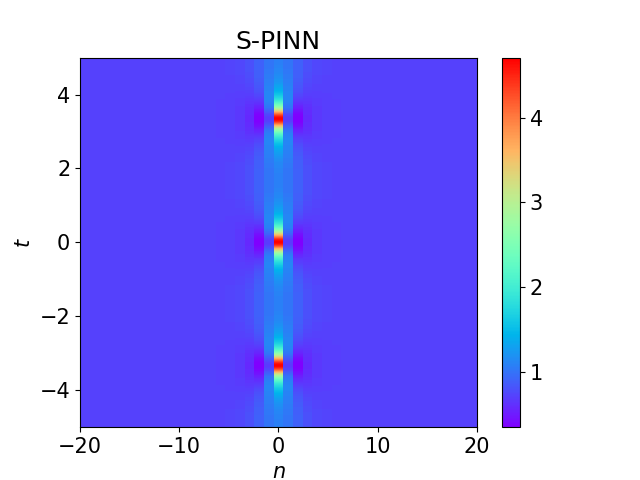

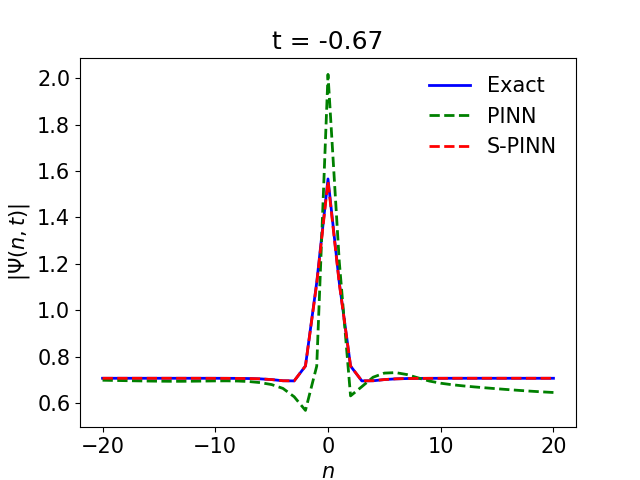

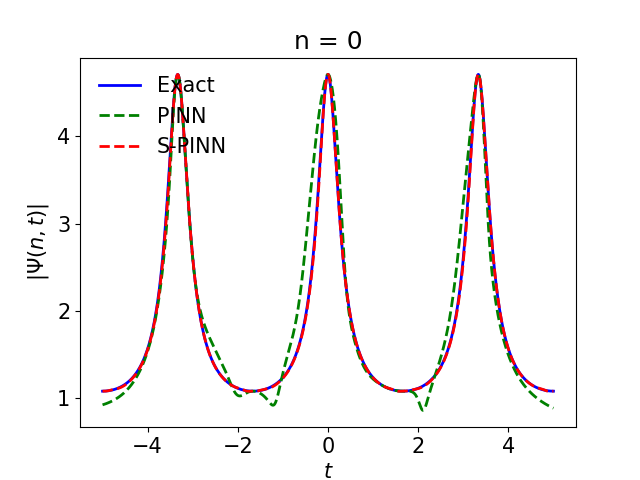

The paper details the design of structure-preserving PINNs (S-PINNs) that enforce physical symmetries, such as spatio-temporal parity and periodicity, in the solution space. The Ablowitz–Ladik model and its Kuznetsov–Ma soliton solution are used as a case study, with S-PINNs shown to outperform standard PINNs in reconstructing symmetry-respecting solutions.

Figure 3: Numerical results for the KM soliton using PINN and S-PINN, highlighting the preservation of time-periodicity and parity symmetry.
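One common way to hard-wire symmetries into a network, sketched below, is to build them into the input features and output. The example enforces evenness in $x$ by symmetrization and periodicity in $t$ through sine and cosine features. This only illustrates the general idea; the specific constraints and architecture of the S-PINNs in the paper may differ, and the tiny untrained network, the name `symmetric_net`, and the period value are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
# Random weights for a small untrained MLP standing in for the PINN body.
W1, b1 = rng.normal(size=(3, 16)), rng.normal(size=16)
W2 = rng.normal(size=(16, 1))

def raw_net(features):
    """Unconstrained network acting on feature rows of length 3."""
    return np.tanh(features @ W1 + b1) @ W2

def symmetric_net(x, t, period):
    """Output that is even in x and periodic in t by construction."""
    x, t = np.broadcast_arrays(np.asarray(x, float), np.asarray(t, float))
    omega = 2.0 * np.pi / period

    def branch(xs):
        # Time enters only through periodic features, so t -> t + period is invisible.
        feats = np.stack([xs, np.cos(omega * t), np.sin(omega * t)], axis=-1)
        return raw_net(feats)[..., 0]

    # Averaging over x -> -x enforces spatial parity exactly.
    return 0.5 * (branch(x) + branch(-x))

period = 2.0
x = np.linspace(-3.0, 3.0, 7)
t = np.full_like(x, 0.4)
u_here = symmetric_net(x, t, period)
u_mirrored_shifted = symmetric_net(-x, t + period, period)
print(np.max(np.abs(u_here - u_mirrored_shifted)))   # symmetry violation
```

Because the symmetries hold for any weights, training only has to fit the solution within the symmetric class rather than learn the symmetry from data.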

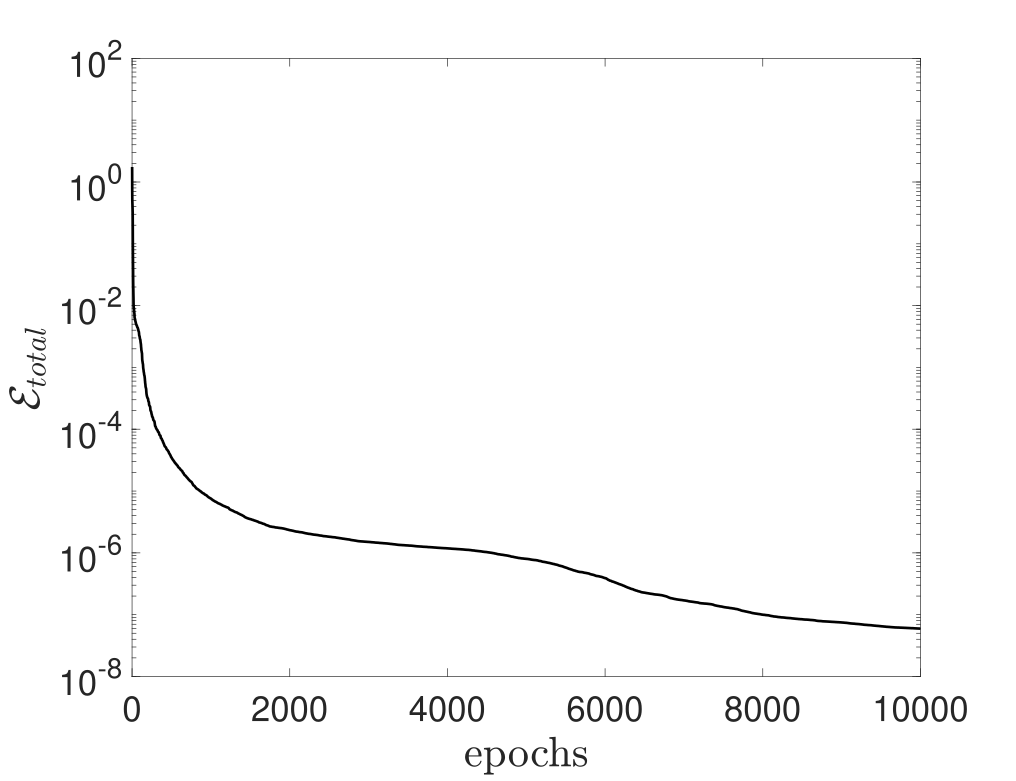

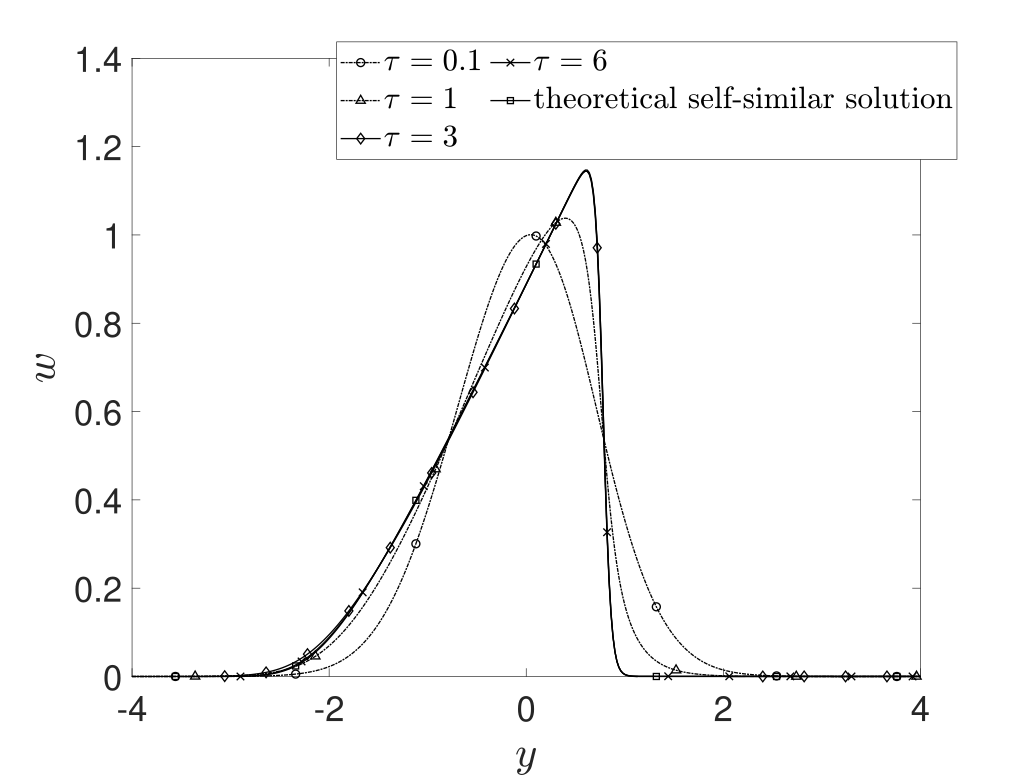

PINNs for Self-Similar and Translational-Invariant Solutions

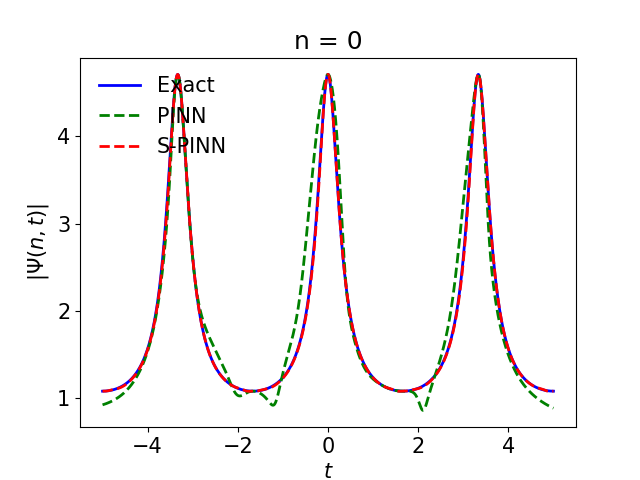

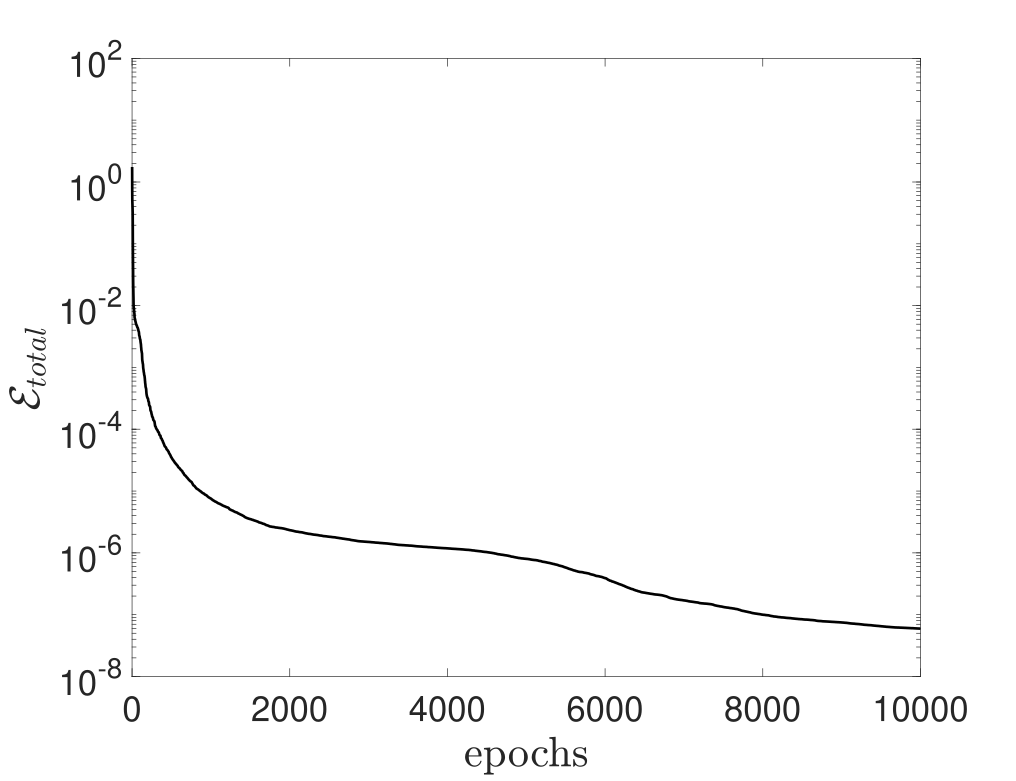

The authors demonstrate the use of PINNs for identifying self-similar dynamics in the Burgers equation, employing dynamic scaling and template constraints to factor out symmetries and converge to stationary profiles in renormalized coordinates.

Figure 4: PINN prediction of self-similar dynamics for the Burgers equation, showing loss convergence, solution snapshots, and scaling rate evolution.
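For orientation, the reduction to a stationary profile can be written out under the assumption of the inviscid Burgers equation with a finite blow-up time $T$ and scaling exponent $\alpha$. The symbols $U$, $\xi$, $T$, and $\alpha$ are notation chosen for this sketch, and the paper's precise formulation may differ.

$$
u_t + u\,u_x = 0, \qquad u(x,t) = (T-t)^{\alpha}\,U(\xi), \qquad \xi = \frac{x}{(T-t)^{1+\alpha}}
$$

Substituting the ansatz, both terms scale as $(T-t)^{\alpha-1}$, which leaves an ODE for the profile:

$$
-\alpha\,U + \bigl[(1+\alpha)\,\xi + U\bigr]\,U_\xi = 0
$$

In a dynamic-rescaling formulation, the scaling rate is treated as an unknown that evolves during the computation, and a template constraint fixes the remaining scaling freedom so that the profile can converge to a stationary solution in the renormalized coordinates.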

Lax pair-informed neural networks (LPNNs) are introduced for integrable systems. They embed the compatibility conditions of the Lax pair (the Lax equation or the zero-curvature condition) into the loss function. The approach is benchmarked on KdV, mKdV, and other integrable PDEs, with the LPNN-v2 variant reported to consistently outperform PINNs in accuracy and training efficiency.
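As a concrete instance of such a compatibility condition, the classical Lax pair for KdV is shown below in one standard sign convention, which may differ from the convention used in the paper.

$$
L = -\partial_x^2 + u, \qquad P = -4\,\partial_x^3 + 6u\,\partial_x + 3u_x, \qquad L_t = [P, L] = PL - LP
$$

Expanding the commutator, the $\partial_x^2$ and $\partial_x$ terms cancel and only a multiplication operator remains:

$$
[P, L] = 6u\,u_x - u_{xxx} \quad\Longrightarrow\quad u_t - 6u\,u_x + u_{xxx} = 0
$$

A Lax-pair-informed loss penalizes the residual of such an operator identity (or of the equivalent zero-curvature condition) alongside the usual PDE residual.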

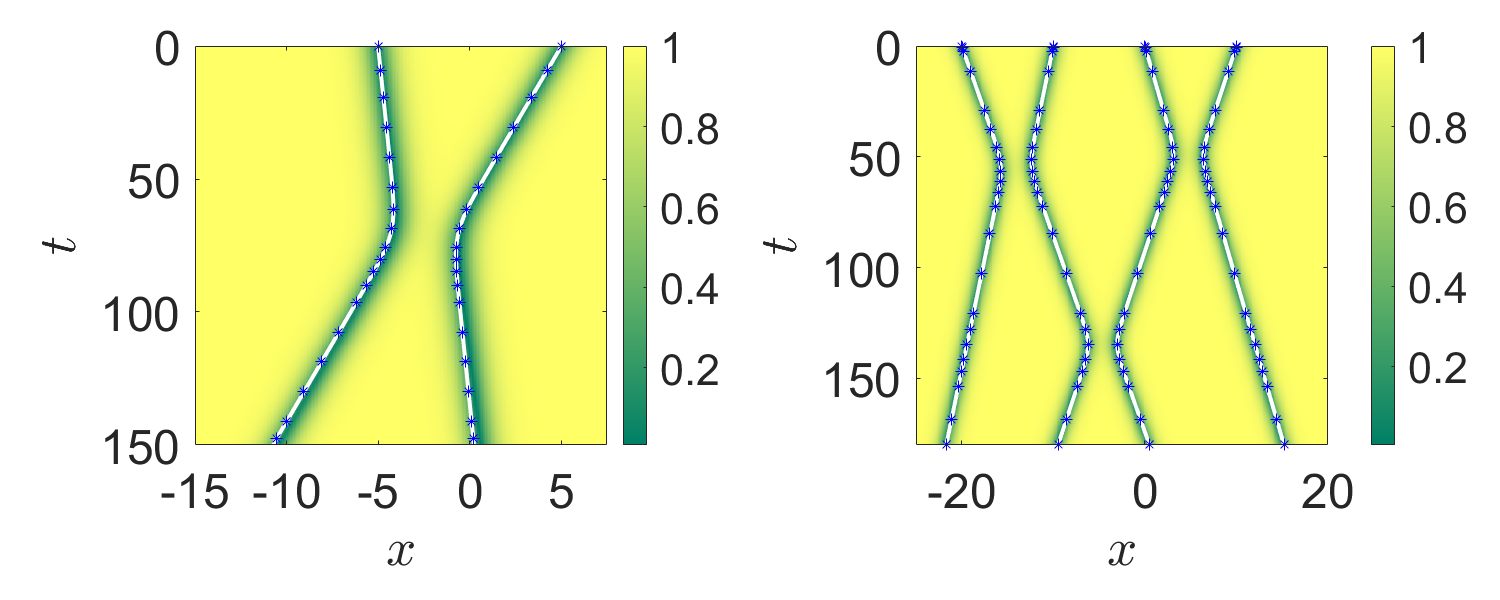

Reduced Order Modeling and Moment Equation Discovery

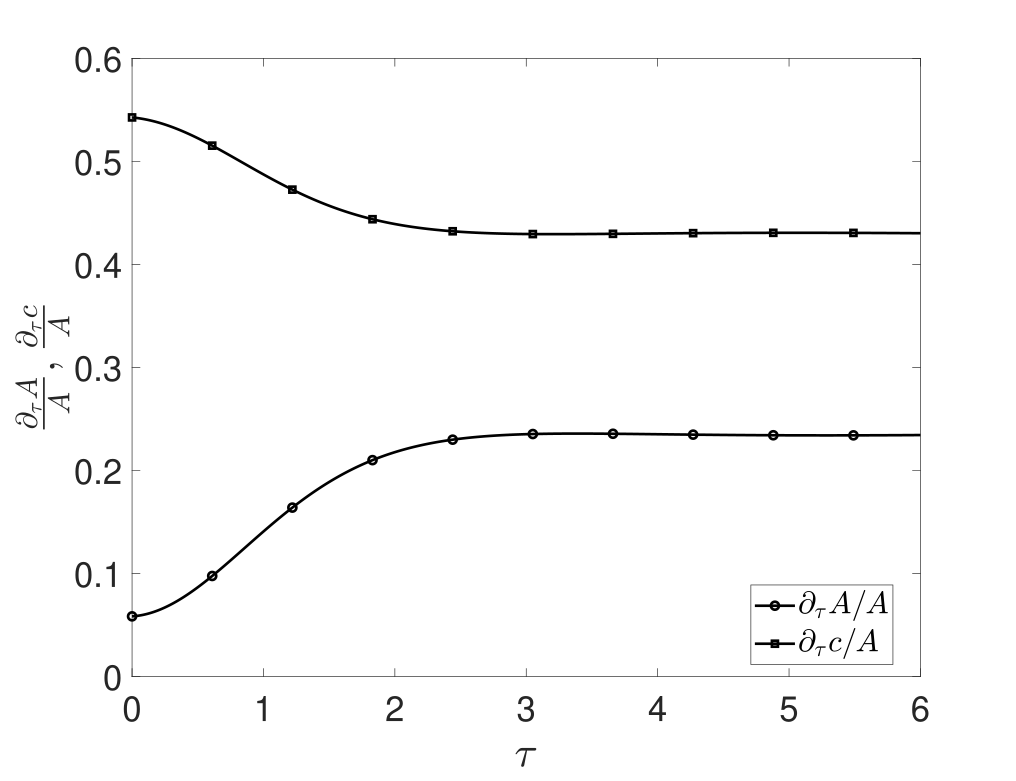

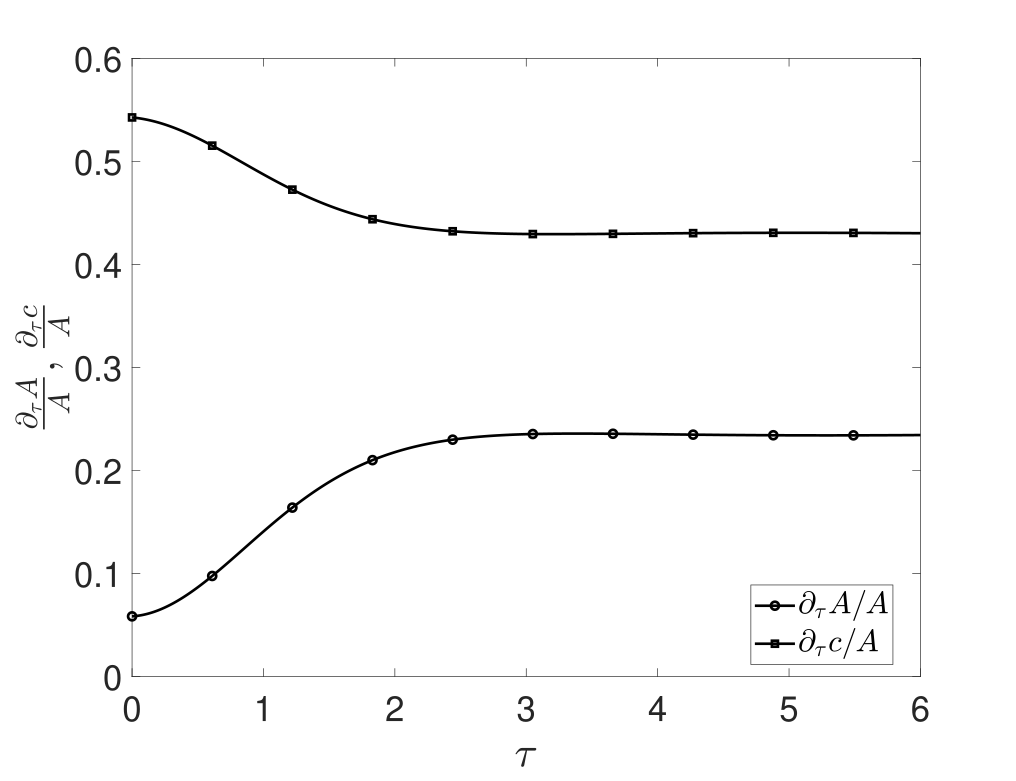

The paper discusses the use of SINDy and Neural ODEs for discovering reduced-order models, particularly moment equations derived from nonlinear dispersive PDEs. The methodology is validated on NLS models with parabolic traps, showing accurate recovery of closed moment dynamics and identification of coordinate transformations for closure.

Figure 5: Comparison of SINDy-predicted moment dynamics with ground truth, illustrating closure and transformation identification.

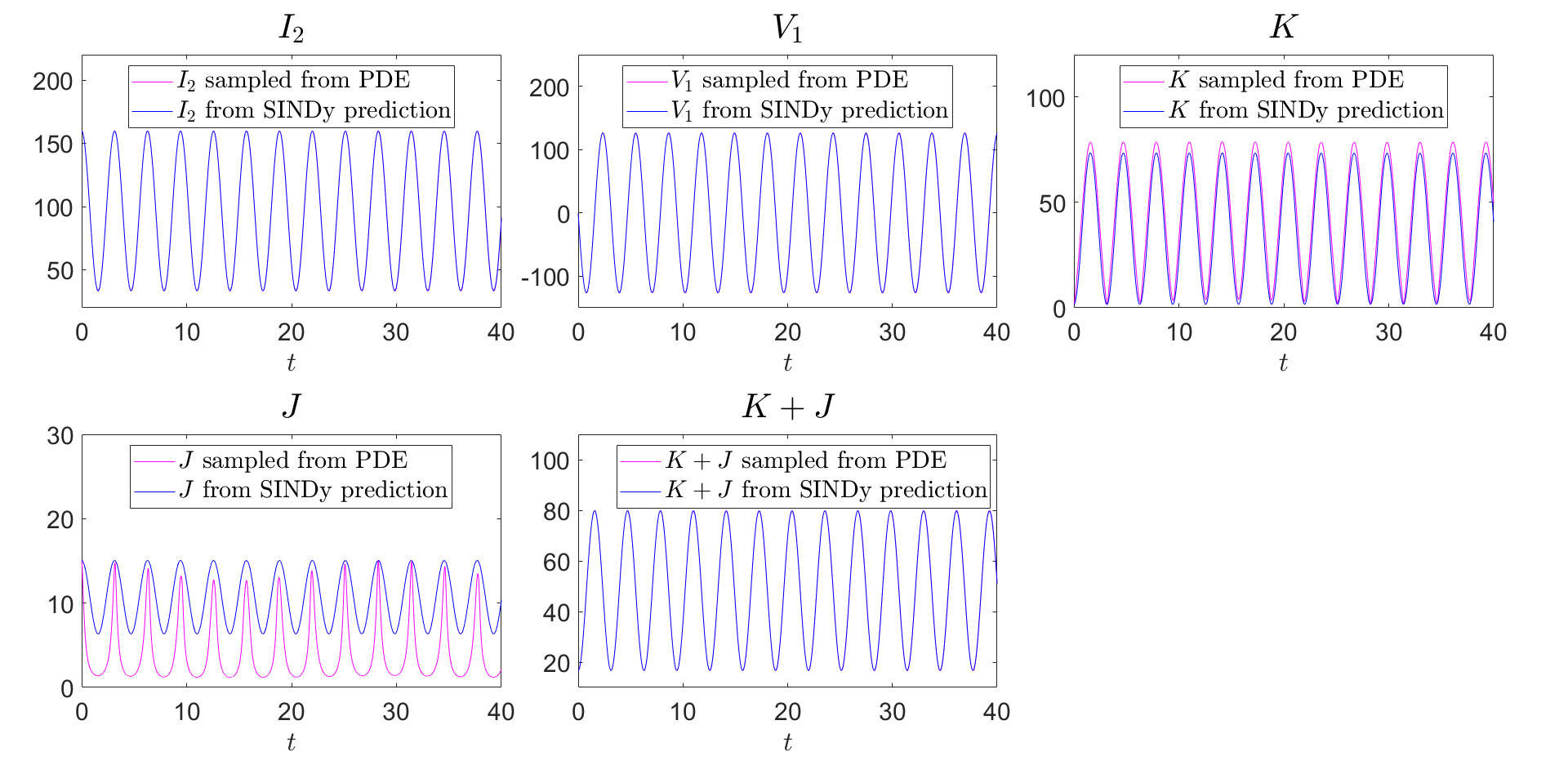

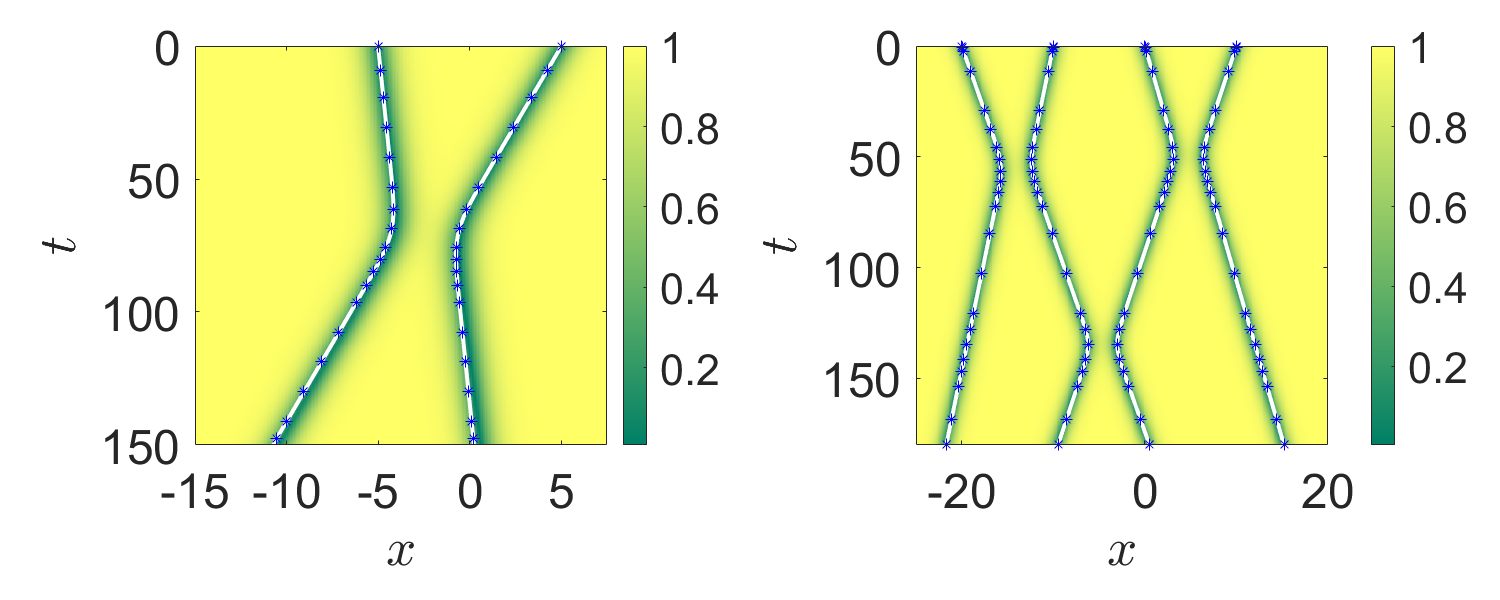

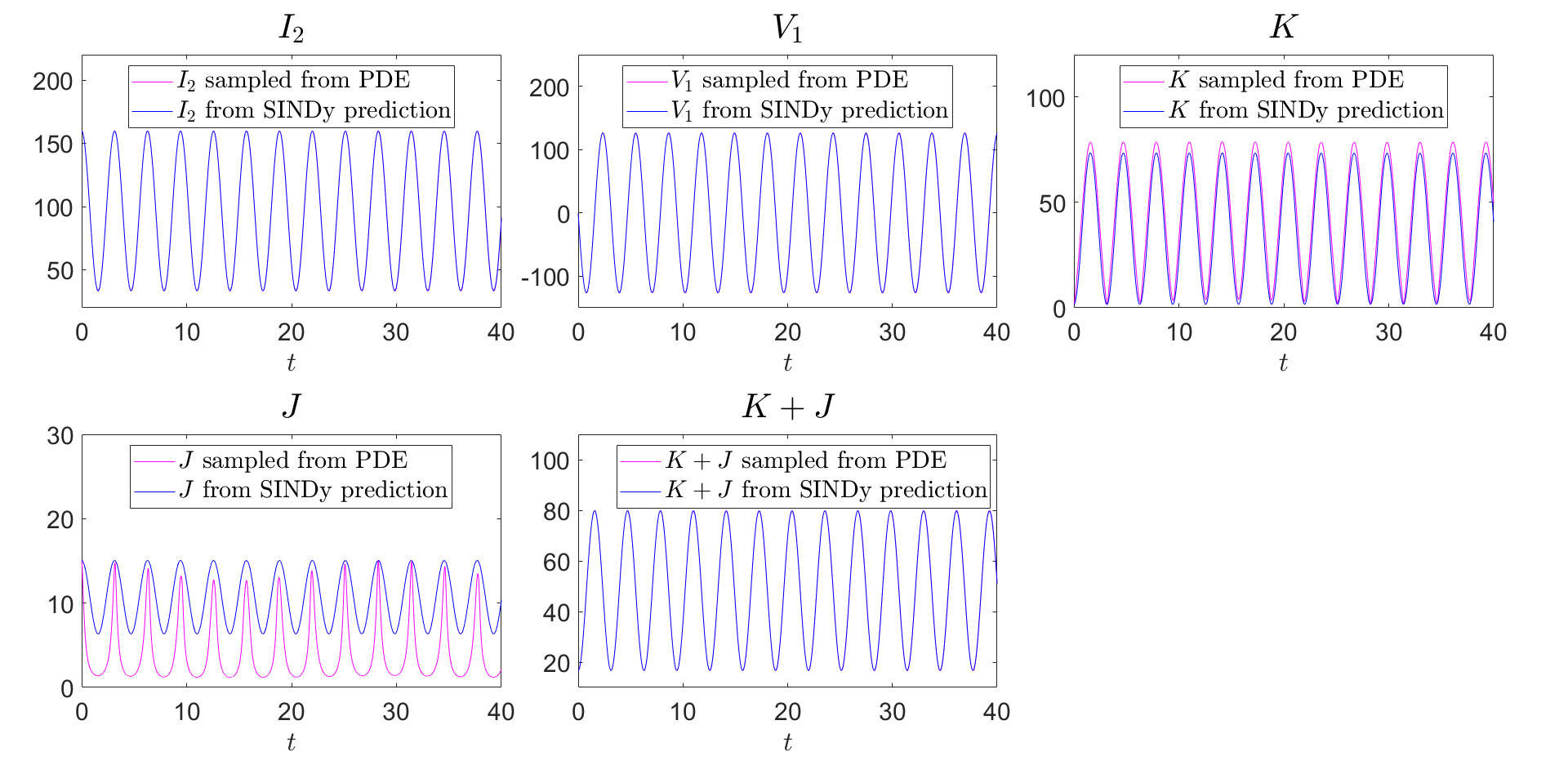

SINDy is further applied to the discovery of effective particle dynamics for soliton interactions, with variational approximations and greedy optimization used to extract interpretable ODEs for soliton positions and velocities.

Figure 6: Spatio-temporal dark-soliton interaction dynamics, comparing PDE simulation, variational approximation, and SINDy predictions.

Learning Structure: Hamiltonians, Conservation Laws, and Dissipative Extensions

Hamiltonian Neural Networks and Symplectic Learning

Hamiltonian Neural Networks (HNNs) are formalized for learning Hamiltonian functions from trajectory data, with symplectic integrators and flow map approximations (SympNets) discussed for long-horizon prediction and structure preservation. Extensions to noncanonical coordinates, latent symplectic manifolds, and graph-based Hamiltonian systems are reviewed.
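The role of symplectic integration in long-horizon prediction can be illustrated with a known Hamiltonian standing in for a learned one. The sketch below compares explicit Euler with the Stormer-Verlet (leapfrog) scheme on a pendulum. The analytic `hamiltonian` and `grad_H` are assumptions for the example; in an HNN the gradients would come from automatic differentiation of the network.

```python
import numpy as np

def hamiltonian(q, p):
    """Pendulum energy H = p^2/2 - cos(q); stands in for a learned H_theta."""
    return 0.5 * p**2 - np.cos(q)

def grad_H(q, p):
    """Analytic (dH/dq, dH/dp); an HNN would obtain these by autodiff."""
    return np.sin(q), p

def euler_step(q, p, dt):
    """Explicit Euler on Hamilton's equations (not symplectic)."""
    dHdq, dHdp = grad_H(q, p)
    return q + dt * dHdp, p - dt * dHdq

def leapfrog_step(q, p, dt):
    """Stormer-Verlet step, symplectic for separable Hamiltonians."""
    p_half = p - 0.5 * dt * grad_H(q, p)[0]
    q_new = q + dt * grad_H(q, p_half)[1]
    p_new = p_half - 0.5 * dt * grad_H(q_new, p_half)[0]
    return q_new, p_new

def energy_drift(step, n_steps=2000, dt=0.05):
    """Largest deviation of H from its initial value along the trajectory."""
    q, p = 1.0, 0.0
    e0 = hamiltonian(q, p)
    worst = 0.0
    for _ in range(n_steps):
        q, p = step(q, p, dt)
        worst = max(worst, abs(hamiltonian(q, p) - e0))
    return worst

drift_euler = energy_drift(euler_step)
drift_leapfrog = energy_drift(leapfrog_step)
print("Euler drift:   ", drift_euler)
print("Leapfrog drift:", drift_leapfrog)
```

The leapfrog energy error stays bounded while the Euler error accumulates, which is the practical motivation for pairing learned Hamiltonians with symplectic integrators or learning the flow map in a symplectic form.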

Action-Angle Variables and Symplectic Normalizing Flows

Neural methods for learning action-angle transformations are presented, leveraging symplectic normalizing flows and symplectic neural networks to map canonical coordinates to integrable tori, demonstrated on Kepler, Neumann, and Calogero–Moser systems.

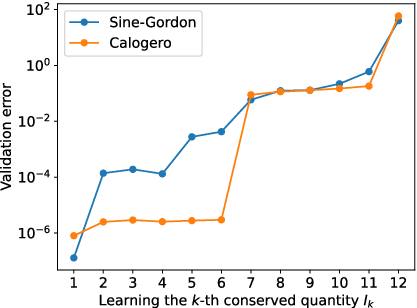

Neural Deflation for Conservation Law Discovery

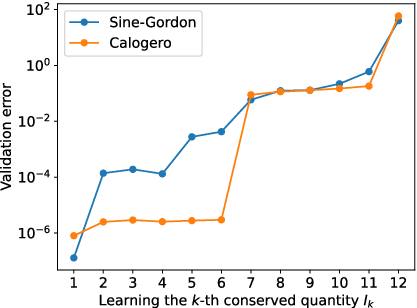

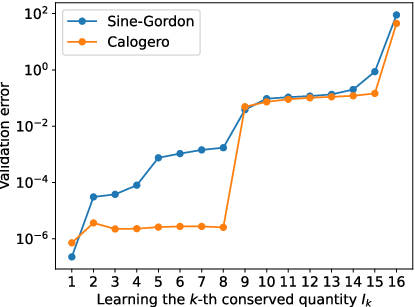

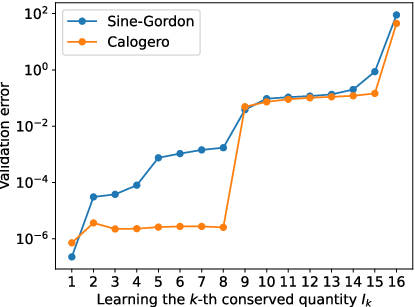

The neural deflation framework is detailed for the sequential discovery of functionally independent, Poisson-commuting conservation laws. The method is shown to recover complete sets of invariants in integrable systems (e.g., Calogero–Moser) and to detect non-integrability in others (e.g., discrete sine-Gordon).

Figure 7: Validation losses for learned conservation laws in integrable and non-integrable lattice systems, illustrating integrability detection via neural deflation.
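The commutation requirement can be made concrete with a numerical Poisson bracket. The sketch below uses a 2D isotropic harmonic oscillator, for which angular momentum is a known invariant, and central finite differences in place of automatic differentiation. The function names and test quantities are assumptions for illustration and do not reproduce the neural deflation losses themselves.

```python
import numpy as np

def poisson_bracket(F, G, z, eps=1e-5):
    """{F, G} at z = (q, p), with gradients from central finite differences."""
    n = z.size // 2

    def grad(fun):
        g = np.zeros_like(z)
        for i in range(z.size):
            dz = np.zeros_like(z)
            dz[i] = eps
            g[i] = (fun(z + dz) - fun(z - dz)) / (2.0 * eps)
        return g

    gF, gG = grad(F), grad(G)
    # Sum over dF/dq * dG/dp - dF/dp * dG/dq.
    return gF[:n] @ gG[n:] - gF[n:] @ gG[:n]

# Phase-space point ordered as z = (x, y, px, py).
def H(z):
    """Isotropic 2D harmonic oscillator."""
    return 0.5 * (z[2]**2 + z[3]**2) + 0.5 * (z[0]**2 + z[1]**2)

def Lz(z):
    """Angular momentum, a true invariant of H."""
    return z[0] * z[3] - z[1] * z[2]

def K(z):
    """Arbitrary function that is not conserved."""
    return z[0]**2 - z[1]

z0 = np.array([0.3, -0.7, 1.1, 0.4])
bracket_invariant = poisson_bracket(Lz, H, z0)
bracket_candidate = poisson_bracket(K, H, z0)
print(bracket_invariant, bracket_candidate)
```

A learned candidate invariant would be penalized for a nonzero bracket with the Hamiltonian and with previously found invariants, averaged over sampled phase-space points. A loss that cannot be driven down for further candidates is the kind of signal used to flag non-integrability.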

GENERIC formalism informed neural networks (GFINNs) are introduced for learning dynamics compatible with the GENERIC formalism, which couples reversible (energy-conserving) and irreversible (entropy-producing) dynamics. The architectures use projection operators so that the energy and entropy degeneracy conditions hold exactly. The approach is validated on deterministic and stochastic systems, with extensions to PDEs via weak-form constraints.

Data-Driven Integrability Detection and Lax Pair Learning

Deep Learning and Symbolic Approaches

The paper reviews deep learning methods for Lax pair identification, including unsupervised physics-constrained optimization and mode-collapse penalties. The AKNS class is targeted for spectral operator identification via conserved quantity matching, enabling nonlinear Fourier analysis from data.

OptPDE: Maximizing Conserved Quantities

OptPDE is presented as a framework for discovering candidate integrable PDEs by optimizing coefficients to maximize the number of detected invariants, successfully rediscovering known integrable models and proposing novel candidates.

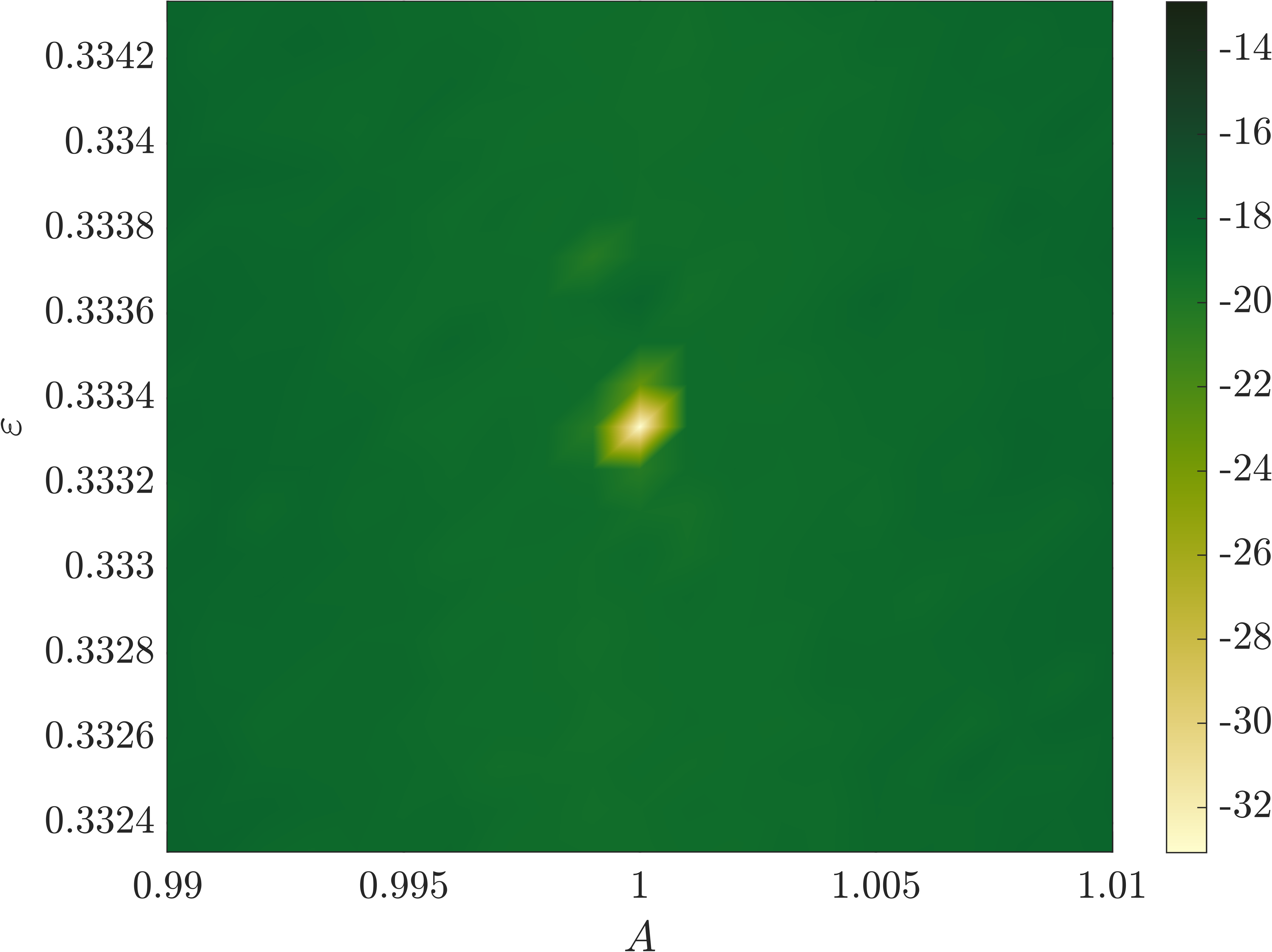

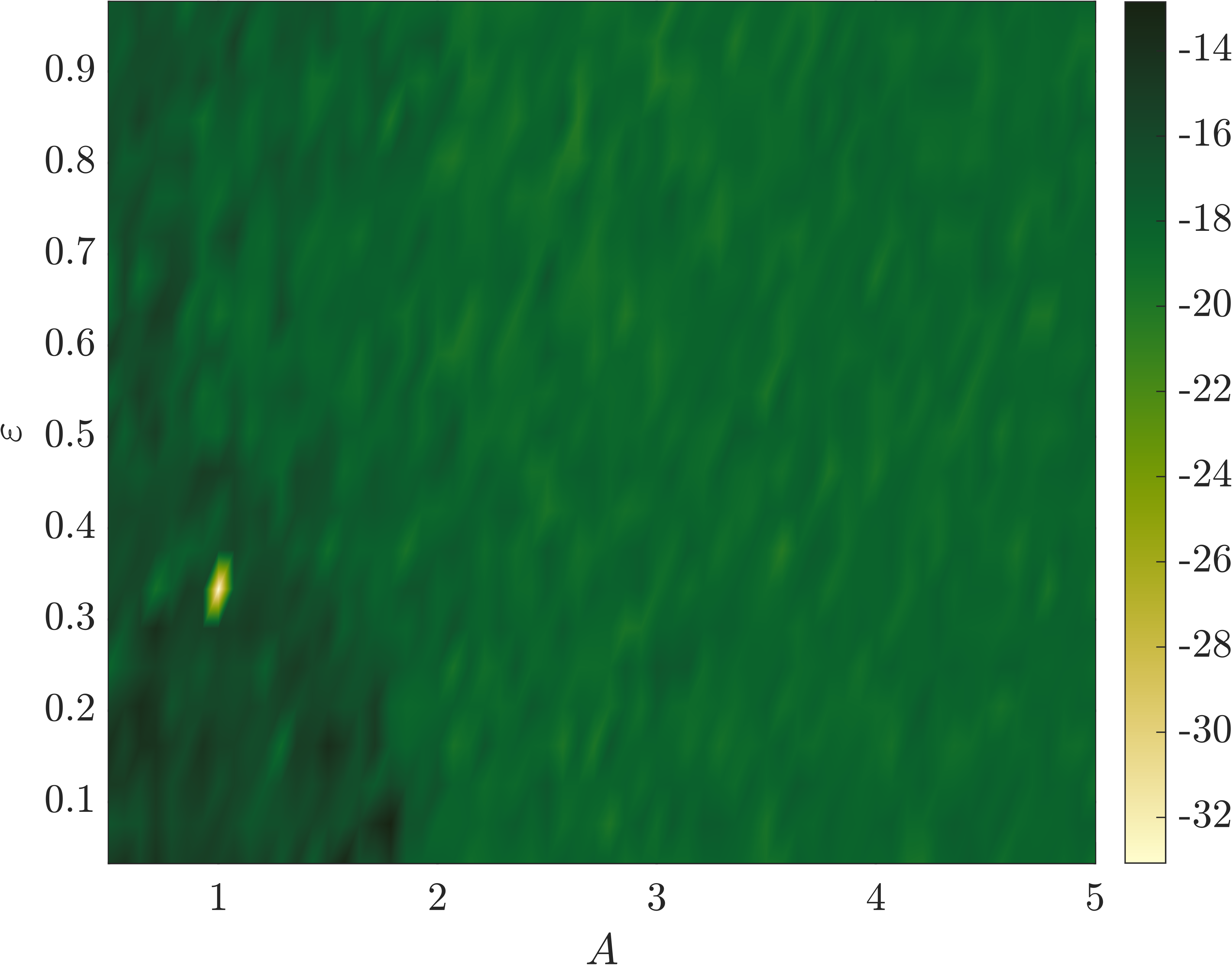

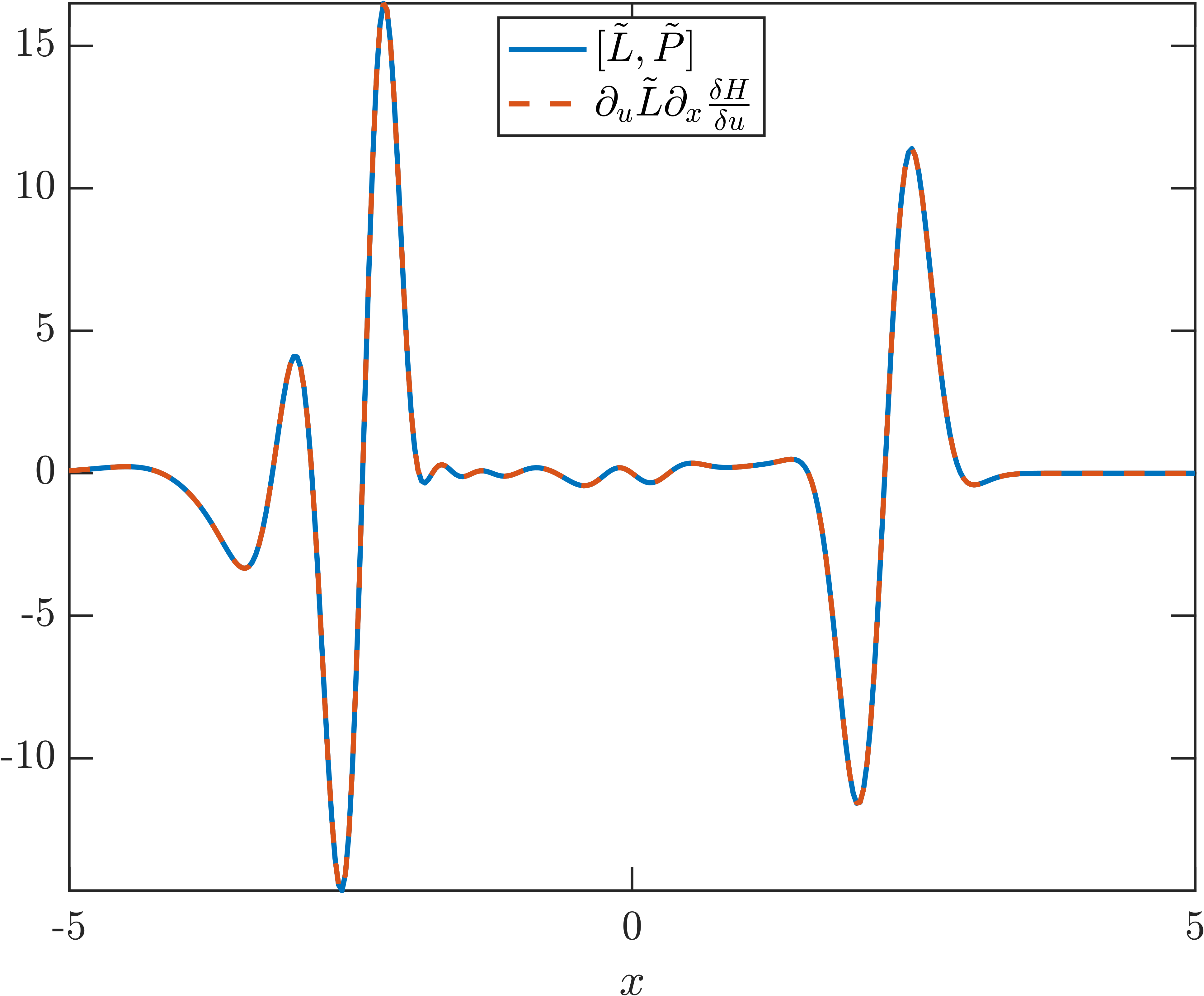

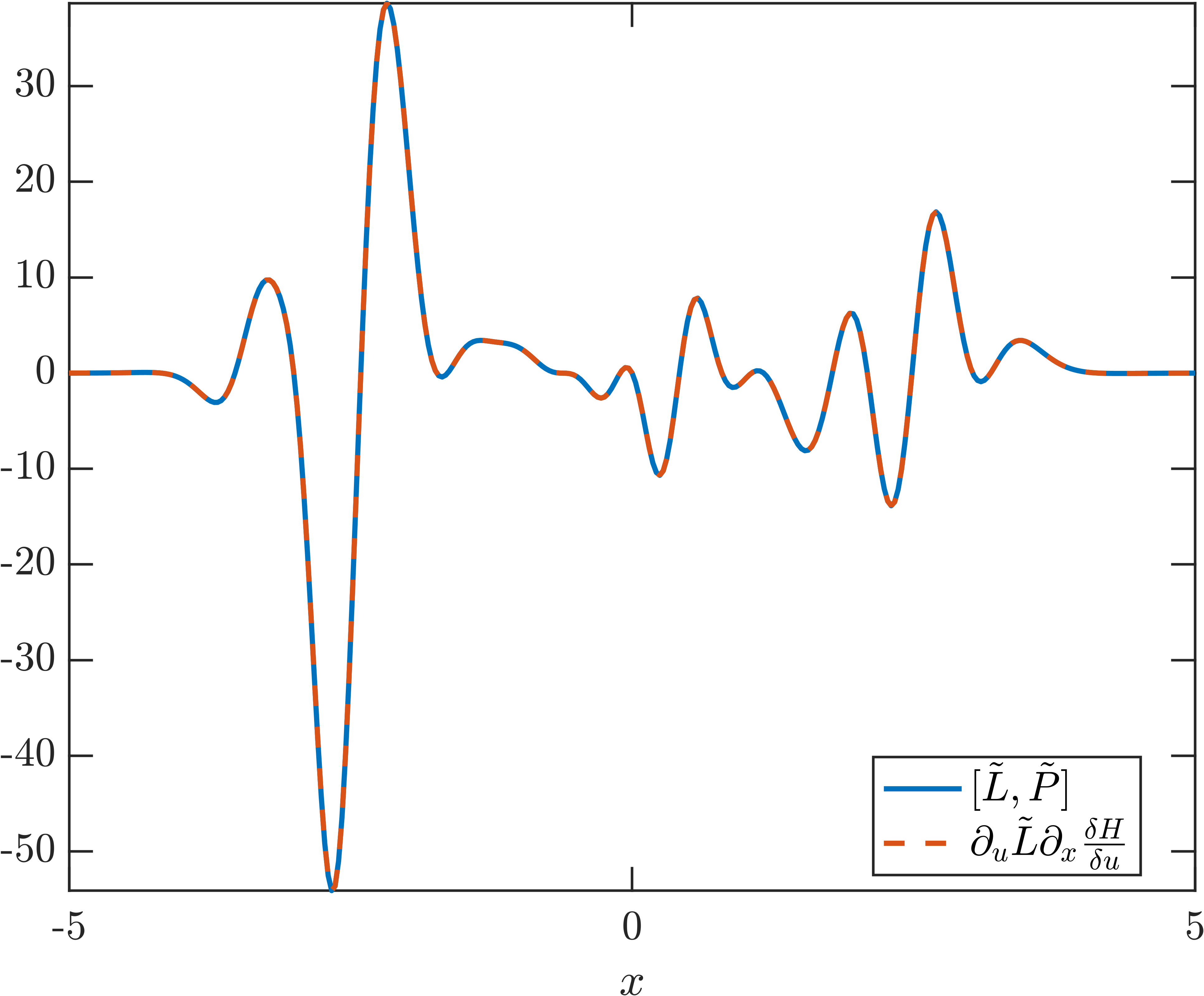

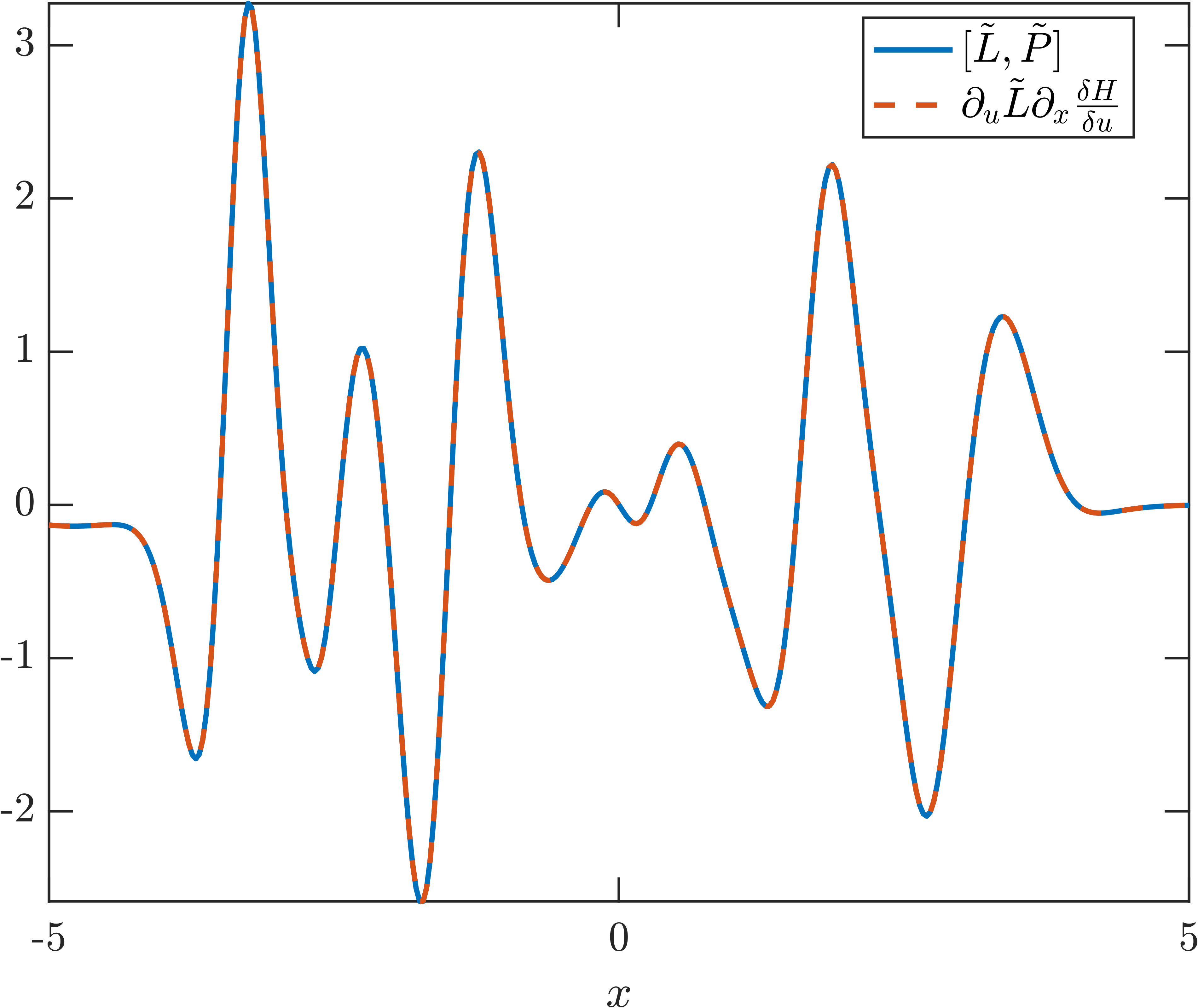

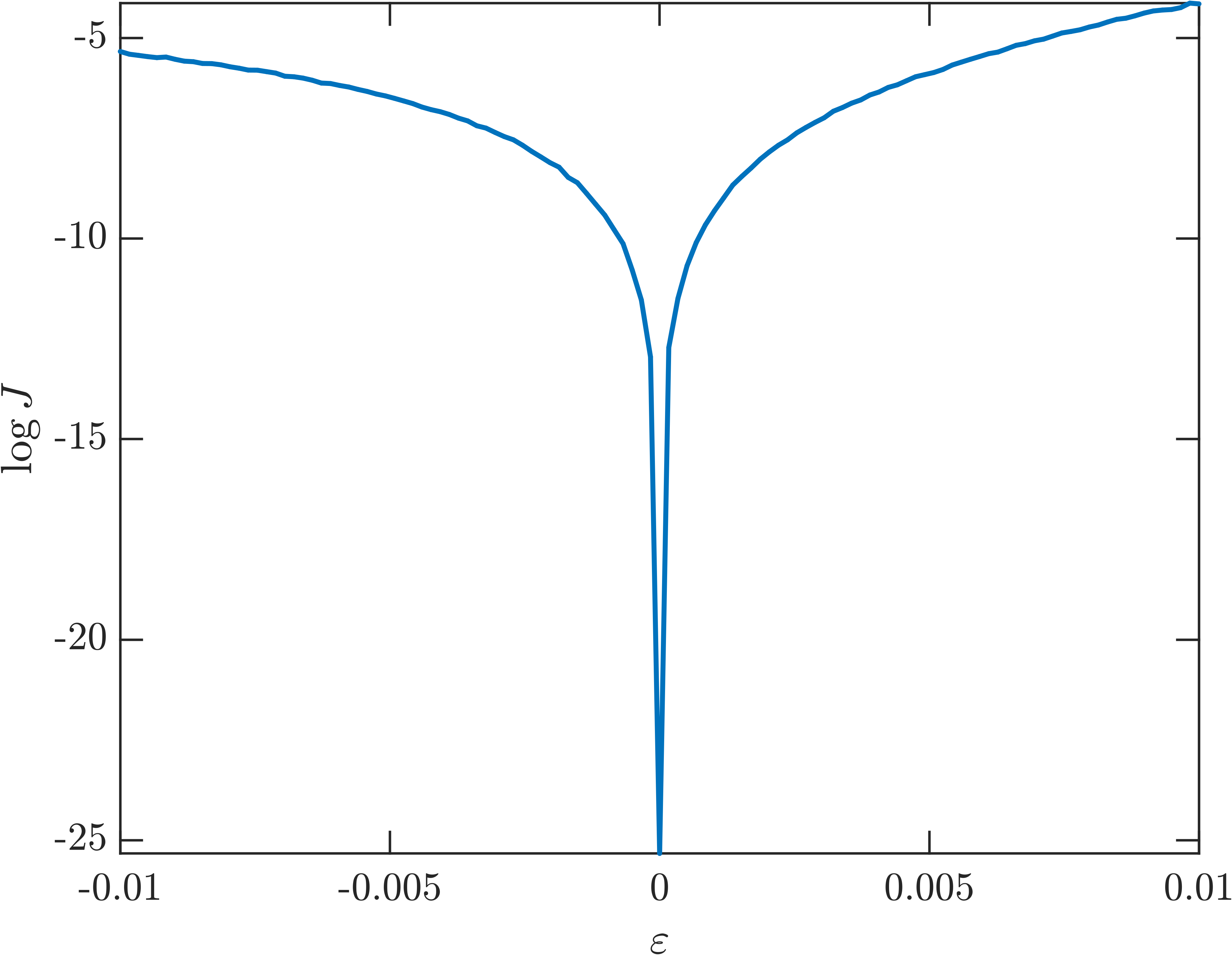

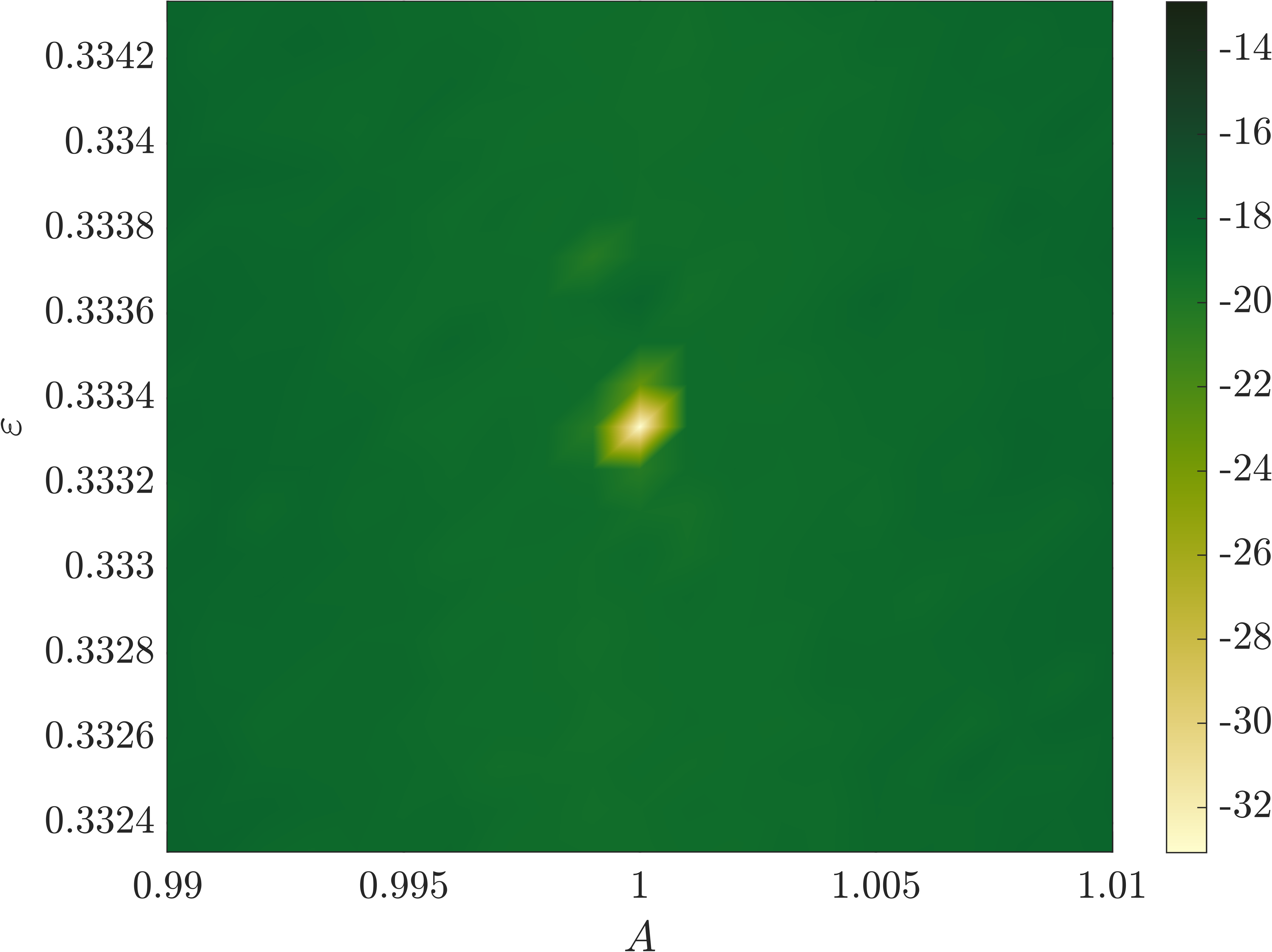

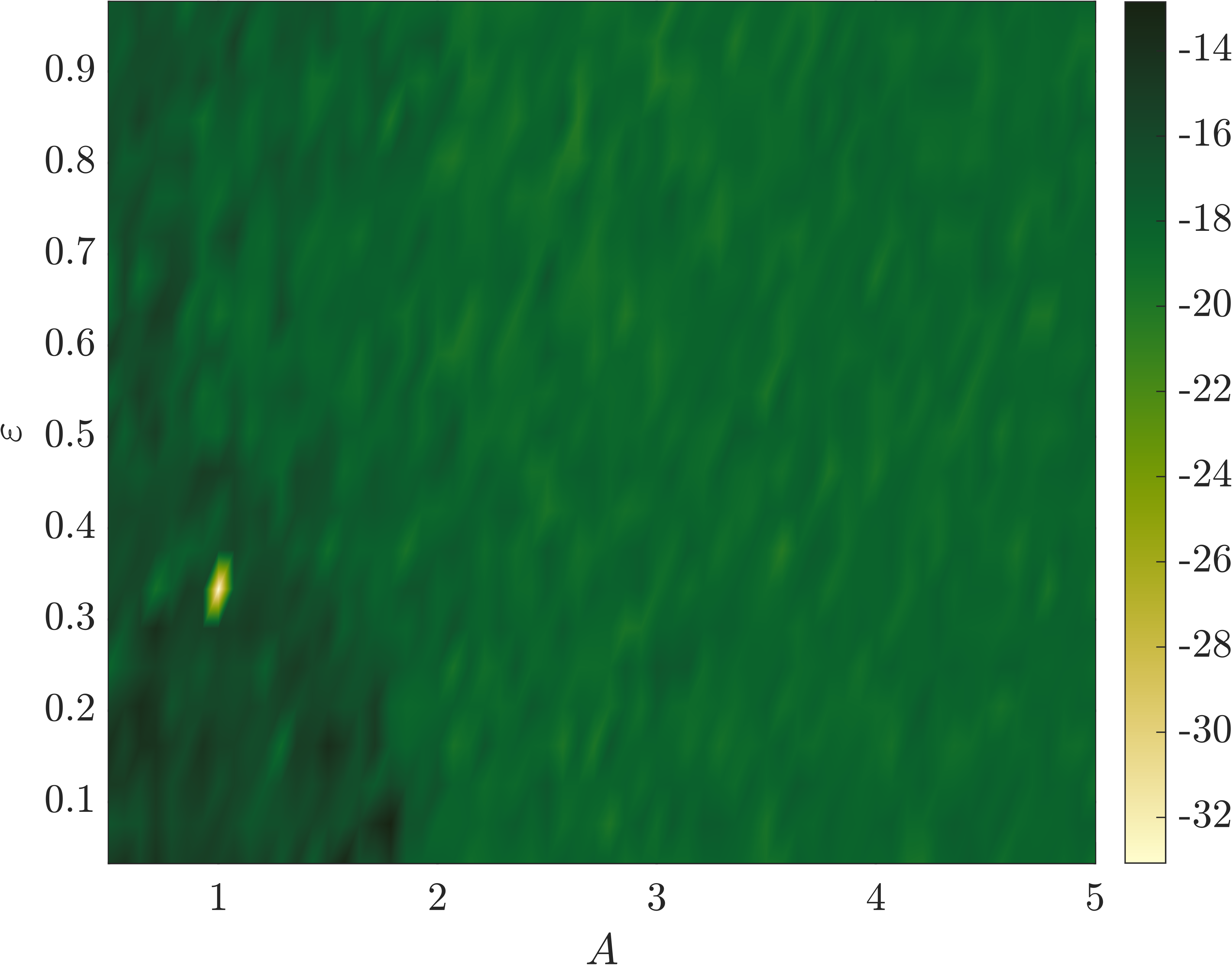

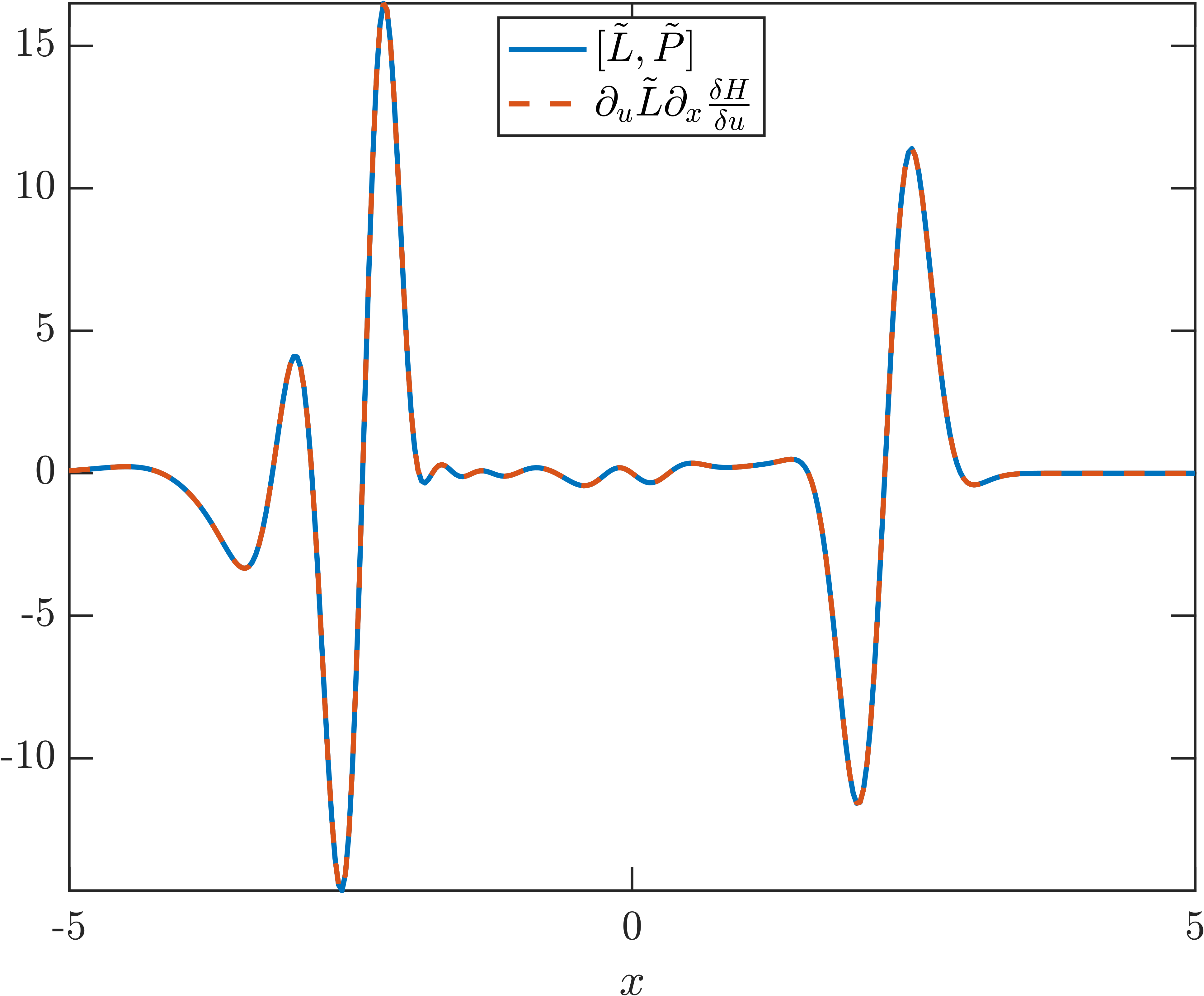

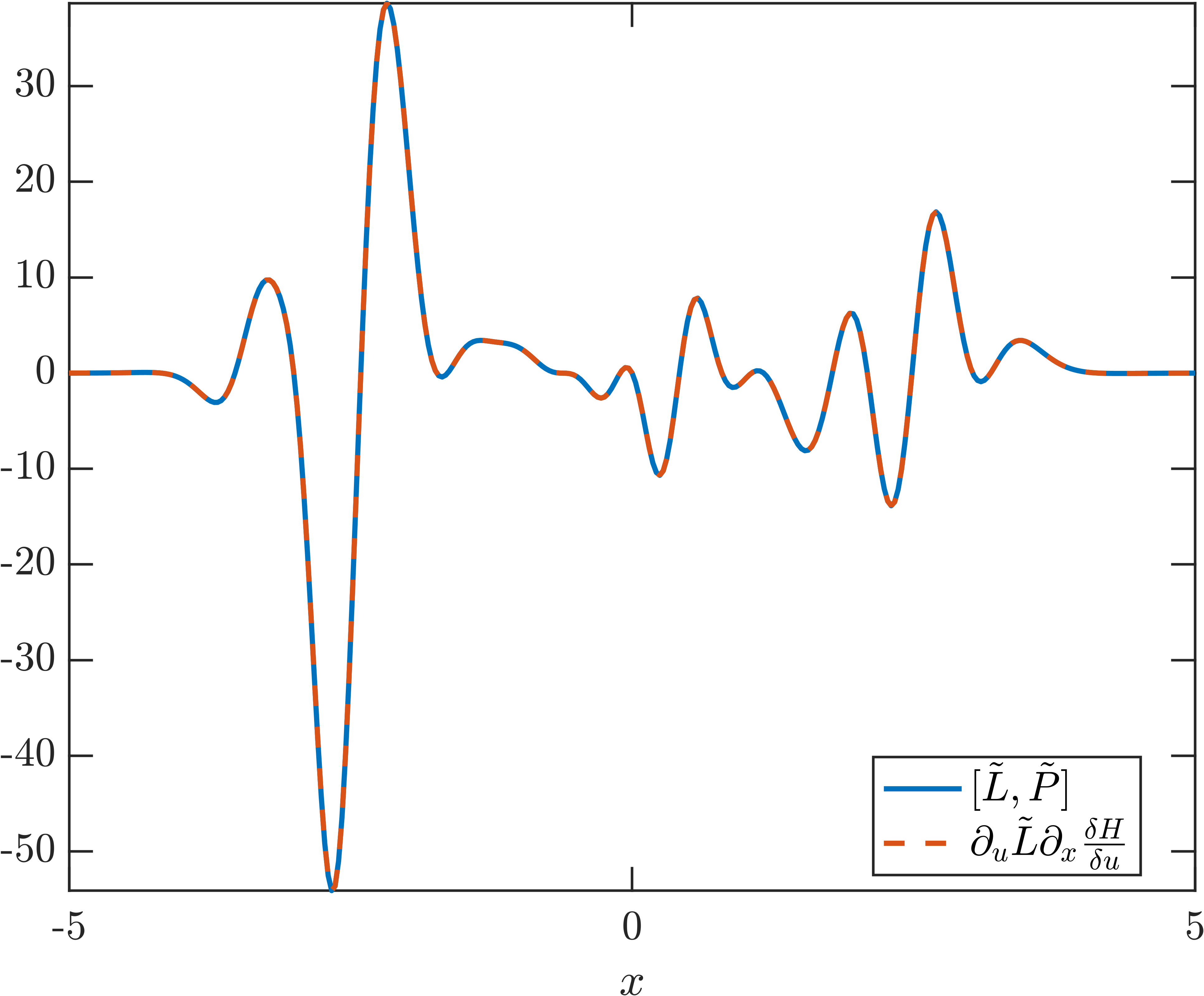

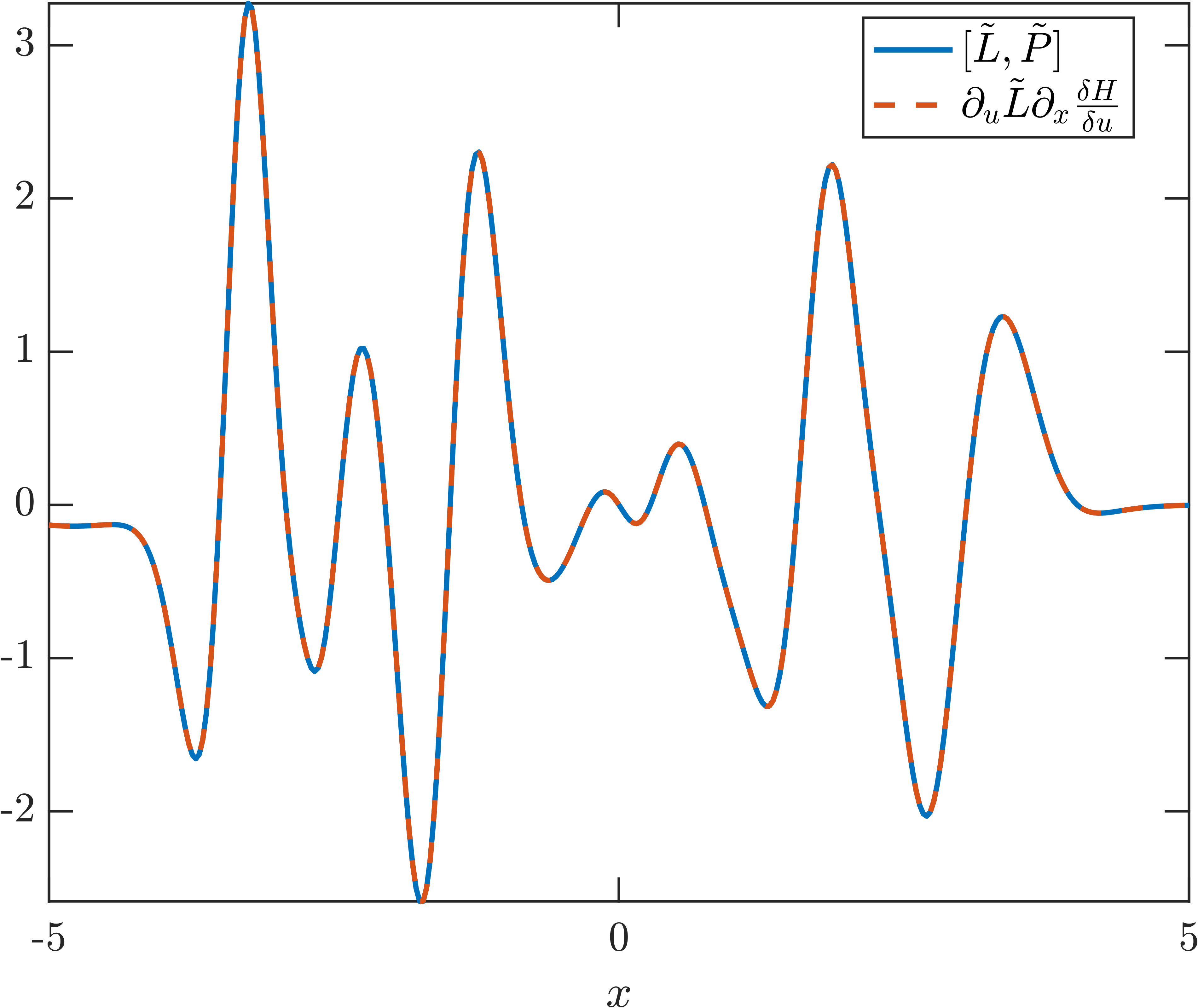

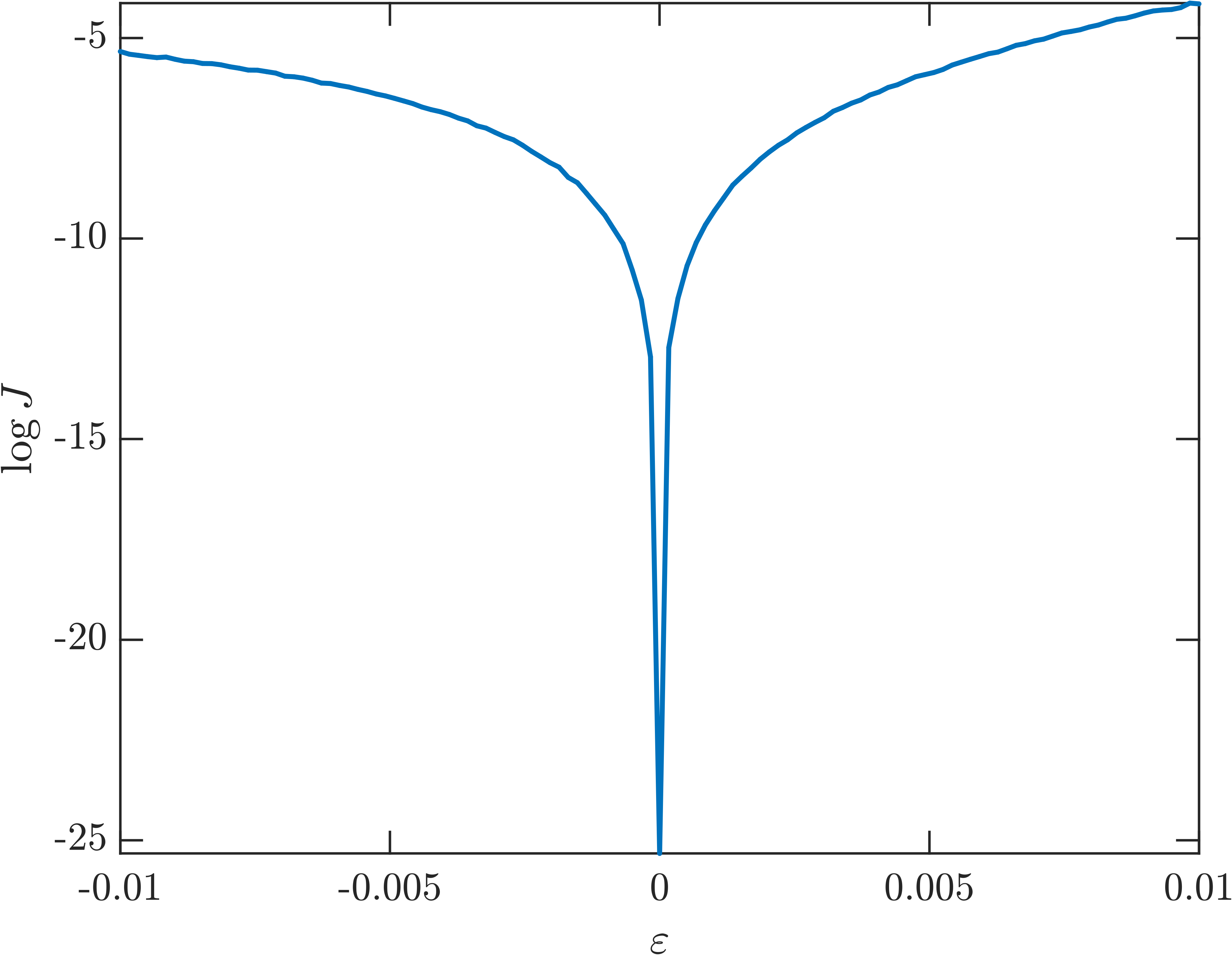

SILO: Sparse Identification of Lax Operators

SILO is formalized as a symbolic regression approach for sparse, interpretable Lax pair discovery in ODE and PDE systems. The method is validated on the Hénon–Heiles system, Euler top, and KdV equation, with high-precision integrability detection and sensitivity to non-integrable perturbations.
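The kind of residual a Lax pair search minimizes can be sketched for the Euler top, using the textbook pair $L = \hat{M}$, $P = \hat{\Omega}$ with $\dot{L} = [L, P]$. This is a numerical check of a known pair, not the SILO algorithm, which searches over symbolic libraries. The inertia values, the damped comparison system, and all function names are assumptions for illustration.

```python
import numpy as np

def hat(v):
    """Map a 3-vector to the skew matrix with hat(v) @ w == np.cross(v, w)."""
    return np.array([[0.0, -v[2], v[1]],
                     [v[2], 0.0, -v[0]],
                     [-v[1], v[0], 0.0]])

inertia = np.array([1.0, 2.0, 3.0])   # assumed principal moments of inertia

def euler_top_rhs(M):
    """Body-frame Euler equations dM/dt = M x Omega, with Omega = M / inertia."""
    return np.cross(M, M / inertia)

def damped_rhs(M):
    """Perturbed dynamics with linear damping, used as a negative control."""
    return euler_top_rhs(M) - 0.1 * M

def lax_residual(M, rhs):
    """Frobenius norm of dL/dt - [L, P] for L = hat(M), P = hat(Omega)."""
    L, P = hat(M), hat(M / inertia)
    dL_dt = hat(rhs(M))
    return np.linalg.norm(dL_dt - (L @ P - P @ L))

M0 = np.array([0.5, -1.0, 0.8])
res_true = lax_residual(M0, euler_top_rhs)
res_damped = lax_residual(M0, damped_rhs)
print(res_true, res_damped)
```

This particular pair only encodes conservation of $|M|^2$ through $\operatorname{tr} L^2$, so a vanishing residual here illustrates the loss but is not by itself a certificate of integrability. That gap is one reason degenerate or uninformative Lax pairs need to be screened out.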

Figure 8: Parameter search for integrability detection in the Hénon–Heiles system, showing sharp loss minima at integrable points.

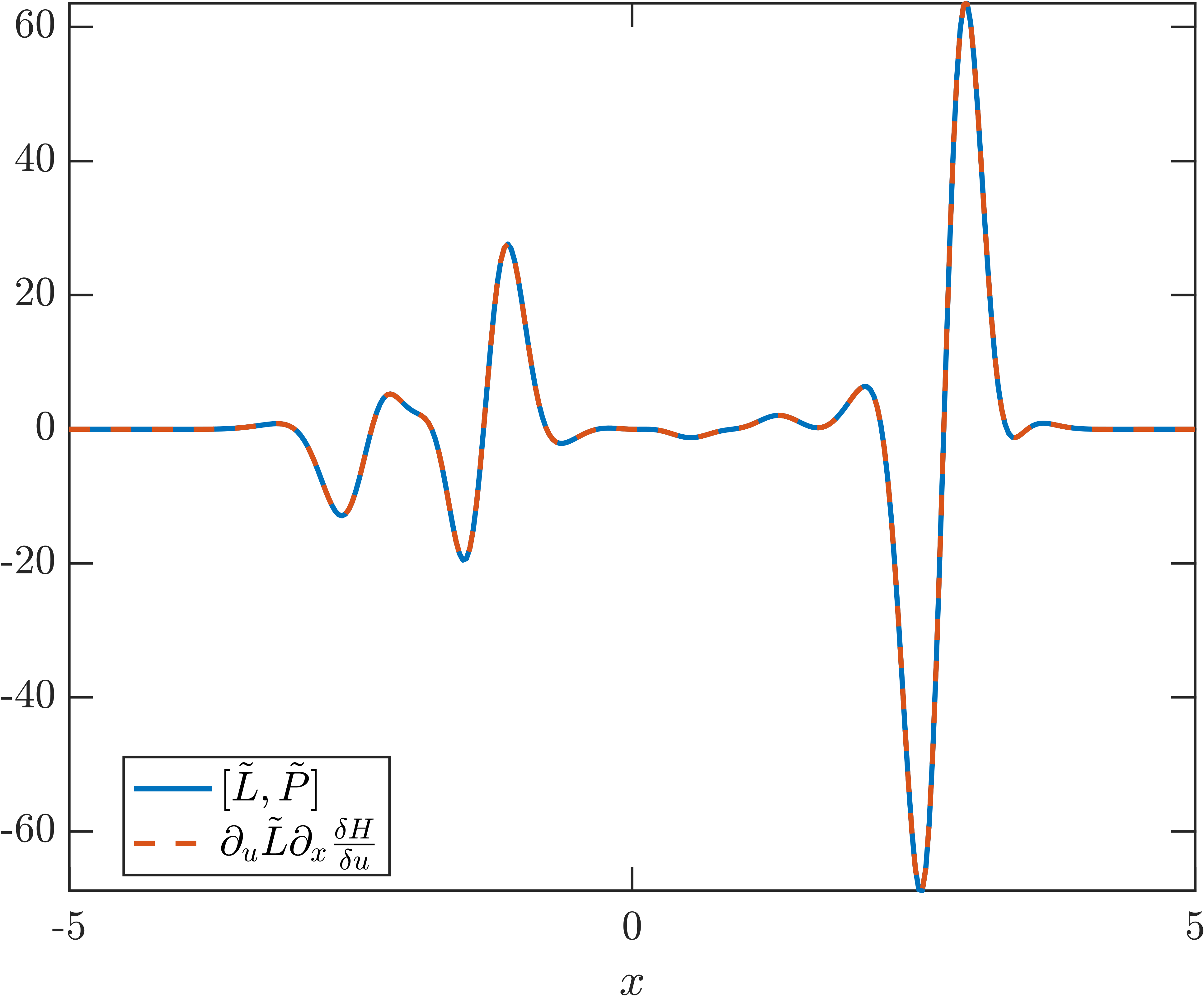

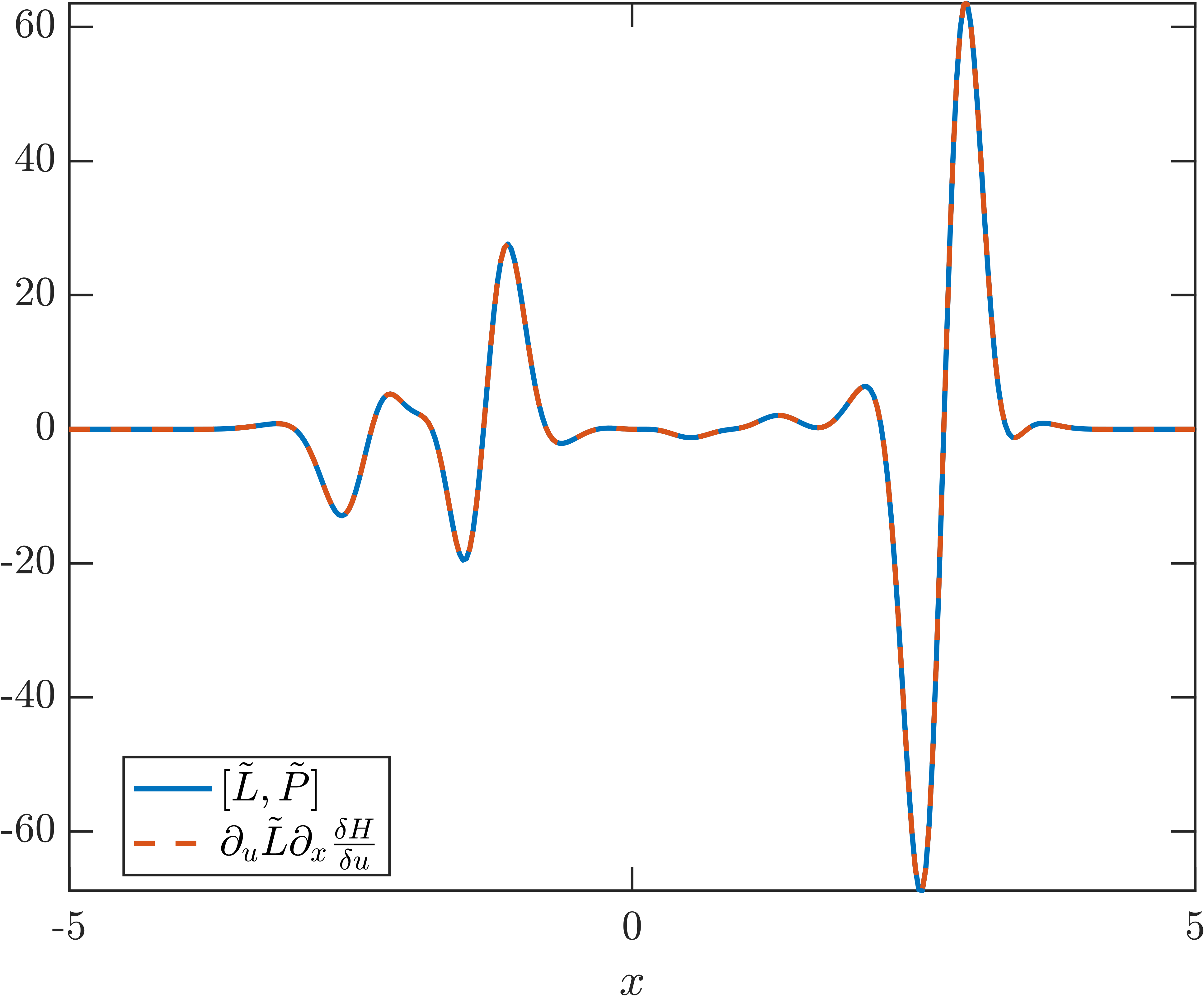

Figure 9: Cross-validation of SILO-discovered Lax pairs for KdV, comparing generalized Poisson brackets and commutators on unseen samples.

Figure 10: Perturbation study of KdV integrability, showing loss dependence on non-integrable Hamiltonian perturbations.

Implications, Limitations, and Future Directions

The integration of machine learning with nonlinear wave theory offers substantial opportunities for equation discovery, surrogate modeling, and the identification of hidden structure in complex systems. The paper emphasizes the importance of embedding physical priors—symmetries, conservation laws, integrability—into learning architectures to ensure interpretability, generalization, and physical fidelity.

Challenges remain in scaling these methods to high-dimensional systems, automating library construction, and avoiding degenerate or fake Lax pairs. The authors highlight promising directions in soliton gas modeling, dispersive shock wave theory, reduced-order modeling for transport-dominated problems, symbolic learning frameworks (AI-Descartes, AI-Hilbert), and operator learning for dispersive and integrable systems.

The omission of Koopman operator theory is noted, with a call for future work to integrate Koopman-based approaches with operator learning and conservation law discovery in nonlinear wave contexts.

Conclusion

This paper provides a rigorous and technically detailed synthesis of data-driven methods for nonlinear wave analysis, emphasizing the complementarity of machine learning and analytical approaches. By embedding structural priors and leveraging modern neural architectures, researchers can accelerate the discovery of governing equations, symmetries, conservation laws, and integrability properties in complex wave systems. The convergence of classical analysis and machine learning is poised to expand the frontiers of nonlinear wave science, offering new tools for both theoretical exploration and practical application.