- The paper presents TraitBasis, a novel method for simulating user traits and stress-testing AI agents to reveal robustness issues.

- It identifies trait directions in a model's activation space, yielding trait vectors that can be scaled at inference time without fine-tuning. Against prompt-based, SFT, and LoRA-based baselines, it improves realism by 10% and stability by 19.8%.

- Agents evaluated against trait-perturbed simulated users show performance degradation of up to 30%, emphasizing the gap between benchmark and real-world AI behavior.

Impatient Users Confuse AI Agents: High-fidelity Simulations of Human Traits for Testing Agents

Introduction

The paper "Impatient Users Confuse AI Agents: High-fidelity Simulations of Human Traits for Testing Agents" (2510.04491) addresses a critical gap in robustness testing for conversational AI agents. Despite strong results on standard benchmarks, AI agents often falter when user behavior deviates even slightly, for example when users become more impatient or less coherent. Current benchmarks do not adequately capture this fragility. To address this, the authors introduce TraitBasis, a model-agnostic method for simulating realistic user traits such as impatience, confusion, skepticism, and incoherence. Applied to τ-Bench, TraitBasis generates controlled trait perturbations that stress-test AI agents under more realistic user behavior.

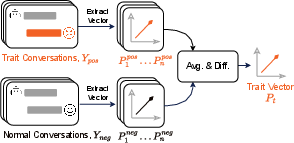

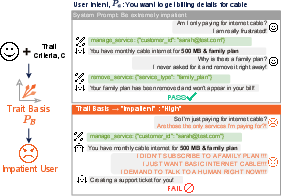

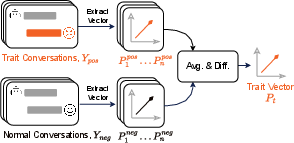

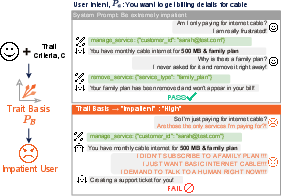

Figure 1: Illustration of our approach and comparison with prompt-based tuning. TraitBasis shows enhanced robustness when simulating user traits compared to prompt-based methods.

TraitBasis Methodology

TraitBasis identifies directions in the activation space of neural networks that correspond to specific user traits. Each trait direction is estimated by contrasting activations from pairs of positive and negative trait exemplars. The resulting vectors, which the authors call TraitBasis, can be scaled and composed at inference time, so trait intensity can be adjusted and several traits combined in a single simulated user. The approach is model-agnostic and requires neither fine-tuning nor additional data, making it a lightweight option for diverse AI testing scenarios.
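The contrast-and-steer idea can be illustrated with a small sketch. This is not the authors' implementation: it assumes a simple mean-difference estimator over paired exemplar activations, uses synthetic NumPy arrays in place of real model activations, and the names `trait_direction` and `steer` are hypothetical helpers introduced only for illustration.

```python
import numpy as np

def trait_direction(pos_acts, neg_acts):
    """Unit-norm mean difference between positive and negative exemplar activations.

    Assumption: a mean-difference estimator; the paper's exact estimator may differ.
    """
    diff = np.asarray(pos_acts).mean(axis=0) - np.asarray(neg_acts).mean(axis=0)
    return diff / np.linalg.norm(diff)

def steer(hidden, directions, strengths):
    """Add a weighted sum of trait directions to a hidden state (scaling and composition)."""
    out = np.asarray(hidden, dtype=float).copy()
    for name, alpha in strengths.items():
        out = out + alpha * directions[name]
    return out

rng = np.random.default_rng(0)
dim = 16

# Synthetic stand-ins for activations: each trait shifts one hidden axis.
true_impatience = np.eye(dim)[0]
true_confusion = np.eye(dim)[1]
base = rng.normal(size=(32, dim))

def exemplars(axis, sign):
    # Paired exemplars share the same base content plus small noise.
    noise = 0.1 * rng.normal(size=base.shape)
    return base + sign * 2.0 * axis + noise

directions = {
    "impatience": trait_direction(exemplars(true_impatience, +1), exemplars(true_impatience, -1)),
    "confusion": trait_direction(exemplars(true_confusion, +1), exemplars(true_confusion, -1)),
}

# Compose two traits at different intensities on one hidden state.
h = rng.normal(size=dim)
steered = steer(h, directions, {"impatience": 3.0, "confusion": 1.5})
```

In this toy setup the strengths passed to `steer` play the role of trait intensity, and passing several traits at once corresponds to composition. In a real model, the addition would be applied to hidden states at a chosen layer during generation.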

Empirical Results

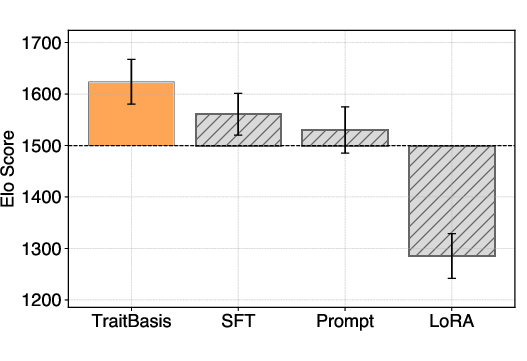

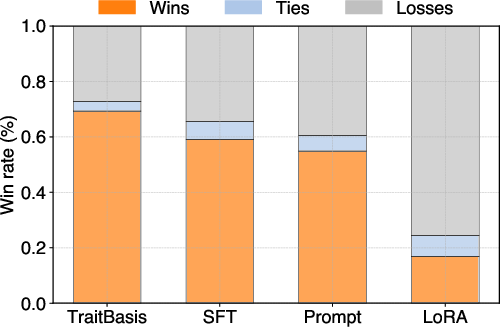

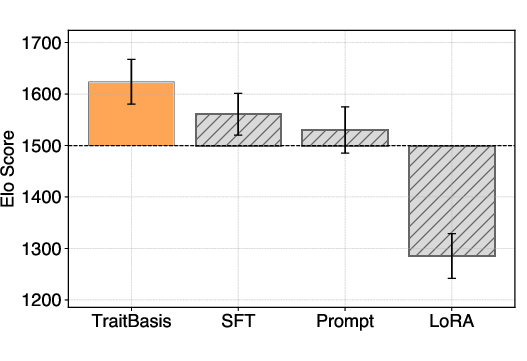

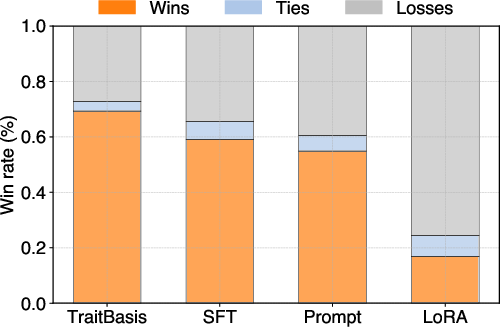

Compared with prompt-based, SFT, and LoRA-based baselines, TraitBasis simulates traits with higher realism, fidelity, stability, and compositionality: it improves realism by 10%, fidelity by 2.5%, stability over extended dialogues by 19.8%, and compositionality by 11%. When agents are evaluated against users whose behavior is altered with TraitBasis, performance degrades by 2% to 30% across the models tested, underscoring the brittleness of current AI agents.

Figure 2: Elo scores and win rates of four methods from pairwise comparisons. TraitBasis excels in simulating realistic traits.
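For readers unfamiliar with how Elo scores and win rates are derived from pairwise comparisons, the following is a minimal sketch of the standard Elo update. The K-factor of 32, the initial rating of 1000, the method names, and the toy comparison list are all assumptions for illustration; they are not the paper's settings or results.

```python
def expected_score(r_a, r_b):
    """Standard Elo expected score of a player rated r_a against one rated r_b."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400.0))

def elo_from_comparisons(methods, comparisons, k=32.0, initial=1000.0):
    """Sequentially update ratings from (winner, loser) pairs. k and initial are assumed values."""
    ratings = {m: initial for m in methods}
    for winner, loser in comparisons:
        delta = k * (1.0 - expected_score(ratings[winner], ratings[loser]))
        ratings[winner] += delta
        ratings[loser] -= delta
    return ratings

def win_rates(methods, comparisons):
    """Fraction of comparisons each method won, over the comparisons it took part in."""
    wins = {m: 0 for m in methods}
    games = {m: 0 for m in methods}
    for winner, loser in comparisons:
        wins[winner] += 1
        games[winner] += 1
        games[loser] += 1
    return {m: (wins[m] / games[m] if games[m] else 0.0) for m in methods}

# Toy data with placeholder method names (hypothetical, not results from the paper).
methods = ["method_a", "method_b", "method_c"]
comparisons = [
    ("method_a", "method_b"),
    ("method_a", "method_c"),
    ("method_b", "method_c"),
]
ratings = elo_from_comparisons(methods, comparisons)
rates = win_rates(methods, comparisons)
```

Sequential Elo depends on the order of comparisons, so evaluations of this kind often shuffle the comparison list and average ratings over several passes.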

Implications and Future Directions

TraitBasis offers a scalable way to evaluate AI agents against realistic variations in user behavior, with implications for improving agent reliability in unpredictable real-world interactions. By enabling systematic stress-testing and QA processes, it supports the development of AI agents that remain robust under diverse, dynamic, and potentially adversarial user interactions.

This study opens avenues for future research to enhance AI agent evaluations, addressing both theoretical and practical challenges. Future work could explore the integration of more complex, non-linear forms of trait compositionality and the application of this method to other domains of AI interaction beyond conversational agents.

Conclusion

TraitBasis provides a pioneering approach to simulating high-fidelity human traits for AI robustness testing. By identifying and manipulating trait vectors in activation spaces, it offers a simple yet effective tool for stress-testing AI agents. This research contributes significantly to bridging the gap between agent performance on benchmarks and real-world scenarios, ultimately fostering the development of more resilient and adaptive AI systems.