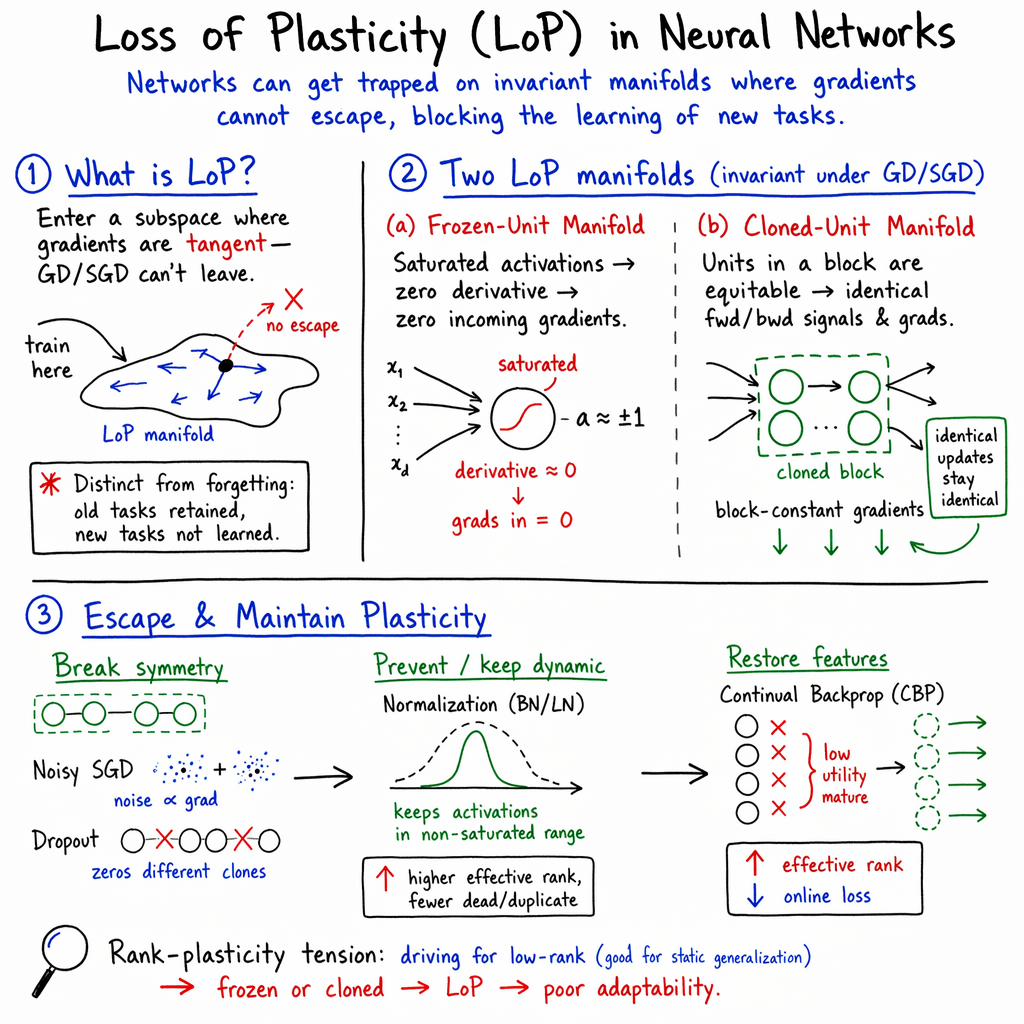

- The paper identifies invariant sub-manifolds, such as frozen and cloned units, that trap gradient descent and hinder the formation of new representations.

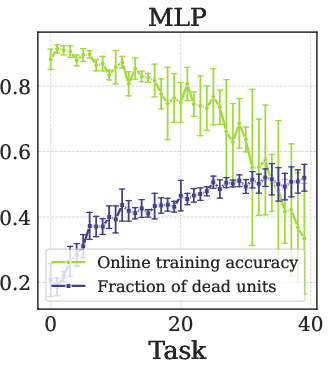

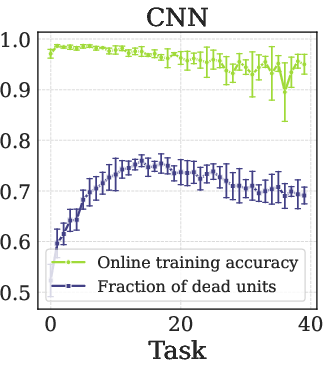

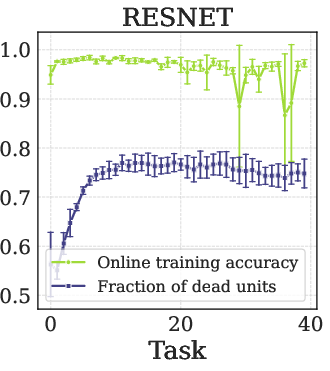

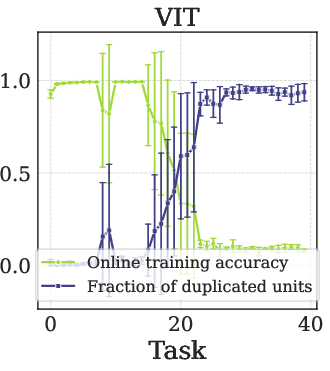

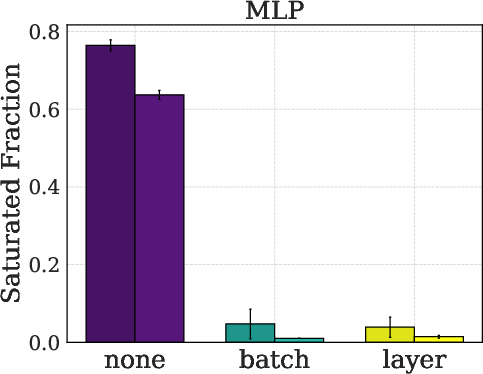

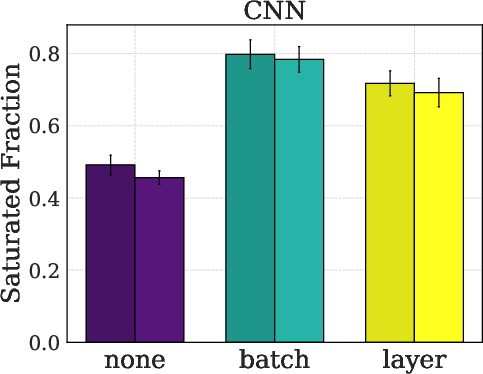

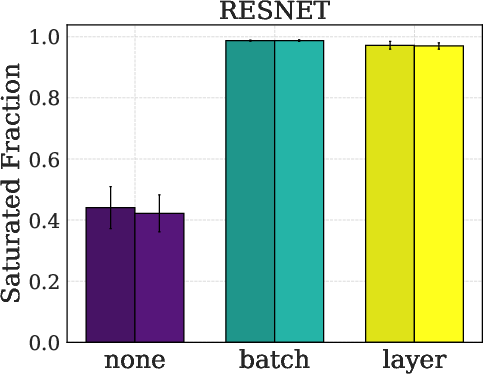

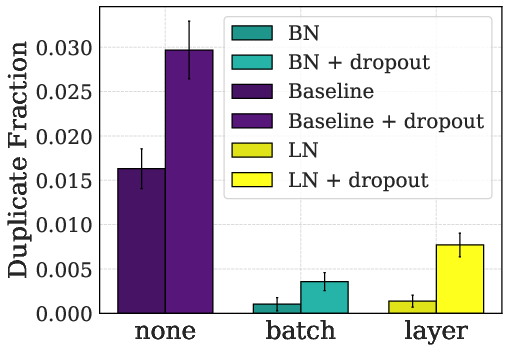

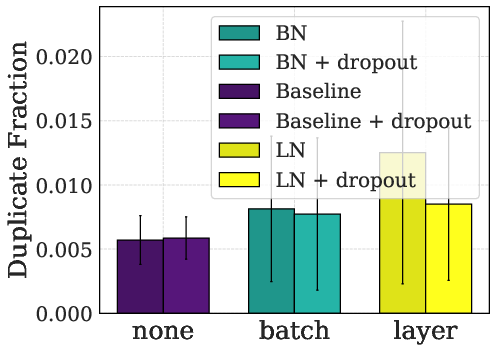

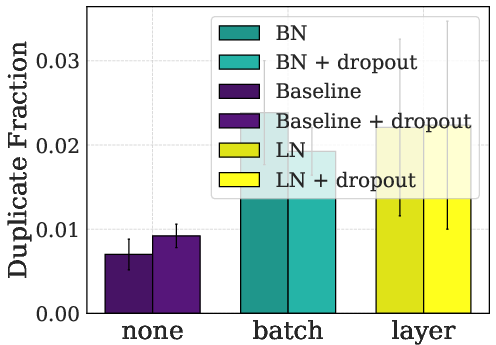

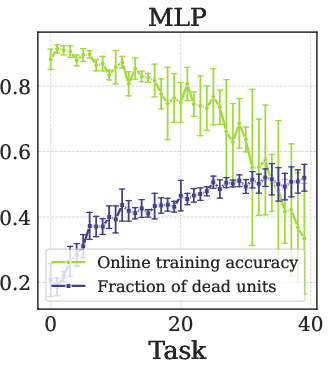

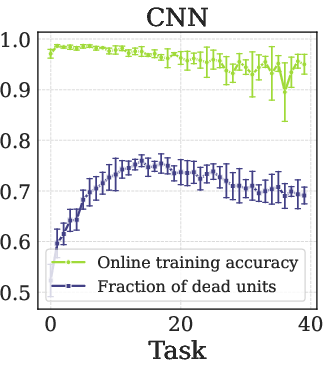

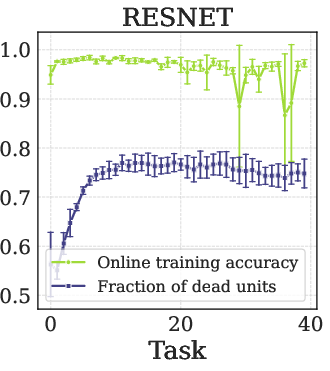

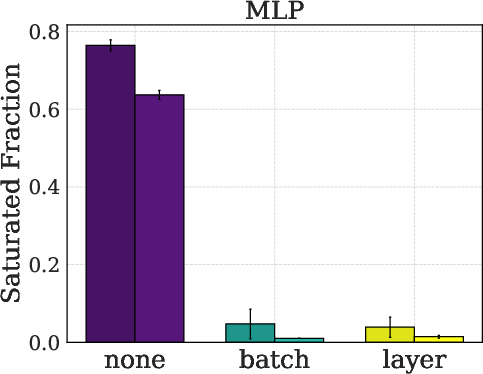

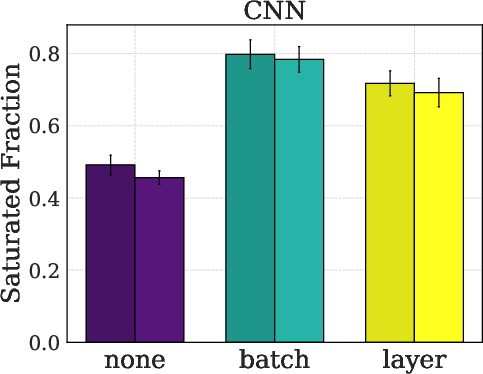

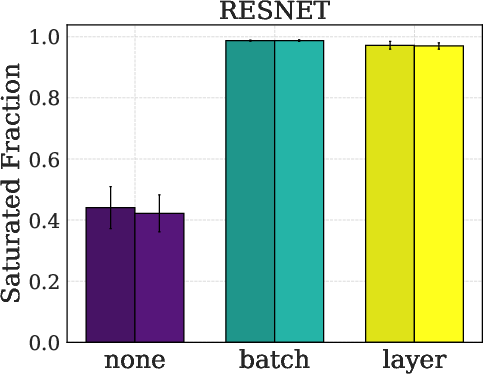

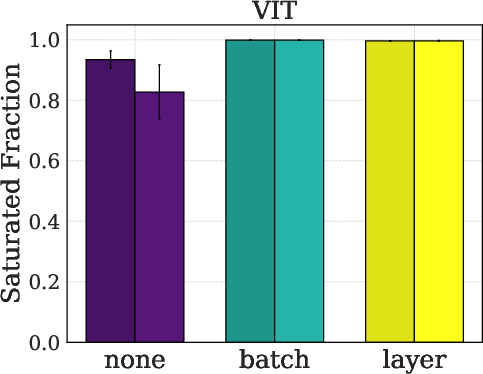

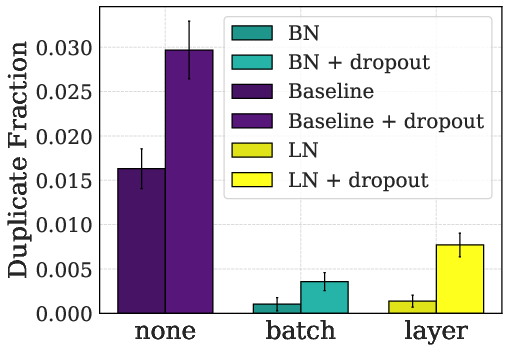

- It empirically demonstrates that normalization techniques reduce LoP by decreasing dead and duplicate activations across architectures like MLP, CNN, ResNet, and ViT.

- Recovery methods such as Noisy SGD and Continual Backpropagation help destabilize LoP manifolds, enhancing continual learning in non-stationary environments.

Mathematical Understanding of Loss of Plasticity

Introduction

The paper "Barriers for Learning in an Evolving World: Mathematical Understanding of Loss of Plasticity" (2510.00304) examines loss of plasticity (LoP), a critical failure mode of deep learning in non-stationary environments that require continual adaptation. Standard deep learning assumes stationarity and relies on random initialization for exploration. Both assumptions break down in dynamic settings such as continual learning, which needs stability to retain old knowledge and plasticity to acquire new information.

LoP is distinct from catastrophic forgetting. Catastrophic forgetting erases previously learned information, whereas LoP prevents the system from learning new tasks effectively. The paper analyzes LoP through dynamical systems theory, identifying invariant sub-manifolds of the parameter space that trap gradient dynamics. It explains the mechanisms behind these traps, such as frozen units and cloned-unit manifolds, which are inherent to the dynamics of the optimization landscape.

LoP Manifolds and Their Implications

LoP is characterized by gradient descent trajectories that become trapped within invariant sub-manifolds of the parameter space, severely limiting the capacity to learn new information. Two prominent mechanisms create these traps:

- Frozen Units: Units become inactive or saturated, so the derivative of the activation function is (near) zero and the unit's incoming parameters stop receiving gradient updates.

- Cloned Units: Redundant units that duplicate one another's activations define manifolds on which the duplicated units stay identical, so the redundancy persists.

The paper supports the existence of these sub-manifolds with both theoretical and empirical evidence, showing that once a trajectory enters one, standard gradient-based optimization cannot leave it because of geometric constraints.
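Both traps can be reproduced in a toy setting. The sketch below is an illustrative construction, not the paper's experimental setup: it assumes a one-hidden-layer ReLU regression network trained by full-batch gradient descent, with one unit frozen by a large negative bias and one unit made an exact clone of another (identical incoming and outgoing weights). All names, sizes, and hyperparameters are hypothetical.

```python
import numpy as np

def grads(W1, b1, w2, X, y):
    """Gradients of 0.5 * mean squared error for a one-hidden-layer ReLU net."""
    Z = X @ W1 + b1                        # pre-activations, shape (n, h)
    H = np.maximum(Z, 0.0)                 # ReLU activations
    err = H @ w2 - y                       # residuals, shape (n,)
    n = len(y)
    dZ = np.outer(err, w2) * (Z > 0) / n   # backprop through the ReLU
    return X.T @ dZ, dZ.sum(axis=0), H.T @ err / n

rng = np.random.default_rng(0)
X, y = rng.normal(size=(64, 5)), rng.normal(size=64)
W1, b1, w2 = rng.normal(size=(5, 4)), np.zeros(4), rng.normal(size=4)

b1[0] = -100.0          # unit 0: pre-activation is always negative (frozen)
W1[:, 2] = W1[:, 1]     # unit 2: exact clone of unit 1 ...
b1[2] = b1[1]
w2[2] = w2[1]           # ... including its outgoing weight

W1_init, b1_init, w2_init = W1.copy(), b1.copy(), w2.copy()
for _ in range(100):    # plain full-batch gradient descent (assumed lr = 0.01)
    gW1, gb1, gw2 = grads(W1, b1, w2, X, y)
    W1 -= 0.01 * gW1
    b1 -= 0.01 * gb1
    w2 -= 0.01 * gw2

# The frozen unit never received a nonzero gradient.
frozen_stuck = bool(np.array_equal(W1[:, 0], W1_init[:, 0])
                    and b1[0] == b1_init[0] and w2[0] == w2_init[0])
# The clones received identical gradients and are still clones.
clones_stuck = bool(np.allclose(W1[:, 1], W1[:, 2]) and np.isclose(w2[1], w2[2]))
# An ordinary unit, by contrast, did move.
free_moved = not np.allclose(W1[:, 3], W1_init[:, 3])
print(frozen_stuck, clones_stuck, free_moved)
```

In this toy case the frozen unit's parameters do not change at all, and the cloned pair moves but only together, which is the sense in which gradient descent stays on the manifold.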

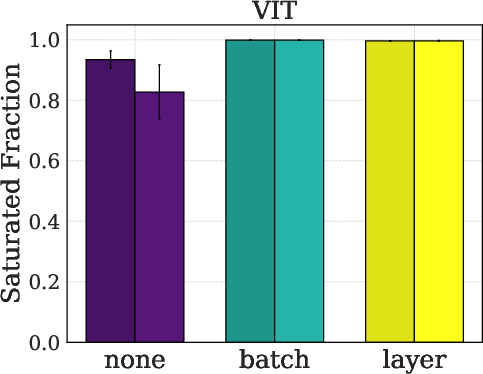

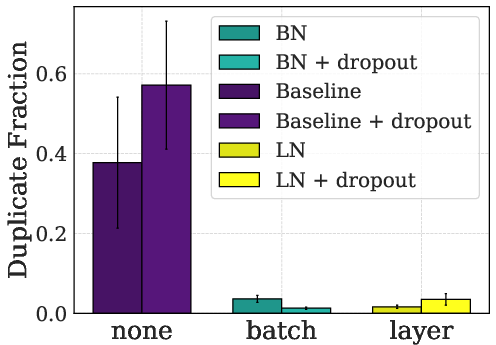

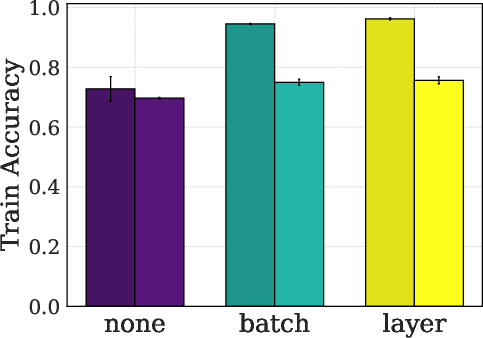

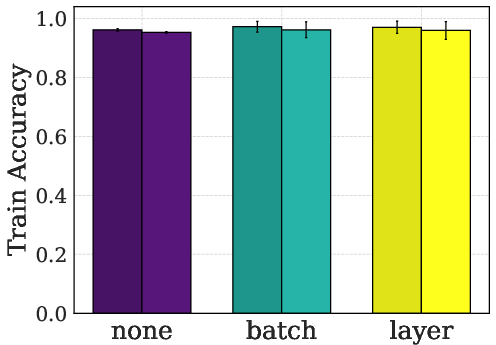

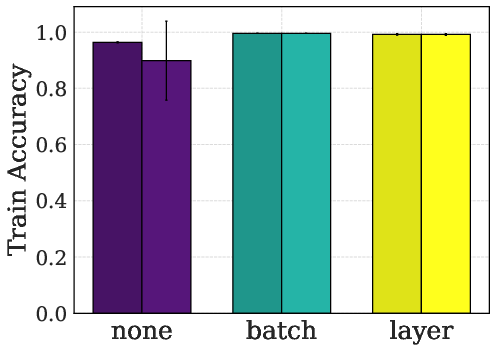

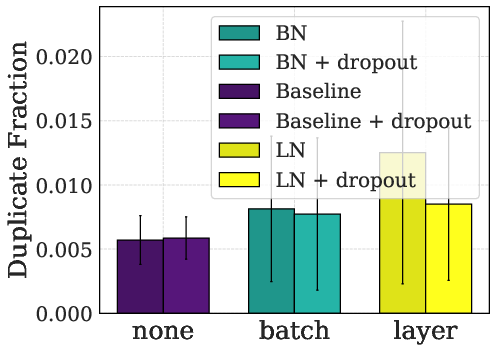

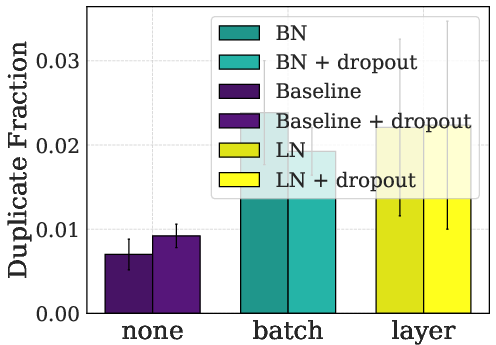

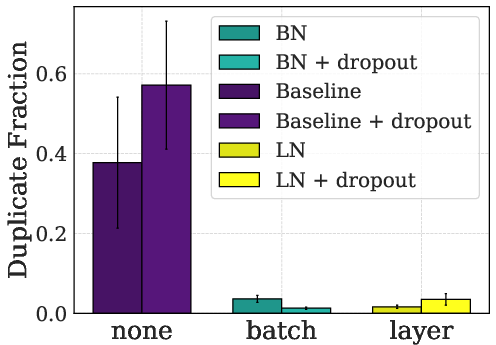

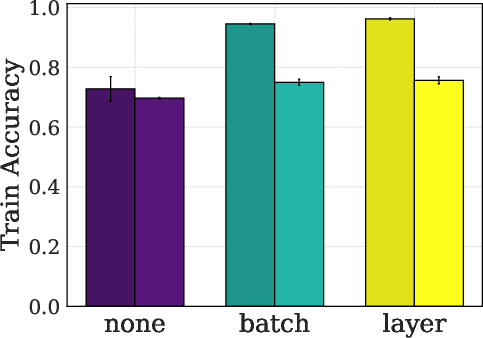

Figure 1: Causes and symptoms of Loss of Plasticity emerging during continual learning. The plots show (for MLP, CNN, ResNet, and ViT architectures, from left to right) an increase in the fraction of dead or duplicate units during training, coinciding with a decrease in training accuracy. These are key indicators of LoP.
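The two symptoms tracked in Figure 1 can be measured directly from a batch of hidden activations. The sketch below is one plausible way to do so and is not taken from the paper: it assumes a unit counts as dead or saturated when its output is constant across the batch, and as a duplicate when its correlation with an earlier live unit exceeds a threshold. The function name and both thresholds are hypothetical.

```python
import numpy as np

def lop_symptoms(H, dead_tol=1e-8, dup_corr=0.999):
    """Return (dead_fraction, duplicate_fraction) for activations H of shape
    (n_samples, n_units). Thresholds are illustrative assumptions."""
    n_units = H.shape[1]
    dead = H.std(axis=0) < dead_tol            # constant output over the batch
    alive = np.flatnonzero(~dead)
    dup = np.zeros(n_units, dtype=bool)
    if alive.size > 1:
        C = np.abs(np.corrcoef(H[:, alive], rowvar=False))
        # Column j of the strict upper triangle holds correlations with
        # earlier units i < j, so each cloned pair is counted once.
        dup[alive] = np.triu(C, k=1).max(axis=0) > dup_corr
    return float(dead.mean()), float(dup.mean())

rng = np.random.default_rng(0)
H = np.maximum(rng.normal(size=(200, 6)), 0.0)  # six ReLU units
H[:, 0] = 0.0                                   # unit 0 is dead
H[:, 2] = 2.0 * H[:, 1]                         # unit 2 duplicates unit 1 up to scale
dead_frac, dup_frac = lop_symptoms(H)
print(dead_frac, dup_frac)
```

Logging these two fractions alongside online accuracy during training would give curves of the kind the figure describes, under the stated definitions.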

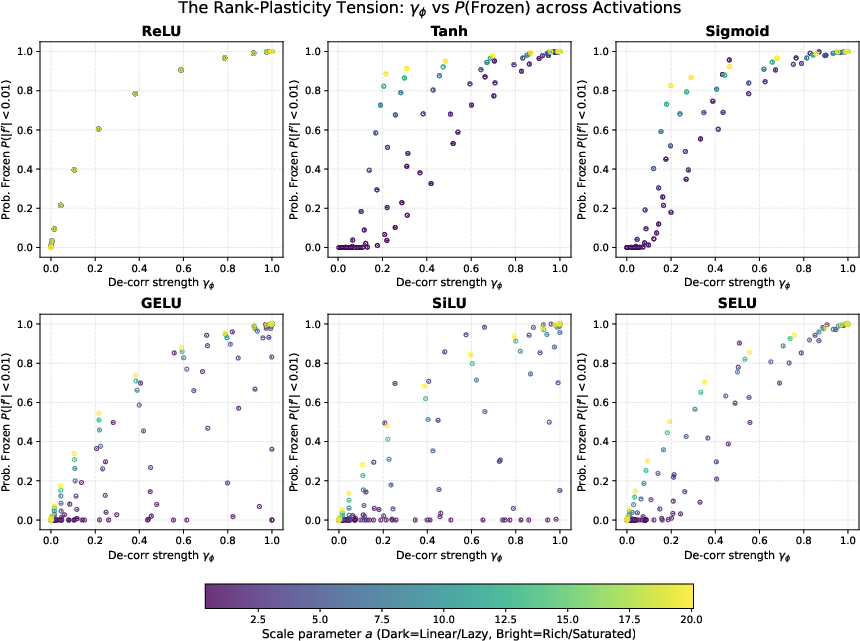

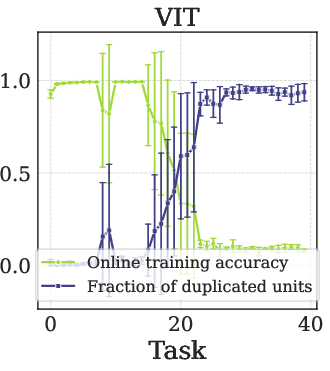

Rank-Plasticity Tension

The mechanisms that promote generalization in static settings inadvertently cause loss of plasticity in dynamic environments. Low-rank structures, which benefit generalization, actively contribute to the formation of LoP manifolds. The tension arises from rank dynamics: a configuration optimized to generalize on the current tasks has fewer effective degrees of freedom, which hinders later adaptation.
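The link between redundancy and lost degrees of freedom can be made concrete with a rank measurement. The sketch below uses the entropy-based effective rank (the exponential of the Shannon entropy of the normalized singular values); this choice of measure is an assumption for illustration and may differ from the quantity the paper analyzes.

```python
import numpy as np

def effective_rank(H, eps=1e-12):
    """Entropy-based effective rank of a feature matrix H (n_samples, n_units)."""
    s = np.linalg.svd(H, compute_uv=False)
    p = s / (s.sum() + eps)        # normalize singular values to a distribution
    p = p[p > eps]                 # drop numerically zero directions
    return float(np.exp(-(p * np.log(p)).sum()))

rng = np.random.default_rng(0)
H_diverse = rng.normal(size=(256, 8))     # eight independent features
H_cloned = H_diverse.copy()
H_cloned[:, 4:] = H_cloned[:, :4]         # last four units clone the first four

rank_diverse = effective_rank(H_diverse)
rank_cloned = effective_rank(H_cloned)
print(rank_diverse, rank_cloned)
```

With half of the units cloned, the representation spans at most four directions, so the effective rank cannot exceed four even though the layer still has eight units.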

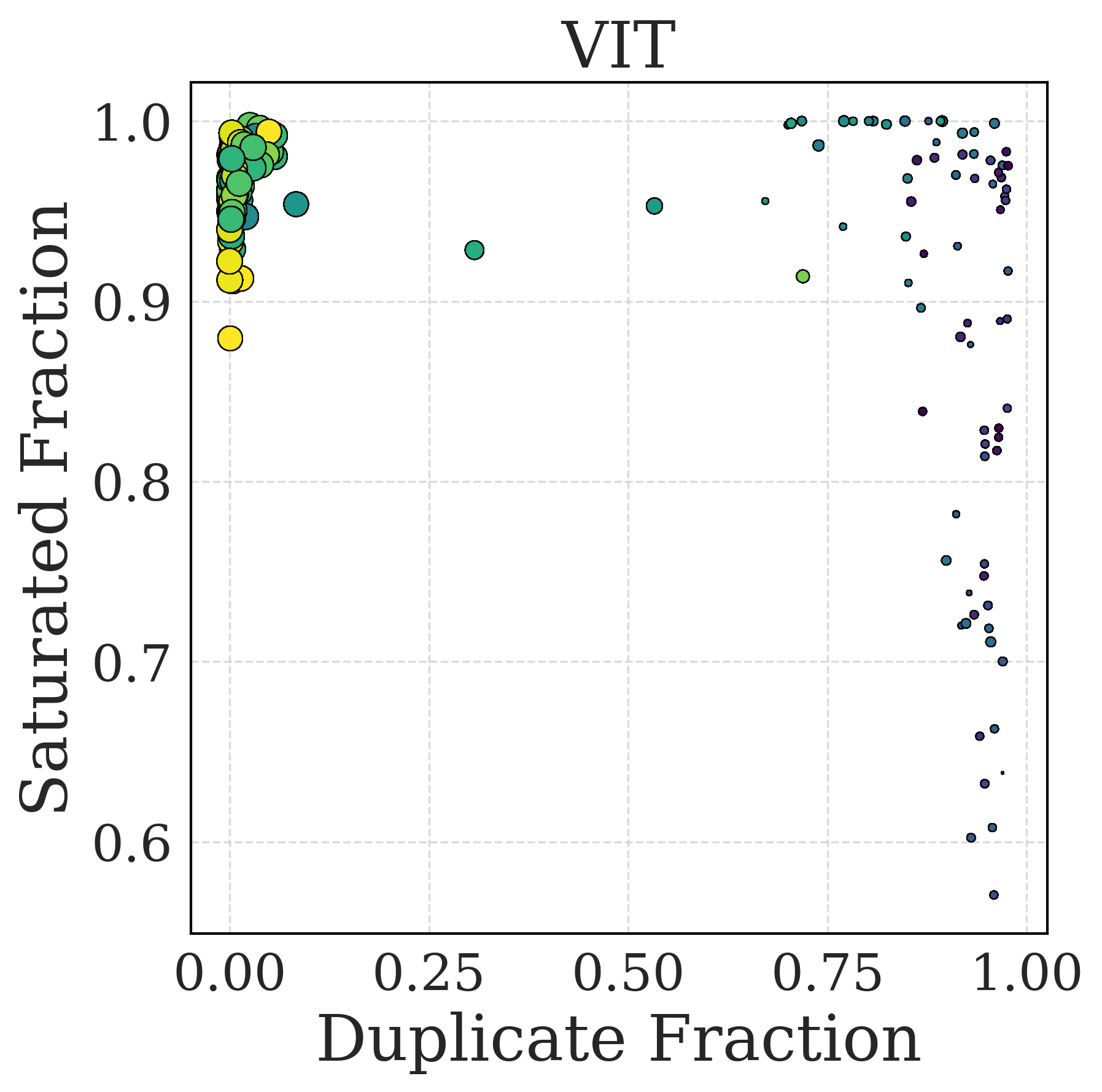

Figure 2: The rank-plasticity tradeoff for general activations. Empirical analysis of all activations γϕ vs. the probability that the unit is frozen, as we vary the pre-activation mean and bias.

Methods for Mitigation and Recovery

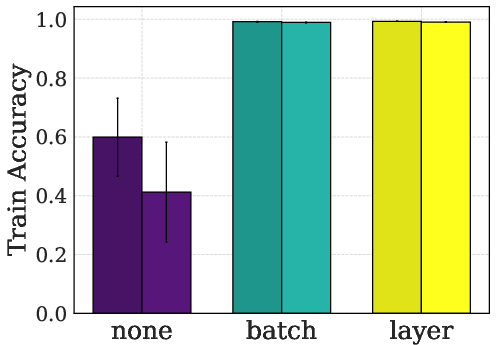

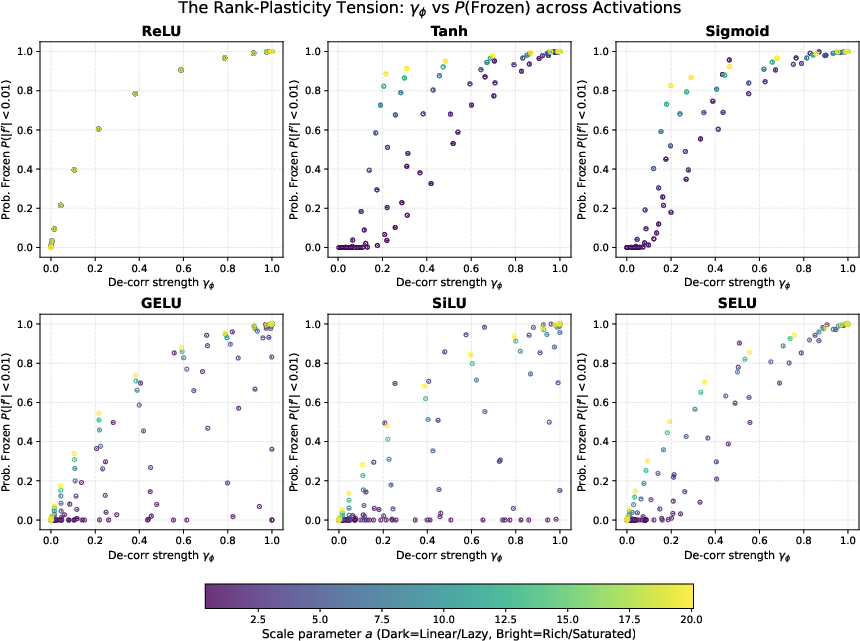

The paper shows that architectural interventions such as normalization stabilize the parameter space, reducing the conditions under which LoP manifolds form. Empirically, normalization lowers the incidence of frozen and duplicate units, thereby maintaining plasticity.
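A small numerical sketch shows why normalization can counteract dead units. It assumes a batch-norm-style standardization (per-unit zero mean and unit variance over the batch, without learned scale or shift) applied before a ReLU, and hypothetical biases that have drifted strongly negative. The paper's normalization layers and architectures may differ from this simplification.

```python
import numpy as np

def dead_fraction(Z):
    """Fraction of ReLU units whose pre-activation is never positive on the batch."""
    return float(np.mean((Z > 0).sum(axis=0) == 0))

def batch_standardize(Z, eps=1e-5):
    """Per-unit standardization over the batch (batch norm without affine terms)."""
    return (Z - Z.mean(axis=0)) / np.sqrt(Z.var(axis=0) + eps)

rng = np.random.default_rng(0)
X = rng.normal(size=(512, 32))
W = rng.normal(size=(32, 64)) / np.sqrt(32)
b = rng.normal(loc=-6.0, scale=2.0, size=64)   # assumed: biases drifted negative

Z = X @ W + b
dead_before = dead_fraction(Z)
dead_after = dead_fraction(batch_standardize(Z))
print(dead_before, dead_after)
```

Because standardization re-centers every unit on the current batch, each unit with nonzero variance is active on at least some inputs, so it keeps receiving gradient signal.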

When LoP has already set in, the paper proposes recovery strategies such as injecting noise into the gradient updates (e.g., Noisy SGD) and Continual Backpropagation (CBP). Both destabilize the LoP manifolds, allowing the network to escape them and regain its capacity to learn.
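The effect of gradient noise on a cloned-unit manifold can be illustrated in a toy setting. The sketch below assumes isotropic Gaussian noise added to full-batch gradients of a one-hidden-layer ReLU network; the function names, noise scale, and learning rate are hypothetical, and CBP is not shown.

```python
import numpy as np

def grads(W1, w2, X, y):
    """Gradients of 0.5 * mean squared error for a bias-free ReLU net."""
    Z = X @ W1
    H = np.maximum(Z, 0.0)
    err = H @ w2 - y
    n = len(y)
    dZ = np.outer(err, w2) * (Z > 0) / n
    return X.T @ dZ, H.T @ err / n

def clone_gap(noise_std, steps=200, lr=0.01, seed=0):
    """Train from a cloned initialization; return the final distance
    between the incoming weights of the two cloned units."""
    rng = np.random.default_rng(seed)
    X, y = rng.normal(size=(64, 5)), rng.normal(size=64)
    W1, w2 = rng.normal(size=(5, 4)), rng.normal(size=4)
    W1[:, 1] = W1[:, 0]      # start on the cloned-unit manifold
    w2[1] = w2[0]
    for _ in range(steps):
        gW1, gw2 = grads(W1, w2, X, y)
        # Noisy SGD (assumed form): add Gaussian noise to every gradient entry.
        gW1 = gW1 + noise_std * rng.normal(size=gW1.shape)
        gw2 = gw2 + noise_std * rng.normal(size=gw2.shape)
        W1 -= lr * gW1
        w2 -= lr * gw2
    return float(np.linalg.norm(W1[:, 0] - W1[:, 1]))

gap_plain = clone_gap(noise_std=0.0)   # noise-free: clones stay together
gap_noisy = clone_gap(noise_std=0.1)   # noisy: the symmetry is broken
print(gap_plain, gap_noisy)
```

Without noise the two units receive the same update at every step, so the gap stays at numerical zero; with noise each unit is perturbed independently, which moves the trajectory off the manifold. Whether the units then keep diverging depends on the stability of the manifold, which this sketch does not analyze.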

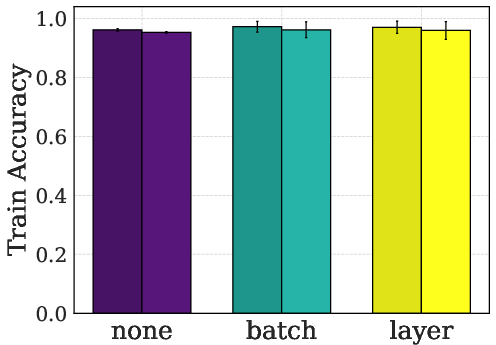

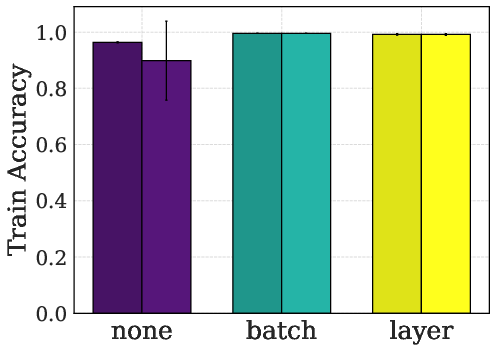

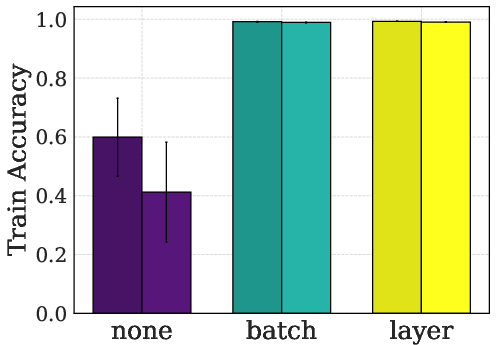

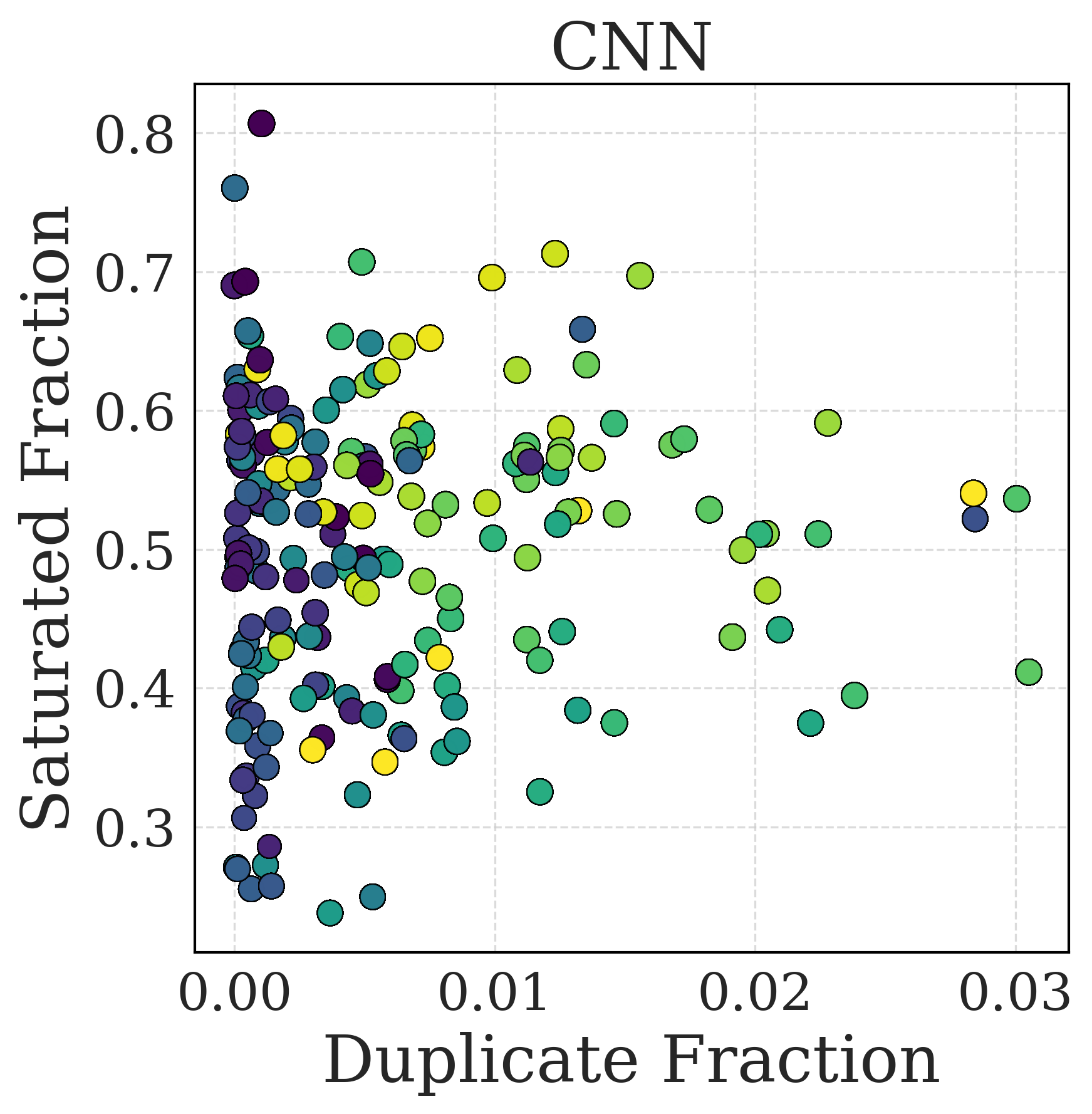

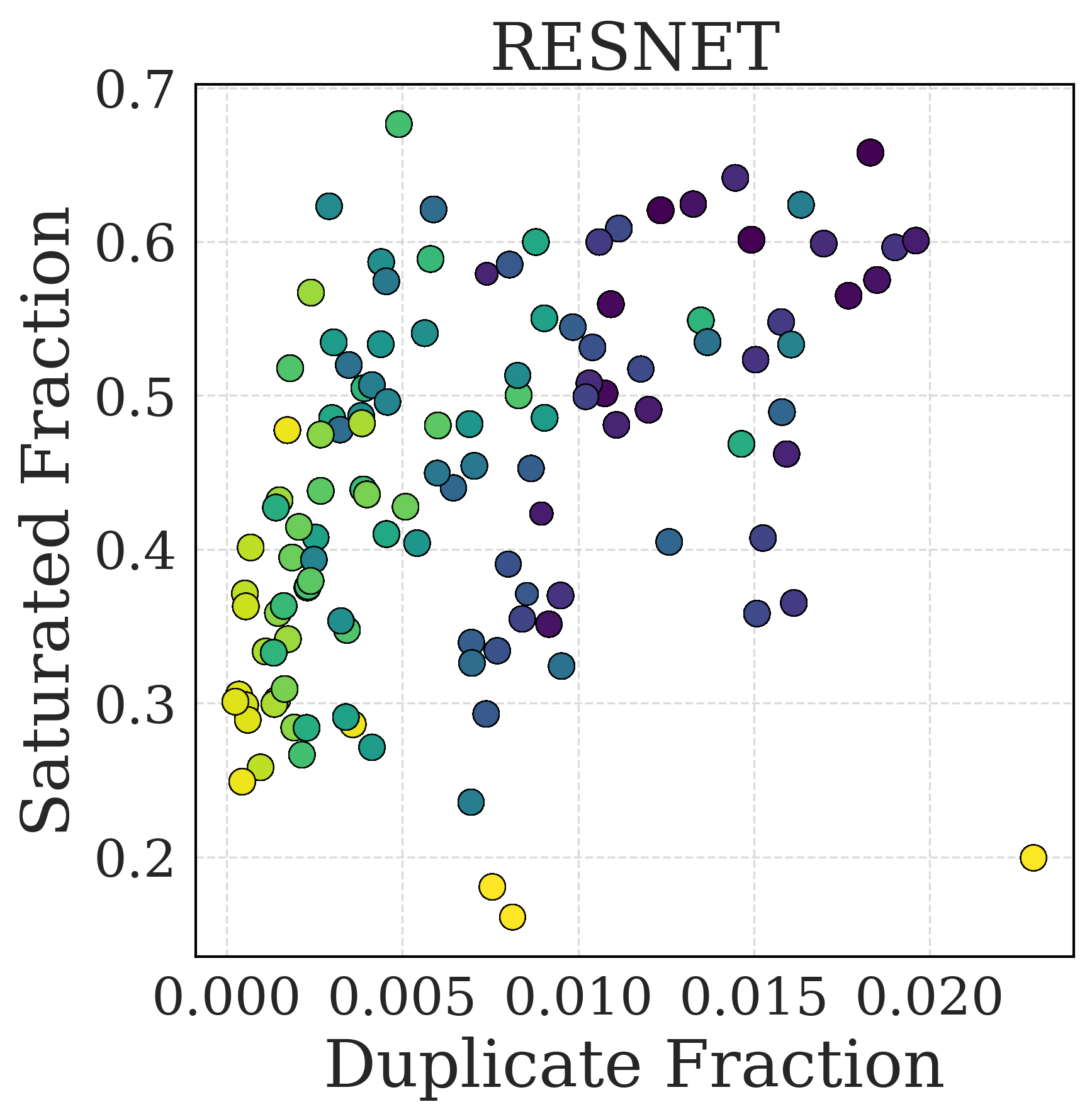

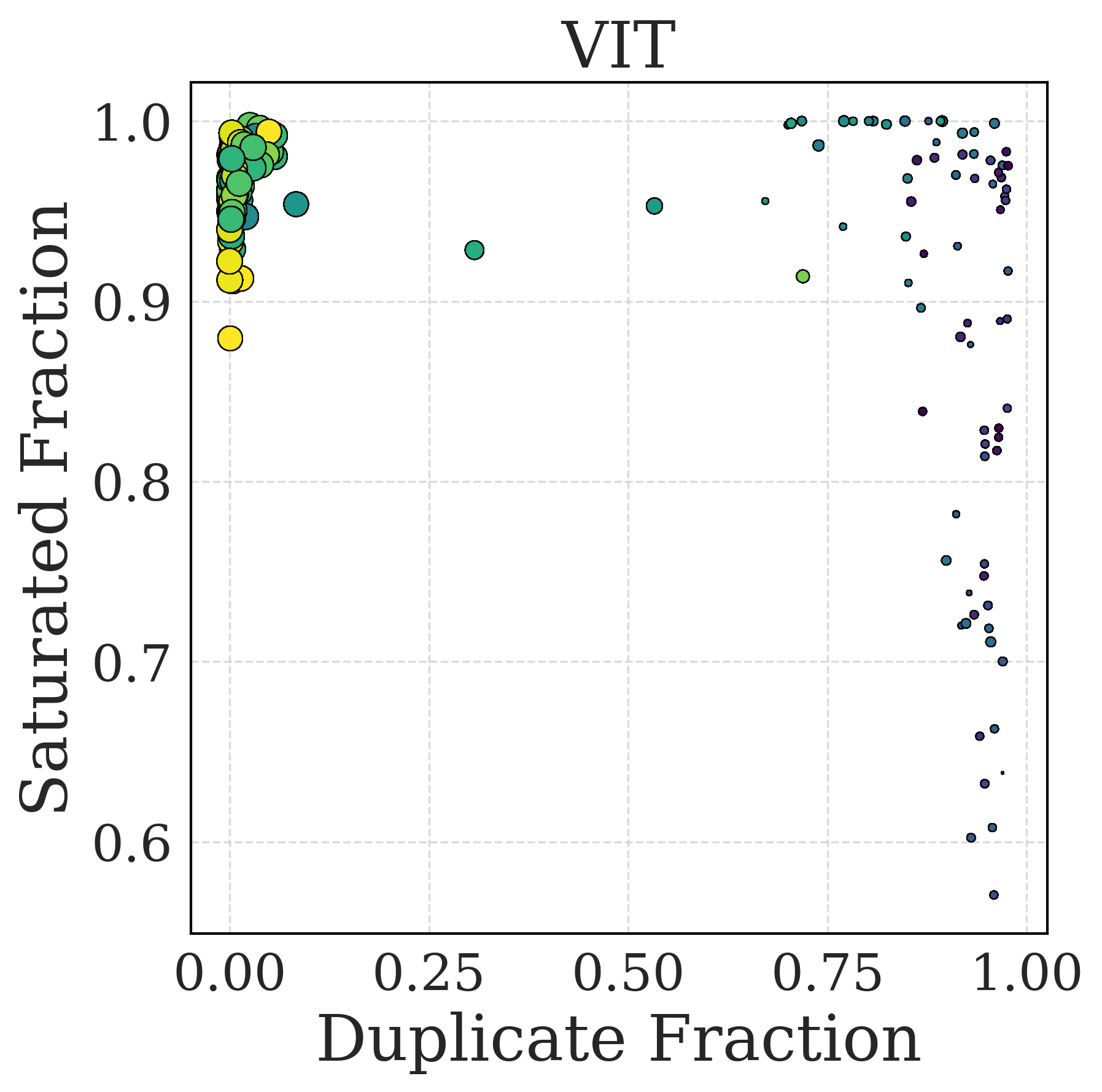

Figure 3: Effect of normalization on the number of dead/saturated units (top row), duplicated units (middle row), and training accuracy (bottom row) across different architectures. Normalization reduces both dead/saturated and duplicated units. The training accuracy shown is the average online accuracy over the entire training run. These results highlight the role of normalization in mitigating LoP symptoms.

Conclusion

The paper advances the theoretical understanding of LoP through a dynamical systems framework, identifying geometric structures that persistently hinder new learning. It outlines architectural strategies for mitigation and empirically validates recovery techniques, paving the way for AI systems that can adapt continuously in non-stationary environments. The insights deepen the understanding of the stability-plasticity dilemma and emphasize the need for mechanisms that maintain the feature diversity essential to lifelong-learning systems. Future work should explore the stability of non-linear manifolds and further develop practical approaches to ensure robust long-term adaptability.

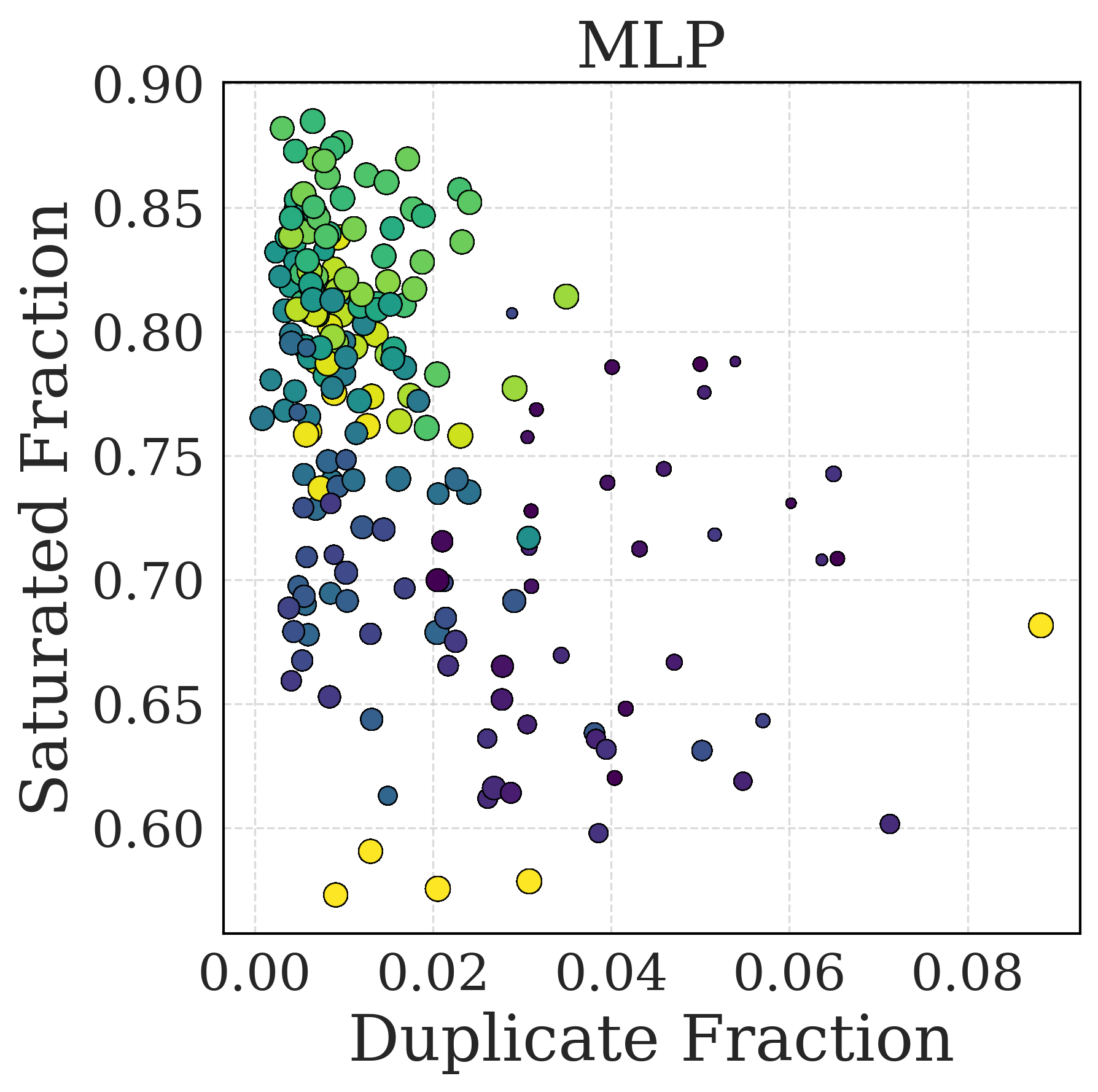

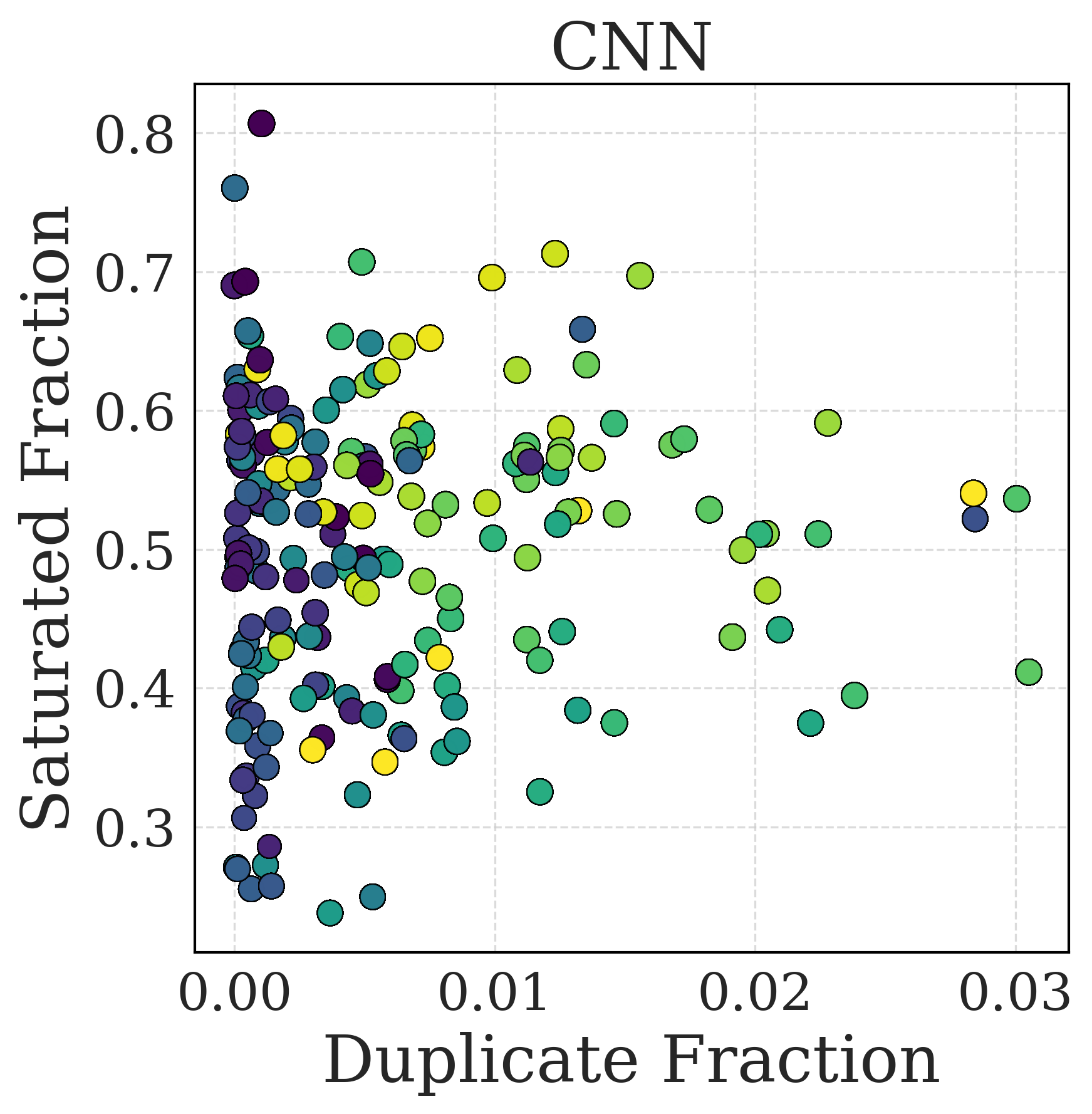

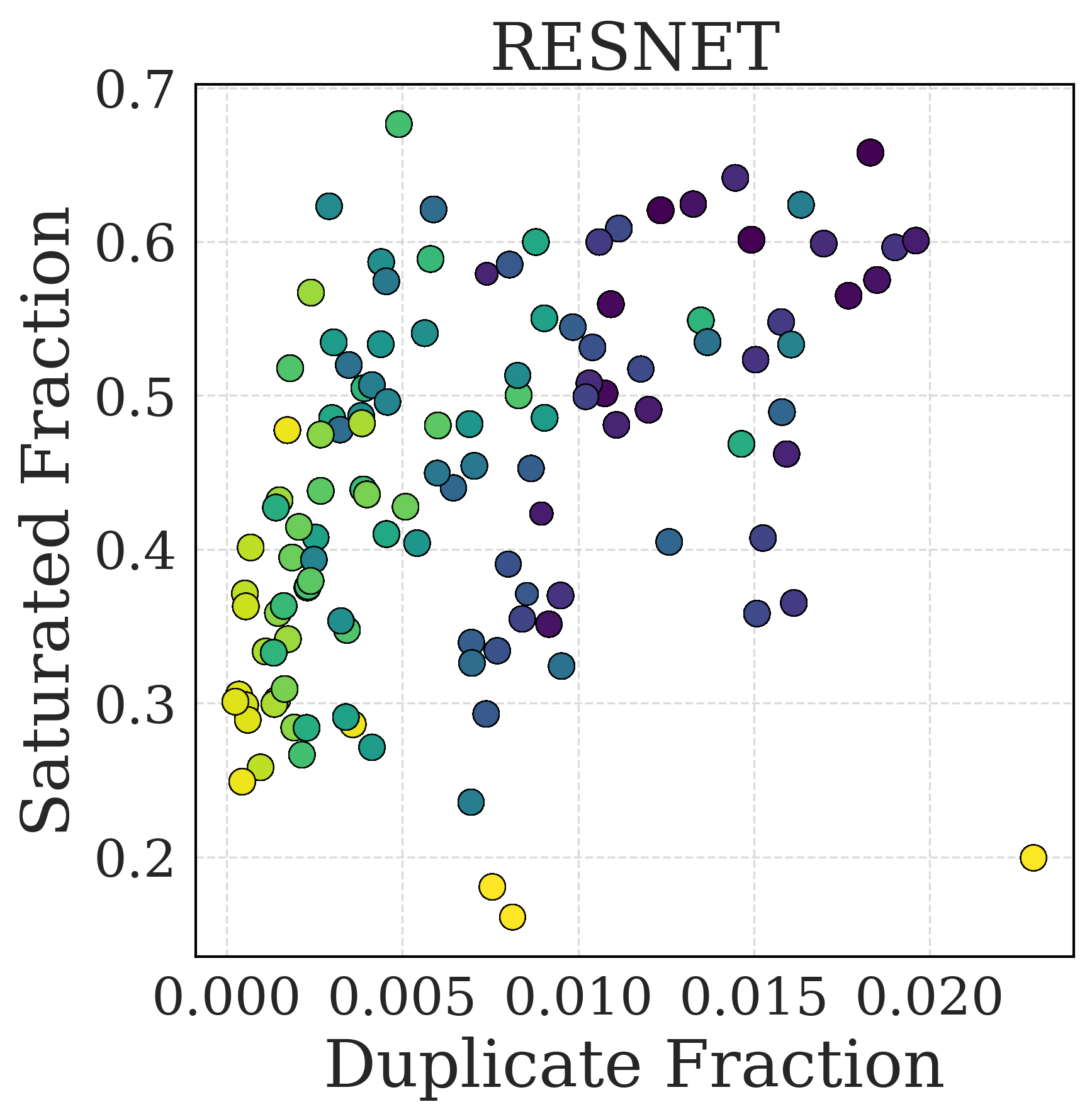

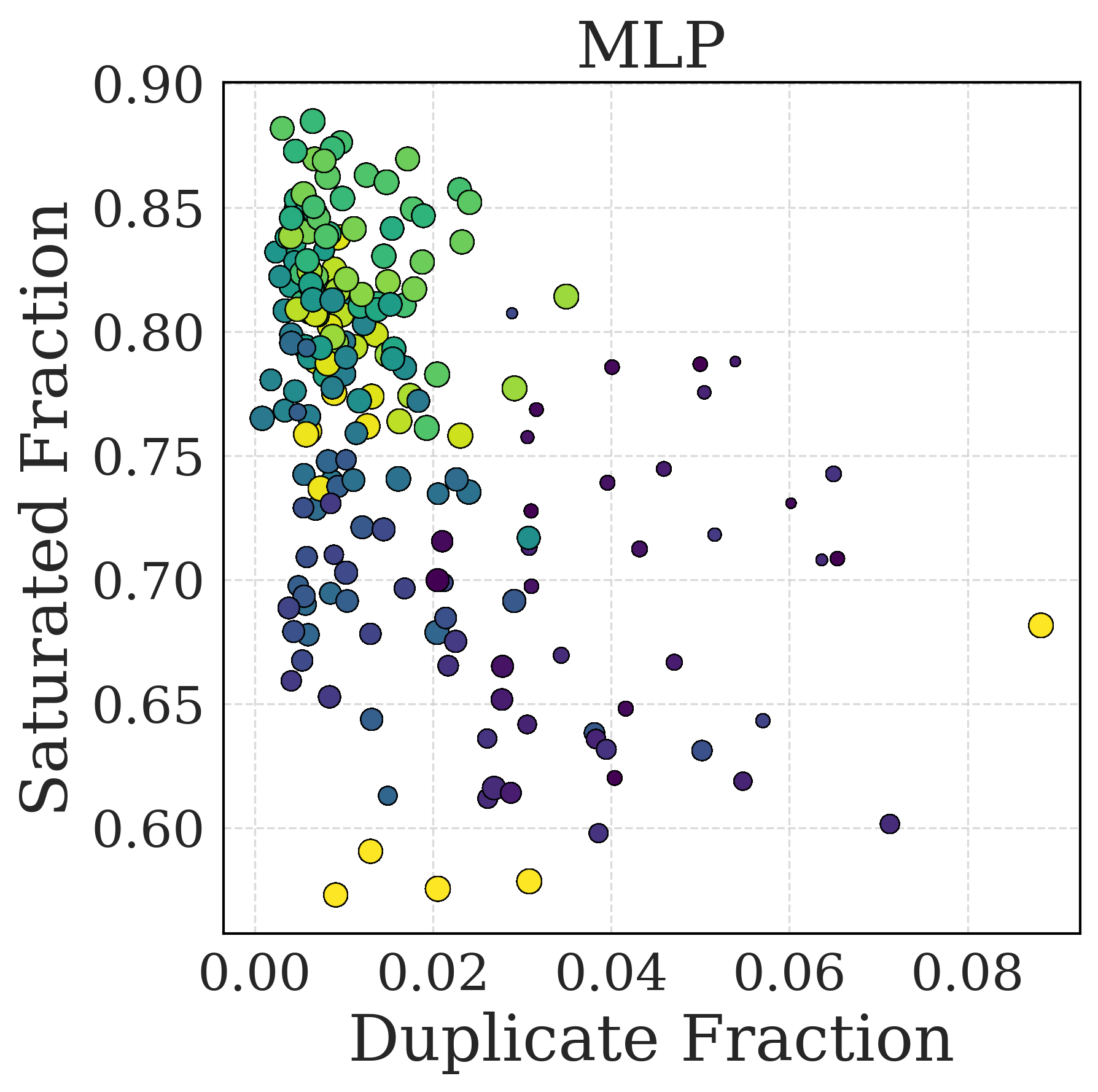

Figure 4: Evolution of duplicate/dead unit fractions and training accuracy. Colors correspond to training steps (lighter is earlier) and point size corresponds to training accuracy (bigger is higher). This figure illustrates the correlation between the increase in LoP symptoms (duplicate/dead units) and training dynamics.