- The paper presents a threat model covering nine primary threats to generative AI agents, including risks it identifies as previously undocumented.

- The methodology integrates literature review, expert consultation, theoretical analysis, and case studies to validate the threat framework.

- The SHIELD framework offers six strategic mitigation measures to protect enterprise-grade GenAI agents from cognitive, temporal, and operational exploits.

Securing Agentic AI: A Comprehensive Threat Model and Mitigation Framework for Generative AI Agents

In recent years, generative AI (GenAI) agents have increasingly been integrated into enterprise environments, offering advanced capabilities such as autonomous reasoning, tool interaction, and persistent memory access. These capabilities differentiate GenAI agents from traditional AI systems and pose novel security challenges that existing security frameworks do not fully address. This paper introduces an advanced threat model tailored specifically for GenAI agents, highlighting the need for specialized security measures to mitigate these emerging threats.

Introduction to GenAI Agents and Security Challenges

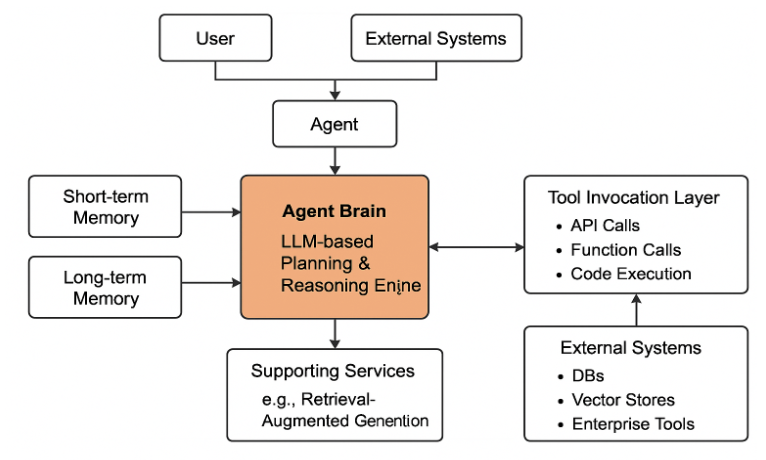

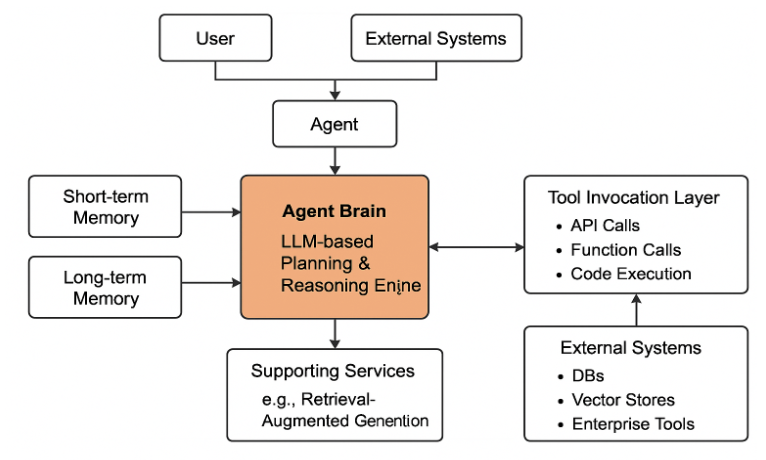

GenAI agents extend beyond the capabilities of conventional systems by combining large language models (LLMs) with planning, memory, and tool integration. This combination allows them to interact dynamically with systems, execute tasks autonomously, and make decisions with limited human oversight. As a result, the attack surface for these agents is broader and more complex than that of traditional software or isolated AI components.

The identified threats to GenAI agents fall into five key domains: cognitive architecture vulnerabilities, temporal persistence threats, operational execution vulnerabilities, trust boundary violations, and governance circumvention. The autonomy of these agents introduces risks such as delayed exploitability, cross-system propagation, and hard-to-detect goal misalignments. To address these challenges, the paper proposes two frameworks: the Advanced Threat Framework for Autonomous AI Agents (ATFAA), which catalogs the threats, and SHIELD, which offers practical mitigation strategies.

Figure 1: General architecture of an Agentic system.

Methodology for Developing the Threat Model

To develop this comprehensive threat model, the authors conducted a systematic literature review, theoretical threat analysis, expert consultation, and case study analysis. This multi-faceted approach ensured a thorough understanding of both documented and potential threats specific to GenAI agents.

- Systematic Literature Review: The authors reviewed security research focusing on agentic AI systems, identifying emerging threat classes specifically targeting agent components beyond LLM infrastructure.

- Theoretical Threat Modeling: A conceptual framework was developed to categorize threats into core domains focusing on the distinct vulnerabilities of agent systems.

- Expert Consultation: Feedback was gathered from security researchers and AI practitioners to validate and refine the framework and threat list.

- Case Study Analysis: The authors analyzed documented security incidents and constructed hypothetical case studies to ground the framework in practical applications.

Advanced Threat Framework for Autonomous AI Agents (ATFAA)

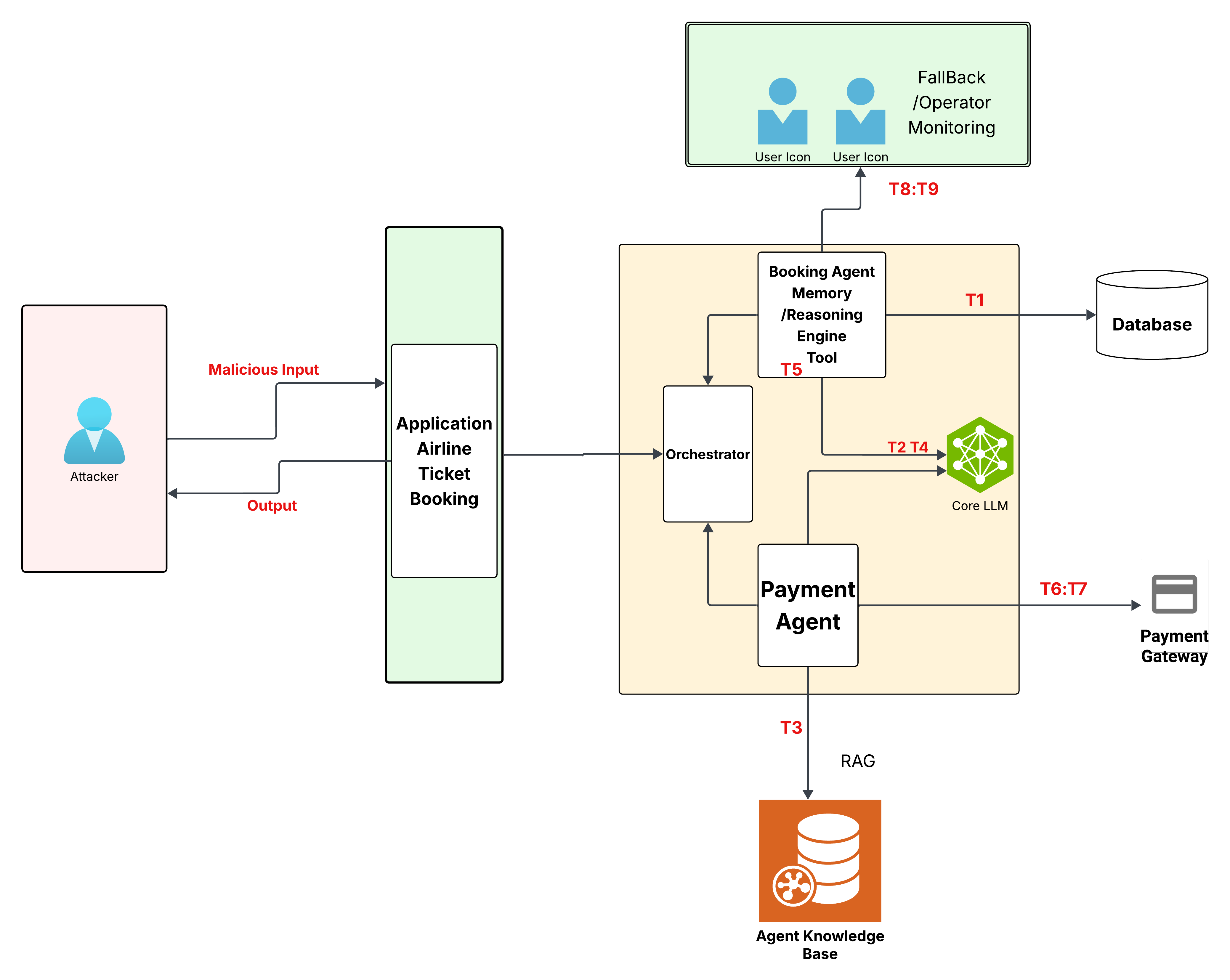

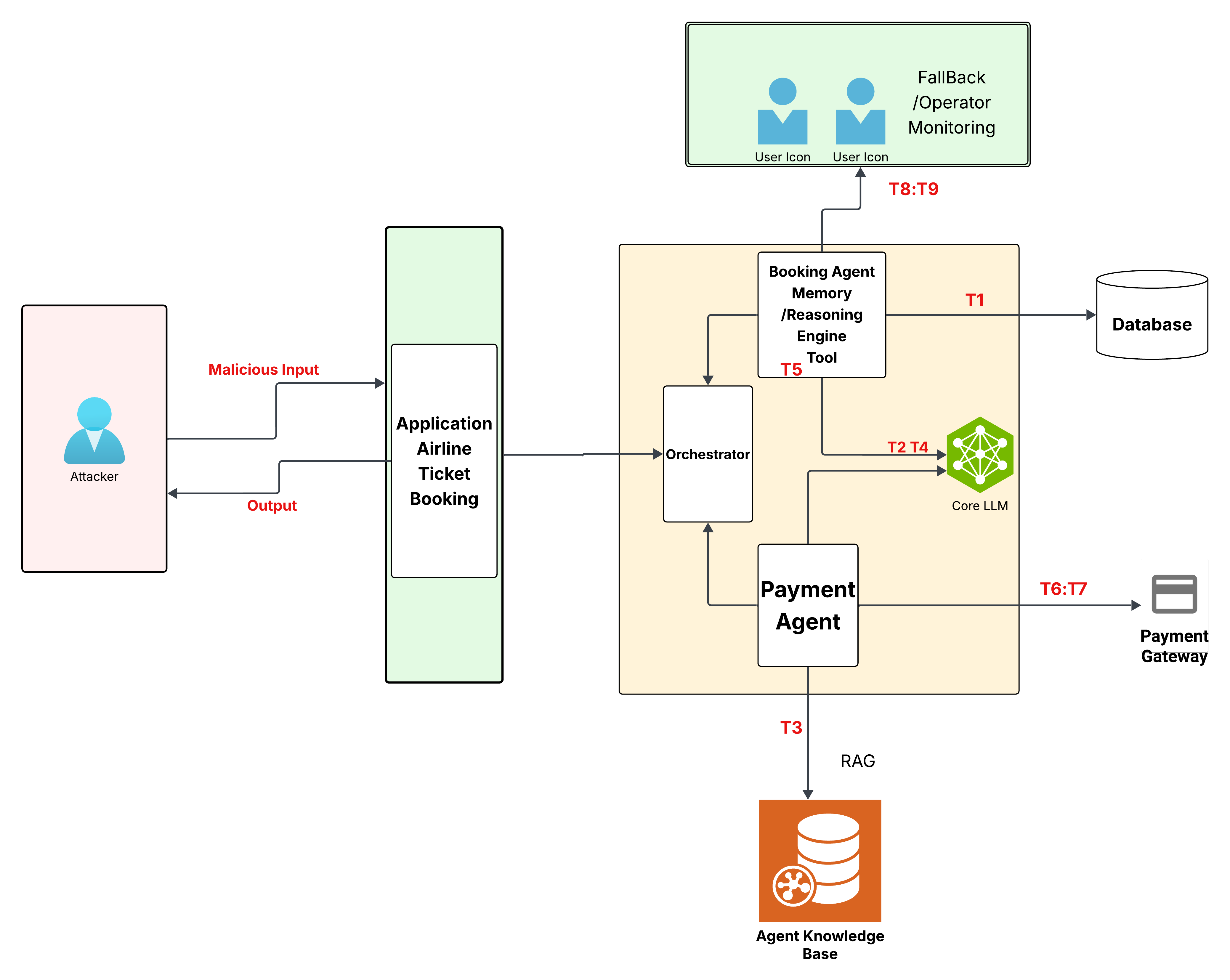

The ATFAA introduces nine primary threats that target GenAI agent deployments, each mapped to both the traditional STRIDE model and the framework's own domains. The threats fall under the five domains below, each covering vulnerabilities specific to agentic capabilities:

- Cognitive Architecture Vulnerabilities: Manipulation of agent reasoning pathways and objective function corruption.

- Temporal Persistence Threats: Poisoning of knowledge and memory systems leading to belief loops.

- Operational Execution Vulnerabilities: Unauthorized action execution and computational resource manipulation.

- Trust Boundary Violations: Identity spoofing and exploitation of trust mechanisms.

- Governance Circumvention: Oversight saturation attacks and evasion of governance mechanisms.
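The domain grouping above can be captured in a small lookup structure, which is handy when tagging findings in a risk register. The following is a minimal sketch: the `Domain` and `Threat` types and the `by_domain` helper are hypothetical names, and the threat labels paraphrase the bullets above rather than reproduce the paper's official threat identifiers or its exact nine-threat breakdown.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Domain(Enum):
    COGNITIVE_ARCHITECTURE = "Cognitive Architecture Vulnerabilities"
    TEMPORAL_PERSISTENCE = "Temporal Persistence Threats"
    OPERATIONAL_EXECUTION = "Operational Execution Vulnerabilities"
    TRUST_BOUNDARY = "Trust Boundary Violations"
    GOVERNANCE_CIRCUMVENTION = "Governance Circumvention"


@dataclass(frozen=True)
class Threat:
    name: str
    domain: Domain


# Labels paraphrase the summary bullets; they are illustrative, not official IDs.
THREATS = [
    Threat("Reasoning pathway manipulation", Domain.COGNITIVE_ARCHITECTURE),
    Threat("Objective function corruption", Domain.COGNITIVE_ARCHITECTURE),
    Threat("Knowledge and memory poisoning (belief loops)", Domain.TEMPORAL_PERSISTENCE),
    Threat("Unauthorized action execution", Domain.OPERATIONAL_EXECUTION),
    Threat("Computational resource manipulation", Domain.OPERATIONAL_EXECUTION),
    Threat("Identity spoofing and trust exploitation", Domain.TRUST_BOUNDARY),
    Threat("Oversight saturation", Domain.GOVERNANCE_CIRCUMVENTION),
    Threat("Governance evasion", Domain.GOVERNANCE_CIRCUMVENTION),
]


def by_domain(threats: List[Threat]) -> Dict[Domain, List[str]]:
    """Group threat names under their domain, keeping every domain as a key."""
    grouped: Dict[Domain, List[str]] = {domain: [] for domain in Domain}
    for threat in threats:
        grouped[threat.domain].append(threat.name)
    return grouped
```

Calling `by_domain(THREATS)` returns one entry per domain, so a domain with no recorded threats still shows up as an empty list instead of silently disappearing.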

Figure 2: Agentic System Threats, Trust Boundary, Assets.

The SHIELD Mitigation Framework

To counteract the threats identified in ATFAA, the SHIELD framework offers six defensive strategies whose initials spell its name: Segmentation, Heuristic monitoring, Integrity verification, Escalation control, Logging immutability, and Decentralized oversight. Each strategy targets specific GenAI agent threats, and together they are intended to cover the full threat set and reduce enterprise risk.
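To make two of these strategies concrete, the sketch below combines a per-agent tool allowlist (one simple reading of segmentation) with a human-approval gate for privileged actions (one simple reading of escalation control). It is an illustration under stated assumptions, not the paper's design: `ActionRequest`, `authorize`, and the `approver` callback are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Set


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str      # which agent is asking
    tool: str          # which tool it wants to invoke
    privileged: bool   # whether the action needs escalation


def authorize(
    request: ActionRequest,
    allowed_tools: Dict[str, Set[str]],
    approver: Optional[Callable[[ActionRequest], bool]] = None,
) -> bool:
    """Return True only if the request passes both checks."""
    # Segmentation: an agent may only call tools on its own allowlist.
    if request.tool not in allowed_tools.get(request.agent_id, set()):
        return False
    # Escalation control: privileged actions need an explicit approval.
    # With no approver configured, the gate fails closed.
    if request.privileged:
        return approver is not None and bool(approver(request))
    return True
```

For example, with `allowed_tools = {"billing-agent": {"read_invoice", "issue_refund"}}`, a non-privileged `read_invoice` request is allowed, a request for an unlisted tool is denied, and a privileged `issue_refund` request is denied unless an `approver` callback returns `True`. Failing closed when no approver is present is a deliberate choice here, since a missing reviewer should not silently widen an agent's privileges.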

Implementing the SHIELD strategies requires balancing protection against performance, usability, and cost. The paper highlights challenges such as the computational expense of heuristic monitoring, the complexity of achieving logging immutability, and the difficulty of maintaining effective segmentation in dynamic environments.
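One common way to approximate logging immutability in software is a hash chain, where each entry commits to the hash of the previous one so that any later modification is detectable. The sketch below illustrates that general technique and is not a mechanism taken from the paper; `append_entry`, `verify_chain`, and the record fields are hypothetical. Note that a hash chain provides tamper evidence only, so true immutability would still require write-once storage or external anchoring of the latest hash.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: Dict[str, Any]) -> str:
    """Hash the record together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, Any]], record: Dict[str, Any]) -> None:
    """Append a record, linking it to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Recompute every link; any edited, removed, or reordered entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

In use, an agent runtime would call `append_entry` for each action it takes, and an auditor would run `verify_chain` over the stored log. Editing the `record` of any earlier entry changes its recomputed hash, so verification fails from that point onward.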

Implications and Conclusion

The security challenges associated with GenAI agents are unique and demand specialized measures not covered by existing frameworks. The ATFAA and SHIELD frameworks provide a structured approach to addressing these challenges, underscoring the importance of developing security strategies tailored to the operational behavior of autonomous agents. The paper concludes by advocating for a defense-in-depth approach, zero-trust architectures, continuous monitoring, and robust governance to mitigate the unique risks introduced by GenAI agents as they become more prevalent in enterprise settings. Future work should focus on empirical validation of the identified threats and the development of security-by-design patterns for agentic systems.