- The paper introduces a secure communication framework using Google's A2A protocol for agentic AI systems.

- It employs threat modeling via the MAESTRO framework to identify and mitigate risks such as Agent Card spoofing, task replay, and server impersonation.

- The study recommends digital signature verification, nonce/timestamp checks, mutual TLS, and DNSSEC to harden A2A implementations.

Building a Secure Agentic AI Application Leveraging A2A Protocol

Introduction

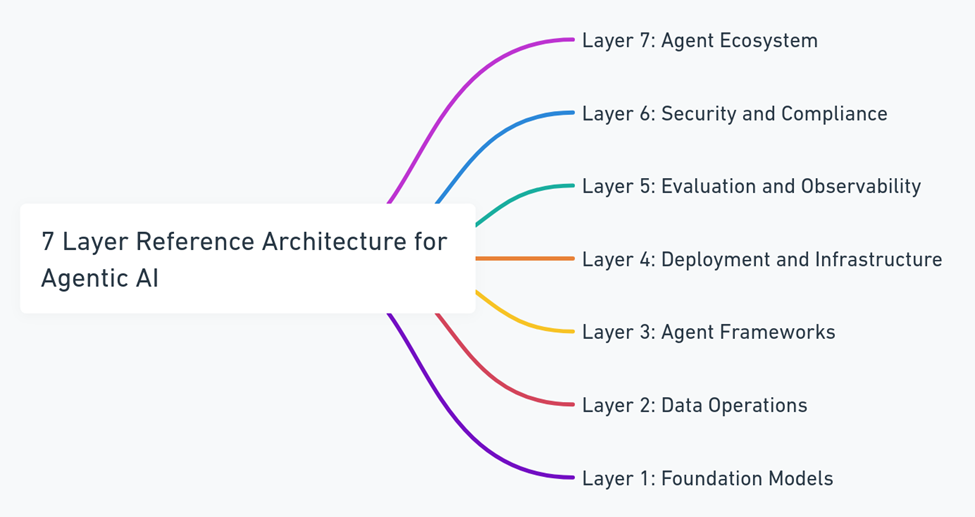

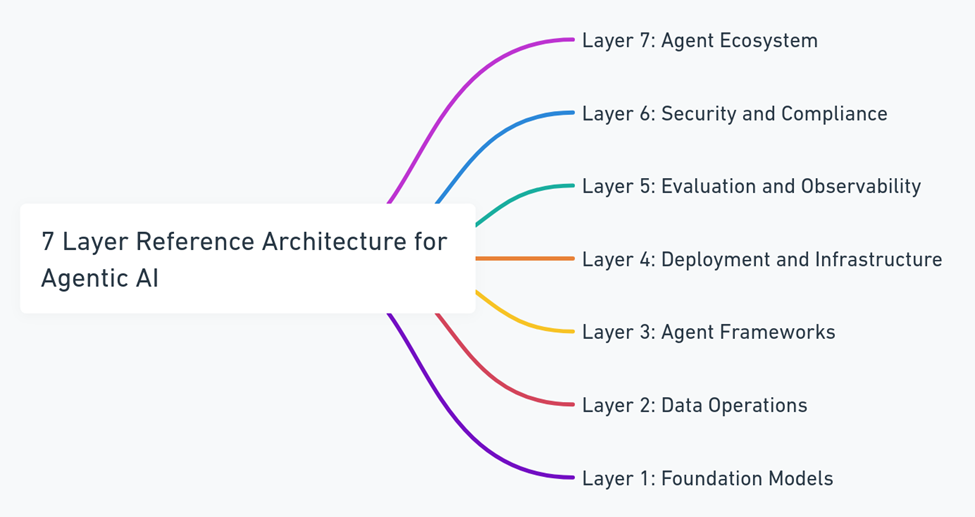

Agentic AI systems, which consist of intelligent agents collaborating autonomously, demand robust communication protocols to ensure secure and reliable interactions. Google's Agent-to-Agent (A2A) protocol addresses these needs by providing a framework for secure communication among autonomous agents. This paper analyzes the A2A protocol in depth, focusing on its security architecture and on threat modeling with the MAESTRO framework, and proposes implementation best practices for building resilient, secure agentic AI systems.

The Rise of Agentic AI and A2A Protocol

Agentic AI systems represent a shift from isolated, task-specific models toward dynamic, multi-agent ecosystems. These systems are characterized by intelligent agents capable of independent decision-making, initiating actions, and collaborating with other agents and humans. As interactions across organizational and technological boundaries increase, secure interoperability becomes a critical requirement. Google's A2A protocol offers a structured, declarative framework for secure communication between agents, supporting agent identity, authentication, task exchange, and auditability.

Figure 1: MAESTRO Architecture (7 Layers).

A2A Protocol Architecture

Protocol Overview

A2A facilitates communication between client agents, which formulate tasks, and remote agents, which execute them. The protocol's design prioritizes agent independence, compliance with widely adopted web standards, and built-in security measures. Key components include Agent Cards for discoverability, JSON-RPC 2.0 over HTTP(S) for request/response messaging, and Server-Sent Events (SSE) for streaming.
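
Every A2A exchange described above travels as a JSON-RPC 2.0 message. The sketch below builds only the envelope, using the Python standard library; the method name `message/send` and the message shape in the usage line are assumptions for illustration (method names have changed across A2A specification versions), so check them against the spec version you target.

```python
import json
import uuid
from typing import Any, Dict, Optional


def build_a2a_request(method: str, params: Dict[str, Any], request_id: Optional[str] = None) -> str:
    """Serialize a JSON-RPC 2.0 request envelope for an A2A call.

    `method` and `params` are supplied by the caller; their exact names and
    schema come from the A2A specification, not from this sketch.
    """
    envelope = {
        "jsonrpc": "2.0",                       # literal required by JSON-RPC 2.0
        "id": request_id or str(uuid.uuid4()),  # lets the client match the response
        "method": method,
        "params": params,
    }
    return json.dumps(envelope)
```

A hypothetical call might look like `build_a2a_request("message/send", {"message": {"role": "user", "parts": [{"text": "Summarize Q3 sales"}]}})`; the resulting string is POSTed to the remote agent's endpoint.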

Discoverability Mechanism

Agent Cards contain structured metadata that describe an agent's capabilities, authentication methods, and interface details. Because these cards are hosted at standardized, well-known locations, a client agent can look up a remote agent's capabilities before sending it any task, much as web crawlers look for robots.txt at a fixed path on every site.
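
Discovery can be sketched as two steps: derive the card's URL from the agent's origin, then parse and sanity-check the JSON. The path `/.well-known/agent.json` is the location used in early versions of the A2A specification (later versions may use a different name), and the required-field list is a hypothetical minimum chosen for illustration. Fetching is left out so the example runs offline.

```python
import json
from urllib.parse import urljoin, urlparse

# Well-known path from early A2A spec versions; verify against the version you target.
AGENT_CARD_PATH = "/.well-known/agent.json"

# Hypothetical minimal field set, for illustration only.
REQUIRED_FIELDS = ("name", "url", "capabilities", "skills")


def agent_card_url(base: str) -> str:
    """Build the discovery URL for an agent hosted at `base`."""
    parsed = urlparse(base)
    if parsed.scheme != "https":
        # A card fetched over plain HTTP can be swapped in transit (Agent Card spoofing).
        raise ValueError("Agent Cards should be fetched over HTTPS")
    return urljoin(f"{parsed.scheme}://{parsed.netloc}", AGENT_CARD_PATH)


def parse_agent_card(raw: str) -> dict:
    """Parse a fetched card and check that the expected fields are present."""
    card = json.loads(raw)
    missing = [field for field in REQUIRED_FIELDS if field not in card]
    if missing:
        raise ValueError(f"Agent Card missing fields: {missing}")
    return card
```

Presence checks like these catch malformed cards but not forged ones; authenticity needs the signature verification discussed under mitigation.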

Threat Modeling with MAESTRO

Utilizing the MAESTRO framework, the paper identifies security risks specific to A2A protocol deployments, such as Agent Card spoofing, task replay, and server impersonation. MAESTRO's layered approach extends traditional security models to address AI-specific threats like autonomous decision-making risks and adversarial machine learning.

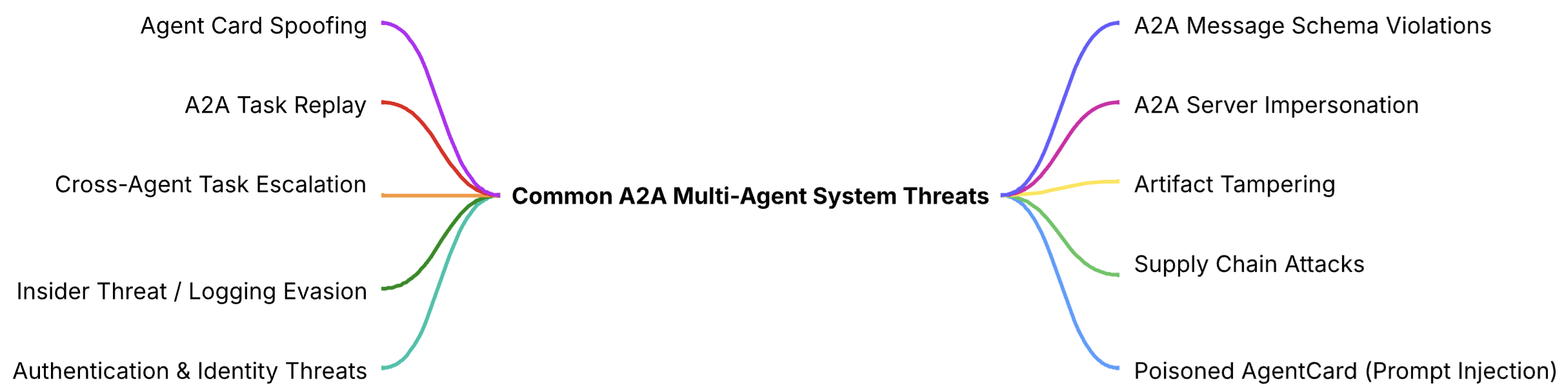

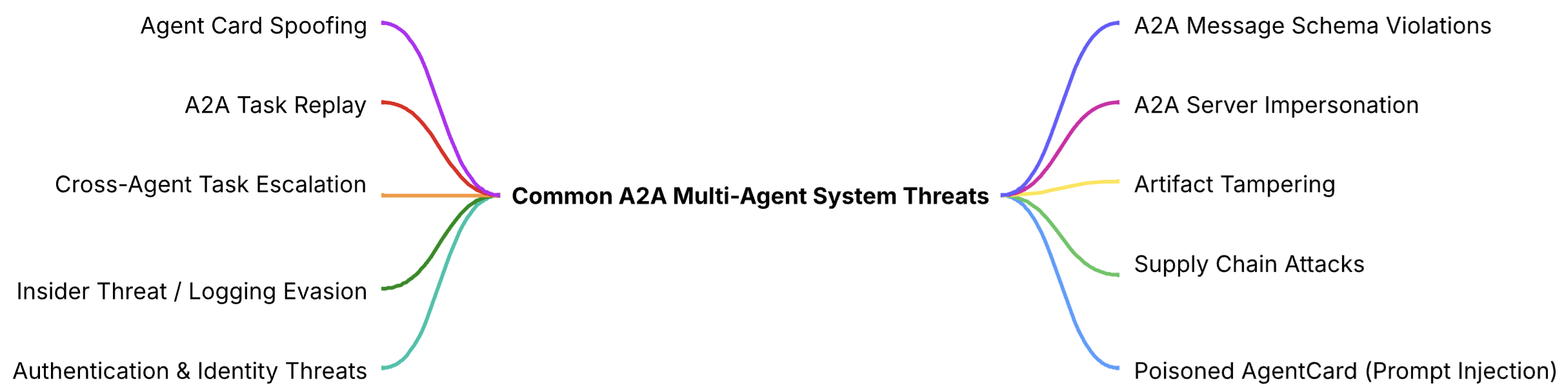

Figure 2: List of Common A2A Multi-Agent System Threats Identified by MAESTRO Threat Modeling Methodology.

Common Threats

The MAESTRO framework identifies several prevalent threats:

- Agent Card Spoofing: Fake Agent Cards can lead to data exfiltration and task hijacking.

- Task Replay: Captured requests can be replayed for unauthorized task execution.

- Server Impersonation: DNS spoofing can redirect traffic to fraudulent servers.
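
The task-replay threat and the nonce/timestamp defense the paper recommends for it can be sketched as a small in-memory guard. This assumes each request carries a client-generated nonce and a Unix timestamp that are themselves covered by a signature or MAC (otherwise an attacker could simply refresh the timestamp). The class name `ReplayGuard` and the 300-second window are hypothetical choices, not values from the paper.

```python
import time
from typing import Dict, Optional


class ReplayGuard:
    """Reject requests whose nonce was already seen or whose timestamp is outside the window."""

    def __init__(self, window_seconds: float = 300.0) -> None:
        self.window = window_seconds
        self._seen: Dict[str, float] = {}  # nonce -> timestamp it arrived with

    def _evict(self, now: float) -> None:
        # Nonces older than the window can be forgotten: the timestamp check
        # below already rejects any request carrying such an old timestamp.
        cutoff = now - self.window
        for nonce in [n for n, ts in self._seen.items() if ts < cutoff]:
            del self._seen[nonce]

    def accept(self, nonce: str, timestamp: float, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        if abs(now - timestamp) > self.window:
            return False  # stale or far-future timestamp: possible replay or clock skew
        self._evict(now)
        if nonce in self._seen:
            return False  # nonce reused inside the window: replay
        self._seen[nonce] = timestamp
        return True
```

A server running several instances would need a shared nonce store (for example, a cache with per-key expiry) rather than a per-process dictionary.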

Additional Security Considerations

Continuous monitoring, incident response planning, and secure coding practices are vital for maintaining a robust security posture in A2A deployments.

Secure Implementation Strategies

Mitigation Techniques

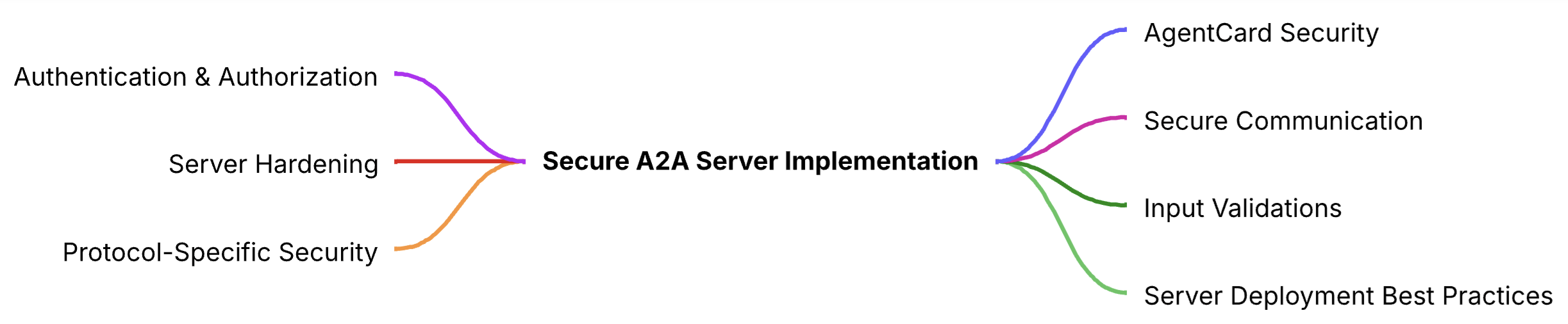

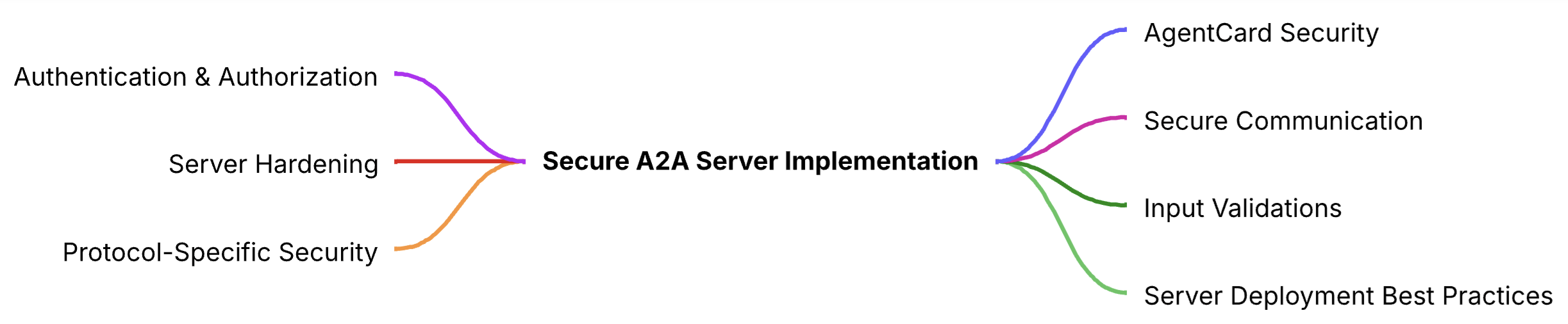

To counter identified threats, the paper recommends multiple strategies:

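- Digital signature verification for Agent Cards, to detect forged or tampered cards

- Nonce and timestamp verification to prevent replay attacks

- Mutual TLS (mTLS) to authenticate both client and server, and DNSSEC to protect name resolution against spoofing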
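
The Agent Card check can be sketched as: serialize the card deterministically, compute a tag over those bytes, and compare in constant time. To stay within the Python standard library, this sketch swaps in HMAC-SHA256, a shared-key message authentication code, for a true digital signature; a real deployment would use an asymmetric scheme (for example, Ed25519 or a JWS signature) so that verifiers hold only the publisher's public key. The function names are hypothetical.

```python
import hashlib
import hmac
import json


def canonicalize(card: dict) -> bytes:
    # Deterministic serialization so signer and verifier process identical bytes.
    # Sorted keys and compact separators are a simplification; a standardized
    # scheme such as the JSON Canonicalization Scheme (RFC 8785) is more robust.
    return json.dumps(card, sort_keys=True, separators=(",", ":"), ensure_ascii=False).encode("utf-8")


def sign_card(card: dict, key: bytes) -> str:
    # HMAC-SHA256 stand-in for a digital signature (see lead-in).
    return hmac.new(key, canonicalize(card), hashlib.sha256).hexdigest()


def verify_card(card: dict, tag: str, key: bytes) -> bool:
    expected = sign_card(card, key)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, tag)
```

Canonicalization is what makes key order irrelevant: two JSON documents with the same fields in a different order verify against the same tag, while any change to a value (such as a redirected `url`) does not.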

Secure Server Implementation

Figure 3: Best Practices for a Secure A2A Server.

The paper provides detailed guidelines for deploying secure A2A servers, including secure communication protocols, rigorous input validation, robust error handling and logging, and server hardening techniques.
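
The input-validation and error-handling guidance can be sketched for a JSON-RPC endpoint: cap the body size, reject malformed JSON, allow only known methods, and answer with the standard JSON-RPC 2.0 error codes while logging each rejection. The method allow-list, the 64 KiB limit, and the requirement that `params` be an object are assumptions for illustration.

```python
import json
import logging
from typing import Any, Dict, Optional, Tuple

logger = logging.getLogger("a2a.server")

# Standard JSON-RPC 2.0 error codes.
PARSE_ERROR = -32700
INVALID_REQUEST = -32600
METHOD_NOT_FOUND = -32601
INVALID_PARAMS = -32602

ALLOWED_METHODS = {"message/send", "tasks/get"}  # hypothetical allow-list
MAX_BODY_BYTES = 64 * 1024                       # illustrative size limit


def error(req_id: Any, code: int, message: str) -> Dict[str, Any]:
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def validate_request(body: bytes) -> Tuple[Optional[Dict[str, Any]], Optional[Dict[str, Any]]]:
    """Return (request, None) if the body is acceptable, else (None, error_response)."""
    if len(body) > MAX_BODY_BYTES:
        logger.warning("rejected oversized body (%d bytes)", len(body))
        return None, error(None, INVALID_REQUEST, "Request too large")
    try:
        req = json.loads(body)
    except (json.JSONDecodeError, UnicodeDecodeError):
        logger.warning("rejected unparseable body")
        return None, error(None, PARSE_ERROR, "Parse error")
    if not isinstance(req, dict) or req.get("jsonrpc") != "2.0" or not isinstance(req.get("method"), str):
        logger.warning("rejected malformed JSON-RPC envelope")
        return None, error(None, INVALID_REQUEST, "Invalid Request")
    if req["method"] not in ALLOWED_METHODS:
        logger.warning("rejected unknown method %r", req["method"])
        return None, error(req.get("id"), METHOD_NOT_FOUND, "Method not found")
    if not isinstance(req.get("params"), dict):
        return None, error(req.get("id"), INVALID_PARAMS, "Invalid params")
    return req, None
```

Error messages stay generic so a rejected caller learns nothing about server internals; the detail goes to the server log instead.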

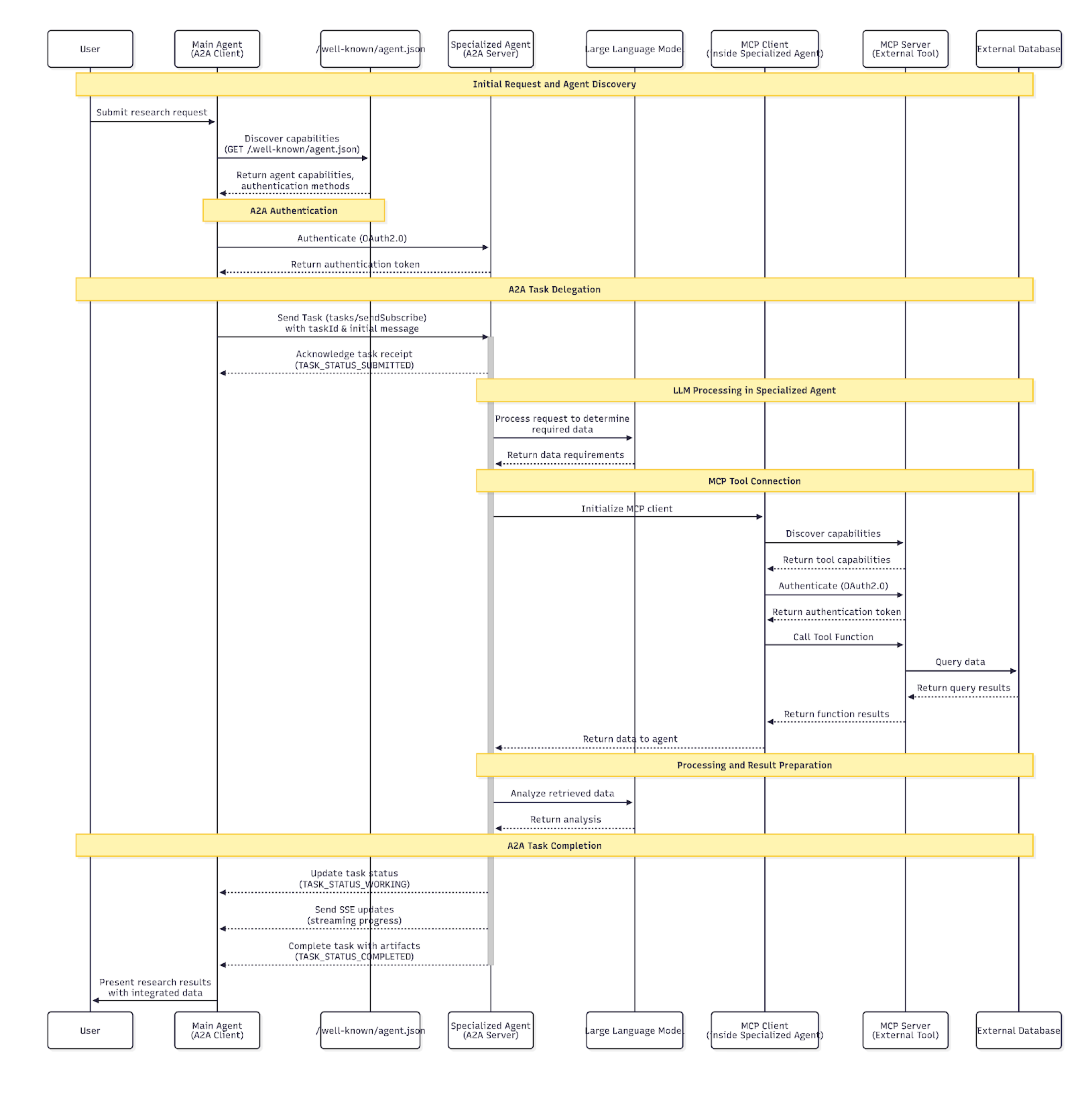

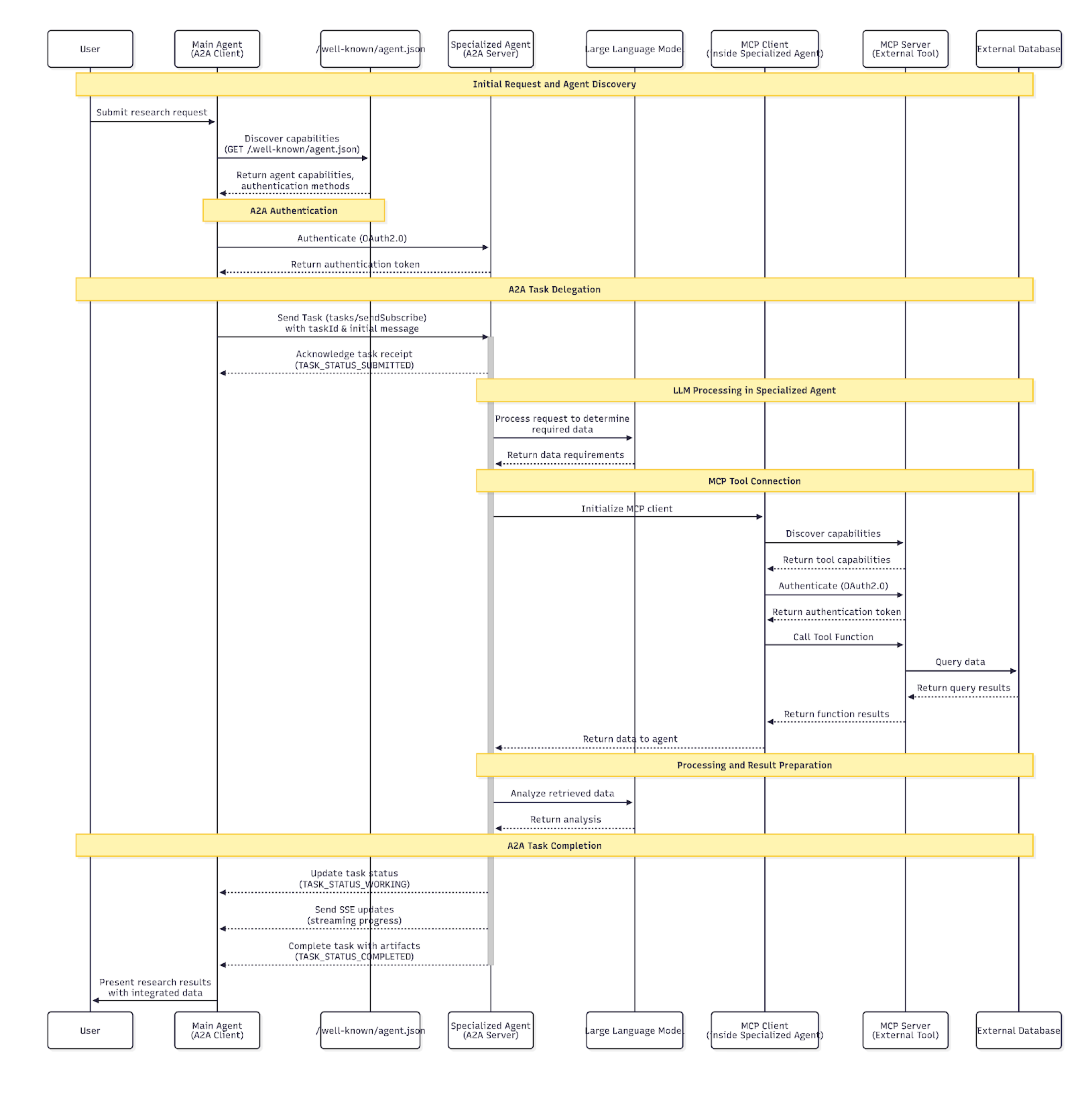

Synergy between A2A and MCP

Google's A2A and Anthropic's Model Context Protocol (MCP) can be used together to extend agent capabilities. A2A handles coordination between agents, while MCP gives an individual agent direct access to tools and data sources. Combined, they support modular, flexible workflows in distributed systems: agents delegate work to one another over A2A and reach the tools and data they need over MCP.
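
The division of labor can be sketched from the remote agent's side: a task arrives over A2A, and the agent fulfils it by calling a tool over MCP. The MCP message follows MCP's `tools/call` request shape and reads the `content` list of the result; the tool name `lookup_sales`, the function names, and the injected `send_to_mcp` transport (a stand-in for a real MCP client session) are hypothetical.

```python
from typing import Any, Callable, Dict


def make_mcp_tool_call(req_id: int, tool: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    # MCP tool invocation: a JSON-RPC 2.0 request naming the tool and its arguments.
    return {
        "jsonrpc": "2.0",
        "id": req_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }


def handle_a2a_task(task_text: str, send_to_mcp: Callable[[Dict[str, Any]], Dict[str, Any]]) -> str:
    """Remote-agent side: an A2A task arrives and is fulfilled through an MCP tool."""
    mcp_request = make_mcp_tool_call(1, "lookup_sales", {"query": task_text})  # hypothetical tool
    mcp_response = send_to_mcp(mcp_request)
    # Tool results carry a list of content items; keep only the text items.
    texts = [item["text"] for item in mcp_response["result"]["content"] if item.get("type") == "text"]
    return "\n".join(texts)
```

Injecting the transport keeps the A2A-facing handler independent of how the MCP server is reached, which is the modularity the combined design aims for.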

Figure 4: End-to-End Agent Collaboration Using A2A and MCP.

Conclusion

The A2A protocol forms a foundation for secure multi-agent systems, addressing the growing need for structured, interoperable communication protocols in agentic AI. By integrating threat modeling with MAESTRO, the paper identifies significant security challenges and proposes enhancements and best practices for robust real-world implementation. Future developments should focus on adapting Zero Trust principles for agentic AI, advancing authorization standards, and ensuring resilience against sophisticated threats, laying the groundwork for secure and trusted agent collaborations.