- The paper introduces the Conseca framework that dynamically generates contextual security policies to adapt AI agent security measures for every task.

- It employs a deterministic enforcement approach using language models to prevent issues like prompt injections and adversarial manipulation.

- Preliminary evaluations in a Linux-based prototype show that Conseca maintains task utility while significantly enhancing security.

Contextual Agent Security: A Policy for Every Purpose

Introduction

The paper "Contextual Agent Security: A Policy for Every Purpose" proposes the Conseca framework, which addresses security in AI and other agent systems by generating contextual policies designed specifically for each task and context. Unlike traditional systems that rely on static policies, Conseca adapts security measures dynamically to suit the environment and task at hand, aiming to mitigate issues of overrestriction or underpermissioning that arise from manually crafted policies.

Framework Design

Agent Structure and Context

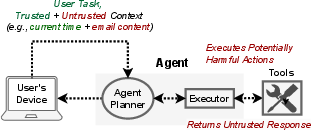

Agents consist of a planner and an executor, where the planner processes user requests and outputs actions, while the executor runs these actions. The context for these operations is pivotal, influencing whether actions are harmful or benign. Conseca emphasizes the importance of trusted context to prevent manipulation by adversaries.

Figure 1: An agent contains a planner and an executor that interfaces with external tools. Untrusted context from the initial request or tool responses may compromise the agent.

Contextual Security Policies

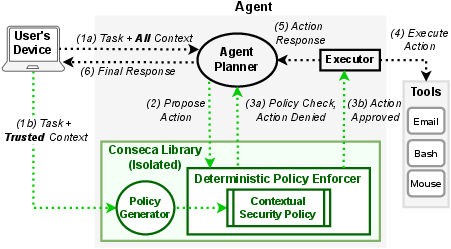

Conseca generates task-specific security policies using LLMs. These policies are transparent, human-verifiable, and deterministically enforced. By leveraging a LLM, Conseca provides fine-grained control over agent actions tailored to the specific context, surpassing conventional static policies that might not effectively handle diverse scenarios.

Policy Enforcement

Conseca's policy framework deterministically checks each action against the generated policy, ensuring adherence to constraints. This deterministic approach prevents common issues such as prompt injections, enhancing agent reliability and security.

Figure 2: Conseca enables policy generation and enforcement for an example computer use agent with access to external tools. Green lines indicate Conseca's control flows.

Implementation and Evaluation

Proof-of-Concept

The Conseca framework was integrated into a Linux-based AI agent prototype using Python. The agent interacts with several tools, including filesystem management and email processing utilities, and is enhanced by an LLM for policy generation. This prototype demonstrated a successful integration of contextual security within agent operations.

Case Studies and Results

Preliminary case studies revealed that Conseca-enabled agents can complete tasks with utility comparable to permissive static policies while demonstrating superior protection against inappropriate actions. By utilizing context-aware constraints, Conseca effectively blocks potentially harmful operations without severely restricting tasks, which static strategies often fail to achieve.

Security Model

Conseca effectively isolates trusted context to generate policies, reducing exposure to adversarial manipulation. By rigorously defining trusted parameters, Conseca enhances security without sacrificing operational capability. This isolation ensures robust protection against popular attacks such as prompt injections.

Conclusion

Conseca introduces a novel approach to agent security by creating adaptive policies informed by context, showing potential for broader applications where static policies prove insufficient. The framework underscores the necessity of integrative security mechanisms that are both robust and adaptable, aligning with the complex demands of modern computing environments. Future research can explore expanding contextual policy boundaries and improving policy generation for even more intricate scenarios.