- The paper introduces the Hierarchical Aggregate Tree (HAT), a data structure that extends LLM memory by aggregating and retrieving contextual information from long dialogues.

- It employs a memory agent to traverse the hierarchical tree, delivering optimal context for improved dialogue coherence.

- Experiments demonstrate superior performance with enhanced BLEU and DISTINCT scores, outperforming traditional retrieval methods.

Enhancing Long-Term Memory using Hierarchical Aggregate Tree for Retrieval Augmented Generation

This paper presents a novel approach to addressing the challenges of memory capacity in LLMs, particularly in long-form conversations. The authors introduce a new data structure, the Hierarchical Aggregate Tree (HAT), to enhance memory capabilities in retrieval-augmented generation (RAG) systems. Their methodology aims to balance the breadth and depth of information extracted during conversations, enabling more coherent and grounded dialogues.

Introduction

The rapid evolution of LLMs has fostered the development of personalized chat agents across various domains. Even so, LLMs often struggle to stay consistent and relevant over extended interactions because the amount of context they can handle is limited. The paper identifies a gap in existing approaches, which mostly either summarize past interactions or retrieve raw data chunks, without a structured way to integrate the two.

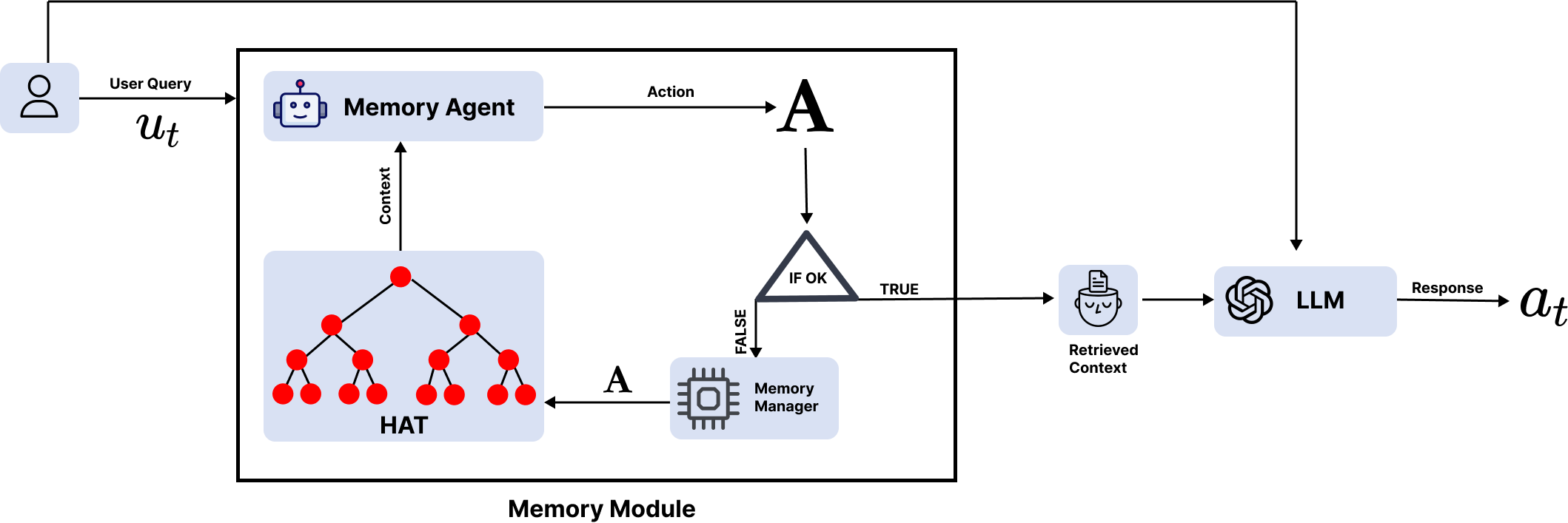

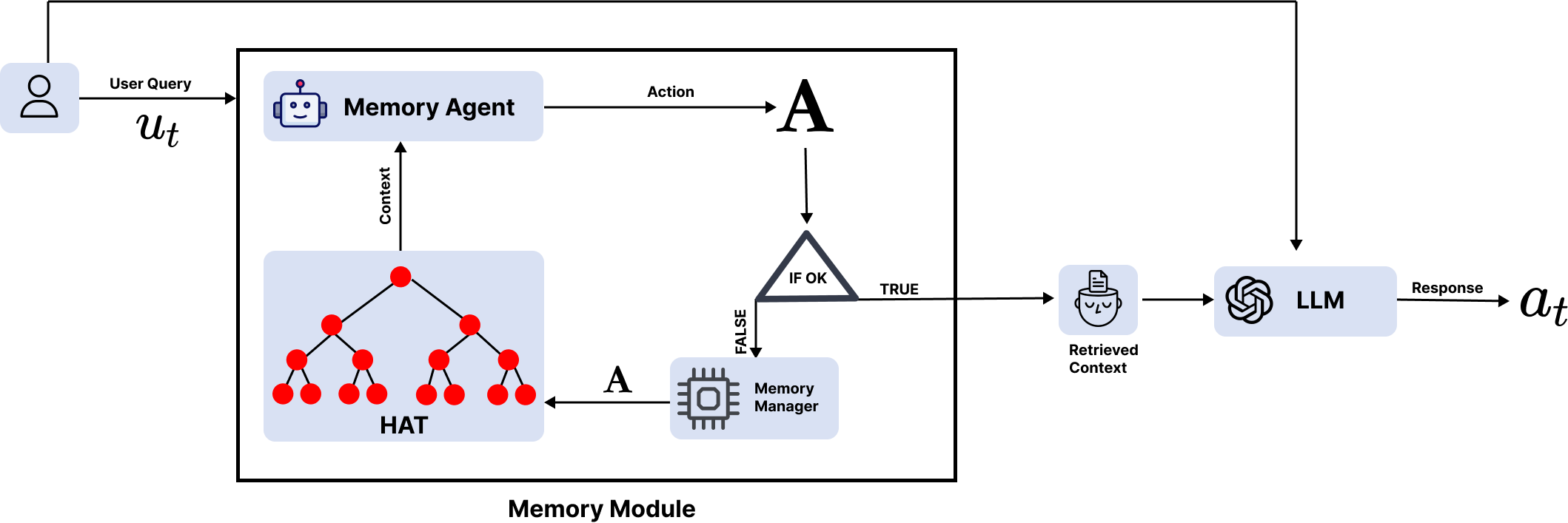

Figure 1: Overview of our approach. Given a user query, the memory module provides relevant context by traversing the HAT.

RAG systems provide a mechanism to introduce external data into an LLM's workflow without altering the model's weights. However, these systems are constrained by the LLM's context length budget. As a solution, the authors propose the Hierarchical Aggregate Tree (HAT), a data structure designed to preserve and aggregate conversational context recursively.

Methodology

The methodology centers on the HAT data structure and a memory agent that traverses it. The approach exploits HAT's hierarchical layout, which preserves resolution: deeper levels hold more detailed information, while positions along the horizontal axis hold more recently updated information.

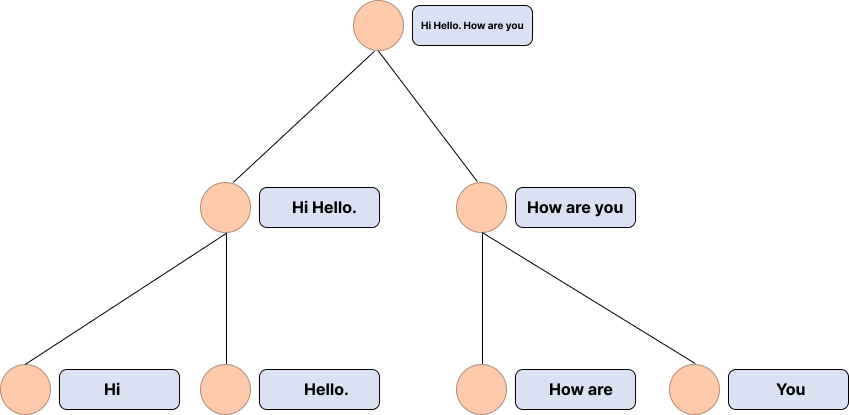

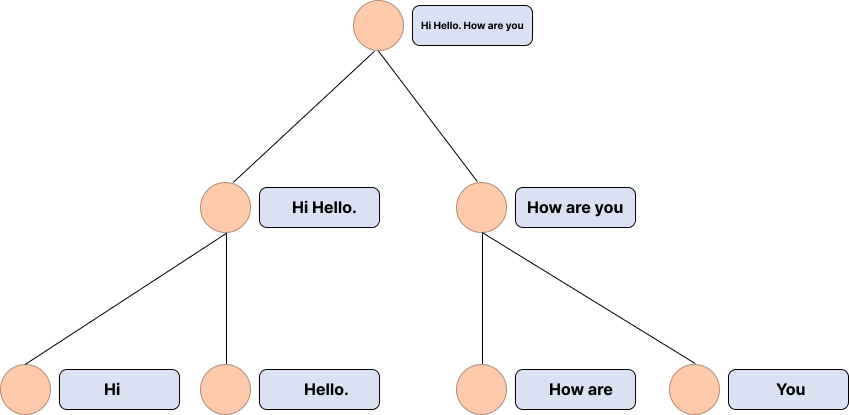

Figure 2: Illustration of HAT, with example aggregation function as simple concatenation and memory length of 2.

Hierarchical Aggregate Tree (HAT)

HAT organizes memory into layers, with each layer containing nodes that aggregate information from their child nodes. The key component is the aggregate function, which condenses the text of a node's children into a shorter representation stored at the parent. Because parents are derived from their children, the structure updates as the conversation progresses, so the most pertinent information remains retrievable when a response is needed.
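The layered aggregation described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the names `Node`, `HAT`, `concat`, and `memory_length` are assumptions, the aggregate function is plain space-joined concatenation in the spirit of Figure 2, leaves are stored at index 0 of `layers` purely for convenience, and the upper layers are rebuilt from scratch on every insert instead of being updated incrementally.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Node:
    text: str
    children: List["Node"] = field(default_factory=list)


def concat(texts: List[str]) -> str:
    """Example aggregate function: join child texts with a space (an assumption)."""
    return " ".join(texts)


class HAT:
    """Hypothetical sketch of a Hierarchical Aggregate Tree."""

    def __init__(self, memory_length: int = 2,
                 aggregate: Callable[[List[str]], str] = concat) -> None:
        if memory_length < 2:
            raise ValueError("memory_length must be at least 2")
        self.memory_length = memory_length  # max children per parent node
        self.aggregate = aggregate
        self.layers: List[List[Node]] = [[]]  # layers[0] holds the leaves

    def insert(self, text: str) -> None:
        """Append a new leaf and recompute every layer above the leaves."""
        self.layers[0].append(Node(text))
        self._rebuild()

    def _rebuild(self) -> None:
        layers = [self.layers[0]]
        # Group nodes into parents until a single root remains.
        while len(layers[-1]) > 1:
            below = layers[-1]
            above = []
            for i in range(0, len(below), self.memory_length):
                group = below[i:i + self.memory_length]
                above.append(Node(self.aggregate([n.text for n in group]), group))
            layers.append(above)
        self.layers = layers

    @property
    def root(self) -> Optional[Node]:
        return self.layers[-1][0] if self.layers[0] else None


hat = HAT(memory_length=2)
for utterance in ["a", "b", "c"]:
    hat.insert(utterance)
print([[n.text for n in layer] for layer in hat.layers])
```

In a real system the `aggregate` argument would more plausibly be a summarization call than concatenation; the sketch only shows how parents are derived from groups of children.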

Memory Agent

The memory agent traverses the HAT to find the path that yields the context best suited to a user query. The authors use GPT as the agent, which navigates the tree according to the relevance and quality of the available context; the traversal is formalized as a Markov decision process (MDP). Through this mechanism, the agent aims to answer the user query with the smallest set of information that is still sufficiently relevant.
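A rough sketch of such a traversal loop is shown below, under several assumptions. The state is taken to be the query plus the current node, and the only actions are "descend into child i" or "stop", which is a simplification and may not match the paper's action set. The GPT agent is replaced by a hypothetical word-overlap policy (`overlap_policy`) so that the example runs without any API; `Node`, `traverse`, and `max_steps` are likewise illustrative names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Node:
    text: str
    children: List["Node"] = field(default_factory=list)


# A policy maps (query, current node) to a child index, or None to stop.
Policy = Callable[[str, Node], Optional[int]]


def overlap_policy(query: str, node: Node) -> Optional[int]:
    """Stand-in for the LLM agent: pick the child sharing the most words
    with the query; stop at a leaf or when no child overlaps at all."""
    q = set(query.lower().split())
    best, best_score = None, 0
    for i, child in enumerate(node.children):
        score = len(q & set(child.text.lower().split()))
        if score > best_score:
            best, best_score = i, score
    return best


def traverse(root: Node, query: str, policy: Policy, max_steps: int = 16) -> str:
    """Walk down from the root until the policy stops; return that node's text."""
    node = root
    for _ in range(max_steps):
        action = policy(query, node)
        if action is None:
            break
        node = node.children[action]
    return node.text


leaf1 = Node("likes hiking in summer")
leaf2 = Node("owns a cat named Tom")
leaf3 = Node("works as a nurse")
left = Node(leaf1.text + " " + leaf2.text, [leaf1, leaf2])
right = Node(leaf3.text, [leaf3])
root = Node(left.text + " " + right.text, [left, right])

print(traverse(root, "what is the cat called", overlap_policy))
```

Swapping `overlap_policy` for a function that prompts an LLM with the query and the current node's text would bring the sketch closer to the setup described above, at the cost of one model call per step.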

Experiments

The paper evaluates the HAT structure on a multi-session conversational dataset, in which interactions recur across distinct episodes. The results show notable improvements in dialogue coherence and information relevance over baseline models that use raw or summarized contexts.

Evaluation uses metrics such as BLEU and DISTINCT scores, on which the HAT-based method consistently outperforms traditional approaches. The experiments indicate that targeted information retrieval yields better-grounded dialogue, which supports the use of conditional traversal for memory management.
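For readers unfamiliar with DISTINCT, the sketch below computes the commonly used distinct-n ratio: the number of unique n-grams divided by the total number of n-grams across a set of generated responses. The summary does not state which variant or tokenizer the paper uses, so the whitespace tokenization, the corpus-level pooling, and the function name `distinct_n` are all assumptions.

```python
from typing import List, Tuple


def distinct_n(responses: List[str], n: int) -> float:
    """Unique n-grams divided by total n-grams, pooled over all responses."""
    ngrams: List[Tuple[str, ...]] = []
    for response in responses:
        tokens = response.split()  # whitespace tokenization (an assumption)
        ngrams.extend(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    return len(set(ngrams)) / len(ngrams) if ngrams else 0.0


responses = ["i like cats", "i like dogs"]
print(distinct_n(responses, 1))  # 4 unique unigrams out of 6
print(distinct_n(responses, 2))  # 3 unique bigrams out of 4
```

Higher values indicate less repetitive output; the metric measures lexical diversity only and says nothing about whether a response is grounded in the retrieved context.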

Limitations and Future Work

Despite promising results, the HAT implementation introduces latency issues due to computational overhead associated with navigating complex tree structures and API call dependencies. Addressing these performance limitations may involve optimization techniques such as heuristic-based searches or more efficient retrieval algorithms.

Furthermore, the scalability of this system in terms of memory footprint poses another area for future investigation, particularly in deploying the framework at scale. Potential solutions may encompass hybrid tree structures or vector-based retrieval methods to enhance efficiency.

Conclusion

This research proposes augmenting LLMs with enhanced memory capabilities through the Hierarchical Aggregate Tree (HAT). By formulating memory retrieval as a structured tree traversal, the work balances the breadth and depth of the context extracted for conversational agents. The results support the use of conditional paths for long-form dialogue management and point to further work on memory-augmented systems.